Unlearning for One-Step Generative Models via Unbalanced Optimal Transport

Recent advances in one-step generative frameworks, such as flow map models, have significantly improved the efficiency of image generation by learning direct noise-to-data mappings in a single forward pass. However, machine unlearning for ensuring th…

Authors: Hyundo Choi, Junhyeong An, Jinseong Park, Jaewoong Choi

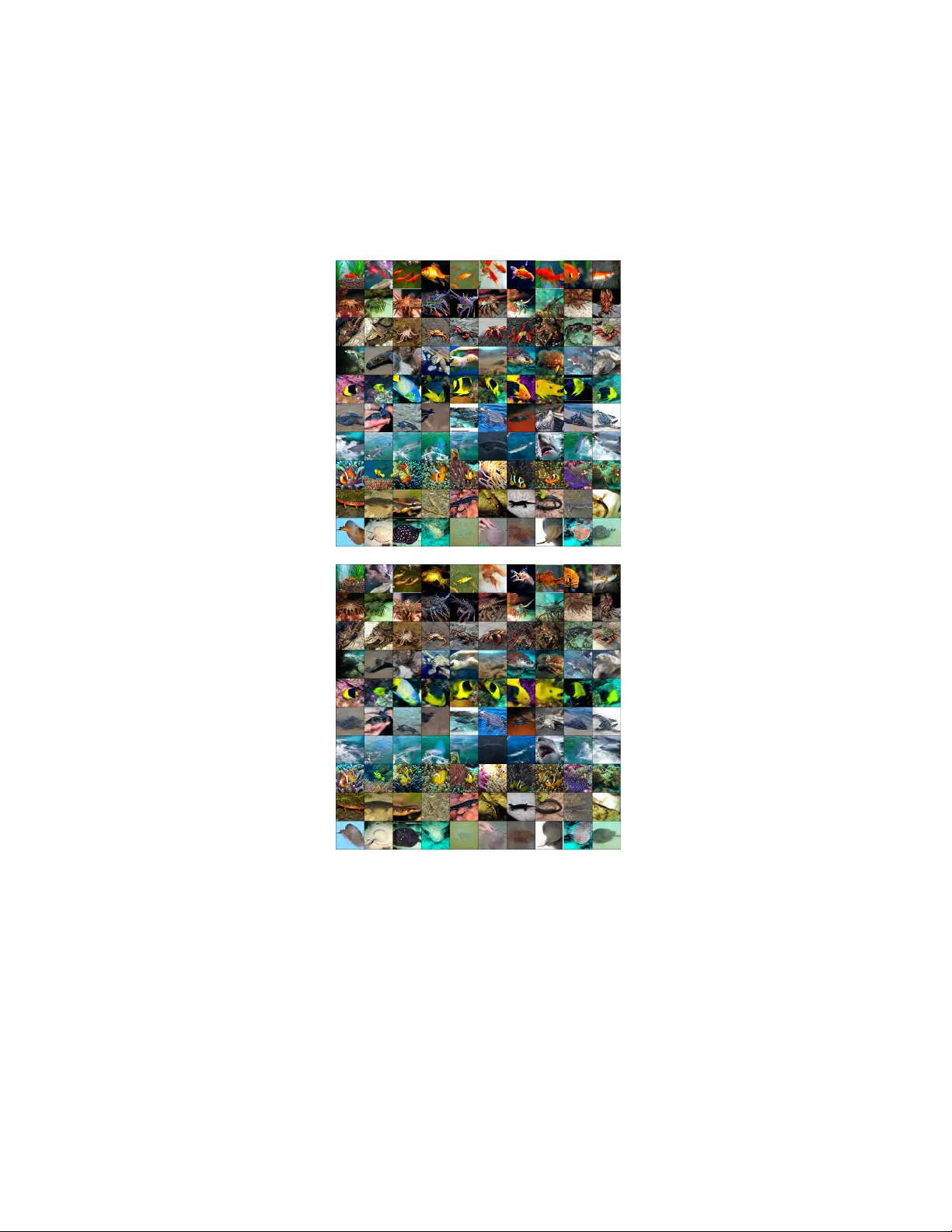

Hyundo Choi¹⋆, Junhyeong An¹⋆, Jinseong Park²†, and Jaewoong Choi¹†

¹ Sungkyunkwan University, Seoul, Republic of Korea
² Korea Institute for Advanced Study, Seoul, Republic of Korea
{hyuncde12, jhan0429, jaewoongchoi}@skku.edu, jinseong@kias.re.kr

Abstract. Recent advances in one-step generative frameworks, such as flow map models, have significantly improved the efficiency of image generation by learning direct noise-to-data mappings in a single forward pass. However, machine unlearning for ensuring the safety of these powerful generators remains entirely unexplored. Existing diffusion unlearning methods are inherently incompatible with these one-step models, as they rely on a multi-step iterative denoising process. In this work, we propose UOT-Unlearn, a novel plug-and-play class unlearning framework for one-step generative models based on Unbalanced Optimal Transport (UOT). Our method formulates unlearning as a principled trade-off between a forget cost, which suppresses the target class, and an $f$-divergence penalty, which preserves overall generation fidelity via relaxed marginal constraints. By leveraging UOT, our method enables the probability mass of the forgotten class to be smoothly redistributed to the remaining classes, rather than collapsing into low-quality or noise-like samples. Experimental results on CIFAR-10 and ImageNet-256 demonstrate that our framework achieves superior unlearning success (PUL) and retention quality (u-FID), significantly outperforming baselines.

Keywords: Machine Unlearning · Unbalanced Optimal Transport · One-step Generative Models · Flow Map Models

1 Introduction

Generative models, particularly diffusion models, have achieved high-quality image synthesis [11, 24, 26].
However, their practical utility is heavily bottlenecked by slow inference, which stems from the requirement of tens to hundreds of iterative denoising steps. To overcome this limitation, recent advances have rapidly shifted towards one-step generative architectures, such as consistency models or flow maps [1, 17, 18, 25]. By directly mapping the noise distribution to the data distribution in a single forward pass, these models achieve near-diffusion-level generation quality with an advantage in sampling speed.

⋆ Equal contribution. † Corresponding author. Preprint. Under review.

As these generative models grow faster and more powerful, the risk of producing undesirable content, such as Not Safe For Work (NSFW) imagery or copyrighted material, has grown with them. To mitigate these risks without the prohibitive cost of retraining models from scratch, machine unlearning has emerged as an essential safeguard [3, 20]. While unlearning techniques have been actively developed for standard multi-step diffusion models, unlearning for one-step generative models remains entirely unexplored. This gap is particularly concerning because the extreme generation speed of one-step models can drastically accelerate the spread of harmful content. An unlearning framework for one-step generators is therefore needed.

Crucially, existing diffusion unlearning methods [6, 7, 15, 30] cannot be straightforwardly applied to one-step architectures. These techniques inherently rely on multi-step denoising processes, tweaking noise predictions or gradients at specific intermediate timesteps. In contrast, one-step models map noise to data in a single forward pass. Without intermediate steps during sampling, traditional step-by-step modifications are difficult to apply in one-step generators [8].

To bridge this critical gap, we propose UOT-Unlearn, the first plug-and-play class unlearning framework designed specifically for one-step generative models. Our framework addresses the unlearning problem by exploiting Unbalanced Optimal Transport (UOT). Unlike standard Optimal Transport (OT), which enforces strict distribution matching, UOT relaxes the marginal constraints and instead minimizes a trade-off between the transport cost and the distributional deviation. This flexibility lets us control the balance between removing the forget class and preserving the overall data distribution. In particular, we introduce an unlearning cost that penalizes samples belonging to the forget concept. This heavy penalization induces a distribution mismatch for the forget concept, which produces the unlearning effect. Based on the neural optimal transport formulation for UOT, we derive an unlearning objective that fine-tunes one-step generative models. Importantly, UOT-Unlearn operates using only generated samples and a forget centroid, eliminating the need for real retain datasets while preserving the overall generation quality.

Our contributions are summarized as follows:

– We introduce UOT-Unlearn, the first unlearning framework tailored for one-step generative models based on the optimal transport formulation.
– We formulate a novel UOT-based objective that smoothly redistributes the target class probability into the remaining classes via an $f$-divergence penalty.
– Experiments on benchmark datasets (e.g., CIFAR-10, ImageNet-256) using representative one-step architectures, such as CTM and MeanFlow models, demonstrate that our method achieves superior class unlearning (PUL) and retention quality (u-FID).

2 Preliminaries

2.1 One-Step Generative Models via Probability Flow

Continuous-time generative models, such as diffusion models [26] and flow matching [17, 18], aim to learn a continuous transformation between a tractable noise distribution $p_0$ and a target data distribution $p_1 = p_{\mathrm{data}}$. This transformation is represented by the Ordinary Differential Equation (ODE) modeling the probability flow:

$$\mathrm{d}x_t = v_\theta(x_t, t)\,\mathrm{d}t, \quad t \in [0, 1], \tag{1}$$

where $v_\theta$ denotes a time-dependent velocity field. In diffusion models, $v_\theta$ is implicitly defined via the score function (PF-ODE) [26]. In flow matching, it is learned through a direct regression to the conditional vector field. Generating samples from these models requires numerically integrating this ODE from $t = 0$ to $t = 1$. This iterative process requires tens to hundreds of neural network evaluations, making inference computationally expensive.

To overcome this limitation, recent advances in Flow Map generative modeling [8, 13, 25] aim to directly learn the solution to the probability flow (Eq. (1)), thereby enabling one-step or few-step generation. Formally, models like the Consistency Trajectory Model (CTM) [13] and MeanFlow [8] distill or explicitly parameterize the flow map $\psi(x_t, t, s)$, which maps a state at time $t$ directly to time $s$:

$$\psi(x_t, t, s) = x_t + \int_t^s v_\theta(x_\tau, \tau)\,\mathrm{d}\tau. \tag{2}$$

By learning this mapping directly, the entire generation process can be executed in a single forward pass:

$$x_1 = \psi(x_0, 0, 1) = G_\theta(x_0). \tag{3}$$

Existing machine unlearning methods for generative models are largely designed for multi-step diffusion processes [6, 7, 15, 30], where modifications are applied at intermediate denoising steps. Such approaches are inherently incompatible with one-step generative architectures.
UOT-Unlearn addresses this gap by intervening strictly at the final mapping stage $G_\theta(x_0)$. This trajectory-agnostic approach allows our method to be seamlessly integrated into any pretrained one-step model.

2.2 Unbalanced Optimal Transport (UOT)

Optimal Transport (OT) provides a principled framework for finding a cost-efficient mapping that transforms a source distribution $\mu$ into a target distribution $\nu$ [23, 29]. In the Kantorovich formulation [12], this is defined by searching for a joint probabilistic coupling $\pi$ that bridges these two distributions while minimizing the total transport cost [29]:

$$C(\mu, \nu) := \inf_{\pi \in \Pi(\mu, \nu)} \int_{\mathcal{X} \times \mathcal{Y}} c(x, y)\,\mathrm{d}\pi(x, y), \tag{4}$$

where $\Pi(\mu, \nu)$ denotes the set of all joint probability distributions whose marginals $\pi_0$ and $\pi_1$ exactly match the source $\mu$ and target $\nu$, respectively [29]. Although OT provides a mathematically principled framework for distribution alignment, the strict marginal constraints can make the resulting transport plan overly rigid. In scenarios where probability mass must be removed or redistributed, such as machine unlearning, this rigidity becomes problematic.

Unbalanced Optimal Transport (UOT) addresses this limitation by relaxing the hard marginal constraints through divergence penalties [4, 16]. Instead of enforcing exact marginal matching, UOT minimizes a principled trade-off between the transport cost and the marginal deviation [2, 5]:

$$C_{ub}(\mu, \nu) := \inf_{\pi \in \mathcal{M}_+(\mathcal{X} \times \mathcal{Y})} \left[ \int_{\mathcal{X} \times \mathcal{Y}} c(x, y)\,\mathrm{d}\pi(x, y) + D_{\Psi_1}(\pi_0 \mid \mu) + D_{\Psi_2}(\pi_1 \mid \nu) \right], \tag{5}$$

where $\mathcal{M}_+(\mathcal{X} \times \mathcal{Y})$ denotes the set of positive measures defined on $\mathcal{X} \times \mathcal{Y}$, and $\pi_0$, $\pi_1$ are the marginal distributions of $\pi$. The two $f$-divergences $D_{\Psi_i}$ measure the discrepancy between the marginals ($\pi_i$) and the corresponding source and target distributions ($\mu$ and $\nu$).
Formally, for a convex, lower semi-continuous, and non-negative entropy function $\Psi_1$, the $f$-divergence between the marginal $\pi_0$ and the source distribution $\mu$ is defined as:

$$D_{\Psi_1}(\pi_0 \mid \mu) = \int_{\mathcal{X}} \Psi_1\!\left( \frac{\mathrm{d}\pi_0(x)}{\mathrm{d}\mu(x)} \right) \mathrm{d}\mu(x), \tag{6}$$

and similarly for $D_{\Psi_2}(\pi_1 \mid \nu)$. Notably, UOT serves as a generalization of OT; if $\Psi_1$ and $\Psi_2$ are chosen as the convex indicator functions of $\{1\}$, the formulation precisely recovers the classical OT problem, as any marginal mismatch results in infinite cost [5].

Choi et al. [5] proposed a neural optimal transport algorithm, called UOTM, for learning the optimal transport map of the UOT problem. By leveraging the semi-dual form of UOT, UOTM introduces the following learning objective, where the transport map $T_\theta$ and the dual potential $v_\phi$ are parameterized by neural networks:

$$\inf_{v_\phi} \left[ \int_{\mathcal{X}} \Psi_1^*\!\Big( -\inf_{T_\theta} \big[ c(x, T_\theta(x)) - v_\phi(T_\theta(x)) \big] \Big)\,\mathrm{d}\mu(x) + \int_{\mathcal{Y}} \Psi_2^*\big( -v_\phi(y) \big)\,\mathrm{d}\nu(y) \right], \tag{7}$$

where $\Psi^*$ denotes the convex conjugate of $\Psi$. After training, the transport network $T_\theta$ learns an unbalanced optimal transport map between the source distribution $\mu$ and the target distribution $\nu$.

In this work, we leverage the intrinsic trade-off in the UOT formulation between the transport cost and the marginal error to develop a novel unlearning algorithm. Intuitively, the UOT objective (Eq. (5)) allows marginal mismatches when the resulting reduction in the transport cost outweighs the corresponding increase in the $f$-divergence penalties. This property is particularly suitable for machine unlearning. By designing a cost function that penalizes the generation of the unlearning target, the UOT framework encourages the model to shift probability mass away from the forget class while maintaining the overall integrity of the remaining distribution through a relaxed matching process (Sec. 3.2).
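
As a small numerical illustration of the convex conjugate $\Psi^*$ that appears in Eq. (7), the sketch below assumes the KL entropy function $\Psi(x) = x \log x - x + 1$, a common choice for the KL divergence, whose conjugate has the closed form $\Psi^*(y) = e^y - 1$. The function names, the grid, and the test points are illustrative assumptions and are not taken from the paper. The code compares the closed form against a brute-force maximization of $xy - \Psi(x)$ over a grid.

```python
import numpy as np


def psi_kl(x):
    """KL entropy function Psi(x) = x log x - x + 1, with Psi(0) = 1 by continuity."""
    x = np.asarray(x, dtype=float)
    safe = np.where(x > 0, x, 1.0)  # placeholder value avoids log(0)
    return np.where(x > 0, x * np.log(safe) - x + 1.0, 1.0)


def psi_kl_conjugate(y):
    """Closed-form convex conjugate Psi*(y) = exp(y) - 1."""
    return np.exp(np.asarray(y, dtype=float)) - 1.0


def conjugate_on_grid(y, grid):
    """Brute-force conjugate: maximum over the grid of x * y - Psi(x)."""
    return np.max(grid * y - psi_kl(grid))


# Illustrative grid and test points (assumptions, not values from the paper).
grid = np.linspace(0.0, 20.0, 200_001)
ys = np.array([-2.0, -0.5, 0.0, 0.5, 1.0, 2.0])

numeric = np.array([conjugate_on_grid(y, grid) for y in ys])
closed = psi_kl_conjugate(ys)
max_gap = float(np.max(np.abs(numeric - closed)))
print("largest gap between grid search and closed form:", max_gap)
```

The maximizer of $xy - \Psi(x)$ is $x = e^y$, which lies inside the grid for all chosen $y$, so the two values should agree up to the grid resolution.
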

3 Proposed Method

3.1 Problem Formulation

We consider the problem of class unlearning for a one-step generative model. Let $G_{\mathrm{pre}} : \mathcal{Z} \to \mathcal{X}$ denote a pretrained one-step generative model that maps a prior noise distribution $x_0 \sim \mu_{\mathcal{Z}}$ to the learned data distribution $p_{\mathrm{pre}} = (G_{\mathrm{pre}})_\# \mu_{\mathcal{Z}}$. The model is trained on the full data distribution $p_{\mathrm{data}}$, which includes both the target concept to be removed (the forget class) and all other concepts (the remaining classes). Our goal is to fine-tune $G_{\mathrm{pre}}$ into a new generator $G_\theta$ that removes the forget class while preserving the generation quality and diversity of the remaining classes.

Formally, let $S_f \subset \mathcal{X}$ denote the semantic support in the data space corresponding to the forget class, and let $S_r \subset \mathcal{X}$ denote the semantic support for the remaining classes. The goal of our machine unlearning framework is to suppress the probability of generating forget samples while maintaining generation within the retain region. This objective can be expressed as

$$P\big( G_\theta(x_0) \in S_f \big) \to 0, \quad \text{and ideally,} \quad P\big( G_\theta(x_0) \in S_r \big) \to 1. \tag{8}$$

Our framework aims to achieve this objective for one-step generators operating in a single forward pass, without requiring access to real retain data during the unlearning optimization.

3.2 Unlearning via Unbalanced Optimal Transport

We formulate the machine unlearning process as a distribution transportation problem using the Unbalanced Optimal Transport (UOT) framework. The key idea is to exploit the intrinsic trade-off between the transportation cost and the distribution matching error. Specifically, by designing a cost function $c_{\mathrm{ul}}(\cdot, \cdot)$ that imposes a heavy penalty on the forget region (detailed in Sec. 3.3), we force the transport plan to avoid generating penalized concepts.
To avoid incurring this large cost, the UOT objective naturally allows for a distribution matching error, safely steering the generative pathway toward an unlearned distribution.

To formalize this framework, we consider the following UOT problem, where the source is the pretrained distribution, i.e., $\mu = p_{\mathrm{pre}}$, and the target is the full data distribution, $\nu = p_{\mathrm{data}}$:

$$\inf_{\pi \in \mathcal{M}_+(\mathcal{X} \times \mathcal{Y})} \left[ \int_{\mathcal{X} \times \mathcal{Y}} c(x, y)\,\mathrm{d}\pi(x, y) + D_{\Psi_1}(\pi_0 \mid p_{\mathrm{pre}}) + D_{\Psi_2}(\pi_1 \mid p_{\mathrm{data}}) \right]. \tag{9}$$

In this formulation, the source distribution $\mu$ represents the starting point of the unlearning process. The transported marginal $\pi_1$ corresponds to the distribution produced by the updated generator after unlearning.

The UOT framework induces two desirable properties for $\pi_1$. First, the divergence term $D_{\Psi_2}(\pi_1 \mid p_{\mathrm{data}})$ encourages $\pi_1$ to remain close to the data distribution, thereby preserving the overall generation fidelity. Second, when the transport cost assigns a large penalty to the forget region $S_f$, the optimal transport plan avoids placing probability mass in this region. As a result, the optimal transported marginal $\pi_1^\star$ satisfies $S_f \not\subset \operatorname{supp}(\pi_1^\star)$, which effectively suppresses the generation of the forget class and leads to

$$P\big( G_\theta(x_0) \in S_f \big) \to 0. \tag{10}$$

At the same time, the divergence regularization ensures that the redistributed probability mass remains within the support of the data distribution. In particular, when $D_{\Psi_2}$ is set to the Kullback–Leibler divergence (as used in our experiments), $D_{\Psi_2}(\pi_1 \mid p_{\mathrm{data}})$ corresponds to a reverse KL divergence, which exhibits the mode-seeking property [19]. This divergence heavily penalizes probability mass assigned to regions where the data distribution has negligible density [19]. In other words, the optimal unlearned distribution $\pi_1^\star$ does not assign positive mass outside $S_f \cup S_r$.
As a result, the redistributed mass concentrates on valid semantic regions corresponding to the remaining classes, leading to

$$P\big( G_\theta(x_0) \in S_r \big) \to 1. \tag{11}$$

Based on this formulation, we derive the unlearning objective using the semi-dual UOT framework. Let $\Delta T$ denote the unbalanced optimal transport map that transforms the pretrained distribution $p_{\mathrm{pre}}$ to the unlearned distribution $\pi_1$. Following the UOTM formulation (Eq. (7)), we parameterize this transport map by a neural network $\Delta T_\theta$ and obtain the following learning objective:

$$\inf_{v_\phi} \left[ \int_{\mathcal{X}} \Psi_1^*\!\Big( -\inf_{\Delta T_\theta} \big[ c(x_1, \Delta T_\theta(x_1)) - v_\phi(\Delta T_\theta(x_1)) \big] \Big)\,\mathrm{d}p_{\mathrm{pre}}(x_1) + \int_{\mathcal{Y}} \Psi_2^*\big( -v_\phi(y) \big)\,\mathrm{d}\nu(y) \right]. \tag{12}$$

To optimize this objective efficiently, we leverage the pushforward structure of the pretrained one-step generator ($p_{\mathrm{pre}} = (G_{\mathrm{pre}})_\# \mu_{\mathcal{Z}}$). This allows us to reparameterize samples from the generated distribution through the latent variable $x_0 \sim \mu_{\mathcal{Z}}$, where $x_1 = G_{\mathrm{pre}}(x_0)$. Applying this change of variables yields

$$\inf_{v_\phi} \left[ \int_{\mathcal{Z}} \Psi_1^*\!\Big( -\inf_{\Delta T_\theta} \big[ c\big(G_{\mathrm{pre}}(x_0), (\Delta T_\theta \circ G_{\mathrm{pre}})(x_0)\big) - v_\phi\big((\Delta T_\theta \circ G_{\mathrm{pre}})(x_0)\big) \big] \Big)\,\mathrm{d}\mu_{\mathcal{Z}}(x_0) + \int_{\mathcal{Y}} \Psi_2^*\big( -v_\phi(y) \big)\,\mathrm{d}\nu(y) \right]. \tag{13}$$

By identifying the composed mapping $(\Delta T_\theta \circ G_{\mathrm{pre}})$ with the fine-tuned generator $G_\theta$, and instantiating the cost $c$ as the unlearning cost $c_{\mathrm{ul}}$ (Sec. 3.3), we obtain the following unlearning objective:

$$\inf_{v_\phi} \left[ \int_{\mathcal{Z}} \Psi_1^*\!\Big( -\inf_{G_\theta} \big[ c_{\mathrm{ul}}\big(G_{\mathrm{pre}}(x_0), G_\theta(x_0)\big) - v_\phi\big(G_\theta(x_0)\big) \big] \Big)\,\mathrm{d}\mu_{\mathcal{Z}}(x_0) + \int_{\mathcal{Y}} \Psi_2^*\big( -v_\phi(y) \big)\,\mathrm{d}\nu(y) \right]. \tag{14}$$

Finally, in our constrained unlearning setting where real retain data cannot be accessed, directly evaluating the expectation with respect to the data distribution $\nu = p_{\mathrm{data}}$ is infeasible. To address this issue, we approximate the target distribution using the pretrained distribution itself, i.e., $\nu \approx p_{\mathrm{pre}}$. This approximation enables a fully data-free optimization procedure while preserving the structural guidance provided by the original generator. The resulting objective is

$$\inf_{v_\phi} \left[ \int_{\mathcal{Z}} \Psi_1^*\!\Big( -\inf_{G_\theta} \big[ c_{\mathrm{ul}}\big(G_{\mathrm{pre}}(x_0), G_\theta(x_0)\big) - v_\phi\big(G_\theta(x_0)\big) \big] \Big)\,\mathrm{d}\mu_{\mathcal{Z}}(x_0) + \int_{\mathcal{Z}} \Psi_2^*\big( -v_\phi(G_{\mathrm{pre}}(x_0)) \big)\,\mathrm{d}\mu_{\mathcal{Z}}(x_0) \right]. \tag{15}$$

3.3 Cost Design for Unlearning

To implement the objective in Eq. (15), we introduce the unlearning cost function $c_{\mathrm{ul}}(\cdot, \cdot)$ that explicitly penalizes the generation of the forget class while preserving the remaining concepts. We first compute an anchor vector $\mu_f$ that represents the semantic center of the forget class in a feature space:

$$\mu_f = \frac{1}{|\mathcal{D}_f|} \sum_{x \in \mathcal{D}_f} f(x), \tag{16}$$

where $\mathcal{D}_f$ is a set of real forget samples and $f(\cdot)$ is a pretrained feature extractor. This anchor provides a compact representation of the forget concept in the feature space.

Using this anchor, we define the forget region directly in the generated image space. Specifically, the active forget region $R_f \subset \mathcal{X}$ consists of generated samples whose feature representations lie within a margin $m$ of the forget anchor $\mu_f$ under the cosine distance:

$$R_f = \big\{ x \in \mathcal{X} \;\big|\; d_{\cos}\big(f(x), \mu_f\big) < m \big\}, \tag{17}$$

where $d_{\cos}$ denotes the cosine distance and $m$ defines the semantic boundary of the forget concept in the feature space. During training, generated samples are dynamically checked against this region to identify forget-like outputs.

Based on this region, the unlearning cost is defined as

$$c_{\mathrm{ul}}\big(G_{\mathrm{pre}}(x_0), G_\theta(x_0)\big) = \begin{cases} \lambda \cdot \big( m - d_{\cos}(f(G_\theta(x_0)), \mu_f) \big), & \text{if } G_\theta(x_0) \in R_f, \\ \tau \cdot \| G_{\mathrm{pre}}(x_0) - G_\theta(x_0) \|_2^2, & \text{otherwise.} \end{cases} \tag{18}$$

This cost function serves two roles in our unlearning framework:

– Forget Cost (Active Expulsion): For generated samples inside $R_f$, a hinge-like penalty actively pushes the generated features away from the forget anchor $\mu_f$, beyond the margin $m$.
– Retain Cost (Fidelity & Transport): For samples outside $R_f$, we impose a squared $L_2$ cost between the current output $G_\theta(x_0)$ and the pretrained output $G_{\mathrm{pre}}(x_0)$. This term preserves the fidelity of the remaining classes while simultaneously serving as the transport cost in the UOT objective.

$\lambda$ and $\tau$ act as balancing weights for active forgetting and fidelity retention, respectively. Through this design, our UOT framework safely redistributes the forgotten concepts into high-quality retain samples.

Algorithm 1 Class Unlearning via UOT
Require: pretrained generator $G_{\mathrm{pre}}$, unlearned generator $G_\theta$ (initialized from $G_{\mathrm{pre}}$), dual potential $v_\phi$, pre-computed forget anchor $\mu_f$.
1: for each training iteration do
2:   Sample independent noise batches $\mathcal{B}_1, \mathcal{B}_2, \mathcal{B}_3 \sim \mu_{\mathcal{Z}}$
3:   Compute $c_{\mathrm{ul}}(G_{\mathrm{pre}}(x_0), G_\theta(x_0))$ for $x_0 \in \mathcal{B}_1 \cup \mathcal{B}_3$ via Eq. (18)
4:   (Update dual potential)
5:   Compute $\mathcal{L}_v = \frac{1}{|\mathcal{B}_1|} \sum_{x_0 \in \mathcal{B}_1} \Psi_1^*\big( v_\phi(G_\theta(x_0)) - c_{\mathrm{ul}}(G_{\mathrm{pre}}(x_0), G_\theta(x_0)) \big)$
6:     $+ \frac{1}{|\mathcal{B}_2|} \sum_{x_0 \in \mathcal{B}_2} \Psi_2^*\big( -v_\phi(G_{\mathrm{pre}}(x_0)) \big)$
7:   Update $\phi$ to minimize $\mathcal{L}_v$
8:   (Update generator)
9:   Compute $\mathcal{L}_G = \frac{1}{|\mathcal{B}_3|} \sum_{x_0 \in \mathcal{B}_3} \big[ c_{\mathrm{ul}}(G_{\mathrm{pre}}(x_0), G_\theta(x_0)) - v_\phi(G_\theta(x_0)) \big]$
10:   Update $\theta$ to minimize $\mathcal{L}_G$
11: end for

4 Related Works

Existing unlearning methodologies for generative models are often tailored to the iterative denoising structure of diffusion models. As a foundational baseline, Gradient Ascent (GA) [27] attempts to reverse the training process by directly applying gradient ascent on the forgetting data. However, this naive parameter-space update often leads to severe instability and catastrophic forgetting, degrading the overall generation quality.
To mitigate such issues, Selective Amnesia (SA) [9] uses Elastic Weight Consolidation (EWC) to penalize parameter changes based on the Fisher Information Matrix (FIM), but its reliance on expensive FIM calculations and generative replay limits real-time efficiency. Saliency Unlearning (SalUn) [6] identifies sensitive weights through gradient-based saliency to create forgetting masks, though its performance is highly sensitive to gradient and architectural biases. Variational Diffusion Unlearning (VDU) [21] introduces a variational inference framework that balances plasticity and stability to forget specific classes, reducing computational overhead but remaining tied to the noise scheduling and transition kernels of diffusion models.

Unlike these diffusion-centric approaches, our UOT-based framework (Sec. 3) serves as a general unlearning solution for one-step generators. As summarized in Table 1, our structure-agnostic method overcomes the intensive data dependencies of prior works by requiring strictly zero real data during optimization, enabling a significantly more efficient unlearning procedure for one-step generators.

Table 1: Comparison of data requirements during the unlearning optimization phase. Our UOT-based framework operates with zero real data during optimization, relying solely on synthetic samples and a pre-computed centroid.

| Method | Real Forget | Real Retain | Generated data |
|---|---|---|---|
| Gradient Ascent (GA) | Required | None | None |
| VDU | Required | None | None |
| Selective Amnesia | None | None | Required |
| SalUn | Required | Required | Required∗ |
| UOT-Unlearn (Ours) | None† | None | Required |

∗ SalUn utilizes generated data only in specific large-scale generation tasks.
† Real forget data is used only for one-time pre-computation of $\mu_f$, ensuring no real data is accessed during the optimization phase.
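
To make the optimization-phase computation of Sec. 3 concrete, the following is a minimal NumPy sketch of the unlearning cost in Eq. (18) and the two batch losses of Algorithm 1. It is an illustration under stated assumptions rather than the authors' implementation: features are passed in as precomputed arrays instead of coming from a real extractor $f(\cdot)$, both conjugates are taken to be the KL conjugate $e^y - 1$, the value $\tau = 0.5$ and all toy inputs are hypothetical, and the same toy batch is reused for every term, whereas Algorithm 1 draws independent batches.

```python
import numpy as np


def cosine_distance(feats, anchor):
    """Row-wise cosine distance 1 - cos(feats[i], anchor)."""
    num = feats @ anchor
    den = np.linalg.norm(feats, axis=1) * np.linalg.norm(anchor) + 1e-12
    return 1.0 - num / den


def unlearning_cost(x_pre, x_new, feats_new, anchor, margin, lam, tau):
    """Per-sample piecewise cost following Eq. (18).

    x_pre, x_new: (N, D) outputs of G_pre and G_theta for the same noise.
    feats_new:    (N, K) features of the G_theta outputs (assumed precomputed).
    """
    d = cosine_distance(feats_new, anchor)
    in_forget = d < margin                      # membership in R_f, Eq. (17)
    forget_cost = lam * (margin - d)            # hinge-like push away from the anchor
    retain_cost = tau * np.sum((x_pre - x_new) ** 2, axis=1)  # squared L2 distance
    return np.where(in_forget, forget_cost, retain_cost), in_forget


def psi_star(y):
    """Assumed conjugate for both Psi_1 and Psi_2: exp(y) - 1 (KL case)."""
    return np.exp(y) - 1.0


def dual_loss(v_new, cost, v_pre):
    """L_v from Algorithm 1, given potential values on the two kinds of samples."""
    return np.mean(psi_star(v_new - cost)) + np.mean(psi_star(-v_pre))


def generator_loss(v_new, cost):
    """L_G from Algorithm 1."""
    return np.mean(cost - v_new)


# Hypothetical toy batch: sample 0 sits on the anchor, sample 1 is far from it.
anchor = np.array([1.0, 0.0])
feats_new = np.array([[1.0, 0.0], [0.0, 1.0]])
x_pre = np.array([[0.0, 0.0], [1.0, 1.0]])
x_new = np.array([[0.0, 0.0], [1.0, 3.0]])

cost, in_forget = unlearning_cost(
    x_pre, x_new, feats_new, anchor, margin=0.34, lam=1.0, tau=0.5
)
v_new = np.zeros(2)  # placeholder potential values v(G_theta(x_0))
v_pre = np.zeros(2)  # placeholder potential values v(G_pre(x_0))
loss_v = dual_loss(v_new, cost, v_pre)
loss_g = generator_loss(v_new, cost)
print(in_forget, cost, loss_v, loss_g)
```

In an actual training loop, `v_new` and `v_pre` would come from the dual potential network, and the two losses would be minimized alternately with respect to $\phi$ and $\theta$ using an automatic differentiation framework.
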

5 Experiments

This section provides a systematic evaluation of our UOT-based unlearning framework. Our experiments are organized to progressively validate the core properties of the proposed method. In Sec. 5.1, we utilize a 2D synthetic dataset to visually analyze the probability redistribution during the unlearning process. In Sec. 5.2, we evaluate the framework on high-dimensional image benchmarks, including CIFAR-10 [14] and ImageNet-256 [22], to assess its scalability and the fundamental trade-off between concept erasure and generative fidelity. In Sec. 5.3, we conduct an ablation study to characterize the sensitivity of the optimization dynamics to core hyperparameters. Detailed implementation settings, including specific network architectures and hyperparameter configurations, are provided in the Appendix.

We compare our approach against established unlearning baselines: Gradient Ascent (GA), Selective Amnesia (SA) [9], Saliency Unlearning (SalUn) [6], and Variational Diffusion Unlearning (VDU) [21]. Because these methods are designed for multi-step iterative denoising, we adapt their objectives to operate within a single forward-pass generation framework (see Appendix for adaptation details).

[Figure 1: three scatter panels over axes $x_1$ and $x_2$, each overlaying $p_{\mathrm{data}}$ with a generated distribution: (a) Pretrained ($p_{\mathrm{pretrain}}$), (b) VDU (Baseline) ($p_{\mathrm{VDU}}$), (c) UOT-Unlearn (Ours) ($p_{\mathrm{ours}}$).]

Fig. 1: Unlearning results on a 2D toy dataset where the forget mode is located at $(0, 1)$. (a) Pretrained one-step generator. (b) VDU leads to overall distribution distortion. (c) Our method redistributes the forget mode to the remaining modes.

5.1 2D Synthetic Data

We utilize a 2D synthetic dataset consisting of three Gaussian modes to examine the unlearning dynamics in a visually interpretable setting. The primary goal of this experiment is to evaluate whether the probability associated with the forget target is successfully re-assigned to the remaining classes. Our UOT-based framework enables a principled redistribution of probability mass by leveraging the $f$-divergence penalty to relax strict marginal constraints. As shown in Fig. 1, the probability density previously assigned to the forget mode is smoothly remapped toward the supports of the retain modes. This mechanism ensures that the generator remains within the support of the remaining classes, effectively displacing the targeted concept while preserving the shape and density of the retain distribution. In contrast, while the baseline method (VDU [21]) succeeds in unlearning the forget mode (at $(0, 1)$), the removed probability mass is redistributed into invalid regions outside the support of $p_{\mathrm{data}}$.

5.2 Image Unlearning Benchmarks

Experimental Setup. We evaluate our method on image-generation benchmarks. Our primary experiments are conducted on the CIFAR-10 dataset using Consistency Trajectory Models (CTM) [13] and MeanFlow (MF) [8] as representative one-step generative architectures. To assess concept erasure performance, we perform single-class unlearning targeting classes 1 (automobile), 6 (frog), and 8 (ship). We further scale UOT-Unlearn to ImageNet-256 using the class-conditional MeanFlow model. To apply our unconditional unlearning framework, we marginalize over class labels, effectively treating it as an unconditional generator. We focus on aquatic classes to evaluate semantic shift. To compute the unlearning cost $c_{\mathrm{ul}}$, we employ pretrained networks as the feature extractor $f(\cdot)$. In constructing the forget anchor $\mu_f$, we use only a small subset of forget-class samples (512 images), demonstrating that the proposed method requires minimal data to identify the target concept.

[Figure 2: image grids of Pretrained and Unlearned samples. CTM row: panels (a)-(c) Pretrained and (d)-(f) Unlearned for Classes 1, 6, and 8. MF row: panels (g)-(i) Pretrained and (j)-(l) Unlearned for Classes 1, 6, and 8.]

Fig. 2: Qualitative unlearning results on CIFAR-10. Unconditional samples of target classes (1, 6, and 8) generated by CTM (top) and MF (bottom) architectures. To clearly illustrate the semantic erasure of targeted concepts, the Unlearned outputs are generated using the exact same initial noise seeds as their Pretrained counterparts.

Evaluation Metrics. To quantitatively evaluate the performance, we employ two primary metrics. First, Percentage of Unlearning (PUL) [28] measures the effectiveness of removing the forget class by computing the relative reduction in its generation frequency. Let $N_{\mathrm{pre}}$ and $N_{\mathrm{unl}}$ denote the number of images generated by the pretrained and unlearned models, respectively, that are classified as the forget class. The PUL score is defined as

$$\mathrm{PUL} = \frac{N_{\mathrm{pre}} - N_{\mathrm{unl}}}{N_{\mathrm{pre}}} \times 100\%. \tag{19}$$

To ensure fair evaluation, we use independent classifiers that are not involved in the training procedure, i.e., in computing the unlearning cost. Second, Unlearned FID (u-FID) [21] evaluates the generative quality of the retained classes. We compute the Fréchet Inception Distance (FID) [10] between the generated image distributions and the real images restricted to the retain classes. Specifically, on CIFAR-10, we calculate the u-FID between the 45,000 real retain images (the full training set excluding the forget class) and 45,000 images randomly generated by the unlearned model.
On ImageNet-256, u-FID is computed against a localized retain subset of aquatic classes to precisely quantify semantic collateral damage.

Experimental Results. Our framework demonstrates a strong ability to navigate the trade-off between concept suppression and generative fidelity. As summarized in Tab. 2 and Fig. 3, our method achieves consistently high PUL scores across all targeted classes while maintaining a low u-FID. This performance indicates that the cost-driven probability redistribution effectively suppresses the forget class without degrading the learned data distribution. Qualitative results in Fig. 2 further validate that our approach eliminates targeted semantics while preserving the structural integrity of remaining concepts.

Table 2: Unlearning performance on CTM and MeanFlow. Best results are highlighted in bold. UOT-Unlearn achieves the highest average PUL and the lowest average u-FID on both target models.

Target model: Consistency Trajectory Models (CTM) [13]

| Unlearned Class | Original FID ↓ | GA PUL (%) ↑ | GA u-FID ↓ | SA PUL (%) ↑ | SA u-FID ↓ | SalUn PUL (%) ↑ | SalUn u-FID ↓ | VDU PUL (%) ↑ | VDU u-FID ↓ | Ours PUL (%) ↑ | Ours u-FID ↓ |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Class 1 (Auto) | 4.53 | 88.07 | 160.03 | **91.16** | 49.80 | 90.64 | 41.36 | 53.86 | 71.98 | 80.32 | **9.90** |
| Class 6 (Frog) | 5.02 | 39.16 | 72.17 | 61.85 | 42.54 | 43.16 | 39.00 | 51.25 | 47.11 | **90.98** | **5.11** |
| Class 8 (Ship) | 4.36 | **95.40** | 208.80 | 64.50 | 62.48 | 48.65 | 45.70 | 48.43 | 52.07 | 85.23 | **5.88** |
| Average | 4.64 | 74.21 | 147.00 | 72.50 | 51.61 | 60.82 | 42.02 | 51.18 | 57.05 | **85.51** | **6.96** |

Target model: MeanFlow [8]

| Unlearned Class | Original FID ↓ | GA PUL (%) ↑ | GA u-FID ↓ | SA PUL (%) ↑ | SA u-FID ↓ | SalUn PUL (%) ↑ | SalUn u-FID ↓ | VDU PUL (%) ↑ | VDU u-FID ↓ | Ours PUL (%) ↑ | Ours u-FID ↓ |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Class 1 (Auto) | 7.73 | 93.43 | 115.59 | 98.05 | 82.92 | **99.35** | 62.77 | 33.70 | 73.73 | 90.63 | **17.69** |
| Class 6 (Frog) | 9.23 | 60.59 | 47.40 | 58.49 | 21.14 | 50.14 | 20.06 | 50.42 | 66.86 | **96.25** | **19.58** |
| Class 8 (Ship) | 7.08 | 85.74 | 49.08 | 84.98 | 20.48 | 78.01 | **15.32** | 71.47 | 33.06 | **90.43** | 21.31 |
| Average | 8.01 | 79.92 | 70.69 | 80.51 | 41.51 | 75.83 | 32.72 | 51.86 | 57.88 | **92.44** | **19.53** |

[Figure 3: six panels (rows: CTM, MF; columns: Class 1, Class 6, Class 8) plotting u-FID ↓ against PUL (%) ↑ for Pretrained, Ours, GA, VDU, SalUn, and SA. Pretrained FID values marked on the panels: 4.53, 5.02, 4.36 (CTM) and 7.73, 9.23, 7.08 (MF).]

Fig. 3: Step-wise unlearning trajectories on CIFAR-10. We analyze the trade-off between concept erasure (PUL ↑) and generative fidelity (u-FID ↓) across unconditional CTM (top row) and MF (bottom row) models. Our framework achieves a superior trade-off, erasing the target class while preserving distributional consistency. Metrics are computed sequentially using fixed initial noise seeds for fair variance analysis.

[Figure 4: image grids comparing Pretrained, Baseline (GA), and Ours on the Forget Class and a Retain Class.]

Fig. 4: Unlearning performance on ImageNet-256 (Goldfish). Samples for the Forget and Retain classes. GA suffers from severe structural corruption (u-FID: 79.89) to achieve concept suppression. In contrast, UOT-Unlearn achieves robust erasure (85.08% PUL) while preserving generative fidelity (u-FID: 20.16) relative to the pretrained model (FID: 11.57). u-FID is computed over 36 aquatic classes.

High-resolution generation tasks in a class-conditional setting highlight the limitations of standard unlearning objectives. As reported in Fig. 4, GA reaches even moderate concept erasure on ImageNet-256 (targeting the 'Goldfish' concept) only at the expense of the structural fidelity of the retain distribution, inflating the u-FID to 79.89. To ensure a structured probability redistribution, we restrict the conditioning labels during the generator update to a localized subset of 37 aquatic classes, comprising the forget target and 36 semantically adjacent concepts.
This constraint encourages the model to remap the penalized concepts into relevant retain domains, facilitating a smooth semantic shift rather than introducing unintended distributional shifts. Consequently, our method yields a PUL of 85.08% while limiting the u-FID to 20.16, generating diverse, high-fidelity scenes even when strictly conditioned on the forget class.

5.3 Ablation Study

We investigate the sensitivity of the proposed UOT framework to its core hyperparameters, the forget loss weight $\lambda$ and the feature distance margin $m$ (Eq. (18)), by targeting Class 8 on the CTM architecture.

The parameter $\lambda$ controls the strength of the unlearning term. As illustrated in Fig. 5a, setting $\lambda = 1.0$ provides the most favorable trade-off between concept erasure (PUL) and generative fidelity (u-FID). Relaxing this penalty ($\lambda = 0.1$) provides insufficient optimization signal for target removal. Conversely, an excessively large weight ($\lambda = 5.0$) destabilizes the unlearning process. Rather than monotonically improving erasure, this over-penalization alters the learned flow mapping, leading to a simultaneous degradation in both PUL and u-FID.

[Figure 5: (a) Sensitivity to Loss Weight ($\lambda$); (b) Sensitivity to Margin ($m$). Each panel plots PUL (unlearning degree, left axis) and u-FID (image quality, right axis), with the selected setting marked.]

Fig. 5: Ablation study of key hyperparameters for our UOT framework, conducted on the CTM model for CIFAR-10 with target class 8.
We independently vary the forget loss weight $\lambda$ (left) and the feature distance margin $m$ (right). The star marker (⋆) denotes our selected configuration, which effectively balances the concept erasure efficacy (PUL ↑) and the generative fidelity (u-FID ↓).

The margin $m$ defines the feature-space boundary required to accurately isolate and displace the target distribution. We observe an empirical optimum at $m = 0.34$, where the target concepts are successfully erased without corrupting adjacent semantic regions (Fig. 5b). A conservative margin ($m \le 0.30$) fails to fully encapsulate the forget set. Consequently, the model generates residual target semantics that mismatch the true retain distribution, increasing the u-FID. On the other hand, an overly aggressive margin ($m \ge 0.40$) forces the unlearning objective to encroach upon neighboring retain classes. This excessive margin degrades the structural fidelity of non-target domains, demonstrating that a precisely calibrated margin is essential for localized concept removal.

6 Conclusion

We introduced a machine unlearning framework for one-step generative models. Rather than adapting iterative denoising-based strategies, we cast concept erasure as an unbalanced optimal transport (UOT) problem and incorporate a transport cost directly into the single-pass mapping. This formulation redistributes probability mass away from the forget region without requiring access to real retain data.

Empirical results show that baselines adapted to the one-step regime consistently exhibit an efficacy–fidelity trade-off. Strong suppression often leads to significant deviations from the original data distribution, whereas milder interventions leave residual target semantics. In contrast, our method consistently attains high unlearning performance (PUL) while maintaining limited deviation in u-FID.
These observations indicate that the UOT regularization induces a structured redistribution of probability mass rather than large-scale distortion of the learned distribution.

Because the proposed objective operates on the synthetic probability flow without architectural modifications, it is compatible with different classes of fast generative models. Viewing unlearning as constrained probability transport offers a clear formulation for balancing removal strength and distributional consistency. Future work includes exploring structured probability redistribution across related semantic classes within highly structured latent spaces, such as large-scale hierarchical datasets, as well as analyzing the theoretical stability of the unlearning process under alternative cost formulations.

Acknowledgements

Hyundo Choi, Junhyeong An, and Jaewoong Choi are partially supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (No. RS-2024-00349646). Jinseong Park is supported by a KIAS Individual Grant (AP102301, AP102303) via the Center for AI and Natural Sciences at Korea Institute for Advanced Study. This work was supported by the Center for Advanced Computation at Korea Institute for Advanced Study.

References

1. Albergo, M., Vanden-Eijnden, E.: Building normalizing flows with stochastic interpolants. In: ICLR 2023 Conference (2023)
2. Balaji, Y., Chellappa, R., Feizi, S.: Robust optimal transport with applications in generative modeling and domain adaptation. Advances in Neural Information Processing Systems 33, 12934–12944 (2020)
3. Bourtoule, L., Chandrasekaran, V., Choquette-Choo, C.A., Jia, H., Travers, A., Zhang, B., Lie, D., Papernot, N.: Machine unlearning. In: 2021 IEEE Symposium on Security and Privacy (SP). pp. 141–159. IEEE (2021). https://doi.org/10.1109/SP40001.2021.00019
4. Chizat, L., Peyré, G., Schmitzer, B., Vialard, F.X.: Unbalanced optimal transport: Dynamic and kantorovich formulations. Journal of Functional Analysis 274(11), 3090–3123 (2018)
5. Choi, J., Choi, J., Kang, M.: Generative modeling through the semi-dual formulation of unbalanced optimal transport. In: Proceedings of the 37th International Conference on Neural Information Processing Systems. pp. 42433–42455 (2023)
6. Fan, C., Liu, J., Zhang, Y., Wei, D., Wong, E., Liu, S.: Salun: Empowering machine unlearning via gradient-based weight saliency in both image classification and generation. In: International Conference on Learning Representations (2024)
7. Gandikota, R., Materzynska, J., Fiotto-Kaufman, J., Bau, D.: Erasing concepts from diffusion models. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 2426–2436 (2023)
8. Geng, Z., Deng, M., Bai, X., Kolter, J.Z., He, K.: Mean flows for one-step generative modeling. In: Advances in Neural Information Processing Systems (2025)
9. Heng, A., Soh, H.: Selective amnesia: A continual learning approach to forgetting in deep generative models. In: Advances in Neural Information Processing Systems. vol. 36, pp. 17170–17194 (2023)
10. Heusel, M., Ramsauer, H., Unterthiner, T., Nessler, B., Hochreiter, S.: Gans trained by a two time-scale update rule converge to a local nash equilibrium. In: Advances in Neural Information Processing Systems. vol. 30 (2017)
11. Ho, J., Jain, A., Abbeel, P.: Denoising diffusion probabilistic models. In: Advances in Neural Information Processing Systems. vol. 33, pp. 6840–6851 (2020)
12. Kantorovich, L.V.: On a problem of monge. Uspekhi Mat. Nauk pp. 225–226 (1948)
13. Kim, D., Lai, C.H., Liao, W.H., Murata, N., Takida, Y., Uesaka, T., He, Y., Mitsufuji, Y., Ermon, S.: Consistency trajectory models: Learning probability flow ode trajectory of diffusion. In: International Conference on Learning Representations (2024)
14. Krizhevsky, A., Hinton, G., et al.: Learning multiple layers of features from tiny images. Tech. rep., University of Toronto (2009)
15. Kumari, N., Zhang, B., Wang, S.Y., Shechtman, E., Zhang, R., Zhu, J.Y.: Ablating concepts in text-to-image diffusion models. pp. 22634–22645 (10 2023). https://doi.org/10.1109/ICCV51070.2023.02074
16. Liero, M., Mielke, A., Savaré, G.: Optimal entropy-transport problems and a new hellinger–kantorovich distance between positive measures. Inventiones mathematicae 211(3), 969–1117 (2018)
17. Lipman, Y., Chen, R.T.Q., Ben-Hamu, H., Nickel, M., Le, M.: Flow matching for generative modeling. In: International Conference on Learning Representations (ICLR) (2023), https://openreview.net/forum?id=PqvMRDCJT9t
18. Liu, X., Gong, C., Liu, Q.: Flow straight and fast: Learning to generate and transfer data with rectified flow. In: International Conference on Learning Representations (2023)
19. Murphy, K.P.: Probabilistic machine learning: an introduction. MIT press (2022)
20. Nguyen, T.T., Huynh, T.T., Ren, Z., Nguyen, P.L., Liew, A.W.C., Yin, H., Nguyen, Q.V.H.: A survey of machine unlearning. ACM Transactions on Intelligent Systems and Technology 16(5) (2025). https://doi.org/10.1145/3749987
21. Panda, S., Varun, M., Jain, S., Maharana, S.K., Prathosh, A.: Variational diffusion unlearning: A variational inference framework for unlearning in diffusion models. In: NeurIPS Safe Generative AI Workshop (2024)
22. Russakovsky, O., Deng, J., Su, H., Krause, J., Satheesh, S., Ma, S., Huang, Z., Karpathy, A., Khosla, A., Bernstein, M., et al.: Imagenet large scale visual recognition challenge. International Journal of Computer Vision 115(3), 211–252 (2015)
23. Santambrogio, F.: Optimal transport for applied mathematicians (2015)
24. Song, J., Meng, C., Ermon, S.: Denoising diffusion implicit models. In: International Conference on Learning Representations (2021), https://openreview.net/forum?id=St1giarCHLP
25. Song, Y., Dhariwal, P., Chen, M., Sutskever, I.: Consistency models. In: International Conference on Machine Learning (2023)
26. Song, Y., Sohl-Dickstein, J., Kingma, D.P., Kumar, A., Ermon, S., Poole, B.: Score-based generative modeling through stochastic differential equations. In: International Conference on Learning Representations (2021)
27. Thudi, A., Deza, G., Chandrasekaran, V., Papernot, N.: Unrolling sgd: Understanding factors influencing machine unlearning. In: 2022 IEEE 7th European Symposium on Security and Privacy (EuroS&P). pp. 303–319. IEEE (2022)
28. Tiwary, P., Guha, A., Panda, S., AP, P.: Adapt then unlearn: Exploring parameter space semantics for unlearning in generative adversarial networks. Transactions on Machine Learning Research (2025), https://openreview.net/forum?id=jAHEBivObO
29. Villani, C., et al.: Optimal transport: old and new, vol. 338. Springer (2009)
30. Zhang, G., Wang, K., Xu, X., Wang, Z., Shi, H.: Forget-me-not: Learning to forget in text-to-image diffusion models. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 1755–1764 (2024)

A Implementation Details

A.1 Experimental Settings

In our unlearning experiments, we evaluate our approach on both unconditional and class-conditional generation tasks across different resolutions. To ensure reproducibility, all generative models, classifiers, and feature extractors used in our experiments are adopted from publicly available pretrained checkpoints.

– CIFAR-10 [14] (Unconditional Generation): We utilize Consistency Trajectory Model (CTM) [13] and Meanflow [8] models as unconditional generators.
We focus on the class unlearning task (erasing a specific single class from the dataset) and conduct separate unlearning experiments targeting Class 1, Class 6, and Class 8.
– ImageNet-256 [22] (High-Resolution Class-Conditional Setting): We employ the Meanflow [8] model. Rather than unlearning across all 1,000 classes, we restrict the conditioning labels during the generator update to a localized subset of 37 aquatic classes. Specifically, we choose “goldfish” as our target forget class, while the remaining 36 classes serve as semantically adjacent concepts. This localized constraint is intentionally designed to encourage the model to remap the forgotten concept into relevant domains, facilitating a smooth semantic shift rather than introducing unintended distributional shifts.
– Forget Anchor ($\mu_f$) Calculation: To compute the unlearning cost $c_{ul}$ and construct the forget anchor (centroid) $\mu_f$ in the feature space, we employ a pretrained feature extractor $f(\cdot)$ on a small subset of sampled images from the forget class. Specifically, we utilize 512 images for the CIFAR-10 dataset and 260 images for the ImageNet-256 dataset (about 20% of the approximately 1,300 available training images per class). This demonstrates that our proposed method requires minimal data to accurately identify and erase the target concept.
– Strict Unlearning Scenario: Furthermore, we operate under a strict unlearning scenario where the original retain data is completely inaccessible, and only the fully pretrained checkpoint is available. This single-checkpoint constraint necessitates structural modifications to the baseline methods, which are detailed in Section B.

A.2 Pretrained Models and Evaluation Networks

Pretrained Generative Models. For unconditional generation on the CIFAR-10 dataset, we adopt the pretrained CTM (FID: 1.73) and Meanflow (FID: 2.80) checkpoints.
For high-resolution conditional generation on ImageNet-256, we utilize the pretrained conditional Meanflow checkpoint (SiT-XL/2) with an initial FID of 3.43. Following the ECCV anonymity policy, exact links to the pretrained models are omitted and will be added later.

Evaluation Networks. To rigorously evaluate the efficacy of our unlearning framework, we employ the following pretrained evaluation networks:

– Classifier (for PUL calculation): For CIFAR-10, we use a pretrained DenseNet-121 model which achieves an accuracy of 94.06%. For ImageNet-256, we employ a pretrained ViT-L/16 model, achieving an accuracy of 88.55%.
– FID Calculation: We compute the Fréchet Inception Distance (FID) and unlearned-FID (u-FID) using the standard Inception-v3 network.

A.3 UOT-Unlearn Implementation

Network Architecture. Our proposed UOT-Unlearn framework consists of a generator and a discriminator. Since the primary objective is to unlearn specific concepts from a pretrained model, the generator is directly initialized with the weights of the fully pretrained CTM or Meanflow checkpoint. For the discriminator, we adopt the exact architecture proposed in the standard Unbalanced Optimal Transport (UOT) generative model [5]. Specifically, we use their small ResNet-based discriminator variant, featuring a base channel size of 64, LeakyReLU (0.2) activations, and a minibatch standard deviation layer.

Feature Extractor (for Centroid Calculation). To extract feature representations and compute the forget centroid, we utilize the penultimate layer of a pretrained ResNet-56 model (94.37% accuracy) for CIFAR-10, and a pretrained ResNet-50 model (81.19% accuracy) for ImageNet-256.
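The centroid computation described above can be sketched roughly as follows. This is an illustrative, pure-Python stand-in rather than the authors' implementation: the feature vectors are toy lists instead of ResNet penultimate-layer activations, the helper names `centroid` and `cosine_distance` are hypothetical, and the choice of cosine distance is an assumption (the actual unlearning cost is the one defined by Eq. (18) in the main text).

```python
import math


def centroid(features):
    """Mean of equal-length feature vectors; plays the role of the forget anchor mu_f."""
    n = len(features)
    dim = len(features[0])
    return [sum(f[j] for f in features) / n for j in range(dim)]


def cosine_distance(u, v):
    """1 - cosine similarity. Assumed distance; the paper's cost may differ."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


# Toy stand-ins for features f(x) of a few forget-class images (hypothetical values).
forget_features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mu_f = centroid(forget_features)

# Distance of two generated-sample features to the anchor.
d_near = cosine_distance([2.0, 2.0], mu_f)   # same direction as mu_f
d_far = cosine_distance([1.0, -1.0], mu_f)   # orthogonal to mu_f
```

In an actual pipeline the lists would be replaced by batched feature tensors from the pretrained extractor, and samples whose distance to `mu_f` falls below the margin $m$ would be the ones penalized.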
To ensure a strictly fair and unbiased evaluation, we intentionally employ these ResNet-based feature extractors during the unlearning phase, which are distinctly separated from the classifiers (DenseNet-121 and ViT-L/16) used later for calculating the Percentage of Unlearning (PUL) metric.

Training Hyperparameters. For the CIFAR-10 unlearning experiments, we use a batch size of 128. Regarding the exponential moving average (EMA) of the generator weights, we disable EMA during the CTM unlearning process, whereas we set the EMA decay rate to 0.99 for the Meanflow unlearning process. For the high-resolution ImageNet-256 experiments, we utilize a batch size of 8 and set the EMA decay rate to 0.999. All other optimization settings strictly follow the default configuration provided in the original UOT implementation (e.g., Adam optimizer with learning rates of $1.6 \times 10^{-4}$ for the generator and $1.0 \times 10^{-4}$ for the discriminator).

B Baseline Methods

To evaluate the effectiveness of our proposed framework, we compare it against several representative unlearning baselines. For fair comparison under our strict unlearning scenario (where only a single pretrained checkpoint is available and retain data is inaccessible), we establish the following baseline setups.

B.1 Gradient Ascent (GA)

For the Gradient Ascent baseline, we directly maximize the original training loss on the entire training set of the target class, denoted as $\mathcal{D}_{\text{forget}}$. Since we apply this to the Consistency Trajectory Model (CTM) and Meanflow models, the GA loss is simply the negative of their respective training objectives:

$$\mathcal{L}_{\text{GA}}(\theta) = -\mathbb{E}_{x \sim \mathcal{D}_{\text{forget}}}\left[\mathcal{L}_{\text{train}}(\theta; x)\right] \tag{20}$$

where $\mathcal{L}_{\text{train}}$ denotes either the CTM or Meanflow loss.

For the class-conditional setting (i.e., ImageNet-256), the training objective inherently depends on both the image data and the class condition. Let $y_f$ denote the target forget class label.
The GA loss is naturally extended to maximize the expected conditional training loss over the forget data:

$$\mathcal{L}_{\text{GA}}(\theta) = -\mathbb{E}_{x \sim \mathcal{D}_{\text{forget}}}\left[\mathcal{L}_{\text{train}}(\theta; x, y_f)\right] \tag{21}$$

where $\mathcal{L}_{\text{train}}(\theta; x, y_f)$ represents the conditional training objective for a given image-label pair. This formulation ensures the model parameters are updated to diverge from the original conditional distribution associated with $y_f$.

B.2 Variational Diffusion Unlearning (VDU) [21]

The Variational Diffusion Unlearning (VDU) framework formulates the unlearning objective by integrating the negative training loss with a parameter penalty term to prevent catastrophic degradation. The general VDU loss is defined as:

$$\mathcal{L}_{\text{VDU}}(\theta) = -(1 - \gamma)\,\mathcal{L}_{\text{train}}(\theta; \mathcal{D}_{\text{forget}}) + \gamma \sum_{i=1}^{d} \frac{(\theta_i - \mu_i^*)^2}{2\sigma_i^{*2}} \tag{22}$$

where $\gamma$ is the penalty weight, $d$ is the total number of model parameters, and $\mu_i^*$ and $\sigma_i^*$ represent the empirical mean and standard deviation of the $i$-th parameter, respectively. For our experiments, we set $\gamma = 0.005$ for CTM and $\gamma = 0.001$ for Meanflow.

To compute the parameter statistics ($\mu_i^*$ and $\sigma_i^*$), the original VDU method relies on multiple historical checkpoints saved during the initial pretraining phase. However, under our strict setting where only a single fully pretrained checkpoint is provided, we adopt a practical alternative strategy to estimate these statistics. Starting from the pretrained checkpoint, we fine-tune the model on the full training dataset for 4 epochs (with a learning rate of $1 \times 10^{-6}$ and a batch size of 128). We save a checkpoint at the end of every epoch. Combined with the initial pretrained checkpoint, this yields a total of 5 checkpoints (from 0 to 4 epochs), which we use to compute the required empirical mean and variance for the penalty term.
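As a rough illustration of how the checkpoint statistics feed into Eq. (22), the sketch below computes per-parameter means and variances from a handful of flattened checkpoints and then evaluates the VDU loss. It is a minimal sketch under stated assumptions: parameters are plain Python lists, the function names `param_stats` and `vdu_loss` are hypothetical, the population (divide-by-K) variance and the small `eps` stabilizer are our choices rather than details given in the text, and the training loss on the forget set is passed in as a precomputed number.

```python
def param_stats(checkpoints, eps=1e-12):
    """Per-parameter mean and variance across K flattened checkpoints.

    Uses the population variance plus eps (assumed; the text only says
    'empirical mean and variance').
    """
    k = len(checkpoints)
    d = len(checkpoints[0])
    mean = [sum(c[i] for c in checkpoints) / k for i in range(d)]
    var = [
        sum((c[i] - mean[i]) ** 2 for c in checkpoints) / k + eps
        for i in range(d)
    ]
    return mean, var


def vdu_loss(theta, train_loss_on_forget, mean, var, gamma):
    """Eq. (22): -(1 - gamma) * L_train + gamma * sum_i (theta_i - mu_i)^2 / (2 sigma_i^2)."""
    penalty = sum(
        (t - m) ** 2 / (2.0 * v) for t, m, v in zip(theta, mean, var)
    )
    return -(1.0 - gamma) * train_loss_on_forget + gamma * penalty


# Toy example with 3 checkpoints of a 2-parameter model (hypothetical values).
checkpoints = [[0.0, 1.0], [2.0, 1.5], [4.0, 2.0]]
mean, var = param_stats(checkpoints, eps=0.0)
loss = vdu_loss([2.0, 2.0], train_loss_on_forget=1.0, mean=mean, var=var, gamma=0.5)
```

With only five checkpoints separated by low-learning-rate fine-tuning, some per-parameter variances can be very small, which makes the penalty steep for those parameters; the `eps` term is one simple guard against division by near-zero values.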
B.3 Selective Amnesia (SA) [9]

Existing machine unlearning methods, such as Selective Amnesia (SA) and Saliency Unlearning (SalUn), are primarily designed for conditional generative models. To apply these methods to our unconditional generators, we first construct a common base unlearning objective ($\mathcal{L}_{\text{base}}$) that utilizes noise mapping and a pseudo-retain dataset.

Common Base Objective ($\mathcal{L}_{\text{base}}$). Since our unconditional generator lacks explicit class conditions to locate the target concept, we use a pretrained auxiliary classifier to filter the prior noise space. Let $\mathcal{Z}_{\text{forget}}$ denote the set of prior noise vectors $x_0 \sim \mathcal{N}(0, I)$ that the generator maps to images classified as the target concept. We introduce a Noise Mapping Loss to force the generator to output pure random noise $\epsilon$ for these specific latents:

$$\mathcal{L}_{\text{forget\_noise}}(\theta) = \mathbb{E}_{x_0 \sim \mathcal{Z}_{\text{forget}},\, \epsilon \sim \mathcal{N}(0, I)}\left[\lVert G_\theta(x_0) - \epsilon \rVert_2^2\right] \tag{23}$$

Simultaneously, we formulate a retain loss using a fixed pseudo-retain dataset, $\mathcal{D}_{\text{pr}}$. We construct this set offline by generating images with the original generator and filtering out the target concept using the auxiliary classifier. We apply the standard training objective to these samples:

$$\mathcal{L}_{\text{retain}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_{\text{pr}}}\left[\mathcal{L}_{\text{train}}(\theta; x)\right] \tag{24}$$

The base unlearning objective is the weighted sum of these two terms: $\mathcal{L}_{\text{base}} = \alpha \mathcal{L}_{\text{forget\_noise}} + \beta \mathcal{L}_{\text{retain}}$. We empirically tuned the hyperparameters via grid search, utilizing $\alpha \in \{0.05, 0.1\}$ and $\beta \in \{0.5, 5.0\}$.

SA Objective. To constrain parameter deviation during unlearning, SA incorporates an Elastic Weight Consolidation (EWC) penalty into the base loss. This requires the computation of the Fisher Information Matrix (FIM), denoted as $F$. We compute the FIM using the entire generated pseudo-dataset, $\mathcal{D}_{\text{pseudo}} = \mathcal{D}_{\text{pr}} \cup \mathcal{D}_{\text{pf}}$.
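The classifier-based filtering that yields $\mathcal{Z}_{\text{forget}}$ and the pseudo-datasets, together with the base objective, can be sketched roughly as below. This is a toy illustration rather than the authors' code: the generator and classifier are hypothetical callables on small lists, the function names are our own, and we assume that $\mathcal{D}_{\text{pf}}$ denotes the generated samples classified as the target concept (the text does not define it explicitly). The retain loss is passed in as a number because $\mathcal{L}_{\text{train}}$ is model-specific.

```python
import random


def split_latents(latents, generator, classifier, target_class):
    """Partition prior noise vectors by the class assigned to their generated image."""
    z_forget, pseudo_retain, pseudo_forget = [], [], []
    for z in latents:
        x = generator(z)
        if classifier(x) == target_class:
            z_forget.append(z)       # latents mapped to the target concept
            pseudo_forget.append(x)  # assumed to form D_pf
        else:
            pseudo_retain.append(x)  # forms D_pr
    return z_forget, pseudo_retain, pseudo_forget


def noise_mapping_loss(z_forget, generator, rng):
    """Monte Carlo estimate of Eq. (23) with one noise draw per latent."""
    if not z_forget:
        return 0.0
    total = 0.0
    for z in z_forget:
        x = generator(z)
        eps = [rng.gauss(0.0, 1.0) for _ in x]
        total += sum((a - b) ** 2 for a, b in zip(x, eps))
    return total / len(z_forget)


def base_loss(forget_noise, retain, alpha, beta):
    """L_base = alpha * L_forget_noise + beta * L_retain."""
    return alpha * forget_noise + beta * retain


# Hypothetical toy generator and classifier.
toy_generator = lambda z: [2.0 * v for v in z]
toy_classifier = lambda x: 1 if x[0] > 0 else 0

latents = [[1.0, 0.0], [-1.0, 0.5], [0.5, 0.5]]
z_forget, d_pr, d_pf = split_latents(latents, toy_generator, toy_classifier, target_class=1)
l_forget = noise_mapping_loss(z_forget, toy_generator, random.Random(0))
l_base = base_loss(2.0, 3.0, alpha=0.1, beta=5.0)
```

Because the split is computed once with the original generator, both the forget latents and the pseudo-retain set stay fixed during unlearning, which matches the offline construction described above.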
In practice, a diagonal approximation is adopted where each diagonal element $F_i$ corresponding to parameter $\theta_i$ is computed as $F_i = \mathbb{E}_{x \sim \mathcal{D}_{\text{pseudo}}}\left[\left(\nabla_{\theta_i} \mathcal{L}_{\text{train}}(\theta_{\text{pre}}; x)\right)^2\right]$. The final SA objective is:

$$\mathcal{L}_{\text{SA}}(\theta) = \mathcal{L}_{\text{base}} + \frac{\lambda_{\text{SA}}}{2} \sum_i F_i \left(\theta_i - \theta_{\text{pre},i}\right)^2 \tag{25}$$

where $\theta_{\text{pre}}$ represents the pretrained weights, and the penalty scale $\lambda_{\text{SA}}$ is empirically set (e.g., 5.0 or 1000.0 based on the model scale).

B.4 Saliency Unlearning (SalUn) [6]

SalUn prevents catastrophic forgetting by restricting weight updates to only the most salient parameters associated with the target concept. Rather than modifying the loss function directly with a penalty, SalUn optimizes the same base objective ($\mathcal{L}_{\text{base}}$) defined in Section B.3, but applies a binary gradient mask $M$ during the update step:

$$M = \mathbb{1}\left( \left| \nabla_\theta \mathbb{E}_{x \sim \mathcal{D}_{\text{forget}}}\left[\mathcal{L}_{\text{train}}(\theta; x)\right] \Big|_{\theta = \theta_{\text{pre}}} \right| \ge \gamma \right) \tag{26}$$

While the original SalUn paper recommends a sparsity ratio of 50% for multi-step diffusion models, we empirically found that unfreezing such a large proportion of weights severely disrupts the single-pass mapping of one-step models, leading to a collapse in generation quality. Thus, we adjust the threshold $\gamma$ to enforce a sparsity ratio of 95%, selecting only the top 5% of the parameters to be updated. During optimization, we freeze the non-salient weights and update only the salient parameters selected by the mask:

$$\theta_{t+1} = \theta_t - \eta \left( M \odot \nabla_\theta \mathcal{L}_{\text{base}} \right) \tag{27}$$

where $\odot$ is the element-wise product and $\eta$ is the learning rate.

C Visualization

C.1 CIFAR-10

We present visual results on the CIFAR-10 dataset based on the configurations that achieved the best performance in Table 2 of the main text. Fig. 6 and Fig. 8 compare the unlearning patterns of the forget class across the baselines and our UOT-Unlearn, using the CTM and MF models, respectively. Furthermore, Fig. 7 and Fig. 9 display how randomly generated images from the pretrained generators change after applying UOT-Unlearn. Overall, these visualizations demonstrate that our method not only erases the target concept but also naturally shifts its generation toward the retained classes, successfully preserving the structural layout of the original scenes.

C.2 ImageNet 256 × 256

As an extension to the results shown in Fig. 4 of the main text, we provide additional visualization results on the ImageNet 256 × 256 dataset using the Meanflow model (Fig. 10). Using identical initial noises, we compare the pretrained model and UOT-Unlearn across the target forget class (goldfish, top row) and 9 aquatic retain classes. Visually, UOT-Unlearn effectively erases the specific features of the forget class while largely preserving the structural layouts of the retain classes. These qualitative observations align with our quantitative metrics (85.08% PUL; u-FID 20.16 vs. pretrained FID 11.57).

[Figure 6 panels: Pretrained, GA, VDU, SA, SalUn, UOT-Unlearn]

Fig. 6: Qualitative comparison of unlearning methods on the Ship class (Class 8) using the Consistency Trajectory Model (CTM). Generated under a fixed seed setting, this figure illustrates how the target class is erased across different approaches. Notably, unlike the baselines, which generate corrupted images, UOT-Unlearn demonstrates a distinct tendency to transition the forgotten concept into features of other classes while preserving the overall spatial layout.

[Figure 7 panels: Pretrained, UOT-Unlearn (CTM)]

Fig. 7: Visual comparison of images generated from the pretrained CTM versus our UOT-Unlearn-applied CTM trained to forget the Ship class (Class 8). The results demonstrate that UOT-Unlearn successfully erases the forget class, seamlessly transitioning it into features of other classes, while preserving the visual quality and structural layout of the remaining retain classes.
[Figure 8 panels: Pretrained, GA, VDU, SA, SalUn, UOT-Unlearn]

Fig. 8: Qualitative comparison of unlearning methods on the Ship class (Class 8) using the Meanflow (MF) model. Generated under a fixed seed setting, this figure illustrates how the target class is erased across different approaches.

[Figure 9 panels: Pretrained, UOT-Unlearn (Meanflow)]

Fig. 9: Visual comparison of randomly generated images from the pretrained model and our UOT-Unlearn (MF) trained to forget the Ship class (Class 8), using the same initial noises.

[Figure 10 panels: (a) Pretrained, (b) UOT-Unlearn (Meanflow)]

Fig. 10: Unlearning results on the ImageNet 256 × 256 dataset using the Meanflow (MF) model. The top row of each generated batch displays the Goldfish class (forget class), while the subsequent bottom rows show 9 other aquatic animal classes (retain classes) using the exact same initial noises.
