DST-Net: A Dual-Stream Transformer with Illumination-Independent Feature Guidance and Multi-Scale Spatial Convolution for Low-Light Image Enhancement

Low-light image enhancement aims to restore the visibility of images captured by visual sensors in dim environments by addressing their inherent signal degradations, such as luminance attenuation and structural corruption. Although numerous algorithm…

Authors: Yicui Shi, Yuhan Chen, Xiangfei Huang

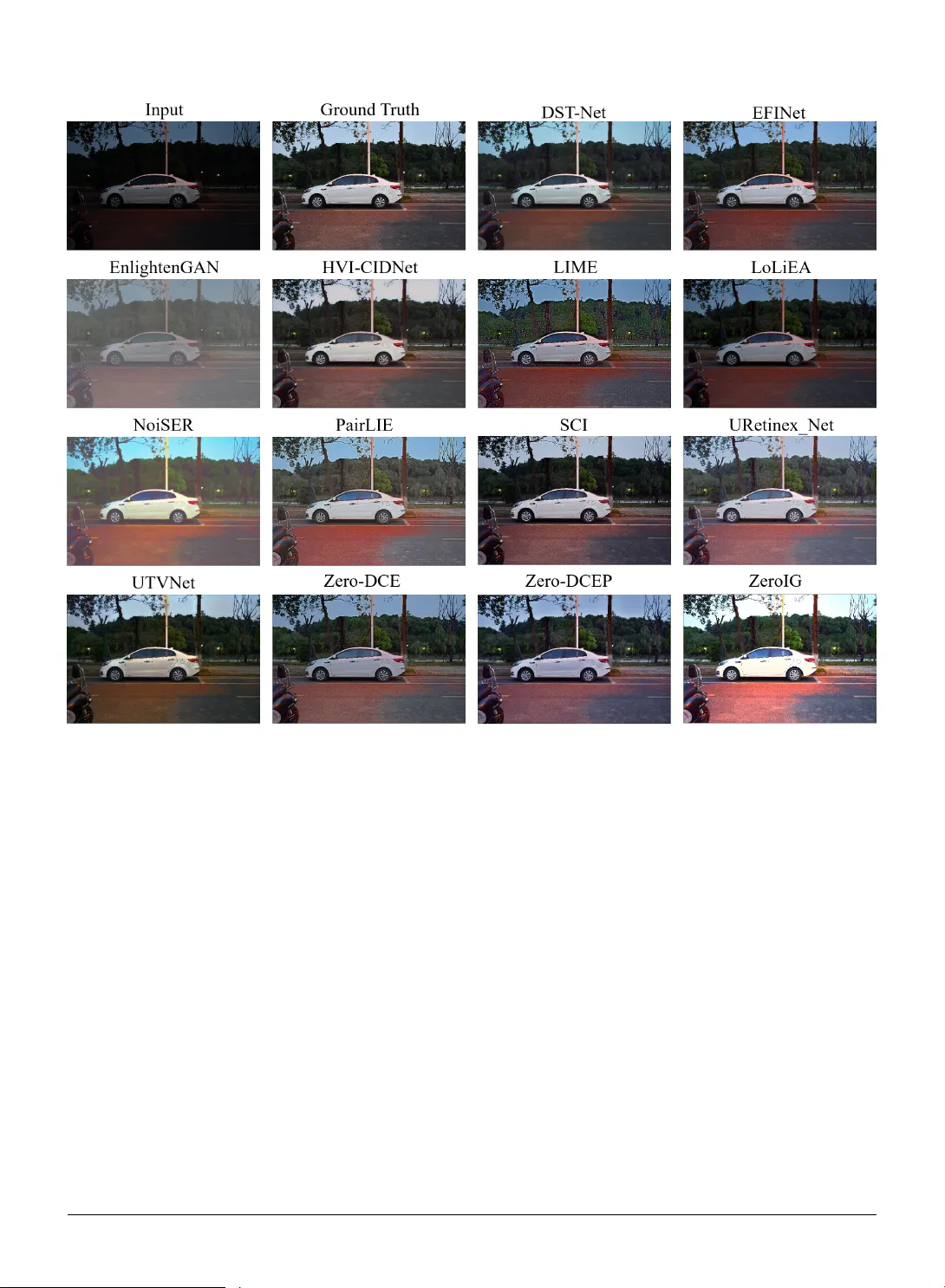

Yicui Shi^a, Yuhan Chen^a, Xiangfei Huang^a, Zhenguo Wang^b, Wenxuan Yu^a and Ying Fang^a

^a College of Mechanical and Vehicle Engineering, Chongqing University, Chongqing, 400044, China

^b Shanghai Zhenhua Heavy Industries Co., Ltd., Shanghai, 200125, China

Keywords: Low-light image enhancement; Transformer; 3D Convolution

ABSTRACT

Low-light image enhancement aims to restore the visibility of images captured by visual sensors in dim environments by addressing their inherent signal degradations, such as luminance attenuation and structural corruption. Although numerous algorithms attempt to improve image quality, existing methods often cause a severe loss of intrinsic signal priors. They struggle to achieve substantial brightness improvements while maintaining color fidelity, preserving geometric structures, and recovering high-frequency fine textures. To overcome these challenges, we propose a Dual-Stream Transformer Network (DST-Net) based on illumination-agnostic signal prior guidance and multi-scale spatial convolutions. First, to address the loss of critical signal features under low-light conditions, we design a feature extraction module. This module integrates Difference of Gaussians (DoG), LAB color space transformations, and VGG-16 for texture extraction, utilizing decoupled illumination-agnostic features as signal priors to continuously guide the enhancement process. Second, we construct a dual-stream interaction architecture. By employing a cross-modal attention mechanism, the network leverages the extracted priors to dynamically rectify the deteriorated signal representation of the enhanced image, ultimately achieving iterative enhancement through differentiable curve estimation.
Furthermore, to overcome the inability of existing methods to preserve fine structures and textures, we propose a Multi-Scale Spatial Fusion Block (MSFB) featuring pseudo-3D and 3D gradient operator convolutions. This module integrates explicit gradient operators to recover high-frequency edges while capturing inter-channel spatial correlations via multi-scale spatial convolutions. Extensive evaluations and ablation studies demonstrate that DST-Net achieves superior performance in subjective visual quality and objective metrics. Specifically, our method achieves a PSNR of 25.64 dB on the LOL dataset. Subsequent validation on the LSRW dataset further confirms its robust cross-scene generalization.

1. Introduction

Rapid advancements in visual sensor technology have driven the widespread deployment of image acquisition devices in fields like autonomous driving, video surveillance, and smartphone photography. Although the performance of modern sensor hardware continues to improve, acquiring clear visual signals remains a severe challenge in adverse lighting environments like nighttime, backlighting, or extreme low illumination [1–3]. Constrained by limited exposure times and the physical properties of photosensitive elements, images captured in dim environments typically suffer from severe signal degradations, including insufficient luminance, compressed dynamic ranges, and heavy noise artifacts [4]. Such signal degradations cause the loss of critical object information, compromising human subjective perception and hindering computer vision algorithms reliant on clear feature inputs [5, 6]. Therefore, developing efficient low-light image enhancement algorithms to effectively restore underlying signal structures and high-frequency texture details while increasing luminance holds substantial theoretical and practical value for improving the all-weather adaptability of visual systems [7].
∗ Corresponding author. yicuishi@cqu.edu.cn (Y. Shi); 20240701028@stu.cqu.edu.cn (Y. Chen); hxf0213@stu.cqu.edu.cn (X. Huang); wangzhenguo_ssz@zpmc.com (Z. Wang); wenxuanyu@cqu.edu.cn (W. Yu); yingfang@stu.cqu.edu.cn (Y. Fang); liguofa@cqu.edu.cn (Y. Fang)

Convolutional Neural Network (CNN) and Transformer-based algorithms have gradually replaced traditional methods, dominating the field of low-light image enhancement [4, 7, 8]. Leveraging strong local feature extraction capabilities, CNN-based methods effectively learn low-light to normal-light mappings or estimate physical illumination parameters [9]. Meanwhile, recent Transformer architectures employ self-attention mechanisms to capture long-range dependencies, further improving the global consistency of the enhanced results [10–12]. Despite the significant progress of data-driven methods, most existing approaches focus on pixel-level luminance enhancement, often overlooking the preservation of structural and textural details inherent in illumination-independent signals [9]. Consequently, these models struggle to achieve substantial luminance improvements while maintaining color fidelity, geometric integrity, and fine texture details. More importantly, existing algorithms often cause a severe loss of critical signal features during complex non-linear enhancement processes. The enhanced images frequently exhibit blurred edges or details obscured by noise, failing to meet the demands of high-precision downstream vision tasks [13, 14].

While deep learning-based methods have advanced significantly, mainstream iterative low-light enhancement algorithms such as Zero-DCE [15] and Zero-DCE++ [16] still struggle with signal feature preservation. These approaches typically rely on pixel-level curve estimation or weight mapping to iteratively increase image luminance.
However, such strategies focusing solely on pixel intensity adjustments often neglect intrinsic semantic information and structural features [7, 17]. Due to the lack of explicit constraints and protection for high-level features during multi-stage iterative enhancement, high-frequency texture details and edge structures in the degraded signals often suffer irreversible degradation alongside non-linear luminance stretching. In other words, despite effectively illuminating dark regions, these methods sacrifice critical image features and ultimately fail to meet the demands of high-quality visual perception [18, 19].

Incorporating feature-level enhancement strategies is crucial for preserving the authenticity of image content in restoration tasks [20, 21]. Prior-guided signal enhancement strengthens the representational capacity of networks while effectively retaining critical information during complex transformations [19, 22]. Inspired by this, we propose an enhancement scheme guided by illumination-independent signal features. Rather than directly manipulating RGB pixels, our DST-Net focuses on extracting and preserving intrinsic illumination-independent signal priors from low-light images, including textures, geometric structures, and color components. These stable feature maps serve as signal prior information to continuously guide the low-light image enhancement process. Through this mechanism, the network leverages the extracted feature maps to perform spatially adaptive adjustments across different regions of the low-light image by suppressing noise in smooth areas and reinforcing high-frequency details in texture-rich zones. This dual-stream interaction design ensures that we meticulously restore fine texture details and structural integrity while achieving global luminance enhancement, thereby delivering superior visual quality.
To overcome the aforementioned challenges, particularly the severe loss of feature information caused by existing iterative methods confined to pixel-level mappings, we propose a Dual-Stream Transformer Network (DST-Net) based on illumination-independent signal prior enhancement and multi-scale spatial fusion convolutions. Moving beyond the limitations of traditional end-to-end mappings, our DST-Net primarily consists of three core components. First, to overcome signal feature degradation under low-light conditions, we design an illumination-independent feature extraction module. This module integrates Difference of Gaussians (DoG), LAB color space transformations, and VGG-16 texture features to construct intrinsic representations decoupled from luminance, thereby providing continuous and stable signal prior guidance throughout the enhancement process. Second, we introduce an improved dual-stream Transformer interaction architecture. By employing a cross-modal attention mechanism, our network leverages the extracted illumination-independent features to dynamically rectify the deteriorated signal representation of the enhanced image, ultimately achieving high-fidelity iterative enhancement via differentiable curve estimation. Furthermore, to overcome the inability of existing Convolutional Neural Networks (CNNs) to capture inter-channel spatial correlations and prevent the resulting blurring of high-frequency fine textures, we develop a Multi-Scale Spatial Fusion Block (MSFB) featuring pseudo-3D convolutions. This module integrates explicit gradient operators such as Sobel and Laplacian to recover high-frequency edge details while exploiting deep spatial correlations along the channel dimension via multi-scale channel convolutions, thereby significantly enhancing the capability of our model to preserve geometric structures under extremely low signal-to-noise ratios.
The main contributions of this paper are summarized as follows:

• We propose a Multi-Scale Spatial Fusion Block (MSFB) featuring pseudo-3D convolutions. By integrating multi-scale voxel spatial convolutions and explicit 3D gradient operators such as Sobel and Laplacian, this module effectively exploits inter-channel spatial correlations, significantly enhancing the capability of the network to capture geometric structures and high-frequency fine textures in low signal-to-noise ratio environments.

• We leverage decoupled low-light color, structure, and texture feature maps as signal priors. By employing a cross-modal attention mechanism and iterative differentiable curve estimation, we dynamically rectify the deteriorated signal representation during the enhancement process, thereby ensuring exceptional image fidelity while substantially increasing luminance.

• Extensive ablation studies and comparative evaluations on benchmark datasets such as LOL, LSRW-N, and LSRW-H demonstrate that our proposed method achieves outstanding performance in subjective visual quality and objective metrics, exhibiting robust cross-scene generalization capabilities.

2. Related Work

2.1. Low-Light Enhancement

2.1.1. Traditional Methods

Early approaches to low-light image enhancement primarily relied on Histogram Equalization (HE) and Retinex theory. HE improves global contrast by expanding the dynamic range of images. To mitigate the loss of local details caused by global HE, Pizer et al. [23] proposed Contrast Limited Adaptive Histogram Equalization (CLAHE) to suppress noise over-amplification by restricting the height of local histograms. Furthermore, Gamma Correction [24] serves as a widely adopted non-linear transformation technique to adjust the luminance distribution of images.
Beyond statistical approaches, algorithms based on Retinex theory assume that observed images can be decomposed into illumination and reflectance components. Following the initial introduction of Retinex theory by Land [25], Jobson et al. [26] developed Single-Scale Retinex (SSR) and Multi-Scale Retinex (MSR) algorithms to estimate the illumination component via Gaussian filtering. Building on this foundation, Guo et al. [27] proposed the LIME method to improve enhancement quality by imposing structural priors on the illumination map.

2.1.2. Deep-Learning Methods

While traditional physics-based models such as LIME have achieved notable success via illumination map estimation, data-driven approaches have gradually become the mainstream paradigm owing to the powerful feature representation capabilities of deep neural networks. Early deep learning methods primarily focused on constructing algorithm unrolling networks based on Retinex theory to balance physical interpretability with the robust fitting capacity of deep models. For instance, Liu et al. [28] utilized neural architecture search to construct RUAS, an unrolling architecture with cooperative priors. Wu et al. [29] designed URetinex-Net by unfolding the decomposition process into physically constrained deep modules, while Zheng et al. [30] introduced an adaptive unrolling total variation network for noise smoothing. Furthermore, Fu et al. [31] explored learning simple yet effective enhancers from paired low-light data.

To eliminate the dependency on paired data and improve inference efficiency, unsupervised learning and iterative curve estimation methods have gained prominence. Jiang et al. [32] pioneered EnlightenGAN by utilizing unpaired generative adversarial networks for unsupervised enhancement. Subsequently, Guo et al. [15] introduced Zero-DCE, which reformulates the enhancement task as a pixel-level high-order curve estimation problem, a framework later refined by Li et al. [16] in Zero-DCE++. Seeking faster convergence and superior performance, Ma et al. [33] developed SCI, leveraging a weight-sharing mechanism for ultra-fast iteration. Pan et al. [34] introduced Chebyshev polynomial approximation to determine optimal enhancement curves. Meanwhile, Liu et al. [35] explored iterative mechanisms for combined enhancement and fusion via EFINet.

Recently, several advanced algorithms have emerged, achieving breakthroughs in both efficiency and robustness. Chen et al. introduced FMR-Net [36] and FRR-Net [37] to significantly optimize network performance through multi-scale residuals and re-parameterization techniques. To handle intractable noise and artifacts, Zhang et al. [38] proposed a noise autoregressive learning paradigm that enables joint denoising and enhancement without task-specific data. Similarly, Shi et al. [39] developed ZERO-IG for zero-shot illumination-guided joint denoising. Furthermore, Chen et al. [40] designed a lightweight real-time enhancement network, delivering superior visual quality while maintaining low computational complexity.

2.2. Multi-scale Feature Extraction Block

Extracting multi-scale contextual information is a pivotal strategy for addressing scale variations and detail restoration in image enhancement. To overcome the restricted receptive fields of single-scale convolutions, various hierarchical architectures aim to capture diverse structural information. For instance, Li et al. [41] proposed the Multi-Scale Residual Block (MSRB), utilizing parallel convolutional layers with different kernel sizes to detect multi-frequency image features while promoting efficient information flow through local residual connections. Inspired by these designs, MIRNet [42] integrates multi-scale residual structures into multi-resolution streams to enable effective cross-scale feature interaction. Similarly, FMR-Net [36] incorporates multi-scale residual attention, while Cho et al. [43] achieve coarse-to-fine feature refinement via multi-scale pyramid reconstruction.

Attention-based mechanisms are widely employed to adaptively calibrate feature responses during fusion and recalibration. For instance, Dai et al. [44] introduced Attentional Feature Fusion (AFF) to dynamically calculate fusion weights by integrating local and global contextual attention. Similarly, Hu et al. [45] and Woo et al. [46] respectively introduced SE-Net and CBAM to recalibrate feature responses along channel or spatial dimensions to enhance network sensitivity to critical information. Additionally, Liu et al. [47] developed FFA-Net to explore the potential of pixel-level weighting for addressing non-uniform degradation via a Feature Attention (FA) module.

To address spatial correlations across feature channels, we explore a Pseudo-3D architecture. This design exploits voxel-level spatial correlations similar to 3D convolutions. It avoids the redundancy inherent in full 3D operations and maintains the computational efficiency of 2D convolutions.

2.3. Dual-Stream Feature Guidance

Single-stream networks often struggle to balance global luminance adjustment and local detail restoration under complex noise and degradation. Consequently, dual-stream architectures utilizing feature enhancement have emerged as a prevalent paradigm. Wei et al. [48] pioneered RetinexNet based on Retinex theory. Zhang et al. [49] refined this framework in KinD by decoupling illumination adjustment and reflectance denoising modules.

Utilizing auxiliary feature maps as prior information guides the enhancement process alongside physical component decoupling. Ma et al. [50] proposed a Structure-Map-Guided (SMG) strategy. Xu et al. [51] designed SNR-Net to construct spatially-varying feature guidance via Signal-to-Noise Ratio (SNR) maps.

Transformer architectures have facilitated increasingly flexible and efficient cross-modal feature guidance. Cui et al. [52] proposed the Illumination-Adaptive Transformer (IAT). Cai et al. [53] integrated illumination priors into the self-attention mechanism within Retinexformer. Xu et al. [20] introduced UPT-Flow, employing unbalanced point maps to guide normalizing flow models and rectify non-uniform RGB distributions. To address these limitations, we utilize extracted illumination-independent texture, structure, and color features as Key and Value to continuously guide the learning of original image features serving as the Query.

3. Proposed Method

3.1. Overall Pipeline

Figure 1 illustrates the overall architecture of DST-Net. We develop an enhancement framework based on dual-stream interaction. For a given low-light input image $I \in \mathbb{R}^{H \times W \times 3}$, the pipeline commences with a specialized illumination-independent feature extraction module. This module integrates structural information from the Difference of Gaussians (DoG), chromaticity components from the LAB color space, and texture features extracted via VGG-16. This design decouples physical features insensitive to illumination variations from the low-light input and subsequently injects these features into the auxiliary stream to serve as signal prior guidance.
The extracted structural, chromaticity, and texture features undergo continuous information exchange and deep enhancement with the low-light image via a cross-modal dual-stream Transformer architecture. The feature stream leverages its preserved structural information to dynamically rectify noise-corrupted signal distributions within the image stream. We integrate a Multi-Scale Spatial Fusion Block (MSFB) during this encoding process to capture spatial correlations across channels more precisely. This module utilizes Pseudo-3D convolutions coupled with explicit Pseudo-3D gradient operators such as Sobel and Laplacian to facilitate the deep extraction of high-frequency details and edge information.

For the final image reconstruction stage, we adopt a strategy that combines feature fusion with residual compensation. The network concatenates refined features from the dual-stream Transformer with initial shallow features and the original low-light image along the channel dimension to preserve comprehensive contextual information. Subsequently, we convolve the fused features and add them to the residual term generated by the iterative enhancement module. This ensures that the final enhanced image integrates fine-grained details captured by the Transformer with global signal amplitude adjustments obtained through iterative curve estimation. We design this residual connection to maximize geometric and chromatic fidelity while effectively enhancing brightness.

Figure 1: DST-Net Overall Network Architecture.

3.2. Illumination-Independent Feature-Guided Dual-Stream Transformer

We propose the Illumination-independent Feature-Guided Dual-Stream Transformer to address common semantic blurring and critical signal feature loss during low-light image enhancement.
Unlike traditional methods that primarily perform end-to-end mapping within the RGB space, our framework consists of two core stages: Illumination-independent Feature Generation and Cross-Modal Dual-Stream Interaction.

3.2.1. Feature Generation

Low-light image degradation primarily manifests as luminance attenuation and noise interference, yet underlying geometric structures and material properties such as reflectance and chromaticity typically remain stable. We design a multi-dimensional feature extraction module to capture these physical attributes decoupled from illumination intensity.

For a given input low-light image $I_{in} \in \mathbb{R}^{H \times W \times 3}$, we convert the image from sRGB space to LAB color space to isolate the luminance component $L$ from the chromaticity components $A$ and $B$. We then apply the Difference of Gaussians (DoG) operator to the $L$ component to capture robust edges and geometric structures. This operator effectively suppresses high-frequency noise and enhances edge responses, as defined below:

$$\mathcal{F}_{dog} = N\left(I_L \otimes \left(G_{\sigma_1} - G_{\sigma_2}\right)\right), \qquad G_{\sigma}(x, y) = \frac{1}{2\pi\sigma^2}\, e^{-\frac{x^2 + y^2}{2\sigma^2}} \tag{1}$$

where $\otimes$ denotes the convolution operation, $G_{\sigma_1}$ and $G_{\sigma_2}$ represent Gaussian kernels with standard deviations satisfying $\sigma_1 < \sigma_2$, and $N(\cdot)$ is a normalization operator that maps feature values into a stable range.

We derive color feature maps from the chromaticity components of the LAB color space. Because the $A$ and $B$ channels provide chromaticity-opponent information decoupled from the luminance component $L$, they serve as an effective color prior. We formulate the generation of the color feature $\mathcal{F}_{color}$ as:

$$\mathcal{F}_{color} = \mathrm{Norm}\left(I_a^2 + I_b^2 + 1\mathrm{e}{-5}\right) \tag{2}$$

where $I_a$ and $I_b$ denote the $A$ and $B$ channel tensors in the LAB color space, while $\oplus$ signifies concatenation along the channel dimension.
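The structural and color priors of Eqs. (1) and (2) can be sketched in a few lines of dependency-free Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the normalization operators $N(\cdot)$ and $\mathrm{Norm}(\cdot)$ are assumed to be min-max rescaling, the kernel radius, the values $\sigma_1 = 1$ and $\sigma_2 = 2$, and the edge-replication padding are hypothetical choices, and Eq. (2) is implemented exactly as printed. The inputs are assumed to be the L, A, and B channels already converted from sRGB and stored as nested lists.

```python
import math


def gaussian_kernel(sigma, radius):
    """Sampled 2D Gaussian G_sigma(x, y), renormalized so the weights sum to 1."""
    k = [[math.exp(-(x * x + y * y) / (2.0 * sigma * sigma)) / (2.0 * math.pi * sigma * sigma)
          for x in range(-radius, radius + 1)]
         for y in range(-radius, radius + 1)]
    total = sum(map(sum, k))
    return [[v / total for v in row] for row in k]


def convolve_same(img, kernel):
    """Same-size 2D filtering with edge replication (assumed padding).

    The kernels used here are symmetric, so correlation equals convolution.
    """
    h, w, r = len(img), len(img[0]), len(kernel) // 2
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in range(-r, r + 1):
                for dj in range(-r, r + 1):
                    ii = min(max(i + di, 0), h - 1)
                    jj = min(max(j + dj, 0), w - 1)
                    acc += img[ii][jj] * kernel[di + r][dj + r]
            out[i][j] = acc
    return out


def min_max_norm(feat):
    """Assumed form of N(.) and Norm(.): rescale values to [0, 1]."""
    flat = [v for row in feat for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # constant maps are sent to all zeros
    return [[(v - lo) / span for v in row] for row in feat]


def dog_feature(l_channel, sigma1=1.0, sigma2=2.0, radius=4):
    """Eq. (1): normalized response of the L channel to G_sigma1 - G_sigma2."""
    g1 = gaussian_kernel(sigma1, radius)
    g2 = gaussian_kernel(sigma2, radius)
    dog = [[a - b for a, b in zip(r1, r2)] for r1, r2 in zip(g1, g2)]
    return min_max_norm(convolve_same(l_channel, dog))


def color_feature(a_channel, b_channel, eps=1e-5):
    """Eq. (2) as printed: Norm(I_a^2 + I_b^2 + 1e-5)."""
    raw = [[a * a + b * b + eps for a, b in zip(ra, rb)]
           for ra, rb in zip(a_channel, b_channel)]
    return min_max_norm(raw)
```

On a vertical step edge, the DoG response is flat away from the edge and reaches its extremes on either side of it, which is the behavior the structural prior relies on.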
We leverage a pre-trained VGG-16 network to extract deep texture features that supplement high-level semantic information often elusive to shallow features. We select specific intermediate activations to define the texture representation $\mathcal{F}_{tex}$:

$$\mathcal{F}_{tex} = P_{vgg}\left(\mathcal{R}(I_{in})\right) \tag{3}$$

where $P_{vgg}$ denotes the feature projection transformation of VGG-16, while $\mathcal{R}(\cdot)$ signifies necessary resizing and preprocessing operations.

Finally, we perform multi-scale fusion of the complementary structural, chromatic, and textural features along the channel dimension to generate the comprehensive illumination-independent guidance feature $\mathcal{F}_{inv}$:

$$\mathcal{F}_{inv} = \mathrm{Cat}\left(\mathcal{F}_{dog}, \mathcal{F}_{color}, \mathcal{F}_{tex}\right) \tag{4}$$

$\mathcal{F}_{inv}$ incorporates low-level geometric edges and physical colors alongside high-level semantic textures. This fusion provides comprehensive prior guidance for subsequent dual-stream Transformer interaction.

3.2.2. Transformer Feature Extraction Block

As shown in Fig. 2, let $X_I^l$ and $X_F^l$ denote the image stream and feature stream features at the $l$-th layer. We project the low-light image stream features as the Query within the cross-attention module while utilizing the feature stream features as the Key and Value to integrate pertinent texture details. We compute the matrices $Q$, $K$, and $V$ as follows:

$$Q = X_I^l W_Q, \qquad K = X_F^l W_K, \qquad V = X_F^l W_V \tag{5}$$

The variables $W_Q$, $W_K$, and $W_V$ denote learnable linear projection matrices. We calculate the cross-modal attention map via a scaled dot-product based on these matrices. This operation fuses guidance information to yield the updated intermediate feature $X_I^l$:

$$X_I^l = X_I^l + \mathrm{Softmax}\left(\frac{Q K^{T}}{\sqrt{d_k}}\right) V \tag{6}$$

The variable $d_k$ denotes the scaling factor.

Different channels in the feature maps contribute unequally to the restoration task following cross-modal interaction. We introduce a Lightweight Channel Attention (LCA) module to adaptively recalibrate channel dependencies.
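Before turning to LCA, the cross-attention update of Eqs. (5) and (6) can be illustrated with a small sketch in plain Python. It assumes the standard scaled dot-product form with a $1/\sqrt{d_k}$ scaling and treats each stream as a list of token vectors. The helper names (`matmul`, `softmax_rows`, `cross_attention`) are hypothetical, and multi-head splitting, normalization layers, and the actual tensor layout used in DST-Net are not specified here and are left out.

```python
import math


def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def softmax_rows(m):
    """Numerically stable softmax applied independently to each row."""
    out = []
    for row in m:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def cross_attention(x_img, x_feat, w_q, w_k, w_v):
    """Image-stream tokens query the prior-stream tokens (Eqs. 5 and 6).

    x_img:  N tokens of width d (image stream, source of Q)
    x_feat: M tokens of width d (feature stream, source of K and V)
    The residual add assumes the value width equals d.
    """
    q = matmul(x_img, w_q)
    k = matmul(x_feat, w_k)
    v = matmul(x_feat, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(q, k_t)]
    attn = softmax_rows(scores)          # N x M attention map
    update = matmul(attn, v)             # guidance gathered from the prior stream
    fused = [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(x_img, update)]
    return fused, attn
```

Each row of the attention map sums to one, so every image token receives a convex combination of the prior-stream values before the residual addition.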
LCA utilizes both Global Average Pooling (AvgPool) and Global Max Pooling (MaxPool) to aggregate spatial contextual information. This aggregation generates channel descriptors:

$$F_{avg} = \mathrm{AvgPool}(X_I^l), \qquad F_{max} = \mathrm{MaxPool}(X_I^l) \tag{7}$$

Subsequently, we feed these two descriptors into a shared Multi-Layer Perceptron (MLP) to capture inter-channel non-linear relationships. This MLP consists of two $1 \times 1$ convolutional layers separated by a ReLU activation function. We calculate the final channel attention weight $M_c$ as follows:

$$M_c(X_I^l) = \sigma\left(\mathrm{MLP}(F_{avg}) + \mathrm{MLP}(F_{max})\right) \tag{8}$$

where $\sigma$ denotes the Sigmoid activation function. We obtain the image stream output $X_I^{l+1}$ at the $(l+1)$-th layer by applying the learned channel weights to the normalized intermediate features. This operation recalibrates the response of each channel to highlight informative features while suppressing noise:

$$X_I^{l+1} = \mathrm{LN}(X_I^l) \odot M_c(X_I^l) \tag{9}$$

This dual interaction-recalibration mechanism enables the network to leverage external illumination-independent features for macro-guidance and execute micro-level feature screening through internal channel attention. This design maximizes noise suppression while preserving intricate texture details throughout the enhancement process.

Figure 2: Overall Network Architecture of TFEB and MSFB.

3.3. Multi-Scale Spatial Fusion Block

Traditional 2D convolutions often neglect spatial correlations across feature channels, whereas standard 3D convolutions incur prohibitive computational costs. We propose the Multi-Scale Spatial Fusion Block (MSFB) to capture voxel-level spatial-channel dependencies and reinforce edge details while maintaining computational efficiency. As shown in Fig. 2, MSFB comprises three parallel branches: an explicit gradient injection branch, a multi-scale Pseudo-3D convolution branch, and a hierarchical attention fusion mechanism.

To heighten sensitivity to high-frequency details, we embed gradient priors directly into the feature extraction process. We design Pseudo-3D Laplacian and Sobel operators to capture isotropic texture details and anisotropic edge gradients. For an input feature $X \in \mathbb{R}^{C \times H \times W}$, the gradient branch operates as follows:

$$\mathcal{F}_{Lap} = \mathrm{GELU}\left(\sum_{d \in \{x, y, z\}} X \circledast K^{d}_{Lap}\right), \qquad \mathcal{F}_{Sob} = \mathrm{GELU}\left(\sum_{d \in \{x, y, z\}} X \circledast K^{d}_{Sob}\right) \tag{10}$$

Here $\circledast$ denotes 3D convolution. $K^{d}_{Lap}$ and $K^{d}_{Sob}$ represent discrete differential kernels along the width, height, and channel directions, respectively. This decomposed computation scheme effectively reduces the total number of parameters. The approach also prevents over-smoothing during the denoising process.

We design a multi-scale Pseudo-3D (P3D) residual block to capture spatial-channel correlations across diverse receptive fields. The P3D block differs from standard convolutions by decomposing a $k \times k \times k$ 3D convolution into three orthogonal plane convolutions. These components include the channel-height convolution $C_{ch}$, the channel-width convolution $C_{cw}$, and the spatial height-width convolution $C_{hw}$. We also integrate an orthogonal decomposition convolution for the origin projection $O$. We calculate the output feature $M_i$ for the $i$-th scale branch as follows:

$$M_i = \mathrm{ReLU}\left(\mathrm{Conv2D}\left(\sum_{p \in \{ch, cw, hw, O\}} \mathrm{ReLU}\left(\mathrm{Conv}^{p}_{3D}(X)\right)\right) + X\right) \tag{11}$$

We propose the Multi-scale Attention Feature Fusion (MAFF) module to integrate features across various scales effectively.
We describe the internal processing flow as follows:

$$\begin{aligned}
\mathcal{F}_{att} &= \mathrm{Attn}_{S+C}\left(\mathrm{ReLU}\left(\Phi_{local}(\mathcal{F}_{in}) + \Phi_{global}(\mathcal{F}_{in})\right)\right) \\
\omega_i &= \mathrm{Softmax}\left(\Psi\left(\left[\sigma\left(\mathrm{Rep}_i(\mathcal{F}_{att})\right) \otimes f_i\right]_{i=1}^{5}\right)\right) \\
\mathcal{F}_{out} &= \mathrm{Conv}\left(\sum_{i=1}^{5} \omega_i \cdot f_i\right)
\end{aligned} \tag{12}$$

where $\mathrm{Attn}_{S+C}$ achieves fine-grained feature refinement through the sequential integration of spatial and channel attention. Specialized representations are generated for each branch by $\mathrm{Rep}_i$ to extract salient features. $\Psi$ maps these enhanced features to a decision space to calculate normalized weights $\omega_i$. These weights ensure the dynamic and complementary integration of input branches during the fusion process.

We adopt a hierarchical strategy to aggregate features $\mathcal{M}_k = \{M_k\}_{k \in \{1, 3, 5, 7, 9\}}$ progressively within the MSFB module. We define this process as follows:

$$\begin{aligned}
H_1 &= \mathrm{MAFF}_1\left(\mathcal{M}_k(X)\right) + \mathcal{F}_{Lap} + X \\
H_2 &= \mathrm{MAFF}_2\left(\mathcal{M}_k(H_1)\right) + \mathcal{F}_{Sob} + X + H_1
\end{aligned} \tag{13}$$

The variables $H_1$ and $H_2$ represent the fused features of the first and second stages, respectively. The module integrates all computational paths to generate the final feature $Y_{MSFB}$ in the concluding step:

$$Y_{MSFB} = \mathrm{MAFF}_3\left(H_2, H_1, \mathcal{F}_{Lap}, \mathcal{F}_{Sob}, X\right) + \mathrm{GELU}\left(\mathrm{Conv}_{res}(X)\right) \tag{14}$$

3.4. Deep Feature-Guided Iterative Curve Enhancement

While the dual-stream Transformer and MSFB modules effectively perform feature extraction and detail restoration, directly regressing pixel values via convolutional networks often induces color bias or uneven luminance. We propose a Deep Feature-Guided Iterative Curve Estimation strategy to achieve natural illumination enhancement while maintaining signal processing stability. This strategy leverages deep semantic features extracted by the dual-stream Transformer to generate high-order curve parameters, enabling precise and adaptive adjustments for complex lighting scenarios.

Parameter map estimation serves as the core of the curve enhancement process.
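As a concrete preview of the iterative curve update that this section formalizes in Eq. (16), the sketch below applies the quadratic curve $LE_n = LE_{n-1} + A_n \left(LE_{n-1} - LE_{n-1}^2\right)$ to a flat list of pixel intensities in plain Python. The function name `curve_enhance` is hypothetical, and restricting the coefficients $A_n$ to $[-1, 1]$ is an assumption borrowed from Zero-DCE [15] rather than something stated for DST-Net. Under that assumption each step keeps intensities inside $[0, 1]$ and preserves their ordering.

```python
def curve_enhance(pixels, param_maps):
    """Apply the quadratic enhancement curve once per parameter map.

    pixels:     intensities in [0, 1], flattened to a list (LE_0 = I_in)
    param_maps: one list of per-pixel coefficients A_n per iteration;
                values are assumed to lie in [-1, 1]
    """
    le = list(pixels)
    for a_n in param_maps:
        # LE_n = LE_{n-1} + A_n * (LE_{n-1} - LE_{n-1}^2)
        le = [v + a * (v - v * v) for v, a in zip(le, a_n)]
    return le
```

With every coefficient set to 1 the update reduces to $1 - (1 - v)^2$ per step, so dark pixels are lifted strongly while 0 and 1 remain fixed points; four such steps correspond to the setting $K = 4$ used in the paper.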
At the decoder output, we fuse the image stream feature $Y^{3}_{up}$ and feature stream feature $X^{3}_{up}$ to generate the enhanced feature map $F_{boost}$. To further incorporate multi-scale context, we feed $F_{boost}$ into an additional MSFB module for feature refinement:

$$F_{boost} = \mathcal{M}_{boost}\left(X^{3}_{up} + Y^{3}_{up}\right) \tag{15}$$

Subsequently, we partition $F_{boost}$ along the channel dimension into $K$ groups of parameter maps $A = \{A_1, A_2, \ldots, A_K\}$. The variable $K$ denotes the number of iterations. We set $K = 4$ in this study. $A_n$ represents the pixel-level adjustment coefficient for the $n$-th iteration.

We introduce a specific pixel-level high-order curve to adjust the dynamic range of the image progressively. This curve is differentiable and monotonic within the interval $(0, 1)$. This mathematical property effectively avoids overexposure and artifacts. Given the input image $I_{in}$ and the parameter map $A_n$, we define the $n$-th level enhancement result $LE_n(x)$ as follows:

$$LE_n(x) = LE_{n-1}(x) + A_n(x) \times \left(LE_{n-1}(x) - LE_{n-1}(x)^2\right) \tag{16}$$

where $x$ denotes pixel coordinates and $LE_0(x) = I_{in}(x)$ defines the initial state. The quadratic term $LE_{n-1}(x)^2$ imposes a nonlinear constraint that enables the enhancement process to adaptively stretch dark regions and suppress highlights. After $K$ iterations, we obtain the global illumination-enhanced image $I_{curve} = LE_K(x)$. This iterative strategy simulates progressive light-filling to ensure a natural transition of overall brightness.

While curve estimation effectively recovers global illumination, it may neglect certain high-frequency textures. To address this limitation, we treat the fine feature $F_{fine}$ from the Transformer as a texture residual term and superimpose it on the enhanced result $I_{curve}$:

$$F_{fine} = \mathcal{M}_{end}\left(\mathrm{Cat}\left(X_{out}, Y_{out}, I_{in}, I_{feat}\right)\right) \tag{17}$$

$$I_{final} = \sigma\left(\mathrm{Conv}_{out}\left(F_{fine} + I_{curve}\right)\right) \tag{18}$$

3.5. Loss Functions

We design a multi-constraint objective function to restore brightness while accurately preserving geometric structures and color fidelity. This objective function includes pixel reconstruction, perceptual structural similarity, noise-resistant spatial consistency, and color supervision.

We adopt the $L_1$ loss as the fundamental constraint to ensure high consistency between the enhanced image $I_{est}$ and the reference normal-light image $I_{gt}$ regarding color and luminance. The $L_1$ loss exhibits lower sensitivity to outliers compared with the $L_2$ loss. This property helps prevent gradient explosion during the training process. The use of $L_1$ loss also facilitates the generation of sharper edges:

$$\mathcal{L}_1 = \frac{1}{N} \sum_{p \in \Omega} \left| I_{est}(p) - I_{gt}(p) \right| \tag{19}$$

where $I_{gt}$ represents the reference image. The variable $N$ denotes the total number of pixels.

We utilize the Structural Similarity (SSIM) loss to constrain the luminance, contrast, and structural components of the image. This constraint addresses the inherent sensitivity of the human visual system to structural information. Our approach prevents the degradation of high-frequency textures during the illumination enhancement process. We define the SSIM loss as follows:

$$\mathcal{L}_{ssim} = 1 - \frac{1}{M} \sum_{j=1}^{M} \mathrm{SSIM}\left(I^{(j)}_{est}, I^{(j)}_{gt}\right) \tag{20}$$

where $M$ denotes the number of sliding windows.

We incorporate two physical prior constraints during training to compensate for the limitations of single supervised learning. These constraints also mitigate noise amplification and under-exposure issues common in low signal-to-noise ratio environments. We introduce an exposure control loss to regulate image exposure levels explicitly. This loss measures the distance between the average intensity value $\mu_k$ of the $k$-th of $K$ local regions in the enhanced image and the target exposure level $E$.
We empirically set the value of $E$ to 0.6:

$$
\mathcal{L}_{exp} = \frac{1}{K} \sum_{k=1}^{K} \left| \mu_k(I_{est}) - E \right|
\tag{21}
$$

where $K$ here denotes the number of local regions.

However, stretching contrast during low-light enhancement frequently amplifies latent noise. To suppress such high-frequency artifacts, we introduce the Total Variation (TV) loss as a smoothing regularization term. $\mathcal{L}_{tv}$ minimizes image gradient magnitudes to promote the generation of piecewise-smooth results, defined as follows:

$$
\mathcal{L}_{tv} = \frac{1}{CHW} \sum_{c,h,w} \Big[ \big(\nabla_x I_{est}^{c,h,w}\big)^2 + \big(\nabla_y I_{est}^{c,h,w}\big)^2 \Big]
\tag{22}
$$

The variables $\nabla_x$ and $\nabla_y$ represent the horizontal and vertical gradient differences, respectively. This loss term maintains the sharpness of primary edges during noise removal. Such a mechanism establishes a closed loop of extraction and constraint with the explicit gradient injection design in our MSFB module.

Drastic illumination enhancement often leads to severe color shifts and oversaturation in low-light image restoration tasks. We introduce a color fidelity loss $\mathcal{L}_{hsv}$ based on the HSV color space to supervise color information. This color space comprises Hue, Saturation, and Value components. We apply a minimum circular distance to Hue ($H$) to avoid the wrap-around discontinuity between red and purple. We also employ an $L_1$ constraint for Saturation ($S$):

$$
\mathcal{L}_{hsv} = \frac{1}{N} \sum_{p \in \Omega} \Big[ \lambda_{hue} \cdot \min\big( \left| H_{ref}^{p} - H_{est}^{p} \right|,\ 2\pi - \left| H_{ref}^{p} - H_{est}^{p} \right| \big) + \lambda_{sat} \cdot \left| S_{ref}^{p} - S_{est}^{p} \right| \Big]
\tag{23}
$$

where $\lambda_{hue}$ and $\lambda_{sat}$ are the trade-off weights for the hue and saturation loss terms, respectively, both of which are set to 1 in our experiments. $H_{ref}^{p}$ and $H_{est}^{p}$ denote the hue values of the reference (ground truth) and the estimated (enhanced) images at pixel $p$, while $S_{ref}^{p}$ and $S_{est}^{p}$ represent their corresponding saturation values.

We define the final loss function of DST-Net as a weighted combination of the five components discussed previously:

$$
\mathcal{L}_{total} = \mathcal{L}_1 + w_1 \mathcal{L}_{ssim} + w_2 \mathcal{L}_{exp} + w_3 \mathcal{L}_{tv} + \mathcal{L}_{hsv}
\tag{24}
$$

4. Experiments

We evaluate DST-Net using the LOL [48], LSRW-HUAWEI, and LSRW-NIKON [54] datasets. The LOL dataset is the first benchmark to provide real paired images for low-light enhancement and contains 500 pairs of synthetic and captured low-light/normal-light samples. We further utilize the Large-Scale Real-World (LSRW) dataset to assess model adaptability across broader scenarios and diverse acquisition hardware. This benchmark comprises two subsets of paired images captured with HUAWEI P40 Pro and NIKON D7500 devices, respectively.

Figure 3: Qualitative comparison of DST-Net with other state-of-the-art algorithms on the LOL dataset.

We implement the DST-Net architecture using the PyTorch 2.1.1 framework. We conduct all training and inference sessions on a computational platform equipped with an NVIDIA vGPU with 32 GB of memory. For network optimization, we utilize the Adam optimizer with an initial learning rate of 0.005 and a batch size of 9. We employ a step learning rate decay strategy to prevent training oscillations and ensure convergence, reducing the learning rate by a factor of 0.5 every 50 epochs. We partition all datasets into training and testing sets according to a 9:1 ratio. To maintain input consistency and accommodate multi-scale convolutions, we crop all training image pairs to 192 × 192 pixel patches using a center-cropping strategy. We subsequently apply the standard ToTensor transformation to normalize pixel values to the $[0, 1]$ range and convert the data into the PyTorch Tensor format for network processing.

4.1. Comparative Results

4.1.1. Qualitative Results

We perform a comprehensive visual comparison between DST-Net and prevailing state-of-the-art (SOTA) methods to evaluate the perceptual quality of different algorithms in complex real-world scenarios.
We select three representative image pairs from the LOL, LSRW-NIKON, and LSRW-HUAWEI benchmarks for demonstration, as illustrated in Figs. 3, 4, 5, and 6. From a holistic visual perspective, DST-Net exhibits superior enhancement performance across diverse scenarios characterized by extreme low illumination, color shifts, and intricate textures. The resulting images balance brightness restoration, color naturalness, and detail clarity.

Figure 4: Qualitative comparison of DST-Net with other state-of-the-art algorithms on the LSRW-H dataset.

Performance on the LOL Dataset: Figure 3 illustrates the performance of various methods on the LOL dataset. Most existing algorithms struggle with extreme luminance degradation. Enhancement results from EFINet, LIME, SCI, UTVNet, and Zero-DCE generally exhibit underexposure, where images remain under-illuminated and shadow details are indistinguishable. While EnlightenGAN and NoiSER increase brightness, they induce severe feature loss, with NoiSER further suffering from significant chromatic imbalance. Images generated by LoLiIEA, URetinex-Net, ZeroIG, and Zero-DCEP deviate substantially from the Ground Truth (GT) due to pronounced color shifts. Although PairLIE achieves better brightness, it still lacks accurate texture restoration. In contrast, DST-Net successfully restores normal brightness while accurately recovering fine bicycle features and avoiding any obvious color bias.

Performance on the LSRW-HUAWEI Dataset: We evaluate the generalization capability of all models by performing inference on the LSRW-HUAWEI dataset using weights pre-trained on the LOL dataset. Figure 4 illustrates these cross-dataset results, which pose a severe challenge to model robustness. Both NoiSER and LIME suffer from catastrophic chromatic collapse and distortion.
Among the relatively competitive methods, UTVNet remains constrained by insufficient luminance enhancement. Although HVI-CIDNet and PairLIE maintain color balance, they exhibit evident blurring and structural degradation when processing high-frequency details such as leaf textures. In contrast, DST-Net accurately restores colors and preserves fine textures by leveraging illumination-independent feature guidance. These results demonstrate the robust generalization performance of our method, confirming its ability to produce superior visual outcomes in diverse real-world scenarios.

Figure 5: Qualitative comparison of DST-Net with other state-of-the-art algorithms on the LSRW-H dataset with zoomed-in patches.

Performance on the LSRW-H Dataset (Magnified Details): Figure 5 presents a magnified visual comparison of local details on the LSRW-H dataset to further evaluate enhancement fidelity. The results generated by NoiSER, LoLiIEA, LIME, EnlightenGAN, SCI, URetinex-Net, Zero-DCEP, and ZeroIG exhibit pronounced chromatic aberrations, manifesting as a severe purple spectral shift across the visual signal. Furthermore, methods including EFINet, Zero-DCE, Zero-DCEP, and SCI suffer from suboptimal signal amplitude recovery, leading to underexposed images with insufficient overall luminance. In contrast, DST-Net, HVI-CIDNet, and PairLIE demonstrate superior low-light enhancement capabilities, effectively suppressing noise to produce spatially smooth visual representations. Our method recovers sharper object contours and a more accurate color distribution than the others.

Performance on the LSRW-NIKON Dataset: Figure 6 presents the visual comparisons on the LSRW-NIKON dataset captured by a DSLR camera. The enhancement results of EnlightenGAN, LIME, NoiSER, ZeroIG, and PairLIE remain unsatisfactory due to persistent artifacts or low contrast.
LoLiIEA and UTVNet generate images with insufficient overall luminance, while UTVNet also fails to restore accurate colors for artificial light sources. URetinex-Net suffers from severe overexposure across the entire frame due to excessive illumination correction. Zero-DCE and Zero-DCEP exhibit a distinct cool tone and a noticeable blue shift. Among all evaluated methods, EFINet, HVI-CIDNet, and DST-Net most effectively restore original scene textures. Closer inspection reveals that HVI-CIDNet introduces chromatic deviations in sky regions. Collectively, DST-Net achieves the best performance in exposure control, white balance restoration, and detail reconstruction. Our method provides a visual perception most consistent with real-world scenes.

Figure 6: Qualitative comparison of DST-Net with other state-of-the-art algorithms on the LSRW-N dataset.

4.1.2. Quantitative Results

To objectively and quantitatively evaluate enhancement performance, we utilize a multi-dimensional testing framework comprising full-reference and no-reference metrics. The full-reference metrics include Peak Signal-to-Noise Ratio (PSNR), Structural Similarity (SSIM), and Learned Perceptual Image Patch Similarity (LPIPS). The no-reference metrics consist of Lightness Order Error (LOE), Discrete Entropy (DE), and Enhancement Measure (EME). PSNR indicates pixel-level reconstruction quality and luminance fidelity, while SSIM evaluates the preservation of geometric structures and textures. The remaining metrics assess perceptual quality, illumination naturalness, and information entropy.

Performance on the LOL Dataset: Table 1 presents the quantitative evaluation results of the compared algorithms on the extreme low-light LOL dataset. DST-Net achieves the highest PSNR value among all evaluated methods.
This result demonstrates the advantages of our approach in luminance restoration, global chromatic fidelity, and noise suppression under extreme low illumination. DST-Net additionally secures the second-highest SSIM score (0.9073, behind HVI-CIDNet at 0.9185). This performance supports the effectiveness of our proposed dual-stream Transformer architecture. By incorporating illumination-independent features from the DoG and LAB spaces as prior guidance, the network prevents critical feature loss while significantly enhancing image luminance to preserve the physical structure of the original scene.

Table 1: Quantitative comparison of DST-Net with other state-of-the-art algorithms on the LOL dataset.

| Method | SSIM ↑ | PSNR ↑ | LPIPS ↓ | LOE ↓ | DE ↑ | EME ↑ |
|---|---|---|---|---|---|---|
| HVI-CIDNet [21] | 0.9185 | 25.3487 | 0.1106 | 34.0741 | 2.3893 | 6.1795 |
| Zero-DCEP [16] | 0.6750 | 22.0951 | 0.2226 | 12.6747 | 2.3185 | 30.6413 |
| Zero-DCE [15] | 0.6692 | 20.8229 | 0.2054 | 16.8188 | 2.1803 | 30.5752 |
| SCI [33] | 0.6386 | 18.4658 | 0.1994 | 1.2873 | 2.1410 | 32.0614 |
| RUAS [28] | 0.4853 | 14.2947 | 0.2396 | 0.1121 | 1.4897 | 34.3492 |
| EnlightenGAN [32] | 0.8141 | 21.0852 | 0.1788 | 29.7257 | 1.6721 | 3.7087 |
| FmrNet [36] | 0.8584 | 18.0725 | 0.1438 | 39.0410 | 2.5819 | 5.1740 |
| LIME [27] | 0.5651 | 19.8706 | 0.3164 | 88.9189 | 2.2180 | 32.9591 |
| FRRNET [37] | 0.7259 | 17.9800 | 0.2192 | 6.6306 | 2.3512 | 7.4730 |
| UTVNet [30] | 0.8773 | 23.3542 | 0.1195 | 17.5475 | 2.0826 | 8.4664 |
| ChebyLighter [34] | 0.8583 | 19.8584 | 0.0871 | 16.5365 | 2.4267 | 6.1861 |
| EFINet [35] | 0.7775 | 18.6969 | 0.1657 | 22.1341 | 1.8055 | 8.9206 |
| PairLIE [55] | 0.8571 | 24.6975 | 0.1159 | 38.6787 | 2.3218 | 6.6339 |
| LoLiIEA [56] | 0.6306 | 23.9123 | 0.2365 | 32.5626 | 2.2875 | 10.3545 |
| NoiSER [38] | 0.6978 | 13.7586 | 0.3635 | 61.8879 | 2.6637 | 4.2352 |
| URetinex-Net [29] | 0.8664 | 19.0972 | 0.0814 | 22.9149 | 2.4423 | 6.0096 |
| ZeroIG [39] | 0.5466 | 19.8245 | 0.2825 | 10.0040 | 2.8366 | 31.9599 |
| DST-Net (Ours) | 0.9073 | 25.6400 | 0.1300 | 39.5175 | 2.3851 | 5.4464 |

Performance on the LSRW-HUAWEI Dataset: To further investigate model generalization capabilities, we conduct cross-dataset testing on the LSRW-HUAWEI dataset using weights trained on the LOL dataset without any fine-tuning. Table 2 demonstrates the generalization performance of DST-Net. Our model achieves the highest scores in the core full-reference PSNR and SSIM metrics (20.8486 dB and 0.7070). On the LPIPS, LOE, and DE perceptual and naturalness indicators, DST-Net remains competitive without ranking first (for example, UTVNet attains the lowest LPIPS, 0.2412 versus 0.3093), and it scores low on the EME metric. This performance indicates that DST-Net controls structural distortion and maintains high visual fidelity when encountering unknown complex degradation scenarios.

Table 2: Quantitative comparison of DST-Net with other state-of-the-art algorithms on the LSRW-H dataset.

| Method | SSIM ↑ | PSNR ↑ | LPIPS ↓ | LOE ↓ | DE ↑ | EME ↑ |
|---|---|---|---|---|---|---|
| HVI-CIDNet [21] | 0.6784 | 20.0579 | 0.3673 | 23.6796 | 2.0622 | 3.0621 |
| Zero-DCEP [16] | 0.5586 | 15.7394 | 0.3892 | 19.1252 | 1.7650 | 17.0958 |
| Zero-DCE [15] | 0.5540 | 15.5735 | 0.3526 | 16.3137 | 1.6102 | 17.0669 |
| SCI [33] | 0.4906 | 14.1192 | 0.3886 | 3.4174 | 1.3497 | 17.5129 |
| RUAS [28] | 0.3798 | 11.4869 | 0.4568 | 0.0123 | 0.9217 | 17.1161 |
| EnlightenGAN [32] | 0.6309 | 18.2836 | 0.3330 | 51.4119 | 1.5657 | 1.9064 |
| FmrNet [36] | 0.6887 | 20.3573 | 0.3396 | 13.6543 | 2.2736 | 2.5441 |
| LIME [27] | 0.4002 | 14.1842 | 0.5017 | 109.0293 | 1.8135 | 20.5171 |
| FRRNET [37] | 0.6568 | 19.9004 | 0.3929 | 23.9182 | 1.9824 | 2.6512 |
| UTVNet [30] | 0.6585 | 18.2777 | 0.2412 | 19.2575 | 2.2806 | 4.1818 |
| ChebyLighter [34] | 0.6627 | 19.6746 | 0.2963 | 17.0408 | 2.1312 | 3.1945 |
| EFINet [35] | 0.5590 | 13.9895 | 0.3689 | 16.4348 | 1.6485 | 4.6768 |
| PairLIE [55] | 0.6601 | 19.0896 | 0.3310 | 37.4023 | 1.8716 | 2.9873 |
| LoLiIEA [56] | 0.6028 | 16.8211 | 0.3766 | 19.2828 | 1.8618 | 6.6287 |
| NoiSER [38] | 0.6313 | 17.4389 | 0.5122 | 33.3548 | 2.0890 | 1.6669 |
| URetinex-Net [29] | 0.6731 | 20.5522 | 0.2789 | 19.0211 | 2.1614 | 3.6529 |
| ZeroIG [39] | 0.5159 | 17.7150 | 0.4046 | 6.9008 | 2.0880 | 17.7718 |
| DST-Net (Ours) | 0.7070 | 20.8486 | 0.3093 | 19.5574 | 2.2160 | 2.6002 |

Table 3: Quantitative comparison of DST-Net with other state-of-the-art algorithms on the LSRW-N dataset.

| Method | SSIM ↑ | PSNR ↑ | LPIPS ↓ | LOE ↓ | DE ↑ | EME ↑ |
|---|---|---|---|---|---|---|
| HVI-CIDNet [21] | 0.5032 | 17.6521 | 0.3801 | 34.5776 | 1.5881 | 5.1321 |
| Zero-DCEP [16] | 0.4228 | 16.7121 | 0.3709 | 34.4376 | 1.4832 | 15.7426 |
| Zero-DCE [15] | 0.4130 | 16.0385 | 0.3613 | 37.2273 | 1.2396 | 15.7546 |
| SCI [33] | 0.3723 | 16.1506 | 0.3783 | 17.0591 | 1.3567 | 16.7963 |
| RUAS [28] | 0.3449 | 13.5064 | 0.4063 | 0.1973 | 0.9308 | 16.7991 |
| EnlightenGAN [32] | 0.4321 | 15.6333 | 0.3838 | 75.0077 | 0.9099 | 2.5021 |
| FmrNet [36] | 0.5084 | 18.1907 | 0.3362 | 27.5902 | 1.7119 | 3.3538 |
| LIME [27] | 0.2865 | 14.7569 | 0.4787 | 102.7659 | 1.4909 | 18.7863 |
| FRRNET [37] | 0.5010 | 17.0669 | 0.4191 | 48.7073 | 1.3202 | 2.6650 |
| UTVNet [30] | 0.4678 | 16.1310 | 0.3319 | 23.4239 | 1.6151 | 5.7153 |
| ChebyLighter [34] | 0.4216 | 15.3371 | 0.3742 | 54.1259 | 1.6989 | 4.7313 |
| EFINet [35] | 0.4376 | 15.5073 | 0.3478 | 33.3085 | 1.3715 | 5.8460 |
| PairLIE [55] | 0.4543 | 17.1800 | 0.3676 | 53.1862 | 1.4388 | 3.9103 |
| LoLiIEA [56] | 0.4180 | 16.0149 | 0.4088 | 33.6016 | 1.4865 | 12.0068 |
| NoiSER [38] | 0.4509 | 15.9170 | 0.5532 | 47.8126 | 1.4590 | 2.1554 |
| URetinex-Net [29] | 0.4689 | 17.7877 | 0.3341 | 26.9199 | 1.6173 | 4.3061 |
| ZeroIG [39] | 0.3027 | 14.5517 | 0.4630 | 23.8077 | 1.6611 | 16.5508 |
| DST-Net (Ours) | 0.5323 | 17.9031 | 0.3519 | 35.1890 | 1.6687 | 3.5275 |

Table 4: Ablation of the DST-Net loss function components on the LOL dataset.

| Smooth L1 / MS-SSIM / TV / EXP / HSV | PSNR ↑ | SSIM ↑ |
|---|---|---|
| × | 19.07 | 0.8154 |
| × × | 16.67 | 0.7945 |
| × × | 22.14 | 0.8350 |
| × × | 15.55 | 0.7264 |
| × × | 19.33 | 0.8133 |
| | 25.47 | 0.8522 |

Performance on the LSRW-NIKON Dataset: Table 3 reports the quantitative results of the evaluated algorithms on the LSRW-NIKON dataset. Our method maintains top-tier performance on this high-resolution and texture-rich benchmark. DST-Net achieves the highest SSIM score (0.5323) and the second-highest PSNR value (17.9031 dB, behind FmrNet at 18.1907 dB). This SSIM result reflects the strength of our network in fine texture reconstruction and edge preservation.
We attribute this success primarily to our proposed Multi-Scale Spatial Fusion Block (MSFB). The integrated pseudo-3D convolutions and explicit Laplacian and Sobel gradient operators exploit spatial correlations across channels. This architectural design maximizes the restoration of high-frequency boundary information under complex low-light conditions.

4.2. Ablation Study

Effectiveness of the Composite Loss Function: We conduct comprehensive ablation experiments on the LOL dataset to validate the objective function design and hyperparameter selection of DST-Net. Table 4 details the quantitative evaluation results for different loss function configurations. The model employing the composite loss formulation, which includes the Smooth L1, MS-SSIM, TV, EXP, and HSV components, achieves the best overall performance (25.47 dB PSNR, 0.8522 SSIM). SSIM stays between 0.7264 and 0.8350 across the ablated configurations, which suggests that the architecture itself contributes to preserving geometric structures and fine textures. The composite loss formulation further drives the model toward a better solution on top of this baseline structural stability.

Impact of Hyperparameter Weights: To investigate how loss weight perturbations affect final imaging quality and to identify suitable hyperparameters, we perform an ablation study on the MS-SSIM, EXP, and TV loss weights. Table 5 details these quantitative results.

Table 5: Ablation of the DST-Net loss function weight parameters on the LOL dataset.

| $w_1$ | PSNR ↑ | SSIM ↑ | $w_2$ | PSNR ↑ | SSIM ↑ | $w_3$ | PSNR ↑ | SSIM ↑ |
|---|---|---|---|---|---|---|---|---|
| 0.5 | 23.66 | 0.9039 | 0.1 | 18.48 | 0.8059 | 0.1 | 22.84 | 0.9069 |
| 1 | 24.75 | 0.9166 | 0.5 | 14.95 | 0.7523 | 0.5 | 20.54 | 0.8899 |
| 2 | 25.83 | 0.8886 | 1 | 23.69 | 0.8611 | 1 | 23.58 | 0.8862 |
| 10 | 21.77 | 0.9114 | 1.5 | 22.52 | 0.8587 | 2 | 23.76 | 0.8605 |
| 15 | 14.51 | 0.8356 | 2 | 22.56 | 0.8521 | 5 | 20.05 | 0.8123 |
| | | | 5 | 20.49 | 0.8230 | 15 | 18.46 | 0.7649 |

Table 6: Ablation of the DST-Net feature maps on the LOL dataset.

| Color / Structure / Feature | PSNR ↑ | SSIM ↑ |
|---|---|---|
| × | 25.36 | 0.8836 |
| × | 23.68 | 0.8970 |
| × | 22.98 | 0.8710 |
| | 25.64 | 0.9073 |

Impact of MS-SSIM Loss Weight ($w_1$): We test $w_1$ values of 0.5, 1, 2, 10, and 15. The SSIM metric peaks at 0.9166 when $w_1$ equals 1, while the PSNR metric reaches a maximum of 25.83 dB when $w_1$ equals 2. We select $w_1 = 1$ as the default configuration to maximize the preservation of inherent structural information. At this weight, the model achieves its best structural similarity at a cost of about 1.1 dB in PSNR (24.75 dB versus 25.83 dB), a trade-off we accept in favor of structural fidelity.

Impact of EXP Loss Weight ($w_2$): We evaluate the exposure control loss weight $w_2$ using candidate values of 0.1, 0.5, 1, 1.5, 2, and 5. Both PSNR and SSIM reach their maximum values (23.69 dB and 0.8611) simultaneously when $w_2$ equals 1. This outcome indicates a positive synergistic effect between appropriate exposure constraints and the structural loss.

Impact of TV Loss Weight ($w_3$): We evaluate the smoothing loss weight $w_3$ across candidate values of 0.1, 0.5, 1, 2, 5, and 15. The SSIM metric achieves its maximum value of 0.9069 when $w_3$ equals 0.1 and consistently declines as the weight increases. Increasing $w_3$ generates progressively smoother images, and this excessive smoothing alters the underlying structural information, which explains the continuous structural degradation. The PSNR metric fluctuates and peaks at 23.76 dB when $w_3$ equals 2 before dropping significantly at larger values.
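How the weights ablated above enter the total objective (Eq. 24) can be sketched in NumPy. This is an illustrative sketch rather than the authors' training code: the function names, the 16 × 16 exposure regions, and the H × W × C array layout are assumptions made here, and the SSIM and HSV terms are passed in as precomputed scalars instead of being implemented.

```python
import numpy as np


def l1_loss(est, gt):
    """Mean absolute error over all pixels and channels (Eq. 19)."""
    return float(np.mean(np.abs(est - gt)))


def exposure_loss(est, target=0.6, patch=16):
    """Mean |mu_k - E| over non-overlapping patch x patch regions (Eq. 21).

    The target E = 0.6 follows the paper; the region size is an assumption.
    """
    gray = est.mean(axis=-1)                      # average over channels
    h = gray.shape[0] - gray.shape[0] % patch     # crop to a multiple of patch
    w = gray.shape[1] - gray.shape[1] % patch
    blocks = gray[:h, :w].reshape(h // patch, patch, w // patch, patch)
    mu = blocks.mean(axis=(1, 3))                 # one mean per region
    return float(np.mean(np.abs(mu - target)))


def tv_loss(est):
    """Squared horizontal and vertical differences, divided by C*H*W (Eq. 22)."""
    dx = np.diff(est, axis=1)                     # horizontal differences
    dy = np.diff(est, axis=0)                     # vertical differences
    return float((np.sum(dx ** 2) + np.sum(dy ** 2)) / est.size)


def total_loss(est, gt, ssim_term, hsv_term, *, w1, w2, w3):
    """L1 + w1*L_ssim + w2*L_exp + w3*L_tv + L_hsv (Eq. 24)."""
    return (l1_loss(est, gt) + w1 * ssim_term + w2 * exposure_loss(est)
            + w3 * tv_loss(est) + hsv_term)


# Hypothetical data: a flat reference at the target exposure plus noise.
rng = np.random.default_rng(0)
gt = np.full((32, 32, 3), 0.6)
noisy = np.clip(gt + rng.normal(0.0, 0.05, gt.shape), 0.0, 1.0)

for w3 in (0.1, 1.0, 5.0):
    print(w3, total_loss(noisy, gt, 0.0, 0.0, w1=1.0, w2=1.0, w3=w3))
```

Because the TV term grows only with pixel-to-pixel variation, raising $w_3$ penalizes noisy outputs more heavily, which is consistent with the progressively smoother results reported for larger $w_3$.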
Effectiveness of Illumination-Independent Feature Maps: We conduct an ablation study on the feature maps to verify the effectiveness and individual contributions of the illumination-independent features within the dual-stream architecture. To maintain structural integrity and parameter consistency, we isolate specific features by filling the corresponding tensor with a small constant of $10^{-6}$ rather than deleting the channel. Table 6 details the quantitative results for models lacking color, structural, and textural features. Both PSNR and SSIM degrade to varying degrees whichever prior is disconnected. This consistent degradation indicates that each feature map contributes to the low-light restoration task.

5. Conclusion

This paper proposes a Dual-Stream Transformer Network (DST-Net) guided by illumination-independent features and multi-scale spatial fusion convolutions to address image luminance degradation and critical feature loss in low-light environments. Existing methods often struggle to balance color fidelity and texture preservation. DST-Net addresses this deficiency by extracting illumination-independent DoG structural, LAB chromatic, and VGG-16 textural features as priors. The network utilizes a dual-stream interaction architecture and a cross-modal attention mechanism to continuously correct and guide the iterative curve enhancement process. We further design a Multi-Scale Spatial Fusion Block (MSFB) employing pseudo-3D convolutions and explicit 3D gradient operators to exploit spatial correlations across channels and strengthen image edges and fine structures. Extensive comparative evaluations and ablation studies demonstrate the superior performance of DST-Net.
The proposed method achieves a PSNR of 25.64 dB on the LOL dataset while exhibiting robust cross-scenario generalization on the LSRW benchmarks. Future work will optimize algorithmic computational efficiency to facilitate real-time deployment on edge devices and explore application potential in complex scenarios including low-light video sequence enhancement.

Acknowledgments

This work was supported in part by the National Key Research and Development Program of China under Grant 2025YFB2606504. During the preparation of this manuscript, the author used Google Gemini (Version 3.0 Pro) for the purposes of improving sentence flow and academic tone. The author has reviewed and edited the output and takes full responsibility for the content of this publication.

CRediT authorship contribution statement

Yicui Shi: Conceptualization, Methodology, Visualization, Writing - original draft, Writing - review & editing, Resources, Validation. Yuhan Chen: Data curation, Investigation, Writing - review & editing. Xiangfei Huang: Validation, Visualization, Resources. Guofa Li: Conceptualization, Investigation, Writing - review & editing, Methodology. Wenxuan Yu: Validation, Investigation. Ying Fang: Validation, Investigation.

Declaration of Competing Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Data availability

The authors do not have permission to share data.

References

[1] Q. Zhao, G. Li, B. He, et al., Deep learning for low-light vision: A comprehensive survey, IEEE Transactions on Neural Networks and Learning Systems (2025).
[2] Y. Li, P. Zhou, G. Zhou, et al., A comprehensive survey of visible and infrared imaging in complex environments: Principle, degradation and enhancement, Information Fusion 119 (2025) 103036.
[3] L. Sun, Y. Bao, J. Zhai, et al., Low-light image enhancement using event-based illumination estimation, in: Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 6667–6677.
[4] D. Ding, F. Shi, Y. Li, Low-illumination color imaging: Progress and challenges, Optics & Laser Technology 184 (2025) 112553.
[5] Y. M. Alsakar, N. A. Sakr, S. El-Sappagh, et al., Underwater image restoration and enhancement: a comprehensive review of recent trends, challenges, and applications, The Visual Computer 41 (6) (2025) 3735–3783.
[6] V. Jain, Z. Wu, Q. Zou, et al., Ntire 2025 challenge on video quality enhancement for video conferencing: Datasets, methods and results, in: Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 1184–1194.
[7] P. Singh, A. K. Bhandari, A review on computational low-light image enhancement models: Challenges, benchmarks, and perspectives, Archives of Computational Methods in Engineering 32 (5) (2025) 2853–2885.
[8] Z. He, W. Ran, S. Liu, et al., Low-light image enhancement with multi-scale attention and frequency-domain optimization, IEEE Transactions on Circuits and Systems for Video Technology 34 (4) (2023) 2861–2875.
[9] S. Zheng, Y. Ma, J. Pan, et al., Low-light image and video enhancement: A comprehensive survey and beyond, arXiv preprint (2022).
[10] Y. Wen, P. Xu, Z. Li, et al., An illumination-guided dual attention vision transformer for low-light image enhancement, Pattern Recognition 158 (2025) 111033.
[11] X. Pei, Y. Huang, W. Su, et al., Fftformer: A spatial-frequency noise aware cnn-transformer for low light image enhancement, Knowledge-Based Systems 314 (2025) 113055.
[12] A. Brateanu, R. Balmez, A. Avram, et al., Lyt-net: Lightweight yuv transformer-based network for low-light image enhancement, IEEE Signal Processing Letters (2025).
[13] J. Guo, J. Ma, Á. F. García-Fernández, et al., A survey on image enhancement for low-light images, Heliyon 9 (4) (2023).
[14] Y. Song, H. Ma, B. Yang, et al., Troublemaker learning for low-light image enhancement, in: Proceedings of the 2025 International Conference on Multimedia Retrieval, 2025, pp. 1172–1181.
[15] C. Guo, C. Li, J. Guo, C. C. Loy, J. Hou, S. Kwong, D. Tao, Zero-reference deep curve estimation for low-light image enhancement, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2020, pp. 1780–1789.
[16] C. Li, C. Guo, C. C. Loy, Learning to enhance low-light image via zero-reference deep curve estimation, IEEE Transactions on Pattern Analysis and Machine Intelligence 44 (8) (2021) 4225–4238.
[17] R. Xu, Y. Niu, Y. Li, et al., Urwkv: Unified rwkv model with multi-state perspective for low-light image restoration, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2025, pp. 21267–21276.
[18] Y. Lin, X. Xu, J. Wu, et al., Geometric-aware low-light image and video enhancement via depth guidance, IEEE Transactions on Image Processing (2025).
[19] Y. Qi, Z. Yang, W. Sun, et al., A comprehensive overview of image enhancement techniques, Archives of Computational Methods in Engineering 29 (1) (2022) 583–607.
[20] L. Xu, C. Hu, Y. Hu, et al., Upt-flow: Multi-scale transformer-guided normalizing flow for low-light image enhancement, Pattern Recognition 158 (2025) 111076.
[21] Q. Yan, Y. Feng, C. Zhang, et al., Hvi: A new color space for low-light image enhancement, in: Proceedings of the computer vision and pattern recognition conference, 2025, pp. 5678–5687.
[22] Y. Sun, W. Wang, Role of image feature enhancement in intelligent fault diagnosis for mechanical equipment: A review, Engineering Failure Analysis 156 (2024) 107815.
[23] S. M. Pizer, E. P. Amburn, J. D. Austin, R. Cromartie, A. Geselowitz, T. Greer, B. ter Haar Romeny, J. B. Zimmerman, K. Zuiderveld, Adaptive histogram equalization and its variations, Computer vision, graphics, and image processing 39 (3) (1987) 355–368.
[24] S.-C. Huang, F.-C. Cheng, Y.-S. Chiu, Efficient contrast enhancement using adaptive gamma correction with weighting distribution, IEEE transactions on image processing 22 (3) (2012) 1032–1041.
[25] E. H. Land, The retinex theory of color vision, Scientific american 237 (6) (1977) 108–128.
[26] D. J. Jobson, Z.-u. Rahman, G. A. Woodell, A multiscale retinex for bridging the gap between color images and the human observation of scenes, IEEE Transactions on Image processing 6 (7) (1997) 965–976.
[27] X. Guo, Y. Li, H. Ling, Lime: Low-light image enhancement via illumination map estimation, IEEE Transactions on image processing 26 (2) (2016) 982–993.
[28] R. Liu, L. Ma, J. Zhang, Y. Xin, Z. Luo, Retinex-inspired unrolling with cooperative prior architecture search for low-light image enhancement, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 10561–10570.
[29] W. Wu, J. Wang, M. Gong, Y. Yang, et al., Uretinex-net: Retinex-based deep unfolding network for low-light image enhancement, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 5901–5910.
[30] C. Zheng, D. Shi, W. Shi, Adaptive unfolding total variation network for low-light image enhancement, in: Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 4439–4448.
[31] Z. Fu, Y. T. Yang, X. Tu, et al., Learning a simple low-light image enhancer from paired low-light instances, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 22252–22261.
[32] Y. Jiang, X. Gong, D. Liu, Y. Cheng, C. Fang, X. Shen, J. Yang, P. Zhou, Z. Wang, Enlightengan: Deep light enhancement without paired supervision, IEEE transactions on image processing 30 (2021) 2340–2349.
[33] L. Ma, T. Ma, R. Liu, X. Fan, Z. Luo, Toward fast, flexible, and robust low-light image enhancement, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 5637–5646.
[34] J. Pan, D. Zhai, Y. Bai, et al., Chebylighter: Optimal curve estimation for low-light image enhancement, in: Proceedings of the 30th ACM International Conference on Multimedia, 2022, pp. 1358–1366.
[35] C. Liu, F. Wu, X. Wang, Efinet: Restoration for low-light images via enhancement-fusion iterative network, IEEE Transactions on Circuits and Systems for Video Technology 32 (12) (2022) 8486–8499.
[36] Y. Chen, X. Wang, Z. Li, et al., Fmr-net: a fast multi-scale residual network for low-light image enhancement, Multimedia Systems 30 (2) (2024) 73.
[37] Y. Chen, X. Wang, Z. Li, et al., Frr-net: a fast reparameterized residual network for low-light image enhancement, Signal, Image and Video Processing 18 (5) (2024) 4925–4934.
[38] Z. Zhang, S. Zhao, X. Jin, et al., Noise self-regression: A new learning paradigm to enhance low-light images without task-related data, IEEE Transactions on Pattern Analysis and Machine Intelligence 47 (2) (2024) 1073–1088.
[39] Y. Shi, D. Liu, L. Zhang, et al., Zero-ig: Zero-shot illumination-guided joint denoising and adaptive enhancement for low-light images, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2024, pp. 3015–3024.
[40] Y. Chen, Y. Shi, G. Li, et al., A lightweight real-time low-light enhancement network for embedded automotive vision systems, arXiv preprint arXiv:2512.02965 (2025).
[41] J. Li, F. Fang, K. Mei, G. Zhang, Multi-scale residual network for image super-resolution, in: Proceedings of the European conference on computer vision (ECCV), 2018, pp. 517–532.
[42] S. W. Zamir, A. Arora, S. Khan, M. Hayat, F. S. Khan, M.-H. Yang, L. Shao, Learning enriched features for real image restoration and enhancement, in: Proceedings of the European Conference on Computer Vision (ECCV), 2020, pp. 492–511.
[43] S.-J. Cho, S.-W. Ji, J.-P. Hong, et al., Rethinking coarse-to-fine approach in single image deblurring, in: Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 4641–4650.
[44] Y. Dai, F. Gieseke, J. Oeppen, et al., Attentional feature fusion, in: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2021, pp. 3560–3569.
[45] J. Hu, L. Shen, G. Sun, Squeeze-and-excitation networks, in: Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 7132–7141.
[46] S. Woo, J. Park, J.-Y. Lee, I. S. Kweon, Cbam: Convolutional block attention module, in: Proceedings of the European conference on computer vision (ECCV), 2018, pp. 3–19.
[47] X. Qin, Z. Wang, Y. Bai, et al., Ffa-net: Feature fusion attention network for single image dehazing, in: Proceedings of the AAAI Conference on Artificial Intelligence, 2020, pp. 11908–11915.
[48] C. Wei, W. Wang, W. Yang, J. Liu, Deep retinex decomposition for low-light enhancement, arXiv preprint arXiv:1808.04560 (2018).
[49] Y. Zhang, X. Guo, J. Ma, W. Liu, J. Zhang, Beyond brightening low-light images, International Journal of Computer Vision 129 (4) (2021) 1013–1037.
[50] L. Ma, T. Ma, R. Liu, et al., Structure-preserving low-light image enhancement, in: Proceedings of the European Conference on Computer Vision (ECCV), 2022, pp. 245–261.
[51] X. Xu, R. Wang, C.-W. Fu, et al., Snr-aware low-light image enhancement, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 17714–17724.
[52] Z. Cui, K. Li, L. Gu, et al., You only need 90k parameters to adapt light: a light weight transformer for image enhancement and exposure correction, arXiv preprint arXiv:2205.14871 (2022).
[53] Y. Cai, H. Bian, J. Lin, et al., Retinexformer: One-stage retinex-based transformer for low-light image enhancement, in: Proceedings of the IEEE/CVF international conference on computer vision, 2023, pp. 12504–12513.
[54] J. Hai, et al., R2rnet: Low-light image enhancement via real-low to real-normal network, Journal of Visual Communication and Image Representation 90 (2023) 103712.
[55] W. Yang, S. Wang, Y. Fang, et al., From fidelity to perceptual quality: A semi-supervised approach for low-light image enhancement, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2020, pp. 3063–3072.
[56] E. Perez-Zarate, O. Ramos-Soto, E. Rodríguez-Esparza, et al., Loli-iea: low-light image enhancement algorithm, in: Applications of Machine Learning 2023, Vol. 12675, SPIE, 2023, pp. 248–263.
