DISCOVER: A Solver for Distributional Counterfactual Explanations

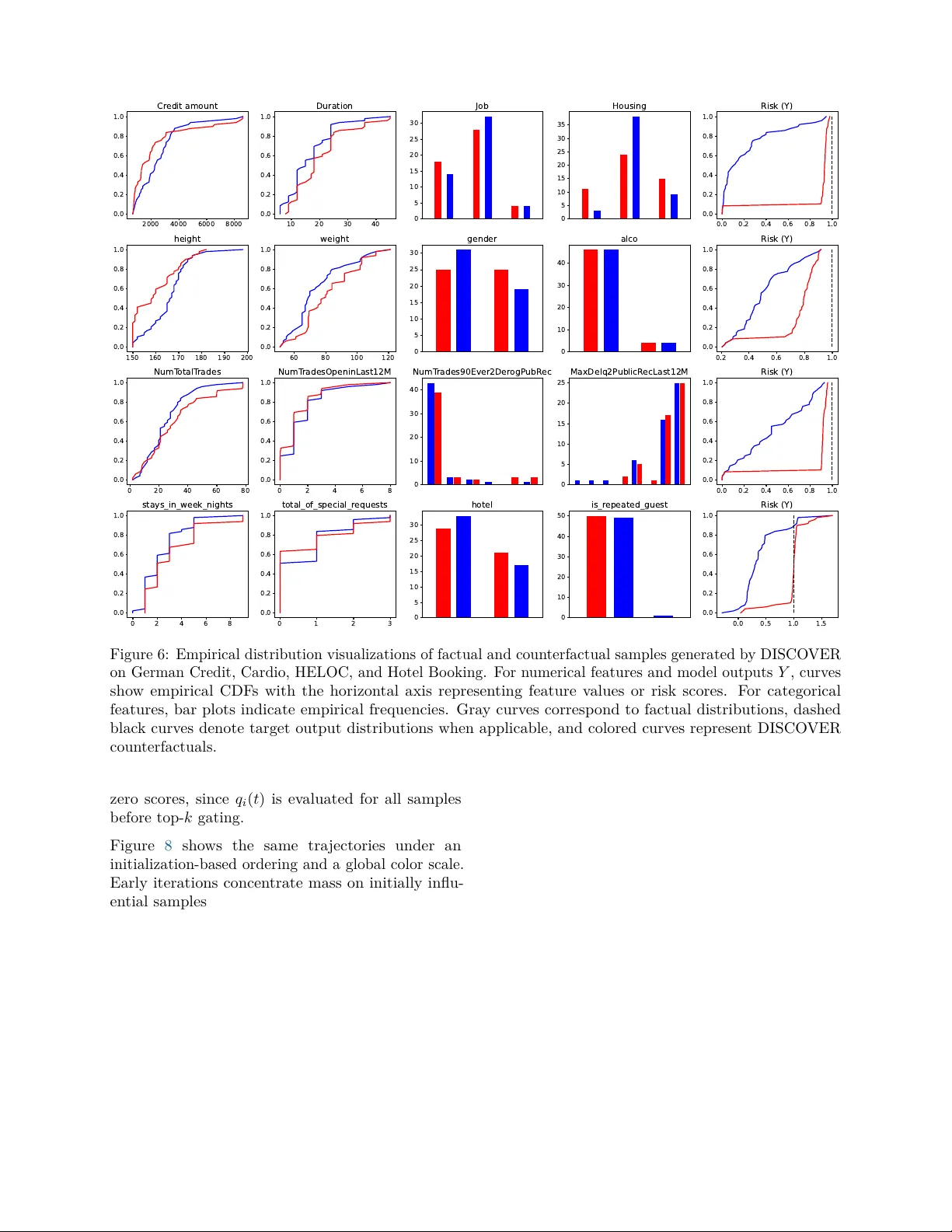

Counterfactual explanations (CE) explain model decisions by identifying input modifications that lead to different predictions. Most existing methods operate at the instance level. Distributional Counterfactual Explanations (DCE) extend this setting …
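To make the instance-level setting concrete, here is a minimal sketch for one assumed case: a linear classifier with score `w @ x + b`. In that case the smallest L2 input change that flips the prediction has a closed form, which is a projection of `x` onto the decision hyperplane plus a small overshoot. The function name `linear_counterfactual` and its `margin` parameter are hypothetical and chosen for illustration. This is a textbook instance-level counterfactual. It is not the DISCOVER solver and not a distributional (DCE) method.

```python
import numpy as np

def linear_counterfactual(x, w, b, margin=1e-3):
    """Return the closest point (in L2) to x whose linear score w @ x + b has the
    opposite sign, overshooting the decision boundary by `margin`.

    Hypothetical helper for illustration: assumes a linear model and a nonzero
    weight vector w. This is NOT the DISCOVER method.
    """
    x = np.asarray(x, dtype=float)
    w = np.asarray(w, dtype=float)
    score = float(w @ x + b)
    # Treat a point exactly on the boundary as belonging to the positive side.
    side = 1.0 if score >= 0 else -1.0
    # Step length along the normal w that lands at score == -side * margin.
    step = (score + side * margin) / float(w @ w)
    # Moving along w is the shortest L2 path to the hyperplane.
    return x - step * w

x_cf = linear_counterfactual([1.0, 2.0], [1.0, -1.0], 0.5)
# Original score: 1 - 2 + 0.5 = -0.5; the counterfactual's score is about +0.001.
```

Because the step runs along `w`, the change is the orthogonal projection onto the boundary. For nonlinear models no closed form exists in general, so practical CE methods fall back on optimization.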

Authors: Yikai Gu, Lele Cao, Bo Zhao