From Natural Language to Executable Option Strategies via Large Language Models

Large Language Models (LLMs) excel at general code generation, yet translating natural-language trading intents into correct option strategies remains challenging. Real-world option design requires reasoning over massive, multi-dimensional option cha…

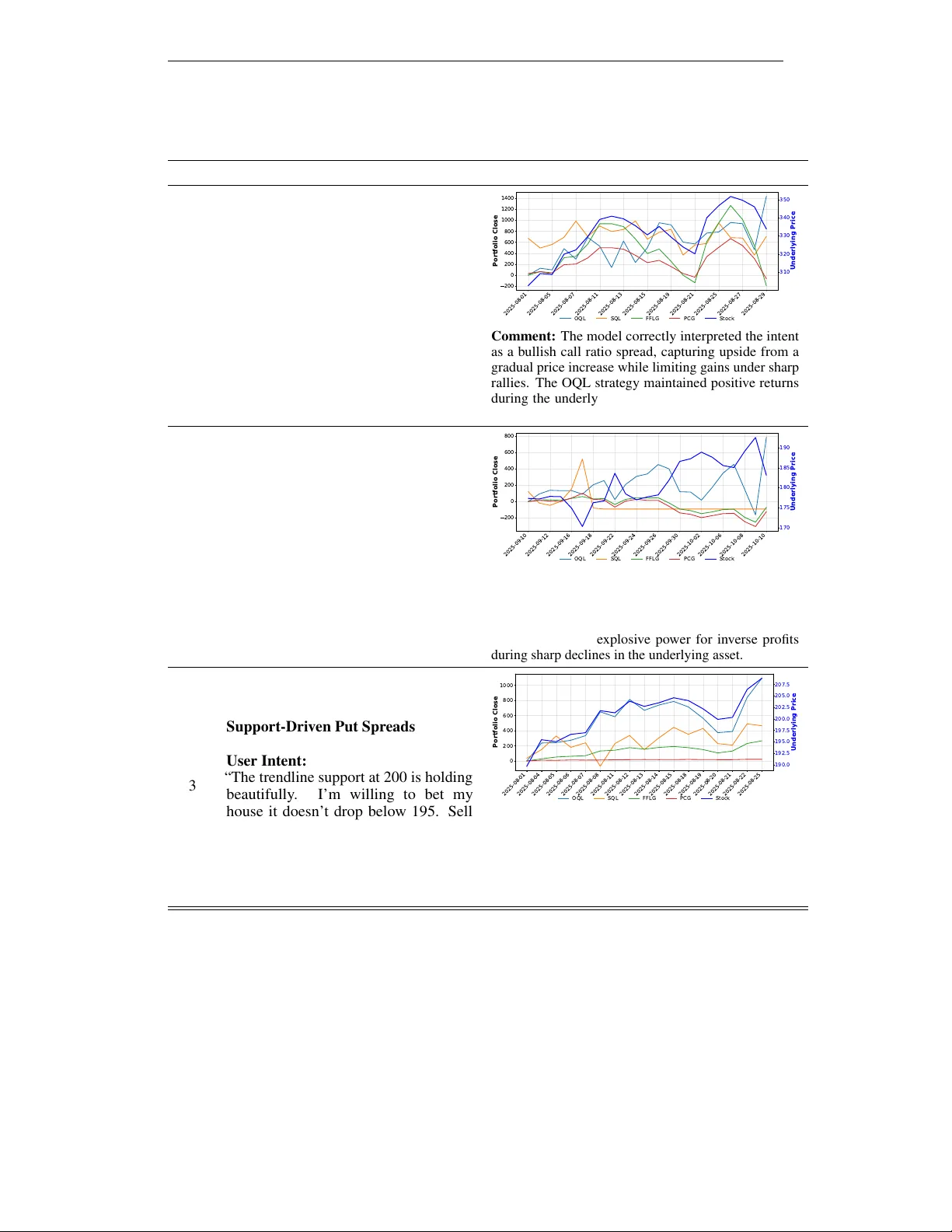

Authors: Haochen Luo, Zhengzhao Lai, Junjie Xu