Poisoning the Pixels: Revisiting Backdoor Attacks on Semantic Segmentation

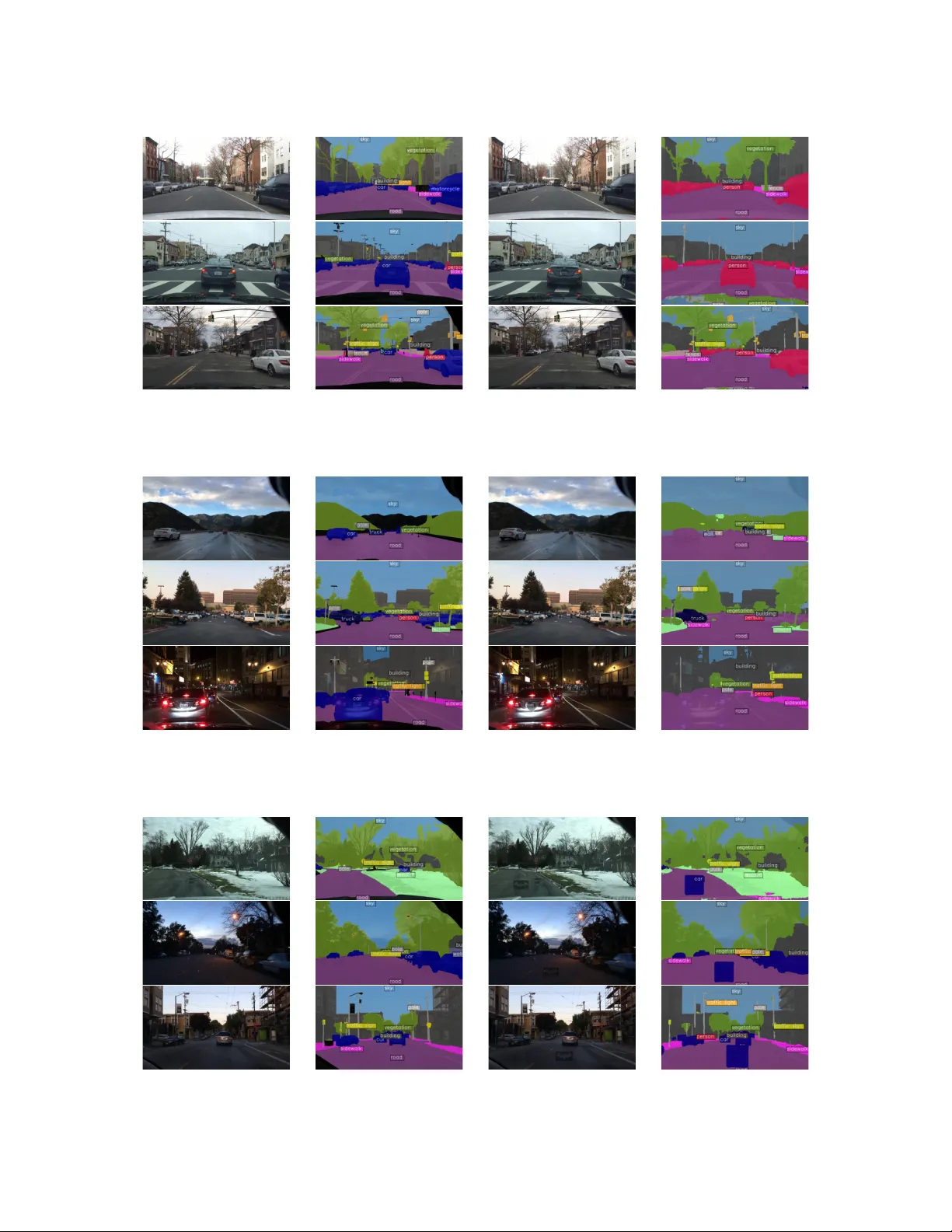

Semantic segmentation models are widely deployed in safety-critical applications such as autonomous driving, yet their vulnerability to backdoor attacks remains largely underexplored. Prior segmentation backdoor studies transfer threat settings from …

Authors: Guangsheng Zhang, Huan Tian, Leo Zhang