Explainable machine learning workflows for radio astronomical data processing

Radio astronomy relies heavily on efficient and accurate processing pipelines to deliver science ready data. With the increasing data flow of modern radio telescopes, manual configuration of such data processing pipelines is infeasible. Machine learn…

Authors: S. Yatawatta, A. Ahmadi, B. Asabere

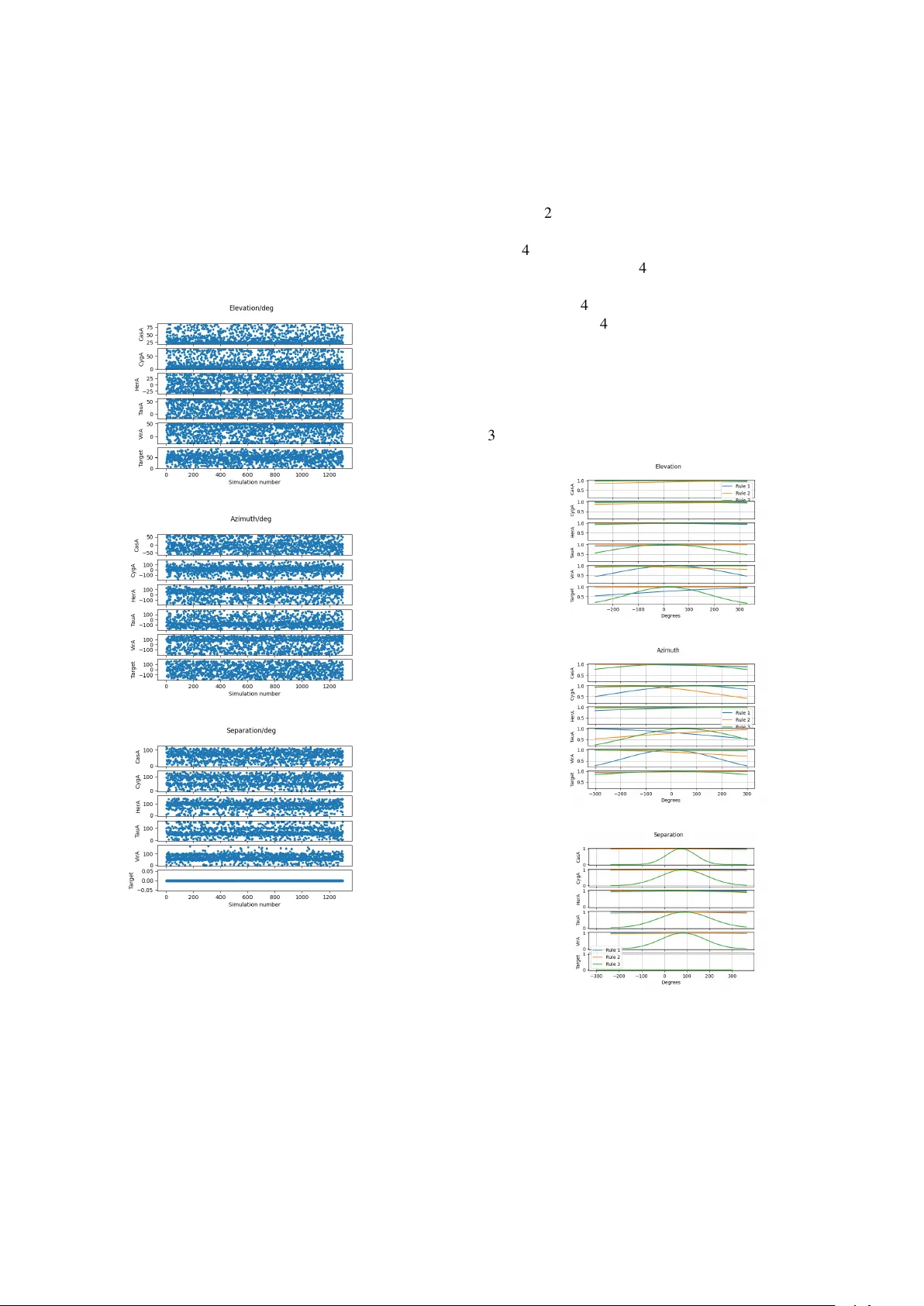

URSI GASS 2026, Krakow, Poland, 15–22 August 2026

S. Yatawatta, A. Ahmadi, B. Asabere, M. Iacobelli, N. Peters, M. Veldhuis*
ASTRON, The Netherlands Institute for Radio Astronomy, Dwingeloo, The Netherlands, https://www.astron.nl/

Abstract

Radio astronomy relies heavily on efficient and accurate processing pipelines to deliver science ready data. With the increasing data flow of modern radio telescopes, manual configuration of such data processing pipelines is infeasible. Machine learning (ML) is already emerging as a viable solution for automating data processing pipelines. However, almost all existing ML enabled pipelines are of black-box type, where the decisions made by the automating agents are not easily deciphered by astronomers. In order to improve the explainability of the ML aided data processing pipelines in radio astronomy, we propose the joint use of fuzzy rule based inference and deep learning. We consider one application in radio astronomy, i.e., calibration, to showcase the proposed approach of ML aided decision making using a Takagi-Sugeno-Kang (TSK) fuzzy system. We provide results based on simulations to illustrate the increased explainability of the proposed approach, without compromising quality or accuracy.

1 Introduction

Numerous data processing steps are required by radio telescopes to produce science ready results from raw observations. In order to cope with large volumes of data, such processing workflows need to operate in almost real time, with the highest computational efficiency, but without compromising the quality of the data. To achieve these goals, most of these pipelines are configured and tuned by expert astronomers, although ML is becoming a viable alternative [1, 2]. A typical ML model that is trained for a specific task appears as a black box to the end user.
This is a hindrance to building confidence in the use of such models in data processing. Furthermore, enhancing such models with the existing human expertise requires a mechanism for understanding the behavior of such black-box ML models.

In this paper we propose the use of fuzzy inference [3, 4] to improve the explainability of traditional ML models (see [5] for an in-depth survey). We select direction dependent calibration for outlier source removal to apply the proposed technique. As shown in Fig. 1, bright outlier sources in the sky act as interference to a target being observed, especially in low frequency observations (LOFAR [6]). Making the correct choice of which outliers to remove from the data is a matter of importance (in addition to other hyperparameters such as the time-frequency resolution of the calibration). It is possible to use a purely data-driven approach to make this choice [7], but under noisy conditions (very low frequency observations) this becomes unreliable. Note that selecting all the outliers for removal is also not feasible, because increasing the number of free parameters (to solve for) makes the problem ill-posed. Therefore, the optimal choice of the outlier selection for removal needs to be made uniquely for each observation. Noting the astrometric stability of the positions of the outliers with respect to a target in the sky, we propose a model-driven approach, ideally suited to the use of ML methods. In order to make the ML models more explainable, we also propose the use of fuzzy inference as an addition to traditional multilayer perceptron based ML models.

Figure 1. A geometrical overview of the observing setup. While a target area in the sky is being observed, several strong outlier sources act as interference.
The rest of the paper is organized as follows: in section 2, we describe direction dependent calibration for outlier removal and the data-driven approach for making the optimal choice. In section 3, we provide a brief overview of fuzzy inference. Next, in section 4, we provide results (based on simulations) for the feasibility of the proposed model-driven approach as well as the explainability of the trained models, and finally, in section 5, we draw our conclusions.

2 Directional calibration for outlier removal

Given a pair of receivers $p$ and $q$ (out of a total of $N$) on Earth as in Fig. 1, the output at the correlator can be given as [8]

$$v_{pq} = \sum_{i=0}^{K} s_{pqi} + n_{pq} \tag{1}$$

where $s_{pqi}$ is the signal from the $i$-th direction in the sky ($i = 0$ is the target and $i = 1, \ldots, K$ are the $K$ outliers) and $n_{pq}$ is the noise [7]. We stack up $v_{pq}$ in (1) for all possible $p$, $q$ and for all time and frequency ranges within which a single solution for calibration is obtained (say $y$). We can do the same for the right hand side of (1) for each direction $i$ to get $s_i$, and also for the noise to get $n$. Let us rewrite (1) after stacking as

$$y = s_0 + \sum_{i \in I} s_i + n \tag{2}$$

where we have $s_0$ for the model of the target direction and the remaining directions are taken from the set $I$. After calibration, we find the residual signal where the outlier sources have been removed as

$$r = y - \widehat{s}_0 - \sum_{i \in I} \widehat{s}_i \tag{3}$$

where $\widehat{s}_i$ is the model constructed by calibration (so not necessarily the true model). The problem we need to tackle is to determine $I$ out of all possible outlier sources. A data-driven approach would use (3) and find the Akaike information criterion (AIC [9]) as

$$\mathrm{AIC}_I = \left(N \frac{\sigma_r}{\sigma_y}\right)^2 + N |I| \tag{4}$$

where $\sigma_y$ and $\sigma_r$ are the standard deviations of $y$ and $r$ in (3), respectively. The cardinality of $I$ is given by $|I|$ (proportional to the number of free parameters). By trying out all possible choices for $I$, we select the outlier directions that minimize the AIC as the optimal choice.
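The exhaustive selection described by (3) and (4) can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the authors' implementation: the calibrated models $\widehat{s}_i$ are passed in as plain lists of real numbers (in practice each choice of $I$ would presumably need its own calibration run on complex visibilities), the first term of (4) is read as $(N\sigma_r/\sigma_y)^2$, and the names `aic` and `best_subset` are hypothetical.

```python
import itertools
import statistics


def aic(y, r, n_stations, n_selected):
    """AIC of equation (4), assuming the grouping (N * sigma_r / sigma_y)^2 + N * |I|."""
    sigma_y = statistics.pstdev(y)
    sigma_r = statistics.pstdev(r)
    return (n_stations * sigma_r / sigma_y) ** 2 + n_stations * n_selected


def best_subset(y, target_model, outlier_models, n_stations):
    """Try every subset I of outlier directions and return (lowest AIC, subset).

    outlier_models[i] stands in for the calibrated model of outlier i
    (hypothetical input; a real pipeline would re-run calibration for each I).
    """
    best_score, best_choice = None, None
    k = len(outlier_models)
    for size in range(k + 1):
        for subset in itertools.combinations(range(k), size):
            # Residual of equation (3): subtract the target, then the chosen outliers.
            r = [a - b for a, b in zip(y, target_model)]
            for i in subset:
                r = [a - b for a, b in zip(r, outlier_models[i])]
            score = aic(y, r, n_stations, len(subset))
            if best_score is None or score < best_score:
                best_score, best_choice = score, subset
    return best_score, best_choice


# Toy data: one strong outlier (index 0) and one negligible outlier (index 1).
target = [1.0, -1.0] * 4
strong = [3.0, 3.0, -3.0, -3.0] * 2
weak = [0.1, -0.1, -0.1, 0.1] * 2
noise = [0.2, -0.1, 0.1, -0.2, 0.1, 0.2, -0.2, -0.1]
data = [a + b + c for a, b, c in zip(target, strong, noise)]
print(best_subset(data, target, [strong, weak], n_stations=4))
```

In this toy case only the strong outlier is worth removing: subtracting the weak one barely changes the residual, so the penalty term $N|I|$ outweighs the gain.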
However, under very noisy conditions, the ratio $\sigma_r / \sigma_y$ in (4) will hardly change for the various choices of $I$, making the data-driven approach less reliable and motivating us to select a model-driven approach.

3 Fuzzy inference

A fuzzy variable is related to a concept or a linguistic (semantic) value rather than to an exact mathematical number [3, 4]. Consider a vector of (crisp or exact) input values $x$ of size $D \times 1$, where $x_d$ is the $d$-th input feature. The antecedent and the consequent of a TSK fuzzy system with $R$ rules (first order) can be described as

$$\begin{aligned} \text{Rule } r:\ & \text{IF } x_1 \text{ is } X_{r,1} \text{ and } x_2 \text{ is } X_{r,2} \ldots \text{ and } x_D \text{ is } X_{r,D} \\ & \text{THEN } y = w_1 x_1 + w_2 x_2 + \ldots + w_D x_D + b \end{aligned} \tag{5}$$

where $X_{r,d}$ is the fuzzy membership function of the $d$-th input feature in the $r$-th rule. The crisp output $y$ of the consequent is determined by the weights $w_d$ and the bias $b$. The weights are determined by the degree of membership of the antecedent (and additional linear transforms if necessary). For our example, we select Gaussian membership functions to represent $X_{r,d}$ in (5), described as

$$\mu_{r,d}(x_d) = \exp\left(-\frac{(x_d - m_{r,d})^2}{2\sigma_{r,d}^2}\right) \tag{6}$$

where $m_{r,d}$ and $\sigma_{r,d}^2$ denote the mean and the variance, respectively. The degree of fulfillment (or the firing rate) of each rule, $f_r(x)$, can be calculated in many ways [10] and we use the product

$$f_r(x) = \prod_{d=1}^{D} \mu_{r,d}(x_d). \tag{7}$$

In order to calculate the output (defuzzification) weights $w_d$, we use the normalized firing rate

$$\overline{f}_r(x) = \frac{f_r(x)}{\sum_{i=1}^{R} f_i(x)} \tag{8}$$

which is similar to the SoftMax operation in deep learning. Additional multilayer perceptrons can be added to the outputs of (8) before calculating the crisp output [10, 11].

4 Results

We simulate data for the LOFAR telescope for various configurations as listed in Table 1. The target direction is randomly selected to be anywhere in the sky and the epoch of the observation is also randomly selected (provided the target is above the horizon).
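Before looking at the simulated data in detail, the inference chain of Section 3, equations (5) to (8), can be made concrete with a minimal forward pass. This is a sketch in plain Python, not the PyTSK implementation used in the paper. It assumes the common first-order TSK form in which each rule has its own consequent weights and bias and the crisp output is the sum of the rule outputs weighted by the normalized firing rates; the function names are hypothetical.

```python
import math


def gaussian_membership(x_d, mean, sigma):
    """Equation (6): Gaussian membership of one input feature."""
    return math.exp(-((x_d - mean) ** 2) / (2.0 * sigma ** 2))


def firing_rates(x, means, sigmas):
    """Equation (7): product of the memberships over all D features, per rule."""
    return [
        math.prod(gaussian_membership(x_d, m, s)
                  for x_d, m, s in zip(x, rule_means, rule_sigmas))
        for rule_means, rule_sigmas in zip(means, sigmas)
    ]


def normalized_firing_rates(f):
    """Equation (8): normalize so the R firing rates sum to one."""
    total = sum(f)
    return [f_r / total for f_r in f]


def tsk_output(x, means, sigmas, weights, biases):
    """Crisp output: firing-rate weighted sum of per-rule linear consequents.

    Per-rule weights and biases are an assumption of this sketch.
    """
    f_bar = normalized_firing_rates(firing_rates(x, means, sigmas))
    rule_outputs = [
        sum(w * x_d for w, x_d in zip(rule_w, x)) + rule_b
        for rule_w, rule_b in zip(weights, biases)
    ]
    return sum(fb * y_r for fb, y_r in zip(f_bar, rule_outputs))


# Two features (D = 2), two rules (R = 2).
x = [0.0, 1.0]
means = [[0.0, 1.0], [1.0, 0.0]]
sigmas = [[1.0, 1.0], [1.0, 1.0]]
weights = [[1.0, 0.0], [0.0, 1.0]]
biases = [0.0, 0.0]
print(tsk_output(x, means, sigmas, weights, biases))
```

With many input features the product in (7) multiplies many values below one and can become very small, which is the dimensionality issue addressed in [11].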
In Fig. 2, we show the distribution of the various geometric parameters in the simulated data. The geometry of each observation is basically determined by the azimuth and elevation of each direction (including the target) and the angular separation of each outlier from the target. As seen from Fig. 2, we have almost uniform coverage of the sky with these variables. Note also that the separation of the target from itself is always zero as seen in Fig. 2(c), but we kept this redundant input to test the output of the learned fuzzy membership functions.

Table 1. The simulation and deep learning settings

| Setting | Value |
|---|---|
| Outliers | CasA, CygA, TauA, VirA, HerA |
| Number of outliers $K$ | 5 |
| Observation duration | 30 s |
| Number of subbands | 3 |
| Frequency range | [15, 70] or [110, 180] MHz |
| Stations $N$ | 46 (LBA) or 62 (HBA) |
| Signal to noise ratio $\lVert y \rVert / \lVert n \rVert$ | [1, 10] |
| Fuzzy rules $R$ | 3 |
| Input features $D$ | $20 = (K+1) \times 3 + 2$: (elevation, azimuth, separation) $\times (K+1)$, log(frequency), stations |
| Training samples | 1300 |

Figure 2. The statistical spread of the simulated data used in training the ML model: (a) elevation (b) azimuth (c) separation.

In order to build the deep neural network with a fuzzy input layer, we use the PyTSK toolkit [12]. For comparison, we also trained a traditional multilayer perceptron using the same data. The output of each model is a $K \times 1$ vector of probabilities of each outlier being selected for inclusion in $I$ of (2) (a probability $> 0.5$ means selected). The training and testing losses (mean squared error) for both models are shown in Fig. 4(a). The trained models are compared to the data-driven approach in Fig. 4(b) using additional simulations. The reward for evaluation is directly calculated using the negative AIC (4). We get close agreement between all three approaches in Fig. 4(b), but the data-driven approach performs an exhaustive search of all possible configurations of $I$ and is therefore computationally expensive.
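The contrast between the two approaches can be illustrated with a small sketch. Turning the model output into the set $I$ is a single thresholding step, whereas the data-driven search has to score every subset of the $K$ outliers. The helper names below are hypothetical and the probabilities are made-up values for illustration, not results from the paper.

```python
# Outlier names as listed in Table 1.
OUTLIERS = ["CasA", "CygA", "TauA", "VirA", "HerA"]


def select_outliers(probabilities, names=OUTLIERS, threshold=0.5):
    """Return the outliers whose predicted probability exceeds the threshold."""
    return [name for name, p in zip(names, probabilities) if p > threshold]


def n_configurations(k):
    """Number of subsets of k outliers that an exhaustive search must score."""
    return 2 ** k


# Made-up model output for one observation.
print(select_outliers([0.9, 0.2, 0.51, 0.5, 0.7]))
print(n_configurations(len(OUTLIERS)))
```

For $K = 5$ the exhaustive search covers $2^5 = 32$ subsets per observation, and the count doubles with every additional outlier.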
The ML model-based approaches are much faster. Compared to the traditional multilayer perceptron based approach, the fuzzy inference based approach is more explainable, as seen in Fig. 3.

Figure 3. The learned Gaussian membership functions for (a) elevation (b) azimuth (c) separation. Looking at their complexity, we see that the azimuth plays an equally important role as the elevation in making a correct choice (unlike the separation, which is probably dependent on the azimuth and elevation).

By looking at the learned Gaussian membership functions in Fig. 3, we draw several conclusions. (i) Using the target separation (always zero) adds no extra information, and this input can be removed. (ii) Both azimuth and elevation carry information that is used to produce the output (their membership functions have more variation). This contrasts with the use of separation as seen in Fig. 3, which has only one membership function with variation (indicating only one active rule). (iii) The elevation membership functions for outliers that do not go below the horizon (CasA, CygA) have less variation than those of the other outliers, but HerA is an exception (probably due to lack of variation in the training data).

Figure 4. The training losses and the performance of the trained ML model compared with a purely data-driven approach: (a) training/testing losses (b) reward (negative AIC) evaluation of the trained ML models compared to the data-driven approach.

To summarize, the increased explainability of the ML model enables us to: detect and exclude redundant input, detect examples that are rare and generate more data covering such cases, and identify the more influential and the less influential input features. Further explainability can be added by using semantic rule mining techniques as future work [13].
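The visual inspection of the membership functions described above could, in principle, be automated. The sketch below is one possible heuristic and not a method from the paper: it measures how much each learned Gaussian membership function varies over the range of its input in the training data, and flags features for which no rule shows variation above a tolerance. The function names, the tolerance of 0.05 and the example parameters are all assumptions made for illustration.

```python
import math


def membership_variation(mean, sigma, lo, hi, n_grid=101):
    """Spread (max - min) of a Gaussian membership function over [lo, hi]."""
    values = []
    for k in range(n_grid):
        x_d = lo + (hi - lo) * k / (n_grid - 1)
        values.append(math.exp(-((x_d - mean) ** 2) / (2.0 * sigma ** 2)))
    return max(values) - min(values)


def flag_low_variation(features, tol=0.05):
    """Return the names of features whose membership functions are flat in every rule.

    features maps a name to (lo, hi, [(mean, sigma), ...]), one (mean, sigma)
    pair per rule; lo and hi are the observed range of that input.
    """
    flagged = []
    for name, (lo, hi, rules) in features.items():
        if max(membership_variation(m, s, lo, hi) for m, s in rules) < tol:
            flagged.append(name)
    return flagged


# Made-up parameters: the target separation is always zero, elevation spans [0, 1].
learned = {
    "target_separation": (0.0, 0.0, [(0.0, 1.0), (0.3, 0.5)]),
    "elevation": (0.0, 1.0, [(0.2, 0.1), (0.8, 0.1)]),
}
print(flag_low_variation(learned))
```

An input that never changes, such as the separation of the target from itself, has zero variation by construction and is flagged as a candidate for removal.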
5 Conclusions

We have proposed a model-based ML approach with added explainability for the determination of the optimal configuration of outlier removal in radio interferometric data. Simulated results show that the trained ML model is capable of performing comparably to a data-driven approach (and is probably better when the noise is high). We also show that by adding the fuzzy input layer, we can peer into the learned ML models and add further enhancements. Future work will expand the capabilities of such fuzzy inference and deep learning systems to handle more input parameters and other configuration parameters as output.

References

[1] Sarod Yatawatta and Ian M. Avruch, "Deep reinforcement learning for smart calibration of radio telescopes," Monthly Notices of the Royal Astronomical Society, vol. 505, no. 2, pp. 2141–2150, May 2021.
[2] S. Yatawatta, "Reinforcement learning," Astronomy and Computing, vol. 48, pp. 100833, 2024.
[3] Tomohiro Takagi and Michio Sugeno, "Fuzzy identification of systems and its applications to modeling and control," IEEE Transactions on Systems, Man, and Cybernetics, vol. SMC-15, no. 1, pp. 116–132, 1985.
[4] J.-S. R. Jang, "ANFIS: adaptive-network-based fuzzy inference system," IEEE Transactions on Systems, Man, and Cybernetics, vol. 23, no. 3, pp. 665–685, 1993.
[5] Yuanhang Zheng, Zeshui Xu, and Xinxin Wang, "The fusion of deep learning and fuzzy systems: A state-of-the-art survey," IEEE Transactions on Fuzzy Systems, vol. 30, no. 8, pp. 2783–2799, 2022.
[6] M. P. van Haarlem, M. W. Wise, A. W. Gunst, et al., "LOFAR: The LOw-Frequency ARray," Astronomy and Astrophysics, vol. 556, pp. A2, Aug. 2013.
[7] Sarod Yatawatta, "Hint assisted reinforcement learning: an application in radio astronomy," arXiv preprint arXiv:2301.03933, 2023.
[8] J. P. Hamaker, J. D. Bregman, and R. J. Sault, "Understanding radio polarimetry. I. Mathematical foundations," A&A Supp., vol. 117, pp. 137–147, May 1996.
[9] H. Akaike, "A New Look at the Statistical Model Identification," IEEE Transactions on Automatic Control, vol. 19, pp. 716–723, Jan. 1974.
[10] Xiangming Gu and Xiang Cheng, "Distilling a Deep Neural Network into a Takagi-Sugeno-Kang Fuzzy Inference System," arXiv e-prints, Oct. 2020.
[11] Yuqi Cui, Dongrui Wu, and Yifan Xu, "Curse of Dimensionality for TSK Fuzzy Neural Networks: Explanation and Solutions," arXiv e-prints, p. arXiv:2102.04271, Feb. 2021.
[12] Yuqi Cui, Dongrui Wu, Xue Jiang, and Yifan Xu, "PyTSK: A Python Toolbox for TSK Fuzzy Systems," arXiv e-prints, p. arXiv:2206.03310, June 2022.
[13] F. Camastra, A. Ciaramella, G. Salvi, S. Sposato, and A. Staiano, "On the interpretability of fuzzy knowledge base systems," PeerJ Computer Science, 2024.
