NeSy-Route: A Neuro-Symbolic Benchmark for Constrained Route Planning in Remote Sensing

Remote sensing underpins crucial applications such as disaster relief and ecological field surveys, where systems must understand complex scenes and constraints and make reliable decisions. Current remote-sensing benchmarks mainly focus on evaluating…

Authors: Ming Yang, Zhi Zhou, Shi-Yu Tian

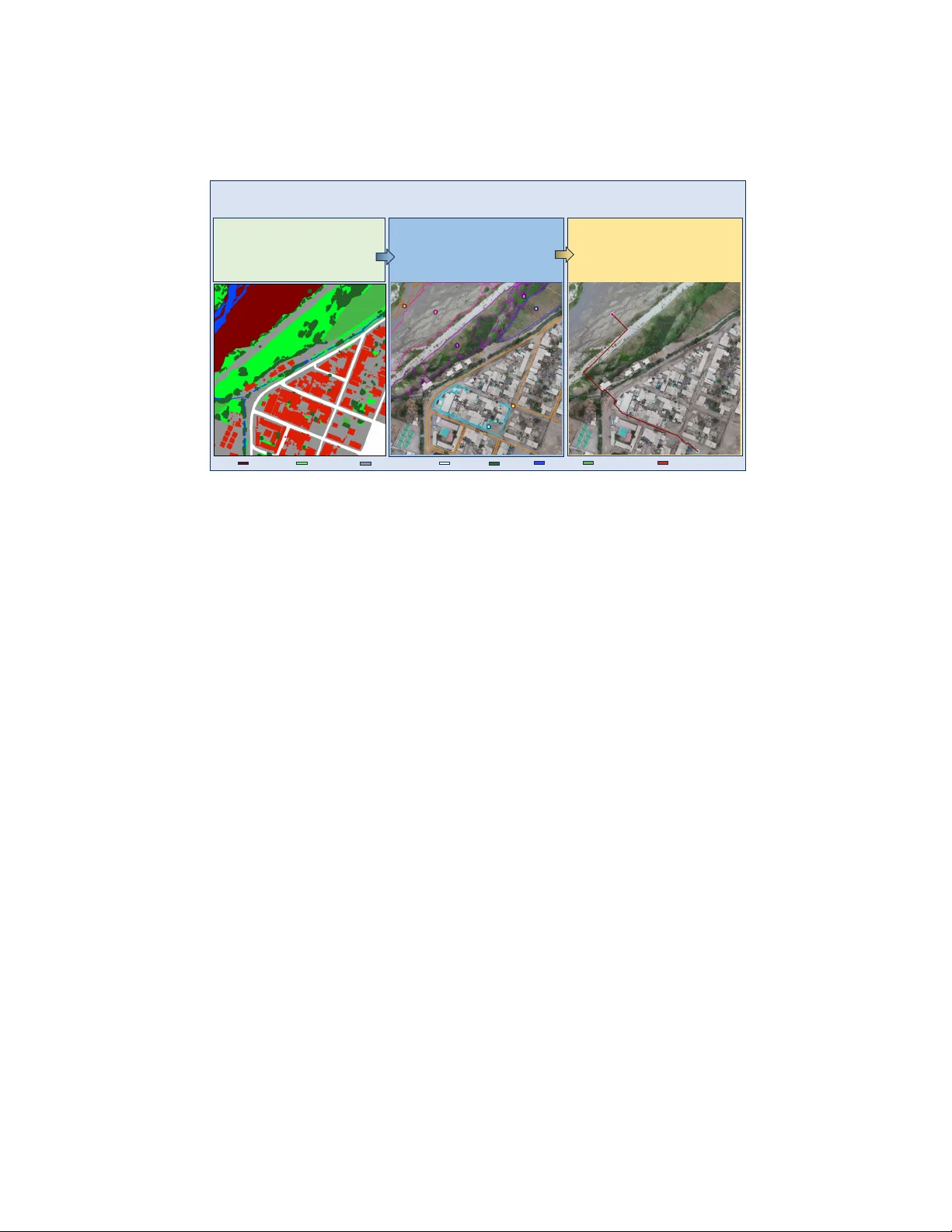

Ming Yang (1, 2), Zhi Zhou (1), Shi-Yu Tian (1, 2), Kun-Yang Yu (1, 2), Lan-Zhe Guo (1, 3), and Yu-Feng Li (1, 2)

(1) State Key Laboratory for Novel Software Technology, Nanjing University, China
(2) School of Artificial Intelligence, Nanjing University, China
(3) School of Intelligence Science and Technology, Nanjing University, China
{yangm}@lamda.nju.edu.cn

Abstract. Remote sensing underpins crucial applications such as disaster relief and ecological field surveys, where systems must understand complex scenes and constraints and make reliable decisions. Current remote-sensing benchmarks mainly focus on evaluating perception and reasoning capabilities of multimodal large language models (MLLMs). They fail to assess planning capability, stemming either from the difficulty of curating and validating planning tasks at scale or from evaluation protocols that are inaccurate and inadequate. To address these limitations, we introduce NeSy-Route, a large-scale neuro-symbolic benchmark for constrained route planning in remote sensing. Within this benchmark, we introduce an automated data-generation framework that integrates high-fidelity semantic masks with heuristic search to produce diverse route-planning tasks with provably optimal solutions. This allows NeSy-Route to comprehensively evaluate planning across 10,821 route-planning samples, nearly 10 times larger than the largest prior benchmark. Furthermore, a three-level hierarchical neuro-symbolic evaluation protocol is developed to enable accurate assessment and support fine-grained analysis on perception, reasoning, and planning simultaneously. Our comprehensive evaluation of various state-of-the-art MLLMs demonstrates that existing MLLMs show significant deficiencies in perception and planning capabilities.
We hope NeSy-Route can support further research and development of more powerful MLLMs for remote sensing.

Keywords: Remote Sensing · Neuro-Symbolic AI · Route Planning

1 Introduction

In recent years, multimodal large language models (MLLMs) [1, 2, 7, 29, 31] have achieved remarkable progress in visual perception and complex reasoning. Remote sensing imagery, a crucial source for observing the Earth's surface, supports applications such as environmental monitoring [19, 26], disaster assessment [6], agricultural management [41], and urban planning [43]. Among these applications, route planning is particularly important for tasks such as emergency response and resource allocation. However, conventional route-planning systems rely on costly terrestrial surveying [12] to construct road network maps, making them vulnerable in disaster-stricken or poorly mapped regions.

Constraint: Starting from a patch of loose, sandy ground, a hiker needs to reach a paved, stable area. The hiker is wearing sturdy boots and can traverse the loose ground, but it is uneven and may shift underfoot. The paved area is firm and predictable. The hiker must avoid any dense vegetation or trees, as they could hide obstacles or cause tripping.

Task 1: Textual Constraint Understanding
Q: How should the traversability and costs of different land types be assigned to ensure the safest path?
A: Traversability Vector: [1, 1, 1, 1, 0, 0, 1, 0]
   Preference Vector: [1, 2, 5, 4, 0, 0, 3, 0]

Task 2: Text–Image Constraint Alignment
Q: Integrating the numbered regions in the image with the specified constraints, what are the traversability status and priority rankings for each land cover type?
A: Region Vector: [7, 1, 4, 6, 2, 3, 5, 0]
   Traversability Vector: [1, 1, 1, 1, 0, 0, 1, 0]
   Preference Vector: [1, 2, 5, 4, 0, 0, 3, 0]

Task 3: Constrained Route Planning
Q: Starting point with a magenta circle is at [257, 190]. Ending point with a cyan circle is at [765, 1002]. What is the safest optimal path for the hiker to take?
A: Route Path: [[257, 190], [262, 195], [271, 204], [273, 206], [280, 213], ……, [736, 976], [764, 1002], [765, 1002]]

Legend: Bareland (ID:0), Rangeland (ID:1), Developed space (ID:2), Road (ID:3), Tree (ID:4), Water (ID:5), Agriculture land (ID:6), Building (ID:7)

Fig. 1: A typical example from our NeSy-Route Benchmark. NeSy-Route evaluates MLLMs through a hierarchical reasoning pipeline consisting of three integrated tasks. Task 1 involves extracting symbolic traversability and cost vectors from the provided hiker mission scenario. Task 2 focuses on anchoring these symbolic constraints to specific identified regions within the remote sensing image. Task 3 assesses the capability to generate a sparse waypoint trajectory that avoids obstacles and minimizes the cumulative cost.

MLLMs offer a potential alternative by directly processing remote sensing imagery for route planning, yet existing remote-sensing benchmarks [20, 32] mainly evaluate perception and reasoning, providing little insight into constrained planning abilities. This gap stems either from the difficulty of curating and validating planning tasks at scale or from evaluation protocols that are inaccurate and inadequate.

To address these challenges, we introduce NeSy-Route, a neuro-symbolic evaluation benchmark for constrained route planning built on OpenEarthMap [38], designed to hierarchically assess the route planning capability of MLLMs in remote sensing. The benchmark organizes its tasks across three levels:

1. Textual Constraint Understanding Task. This task evaluates the capacity of MLLMs to decode natural language instructions into formal symbolic logic through 3,607 problems, establishing a critical prerequisite for performing constrained path planning.
2. Text–Image Constraint Alignment Task. This task challenges models to anchor textual constraints onto 12,975 samples with identified visual regions, requiring joint reasoning to determine terrain-specific traversability and priority rankings.
3. Constrained Route Planning Task. This task assesses end-to-end planning performance by requiring the generation of executable waypoint sequences between designated start and end points that strictly honor both topological barriers and land-type constraints across 10,821 samples.

In summary, our main contributions are as follows:

1. We propose NeSy-Route, the first neuro-symbolic evaluation benchmark for route planning in remote sensing. It hierarchically assesses the constrained planning capabilities of MLLMs with a symbolic evaluator across three dimensions: textual constraint understanding, text–image constraint alignment, and constrained route planning.
2. We design an automated, symbolized data generation framework and develop a symbolized route planning evaluator, establishing a closed-loop framework that integrates neural generation with symbolic verification.
3. We conduct a comprehensive evaluation of state-of-the-art MLLMs and reveal significant limitations in their perception, reasoning, and planning capabilities in real remote sensing scenarios. We further discuss potential directions for MLLMs in advancing planning capabilities.

2 Related Work

2.1 Remote Sensing Benchmark

In recent years, the rapid advancement of MLLMs has accelerated the transition of remote sensing research from fundamental perception tasks to complex reasoning scenarios featuring smaller targets or higher spatial resolutions.
While early benchmarks primarily addressed semantic segmentation [4, 35, 38], object detection [16, 18], and classification [37], more recent efforts have expanded into VQA tasks such as spatial relationship reasoning [20, 24, 27], temporal change detection [17], and climate-related risk prediction [33]. However, these datasets are predominantly designed for descriptive reasoning about specific objects and their mutual relationships, thereby neglecting the challenges of route planning within complex remote sensing contexts. XLRS-Bench [32] manually annotates 16 sub-tasks and is the first to introduce the Route Planning task in the remote sensing domain. This task is categorized as an L3 task within its task hierarchy. It adopts a multiple-choice format, requiring the model to select the correct route description from several candidate options. However, such an evaluation paradigm cannot quantitatively assess planning capability in real remote sensing environments, where routes must be generated through spatial reasoning rather than selected from predefined candidate options.

2.2 Multimodal Large Language Model

MLLMs have advanced rapidly in recent years, and most of them now support inputs at native image resolution. Representative closed-source models include Gemini-3-Pro [7], GPT-5.1 [31], and Qwen3-VL-Plus [2], which demonstrate strong capabilities in perception and reasoning. Among open-source models, dense architectures include LLaVA-OneVision [1], InternVL-3.5-8B [36], Qwen3-VL-8B [2], and Qwen3.5-27B [29], while mixture-of-experts (MoE) architectures include Qwen3-VL-30B-A3B [2] and Qwen3-VL-235B. These open-source models also achieve competitive performance. MLLMs have also shown promising progress in the remote sensing domain. GeoChat [15], built on the LLaVA-1.5 architecture [22], is trained to enable multi-task conversational capabilities. SkySenseGPT [8], also based on LLaVA-1.5, achieves improved performance across a variety of remote sensing tasks through large-scale training. EarthGPT [40] focuses on understanding multi-sensor remote sensing data.

[Figure 2: pipeline diagram with panels Knowledge base, Generation Config (1. Task Type, 2. Agent Type, 3. Starting Point Land Type, 4. Ending Point Land Type, 5. Traversability Vector), Question Generation, Logic Consistency, Segmentation Map, Erosion Operation, Cost Map, and A-Star Search.]

Fig. 2: An overview of the automated data generation framework. The pipeline integrates dual-LLM logical verification for query synthesis, morphological erosion for semantic visual grounding, and a constrained A-Star search algorithm for deriving mathematically optimal trajectories under symbolic rules.

3 Automated Data Generation Framework

As illustrated in Fig. 2, the automated framework generates image-text pairs, GT vectors, and optimal trajectories for the sub-tasks through a three-stage process.

3.1 Knowledge Base Construction

Following OpenEarthMap [38] standards, we define our knowledge base (KB) constraints across eight land-cover types: Bareland (ID:0), Rangeland (ID:1), Developed space (ID:2), Road (ID:3), Tree (ID:4), Water (ID:5), Agriculture land (ID:6), and Building (ID:7). Let $C = \{c_0, c_1, \ldots, c_{L-1}\}$ be the set of $L = 8$ predefined land-cover classes. Specifically, we define three levels of traversability: always traversable, conditionally traversable, and non-traversable:

– Always Traversable ($T_A$): Terrains that are always passable by default. These areas are considered accessible unless explicitly restricted by specific problem constraints.
– Conditionally Traversable ($T_C$): Terrains that are initially impassable. These areas are considered blocked unless specific conditions for passage are met, as defined by the problem constraints.
– Non-traversable ($T_N$): Terrains that are permanently impassable. These areas are permanent obstacles that cannot be crossed under any circumstances, regardless of task-specific requirements.

Table 1: Agent-Specific Traversability Definitions

| Agent | $T_A$ | $T_C$ | $T_N$ |
|---|---|---|---|
| Pedestrian | $\{c_2, c_3\}$ | $\{c_0, c_1, c_4, c_6\}$ | $\{c_5, c_7\}$ |
| Car | $\{c_2, c_3\}$ | $\{c_0, c_1, c_6\}$ | $\{c_4, c_5, c_7\}$ |
| Drone | $\{c_0, c_1, c_2, c_3, c_5, c_6\}$ | $\{c_4, c_7\}$ | $\emptyset$ |
| Boat | $\{c_5\}$ | $\emptyset$ | $\{c_0, c_1, c_2, c_3, c_4, c_6, c_7\}$ |

Based on the physical properties of each land cover, we define four agent types (pedestrian, vehicle (car), drone, and boat) with the traversability constraints listed in Tab. 1.

Table 2: Agent-Specific Task Priority Rankings

| Agent | Fastest | Comfort | Safest | Shortest |
|---|---|---|---|---|
| Pedestrian | $c_0 = c_1 = c_2 = c_3 = c_4 = c_6$ | $c_2 > c_3 > c_6 > c_1 > c_0 > c_4$ | $c_2 > c_3 > c_6 > c_1 > c_0 > c_4$ | $c_0 = c_1 = c_2 = c_3 = c_4 = c_6$ |
| Car | $c_3 > c_2 > c_6 > c_1 > c_0$ | $c_3 > c_2 > c_6 > c_1 > c_0$ | $c_3 > c_2 > c_6 > c_1 > c_0$ | $c_0 = c_1 = c_2 = c_3 = c_6$ |
| Drone | $c_0 = c_1 = c_2 = c_3 = c_5 = c_6 = c_7 > c_4$ | $c_0 = c_1 = c_2 = c_3 = c_6 = c_7 > c_5 > c_4$ | $c_0 = c_1 = c_2 = c_3 = c_6 > c_4 = c_5 = c_7$ | $c_0 = c_1 = c_2 = c_3 = c_5 = c_6 = c_7 > c_4$ |
| Boat | $c_5$ | $c_5$ | $c_5$ | $c_5$ |

Additionally, we define four routing objectives in Tab. 2: shortest, fastest, safest, and most comfortable. For each routing objective, a priority ranking of land-cover types is established, which determines the preferred terrains for each agent depending on the specific goal.
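As a rough illustration of how the knowledge base could yield ground-truth vectors, the sketch below encodes Tab. 1 and one row of Tab. 2 and derives the two vectors shown in Fig. 1. The function names, the `allowed_conditional` argument, and the tier-numbering rule (lowest traversable tier gets 1, non-traversable classes get 0) are assumptions inferred from the Fig. 1 example, not the authors' implementation.

```python
# Land-cover IDs follow Sec. 3.1: 0 Bareland, 1 Rangeland, 2 Developed space,
# 3 Road, 4 Tree, 5 Water, 6 Agriculture land, 7 Building.
L = 8

# Always / conditionally traversable classes per agent, copied from Tab. 1.
T_A = {"pedestrian": {2, 3}, "car": {2, 3}, "drone": {0, 1, 2, 3, 5, 6}, "boat": {5}}
T_C = {"pedestrian": {0, 1, 4, 6}, "car": {0, 1, 6}, "drone": {4, 7}, "boat": set()}


def traversability_vector(agent, allowed_conditional):
    """Binary vector: always-traversable classes plus the conditional
    classes that the scenario explicitly permits (hypothetical argument)."""
    allowed = T_A[agent] | (T_C[agent] & set(allowed_conditional))
    return [1 if i in allowed else 0 for i in range(L)]


def preference_vector(tiers, v_trav):
    """Ordinal vector from a ranking given as tiers, most preferred first.

    Assumed rule: non-traversable classes are dropped and get 0; the
    remaining tiers are numbered 1, 2, ... from least to most preferred.
    """
    kept = [[c for c in tier if v_trav[c]] for tier in tiers]
    kept = [tier for tier in kept if tier]
    v_pref = [0] * L
    for rank, tier in enumerate(reversed(kept), start=1):
        for c in tier:
            v_pref[c] = rank
    return v_pref


# Pedestrian "Safest" ranking from Tab. 2: c2 > c3 > c6 > c1 > c0 > c4.
PEDESTRIAN_SAFEST = [{2}, {3}, {6}, {1}, {0}, {4}]

# Fig. 1 scenario: trees must be avoided, so only c0, c1, c6 are unlocked.
v_trav = traversability_vector("pedestrian", {0, 1, 6})
v_pref = preference_vector(PEDESTRIAN_SAFEST, v_trav)
print(v_trav)  # [1, 1, 1, 1, 0, 0, 1, 0]
print(v_pref)  # [1, 2, 5, 4, 0, 0, 3, 0]
```

Under these assumptions the output reproduces the Traversability and Preference vectors of the Fig. 1 example; tied classes in a ranking simply share one tier.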
3.2 Problem Formulation

Given a remote sensing visible-light image $I \in \mathbb{R}^{H \times W \times 3}$ and a natural language query $Q$ describing a route planning scenario, we formulate the constrained route planning task as a hierarchical evaluation process. The objective is to derive symbolic constraints from $Q$, align them with the visual contexts in $I$, and generate an optimal trajectory $\tau^*$ that minimizes the cost function derived from these constraints.

Task 1: Textual Constraint Understanding. Given $Q$, the model extracts symbolic traversability and preference vectors via $f_{\text{text}}: Q \to (V_{\text{trav}}, V_{\text{pref}})$.

– $V_{\text{trav}} \in \{0, 1\}^L$: Binary vector where $V_{\text{trav}}[i] = 1$ if class $c_i$ is traversable for the specific agent, and 0 otherwise.
– $V_{\text{pref}} \in \{0, \ldots, K\}^L$: Ordinal vector representing the priority of class $c_i$ under the specified task objective, where $K$ is the maximum priority tier. The higher the value, the higher the priority.

Task 2: Text–Image Constraint Alignment. Given $Q$ and the image $I$ annotated with regions and their corresponding IDs, the model outputs symbolic region, traversability, and preference vectors: $f_{\text{align}}: (Q, I) \to (V'_{\text{reg}}, V'_{\text{trav}}, V'_{\text{pref}})$.

– $V'_{\text{reg}} \in \mathbb{N}^L$: A vector where $V'_{\text{reg}}[i] = \text{Region\_ID}$ if land cover $c_i$ is present and identified in the image, and 0 otherwise.
– $V'_{\text{trav}}$ and $V'_{\text{pref}}$: Represent the traversability and preference status specifically for the detected regions, to evaluate whether the model can correctly apply symbolic rules to the finite set of terrains visible in $I$.
Based on $V'_{\text{pref}}$, we define the cost map $W(p)$ for every pixel $p \in I$ according to its land-cover class $c(p)$:

$$W(p) = \begin{cases} \infty, & \text{if } V_{\text{trav}}[c(p)] = 0 \\ \max(V'_{\text{pref}}) - V_{\text{pref}}[c(p)] + 1, & \text{if } V_{\text{trav}}[c(p)] = 1 \end{cases} \tag{1}$$

Task 3: Constrained Route Planning. Given $Q$, $I$, a start point $S \in \mathbb{N}^2$, and an end point $E \in \mathbb{N}^2$, the objective is to generate a trajectory $\tau = \{p_1, p_2, \ldots, p_n\}$ that minimizes the cumulative spatial cost, defined as $f_{\text{plan}}: (I, Q, S, E) \to \tau$:

$$\min_{\tau} \sum_{i=1}^{n} W(p_i) \tag{2}$$

subject to $p_1 = S$ and $p_n = E$.

3.3 Symbolized Data Generation

Controllable Symbolic Query Synthesis. We initiate the pipeline by sampling a configuration tuple $\sigma = (\text{agent}, \text{task}, C_{\text{start/end}}, C_{\text{allowed}})$. The ground-truth symbolic vectors are deterministically derived via the KB, which ensures that the underlying constraint is axiomatically governed by the rules defined in Sec. 3.1:

$$f_{\text{KB}}(\sigma) \to (V^{gt}_{\text{trav}}, V^{gt}_{\text{pref}}) \tag{3}$$

We utilize DeepSeek-V3.2 [21] to synthesize the natural language query $Q$ based on $\sigma$. To enforce semantic alignment, the model is required to perform self-inference, re-deriving the logic vectors from its own generated text:

$$Q = M(\sigma), \quad (\hat{V}_{\text{trav}}, \hat{V}_{\text{pref}}) = P_{\text{self}}(Q) \tag{4}$$

where $M$ denotes the generative mapping and $P_{\text{self}}$ represents the self-inference process, which ensures that the self-inferred vectors match the KB's GT. In addition, we utilize Gemini-3-Pro [7] to verify that the textual descriptions in $Q$ for all $L = 8$ land-cover classes are strictly compliant with the formal KB definitions, minimizing hallucinations and ensuring high-fidelity alignment between textual reasoning and symbolic constraints.
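Returning to Eqs. (1) and (2), the following minimal sketch builds the cost map from a class-ID grid and then finds a minimum-cost trajectory. It is an illustration under stated assumptions: the grid is 4-connected, coordinates are (row, column), a single pair of vectors is used for both terms of Eq. (1), and plain Dijkstra search stands in for the heuristic search used by the benchmark. The helper names `build_cost_map` and `min_cost_path` are hypothetical.

```python
import heapq
import math

INF = math.inf


def build_cost_map(class_map, v_trav, v_pref):
    """Eq. (1): infinite cost for non-traversable classes, otherwise
    max(v_pref) - v_pref[class] + 1, so preferred terrain is cheaper."""
    top = max(v_pref)
    return [[(top - v_pref[c] + 1) if v_trav[c] == 1 else INF for c in row]
            for row in class_map]


def min_cost_path(cost, start, end):
    """Eq. (2): minimise the summed pixel cost from start to end.

    Dijkstra on a 4-connected grid (an assumption); the start pixel's own
    cost is included because Eq. (2) sums over every trajectory pixel.
    """
    h, w = len(cost), len(cost[0])
    if cost[start[0]][start[1]] == INF or cost[end[0]][end[1]] == INF:
        return None, INF
    best = {start: cost[start[0]][start[1]]}
    parent = {}
    heap = [(best[start], start)]
    while heap:
        g, node = heapq.heappop(heap)
        if node == end:
            break
        if g > best[node]:
            continue  # stale heap entry
        r, c = node
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < h and 0 <= nc < w and cost[nr][nc] != INF:
                ng = g + cost[nr][nc]
                if ng < best.get((nr, nc), INF):
                    best[(nr, nc)] = ng
                    parent[(nr, nc)] = node
                    heapq.heappush(heap, (ng, (nr, nc)))
    if end not in best:
        return None, INF
    path = [end]
    while path[-1] != start:
        path.append(parent[path[-1]])
    return path[::-1], best[end]


# Vectors from the Fig. 1 example; the 3x3 class map is a toy illustration.
v_trav = [1, 1, 1, 1, 0, 0, 1, 0]
v_pref = [1, 2, 5, 4, 0, 0, 3, 0]
class_map = [[0, 4, 2],
             [0, 4, 2],
             [2, 2, 2]]
cost_map = build_cost_map(class_map, v_trav, v_pref)
path, total = min_cost_path(cost_map, (0, 0), (0, 2))
print(total)  # 15
```

In the toy map the tree column (class 4) is impassable, so the only route walks down the bareland column (cost 5 per pixel) and back up through developed space (cost 1 per pixel).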
Semantic Visual Grounding and Region Identification. We ground the symbolic constraints from Task 1 in the segmentation masks provided by OpenEarthMap [38]. To maintain the structural integrity of critical terrains, we perform selective morphological filtering. Small isolated regions are filled with the majority class of their neighborhood, with the exception of functionally narrow classes such as Water (ID: 5), Road (ID: 3), and Building (ID: 7). This preserves the topological connectivity required for path planning while reducing visual clutter. To ensure that each identified region provides a pure visual signal for Task 2, we apply iterative morphological erosion to large-scale land-cover patches. Formally, for a region $R_i$ of class $c_k$:

$$E(R_i) = \{ p \in R_i \mid \forall p' \in N(p),\ c(p') = c_k \} \tag{5}$$

where $N(p)$ denotes the local neighborhood, ensuring the probe area is a maximum inscribed pure region. Instead of random pairing, we ensure that the visual scene $I$ can fully support the logic in $Q$. A query $Q$ is matched with an image $I$ only if the set of land covers present in the image, $C_I$, is a superset of the allowed and start/end classes specified in the query: $C_I \supseteq C_{\text{allowed}}$. Specifically, we select the largest-area region of each class present in $I$ as the primary region of interest, which forces the model to reason across diverse ground conditions and complex topological structures.

Constrained Trajectory Generation and Optimization. To ensure the feasibility of the planning mission under specific agent constraints, we first construct a region-level connectivity graph $G = (V, E)$. Each node $v_i \in V$ represents a unique land-cover region $R_i$ identified in Stage 2. We construct an initial adjacency matrix $A \in \{0, 1\}^{N \times N}$, where $A_{ij} = 1$ if regions $R_i$ and $R_j$ are connected, and 0 otherwise.
To incorporate the symbolic constraints, we derive a constrained adjacency matrix $\tilde{A}$ by masking $A$ with $V^{gt}_{\text{trav}}$:

$$\tilde{A}_{ij} = A_{ij} \cdot V^{gt}_{\text{trav}}[c(R_i)] \cdot V^{gt}_{\text{trav}}[c(R_j)] \tag{6}$$

where $c(R_i)$ denotes the class ID of region $R_i$. A candidate pair $(R_s, R_e)$ is validated if a path exists between them. To ensure a non-trivial navigation challenge and mitigate spatial biases, we specifically prioritize pairs $(R_s, R_e)$ that are spatially distant yet topologically connected. Upon confirming reachability, we project the symbolic vectors onto the pixel grid to construct the cost map $W(p)$ following Eq. (1). We then implement the A-Star search algorithm [10] to find the optimal path. For any pixel $p$, the total estimated cost $f(p)$ is defined as:

$$f(p) = g(p) + h(p) \tag{7}$$

where $g(p)$ is the actual cumulative cost from the start $S$ to $p$, and $h(p)$ is the heuristic function estimating the cost from $p$ to the destination $E$. We employ the Euclidean distance as the heuristic, $h(p) = \lVert p - E \rVert_2$. The optimality of the generated path is guaranteed by the following properties:

– Admissibility: Since the minimum cost for any traversable pixel is $W(p) \ge 1$ (following our cost definition), the Euclidean distance always satisfies $h(p) \le d^*(p, E)$, where $d^*$ is the actual minimum cost. This bound relies on each move between adjacent pixels costing at least its Euclidean length.
– Consistency: Given that $W(p) \ge 1$ and the Euclidean distance satisfies the triangle inequality, the heuristic is consistent, meaning $h(p) \le W(p, q) + h(q)$ for any adjacent pixels $p$ and $q$, where $W(p, q)$ denotes the cost of moving from $p$ to $q$.

Because $h(p)$ is both admissible and consistent, the A-Star algorithm is guaranteed to find the optimal trajectory $\tau^*$.

4 NeSy-Route Benchmark

4.1 Dataset Statistics and Difficulty Stratification

The NeSy-Route benchmark provides a large-scale collection of hierarchical Q-A pairs.
Task 1 comprises 3,607 symbolic samples derived from the knowledge base, specifically designed to evaluate the model's comprehension of textual constraints. For Task 2, the reasoning complexity escalates with the semantic density of the visual scene; thus, we stratify the dataset into three difficulty tiers based on the number of annotated land-cover classes $M$ present in the image. This subset is partitioned into Easy ($1 \le M \le 4$) with 7,659 samples, Medium ($5 \le M \le 6$) with 3,712 samples, and Hard ($7 \le M \le L$) with 1,604 samples. We quantify the route planning challenge in Task 3 using the Complexity Score ($D$):

$$D = \lambda_1 H_{\text{inter}} + \lambda_2 H_{\text{intra}} + \lambda_3 H_{\text{count}} + \lambda_4 C_{\text{topo}} \tag{8}$$

where $H_{\text{inter}} = -\sum_k u_k \log_2 u_k$ and $H_{\text{count}} = -\sum_k v_k \log_2 v_k$ measure diversity via area proportions $u_k$ and region counts $v_k$; $H_{\text{intra}} = \frac{1}{L} \sum_k \frac{-\sum_j p_{kj} \log_2 p_{kj}}{\log_2 N_k}$ quantifies intra-class fragmentation with $p_{kj} = a_{kj} / A_k$ and region count $N_k$; and $C_{\text{topo}} = \min\left(1.0, \frac{2|E|}{|V|(|V| - 1)}\right)$ evaluates adjacency graph density. Based on $D$, Task 3 is stratified into Easy (6,492 samples), Medium (2,705 samples), and Hard (1,624 samples), ensuring a granular assessment across complex landscapes.

4.2 Symbolized Evaluator

A typical example in NeSy-Route is shown in Fig. 1. To quantitatively evaluate performance across the three tasks, we employ a comprehensive set of metrics to assess the model's textual constraint understanding, text–image constraint alignment, and the feasibility and optimality of the generated trajectories.

Task 1: Textual Constraint Understanding. For Task 1, we employ three primary metrics to assess the precision of symbolic constraint extraction from the query $Q$.
First, the Traversability Matching (TM) measures the exact alignment of the predicted binary traversability vector with the ground truth:

$$TM = \frac{1}{N} \sum_{k=1}^{N} \mathbb{I}\left(V_{\text{trav},k} = V^{gt}_{\text{trav},k}\right) \tag{9}$$

where $\mathbb{I}$ is the indicator function and $N$ is the total number of samples. Second, the Preference Ranking Correlation (PR) assesses the ordinal alignment of the preference vector. Let $M = \lVert V^{gt}_{\text{trav}} \rVert_1$ denote the number of land-cover classes permissible for navigation. We adopt the Kendall Tau coefficient [14] as the primary indicator, which has been used in many tasks [9, 11, 13]:

$$PR = \frac{N_c - N_d}{\binom{M}{2}} \tag{10}$$

where $N_c$ and $N_d$ are scalar counts of the concordant and discordant pairs among the $\binom{M}{2}$ possible combinations. Note that PR is evaluated only for terrains where $V^{gt}_{\text{trav}}[i] = 1$, so that the ranking reflects the optimization logic among traversable surfaces. Finally, the Fully Matching Accuracy (FM) requires simultaneous mastery of both traversability rules and preference rankings:

$$FM = \frac{1}{N} \sum_{k=1}^{N} \mathbb{I}\left(\left(V_{\text{trav},k} = V^{gt}_{\text{trav},k}\right) \wedge \left(V_{\text{pref},k} = V^{gt}_{\text{pref},k}\right)\right) \tag{11}$$

This allows for a granular analysis of how models internalize the symbolic logic embedded in textual constraints.

Task 2: Text–Image Constraint Alignment. For Task 2, we evaluate the text–image constraint alignment using the Region Matching Rate (RM), TM, and PR. The RM measures the global accuracy of region identification:

$$RM = \frac{1}{N \cdot L} \sum_{k=1}^{N} \sum_{i=0}^{L-1} \mathbb{I}\left(V'_{\text{reg},k}[i] = V^{gt}_{\text{reg},k}[i]\right) \tag{12}$$

The TM and PR metrics are applied to the localized vectors $V'_{\text{trav}}$ and $V'_{\text{pref}}$ following Eq. (9) and Eq. (10). This allows for the precise identification of failure bottlenecks within the cross-modal reasoning process.
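The vector metrics of Eqs. (9) to (12) can be sketched in a few lines. The function names below are hypothetical, ties are counted as neither concordant nor discordant (which matches dividing by the full pair count in Eq. (10)), and returning 0.0 when fewer than two classes are traversable is an assumption, since the text does not cover that case.

```python
from itertools import combinations


def traversability_matching(pred_travs, gt_travs):
    """Eq. (9): share of samples whose traversability vector matches exactly."""
    return sum(p == g for p, g in zip(pred_travs, gt_travs)) / len(gt_travs)


def preference_ranking(pred_pref, gt_pref, gt_trav):
    """Eq. (10) for one sample: (concordant - discordant) / C(M, 2),
    computed only over classes that are traversable in the ground truth."""
    idx = [i for i, t in enumerate(gt_trav) if t == 1]
    pairs = list(combinations(idx, 2))
    if not pairs:
        return 0.0  # assumption: undefined case mapped to 0
    score = 0
    for i, j in pairs:
        s = (pred_pref[i] - pred_pref[j]) * (gt_pref[i] - gt_pref[j])
        score += (s > 0) - (s < 0)  # +1 concordant, -1 discordant, 0 tie
    return score / len(pairs)


def fully_matching(pred_travs, pred_prefs, gt_travs, gt_prefs):
    """Eq. (11): both vectors must match exactly for a sample to count."""
    hits = sum(pt == gt and pp == gp for pt, pp, gt, gp
               in zip(pred_travs, pred_prefs, gt_travs, gt_prefs))
    return hits / len(gt_travs)


def region_matching(pred_regs, gt_regs):
    """Eq. (12): element-wise accuracy of region vectors over all samples."""
    total = sum(len(g) for g in gt_regs)
    hits = sum(a == b for p, g in zip(pred_regs, gt_regs) for a, b in zip(p, g))
    return hits / total


# Ground truth from Fig. 1; the prediction swaps the ranks of c2 and c3.
gt_trav = [1, 1, 1, 1, 0, 0, 1, 0]
gt_pref = [1, 2, 5, 4, 0, 0, 3, 0]
pred_pref = [1, 2, 4, 5, 0, 0, 3, 0]
pr = preference_ranking(pred_pref, gt_pref, gt_trav)
print(round(pr, 3))  # 0.8
```

With five traversable classes there are ten pairs; the single swapped pair gives nine concordant and one discordant, hence 0.8.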
Task 3: Constrained Route Planning. For Task 3, we evaluate the global trajectory planning by reconstructing a continuous dense path $\hat{\tau}$ from the predicted sparse waypoints $\tau$. Let $S_A = \{ k \in \{1, \ldots, N\} \mid \forall p \in \tau_k,\ W(p) < \infty \}$ denote the subset of samples where all waypoints reside in traversable regions, and let $S_B = \{1, \ldots, N\} \setminus S_A$ be the complementary subset of non-compliant samples. The Adherence Rate (AR) is thus defined as:

$$AR = \frac{|S_A|}{N} \tag{13}$$

For samples in $S_A$, the dense trajectory $\hat{\tau}$ is reconstructed by connecting adjacent waypoints using the A-Star search algorithm on the cost map $W(p)$. For samples in $S_B$, we utilize the Bresenham line algorithm [5] for interpolation. The Cost Ratio (CR) is then calculated for $S_A$ to assess optimality relative to the ground-truth trajectory $\tau^*$:

$$CR = \frac{1}{|S_A|} \sum_{k \in S_A} \frac{\sum_{p \in \hat{\tau}_k} W(p)}{\sum_{q \in \tau^*_k} W(q)} \tag{14}$$

Conversely, for the non-compliant samples in $S_B$, the Violation Ratio (VR) quantifies the proportion of pixels in the reconstructed path that infringe upon non-traversable regions:

$$VR = \frac{1}{|S_B|} \sum_{k \in S_B} \frac{\left|\{ p \in \hat{\tau}_k \mid W(p) = \infty \}\right|}{|\hat{\tau}_k|} \tag{15}$$

Finally, to evaluate the geometric proximity between the reconstructed dense trajectory $\hat{\tau}$ and the optimal trajectory $\tau^*$, we utilize the Chamfer Distance [3] (CD), a standard metric widely adopted in autonomous driving [28, 30, 39] for trajectory comparison, across all $N$ samples:

$$CD(\hat{\tau}, \tau^*) = \frac{1}{N} \sum_{k=1}^{N} \left[ \frac{1}{|\hat{\tau}_k|} \sum_{p \in \hat{\tau}_k} \min_{q \in \tau^*_k} \lVert p - q \rVert_2 + \frac{1}{|\tau^*_k|} \sum_{q \in \tau^*_k} \min_{p \in \hat{\tau}_k} \lVert q - p \rVert_2 \right] \tag{16}$$

This multi-dimensional metric system enables a rigorous quantification of both logical adherence and spatial optimality in trajectory evaluation.

4.3 Comparison with Existing Benchmarks

As the comparison in Tab. 3 shows, NeSy-Route distinguishes itself through three key innovations.
First, it significantly expands the scale of remote sensing planning tasks, achieving a nearly order-of-magnitude increase over XLRS-Bench [32] with 10,821 rigorously constrained samples. Second, its hierarchical and symbolic evaluation paradigm decouples perception, reasoning, and planning, enabling researchers to precisely trace failure bottlenecks to specific cognitive stages. Finally, by integrating high-fidelity semantic segmentation with heuristic search, our automated data generation framework ensures that every ground-truth trajectory represents a mathematically proven global optimum, establishing an objective and highly extensible gold standard for route planning.

Table 3: Comparison of NeSy-Route with existing remote sensing benchmarks. Volume is the key statistic; Planning, Hierarchical, and Symbolic describe the evaluation paradigm; GT Optimality and Automated describe the construction pipeline.

| Dataset | Volume | Planning | Hierarchical | Symbolic | GT Optimality | Automated |
|---|---|---|---|---|---|---|
| RSVQA [23] | - | ✗ | ✓ | ✗ | ✓ | ✓ |
| RSIVQA [42] | - | ✗ | ✗ | ✗ | ✓ | ✓ |
| EarthVQA [34] | - | ✗ | ✗ | ✗ | ✓ | ✗ |
| LRSVQA [25] | - | ✗ | ✓ | ✗ | ✗ | ✗ |
| VRS-Bench [20] | - | ✗ | ✗ | ✗ | ✗ | ✗ |
| FIT-RSRC [24] | - | ✗ | ✓ | ✗ | ✗ | ✗ |
| XLRS-Bench [32] | 1,130 | ✓ | ✓ | ✗ | ✓ | ✗ |
| NeSy-Route | 10,821 | ✓ | ✓ | ✓ | ✓ | ✓ |

Table 4: Performance comparison on Task 1: Textual Constraint Understanding. Metrics include Traversability Matching (TM), Preference Ranking Correlation (PR), and Fully Matching Accuracy (FM). The best and second-best results are highlighted in bold and underlined, respectively.

| Method | TM ↑ | PR ↑ | FM ↑ |
|---|---|---|---|
| Closed-source Models | | | |
| GPT-5.1 | 77.02 | 0.959 | 69.20 |
| Gemini-3-Pro | 98.34 | 0.962 | 92.24 |
| Qwen3-VL-Plus | 72.97 | 0.923 | 66.23 |
| Open-source Models | | | |
| LLaVA-OneVision | 26.68 | 0.187 | 9.32 |
| InternVL-3.5-8B | 52.48 | 0.141 | 21.18 |
| Qwen3-VL-8B | 53.01 | 0.068 | 27.67 |
| Qwen3-VL-30B-A3B | 66.20 | 0.752 | 44.44 |
| Qwen3-VL-32B | 69.75 | -0.616 | 31.69 |
| Qwen3-VL-235B-A22B | 72.91 | 0.872 | 52.98 |
| Qwen3.5-27B | 80.32 | 0.906 | 63.52 |

5 Experiment

5.1 Experimental Setup

To evaluate the reasoning and planning capabilities of MLLMs on the NeSy-Route benchmark, we evaluate a diverse suite of state-of-the-art models in two groups: (a) proprietary frontier models representing the current performance ceiling, including GPT-5.1 [31], Gemini-3-Pro [7], and Qwen3-VL-Plus [2]; (b) open-source MLLMs spanning various parameter scales and architectures to investigate performance in route planning tasks, including the Qwen3-VL series (8B-Instruct, 30B-A3B-Instruct, 32B-Instruct, and 235B-A22B-Instruct) [2], Qwen3.5-27B [29], InternVL-3.5-8B [36], and LLaVA-OneVision [1]. For a rigorous and fair comparison, all models are evaluated in a zero-shot setting using uniform prompts for each task, ensuring that the results reflect their inherent perception, reasoning, and planning capabilities. During the experiments we observe that both GeoChat [15] and SkySenseGPT [8] are built upon the LLaVA-1.5 [22] architecture. Neither model was trained with data requiring individual coordinate points as outputs, and both were designed for specific remote sensing tasks. As a result, their instruction-following capability is extremely limited, making them unable to complete the evaluation process of NeSy-Route. Therefore, the performance of these domain-specific models is not reported in our experimental results.

Table 5: Evaluation results for Task 2: Text–Image Constraint Alignment. The model performance is assessed via Region Matching Rate (RM), Traversability Matching (TM), and Preference Ranking Correlation (PR). The best and second-best results are indicated in bold and underlined, respectively.

| Method | Easy RM ↑ | Easy TM ↑ | Easy PR ↑ | Medium RM ↑ | Medium TM ↑ | Medium PR ↑ | Hard RM ↑ | Hard TM ↑ | Hard PR ↑ | Avg RM ↑ | Avg TM ↑ | Avg PR ↑ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Closed-source Models | | | | | | | | | | | | |
| GPT-5.1 | 69.79 | 66.37 | 0.737 | 48.90 | 22.84 | 0.567 | 50.09 | 16.72 | 0.510 | 56.26 | 35.31 | 0.605 |
| Gemini-3-Pro | 78.16 | 58.14 | 0.582 | 63.17 | 10.78 | 0.402 | 71.70 | 13.59 | 0.404 | 71.01 | 27.50 | 0.463 |
| Qwen3-VL-Plus | 72.44 | 69.50 | 0.778 | 46.58 | 25.85 | 0.582 | 53.56 | 16.67 | 0.541 | 57.53 | 37.34 | 0.634 |
| Open-source Models | | | | | | | | | | | | |
| LLaVA-OneVision | 24.75 | 24.27 | -0.001 | 16.41 | 9.93 | 0.064 | 12.84 | 9.36 | 0.157 | 18.00 | 14.52 | 0.073 |
| InternVL-3.5-8B | 57.99 | 56.53 | 0.011 | 35.48 | 16.35 | 0.052 | 32.69 | 7.56 | 0.117 | 42.05 | 26.81 | 0.060 |
| Qwen3-VL-8B | 33.21 | 41.89 | -0.107 | 16.88 | 11.97 | -0.024 | 15.95 | 13.07 | 0.086 | 22.01 | 22.31 | -0.015 |
| Qwen3-VL-30B-A3B | 72.27 | 65.16 | 0.280 | 51.00 | 40.55 | 0.365 | 48.66 | 22.65 | 0.617 | 57.31 | 42.79 | 0.421 |
| Qwen3-VL-32B | 67.31 | 62.62 | -0.190 | 37.48 | 22.15 | -0.176 | 39.26 | 21.04 | -0.154 | 48.02 | 35.27 | -0.173 |
| Qwen3-VL-235B-A22B | 64.25 | 71.38 | 0.749 | 33.81 | 43.80 | 0.667 | 32.92 | 43.20 | 0.690 | 43.66 | 52.79 | 0.702 |
| Qwen3.5-27B | 71.80 | 63.95 | 0.576 | 52.13 | 16.04 | 0.483 | 57.74 | 19.48 | 0.505 | 60.56 | 33.16 | 0.521 |

5.2 Main Results

Task 1 reveals a clear performance gradient across models in textual constraint understanding. The results summarized in Tab. 4 further illustrate this trend across the evaluated models.
Closed-source models, exemplified by Gemini-3-Pro, demonstrate strong constraint understanding capability (TM of 98.34% and FM of 92.24%). This indicates that frontier models are highly capable of precisely parsing nested hard constraints for heterogeneous agents and constructing rigorous logical chains of priority. Concurrently, Qwen3.5-27B stands out as a representative open-source model, attaining a TM of 80.32% and surpassing the closed-source GPT-5.1 (77.02%) and Qwen3-VL-Plus (72.97%), which validates its constraint understanding capabilities.

Table 6: Experimental results on Task 3: Constrained Route Planning. The trajectory quality is measured by Adherence Rate (AR), Violation Ratio (VR), Cost Ratio (CR), and Chamfer Distance (CD). The top two methods are denoted by bold and underlined formats, respectively.

| Method | Easy AR ↑ | Easy VR ↓ | Easy CR ↓ | Easy CD ↓ | Medium AR ↑ | Medium VR ↓ | Medium CR ↓ | Medium CD ↓ | Hard AR ↑ | Hard VR ↓ | Hard CR ↓ | Hard CD ↓ | Avg AR ↑ | Avg VR ↓ | Avg CR ↓ | Avg CD ↓ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Closed-source Models | | | | | | | | | | | | | | | | |
| GPT-5.1 | 25.90 | 31.70 | 1.47 | 66.71 | 14.90 | 35.8 | 2.71 | 74.79 | 12.10 | 38.90 | 1.32 | 82.12 | 17.63 | 35.47 | 1.83 | 74.54 |
| Gemini-3-Pro | 27.40 | 28.30 | 1.20 | 58.09 | 15.40 | 32.70 | 1.25 | 70.17 | 13.30 | 35.90 | 1.28 | 77.96 | 18.70 | 32.30 | 1.24 | 68.74 |
| Qwen3-VL-Plus | 42.80 | 35.30 | 7.16 | 53.78 | 25.80 | 37.80 | 6.61 | 63.36 | 20.40 | 39.60 | 2.49 | 68.60 | 29.67 | 37.57 | 5.42 | 61.91 |
| Open-source Models | | | | | | | | | | | | | | | | |
| LLaVA-OneVision | 37.64 | 39.47 | 12.42 | 103.76 | 21.11 | 38.24 | 9.24 | 122.91 | 16.26 | 37.54 | 13.63 | 160.41 | 25.00 | 38.42 | 35.29 | 129.03 |
| InternVL-3.5-8B | 34.80 | 36.50 | 14.82 | 109.88 | 19.40 | 38.70 | 15.06 | 127.07 | 15.10 | 41.30 | 21.38 | 146.55 | 23.10 | 38.83 | 17.09 | 127.83 |
| Qwen3-VL-8B | 40.30 | 37.20 | 13.58 | 170.86 | 24.20 | 39.20 | 17.68 | 181.78 | 18.40 | 41.60 | 10.64 | 232.09 | 27.63 | 39.33 | 13.97 | 194.91 |
| Qwen3-VL-30B-A3B | 36.70 | 35.70 | 9.36 | 95.35 | 21.30 | 38.50 | 8.36 | 107.58 | 16.90 | 40.70 | 6.12 | 130.98 | 24.97 | 38.30 | 7.95 | 111.30 |
| Qwen3-VL-32B | 46.00 | 36.50 | 7.39 | 62.60 | 28.80 | 38.40 | 14.86 | 75.36 | 23.30 | 40.40 | 16.35 | 75.60 | 32.70 | 38.43 | 12.87 | 71.19 |
| Qwen3-VL-235B-A22B | 40.90 | 35.50 | 10.78 | 58.44 | 24.00 | 37.30 | 10.14 | 64.91 | 18.50 | 39.10 | 5.19 | 69.50 | 27.80 | 37.30 | 8.70 | 64.28 |
| Qwen3.5-27B | 30.10 | 31.90 | 2.29 | 67.81 | 16.50 | 35.70 | 6.96 | 86.89 | 13.70 | 38.30 | 1.26 | 184.52 | 20.10 | 35.30 | 10.51 | 113.07 |

Task 2 indicates that effective text–image constraint alignment remains challenging for current MLLMs and critically relies on strong textual reasoning capabilities. Compared with performance in Task 1, all models experience a precipitous decline in performance upon the introduction of visual features, as shown in Tab. 5. This underscores that while MLLMs may internalize textual constraints, their perception ability remains remarkably deficient. Although Gemini-3-Pro demonstrated the highest level of visual identification with an average RM of 71.01%, it lagged significantly behind Qwen3-VL-Plus in constraint alignment (average TM of 27.50% versus 37.34%, and average PR of 0.463 versus 0.634). This misalignment between visual perception and constraint application validates the necessity of decoupled evaluation in Task 2. Notably, the Mixture-of-Experts (MoE) based Qwen3-VL-235B-A22B exhibited exceptional performance in logical alignment, with an average PR of 0.702 and TM of 52.79%, both substantially surpassing GPT-5.1.
Furthermore, empirical data show that models with poor logic parsing in Task 1, such as LLaVA-OneVision, maintain extremely low performance in Task 2, confirming that textual constraint understanding is the cornerstone of text-image constraint alignment.

Task 3 demonstrates that constrained route planning remains a major challenge for current MLLMs, revealing a clear gap between perceptual understanding and effective planning solutions. As shown in Tab. 6, closed-source models such as Gemini-3-Pro exhibit a distinct pattern of high-precision planning. Although the overall adherence rate of 18.70% for Gemini-3-Pro is not the highest due to rigorous logical alignment requirements, its performance on compliant paths demonstrates strong optimality. Specifically, its average CR of 1.24 is the lowest among all models, and its average CD of 68.74 is among the lowest, indicating trajectories close to the optimal one. In contrast, open-source models represented by Qwen3-VL-32B achieve a leading adherence rate of 32.70%, demonstrating robust awareness of local obstacle avoidance. However, the average CR for this model remains at an elevated level of 12.87. This disparity suggests that while open-source models are often successful at remaining within traversable regions, they lack coherent global strategies, resulting in highly redundant and inefficient trajectories. Furthermore, performance metrics deteriorate significantly as environmental complexity increases from the easy to the hard level. For example, the adherence rate of GPT-5.1 decreases from 25.90% to 12.10%, while its violation ratio increases from 31.70 to 38.90. These results confirm that path planning is a high-level cognitive capability that extends beyond simple semantic perception and reasoning. Even with a foundation in terrain perception and reasoning, models still struggle to produce complex and efficient solutions for route planning.
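To make the trajectory metrics discussed above more concrete, the following is a minimal sketch of how a cost ratio and a Chamfer distance between a predicted and a reference route could be computed. It is an illustration under stated assumptions, not the benchmark's evaluation code: the function names, the representation of a route as a list of (row, col) grid cells, the per-cell cost grid, and the symmetric "sum of two directed means" form of the Chamfer distance are all hypothetical choices made here.

```python
import math

def path_cost(path, cost_grid):
    """Sum the per-cell costs of every cell visited by the path (assumed cost model)."""
    return sum(cost_grid[r][c] for r, c in path)

def cost_ratio(pred_path, optimal_path, cost_grid):
    """Cost of the predicted path divided by the cost of the reference path.

    A value of 1.0 means the prediction is as cheap as the reference;
    larger values mean a more expensive (less efficient) route.
    """
    return path_cost(pred_path, cost_grid) / path_cost(optimal_path, cost_grid)

def chamfer_distance(path_a, path_b):
    """Symmetric Chamfer distance between two point sequences.

    Assumed convention: mean nearest-neighbour Euclidean distance from
    a to b, plus the same quantity from b to a.
    """
    def one_way(src, dst):
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)
    return one_way(path_a, path_b) + one_way(path_b, path_a)

# Toy example: a 3x3 grid whose centre cell is expensive to cross.
grid = [[1, 1, 1],
        [1, 5, 1],
        [1, 1, 1]]
reference = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2)]  # goes around the centre
predicted = [(0, 0), (1, 1), (2, 2)]                  # cuts through the centre

print(cost_ratio(predicted, reference, grid))
print(chamfer_distance(predicted, reference))
```

Under this convention, a route can stay geometrically close to the reference (low CD) while still being costly (high CR) if it crosses expensive terrain, which is why the two metrics are reported separately.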
Hierarchical Analysis. Correlation analysis across the three integrated tasks confirms that strong perception and reasoning abilities do not necessarily translate into planning ability. The experimental results demonstrate that comprehensive perception and reasoning abilities constitute a necessary but insufficient condition for the successful execution of complex planning tasks. A deficiency in textual constraint understanding during the first task leads to certain failure in subsequent stages, whereas strong performance in the first and second tasks does not ensure success in the third task. This disparity indicates that models must bridge a fundamental cognitive gap between perceptual attribute recognition and constrained planning.

Discussions. Our experiments reveal two limitations of current MLLMs: 1) They struggle to incorporate land-type constraints, likely because existing training data emphasizes object recognition while neglecting land-type textures and geological characteristics. 2) Current remote sensing MLLMs rely on outdated architectures, limiting their ability to handle complex reasoning and planning tasks, which necessitates the development of powerful remote sensing models based on more advanced architectures.

6 Conclusion

In this paper, we introduce NeSy-Route, a large-scale neuro-symbolic benchmark designed to evaluate constrained route planning in remote sensing. The benchmark assesses the perception, reasoning, and planning capabilities of MLLMs simultaneously through three hierarchical stages: textual constraint understanding, text-image constraint alignment, and constrained route planning. Leveraging high-fidelity semantic segmentation masks and heuristic search algorithms, we construct an algorithmically derived optimal route for each sample, which serves as an objective reference for evaluating model performance.
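As an illustration of how a heuristic search can yield a provably optimal reference route on a mask-derived cost grid, the sketch below runs A* [10] on a small 4-connected grid. It is only a sketch under assumptions: the cost encoding (`None` for forbidden cells, a positive number for the cost of entering a traversable cell), the function name `astar`, and the scaled Manhattan heuristic are hypothetical and are not taken from the benchmark's generation pipeline.

```python
import heapq

def astar(cost_grid, start, goal):
    """Return (total_cost, path) for the cheapest 4-connected route, or None.

    cost_grid[r][c] is the positive cost of entering cell (r, c);
    None marks a forbidden cell (a hard constraint).
    """
    rows, cols = len(cost_grid), len(cost_grid[0])
    min_cost = min(c for row in cost_grid for c in row if c is not None)

    def h(cell):
        # Manhattan distance scaled by the cheapest cell cost never
        # overestimates the remaining cost, so the heuristic is admissible.
        return min_cost * (abs(cell[0] - goal[0]) + abs(cell[1] - goal[1]))

    best = {start: 0}                  # cheapest known cost to reach each cell
    parent = {}                        # back-pointers for path reconstruction
    frontier = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == goal:
            path = [cell]
            while cell in parent:
                cell = parent[cell]
                path.append(cell)
            return g, path[::-1]
        if g > best[cell]:
            continue  # stale queue entry superseded by a cheaper one
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            step = cost_grid[nr][nc]
            if step is None:
                continue  # forbidden terrain
            ng = g + step
            if ng < best.get((nr, nc), float("inf")):
                best[(nr, nc)] = ng
                parent[(nr, nc)] = cell
                heapq.heappush(frontier, (ng + h((nr, nc)), ng, (nr, nc)))
    return None

# Toy grid: the direct route crosses an expensive cell (9); the centre is forbidden.
grid = [[1, 9, 1],
        [1, None, 1],
        [1, 1, 1]]
result = astar(grid, (0, 0), (0, 2))
print(result)
```

Because the heuristic is admissible and consistent for positive cell costs, the first time the goal is popped its cost is minimal, which is what allows such a route to serve as an objective reference.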
Experimental results indicate that while current MLLMs demonstrate competence in pure constraint understanding, they face significant bottlenecks in text-image perception and planning capabilities. NeSy-Route introduces a structured evaluation framework for constraint-aware route planning in remote sensing and provides a new benchmark for studying how MLLMs integrate perception, reasoning, and planning in complex geographic environments.

References

1. An, X., Xie, Y., Yang, K., Zhang, W., Zhao, X., Cheng, Z., Wang, Y., Xu, S., Chen, C., Wu, C., Tan, H., Li, C., Yang, J., Yu, J., Wang, X., Qin, B., Wang, Y., Yan, Z., Feng, Z., Liu, Z., Li, B., Deng, J.: Llava-onevision-1.5: Fully open framework for democratized multimodal training. In: arXiv (2025)
2. Bai, S., Cai, Y., Chen, R., Chen, K., Chen, X., Cheng, Z., Deng, L., Ding, W., Gao, C., Ge, C., et al.: Qwen3-vl technical report. arXiv preprint (2025)
3. Barrow, H.G., Tenenbaum, J.M., Bolles, R.C., Wolf, H.C.: Parametric correspondence and chamfer matching: Two new techniques for image matching. Tech. rep. (1977)
4. Boguszewski, A., Batorski, D., Ziemba-Jankowska, N., Dziedzic, T., Zambrzycka, A.: Landcover.ai: Dataset for automatic mapping of buildings, woodlands, water and roads from aerial imagery. In: CVPR. pp. 1102–1110 (2021)
5. Bresenham, J.E.: Algorithm for computer control of a digital plotter. In: Seminal graphics: pioneering efforts that shaped the field, pp. 1–6 (1998)
6. Dell'Acqua, F., Gamba, P.: Remote sensing and earthquake damage assessment: Experiences, limits, and perspectives. Proceedings of the IEEE 100(10), 2876–2890 (2012)
7. Google DeepMind: Gemini 3 pro: Model card. Model Card (2025), https://storage.googleapis.com/deepmind-media/Model-Cards/Gemini-3-Pro-Model-Card.pdf, accessed: 2026-01-28
8. Guo, X., Lao, J., Dang, B., Zhang, Y., Yu, L., Ru, L., Zhong, L., Huang, Z., Wu, K., Hu, D., He, H., Wang, J., Chen, J., Yang, M., Zhang, Y., Li, Y.: Skysense: A multi-modal remote sensing foundation model towards universal interpretation for earth observation imagery. In: CVPR. pp. 27672–27683 (June 2024)
9. Hamed, K.: Effect of persistence on the significance of kendall's tau as a measure of correlation between natural time series. The European Physical Journal Special Topics 174(1), 65–79 (2009)
10. Hart, P.E., Nilsson, N.J., Raphael, B.: A formal basis for the heuristic determination of minimum cost paths. IEEE Transactions on Systems Science and Cybernetics 4(2), 100–107 (1968)
11. He, X., Wang, J., Su, Y., Liu, D., Zhao, J., Lu, G.: Monotonic rank knowledge distillation via kendall correlation. IEEE TCSVT (2026)
12. Hu, R., Bai, S., Wen, W., Xia, X., Hsu, L.T.: Towards high-definition vector map construction based on multi-sensor integration for intelligent vehicles: Systems and error quantification. IET Intelligent Transport Systems 18(8), 1477–1493 (2024)
13. Huang, T., Niu, S., Zhang, F., Wang, B., Wang, J., Liu, G., Yao, M.: Correlating gene expression levels with transcription factor binding sites facilitates identification of key transcription factors from transcriptome data. Frontiers in Genetics 15, 1511456 (2024)
14. Kendall, M.G.: A new measure of rank correlation. Biometrika 30(1-2), 81–93 (1938)
15. Kuckreja, K., Danish, M.S., Naseer, M., Das, A., Khan, S., Khan, F.S.: Geochat: Grounded large vision-language model for remote sensing. In: CVPR. pp. 27831–27840 (2024)
16. Lee, D.H., Hong, J.H., Seo, H.W., Oh, H.: Kfgod: A fine-grained object detection dataset in kompsat satellite imagery. Remote Sensing 17(22), 3774 (2025)
17. Li, K., Dong, F., Wang, D., Li, S., Wang, Q., Gao, X., Chua, T.S.: Show me what and where has changed? question answering and grounding for remote sensing change detection. arXiv preprint arXiv:2410.23828 (2024)
18. Li, K., Wan, G., Cheng, G., Meng, L., Han, J.: Object detection in optical remote sensing images: A survey and a new benchmark. ISPRS journal of photogrammetry and remote sensing 159, 296–307 (2020)
19. Li, T., Wang, C., de Silva, C.W., et al.: Coverage sampling planner for uav-enabled environmental exploration and field mapping. In: IEEE IROS. pp. 2509–2516. IEEE (2019)
20. Li, X., Ding, J., Elhoseiny, M.: Vrsbench: A versatile vision-language benchmark dataset for remote sensing image understanding. NeurIPS 37, 3229–3242 (2024)
21. Liu, A., Mei, A., Lin, B., Xue, B., Wang, B., Xu, B., Wu, B., Zhang, B., Lin, C., Dong, C., et al.: Deepseek-v3.2: Pushing the frontier of open large language models. arXiv preprint arXiv:2512.02556 (2025)
22. Liu, H., Li, C., Li, Y., Lee, Y.J.: Improved baselines with visual instruction tuning. In: CVPR. pp. 26296–26306 (2024)
23. Lobry, S., Marcos, D., Murray, J., Tuia, D.: Rsvqa: Visual question answering for remote sensing data. IEEE TGRS 58(12), 8555–8566 (2020)
24. Luo, J., Pang, Z., Zhang, Y., Wang, T., Wang, L., Dang, B., Lao, J., Wang, J., Chen, J., Tan, Y., et al.: Skysensegpt: A fine-grained instruction tuning dataset and model for remote sensing vision-language understanding. arxiv 2024. arXiv preprint
25. Luo, J., Zhang, Y., Yang, X., Wu, K., Zhu, Q., Liang, L., Chen, J., Li, Y.: When large vision-language model meets large remote sensing imagery: Coarse-to-fine text-guided token pruning. In: ICCV. pp. 9206–9217 (2025)
26. Marsocci, V., Jia, Y., Bellier, G.L., Kerekes, D., Zeng, L., Hafner, S., Gerard, S., Brune, E., Yadav, R., Shibli, A., et al.: Pangaea: A global and inclusive benchmark for geospatial foundation models. arXiv preprint arXiv:2412.04204 (2024)
27. Muhtar, D., Li, Z., Gu, F., Zhang, X., Xiao, P.: Lhrs-bot: Empowering remote sensing with vgi-enhanced large multimodal language model. In: ECCV. pp. 440–457. Springer (2024)
28. Palladin, E., Brucker, S., Ghilotti, F., Narayanan, P., Bijelic, M., Heide, F.: Self-supervised sparse sensor fusion for long range perception. In: ICCV. pp. 27498–27509 (2025)
29. Qwen Team: Qwen3.5: Towards native multimodal agents (February 2026), https://qwen.ai/blog?id=qwen3.5
30. Shi, G., Wu, C.C., Hsu, C.H.: Error concealment of dynamic lidar point clouds for connected and autonomous vehicles. In: GLOBECOM 2023-2023 IEEE Global Communications Conference. pp. 5409–5415. IEEE (2023)
31. Singh, A., Fry, A., Perelman, A., Tart, A., Ganesh, A., El-Kishky, A., McLaughlin, A., Low, A., Ostrow, A., Ananthram, A., et al.: Openai gpt-5 system card. arXiv preprint arXiv:2601.03267 (2025)
32. Wang, F., Wang, H., Guo, Z., Wang, D., Wang, Y., Chen, M., Ma, Q., Lan, L., Yang, W., Zhang, J., et al.: Xlrs-bench: Could your multimodal llms understand extremely large ultra-high-resolution remote sensing imagery? In: CVPR. pp. 14325–14336 (2025)
33. Wang, J., Xuan, W., Qi, H., Liu, Z., Liu, K., Wu, Y., Chen, H., Song, J., Xia, J., Zheng, Z., et al.: Disasterm3: A remote sensing vision-language dataset for disaster damage assessment and response. arXiv preprint arXiv:2505.21089 (2025)
34. Wang, J., Zheng, Z., Chen, Z., Ma, A., Zhong, Y.: Earthvqa: Towards queryable earth via relational reasoning-based remote sensing visual question answering. In: AAAI. vol. 38, pp. 5481–5489 (2024)
35. Wang, J., Zheng, Z., Ma, A., Lu, X., Zhong, Y.: Loveda: A remote sensing land-cover dataset for domain adaptive semantic segmentation. arXiv preprint arXiv:2110.08733 (2021)
36. Wang, W., Gao, Z., Gu, L., Pu, H., Cui, L., Wei, X., Liu, Z., Jing, L., Ye, S., Shao, J., et al.: Internvl3.5: Advancing open-source multimodal models in versatility, reasoning, and efficiency. arXiv preprint arXiv:2508.18265 (2025)
37. Xia, G.S., Hu, J., Hu, F., Shi, B., Bai, X., Zhong, Y., Zhang, L., Lu, X.: Aid: A benchmark data set for performance evaluation of aerial scene classification. IEEE TGRS 55(7), 3965–3981 (2017)
38. Xia, J., Yokoya, N., Adriano, B., Broni-Bediako, C.: Openearthmap: A benchmark dataset for global high-resolution land cover mapping. In: WACV. pp. 6254–6264 (2023)
39. Zhang, L., Xiong, Y., Yang, Z., Casas, S., Hu, R., Urtasun, R.: Copilot4d: Learning unsupervised world models for autonomous driving via discrete diffusion. arXiv preprint arXiv:2311.01017 (2023)
40. Zhang, W., Cai, M., Zhang, T., Zhuang, Y., Mao, X.: Earthgpt: A universal multimodal large language model for multisensor image comprehension in remote sensing domain. IEEE TGRS 62, 1–20 (2024)
41. Zhang, X., Sun, Y., Shang, K., Zhang, L., Wang, S.: Crop classification based on feature band set construction and object-oriented approach using hyperspectral images. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing 9(9), 4117–4128 (2016)
42. Zheng, X., Wang, B., Du, X., Lu, X.: Mutual attention inception network for remote sensing visual question answering. IEEE TGRS 60, 1–14 (2021)
43. Zhu, Z., Zhou, Y., Seto, K.C., Stokes, E.C., Deng, C., Pickett, S.T., Taubenböck, H.: Understanding an urbanizing planet: Strategic directions for remote sensing. Remote sensing of environment 228, 164–182 (2019)
