AW-MoE: All-Weather Mixture of Experts for Robust Multi-Modal 3D Object Detection

Robust 3D object detection under adverse weather conditions is crucial for autonomous driving. However, most existing methods simply combine all weather samples for training while overlooking data distribution discrepancies across different weather s…

Authors: Hongwei Lin, Xun Huang, Chenglu Wen

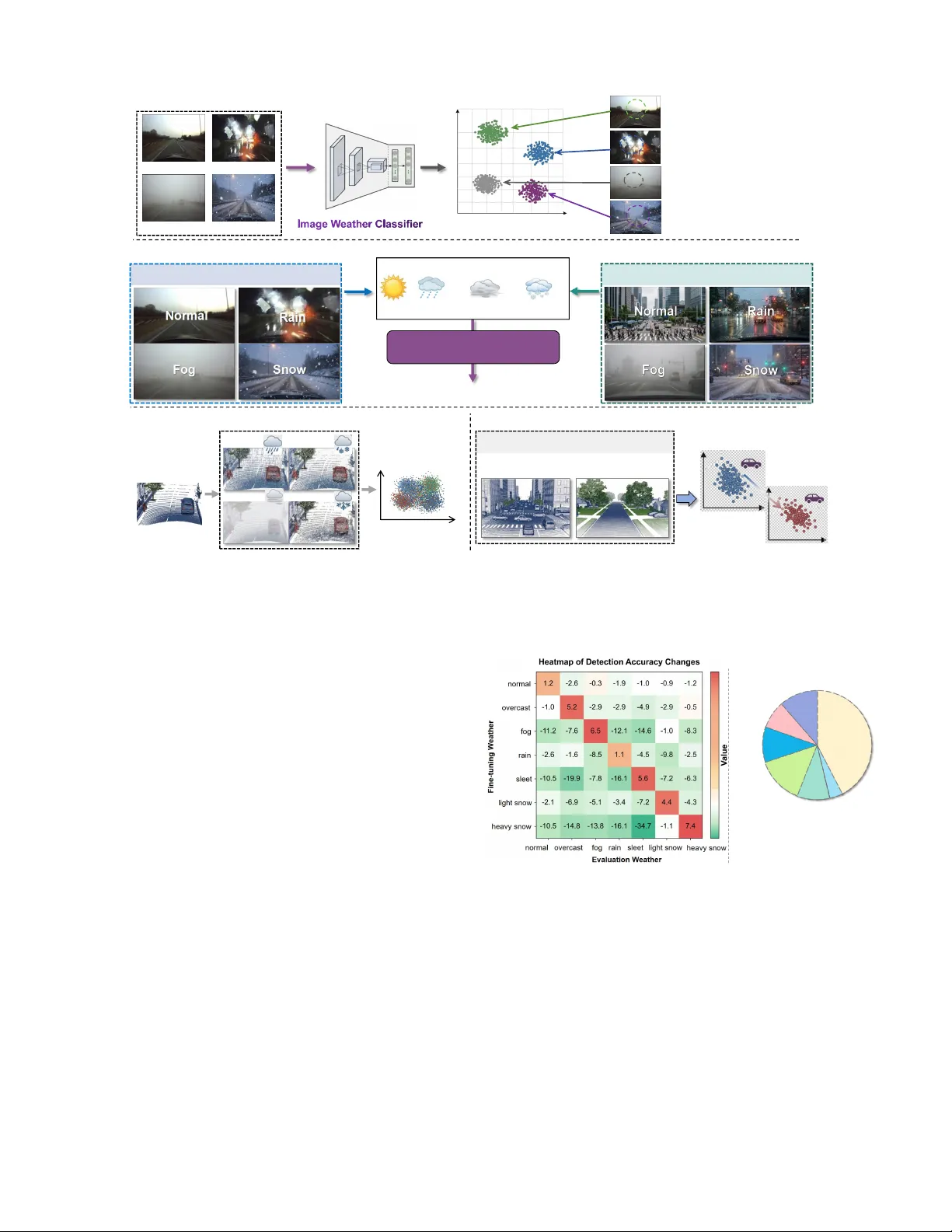

Hongwei Lin, Xun Huang, Chenglu Wen†, Senior Member, IEEE, and Cheng Wang, Senior Member, IEEE

Hongwei Lin, Xun Huang, Chenglu Wen, and Cheng Wang are with the Fujian Key Laboratory of Urban Intelligent Sensing and Computing and the School of Informatics, Xiamen University, Xiamen, FJ 361005, China. (E-mail: {greatlin, huangxun}@stu.xmu.edu.cn; {clwen, cwang}@xmu.edu.cn). Xun Huang is also with Zhongguancun Academy, Beijing, China. † Corresponding author.

Abstract: Robust 3D object detection under adverse weather conditions is crucial for autonomous driving. However, most existing methods simply combine all weather samples for training while overlooking data distribution discrepancies across different weather scenarios, leading to performance conflicts. To address this issue, we introduce AW-MoE, a framework that integrates Mixture of Experts (MoE) into weather-robust multi-modal 3D object detection. AW-MoE incorporates Image-guided Weather-aware Routing (IWR), which leverages the superior discriminability of image features across weather conditions and their invariance to scene variations for precise weather classification. Based on this accurate classification, IWR selects the top-K most relevant Weather-Specific Experts (WSE) that handle data discrepancies, ensuring optimal detection under all weather conditions. Additionally, we propose a Unified Dual-Modal Augmentation (UDMA) for synchronous LiDAR and 4D Radar dual-modal data augmentation while preserving the realism of scenes. Extensive experiments on the real-world K-Radar dataset demonstrate that AW-MoE achieves ∼15% improvement in adverse-weather performance over state-of-the-art methods, while incurring negligible inference overhead. Moreover, integrating AW-MoE into established baseline detectors yields performance improvements surpassing current state-of-the-art methods. These results show the effectiveness and strong scalability of our AW-MoE. We will release the code publicly at https://github.com/windlinsherlock/AW-MoE.

Index Terms: 3D object detection, adverse weather, 3D computer vision, outdoor unmanned systems.

I. INTRODUCTION

Three-dimensional object detection, a fundamental task in 3D computer vision, has significantly advanced autonomous driving and other unmanned systems. Most existing methods rely on the stable performance of sensors, such as LiDAR [1]–[5] and cameras [6], [7]. However, under adverse weather conditions (e.g., rain, fog, snow), sensor performance degrades, leading to weakened system reliability [8].

Therefore, recent studies have explored developing robust 3D object detection techniques under adverse weather conditions. These works pursue robustness through two complementary approaches: the construction of simulation-augmented [8]–[10] or real-world datasets [11] at the data level, and the development of multi-modal fusion techniques [12]–[14] at the algorithmic level. However, existing methods mostly combine all weather samples for training while overlooking the substantial distribution discrepancies across adverse weather conditions, which may lead to performance conflicts across various scenarios.

To investigate this, we first explore the influence of weather sample bias by fine-tuning the state-of-the-art L4DR method [12] separately on each weather subset. As shown in Fig. 2(a), models fine-tuned on a specific weather condition improve in that condition but suffer degraded performance in others. This phenomenon indicates that significant distributional gaps exist across weather conditions, preventing a single model from maintaining optimal performance across all conditions.
Moreover, due to the expensive collection of adverse weather data, real-world datasets such as K-Radar [11] contain far fewer samples for each adverse weather condition than for normal weather (see Fig. 2(b)). This imbalanced distribution in weather samples tends to bias training toward normal weather conditions, thereby further overlooking adverse weather scenarios.

To address these challenges, we propose the Adverse-Weather Mixture of Experts (AW-MoE), the first approach that introduces the Mixture of Experts (MoE) technique to enhance the robustness of 3D object detection under adverse weather conditions. AW-MoE leverages the MoE mechanism [15], [16] to extend a single-branch detector into a specialized multi-branch architecture, in which each branch is explicitly tailored to a specific weather condition. This design enables robust adaptation to diverse adverse-weather scenarios while incurring negligible inference overhead.

It is worth noting that the effectiveness of MoE in multi-scenario applications heavily relies on optimal expert routing. Standard MoE frameworks [16] typically employ Point-cloud Feature-based Routing (PFR), utilizing input point-cloud features to guide the routing process, as shown in Fig. 3(a). However, PFR exhibits significant limitations in outdoor autonomous driving under adverse weather conditions. First, point clouds suffer from ambiguous inter-class geometric distortions, making it difficult to precisely differentiate weather conditions in the feature space (see Fig. 1(c)). Furthermore, the highly dynamic nature of outdoor environments leads to scene-induced intra-class distribution shifts, where point clouds of the same weather exhibit massive variations across different scenes (see Fig. 1(d)).

In contrast, camera images demonstrate highly favorable properties for weather perception. First, images present distinct visual characteristics (see Fig. 1(a)).
For instance, normal weather offers clear vision and high definition, rain introduces windshield droplets and strong specular reflections, and snow presents significant snowflake accumulations. These prominent visual cues enable an Image Weather Classifier to easily distinguish weather conditions in the feature space. Second, images demonstrate strong robustness to scene variations (see Fig. 1(b)).

Fig. 1. Comparison of weather-type discriminability between camera images and LiDAR point clouds. (a, b) Camera images exhibit distinct visual characteristics and robustness to scene variations, facilitating accurate weather classification. (c, d) In contrast, LiDAR point clouds suffer from ambiguous inter-class geometric distortions and scene-induced intra-class distribution shifts, which obscure the boundaries between different weather conditions.

Motivated by these observations, we propose an Image-guided Weather-aware Routing (IWR) module, illustrated in Fig. 3(b). IWR leverages an Image Weather Classifier to explicitly identify the weather condition, thereby routing the data to the most suitable weather expert to mitigate data distribution discrepancies.
As shown in Table I, our IWR achieves an overall routing accuracy of 99.0%, with at least 97.1% on every weather condition, whereas the baseline PFR struggles significantly to recognize severe weather environments. Guided by the accurate expert routing of IWR, a Weather-Specific Experts (WSE) module subsequently extracts weather-specific features for the corresponding conditions. Moreover, to mitigate the scarcity of adverse weather samples, we propose a Unified Dual-Modal Augmentation (UDMA) module that performs synchronized data augmentation on both LiDAR and 4D Radar point clouds. Furthermore, we introduce a variant termed AW-MoE-LRC, which integrates image features with the LiDAR and 4D Radar representations. This variant fully exploits the rich semantic information of cameras to achieve enhanced perception performance. Extensive experiments on the real-world K-Radar [11] dataset demonstrate that AW-MoE outperforms state-of-the-art methods and extends well to other detectors.

Fig. 2. (a) Performance changes of L4DR [12] after fine-tuning on a single weather condition under different weather scenarios. (b) Statistics of data volume across different weather conditions in the K-Radar dataset [11]: Normal (43%), Adverse Weather (57%, split across Overcast, Fog, Rain, Sleet, Light Snow, and Heavy Snow).

Our main contributions are summarized as follows:

• We propose the Adverse-Weather Mixture of Experts (AW-MoE), which is the first to introduce the MoE technique to enhance the robustness of 3D object detection in adverse weather scenarios. AW-MoE achieves robust detection performance across all weather conditions.
• Leveraging the inherent advantages of images in distinguishing weather types, we design the Image-guided Weather-aware Routing (IWR) and Weather-Specific Experts (WSE) modules. This design overcomes the limitations of prior MoE routing approaches under varying weather conditions, thereby enhancing the overall effectiveness of the framework. Additionally, we introduce a tri-modal variant, AW-MoE-LRC, which further incorporates camera features into the LiDAR and 4D Radar modalities.
• Our AW-MoE is also a highly scalable framework that can be extended to various 3D object detection methods, yielding substantial performance gains under adverse weather conditions. Extensive experiments on real-world datasets demonstrate the superior performance and strong extensibility of our AW-MoE.

Fig. 3. Method comparison between (a) Point-cloud Feature-based Routing (PFR) and (b) the proposed Image-guided Weather-aware Routing (IWR). PFR is limited by ambiguous geometric distortions and scene-induced distribution shifts in point clouds, whereas IWR benefits from the distinct visual characteristics of images and their robustness to scene variations.

II. RELATED WORK

A. 3D Object Detection

3D object detection [17]–[24] is a core task in 3D vision, predominantly relying on raw point clouds from sensors such as LiDAR. Existing methods are broadly categorized into three types based on data representation: point-based, voxel-based, and point-voxel-based. Point-based methods [1], [25], [26] directly sample and aggregate features from raw points. They classify foreground points and predict corresponding 3D bounding boxes. This preserves fine-grained geometric details but incurs high computational costs. Conversely, voxel-based methods [2], [3], [27]–[29] partition point clouds into regular grids. They aggregate features within each voxel and apply 3D spatial convolutions. Many models [2], [28] further compress these features into Bird's Eye View (BEV) space for efficient 2D convolutions, significantly accelerating inference.
Point-voxel-based methods [4], [17] integrate both representations to balance efficiency and geometric accuracy. While these approaches achieve impressive accuracy under normal conditions, their performance drops significantly in adverse weather. Environmental interference degrades LiDAR signals, severely compromising the reliability of these conventional methods.

B. 3D Object Detection Under Adverse Weather

Under adverse weather conditions, the perception capability of sensors such as LiDAR degrades, leading to reduced detection performance [8], [30]. Recent research has extensively explored 3D object detection [2], [3], [31], [32] under such conditions [8], [9], [12], [33]–[37]. Some works generate simulated adverse weather data (e.g., rain, snow, fog) to train robust detection models [8]–[10], [38]. In contrast, others focus on real-world datasets such as K-Radar [11], which provides multimodal data from LiDAR, 4D Radar, and cameras, and introduces RTNH [11] using 4D Radar for detection. Furthermore, sensor-fusion methods, including Bi-LRFusion [39], 3D-LRF [13], and L4DR [12], leverage complementary information from LiDAR and Radar to enhance robustness. Although these approaches outperform single-modal methods, they overlook the substantial distribution gaps across different adverse weather conditions. Our experiments reveal that training a single-branch model with mixed-weather data causes conflicting optimizations among weather scenarios, leading to unstable performance. Therefore, addressing weather-specific discrepancies is essential for maintaining robust and consistent detection across all conditions.

C. Mixture of Experts (MoE)

MoE [40]–[42] has emerged as a powerful framework for scaling models while maintaining computational efficiency.
Initially proposed by [15], MoE divides the model into specialized experts and uses a gating network to select the most relevant experts for each input. Sparsely-Gated MoE [16] further improves scalability by activating only a subset of experts, allowing models to scale to billions of parameters without significant computational overhead. GShard [43] optimized MoE training on distributed systems, enabling efficient large-scale training. Switch Transformer [44] simplified expert routing by adopting top-1 selection, enhancing both training stability and scalability. Later works, such as GLaM [45] and DeepSpeed-MoE [46], focused on improving MoE for multi-task learning and large-scale training. In contrast, V-MoE [47] extended MoE to vision tasks by applying sparse activation to image patches in Vision Transformers [48], thereby improving computational efficiency.

The MoE framework offers a promising solution to the challenges posed by diverse data distributions in tasks with varying conditions. Motivated by these advantages, we are the first to introduce MoE into 3D object detection under adverse weather conditions, effectively addressing inter-weather discrepancies and enabling robust performance across all conditions.

III. PROPOSED METHOD

A. Problem Formulation

In outdoor adverse weather scenarios, the sensory inputs, denoted as $I$, are processed by a perception model $M$ to extract deep representations $f = M(I)$. For multi-modal settings [12], [13], features $f$ from different sensors are further integrated by a feature fusion module $G$, producing the fused representation $f' = G(\{f\})$. The fused features are then fed into the detection head to regress the final 3D bounding boxes $\mathcal{B} = \{b_i\}_{i=1}^{N_b}$, $\mathcal{B} \in \mathbb{R}^{N_b \times 7}$, where $N_b$ denotes the number of detected bounding boxes.
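As a reading aid, the data flow of this formulation can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions: the toy encoders, the averaging fusion, the function names, and the `(x, y, z, l, w, h, yaw)` box layout are introduced here for illustration (the text only states that each box has 7 parameters), and none of them come from the authors' code.

```python
from typing import Callable, Dict, List

Feature = List[float]   # stand-in for a deep feature map f
Box = List[float]       # one 3D box with 7 numbers (assumed layout: x, y, z, l, w, h, yaw)


def detect(inputs: Dict[str, List[float]],
           encoders: Dict[str, Callable[[List[float]], Feature]],
           fuse: Callable[[Dict[str, Feature]], Feature],
           head: Callable[[Feature], List[Box]]) -> List[Box]:
    """Compose the three steps: f = M(I), f' = G({f}), boxes = head(f')."""
    feats = {name: encoders[name](x) for name, x in inputs.items()}  # per-sensor f = M(I)
    fused = fuse(feats)                                              # f' = G({f})
    return head(fused)                                               # N_b boxes, 7 values each


# Toy stand-ins (hypothetical, for illustration only).
def toy_encoder(x: List[float]) -> Feature:
    return [2.0 * v for v in x]


def toy_fuse(feats: Dict[str, Feature]) -> Feature:
    # Element-wise mean over the modalities.
    return [sum(col) / len(feats) for col in zip(*feats.values())]


def toy_head(fused: Feature) -> List[Box]:
    # Emit a single box whose centre x is read from the fused feature.
    return [[fused[0], 0.0, 0.0, 4.0, 2.0, 1.5, 0.0]]


boxes = detect({"lidar": [1.0, 2.0], "radar": [3.0, 4.0]},
               {"lidar": toy_encoder, "radar": toy_encoder},
               toy_fuse, toy_head)
```

The only point of the sketch is the composition order and the $N_b \times 7$ output shape; real encoders, fusion modules, and heads are learned networks.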
Our proposed AW-MoE builds upon the state-of-the-art LiDAR–4D Radar fusion framework L4DR [12] by integrating a Mixture of Experts (MoE) mechanism. The input consists of LiDAR point clouds $\mathcal{P}^l$ and 4D Radar point clouds $\mathcal{P}^r$, denoted collectively as $\mathcal{P}^m = \{p_i^m\}_{i=1}^{N_m}$, $m \in \{l, r\}$, where $p_i^m$ represents a 3D point in modality $m$.

Fig. 4. AW-MoE Framework. (a) Unified Dual-Modal Augmentation (UDMA): Synchronously augments LiDAR and 4D Radar point clouds. Its GT Sampling only selects ground truths matching the scene's weather. (b) Image-guided Weather-aware Routing (IWR): Uses an Image-based Weather Classifier to predict the scene weather and routes the feature to the top-K most relevant Weather-Specific Experts. (c) Weather-Specific Experts (WSE): Each expert is specialized for a weather condition, extracting robust weather-specific features and regressing bounding boxes with tailored sensitivity.

B. AW-MoE

The overall architecture of AW-MoE is illustrated in Fig. 4. AW-MoE consists of three main components: a Shared Backbone, an Image-guided Weather-aware Routing (IWR) module, and multiple Weather-Specific Experts (WSE). The Shared Backbone extracts general representations from the input data, while the IWR leverages discriminative visual cues from images under different weather conditions to dynamically route features to the most suitable WSE.
Each WSE is specialized in processing features corresponding to a particular weather type, enabling AW-MoE to maintain robust and consistent detection performance across diverse adverse conditions. Moreover, the proposed Unified Dual-Modal Augmentation (UDMA) performs synchronized data augmentation for both LiDAR and 4D Radar modalities, ensuring sample authenticity and cross-modal consistency under various weather scenarios.

1) Unified Dual-Modal Augmentation: Data augmentation [2], [3], [28] is widely used in deep learning but has been largely overlooked in LiDAR–4D Radar fusion [12], [13], [49]. In this work, we address this limitation by proposing Unified Dual-Modal Augmentation (UDMA), which performs synchronized augmentations on LiDAR and 4D Radar data, including flipping, rotation, scaling, and ground-truth (GT) sampling, to maintain cross-modal consistency. Unlike conventional GT sampling [4], [27], which indiscriminately mixes data from different weather conditions and thereby degrades scene realism, our proposed Weather-Specific GT Sampling (WSGTS) accounts for the substantial geometric and reflective variations of objects across diverse weather scenarios. As shown in Fig. 4(a), WSGTS samples GTs exclusively from scenes with matching weather conditions, effectively avoiding cross-weather mismatches, preserving environmental authenticity, and improving detection performance, as reported in Table VI.

2) Image-guided Weather-aware Routing: The key to the MoE framework's effectiveness in handling multi-task and multi-scenario problems lies in the ability of expert routing to accurately select the most suitable expert.
As analyzed in the Introduction, the Point-cloud Feature-based Routing (PFR) [15], which relies on point cloud features, performs poorly in outdoor scenarios due to the highly dynamic nature of environments and the difficulty of capturing point cloud differences under adverse weather such as fog, sleet, and light snow (see Figs. 1(c, d) and Table I). Conversely, images offer superior clarity in distinguishing diverse weather patterns while remaining largely invariant to fluctuations in scene geometry (see Figs. 1(a, b)). Based on this observation, we design an Image-guided Weather-aware Routing (IWR) to perform expert selection.

First, we design a lightweight image-based Weather Classifier to categorize the captured scene images:

$$ z = C(I_{img}) \in \mathbb{R}^{N_W}, \tag{1} $$

where $z$ denotes the classification result, $C$ represents the Weather Classifier, $I_{img}$ denotes the camera image, and $N_W$ is the number of weather categories. Then, the classification result $z$ is normalized using a softmax function, where $P_w$ denotes the probability corresponding to the $w$-th weather category:

$$ P = \mathrm{softmax}(z), \quad P_w = \frac{\exp(z_w)}{\sum_{i=1}^{N_W} \exp(z_i)}, \quad w = 1, \dots, N_W. \tag{2} $$

Fig. 5. The architecture of the AW-MoE-LRC framework. The pipeline comprises three stages: (i) LiDAR-Guided Image Feature Lifting, where sparse LiDAR depth assists in predicting 3D frustum features from images; (ii) 3D Geometry Transformation and BEV Pooling, which projects and aggregates these features into the ego-vehicle BEV space; and (iii) Multi-Modal Feature Fusion, which concatenates the aligned camera, LiDAR, and 4D Radar BEV features along the channel dimension for final convolution-based integration.
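To make the routing step concrete, the following minimal sketch computes the softmax of Eq. (2) and then picks the K most probable weather categories, which is the selection that Eq. (3) formalizes. It is an illustrative sketch rather than the authors' implementation: the logits, the choice K = 2, the function names, and the ordering of the seven weather labels (taken from the column order of Table I) are assumptions made here.

```python
import math
from typing import List


def softmax(z: List[float]) -> List[float]:
    """Eq. (2): P_w = exp(z_w) / sum_i exp(z_i), computed in a numerically stable way."""
    m = max(z)                              # subtracting the max does not change the result
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(probs: List[float], k: int) -> List[int]:
    """Indices of the k largest probabilities, most probable first."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return order[:k]


# Assumed label order; the paper only fixes the number of categories (N_W = 7).
WEATHER = ["normal", "overcast", "fog", "rain", "sleet", "light_snow", "heavy_snow"]

logits = [0.1, 0.3, 2.5, 0.2, 1.9, 0.0, -0.4]   # hypothetical classifier output z
probs = softmax(logits)                          # routing probabilities P
selected = top_k(probs, k=2)                     # indices of the selected experts
selected_names = [WEATHER[i] for i in selected]
```

With these hypothetical logits, the two selected experts are the fog and sleet experts, and `probs` sums to one. The probabilities of the selected experts are the same quantities that later weight the loss and the box aggregation.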
Fig. 6. Architecture of the proposed Image-based Weather Classifier: an initial convolution (H × W × 3 to H × W × C) followed by depthwise convolution with BN (H' × W' × C) and pointwise convolution with BN (H' × W' × C').

Finally, we select the top-$K$ weather categories with the highest probabilities in $P$ to determine the corresponding Weather-Specific Experts (WSE):

$$ \mathcal{S} = \mathrm{TopK}(P, K) \subset \{1, \dots, N_W\}, \quad |\mathcal{S}| = K, \tag{3} $$

where $\mathcal{S}$ denotes the set of selected WSE. Since the proposed lightweight image-based Weather Classifier achieves high accuracy in predicting scene weather types (99.0% overall, see Table I), our IWR can reliably select the most appropriate WSE.

Weather Classifier. The architecture of our Image-based Weather Classifier is illustrated in Fig. 6. It consists of an initial convolutional layer followed by a backbone composed of four consecutive Depthwise Separable Blocks. Each Depthwise Separable Block contains a depthwise convolution, a pointwise convolution, and two normalization layers, which collaboratively extract discriminative weather-related features from the input image. Despite its lightweight design, the proposed image-based Weather Classifier achieves both high efficiency and accuracy, providing precise and efficient routing for weather-specific experts.

TABLE I. Comparison of weather classification accuracy between Point-cloud Feature-based Routing (PFR) and the proposed Image-guided Weather-aware Routing (IWR).

| Method | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow |
|---|---|---|---|---|---|---|---|---|
| PFR | 71.3 | 98.9 | 12.0 | 51.9 | 76.7 | 57.6 | 2.1 | 58.3 |
| IWR | 99.0 | 99.8 | 100.0 | 97.3 | 99.0 | 97.1 | 99.3 | 98.7 |

3) Weather-Specific Experts: After IWR selects the most suitable expert, the corresponding Weather-Specific Expert (WSE) is activated to handle the scenario under a specific weather condition. Each WSE consists of three components: a Weather-Specific Backbone, a Weather-Specific Feature Fusion module, and a Weather-Specific Detection Head. The Weather-Specific Backbone is responsible for extracting weather-specific features that cannot be captured by the shared backbone. The Weather-Specific Feature Fusion module performs weather-aware complementary fusion of LiDAR and 4D Radar features according to their quality differences under different weather conditions. The Weather-Specific Detection Head predicts and regresses 3D bounding boxes with varying sensitivities tailored to specific weather scenarios. The overall pipeline of WSE can be formulated as:

$$ \mathcal{B}_w = H_w(F_w(E_w(\{f\}))), \quad w \in \mathcal{S}, \tag{4} $$

where $E_w$, $F_w$, and $H_w$ denote the $w$-th Weather-Specific Backbone, Weather-Specific Feature Fusion module, and Weather-Specific Detection Head, respectively. In AW-MoE, all WSEs share the same structural design, with a total of $N_W = 7$ experts corresponding to the number of weather categories.

C. AW-MoE-LRC: Integrating Image Features

In the AW-MoE framework (Fig. 4), camera images are exclusively used within the Image-guided Weather-aware Routing (IWR) module to select Weather-Specific Experts. The image features are not directly utilized for 3D object detection. To explicitly integrate image semantics with LiDAR and 4D Radar features, we propose an extended pipeline, AW-MoE-LRC, as illustrated in Fig. 5. Following [7], we adopt a LiDAR-guided Lift-Splat-Shoot (LSS) architecture to map 2D image features into a unified Bird's-Eye-View (BEV) space for multi-modal alignment. This process consists of three main stages:

1) LiDAR-Guided Image Feature Lifting: Standard LSS architectures often lack precise geometric constraints for depth estimation. To address this, we leverage sparse LiDAR point clouds to guide image depth prediction.
First, we project the LiDAR point clouds $\mathcal{P}^l$ onto the camera image plane using the intrinsic matrix $A$ and extrinsic matrix $T_{ext}$ to generate a sparse depth map $D_{sparse} \in \mathbb{R}^{D_1 \times H \times W}$. This depth map is convolved, concatenated with the backbone-extracted image features $f_{img}$, and fed into a DepthNet. The network outputs context features $f_{context} \in \mathbb{R}^{C_2 \times H \times W}$ and a discrete depth probability distribution $D_{prob} \in \mathbb{R}^{D_2 \times H \times W}$, where $D_2$ denotes the number of predefined depth bins. The 3D frustum feature $f_{frustum}$ is then computed via the outer product of the depth probabilities and context features:

$$ f_{frustum}(u, v, d) = D_{prob}(u, v, d) \otimes f_{context}(u, v), \tag{5} $$

where $(u, v)$ represents the image pixel coordinates and $d$ is the discrete depth index.

2) 3D Geometry Transformation and BEV Pooling (Splatting): To map the frustum features into the ego-vehicle coordinate system, we compute the 3D coordinate $P_{ego}$ for each feature point. Given the depth $d$ and pixel coordinate $(u, v)$, and accounting for data augmentations (e.g., image augmentation matrix $T_{\mathrm{img\,aug}}$ and LiDAR augmentation matrix $T_{\mathrm{lidar\,aug}}$), the coordinate transformation is formulated as:

$$ P_{ego} = T_{\mathrm{lidar\,aug}}\, T_{ext}\, A^{-1}\, T_{\mathrm{img\,aug}}^{-1} \begin{bmatrix} u \cdot d \\ v \cdot d \\ d \\ 1 \end{bmatrix}. \tag{6} $$

After obtaining the 3D coordinates for all frustum features, we apply an efficient BEV pooling operation to aggregate features that fall into the same 3D voxel. The features along the $Z$-axis are then flattened and concatenated across the channel dimension. Finally, a downsampling convolutional layer is applied to generate the spatial BEV features for the camera branch, denoted as $f_c$.

3) Multi-Modal Feature Fusion: Once the image spatial features $f_c$ are extracted, they are fused with the LiDAR features $f_l$ and 4D Radar features $f_r$ within the unified BEV space.
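The fusion introduced here amounts to stacking the three aligned BEV maps along the channel axis and mixing the channels with learned convolutions, as Eq. (7) states. The sketch below illustrates that idea on nested Python lists. It rests on assumptions made purely for illustration: a single 1×1 convolution stands in for the stack of convolutional layers, the weights are fixed by hand rather than learned, and the helper names are hypothetical.

```python
from typing import List

Bev = List[List[List[float]]]   # BEV feature map indexed as [channel][row][col]


def concat_channels(*maps: Bev) -> Bev:
    """Channel-wise concatenation [f_c, f_l, f_r]; all maps must share one BEV grid."""
    rows, cols = len(maps[0][0]), len(maps[0][0][0])
    for m in maps:
        assert len(m[0]) == rows and len(m[0][0]) == cols, "BEV grids must be aligned"
    return [channel for m in maps for channel in m]


def conv1x1(x: Bev, weight: List[List[float]]) -> Bev:
    """1x1 convolution: weight[o][i] mixes input channel i into output channel o per cell."""
    rows, cols = len(x[0]), len(x[0][0])
    return [[[sum(w_o[i] * x[i][r][c] for i in range(len(x))) for c in range(cols)]
             for r in range(rows)]
            for w_o in weight]


# One channel per modality on a tiny 1x2 BEV grid (toy values).
f_c = [[[1.0, 2.0]]]        # camera BEV features
f_l = [[[10.0, 20.0]]]      # LiDAR BEV features
f_r = [[[100.0, 200.0]]]    # 4D Radar BEV features

stacked = concat_channels(f_c, f_l, f_r)     # 3 channels after concatenation
weight = [[0.25, 0.25, 0.5]]                 # hand-picked mixing weights, 3 -> 1 channel
f_f = conv1x1(stacked, weight)               # fused feature map
```

In a trained model the mixing weights are learned, which is how the network can assign different importance to each modality; the hand-picked weights here only show the mechanics.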
W e concatenate the features along the channel dimension and apply sev eral con volutional layers to learn cross-modal interactions and adaptive weight assignments. The final fused feature f f is obtained as follows: f f = Con vs [ f c , f l , f r ] , (7) where [ · ] denotes the channel-wise concatenation. This fusion strategy ef fectiv ely harnesses the rich semantic information from images, the precise geometric structure of LiDAR, and the robust, all-weather dynamic perception of 4D Radar . D. Loss Function and P ost-Pr ocessing In the A W -MoE framework, the IWR selects the top- K W eather-Specific Experts (WSE) to process the input data. During training, each selected WSE computes an indi vidual loss, while during inference, each WSE regresses a dedicated set of 3D bounding boxes. Ho wev er , the relev ance between a WSE and the input data fluctuates based on weather condi- tions. T o account for this varying contribution, a specialized loss function and post-processing strategy are required to aggregate the outputs. W e thus propose the follo wing formu- lations: Algorithm 1: A W -MoE Training Strategy Input: LiD AR point clouds P l , 4D Radar point clouds P r , camera images I img Output: T rained A W -MoE model 1 Stage 1: Pretrain single-branch A W -MoE ; 2 Select a designated WSE d ; 3 for each batch in all-weather data {P l , P r } do 4 Forward: B d ← H d ( F d ( E d ( E shared ( P l , P r )))) ; 5 Compute loss and update parameters of E shared and WSE d ; 6 Stage 2: T rain image-based W eather Classifier C ; 7 for each batch of I img with weather labels do 8 Forward: z ← C ( I img ) ∈ R N W ; 9 Compute classification loss and update C ; 10 Stage 3: Initialize A W-MoE ; 11 Freeze parameters of E shared ; 12 Copy pretrained parameters to all WSE branches: WSE w ← WSE d , w = 1 , . . . 
, $N_W$;
13 Stage 4: Train AW-MoE with IWR;
14 for each batch $\{P_l, P_r, I_{img}\}$ do
15   Extract shared features: $f \leftarrow E_{shared}(P_l, P_r)$;
16   Compute weather probabilities: $P \leftarrow \mathrm{softmax}(C(I_{img})) \in \mathbb{R}^{N_W}$;
17   Select top-$K$ experts: $\mathcal{S} \leftarrow \mathrm{TopK}(P, K)$;
18   Predict 3D boxes: $B_w \leftarrow H_w(F_w(E_w(\{f\})))$, $w \in \mathcal{S}$;
19   Compute confidence-weighted loss: $\mathcal{L}_{CW} \leftarrow \sum_{w \in \mathcal{S}} P_w \mathcal{L}_w(\mathrm{WSE}_w)$;
20   Update parameters of selected experts $\mathrm{WSE}_w$, $w \in \mathcal{S}$;

1) Confidence-Weighted MoE Loss: To account for the varying relevance between data and experts, we introduce the Confidence-Weighted MoE Loss. This objective function uses the routing probabilities $P$, generated by the IWR, as dynamic confidence scores. The total loss is formulated as a weighted sum over the set of selected experts $\mathcal{S}$:

$$\mathcal{L}_{CW} = \sum_{w \in \mathcal{S}} P_w \, \mathcal{L}_w(\mathrm{WSE}_w), \tag{8}$$

where $\mathcal{L}_w$ denotes the individual loss computed by the $w$-th WSE. Scaling each expert's contribution in proportion to its routing probability $P_w$ prevents samples with low relevance from disproportionately affecting the optimization of specialized experts, thereby promoting stable, weather-aware convergence.

2) Confidence-Weighted Post-Processing: Complementing the weighted loss, we apply a consistent Confidence-Weighted Post-Processing during inference to aggregate the 3D bounding boxes $B = \{b_i\}_{i=1}^{N_b}$ regressed by the top-$K$ experts. This process integrates multi-expert predictions in two stages: Candidate Selection and Confidence-Weighted Aggregation.

Candidate Selection. We first evaluate the 3D Intersection over Union (IoU) among all predicted boxes. Candidates with an IoU below a predefined matching threshold are retained as independent detections.

Confidence-Weighted Aggregation. For overlapping boxes representing the same target, we perform a weighted aggregation. Let $\Omega$ denote a set of matched boxes, where each box $b_j \in \Omega$ is associated with its corresponding routing probability $p_j$. The fused bounding box $\hat{b}$ is

$$\hat{b} = \sum_{b_j \in \Omega} p_j \cdot b_j, \quad b_j \in \mathbb{R}^7. \tag{9}$$

By sharing the same IWR-derived weights as the loss function, this post-processing module dynamically prioritizes predictions from the experts most relevant to the current weather, yielding robust and spatially consistent final detections.

E. Training Strategy

As mentioned in the Introduction, collecting data under adverse weather conditions is challenging, resulting in significantly fewer samples for each adverse condition (see Fig. 2 (b)). Even with top-$K$ expert routing, some Weather-Specific Experts may not receive sufficient training. To address this, we propose a training strategy tailored for AW-MoE, as summarized in Algorithm 1. First, all weather data are used to train a single WSE branch, allowing the model to acquire basic 3D object detection capabilities. Next, the Shared Backbone is frozen, and the trained parameters of this WSE are copied to each branch for further training. Combined with the top-$K$ expert routing, this strategy effectively mitigates the training challenges caused by limited adverse-weather data.

TABLE II. Quantitative results of different 3D object detection methods on the K-Radar dataset. We present the modality of each method (L: LiDAR, 4DR: 4D Radar) and detailed performance for each weather condition. Best in bold, second in underline, and ∗ indicates results reproduced using open code.

| Method | Modality | IoU | Metric | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow |
|---|---|---|---|---|---|---|---|---|---|---|---|
| RTNH [11] (NeurIPS 2022) | 4DR | 0.3 | $AP_{BEV}$ | 41.1 | 41.0 | 44.6 | 45.4 | 32.9 | 50.6 | 81.5 | 56.3 |
| | | 0.3 | $AP_{3D}$ | 37.4 | 37.6 | 42.0 | 41.2 | 29.2 | 49.1 | 63.9 | 43.1 |
| | | 0.5 | $AP_{BEV}$ | 36.0 | 35.8 | 41.9 | 44.8 | 30.2 | 34.5 | 63.9 | 55.1 |
| | | 0.5 | $AP_{3D}$ | 14.1 | 19.7 | 20.5 | 15.9 | 13.0 | 13.5 | 21.0 | 6.36 |
| RTNH [11] (NeurIPS 2022) | L | 0.3 | $AP_{BEV}$ | 76.5 | 76.5 | 88.2 | 86.3 | 77.3 | 55.3 | 81.1 | 59.5 |
| | | 0.3 | $AP_{3D}$ | 72.7 | 73.1 | 76.5 | 84.8 | 64.5 | 53.4 | 80.3 | 52.9 |
| | | 0.5 | $AP_{BEV}$ | 66.3 | 65.4 | 87.4 | 83.8 | 73.7 | 48.8 | 78.5 | 48.1 |
| | | 0.5 | $AP_{3D}$ | 37.8 | 39.8 | 46.3 | 59.8 | 28.2 | 31.4 | 50.7 | 24.6 |
| InterFusion∗ [50] (IROS 2022) | L+4DR | 0.3 | $AP_{BEV}$ | 69.5 | 76.6 | 84.9 | 84.3 | 70.2 | 35.1 | 63.1 | 46.3 |
| | | 0.3 | $AP_{3D}$ | 65.6 | 72.5 | 81.4 | 76.9 | 63.8 | 34.6 | 59.9 | 45.9 |
| | | 0.5 | $AP_{BEV}$ | 66.1 | 70.5 | 82.0 | 81.8 | 67.2 | 33.9 | 62.9 | 46.0 |
| | | 0.5 | $AP_{3D}$ | 41.7 | 44.6 | 53.5 | 64.8 | 37.2 | 25.5 | 35.4 | 27.0 |
| 3D-LRF [13] (CVPR 2024) | L+4DR | 0.3 | $AP_{BEV}$ | 84.0 | 83.7 | 89.2 | 95.4 | 78.3 | 60.7 | 88.9 | 74.9 |
| | | 0.3 | $AP_{3D}$ | 74.8 | 81.2 | 87.2 | 86.1 | 73.8 | 49.5 | 87.9 | 67.2 |
| | | 0.5 | $AP_{BEV}$ | 73.6 | 72.3 | 88.4 | 86.6 | 76.6 | 47.5 | 79.6 | 64.1 |
| | | 0.5 | $AP_{3D}$ | 45.2 | 45.3 | 55.8 | 51.8 | 38.3 | 23.4 | 60.2 | 36.9 |
| L4DR [12] (AAAI 2025) | L+4DR | 0.3 | $AP_{BEV}$ | 79.5 | 86.0 | 89.6 | 89.9 | 81.1 | 62.3 | 89.1 | 61.3 |
| | | 0.3 | $AP_{3D}$ | 78.0 | 77.7 | 80.0 | 88.6 | 79.2 | 60.1 | 78.9 | 51.9 |
| | | 0.5 | $AP_{BEV}$ | 77.5 | 76.8 | 88.6 | 89.7 | 78.2 | 59.3 | 80.9 | 53.8 |
| | | 0.5 | $AP_{3D}$ | 53.5 | 53.0 | 64.1 | 73.2 | 53.8 | 46.2 | 52.4 | 37.0 |
| L4DR-DA3D [51] (MM 2025) | L+4DR | 0.3 | $AP_{BEV}$ | 80.4 | 86.5 | 89.8 | 90.1 | 81.0 | 62.6 | 89.9 | 61.9 |
| | | 0.3 | $AP_{3D}$ | 79.3 | 85.9 | 88.4 | 89.2 | 79.7 | 65.8 | 89.0 | 60.2 |
| | | 0.5 | $AP_{BEV}$ | 78.5 | 77.4 | 89.1 | 90.1 | 79.3 | 58.8 | 88.9 | 60.6 |
| | | 0.5 | $AP_{3D}$ | 61.9 | 58.9 | 66.4 | 79.2 | 63.0 | 48.2 | 64.6 | 47.6 |
| AW-MoE (Ours) | L+4DR | 0.3 | $AP_{BEV}$ | 88.2 | 87.7 | 94.5 | 96.7 | 88.8 | 81.0 | 95.4 | 70.2 |
| | | 0.3 | $AP_{3D}$ | 83.9 | 84.2 | 90.0 | 95.3 | 84.4 | 72.9 | 90.2 | 64.0 |
| | | 0.5 | $AP_{BEV}$ | 84.2 | 82.8 | 91.6 | 96.3 | 85.3 | 75.0 | 94.7 | 66.4 |
| | | 0.5 | $AP_{3D}$ | 61.5 | 59.0 | 67.2 | 85.7 | 63.5 | 43.3 | 70.1 | 53.1 |

TABLE III. Performance ($AP_{3D}$) of AW-MoE and its camera-integrated variant, AW-MoE-LRC.

| Method | Modality | IoU | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow | FPS [Hz] |
|---|---|---|---|---|---|---|---|---|---|---|---|
| AW-MoE | L+4DR | 0.3 | 83.9 | 84.2 | 90.0 | 95.3 | 84.4 | 72.9 | 90.2 | 64.0 | 12.41 |
| | | 0.5 | 61.5 | 59.0 | 67.2 | 85.7 | 63.5 | 43.3 | 70.1 | 53.1 | |
| AW-MoE-LRC | L+4DR+C | 0.3 | 84.3 | 84.7 | 91.0 | 95.3 | 84.0 | 72.9 | 89.6 | 63.7 | 10.02 |
| | | 0.5 | 61.8 | 60.2 | 70.4 | 85.8 | 63.4 | 43.7 | 69.9 | 52.8 | |

IV. EXPERIMENTS

A.
Dataset and Evaluation Metrics

The K-Radar dataset [11] contains 58 sequences with a total of 34,944 frames (17,486 for training and 17,458 for testing), collected with 64-line LiDAR, cameras, and 4D Radar sensors. It includes not only normal conditions but also six types of adverse weather, such as fog, rain, and heavy snow. For evaluation, we adopt two standard metrics for 3D object detection: 3D Average Precision ($AP_{3D}$) and Bird's Eye View Average Precision ($AP_{BEV}$), which are measured on the "Sedan" class at IoU thresholds of 0.3 and 0.5.

TABLE IV. Performance ($AP_{3D}$) comparison of AW-MoE when extended to different 3D object detection baselines.

| Method | IoU | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow |
|---|---|---|---|---|---|---|---|---|---|
| RTNH (4DR) [11] | 0.3 | 37.4 | 37.6 | 42.0 | 41.2 | 29.2 | 49.1 | 63.9 | 43.1 |
| | 0.5 | 14.1 | 19.7 | 20.5 | 15.9 | 13.0 | 13.5 | 21.0 | 6.4 |
| RTNH (4DR) - AW-MoE | 0.3 | 65.7 | 64.4 | 72.2 | 88.7 | 58.3 | 64.0 | 71.2 | 65.0 |
| | 0.5 | 35.7 | 28.6 | 44.3 | 72.9 | 34.9 | 32.4 | 42.7 | 45.3 |
| Improvement | 0.3 | +28.3 | +26.8 | +30.2 | +47.5 | +29.1 | +14.9 | +7.3 | +21.9 |
| | 0.5 | +21.6 | +8.9 | +23.8 | +57.0 | +21.9 | +18.9 | +21.7 | +38.9 |
| RTNH (L) [11] | 0.3 | 72.7 | 73.1 | 76.5 | 84.8 | 64.5 | 53.4 | 80.3 | 52.9 |
| | 0.5 | 37.8 | 39.8 | 46.3 | 59.8 | 28.2 | 31.4 | 50.7 | 24.6 |
| RTNH (L) - AW-MoE | 0.3 | 81.1 | 84.0 | 89.6 | 93.5 | 83.4 | 56.2 | 89.4 | 57.3 |
| | 0.5 | 55.4 | 53.0 | 51.2 | 82.1 | 59.4 | 38.7 | 69.5 | 40.5 |
| Improvement | 0.3 | +8.4 | +10.9 | +13.1 | +8.7 | +18.9 | +2.8 | +9.1 | +4.4 |
| | 0.5 | +17.6 | +13.2 | +4.9 | +22.3 | +31.2 | +7.3 | +18.8 | +15.9 |
| InterFusion [50] | 0.3 | 65.6 | 72.5 | 81.4 | 76.9 | 63.8 | 34.6 | 59.9 | 45.9 |
| | 0.5 | 41.7 | 44.6 | 53.5 | 64.8 | 37.2 | 25.5 | 35.4 | 27.0 |
| InterFusion - AW-MoE | 0.3 | 81.7 | 83.8 | 89.7 | 92.7 | 80.4 | 71.2 | 82.5 | 60.7 |
| | 0.5 | 60.3 | 58.0 | 70.1 | 85.9 | 60.9 | 47.0 | 63.4 | 44.7 |
| Improvement | 0.3 | +16.1 | +11.3 | +8.3 | +15.8 | +16.6 | +36.6 | +22.6 | +14.8 |
| | 0.5 | +18.6 | +13.4 | +16.6 | +21.1 | +23.7 | +21.5 | +28.0 | +17.7 |

TABLE V. FPS and FLOPs comparison of detectors before and after applying AW-MoE (corresponding to Table IV).

| Method | Sensors | FPS [Hz] | FLOPS [GB] | Param [M] |
|---|---|---|---|---|
| L4DR [12] | L+4DR | 13.94 | 142.65 | 59.73 |
| L4DR - AW-MoE | | 12.41 | 143.41 | 143.46 |
| RTNH (4DR) [11] | 4DR | 14.77 | 502.57 | 17.35 |
| RTNH (4DR) - AW-MoE | | 14.50 | 503.25 | 61.41 |
| RTNH (L) [11] | L | 14.62 | 502.53 | 17.35 |
| RTNH (L) - AW-MoE | | 14.20 | 503.22 | 61.41 |
| InterFusion [50] | L+4DR | 31.64 | 16.72 | 3.87 |
| InterFusion - AW-MoE | | 29.94 | 17.48 | 20.71 |

B. Implementation Details

Our AW-MoE is designed as a general framework that can be extended to various 3D object detection algorithms. In this work, we extend the L4DR [12] baseline to develop AW-MoE. Furthermore, we propose AW-MoE-LRC, which integrates camera image features into the AW-MoE framework. To balance detection performance and inference efficiency, we set $K = 1$ in the Image-guided Weather-aware Routing. The model is trained on four RTX 3090 GPUs with a batch size of 3.

C. Results on K-Radar Adverse Weather Dataset

Following L4DR [12], we compare AW-MoE with several 3D object detection methods of different modalities, including RTNH [11], InterFusion [50], 3D-LRF [13], L4DR [12], and L4DR-DA3D [51]. The results are reported in Table II. AW-MoE outperforms the state-of-the-art methods on most metrics under both normal and adverse weather conditions, with particularly notable gains under extreme weather such as fog, sleet, light snow, and heavy snow. Specifically, compared to its baseline L4DR, our extended AW-MoE improves $AP_{3D}$ by 10.0 points (IoU=0.3) under overcast, 12.5 points (IoU=0.5) under fog, 12.8 points (IoU=0.3) under sleet, and between 11.3 and 17.7 points (IoU=0.3 and 0.5) under light snow and heavy snow. Furthermore, our AW-MoE outperforms L4DR-DA3D across most evaluation metrics.
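To make the comparison with the L4DR baseline concrete, the per-weather $AP_{3D}$ differences can be recomputed directly from the L4DR and AW-MoE rows of Table II. The snippet below is only an illustrative calculation: the dictionary layout and the function name `ap3d_gain` are hypothetical choices made for this example and are not part of any released code.

```python
# AP_3D values copied from the L4DR and AW-MoE rows of Table II.
# Column order: Total, Normal, Overcast, Fog, Rain, Sleet, Light Snow, Heavy Snow.
COLUMNS = ["Total", "Normal", "Overcast", "Fog", "Rain",
           "Sleet", "Light Snow", "Heavy Snow"]

L4DR_AP3D = {
    0.3: [78.0, 77.7, 80.0, 88.6, 79.2, 60.1, 78.9, 51.9],
    0.5: [53.5, 53.0, 64.1, 73.2, 53.8, 46.2, 52.4, 37.0],
}
AW_MOE_AP3D = {
    0.3: [83.9, 84.2, 90.0, 95.3, 84.4, 72.9, 90.2, 64.0],
    0.5: [61.5, 59.0, 67.2, 85.7, 63.5, 43.3, 70.1, 53.1],
}


def ap3d_gain(iou):
    """Return the AW-MoE minus L4DR difference (in AP points) per column."""
    return {
        name: round(ours - base, 1)
        for name, ours, base in zip(COLUMNS, AW_MOE_AP3D[iou], L4DR_AP3D[iou])
    }


if __name__ == "__main__":
    for iou in (0.3, 0.5):
        print(f"IoU={iou}: {ap3d_gain(iou)}")
```

Each call returns one signed difference per column, so any condition in which the baseline remains ahead shows up as a negative value.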
These improvements are attributed to AW-MoE's multi-branch Weather-Specific Expert design, which mitigates performance conflicts arising from large inter-weather variations, and to the precise expert selection enabled by the Image-guided Weather-aware Routing, which further enhances the model's robustness across diverse conditions.

D. Extensibility of AW-MoE to Other 3D Detectors

To evaluate the extensibility of AW-MoE to other 3D object detectors, we applied it to RTNH [11] and InterFusion [50], where RTNH includes both LiDAR and 4D Radar variants. As shown in Table IV, incorporating AW-MoE improves detection performance under every weather condition and at both IoU thresholds, with total $AP_{3D}$ gains ranging from 8.4 to 28.3 points. Notably, after integrating AW-MoE, RTNH (L) [11] and InterFusion [50] surpass L4DR [12] in total $AP_{3D}$ as listed in Table II, enabling previously inferior models to overtake a stronger detector; for instance, InterFusion achieves a 6.8-point higher total $AP_{3D}$ (IoU=0.5) than L4DR, with even larger gains under most adverse weather conditions. These results demonstrate that AW-MoE is compatible with and effective across different detectors, further validating the robustness and generality of its design.

E. Performance of AW-MoE-LRC

Table III presents the evaluation results of our camera-integrated variant, AW-MoE-LRC, on the K-Radar dataset. Compared to AW-MoE, AW-MoE-LRC moderately improves detection accuracy under high-visibility conditions, such as normal and overcast weather. However, it yields negligible gains, or even slight drops, in severe weather such as fog, rain, and snow. This occurs because camera sensors require clear visibility to capture useful semantic information; in extreme weather, degraded visibility renders these features ineffective for detection. These results underscore the strategic design of our IWR. By leveraging the distinct visual characteristics of images to classify weather and route inputs to the appropriate expert, IWR provides a much more effective way to utilize camera data.

F. Computational Efficiency of AW-MoE

The key advantage of the MoE framework lies in its multi-branch architecture, which effectively handles diverse tasks and scenarios. Since expert routing activates only a subset of experts during inference, it incurs only a minimal increase in computational cost. Our AW-MoE inherits this property. As shown in Table V, AW-MoE has only a small impact on inference speed and FLOPs when extended to different baselines (e.g., 13.94 to 12.41 FPS and 142.65 to 143.41 FLOPs for L4DR). This efficiency stems from the lightweight design of the Image-guided Weather-aware Routing module, which selects the appropriate experts while adding only marginal computational overhead. Furthermore, the table indicates that the parameter overhead introduced by this design (e.g., 59.73 M to 143.46 M parameters for L4DR) remains within an acceptable range, ensuring its viability for practical deployment.

TABLE VI. Performance comparison between Weather-Specific GT Sampling (WSGTS) and Weather-Agnostic GT Sampling (WAGTS). Non-normal denotes the aggregate of all non-normal weather conditions.

| Method | IoU | Metric | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow | Non-normal |
|---|---|---|---|---|---|---|---|---|---|---|---|
| WAGTS | 0.3 | $AP_{BEV}$ | 87.4 | 87.2 | 94.7 | 96.3 | 87.9 | 78.8 | 94.7 | 71.7 | 86.9 |
| | 0.3 | $AP_{3D}$ | 82.5 | 83.6 | 87.3 | 93.1 | 83.6 | 69.7 | 89.2 | 65.5 | 81.6 |
| | 0.5 | $AP_{BEV}$ | 83.2 | 82.1 | 90.3 | 96.1 | 84.4 | 70.4 | 93.8 | 67.6 | 83.4 |
| | 0.5 | $AP_{3D}$ | 59.3 | 58.1 | 60.6 | 77.7 | 63.6 | 37.4 | 63.6 | 50.2 | 60.1 |
| WSGTS | 0.3 | $AP_{BEV}$ | 88.2 | 87.7 | 94.4 | 96.5 | 88.3 | 79.5 | 95.4 | 72.8 | 87.9 |
| | 0.3 | $AP_{3D}$ | 83.6 | 84.1 | 89.9 | 93.4 | 84.3 | 70.2 | 89.0 | 66.0 | 82.4 |
| | 0.5 | $AP_{BEV}$ | 84.2 | 82.7 | 91.6 | 96.1 | 85.5 | 72.2 | 94.6 | 67.9 | 84.7 |
| | 0.5 | $AP_{3D}$ | 60.0 | 59.0 | 67.1 | 73.4 | 63.3 | 41.9 | 67.3 | 50.8 | 60.8 |

TABLE VII. Performance comparison of AW-MoE using different routing strategies: Point-cloud Feature-based Routing (PFR) and Image-guided Weather-aware Routing (IWR).

| Routing | IoU | Metric | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow |
|---|---|---|---|---|---|---|---|---|---|---|
| PFR | 0.3 | $AP_{BEV}$ | 58.7 | 87.3 | 94.3 | 23.1 | 77.7 | 34.7 | 70.2 | 70.9 |
| | 0.3 | $AP_{3D}$ | 52.8 | 84.0 | 89.3 | 11.4 | 73.0 | 29.1 | 64.8 | 63.6 |
| | 0.5 | $AP_{BEV}$ | 55.6 | 82.1 | 91.4 | 22.9 | 74.4 | 31.4 | 69.6 | 66.9 |
| | 0.5 | $AP_{3D}$ | 35.4 | 57.8 | 66.5 | 6.2 | 52.4 | 16.6 | 46.6 | 49.9 |
| IWR | 0.3 | $AP_{BEV}$ | 88.2 | 87.7 | 94.5 | 96.7 | 88.8 | 81.0 | 95.4 | 70.2 |
| | 0.3 | $AP_{3D}$ | 83.9 | 84.2 | 90.0 | 95.3 | 84.4 | 72.9 | 90.2 | 64.0 |
| | 0.5 | $AP_{BEV}$ | 84.2 | 82.8 | 91.6 | 96.3 | 85.3 | 75.0 | 94.7 | 66.4 |
| | 0.5 | $AP_{3D}$ | 61.5 | 59.0 | 67.2 | 85.7 | 63.5 | 43.3 | 70.1 | 53.1 |

TABLE VIII. Performance ($AP_{3D}$) comparison between the AW-MoE training strategy and direct end-to-end training. Non-normal denotes the aggregate of all non-normal weather conditions.

| Training Strategy | IoU | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow | Non-normal |
|---|---|---|---|---|---|---|---|---|---|---|
| Direct Training | 0.3 | 74.9 | 80.2 | 84.1 | 86.5 | 75.9 | 53.2 | 75.8 | 53.8 | 72.8 |
| | 0.5 | 54.2 | 54.5 | 63.3 | 64.3 | 53.5 | 38.8 | 58.8 | 41.1 | 52.3 |
| AW-MoE Training Strategy | 0.3 | 83.9 | 84.2 | 90.0 | 95.3 | 84.4 | 72.9 | 90.2 | 64.0 | 83.9 |
| | 0.5 | 61.5 | 59.0 | 67.2 | 85.7 | 63.5 | 43.3 | 70.1 | 53.1 | 61.5 |

G. Ablation Study

1) Effectiveness Analysis of Weather-Specific GT Sampling: In this section, we compare the proposed Weather-Specific GT Sampling (WSGTS) with traditional Weather-Agnostic GT Sampling (WAGTS). As shown in Table VI, WSGTS outperforms WAGTS on most metrics under both normal and adverse weather conditions.
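For illustration, the two sampling schemes compared in Table VI differ only in the pool from which ground-truth objects are drawn: WSGTS restricts the pool to objects recorded under the same weather as the target scene, whereas WAGTS draws from all weather conditions. The following is a minimal sketch under that assumption. The function names, the `(weather, object)` data layout, and the toy object identifiers are hypothetical, and the sketch omits the actual pasting of synchronized LiDAR and 4D Radar points.

```python
import random
from collections import defaultdict


def build_gt_database(labelled_objects):
    """Group ground-truth objects by the weather label of their source scene."""
    database = defaultdict(list)
    for weather, gt_object in labelled_objects:
        database[weather].append(gt_object)
    return database


def sample_gt(database, scene_weather, num_samples, weather_specific=True, rng=None):
    """Draw up to `num_samples` ground-truth objects to paste into one scene.

    weather_specific=True  -> WSGTS: only objects recorded under `scene_weather`.
    weather_specific=False -> WAGTS: objects recorded under any weather.
    """
    rng = rng or random.Random()
    if weather_specific:
        pool = list(database.get(scene_weather, []))
    else:
        pool = [obj for objects in database.values() for obj in objects]
    return rng.sample(pool, min(num_samples, len(pool)))


# Toy database: (weather label, object id) pairs stand in for real GT boxes and points.
toy_objects = [
    ("fog", "fog_car_0"), ("fog", "fog_car_1"),
    ("normal", "normal_car_0"), ("rain", "rain_car_0"),
]
db = build_gt_database(toy_objects)
wsgts_samples = sample_gt(db, "fog", 2, weather_specific=True, rng=random.Random(0))
wagts_samples = sample_gt(db, "fog", 3, weather_specific=False, rng=random.Random(0))
```

In this toy setup, the weather-specific call can only return fog objects, while the weather-agnostic call may paste objects captured in clear or rainy scenes into a foggy one.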
This improvement stems from WSGTS sampling ground-truth data exclusively from scenes matching the current weather, which avoids the insertion of mismatched GT that could compromise scene authenticity while still enabling effective data augmentation.

2) Ablation on Expert Routing: Table I compares the weather classification capabilities of Point-cloud Feature-based Routing (PFR) and Image-guided Weather-aware Routing (IWR). IWR achieves approximately 99% accuracy across all weather categories. In contrast, PFR struggles significantly in conditions like overcast, fog, and snow due to the inherent limitations of point clouds in capturing weather semantics. Furthermore, Table VII presents an ablation study on detection performance when integrating these routing methods into the MoE framework. IWR achieves much higher overall detection accuracy than PFR (e.g., 83.9 vs. 52.8 total $AP_{3D}$ at IoU=0.3), with the largest gaps under fog, sleet, and light snow, while the two are comparable under normal, overcast, and heavy snow conditions. This superior performance stems directly from IWR's ability to accurately classify the weather and route features to the optimal expert module. Conversely, PFR's poor routing accuracy severely degrades final detection performance. Together, these evaluations validate the effectiveness of the IWR design.

3) Analysis of AW-MoE Training Strategy: In Table VIII, we compare our AW-MoE training strategy with direct training. The results demonstrate that our strategy significantly improves detection performance, particularly under adverse weather conditions. For example, under fog, $AP_{3D}$ at IoU=0.5 increases by 21.4 points. This improvement stems from pre-training each Weather-Specific Expert (WSE) using all-weather data, allowing the WSEs to acquire basic 3D object detection capabilities before further fine-tuning within AW-MoE, effectively mitigating the challenges posed by limited adverse-weather data.

[Figure 7: qualitative results of AW-MoE (Ours), L4DR, and InterFusion under Normal, Overcast, Rain, and Sleet, with GT and predicted boxes and per-box IoU annotations.]
Fig. 7. Comparison of our AW-MoE, L4DR [12], and InterFusion [50] visualization results under Normal, Overcast, Rainy and Sleet weather conditions.

TABLE IX. Effects of different top-$K$ values in Image-guided Weather-aware Routing (IWR). Non-normal denotes the aggregate of all non-normal weather conditions.

| Parameter K | IoU | Total | Normal | Overcast | Fog | Rain | Sleet | Light Snow | Heavy Snow | Non-normal |
|---|---|---|---|---|---|---|---|---|---|---|
| K = 1 | 0.3 | 83.9 | 84.2 | 90.0 | 95.3 | 84.4 | 72.9 | 90.2 | 64.0 | 83.9 |
| | 0.5 | 61.5 | 59.0 | 67.2 | 85.7 | 63.5 | 43.3 | 70.1 | 53.1 | 61.5 |
| K = 2 | 0.3 | 84.2 | 84.9 | 90.0 | 95.0 | 84.8 | 72.8 | 88.4 | 64.8 | 82.8 |
| | 0.5 | 61.1 | 59.3 | 67.2 | 81.5 | 63.7 | 44.2 | 67.8 | 52.7 | 62.5 |
| K = 3 | 0.3 | 83.2 | 84.1 | 89.0 | 94.1 | 84.7 | 69.9 | 88.2 | 64.4 | 82.2 |
| | 0.5 | 61.0 | 59.3 | 69.0 | 79.7 | 63.5 | 42.7 | 70.2 | 53.1 | 62.3 |

TABLE X. Robustness analysis of IWR under ambiguous weather conditions with varying top-$K$ values. (0.3 / 0.5) indicates the IoU value.

| Parameter K | Metric | Total (0.3 / 0.5) | Non-normal (0.3 / 0.5) |
|---|---|---|---|
| K = 1 | $AP_{BEV}$ | 82.8 / 79.1 | 86.0 / 82.0 |
| | $AP_{3D}$ | 77.0 / 53.3 | 80.1 / 56.0 |
| K = 2 | $AP_{BEV}$ | 84.3 / 79.9 | 87.8 / 82.7 |
| | $AP_{3D}$ | 77.3 / 55.8 | 80.5 / 58.3 |

4) Parameter K in Image-guided Weather-aware Routing: We conducted an ablation study on the parameter $K$ in the IWR module, with results shown in Table IX. Overall, $K = 1$ and $K = 2$ yield similar performance, and both outperform $K = 3$. To investigate why the difference between $K = 1$ and $K = 2$ is so small, we evaluated their performance under ambiguous weather conditions, where IWR is prone to misclassification (Table X). In these scenarios, $K = 2$ performs better than $K = 1$. Routing to multiple experts mitigates the impact of classification errors, thereby enhancing robustness. However, this advantage is negligible in the overall metrics (Table IX).
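The role of $K$ can be illustrated with a small routing sketch. Assuming hypothetical classifier logits for a frame in which rain and sleet are nearly indistinguishable, the code applies the softmax and top-$K$ selection steps of Algorithm 1 and the weighting of Eq. (8). The function names, logits, and per-expert loss values are invented for illustration and are not taken from the AW-MoE implementation.

```python
import math

WEATHERS = ["normal", "overcast", "fog", "rain", "sleet", "light_snow", "heavy_snow"]


def softmax(logits):
    """Numerically stable softmax over a list of classifier logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_experts(probs, k):
    """Return (expert index, routing probability) for the k most probable classes."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(i, probs[i]) for i in ranked[:k]]


def confidence_weighted_loss(selected, expert_losses):
    """Eq. (8): sum over the selected experts of P_w * L_w."""
    return sum(p * expert_losses[i] for i, p in selected)


# Hypothetical logits for an ambiguous frame: rain and sleet are nearly tied.
logits = [0.1, 0.2, 0.3, 2.0, 2.2, 0.4, 0.1]
probs = softmax(logits)
top1 = top_k_experts(probs, 1)  # selects only the sleet expert
top2 = top_k_experts(probs, 2)  # selects the sleet and rain experts

# Hypothetical per-expert detection losses, indexed like WEATHERS.
expert_losses = [1.0, 1.0, 1.0, 0.4, 0.9, 1.0, 1.0]
loss_k1 = confidence_weighted_loss(top1, expert_losses)
loss_k2 = confidence_weighted_loss(top2, expert_losses)

if __name__ == "__main__":
    print([WEATHERS[i] for i, _ in top1], [WEATHERS[i] for i, _ in top2])
```

With $K = 1$ only the sleet expert is used; with $K = 2$ the rain expert is also selected, so a frame whose true condition is rain would still reach its matching expert.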
Because IWR achieves approximately 99% classification accuracy, misclassified cases are too infrequent to significantly affect global performance. Consequently, to achieve an optimal balance between computational efficiency and detection accuracy, we set $K = 1$.

H. Visualization Comparison

To provide a more intuitive understanding, we visually compare our AW-MoE against the L4DR [12] and InterFusion [50] baselines across various weather conditions (Fig. 7 and Fig. 8). The visualizations demonstrate two key improvements. First, AW-MoE effectively reduces missed detections caused by adverse weather (highlighted by red circles). Second, it regresses higher-quality 3D bounding boxes that align more closely with the ground truth (GT) (highlighted by blue circles). These enhancements stem from the AW-MoE design, which successfully mitigates the distribution discrepancy across different weather conditions.

[Figure 8: qualitative results of AW-MoE (Ours), L4DR, and InterFusion under Fog, Light Snow, and Heavy Snow, with GT and predicted boxes and per-box IoU annotations.]
Fig. 8. Comparison of our AW-MoE, L4DR [12], and InterFusion [50] visualization results under Fog, Light Snow and Heavy Snow weather conditions.

V. CONCLUSION

In this paper, we propose AW-MoE, the first framework to incorporate Mixture of Experts (MoE) for 3D detection under adverse weather, effectively addressing performance conflicts caused by large inter-weather data discrepancies in single-branch detectors. Specifically, the proposed Image-guided Weather-aware Routing (IWR) leverages the distinct visual characteristics of camera images to classify weather conditions. This ensures precise data routing to the optimal expert model, effectively overcoming the inherent limitations of point-cloud-based routing. Extensive experiments on the K-Radar dataset demonstrate the superiority and strong generalizability of AW-MoE.
Overall, AW-MoE provides an effective framework for 3D object detection under adverse weather, enabling various detection algorithms to achieve optimal performance across different conditions, while incurring minimal impact on inference speed and computational cost.

REFERENCES

[1] S. Shi, X. Wang, and H. Li, “Pointrcnn: 3d object proposal generation and detection from point cloud,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 770–779.
[2] A. H. Lang, S. Vora, H. Caesar, L. Zhou, J. Yang, and O. Beijbom, “Pointpillars: Fast encoders for object detection from point clouds,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 12697–12705.
[3] T. Yin, X. Zhou, and P. Krahenbuhl, “Center-based 3d object detection and tracking,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 11784–11793.
[4] S. Shi, C. Guo, L. Jiang, Z. Wang, J. Shi, X. Wang, and H. Li, “Pv-rcnn: Point-voxel feature set abstraction for 3d object detection,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 10529–10538.
[5] H. Jing, A. Wang, Y. Zhang, D. Bu, and J. Hou, “Reflectance prediction-based knowledge distillation for robust 3d object detection in compressed point clouds,” IEEE Transactions on Image Processing, vol. 35, pp. 85–97, 2026.
[6] H. Wu, H. Lin, X. Guo, X. Li, M. Wang, C. Wang, and C. Wen, “Motal: Unsupervised 3d object detection by modality and task-specific knowledge transfer,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 6284–6293.
[7] Z. Liu, H. Tang, A. Amini, X. Yang, H. Mao, D. L. Rus, and S. Han, “Bevfusion: Multi-task multi-sensor fusion with unified bird’s-eye view representation,” in 2023 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2023, pp. 2774–2781.
[8] Y. Dong, C. Kang, J. Zhang, Z. Zhu, Y. Wang, X. Yang, H. Su, X. Wei, and J. Zhu, “Benchmarking robustness of 3d object detection to common corruptions,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 1022–1032.
[9] X. Huang, H. Wu, X. Li, X. Fan, C. Wen, and C. Wang, “Sunshine to rainstorm: Cross-weather knowledge distillation for robust 3d object detection,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 38, no. 3, 2024, pp. 2409–2416.
[10] M. Hahner, C. Sakaridis, D. Dai, and L. Van Gool, “Fog simulation on real lidar point clouds for 3d object detection in adverse weather,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 15283–15292.
[11] D.-H. Paek, S.-H. Kong, and K. T. Wijaya, “K-radar: 4d radar object detection for autonomous driving in various weather conditions,” Advances in Neural Information Processing Systems, vol. 35, pp. 3819–3829, 2022.
[12] X. Huang, Z. Xu, H. Wu, J. Wang, Q. Xia, Y. Xia, J. Li, K. Gao, C. Wen, and C. Wang, “L4dr: Lidar-4dradar fusion for weather-robust 3d object detection,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 39, no. 4, 2025, pp. 3806–3814.
[13] Y. Chae, H. Kim, and K.-J. Yoon, “Towards robust 3d object detection with lidar and 4d radar fusion in various weather conditions,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 15162–15172.
[14] D.-H. Paek and S.-H. Kong, “Availability-aware sensor fusion via unified canonical space for 4d radar, lidar, and camera,” arXiv preprint arXiv:2503.07029, 2025.
[15] R. A. Jacobs, M. I. Jordan, S. J. Nowlan, and G. E. Hinton, “Adaptive mixtures of local experts,” Neural Computation, vol. 3, no. 1, pp. 79–87, 1991.
[16] N. Shazeer, A. Mirhoseini, K. Maziarz, A. Davis, Q. Le, G. Hinton, and J. Dean, “Outrageously large neural networks: The sparsely-gated mixture-of-experts layer,” arXiv preprint arXiv:1701.06538, 2017.
[17] Z. Yang, Y. Sun, S. Liu, X. Shen, and J. Jia, “Std: Sparse-to-dense 3d object detector for point cloud,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 1951–1960.
[18] Q. Xu, Y. Zhong, and U. Neumann, “Behind the curtain: Learning occluded shapes for 3d object detection,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 36, no. 3, 2022, pp. 2893–2901.
[19] H. Lin, D. Pan, Q. Xia, H. Wu, C. Wang, S. Shen, and C. Wen, “Pretend benign: A stealthy adversarial attack by exploiting vulnerabilities in cooperative perception,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025, pp. 19947–19956.
[20] Q. Xia, J. Deng, C. Wen, H. Wu, S. Shi, X. Li, and C. Wang, “Coin: Contrastive instance feature mining for outdoor 3d object detection with very limited annotations,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 6254–6263.
[21] H. Wu, S. Zhao, X. Huang, C. Wen, X. Li, and C. Wang, “Commonsense prototype for outdoor unsupervised 3d object detection,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 14968–14977.
[22] Z. Liu, Z. Wu, and R. Tóth, “Smoke: Single-stage monocular 3d object detection via keypoint estimation,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2020, pp. 996–997.
[23] J. Wang, F. Li, S. Lv, L. He, and C. Shen, “Physically realizable adversarial creating attack against vision-based bev space 3d object detection,” IEEE Transactions on Image Processing, vol. 34, pp. 538–551, 2025.
[24] N. Zhao, P. Qian, F. Wu, X. Xu, X. Yang, and G. H. Lee, “Sdcot++: Improved static-dynamic co-teaching for class-incremental 3d object detection,” IEEE Transactions on Image Processing, vol. 34, pp. 4188–4202, 2024.
[25] Z. Yang, Y. Sun, S. Liu, and J. Jia, “3dssd: Point-based 3d single stage object detector,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2020.
[26] W. Shi and R. Rajkumar, “Point-gnn: Graph neural network for 3d object detection in a point cloud,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 1711–1719.
[27] Y. Yan, Y. Mao, and B. Li, “Second: Sparsely embedded convolutional detection,” Sensors, vol. 18, no. 10, p. 3337, 2018.
[28] J. Deng, S. Shi, P. Li, W. Zhou, Y. Zhang, and H. Li, “Voxel r-cnn: Towards high performance voxel-based 3d object detection,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 35, no. 2, 2021, pp. 1201–1209.
[29] Y. Zhou and O. Tuzel, “Voxelnet: End-to-end learning for point cloud based 3d object detection,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 4490–4499.
[30] J. Ryde and N. Hillier, “Performance of laser and radar ranging devices in adverse environmental conditions,” Journal of Field Robotics, vol. 26, no. 9, pp. 712–727, 2009.
[31] Z. Chen, Z. Chen, Z. Li, S. Zhang, L. Fang, Q. Jiang, F. Wu, and F. Zhao, “Graph-detr4d: Spatio-temporal graph modeling for multi-view 3d object detection,” IEEE Transactions on Image Processing, vol. 33, pp. 4488–4500, 2024.
[32] C. Zhang, W. Chen, W. Wang, and Z. Zhang, “Ma-st3d: Motion associated self-training for unsupervised domain adaptation on 3d object detection,” IEEE Transactions on Image Processing, vol. 33, pp. 6227–6240, 2024.
[33] L. Kong, Y. Liu, X. Li, R. Chen, W. Zhang, J. Ren, L. Pan, K. Chen, and Z. Liu, “Robo3d: Towards robust and reliable 3d perception against corruptions,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 19994–20006.
[34] M. Bijelic, T. Gruber, F. Mannan, F. Kraus, W. Ritter, K. Dietmayer, and F. Heide, “Seeing through fog without seeing fog: Deep multimodal sensor fusion in unseen adverse weather,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 11682–11692.
[35] K. Qian, S. Zhu, X. Zhang, and L. E. Li, “Robust multimodal vehicle detection in foggy weather using complementary lidar and radar signals,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 444–453.
[36] Q. Xu, Y. Zhou, W. Wang, C. R. Qi, and D. Anguelov, “Spg: Unsupervised domain adaptation for 3d object detection via semantic point generation,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 15446–15456.
[37] W. Huang, G. Xu, W. Jia, S. Perry, and G. Gao, “Revivediff: A universal diffusion model for restoring images in adverse weather conditions,” IEEE Transactions on Image Processing, 2025.
[38] X. Huang, J. Wang, Q. Xia, S. Chen, B. Yang, C. Wang, and C. Wen, “V2x-r: Cooperative lidar-4d radar fusion for 3d object detection with denoising diffusion,” arXiv e-prints, pp. arXiv–2411, 2024.
[39] Y. Wang, J. Deng, Y. Li, J. Hu, C. Liu, Y. Zhang, J. Ji, W. Ouyang, and Y. Zhang, “Bi-lrfusion: Bi-directional lidar-radar fusion for 3d dynamic object detection,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 13394–13403.
[40] B. Lin, Z. Tang, Y. Ye, J. Cui, B. Zhu, P. Jin, J. Huang, J. Zhang, Y. Pang, M. Ning et al., “Moe-llava: Mixture of experts for large vision-language models,” arXiv preprint, 2024.
[41] S. Masoudnia and R. Ebrahimpour, “Mixture of experts: a literature survey,” Artificial Intelligence Review, vol. 42, no. 2, pp. 275–293, 2014.
[42] Y. Zhou, T. Lei, H. Liu, N. Du, Y. Huang, V. Zhao, A. M. Dai, Q. V. Le, J. Laudon et al., “Mixture-of-experts with expert choice routing,” Advances in Neural Information Processing Systems, vol. 35, pp. 7103–7114, 2022.
[43] D. Lepikhin, H. Lee, Y. Xu, D. Chen, O. Firat, Y. Huang, M. Krikun, N. Shazeer, and Z. Chen, “Gshard: Scaling giant models with conditional computation and automatic sharding,” arXiv preprint, 2020.
[44] W. Fedus, B. Zoph, and N. Shazeer, “Switch transformers: Scaling to trillion parameter models with simple and efficient sparsity,” Journal of Machine Learning Research, vol. 23, no. 120, pp. 1–39, 2022.
[45] N. Du, Y. Huang, A. M. Dai, S. Tong, D. Lepikhin, Y. Xu, M. Krikun, Y. Zhou, A. W. Yu, O. Firat et al., “Glam: Efficient scaling of language models with mixture-of-experts,” in International Conference on Machine Learning. PMLR, 2022, pp. 5547–5569.
[46] S. Rajbhandari, C. Li, Z. Yao, M. Zhang, R. Y. Aminabadi, A. A. Awan, J. Rasley, and Y. He, “Deepspeed-moe: Advancing mixture-of-experts inference and training to power next-generation ai scale,” in International Conference on Machine Learning. PMLR, 2022, pp. 18332–18346.
[47] C. Riquelme, J. Puigcerver, B. Mustafa, M. Neumann, R. Jenatton, A. Susano Pinto, D. Keysers, and N. Houlsby, “Scaling vision with sparse mixture of experts,” Advances in Neural Information Processing Systems, vol. 34, pp. 8583–8595, 2021.
[48] R. Ranftl, A. Bochkovskiy, and V. Koltun, “Vision transformers for dense prediction,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 12179–12188.
[49] Y. Chae, H. Kim, C. Oh, M. Kim, and K.-J. Yoon, “Lidar-based all-weather 3d object detection via prompting and distilling 4d radar,” in European Conference on Computer Vision. Springer, 2024, pp. 368–385.
[50] L. Wang, X. Zhang, B. Xv, J. Zhang, R. Fu, X. Wang, L. Zhu, H. Ren, P. Lu, J. Li et al., “Interfusion: Interaction-based 4d radar and lidar fusion for 3d object detection,” in 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2022, pp. 12247–12253.
[51] H. Yang, L. Li, J. Guo, B. Li, M. Qin, H. Yu, and T. Zhang, “Da3d: Domain-aware dynamic adaptation for all-weather multimodal 3d detection,” in Proceedings of the 33rd ACM International Conference on Multimedia, 2025, pp. 2150–2158.
