Visual Prompt Discovery via Semantic Exploration


Authors: Jaechang Kim, Yotaro Shimose, Zhao Wang

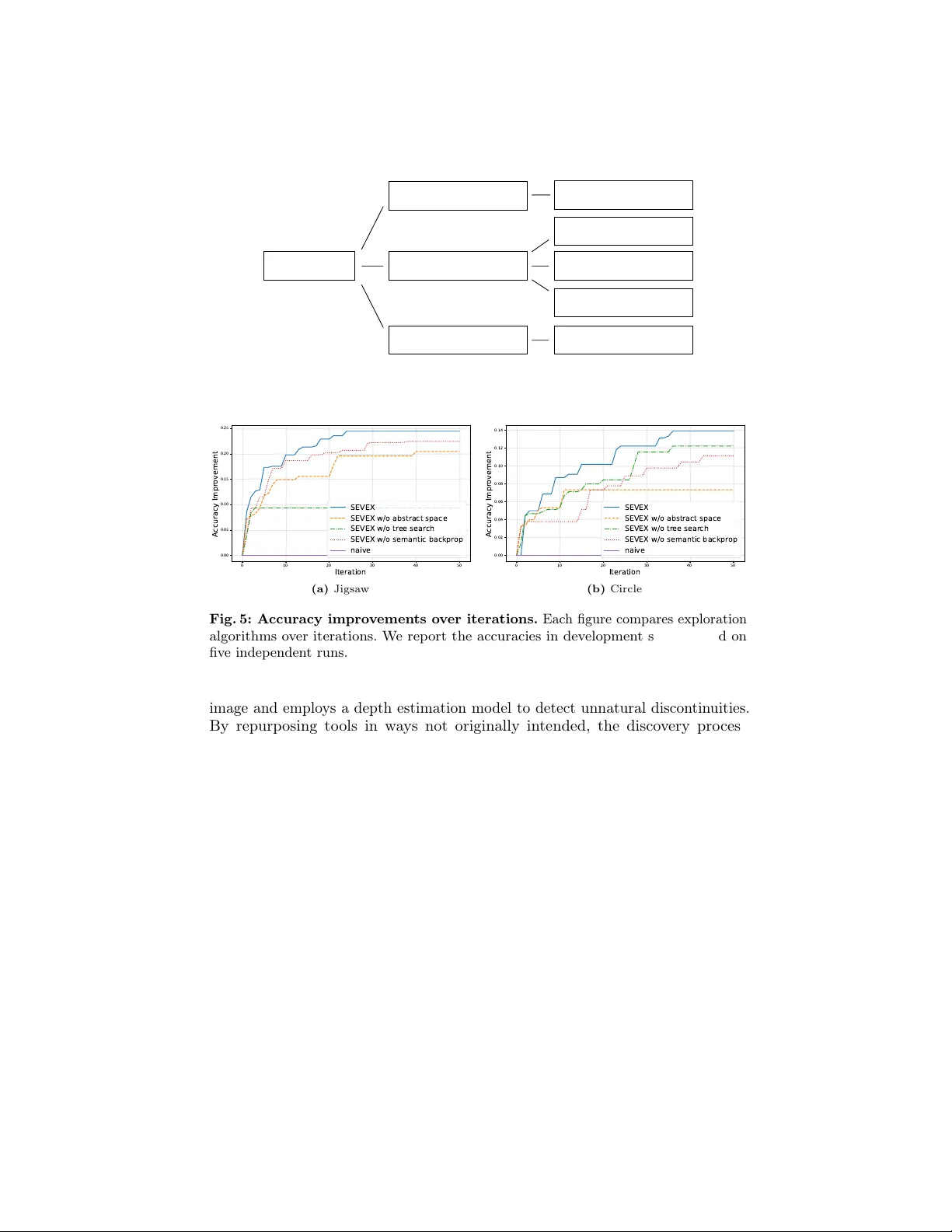

Jaechang Kim¹,²*, Yotaro Shimose¹, Zhao Wang¹, Kuang-Da Wang¹,³*, Jungseul Ok², and Shingo Takamatsu¹
¹ Sony Group Corporation
² Pohang University of Science and Technology
³ National Yang Ming Chiao Tung University
* Work done during an internship at Sony

Abstract. Large Vision-Language Models (LVLMs) encounter significant challenges in image understanding and visual reasoning, leading to critical perception failures. Visual prompts, which incorporate image manipulation code, have shown promising potential in mitigating these issues. However, previous methods for visual prompt generation have focused on tool selection rather than on diagnosing and mitigating the root causes of LVLM perception failures. Because of the opacity and unpredictability of LVLMs, optimal visual prompts must be discovered through empirical experiments, which have so far relied on manual trial-and-error. In this work, we propose an automated semantic exploration framework for discovering task-wise visual prompts. Unlike previous methods, our approach enables diverse yet efficient exploration through agent-driven experiments, minimizing human intervention and avoiding the inefficiency of per-sample generation. We introduce a semantic exploration algorithm named SEVEX, which addresses two major challenges of visual prompt exploration: (1) the distraction caused by lengthy, low-level code and (2) the vast, unstructured search space of visual prompts. Specifically, our method leverages an abstract idea space as the search space, a novelty-guided selection algorithm, and a semantic feedback-driven ideation process to efficiently explore diverse visual prompts based on empirical results. We evaluate SEVEX on the BlindTest and BLINK benchmarks, which are specifically designed to assess LVLM perception.
Experimental results demonstrate that SEVEX significantly outperforms baseline methods in task accuracy, inference efficiency, exploration efficiency, and exploration stability. Notably, our framework discovers sophisticated and counter-intuitive visual strategies that go beyond conventional tool usage, offering a new paradigm for enhancing LVLM perception through automated, task-wise visual prompts.

Keywords: Large Vision Language Model · Visual prompt · Automated Prompt Engineering

[Figure 1: (a) Visual prompt (code + text prompt); (b) Zero-shot visual prompt generation: sub-optimal per-sample visual prompt, high inference cost; (c) Task-wise visual prompt exploration (ours): semantic exploration on a dev set, automatic discovery, low inference cost.]

Fig. 1: Overview of visual prompting paradigms for LVLMs. Visual prompts are an effective approach for mitigating perception failures of LVLMs. Rather than relying on manual discovery or zero-shot generation, we propose a framework to explore task-wise visual prompts efficiently.

1 Introduction

Large Vision-Language Models (LVLMs) have demonstrated remarkable capabilities in complex reasoning and open-ended dialogue.
However, LVLMs often struggle with fundamental visual perception, such as identifying fine-grained attributes or understanding spatial relationships, leading to hallucinations or incorrect reasoning based on misperceived visual input [4, 13, 14]. The inherent opacity and unpredictability of these models make it difficult to anticipate such failures, necessitating specialized interventions to ground their vision more reliably.

One of the most promising directions to mitigate these failures is visual prompting [5–7, 16, 17, 21, 23]. Beyond traditional text-based prompts, visual prompting directly manipulates the input image, often through programmatic code, to explicitly guide the model's attention to relevant features. By overlaying geometric cues [7, 16], highlighting specific regions [21], or applying external tools [6], visual prompts enable LVLMs to bypass failure points and focus on the most important parts of the image. This programmatic approach provides a precise, reproducible way to enhance visual grounding, offering a powerful tool for correcting the perceptual blind spots of LVLMs.

Despite its potential, current research on visual prompt generation remains in its infancy, often focusing on tool usage rather than on diagnosing and resolving fundamental perception failures. More importantly, discovering an effective visual prompt for a specific task remains a heavily manual process of trial-and-error. Because LVLMs react to visual changes in highly sensitive and non-intuitive ways, human researchers must spend significant effort empirically testing various ideas to find the optimal strategy.
This challenge is further compounded by the lack of transferability across different architectures; as we demonstrate in Section 4.5, a visual prompt optimized for one backbone model rarely yields consistent gains when applied to another. This implies that visual prompts must be discovered independently for every model. This heavy dependency on human effort limits the scalability of visual prompting and underscores the urgent need for an automated, agent-driven discovery framework.

However, automating this discovery process is not straightforward; it presents two significant technical challenges. First, the distraction of low-level code: lengthy and complex image-manipulation scripts can introduce unintended noise, overwhelming the LVLM instead of aiding it. Second, the vast and unstructured search space: the virtually unlimited combinations of visual modifications make it nearly impossible for a naive agent to find an optimal solution. To address these challenges, we propose an automated semantic exploration framework for task-wise visual prompts. Instead of searching through raw code, our approach operates on a high-level idea space. By utilizing a novelty-guided selection algorithm and backpropagating actionable insights from sample-wise analysis, our framework efficiently explores diverse visual strategies and converges on effective, task-wise prompts with minimal human oversight.

We evaluate our framework on two benchmarks [4, 14] specifically designed to expose the visual perception failures of LVLMs. Our experiments demonstrate that the automatically discovered visual prompts significantly outperform existing baselines. Furthermore, our analysis reveals that optimal visual prompts are rarely transferable across different LVLM settings, a finding that strongly emphasizes the necessity of our automated discovery framework for model-specific optimization.
These results validate the efficiency of semantic exploration and suggest a new paradigm for enhancing the reliability of vision-language models through automated discovery.

We illustrate the problem setting and our motivation in Figure 1 and summarize our contributions as follows:

– Automated Discovery of Task-wise Visual Prompts: We introduce an agent-driven framework that automatically discovers task-wise visual prompts, moving beyond manual engineering and sub-optimal zero-shot generation. Recognizing that effective visual prompts are highly model-specific and rarely transferable, our framework provides a scalable solution.
– Semantic Exploration: We propose SEmantic Visual prompt EXploration (SEVEX) to overcome the challenges of exploration in the raw visual prompt space. SEVEX utilizes 1) an abstract idea space as the search space, 2) a novelty-guided node selection algorithm, and 3) a semantic backpropagation that distills sample-wise results into actionable insights for future idea generation, together enabling diverse and efficient exploration.
– Performance & Analysis: We demonstrate the effectiveness of SEVEX on the BlindTest and BLINK benchmarks, achieving superior task accuracy, inference efficiency, and exploration stability. Notably, our framework discovers sophisticated, counter-intuitive visual strategies that are not limited to conventional tool usage.

2 Related Work

2.1 Perception failures in LVLMs and visual prompting

Perception failures in LVLMs. Recent studies have increasingly revealed that LVLMs, despite their advanced reasoning capabilities, suffer from systematic perception failures. Benchmarks such as BlindTest [14], VLM Eye Exam [13], and Blink [4] demonstrate that these models often struggle with fundamental visual tasks, including fine-grained attribute identification and spatial relationship understanding [19, 24].
These failures lead to hallucinations in which the model's reasoning is grounded in misperceived visual information.

Categorizing visual prompting strategies. We define a visual prompt as a combination of image-manipulation code and corresponding text prompts. Existing strategies to enhance LVLM perception can be categorized into two primary paradigms based on their objectives and generation timing:

1) Visual tool-use and inference-time generation: Initial works in this domain [5, 6] focus on visual tool-use, where Python code is generated at inference time to invoke external specialized models (e.g., segmentation, depth estimation). While these methods effectively offload the reasoning burden to external tools, they primarily treat the LVLM as a controller rather than addressing its intrinsic visual reasoning failures. Furthermore, this zero-shot generation approach lacks a diagnostic mechanism; if the model misinterprets the initial problem, it cannot adapt its tool-use strategy, often leading to cascading errors and high computational costs during inference. Fine-tuning-based approaches [2, 18] likewise focus on selecting proper tools rather than on understanding when the LVLM fails.

2) Visual scaffolding for intrinsic perception: To address the fundamental perception failures of LVLMs (e.g., spatial ambiguity, attribute binding), a different paradigm known as visual scaffolding has emerged. Methods like Viser [7], BBVPE [16], Zoom-in [23], and Set-of-Mark [21] aim to enhance the model's internal reasoning by overlaying geometric cues or markers directly on the input image. These techniques attempt to "fix the model's eyes" by highlighting task-critical regions, yet they remain at an early stage, relying heavily on fixed heuristics, manual human design, or model fine-tuning.
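To make the definition of a visual prompt above concrete, it can be sketched as a pair of an image-manipulation function and a text prompt. The toy below is a hypothetical illustration, not the paper's code: it represents an image as nested lists of grayscale pixels instead of using a real image library, skips the detection and cropping steps, and only draws two horizontal reference lines in the spirit of the CircledLetter prompt in Fig. 3(a). `VisualPrompt`, `draw_hline`, and `add_reference_lines` are illustrative names.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Toy stand-in for an image: rows of grayscale pixel values (0 = black, 255 = white).
Image = List[List[int]]


def draw_hline(image: Image, y: int, value: int = 0) -> Image:
    """Return a copy of `image` with row `y` painted as a one-pixel horizontal line."""
    out = [row[:] for row in image]  # copy so the input image is left untouched
    if 0 <= y < len(out):
        out[y] = [value] * len(out[y])
    return out


@dataclass
class VisualPrompt:
    """A visual prompt: image-manipulation code paired with a text prompt."""
    manipulate: Callable[[Image], Image]
    text: str

    def build(self, image: Image) -> Tuple[Image, str]:
        # What the LVLM would receive: the edited image plus the text prompt.
        return self.manipulate(image), self.text


def add_reference_lines(image: Image) -> Image:
    # Baseline and mean-line heights echo the ratios shown in Fig. 3(a).
    height = len(image)
    with_baseline = draw_hline(image, int(height * 0.75))
    return draw_hline(with_baseline, int(height * 0.45))


circled_letter_prompt = VisualPrompt(
    manipulate=add_reference_lines,
    text=("Read the letter within the circle in the image. Two typographic "
          "reference lines have been drawn on the image to help you identify "
          "the letter's case (uppercase vs. lowercase)."),
)

blank = [[255] * 8 for _ in range(20)]  # 20 rows x 8 columns, all white
edited, text = circled_letter_prompt.build(blank)
```

Keeping the manipulation as a plain function makes the code half of the prompt swappable while the text half stays fixed, which is the pairing the definition describes.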
Despite the potential of visual scaffolding, the field lacks research on the automated generation of such prompts tailored to specific tasks and model architectures. Most existing scaffolding methods are static or require human-in-the-loop experimentation to find the optimal visual prompts. As our experiments reveal, the sensitivity of LVLMs makes shared visual prompts ineffective across different backbones. Our framework bridges this gap by introducing the first agent-driven system that automatically discovers task-wise visual prompts. By grounding the discovery process in empirical experimentation, we enable the discovery of optimal, model-specific visual prompts that mitigate intrinsic perception failures without the inefficiencies of per-sample tool generation.

2.2 Automated prompt engineering

From text to visual domain. Since the introduction of Chain-of-Thought (CoT) prompting [9], prompt engineering has become essential for maximizing the performance of Large Language Models (LLMs). To overcome the limitations of manual trial-and-error, automated methods [20, 25] have been developed to optimize prompts based on empirical feedback from small datasets. In the visual domain, early optimization efforts primarily focused on vision-language representation models like CLIP, utilizing gradient-based soft prompt learning [3, 15]. However, these continuous optimization techniques do not apply to programmatic visual prompts for LVLMs, which are discrete and non-differentiable: they consist of structured code and natural-language instructions rather than continuous vectors.

Complexity of programmatic visual prompts. A significant hurdle in automating visual prompt engineering is the inherent complexity and length of the prompts.
Unlike text-only prompts, a programmatic visual prompt combines formatted image-manipulation code with descriptive text. This hybrid structure results in significantly longer contexts, which poses a long-context distraction problem for LLMs [11]. The enlarged search space of potential code snippets and their sensitive interaction with the LVLM's perception make direct generation and optimization via raw text or code search extremely difficult. Direct search often leaves the agent overwhelmed by low-level implementation details rather than focused on the high-level logic of visual grounding.

Mitigating complexity via semantic exploration. To mitigate these issues, our framework shifts the paradigm from searching the raw visual prompt space to semantic exploration within an abstract idea space. Instead of forcing the agent to reason over every line of low-level manipulation code, our approach enables the agent to iterate on high-level conceptual strategies. By decoupling the semantic intent (the "Idea") from its implementation (the "Code"), our method reduces the cognitive load on the agent and enables a more efficient, novelty-guided search. This abstraction allows the agent to focus on diagnosing the core perception failures and discovering effective visual strategies, bridging the gap between automated text prompt engineering and the complex requirements of LVLM visual grounding.

[Figure 2: 1. Node selection (prioritizing less-explored nodes, prioritizing novel ideas, preventing saturation from excessive branching); 2. Implementation & Execution (idea to code and text prompt, experiments on dev data); 3. Semantic Backpropagation (LVLM sample-wise analysis, insights passed to ancestors); 4. Node (idea) generation (new siblings and children). Node components: abstract idea, self-evaluation, visual prompt, experiment history (reward, insight).]

Fig. 2: Overview of SEVEX. SEVEX generates diverse ideas, selects the node with the highest potential, executes the idea, and backpropagates the insights for future idea generation.

3 Method

We propose SEmantic Visual prompt EXploration (SEVEX), which is designed to discover optimal, task-wise visual prompts for LVLMs. Unlike previous works that rely on manual trial-and-error or per-sample tool-use, our framework treats visual prompt discovery as an iterative search problem over a high-level idea space. The core of our approach is a dynamically expanding search tree T that enables an agent to hypothesize, implement, and explore visual prompts based on empirical feedback. We illustrate the overview of our algorithm in Figure 2.

3.1 Search space and dynamic tree structure

To overcome the vast and unstructured nature of visual prompts, we define the search space in a high-level domain. The search tree T is not pre-defined but grows dynamically as the agent generates new ideas. Every executed node has $k$ unexecuted child nodes to ensure exploration of diverse nodes. Whenever an unexecuted node is executed, a new unexecuted node with a new idea is generated. Each node N in the tree represents a distinct idea for a visual prompt and encapsulates the following components:

– Abstract Idea (I): A natural-language idea describing a visual prompt.
– Implementation (P): The concrete instantiation of I, consisting of executable Python code utilizing a pre-defined set of visual tools and the corresponding textual prompt.
– Self-Evaluation Estimates (S): Heuristic scores $\{s^{\text{gain}}, s^{\text{novel}}\}$ evaluated by the agent to guide the search before execution. $s^{\text{gain}}$ represents the expected gain from using the idea, and $s^{\text{novel}}$ represents the novelty of the idea compared to its siblings.
– Experiment History (H): Results of empirical experiments, including performance metrics, qualitative observations from the experiment, and insights back-propagated from descendant nodes.

3.2 Exploration pipeline

The exploration follows a four-stage iterative loop: 1) Selection, where the most promising idea is chosen; 2) Implementation and Execution, where the idea is translated into a visual prompt and tested on a development set; 3) Backpropagation, where results are distilled into insights to guide the next generation of ideas; and 4) Expansion, where new ideas are generated based on the insights from previous executions.

3.3 Node selection via Novelty-guided UCT

We build on the motivation of the Upper Confidence Bound (UCB) [1] and UCB for Trees (UCT) [8] algorithms, which balance a node's estimated value against its potential for exploration. These algorithms execute every child node at least once and compute the exploration potential from the confidence in the node's value. However, this approach is inefficient in our context because an LLM can generate an unbounded number of new children from a parent node, and the generated ideas can be similar to each other or include less promising ones. To overcome these challenges, we propose Novelty-guided UCT (NUCT), which employs novelty to estimate the potential of an unexecuted node. NUCT differentiates the priority scoring mechanism based on a node's execution status, using empirical results for executed nodes and the agent's expectations for unexecuted ones. In our tree structure, every executed node maintains $k$ unexecuted child nodes to ensure continuous exploration.
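The node bookkeeping and the NUCT priority rules given in Eqs. (1) and (2) of this section can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: all class and function names are hypothetical; visit counts are incremented along the path to the root after each execution (standard UCT bookkeeping, assumed here); Eq. (2) is read as $s^{\text{gain}}_i + \lambda_{\text{novel}}(s^{\text{novel}}_i + \lambda_{\text{sat}}\sqrt{\ln n_{p_i} / c^{\text{exec}}_{p_i}})$; and a parent with no executed children is treated as if $c^{\text{exec}} = 1$ to avoid division by zero. Agent calls (idea generation, self-evaluation, analysis) are omitted, and insights are simply appended to every ancestor's history.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional

# Hyperparameters reported in Sec. 4.1.
LAMBDA_EXPL, LAMBDA_NOVEL, LAMBDA_SAT = 0.5, 0.15, 0.5


@dataclass
class Node:
    idea: str                       # abstract idea I
    s_gain: float = 0.0             # normalized self-estimate of expected gain
    s_novel: float = 0.0            # normalized self-estimate of novelty vs. siblings
    parent: Optional["Node"] = field(default=None, repr=False, compare=False)
    children: List["Node"] = field(default_factory=list)
    reward: Optional[float] = None  # empirical reward R_i (None until executed)
    visits: int = 0                 # visitation count n_i
    insights: List[str] = field(default_factory=list)  # insights kept in history H

    @property
    def executed(self) -> bool:
        return self.reward is not None

    def add_child(self, child: "Node") -> "Node":
        child.parent = self
        self.children.append(child)
        return child

    def max_reward(self) -> float:
        """R^max_i: best reward of this node or any executed descendant."""
        best = self.reward if self.reward is not None else -math.inf
        for child in self.children:
            best = max(best, child.max_reward())
        return best


def record_execution(node: Node, reward: float) -> None:
    """Store R_i and increment the visit count of the node and all its ancestors."""
    node.reward = reward
    current: Optional[Node] = node
    while current is not None:
        current.visits += 1
        current = current.parent


def backpropagate_insight(node: Node, insight: str) -> None:
    """Semantic backpropagation: append an insight to the history of every ancestor."""
    ancestor = node.parent
    while ancestor is not None:
        ancestor.insights.append(insight)
        ancestor = ancestor.parent


def priority(node: Node) -> float:
    parent = node.parent
    assert parent is not None and parent.executed, "only children of executed nodes are scored"
    log_parent = math.log(parent.visits)
    if node.executed:
        # Eq. (1): (R^max_i - R_{p_i}) + lambda_expl * sqrt(ln n_{p_i} / n_i)
        return (node.max_reward() - parent.reward) + LAMBDA_EXPL * math.sqrt(log_parent / node.visits)
    # Eq. (2): s_gain + lambda_novel * (s_novel + lambda_sat * sqrt(ln n_{p_i} / c_exec))
    c_exec = sum(1 for sibling in parent.children if sibling.executed)
    saturation = math.sqrt(log_parent / max(c_exec, 1))  # assumption: guard c_exec == 0
    return node.s_gain + LAMBDA_NOVEL * (node.s_novel + LAMBDA_SAT * saturation)


def select(root: Node) -> Node:
    """Descend layer by layer along the highest-priority child until reaching an unexecuted node."""
    node = root
    while node.executed and node.children:
        node = max(node.children, key=priority)
    return node


# Usage: a root (naive inference) and one executed idea, each with an unexecuted child.
root = Node("naive inference")
record_execution(root, 0.5)
lines = root.add_child(Node("emphasize boundaries by drawing lines"))
record_execution(lines, 0.7)
segment = root.add_child(Node("use semantic segmentation", s_gain=0.3, s_novel=0.5))
grayscale = lines.add_child(Node("convert to grayscale first", s_gain=0.1))
backpropagate_insight(lines, "thin lines help the LVLM locate boundaries")
chosen = select(root)
```

Descending layer by layer mirrors the layer-wise comparison described for Eq. (1): an executed child is entered only when its score beats its unexecuted siblings, so strong branches get refined while a novel, untried idea can still win at any level.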
The novelty of an unexecuted node is evaluated with two terms: its novelty compared to its siblings, and the number of executed siblings, which corresponds to the saturation of the parent node. Specifically, the priority score $P_i$ for a node $i$ is determined as follows:

For executed nodes ($n_i > 0$): We follow the standard UCB formula with a slight modification. Instead of the mean reward, we use the maximum reward achieved by the node or any of its descendants ($R^{\max}_i$) relative to its parent's reward ($R_{p_i}$):

$$P_i = \left(R^{\max}_i - R_{p_i}\right) + \lambda_{\text{expl}} \sqrt{\frac{\ln n_{p_i}}{n_i}} \qquad (1)$$

where $n_i$ is the visitation count and $p_i$ is the parent node of node $i$. This term encourages selecting the node with the best reward in a layer-wise comparison.

For unexecuted nodes ($n_i = 0$): Since empirical rewards are unavailable, we estimate the reward and its potential using the agent's self-evaluation and the saturation of its parent node:

$$P_i = s^{\text{gain}}_i + \lambda_{\text{novel}} \left( s^{\text{novel}}_i + \lambda_{\text{sat}} \sqrt{\frac{\ln n_{p_i}}{c^{\text{exec}}_{p_i}}} \right) \qquad (2)$$

where $s^{\text{gain}}_i$ and $s^{\text{novel}}_i$ are normalized scores for the agent's estimate of the gain from using the idea and of how distinct the idea is from its siblings. $c^{\text{exec}}_{p_i}$ denotes the number of executed children of $p_i$, which equals the number of executed sibling nodes of node $i$. This saturation term (multiplied by $\lambda_{\text{sat}}$) penalizes over-explored branches, prioritizing deeper exploration of promising descendants over executing less-promising child ideas of a well-explored parent.

3.4 Implementation and empirical evaluation

Once a node is selected, the agent acts as an "Engineer" to instantiate the abstract idea (I) into a visual prompt (P). We provide the agent with a list of visual tools and documentation on how to use them. This visual prompt is executed on a development set, a small subset of data reserved for exploration.
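The execution step can be sketched as a loop that runs a candidate visual prompt on each development sample and scores its answers. In this hypothetical sketch, `run_prompt` stands in for the agent-generated code plus the LVLM call; answers are compared by case-insensitive exact match, which is an assumption since scoring is task-specific; and `representatives` shows one simple way to keep a few successful and failed cases for the subsequent sample-wise analysis, in line with the paper's choice to avoid sending every image.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# A development sample: (input image, ground-truth answer). The image type is left
# abstract; in practice it would be whatever the visual tools accept.
Sample = Tuple[object, str]


def evaluate_visual_prompt(run_prompt: Callable[[object], str],
                           dev_set: Sequence[Sample]) -> Tuple[float, List[Dict]]:
    """Apply a candidate visual prompt to every development sample.

    `run_prompt` stands in for the generated code: it edits the image, queries
    the LVLM, and returns the answer. Returns the accuracy (used as the reward
    R_i) and per-sample records for later analysis.
    """
    records = []
    for image, truth in dev_set:
        prediction = run_prompt(image)
        correct = prediction.strip().lower() == truth.strip().lower()
        records.append({"image": image, "prediction": prediction,
                        "truth": truth, "correct": correct})
    accuracy = sum(r["correct"] for r in records) / len(records) if records else 0.0
    return accuracy, records


def representatives(records: List[Dict], per_group: int = 1) -> Tuple[List[Dict], List[Dict]]:
    """Keep a few successful and failed cases instead of every image."""
    successes = [r for r in records if r["correct"]][:per_group]
    failures = [r for r in records if not r["correct"]][:per_group]
    return successes, failures


# Usage with a fake prompt that always answers "2" (a stand-in for an LVLM call).
dev = [("img0", "2"), ("img1", "0"), ("img2", "2"), ("img3", "1")]
reward, recs = evaluate_visual_prompt(lambda image: "2", dev)
good, bad = representatives(recs)
```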
The performance (e.g., accuracy) and intermediate images are saved, and the numerical score is recorded as the empirical reward $R_i$.

3.5 Semantic backpropagation with sample-wise analysis

Following execution, an Analyst agent performs a sample-wise failure analysis. Rather than simply propagating numerical rewards, we implement Semantic Backpropagation. The analyst diagnoses why a strategy succeeded or failed based on the executed results on the development set. We provide the prediction and ground truth for each sample, along with a few representative images that were actually passed to the LVLM. To reduce token consumption during exploration, we use representative images of successful and failed cases instead of all images. These raw analyses are distilled into Actionable Insights: high-level lessons about which visual components are effective for the task. These insights are back-propagated to the Experiment History (H) of all ancestor nodes. This ensures that important lessons are propagated, preventing the agent from repeating ineffective visual manipulations in future branches.

3.6 Insight-driven idea generation

The cycle concludes with the expansion of T. Guided by the updated H, the agent generates new child nodes. To balance depth and breadth, for every executed node, the agent generates sibling nodes to explore alternative conceptual directions at the same abstraction level, and child nodes to refine and specialize the current strategy based on backpropagated feedback. Newly created nodes are initialized with self-evaluation scores S, making them ready for the next Selection phase. The agent self-evaluates each idea's expected gain, novelty, and feasibility. Feasibility assesses whether the idea can be implemented with the given tools; if not, the idea is rejected and a new node is generated instead. Expectation and novelty scores are normalized and re-scaled. This closed-loop system allows the framework to autonomously navigate the complex landscape of visual prompting without human intervention.

Table 1: Description of visual tools used in our experiments.

| Category | Tool name | Description | Parameter(s) |
|---|---|---|---|
| basic | get_image_size | Returns image dimensions | image |
| basic | convert_image_grayscale | Converts image to grayscale | image |
| basic | crop | Crops a specified region of the image | image, coordinates |
| basic | overlay_images | Overlays two images | image1, image2, coordinates, opacity |
| drawing | draw_line | Draws a line on the image | image, coordinates, color |
| drawing | draw_box | Draws a rectangle on the image | image, coordinates, color |
| drawing | draw_filled_box | Draws a filled rectangle on the image | image, coordinates, color |
| external model | detect_objects | Object detection using GroundingDINO [12] | image, text query, threshold |
| external model | sliding_window_detection | Sliding-window object detection | image, text query |
| external model | segment_and_mark | Semantic segmentation using SemanticSAM [10] | image, granularity, mark type |
| external model | estimate_depth | Depth estimation using DepthAnything [22] | image |
| LVLM | ask_to_LVLM | Sends a query to the LVLM and returns its response | images, text prompt |

4 Experiments

4.1 Experimental settings

Datasets. We evaluate our framework using the BlindTest [14] and BLINK [4] datasets, which are specifically designed to assess the perception failures of LVLMs. Following the experimental protocol of SketchPad [6], we select a set of tasks requiring fine-grained visual grounding and complex reasoning:

– BlindTest: Counting Line Intersections, The Circled Letter, Following Single-colored Paths (Subway Map), Counting Overlapping Shapes.
– BLINK: Jigsaw, Relative Depth, Spatial Reasoning, Semantic Correspondence, Visual Correspondence.

For each task, we randomly sampled 30 images to construct a development set for exploration, reserving the remaining images for the test set.
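The per-task split can be sketched as drawing 30 random samples per task for the development set and keeping the rest for testing. This is a minimal sketch, not the authors' script: the function name, the fixed seed, and the sample IDs and task sizes in the usage example are hypothetical; the paper specifies only the development-set size of 30.

```python
import random
from typing import Dict, List, Sequence, Tuple


def split_dev_test(samples_by_task: Dict[str, Sequence[str]], dev_size: int = 30,
                   seed: int = 0) -> Dict[str, Tuple[List[str], List[str]]]:
    """Randomly draw `dev_size` samples per task for exploration; the rest form the test set."""
    rng = random.Random(seed)  # fixed seed (an assumption) so the split is reproducible
    splits = {}
    for task, samples in samples_by_task.items():
        dev_indices = set(rng.sample(range(len(samples)), min(dev_size, len(samples))))
        dev = [s for i, s in enumerate(samples) if i in dev_indices]
        test = [s for i, s in enumerate(samples) if i not in dev_indices]
        splits[task] = (dev, test)
    return splits


# Hypothetical sample IDs; the real task sizes come from the benchmarks.
tasks = {"Jigsaw": [f"jigsaw_{i}" for i in range(150)],
         "Depth": [f"depth_{i}" for i in range(124)]}
splits = split_dev_test(tasks)
```

Sampling indices rather than items keeps the split well defined even if two samples happen to share the same ID.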
Using a small development set is intended to demonstrate that SEVEX's semantic exploration is significantly more cost-efficient than alternative optimization methods such as fine-tuning. We describe the details of each task and the full list of development set samples in Appendix B.

Baseline methods. We compare SEVEX against the following visual prompting baselines:

– Naive: Standard LVLM inference, where the LVLM receives the raw image and the task query without additional visual prompting.
– SketchPad [6]: A zero-shot test-time reasoning framework that dynamically generates image-annotation code during inference. To ensure a fair comparison, we add drawing tools to the original tool list to match the capabilities of SEVEX. SketchPad includes few-shot examples of tool usage and Blink tasks.
– SketchPad + APE [25]: An enhanced version of SketchPad in which the text prompt is optimized using Automatic Prompt Engineering (APE). We applied iterative APE with resampling, using the original SketchPad prompt as the initial state. This baseline uses the same development set as SEVEX for a controlled comparison.

Table 2: Comparison of visual prompting methods. Bold and underlined texts denote the best and second-best methods for each setting, respectively. Inf. cost indicates the total number of tokens per sample.

| Task | Naive acc. | Naive inf. cost | SketchPad acc. | SketchPad inf. cost | SEVEX (ours) acc. | SEVEX (ours) inf. cost |
|---|---|---|---|---|---|---|
| LineIntersections | 73.0 | 981 | 33.3 | 16730 | 90.5 | 991 |
| CircledLetter | 78.8 | 1323 | 82.0 | 13902 | 83.7 | 1337 |
| SubwayMap | 60.0 | 1333 | 58.1 | 16482 | 62.8 | 1585 |
| OverlappingShapes | 50.4 | 2429 | 16.3 | 24833 | 52.4 | 2612 |
| Average-BlindTest | 65.6 | 1517 | 47.4 | 13987 | 72.4 | 1631 |
| Jigsaw | 75.8 | 869 | 70.8 | 14896 | 95.8 | 682 |
| Depth | 83.0 | 1349 | 85.1 | 14158 | 85.1 | 1457 |
| Spatial | 81.4 | 1525 | 85.8 | 15818 | 86.7 | 1555 |
| SemanticCorr. | 55.0 | 712 | 58.7 | 9477 | 63.3 | 1358 |
| VisualCorr. | 87.3 | 641 | 90.9 | 14291 | 89.4 | 801 |
| Average-Blink | 76.5 | 1019 | 78.3 | 13728 | 84.1 | 1171 |
| Average | 71.6 | 1240 | 64.6 | 15621 | 78.9 | 1375 |

Table 3: Comparison to the visual prompt exploration baseline. Expl. cost indicates the total number of tokens per iteration in the exploration stage. Inf. cost indicates the total number of tokens per sample in the inference stage.

| Task | SketchPad + APE dev acc. | SketchPad + APE test acc. | SketchPad + APE expl. cost | SketchPad + APE inf. cost | SEVEX (ours) dev acc. | SEVEX (ours) test acc. | SEVEX (ours) expl. cost | SEVEX (ours) inf. cost |
|---|---|---|---|---|---|---|---|---|
| LineIntersections | 30.0 | 16.4 | 797k | 11005 | 93.3 | 90.5 | 39k | 991 |
| CircledLetter | 93.3 | 73.7 | 980k | 17647 | 85.6 | 83.7 | 79k | 1337 |
| SubwayMap | 50.5 | 42.5 | 759k | 21471 | 80.0 | 62.8 | 165k | 1585 |
| OverlappingShapes | 33.3 | 22.9 | 818k | 20672 | 56.7 | 52.4 | 86k | 2612 |
| Average-BlindTest | 51.7 | 38.9 | 838k | 17699 | 78.9 | 72.4 | 92k | 1631 |
| Jigsaw | 76.7 | 66.4 | 761k | 18176 | 96.7 | 95.8 | 75k | 682 |
| Depth | 76.7 | 85.9 | 794k | 18867 | 85.6 | 85.1 | 87k | 1457 |
| Spatial | 90.0 | 76.2 | 589k | 19074 | 91.1 | 86.7 | 83k | 1555 |
| SemanticCorr. | 76.7 | 52.6 | 385k | 12354 | 72.2 | 63.3 | 74k | 1358 |
| VisualCorr. | 83.3 | 83.1 | 761k | 11937 | 88.9 | 89.4 | 78k | 801 |
| Average-Blink | 80.7 | 72.8 | 658k | 16082 | 86.9 | 84.1 | 80k | 1171 |
| Average | 67.8 | 57.7 | 738k | 16800 | 83.3 | 78.9 | 85k | 1375 |

Implementation details. We utilize a comprehensive set of visual tools, listed in Table 1. We use Gemini-2.5-flash as our primary backbone LVLM. We disabled the 'thinking' feature during benchmark inference, while enabling it for SEVEX's agent steps (e.g., idea generation and implementation) to leverage the agent's reasoning capabilities. According to the Gemini documentation, disabling thinking yields better results in image perception tasks such as image segmentation. Semantic exploration was conducted for 50 iterations with the following hyperparameters: $\lambda_{\text{expl}} = 0.5$, $\lambda_{\text{novel}} = 0.15$, $\lambda_{\text{sat}} = 0.5$, and $k = 3$.

[Figure 3: visual prompt code with original and updated text prompts. (a) CircledLetter: detect_objects(image, "circle"), crop to the first box, two draw_line calls at 75% and 45% of the height, then ask_to_vlm; the prompt "Read the letter within the circle in the image." gains "Two typographic reference lines have been drawn on the image to help you identify the letter's case (uppercase vs. lowercase)." (b) Jigsaw: overlay_images of image2 and image3 onto image1, estimate_depth on each overlay, then ask_to_vlm; the question "Which is the missing part of image 1? A) image 2 B) image 3" becomes "Which depth image looks more natural? An incorrect depth map will have sharp, unnatural discontinuities. A) image a B) image b".]

(a) An example visual prompt in CircledLetter. It crops the target region and draws lines to distinguish uppercase from lowercase letters.
(b) An example visual prompt in Jigsaw. It overlays images and uses a depth estimation model to judge the naturalness of the images, although this is not the intended usage of the tool.

Fig. 3: Results of SEVEX. The code and text prompts are simplified for visualization.

Evaluation metrics. The primary metrics are task accuracy and inference cost, where the cost is defined as the sum of input, output, and reasoning tokens.
Unless otherwise specified, all reported accuracies are based on the test set.

4.2 Comparison to visual prompting methods

As shown in Table 2 and Table 3, SEVEX outperforms SketchPad and SketchPad + APE across all critical dimensions: task accuracy, inference efficiency, exploration efficiency, and exploration stability.

Task performance. SEVEX outperforms existing baselines in seven out of nine tasks of Blink and BlindTest. SEVEX achieves a superior average accuracy of 78.9%, significantly surpassing the Naive (71.6%) and SketchPad (64.6%) approaches. This performance gap is most pronounced on the BlindTest benchmark, where SEVEX attains an average accuracy of 72.4% compared to SketchPad's 47.4%. The failure of SketchPad on BlindTest can be attributed to its reliance on zero-shot tool use without an empirical understanding of the LVLM's specific perceptual behaviors. When an LVLM fails at a seemingly trivial task such as counting line intersections, zero-shot generation methods typically fail to detect or recover from such errors, whereas SEVEX identifies effective strategies through systematic experimentation.

Inference and exploration efficiency. SEVEX achieves high inference efficiency by amortizing the cost of prompt generation over the exploration stage. Consequently, its inference cost is only 10.9% higher than that of the Naive method, while providing a 91.2% reduction in token consumption compared to SketchPad. Furthermore, SEVEX exhibits superior exploration efficiency; its average exploration cost is 11.5% of the cost required by SketchPad + APE. SEVEX incurs an upfront exploration cost equivalent to approximately 3,442 naive inferences or 273 SketchPad inferences. When SEVEX's own per-sample inference cost is also counted, SEVEX becomes more cost-effective than SketchPad once a task requires more than roughly 300 inferences, while also providing the highest accuracy.
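The cost comparison can be checked with a few lines of arithmetic. The sketch below uses the rounded averages from Tables 2 and 3 and the 50 exploration iterations from Sec. 4.1, so its equivalents land slightly below the 3,442 and 273 quoted above (which presumably come from unrounded costs). It also computes the break-even point when SEVEX's own per-sample inference cost is counted, which comes out near 300 inferences.

```python
import math

# Average per-sample inference cost in tokens (Table 2, "Average" row).
NAIVE_COST = 1240
SKETCHPAD_COST = 15621
SEVEX_COST = 1375
# Average exploration cost per iteration (Table 3, rounded to 85k) times 50 iterations.
EXPLORATION_TOTAL = 85_000 * 50  # 4,250,000 tokens

# Exploration cost expressed as a number of inferences of each baseline.
naive_equivalent = EXPLORATION_TOTAL / NAIVE_COST          # about 3,427
sketchpad_equivalent = EXPLORATION_TOTAL / SKETCHPAD_COST  # about 272

# Smallest n with EXPLORATION_TOTAL + n * SEVEX_COST <= n * SKETCHPAD_COST.
break_even = math.ceil(EXPLORATION_TOTAL / (SKETCHPAD_COST - SEVEX_COST))
```

With these rounded inputs the break-even is 299 inferences; the conclusion that exploration pays off after a few hundred queries does not hinge on the rounding.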
We provide a more detailed comparison to other exploration strategies in Section 4.4.

Stability of semantic exploration. Despite using a small development set, SEVEX exhibits a narrower generalization gap than SketchPad + APE. The large generalization gap of SketchPad + APE is expected, as APE tends to make subtle changes such as paraphrasing, whose gains on a small development set need not carry over to the test set. In contrast, SEVEX maintains higher stability by anchoring its search in high-level ideas first, rather than focusing on subtle implementation details.

4.3 Qualitative analysis of visual prompts and exploration tree

We provide qualitative examples of visual prompts discovered by SEVEX in Figure 3. In the CircledLetter task, the agent uses object detection to localize the target region and renders precise typographic reference lines to help the LVLM distinguish character cases, demonstrating the framework's ability to optimize precise parameters for visual tools. In the Jigsaw task, the agent discovers a counter-intuitive strategy: it overlays the missing image components onto the query image and employs a depth estimation model to detect unnatural discontinuities. By repurposing tools in ways not originally intended, the discovery process shows that it is not limited by human bias.

Fig. 4: An example of ideas in a search tree. It shows which kinds of ideas are explored for the Jigsaw task. A small portion of nodes is visualized for simplicity.

Figure 4 visualizes a representative exploration tree with its ideas. SEVEX first explores diverse conceptual directions at the root level before refining these concepts into concrete implementations through a hierarchical structure. This approach enables the framework both to explore a broad strategy space and to refine implementation details based on empirical feedback.

Fig. 5: Accuracy improvements over iterations on (a) Jigsaw and (b) Circle. Each panel plots accuracy improvement against iteration (0 to 50) for SEVEX, SEVEX w/o abstract space, SEVEX w/o tree search, SEVEX w/o semantic backprop, and naive. We report development-set accuracies averaged over five independent runs.

4.4 Ablation study over exploration strategies

Figure 5 analyzes the contribution of individual exploration components. We compare SEVEX against (1) Naive, (2) SEVEX w/o Tree Structure, (3) SEVEX w/o Semantic Backpropagation, and (4) SEVEX w/o Abstract Space. The results indicate that each component contributes to the efficiency of SEVEX. In particular, SEVEX w/o tree structure can achieve good performance when the first direction is promising, but when the task is complicated and requires trials in diverse directions, it is less likely to find a good solution. Because of the resource consumption of each experiment, we only provide ablation studies for the Jigsaw and Circle tasks.

Table 4: Non-transferability of visual prompts. We compare four visual prompts with three LVLMs. Accuracies (%) are calculated on the LineIntersection task.

Visual prompt                       | Gemini-2.5-flash | Claude-Sonnet-4 | GPT-4o
naive                               | 73.0             | 66.3            | 8.2
visual prompt 1 (found with Gemini) | 90.5             | 82.9            | 59.1
visual prompt 2 (found with Claude) | 87.9             | 87.8            | 31.0
visual prompt 3 (found with GPT)    | 62.3             | 35.0            | 57.1

Fig. 6: Simplified visual prompts used in Table 4. Naive: count without any modification. Visual prompt 1: provide few-shot examples of intersections. Visual prompt 2: divide the image into three overlapping pieces and count them independently (result = count(img1) - count(img2) + count(img3)). Visual prompt 3: find important regions and crop. Each visual prompt is the result of SEVEX with a different LVLM. Visual prompts have different effects on different LVLMs, which means we cannot expect visual prompts to transfer.

4.5 Non-transferability of visual prompts

Table 4 and Figure 6 demonstrate that the discovered visual prompts do not transfer reliably across LVLM backbones. For instance, visual prompt 2, which divides an image into three overlapping pieces, yields greater improvements than visual prompt 3 for Claude, whereas this tendency is reversed for GPT. These results underscore the necessity of discovering model-specific visual prompts tailored to the unique perceptual biases of each backbone. Given the laborious nature of manual prompt engineering, this highlights the importance of our automated discovery framework.

5 Conclusion

In this work, we introduced SEVEX, an agent-driven framework designed to automatically discover task-wise visual prompts that mitigate the intrinsic perception failures of LVLMs.
Motivated by the observation that LVLMs often struggle with fundamental visual reasoning despite their advanced linguistic capabilities, our approach shifts from manual trial-and-error or per-sample code generation to a structured search within a high-level, abstract idea space. By decoupling semantic intent from programmatic implementation, SEVEX effectively navigates the vast search space of visual modifications while avoiding long-context distraction. Our experiments on the BlindTest and BLINK benchmarks demonstrate that SEVEX significantly outperforms existing baselines, including zero-shot tool-use frameworks and automated text prompt engineering. A critical takeaway from our analysis is the non-transferability of visual prompts: visual cues discovered for one model can fail or even degrade performance when applied to another. This finding reinforces the necessity of an automated, model-specific discovery process that accounts for the unique perceptual biases of different backbones. Finally, we discuss broader implications and future research directions in Appendix C.

Appendix

A Prompts for each step

We provide the prompts used for SEVEX.

Implementation prompt

PROMPT = """You are a machine learning engineer. Your task is to analyze the problem and previous attempts, then propose a new improvement idea.
Another agent will update the code and prompt to implement the idea later.

Problem Description:
{problem_description}

Previous ideas:
{parent_idea}
{sibling_ideas}

Implications from Previous Experiments:
{parent_implications}

Based on the problem description and the implications from the parent experiment, propose a new idea or strategy to solve the given problem. Consider:
- What worked well based on the parent experiment implications?
- What didn't work well according to the implications?
- What new approaches could be tried based on these implications?
- How can we leverage the available functions more effectively?

IMPORTANT: Your idea must be clearly different from the sibling ideas listed above. Generate a promising idea that builds on the parent experiment's implications while exploring a distinct direction from what siblings have already tried.

Provide a clear, concise idea description (2-4 sentences) that explains the strategy you want to try next. Make sure the idea is simple and concise to make gradual improvement.

The idea should include:
- Plan about which changes to make
- What are the expected results and changes in the result image
- How to evaluate if the idea is successful or not

Which should be excluded in the response:
- Previous failed trials
- Verbose explanation
- Explicit code snippet

Available Functions (signatures + summaries):
{functions_reference}
"""

Sample-wise analysis prompt

PROMPT = """You are analyzing whether an idea was implemented and used correctly for this sample.

Context:
- Idea (intent of the pseudo-code): {idea}
- Prediction (model output for this sample): {prediction}
- Ground truth: {ground_truth}
- Error message (if any): {error}

Image(s) attached in order:
1. Input image for this sample (required)
2. Last image sent to the VLM for answering (optional; if only one image is attached, this was not provided)

Tasks:
(a) Assess whether the idea appears to be implemented and used correctly for this sample given the image(s) and the prediction vs ground truth.
(b) If there was an error or the prediction is wrong, suggest likely causes (e.g., wrong region used, VLM misinterpretation, code bug, missing preprocessing).

Respond in the following format (plain text, no markdown bullets):
IMPLICATIONS: <1-2 sentences on whether the idea was applied correctly and how it influenced the result>
CAUSES:
"""

Insights generation prompt

PROMPT = """You are an expert in analyzing experimental results. Your task is to summarize the execution results and extract key implications.

Execution Results:
- Success: {success}
- Reward: {reward_str}
- Error: {error}

Idea for This Iteration:
{idea}

Per-Image Analysis:
{image_comparisons}

Provide two outputs:
1. A concise summary (2-3 sentences) of what happened during execution and the results
   - Reference how the stated idea influenced the outcome (success/failure, partial progress)
   - Reference the per-image comparisons when noting visual problems or successes
2. Key implications (2-4 bullet points) about what worked, what didn't, and what could be improved
   - Highlight which images had the most severe differences

Format your response as:
SUMMARY: [your summary here]
IMPLICATIONS:
- [implication 1]
- [implication 2]
- [implication 3]
"""

Insights back propagation prompt

PROMPT = """You are an expert in synthesizing experimental insights. Your task is to revise and consolidate implications by combining the current node's implications with insights from its children's experiments.

Current Node's Implications:
{current_implication}

Children's Implications:
{children_implications}

Your task:
1. Synthesize the key insights from both the current node and its children
2. Identify patterns, common themes, and important learnings
3. Generate a revised, consolidated list of implications
4. Focus on actionable insights that can guide future exploration

Requirements:
- Output must be a bulletized list (using "- " prefix)
- Maximum 5 bullet points
- Each bullet should be concise but informative
- Prioritize the most important and actionable insights
- Remove redundancy and merge similar points

Format your response as a bulletized list:
- [revised implication 1]
- [revised implication 2]
- [revised implication 3]
- [revised implication 4]
- [revised implication 5]
"""

Idea generation prompt

PROMPT = """You are a machine learning engineer. Your task is to analyze the problem and previous attempts, then propose a new improvement idea.
Another agent will update the code and prompt to implement the idea later.

Problem Description:
{problem_description}

Previous ideas:
{parent_idea}
{sibling_ideas}

Implications from Previous Experiments:
{parent_implications}

Based on the problem description and the implications from the parent experiment, propose a new idea or strategy to solve the given problem. Consider:
- What worked well based on the parent experiment implications?
- What didn't work well according to the implications?
- What new approaches could be tried based on these implications?
- How can we leverage the available functions more effectively?

IMPORTANT: Your idea must be clearly different from the sibling ideas listed above. Generate a promising idea that builds on the parent experiment's implications while exploring a distinct direction from what siblings have already tried.

Provide a clear, concise idea description (2-4 sentences) that explains the strategy you want to try next. Make sure the idea is simple and concise to make gradual improvement.

The idea should include:
- Plan about which changes to make
- What are the expected results and changes in the result image
- How to evaluate if the idea is successful or not

Which should be excluded in the response:
- Previous failed trials
- Verbose explanation
- Explicit code snippet

Available Functions (signatures + summaries):
{functions_reference}
"""

Idea self-evaluation prompt

PROMPT = """You are a computer science engineer. Your task is to evaluate an improvement idea on three dimensions: feasibility, expectation, and novelty.

Idea to Evaluate:
{idea}

Sibling ideas:
{sibling_ideas}

Available Functions (signatures + summaries):
{functions_reference}

Evaluate the idea on the following dimensions:
1. **Feasibility** (1-5): Can this idea be implemented using only the available functions listed above?
   - 5: Fully implementable with available functions, no additional capabilities needed
   - 4: Mostly implementable, may require creative use of available functions
   - 3: Partially implementable, some aspects may be challenging with available functions
   - 2: Difficult to implement, requires additional functions and imports
   - 1: Not implementable with available functions
2. **Expectation** (1-5): How confident are you that this idea will make significant improvement to the performance?
   - 5: Very high confidence, idea directly addresses key performance bottlenecks
   - 4: High confidence, idea addresses important aspects of the problem
   - 3: Moderate confidence, idea may provide some improvement
   - 2: Low confidence, idea is unlikely to provide significant improvement
   - 1: Very low confidence, idea is unlikely to help
3. **Novelty** (1-5): How different is this idea from the sibling ideas listed above?
   - 5: Completely different approach, explores new direction
   - 4: Significantly different, with some unique aspects
   - 3: Moderately different, some overlap with siblings
   - 2: Similar to sibling ideas, minor variations
   - 1: Very similar to sibling ideas, minimal differentiation

Return ONLY a JSON object with scores (1-5) for each dimension, without any explanations:
{{
  "feasibility": <1-5>,
  "expectation": <1-5>,
  "novelty": <1-5>
}}
"""

B Details of datasets

B.1 BlindTest: Vision Language Models are Blind

The BlindTest benchmark focuses on evaluating the fundamental geometric perception of Vision-Language Models (VLMs). It uses simple 2D vector graphics so that models cannot rely on language priors or contextual cues, forcing them to perform pure visual reasoning.

– Counting Line Intersections: This task requires the model to determine the exact number of intersection points (typically 0, 1, or 2) between two piecewise-linear function segments. It tests the model's precision in pixel-level coordinate alignment.
– The Circled Letter: Given a string of characters where a single letter is enclosed in a red circle, the model is asked to identify that character. This evaluates the model's ability to associate a spatial container (the circle) with a specific semantic token (the letter).
– Following Single-colored Paths (Subway Map): Inspired by subway maps, this task involves tracing a specific colored path from a starting point to an endpoint within a complex graph. It assesses the connectivity and path-tracing capabilities of LVLMs.
– Counting Overlapping Shapes: The model is presented with two or more circles and must determine their topological relationship, such as whether they overlap, touch, or maintain a subtle gap. This tests the understanding of spatial boundaries and containment.
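To make the Counting Line Intersections ground truth concrete, here is a minimal sketch that finds where two piecewise-linear functions cross. It assumes (our assumption, not necessarily how BlindTest generates its plots) that both functions share the same x breakpoints, so their difference is linear on every segment and has at most one root there. The helper `three_piece_count` is a hypothetical illustration of the arithmetic behind visual prompt 2 in Figure 6 (count(img1) - count(img2) + count(img3)): counting the left and right pieces separately and subtracting the shared overlap is inclusion-exclusion, so the total is exact.

```python
from typing import List, Sequence


def crossing_xs(xs: Sequence[float], ys_a: Sequence[float], ys_b: Sequence[float]) -> List[float]:
    """x-coordinates where two piecewise-linear functions with shared breakpoints cross."""
    if not (len(xs) == len(ys_a) == len(ys_b)) or len(xs) < 2:
        raise ValueError("need at least two shared breakpoints")
    diffs = [a - b for a, b in zip(ys_a, ys_b)]
    # Lines that meet exactly at a shared breakpoint.
    points = [float(x) for x, d in zip(xs, diffs) if d == 0]
    for i in range(len(xs) - 1):
        d0, d1 = diffs[i], diffs[i + 1]
        if d0 == 0 and d1 == 0:
            raise ValueError("overlapping segments: infinitely many intersections")
        if d0 * d1 < 0:
            # The difference is linear on this segment, so it has exactly one root.
            t = d0 / (d0 - d1)
            points.append(xs[i] + t * (xs[i + 1] - xs[i]))
    return sorted(points)


def count_in(points: Sequence[float], lo: float, hi: float) -> int:
    """Number of points inside the closed interval [lo, hi]."""
    return sum(lo <= p <= hi for p in points)


def three_piece_count(points: Sequence[float], width: float, m1: float, m2: float) -> int:
    """Hypothetical split: left [0, m2], overlap [m1, m2], right [m1, width]."""
    if not 0 <= m1 <= m2 <= width:
        raise ValueError("need 0 <= m1 <= m2 <= width")
    left = count_in(points, 0.0, m2)
    overlap = count_in(points, m1, m2)
    right = count_in(points, m1, width)
    return left - overlap + right


pts = crossing_xs([0, 1, 2], [0, 2, 0], [1, 1, 1])
print(pts, three_piece_count(pts, 2.0, 0.4, 1.6))  # [0.5, 1.5] 2
```

Because the overlap strip is counted twice (once in each side piece) and subtracted once, a crossing that falls inside the strip is still counted exactly once.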
B.2 BLINK: Multimodal Large Language Models Can See but Not Perceive

The BLINK benchmark consists of 14 diverse visual tasks that are trivial for humans (solvable "in a blink") but challenging for current VLMs. These tasks are designed to be "un-captionable," meaning they cannot be solved using text-only metadata. We use the five tasks that are used in SketchPad.

– Jigsaw: This task involves reassembling image tiles into their original structure. It evaluates the model's grasp of global structural consistency and local texture matching.
– Relative Depth: Given two points (A and B) in a single monocular image, the model is asked to judge which point is farther from the camera. This measures the model's ability to reconstruct 3D spatial cues from 2D projections.
– Spatial Relation: This task tests the understanding of 3D spatial arrangements (e.g., "left-of", "behind", "on-top-of") between multiple objects in a scene, going beyond simple 2D bounding box detection.
– Semantic Correspondence: The model is asked to identify semantically similar yet visually distinct parts across different instances of a category (e.g., the "beak" of two different bird species). This requires high-level semantic abstraction of visual features.
– Visual Correspondence: Unlike semantic matching, this task requires finding the exact same physical point across two images of the same scene that differ in viewpoint, lighting conditions, or capture time. It assesses the model's robustness to geometric transformations and viewpoint changes.
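As an illustration of how a depth-estimation tool could feed the Relative Depth task, the sketch below compares a depth map at points A and B. It is not the paper's visual prompt: the function name, the `(row, col)` point convention, and the median over a small window (for robustness to noisy pixels) are our assumptions. Some monocular estimators output inverse depth (disparity), where larger values mean closer, so the comparison direction is exposed as a parameter.

```python
import numpy as np


def farther_point(depth: np.ndarray, a: tuple, b: tuple,
                  larger_is_farther: bool = True, radius: int = 2) -> str:
    """Return "A" or "B", whichever (row, col) point is farther from the camera.

    Hypothetical helper: `depth` is an H x W map from some depth estimator.
    Ties resolve to "B".
    """
    def local_depth(point: tuple) -> float:
        r, c = point
        # Median over a (2*radius+1)^2 window, clipped at the image border.
        patch = depth[max(r - radius, 0): r + radius + 1,
                      max(c - radius, 0): c + radius + 1]
        return float(np.median(patch))

    da, db = local_depth(a), local_depth(b)
    if not larger_is_farther:
        # Inverse-depth maps: larger values are closer, so flip the comparison.
        da, db = -da, -db
    return "A" if da > db else "B"


# Toy depth map whose values grow from left to right.
toy_depth = np.tile(np.arange(10, dtype=float), (10, 1))
print(farther_point(toy_depth, (5, 1), (5, 8)))  # B
```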
B.3 Indices for the development set

Indices for the development set

{
  "intersection": [1153,1290,1354,1623,1660,1740,1761,1989,2016,2274,2300,2459,2694,2731,2751,2823,2958,2989,2990,3220,3373,3608,3620,3643,3816,3901,4023,4146,4300,4517],
  "circledletter": [7415,7431,7438,7441,7453,7470,7473,7474,7495,7517,7530,7533,7559,7602,7698,7700,7704,7734,7788,7789,7807,7808,7847,7851,7854,7898,7902,7909,7956,7992],
  "subway": [21,73,75,91,98,128,168,194,212,233,241,253,294,307,326,342,349,372,383,408,557,574,582,583,622,629,650,699,702,705],
  "overlappingshapes": [6442,6466,6501,6516,6518,6530,6533,6546,6552,6554,6587,6594,6602,6619,6691,6705,6744,6758,6795,6799,6801,6808,6829,6841,6847,6849,6856,6861,6882,6895],
  "jigsaw": [6,13,15,20,26,29,32,39,47,53,55,68,70,82,83,90,92,93,100,101,108,111,122,126,127,128,129,131,139,145],
  "depth": [3,9,13,15,17,22,23,24,26,30,33,38,44,48,49,53,58,60,66,67,72,82,87,92,93,107,110,115,118,122],
  "spatial": [1,5,7,10,11,23,25,29,32,40,42,43,46,51,63,68,72,82,84,86,93,99,103,104,109,110,113,124,133,142],
  "semcorr": [11,17,22,27,31,34,35,40,42,44,48,56,57,66,70,79,80,84,88,90,100,105,113,125,128,131,136,137,138,139],
  "viscorr": [6,20,22,32,35,42,45,46,51,55,59,60,68,72,74,87,88,91,102,103,109,111,114,121,136,142,147,148,155,158]
}

C Discussions

C.1 Will the visual perception failures of LVLMs disappear in future models?

The inherent opacity of large-scale models makes it fundamentally difficult to fully understand or predict their behavior. While future models may improve, the image perception issues we have addressed represent a broader challenge in model reliability. We have demonstrated that the engineering of visual prompts can be effectively automated through an agentic system that leverages high-level semantic exploration.
This is evidenced by SEVEX's ability to use visual tools in creative ways that extend beyond their intended purposes. For example, in the Jigsaw task shown in Figure 3, the agent used a depth estimation model to judge the naturalness of an image by identifying sharp, unnatural discontinuities, a counter-intuitive solution that a human-designed heuristic might overlook. Such emergent tool-use behavior highlights the potential of agent-driven exploration for solving increasingly complex reasoning tasks.

C.2 How can human-AI collaboration further enhance the results?

Integrating human expertise into the semantic backpropagation loop is a promising direction for future research. By allowing researchers to provide high-level directional guidance, the exploration tree can be refined more efficiently, with ineffective branches pruned based on domain knowledge. This synergy between automated empirical discovery and human intuition could establish a new paradigm for enhancing both the transparency and reliability of LVLMs.

C.3 Can SEVEX surpass human engineers?

The Jigsaw visual prompt shown in Figure 3 is an example of a counter-intuitive but effective visual prompt. It shows the potential of finding solutions that human engineers would not easily imagine. Beyond reducing the human burden of method design, SEVEX could also provide solutions beyond human expectations.
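The Jigsaw cue described above ("an incorrect depth map will have sharp, unnatural discontinuities") can be made concrete with a simple numerical proxy. This is our own hypothetical stand-in, not part of SEVEX: in the discovered visual prompt, the two depth maps are passed to the LVLM, which makes the judgment visually. The sketch scores each depth map by the fraction of adjacent-pixel pairs whose depth jumps by more than a threshold, then prefers the map with fewer jumps; the threshold value is an arbitrary assumption that would depend on the depth estimator's output scale.

```python
import numpy as np


def discontinuity_score(depth: np.ndarray, threshold: float = 0.25) -> float:
    """Fraction of horizontally or vertically adjacent pixel pairs whose
    depth difference exceeds `threshold` (hypothetical naturalness proxy)."""
    dy = np.abs(np.diff(depth, axis=0))  # vertical neighbour differences
    dx = np.abs(np.diff(depth, axis=1))  # horizontal neighbour differences
    jumps = np.count_nonzero(dy > threshold) + np.count_nonzero(dx > threshold)
    return jumps / (dy.size + dx.size)


def more_natural(depth_a: np.ndarray, depth_b: np.ndarray, threshold: float = 0.25) -> str:
    """Return "a" or "b", whichever depth map has fewer sharp jumps (ties -> "a")."""
    score_a = discontinuity_score(depth_a, threshold)
    score_b = discontinuity_score(depth_b, threshold)
    return "a" if score_a <= score_b else "b"


# A smooth ramp versus the same ramp with an artificial seam, mimicking a
# wrongly placed jigsaw piece.
smooth = np.tile(np.linspace(0.0, 1.0, 11), (11, 1))
seamed = smooth.copy()
seamed[:, 6:] += 1.0
print(more_natural(smooth, seamed))  # a
```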
