RASLF: Representation-Aware State Space Model for Light Field Super-Resolution

Current SSM-based light field super-resolution (LFSR) methods often fail to fully leverage the complementarity among various LF representations, leading to the loss of fine textures and geometric misalignments across views. To address these issues, w…

Authors: Zeqiang Wei, Kai Jin, Kuan Song

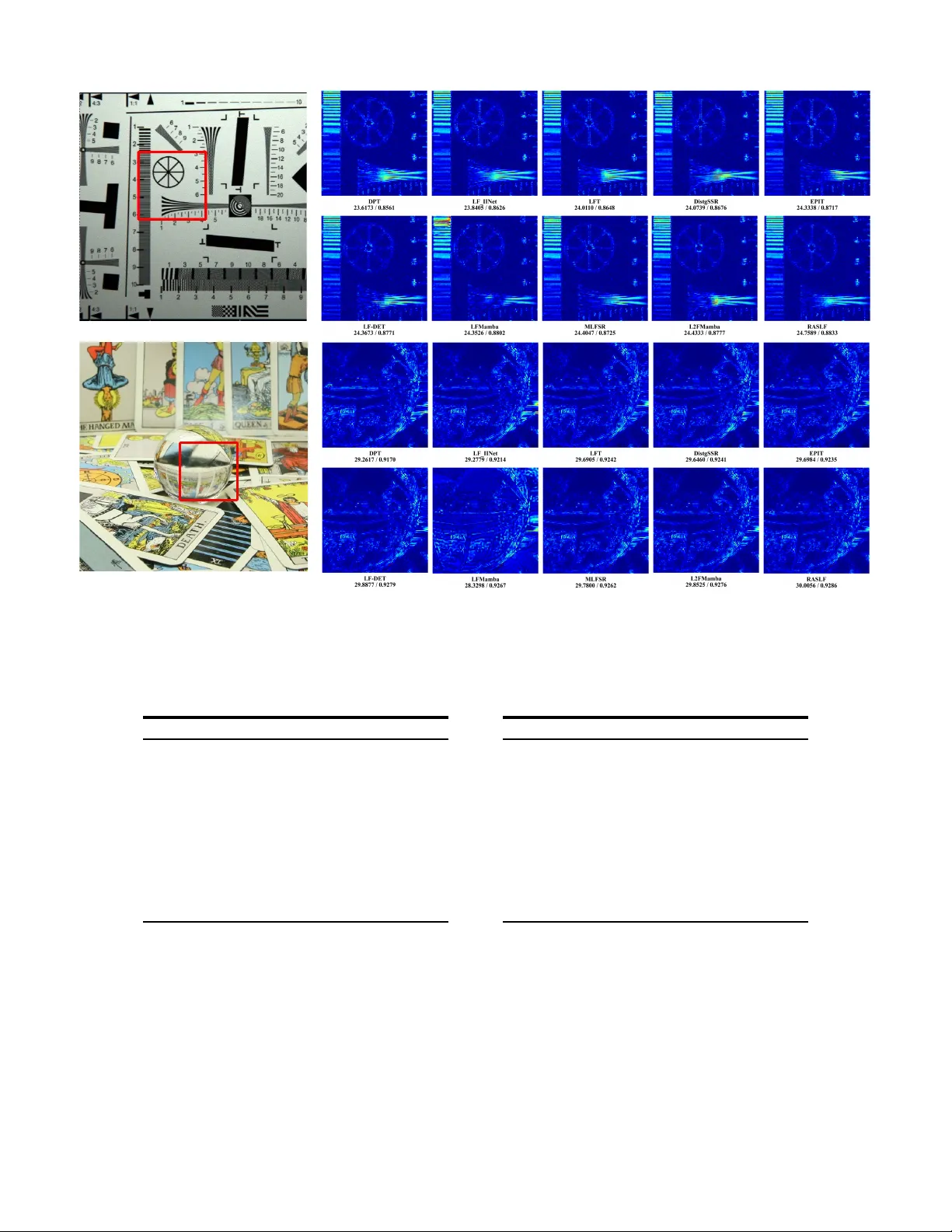

1 RASLF: Representation-A ware State Space Model for Light Field Super -Resolution Zeqiang W ei, Kai Jin, Kuan Song, Xiuzhuang Zhou, W enlong Chen, Min Xu * Abstract —Current SSM-based light field super-r esolution (LFSR) methods often fail to fully leverage the complementarity among various LF repr esentations, leading to the loss of fine textures and geometric misalignments across views. T o addr ess these issues, we propose RASLF , a representation-awar e state- space framework that explicitly models structural corr elations across multiple LF r epresentations. Specifically , a Progressiv e Geometric Refinement (PGR) block is created that uses a panoramic epipolar r epresentation to explicitly encode multi-view parallax differences, thereby enabling integration across different LF repr esentations. Furthermore, we intr oduce a Representation- A ware Asymmetric Scanning (RAAS) mechanism that dynami- cally adjusts scanning paths based on the physical properties of different representation spaces, optimizing the balance between performance and efficiency through path pruning . Additionally , a Dual-Anchor Aggregation (D AA) module improv es hierarchical feature flow , reducing redundant deep-layer features and priori- tizing important reconstruction inf ormation. Experiments on var - ious public benchmarks show that RASLF achie ves the highest reconstruction accuracy while remaining highly computationally efficient. Index T erms —light field image processing, image super - resolution I . I N T RO D U C T I O N L IGHT field (LF) imaging captures both the spatial in- tensity and angular information of light rays, offering rich geometric priors that facilitate various do wnstream ap- plications, including depth estimation [1], [2] and refocusing [3], [4]. Howe ver , the physical constraints of imaging sensors create an inherent trade-off between spatial and angular reso- lutions, usually resulting in sub-aperture images (SAIs) with limited spatial detail. Therefore, light field super-resolution (LFSR) focuses on reconstructing high-quality details from low-resolution data, with the main challenge being to recov er high-frequency textures while maintaining strict geometric consistency across views within the inherent spatial-angular structure. Recently , SSMs [5] have been introduced into LFSR to lev erage linear computational complexity and model long- range dependencies, demonstrating promising effecti veness. Unlike CNNs, limited by local recepti ve fields, and T ransform- ers, b urdened by quadratic complexity , SSMs are theoretically Corresponding author: Min Xu. Zeqiang W ei, Min Xu, and W enlong Chen are with the Capital Normal Univ ersity Information Engineering College, Beijing 100048, China (email: weizeqiang@cnu.edu.cn, xumin@cnu.edu.cn, chenwenlong@cnu.edu.cn). Kai Jin is with the Bigo T echnology Pte. Ltd., Beijing 100020, China (email: jinkai@bigo.sg). Kuan Song is with the Explorer Global (Suzhou) Arti- ficial Intelligence T echnology Co., Ltd., Suzhou, Jiangsu 215123, China (email: songkuan@explorer .global). Xiuzhuang Zhou are with Beijing Uni- versity of Posts and T elecommunications, Beijing 100088, China (email: xiuzhuang.zhou@bupt.edu.cn). better at capturing long-range spatial-angular correlations in high-dimensional LF data. Despite these advantages, current SSM-based LFSR meth- ods [6]–[8] still find it challenging to ef fecti vely capitalize on the diverse representations of LF data. Specifically , many approaches limit their focus to a single LF domain, thereby ov erlooking the structural complementarity offered by other representations. Even for methods that aim to incorporate mul- tiple representations, the lack of a rob ust modeling framework often leads to heuristic aggregation that doesn’t explicitly cap- ture complex cross-view dependencies and long-range spatial- angular correlations. Motiv ated by recent findings [9]–[11] that multi-directional scanning in image-based SSMs often induces feature redun- dancy , we observe that existing SSM-based LFSR methods typically adopt a representation-agnostic configuration that uses a uniform set of scanning paths for all LF represen- tations. Such designs ignore the inherent structural differ - ences among LF representations. For instance, spatial-angular textures usually e xhibit more balanced dependencies across different directions and therefore benefit from multi-directional scanning, whereas epipolar lines follow clear directional tra- jectories, making some scanning paths unnecessary . Therefore, a uniform scanning strategy causes unnecessary computational ov erhead and reduces feature focus in highly structured LF representations. T o ov ercome these limitations, we introduce RASLF , a representation-aware state-space frame work designed for LFSR. Specifically , we dev eloped a Progressive Geomet- ric Refinement (PGR) block that leverages a Panoramic Epipolar Representation to transform fragmented observa- tions into a globally coherent geometric space, f acilitating explicit parallax-aware feature interaction. Building on this, a Representation-A ware Asymmetric Scanning (RAAS) strate gy is introduced to align sequential modeling trajectories with the structural characteristics of dif ferent LF representations, thereby reducing computational redundancy while reinforcing geometric constraints. Moreov er , a Dual-Anchor Aggregation (D AA) module is incorporated to regulate hierarchical feature propagation, thereby filtering deep-layer redundancy and fo- cusing computational budget on reconstruction-critical cues. Extensiv e ev aluations confirmed that RASLF offers a better balance between reconstruction accuracy and computational efficienc y across multiple benchmarks. In summary , our primary contributions are as follows: 1) W e proposed a Progressiv e Geometric Refinement (PGR) block and a Panoramic Epipolar Representation, which jointly transform fragmented local constraints into a glob- 2 ally coherent geometric structure, significantly enhancing cross-view consistency . 2) W e designed a Representation-A ware Asymmetric Scan- ning (RAAS) strategy that aligns sequential modeling paths with the physical and structural characteristics of div erse LF representations, thereby reducing redundancy and computational overhead. 3) T o further improve feature utilization, we designed a Dual-Anchor Aggregation (D AA) module to optimize hierarchical feature propagation and suppress redundancy along the network hierarchy . 4) The proposed RASLF achieves a state-of-the-art (SO T A) balance between reconstruction quality and inference ef- ficiency , as validated by extensi ve experiments on public LF datasets. I I . R E L A T E D W O R K A. Light F ield Representations Light field data is typically parameterized as a 4D function L ( u, v , x, y ) , following the two-plane parameterization [12]. Here, ( u, v ) denotes the angular coordinates of the camera array , and ( x, y ) represents the spatial coordinates within each view . This high-dimensional structure captures both spatial textures and angular correlations, which can be broken down into three functionally distinct 2D representations: • Sub-Aperture Images (SAI): By fixing the angular coordi- nates at ( u, v ) , an SAI I u,v ( x, y ) is obtained. SAIs resemble traditional 2D images and are primarily used to extract spatial features. • Macro-Pixel Images (MacPI): A MacPI I x,y ( u, v ) is formed by gathering pixels from all vie wpoints at a fixed spatial location ( x, y ) . It encapsulates the angular distrib ution of light rays. • Epipolar Plane Images (EPI): By fixing one spatial and one angular dimension, the EPI I y ,v ( x, u ) or I x,u ( y , v ) is generated. Since the parallax of a scene point is proportional to its depth, scene objects appear as directional linear structures with different slopes in EPIs [13]. This structural anisotropy defines EPIs as the primary domain for enforcing geometric consistency . In the LFSR task, these three representations provide dif- ferent but complementary vie wpoints. Early methods [14], [15] predominantly performed spatial super-resolution on in- dependent SAIs, a practice that treats the light field as a set of isolated 2D images while ignoring angular consis- tency . T o establish cross-dimensional correlations, spatial- angular interaction paradigms were dev eloped to model the relationship between spatial textures and angular distributions by synergistically le veraging SAIs and MacPIs. T o further ensure geometric consistency , subsequent research introduced explicit EPI-based constraints on parallax slopes, yielding multi-representation framew orks that integrate spatial, angular, and epipolar information. B. Methods based on Spatial-Angular Interaction Spatial-angular interaction methods circumvent the high complexity of direct 4D processing by decomposing feature extraction into dimensionally decoupled 2D operations. LF- InterNet [16] utilizes parallel branches to iteratively e xchange spatial and angular information. T o mitigate parallax-induced misalignments inherent in decoupled paradigms, LF-DFnet [17] introduced the Angular Deformable Alignment Module (AD AM) for non-rigid feature warping, while LF-IINet [18] refined interactions via parallel intra-inter vie w branches. HDDRNet [19] further simulated 4D correlations by using dense residual connections between the SAI and MacPI rep- resentations to enable intensi ve feature reuse. Ho wever , the localized recepti ve fields of CNNs preclude the capture of long-range dependencies across distant vie wpoints or large spatial structures. T o ov ercome this, transformer -based architectures utilize self-attention mechanisms to aggregate global context. LFT [20] implements this through alternating spatial and angular T ransformer modules, while DPT [21] and M2MT [22] utilize specialized attention blocks to aggregate many-to-many vie w- point priors. Despite their representational power , the quadratic computational complexity of self-attention leads to excessi ve computational and memory ov erhead. Most recently , State Space Models (SSM), particularly Mamba-based architectures, hav e emerged to provide global interaction with linear com- plexity . Recent State Space Models (SSM), such as L 2 FMamba [8] and LFT ransMamba [23], use selective scanning on se- rialized SAI and MacPI tokens, while MLFSR [6] ensures consistency through bi-directional subspace scanning. How- ev er, since these spatial-angular interaction methods rely on implicit feature-lev el correlations rather than explicit epipolar geometry constraints, they often struggle to maintain strict geometric consistency . Crucially , these approaches typically employ symmetric quad-directional scanning across all do- mains, which ov erlooks the structural anisotropy inherent in dif ferent LF representations. The lack of domain-specific physical priors in a uniform scanning technique results in considerable computational redundancy and diminishes the effecti veness of high-dimensional geometric modeling. C. Methods based on Geometric Consistency Enforcing explicit geometric constraints is essential for maintaining the structural integrity of LFSR. EPIT [24] to- kenizes the EPI into se veral EPI-stripes to characterize the non-local properties of epipolar geometry . By applying self- attention mechanisms within these stripes, the model effec- tiv ely models the parallax continuity of long-range geometric structures. Furthermore, to impro ve the geometric consistency of spatial-angular interactions, incorporating EPI geometric con- straints via multi-representation learning has become a com- mon approach. Based on the organizational structure of these representations, existing methods can be classified into paral- lel, sequential, and cascade paradigms. The parallel paradigm, pioneered by the disentangled learn- ing frame work in DistgSSR [25], utilizes separate branches for spatial, angular , and epipolar features to independently extract domain-specific information before fusion. LFMamba 3 Fig. 1. Overall architecture of the proposed RASLF . (a) The Progressi ve Geometric Refinement (PGR) paradigm sequentially refines features using representation-specific VSSM units, and a Dual-Anchor Aggregation module fuses multi-stage features via spatial and geometric anchors. (b) Representation- A ware Asymmetric Scanning (RAAS) tailors SS2D scanning paths Φ and representation transforms T , T − 1 for SAI, MacPI, and EPI, reducing redundant computation while preserving geometry-aware dependencies. [7] further adv anced this architecture by incorporating Mamba into these parallel branches to capture long-range dependen- cies with linear complexity . Ho wev er, such parallel processing often leads to feature redundancy and resource waste because features are extracted independently across domains. The sequential paradigm prioritizes spatial-angular inter- action over the integration of epipolar geometric constraints [26]–[28]. Building on this, HI-LLF [29] introduces inter- leav ed interaction units to establish a hierarchical texture base before applying an EPI-specific refinement module. How- ev er, these methods often accumulate errors during the initial spatial-angular stages, leading to structural misalignments that are dif ficult to rectify in subsequent geometric optimization steps. The cascade paradigm adopts a more fine-grained alternat- ing extraction strate gy . Zhang et al. [30] proposed an adaptiv e feature aggregation (AF A) framew ork based on cascade resid- ual learning. This approach sequentially cascades inter-intra spatial (II-SFE), inter-intra angular (II-AFE), and horizontal- vertical epipolar feature extractors within each fundamental aggregation block, repeating this process across M aggrega- tion groups. Howe ver , the II-SFE and II-AFE modules exhib- ited high similarity and ov erlap in feature processing, leading to significant feature redundancy and wasted computation. Con versely , our proposed RASLF ensures that no redundant information exists between successi ve refinement steps. Unlike con ventional methods that analyze isolated 2D epipolar slices, our approach creates a Panoramic Epipolar Representation to capture global geometric constraints. Consequently , RASLF effecti vely pre vents feature redundancy common in cascaded framew orks and addresses error accumulation common in sequential processing, thereby ensuring strong geometric con- sistency . I I I . M E T H O D O L O G Y A. Overview Ar chitectur e Let I LR ∈ R U × V × H × W denote the input low-resolution (LR) LF image, where ( U, V ) and ( H , W ) represent the angular and spatial resolutions, respectiv ely . The objectiv e of LFSR is to reconstruct a high-resolution (HR) counterpart I H R ∈ R U × V × αH × αW , where α denotes the upsampling factor . T o effecti vely restore high-frequency textures while pre- serving global structural integrity , a residual learning paradigm is adopted. As illustrated in Fig. 1, a global skip connection is established via a bicubic upsampling operator B ( · ) , yielding the base LF image I ↑ = B ( I LR ) ∈ R U × V × αH × αW . The net- work is specifically designed to predict the residual component R , whereby the final super-resolved light field is formulated as ˆ I H R = I ↑ + R . The input I LR is first projected into a latent space via a spatial conv olution S conv , followed by a learnable Angular Embedding P ang to encode vie wpoint-specific geometric con- text: F 0 = S conv ( I LR ) + P ang , (1) where F 0 ∈ R U × V × H × W × C serves as the initial state for subsequent hierarchical feature extraction. The backbone of our architecture consists of M cascaded Progressiv e Geometric Refinement (PGR) blocks for hierarchi- cal spatio-angular feature extraction. Each PGR block applies a sequential transformation across the spatial (SAI), angular (MacPI), and epipolar (EPI) representation domains: F k = PGR k ( F k − 1 ) , k = 1 , . . . , M . (2) This design facilitates step-by-step refinement of spatial tex- tures and angular parallax, leading to a robust, geometrically consistent representation. Furthermore, a Dual-Anchor Aggregation (D AA) module is dev eloped to generate an aggregated feature representation 4 F ag g . By designating the initial and final cascaded features as spatial and geometric anchors, and adding intermediate layers to enhance residuals, the D AA module reduces hierarchical redundancy while maintaining both spatial accuracy and an- gular consistency . The total aggregated representation F ∗ is then generated via a fusion layer: F ∗ = F ag g + F 0 . (3) Finally , F ∗ is processed by a pixel-shuf fle upsampling module P sr to generate the residual R , thereby completing the LF reconstruction. During the training phase, the network is supervised using an L1 loss, L = ∥ ˆ I H R − I H R ∥ 1 . (4) B. Pr ogr essive Geometric Refinement The proposed Progressiv e Geometric Refinement (PGR) block serves as the basic computational unit for transforming LF representations from lo w-lev el spatial textures into high- lev el geometric constraints. Unlike the common parallel un- ordered interaction strategies in previous LFSR methods [7], [25], or the sequential architectures that completely decouple spatial-angular interaction from geometric alignment [26]– [28], our method designs a cascade processing chain that explicitly exploits the multi-dimensional physical properties of LF data. The main motiv ation is that in traditional sequential archi- tectures, early spatial-angular feature extraction lacks explicit parallax constraints, allowing inconsistent feature responses to spread and accumulate through deep layers. Con versely , the proposed cascaded strategy interleav es refinement across the SAI, MacPI, and EPI domains within each PGR block, enabling ”coupling while calibration. ” Performing real-time geometric calibration at each depth le vel establishes accurate search benchmarks for feature extraction, effecti vely reducing visual artifacts caused by matching ambiguities and preventing geometric offsets from accumulating in deeper layers. T o ensure algorithmic consistency and computational ef- ficiency , we adopt the visual state space model (VSSM) proposed in [8] as the base operator , whose effecti veness and efficiency hav e been well proven on the LFSR task. VSSM enables effecti ve long-range dependency modeling with linear computational complexity , which is essential for processing high-dimensional and redundant LF data. W e define the spatial-angular feature extraction process within the PGR block from F k − 1 to F k as a generic processor operator P ( F , T , T − 1 , Φ) . Here, F ∈ R U × V × H × W × C denotes the input 4D LF feature tensor, T ( · ) and T − 1 ( · ) represent the domain-specific transformation and its in verse, respecti vely . Φ denotes the predefined set of scanning paths. W ithin the specific feature extraction pipeline, the input feature F k − 1 first undergoes intra-view spatial refinement. The transformation T sai reshapes the 4D tensor into tiled spatial slices of dimension ( U · V ) × ( H · W ) × C , which are then processed using a domain-specific scanning set Φ S AI . The resulting intermediate spatial-refined feature is expressed as follows: F k − 1 S AI = P ( F k − 1 , T S AI , T − 1 S AI , Φ S AI ) . (5) Fig. 2. Illustration of the Panoramic Epipolar Representation and the corresponding representation-aware scanning paths (indicated by red solid arrows). Subsequently , the feature is reorganized into the MacPI do- main via T M AC , mapping it to a ( H · W ) × ( U · V ) × C tensor to facilitate angular coupling: F k − 1 M AC = P ( F k − 1 S AI , T M AC , T − 1 M AC , Φ M AC ) . (6) Through this hierarchical preprocessing, the features are en- dowed with a rob ust te xtural foundation and initial parallax awareness before the geometric alignment stage. As the key part of the PGR block, the epipolar geometric alignment explicitly enforces the intrinsic linear geometric consistency of the light field. Unlike con ventional methods that analyze isolated 2D epipolar slices, our approach develops a Panoramic Epipolar (PEPI) representation to capture global geometric constraints. The composite epipolar transformation T epi comprises two symmetric reorg anization branches: the V ertical Panoramic EPI (V -PEPI), generated via T v as a ( H · U ) × ( V · W ) × C tensor , and the Horizontal Panoramic EPI (H- PEPI), concurrently reshaped via T h into a ( W · V ) × ( U · H ) × C representation (see Fig. 2). This innov ativ e tiling approach e xplicitly and effecti vely maps parallax-related information, originally dispersed in the 4D spatio-angular domain, onto structured 2D planes. This globalized representation allows the processor to not only capture linear slopes within individual epipolar lines but also to observe structural correlations across different spatial loca- tions, utilizing the long-range modeling ability of SSMs. Ul- timately , the geometric features are integrated and processed, with the output of the PGR block, F k , expressed as follows: F k = T − 1 epi ( P ( ˆ F mac , T epi , T − 1 epi , Φ epi )) , where T epi = {T h , T v } and Φ epi = { Φ h , Φ v } . (7) Compared to the implicit modeling in [8], which scans the angular grid sequentially , our scheme mitigates information decay during long-range state updates and, crucially , circum- vents the geometric constraint lag inherent in the ”coupling before alignment” sequence of traditional architectures. By jointly characterizing spatial positions and view variations on a unified EPI plane within each block, parallax structures are calibrated in real-time in a more concentrated and explicit manner . C. Repr esentation-A war e Asymmetric Scanning Strate gy In two-dimensional (2D) image state-space modeling, scan- ning directions are used to serialize spatial pix els into 5 sequential state-update paths, capturing directional depen- dencies. Current methods [31], [32] typically adopt the 2D Selectiv e Scanning (SS2D) mechanism, which em- ploys a quad-directional scanning configuration Φ 4 - path = { Φ row , Φ ′ row , Φ col , Φ ′ col , } across all feature domains, repre- senting forward/in verse row-major and column-major trajec- tories. Howe ver , we argue that applying this quad-directional scanning uniformly across all LF representations ignores their distinct physical priors and introduces unnecessary compu- tational redundanc y . T o address this issue, we proposed the Representation-A ware Asymmetric Scanning (RAAS) strate gy , which dynamically tailors the scanning path set Φ to the physical characteristics of disparate representation domains, achieving an optimal balance between reconstruction perfor- mance and computational efficienc y through strategic path pruning. In the intra-vie w spatial refinement stage, SAI-VSSM aims to capture local spatial dependencies within indi vidual sub- aperture images. Compared with the strong directional struc- tures in MacPI and EPI representations, SAIs exhibit more locally symmetric spatial dependencies, making simple for- ward scanning suf ficient for effecti ve neighborhood correlation modeling. In this case, backw ard scanning tends to capture largely overlapping contextual dependencies, leading to lim- ited additional benefits while introducing extra computational ov erhead. Consequently , we implemented a path pruning strat- egy in SAI-VSSM by retaining only forward state-update paths, i.e., Φ sai = { Φ row , Φ col } . Unlike SAI, MacPI interleaves spatial and angular dimen- sions, such that neighboring elements along each scan axis no longer represent simple local adjacency , but instead reflect coupled relations across different views and spatial positions. As a result, forward and backward scanning propagate context through different dependency chains and capture distinct yet complementary spatial-angular correlations, making bidirec- tional scanning necessary rather than redundant. Thus, MacPI- VSSM preserv es the full quad-directional scanning set, i.e., Φ mac = Φ 4 - path , to ensure adequate inter-vie w correspon- dence coupling. The path design for EPI-VSSM is motiv ated by the strong directional structure of epipolar representations. In our PEPI representation, epipolar trajectories are consistently aligned with the row axis in H-PEPI and the column axis in V -PEPI. Under this formulation, the SSM can ef fectiv ely propagate parallax-dependent information along these physically mean- ingful linear paths, making a single forward scan sufficient to capture the dominant geometric dependencies. In contrast, scanning along other directions provides limited additional geometric cues, as they do not follo w the principal orientation of epipolar trajectories and mainly introduce redundant context modeling. Therefore, for the decoupled horizontal and v ertical branches, we reduce the scanning paths to a single direction Φ v - epi = { Φ col } and Φ h - epi = { Φ row } , as shown in Fig. 2. From a computational complexity perspectiv e, the RAAS strategy compresses the structure of the state-space modeling branches by matching the scanning density to the needs of physical modeling. Since each scanning path represents an independent state-update operation, reducing the number of di- rections directly lo wers the parameter count and computational cost. As a result, our method attains greater computational efficienc y and stronger geometric consistency representations while still preserving adequate modeling capacity . D. Dual-Anchor Aggr e gation Module In cascaded LFSR architectures, the deliberate integration of hierarchical features is crucial for enhancing reconstruction accuracy . T ypically , the ev olution of features within these cascaded structures shows a clear functional hierarchy . The shallow features tend to retain the local spatial te xtures of the original input, whereas deep features, through receptiv e- field expansion and nonlinear mapping, gradually dev elop into global representations that incorporate spatial-angular geometric information. T o fully utilize these complementary characteristics without creating hierarchical redundancy , we propose the Dual-Anchor Aggregation (D AA) mechanism. LFSR requires balancing the accurac y of high-frequency details with the stability of angular structures; to achieve this, the DAA module explicitly sets the endpoints of the cascading path as main reference points. Since the initial features F 1 hav e not undergone extensi ve abstraction and retain the most intact original spatial details, they are designated as the anchor for spatial texture restoration. Meanwhile, the final features F M , which inte grate spatial-angular geometric constraints, are regarded as the anchor for global geometric modeling. T o enable effecti ve utilization of intermediate feature infor- mation within the cascaded sequence { F k } M − 1 k =2 , we treat them as adaptiv e refinement operators. By injecting progressiv e in- formation from intermediate layers into the boundary anchors through weighted residuals, we constructed enhanced spatial F S and geometric F G anchors: F S = w S 1 · F 1 + M − 1 X k =2 w S k · F k , (8) F G = w G M · F M + M − 1 X k =2 w G k · F k , (9) Where { w S k } M − 1 k =1 and { w G k } M k =2 denote the decoupled weight- ing coefficients that regulate the contribution of each layer to the respective anchors. This design enables thorough recovery of feature resources and maximizes effecti veness, thereby impro ving the te xture ex- pressiv eness of the spatial anchor and strengthening the struc- tural robustness of the geometric anchor . Subsequently , the D AA module uses dimensional concatenation and a projection layer to achie ve deep coupling of these two complementary anchor representations, generating the final aggregated features F ag g : F ag g = MLP ( Concat ( F S , F G )) . (10) This anchor-guided aggregation method explicitly remov es the hierarchical redundancy caused by cascading schemes at the structural level. Instead of simply concatenating all layers, our approach uses intermediate features as directional refinements, keeping the reconstruction rooted in stable spatial references and accurate geometric benchmarks. 6 T ABLE I P S NR / S S IM R E SU LT S C O M P A R E D W I T H S OT A M E T H OD S F OR 2 × A N D 4 × L F S R TA S KS . T H E B E S T A N D S E CO N D - BE S T R E S ULT S A R E , R E SP E C T IV E LY , I N B O LD A N D U N DE R L I NE D . Method Scale EPFL HCINew HCIold INRIA STFgantry A verage RCAN [33] × 2 33.156/0.9635 35.022/0.9603 41.125/0.9875 35.036/0.9769 36.670/0.9831 36.202/0.9743 resLF [34] × 2 33.617/0.9706 36.685/0.9739 43.422/0.9932 35.395/0.9804 38.354/0.9904 37.495/0.9817 LFSSR [35] × 2 33.671/0.9744 36.802/0.9749 43.811/0.9938 35.279/0.9832 37.944/0.9898 37.501/0.9832 LF-A TO [36] × 2 34.272/0.9757 37.244/0.9767 44.205/0.9942 36.171/0.9842 39.636/0.9929 38.306/0.9847 MEG-Net [37] × 2 34.312/0.9773 37.424/0.9777 44.097/0.9942 36.103/0.9849 38.767/0.9915 38.141/0.9851 DistgSSR [25] × 2 34.809/0.9787 37.959/0.9796 44.943/0.9949 36.586/0.9859 40.404/0.9942 38.940/0.9867 LF-InterNet [38] × 2 34.112/0.9760 37.170/0.9763 44.573/0.9946 35.829/0.9843 38.435/0.9909 38.024/0.9844 LF-IINet [39] × 2 34.736/0.9773 37.768/0.9790 44.852/0.9948 36.564/0.9853 39.894/0.9936 38.763/0.9860 HLFSR-SSR [40] × 2 35.310/0.9800 38.317/0.9807 44.978/0.9950 37.060/0.9867 40.849/0.9947 39.303/0.9874 DPT [21] × 2 34.490/0.9758 37.355/0.9771 44.302/0.9943 36.409/0.9843 39.429/0.9926 38.397/0.9848 LFT [20] × 2 34.783/0.9776 37.766/0.9788 44.628/0.9947 36.539/0.9853 40.408/0.9941 38.825/0.9861 EPIT [24] × 2 34.826/0.9775 38.228/0.9810 45.075/0.9949 36.672/0.9853 42.166 /0.9957 39.393/0.9869 LF-DET [18] × 2 35.262/0.9797 38.314/0.9807 44.986/0.9950 36.949/0.9864 41.762/0.9855 39.455/0.9874 MLFSR [6] × 2 35.218/0.9801 38.140/0.9803 44.904/0.9950 36.919/0.9865 40.975/0.9949 39.231/0.9873 LFMamba [7] × 2 35.758 / 0.9824 38.368/0.9801 44.985/0.9950 37.063/ 0.9876 40.954/0.9948 39.424/ 0.9881 L 2 FMamba [8] × 2 35.515/0.9796 38.225/0.9803 44.953/0.9949 37.165 /0.9862 41.567/0.9952 39.485/0.9873 RASLF (Our) × 2 35.176/0.9791 38.427 / 0.9813 45.312 / 0.9952 36.987/0.9861 41.873/ 0.9955 39.555 /0.9875 RCAN [33] × 4 27.904/0.8863 29.694/0.8886 35.359/0.9548 29.800/0.9276 29.021/0.9131 30.355/0.9141 resLF [34] × 4 28.260/0.9035 30.723/0.9107 36.705/0.9682 30.338/0.9412 30.191/0.9372 31.243/0.9322 LFSSR [35] × 4 28.596/0.9118 30.928/0.9145 36.907/0.9696 30.585/0.9467 30.570/0.9426 31.517/0.9370 LF-A TO [36] × 4 28.514/0.9115 30.880/0.9135 36.999/0.9699 30.710/0.9484 30.607/0.9430 31.542/0.9373 MEG-Net [37] × 4 28.749/0.9160 31.103/0.9177 37.287/0.9716 30.674/0.9490 30.771/0.9453 31.717/0.9399 DistgSSR [25] × 4 28.992/0.9195 31.380/0.9217 37.563/0.9732 30.994/0.9519 31.649/0.9534 32.116/0.9439 LF-InterNet [38] × 4 28.812/0.9162 30.961/0.9161 37.150/0.9716 30.777/0.9491 30.365/0.9409 31.613/0.9388 LF-IINet [39] × 4 29.048/0.9188 31.331/0.9208 37.620/0.9734 31.039/0.9515 31.261/0.9502 32.060/0.9429 HLFSR-SSR [40] × 4 29.196/0.9222 31.571/0.9238 37.776/0.9742 31.241/0.9543 31.641/0.9537 32.285/0.9456 DPT [21] × 4 28.939/0.9170 31.196/0.9188 37.412/0.9721 30.964/0.9503 31.150/0.9488 31.932/0.9414 LFT [20] × 4 29.261/0.9209 31.433/0.9215 37.633/0.9735 31.218/0.9524 31.794/0.9543 32.268/0.9445 EPIT [24] × 4 29.339/0.9197 31.511/0.9231 37.677/0.9737 31.372/0.9526 32.179/0.9571 32.416/0.9452 LF-DET [18] × 4 29.473/0.9230 31.558/0.9235 37.843/0.9744 31.388/0.9534 32.139/0.9573 32.480/0.9463 MLFSR [6] × 4 29.283/0.9218 31.564/0.9235 37.831/0.9745 31.241/0.9531 32.031/0.9567 32.389/0.9235 LFMamba [7] × 4 29.840 / 0.9256 31.695 / 0.9249 37.912 / 0.9748 31.808 / 0.9551 31.846/0.9553 32.620/0.9471 L 2 FMamba [8] × 4 29.681/0.9233 31.647/0.9243 37.864/0.9745 31.728/0.9543 32.198/ 0.9574 32.623/0.9468 RASLF (Our) × 4 29.763/0.9239 31.667/0.9245 37.894/0.9745 31.758/0.9544 32.368 / 0.9586 32.690 / 0.9472 I V . E X P E R I M E N T S A. Datasets and Implementation Details T o comprehensi vely ev aluate the ef fectiv eness of RASLF , we conducted experiments on three real-world LF datasets, EPFL [41], INRIA [42], and STF-gantry [43], as well as two synthetic LF datasets, HCIold [44], and HCInew [45]. For all datasets, we strictly followed the publicly av ailable data splitting protocols and pre-processing procedures adopted in prior work to ensure fair and reproducible comparisons. Specifically , we used the central 5 × 5 vie ws of each LF image and cropped HR patches from these views for both training and testing. HR patches are cropped with sizes of 64 × 64 and 128 × 128 for the 2 × and 4 × LFSSR tasks, respectiv ely . The corresponding LR inputs are generated via bicubic down- sampling, yielding LR patches of size 32 × 32. For quantitative ev aluation, we conv erted the LF images from RGB to YCbCr and computed the peak signal-to-noise ratio (PSNR) and structural similarity (SSIM) on the Y channel only . For each dataset, we first av eraged the metrics across all LF scenes, then averaged the results across the five datasets to obtain the final scores. T o facilitate the RAAS strategy while av oiding sub-optimal con vergence inherent in training sparse architectures from scratch, a two-stage training paradigm is adopted. First, a full- path model is pre-trained for 180 epochs to capture dense feature representations. W e used the Adam optimizer and a StepLR scheduler, with an initial learning rate of 2e-4 that is decreased by a factor of 0.5 e very 30 epochs. The training data is augmented with random horizontal and vertical flips and 90-degree rotations. Subsequently , guided by the RAAS strategy , redundant scanning paths are pruned according to the representation-specific requirements, as defined in Sec. III-C. The pruned model is then fine-tuned for 30 epochs with a learning rate of 5e-5, which is decayed by a f actor of 0.5 ev ery 15 epochs to smoothly recover and optimize the final performance. B. Comparisons with State-of-The-Art Methods T o thoroughly assess the proposed RASLF , we compared it against 16 representativ e state-of-the-art methods across quantitativ e metrics, visual quality , and computational ef fi- ciency . The compared methods cover both SISR and LFSR tasks. Specifically , RCAN [33] serves as the CNN-based SISR method. For LFSR, DPT [21], [20], EPIT [24], and LF- DET [18] are built upon Transformer architectures, whereas LFMamba [7], L 2 FMamba [8], and MLFSR [6] adopt state- space structures. The remaining approaches are based on CNN 7 EPFL / ISO_Chart_1__D ecoded Stanford Gantry / Ta rot Cards S Fig. 3. Qualitative visualization results for 4 × LFSR compared to other methods. Here, we sho wed the error maps of the reconstructed center -view images, with representative regions indicated by arrows. PSNR/SSIM values for the corresponding region are provided below . T ABLE II C O MPA R IS O N O F PA R AM E T E RS , FL O P S , T I M E , A N D A V E RA GE P S N R/ S S I M V A L UE S F OR × 2 A N D × 4 S R . F L O P S A N D T I M E A R E C A L C UL ATE D O N A N I N PU T L F W I TH A S I Z E O F 5 × 5 × 32 × 32 . Method Scale Params. FLOPs(G) Time(ms) A vg. PSNR/SSIM LFSSR [35] × 2 0.89M 25.70 10.0 37.501/0.9832 MEG-Net [37] × 2 1.69M 48.40 31.2 38.141/0.9851 LF-A TO [36] × 2 1.22M 597.66 85.6 38.306/0.9847 LF-IINet [39] × 2 5.04M 56.16 20.6 38.763/0.9860 DistgSSR [25] × 2 3.53M 64.11 24.2 38.940/0.9867 HLFSR-SSR [40] × 2 13.72M 167.81 31.1 39.303/0.9874 LFT [20] × 2 1.11M 56.16 91.4 38.825/0.9861 DPT [21] × 2 3.73M 65.34 98.5 38.397/0.9848 EPIT [24] × 2 1.42M 69.71 32.2 39.393/0.9869 LF-DET [18] × 2 1.59M 48.50 65.9 39.455/0.9874 MLFSR [6] × 2 1.36M 53.30 27.8 39.231/0.9873 LFMamba [7] × 2 2.15M 92.29 75.9 39.424/0.9881 L 2 FMamba [8] × 2 1.04M 36.59 31.9 39.485/0.9873 RASLF (Our) × 2 0.90M 29.77 28.8 39.552/0.9876 Method Scale Params. FLOPs(G) Time(ms) A vg. PSNR/SSIM LFSSR [35] × 4 1.61M 128.44 37.7 31.517/0.9370 MEG-Net [37] × 4 1.77M 102.20 32.4 31.717/0.9399 LF-A TO [36] × 4 1.66M 686.99 88.7 31.542/0.9373 LF-IINet [39] × 4 4.89M 57.42 20.8 32.060/0.9429 DistgSSR [25] × 4 3.58M 65.41 25.0 32.116/0.9439 HLFSR-SSR [40] × 4 13.87M 182.93 32.7 32.285/0.9456 LFT [20] × 4 1.16M 57.60 95.2 32.268/0.9445 DPT [21] × 4 3.78M 66.55 99.7 31.932/0.9414 EPIT [24] × 4 1.47M 71.15 33.6 32.416/0.9452 LF-DET [18] × 4 1.69M 51.20 75.0 32.480/0.9463 MLFSR [6] × 4 1.41M 54.74 28.9 32.389/0.9235 LFMamba [7] × 4 2.30M 96.24 77.1 32.620/0.9471 L 2 FMamba [8] × 4 1.09M 37.99 32.6 32.623/0.9468 RASLF (Our) × 4 0.95M 31.20 29.4 32.690/0.9472 architectures, including LFSSR [35], MEG-Net [37], LF-A T O [36], LF-IINet [39], DistgSSR [25], and HLFSR-SSR [40]. 1) Quantitative Results: The quantitati ve comparisons be- tween RASLF and other state-of-the-art methods are presented in T able I. In the 4 × LFSR task, RASLF achiev es the best performance across all datasets, consistently outperforming the efficient state-space baseline L 2 FMamba. Notably , on the STF-gantry dataset, which features large-scale parallax, RASLF surpasses L 2 FMamba by 0.17 dB and LFMamba by 0.52 dB. This significant impro vement confirms that our PGR block effecti vely enforces explicit geometric calibration on panoramic representations, enabling precise modeling of complex cross-view dependencies. In the 2 × LFSR task, RASLF remains highly competitiv e, achieving the highest av- erage PSNR among all methods. Although LFMamba achieves a slightly higher SSIM, RASLF still outperforms its direct competitor , L 2 FMamba, in both PSNR and SSIM. Consid- ering the extremely lo w parameter count of RASLF , these results indicate that our method achiev es a fa vorable balance between pixel-le vel fidelity and geometric consistency while maintaining efficient inference. 8 20 40 60 80 100 Inference T ime (s) 31.8 32.0 32.2 32.4 32.6 32.8 PSNR (dB) L 2 F M a m b a LFMamba MLFSR EPIT DistgSSR LF_IINET DPT LF-DET LFT RASLF Fig. 4. Comparison of computational efficiency between our method and SO T A models on the 4 × LFSR task. The area of each circle represents memory consumption. T ABLE III A B LAT IO N S T U DY O N D I FF ER E N T C O M PO N E N TS F OR 4 × L F SR . PSNR / SIMM Params FLOPS w ./o. DD A 32.637 / 0.9466 1.086 37.928 w . EPI-H & EPI-V 32.588 / 0.9466 0.953 31.200 w . EPIs-H & EPIs-V 32.628 / 0.9468 0.953 31.200 RASLF (Our) 32.690 / 0.9472 0.953 31.200 2) Qualitative Results: Fig. 3 presents the qualitative re- sults of error maps for dif ferent methods on the 4 × LFSR task. Compared with other state-of-the-art methods, our ap- proach shows better ability to restore texture details and maintain structural consistenc y . This is primarily attributed to our proposed PGR block and Panoramic Epipolar Rep- resentation, which jointly reorganize fragmented local epipo- lar constraints into a globally coherent geometric structure, as e videnced by the significantly lo wer error responses. In varied and challenging scenes, such as the dense radial lines in ”EPFL/ISO Chart 1 Decoded” and the complex specular reflections and distinct contours of the crystal ball in ”Stan- ford Gantry/T arot Cards S”, our method achiev es outstanding performance, producing the cleanest error maps. In particular , compared to recent SSM-based architectures such as LF- Mamba and L 2 FMamba, our proposed RASLF achiev es more visually pleasing error suppression and superior quantitati ve results. 3) Computational Ef ficiency: W e compared RASLF with representativ e existing methods under standardized conditions in terms of model comple xity , including the number of pa- rameters (Params.), floating-point operations (FLOPs), and inference time (T ime). For the LFSR task, all methods took LF patches of size 5 × 5 × 32 × 32 as input and are ev aluated on a unified NVIDIA R TX 3090 GPU hardware platform, cov ering both × 2 and × 4 SR scales. Quantitati ve efficienc y metrics in T able II rev eal that RASLF achieves a superior trade-off between model complexity and accuracy . Specifically , compared to T ransformer-based methods, RASLF significantly lowers computational costs and provides much faster inference. Although its inference speed is slightly lower than that of lightweight CNN-based methods, it remains highly competitiv e regarding overall efficienc y . Notably , cur- rent state-space model implementations are not yet as heavily optimized for GPUs as con volutional operators; therefore, with continued development of hardware-a ware SSM operators, the inference efficienc y of RASLF is expected to improve further . Compared with MLFSR and LFMamba, RASLF achieves higher reconstruction accuracy with fewer parameters, lo wer computational complexity , and shorter inference time. Fur- thermore, ev en compared with the state-of-the-art ef ficiency- oriented model L 2 FMamba, which adopts a similar VSSM backbone, RASLF maintains a clear adv antage. Specifically , at the 4 × scale, RASLF achiev es a 12.8% reduction in parameter scale and a 17.9% reduction in FLOPs compared to L 2 FMamba, while also improving the PSNR by 0.07 dB. This shows that pruning strate gic paths in our RAAS approach effecti vely remo ves unnecessary calculations without losing representational capability . T o evaluate runtime efficienc y and resource usage, we compared the inference time and GPU memory consumption between our method and SOT A models on the 4 × LFSR task in Fig. 4. The area of each circle indicates peak memory usage. Our proposed RASLF achieves a superior balance between reconstruction performance and computational cost. By effecti vely pruning hierarchical and directional redundan- cies, our framework maximizes resource utilization for critical reconstruction information. C. Ablation Study 1) Dual-Anchor Aggr e gation Module: T o verify the effec- tiv eness of the Dual-Anchor Aggregation (D AA) module in hierarchical feature fusion, we ev aluate a variant in which the D AA is replaced with a simple feature concatenation oper - ation, denoted w/o D AA. As reported in T able III, removing DD A increases the parameter count by about 14.0% and raises FLOPs by about 21.6%, while the reconstruction accurac y decreases by 0.05 dB in PSNR. This contrast confirms that simple hierarchical stacking introduces significant hierarchical redundancy , whereas the DAA ef fectiv ely impro ves feature utilization and reduces model complexity through an anchor- guided mechanism. 2) P anoramic Epipolar Repr esentation: W e further in ves- tigated the impact of different epipolar representations on reconstruction performance. While keeping the rest of the network fixed, the Panoramic Epipolar Representation (PER) is compared against con ventional isolated epipolar slices (w . EPI) and stacked epipolar slices (w . EPIs). As shown in T able III, the configuration with isolated EPI slices deli vers the lowest reconstruction accurac y , achie ving a PSNR of 32.588 dB. This is because fragmented slices interrupt the geometric continuity of the light field, pre venting the capture and transmission of global disparity dependen- cies. While stacking EPIs partially alle viates this issue and improv es PSNR to 32.628 dB, it still lags behind the PEPI- based final configuration. By creating a continuous panoramic epipolar plane, PEPI more effecti vely preserv es global geomet- ric structures, thereby providing higher -quality reconstruction. 9 T ABLE IV A B LAT IO N S T U DY O N D I FF ER E N T R E P RE S E NTA T I O N S C A N NI N G S T R A T E G IE S F OR 4 × L F S R . SAI MacPI H-EPI V -EPI PSNR / SIMM P arams FLOPS (a) Φ 4 - path Φ 4 - path { Φ row , Φ ′ row } { Φ col , Φ ′ col } 32.703 / 0.9473 1.013 36.050 (b) { Φ row , Φ col } Φ 4 - path { Φ row , Φ ′ row } { Φ col , Φ ′ col } 32.700 / 0.9471 0.983 33.625 (c) { Φ row , Φ col } Φ 4 - path { Φ col } { Φ col } 32.696 / 0.9471 0.953 31.200 (d) { Φ row , Φ col } { Φ row , Φ col } { Φ col } { Φ col } 32.329 / 0.9440 0.923 28.775 T ABLE V I N VE S T I GATI O N O N T HE S C AL A B I LI T Y O F T H E P RO P O SE D R AS L F W I T H R E SP E C T T O N E TW O R K D E P T H ( N U M BE R O F P G R B L O C KS M ) A N D W I DT H ( F E A T U R E D I M EN S I ON S C ) . M C PSNR / SIMM Params FLOPS 4 64 32.690 / 0.9472 0.953 31.200 8 64 32.901 / 0.9492 1.654 55.756 12 64 32.972 / 0.9497 2.310 76.676 16 64 33.070 / 0.9502 2.996 100.020 4 128 33.039 / 0.9498 3.486 101.185 4 256 33.129 / 0.9508 13.412 367.205 Notably , these three variants have identical parameter scales and computational costs, confirming that the benefits of PER arise solely from improved representational ability rather than from increased model capacity . 3) Repr esentation-A war e Asymmetric Scanning Strate gy: T o v alidate the Representation-A ware Asymmetric Scanning (RAAS) strategy , we conduct a quantitativ e ablation on scan- ning configurations for the 4 × LFSR task, as summarized in T able IV. Exp. (a) serves as the symmetric-scanning base- line, emplo ying quad-directional state updates following the standard SS2D design. Exp. (b) applies RAAS exclusi vely to the SAI branch; pruning the in verse trajectories reduces FLOPs by 6.7% with negligible performance impact. This indicates that in verse paths provide limited additional benefits in spatial domains with locally symmetric dependencies. Exp. (c) extends RAAS to the EPI domain by pruning to a single epipolar-aligned trav ersal, while preserving full coupling in MacPI. This achieves a 13.5% reduction in FLOPs relative to Exp. (a) without noticeable degradation. Con versely , Exp. (d) additionally prunes the MacPI paths, resulting in a clear drop in accuracy . This suggests that the MacPI domain necessitates comprehensiv e directional coupling to support comple x mixed spatial-angular interactions, and that e xcessiv e pruning hinders effecti ve cross-view information propagation. D. Model Scaling Analysis T o ev aluate the scalability of RASLF , we inv estigated the impact of network depth (number of PGR blocks, M ) and width (feature dimension, C ) on the performance-efficienc y trade-off. As shown in T able V, increasing M from 4 to 16 with a fixed C = 64 consistently improves performance with linear gro wth in comple xity , confirming the effecti veness of the D AA module in reducing redundancy in deep architectures. Notably , a comparison between configurations with similar Flops, specifically the deeper v ariant ( M = 16 , C = 64 ) and the wider variant ( M = 4 , C = 128 ), shows that increasing cascading depth is more effecti ve than expanding feature dimensionality . The former achieves higher accuracy with a more compact parameter footprint, providing empiri- cal evidence that depth-wise cascading is more critical than width-wise expansion for efficient light-field modeling. This cascaded structure facilitates the continuous refinement of geometric representations through iterativ e state-space e vo- lutions. Con versely , expanding the feature dimension to 256 leads to a disproportionate increase in parameters and FLOPs, with diminishing marginal utility . Therefore, to achiev e the best balance between accuracy and computational cost, the configuration of M = 4 and C = 64 is chosen as the standard RASLF baseline. V . C O N C L U S I O N This study presented RASLF , a representation-aware state space framew ork designed to facilitate deep collaboration among div erse LF representations By integrating Progres- siv e Geometric Refinement (PGR) with a Panoramic Epipo- lar Representation (PEPI), the proposed method success- fully ensures global geometric consistency . Furthermore, the Representation-A ware Asymmetric Scanning (RAAS) strategy and the Dual-Anchor Aggregation (D AA) module effecti vely eliminate directional and hierarchical redundancies, respec- tiv ely , thereby improving the balance between reconstruction accuracy and computational ef ficiency . Extensiv e ev aluations on fiv e public benchmarks confirmed that RASLF achiev es state-of-the-art (SOT A) performance with remarkably compact parameters. Future research will in vestigate the integration of light-field geometric priors with the intermediate states of the state-space model, promoting synergistic representations between SSM and LF features to further improve cross-view consistency and high-frequency texture restoration. R E F E R E N C E S [1] Z. Cui, H. Sheng, D. Y ang, S. W ang, R. Chen, and W . Ke, “Light field depth estimation for non-lambertian objects via adaptive cross operator, ” IEEE T ransactions on Cir cuits and Systems for V ideo T echnology , vol. 34, no. 2, pp. 1199–1211, 2024. [2] W . Chao, F . Duan, X. W ang, Y . W ang, K. Lu, and G. W ang, “Occcasnet: Occlusion-aware cascade cost volume for light field depth estimation, ” IEEE T ransactions on Computational Imaging , vol. 10, pp. 1680–1691, 2024. [3] Y . Y ang, L. Wu, L. Zeng, T . Y an, and Y . Zhan, “Joint upsampling for refocusing light fields derived with hybrid lenses, ” IEEE T ransactions on Instrumentation and Measurement , vol. 72, pp. 1–12, 2023. [4] X. Ban, Y . Liu, L. Zhang, and Z. Fan, “Focus-a ware fusion for enhanced all-in-focus light field image generation, ” IEEE T ransactions on Instrumentation and Measurement , vol. 73, pp. 1–18, 2024. 10 [5] Z. Chen and E. N. Brown, “State space model, ” Scholarpedia , vol. 8, no. 3, p. 30868, 2013. [6] R. Gao, Z. Xiao, and Z. Xiong, “Mamba-based light field super- resolution with efficient subspace scanning, ” in Pr oceedings of the Asian Confer ence on Computer V ision (ACCV) , December 2024, pp. 531–547. [7] W . xia, Y . Lu, S. W ang, Z. W ang, P . Xia, and T . Zhou, “Lfmamba: Light field image super -resolution with state space model, ” 2024. [Online]. A vailable: https://arxi v .org/abs/2406.12463 [8] Z. W ei, K. Jin, Z. Hou, K. Song, and X. Zhou, “ l 2 fmamba: Lightweight light field image super-resolution with state space model, ” IEEE T rans- actions on Computational Imaging , v ol. 11, pp. 816–826, 2025. [9] X. Pei, T . Huang, and C. Xu, “Efficientvmamba: Atrous selectiv e scan for light weight visual mamba, ” Pr oceedings of the AAAI Conference on Artificial Intelligence , vol. 39, no. 6, pp. 6443–6451, Apr . 2025. [10] H. Xiao, L. T ang, P .-T . Jiang, H. Zhang, J. Chen, and B. Li, “Boosting vision state space model with fractal scanning, ” in Pr oceedings of the Thirty-Ninth AAAI Conference on Artificial Intelligence and Thirty- Seventh Conference on Innovative Applications of Artificial Intelligence and Fifteenth Symposium on Educational Advances in Artificial Intelli- gence . AAAI Press, 2025. [11] H. Guo, Y . Guo, Y . Zha, Y . Zhang, W . Li, T . Dai, S.-T . Xia, and Y . Li, “Mambairv2: Attentive state space restoration, ” in Pr oceedings of the IEEE/CVF Conference on Computer V ision and P attern Recognition (CVPR) , June 2025, pp. 28 124–28 133. [12] M. Lev oy and P . Hanrahan, “Light field rendering, ” ser . SIGGRAPH ’96. Association for Computing Machinery , 1996, p. 31–42. [13] R. C. Bolles, H. H. Baker , and D. H. Marimont, “Epipolar-plane image analysis: An approach to determining structure from motion, ” International Journal of Computer V ision , vol. 1, pp. 7–55, 1987. [Online]. A vailable: https://api.semanticscholar .org/CorpusID:12598541 [14] Y . Y oon, H.-G. Jeon, D. Y oo, J.-Y . Lee, and I. S. Kweon, “Light- field image super-resolution using conv olutional neural network, ” IEEE Signal Processing Letters , vol. 24, no. 6, pp. 848–852, 2017. [15] Y . W ang, F . Liu, K. Zhang, G. Hou, Z. Sun, and T . T an, “Lfnet: A novel bidirectional recurrent con volutional neural network for light- field image super-resolution, ” IEEE T ransactions on Image Processing , vol. 27, no. 9, pp. 4274–4286, 2018. [16] Y . W ang, L. W ang, J. Y ang, W . An, J. Y u, and Y . Guo, “Spatial-angular interaction for light field image super -resolution, ” in Computer V ision – ECCV 2020: 16th European Conference, Glasgow , UK, August 23–28, 2020, Proceedings, P art XXIII . Berlin, Heidelberg: Springer -V erlag, 2020, p. 290–308. [Online]. A vailable: https://doi.org/10.1007/978- 3- 030- 58592- 1 18 [17] Y . W ang, J. Y ang, L. W ang, X. Ying, T . W u, W . An, and Y . Guo, “Light field image super-resolution using deformable con volution, ” IEEE T ransactions on Image Pr ocessing , v ol. 30, pp. 1057–1071, 2021. [18] R. Cong, H. Sheng, D. Y ang, Z. Cui, and R. Chen, “Exploiting spatial and angular correlations with deep ef ficient transformers for light field image super-resolution, ” IEEE T ransactions on Multimedia , 2023. [19] N. Meng, H. K.-H. So, X. Sun, and E. Y . Lam, “High-dimensional dense residual conv olutional neural network for light field reconstruc- tion, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , vol. 43, no. 3, pp. 873–886, 2021. [20] Z. Liang, Y . W ang, L. W ang, J. Y ang, and S. Zhou, “Light field image super-resolution with transformers, ” IEEE Signal Pr ocessing Letters , vol. 29, pp. 563–567, 2022. [21] S. W ang, T . Zhou, Y . Lu, and H. Di, “Detail-preserving transformer for light field image super-resolution, ” in Proceedings of the AAAI confer ence on artificial intelligence , vol. 36, no. 3, 2022, pp. 2522– 2530. [22] Z. Z. Hu, X. Chen, V . Y . Y . Chung, and Y . Shen, “Beyond subspace iso- lation: many-to-many transformer for light field image super-resolution, ” IEEE Tr ansactions on Multimedia , 2024. [23] K. Jin, Z. W ei, A. Y ang, D. Wu, M. Gao, and X. Zhou, “Lftrans- mamba: A hybrid mamba-transformer model for light field image super- resolution, ” in 2025 IEEE/CVF Confer ence on Computer V ision and P attern Recognition W orkshops (CVPRW) , 2025, pp. 1186–1195. [24] Z. Liang, Y . W ang, L. W ang, J. Y ang, S. Zhou, and Y . Guo, “Learn- ing non-local spatial-angular correlation for light field image super- resolution, ” in Pr oceedings of the IEEE/CVF International Conference on Computer V ision (ICCV) , 2023, pp. 12 376–12 386. [25] Y . W ang, L. W ang, G. W u, J. Y ang, W . An, J. Y u, and Y . Guo, “Disen- tangling light fields for super-resolution and disparity estimation, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , vol. 45, no. 1, pp. 425–443, 2022. [26] Z. W ang, Y . Lu, S. W ang, W . Xia, P . Xia, and W . W ang, “Trident transformer for light field image super-resolution, ” in 2024 IEEE Inter- national Conference on Multimedia and Expo (ICME) , 2024, pp. 1–6. [27] D. Ke, Y . Chen, C. Jin, H. Xu, Z. Jiang, T . Luo, and G. Jiang, “Combining independent and joint spatial-angular information learning for light field image super-resolution, ” Knowledge-Based Systems , vol. 330, p. 114619, 2025. [Online]. A vailable: https://www .sciencedirect. com/science/article/pii/S0950705125016582 [28] M. Y u, Z. W u, and D. Huang, “Lfmix: A lightweight hybrid architecture for light field super-resolution, ” in 2025 IEEE/CVF Confer ence on Computer V ision and P attern Recognition W orkshops (CVPRW) , 2025, pp. 1441–1450. [29] M. Li, B. Ma, and S. W ang, “Hierarchical spatial–angular integration for lightweight light field image super-resolution, ” Knowledge-Based Systems , vol. 315, p. 113240, 2025. [30] H. Zhang, W . Zhou, L. Lin, and A. Lumsdaine, “Cascade residual learn- ing based adapti ve feature aggregation for light field super -resolution, ” P attern Recognition , vol. 165, p. 111616, 2025. [31] Y . Liu, Y . Tian, Y . Zhao, H. Y u, L. Xie, Y . W ang, Q. Y e, J. Jiao, and Y . Liu, “Vmamba: V isual state space model, ” Advances in neural information processing systems , v ol. 37, pp. 103 031–103 063, 2024. [32] H. Guo, J. Li, T . Dai, Z. Ouyang, X. Ren, and S.-T . Xia, “Mambair: A simple baseline for image restoration with state-space model, ” in Eur opean conference on computer vision . Springer , 2024, pp. 222– 241. [33] Y . Zhang, K. Li, K. Li, L. W ang, B. Zhong, and Y . Fu, “Image super- resolution using v ery deep residual channel attention networks, ” in Pr oceedings of the European conference on computer vision (ECCV) , 2018, pp. 286–301. [34] S. Zhang, Y . Lin, and H. Sheng, “Residual networks for light field image super-resolution, ” in Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern recognition , 2019, pp. 11 046–11 055. [35] H. W . F . Y eung, J. Hou, X. Chen, J. Chen, Z. Chen, and Y . Y . Chung, “Light field spatial super -resolution using deep ef ficient spatial-angular separable con volution, ” IEEE T ransactions on Image Pr ocessing , vol. 28, no. 5, pp. 2319–2330, 2018. [36] J. Jin, J. Hou, J. Chen, and S. Kwong, “Light field spatial super - resolution via deep combinatorial geometry embedding and structural consistency regularization, ” in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , 2020, pp. 2260–2269. [37] S. Zhang, S. Chang, and Y . Lin, “End-to-end light field spatial super- resolution network using multiple epipolar geometry , ” IEEE T ransac- tions on Image Pr ocessing , v ol. 30, pp. 5956–5968, 2021. [38] Y . W ang, L. W ang, J. Y ang, W . An, J. Y u, and Y . Guo, “Spatial-angular interaction for light field image super-resolution, ” in Computer V ision– ECCV 2020: 16th European Conference, Glasgow , UK, August 23–28, 2020, Proceedings, P art XXIII 16 . Springer, 2020, pp. 290–308. [39] G. Liu, H. Y ue, J. W u, and J. Y ang, “Intra-inter view interaction network for light field image super-resolution, ” IEEE T ransactions on Multimedia , vol. 25, pp. 256–266, 2021. [40] V . V . Duong, T . H. Nguyen, J. Y im, and B. Jeon, “Light field image super-resolution network via joint spatial-angular and epipolar informa- tion, ” IEEE T rans. Compuational Imaging , 2023. [41] M. Rerabek and T . Ebrahimi, “New light field image dataset, ” in 8th International Confer ence on Quality of Multimedia Experience (QoMEX) , 2016, pp. 1–2. [42] M. Le Pendu, X. Jiang, and C. Guillemot, “Light field inpainting propagation via low rank matrix completion, ” IEEE Tr ansactions on Image Pr ocessing , v ol. 27, no. 4, pp. 1981–1993, 2018. [43] V . V aish and A. Adams, “The (ne w) stanford light field archiv e, ” Computer Graphics Laboratory , Stanford University , vol. 6, no. 7, p. 3, 2008. [44] S. W anner, S. Meister , and B. Goldluecke, “Datasets and benchmarks for densely sampled 4d light fields. ” in VMV , vol. 13, 2013, pp. 225–226. [45] K. Honauer, O. Johannsen, D. K ondermann, and B. Goldluecke, “ A dataset and ev aluation methodology for depth estimation on 4d light fields, ” in Computer V ision–ACCV 2016: 13th Asian Conference on Computer V ision, T aipei, T aiwan, November 20-24, 2016, Revised Se- lected P apers, P art III 13 . Springer , 2017, pp. 19–34.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment