Dual Consensus: Escaping from Spurious Majority in Unsupervised RLVR via Two-Stage Vote Mechanism

Current label-free RLVR approaches for large language models (LLMs), such as TTRL and Self-reward, have demonstrated effectiveness in improving the performance of LLMs on complex reasoning tasks. However, these methods rely heavily on accurate pseudo…

Authors: Kaixuan Du, Meng Cao, Hang Zhang

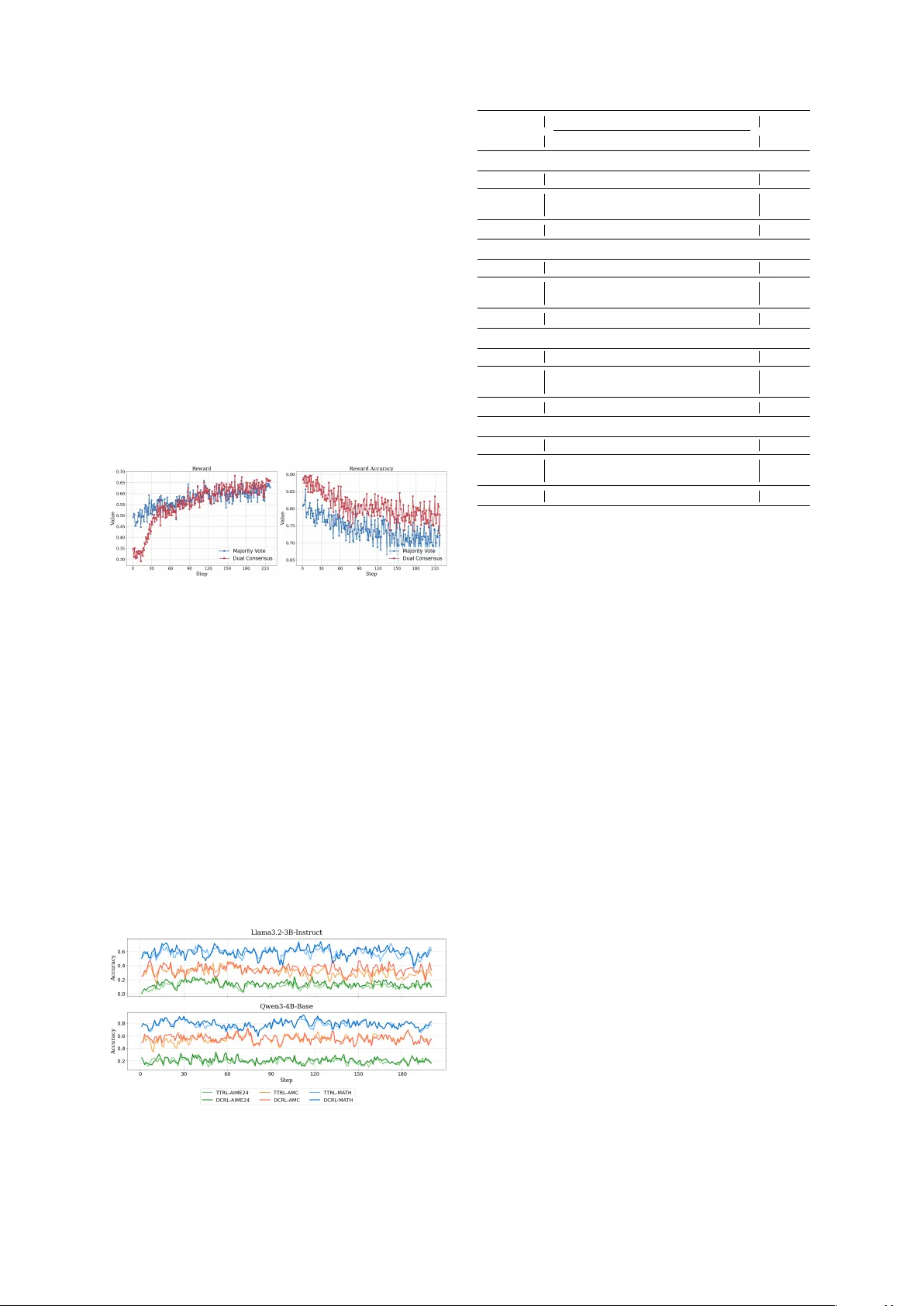

Kaixuan Du¹, Meng Cao¹, Hang Zhang¹, Yukun Wang¹, Xiangzhou Huang, Ni Li¹*
¹School of Automation Science and Electrical Engineering, Beihang University
{dukaixuan, lini}@buaa.edu.cn

Abstract

Current label-free RLVR approaches for large language models (LLMs), such as TTRL and Self-reward, have demonstrated effectiveness in improving the performance of LLMs on complex reasoning tasks. However, these methods rely heavily on accurate pseudo-label estimation and converge on spurious yet popular answers, thereby becoming trapped in a dominant mode that limits further improvement. Building on this, we propose Dual Consensus Reinforcement Learning¹ (DCRL), a novel self-supervised training method that generates more reliable learning signals through a two-stage consensus mechanism. The model initially acts as an anchor, producing dominant responses; it then serves as an explorer, generating diverse auxiliary signals via a temporary unlearning process. The final training target is derived from the harmonic mean of these two signal sets. Notably, the process operates entirely without external models or supervision. Across eight benchmarks and diverse domains, DCRL consistently improves Pass@1 over majority vote while yielding more stable training dynamics. These results demonstrate that DCRL establishes a scalable path toward stronger reasoning without labels.

1 Introduction

Reinforcement Learning with Verifiable Rewards (RLVR) has emerged as an effective approach to boosting the performance of Large Language Models (LLMs), enabling superior reasoning capabilities through long chain-of-thought (Wei et al., 2023) reasoning on various challenging benchmarks (OpenAI et al., 2024; DeepSeek-AI et al., 2025; Yang et al., 2025).
However, current RLVR approaches, typically implemented via algorithms such as Group Relative Policy Optimization (GRPO) (Shao et al., 2024), rely heavily on human-annotated datasets or, at a minimum, environments that provide verifiable ground-truth signals (Le et al., 2022; Wang et al., 2024a). This reliance restricts their generalizability to fully unlabeled or distribution-shifted tasks where neither human annotations (Ziegler et al., 2020) nor executable environments are accessible. As LLMs approach or surpass human-level performance, they will inevitably operate in domains where even expert humans cannot provide definitive judgments or reliable evaluations, which motivates the exploration of training on unlabeled data.

* Corresponding author.
¹ Code is available at https://github.com/v0yager33/DualConsensus.

Figure 1: An overview of Dual Consensus Reinforcement Learning (DCRL). Specifically, the policy model assumes two roles: (1) an anchor that generates dominant and reliable responses; (2) an explorer that produces diverse auxiliary signals through a temporary unlearning process.

Recent studies (Shafayat et al., 2025; Zhao et al., 2025a) find that LLMs can achieve self-improvement without labeled data. One approach is determinism-based methods (Prabhudesai et al., 2025; Zhang et al., 2025b), which derive rewards from the confidence of a single policy along trajectories, thereby encouraging low-entropy and high-confidence predictions. These methods can achieve better performance by sharpening the model's outputs, but it remains debatable whether they truly improve reasoning capabilities. Another approach is aggregation-based methods (Zhang et al., 2025c; Yu et al., 2025b; Wu et al., 2025), which derive rewards from agreement across multiple samples, assuming that cross-sample consistency correlates with correctness. Nevertheless, these approaches still suffer from two critical limitations:

• Spurious Reward Signals: Models struggle to generate distinguishable reward signals, especially when tackling hard reasoning tasks; the answers derived from majority vote may themselves suffer from systematic biases (Zhao et al., 2025b). In the later stages of training, spurious majority outcomes can come to dominate, while the correct solutions may instead lie within the minority rollouts.

• Lack of Exploration Capability: By continuously rewarding consensus across diverse trajectories, the model's output distribution becomes increasingly rigid and concentrated, resulting in a severe deficiency in exploration capability or even entropy collapse (Cui et al., 2025). Consequently, the model tends to converge to a narrow set of suboptimal responses and exhibits degraded performance when confronted with out-of-domain (OOD) tasks.

In this paper, we propose Dual Consensus, a novel framework for Unsupervised Reinforcement Learning with Verifiable Rewards (URLVR) driven by a two-stage vote mechanism. Our core insight stems from the following intuition: valid reasoning trajectories should not only converge to the dominant mode but also exhibit enhanced robustness when the distribution of the model is artificially flattened. Instead of naively adopting the fragile majority vote as the pseudo-label, we decompose the rollout process into two stages: anchor and explorer. The anchor stage involves normal rollouts where the model generates responses under its current policy, capturing the dominant reasoning mode.
Subsequently, in the explorer stage, we introduce a temporary unlearning process to flatten the distribution and enhance exploration, thereby encouraging the generation of diverse auxiliary responses that deviate from the dominant mode. After obtaining the two signal sets, we compute the harmonic mean of their consensus scores to determine the final reward signal. This harmonic mean balances the reliability of the dominant mode (from the anchor stage) and the diversity of potential valid trajectories (from the explorer stage), effectively mitigating the adverse impact of majority vote when it converges to spurious answers.

To validate our approach, we demonstrate the effectiveness of Dual Consensus through extensive experiments. We first train models on the large-scale DAPO-Math-14k dataset and evaluate them on multiple established benchmarks. Additionally, we apply test-time adaptation on five distinct datasets to further assess our method.

In summary, our key contributions are as follows:

• A novel URLVR method, Dual Consensus, which uses the intrinsic robustness of the model to guide its evolution with policy optimization methods such as GRPO. Notably, the framework is entirely free of external models and enables continuous self-improvement without supervision.

• A pseudo-label selection mechanism and reward design that exploit the intrinsic robustness of the model itself to generate more reliable reward signals, mitigating the toxicity of majority vote when it fails.

• We empirically verify the general effectiveness of Dual Consensus in boosting LLMs' reasoning performance via comprehensive experiments, and additionally present systematic ablation studies and in-depth further analyses.

2 Methodology

In this section, we present the details of Dual Consensus.
It mitigates spurious majority bias by generating diverse signals via Unlearn Then Explore, identifying more accurate labels through Harmonic Election, and stabilizing updates with Adaptive Sampling.

Our framework employs Group Relative Policy Optimization (GRPO) (Shao et al., 2024) as its foundational RL algorithm, which stabilizes training by normalizing advantage estimates across multiple rollouts of the same prompt.

For a given input prompt $x$ (paired with its ground-truth label $y$), GRPO first samples $n$ rollouts $\{y_i\}_{i=1}^{n}$ from the current policy. For each rollout $y_i$, GRPO computes a reward $r_i = R(y_i, y \mid x)$, then derives the group-normalized advantage $\hat{A}_i$:

$$\hat{A}_i = \frac{r_i - \bar{r}}{\sigma_r} \tag{1}$$

where $\bar{r} = \frac{1}{n}\sum_{k=1}^{n} r_k$ denotes the mean reward of the rollout group, and $\sigma_r$ is the standard deviation of the group rewards. The GRPO objective optimizes the target policy $\pi_\theta$ by maximizing the clipped normalized advantage:

$$\mathcal{L}_{\mathrm{GRPO}}(x, y; \theta) = \frac{1}{n}\sum_{i=1}^{n} \min\Big(\rho_i(\theta)\,\hat{A}_i,\ \mathrm{clip}\big(\rho_i(\theta),\, 1-\epsilon,\, 1+\epsilon\big)\,\hat{A}_i\Big) - \beta\, D_{\mathrm{KL}}\big(\pi_\theta(\cdot \mid x)\,\|\,\pi_{\mathrm{ref}}(\cdot \mid x)\big) \tag{2}$$

where $\rho_i(\theta) = \pi_\theta(y_i \mid x) / \pi_{\mathrm{old}}(y_i \mid x)$ is the importance sampling ratio.

2.1 Unlearn Then Explore

Following the GRPO framework, we first initialize an anchor model (parameterized by $\theta'$) by cloning the current policy model (parameterized by $\theta$), i.e., $\theta' \leftarrow \theta$. We then apply an unlearning strategy (Liu et al., 2024) to transform this anchor into an explorer. Unlike EEPO (Chen et al., 2025), which simply employs unlearning as a supplementary technique to enhance exploration, our approach leverages unlearning as a core methodology to actively search for correct answers.

To implement this unlearning strategy, we first introduce the standard negative log-likelihood (NLL) loss, defined as:

$$\mathcal{L}_{\mathrm{NLL}} = -\log \pi_{\mathrm{anchor}}(y_{i,t} \mid x, y_{i,<t})$$

[…]

$\bar{\rho}_t > \tfrac{1}{2}$, both $O_0$ and $O_1$ are included in training.
This enables the policy to leverage reliable anchor behaviors while actively integrating diverse, high-quality explorations. The effective rollout set for the policy update is defined as:

$$O_{\mathrm{train}} = \begin{cases} O_0 & \text{if } \bar{\rho}_t \le \tfrac{1}{2}, \\ O_0 \cup O_1 & \text{if } \bar{\rho}_t > \tfrac{1}{2}. \end{cases} \tag{15}$$

Only trajectories in $O_{\mathrm{train}}$ contribute to the policy gradient, ensuring a smooth transition from exploitation-dominant to balanced exploration-exploitation learning.

The overall workflow of the proposed Dual Consensus algorithm is summarized in Algorithm 1.

Algorithm 1 Dual Consensus: An Unsupervised RLVR Algorithm
1: Initialize: policy $\theta_0$; learning rates $\eta_{\mathrm{GRPO}}$, $\eta_u$; group size $G$; iterations $T$
2: for $t = 0$ to $T - 1$ do
3:   Sample query $x \sim D$
4:   Sample $G$ trajectories $O_{\mathrm{anchor}} \sim \pi_{\theta_t}(\cdot \mid x)$, compute consensus rate $\rho_t$ and $\bar{\rho}_t$
5:   $\theta_e \leftarrow \theta_t - \eta_u \nabla_{\theta_t} \mathcal{L}_u(O_{\mathrm{anchor}})$
6:   Sample $G$ trajectories $O_{\mathrm{explorer}} \sim \pi_{\theta_e}(\cdot \mid x)$
7:   $S(a) = \dfrac{2\, p_0(a)\, p_1(a)}{p_0(a) + p_1(a)}$ for each answer $a$ in $O_{\mathrm{anchor}} \cup O_{\mathrm{explorer}}$
8:   Pseudo-label $y^* = \arg\max_a S(a)$
9:   if $\bar{\rho}_t > 1/2$ then
10:    $O \leftarrow O_{\mathrm{anchor}} \cup O_{\mathrm{explorer}}$
11:  else
12:    $O \leftarrow O_{\mathrm{anchor}}$
13:  end if
14:  Compute rewards and advantages for $O$
15:  $\theta_{t+1} \leftarrow \theta_t + \eta_{\mathrm{GRPO}} \nabla_\theta J_{\mathrm{GRPO}}(\theta_t; O)$
16: end for

3 Experiments

In this section, we first introduce the experimental setup, then discuss the overall effectiveness of our method, and finally present the results of our ablation studies.

3.1 Setups

To comprehensively evaluate the effectiveness of DCRL, our experiments are conducted under two distinct training paradigms:

• Large-Scale Unsupervised Learning: Directly applying DCRL to train models from scratch on a large, unlabeled dataset.

• Test-Time Adaptation (TTA): Using DCRL to adapt a pre-trained model to new, unseen benchmarks via constant unsupervised training.

Models: Our target models include Llama3.2-3B-Instruct (Grattafiori et al., 2024), Qwen3-4B-Base, and Qwen3-8B-Base (Yang et al., 2025).
Additionally, for the TTA paradigm, we also conduct experiments on Qwen2.5-Math-1.5B (Yang et al., 2024).

Implementation: Our primary training dataset is DAPO-Math-14k, a processed version of DAPO-Math-17k (Yu et al., 2025a), which is refined by deduplicating prompts and standardizing the formatting of both prompts and reference answers. We train each model on this dataset for two epochs to mitigate overfitting. All experiments are based on the VeRL framework (Sheng et al., 2025). Implementation details are reported in Appendix A.

Benchmarks: Our benchmark suite comprises eight challenging datasets, including six math-specific benchmarks: (1) MATH-500 (Hendrycks et al., 2021), (2) GSM8K (Cobbe et al., 2021), (3) AIME24 (Yang et al., 2025), (4) Minerva-math (Lewkowycz et al., 2022), (5) AMC (Zuo et al., 2025), (6) OlympiadBench (He et al., 2024); and two multi-task benchmarks: (1) MMLU-Pro (Wang et al., 2024b), (2) GPQA-Diamond (Rein et al., 2024). For the TTA experiments, we evaluate on subsets of MATH-500, AIME24, AMC, and GPQA-Diamond.

Baselines: We compare DCRL against four unsupervised RLVR methods, including one determinism-based approach, RENT (Prabhudesai et al., 2025), and three aggregation-based approaches: TTRL (Zuo et al., 2025), Co-Rewarding-I, and Co-Rewarding-II (Zhang et al., 2025c). Specifically, for Co-Rewarding-I, we adopt a dataset rephrased by Qwen3-32B for all related experiments.

Metrics: We use the Pass@1 metric. For each question, we sample 16 predictions using a temperature of 0.6 and a top-p value of 0.95. The final reported score is the average Pass@1 accuracy across these 16 independently seeded samples.

Column groups in Table 1: Math (MATH through Olympiad.) and Multi-Task (MMLU., GPQA.).

| Methods | MATH | GSM8K | AIME24 | Minerva. | AMC | Olympiad. | MMLU. | GPQA. | Average |
|---|---|---|---|---|---|---|---|---|---|
| Llama3.2-3B-Instruct | | | | | | | | | |
| Vanilla | 42.4 | 76.1 | 4.5 | 11.7 | 20.6 | 14.6 | 26.4 | 22.3 | 27.3 |
| GRPO | 49.2 | 79.7 | 13.5 | 14.9 | 23.2 | 15.3 | 32.8 | 23.8 | 31.5 |
| RENT | 45.4 | 78.5 | 9.4 | 11.2 | 20.9 | 15.2 | 30.3 | 22.7 | 29.2 |
| TTRL | 44.6 | 71.8 | 10.2 | 12.4 | 21.5 | 14.6 | 25.9 | 21.4 | 27.8 |
| Co-Rewarding-I | 45.3 | 77.4 | 11.8 | 15.1 | 22.2 | 14.8 | 33.9 | 24.3 | 30.6 |
| Co-Rewarding-II | 47.6 | 76.9 | 11.6 | 13.7 | 23.3 | 15.3 | 24.9 | 21.4 | 29.3 |
| DCRL (ours) | 47.8 | 79.1 | 11.8 | 15.8 | 23.3 | 15.4 | 33.9 | 24.7 | 31.4 |
| Qwen3-4B-Base | | | | | | | | | |
| Vanilla | 47.4 | 87.0 | 9.3 | 24.5 | 38.3 | 34.9 | 48.6 | 30.7 | 40.0 |
| GRPO | 76.8 | 91.8 | 13.1 | 32.9 | 45.2 | 36.1 | 52.2 | 34.8 | 47.8 |
| RENT | 72.1 | 85.0 | 10.2 | 23.7 | 44.3 | 36.6 | 48.6 | 32.2 | 44.0 |
| TTRL | 74.4 | 91.3 | 11.5 | 29.0 | 44.7 | 37.0 | 51.2 | 32.8 | 46.4 |
| Co-Rewarding-I | 74.8 | 91.6 | 11.8 | 31.1 | 43.1 | 36.9 | 52.2 | 32.9 | 46.7 |
| Co-Rewarding-II | 74.5 | 91.7 | 11.6 | 30.8 | 44.5 | 37.1 | 52.6 | 33.3 | 47.0 |
| DCRL (ours) | 74.8 | 91.7 | 12.4 | 31.2 | 45.6 | 37.2 | 52.6 | 34.3 | 47.4 |
| Qwen3-8B-Base | | | | | | | | | |
| Vanilla | 61.8 | 88.3 | 12.5 | 25.0 | 48.9 | 40.1 | 50.6 | 32.3 | 44.9 |
| GRPO | 82.0 | 93.8 | 20.4 | 34.1 | 57.0 | 45.8 | 57.5 | 38.1 | 53.5 |
| RENT | 75.3 | 86.5 | 13.1 | 25.4 | 49.7 | 40.3 | 52.5 | 34.5 | 47.1 |
| TTRL | 78.3 | 92.9 | 14.4 | 32.7 | 51.2 | 40.9 | 55.2 | 36.5 | 50.2 |
| Co-Rewarding-I | 78.2 | 92.7 | 12.2 | 32.0 | 51.2 | 40.7 | 56.7 | 37.9 | 50.2 |
| Co-Rewarding-II | 77.2 | 92.9 | 12.2 | 32.3 | 51.8 | 40.2 | 56.1 | 37.6 | 50.0 |
| DCRL (ours) | 79.2 | 93.3 | 14.7 | 32.7 | 51.9 | 40.9 | 56.7 | 37.9 | 50.9 |

Table 1: Main Results (%) of DCRL Trained on DAPO-Math-14k: DCRL Outperforms All Label-Free Baselines. The best results are highlighted in bold. DCRL exceeds all unsupervised methods and nearly matches GRPO with gold labels.

Figure 4: Training Dynamics of Dual Consensus on Qwen3-8B-Base. (a) Accuracy curve on the MATH500 benchmark. (b) Label accuracy curve (smoothed). DCRL-Anchor in Fig. 4b refers to the majority vote of the anchor model in DCRL.

3.2 Results

3.2.1 Main Performance of Dual Consensus

Table 1 presents the main results of DCRL trained on DAPO-Math-14k across eight challenging reasoning benchmarks. Our method matches or outperforms every label-free baseline (RENT, TTRL, and both variants of Co-Rewarding) on each benchmark, across different model scales and task domains. Notably, on the Qwen3-8B-Base model, DCRL achieves 79.2% on MATH-500, surpassing the strongest baseline TTRL (78.3%) by 0.9 points, and improves AIME24 from 14.4% to 14.7%, demonstrating its effectiveness on extremely hard competition-level problems. On multi-task benchmarks, DCRL matches or exceeds Co-Rewarding-I on MMLU-Pro and GPQA-Diamond, confirming its generalizability beyond pure math reasoning.

Remarkably, despite being fully unsupervised and using no ground-truth labels, DCRL achieves performance on par with, and occasionally exceeding, the supervised GRPO baseline. On Llama3.2-3B-Instruct, Qwen3-4B-Base, and Qwen3-8B-Base, it yields gains of +7.5, +4.0, and +6.1 points on MMLU-Pro over the vanilla models, respectively.

Figure 5: Comparison of reward signal between Majority Vote and Dual Consensus.

Compared with Majority Vote: We adopt TTRL (Zuo et al., 2025) as a representative baseline that relies on standard majority vote for pseudo-label estimation. Fig. 4 and Fig. 5 depict the training dynamics on Qwen3-8B-Base: our method can select low-consistency answers (especially early in training) and achieves higher ground-truth reward accuracy.
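To make the contrast with majority vote concrete, here is a minimal sketch (not the authors' implementation) of the harmonic score from Algorithm 1, $S(a) = 2p_0(a)p_1(a)/(p_0(a)+p_1(a))$, followed by the group-normalized advantage of Eq. (1). It assumes that $p_0$ and $p_1$ are the empirical answer frequencies in the anchor and explorer rollouts, substitutes a simplified binary reward against the elected pseudo-label, and runs on invented toy answers. The function names, the population standard deviation, and the `eps` guard are illustrative choices, not taken from the paper.

```python
from collections import Counter
from statistics import mean, pstdev


def vote_shares(answers):
    """Empirical frequency of each distinct answer in one rollout group."""
    counts = Counter(answers)
    return {a: c / len(answers) for a, c in counts.items()}


def harmonic_scores(anchor_answers, explorer_answers):
    """S(a) = 2 * p0(a) * p1(a) / (p0(a) + p1(a)) over the union of answers."""
    p0 = vote_shares(anchor_answers)      # assumed meaning of p0: anchor vote share
    p1 = vote_shares(explorer_answers)    # assumed meaning of p1: explorer vote share
    scores = {}
    for a in set(p0) | set(p1):
        x, y = p0.get(a, 0.0), p1.get(a, 0.0)
        scores[a] = 2 * x * y / (x + y) if x + y > 0 else 0.0
    return scores


def group_advantages(rewards, eps=1e-8):
    """Eq. (1): (r_i - mean) / std. The eps guard is an added assumption."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical toy rollouts (final answers only), invented for illustration.
anchor = ["33", "33", "33", "33", "42", "42", "42", "11"]
explorer = ["42", "42", "42", "42", "33", "11", "45", "14"]

# Plain majority vote over the anchor rollouts.
majority_label = Counter(anchor).most_common(1)[0][0]

# Harmonic election over both groups.
scores = harmonic_scores(anchor, explorer)
dual_label = max(scores, key=scores.get)

# Simplified binary reward against the elected label (not the paper's reward design).
rewards = [1.0 if a == dual_label else 0.0 for a in anchor]
advantages = group_advantages(rewards)
```

On these toy counts, anchor-only majority vote picks "33" (vote share 0.5), while the harmonic score picks "42" (about 0.43 versus 0.20 for "33"), because "42" is well supported in both groups. The resulting advantages are positive for the three anchor rollouts that answered "42" and negative for the rest.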
This demonstrates that Dual Consensus produces more reliable supervision signals than naive majority vote.

3.2.2 Test-time Adaptation

Figure 6: Label accuracy curves (smoothed) of test-time adaptation for DCRL and TTRL across different tasks.

Test-time Adaptation (TTA) serves as a critical validation scenario for unsupervised RLVR methods, as it directly evaluates the ability to escape spurious majority bias and generalize to unseen reasoning tasks without labeled data. The performance of TTA is presented in Table 2. We did not include methods such as RESTRAIN (Yu et al., 2025b) and Self-Harmony (Wang et al., 2025) as baselines because their official code has not been released.

| Methods | MATH500 | AIME24 | AMC | GPQA | Average |
|---|---|---|---|---|---|
| Qwen2.5-Math-1.5B | | | | | |
| Vanilla | 30.6 | 5.6 | 23.4 | 15.4 | 18.7 |
| TTRL | 72.9 | 17.0 | 45.3 | 22.2 | 39.3 |
| DCRL (ours) | 74.5 | 17.7 | 46.6 | 22.8 | 40.4 |
| Δ (-TTRL) | ↑2.2% | ↑4.1% | ↑2.8% | ↑2.7% | ↑2.7% |
| Llama3.2-3B-Instruct | | | | | |
| Vanilla | 42.4 | 4.5 | 20.6 | 22.3 | 22.4 |
| TTRL | 59.6 | 10.0 | 26.5 | 28.9 | 31.2 |
| DCRL (ours) | 59.8 | 13.3 | 32.3 | 32.6 | 34.5 |
| Δ (-TTRL) | ↑0.3% | ↑33.0% | ↑21.8% | ↑12.8% | ↑10.5% |
| Qwen3-4B-Base | | | | | |
| Vanilla | 47.4 | 9.3 | 38.3 | 30.7 | 31.4 |
| TTRL | 82.6 | 17.2 | 56.5 | 35.3 | 47.9 |
| DCRL (ours) | 83.4 | 20.6 | 56.5 | 35.6 | 49.0 |
| Δ (-TTRL) | ↑0.9% | ↑19.7% | ↑0.0% | ↑0.8% | ↑2.2% |
| Qwen3-8B-Base | | | | | |
| Vanilla | 61.8 | 12.5 | 48.9 | 32.3 | 38.8 |
| TTRL | 85.7 | 19.8 | 59.8 | 43.1 | 52.1 |
| DCRL (ours) | 86.4 | 22.8 | 61.0 | 44.5 | 53.6 |
| Δ (-TTRL) | ↑0.8% | ↑15.1% | ↑2.0% | ↑3.2% | ↑2.8% |

Table 2: Results (%) of Test-Time Adaptation Trained on Different Datasets: DCRL Consistently Outperforms the TTRL Baseline. All results are evaluated under the same experimental settings and reported as the average pass@1 over 16 independent seeds.

Although TTA still enables generalization to other unseen scenarios with limited data (Shafayat et al., 2025; Zuo et al., 2025), a defining characteristic of TTA is its immunity to overfitting concerns. In this setting, the model's ability to avoid spurious reward signals a priori becomes critically important.
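The Δ (-TTRL) rows in Table 2 read as relative improvements over TTRL rather than absolute point differences (for example, AIME24 on Llama3.2-3B-Instruct moves from 10.0 to 13.3, reported as 33.0%). A minimal sketch of that arithmetic, assuming the formula is (DCRL − TTRL) / TTRL and using the Qwen2.5-Math-1.5B row as input; the helper name is illustrative:

```python
def relative_gain(baseline, ours):
    """Relative improvement of `ours` over `baseline`, in percent."""
    return 100.0 * (ours - baseline) / baseline


# (TTRL, DCRL) scores copied from the Qwen2.5-Math-1.5B rows of Table 2.
qwen25_math = {
    "MATH500": (72.9, 74.5),
    "AIME24": (17.0, 17.7),
    "AMC": (45.3, 46.6),
    "GPQA": (22.2, 22.8),
}

gains = {name: round(relative_gain(ttrl, dcrl), 1)
         for name, (ttrl, dcrl) in qwen25_math.items()}
```

Because the inputs are themselves rounded to one decimal, a recomputed value can differ from the table in the last digit, as happens for AMC here.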
As demonstrated in Table 2, our DCRL matches or outperforms TTRL on every evaluated task, validating the efficacy of our dual consensus mechanism in suppressing misleading signals.

3.3 Ablation Studies

To understand the contribution of each component in our DCRL framework, we conduct a series of ablation studies on the Qwen3-8B-Base model, and the results are summarized in Table 3. More detailed results are shown in Appendix B.

| Method | MATH | GSM8K | AIME24 | MMLU. | GPQA. | Average |
|---|---|---|---|---|---|---|
| DCRL (Full) | 79.2 | 93.3 | 14.7 | 56.7 | 37.9 | 56.3 |
| - w/o Harmonic Election | 78.7 | 93.4 | 14.3 | 56.2 | 37.8 | 56.0 |
| - w/o Conservative Reward | 78.3 | 93.3 | 12.2 | 57.2 | 36.2 | 55.4 |
| - w/o Dynamic Sampling | 76.4 | 90.3 | 14.3 | 52.0 | 34.4 | 53.4 |

Table 3: Ablation Studies to Analyze the Contribution of DCRL Core Modules with the Qwen3-8B-Base Model.

Impact of Harmonic Election: Replacing harmonic mean consensus with simple majority voting from the anchor model alone lowers performance on most benchmarks and on average. This confirms that harmonic election effectively mitigates spurious majority bias by fusing both dominant and diverse exploratory signals to produce more reliable pseudo-labels.

Impact of Conservative Reward: Simplifying our reward design to a binary scheme (1 for correct, 0 otherwise) results in performance degradation, especially on difficult tasks. This demonstrates that our conservative reward, which reserves a modest reward for anchor majority answers, stabilizes training by preventing extreme fluctuations in advantage estimation and avoiding harsh penalties to high-confidence trajectories.

Impact of Dynamic Sampling: Using both anchor and explorer samples for training at all times leads to the worst overall performance.
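The two sampling policies compared in this ablation can be sketched side by side. This is a minimal illustration of the rule in Eq. (15), assuming $\bar{\rho}_t$ is already available as a scalar consensus statistic (its exact definition is not reproduced here); the function names and the string placeholders for rollouts are hypothetical.

```python
def select_training_rollouts(anchor_rollouts, explorer_rollouts, rho_bar, threshold=0.5):
    """Eq. (15): anchor only while rho_bar <= 1/2, anchor plus explorer once rho_bar > 1/2."""
    if rho_bar > threshold:
        return list(anchor_rollouts) + list(explorer_rollouts)
    return list(anchor_rollouts)


def always_union(anchor_rollouts, explorer_rollouts):
    """The 'w/o Dynamic Sampling' ablation: always train on both groups."""
    return list(anchor_rollouts) + list(explorer_rollouts)


# Hypothetical placeholders standing in for sampled trajectories.
anchor = ["a1", "a2"]
explorer = ["e1", "e2"]

early = select_training_rollouts(anchor, explorer, rho_bar=0.3)  # anchor only
late = select_training_rollouts(anchor, explorer, rho_bar=0.8)   # both groups
```

The only difference between the two policies is the low-consensus regime, where the dynamic rule withholds explorer rollouts from the policy update.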
This underscores the importance of dynamic sampling in balancing exploration and exploitation: it excludes noisy signals early on to avoid reward hacking and incorporates high-quality exploration later, ensuring stable training while preserving the ability to escape suboptimal modes.

4 Related Works

Unsupervised RL for LLMs: LLMs can achieve self-improvement without labeled data via two typical unsupervised RL paradigms: determinism-based methods (Prabhudesai et al., 2025; Zhang et al., 2025b) encourage low-entropy and high-confidence predictions for performance sharpening, while aggregation-based methods (Zhang et al., 2025c; Yu et al., 2025b; Wu et al., 2025) assign rewards by cross-sample agreement, which takes cross-sample consistency as the proxy of prediction correctness.

Test-time Adaptation for LLMs: Recent works (Akyürek et al., 2025) have demonstrated that LLMs can leverage reinforcement learning to conduct test-time adaptation (Sun et al., 2020), which effectively enhances model performance on unseen data and even surpasses the performance of standard training protocols. This paradigm (Zuo et al., 2025; Wu et al., 2025; Liu et al., 2025; Zhou et al., 2025; Zhang et al., 2025a) empowers models to dynamically adapt to novel task distributions without access to extra labeled training data.

5 Conclusion

In this paper, we propose DCRL, an unsupervised Reinforcement Learning with Verifiable Rewards (RLVR) framework that transforms intrinsic model robustness into reliable learning signals, enabling LLMs to self-improve on reasoning tasks without annotated data.
By (i) adopting an Unlearn-Then-Explore strategy to break dominant suboptimal reasoning patterns and enhance exploration capability, (ii) leveraging a Harmonic Election mechanism to balance reliability and diversity for robust pseudo-label estimation, and (iii) introducing Adaptive Sampling to dynamically regulate the exploration-exploitation trade-off during training, DCRL effectively mitigates the spurious majority bias, a critical limitation of existing label-free RLVR methods. Empirically, extensive evaluations across diverse LLMs and challenging reasoning benchmarks demonstrate that DCRL consistently outperforms current determinism-based and aggregation-based approaches, which paves a scalable path for LLM self-improvement without external supervision.

Limitations

Although DCRL successfully mitigates spurious majority issues and boosts reasoning performance via dual consensus and enhanced exploration, it still has key limitations when encountering severe systematic prior bias, where both anchor and explorer signals converge to a consistent spurious consensus. This provides little corrective supervision and can even reinforce misleading reasoning patterns through policy optimization. Moreover, its performance gains diminish for extremely complex out-of-distribution reasoning tasks that deviate far from the model's pretraining distribution, as the anchor-explorer mechanism fails to reconstruct novel reasoning paths and dual consensus signals become unreliable.

References

Ekin Akyürek, Mehul Damani, Adam Zweiger, Linlu Qiu, Han Guo, Jyothish Pari, Yoon Kim, and Jacob Andreas. 2025. The surprising effectiveness of test-time training for few-shot learning. Preprint.

Liang Chen, Xueting Han, Qizhou Wang, Bo Han, Jing Bai, Hinrich Schutze, and Kam-Fai Wong. 2025. Eepo: Exploration-enhanced policy optimization via sample-then-forget. Preprint, arXiv:2510.05837.

Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. 2021. Training verifiers to solve math word problems. Preprint.

Ganqu Cui, Yuchen Zhang, Jiacheng Chen, Lifan Yuan, Zhi Wang, Yuxin Zuo, Haozhan Li, Yuchen Fan, Huayu Chen, Weize Chen, Zhiyuan Liu, Hao Peng, Lei Bai, Wanli Ouyang, Yu Cheng, Bowen Zhou, and Ning Ding. 2025. The entropy mechanism of reinforcement learning for reasoning language models. Preprint.

DeepSeek-AI, Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, Xiaokang Zhang, Xingkai Yu, Yu Wu, Z. F. Wu, Zhibin Gou, Zhihong Shao, Zhuoshu Li, Ziyi Gao, and 181 others. 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. Preprint.

Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, Amy Yang, Angela Fan, Anirudh Goyal, Anthony Hartshorn, Aobo Yang, Archi Mitra, Archie Sravankumar, Artem Korenev, Arthur Hinsvark, and 542 others. 2024. The llama 3 herd of models. Preprint.

Chaoqun He, Renjie Luo, Yuzhuo Bai, Shengding Hu, Zhen Thai, Junhao Shen, Jinyi Hu, Xu Han, Yujie Huang, Yuxiang Zhang, Jie Liu, Lei Qi, Zhiyuan Liu, and Maosong Sun. 2024. OlympiadBench: A challenging benchmark for promoting AGI with olympiad-level bilingual multimodal scientific problems. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 3828–3850, Bangkok, Thailand. Association for Computational Linguistics.

Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. 2021. Measuring mathematical problem solving with the math dataset. Preprint.

Jiaxin Huang, Shixiang Shane Gu, Le Hou, Yuexin Wu, Xuezhi Wang, Hongkun Yu, and Jiawei Han. 2022. Large language models can self-improve. Preprint.

Hung Le, Yue Wang, Akhilesh Deepak Gotmare, Silvio Savarese, and Steven C. H. Hoi. 2022. Coderl: Mastering code generation through pretrained models and deep reinforcement learning. Preprint.

Aitor Lewkowycz, Anders Andreassen, David Dohan, Ethan Dyer, Henryk Michalewski, Vinay Ramasesh, Ambrose Slone, Cem Anil, Imanol Schlag, Theo Gutman-Solo, Yuhuai Wu, Behnam Neyshabur, Guy Gur-Ari, and Vedant Misra. 2022. Solving quantitative reasoning problems with language models. Preprint.

Jia Liu, ChangYi He, YingQiao Lin, MingMin Yang, FeiYang Shen, and ShaoGuo Liu. 2025. Ettrl: Balancing exploration and exploitation in llm test-time reinforcement learning via entropy mechanism. Preprint.

Sijia Liu, Yuanshun Yao, Jinghan Jia, Stephen Casper, Nathalie Baracaldo, Peter Hase, Yuguang Yao, Chris Yuhao Liu, Xiaojun Xu, Hang Li, Kush R. Varshney, Mohit Bansal, Sanmi Koyejo, and Yang Liu. 2024. Rethinking machine unlearning for large language models. Preprint.

OpenAI, Aaron Jaech, Adam Kalai, Adam Lerer, Adam Richardson, Ahmed El-Kishky, Aiden Low, Alec Helyar, Aleksander Madry, Alex Beutel, Alex Carney, Alex Iftimie, Alex Karpenko, Alex Tachard Passos, Alexander Neitz, Alexander Prokofiev, Alexander Wei, Allison Tam, and 244 others. 2024. Openai o1 system card. Preprint.

Mihir Prabhudesai, Lili Chen, Alex Ippoliti, Katerina Fragkiadaki, Hao Liu, and Deepak Pathak. 2025. Maximizing confidence alone improves reasoning. Preprint.

David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R. Bowman. 2024. GPQA: A graduate-level google-proof q&a benchmark. In First Conference on Language Modeling.
Sheikh Shafayat, Fahim T ajwar , Ruslan Salakhutdi- nov , Jeff Schneider, and Andrea Zanette. 2025. Can large reasoning models self-train? Preprint , Zhihong Shao, Peiyi W ang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Hao wei Zhang, Mingchuan Zhang, Y . K. Li, Y . W u, and Daya Guo. 2024. Deepseekmath: Pushing the limits of mathemati- cal reasoning in open language models . Preprint , 9 Guangming Sheng, Chi Zhang, Zilingfeng Y e, Xibin W u, W ang Zhang, Ru Zhang, Y anghua Peng, Haibin Lin, and Chuan W u. 2025. Hybridflow: A flexible and efficient rlhf frame work . In Pr oceedings of the T wentieth Eur opean Conference on Computer Sys- tems , pages 1279–1297. Y u Sun, Xiaolong W ang, Zhuang Liu, John Miller, Alex ei A. Efros, and Moritz Hardt. 2020. T est-time training for out-of-distribution generalization . Peiyi W ang, Lei Li, Zhihong Shao, R. X. Xu, Damai Dai, Y ifei Li, Deli Chen, Y . W u, and Zhifang Sui. 2024a. Math-shepherd: V erify and reinforce llms step-by-step without human annotations . Preprint , Ru W ang, W ei Huang, Qi Cao, Y usuke Iwasa wa, Y u- taka Matsuo, and Jiaxian Guo. 2025. Self-harmony: Learning to harmonize self-supervision and self- play in test-time reinforcement learning . Pr eprint , Y ubo W ang, Xueguang Ma, Ge Zhang, Y uansheng Ni, Abhranil Chandra, Shiguang Guo, W eiming Ren, Aaran Arulraj, Xuan He, Ziyan Jiang, T ianle Li, Max Ku, Kai W ang, Ale x Zhuang, Rongqi Fan, Xiang Y ue, and W enhu Chen. 2024b. Mmlu-pro: A more robust and challenging multi-task language understanding benchmark . In Advances in Neural Information Pr o- cessing Systems , volume 37, pages 95266–95290. Curran Associates, Inc. Jason W ei, Xuezhi W ang, Dale Schuurmans, Maarten Bosma, Brian Ichter , Fei Xia, Ed Chi, Quoc Le, and Denny Zhou. 2023. Chain-of-thought prompting elic- its reasoning in large language models . Pr eprint , Jianghao W u, Y asmeen George, Jin Y e, Y icheng W u, Daniel F . Schmidt, and Jianfei Cai. 2025. 
Spine: T oken-selectiv e test-time reinforcement learn- ing with entropy-band regularization . Pr eprint , An Y ang, Anfeng Li, Baosong Y ang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Y u, Chang Gao, Chengen Huang, Chenxu Lv , Chujie Zheng, Day- iheng Liu, Fan Zhou, Fei Huang, Feng Hu, Hao Ge, Haoran W ei, Huan Lin, Jialong T ang, and 41 others. 2025. Qwen3 technical report . Pr eprint , An Y ang, Beichen Zhang, Binyuan Hui, Bofei Gao, Bowen Y u, Chengpeng Li, Dayiheng Liu, Jian- hong T u, Jingren Zhou, Junyang Lin, Keming Lu, Mingfeng Xue, Runji Lin, T ianyu Liu, Xingzhang Ren, and Zhenru Zhang. 2024. Qwen2.5-math tech- nical report: T ow ard mathematical expert model via self-improv ement . Pr eprint , Qiying Y u, Zheng Zhang, Ruofei Zhu, Y ufeng Y uan, Xiaochen Zuo, Y u Y ue, W einan Dai, T iantian Fan, Gaohong Liu, Lingjun Liu, Xin Liu, Haibin Lin, Zhiqi Lin, Bole Ma, Guangming Sheng, Y uxuan T ong, Chi Zhang, Mofan Zhang, W ang Zhang, and 16 others. 2025a. Dapo: An open-source llm re- inforcement learning system at scale . Pr eprint , Zhaoning Y u, Will Su, Leitian T ao, Haozhu W ang, Aashu Singh, Hanchao Y u, Jianyu W ang, Hongyang Gao, W eizhe Y uan, Jason W eston, Ping Y u, and Jing Xu. 2025b. Restrain: From spurious votes to sig- nals – self-driv en rl with self-penalization . Preprint , Haoyu Zhang, Jiaxian Guo, Y usuke Iwasaw a, and Y u- taka Matsuo. 2025a. Aqa-ttrl: Self-adaptation in au- dio question answering with test-time reinforcement learning . Pr eprint , Qingyang Zhang, Haitao W u, Changqing Zhang, Peilin Zhao, and Y atao Bian. 2025b. Right question is already half the answer: Fully unsupervised llm rea- soning incentivization . Preprint , arXi v:2504.05812. Zizhuo Zhang, Jianing Zhu, Xinmu Ge, Zihua Zhao, Zhanke Zhou, Xuan Li, Xiao Feng, Jiangchao Y ao, and Bo Han. 2025c. Co-re warding: Stable self- supervised rl for eliciting reasoning in large language models . 
A Implementation Details

A.1 Prompt

We use the same suffix prompt in both training and evaluation to promote clear, step-by-step reasoning:

`\nPlease reason step by step, and put your final answer within \boxed{}.`

A.2 Hyperparameters

The hyperparameter settings for our experiments on Qwen3-8B-Base are shown in Table 4.

Table 4: Hyperparameter Settings for DCRL Framework.

| Hyperparameter | Value |
|---|---|
| Batch size | 128 |
| Mini batch size | 128 |
| Micro batch size | 8 |
| Max prompt length | 4096 |
| Max response length | 3072 |
| Learning rate | $1 \times 10^{-6}$ |
| LR warmup steps ratio | 0.1 |
| Learning rate warmup | cosine |
| Optimizer | Adam |
| Temperature | 0.6 |
| Top k | -1 |
| Top p | 0.95 |
| Unlearn LR | $3 \times 10^{-7}$ |
| Number of anchor samples per example | 16 |
| Number of explorer samples per example | 16 |
| Number of samples per example for policy update | 16 |
| Number of samples per example for testing | 16 |
| Use KL loss | False |

A.3 Baseline Implementation

For all baselines, we use the official code provided in their public repositories. For TTRL, we set the learning rate to $1 \times 10^{-6}$ and the warm-up ratio to 0.1 for large-scale unsupervised learning. For Co-Rewarding-I, we adopt the DAPO-Math-14k dataset rephrased by Qwen3-32B, as provided in the original source code. In addition, no external models are used in any baseline experiment. All other hyperparameter settings for the baselines are kept identical to their original configurations.

B Detailed Results

B.1 Detailed Results of Ablation Studies

Detailed results of the ablation studies are shown in Figure 7.

Figure 7: Detailed Results of Ablation Studies with Qwen3-8B-Base, including pass@16 on the training dataset and label accuracy.

C Extra Experiments

C.1 Hyperparameter Sensitivity

A key empirical finding from our experiments is that different models require different unlearning learning rates ($\eta_u$). A value that is too large risks disrupting the model's ability to generate valid reasoning trajectories, while one that is too small fails to suppress spurious dominant modes effectively. In this section, we report the detailed performance of the Qwen3-8B-Base model under several unlearning learning rates, which validates the robustness of our Unlearn-Then-Explore strategy and the rationale behind our specific hyperparameter selection. Results are shown in Table 5.
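
For readers who want the settings in machine-readable form, the values reported in Table 4 and the unlearning learning rates compared in Table 5 can be collected into a small configuration object. This is only a sketch: the class name `DCRLConfig`, the field names, and the constant `UNLEARN_LR_SWEEP` are hypothetical labels chosen for illustration and are not taken from the authors' code.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DCRLConfig:
    """Hypothetical container for the values listed in Table 4."""
    batch_size: int = 128
    mini_batch_size: int = 128
    micro_batch_size: int = 8
    max_prompt_length: int = 4096
    max_response_length: int = 3072
    learning_rate: float = 1e-6
    lr_warmup_steps_ratio: float = 0.1
    lr_warmup_style: str = "cosine"   # "Learning rate warmup" row of Table 4
    optimizer: str = "Adam"
    temperature: float = 0.6
    top_k: int = -1                   # value as reported in Table 4
    top_p: float = 0.95
    unlearn_lr: float = 3e-7          # eta_u, the unlearning learning rate
    n_anchor_samples: int = 16
    n_explorer_samples: int = 16
    n_policy_update_samples: int = 16
    n_test_samples: int = 16
    use_kl_loss: bool = False


# Unlearning learning rates compared in Table 5.
UNLEARN_LR_SWEEP = (1e-7, 3e-7, 5e-7, 1e-6)

base_config = DCRLConfig()
# One config per sweep point; everything except eta_u is held fixed.
sweep_configs = [replace(base_config, unlearn_lr=lr) for lr in UNLEARN_LR_SWEEP]
```

Keeping the object frozen and deriving sweep points with `replace` makes it explicit that only $\eta_u$ varies between the runs being compared.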

C.2 Comparison of Different Consensus Strategies

To further validate the effectiveness of our proposed Harmonic Election mechanism, we compare it against several alternative consensus strategies. These strategies differ in how they aggregate the signals from the anchor and explorer models to select the final pseudo-label $y^*$. The key insight is that a valid reasoning path should be robust, i.e., supported by both the dominant mode (anchor) and the exploratory distribution (explorer). We evaluate the following strategies:

• Majority Vote (Anchor Only): Simply select the majority answer from the anchor model's rollouts.
• Majority Vote (Anchor + Explorer): A simple aggregation strategy that pools all rollouts from both the anchor and explorer models and selects the majority answer.
• Harmonic Mean (Ours): Our proposed strategy, which selects the answer that maximizes the harmonic mean of its probabilities in the anchor and explorer distributions.

The results are presented in Table 6 and Figure 8. We observe that simply pooling all samples (Anchor + Explorer) does not improve performance and is in fact detrimental: the average score drops from 56.0 (Anchor Only) to 51.5, because pooling does not filter out spurious signals. In contrast, our harmonic mean strategy achieves the best average score (56.3), indicating a better balance between reliability and diversity in pseudo-label selection.

Figure 8: Curves of Different Consensus Strategies for Pseudo-Label Selection on Qwen3-8B-Base.

D Why Does Dual Consensus Work?

We formally show that, under mild and realistic assumptions, the dual consensus pseudo-label selection mechanism achieves higher accuracy than naive majority vote by mitigating spurious majority bias.

D.1 Problem Setup & Definitions

Let $\mathcal{A}$ be the set of candidate answers, and let $y_{\text{true}} \in \mathcal{A}$ be the ground-truth answer.

• $\pi_{\text{anchor}}(a)$: Probability of answer $a$ under the anchor model.
• $\pi_{\text{explorer}}(a)$: Probability of answer $a$ under the explorer model (after unlearning).
• $p_0(a)$, $p_1(a)$: Empirical probabilities of $a$ from $G$ rollouts of the anchor and explorer models, respectively.
• Majority Vote: $\hat{y}_{\text{MV}} = \arg\max_a p_0(a)$.
• Dual Consensus: $y^*_{\text{DC}} = \arg\max_a S(a)$, where $S(a) = \frac{2\, p_0(a)\, p_1(a)}{p_0(a) + p_1(a)}$ is the harmonic mean score.

D.2 Key Assumptions

We introduce three realistic assumptions for LLMs with spurious majority bias.

Assumption 1 (Spurious Majority Bias): There exists a spurious dominant answer $y_{\text{sp}} \neq y_{\text{true}}$ such that:

$$\pi_{\text{anchor}}(y_{\text{sp}}) \gg \pi_{\text{anchor}}(y_{\text{true}})$$

This is the core failure mode of majority vote.

Assumption 2 (Effective Unlearning): The explorer model suppresses the spurious answer but preserves the true answer:

$$\pi_{\text{explorer}}(y_{\text{sp}}) \ll \pi_{\text{anchor}}(y_{\text{sp}}), \qquad \frac{\pi_{\text{explorer}}(y_{\text{true}})}{\pi_{\text{anchor}}(y_{\text{true}})} \gg \frac{\pi_{\text{explorer}}(y_{\text{sp}})}{\pi_{\text{anchor}}(y_{\text{sp}})}$$

The ratio inequality implies that the true answer is more robust to unlearning.

| Unlearn LR ($\eta_u$) | MATH | GSM8K | AIME24 | MMLU. | GPQA. | Average |
|---|---|---|---|---|---|---|
| $1 \times 10^{-7}$ | 78.6 | 92.7 | 12.6 | 55.5 | 37.2 | 55.3 |
| $3 \times 10^{-7}$ | 79.2 | 93.3 | 14.7 | 56.7 | 37.9 | 56.3 |
| $5 \times 10^{-7}$ | 77.6 | 92.7 | 11.5 | 54.3 | 36.4 | 54.5 |
| $1 \times 10^{-6}$ (Failed) | 67.3 | 88.1 | 12.1 | 50.2 | 35.1 | 50.5 |

Table 5: Sensitivity Analysis of Unlearning Learning Rate ($\eta_u$) on Qwen3-8B-Base.

| Consensus Strategy | MATH | GSM8K | AIME24 | MMLU. | GPQA. | Avg. |
|---|---|---|---|---|---|---|
| Majority Vote (Anchor Only) | 78.7 | 93.4 | 14.3 | 56.2 | 37.8 | 56.0 |
| Majority Vote (Anchor + Explorer) | 64.7 | 90.3 | 14.3 | 52.0 | 34.4 | 51.5 |
| Harmonic Mean (Ours) | 79.2 | 93.3 | 14.7 | 56.7 | 37.9 | 56.3 |

Table 6: Comparison of Different Consensus Strategies for Pseudo-Label Selection on Qwen3-8B-Base.
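
The three consensus strategies compared in Table 6 can be sketched in a few lines of Python. This is a minimal illustration, assuming final answers have already been extracted from each rollout as strings; the function names are hypothetical, and the rollout counts below are made up to show the mechanism (they are not data from the paper's experiments). In the toy example, "42" plays the role of a spurious dominant answer that is suppressed after unlearning, and "17" the answer that stays supported by both models.

```python
from collections import Counter


def empirical_probs(answers):
    """Empirical answer distribution p(a) from a list of rollout answers."""
    counts = Counter(answers)
    total = len(answers)
    return {a: c / total for a, c in counts.items()}


def majority_vote(answers):
    """Most frequent answer among the given rollouts."""
    return Counter(answers).most_common(1)[0][0]


def harmonic_score(p0, p1):
    """S(a) = 2 * p0 * p1 / (p0 + p1); defined as 0 when both are 0."""
    if p0 + p1 == 0:
        return 0.0
    return 2 * p0 * p1 / (p0 + p1)


def harmonic_election(anchor_answers, explorer_answers):
    """Pick the answer maximizing the harmonic mean of p0(a) and p1(a)."""
    p0 = empirical_probs(anchor_answers)
    p1 = empirical_probs(explorer_answers)
    candidates = sorted(set(p0) | set(p1))  # sorted for deterministic ties
    scores = {a: harmonic_score(p0.get(a, 0.0), p1.get(a, 0.0))
              for a in candidates}
    return max(candidates, key=scores.get), scores


# Toy rollouts with G = 16 per model (illustrative counts only).
anchor = ["42"] * 11 + ["17"] * 4 + ["8"] * 1
explorer = ["42"] * 3 + ["17"] * 8 + ["8"] * 5

anchor_only = majority_vote(anchor)         # anchor-only majority vote
pooled = majority_vote(anchor + explorer)   # anchor + explorer pooled vote
dual, scores = harmonic_election(anchor, explorer)

print(anchor_only, pooled, dual)
```

With these counts, both majority-vote variants still select "42" (11 of 16, and 14 of 32 pooled votes), whereas the harmonic score favors "17" (about 0.333 versus 0.295) because an answer that scores high under only one of the two distributions is penalized.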
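
Assumption 3 (Large-Sample Consistency): For sufficiently large $G$, by the Law of Large Numbers:

$$p_0(a) \xrightarrow{G \to \infty} \pi_{\text{anchor}}(a), \qquad p_1(a) \xrightarrow{G \to \infty} \pi_{\text{explorer}}(a)$$

D.3 Main Result

Theorem: Under Assumptions 1-3, DCRL selects the true answer ($y^*_{\text{DC}} = y_{\text{true}}$), while majority vote selects the spurious answer ($\hat{y}_{\text{MV}} = y_{\text{sp}}$). Thus, $\mathrm{Acc}(y^*_{\text{DC}}) > \mathrm{Acc}(\hat{y}_{\text{MV}})$.

D.4 Proof

Part 1: Majority Vote Converges to the Spurious Answer. By Assumption 1, $\pi_{\text{anchor}}(y_{\text{sp}}) \gg \pi_{\text{anchor}}(y_{\text{true}})$, and $y_{\text{sp}}$ is the dominant answer under the anchor model. By Assumption 3, for large $G$ we have $p_0(y_{\text{sp}}) > p_0(a)$ for all $a \in \mathcal{A}$ with $a \neq y_{\text{sp}}$. Thus $\hat{y}_{\text{MV}} = \arg\max_a p_0(a) = y_{\text{sp}}$.

Part 2: Dual Consensus Converges to the True Answer. We show $S(y_{\text{true}}) > S(y_{\text{sp}})$. For large $G$:

$$S(a) \to \tilde{S}(a) = \frac{2\, \pi_{\text{anchor}}(a)\, \pi_{\text{explorer}}(a)}{\pi_{\text{anchor}}(a) + \pi_{\text{explorer}}(a)}.$$

Define the robustness ratios

$$r_{\text{sp}} = \frac{\pi_{\text{explorer}}(y_{\text{sp}})}{\pi_{\text{anchor}}(y_{\text{sp}})}, \qquad r_{\text{true}} = \frac{\pi_{\text{explorer}}(y_{\text{true}})}{\pi_{\text{anchor}}(y_{\text{true}})},$$

where, by Assumption 2, $r_{\text{true}} \gg r_{\text{sp}} \to 0$. Substituting the ratios into $\tilde{S}(a)$ gives:

$$\tilde{S}(y_{\text{sp}}) = \frac{2\, \pi_{\text{anchor}}(y_{\text{sp}}) \cdot r_{\text{sp}}\, \pi_{\text{anchor}}(y_{\text{sp}})}{\pi_{\text{anchor}}(y_{\text{sp}}) + r_{\text{sp}}\, \pi_{\text{anchor}}(y_{\text{sp}})} = \frac{2\, r_{\text{sp}} \cdot \pi_{\text{anchor}}(y_{\text{sp}})}{1 + r_{\text{sp}}},$$

$$\tilde{S}(y_{\text{true}}) = \frac{2\, \pi_{\text{anchor}}(y_{\text{true}}) \cdot r_{\text{true}}\, \pi_{\text{anchor}}(y_{\text{true}})}{\pi_{\text{anchor}}(y_{\text{true}}) + r_{\text{true}}\, \pi_{\text{anchor}}(y_{\text{true}})} = \frac{2\, r_{\text{true}} \cdot \pi_{\text{anchor}}(y_{\text{true}})}{1 + r_{\text{true}}}.$$

By Assumption 2, $r_{\text{sp}} \to 0$, which implies $\tilde{S}(y_{\text{sp}}) \to 0$. Since $\pi_{\text{anchor}}(y_{\text{true}}) > 0$ and $r_{\text{true}} > 0$, we have $\tilde{S}(y_{\text{true}}) > 0$. Concretely, comparing the two closed forms, $\tilde{S}(y_{\text{true}}) > \tilde{S}(y_{\text{sp}})$ holds whenever $\frac{r_{\text{true}}\, \pi_{\text{anchor}}(y_{\text{true}})}{1 + r_{\text{true}}} > \frac{r_{\text{sp}}\, \pi_{\text{anchor}}(y_{\text{sp}})}{1 + r_{\text{sp}}}$, which is satisfied once $r_{\text{sp}}$ is sufficiently small. Thus $\tilde{S}(y_{\text{true}}) > \tilde{S}(y_{\text{sp}})$, so $y^*_{\text{DC}} = \arg\max_a S(a) = y_{\text{true}}$.

Dual Consensus enforces a robustness constraint: valid answers must be supported by both the anchor and the explorer. This eliminates spurious answers that are fragile to unlearning and allows Dual Consensus to outperform Majority Vote.

E The Use of Large Language Models

We used large language models (LLMs) only to polish writing and improve textual clarity. No LLM was applied to research idea generation, experimental design, data analysis, or result derivation.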
All scientific contributions (conceptualization, methodology, experiments, and conclusions) were independently developed by the authors in full.
