Robust Generative Audio Quality Assessment: Disentangling Quality from Spurious Correlations

The rapid proliferation of AI-Generated Content (AIGC) has necessitated robust metrics for perceptual quality assessment. However, automatic Mean Opinion Score (MOS) prediction models are often compromised by data scarcity, predisposing them to learn…

Authors: Kuan-Tang Huang, Chien-Chun Wang, Cheng-Yeh Yang

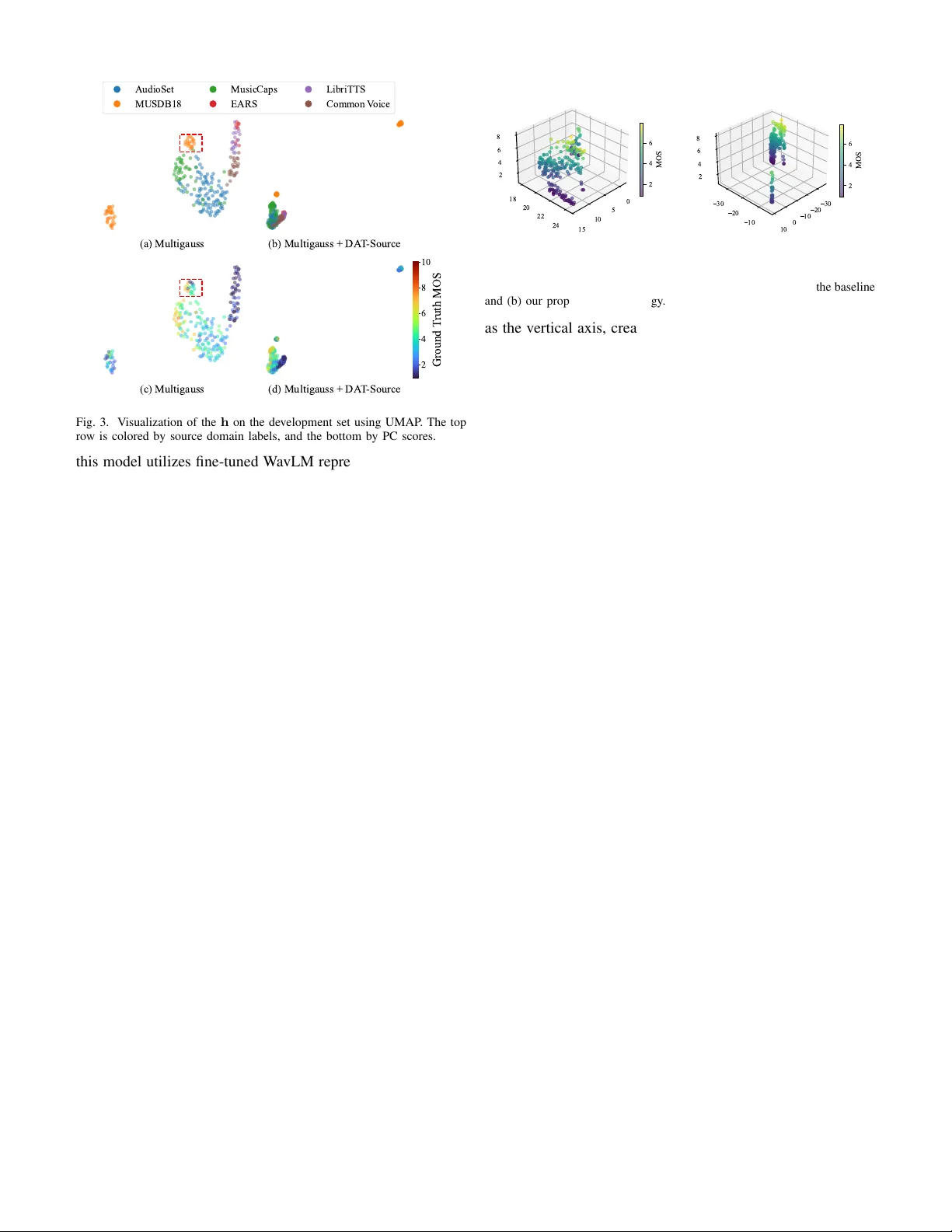

Kuan-Tang Huang∗, Chien-Chun Wang∗‡, Cheng-Yeh Yang∗, Hung-Shin Lee§, Hsin-Min Wang†, and Berlin Chen∗

∗ Dept. Computer Science and Information Engineering, National Taiwan Normal University, Taiwan
† Institute of Computer Science, Academia Sinica, Taiwan
‡ E.SUN Financial Holding Co., Ltd., Taiwan
§ United Link Co., Ltd., Taiwan

Abstract: The rapid proliferation of AI-Generated Content (AIGC) has necessitated robust metrics for perceptual quality assessment. However, automatic Mean Opinion Score (MOS) prediction models are often compromised by data scarcity, predisposing them to learn spurious correlations, such as dataset-specific acoustic signatures, rather than generalized quality features. To address this, we leverage domain adversarial training (DAT) to disentangle true quality perception from these nuisance factors. Unlike prior works that rely on static domain priors, we systematically investigate domain definition strategies ranging from explicit metadata-driven labels to implicit data-driven clusters. Our findings reveal that there is no "one-size-fits-all" domain definition; instead, the optimal strategy is highly dependent on the specific MOS aspect being evaluated. Experimental results demonstrate that our aspect-specific domain strategy effectively mitigates acoustic biases, significantly improving correlation with human ratings and achieving superior generalization on unseen generative scenarios.

Index Terms: audio quality assessment, mean opinion score, domain adversarial training, robust generalization.

I. INTRODUCTION

The exponential growth of AI-Generated Content (AIGC) has revolutionized the multimedia landscape. Particularly within the audio modality, generative audio has established itself as a cornerstone of modern content creation.
This domain encompasses a diverse array of tasks, ranging from text-to-speech (TTS) to text-to-music (TTM) and universal text-to-audio (TTA). These advanced technologies are now driving a wide range of applications, such as creating dynamic soundscapes for immersive streaming, generating background scores for personalized media, and enabling interactive audio for virtual environments. However, accurately evaluating the perceptual quality of these generated sounds remains a critical challenge. While subjective listening tests yielding mean opinion scores (MOS) represent the gold standard for assessment, they are notoriously expensive and time-consuming to conduct. Consequently, the development of automatic MOS prediction models has become indispensable, serving as a scalable and efficient proxy for human ratings [1]–[3].

However, the reliability of these data-driven models is often severely compromised by the lack of large-scale subjectively labeled data. In such low-resource regimes, models are predisposed to learn spurious correlations rather than generalized quality features. Within limited training sets, high subjective ratings may coincidentally align with specific, non-quality-related acoustic characteristics intrinsic to the source data. For instance, a model might erroneously learn to associate high quality with the specific timbre of a musical instrument, the background ambiance of an environmental recording, or a particular room reverberation pattern in speech, simply because these traits dominate the highly rated samples in the limited training corpus. Consequently, the model overfits to these nuisance factors, treating them as proxies for quality. When deployed to unseen data where these specific acoustic signatures are absent, the model's predictions become unreliable, highlighting the need to disentangle true quality perception from these domain-specific biases.

The necessity of disentangling quality from confounding factors is echoed in adjacent fields. In video quality assessment, [4] attempts to separate aesthetics from technical quality by engineering specific input views. Similarly, in speaker recognition, recent work [5] has proposed specialized Gaussian inference layers to disentangle static speaker traits from dynamic linguistic content. However, these approaches often rely on either intricate, hand-crafted heuristics or complex, task-specific architectural designs to define the separation boundaries. To avoid reliance on rigid heuristics or task-specific designs, we introduce a generalized domain adversarial training (DAT) [6] framework capable of automatically purging dataset-induced biases. With this strategy, we force the model to discard these nuisance factors within the latent space, retaining only the features pertinent to intrinsic perceptual quality.

While DAT has been widely adopted in speech and audio tasks, such as addressing variations arising from different noise conditions [7], accents [8], or recording corpora [9], [10], these works typically treat domain definition as a static prior. In contrast, we argue that for MOS prediction under data scarcity, the optimal definition of a "domain" is not self-evident. A critical yet underexplored question arises: what constitutes the most effective "domain" for adversarial training? Crucially, our experiments suggest there is no "one-size-fits-all" answer; instead, we find that different MOS aspects necessitate specific domain definition strategies to maximize prediction generalization.

To this end, we systematically investigate three distinct domain definition strategies: 1) Source-based, which leverages explicit metadata (e.g., dataset identity) as static priors; 2) K-means clustering, which discovers implicit, data-driven acoustic patterns in the latent space, where we further examine the impact of cluster granularity ($K$) on adaptation performance; and 3) Random assignment, serving as a control baseline to validate the necessity of meaningful domain structures. Our findings reveal that the choice of domain definition is pivotal. By identifying the optimal adversarial target among these strategies, we effectively mitigate specific acoustic biases and achieve superior generalization across diverse, unseen generative scenarios.

The main contributions of this study¹ are summarized as follows:
• Addressing Spurious Correlations: We identify that data scarcity causes overfitting to acoustic signatures, and we propose a DAT framework to mitigate this without complex heuristics.
• Systematic Investigation of Domain Definitions: We systematically explore effective adversarial targets, ranging from explicit metadata to implicit data-driven clusters.
• Generalizability: We demonstrate that our findings on domain granularity are robust across various backbone models.

II. PROPOSED METHOD

We propose a robust MOS prediction framework that incorporates Domain Adversarial Training (DAT) to learn quality-aware representations invariant to domain shifts. In this section, we detail the model architecture and systematically investigate domain definition strategies.

A. Model Architecture

The overall framework is illustrated in Fig. 1. The model comprises three key components: a pre-trained self-supervised learning (SSL) feature extractor, a quality prediction backbone (i.e., MultiGauss), and a domain adversarial branch.

SSL-Based Feature Extractor: Given the vast diversity of pre-trained SSL models, we specifically select the XLS-R 2B model [11] due to its exceptional model capacity and training scale. While it is primarily trained on speech, previous works [12], [13] show that speech-based SSL representations can accurately assess the quality of vinyl music collections and encode general audio events, such as environmental sounds, with high fidelity. By leveraging this broad acoustic knowledge, we utilize XLS-R as a general-purpose encoder to ensure stable quality assessment across the diverse speech, music, and audio conditions in our study.

MultiGauss Backbone (MOS Predictor): We leverage the state-of-the-art MultiGauss framework [14] as our backbone. It extracts a flattened latent representation $h$ to predict a multivariate mean vector $m$ (representing quality scores across multiple aspects) and a covariance matrix $\Lambda$. The matrix $\Lambda$ explicitly models predictive uncertainty and captures correlations between these quality dimensions. While $\Lambda$ models predictive uncertainty, our analysis focuses on the latent representation $h$ and the mean vector $m$ to align directly with MOS-based evaluation metrics. This ensures that the domain adaptation process prioritizes the most salient features responsible for quality score prediction.

Fig. 1. The proposed model architecture with DAT. (Block labels in the figure: SSL-Based Feature Extractor; Encoder with two Conv1D (k=5, c=32), LayerNorm, ReLU, Maxpool blocks, Dropout, and Flatten; MOS Branch; Domain Branch; GRL; Dense 64, ReLU; Dense 32, ReLU; Dense 14; Dense D; Cholesky Transformation; Affine Transformation. Legend: trainable vs. frozen modules; modules active only during training vs. active during training and inference.)

¹ Our code: https://github.com/610494/domainGRL.
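
Fig. 1 attaches the domain branch through a gradient reversal layer (GRL) [15]. As a rough, framework-free sketch of what such a layer does (this is not the authors' implementation), the Python below replaces the stacked dense layers with a hypothetical single linear classifier and replaces autograd with hand-written gradients. The names `GradientReversal`, `domain_loss`, and `adversarial_gradients`, the toy values, and the choice to apply λ as a loss weight as in Eq. (1), rather than inside the reversal layer, are all assumptions made for illustration.

```python
import math


class GradientReversal:
    """Identity in the forward pass; flips the gradient sign in the backward pass."""

    def forward(self, h):
        return list(h)

    def backward(self, grad_output):
        return [-g for g in grad_output]


def softmax(logits):
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def domain_loss(h, weights, label):
    """Cross-entropy of a linear domain classifier (stand-in for the dense stack)."""
    logits = [sum(w * x for w, x in zip(row, h)) for row in weights]
    probs = softmax(logits)
    return -math.log(probs[label]), probs


def adversarial_gradients(h, weights, label, lam):
    """Return the loss and the gradients of lam * L_adv for classifier and encoder."""
    grl = GradientReversal()
    loss, probs = domain_loss(grl.forward(h), weights, label)
    # Softmax cross-entropy gradient w.r.t. the logits: probs - one_hot(label).
    dlogits = [p - (1.0 if j == label else 0.0) for j, p in enumerate(probs)]
    # The classifier sees the ordinary gradient and learns to predict the domain.
    grad_weights = [[lam * dl * x for x in h] for dl in dlogits]
    # Gradient arriving at the GRL output ...
    grad_h = [lam * sum(weights[j][i] * dlogits[j] for j in range(len(weights)))
              for i in range(len(h))]
    # ... is sign-flipped before it reaches the encoder.
    grad_encoder = grl.backward(grad_h)
    return loss, grad_weights, grad_h, grad_encoder


h = [1.0, -2.0]                    # toy latent representation
W = [[0.5, 0.0], [0.0, 0.5]]       # toy classifier for D = 2 domains
loss, grad_W, grad_h, grad_enc = adversarial_gradients(h, W, label=0, lam=0.5)

step = 0.1
# Encoder side: descending along the reversed gradient makes domains harder to tell apart.
h_new = [x - step * g for x, g in zip(h, grad_enc)]
loss_after_encoder_step, _ = domain_loss(h_new, W, 0)
# Classifier side: an ordinary descent step makes domains easier to tell apart.
W_new = [[w - step * g for w, g in zip(rw, rg)] for rw, rg in zip(W, grad_W)]
loss_after_classifier_step, _ = domain_loss(h, W_new, 0)
```

With these toy values the encoder-side step raises the domain loss while the classifier-side step lowers it, which is the opposing pressure a GRL is meant to create. In an autograd framework the same effect is normally obtained with a custom backward function instead of manual gradients.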

Domain Discriminator: To disentangle domain-specific information, we introduce a parallel "Domain Branch" connected to the shared representation $h$ via a Gradient Reversal Layer (GRL) [15]. This branch comprises stacked dense layers culminating in an output layer of size $D$ (where $D$ denotes the number of domains), mapping the features to a domain prediction vector $d$. During training, the GRL reverses the gradients flowing from this discriminator to the encoder, effectively forcing $h$ to become invariant to the domain distinctions.

Optimization Objective: The entire framework is trained end-to-end using a multi-task objective:

$$\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{task}} + \lambda \mathcal{L}_{\text{adv}} \tag{1}$$

Following MultiGauss [14], we employ the Gaussian Negative Log-Likelihood (GNLL) loss as $\mathcal{L}_{\text{task}}$ to estimate the multivariate parameters ($m$ and $\Lambda$). $\mathcal{L}_{\text{adv}}$ denotes the standard cross-entropy loss for domain classification. Through the GRL, the gradients from $\mathcal{L}_{\text{adv}}$ are reversed during backpropagation, thereby forcing the encoder to learn domain-invariant representations while minimizing the prediction error.

B. Domain Definition Strategies

The effectiveness of the GRL hinges on the definition of the adversarial target. Unlike prior works that rely on fixed domain labels, we systematically explore three strategies covering explicit, latent, and stochastic definitions:
• DAT-Source (Dataset Origin): This explicit strategy utilizes the inherent dataset identifiers (e.g., AudioSet vs. LibriTTS) as domain labels ($N = 6$). It aims to capture macro-level variations in production environments, such as differences in recording equipment, codec standards, and post-processing pipelines specific to each data source.
• DAT-Kmeans (Latent Acoustic): To capture acoustic variations that transcend dataset boundaries, we employ unsupervised K-means clustering on the pre-trained acoustic embeddings extracted from the training set. Specifically, we utilize the last-layer representations from the same frozen SSL backbone used for MOS prediction, applying temporal mean pooling to obtain global utterance-level embeddings for standard K-means clustering (using Euclidean distance). We treat the number of clusters $K$ as a tunable hyperparameter representing the granularity of the domain definition. We explore a range of granularities (e.g., $K \in \{2, \ldots, 10\}$), selected to encompass the number of explicit source datasets ($N = 6$), to identify the optimal resolution for capturing fine-grained, implicit acoustic textures, such as reverberation patterns or background noise profiles, that are often not annotated but significantly impact the domain distribution.
• DAT-Random (Perturbation): This strategy assigns random labels to samples. It serves as a baseline to verify whether performance gains stem from meaningful domain disentanglement or merely from the stochastic regularization effect of the gradient reversal mechanism.

III. EXPERIMENTAL SETUP

A. Dataset

We evaluate our proposed method on the AES-Natural dataset [16], utilizing a rigorous split protocol to benchmark generalization from natural acoustic priors to unseen generative scenarios. The Training and Validation sets consist of natural recordings stratified into three categories: Speech (EARS, LibriTTS, and Common Voice), Music (MUSDB18 and MusicCaps), and General Audio (AudioSet). Due to source availability, the final training set comprises 2,544 clips (approx. 31.6 hours), and the validation set consists of 232 clips (approx. 3.2 hours). In contrast, the Evaluation set is strictly disjoint, containing 3,060 machine-generated audio samples (approx. 7.9 hours) synthesized by various generative models. To ensure reliable ground truth, all samples were annotated by a panel of 10 expert listeners possessing professional backgrounds in audio engineering or music theory.
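
Returning to the three domain definition strategies of Sec. II-B, the sketch below shows one way the domain labels could be produced before adversarial training. It is a stdlib-only illustration, not the authors' pipeline: `source_labels`, `mean_pool`, `kmeans_labels`, and `random_labels` are hypothetical helper names, the farthest-point initialisation is our own choice to keep the example deterministic, and real embeddings would come from the frozen SSL backbone rather than the toy 2-D vectors used here.

```python
import random


def source_labels(sources):
    """DAT-Source: map each dataset name to an integer domain id."""
    ids = {name: i for i, name in enumerate(sorted(set(sources)))}
    return [ids[s] for s in sources]


def _sqdist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _mean(vectors):
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def mean_pool(frames):
    """Temporal mean pooling: (T x dim) frame features -> one utterance vector."""
    return _mean(frames)


def kmeans_labels(embeddings, k, iters=50):
    """DAT-Kmeans: Euclidean K-means (Lloyd's algorithm) on utterance embeddings."""
    # Deterministic farthest-point initialisation (illustrative choice;
    # assumes at least k distinct points).
    centroids = [list(embeddings[0])]
    while len(centroids) < k:
        far = max(embeddings, key=lambda e: min(_sqdist(e, c) for c in centroids))
        centroids.append(list(far))
    labels = None
    for _ in range(iters):
        # Assignment step: nearest centroid for every embedding.
        new = [min(range(k), key=lambda j: _sqdist(e, centroids[j]))
               for e in embeddings]
        if new == labels:  # converged
            break
        labels = new
        # Update step: recompute each non-empty cluster's centroid.
        for j in range(k):
            members = [e for e, lab in zip(embeddings, labels) if lab == j]
            if members:
                centroids[j] = _mean(members)
    return labels


def random_labels(n, k, seed=0):
    """DAT-Random: assign each sample one of k domain ids uniformly at random."""
    rng = random.Random(seed)
    return [rng.randrange(k) for _ in range(n)]


# Toy utterance embeddings forming two acoustic "textures".
embeddings = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1],
              [5.0, 5.0], [5.1, 5.0], [5.0, 5.1]]
cluster_ids = kmeans_labels(embeddings, k=2)
control_ids = random_labels(len(embeddings), k=2)
dataset_ids = source_labels(["EARS", "AudioSet", "EARS",
                             "LibriTTS", "AudioSet", "EARS"])
```

Each function returns one integer per training sample, so any of the three label lists can be fed to the same domain classifier; only the number of output units changes with the number of distinct labels.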

Departing from traditional MOS datasets that strictly evaluate technical degradation, AES-Natural characterizes audio perception along four distinct dimensions. This multi-dimensional schema allows us to disentangle low-level signal fidelity from inherent content attributes:
• Production Quality (PQ): Reflects the low-level technical fidelity of the signal. This metric focuses on physical signal degradations, such as noise floor, distortion, and bandwidth limitations, which are typically dependent on the recording equipment and channel characteristics.
• Production Complexity (PC): Quantifies the structural richness and density of the audio content (e.g., the number of active stems in a mix or the layering of sound effects). While strongly correlated with content type, simple models may risk forming spurious correlations between this metric and specific dataset signatures rather than the content itself.
• Content Enjoyment (CE): Represents the intrinsic aesthetic appeal and engagement value of the audio. As an intrinsic aesthetic attribute, CE abstracts away from simple signal fidelity but can be susceptible to bias arising from listener preferences for specific genres or recording styles.
• Content Usefulness (CU): Assesses the functional utility of the audio for its intended application (e.g., speech intelligibility or atmospheric immersion for environmental sounds).

B. Training Setup

To verify generalizability, we integrate the DAT strategy into two distinct backbone architectures. First, for MultiGauss [14], we follow the original implementation, training for 30 epochs with a batch size of 64 and a learning rate of $1 \times 10^{-4}$. The best checkpoint is selected based on the lowest validation loss. Second, we evaluate Audiobox-Aesthetics [16], an architecture that directly predicts quality from multi-layer WavLM features without an additional encoder.
Unlike the frozen backbone in MultiGauss, we fully fine-tune the WavLM encoder for 200 epochs (batch size 16, learning rate $1 \times 10^{-5}$) to enable adversarial gradient propagation. Both models are optimized using AdamW with zero weight decay.

The adversarial loss weight $\lambda$ acts as a hyperparameter controlling the trade-off between task performance and domain invariance. Through empirical validation, we set $\lambda = 0.5$ for the DAT-Source strategy to effectively bridge the significant distribution gaps between distinct datasets. Conversely, for the DAT-Kmeans and DAT-Random strategies, we set $\lambda = 0.1$. This reduced weight is crucial for the DAT-Kmeans strategy to prevent over-regularization, since the implicit clusters may partially encode quality-related information that should not be aggressively suppressed. For the DAT-Kmeans strategy, we set $K = 8$ as the default granularity, which is further analyzed in Sec. IV-C.

C. Evaluation Metrics

To comprehensively assess our model, we report system-level Mean Squared Error (MSE) and Spearman's Rank Correlation Coefficient (SRCC). Following the evaluation protocols of prominent MOS prediction challenges [21], all metrics are calculated by first averaging the predictions and ground-truth labels for all utterances belonging to the same generative system. SRCC is prioritized as the primary metric to reflect the model's capability to reliably rank diverse generative systems. By combining it with system-level MSE, which assesses absolute precision and model calibration, we ensure a robust evaluation that disentangles ranking consistency from absolute scale errors in cross-domain scenarios.
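
The system-level protocol described above (average per generative system, then compute MSE and SRCC over systems) can be sketched in plain Python as follows. This is an illustrative sketch rather than any challenge's official scoring script: the function names and the toy data are assumptions, and ties are handled with average ranks.

```python
import math
from collections import defaultdict


def system_means(system_ids, values):
    """Average utterance-level values within each generative system."""
    sums, counts = defaultdict(float), defaultdict(int)
    for sid, value in zip(system_ids, values):
        sums[sid] += value
        counts[sid] += 1
    return {sid: sums[sid] / counts[sid] for sid in sums}


def average_ranks(xs):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = shared
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation; undefined (division by zero) if either input is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def system_level_metrics(system_ids, predictions, targets):
    """Return (MSE, SRCC) computed on per-system averages."""
    pred = system_means(system_ids, predictions)
    true = system_means(system_ids, targets)
    systems = sorted(pred)
    p = [pred[s] for s in systems]
    t = [true[s] for s in systems]
    mse = sum((x - y) ** 2 for x, y in zip(p, t)) / len(systems)
    # Spearman's rho is the Pearson correlation of the rank-transformed values.
    srcc = pearson(average_ranks(p), average_ranks(t))
    return mse, srcc


systems = ["A", "A", "B", "B", "C", "C"]
targets = [2.0, 2.0, 5.0, 5.0, 7.0, 9.0]
preds = [3.0, 3.0, 6.0, 6.0, 9.0, 9.0]  # correct ordering, constant +1 offset
mse, srcc = system_level_metrics(systems, preds, targets)
```

In this toy case the per-system means are offset by exactly one point, so SRCC is 1 while MSE is 1: the ranking is perfect even though the scale is miscalibrated, which is the separation between the two metrics that the text relies on.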

TABLE I. Performance comparison with existing baselines across four aspects: Production Quality (PQ, technical), Production Complexity (PC, content), Content Enjoyment (CE, content), and Content Usefulness (CU, functional). The symbol † indicates results cited from original papers. Best performance in each column is highlighted in bold.

| System / Strategy | PQ MSE ↓ | PQ SRCC ↑ | PC MSE ↓ | PC SRCC ↑ | CE MSE ↓ | CE SRCC ↑ | CU MSE ↓ | CU SRCC ↑ |
|---|---|---|---|---|---|---|---|---|
| Existing Baselines (SOTA) | | | | | | | | |
| QAMRO † [17] | - | 0.883 | - | 0.942 | - | 0.869 | - | 0.852 |
| DRASP † [18] | - | 0.900 | - | 0.936 | - | 0.890 | - | 0.911 |
| AESA-Net † [19] | 0.635 | 0.896 | **0.198** | 0.928 | 3.991 | 0.904 | **0.533** | 0.894 |
| MultiGauss [14] | 0.557 | 0.942 | 1.093 | 0.947 | 1.841 | 0.938 | 0.945 | 0.961 |
| + L2 Regularization [20] | 0.472 | 0.941 | 0.962 | 0.944 | 1.634 | 0.945 | 0.874 | 0.962 |
| + High Dropout | 0.649 | 0.944 | 1.894 | 0.945 | 2.182 | 0.965 | 1.060 | 0.947 |
| + DAT-Source (Ours) | 0.413 | 0.940 | 0.747 | **0.969** | **1.581** | **0.967** | 0.855 | 0.959 |
| + DAT-Kmeans (Ours) | 0.479 | **0.953** | 0.928 | 0.945 | 1.605 | 0.952 | 0.835 | **0.963** |
| + DAT-Random (Ours) | **0.390** | 0.941 | 0.945 | 0.958 | 1.689 | 0.961 | 0.789 | 0.959 |

Fig. 2. Performance comparison on Audiobox-Aesthetics across MSE and SRCC. The results are reported for four aspects: PQ, PC, CE, and CU. (Two panels, MSE and SRCC, each comparing Baseline, DAT-Source, and DAT-Kmeans.)

IV. RESULTS AND DISCUSSION

A. Main results

We evaluate the effectiveness of the proposed domain adversarial training (DAT) framework on the MultiGauss backbone. Table I presents the performance comparison. The results indicate that explicitly disentangling domain information improves model robustness when the domain definition matches the aspect being evaluated. Following [22], a two-sided t-test ($p \le 0.05$) confirms that the performance gains of our proposed DAT strategies are statistically significant compared to the baseline.

Our experiments reveal that the optimal definition of a "domain" is inherently dimension-dependent, reflecting the distinct nature of different perceptual attributes. For inherent content attributes, specifically Production Complexity (PC) and Content Enjoyment (CE), the DAT-Source strategy yields the most significant improvements. Since these attributes exhibit systematic biases (for instance, music datasets inherently yield much higher complexity scores than speech datasets), the baseline model is prone to "shortcut learning" based on dataset signatures. DAT-Source penalizes this reliance, significantly reducing PC MSE from 1.093 to 0.747 while achieving the highest SRCC of 0.969. This improvement stems from forcing the encoder to learn intrinsic structural representations rather than relying on source identity.

In contrast, technical and functional attributes, such as Production Quality (PQ) and Content Usefulness (CU), achieve their best ranking performance under the DAT-Kmeans strategy. Unlike content-related biases, technical degradations (e.g., background noise, reverberation) frequently transcend dataset boundaries and overlap across sources. We observe an interesting trade-off here: while explicit source labels help calibrate the absolute score scale (reducing PQ MSE to 0.413), the latent acoustic clusters discovered by K-means better capture fine-grained texture variations essential for preserving relative rankings. This is evidenced by DAT-Kmeans achieving the highest SRCC of 0.953 for PQ. Thus, for technical metrics where domain distributions overlap, unsupervised acoustic clustering offers a superior adversarial target for refining ranking capabilities.
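
As a quick arithmetic check on the figures quoted above (our own calculation from the Table I entries, not a result stated by the authors), the relative MSE reductions of DAT-Source over the MultiGauss baseline are:

$$
\frac{1.093 - 0.747}{1.093} \approx 31.7\% \ \text{(PC)}, \qquad
\frac{0.557 - 0.413}{0.557} \approx 25.9\% \ \text{(PQ)}.
$$

For DAT-Kmeans on PQ, the absolute changes relative to the same baseline are:

$$
0.557 - 0.479 = 0.078 \ \text{(MSE)}, \qquad
0.953 - 0.942 = 0.011 \ \text{(SRCC)},
$$

which agree with the gains of roughly 0.08 in MSE and 0.011 in SRCC reported for $K = 8$ in Sec. IV-C.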

To verify whether performance gains stem from blind regularization rather than principled domain invariance, we compared our strategies against L2 regularization, High Dropout, and DAT-Random. While L2 regularization and DAT-Random provide improvements in absolute error (MSE) for certain aspects, they fail to match the ranking performance of our aspect-specific DAT strategies in terms of SRCC. Crucially, SRCC serves as our primary evaluation metric as it directly reflects the model's capability to reliably rank generative systems, a task where traditional and stochastic regularization prove inadequate compared to our targeted disentanglement approach. Furthermore, increasing stochasticity via High Dropout leads to statistically significant performance degradation. Notably, our framework outperforms these traditional and stochastic regularization techniques across the majority of dimensions. These results support the view that targeted domain disentanglement is more effective than blind, generic regularization. By explicitly addressing the specific nature of each quality dimension, our framework purges spurious correlations and enables state-of-the-art backbones to capture more intrinsic and generalized quality features.

Generalization across Model Architectures. To verify whether the effectiveness of our aspect-specific strategies generalizes across different frameworks, we evaluate the proposed method on the Audiobox-Aesthetics [16] architecture. Unlike the MultiGauss backbone, which uses frozen XLS-R features, this model utilizes fine-tuned WavLM representations, providing a distinct feature space for validation. As illustrated in Fig. 2, the performance trends are highly consistent with previous observations: the DAT-Source strategy remains superior for inherent content attributes (PC, CE), while DAT-Kmeans consistently excels in technical and functional dimensions (PQ, CU). This alignment across different backbone architectures and SSL feature extractors supports the robustness of our domain definition strategies and suggests that their benefits are not tied to a particular model configuration.

B. Latent Space Analysis

To analyze the manifold structure and verify whether the DAT framework effectively removes domain-specific information from the latent space, we project the bottleneck features $h$ obtained from the model encoder into a two-dimensional space using UMAP. As illustrated in Fig. 3 (top row), the baseline model exhibits severe domain bias. Specifically, the region highlighted by the red dashed box in Fig. 3(a) shows a tight cluster formed solely by dataset identity. However, referencing Fig. 3(c), it becomes evident that samples within this domain-driven cluster possess vastly different quality scores. This clustering fragments the semantic space: high-quality samples are isolated within their respective domain "islands" rather than forming a cohesive high-quality region. This indicates that the baseline learns spurious correlations, grouping samples by domain signatures rather than their actual perceptual quality. In contrast, our proposed method successfully merges these heterogeneous domains into a unified manifold, indicating the removal of non-causal signatures. Crucially, this alignment preserves intrinsic quality information, as Fig. 3(d) reveals a continuous quality gradient transitioning from low to high quality across the aligned manifold.

Fig. 3. Visualization of $h$ on the development set using UMAP. The top row is colored by source domain labels, and the bottom by PC scores. (Panels: (a) MultiGauss, (b) MultiGauss + DAT-Source, (c) MultiGauss, (d) MultiGauss + DAT-Source. Legend: AudioSet, MUSDB18, MusicCaps, EARS, LibriTTS, Common Voice. Color bar: ground-truth MOS, 2 to 10.)

To further investigate the relationship between domain invariance and quality prediction, we extend the UMAP projection to three dimensions by incorporating the predicted MOS as the vertical axis, creating a "Quality Terrain" visualization. This allows us to inspect whether the latent manifold maintains a consistent structural hierarchy across different score ranges. As shown in Fig. 4(a), the baseline model's features remain scattered into fragmented clusters across the 3D space. Even at identical quality levels, samples from different domains are horizontally segregated, forcing the model to navigate a disjointed latent space. In contrast, our DAT strategy (Fig. 4(b)) collapses these horizontal domain variances into a cohesive "Quality Pillar." In this structure, the manifold aligns vertically according to the quality gradient, where samples from all heterogeneous domains are mapped onto a shared, continuous trajectory. This vertical alignment indicates that our domain-adversarial objective does not compromise the ranking capability; instead, it enforces a principled representation where domain-invariant features and quality-relevant information are effectively disentangled and organized.

Fig. 4. 3D "Quality Terrain" generated by combining 2D UMAP projections of encoder features $h$ with the predicted MOS as the z-axis for (a) the baseline (MultiGauss) and (b) our proposed DAT strategy (MultiGauss + DAT-Source).

Linear probing on $h$ (Table II) reveals that the baseline's severe dataset entanglement (90.9% Domain Acc.) artificially inflates its PC SRCC (0.891) via identity shortcuts. DAT-Source suppresses these spurious signatures (domain accuracy falls to 87.5%), intentionally dismantling this shortcut (PC SRCC drops to 0.879) in exchange for superior zero-shot generalization (Table I).
Conversely, DAT-Kmeans inadvertently increases domain predictability (92.2%) by clustering acoustic textures; this effectively organizes the linear manifold, yielding the optimal predictor for technical attributes like PQ (0.800).

TABLE II. Linear probing analysis on latent features $h$.

| Strategy | Domain Acc. (%, ↓) | PQ SRCC (↑) | PC SRCC (↑) |
|---|---|---|---|
| Baseline | 90.9 | 0.795 | 0.891 |
| DAT-Source | 87.5 | 0.798 | 0.879 |
| DAT-Kmeans | 92.2 | 0.800 | 0.886 |

C. Impact of Domain Granularity and Grouping Strategy

To investigate the impact of domain granularity $K$, we evaluated model performance across $K \in \{2, 4, 6, 8, 10\}$, centered around $K = 6$ to provide a direct comparison with the DAT-Source strategy. This analysis aims to determine whether latent acoustic clusters offer more precise domain disentanglement than explicit dataset identities. As illustrated in Fig. 5, the proposed DAT-Kmeans strategy demonstrates a more structured and superior performance trend compared to the random assignment baseline. The DAT-Kmeans strategy reaches its performance peak at $K = 8$ (marked with ⋆ in Fig. 5), achieving the highest gain in ranking consistency ($\Delta$SRCC $\approx 0.011$) and a significant reduction in error ($\Delta$MSE $\approx 0.08$). While $K = 10$ yields a slightly higher MSE gain, its $\Delta$SRCC drops below the baseline, suggesting that over-partitioning the acoustic space introduces noise that hinders the model's ranking ability. In contrast, the random strategy exhibits high instability. Although it shows sporadic gains in MSE (e.g., at $K = 8$), its impact on SRCC is erratic and often falls into the negative range, indicating a degradation in ranking capability compared to the baseline. This disparity indicates that domain definitions must be anchored in meaningful acoustic sub-structures to provide reliable adversarial gradients.
The superior trajectory of K-means supports our hypothesis that data-driven clustering effectively captures the underlying domain bias essential for robust audio quality assessment.

Fig. 5. Ablation study on domain granularity $K$ for the PQ dimension. The top panel shows the absolute improvement in SRCC ($\Delta$SRCC), and the bottom panel shows the improvement in MSE ($\Delta$MSE) relative to the baseline. The star (⋆) denotes the optimal configuration at $K = 8$, which yields the most balanced gains across both metrics. (Curves: K-means and Random; x-axis: number of domains $K$.)

V. CONCLUSION AND FUTURE WORK

In this paper, we introduced Domain Adversarial Training (DAT) to the task of MOS prediction to address the critical issue of shortcut learning. Specifically, we proposed an aspect-specific DAT framework, demonstrating that by forcing the encoder to be invariant to domain factors, we can significantly improve the robustness and generalization of quality assessment models. Our analysis reveals that the optimal definition of a "domain" is inherently dimension-dependent: explicit source labels are superior for disentangling content-related biases, while latent acoustic clusters are more effective for refining technical quality rankings. These strategies consistently enable state-of-the-art backbones to capture intrinsic quality features rather than spurious correlations.

Future work will focus on developing a unified multi-branch architecture that simultaneously integrates both explicit source constraints and latent acoustic clustering. By leveraging these complementary domain definitions, we aim to build a robust, universal model that achieves optimal performance across all perceptual dimensions of audio quality.

REFERENCES

[1] Takaaki Saeki, Detai Xin, Wataru Nakata, Tomoki Koriyama, Shinnosuke Takamichi, and Hiroshi Saruwatari, "UTMOS: UTokyo-SaruLab system for VoiceMOS challenge 2022," in Proc. Interspeech, 2022.
[2] Soham Deshmukh, Dareen Alharthi, Benjamin Elizalde, Hannes Gamper, Mahmoud Al Ismail, Rita Singh, Bhiksha Raj, and Huaming Wang, "PAM: Prompting audio-language models for audio quality assessment," in Proc. Interspeech, 2024.
[3] Ryandhimas E. Zezario, Szu-Wei Fu, Fei Chen, Chiou-Shann Fuh, Hsin-Min Wang, and Yu Tsao, "Deep learning-based non-intrusive multi-objective speech assessment model with cross-domain features," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 31, pp. 54–70, 2023.
[4] Haoning Wu, Erli Zhang, Liang Liao, Chaofeng Chen, Jingwen Hou, Annan Wang, Wenxiu Sun, Qiong Yan, and Weisi Lin, "Exploring video quality assessment on user generated contents from aesthetic and technical perspectives," in Proc. ICCV, 2023.
[5] Tianchi Liu, Kong Aik Lee, Qiongqiong Wang, and Haizhou Li, "Disentangling voice and content with self-supervision for speaker recognition," in Proc. NeurIPS, 2023.
[6] Yaroslav Ganin, Evgeniya Ustinova, Hana Ajakan, Pascal Germain, Hugo Larochelle, François Laviolette, Mario Marchand, and Victor Lempitsky, "Domain-adversarial training of neural networks," Journal of Machine Learning Research, vol. 17, no. 59, pp. 1–35, 2016.
[7] Yusuke Shinohara, "Adversarial multi-task learning of deep neural networks for robust speech recognition," in Proc. Interspeech, 2016.
[8] Sining Sun, Ching-Feng Yeh, Mei-Yuh Hwang, Mari Ostendorf, and Lei Xie, "Domain adversarial training for accented speech recognition," in Proc. ICASSP, 2018.
[9] Mohammed Abdelwahab and Carlos Busso, "Domain adversarial for acoustic emotion recognition," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 26, pp. 2423–2435, 2018.
[10] Tomohiro Tanaka, Ryo Masumura, Hiroshi Sato, Mana Ihori, Kohei Matsuura, Takanori Ashihara, and Takafumi Moriya, "Domain adversarial self-supervised speech representation learning for improving unknown domain downstream tasks," in Proc. Interspeech, 2022.
[11] Arun Babu, Changhan Wang, Andros Tjandra, Kushal Lakhotia, Qiantong Xu, Naman Goyal, Kritika Singh, Patrick von Platen, Yatharth Saraf, Juan Pino, Alexei Baevski, Alexis Conneau, and Michael Auli, "XLS-R: Self-supervised cross-lingual speech representation learning at scale," in Proc. Interspeech, 2022.
[12] Alessandro Ragano, Emmanouil Benetos, and Andrew Hines, "Audio quality assessment of vinyl music collections using self-supervised learning," in Proc. ICASSP, 2023.
[13] Tung-Yu Wu, Tsu-Yuan Hsu, Chen-An Li, Tzu-Han Lin, and Hung-yi Lee, "The efficacy of self-supervised speech models for audio representations," in Proc. HEAR, 2022.
[14] Fredrik Cumlin, Xinyu Liang, Victor Ungureanu, Chandan K. A. Reddy, Christian Schüldt, and Saikat Chatterjee, "Multivariate probabilistic assessment of speech quality," in Proc. Interspeech, 2025.
[15] Yaroslav Ganin and Victor Lempitsky, "Unsupervised domain adaptation by backpropagation," in Proc. ICML, 2015.
[16] Andros Tjandra, Yi-Chiao Wu, Baishan Guo, John Hoffman, Brian Ellis, Apoorv Vyas, Bowen Shi, Sanyuan Chen, Matt Le, Nick Zacharov, Carleigh Wood, Ann Lee, and Wei-Ning Hsu, "Meta audiobox aesthetics: Unified automatic quality assessment for speech, music, and sound," arXiv preprint arXiv:2502.05139, 2025.
[17] Chien-Chun Wang, Kuan-Tang Huang, Cheng-Yeh Yang, Hung-Shin Lee, Hsin-Min Wang, and Berlin Chen, "QAMRO: Quality-aware adaptive margin ranking optimization for human-aligned assessment of audio generation systems," in Proc. ASRU, 2025.
[18] Cheng-Yeh Yang, Kuan-Tang Huang, Chien-Chun Wang, Hung-Shin Lee, Hsin-Min Wang, and Berlin Chen, "DRASP: A dual-resolution attentive statistics pooling framework for automatic MOS prediction," in Proc. APSIPA ASC, 2025.
[19] Dyah A. M. G. Wisnu, Ryandhimas E. Zezario, Stefano Rini, Hsin-Min Wang, and Yu Tsao, "Improving perceptual audio aesthetic assessment via triplet loss and self-supervised embeddings," in Proc. ASRU, 2025.
[20] Ilya Loshchilov and Frank Hutter, "Decoupled weight decay regularization," in Proc. ICLR, 2019.
[21] Wen-Chin Huang, Hui Wang, Cheng Liu, Yi-Chiao Wu, Andros Tjandra, Wei-Ning Hsu, Erica Cooper, Yong Qin, and Tomoki Toda, "The audioMOS challenge 2025," in Proc. ASRU, 2025.
[22] Erica Cooper, Wen-Chin Huang, Tomoki Toda, and Junichi Yamagishi, "Generalization ability of MOS prediction networks," in Proc. ICASSP, 2022.
