Online Semi-infinite Linear Programming: Efficient Algorithms via Function Approximation

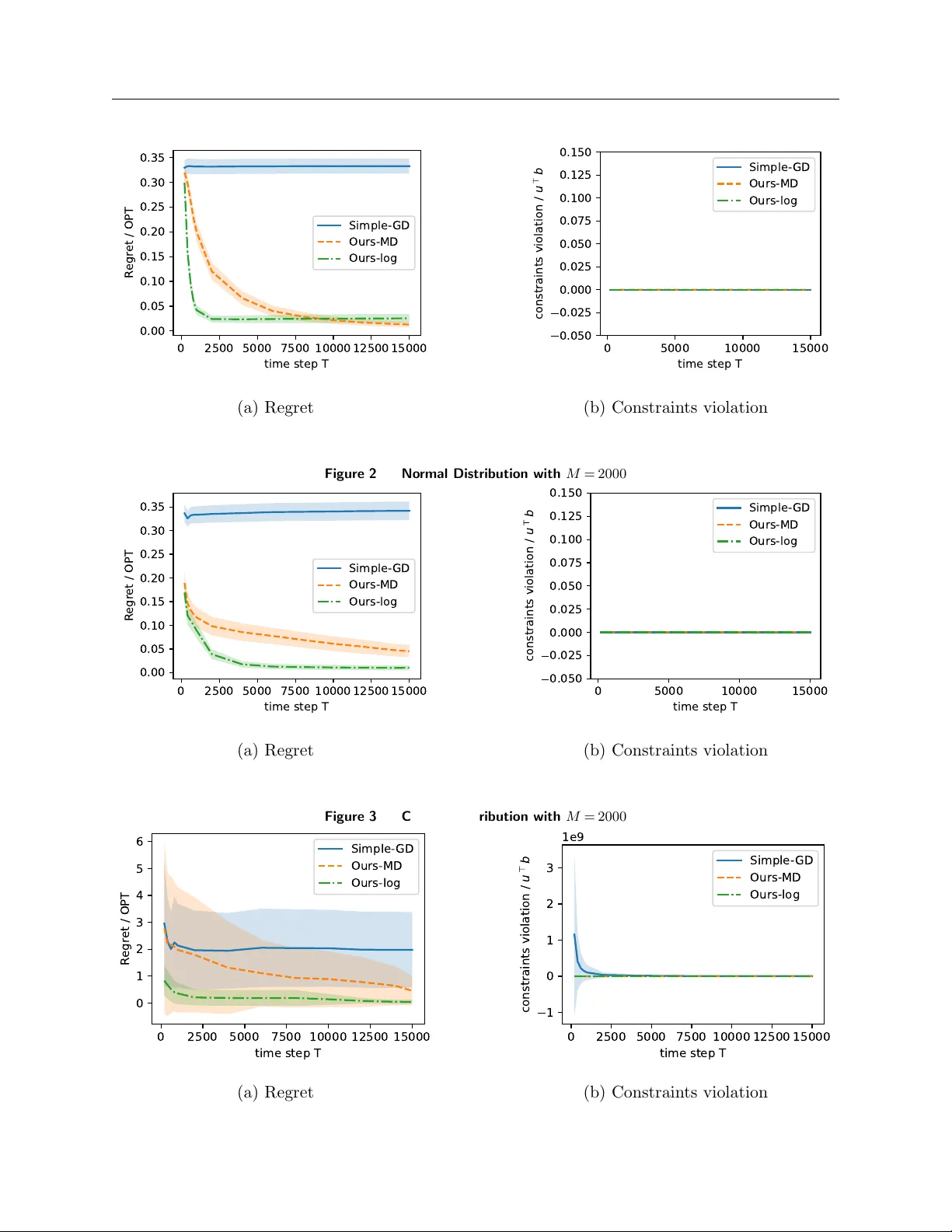

We consider a dynamic resource allocation problem in which the decision space is finite-dimensional, yet the solution must satisfy a large or even infinite number of constraints revealed via streaming data or oracle feedback. We model this challenge a…

Authors: Yiming Zong, Jiashuo Jiang