AI-Generated Figures in Academic Publishing: Policies, Tools, and Practical Guidelines

The rapid advancement of generative AI has introduced a new class of tools capable of producing publication-quality scientific figures, graphical abstracts, and data visualizations. However, academic publishers have responded with inconsistent and of…

Authors: Davie Chen

AI-Generated Figures in Academic Publishing: Policies, Tools, and Practical Guidelines

Davie Chen∗
University of Arts in Poznań
Poznań, Poland
March 2026

Abstract

The rapid advancement of generative AI has introduced a new class of tools capable of producing publication-quality scientific figures, graphical abstracts, and data visualizations. However, academic publishers have responded with inconsistent and often ambiguous policies regarding AI-generated imagery. This paper surveys the current stance of major journals and publishers (including Nature, Science, Cell Press, Elsevier, and PLOS) on the use of AI-generated figures. We identify key concerns raised by publishers, including reproducibility, authorship attribution, and potential for visual misinformation. Drawing on practical examples from tools such as SciDraw [SciDraw, 2025], an AI-powered platform designed specifically for scientific illustration, we propose a set of best-practice guidelines for researchers seeking to use AI figure-generation tools in a compliant and transparent manner. Our findings suggest that, with appropriate disclosure and quality control, AI-generated figures can meaningfully accelerate scientific communication without compromising integrity.

Keywords: AI-generated figures, scientific illustration, academic publishing policy, generative AI, reproducibility, SciDraw

1 Introduction

The visual communication of scientific results has always been a cornerstone of academic publishing. Figures, diagrams, and graphical abstracts serve not only as efficient summaries of complex data but also as critical tools for peer review, replication, and public engagement [Tufte, 2001, Rougier et al., 2014].
Traditionally, the creation of high-quality scientific figures has been a labor-intensive process, requiring proficiency in specialized software such as Adobe Illustrator, BioRender, or domain-specific plotting libraries [Bindslev, 2008]. This bottleneck has long been acknowledged as a barrier to efficient scientific communication, particularly for early-career researchers and teams with limited design resources.

The emergence of large-scale generative AI models, including diffusion models [Rombach et al., 2022], vision-language models [Ramesh et al., 2022], and multimodal foundation models [Team et al., 2023], has fundamentally altered this landscape. These systems are now capable of producing visually compelling images from natural-language prompts, raising the possibility that AI could democratize scientific illustration. Several platforms have begun to specialize in this domain: SciDraw [SciDraw, 2025], for instance, is an AI-powered platform that provides domain-specific templates and styles tailored to the conventions of academic publishing.

Despite these advances, the academic community has been cautious. Publishers have issued a patchwork of guidelines that range from outright prohibition to cautious acceptance, often with vague or contradictory requirements [van Dis et al., 2023]. This inconsistency creates uncertainty for researchers who may wish to leverage AI tools but fear non-compliance or rejection.

∗ E-mail: daviechen@bsu.edu.pl
This paper makes three contributions:

(i) A comprehensive survey of current publisher policies on AI-generated figures across major journals and publishers (Section 2);
(ii) An analysis of the key concerns driving these policies, including reproducibility, attribution, and misinformation risks (Section 3);
(iii) A set of practical, evidence-based guidelines for researchers who wish to use AI figure-generation tools in a compliant and transparent manner (Section 5), illustrated through examples generated with the SciDraw platform.

2 Current Publisher Policies on AI-Generated Figures

We conducted a systematic review of the editorial policies of 12 major publishers and journals regarding the use of AI-generated content in submitted manuscripts. Our review covers policies as of January 2026. Table 1 summarizes the key findings.

Table 1: Summary of publisher policies on AI-generated figures (as of January 2026). D = Disclosure required; R = Restrictions on AI-generated imagery; A = AI cannot be listed as author.
| Publisher / Journal | D | R | A | Key Policy Points |
|---|---|---|---|---|
| Nature Portfolio | ✓ | Partial | ✓ | AI tools must be documented in Methods; AI-generated images must not misrepresent data |
| Science / AAAS | ✓ | Partial | ✓ | Text generated by AI must be disclosed; images policy follows general integrity guidelines |
| Cell Press | ✓ | Yes | ✓ | AI-generated figures must be clearly labeled; no AI in data figures without review |
| Elsevier | ✓ | Partial | ✓ | Disclosure required in cover letter and manuscript; AI cannot be credited as author |
| PLOS ONE | ✓ | Minimal | ✓ | Encourages transparency; no explicit ban on AI figures if properly disclosed |
| Springer Nature | ✓ | Partial | ✓ | Aligns with Nature Portfolio; emphasizes reproducibility |
| Wiley | ✓ | Partial | ✓ | Authors retain full responsibility; disclosure in methods section |
| IEEE | ✓ | Partial | ✓ | AI-generated content must be identified; integrity standards apply |
| Taylor & Francis | ✓ | Minimal | ✓ | General guidance; encourages disclosure |
| MDPI | ✓ | Minimal | ✓ | Open to AI tools with appropriate disclosure |
| ACS Publications | ✓ | Partial | ✓ | Authors bear responsibility; AI use in figures should be declared |
| Royal Society | ✓ | Partial | ✓ | Emphasizes scientific integrity; disclosure expected |

2.1 Nature Portfolio

Nature’s editorial policy, updated in mid-2024, explicitly states that “authors should clearly indicate when AI tools have been used in the creation of any figures or images” [Nature, 2024]. Nature distinguishes between AI-assisted data visualization (e.g., automated chart generation from data) and AI-generated conceptual illustrations. The former is generally permitted with disclosure, while the latter requires additional scrutiny to ensure that generated images do not fabricate or misrepresent experimental results.

Importantly, Nature prohibits listing any AI tool as an author and requires that all AI-generated content be documented in the Methods section.
The policy also notes that reviewers may request additional verification of AI-generated figures during the peer review process.

2.2 Science / AAAS

The American Association for the Advancement of Science (AAAS) issued updated guidance in 2024 that extends its existing policy on text-based AI (primarily addressing large language models) to visual content [Thorp, 2023]. Science requires authors to disclose any use of AI in figure creation and emphasizes that “the responsibility for the accuracy and integrity of all content, including figures, rests entirely with the human authors.” Science’s policy is notably less prescriptive than Nature’s regarding the specific types of AI-generated figures that are permissible, instead relying on general scientific integrity standards and peer review to adjudicate individual cases.

2.3 Cell Press / Elsevier

Cell Press has adopted one of the more restrictive stances among major publishers. As of 2025, Cell Press requires that AI-generated figures be “clearly labeled as such in the figure legend” and prohibits the use of AI-generated images in any figure that purports to represent primary experimental data [Cell Press, 2024]. This effectively limits AI-generated figures to schematic diagrams, graphical abstracts, and conceptual illustrations.

Elsevier, the parent company, has adopted a broader but consistent policy across its portfolio, requiring disclosure in both the cover letter and the manuscript body [Elsevier, 2024].

2.4 PLOS ONE

PLOS ONE has taken a comparatively permissive approach, consistent with its open-access philosophy. The journal encourages transparency and requires authors to disclose AI tool usage but does not impose categorical restrictions on AI-generated figures [PLOS, 2024]. PLOS’s position is that the scientific community should “develop norms through practice rather than prohibition.”

2.5 Summary of Policy Landscape

Several patterns emerge from this survey:

• Universal disclosure requirements: Every major publisher we reviewed requires some form of disclosure when AI tools are used to create figures.
• Human authorship: No publisher permits AI tools to be listed as authors.
• Varying restrictions: Policies range from near-prohibition (Cell Press for data figures) to minimal restriction (PLOS, MDPI) for non-data illustrations.
• Ambiguity: Many policies lack specific guidance on edge cases, such as AI-assisted layout design or AI-enhanced color optimization.

3 Key Concerns in the Academic Community

The policies summarized in Section 2 reflect a set of recurring concerns that we now examine in detail.

3.1 Reproducibility

A fundamental principle of scientific publishing is that published results should be reproducible [Ioannidis, 2005]. AI-generated figures present a challenge to this principle because:

1. Stochastic outputs: Most generative models produce non-deterministic outputs. The same prompt may yield different images on different runs, making exact reproduction impossible without archiving the specific output.
2. Model versioning: AI services frequently update their underlying models. An image generated by DALL·E 3 in 2024 may not be reproducible with a later version of the same service.
3. Prompt opacity: The relationship between a natural-language prompt and the resulting image is often non-transparent, making it difficult to assess whether a particular visual representation faithfully captures the intended scientific content.

These concerns can be partially mitigated by archiving the full generation parameters (prompt text, model version, random seed if available) alongside the published figure, a practice that some domain-specific platforms, such as SciDraw, support by maintaining generation history and metadata for each figure produced [SciDraw, 2025].
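As an illustration of the archiving practice described in Section 3.1, the following minimal Python sketch stores the prompt, model version, and optional seed together with a SHA-256 fingerprint of the exact image file that was published. The record layout, field names, and function names are assumptions made for this example; they are not a publisher requirement and not the format used by SciDraw or any other platform.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class GenerationRecord:
    """Hypothetical archive entry for one AI-generated figure."""

    figure_id: str           # e.g. "Figure 1"
    tool: str                # name of the generation tool
    model_version: str       # version string as reported by the service
    prompt: str              # full prompt text, unabridged
    generated_on: str        # ISO 8601 date of generation
    output_sha256: str       # fingerprint of the archived image bytes
    seed: Optional[int] = None  # random seed, if the tool exposes one


def fingerprint(image_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of the exact image bytes."""
    return hashlib.sha256(image_bytes).hexdigest()


def make_record(figure_id: str, tool: str, model_version: str, prompt: str,
                generated_on: str, image_bytes: bytes,
                seed: Optional[int] = None) -> GenerationRecord:
    """Build a record and bind it to one specific output file."""
    return GenerationRecord(
        figure_id=figure_id,
        tool=tool,
        model_version=model_version,
        prompt=prompt,
        generated_on=generated_on,
        output_sha256=fingerprint(image_bytes),
        seed=seed,
    )


def to_json(record: GenerationRecord) -> str:
    """Serialize the record for deposit alongside the figure."""
    return json.dumps(asdict(record), indent=2, sort_keys=True, ensure_ascii=False)


def matches_archive(record: GenerationRecord, image_bytes: bytes) -> bool:
    """Check that a file is byte-identical to the one the record describes."""
    return record.output_sha256 == fingerprint(image_bytes)


if __name__ == "__main__":
    # Placeholder bytes stand in for the contents of a real image file.
    image = b"placeholder image bytes"
    record = make_record(
        figure_id="Figure 1",
        tool="ExampleTool",              # placeholder tool name
        model_version="example-model-1",  # placeholder version string
        prompt="Mechanism diagram of CAR-T cell therapy",
        generated_on="2026-03-01",
        image_bytes=image,
    )
    print(to_json(record))
```

Because stochastic generation makes regeneration unreliable, the fingerprint ties the record to the archived output itself rather than to the ability to reproduce it from the prompt.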
3.2 Authorship and Attribution

The question of authorship is both philosophical and practical. Major publishers have uniformly declined to grant authorship status to AI tools, on the grounds that authorship implies accountability, a capacity that current AI systems lack [Nature, 2024, Thorp, 2023].

However, the question of attribution is more nuanced. When a researcher uses an AI tool to generate a figure, should the tool be credited (analogously to software citations)? Should the specific model and version be documented? Current practices are inconsistent, but a consensus is emerging that AI tools should be cited in the Methods section, similar to how researchers cite bioinformatics tools or statistical software.

3.3 Visual Misinformation

Perhaps the most serious concern is the potential for AI-generated figures to create visual misinformation: images that appear to depict real experimental results but are in fact fabricated or misleading [Bik et al., 2016]. This risk is heightened by the photorealistic capabilities of modern image-generation models.

However, it is important to distinguish between different categories of scientific figures:

• Data figures (e.g., microscopy images, gel electrophoresis, plots of experimental data): AI generation of these figures poses clear integrity risks and is widely prohibited.
• Schematic figures (e.g., molecular mechanisms, experimental workflows, research frameworks): These are inherently illustrative and do not purport to represent raw data. AI generation of such figures is generally considered lower risk.
• Graphical abstracts and conceptual diagrams: These serve a communicative rather than evidentiary function and are generally considered appropriate candidates for AI generation.

3.4 Copyright and Training Data

A related concern involves the copyright status of AI-generated images.
Generative models are trained on large datasets that may include copyrighted material, raising questions about whether the outputs constitute derivative works [Samuelson, 2023]. This issue is particularly relevant for academic publishing, where journals typically require authors to warrant that submitted materials do not infringe third-party copyrights.

Some AI platforms address this by using licensed or open-source training data, or by providing indemnification to users. Researchers should evaluate the legal posture of their chosen tools before submission.

4 Existing AI Figure Generation Tools: A Comparative Overview

We now compare the landscape of AI tools available for scientific figure generation, distinguishing between general-purpose and domain-specific platforms.

4.1 General-Purpose Tools

General-purpose image generation tools, including Midjourney, DALL·E [Ramesh et al., 2022], and Stable Diffusion [Rombach et al., 2022], offer powerful capabilities but present several limitations for academic use:

• Lack of domain conventions: These tools are not trained on (or tuned for) the visual conventions of scientific publishing, such as standard color schemes for molecular diagrams, appropriate font sizes for axis labels, or journal-specific formatting requirements.
• Inconsistent text rendering: Scientific figures frequently contain labels, annotations, and legends. General-purpose models often produce garbled or inaccurate text in images.
• No structured metadata: Generation parameters are not automatically archived in a format suitable for academic disclosure.
• Copyright uncertainty: The training data for these models may include copyrighted scientific figures, creating potential IP concerns.

4.2 Domain-Specific Platforms

A newer class of tools has emerged that specifically targets the academic market.
Among these, SciDraw [SciDraw, 2025] provides a representative example of this domain-specific approach. SciDraw offers:

• Academic-oriented templates: Predefined templates for common figure types such as experimental designs, research frameworks, mechanism diagrams, and technical roadmaps (see Figures 1–5).
• Style consistency: Domain-specific visual styles that conform to the conventions of academic publishing, including appropriate use of color, typography, and layout.
• Iterative refinement: A conversational interface that allows researchers to iteratively refine figures through natural-language instructions, preserving the generation history for reproducibility documentation.
• Multiple generation modes: Support for text-to-image generation (from scratch), sketch-to-image (converting rough layouts to polished figures), and image-to-image transformation (refining existing figures).
• Metadata retention: Automatic logging of prompts, model parameters, and generation history to facilitate disclosure and reproducibility.

Table 2 provides a feature comparison across representative tools.

Table 2: Feature comparison of AI figure-generation tools for academic use.

| Feature | DALL·E | Midjourney | Stable Diff. | SciDraw |
|---|---|---|---|---|
| Academic templates | × | × | × | ✓ |
| Domain-specific styles | × | × | × | ✓ |
| Text rendering quality | Medium | Medium | Low | High |
| Iterative refinement | Limited | Limited | Yes (img2img) | ✓ |
| Generation history | × | Partial | Manual | ✓ |
| Metadata export | × | × | Manual | ✓ |
| Multi-modal input | Image+Text | Image+Text | Image+Text | Image+Text+Sketch |
| Aspect ratio control | Limited | ✓ | ✓ | ✓ |
| Cost per generation | API-based | Subscription | Free/Open | Credit-based |

4.3 Case Studies: SciDraw-Generated Academic Figures

To illustrate the current capabilities of domain-specific AI figure generation, we present several examples produced using the SciDraw platform.
These figures demonstrate the range of scientific illustration tasks that can be addressed with current AI tools.

Figure 1 shows a molecular mechanism diagram that illustrates CAR-T cell therapy targeting HER2 tumor antigens. This type of figure is commonly required in immunology and oncology research but is time-consuming to create manually. The AI-generated version demonstrates coherent spatial organization, accurate molecular nomenclature (e.g., ZAP70, PLCγ, IFN-γ), and visually differentiated cell types.

Figure 2 presents an experimental design diagram for a preclinical animal study. Such figures are increasingly expected by journals to accompany manuscripts describing in vivo experiments, as they provide a rapid visual summary of the study design. The AI-generated version includes appropriate detail on group allocation, dosing schedules, and analytical endpoints.

Figure 3 illustrates a comprehensive research framework for a social science study. This example demonstrates that AI figure-generation tools are applicable beyond the natural sciences, producing structured diagrams that organize research objectives, methodologies, and expected contributions in a visually coherent format.

Collectively, these examples (Figures 1–5) demonstrate several strengths of domain-specific AI figure generation:

• Accurate and legible text rendering within complex diagrams;
• Consistent visual style across different figure types;
• Domain-appropriate use of color, iconography, and spatial organization;
• Sufficient detail for inclusion in academic manuscripts without post-editing.

4.4 Selection Criteria for AI Figure Tools

Based on our analysis, we recommend that researchers evaluate AI figure-generation tools using the following criteria:

1. Output quality: Does the tool produce figures that meet the visual standards of the target journal?
2. Domain specificity: Does the tool understand the conventions of scientific illustration?
3. Text accuracy: Can the tool reliably render labels, annotations, and legends?
4. Reproducibility support: Does the tool archive generation parameters for disclosure?
5. Legal clarity: What are the copyright and licensing terms for generated images?
6. Iterative workflow: Can the researcher refine the output through conversation rather than starting from scratch?

Figure 1: A mechanism diagram illustrating the molecular mechanism of anti-tumor CAR-T cell therapy and a proposed novel strategy, generated using SciDraw’s mechanism diagram template. The figure demonstrates accurate rendering of molecular structures, signaling pathways, and domain-specific annotations. Source: SciDraw [SciDraw, 2025].

Figure 2: An experimental design diagram depicting a preclinical study with sample source, group allocation, timeline, and measurement endpoints, generated using SciDraw. Source: SciDraw [SciDraw, 2025].

Figure 3: A research framework diagram for a multidisciplinary study on digital economy-enabled rural revitalization, generated using SciDraw. The figure integrates research objectives, methods, and expected outcomes in a structured layout. Source: SciDraw [SciDraw, 2025].

Figure 4: An expected outcomes and impact diagram for a research grant proposal, generated using SciDraw. The concentric ring design organizes outputs from project core to societal impact across temporal scales. Source: SciDraw [SciDraw, 2025].

Figure 5: A technical roadmap for a multi-year research program on CAR-T cell therapy for solid tumors, generated using SciDraw. The figure integrates experimental aims, milestones, and project timelines in a structured visual format. Source: SciDraw [SciDraw, 2025].
5 Proposed Best-Practice Guidelines

Based on our survey of publisher policies (Section 2), analysis of community concerns (Section 3), and comparison of available tools (Section 4), we propose the following best-practice guidelines for researchers using AI-generated figures in academic publications.

5.1 Disclosure Statements

We recommend a standardized three-part disclosure framework:

1. Methods Section: Include a dedicated subsection titled “AI-Assisted Figure Generation” that specifies:
   • The AI tool(s) used (name, version, and URL);
   • Which specific figures were AI-generated or AI-assisted;
   • A brief description of the generation process (e.g., text-to-image, iterative refinement);
   • The date of generation and, if available, the model version.
2. Figure Legends: Each AI-generated figure should include a note in the legend, e.g., “This figure was generated using [Tool Name] (version X.Y) with iterative prompt refinement. The generation prompt and metadata are available in the Supplementary Materials.”
3. Cover Letter: Briefly mention to the editor that AI tools were used for figure generation and that full disclosure is provided in the manuscript.

Example disclosure statement:

Figures 1, 3, and 5 in this manuscript were generated using SciDraw (https://sci-draw.com), an AI-powered scientific illustration platform. The figures were created through iterative prompt-based generation and refined through the platform’s conversational interface. The full generation prompts and metadata are provided in Supplementary Table S1. All figures were reviewed by the authors for scientific accuracy and visual fidelity.

5.2 Human Review and Quality Control

AI-generated figures should never be published without careful human review.
We recommend the following quality-control checklist:

• Scientific accuracy: Do all labels, annotations, and spatial relationships correctly represent the intended science?
• Visual consistency: Is the figure consistent with the visual style of other figures in the manuscript?
• Text legibility: Are all text elements correctly spelled and legible at print resolution?
• Data integrity: If the figure contains any data-derived elements, have these been verified against the source data?
• Bias check: Does the figure inadvertently introduce visual biases (e.g., misleading proportions, suggestive color coding)?

5.3 Aligning with Journal Policies

We recommend the following workflow for ensuring compliance with journal-specific policies:

1. Pre-submission: Review the target journal’s AI policy before figure generation. Identify any categorical restrictions (e.g., Cell Press’s prohibition on AI-generated data figures).
2. During generation: Use tools that support metadata archiving, such as SciDraw, to facilitate subsequent disclosure.
3. Pre-submission review: Have a co-author independently verify all AI-generated figures for accuracy.
4. Submission: Include disclosure in the methods section, figure legends, and cover letter as described above.
5. Post-acceptance: Ensure that any journal-specific formatting requirements (e.g., resolution, file format) are met. Note that AI-generated figures may require format conversion.

5.4 Record Keeping

For long-term reproducibility, we recommend that researchers:

• Archive the full prompt text and generation parameters for each AI-generated figure;
• Record the model name, version, and platform (e.g., “SciDraw, Gemini 2.5 Flash backend, March 2026”);
• Store original output files at maximum resolution;
• Consider depositing generation metadata in a supplementary repository (e.g., Zenodo, Figshare).
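The disclosure items in Section 5.1 and the records in Section 5.4 can be produced from one set of per-figure entries. The Python sketch below is one possible way to do this, under stated assumptions: the dictionary keys, the CSV column order, and the function names are all hypothetical choices for illustration. It renders the figure-legend note from the Section 5.1 template, writes a CSV that could serve as a supplementary metadata table, and flags entries with missing disclosure fields.

```python
import csv
import io
from typing import Dict, List

# Columns of the supplementary metadata table (an assumed layout that mirrors
# the disclosure items listed in Section 5.1).
FIELDS = ["figure", "tool", "version", "url", "mode", "generated_on", "prompt"]


def legend_note(rec: Dict[str, str]) -> str:
    """Fill the figure-legend template from Section 5.1 for one figure."""
    return (
        f"This figure was generated using {rec['tool']} (version {rec['version']}) "
        f"with {rec['mode']}. The generation prompt and metadata are available "
        "in the Supplementary Materials."
    )


def supplementary_table(records: List[Dict[str, str]]) -> str:
    """Render all records as CSV text, one row per AI-generated figure."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for rec in records:
        writer.writerow({field: rec[field] for field in FIELDS})
    return buffer.getvalue()


def missing_fields(rec: Dict[str, str]) -> List[str]:
    """List disclosure fields that are absent or blank in a record."""
    return [field for field in FIELDS if not rec.get(field, "").strip()]


if __name__ == "__main__":
    # All values below are placeholders, not real tool or version names.
    example = {
        "figure": "Figure 1",
        "tool": "ExampleTool",
        "version": "1.0",
        "url": "https://example.org",
        "mode": "iterative prompt refinement",
        "generated_on": "2026-03-01",
        "prompt": "Mechanism diagram, labeled, journal style",
    }
    print(legend_note(example))
    print(supplementary_table([example]))
    print(missing_fields(example))
```

Running `missing_fields` on every entry before submission is a cheap way to catch an undocumented model version or generation date while the information is still easy to recover.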
6 Conclusion

AI-generated figures represent both an opportunity and a challenge for academic publishing. Our survey reveals that the policy landscape is rapidly evolving but remains fragmented, with significant variation in how publishers approach AI-generated visual content. We identify reproducibility, authorship, misinformation, and copyright as the primary concerns driving current policies.

The practical examples presented in this paper (generated using SciDraw [SciDraw, 2025], a platform specifically designed for academic scientific illustration) demonstrate that current AI tools are capable of producing figures that meet the visual and informational standards of academic publishing, particularly for schematic diagrams, research frameworks, and conceptual illustrations.

We propose a set of best-practice guidelines centered on transparent disclosure, rigorous human review, and systematic record keeping. We believe that widespread adoption of these guidelines can help the academic community realize the benefits of AI-assisted figure generation while maintaining scientific integrity.

Looking ahead, we call upon publishers to:

• Develop more specific and harmonized guidelines for AI-generated figures;
• Distinguish clearly between AI-generated data figures and AI-generated schematic/conceptual figures;
• Invest in tools and processes for detecting AI-generated content during peer review;
• Engage with the research community to develop evolving best practices.

The future of scientific illustration is likely to be hybrid, combining human expertise with AI capabilities. By establishing clear norms now, the academic community can ensure that this transition enhances, rather than undermines, the reliability and clarity of scientific communication.
Acknowledgments

The author thanks the SciDraw development team for providing access to the platform and generating the example figures used in this paper. Figures 1–5 were generated using SciDraw (https://sci-draw.com) for illustrative purposes.

References

Bik, E. M., Casadevall, A., and Fang, F. C. (2016). The prevalence of inappropriate image duplication in biomedical research publications. mBio, 7(3):e00809–16.

Bindslev, H. (2008). Scientific Visualization: The Visual Extraction of Knowledge from Data. Springer.

Cell Press (2024). Cell Press policy on AI-generated content in manuscripts. https://www.cell.com/cell/authors, Accessed January 2026.

Elsevier (2024). The use of AI and AI-assisted technologies in writing for Elsevier. https://www.elsevier.com/about/policies-and-standards/the-use-of-generative-ai-and-ai-assisted-technologies-in-writing-for-elsevier, Accessed January 2026.

Ioannidis, J. P. A. (2005). Why most published research findings are false. PLoS Medicine, 2(8):e124.

Nature (2024). Artificial intelligence (AI) policy. https://www.nature.com/nature/editorial-policies, Accessed January 2026.

PLOS (2024). PLOS policy on the use of generative AI in published research. https://plos.org/resource/how-to-use-generative-ai-tools-responsibly/, Accessed January 2026.

Ramesh, A., Dhariwal, P., Nichol, A., Chu, C., and Chen, M. (2022). Hierarchical text-conditional image generation with CLIP latents. arXiv preprint arXiv:2204.06125.

Rombach, R., Blattmann, A., Lorenz, D., Esser, P., and Ommer, B. (2022). High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 10684–10695.

Rougier, N. P., Droettboom, M., and Bourne, P. E. (2014). Ten simple rules for better figures. PLoS Computational Biology, 10(9):e1003833.

Samuelson, P. (2023). Generative AI meets copyright. Science, 381(6654):158–161.

SciDraw (2025). SciDraw: AI-powered scientific illustration platform. https://sci-draw.com.

Team, G., Anil, R., Borgeaud, S., Wu, Y., et al. (2023). Gemini: A family of highly capable multimodal models. arXiv preprint arXiv:2312.11805.

Thorp, H. H. (2023). ChatGPT is fun, but not an author. Science, 379(6630):313.

Tufte, E. R. (2001). The Visual Display of Quantitative Information. Graphics Press, 2nd edition.

van Dis, E. A. M., Bollen, J., Zuidema, W., van Rooij, R., and Bockting, C. L. (2023). ChatGPT: Five priorities for research. Nature, 614(7947):224–226.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

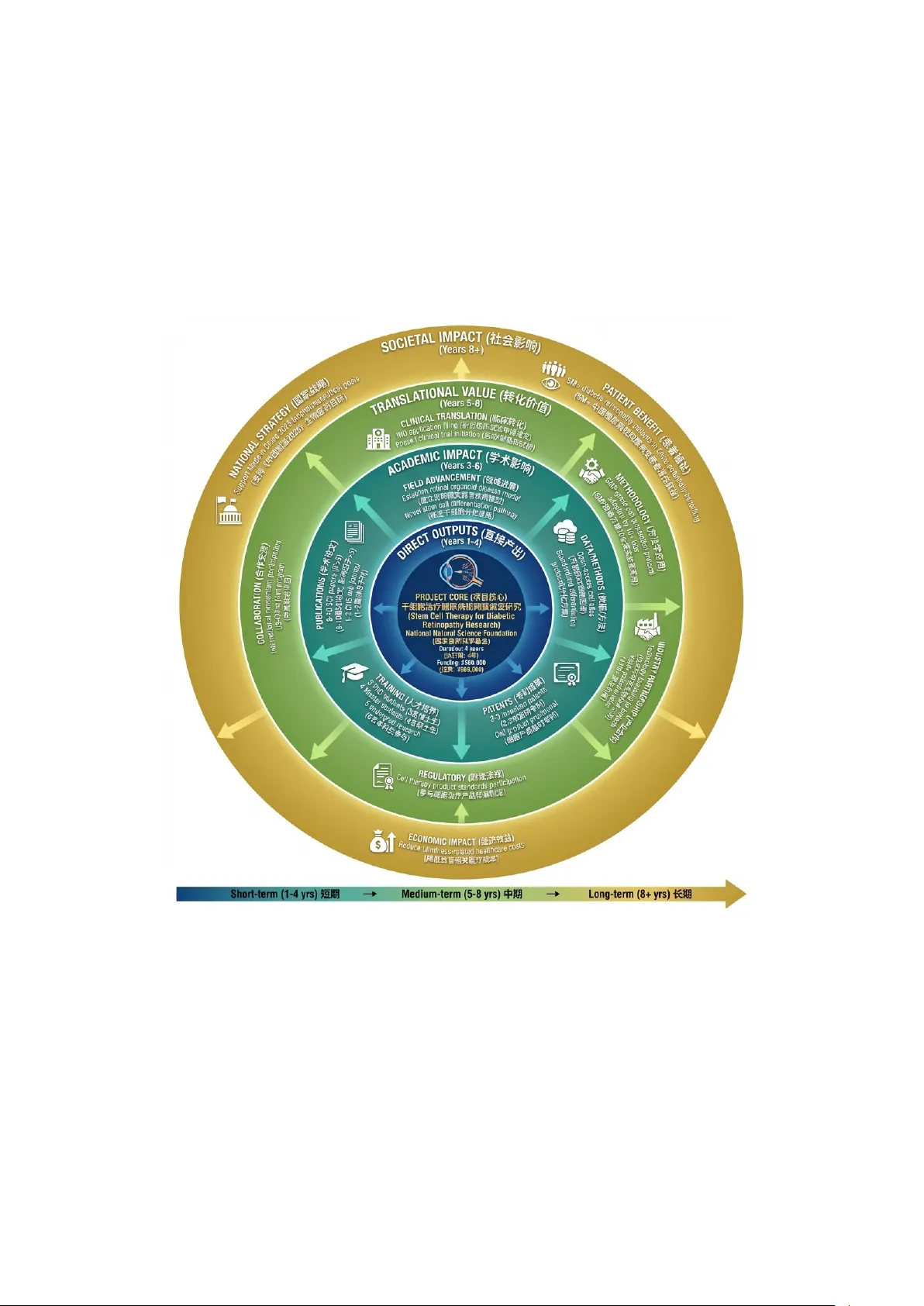

Leave a Comment