Knowledge Distillation for Collaborative Learning in Distributed Communications and Sensing

The rise of sixth generation (6G) wireless networks promises to deliver ultra-reliable, low-latency, and energy-efficient communications, sensing, and computing. However, traditional centralized artificial intelligence (AI) paradigms are ill-suited t…

Authors: Nhan Thanh Nguyen, Mengyuan Ma, Nir Shlezinger

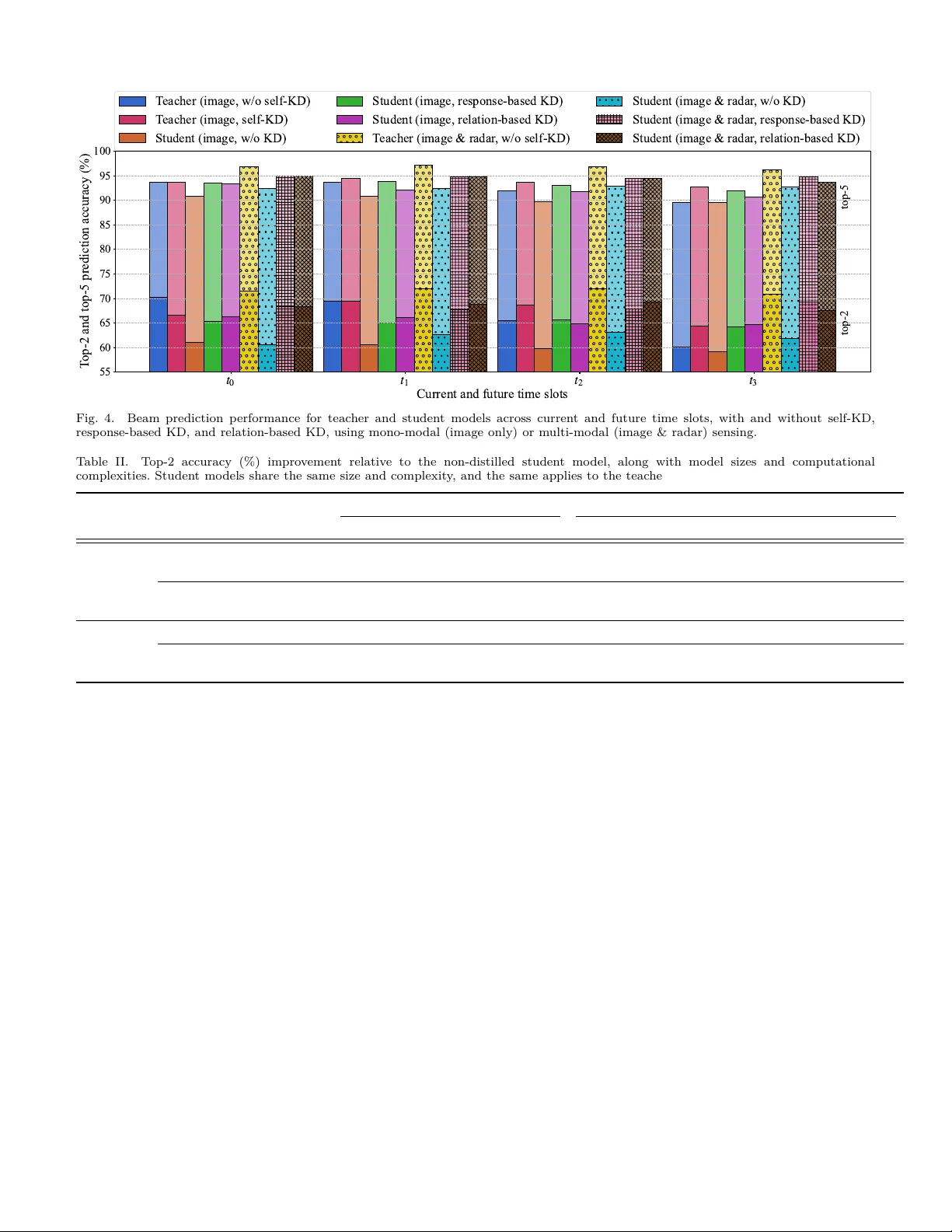

1 Kno wledge Distillation for Collab orativ e Learning in Distributed Comm unications and Sensing Nhan Thanh Nguy en, Mengyuan Ma, Nir Shlezinger, Junil Choi, Y onina C. Eldar, A. Lee Swindleh urst, and Markku Jun tti Abstract—The rise of sixth generation (6G) wireless net works promises to deliv er ultra-reliable, low-latency , and energy-ecien t communications, sensing, and computing. Ho wev er, traditional cen tralized articial in telligence (AI) paradigms are ill-suited to the decentralized, resource- constrained, and dynamic nature of 6G ecosystems. This pap er explores kno wledge distillation (KD) and collab orative learning as promising techniques that enable the ecient and scalable deplo yment of ligh tw eight AI models across distributed comm unications and sensing (C&S) no des. W e b egin by providing an ov erview of KD and highlight the k ey strengths that mak e it particularly eective in distributed scenarios c haracterized by device heterogeneit y , task div ersity , and constrained resources. W e then examine its role in fostering collectiv e intelligence through collab orative learning betw een the central and distributed no des via v arious knowledge distill- ing and deploymen t strategies. Finally , we presen t a systematic n umerical study demonstrating that KD-empow ered collab o- rativ e learning can eectiv ely support light w eight AI mo dels for m ulti-mo dal sensing-assisted b eam tracking applications with substan tial performance gains and complexity reduction. I. In tro duction Sixth-generation (6G) wireless net w orks are envisioned as a transformative platform that integrates comm unications, sensing, and computing to enable intelligen t applications suc h as smart cities, autonomous transportation, and im- mersiv e en vironmen ts [ 1 ], [ 2 ]. Distributed communications and sensing (C&S) systems, illustrated in Fig. 1 , are a key arc hitectural enabler of this vision. In such systems, pro- cessing capabilities are increasingly shifted from centralized cloud serv ers to distributed access points (APs) and edge de- vices [ 3 ]. This shift reduces latency , eases fronthaul conges- tion, improv es scalability , and preserv es data priv acy , all of whic h are critical requirements in scenarios with high mobil- it y , massive connectivity , and diverse sensing mo dalities [ 4 ]. A t the netw ork edge, APs and sensors are exp ected to carry out complemen tary C&S tasks such as environmen tal p erception, user tracking, and b eam prediction. Equipping these nodes with articial in telligence (AI) mo dels tailored to their sp ecic roles and lo cal conditions can greatly enhance resp onsiveness, lo wer inference load, and enable real-time, context-a ware decision-making. Realizing this vision, how ever, requires scalable mechanisms for deploying ligh t w eigh t yet capable AI mo dels across a wide range of heterogeneous edge devices. This need motiv ates the dev elopmen t of collaborative learning frameworks that can propagate intelligence from centralized entities to distributed no des in a resource-ecient manner. Radar Lidar Radar Lidar RIS Large ML model Small ML model Mobile user Sensing target Camera Blockage Fronthaul link Sensing link Access point User link AP1 AP2 Server Fig. 1. Collab orative learning in distributed C&S netw orks. T o realize the full p otential of collaborative learning, AI models m ust meet stringent op erational requiremen ts [5]. They m ust supp ort diverse C&S tasks, such as b eam prediction, c hannel estimation, user lo calization, and ob ject detection, while maintaining high accuracy . Sim ultaneously , mo dels m ust b e ligh tw eigh t for real-time inference on edge devices with limited p ow er and memory . Unlik e centralized systems, distributed environmen ts are inheren tly heterogeneous, with v arying hardw are, sensing mo dalities, and c hannel dynamics. This necessitates AI mo dels adapted to eac h device to maximize p erformance and enable eective collaborative inference [ 6 ]. A ddressing these challenges requires principled metho dologies that deliv er compact, high-performing, and task-sp ecialized mo dels across the netw ork without imp osing excessive computational or comm unications ov erhead. In this article, w e examine knowledge distillation (KD) [ 7 ] as a collaborative learning paradigm for distributed C&S systems. KD has shown promise in wireless tasks such as transceiv er design [ 8 ], [ 9 ], channel estimation [10], user p osi- tioning [11], and remote sensing [12], but mostly as a mo del compression to ol for resource eciency . In distributed 2 Of fline distillation Online distillation Self - distillation Pre - trained T o be t rained Knowledge Feature - based knowledge Respons e - based knowledge Data Distill T ransfer Knowledge t ransfer Relation - based knowledge Student model T eache r model Fig. 2. Dierent t yp es of knowledge and distillation schemes in KD [ 7 ]. C&S net w orks, KD in tro duces key challenges: co ordinating teac her-studen t mo dels across servers and no des, dening co op eration levels, and enhancing distributed learning while preserving autonom y . A ddressing these c hallenges requires principled metho dologies that deliver compact, high-p erforming, and task-sp ecialized mo dels across the net w ork without imp osing excessiv e computational or comm unications ov erhead. This broader view p ositions KD as a foundation for collab orative in telligence at the edge, a direction that remains insuciently explored. W e begin with a concise ov erview of KD, emphasizing principles that mak e it suitable for distributed C&S systems with heterogeneous devices, diverse tasks, and strict edge resource constraints. Building on this, we present KD-based collab orativ e learning frameworks for future 6G netw orks, including cen tralized, decentralized, and semi-centralized strategies, each with distinct trade-os in complexity , adaptabilit y , communication ov erhead, and priv acy . Fi- nally , we demonstrate the eectiveness of KD through nu- merical experiments on a represen tative C&S task, namely sensing-assisted b eam tracking. The results show that KD-based collaborative learning can signican tly reduce mo del complexit y while preserving, and in some cases ev en impro ving, C&S p erformance at the netw ork edge. I I. Kno wledge Distillation for Distributed C&S W e b egin with an ov erview of KD concepts and highlight its v alue for distributed C&S systems. KD serves b oth as a mo del compression technique and a learning framework, enabling smaller mo dels to inherit knowledge from larger ones through dieren t transfer mec hanisms and training sc hemes. These foundations motiv ate how KD can deliver ligh t w eigh t and ecien t AI at distributed netw ork nodes. A. KD Pro cess KD transfers knowledge from a large teacher model to a smaller student, trained to mimic the teac her under tigh ter resource constrain ts. Unlik e conv entional compression metho ds such as quantization or pruning [13], KD uses the teacher’s outputs or in ternal represen tations as extra sup ervision. W e review tw o key asp ects: the types of kno wledge distilled and the training schemes emplo y ed, as illustrated in Fig. 2 , and discuss ho w these can be adapted for distributed C&S tasks. 1) T yp es of Knowledge: A key design choice in KD is the form of kno wledge transferred, typically group ed in to three categories: resp onse-based, feature-based, and relation-based [ 7 ]. Resp onse-Based Knowledge: This kno wledge type refers to the output logits pro duced by the teacher’s nal lay er [ 8 ]– [11]. It is particularly eective for classication tasks, where the soft output distribution can serve as a pro xy for the true Ba y esian p osterior o ver classes. Response-based KD allows the student to lev erage the teacher’s probabilistic estimates rather than one-hot labels, by minimizing the divergence b et ween their output distributions. In distributed C&S net w orks, many tasks map naturally to classication problems, making resp onse-based KD useful for b eam selection, blo c kage detection, or signal detection at the edge. F eature-Based and Relation-Based Kno wledge: In feature-based KD, the student is trained to align its in ternal activ ations with those of the teac her, enabling richer represen tation learning, particularly in deep er netw orks. Relation-based KD further extends this idea by capturing structural relationships such as distances or similarities among samples in the teacher’s latent space [ 7 ], [ 9 ]. These kno wledge types are not limited to classication and are well suited to a wide range of C&S tasks including b eamforming, resource allo cation, channel estimation, and lo calization. They also supp ort integration with mo del-based learning, enabling explainable, ecient, and light w eigh t mac hine learning (ML) solutions. 2) Distillation Schemes: The second k ey asp ect of KD is the training sc heme, which typically follows three paradigms: oine, online, and self-distillation [ 7 ]. Oine Distillation: In this sc heme, the teacher is fully trained b efore studen t training b egins, and the student learns from b oth ground-truth lab els and supervisory signals pro- vided by the teac her [ 7 ]–[11]. This widely adopted approach assumes access to a pre-trained, high-capacity teacher mo del. Suc h a setting aligns w ell with distributed C&S systems, where m ultiple no des (e.g., APs) p erform similar tasks suc h as b eamforming. A pow erful teacher can be trained at a central server with ample resources, and its kno wledge distilled into light weigh t students for deploymen t at the APs. Online Distillation: Unlike the oine setting, online distillation trains teac her and student mo dels simultane- ously , often within a mutual learning framew ork [ 7 ]. Each mo del acts as b oth teacher and studen t, enabling knowledge exc hange in a co-ev olving pro cess. This approac h is exible and does not require a strong pre-trained teacher, though it demands careful synchronization and learning-rate tuning. It is w ell suited to collab orativ e distributed C&S scenarios, where m ultiple APs or sensors with dieren t data distribu- tions share distilled knowledge in real time to improv e tasks suc h as user tracking and environmen tal a wareness. Self-Distillation: A sp ecial case of online KD, self- distillation uses a single mo del as both teacher and studen t, either across ep o chs (e.g., earlier vs. later snapshots) or b et ween submo dules [7]. Although the architecture remains unc hanged, self-distillation improv es generalization and calibration b y reinforcing internal consistency . Unlike 3 con v en tional ne-tuning, which up dates the model using task-sp ecic sup ervision, self-distillation incorp orates a distillation loss that guides the mo del using its o wn previous outputs or intermediate representations. This approac h b enets edge no des such as APs and sensors under strict priv acy constrain ts, enabling contin uous renement without sharing ra w data or relying on external teac hers. What distinguishes KD is that the studen t is trained using b oth direct sup ervision (e.g., labels or task ob jectives) and teac her-pro vided guidance. These ric her signals strengthen the learning pro cess and impro v e generalization, particularly for AI mo dels deplo yed at the edge, where labeled data are scarce, sensing modalities are div erse, and dynamic en vironmen ts make cen tralized training impractical. B. KD for Distributed C&S This subsection highlights the key prop erties that make KD particularly v aluable for enabling ecient, scalable, and in telligen t distributed C&S deploymen ts at the edge. Mo del Compression with High T ask P erformance: A k ey b enet of oine KD is its ability to compress a high-capacity teac her in to a ligh t w eigh t studen t while preserving most of the teacher’s p erformance [ 7 ], [ 9 ]. This makes KD especially app ealing for distributed C&S netw orks, where limited computation, memory , and energy at edge nodes tigh tly constrain mo del size. Other approaches such as quantization and pruning [13] also improv e resource eciency , but KD stands out b y serving not only as a c ompression to ol but also as a learning framework, b oth of which are particularly w ell matc hed to distributed C&S systems. Sp ecialization and Diversit y: Because distillation trains the student on data, it naturally supp orts tailoring the studen t mo del to its local operating con text. In distributed net w orks, edge devices often face heterogeneous environ- men ts and p erform dierent C&S tasks. KD enables each studen t to b e trained on lo cal data, pro ducing mo dels that are not only compact but also personalized and adapted to their device’s unique c haracteristics. This leads to a div erse ensemble of student mo dels across the netw ork [ 6 ], eac h optimized for the sp ecic distributions, dynamics, and ob jectiv es of its edge device. Eectiv e Learning with Limited Data: Another key adv an- tage of KD is its role as a strong regularizer during training. By guiding the student with teacher-generated soft targets or in termediate features, KD enables more stable and data- ecien t learning [ 7 ]. This is esp ecially v aluable in distributed C&S settings, where each device ma y hav e only limited task-sp ecic data and where collecting extensiv e labeled datasets is often impractical. KD alleviates data scarcity b y transferring the teacher’s inductive biases, improving generalization and reducing ov ertting when training on the small datasets t ypically av ailable at the edge. Input Reduction and Resource Eciency: Beyond mo del compression, KD also enables input reduction, which is crucial in resource-constrained C&S applications [ 9 ]. Lo w- ering the dimensionality or frequency of input data greatly reduces energy and pro cessing demands. This is esp ecially v aluable in sensing-aided communications, where multi- mo dal sensors such as cameras, LiD AR, and radar provide ric h context but are p ow er-h ungry [ 4 ]. KD allows students to op erate eectively with reduced or single-mo dality inputs b y distilling knowledge from teac hers trained on full m ulti- mo dal data [ 9 ]. It further supp orts inference from sparse sensing, reducing ov erhead and latency while preserving task p erformance. The established prop erties of KD make it a pow erful to ol for addressing distributed C&S net work challenges. By combining exibility with resource a w areness, KD enables the scalable deplo yment of diverse AI mo dels across heterogeneous edge devices. The following sections discuss the in tegration of previously introduced distillation sc hemes into distributed C&S via centralized, decen tralized, and semi-centralized collab orative learning frameworks, highligh ting their resp ective adv antages and limitations in AI-emp o wered netw orks. I I I. In tegrating KD in Distributed C&S Netw orks In this section, we explore collab orativ e learning capabilities enabled b y in tegrating KD in distributed C&S net w orks. W e examine their training dynamics, information exc hange mechanisms, and the resulting b enets in terms of scalability , adaptability , and eciency . A. Motiv ation and Rationale In distributed C&S net w orks, light weigh t student mo dels are naturally deploy ed at no des such as APs, sensors, and UEs, while a high-capacit y teacher resides on a cen tralized platform (e.g., a server). The centralized teacher, trained on rich and diverse data, can p eriodically up date students via fron thaul links, enabling ecient knowledge transfer and co ordination. This mitigates the limitations of purely lo cal training, where students often underp erform due to scarce data and limited resources. KD eectiv ely allows the teacher to guide students, improving their p erformance despite lo cal constraints. F urthermore, through online distillation, distributed no des can collab orate without a pre-trained teac her, exc hanging information and in termediate representations to supp ort one another. These congurations enhance collective intelligence and eciency in distributed C&S systems while reducing the burden of individual training, esp ecially in online learning scenarios. While the term collab orative learning is often associated with federated learning (FL), the tw o paradigms are designed for dierent purp oses. FL fo cuses on the cen tralized training of a shared global mo del by aggregating up dates from distributed edge no des, typically without exchanging ra w data. In contrast, this work considers a KD-based collab- orativ e learning approach aimed at training and deplo ying m ultiple edge-sp ecic AI mo dels through teacher-studen t in teractions within distributed C&S systems. In this setting, the server plays the role of a pow erful teacher, while the resulting student mo dels are directly deplo y ed for inference at the edge. Moreo v er, unlike FL, whic h commonly enforces uniform mo del architectures and input/output structures 4 T rai n student m odels ba sed on KD Deploy student m odel s for infe ren ce phase Central iz ed dis til li ng T rai n te ac her m odel s T rai n student m odel s ba sed on KD bef ore depl oy m ent Dec entr ali z ed dis til li ng Init ia ll y tra in s tude nt based on KD Fine - tune student m odel s a nd bef ore depl oy m ent Semi - ce ntrali z ed dis til li ng d istill fin e - tu n e d istill d istill S er ver Ed ge Student m odel s Data updat e if nee ded T ea che r m odel s Student m ode ls CPU CPU CPU Fig. 3. Three kno wledge distilling top ologies with dynamic deploymen ts of teacher and student models in collab orative learning for distributed C&S netw orks. across no des, KD-based collaborative learning naturally supp orts heterogeneous mo dels tailored to dierent devices, sensing mo dalities, and tasks, making it particularly well suited for distributed C&S applications. B. KD Strategies for Distributed C&S via Collab orative Learning F or distributed C&S systems, as in the common KD setting, the teacher mo del is typically trained at a server based on simulations and digital twins. How ever, the deplo ymen t and training of student mo dels can v ary dep ending on system constrain ts and applications. W e iden tify three primary collaborative learning topologies for in tegrating KD in such settings, as illustrated in Fig. 3 : Cen tralized Distilling: In this top ology , student mo dels are trained en tirely at the server using the av ailable training data and then deploy ed to edge no des for inference. This approac h lev erages the serv er’s computational resources and minimizes the training burden on edge devices. Since the student models are ligh t w eigh t, the communication o v erhead for deploymen t is typically low. How ev er, a k ey limitation is that the training dataset at the server ma y b ecome outdated and not accurately reect the C&S en vironmen ts at all lo cal no des. Such data mismatch can lead to degraded student p erformance in dynamic or heterogeneous settings. Addressing this issue may require frequen t dataset up dates and mo del retraining at the serv er, whic h can in tro duce latency and limit adaptability . Decen tralized Distilling: In this approach, the teacher mo del is rst trained at the serv er and then transferred to distributed no des, where it is used to train studen t mo dels lo cally . Compared to cen tralized distilling, this incurs higher communication ov erhead due to the transfer of the teac her mo del, but it oers greater exibilit y . In particular, lo cal retraining or ne-tuning allows students to adapt to no de-sp ecic data distributions and sub optimal distillation h yp erparameters (e.g., temp erature or weigh ting factors in the KD loss function). This approac h also supp orts data priv acy , as lo cal datasets remain at the edge. A p otential limitation is that high-capacity teacher mo dels may still b e to o resource-in tensiv e to execute at edge devices, even if used only during training. Semi-Cen tralized Distilling: This h ybrid top ology com bines the strengths of centralized and decentralized distilling. Studen t mo dels are rst trained at the server and then ne-tuned at edge no des using lo cal, p oten tially non- shareable data. This alleviates p erformance degradation due to data mismatch while keeping comm unication ov erhead lo w. It also enables lo cal adaptation without deplo ying the teac her at the edge, but in tro duces the risk of catastrophic forgetting where the studen t may partially lose the teacher’s kno wledge. T o reduce forgetting, a small subset of global or syn thetic data can b e used for rehearsal, and lo cal loss can b e com bined with distillation loss to main tain alignmen t with the teac her’s outputs. Eectiv e adaptation still dep ends on properly c hosen distillation parameters during initial training. T able I summarizes the three KD top ologies, highlight- ing their trade-os in computational load, adaptabilit y , comm unications o v erhead, and data priv acy . In the next section, we examine deploymen t strategies and co ordination mec hanisms that enable eective collab orativ e learning in distributed C&S net w orks. C. Model Deplo yments and Co ordination In distributed C&S systems, dierent edge AI mo dels do not necessarily p erform identical tasks or rely on the same types of input data. Even when targeting a common task, mo dels at dierent no des often op erate under distinct resource constrain ts and data a v ailabilit y . F or instance, in m ulti-mo dal sensing, dierent AI models ma y exploit dieren t mo dalities or combinations thereof dep ending on the environmen t and a v ailable resources. This heterogene- it y motiv ates exible KD strategies that dier in teac her deplo ymen t, maintenance, and student assignmen t. T eacher Mo dels: The serv er can maintain and train m ultiple teacher mo dels tailored to the sp ecic tasks and requiremen ts of distributed no des, as illustrated in Fig. 3 . Based on no de requirements, the server may dynamically select one of the distillation top ologies discussed earlier 5 T able I. Comparison of KD topologies in distributed C&S netw orks Asp ect Cen tralized distillation Decen tralized distillation Semi-cen tralized distillation T eacher mo del T rained and executed at the serv er T rained at the server and transferred to edge for lo cal studen t training T rained and executed at the serv er Studen t mo del T rained at the serv er and deplo yed to no des for inference T rained and deploy ed locally using the transferred teac her and edge data Hybrid: initially trained at the serv er, then ne-tuned at the edge Data utilization Relies solely on cen tralized datasets Uses lo cal datasets at each no de Com bines global and local datasets for impro ved generalization Comm unications o verhead Lo w (only for transferring studen ts); increase if data up dates are needed Mo derate (for transferring teac her mo dels from serv er to edge) Lo w (only for transferring stu- den t models from server to edge) Edge computa- tional and memory load Minimal (only required for running light w eight studen t mo dels) High (to execute teacher models while training students) Mo derate (for ne-tuning studen t mo dels; teacher mo dels not executed) A daptability to lo cal conditions Limited (students trained on cen tralized data) High (students can b e retrained or ne-tuned lo cally) High (students can b e retrained or ne-tuned lo cally) Data priv acy Lo w (sending lo cal data to serv er) High (no need to share lo cal data) High (no need to share lo cal data) T ypical use cases Stable C&S environmen ts with lo w edge dynamics Highly dynamic or priv acy- sensitiv e systems Dynamic, priv acy-sensitive, and resource-constrained deplo yments (cen tralized, decen tralized, or semi-cen tralized) to train and up date the student models accordingly . Given that the server t ypically has access to abundan t computational resources and datasets, oine training of multiple teac her mo dels do es not impose a signicant burden. Moreo ver, since teac her mo dels are only inv oked during distillation rather than inference, their computational cost does not impact real-time op eration. This exibility enables scalable and adaptive col- lab orativ e learning across heterogeneous C&S deploymen ts. Studen t Mo dels: As illustrated in Fig. 3 , edge no des can host multiple studen t mo dels, each tailored for dierent tasks or optimized for sp ecic input conditions. This allows no des to adapt mo del selection to runtime constraints such as sensing qualit y , energy budget, or computational load. F or example, in multi-modal sensing-aided b eam prediction, a no de may rely on high-quality camera images during daytime op eration, while switching to GPS or radar data at night when visual inputs b ecome less reliable. The previously discussed distillation strategies can b e applied to co ordinate mo del up dates b etw een the server and distributed no des. Although multiple student mo dels are stored lo cally , only one is executed at inference time, ensuring no increase in run time complexity and minimal memory ov erhead due to their light weigh t design. IV. Numerical Example In this section, we demonstrate the eectiveness of the resp onse-based KD, relation-based KD, and self-KD strate- gies discussed earlier, in a distributed C&S setting. W e consider sensing-assisted b eam trac king as a representativ e in tegrated sensing and communications (ISAC) use case, where the AI mo del predicts b oth current and future optimal b eams for a vehicle-moun ted user based on historical sensing data in a mobile millimeter-wa ve system. W e consider tw o scenarios, one where the b eam is predicted using image data alone, and the other using a com bination of image and radar data, b oth obtained from the real-world DeepSense 6G dataset [14]. 1 W e fo cus on a decen tralized distillation scenario in which the teacher is pretrained at the serv er and deplo yed at a single edge node to train the student mo del using the same lo cal dataset. With this setup, the simulations aim to demonstrate the eectiveness of KD in model compression for high-p erformance sensing-assisted b eam tracking. The abilit y of KD to enable sp ecialization and diversit y across edge devices operating in heterogeneous en vironmen ts and p erforming dierent C&S tasks, and the implemen tation of cen tralized and semi-centralized KD top ologies are left for future inv estigation. Note that the b enet of KD for input and modality reduction has already b een demonstrated in [ 9 ]. In Fig. 4 , we sho w b eam prediction p erformance in terms of top-2 and top-5 accuracies for the curren t time slot ( t 0 ) and three future slots ( t 1 – t 3 ). T op- k accuracy is the probabilit y that the correct lab el app ears among the model’s top k predictions. The detailed performance gains of the distilled studen ts relative to their non-distilled counterparts, as w ell as the computational eciency achiev ed compared to the teac her mo dels, are summarized in T able I I. F rom Fig. 4 and T able II, we observe: • KD signican tly enhances the learning ability of small mo dels, enabling them to outp erform their non-distilled 1 The source co de for this exp eriment is av ailable at https://github.com/WillysMa/KD- for- sensing. 6 t 0 t 1 t 2 t 3 Curren t a d future t i me slot s 55 60 65 70 75 80 85 90 95 100 T op-2 a d t op-5 predict io accuracy ( %) %op-2 %op-5 T eacher (image, w/o s el f-KD) T eacher (image, s el f-KD) S%ude % (image, w/o KD) S%ude % (image, r e s po s e-based K D) S%ude % (image, r e l a%io -based K D) T eacher (image & r ada r , w / o self -KD ) St&de t (im age & r adar , w/o KD ) St&de t (im age & r adar , res po se-based KD) St&de t (im age & r adar , relati o -bas ed KD) Fig. 4. Beam prediction performance for teacher and student mo dels across curren t and future time slots, with and without self-KD, response-based KD, and relation-based KD, using mono-mo dal (image only) or multi-modal (image & radar) sensing. T able II. T op-2 accuracy (%) improv ement relative to the non-distilled studen t model, along with model sizes and computational complexities. Student models share the same size and complexity , and the same applies to the teacher mo dels. Category Model T op-2 accuracy improv emen t (%) Complexity and mo del size reduction (million) t 0 t 1 t 2 t 3 No. of parameters No. of FLOPs Image only T eacher (w/o self-KD) 9.15 8.92 5.76 0.94 1.787 157.83 T eacher (w/ self-KD) 5.52 8.92 8.84 5.21 Resp onse-based student 4.18 4.42 5.92 5.05 0.095 (18.8 × smaller) 92.25 (41.6% reduction) Relation-based student 5.21 5.60 5.13 5.60 Image & radar T eacher (w/o self-KD) 10.84 9.32 8.74 8.97 2.931 179.25 Resp onse-based student 7.69 5.13 4.66 7.46 0.106 (27.7 × smaller) 42.72 (76.2% reduction) Relation-based student 7.69 6.29 6.18 5.59 coun terparts and, in some cases, ev en the teacher. F or example, in the image-only scenario, the relation-based studen t mo del achiev es 64 . 72% top-2 accuracy at t 3 , represen ting a 5 . 60% impro vemen t o v er the non- distilled studen t mo del ( 59 . 12% ). It also surpasses the con v en tional teac her model without self-KD ( 60 . 06% ), despite requiring nearly 20 times fewer parameters. • By leveraging knowledge from the large teac her model with stronger feature extraction capabilit y , training with KD enables the student to pro cess multi-modal sensing data more eectiv ely than indep endent training. This is eviden t in the image and radar sensing results. Specically , at t 0 , the non-distilled studen t ac hiev ed only 60 . 61% top-2 accuracy , whereas both the resp onse- and relation-based studen ts reached 68 . 30% . Under the more relaxed top-5 criterion, the distilled studen t mo del consistently exhibits gains of ov er 2% across all time slots. • In terms of mo del size compression, the student mo dels are nearly 20 and 30 times smaller than their corresp onding teac hers for the image-only and image-plus-radar scenarios, resp ectively . Despite this substan tial compression, the studen t mo dels still p erform close to the muc h larger teachers. Sp ecically , for the image-only scenario, the students achiev e 64% – 66% top-2 accuracy , while the teac her without self-KD ranges b etw een 60% and 70% . In the case of image and radar data, the students reach 68% – 69% , compared to 70% – 72% for the teacher mo dels. • Thanks to model compression, the distilled studen t mo dels achiev e signicant reductions in computational complexit y with minimal loss in accuracy compared to their teachers. Specically , the students ac hiev e 41 . 6% and 76 . 2% reductions in the num ber of oating- p oin t op erations (FLOPs) for the image-only and m ulti-mo dal (image and radar) scenarios, resp ectively . Com bined with the reduced mo del size, this mak es the studen ts m uc h more suitable for deploymen t on edge no des, as low er FLOPs and smaller neural netw orks generally lead to reduced energy and comm unication costs, although they remain only proxy indicators of these quantities. Imp ortan tly , these light weigh t mo dels still retain substantial p erformance improv ements o v er non-distilled studen t mo dels of the same size and computational complexity . 7 V. Challenges and Op en Researc h Directions KD-emp o wered collab orative learning eectively balances p erformance against complexit y and pow er consumption. How ever, practical deploymen t necessitates o v ercoming several c hallenges that exp ose curren t limitations and dene futu re research av enues. Key issues include loss function design, student mo del autonomy , priv acy , and standardization, as discussed next. A. Optimized Loss F unctions The distillation loss function is central to KD, as it gov erns ho w knowledge is transferred from teacher to student. While standard formulations exist for approac hes such as response- and relation-based KD [ 7 ], renements are often needed for domain- and task-sp ecic applications. In b eam tracking with input reduction, for example, b oth teacher and student predict current and future b eam directions. Here, the loss must b e adaptive, reecting the data av ailability and prediction horizon: the student may dep end more on the teac her for far-future b eams, while near-future b eams can b e eectively learned from labeled data. B. Enhancing Student Mo de l’s Autonom y T o reduce reliance on teacher mo dels, KD and collab o- rativ e learning can b e integrated with adv anced tec hniques that enhance the autonom y of student mo dels. One promising direction is the incorp oration of meta-learning, whic h enables distributed nodes to learn from div erse tasks or datasets. Sp ecically , the teacher mo del trained at the server can act as a meta-learner, while distributed student mo dels are trained with a combined loss integrating the task-sp ecic loss, the KD loss from the teacher, and a meta-learning term. This equips student mo dels to retain teacher kno wledge while generalizing across v arying conditions, suc h as dieren t channel distributions and hardw are impairments. Consequen tly , student models at edge nodes can quickly adapt to new en vironmen ts with minimal retraining, reducing the need for frequent teac her execution in decen- tralized distillation and minimizing the o v erhead of student retraining and redeplo ymen t in cen tralized distillation. C. Priv acy and Securit y Challenges In distributed C&S scenarios, exc hanging mo dels or dis- tilled knowledge betw een serv ers and edge nodes creates signican t priv acy and security risks. A k ey concern is information leakage, where sensitive attributes or sensing c haracteristics ma y be inferred from teacher or studen t mo dels, distilled logits, or in termediate represen tations through attac ks such as model inv ersion or mem b ership inference. Thus, keeping ra w data lo cal do es not, b y itself, ensure priv acy . Another ma jor threat is model p oisoning or bac kdo or attac ks, in whic h compromised no des or serv ers inject malicious mo dels or distillation signals to corrupt studen t mo dels and manipulate inference. These risks are particularly pronounced in decentralized distillation, where teac her mo dels or knowledge represen tations are shared, and they also arise in cen tralized settings when edge nodes upload lo cal data to update teacher mo dels. These risks can b e mitigated by sharing only enco ded or task-relev ant represen tations and by integrating priv acy-preserving tec h- niques into the distillation pro cess. F urthermore, secure c hannels and encryption help protect mo del exchanges from ea v esdropping, while mo del integrit y chec ks and anomaly detection assist in iden tifying p oisoned or tampered mo dels b efore deploymen t. D. Standardization of KD-Based Collaborative Learning in 6G In tegrating KD in to distributed C&S systems oers b oth c hallenges and opp ortunities for standardization. Collab orativ e learning requires frequen t exchange of mo dels, distilled outputs, and con trol signals, necessitating standardized formats for mo del representation, kno wledge transfer, and sync hronization. These requirements align with the 6G vision of native AI, emphasizing distributed, adaptiv e, and in telligen t functionality . KD supports this vision by enabling light weigh t, lo cally specialized mo dels that utilize global knowledge without centralizing raw data. Standardizing KD-related mechanisms, such as teac her-studen t up date protocols, model compression in terfaces, and secure coordination, will b e essential for ensuring in terop erabilit y , scalability , and robustness in future 6G netw orks, in line with ongoing 3GPP eorts to standardize AI/ML mo del transfer and deploymen t [15]. VI. Conclusion In this work, we demonstrated that KD and collab orative learning are key enablers for the ecient and scalable deplo ymen t of light weigh t AI models in distributed C&S net w orks. KD supp orts edge deplo yments through mo del compression, sp ecialization, p erformance gains, and reduced input requirements, while collaborative learning enables its use in distributed settings via centralized, distributed, and semi-centralized distillation strategies. The dynamic deplo ymen t of teacher and studen t models further allo ws adaptation to diverse tasks and input conditions. Numerical results for the decen tralized distillation scenario sho w that KD-emp o wered collab orativ e learning preserves or improv es b eam tracking accuracy while substantially reducing mo del complexit y , highligh ting the practicalit y of compact, high-p erforming AI models in distributed environmen ts. References [1] M. Giordani, M. Polese, M. Mezzavilla, S. Rangan, and M. Zorzi, “T ow ard 6G netw orks: Use cases and technologies,” IEEE Commun. Mag., vol. 58, no. 3, pp. 55–61, 2020. [2] In ternational T elecomm unication Union, “IMT tow ards 2030 and b eyond,” https://www.itu.int/en/ITU- R/study- groups/ rsg5/rwp5d/imt- 2030/Pages/default.aspx, accessed: 2024-07- 23. [3] H. Q. Ngo, A. Ashikhmin, H. Y ang, E. G. Larsson, and T. L. Marzetta, “Cell-free massive MIMO versus small cells,” IEEE T rans. Wireless Commun., v ol. 16, no. 3, pp. 1834–1850, 2017. [4] A. Ali, N. Gonzalez-Prelcic, R. W. Heath, and A. Ghosh, “Leveraging sensing at the infrastructure for mm W av e commu- nication,” IEEE Commun. Mag., vol. 58, no. 7, pp. 84–89, 2020. 8 [5] T. Raviv, S. Park, O. Simeone, Y. C. Eldar, and N. Shlezinger, “Adaptiv e and exible model-based AI for deep receivers in dynamic c hannels,” IEEE Wireless Comm un., v ol. 31, no. 4, pp. 163–169, 2024. [6] N. Shlezinger and I. V. Ba jić, “Collab orative inference for AI- empowered IoT devices,” IEEE In ternet of Things Magazine, vol. 5, no. 4, pp. 92–98, 2023. [7] J. Gou, B. Y u, S. J. Maybank, and D. T ao, “Knowledge distillation: A survey ,” In t. J. Computer Vision, vol. 129, no. 6, pp. 1789–1819, 2021. [8] Q. Gao, Z. Cao, and D. Li, “Defensive distillation based end-to-end auto-encoder communication system,” in Pro c. IEEE Int. Conf. Computer Commun., 2021, pp. 109–114. [9] Y. M. Park, Y. K. T un, W. Saad, and C. S. Hong, “Resource- ecient b eam prediction in mmw av e communications with multimodal realistic simulation framework,” arXiv preprint arXiv:2504.05187, 2025. [10] F. O. Catak, M. Kuzlu, E. Catak, U. Cali, and O. Guler, “Defensive distillation-based adv ersarial attack mitigation method for c hannel estimation using deep learning mo dels in next-generation wireless netw orks,” IEEE Access, vol. 10, pp. 98 191–98 203, 2022. [11] A. Al-Ahmadi, “Knowledge distillation based deep learning model for user equipment p ositioning in massive MIMO systems using ying recongurable intelligen t surfaces,” IEEE Access, 2024. [12] Y. Zhang, Z. Y an, X. Sun, W. Diao, K. F u, and L. W ang, “Learning ecien t and accurate detectors with dynamic knowledge distillation in remote sensing imagery ,” IEEE Geosci. Remote Sens. Mag., vol. 60, pp. 1–19, 2021. [13] K. Zhang, H. Ying, H.-N. Dai, L. Li, Y. Peng, K. Guo, and H. Y u, “Compacting deep neural netw orks for Internet of Things: Metho ds and applications,” IEEE Internet Things J., vol. 8, no. 15, pp. 11 935–11 959, 2021. [14] A. Alkhateeb, G. Charan, T. Osman, A. Hredzak, J. Morais, U. Demirhan, and N. Sriniv as, “DeepSense 6G: A large-scale real-world m ulti-modal sensing and communication dataset,” IEEE Commun. Mag., vol. 61, no. 9, pp. 122–128, 2023. [15] 3GPP , “Overview of AI/ML related work in 3GPP ,” https://www.3gpp.org/news- events/3gpp- news/ai- ml- 2025, F eb 2025, [Accessed: Oct. 13, 2025]. A uthors’ Bio: Nhan Thanh Nguy en (nhan.nguy en@oulu.) is an Assistan t Professor at Universit y of Oulu, Finland. Mengyuan Ma (mengyuan.ma@oulu.) is a Postdoctoral Researc her at Univ ersit y of Oulu, Finland. Nir Shlezinger (nirshl@bgu.ac.il) is an Assistant Professor in Sc ho ol of Electrical and Computer Engineering in Ben- Gurion Universit y , Israel. Junil Choi (junil@kaist.ac.kr) is a tenured Asso ciate Pro- fessor at School of Electrical Engineering, K orea A dv anced Institute of Science and T echnology (KAIST), South Korea. Y onina C. Eldar (yonina.eldar@w eizmann.ac.il) is a Professor in Departmen t of Math and Computer Science, W eizmann Institute of Science, Israel. A. Lee Swindlehurst (swindle@uci.edu) is a Distinguished Professor in the Department of Electrical Engineering & Computer Science, Univ ersit y of California, Irvine, CA, USA. Markku Juntti (markku.jun tti@oulu.) is a Professor at Cen tre for Wireless Communications, Universit y of Oulu, Finland.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment