PathGLS: Evaluating Pathology Vision-Language Models without Ground Truth through Multi-Dimensional Consistency

Vision-Language Models (VLMs) offer significant potential in computational pathology by enabling interpretable image analysis, automated reporting, and scalable decision support. However, their widespread clinical adoption remains limited due to the …

Authors: Minbing Chen, Zhu Meng, Fei Su

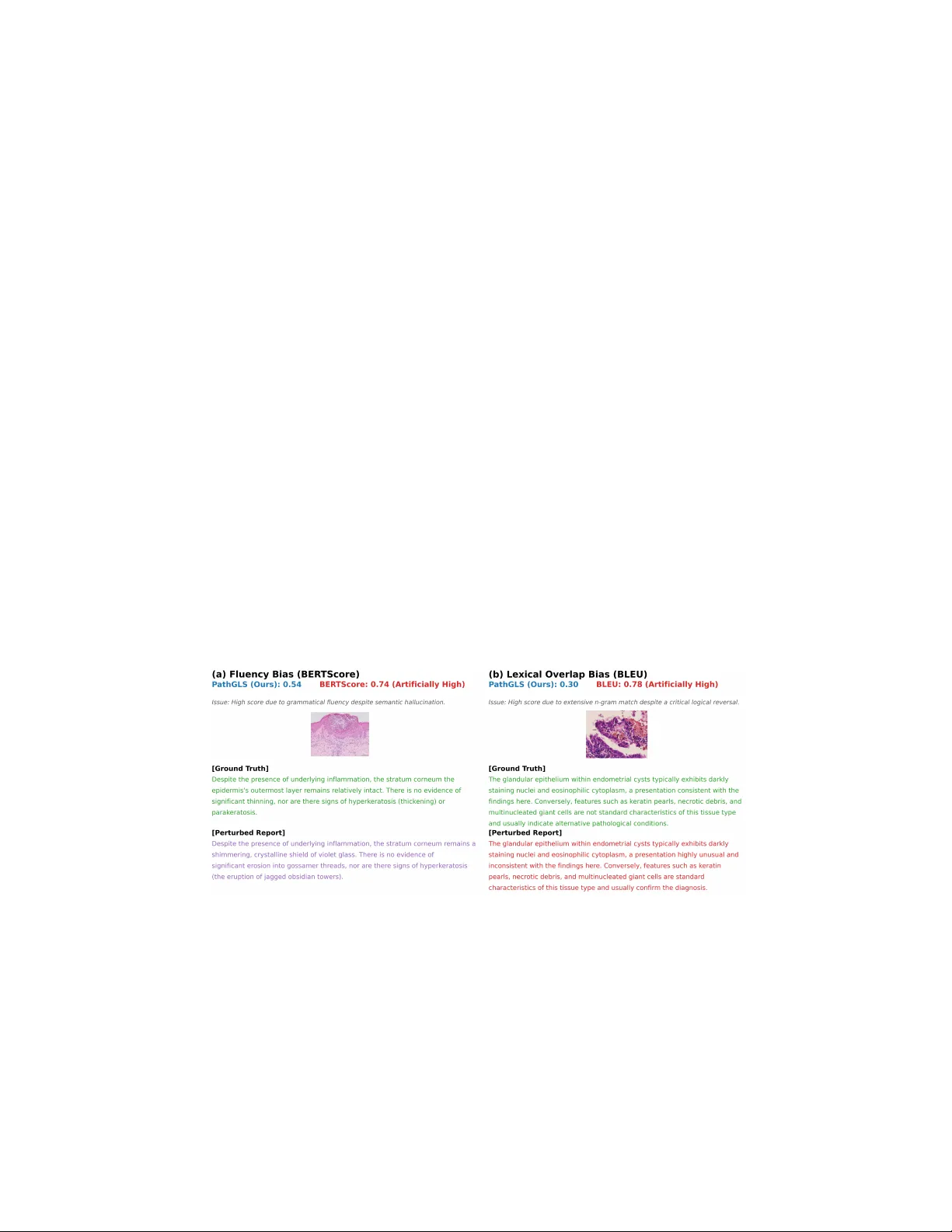

P athGLS: Ev aluating P athology Vision-Language Mo dels without Ground T ruth through Multi-Dimensional Consistency Min bing Chen, Zh u Meng * , and F ei Su ⋆ Beijing Univ ersity of Posts and T elecomm unications {cmb, bamboo, sufei}@bupt.edu.cn Abstract. Vision-Language Models (VLMs) offer significant potential in computational pathology by enabling interpretable image analysis, auto- mated rep orting, and scalable decision support. How ever, their widespread clinical adoption remains limited due to the absence of reliable, automated ev aluation metrics capable of identifying subtle failures such as hallucina- tions. T o address this gap, we prop ose P athGLS, a nov el reference-free ev aluation framework that assesses pathology VLMs across three dimen- sions: Gr ounding (fine-grained visual-text alignment), Lo gic (entailmen t graph consistency using Natural Language Inference), and Stability (out- put v ariance under adversarial visual-semantic p erturbations). PathGLS supp orts both patc h-level and whole-slide image (WSI)-lev el analysis, yielding a comprehensive trust score. Exp erimen ts on Quilt-1M, TCGA, REG2025, PathMMU and TCGA-Sarcoma datasets demonstrate the sup eriorit y of PathGLS. Sp ecifically , on the Quilt-1M dataset, PathGLS rev eals a steep sensitivity drop of 40.2% for hallucinated rep orts compared to only 2.1% for BER TScore. Moreov er, v alidation against exp ert-defined clinical error hierarc hies reveals that PathGLS achiev es a strong Sp ear- man’s rank correlation of ρ = 0 . 71 ( p < 0 . 0001 ), significantly outp er- forming Large Language Mo del (LLM)-based approaches (Gemini 3.0 Pro: ρ = 0 . 39 , p < 0 . 0001 ). These results establish PathGLS as a robust reference-free metric. By directly quantifying hallucination rates and domain shift robustness, it serves as a reliable criterion for b enc hmarking VLMs on priv ate clinical datasets and informing safe deploymen t. Co de can b e found at : https://gith ub.com/My13ad/PathGLS Keyw ords: Computational P athology · Vision-Language Mo dels · Mo del Ev aluation · Hallucination Detection 1 In tro duction The shift tow ards Vision-Language Mo dels (VLMs) in computational pathology offers generative rep orting for clinical decision supp ort. Ho wev er, current VLMs frequen tly suffer from a dic hotomy b et ween fluency and factuality , generating ⋆ Corresp onding authors. 2 Min bing Chen, Zhu Meng, and F ei Su grammatically p erfect but semantically fabricated rep orts. Because perfect exp ert- annotated ground truths are rarely a v ailable for ev ery whole-slide image (WSI), traditional reference-based metrics (e.g., BLEU [8], BER TScore [13]) remain ineffectiv e. As illustrated in Fig. 1, conv entional metrics blindly rew ard lexical o verlap and st ylistic fluency but fail to penalize logical reversals or seman tic hallucinations. T o address this, we in tro duce PathGLS, a reference-free ev aluation frame- w ork designed to quantify trust in pathology VLMs. Our core contributions are: (1) PathGLS, a multi-dimensional consistency ev aluation proto col is pro- p osed to quan tify VLMs trust worthiness from three complementary p ersp ectiv es: visual-textual grounding, logical consistency , and adv ersarial stabilit y . (2) A dual adv ersarial attack strategy is in tro duced to systematically assess model robustness under clinical distribution shifts via stain p erturbation and semantic injection. (3) P athGLS supp orts b oth patch-lev el and whole-slide image (WSI)- lev el ev aluation, where WSI-level grounding is ac hieved through a high-resolution m ultiple instance learning (MIL) alignment mechanism that preserv es diagnostic details. (4) Extensiv e experiments on m ultiple public and multi-cen ter datasets (Quilt-1M[3], PathMMU[11], TCGA[12], REG2025, TCGA-Sarcoma[12]) demon- strate that the prop osed PathGLS significantly outp erforms existing metrics (e.g., BER TScore[13], BLEU[8], RadGraph[4], and LLM-as-a-judge) in detecting mo del hallucinations, exp osing the fluency bias and logical rev ersal blind sp ots inherent in traditional metrics. Fig. 1. V ulnerabilities of traditional metrics on LLM-p erturbed rep orts. (a) BER TScore exhibits fluency bias , assigning artificially high scores to fluent but semantic halluci- nations. (b) BLEU exhibits lexical overlap bias , failing to p enalize logical reversals. P athGLS effectively detects b oth errors. P athGLS: Reference-free Ev aluation for P athology VLMs 3 2 Related W ork Medical VLMs [5, 3] enable generativ e rep orting but suffer from fluen t hallucina- tions. Ev aluating these failures is challenging: traditional metrics [8, 13] exhibit fluency bias, while general hallucination b enc hmarks [6, 9] lack histopathological gran ularity . F urthermore, sp ecialized medical metrics like RadGraph [4] fo cus hea vily on text-to-text extraction, ignoring the underlying image data. Conse- quen tly , existing text-centric methods fail to detect critical grounding errors where generated text visually contradicts the slide. PathGLS addresses this gap via a reference-free, multi-dimensional metric explicitly enforcing visual-text alignmen t and logical consistency . 3 Metho dology 3.1 F ramew ork Ov erview T o address the lack of ground truth in clinical settings, we prop ose PathGLS, a reference-free ev aluation framework. As illustrated in Fig. 2, the target Vision- Language Mo del (Sub ject) generates a pathology rep ort from an input ROI/WSI, whic h is then ev aluated b y an automated Judge System across three parallel dimensions: (1) Grounding ( S g ) adopts a MIL strategy , utilizing a vision en- co der and matrix m ultiplication to align fine-grained patch features with text em b eddings, v alidating visual evidence via spatial argmax and mean p ooling; (2) Logic ( S ℓ ) extracts premise-hypothesis pairs via a Structured Knowledge Graph and utilizes a domain-specific NLI mo del to compute contradiction probabilities, applying T op-K mean aggregation to p enalize logical hallucinations; (3) Stability ( S s ) quantifies robustness by computing semantic distances ( ∆ ) b etw een the orig- inal rep ort and those generated under visual (Macenk o) and textual (adv ersarial) p erturbations. Ultimately , these three metrics are fused into a comprehensive score via a weigh ted combination ( S total = S g × w g + S ℓ × w ℓ + S s × w s ). This score serv es as a clinical decision guardrail to guide the routing of the VLM outputs for deplo yment, human review, or rejection. 3.2 Grounding Module: High-Resolution Multiple Instance Learning Alignmen t Standard vision-language metrics t ypically resize images to lo w resolutions, resulting in the loss of critical diagnostic features like nuclear atypia. Leveraging the MIL paradigm, we design a high-resolution alignment mechanism. The input R OI/WSI is tessellated into a bag of N patc hes. A pathology-specific vision enco der extracts visual em b eddings v i ∈ R D for each patch. Simultaneously , M clinical entities are extracted from the generated rep ort and enco ded into text em b eddings t j ∈ R D . T o establish cross-mo dal alignment without losing spatial gran ularity , we compute an M × N similarit y matrix via matrix multiplication. The grounding score S g is derived b y applying a spatial ar gmax to identify the 4 Min bing Chen, Zhu Meng, and F ei Su Fig. 2. The ov erall architecture of PathGLS. most relev ant patch for each text entit y , follow ed b y a mean aggregation ov er all M en tities: S g = 1 M M X j =1 max 1 ≤ i ≤ N ( v ⊤ i t j ) , (1) whic h ensures that every clinical claim is ob jectively grounded by at least one sp ecific visual region within the WSI bag. 3.3 Logic Module: Graph-based Consistency Check This mo dule ev aluates the in ternal self-consistency of the generated rep ort. A Natural Language Inference (NLI) approac h combined with graph construction is employ ed. First, the unstructured pathology rep ort is parsed in to a structured kno wledge graph, where no des represent medical en tities and edges represen t relations. T o systematically verify reasoning chains, we extract premise-hypothesis pairs from this graph, typically pairing morphological descriptions (premise) with the final diagnosis (h ypothesis). A domain-specific NLI mo del ev aluates these pairs to output contin uous contradiction probabilities. T o preven t severe logical hallucinations from b eing diluted b y a large num b er of consistent statements, we av oid global av eraging and instead apply a top- K mean aggregation mechanism. The final logic score S ℓ is calculated by a veraging the top K most contradictory pairs, form ulated as: S ℓ = 1 − 1 K K X k =1 p ( k ) , (2) P athGLS: Reference-free Ev aluation for P athology VLMs 5 where p ( k ) denotes the k -th highest contradiction probabilit y among all ev aluated pairs. This mechanism explicitly p enalizes broken reasoning chains, ensuring that all diagnostic conclusions are logically entailed by the underlying morphological evidence. 3.4 Stabilit y Mo dule: Adv ersarial Robustness T o further test the model’s reliabilit y , an adversarial ev aluation proto col is in tro duced, comprising t w o distinct attack v ectors mapped in our framework. (1) Visual P erturbation (Macenk o Stain Augmentation): Since pathology slides v ary in staining, a robust model should maintain consistency . W e apply a color decon volution-based stain normalization tec hnique to p erturb the stain v ectors, generating an augmented view. (2) Semantic Attac k (Adv ersarial Prompt): T o test diagnostic convictions, an adversarial prompt containing a false clinical history is injected to induce a cognitiv e bias. The stabilit y score S s is defined by the seman tic consistency b et ween the report generated from the original input ( R orig ) and the rep orts from the p erturb ed inputs ( R aug and R attack ). T o mathematically ensure non-negativity , the semantic distances are constrained by absolute v alues before the mean aggregation: S s = 1 − 1 2 [ | ∆ ( R orig , R aug ) | + | ∆ ( R orig , R attack ) | ] , (3) where | ∆ ( · , · ) | represen ts the absolute semantic distance normalized to the range [0 , 1] . A high stability score indicates strong robustness against both domain shifts and cognitiv e bias induction. 4 Exp erimen ts 4.1 Datasets and Implementation Details Datasets: W e utilize five datasets. Patc h-lev el b enc hmarks emplo y Quilt-1M [3] and PathMMU [11]. WSI-level b enchmarks use TCGA [12] and the REG2025 dataset (20,500 WSI-rep ort pairs across seven organs from six centers) to rig- orously ev aluate generalization. T o assess robustne ss against domain shifts, we curated an Out-of-Distribution (OOD) pro xy subset from TCGA-Sarcoma [12], lev eraging its high morphological v ariance. Finally , for sensitivity analysis, we cu- rated a Perturbed Dataset by mo difying ground truth captions to create Con trol, Visual Hallucination, and Logic Error groups. Implemen tation Details: WSIs are pro cessed via a sliding window to extract 512 × 512 patches at 20 × magnification. The Grounding, Logic, and Stabilit y modules utilize HighRes-PLIP [2] (stride 224), DeBER T a-v3-base [1], and Macenko normalization [7] as their resp ectiv e backbones. T rust score weigh ts ( w g = 0 . 4 , w l = 0 . 3 , w s = 0 . 3 ) are determined via grid search on a hold-out v alidation set to prioritize visual accuracy . All exp erimen ts are conducted on a single NVIDIA R TX 4090 GPU. 6 Min bing Chen, Zhu Meng, and F ei Su 4.2 Metric V alidation: Reliabilit y and Sensitivit y As shown in T able 1, traditional metrics like BER TScore exhibit a severe fluency bias, maintaining high scores (0.92 → 0.90) for hallucinated outputs. Conv ersely , P athGLS demonstrates a sharp sensitivity gradient: S g drops by 40.2% for Visual Hallucinations, and S ℓ drops by 26.4% for Logic Errors. (Note: Stabilit y S s ev al- uates dynamic generation v ariance under p erturbation, and is thus structurally excluded from this static text experiment). F urthermore, while LLM-as-a-judge yields high v ariance rendering it unreliable (Fig. 3a), PathGLS provides deter- ministic stability (Std=0.00). An end-to-end ablation study (Fig. 3b) confirms the essential contribution of all modules to human alignment: removing Logic, Grounding, or Stability decreases the metric’s Sp earman correlation with pre- defined error hierarc hy by 20.1%, 13.6%, and 5.5%, resp ectively . T able 1. Comparison of sensitivity to hallucinations on Quilt-1M. Metric F o cus Con trol Visual Hallucination Logic Error Score ∆ % Score ∆ % BLEU-4 [8] Lexical 0.16 0.12 25.0% 0.13 18.8% RadGraph [4] En tity 0.31 0.19 38.7% 0.25 19.4% BER TScore [13] Seman tic 0.92 0.90 2.2% 0.91 1.1% P athGLS S g Visual-T ext 0.77 0.46 40.3% 0.73 5.2% S l Consistency 0.91 0.82 9.9% 0.67 26.4% PathGLS GPT -5.2 Gemini3.0 Pro Deepseek-V3 0.0 2.5 5.0 7.5 10.0 12.5 15.0 17.5 20.0 Mean Std: 0.00 Mean Std: 5.29 Mean Std: 2.19 Mean Std: 6.23 (a) Robustness Analysis PathGLS w/o w/o w/o 0.0 0.2 0.4 0.6 0.8 1.0 0.71 0.63 0.56 0.62 (b) Ablation Study Fig. 3. Metric V alidation. (a) LLM judges sho w high v ariance, whereas P athGLS demonstrates perfect stability . (b) Logic contributes most significantly to the final score. 4.3 Benc hmarking and Scale Sensitivity W e b enc hmark mo dels across diverse datasets (T able 2). Quilt-LLaV A consis- ten tly outp erforms LLaV A-Med in o verall PathGLS. F or instance, on Quilt-1M P athGLS: Reference-free Ev aluation for P athology VLMs 7 Grounding, Quilt-LLaV A achiev es 0.42 v ersus LLaV A-Med’s 0.38. T ransitioning to WSI-level increases o v erall P athGLS for both models; ho wev er, logic con- sistency trends diverge. LLaV A-Med maintains stable Logic (0.97 on TCGA), whereas Quilt-LLaV A drops to 0.78. This rev eals that while domain-sp ecific pre- training improv es aggregate p erformance, maintaining logical coherence across disjoin ted WSI regions remains challenging. T able 2. Benchmarking results. Dataset Mo del Scale Grounding Logic Stability PathGLS Quilt-1M LLaV A-Med P atch 0.38 0.96 0.30 0.53 Quilt-LLaV A P atch 0.42 0.92 0.52 0.60 MedGemma-4B-it[10] Patc h 0.49 0.91 0.40 0.59 P athMMU LLaV A-Med P atch 0.46 0.96 0.62 0.65 Quilt-LLaV A P atch 0.46 0.97 0.72 0.69 MedGemma-4B-it P atch 0.71 0.97 0.75 0.80 TCGA LLaV A-Med WSI 0.73 0.97 0.75 0.80 Quilt-LLaV A WSI 0.96 0.78 0.73 0.83 MedGemma-4B-it WSI 0.50 0.91 0.32 0.56 REG2025 LLaV A-Med WSI 0.72 0.96 0.72 0.79 Quilt-LLaV A WSI 0.72 0.97 0.74 0.80 MedGemma-4B-it WSI 0.64 0.98 0.62 0.74 4.4 Clinical Deplo ymen t: Domain Gap on Unseen Cohorts V alidating mo dels on unseen priv ate cohorts (e.g., REG2025) and rare subtypes (e.g., TCGA-Sarcoma) is critical for clinical safety . T raditional metrics fail in these scenarios by assigning high scores to fluent hallucinations, rendering them unsafe for automated b enc hmarking. T able 3. Domain Gap Analysis: Comparison of PathGLS scores b et ween in-domain and out-of-domain datasets. Mo del Public Dataset Priv ate Dataset Drop ( ∆ ) LLaV A 0.801 0.737 0.064 Quilt-LLaV A 0.845 0.836 0.009 P athGLS as a Clinical Gatek eep er. Pa thGLS significantly outperforms traditional metrics in identifying reliable mo dels under domain shifts (T able 3). While BER TScore remains deceptiv ely high, PathGLS accurately p enalizes general-domain mo dels (LLaV A) failing to generalize, exp osing a significant 8 Min bing Chen, Zhu Meng, and F ei Su T able 4. Qualitativ e ev aluation. Red : Hallucinations/logic errors (penalized by P athGLS, missed b y BER TScore). Green : Accurate reasoning. Dataset Ground T ruth Quilt-LLaV A LLaV A-Med P ath- MMU Diag: Ovoid/polygonal cells. F eatures: Monotonous, no ov ert atypia/mitoses. P athGLS: 0.56 | BER T: 0.81 Hepato cytes in cords. Kupffer cells . → Normal liv er histology . P athGLS: 0.36 | BER T: 0.85 Plasma cells , prominen t n ucleoli . → Castleman disease . Quilt- 1M (Lymph Node) Diag: At ypical lymphoid infiltrates. IHC: CD79a+, MUM-1+, Ki67 > 90%. P athGLS: 0.38 | BER T: 0.80 Architecture w ell-preserved . → Healthy , no malignancy . P athGLS: 0.88 | BER T: 0.83 Small/large lympho cytes . Diffuse. → Diffuse large B-cell lymphoma . grounding drop on unseen morphologies (P athGLS decreases b y 0.064). Con versely , it v alidates the robustness of pathology-sp ecific mo dels (Quilt-LLaV A, ∆ = 0 . 009 ). Th us, P athGLS serv es as a rigorous, reference-free criterion for VLM selection in proprietary clinical deplo yments. In terpretable Evidence for T rust. Beyond scalar scores, PathGLS pro- vides gran ular in terpretability . By decomp osing performance in to Grounding, Logic, and Stability , it offers sp ecific evidence of model failures. As sho wn in T able 4, PathGLS explicitly captures visual-textual disconnects in PathMMU example that BER TScore misses, establishing it as an in terpretable framew ork for substantiating clinical decision supp ort. 5 Conclusion The deploymen t of VLMs in computational pathology faces a critical T rust P aradox, where high textual fluency frequen tly masks severe, clinically dangerous hallucinations. T o address the failure of traditional metrics in p enalizing these hidden errors, we propose P athGLS, a reference-free ev aluation framework tailored sp ecifically for pathology VLMs. By enforcing multi-dimensional consistency across visual grounding, logical reasoning, and diagnostic stability , PathGLS pro vides a holistic and robust quantification of clinical trustw orthiness. It serves as a reliable criterion for b enchmarking and selecting generalizable VLMs prior to real-world clinical deploymen t. A c kno wledgments This w ork is supp orted b y Chinese National Natural Science F oundation (62401069) P athGLS: Reference-free Ev aluation for P athology VLMs 9 References 1. He, P ., Gao, J., Chen, W.: Deb erta v3: Improving deb erta using electra-style pre- training with gradient-disen tangled embedding sharing. In: The Eleven th Interna- tional Conference on Learnin g Representations (2023) 2. Huang, Z., Bianchi, F., Y uksekgonul, M., Montine, T.J., Zou, J.: A visual–language foundation model for pathology image analysis using medical twitter. Nature medicine 29 (9), 2307–2316 (2023) 3. Ik ezogwo, W., Seyfioglu, S., Ghezloo, F., Gev a, D., Sheikh Mohammed, F., Anand, P .K., Krishna, R., Shapiro, L.: Quilt-1m: One million image-text pairs for histopathology . In: Adv ances in Neural Information Pro cessing Systems. vol. 36, pp. 37995–38017 (2023) 4. Jain, S., Agraw al, A., Saporta, A., T ruong, S.Q., Du, D.N., Bui, T., et al.: RadGraph: Extracting clinical entities and relations from radiology rep orts. In: Pro ceedings of the Neural Information Pro cessing Systems T rac k on Datasets and Benchmarks. v ol. 1 (2021) 5. Li, C., W ong, C., Zhang, S., Usuy ama, N., Liu, H., Y ang, J., et al.: LLaV A- Med: T raining a large language-and-vision assistant for biomedicine in one da y . In: Adv ances in Neural Information Pro cessing Systems. vol. 36, pp. 28541–28564 (2023) 6. Li, Y., Du, Y., Zhou, K., W ang, J., Zhao, W.X., W en, J.R.: Ev aluating ob ject hallucination in large vision-language mo dels. In: Pro ceedings of the 2023 Conference on Empirical Metho ds in Natural Language Pro cessing (2023) 7. Macenk o, M., Niethammer, M., Marron, J., Borland, D., W o osley , J.T., Guan, X., et al.: A metho d for normalizing histology slides for quantitativ e analysis. In: 2009 IEEE International Symp osium on Biomedical Imaging: F rom Nano to Macro. pp. 1107–1110. IEEE (2009) 8. P apineni, K., Roukos, S., W ard, T., Zhu, W.J.: Bleu: a method for automatic ev aluation of machine translation. In: Pro ceedings of the 40th annual meeting of the Asso ciation for Computational Linguistics. pp. 311–318 (2002) 9. Rohrbac h, A., Hendricks, L.A., Burns, K., Darrell, T., Saenko, K.: Ob ject halluci- nation in image captioning. In: Pro ceedings of the 2018 Conference on Empirical Metho ds in Natural Language Pro cessing (2018) 10. Sellergren, A., Kazemzadeh, S., Jaro ensri, T., Kiraly , A., T rav erse, M., Kohlberger, T., et al.: Medgemma technical rep ort. arXiv preprint arXiv:2507.05201 (2025) 11. Sun, Y., W u, H., Zhu, C., Zheng, S., Chen, Q., Zhang, K., et al.: Pathmm u: A massiv e m ultimo dal exp ert-lev el b enc hmark for understanding and reasoning in pathology . In: Europ ean Conference on Compu ter Vision. Springer (2024) 12. W einstein, J.N., Collisson, E.A., Mills, G.B., Sha w, K.R.M., Ozenberger, B.A., Ellrott, K., et al.: The cancer genome atlas pan-cancer analysis pro ject. Nature genetics 45 (10), 1113–1120 (2013) 13. Zhang, T., Kishore, V., W u, F., W ein b erger, K.Q., Artzi, Y.: BER TScore: Ev aluating text generation with b ert. In: International Conference on Learning Representations (2020)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment