ASDA: Automated Skill Distillation and Adaptation for Financial Reasoning

Adapting large language models (LLMs) to specialized financial reasoning typically requires expensive fine-tuning that produces model-locked expertise. Training-free alternatives have emerged, yet our experiments show that leading methods (GEPA and A…

Authors: Tik Yu Yim, Wenting Tan, Sum Yee Chan

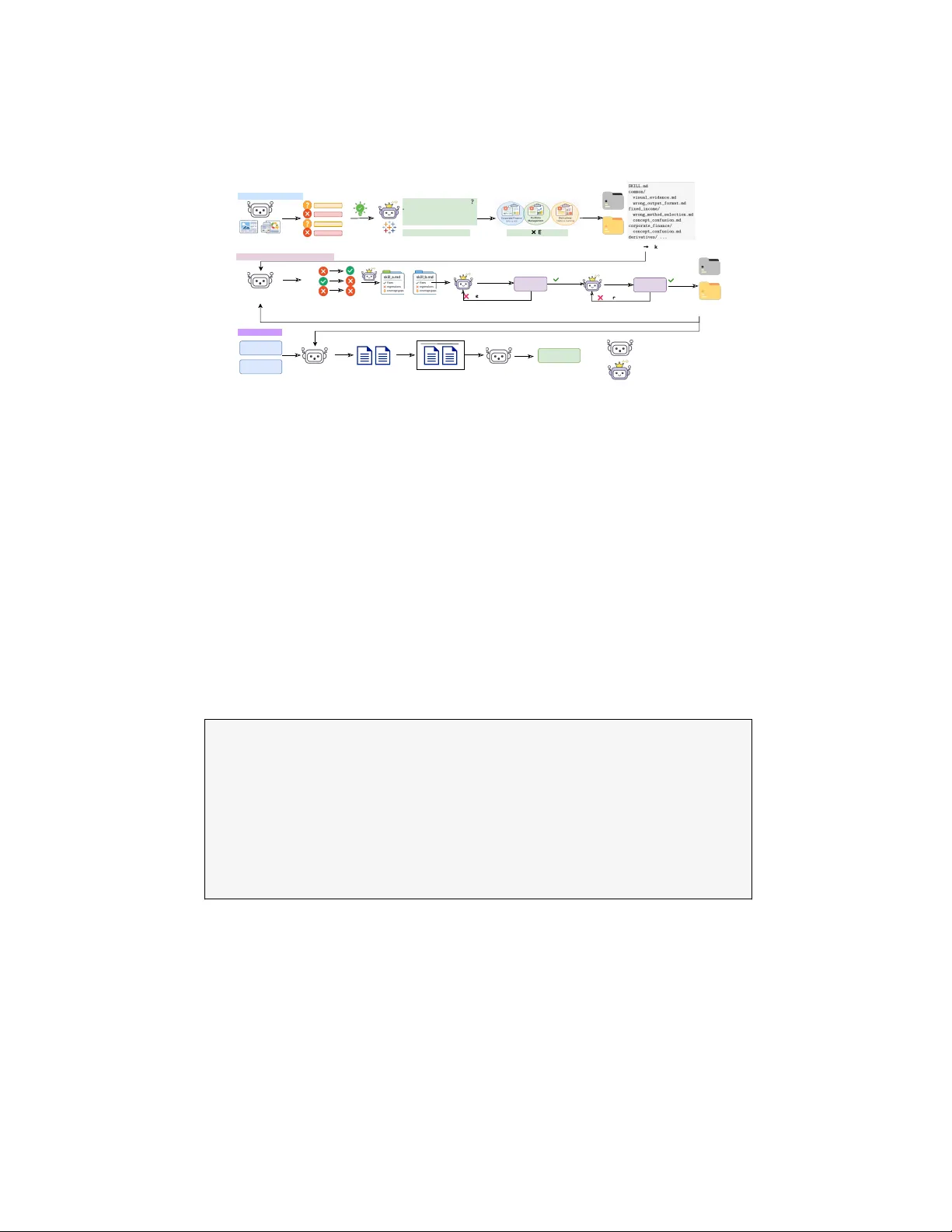

ASD A: Automated Skill Distillation and A daptation for Financial Reasoning Tik Y u Yim, W en ting T an, Sum Y ee Chan, T ak-W ah Lam, and Siu Ming Yiu The Univ ersit y of Hong Kong, Hong Kong SAR, China {tyyim, wt212796}@connect.hku.hk, sumychan@hku.hk, {twlam, smyiu}@cs.hku.hk Abstract. A dapting large language models (LLMs) to specialized fi- nancial reasoning t ypically requires exp ensiv e fine-tuning that produces mo del-lock ed expertise. T raining-free alternatives ha v e emerged, yet our exp erimen ts show that leading methods (GEP A and ACE) achiev e only marginal gains on the F AMMA financial reasoning b enchmark, exposing the limits of unstructured text optimization for complex, multi-step do- main reasoning. W e introduce Automated Skill Distillation and Adapta- tion (ASDA), a framework that automatically generates structured skill artifacts through iterativ e error-correctiv e learning without modifying mo del weigh ts. A teac her mo del analyzes a student mo del’s failures on financial reasoning tasks, clusters errors by subfield and error type, and syn thesizes skill files containing reasoning pro cedures, co de templates, and work ed examples, whic h are dynamically injected during inference. Ev aluated on F AMMA, ASDA achiev es up to +17.33 pp improv ement on arithmetic reasoning and +5.95 pp on non-arithmetic reasoning, sub- stan tially outperforming all training-free baselines. The resulting skill artifacts are h uman-readable, v ersion-controlled, and compatible with the Agen t Skills op en standard, offering an y organization with a labeled domain dataset a practical and auditable path to domain adaptation without weigh t access or retraining 1 . Keyw ords: LLM Adaptation · Financial Reasoning · Skill Distillation · T raining-F ree A daptation · Agent Skills 1 In tro duction Financial reasoning poses a distinctive challenge for general-purp ose LLMs: it demands sim ultaneous mastery of multi-step quantitativ e calculation and deep domain-sp ecific judgmen t, a com bination that pure math or pure kno wledge b enc hmarks do not join tly test [6,13]. Ev aluations across m ultiple financial b enc h- marks confirm a p ersisten t p erformance ceiling: F AMMA reveals that standard, 1 Co de and skill libraries are a v ailable at https://github.com/SallyTan13/ ASDA- skill 2 T.Y. Yim et al. non-reasoning fron tier mo dels achiev e only 38–45% o verall accuracy across eight financial subfields [13] 2 ; and FinBen finds that while LLMs handle information extraction well, they consistently struggle with adv anced reasoning and com- plex financial QA [6]. F AMMA’s error analysis finds that domain-know le dge gaps dominate mo del errors [13], as mo dels misapply financial concepts to the wrong con text or lac k the exp ertise to select the correct pro cedure. These are not failures that more parameters alone will fix—they require targeted adv ances in domain reasoning. The standard remedy—domain-specific fine-tuning—is costly , pro duces mo del-lo cke d exp ertise that becomes obsolete with eac h mo del release, and dep ends on sup ervision resources that many organizations lack [11,14]. This is especially problematic in regulated industries suc h as financial services, legal, and healthcare, where organizations deploy commercial LLMs via black-box API without w eight access. Automated prompt optimization offers a training-free al- ternativ e, but metho ds like GEP A [2] and ACE [1] optimize flat text strings — monolithic instruction blocks that lack the mo dularit y and executabilit y required for multi-step reasoning across diverse financial sub domains, as our F AMMA ex- p erimen ts confirm (Section 5). The missing abstraction is not a b etter prompt, but an exe cutable skil l : a mo dular, self-contained reasoning pro cedure that can b e indep endently comp osed, tested, and up dated for eac h target domain. W e introduce Automated Skill Distillation and Adaptation (ASD A) , a framework that automatically generates executable agen t skills from error anal- ysis without mo difying mo del w eigh ts. A teacher mo del diagnoses a studen t’s failures on financial tasks, clusters them by subfield and error type to identify the ro ot causes of domain-know le dge gaps , and synthesizes skill files containing domain-sp ecific reasoning pro cedures and co de templates, whic h a selector in- jects at inference time. Ev aluated on F AMMA, ASDA achiev es up to +17.33 pp on arithmetic and +5.95 pp on non-arithmetic reasoning after iterative refinemen t, significantly outperforming all training-free baselines. The resulting skill library is not a better prompt, but a new represen tational la yer b et ween the mo del and its deplo yment context that can b e version-con trolled, audited, and regenerated for an y successor mo del. 1.1 Con tributions (1) ASDA framework. W e introduce the first system to automatically gen- erate executable agent skills for domain-sp ecific reasoning using only blac k-b ox LLM access—no weigh t up dates or gradien t computation—substantially outper- forming all training-free baselines on F AMMA. (2) Self-sufficient adaptation from questions and answers alone. A self- teac hing ablation shows that ASD A can improv e a mo del using only the ques- tions and ground-truth answers in the training set—no sup erior teac her mo del 2 Extended-thinking mo dels (GPT-o1, DeepSeek-R1, Qw en-QwQ-32B) score 67–76% on F AMMA, and P oT-augmented v ariants reac h 78–86%. W e exclude these as think- ing budget introduces a confound orthogonal to domain adaptation; our ev aluation targets standard non-reasoning models under fixed inference budgets. ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 3 required—ac hieving +6.33 pp (73% of the full gain). This means an y organization with a labeled domain dataset can run ASD A on their deplo yed mo del directly , without access to a stronger or more exp ensive mo del, making the framew ork practical for real-w orld enterprise deplo yment. 2 Related W ork 2.1 Financial LLM Adaptation Domain-sp ecific fine-tuning has b een the dominant approach to adapting LLMs for financial tasks. Blo om b ergGPT [11] demonstrated the p oten tial of finance- sp ecific pretraining but required approximately 1.3 million GPU hours on a pro- prietary 363B-token corpus—resources b eyond most organizations. FinGPT [14] offered a more accessible alternativ e through LoRA-based fine-tuning on op en financial data. More recently , Xue et al. [13] explored distillation from DeepSeek- R1 to smaller models for financial reasoning. Despite these adv ances, all fine- tuning approaches share a fundamental limitation: they pro duce mo del-lo c ked exp ertise that requires re-training when the base mo del is up dated or replaced, and man y are incompatible with black-box API access to commercial LLMs. 2.2 T raining-F ree A daptation Automated prompt optimization offers a weigh t-free alternativ e. Prior work for- malizes this as automatic differentiation o v er text [15] or optimizable module comp ositions [7]. GEP A [2] ac hieves state-of-the-art results on several b enc h- marks through reflective prompt evolution. ACE [1] takes a test-time knowledge accum ulation approach, building contextual expertise during inference. While these metho ds a void fine-tuning costs, their output is a flat text string—a mono- lithic instruction block that cannot represent the mo dular, m ulti-step procedural kno wledge required for complex domain reasoning, as our F AMMA exp erimen ts confirm (Section 5). A complementary line of work treats failure analysis as the primary learning signal. LEMMA [3] synthesizes error-t yp e-grounded training data for mathe- matical reasoning, consistently outp erforming correction-agnostic data augmen- tation baselines, establishing that failure-driv en analysis yields richer adaptation signal—a principle ASD A extends to training-free, executable skill generation. A t the other end of the adaptation sp ectrum, test-time training (TTT) metho ds temp orarily up date mo del w eights at inference, requiring gradient access incom- patible with blac k-b o x API deploymen ts; ASDA o ccupies the gap b etw een these approac hes. 2.3 Skill-Based Agent Architectures Recen t agen t work has explored skill libraries as reusable kno wledge artifacts. V o yager [10] demonstrated that comp osable executable skills—stored as Ja v aScript 4 T.Y. Yim et al. co de—enable con tin ual capability gro wth in open-ended Minecraft environmen ts. The Agen t Skills op en standard [4] formalized p ortable Markdown skill files with routing metadata, progressive disclosure, and em b edded code templates; ASD A generates skill artifacts compatible with this standard. On the skill-distillation side, a concurrent w ork, SkillRL [12], distills hier- arc hical skills from interactiv e agent rollouts and co-evolv es them with a rein- forcemen t learning p olicy . SkillRL op erates in the in teractive agen tic setting and still requires SFT and weigh t up dates, whereas ASD A distills reasoning patterns from error analysis for static domain QA—en tirely training-free and compatible with API-only mo dels. A cross threads, no prior w ork has addressed automate d, tr aining-fr e e skill generation for domain-sp ecific r e asoning from error analysis, the gap ASDA fills. 3 Metho d: ASD A F ramew ork ASD A op erates through a teacher–studen t architecture comprising tw o phases: (1) a warm-up pip eline that establishes an initial skill library from systematic error analysis, and (2) an iter ative r efinement lo op that refines skills through rep eated evidence collection and v alidation. Figure 1 provides an ov erview. Before describing each phase, w e introduce three terms used throughout. An error t yp e is one of ten categories from a predefined taxonom y 3 . A pattern is a single, named failure scenario within a skill file, one sp ecific kno wledge gap or recurring mistake the model makes. A skill file groups all patterns that share the same financial subfield and error type; for example, fixed_income/wrong_ method_selection.md collects all wrong-metho d failures observ ed in the fixed income subfield. 3.1 Skills W arm-Up F ailure Analysis and Structured Annotation The w arm-up stage con- structs an initial skill library K 0 from the student mo del’s failures on the train- ing set. F or each question the student answ ers incorrectly , the teacher model receiv es the question, the student’s incorrect answ er and reasoning trace, and the ground-truth answ er. The teacher is then prompted to p erform failure anal- ysis and output a structured annotation in the follo wing format: { "subfield": "fixed_income", "error_type": "wrong method selection", "root_cause": "Lacks knowledge that forward rates must be composed sequentially as discount factors, not applied independently per period" } 3 The ten error t yp es are: visual evidence, wrong metho d selection, concept confusion, missed multi-step computation, unit/currency mistakes, missed constrain ts, wrong targets, wrong output format, co de execution errors (P oT-sp ecific), and other. ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 5 Domain-Specific Reasoning Questions Failure Cases Failure Analysis WHY student model failed ? - Missing Knowledge? - Concept Confusion? - Ignoring Constraints... CLASSIFY the error type (Subfield ✖ Error Type) Clustering Ground-Truth Skills Distillation → Skill Libary K0 Test on Student Model with and w/o skills Kt Evidence Collection Q+ Q- Q_gap analyze Common Subfield Attribution Analysis Coverage Refinement ❌ iterative refine / create Refine skills create new skills Verify Common Subfield Update Q_gap cases Q+ & Q- cases ❌ iterative refine Refine skills Verify post-verification Safety Refinement Update Skill Library Kt+1 Phase 1: Skill Warm Up Phase 2: Iterative Skill Refinement Inference Phase Question Context Selected Skill Files LLM-based Selector Student Model Augmented Prompt Answer Student Model Teacher Model Fig. 1. Overview of the ASDA framew ork. Phase 1 (warm-up): the teacher mo del analyzes student failures, pro ducing structured annotations that are clustered b y sub- field and error type to synthesize an initial skill library K 0 . Phase 2 (iterativ e re- finemen t): the library is refined through tw o sequential phases, cov erage refinement (resolving uncov ered failures in Q gap ) follow ed by safety refinement (suppressing re- gressions in Q − ), with ev ery skill up date gated by a correctness threshold. Inference: a selector reads SKILL.md and injects the relev an t skill files into the studen t’s prompt. The error_type field is constrained to the taxonom y defined ab o ve. The root_cause field captures the underlying knowledge gap rather than a surface description of what was computed incorrectly , ensuring the diagnosis is action- able for skill syn thesis. Skill Library Organization The annotated failures are clustered b y their (subfield, error_type) pair. Eac h cluster b ecomes one skill file. The library is therefore organized as a t wo-lev el hierarch y: SKILL.md % navigation + routing table common/ visual_evidence.md % cross-subfield patterns wrong_output_format.md fixed_income/ wrong_method_selection.md % one file per subfield x error type concept_confusion.md corporate_finance/ concept_confusion.md derivatives/ ... Within each skill file, the teacher synthesizes one p attern p er distinct failure scenario iden tified in the cluster. Eac h pattern contains: a concise description of the addressed knowledge gap, explicit "when to use“ conditions, step-by-step reasoning pro cedures, and work ed examples or co de templates. Figure 2 shows one pattern from fixed_income/wrong_method_selection.md ; the full file con- tains five additional patterns cov ering other recurring failure scenarios in that subfield. 6 T.Y. Yim et al. The library also includes a top-lev el SKILL.md navigation file that summarizes the scope of each skill and pro vides a structured mapping from subfield keyw ords and failed financial patterns to skill file paths. This navigation file allo ws the do wnstream selector to identify relev ant skills without parsing every skill file in full. Skill Selection and Injection A t inference time, an LLM-based selector reads the question text and the SKILL.md mapping table to identify the relev ant fi- nancial subfield and match the question’s characteristics against listed patterns. The selector ma y c ho ose m ultiple skill files for a single question, since complex fi- nancial reasoning often requires combining guidance from sev eral patterns (e.g., a fixed income pricing question ma y need b oth wrong_method_selection.md and common/missed_constraints.md ). Selected skill files are injected into the studen t’s prompt as domain knowledge, guiding it to follow the appropriate rea- soning pro cedure for the question. 3.2 Dual-Phase Iterative Skill Refinemen t The warm-up stage captures the m ost systematic failure patterns but leav es tw o residual problems: c over age gaps , where some failures remain because existing skills do not y et address the required kno wledge; and r e gr essions , where skill injection causes previously correct answers to b ecome incorrect b ecause a skill o verfits a narrow failure pattern and misleads the mo del on related but distinct questions. W e address these through iterative refinemen t, alternating b et ween a c over age phase that expands skill cov erage and a safety phase that suppresses regressions. Across b oth phases, ev ery candidate skill up date passes through a verific ation gate : the up dated skill is tested by ha ving the student re-solve the target questions with the new skill injected, and is committed to the library only if the resulting accuracy meets a predefined threshold τ . If it fails, the teacher regenerates a revised prop osal, rep eating up to N max attempts b efore falling bac k to the previous skill v ersion. The full pro cedure is summarized in Algorithm 1. Evidence Collection and Attribution A t the start of eac h refinemen t it- eration t , ev ery training question is ev aluated under tw o conditions: once with the curren t skill library K t injected, and once without an y skills. By comparing results, we partition the training set into three disjoint groups: Q + t (correct with skills), Q − t (incorrect with skills but correct without, i.e., regressions introduced b y the current library), and Q gap t (incorrect under b oth conditions, i.e., cov erage failures that the library has not y et resolved). Since multiple skill files may b e loaded for eac h question, a naïv e per-question outcome cannot be attributed to a sp ecific file. An attribution step follows: for eac h question in Q + t , Q − t , and Q gap t , the teac her examines the studen t’s reason- ing trace alongside the loaded files and identifies the single file most resp onsible for the outcome. Questions are then re-group ed b y their attributed file, pro duc- ing p er-file evidence sets that isolate eac h file’s individual contribution to fixes, regressions, and remaining co verage gaps. ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 7 fixed_income/wrong_method_selection.md Sequential Discounting with Forward Rates for Coupon Bonds When pricing coupon bonds using forward rates, each cash flow must be discounted by the cumulative product of (1 + forward_rate) for all periods from today to that cash flow's date, not by a single rate or simple average. When to Use Questions asking for bond price given a table/list of forward rates (labeled "Y ear 0 (today)", "Y ear 1", etc.) and bond characteristics (coupon rate, maturity , par value). Procedure 1. Price = Σ[CF t / Π(1 + f i )] for i =0 to t −1 , where CF t is cash flow at time t and f i is forward rate for period i 2. Extract all forward rates from the given table in chronological order ( f 0 , f 1 , f 2 , …) 3. For each cash flow date t , compute cumulative discount factor: DF t = (1+ f 0 )(1+ f 1 )…(1+ f t −1 ) 4. Divide each cash flow by its corresponding cumulative discount factor and sum all present values 5. Return the final sum as the bond price (expression, not print) Correct Code Scenario: 3-year bond, 7% annual coupon, $1,000 par . Forwar d rates: Y ear 0=4%, Y ear 1=5%, Y ear 2=6%. # Bond characteristics par_value = 1000 coupon_rate = 0.07 maturity = 3 annual_coupon = par_value * coupon_rate # Forward rates for each period forward_rates = [ 0.04 , 0.05 , 0.06 ] # Calculate price using sequential discounting price = 0 cumulative_discount = 1.0 for year in range( 1 , maturity + 1 ): cumulative_discount *= ( 1 + forward_rates[year - 1 ]) if year == maturity: cash_flow = annual_coupon + par_value else : cash_flow = annual_coupon price += cash_flow / cumulative_discount price # Result: approximately 1027.51 Common Bugs to A void Using forward rates directly as discount rates without cumulative multiplication ✗ A veraging forward rates instead of compounding them sequentially ✗ Forgetting to include par value in final year's cash flow ✗ Using print(price) instead of returning price as final expression ✗ Off-by-one errors in indexing forward rates (Y ear 0 rate applies to Y ear 1 cash flow) ✗ Fig. 2. One pattern from an ASDA skill file pro duced during warm-up from Haiku 3.5 failure analysis by Sonnet 4.5. The full file con tains fiv e additional patterns. Co verage Phase F or eac h skill file, the teacher examines the Q gap t cases at- tributed to that file and diagnoses why cov erage fails. Common causes include missing procedures for an edge case, trigger conditions that are to o narro w to fire on relev an t questions, or the absence of a work ed example for a pattern the file do es not yet con tain. Based on this diagnosis, the teac her prop oses an up date, either b y refining an existing pattern or by adding a new one. Each prop osal is submitted to the verification gate: the student re-solv es the attributed Q gap t 8 T.Y. Yim et al. Algorithm 1 Dual-Phase Iterative Skill Refinemen t Require: Skill library K 0 , training set D , studen t M s , teacher M t , max iterations T Ensure: Refined skill library K ∗ 1: for t = 1 to T do // Evidenc e Col le ction 2: Q + , Q − , Q gap ← Ev alWithWithout ( D , K t , M s ) 3: A ttributeToFiles ( Q + , Q − , Q gap , M t ) // Cover age Phase 4: for each file f with attributed Q gap f = ∅ do 5: f ′ ← M t . Pr oposeExp ansion ( f , Q gap f ) 6: if Verify ( f ′ , Q gap f , M s ) ≥ τ cov then 7: K t [ f ] ← f ′ 8: end if 9: end for 10: ˜ Q + , ˜ Q − ← PostCo verageVerify ( K t , Q + , Q gap , M s ) // Safety Phase 11: for each file f with attributed ˜ Q − f = ∅ do 12: f ′ ← M t . Pr oposeRep air ( f , ˜ Q + f , ˜ Q − f ) 13: if Verify ( f ′ , ˜ Q + f , M s ) ≥ τ safe then 14: K t [ f ] ← f ′ 15: end if 16: end for 17: K t +1 ← K t 18: end for 19: return K T cases with the candidate update injected, and the up date is accepted only if the reco very rate exceeds τ cov . After all p er-file cov erage up dates are committed, a p ost-co verage verification pass re-ev aluates the entire affected set. Cases in Q gap t that are no w solved are promoted into an up dated p ositiv e set ˜ Q + t . Cases in Q + t that regress under the mo dified skills are merged with the existing Q − t to form an up dated regression set ˜ Q − t . This up dated partition forms the input to the safet y phase. Safet y Phase The safety phase resolves regressions in ˜ Q − t , including b oth pre- existing ones and those newly in tro duced by the co v erage phase, without dis- rupting correct b eha vior on ˜ Q + t . F or each skill file, the teacher receives b oth sets sim ultaneously as contrastiv e evidence: the ˜ Q + t cases (annotated with what the curren t skill gets righ t) serv e as preserv ation constraints, and the ˜ Q − t cases (an- notated with what go es wrong) serv e as repair targets. The teacher prop oses a revised skill that remov es or narrows the guidance resp onsible for the regressions while preserving the reasoning steps that pro duce correct answ ers on the p osi- tiv e set. Each prop osal again passes through the verification gate with threshold ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 9 τ safe , which requires that accuracy on the p ositiv e cases not degrade to o muc h while reco vering as man y negative cases as p ossible. After b oth phases complete, the up dated library K t +1 b ecomes the input for the next iteration. 4 Exp erimen tal Setup 4.1 Benc hmark: F AMMA F AMMA-Basic [13] pro vides the scale, arithmetic/non-arithmetic decomposi- tion, and self-contained textual context our pip eline requires. 4 It comprises 1,945 questions sourced from universit y textb o oks and professional finance exams, spanning eight financial sub domains (e.g., corp orate finance, deriv ativ es, p ort- folio management) across three difficult y levels, with an explicit decomp osition b et ween arithmetic and non-arithmetic questions that enables separate ev alua- tion of pro cedural and conceptual skill effectiv eness. W e use the F AMMA-Basic- T xt release, whic h pro vides OCR-extracted textual con text for eac h question, ensuring that ev aluation targets reasoning abilit y rather than retriev al. 5 4.2 Data Filtering and Split W e restrict the corpus to the 1,378 English-language questions to control for lan- guage v ariation, ensuring that observ ed p erformance differences reflect reasoning capabilit y alone. Since F AMMA provides no official train–test split, we construct our own: separating the English corpus into arithmetic and non-arithmetic sub- sets and applying stratified 60/40 splits based on difficulty level (easy , medium, hard) and question type (m ultiple-choice vs. op en-ended). This produces 448 training and 300 test questions for arithmetic, and 378 training and 252 test questions for non-arithmetic. All reported results are on these held-out test sets and are therefore not directly comparable to those rep orted b y Xue et al. [13]. 4.3 Ev aluation Proto col ASD A’s distillation pip eline operates on individual question–answer pairs, so we ev aluate eac h question indep endently rather than in group ed LLM calls as in the original F AMMA proto col. F AMMA stores shared context only in the first sub- question of each group, so w e propagate this con text to all sub-questions to pre- serv e information completeness. 6 Arithmetic questions use Program-of-Though t 4 W e also considered FinMR [5], Fin anceMath [16], FinanceQA [9], and FinMME [8], but these are either to o small for reliable train–test splits, withhold ground-truth answ ers for their primary test sets, yield baseline results that differ substantially from published figures, or fo cus on visual and retriev al-based reasoning rather than pro cedural financial reasoning. 5 F AMMA also includes a Liv ePro subset of 103 expert-curated questions, but only 35 are English-language and most are op en-ended, making it to o small for our pip eline. 6 A dditional prepro cessing: w e enforce expression-based PoT outputs rather than print() statements to preven t execution failures. 10 T.Y. Yim et al. T able 1. Ev aluation protocol modifications. Original F AMMA Ours Question handling Group ed sub-questions Indep enden t MC ev aluation LLM judge Rule-based exact match Op en-ended ev aluation LLM judge (GPT-4o) LLM judge (Qwen-Max) Arithmetic execution P oT P oT + MC mapping step (P oT) code execution, with an additional selection step that maps n umeric out- puts to the closest multiple-c hoice option where applicable. F or ev aluation, w e adopt a h ybrid approac h: rule-based exact matc hing for m ultiple-choice ques- tions and LLM-based judging for op en-ended questions, replacing the original proto col’s use of LLM judging for all question t yp es. T able 1 summarizes these mo difications. V alidation confirms these mo difications do not inflate gains: swapping Qwen- Max for GPT-4o yields 99.3% agreement across 1,104 judgments (delta: 0.00 pp arithmetic, − 0 . 39 pp non-arithmetic); adopting the full F AMMA LLM-judge strategy confirms the same shift. 4.4 Baselines W e compare ASDA against tw o leading training-free adaptation metho ds that op erate under the same deploymen t constraint—blac k-b o x API access without w eight modifications: GEP A [2] and A CE [1]. Both metho ds optimize a sin- gle monolithic text applied uniformly to all questions. The baseline condition uses the student mo del with a standard task prompt and no injected skills or optimized prompts. Neither GEP A nor ACE has previously b een ev aluated on F AMMA; the results rep orted here are, to our kno wledge, the first published ev aluations of both metho ds on this b enc hmark. T o ensure a fair comparison, we adapt training conditions to each method’s arc hitectural requirements. 7 4.5 Implemen tation Details T w o comp onen ts are fixed across all exp erimen tal conditions: Qw en-Max serves as the LLM ev aluation judge for op en-ended questions, and Qw en-T urb o p er- forms the MC selection step that maps numeric PoT outputs to answ er c hoices. All inference is run at temp erature 0 for reproducibility . 8 7 GEP A follows its original 3-wa y split protocol, training on 50% of our training p ool (222 arithmetic, 188 non-arithmetic) and selecting the b est prompt on the remaining 50%. ACE and ASD A use the full training p ool (448 arithmetic, 378 non-arithmetic). 8 All mo dels are accessed via the Anthropic API, with tw o exceptions: Haiku 3.5 via Op enRouter (no longer av ailable on the An thropic API directly) and Qwen mo dels via DashScop e. ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 11 T able 2. ASDA results across student mo dels on F AMMA. F or Haiku 3.5, GEP A and A CE are shown as reference baselines. All exp erimen ts use Sonnet 4.5 as the teacher mo del. WU = W arm-Up; E2 = best refinement epo c h. ∆ denotes absolute improv ement in p ercen tage points ov er each mo del’s own baseline. Studen t Metho d Arithmetic Non-Arithmetic A cc. (%) ∆ A cc. (%) ∆ Haiku 3.5 Baseline 41.00 — 49.21 — GEP A [2] 42.33 +1.33 50.79 +1.58 A CE [1] 44.30 +3.30 49.60 +0.39 ASD A WU (ours) 49.67 +8.67 51.98 +2.78 ASD A E2 (ours) 58.33 +17.33 55.16 +5.95 Haiku 4.5 Baseline 64.67 — 57.14 — ASD A WU (ours) 69.67 +5.00 56.35 − 0.79 ASD A E2 (ours) 70.66 +5.99 58.74 +1.60 5 Results and Analysis 5.1 Main Results W e ev aluate ASD A across t wo studen t models on both arithmetic and non- arithmetic tasks. F or Haiku 3.5, w e additionally include GEP A and A CE as training-free reference points; both ac hieve only marginal gains on F AMMA, whic h suggests the structural limitations of flat-text optimization. ASD A consisten tly improv es performance for Claude-family student mo dels. On arithmetic, Haiku 3.5 gains +8.67 pp at warm-up and +17.33 pp after tw o refinemen t ep ochs. Haiku 4.5, despite its stronger 64.67% baseline, achiev es a +5.99 pp—showing that ASD A adds v alue even for more capable models. Non- arithmetic gains are smaller but consistent for Haiku 3.5 (+2.78 pp warm-up, +5.95 pp at E2); Haiku 4.5 sees mo dest non-arithmetic impro vemen t only after refinemen t (+1.60 pp at E2). Effe ct of iter ative r efinement. The w arm-up stage targets the most frequent fail- ure patterns and delivers the first large wa v e of gains. Iterativ e refinement then addresses residual failures that remain after initial skill injection. F or arithmetic, accuracy improv es from 49.67% at warm-up to 54.67% at ep o c h 1 and reaches 58.33% at ep och 2. A smaller but consisten t trend holds for non-arithmetic, where ep o c h 2 p eaks at 55.16%. Both domains regress at ep och 3 (arithmetic: 54.33%; non-arithmetic: 51.98%), indicating ov erfitting to residual training-set patterns after tw o refinement passes. In practice, tw o refinement ep o c hs repre- sen t the optimal op erating p oin t. Gains by question typ e. Skills consisten tly produce larger gains on m ultiple- c hoice questions than on op en-ended ones. F or Haiku 3.5 arithmetic at warm-up, 12 T.Y. Yim et al. T able 3. Self-teac hing ablation. Each mo del serves as both student and teacher; no sup erior mo del is inv olved. F ull ASDA (Sonnet 4.5 teacher) results are shown for ref- erence. Studen t Domain Baseline With Skills ∆ F ul l ASDA (Sonnet 4.5 te acher), for r efer enc e Haiku 3.5 Arithmetic 41.00 49.67 +8.67 Haiku 3.5 Non-Arith 49.21 51.98 +2.78 Self-T e aching (student = te acher) Haiku 3.5 Arithmetic 41.00 47.33 +6.33 Haiku 3.5 Non-Arith 49.21 50.79 +1.58 MC accuracy rises by +14.39 pp compared to +3.73 pp for op en-ended; Haiku 4.5 sho ws the same pattern (MC +7.91 pp, op en-ended +2.48 pp). A skill that nar- ro ws the solution procedure is most useful when the answer space is already constrained to a few options. F or op en-ended generation, where the mo del must pro duce a free-form numerical or textual resp onse, the guidance is less precise and the ro om for regression is higher. R e gr essions r eve al the c omplementary risk. In sampled cases, the most common pattern is skill-induced ov er-reasoning: the mo del elab orates beyond what the question requires and revises an already-correct judgment. Because the selector loads all skill files for a subfield as a bundle, ev ery question receives guidance re- gardless of whether it needs it — questions the baseline already handles correctly can b e destabilized by unnecessary proce dural elab oration. 5.2 Qualitativ e Analysis T o illustrate how skill artifacts op erate at inference time, Figure 3 presents an arithmetic case from the Haiku 3.5 warm-up ev aluation where the baseline fails and skill injection succeeds. The same skill file was credited with 7 additional fixes on related fixed income questions, illustrating the reusabilit y of subfield- sp ecific skill artifacts. 5.3 Where Do es the Impro vemen t Come F rom? Self-T eaching Ablation ASD A’s gains could come from t wo sources: knowledge contributed by a superior teac her mo del, or knowledge drawn from the training data itself. T o separate these, we run a self-teac hing configuration where the student mo del acts as its o wn teacher—it analyzes its own failures, builds the skill library , and then uses those skills at inference. No stronger mo del is in volv ed. F or Haiku 3.5 arithmetic, self-teac hing ac hieves +6.33 pp—73% of the +8.67 pp gain from using a Sonnet 4.5 teac her. This shows that most of the b enefit comes ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 13 FAM MA · AR IT HM ET IC · FI XE D IN CO ME What is the price of a 2-year maturity bond with a 5% coupon rate paid annually? (Par = $1,000) Forward rates: Y ear 0 = 3%, Y ear 1 = 4%, Y ear 2 = 5%, Y ear 3 = 6% A. $1,092.97 B. $1,054.24 C. $1,028.51 ✓ D. $1,073.34 E. None BA SE LIN E (NO S KIL LS ) forward_rates = [ 0.03 , 0.04 , 0.05 , 0.06 ] par_value = 1000 coupon_rate = 0.05 ; maturity = 2 def discount_cashflow(cf, yrs, rate): return cf / (( 1 + rate) ** yrs) coupon = par_value * coupon_rate bond_price = ( discount_cashflow(coupon, 1 , forward_rates[ 0 ]) + discount_cashflow( coupon + par_value, 2 , forward_rates[ 1 ] ) ) # Year 2: 1050/(1.04)² = 970.78 # Should be: 1050/(1.03×1.04) bond_price $1,019.33 → Option E ✗ WI TH A SD A SK ILL S par_value = 1000 coupon_rate = 0.05 ; maturity = 2 annual_coupon = par_value * coupon_rate forward_rates = [ 0.03 , 0.04 ] price = 0 cumulative_discount = 1.0 for year in range( 1 , maturity + 1 ): cumulative_discount *= ( 1 + forward_rates[year - 1 ]) if year == maturity: cf = annual_coupon + par_value else : cf = annual_coupon price += cf / cumulative_discount price $1,028.75 → Option C ✓ Error: The baseline discounts Y ear 2 using (1.04)² = 1.0816, treating the Y ear 1 forward rate as a spot rate. The correct denominator chains all forward rates: (1.03)(1.04) = 1.0712. The skill file fixed_income/wrong_method_selection.md (Fig. 2) directly addresses this in its procedure and "Common Bugs to Avoid." Fig. 3. Baseline vs. skill-augmented output on a F AMMA fixed income question (Haiku 3.5, warm-up). The skill file in Fig. 2 provides the domain-sp ecific pro cedure that corrects the baseline error. The same skill file was credited with 7 additional fixes on related fixed income questions. from the training data, not from the teacher’s sup erior knowledge. It is worth noting that the training questions provide only the question text and the correct answ er—not work ed solutions or step-by-step reasoning. Even so, seeing where it consisten tly go es wrong is enough for the mo del to iden tify its recurring failure patterns and syn thesize skills to address them. In other w ords, the mo del al- ready p ossesses m uch of the relev an t domain knowledge; the distillation pro cess giv es it the structure to apply that kno wledge reliably . The remaining 2.34 pp gap reflects the teacher’s contribution—a stronger mo del pro duces sharper fail- ure diagnoses and more precise skill form ulations. The same pattern holds for non-arithmetic (+1.58 pp self-teaching vs. +2.78 pp with a Sonnet teacher). 5.4 Are Skills Student-Specific? Cross-T ransfer Exp erimen t The self-teaching results raise a natural follow-on question: once skills are dis- tilled for one mo del, can they b enefit another? W e apply skills generated from Haiku 3.5’s failures to Haiku 4.5 (a stronger model in the same family) and compare against skills deriv ed from Haiku 4.5’s own failures. Cross-mo del transfer pro duces a net regression of − 2.33 pp, driven by a sharp op en-ended decline ( − 6.21 pp) that ov erwhelms mo dest MC gains (+2.16 pp). In 14 T.Y. Yim et al. T able 4. Skill p ortability: Haiku 4.5 arithmetic (300 ev al questions). Own skills are generated from Haiku 4.5’s failures; cross-transfer uses skills generated from Haiku 3.5’s failures. Skills Source Baseline With Skills ∆ MC ∆ Op en ∆ Own skills (H4.5) 64.67 69.67 +5.00 +7.91 +2.48 H3.5 skills (cross-transfer) 64.67 62.33 − 2.33 +2.16 − 6.21 con trast, Haiku 4.5 with its own ASDA skills gains a consisten t +5.00 pp across b oth question types. The practical implication is direct: skills should be generated for eac h de- plo yed model indep endently . Reusing skills across mo del generations is unlik ely to yield p ositiv e returns and can actively harm the stronger mo del. At approx- imately $13 and ∼ 6 hours of w all-clock time per our model configurations, 9 studen t-sp ecific distillation is op erationally feasible at deplo yment scale. 6 Discussion and Conclusion Discussion. T wo experimental findings illuminate the underlying mec hanism of ASD A. The self-teaching result—where a mo del acting as its o wn teac her reco vers 73% of the full arithmetic gain—shows that the primary source of im- pro vemen t is not the teac her’s sup erior knowledge but the structure imposed by the distillation pro cess itself. Systematically en umerating failure patterns across a training set forces the mo del to externalize domain knowledge it already im- plicitly holds but cannot reliably apply during single-pass inference. The cross- transfer failure reinforces this view from the opposite direction: skills are not generic domain knowledge that transfers across mo dels, but artifacts of a sp e- cific mo del’s failure distribution. Applying one mo del’s skills to a stronger mo del activ ely constrains it b y the w eaker mo del’s blind sp ots. T ogether, these results c haracterize skills as mo del-sp e cific failur e r eme dies —a distinction with direct consequences for how skill libraries should b e managed in practice. F or organi- zations in regulated industries—financial services, legal, and healthcare—that rely on commercial LLMs via blac k-b o x API for kno wledge-intensiv e workflo ws, ASD A offers a concrete operational path: run the distillation pip eline once on a lab eled in-domain dataset, version-con trol the resulting skill files alongside ap- plication co de, and regenerate them when the base mo del is upgraded. The skill library then functions as an auditable, insp ectable kno wledge la yer that domain exp erts can review, compliance teams can certify , and engineering teams can up date without touching model weigh ts. 9 W arm-up pip eline cost for the arithmetic configuration (Haiku 3.5 student, Son- net 4.5 teacher), including baseline generation, failure analysis, skill synthesis, and ev aluation with skills: appro ximately $13 across ∼ 10M tokens in ∼ 6 hours wall-clock time. Costs computed at March 2026 API pricing. ASD A: Automated Skill Distillation and Adaptation for Financial Reasoning 15 ASD A’s gains are largest when failure patterns are clustered and the task has w ell-defined pro cedural structure, as with arithmetic reasoning via Program-of- Though t. When errors are more disp ersed—as in non-arithmetic tasks—the sig- nal av ailable for distillation is weak er and regression risk increases. This b ound- ary condition suggests that diagnostic quality (ho w cleanly errors cluster by t yp e and subfield) predicts adaptation success alongside ra w mo del capability . Limitations. Results are confined to F AMMA and the Claude mo del family , leav- ing op en ho w the error taxonomy , skill format, and refinement dynamics trans- fer to other domains. F AMMA’s OCR-extracted text also introduces a corpus- sp ecific confound: skills distilled from questions with gen uine OCR artifacts can enco de data-correction heuristics that misfire on correctly parsed test questions, inflating regression coun ts in wa ys that may not app ear on cleaner datasets. F utur e W ork. Cr oss-domain tr ansfer. Legal and tax reasoning are the most nat- ural next targets, structured, pro cedural, and with explicit auditability require- men ts that align with the skill library’s insp ectable format. Skil l c ompr ession. The current pip eline generates 10–30 skill files p er con- figuration. A pruning step that measures per-skill regression rates and merges narro wly scoped files could reduce regressions while sharpening routing precision. Conclusion. The central finding of this work is that failure-driven distillation can externalize laten t domain knowledge into an explicit, inspectable form that single-pass inference cannot access without modifying model w eights. Our ab- lations further rev eal that the resulting skills function as mo del-sp e cific failur e r eme dies : artifacts of a particular mo del’s failure distribution that cannot b e transferred across mo del generations, but can be cheaply regenerated for an y deplo yed model giv en only a lab eled domain dataset—at a one-time cost of ap- pro ximately $13 and six hours of wall-clock time. The skill library is not a b etter prompt: it is a new represen tational la yer b et w een the raw model and its deplo y- men t con text, one that can b e version-con trolled, audited, and regenerated for successor mo dels. Whether this lay er generalizes to other kno wledge-intensiv e domains, and ho w far self-teaching can substitute for stronger sup ervision, re- main the cen tral op en questions. Generativ e AI Disclosure The authors used generativ e AI tools to assist with man uscript editing and exp erimen tal pip eline dev elopment. All scientific conten t, in- cluding the hypotheses, exp erimen tal design, and conclusions, is entirely the work of the human authors. Disclosure of In terests. The authors hav e no comp eting in terests to declare that are relev ant to the conten t of this article. References 1. Zhang, Q., Hu, C., Upasani, S., Ma, B., Hong, F., Kaman uru, V., Rainton, J., W u, C., Ji, M., Li, H., Thakker, U., Zou, J., Olukotun, K.: Agentic Context En- gineering: Evolving Contexts for Self-Improving Language Mo dels. arXiv preprint arXiv:2510.04618 (2025) 16 T.Y. Yim et al. 2. Agra wal, L .A., T an, S., Soylu, D., Ziems, N., Khare, R., Opsahl-Ong, K., Singhvi, A., Shandilya, H., Ry an, M.J., Jiang, M., P otts, C., Sen, K., Dimakis, A.G., Stoica, I., Klein, D., Zaharia, M., Khattab, O.: GEP A: Reflective prompt evolution can outp erform reinforcemen t learning. arXiv preprint arXiv:2507.19457 (2025) 3. P an, Z., et al.: LEMMA: Learning from errors for mathematical adv ancemen t in LLMs. In: Findings of ACL 2025. arXiv preprint arXiv:2503.17439 (2025) 4. An thropic: Agen t Skills: An open standard for executable agent knowledge. https: //agentskills.io (2025) 5. Deng, S., Peng, H., Xu, J., Mao, R., Giurcˇ anean u, C.D., Liu, J.: FinMR: A Kno wledge-Intensiv e Multimo dal Benchmark for Adv anced Financial Reasoning. arXiv preprint arXiv:2510.07852 (2025) 6. Xie, Q., et al.: FinBen: A holistic financial benchmark for large language models. In: NeurIPS 2024. arXiv preprint arXiv:2402.12659 (2024) 7. Khattab, O., Potts, C., Zaharia, M.: DSPy: Compiling declarative language mo del calls into self-impro ving pipelines. arXiv preprint arXiv:2310.03714 (2023) 8. Luo, J., et al.: FinMME: Benchmark Dataset for Financial Multi-Modal Reasoning Ev aluation. arXiv preprint arXiv:2505.24714 (2025) 9. Mateega, S., Georgescu, C., T ang, D.: FinanceQA: A Benchmark for Ev aluat- ing Financial Analysis Capabilities of Large Language Models. arXiv preprint arXiv:2501.18062 (2025) 10. W ang, G., Xie, Y., Jiang, Y., Mandlek ar, A., Xiao, C., Zh u, Y., F an, L., Anand- kumar, A.: V o yager: An op en-ended embo died agent with large language models. arXiv preprint arXiv:2305.16291 (2023) 11. W u, S., Irso y , O., Lu, S., Dab er, V., Dredze, M., Gehrmann, S., Gupta, P ., Ishrat, S., Jha, A., Johnston, S., et al.: Blo ombergGPT: A large language mo del for fi- nance. arXiv preprint arXiv:2303.17564 (2023) 12. Xia, P ., et al.: SkillRL: Evolving agen ts via recursive skill-augmented reinforcemen t learning. arXiv preprint arXiv:2602.08234 (2026) 13. Xue, Z., et al.: F AMMA: A b enc hmark for financial domain multilingual m ulti- mo dal question answering. arXiv preprint arXiv:2410.04526 (2024) 14. Y ang, H., Liu, X., W ang, C.: FinGPT: Op en-source financial large language models. arXiv preprint arXiv:2306.06031 (2023) 15. Yüksekgon ül, E., Bianchi, F., Boen, J., Liu, T., Zou, J.: T extGrad: Automatic “differen tiation” via text. arXiv preprint arXiv:2406.07496 (2024) 16. Zhao, Y., Liu, H., Long, Y., Zhang, R., Zhao, C., Cohan, A.: Finance- Math: Knowledge-In tensiv e Math Reasoning in Finance Domains. arXiv preprin t arXiv:2311.09797 (2024)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment