Diffusion Models for Joint Audio-Video Generation

Multimodal generative models have shown remarkable progress in single-modality video and audio synthesis, yet truly joint audio-video generation remains an open challenge. In this paper, I explore four key contributions to advance this field. First, …

Authors: Alej, ro Paredes La Torre

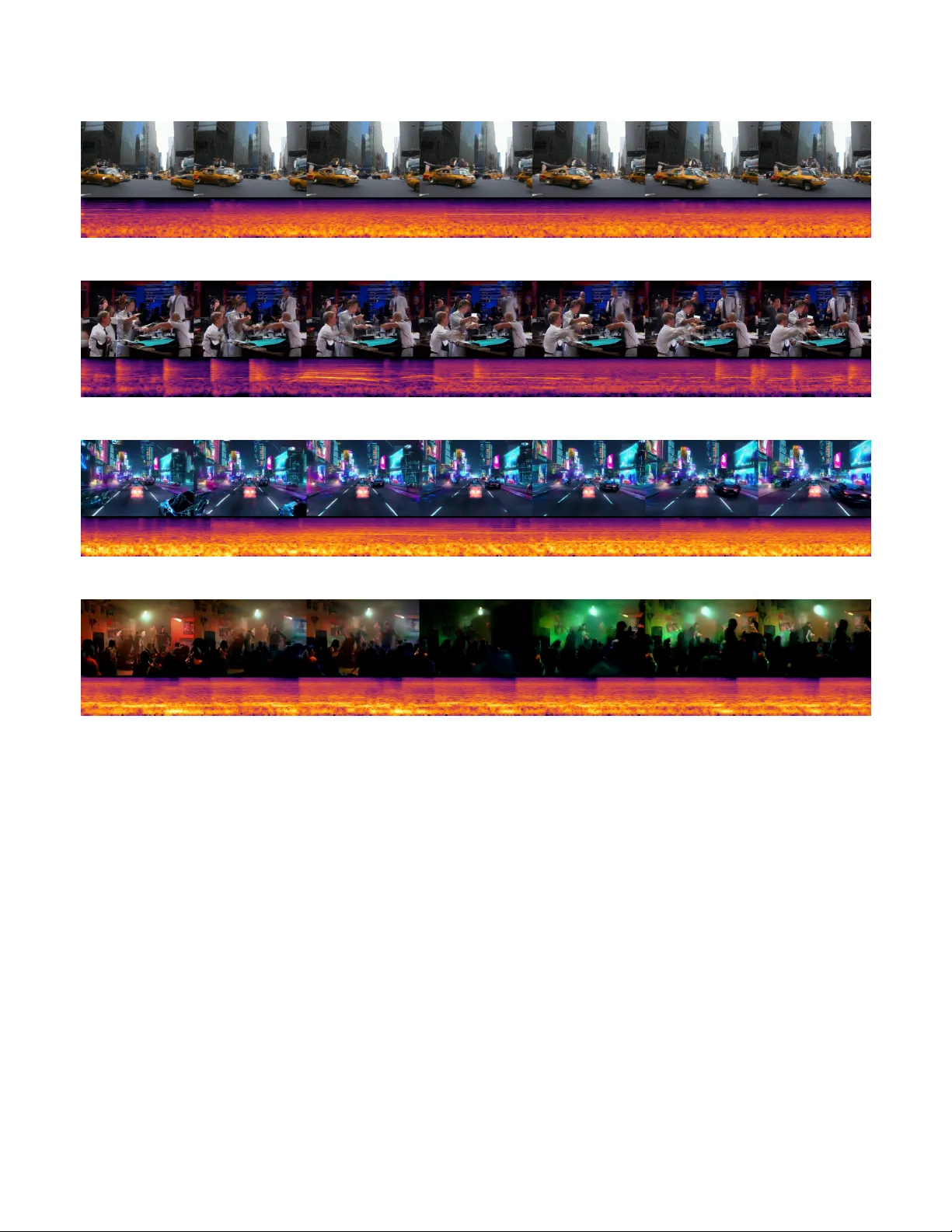

Dif fusion Models for Joint Audio-V ideo Generation Alejandro Paredes La T orre Duke University alejandro.paredeslatorre@duke.edu Abstract —Multimodal generative models have shown remark- able progr ess in single-modality video and audio synthesis, yet truly joint audio–video generation remains an open challenge. In this paper , I explore four key contrib utions to advance this field. First, I r elease two high-quality , paired audio–video datasets. The datasets consisting on 13 hours of video-game clips and 64 hours of concert performances, each segmented into consistent 34-second samples to facilitate reproducible resear ch. Second, I train the MM-Diffusion architecture from scratch on our datasets, demonstrating its ability to produce semantically coherent audio–video pairs and quantitatively evaluating align- ment on rapid actions and musical cues. Third, I in vestigate joint latent diffusion by leveraging pretrained video and audio encoder–decoders, uncovering challenges and inconsistencies in the multimodal decoding stage. Finally , I propose a sequential two-step text-to-audio-video generation pipeline: first generating video, then conditioning on both the video output and the original prompt to synthesize temporally synchronized audio. My experiments show that this modular approach yields high-fidelity generations of audio video generation. The code can be found in https://github .com/AlejandroParedesL T/audioV ideo-GenAI Index T erms —Multimodal Generation, A udio-V ideo Generation Fig. 2: T wo-step sequential generation using prompt "A liv ely street dance battle under neon lights, with dancers showing off impressive moves to an ener getic hip-hop beat. The crowd cheers ...". A clear alignment between the motion of dancing and rhythmic patterns reflected on the spectrogram can be observed. I. Intr oduction Multimodal generative models hav e recently emer ged as a vibrant research area, driv en by advances in dif fusion techniques [1]–[3]. Research on video generation models has seen general improv ements on massive long-context generation, with increasing work being done on foundational models that can effecti vely generate videos from a very wide range of topics [2], [4]. Multimodal generative models are increasingly gaining attention with the increasing attention to the research of large language models and diffusion models. Building on important contributions for video generation [4], po werful models such as Stable V ideo diffusion [5] and Sora [3] can create guided text-to-video, based on dif fusion techniques e xtensiv ely used in image generation [6], while models such as AudioLDM [7], MusicGen [8] and AudioGen [9] generate audio and music effects using very efficient techniques. These models are limited to single-modality generation, either in vision or audio. Recent efforts have been exploring the research gaps for audio-video joint generation. MM-dif fusion [10] is a notable ef fort to align audio-video joint generation by using a dual U-Net with cross-attention between audio and video pairs, later being improved by [11], which enhanced the training sequence by proposing a framework to perform dif fusion on aligned latent representations of audio video. Other techniques such as An y-to-Any [12] project the latent encoding of any input modality to a shared multimodal latent space, which has the drawback of misalignment between actions and audio. Another approach to this task is the Diffusion T ransformer [13] which has shown impresiv e performance. Building on this work, A V -DiT [14] proposes using a pre- trained image transformer with a small number of trainable parameters that are adapted for image and audio generation. The evolution of this research area leads to potential opportunities for experiments and improvements on efficient training. Some limitations on such adv ancements on the field include very high computational requirement and unconditional sampling, leaving a door open for improvement. Inspired by the exciting research in the field the follo wing contrib utions and experiments are e valuated to expand the existing research. • I release two new high-quality paired audio-video datasets: a video-game gameplay dataset (13 hours of samples segmented into consistent 34-second clips) and a musical concerts dataset (64 hours of samples segmented into consistent 34-second clips), both a vailable upon request for non-commercial research purposes. These contribution aim at encouraging the research community to continue experimenting with joint audio-video generation. • I have experimented with joint video-audio latent diffusion by employing a pretrained video encoder-decored and a pretrained audio encoder-decoder finding inconsistencies and challenges on the joint audio-video decoding step in contrast to previous techniques • I Performed a training from scratch on my new released datasets using the MM-Diffusion architecture showing consistency on video and music generation. I ev aluated the effecti ve alignment on short sounds and quick actions such is the nature of the second dataset, video games • I propose a sequential two-step text to audio-video generation procedure by lev eraging two powerful video and audio generation models. By concatenating the results from the text to video generation model [15] to the video- Fig. 1: MM-Diffusion unconditional generation. Model trained from scratch on the concert dataset (20k steps). The model is able to capture the semantics of the dataset (lights and human figures). Further training can yield better results. to-audio [16] generation model and conditioning both models on long comprehensive text prompts I demonstrate good quality generation results, furthermore le veraging negati ve prompting on Audio video alignment can improve ev en further the task. Finally I present a collection of prompt examples that show the efficac y of this method. II. Related work A. Diffusion Probabilistic Models Diffusion probabilistic models are a widely adopted model for image generation and sound generation. They have as a process a forward process which maps signal to noise and a rev erse process that maps noise back to signal [17]. This family of models hav e been proven to perform specially well on image inpainting [18], super-resolution [19], image-to-image translation [20] among others. Improving on this mechanism Denoising Diffusion implicit models [21] are proposed as a method of sampling through a DPM in an implicit way improving sampling speed. B. video generation V ideo generation has seen an exponential improvement in recent years, with the advent of high-definition diffusion-based framew orks [22], [23]. Starting with a foundational work done by [5] who present Stable V ideo Dif fusion (SVD), a latent video diffusion model for high-resolution te xt-to-video and image-to-video generation that builds upon foundational Latent Dif fusion Models (LDMs) [24] and extends prior video adaptations such as Align Y our Latents. Their three- stage training pipeline comprises a te xt-to-image pre-training using the Stable Diffusion v2.1 image LDM [5], lar ge-scale video pre-training on curated Lar ge V ideo Datasets (L VD) via a systematic data curation workflow—including optical- flow-based motion filtering and OCR-based text remov al and finally high-quality video fine-tuning on smaller, meticulously filtered datasets Architecturally , SVD augments the U-Net backbone with temporal con volutional layers and temporal self-attention modules to model spatiotemporal dynamics in the latent space, while retaining cross-attention conditioning on CLIP text embeddings. The authors lev erage continuous- time denoising diffusion objective with classifier-free guidance using a linear guidance schedule to balance sample fidelity and div ersity . Achieving state of the art results, [15] propose CogV ideoX, a large-scale te xt-to-video generation model that leverages a dif fusion transformer architecture to synthesize 10-second videos at 16 frames per second with a resolution of 768×1360 pixels. T o address the challenges of modeling high-dimensional video data, the authors introduce a 3D V ariational Autoencoder (V AE) that compresses video inputs along both spatial and temporal dimensions. This compression facilitates efficient rep- resentation learning while preserving video fidelity . The V AE employs causal con volutions to maintain temporal coherence, ensuring that each frame generation depends only on past and present information, similar to autoregressi ve models in natural language processing. T o enhance text-video alignment, the model incorporates an expert transformer equipped with expert adapti ve LayerNorm, enabling deep fusion between textual and visual modalities. C. Sound generation Recent advancements in audio generation have been marked by the de velopment of models such as AudioLDM [7], MusicGen [8], and AudioGen [9], each introducing nov el methodologies to enhance audio synthesis quality and control- lability . AudioLDM [7] employs latent diffusion models trained on CLAP embeddings to generate audio from text descriptions, achieving high-quality outputs with reduced computational demands. MusicGen [8] utilizes a single-stage transformer language model operating over compressed discrete music representations, enabling efficient and controllable music gen- eration conditioned on textual or melodic inputs. AudioGen [9] adopts an autoregressi ve transformer frame work that generates audio samples conditioned on te xt inputs, leveraging discrete audio representations and classifier-free guidance to improve fidelity and adherence to textual prompts. Collectively , these models represent significant strides in the field of text-to-audio generation, offering diverse approaches to synthesizing audio content from textual descriptions. These methods rely on efficient encoding in the latent space, improving on this task, there have been impro vements such as Music2latent [25], a novel end-to-end consistency autoencoder for latent audio compression that encodes complex- v alued STFT spectrograms into a highly compressed continuous latent space and enables single-step high-fidelity reconstruction through a consistency model trained with a unified loss function. The architecture comprises an encoder that projects input spectrograms into a lo w-dimensional latent representation, a decoder that upsamples these latents back to the original resolution, and cross-connections at all hierarchical le vels that condition the consistenc y model on intermediate encoder outputs to preserve fine-grained detail. D. A udio-video generation Joint alignment on audio video generation has seen promis- ing improv ements. [10] introduce MM-Diffusion, the first framew ork for joint audio-video generation that synthesizes semantically aligned audio–video pairs from pure Gaussian noise. The core architecture is a sequential multi-modal U- Net, comprising two coupled denoising autoencoders, one for audio spectrograms and one for video frames, that iteratively denoise noisy inputs at each diffusion timestep in lockstep. The model defines independent forw ard processes for audio and video with a shared linear noise schedule and employs a unified reverse model to predict noise residuals conditioned on both audio at time t and video at time t. The authors use an MM-Block, which consists on a 1D dilated conv olutions for audio and decomposed 2D+1D spatial–temporal con volutions for video, interleav ed with modality-specific self-attention and cross-connections to preserve fine-grained features. T o enforce cross-modal coherence, the authors propose a random- shift based attention block that bridges the audio and video subnets, enabling each modality’ s denoising process to attend dynamically to the other and thus reinforcing temporal and semantic fidelity across video and audio. Building on this contribution, [11] present a model that extends the MM-Dif fusion methodology but employs a hier - archical autoencoder to construct modality-specific low-le vel perceptual latent spaces that are perceptually equiv alent to raw signals b ut significantly reduce dimensionality , alongside a shared high-lev el semantic feature space to bridge the information gap between modalities. Concurrent work use alternati ves to dif fussion processes, such is the case of [26] which introduce UniForm, a unified multi-task dif fusion transformer that jointly generates audio and video by concatenating auditory and visual tokens within a shared latent space, thereby implicitly learning cross-modal correlations and ensuring synchronous denoising across modal- ities. Architecturally , the model replaces dual U-Net backbones with a single transformer-based backbone featuring shared weights, which reduces parameter redundancy and enhances the exploitation of intrinsic audio-visual syner gies. Finally , there have been other approaches to deal with multimodal generation, particularly video to audio. MMAudio [16] introduces a novel multimodal joint training framework designed to synthesize high-quality , temporally synchronized audio from video inputs, optionally guided by te xtual prompts. Departing from traditional single-modality training paradigms, MMAudio lev erages extensiv e audio-text datasets alongside video-audio pairs to cultiv ate a unified semantic space, thereby enhancing the richness and diversity of audio generation. Central to its architecture is a multimodal transformer that integrates video, audio, and text modalities, augmented by a conditional synchronization module that aligns video frames with audio latents at the frame lev el, ensuring precise temporal coherence. The model employs a flo w matching objectiv e during training, facilitating ef ficient and effecti ve learning across modalities. III. Methodology A. Diffusion Models Dif fusion models [27] are generati ve frame works that learn to re verse a gradual noising process applied to data. The forward process incrementally adds Gaussian noise to the data over T timesteps, transforming a clean sample x 0 into a noisy sample x T . This process is defined as: q ( x t | x t − 1 ) = N ( x t ; p 1 − β t x t − 1 , β t I ) , t ∈ [1 , T ] , (1) where β t denotes the v ariance schedule controlling the noise magnitude at each timestep [27]. The reverse process aims to denoise x t back to x 0 by learning a parameterized model p θ ( x t − 1 | x t ) , typically modeled as: p θ ( x t − 1 | x t ) = N ( x t − 1 ; µ θ ( x t , t ) , Σ θ ( x t , t )) . (2) Where µ θ denotes the Gaussian mean v alue predicted by θ . T raining in volv es minimizing the variational bound on ne gati ve log-likelihood, which simplifies to the denoising score matching objectiv e: L simple = E x 0 ,ϵ,t h ϵ − ϵ θ ( √ ¯ α t x 0 + √ 1 − ¯ α t ϵ, t ) 2 2 i , (3) where ϵ ∼ N (0 , I ) and ϵ θ predicts the added noise. B. Multi-Modal Diffusion Model (MM-Diffusion) The architecture proposed in [10] has shown strong per- formance on multi-modal audio-video joint generation. This architecture, uses two U-Net coupled denoising autoencoders. Different data types necessitate different handling on term of distributions and ensuring cross-modal coherence. Given paired data ( a , v ) from 1D audio set A and video set V , the forward processes for each modality are defined independently: q ( a t | a t − 1 ) = N ( a t ; p 1 − β t a t − 1 , β t I ) , (4) q ( v t | v t − 1 ) = N ( v t ; p 1 − β t v t − 1 , β t I ) , (5) with a shared noise schedule { β t } T t =1 to maintain synchro- nization. T o model the interdependence during generation, a unified rev erse process is employed. Instead of separate decoders, a joint model p θ av conditions the denoising of each modality on both audio and video latent v ariables: p θ av ( a t − 1 | a t , v t ) = N ( a t − 1 ; µ θ av ( a t , v t , t ) , Σ θ av ( a t , v t , t )) , (6) p θ av ( v t − 1 | v t , a t ) = N ( v t − 1 ; µ θ av ( v t , a t , t ) , Σ θ av ( v t , a t , t )) . (7) Fig. 3: T wo step generation with prompt: "A crowded street market in a vibrant city , filled with stalls of colorful fruits and handmade goods. V endors shout out their prices, while...". A clear alignment between the expected noise and the video can be observed. The training objectiv e minimizes the discrepancy between the predicted and true noise for both modalities: L θ av = E ϵ ∼N (0 , I ) h λ ( t ) ∥ ϵ − ϵ θ av ( a t , v t , t ) ∥ 2 2 i , (8) where λ ( t ) is a weighting function that can emphasize certain timesteps. This joint modeling approach ensures that the generated audio and video are not only indi vidually coherent b ut also semantically aligned, capturing the intricate correlations inherent in multi-modal data. C. Sequential procedure for A udio-V ideo Generation In order to perform text to audio-video conditional gen- eration I lev erage two large pre-trained models for video generation [15] and audio to video generation [16]. Starting with CogV ideoX which consists on a 3D Causal V ariational Autoencoder , Latent Diffusion in V ideo Space and an Expert T ransformer . The latent diffision on video space consist on a compressed representation z 0 and a diffusion process which is applied in latent space. The forward (noising) process over T timesteps is defined by q ( z t | z t − 1 ) = N z t ; p 1 − β t z t − 1 , β t I , ¯ α t = t Y s =1 (1 − β s ) . (9) The model learns a reverse denoising netw ork ϵ θ : p θ ( z t − 1 | z t , y ) = N z t − 1 ; µ θ ( z t , y , t ) , Σ θ , (10) with the objective L diff = E z 0 ,ϵ,t h ϵ − ϵ θ ( √ ¯ α t z 0 + √ 1 − ¯ α t ϵ, y , t ) 2 2 i , (11) where y denotes conditioning signals (text embeddings and ex- pert controls). The second important contribution of CogV ideoX [15]. consist on an Expert T ransformer module. Expert-specific adapters are included into T ransformer layers via Expert- Adaptiv e Layer Normalization (EA-LN). Gi ven a hidden representation h ℓ at layer ℓ and expert weights ( γ e , β e ) for expert e , EA-LN computes: EA - LN( h ℓ ; e ) = γ e ⊙ h ℓ − µ ( h ℓ ) σ ( h ℓ ) + β e . (12) Experts are activ ated via gating based on user-provided control tokens, enabling the same backbone to adapt dynamically to different modalities or control inputs. The next sequential step on this framework consist on prompting a pre-trained video to sound model guided by te xt. MMAudio [16] is selected as a state of the art model on this task. This model uses conditional flow matching (CFM), a continuous-time generation paradigm. The model learns a conditional velocity field v θ ( t, C , x ) ov er the latent space R d , where C denotes conditioning inputs (e.g., video or text), and x denotes audio latent features. The sample x 1 is obtained by integrating from Gaussian noise x 0 ∼ N (0 , I ) as: d x t dt = v θ ( t, C , x t ) , t ∈ [0 , 1] . (13) During training, the model minimizes the following flo w- matching objectiv e: L CFM = E t, x 0 , x 1 ,C h ∥ v θ ( t, C , x t ) − u ( x t | x 0 , x 1 ) ∥ 2 2 i , (14) where x t = t x 1 + (1 − t ) x 0 is a linear interpolation, and u ( x t | x 0 , x 1 ) = x 1 − x 0 is the flow velocity . 1) Sequential generation Giv en a long-form textual prompt p and classifier-free guidance scale ω , the video generation stage synthesizes a sequence of frames v 1: T in latent space: v 1: T = CogVideoXPipeline ( p ; ω ) . (15) These frames are rendered and e xported to a video file V out using a fixed frame rate (e.g., 8 fps). In the second stage the MMAudio model is used for the task of video to audio generation. The model conditions on both visual inputs (clip- le vel and frame-aligned features e xtracted via Synchformer) and textual prompts, and produces audio latent trajectories guided by a learned velocity field. Specifically , given conditioning information C = { v , p } from the video v and prompt p , and a flow-matching objective u ( · ) , the model inte grates: x t = x 0 + Z t 0 v θ ( τ , C, x τ ) dτ , with v θ ≈ u ( x t | x 0 , x 1 ) , (16) where x 0 ∼ N (0 , I ) and x 1 is the target latent. The generated audio latents are subsequently decoded into mel-spectrograms and v ocoded into wa veforms using a BigVGAN-based decoder , resulting in high-fidelity audio with frame-lev el synchronization to the video stream. IV . Experiments In this section a detailed specification on the experimental setup is revised. A. Datasets In order to have an ef fectiv e training sequence, a high quality dataset is required for the task of joint audio video generation. Filtering by the categories "concerts" and "Call of duty" present on the Y outube 8M [28] dataset, I created a crawling job to retrieve different videos hosted on youtube. Further processing of this information characterizes the videos by cropping each video into consistent 34 second clips and getting rid of introductory parts that could ha ve non-related content to the video. Further inspection on the patterns of audio to filter videos that are not related to the category was performed. (a) Concerts dataset (b) Gaming dataset Fig. 4: Side-by-side comparison of datasets: Concerts and Gaming. As a result the concerts dataset has more than 60 hours of video audio recordings, consisting on 7200 clips, whereas the dataset of Call of Duty (i.e. gaming) resulted on 1700 clips and up to 13 hours of high quality video recordings. B. T raining MM-Diffusion from scratch T o e valuate the robustness and generalization capabilities of the MM-Diffusion framework [10], I conducted training from scratch on a newly curated audio-visual dataset. The MM- Diffusion architecture comprises two coupled U-Net-based denoising autoencoders, facilitating the joint generation of temporally aligned audio and video sequences. This design enables the model to learn cross-modal correlations effecti vely , ensuring coherent audio-visual outputs. The training configuration was meticulously set to accom- modate the characteristics of the custom dataset. A linear noise scheduler was employed, with the dif fusion process encompassing 2000 steps. The video generation component was configured to produce sequences with dimensions 16 × 3 × 64 × 64 , corresponding to 16 frames of 64 × 64 RGB images. Concurrently , the audio generation component was set to output waveforms with a length of 25,600 samples, aligning with the temporal span of the video sequences. Ke y architectural parameters included the use of cross- attention mechanisms at resolutions 2, 4, and 8, with attention windows of sizes 1, 4, and 8, respecti vely . A dropout rate of 0.1 was applied to mitigate overfitting. The model utilized 128 base channels, with 64 channels dedicated to each attention head, and incorporated two residual blocks per layer . T o enhance training efficienc y and stability , mixed-precision training w as enabled via the use of FP16 computations, and scale-shift normalization was applied throughout the network. Training was conducted using a batch size of 4 across four GPUs, with a learning rate set to 0.0001. Fig. 5: Loss of MM-Diffusion training with my custom concerts dataset. Listed are the coupled Joint U-net Loss, audio loss and video Loss. The model requires man y resources to complete, the loss function is very noisy and requires careful hyperparameter setting. C. Latent MM-Diffusion Joint A udio video generation In order to improv e the training time on the MM-Diffusion process, a latent diffusion model was tested. Le veraging the architecture proposed by [11] I experiment by emplo ying the pretrained latent image dif fusion encoder -decoder from stable diffusion sd-vae-ft-mse [29] and for audio encoder-decoder I employ the pretrained audio encoder music2latent [25]. By matching the length and dimensions of these tw o encoders I le verage the original MM-Diffusion training procedure to train the model. Howe ver , because of very different architectures on the decoding step, the experiments showed poor performance. D. T wo-Step sequence for text to audio video generation For this experiment, a dataset comprising 100 prompts was generated using the LLaMA 3.2 3B language model. Each prompt, av eraging 50 words in length, was designed to encapsulate detailed descriptions suitable for audio-video generation tasks. These prompts were subsequently input into the CogV ideoX model , a state-of-the-art text-to-video diffusion model. CogV ideoX employs a 3D V ariational Autoencoder (V AE) for ef ficient spatiotemporal compression and an expert transformer architecture to enhance te xt-video alignment. The model’ s capabilities in generating coherent, long-duration videos with significant motion make it particularly suitable for this application. Follo wing video generation, each video, along with its corre- sponding prompt, was processed using the MM-Audio model. MM-Audio is designed for synchronized audio generation conditioned on video and/or text inputs. It utilizes a multimodal joint training framework, enabling it to learn from a diverse range of audio-visual and audio-text datasets. This approach Fig. 6: T wo-step sequential generation using prompt "A wild west shootout at high noon in a dusty town. Spurs clink as gunslingers face off in the...". facilitates the generation of high-quality , semantically aligned audio that maintains temporal coherence with the visual content. The inte gration of detailed prompts and adv anced modeling techniques in both video and audio generation stages contrib utes to the overall effecti veness of the system. E. Evaluation metrics The ev aluation metrics employed in this study are grounded in the Fréchet Distance (FD), which quantifies the diver gence between the distributions of real and generated feature repre- sentations in a high-dimensional embedding space. Specifically , both audio and video modalities are embedded into fix ed- length feature vectors via pretrained neural models—ResNet18 for spectrogram-based audio and R(2+1)D for video. For each modality , statistical properties including the empirical mean and cov ariance matrix of the e xtracted feature sets are computed. The Fréchet Distance is then calculated between these Gaussian approximations of real and generated data distributions, incorporating both mean and covariance via the closed-form solution: FD ( µ 1 , Σ 1 , µ 2 , Σ 2 ) = ∥ µ 1 − µ 2 ∥ 2 + Tr (Σ 1 + Σ 2 − 2(Σ 1 Σ 2 ) 1 / 2 ) This metric captures both first-order (mean) and second-order (cov ariance) discrepancies, offering a principled measure of the generativ e model’ s fidelity to the real data manifold. T ABLE I: Comparison of F AD and FVD scores for uncon- ditional and text-video generation models. F or the concerts dataset. Baseline results of [10]. Lo wer v alues are better . Method F AD ↓ FVD ↓ Unconditional Generation 9260.94 251.62 T ext-to-V ideo Generation 5020.37 206.49 [10](AIST++, dancing dataset) 10.69 75.71 V . Conclusions In this paper I presented the e xperiments performed on custom datasets using the MM-Dif fusion frame work for joint audio-video generation and proposed a two step sequencial procedure to generate audio-video using CogvideoX [15] for video generation and MM-Audio [16] for video to audio generation. The proposed implementation of latent joint audio video dif fusion prov ed to be challenging as the decoding step becomes unstable. It is also observed that training MM- Diffusion from scratch proves to be a very rource intensi ve task and requires careful hyperparameter setting. Further work on on this research direction could include improving on the latent MM-Diffusion method to achie ve State of the art result with less resources. References [1] Z. Y ang, L. Li, K. Lin, J. W ang, C.-C. Lin, Z. Liu, and L. W ang, “The dawn of lmms: Preliminary explorations with gpt-4v(ision), ” 2023. [2] NVIDIA, :, N. Agarwal, A. Ali, M. Bala, Y . Balaji, E. Barker , T . Cai, P . Chattopadhyay , Y . Chen, Y . Cui, Y . Ding, D. Dworakowski, J. Fan, M. Fenzi, F . Ferroni, S. Fidler, D. Fox, S. Ge, Y . Ge, J. Gu, S. Gururani, E. He, J. Huang, J. Huffman, P . Jannaty , J. Jin, S. W . Kim, G. Klár, G. Lam, S. Lan, L. Leal-T aixe, A. Li, Z. Li, C.-H. Lin, T .-Y . Lin, H. Ling, M.-Y . Liu, X. Liu, A. Luo, Q. Ma, H. Mao, K. Mo, A. Mousavian, S. Nah, S. Niverty , D. Page, D. Paschalidou, Z. Patel, L. Pav ao, M. Ramezanali, F . Reda, X. Ren, V . R. N. Saba vat, E. Schmerling, S. Shi, B. Stefaniak, S. T ang, L. Tchapmi, P . Tredak, W .-C. Tseng, J. V arghese, H. W ang, H. W ang, H. W ang, T .-C. W ang, F . W ei, X. W ei, J. Z. Wu, J. Xu, W . Y ang, L. Y en-Chen, X. Zeng, Y . Zeng, J. Zhang, Q. Zhang, Y . Zhang, Q. Zhao, and A. Zolkowski, “Cosmos world foundation model platform for physical ai, ” 2025. [3] Y . Liu, K. Zhang, Y . Li, Z. Y an, C. Gao, R. Chen, Z. Y uan, Y . Huang, H. Sun, J. Gao, L. He, and L. Sun, “Sora: A revie w on background, technology , limitations, and opportunities of lar ge vision models, ” 2024. [4] A. Blattmann, R. Rombach, H. Ling, T . Dockhorn, S. W . Kim, S. Fidler , and K. Kreis, “ Align your latents: High-resolution video synthesis with latent diffusion models, ” 2023. [5] A. Blattmann, T . Dockhorn, S. K ulal, D. Mendele vitch, M. Kilian, D. Lorenz, Y . Levi, Z. English, V . V oleti, A. Letts, V . Jampani, and R. Rombach, “Stable video dif fusion: Scaling latent video diffusion models to large datasets, ” 2023. [6] A. Ramesh, P . Dhariwal, A. Nichol, C. Chu, and M. Chen, “Hierarchical text-conditional image generation with clip latents, ” 2022. [7] H. Liu, Z. Chen, Y . Y uan, X. Mei, X. Liu, D. Mandic, W . W ang, and M. D. Plumbley , “ Audioldm: T ext-to-audio generation with latent diffusion models, ” 2023. [8] J. Copet, F . Kreuk, I. Gat, T . Remez, D. Kant, G. Synnaeve, Y . Adi, and A. Défossez, “Simple and controllable music generation, ” 2024. [9] F . Kreuk, G. Synnae ve, A. Polyak, U. Singer , A. Défossez, J. Copet, D. Parikh, Y . T aigman, and Y . Adi, “ Audiogen: T extually guided audio generation, ” 2023. [10] L. Ruan, Y . Ma, H. Y ang, H. He, B. Liu, J. Fu, N. J. Y uan, Q. Jin, and B. Guo, “Mm-diffusion: Learning multi-modal diffusion models for joint audio and video generation, ” 2023. [11] M. Sun, W . W ang, Y . Qiao, J. Sun, Z. Qin, L. Guo, X. Zhu, and J. Liu, “Mm-ldm: Multi-modal latent diffusion model for sounding video generation, ” 2024. [12] Z. T ang, Z. Y ang, C. Zhu, M. Zeng, and M. Bansal, “ Any-to-an y generation via composable dif fusion, ” 2023. [13] W . Peebles and S. Xie, “Scalable diffusion models with transformers, ” 2023. [14] K. W ang, S. Deng, J. Shi, D. Hatzinakos, and Y . T ian, “ A v-dit: Ef ficient audio-visual diffusion transformer for joint audio and video generation, ” 2024. [15] Z. Y ang, J. T eng, W . Zheng, M. Ding, S. Huang, J. Xu, Y . Y ang, W . Hong, X. Zhang, G. Feng, D. Y in, Y . Zhang, W . W ang, Y . Cheng, B. Xu, X. Gu, Y . Dong, and J. T ang, “Cogvideox: T ext-to-video dif fusion models with an expert transformer , ” 2025. [16] H. K. Cheng, M. Ishii, A. Hayaka wa, T . Shibuya, A. Schwing, and Y . Mitsufuji, “Mmaudio: T aming multimodal joint training for high- quality video-to-audio synthesis, ” 2025. [17] P . Dhariwal and A. Nichol, “Dif fusion models beat gans on image synthesis, ” 2021. [18] A. Lugmayr , M. Danelljan, A. Romero, F . Y u, R. T imofte, and L. V . Gool, “Repaint: Inpainting using denoising dif fusion probabilistic models, ” 2022. [19] Z. Qiu, H. Y ang, J. Fu, and D. Fu, “Learning spatiotemporal frequency- transformer for compressed video super-resolution, ” 2022. [20] C. Saharia, W . Chan, H. Chang, C. A. Lee, J. Ho, T . Salimans, D. J. Fleet, and M. Norouzi, “Palette: Image-to-image dif f usion models, ” 2022. [21] J. Song, C. Meng, and S. Ermon, “Denoising diffusion implicit models, ” 2022. [22] J. Ho, W . Chan, C. Saharia, J. Whang, R. Gao, A. Gritsenko, D. P . Kingma, B. Poole, M. Norouzi, D. J. Fleet, and T . Salimans, “Imagen video: High definition video generation with diffusion models, ” 2022. [23] R. V illegas, M. Babaeizadeh, P .-J. Kindermans, H. Moraldo, H. Zhang, M. T . Saffar , S. Castro, J. Kunze, and D. Erhan, “Phenaki: V ariable length video generation from open domain textual description, ” 2022. [24] R. Rombach, A. Blattmann, D. Lorenz, P . Esser, and B. Ommer , “High- resolution image synthesis with latent diffusion models, ” 2022. [25] M. Pasini, S. Lattner, and G. Fazekas, “Music2latent: Consistency autoencoders for latent audio compression, ” 2024. [26] L. Zhao, L. Feng, D. Ge, R. Chen, F . Y i, C. Zhang, X.-L. Zhang, and X. Li, “Uniform: A unified multi-task diffusion transformer for audio- video generation, ” 2025. [27] J. Ho, A. Jain, and P . Abbeel, “Denoising dif fusion probabilistic models, ” 2020. [28] S. Abu-El-Haija, N. K othari, J. Lee, P . Natsev , G. T oderici, B. V aradarajan, and S. V ijayanarasimhan, “Y outube-8m: A large-scale video classification benchmark, ” 2016. [29] R. Rombach, A. Blattmann, D. Lorenz, P . Esser, and B. Ommer , “High- resolution image synthesis with latent dif fusion models, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition (CVPR) , pp. 10684–10695, June 2022. Appendix MM-Diffusion T rained from Scratch Shown below are more examples for MM-Dif fusion. Figure A1: MM-Dif fusion training from scratch on the concert dataset. Sequential audio-video generation

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment