Attribution Upsampling should Redistribute, Not Interpolate

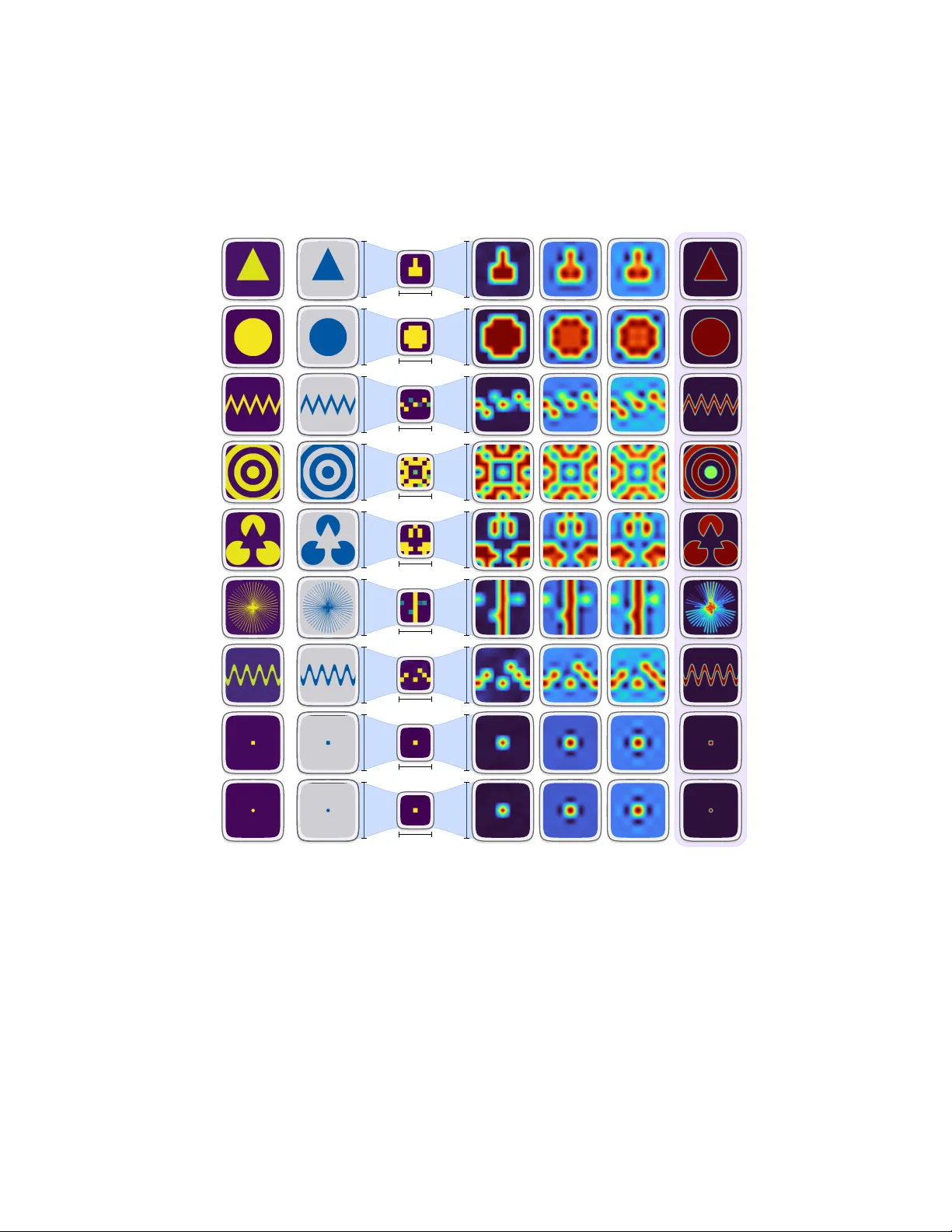

Attribution methods in explainable AI rely on upsampling techniques that were designed for natural images, not saliency maps. Standard bilinear and bicubic interpolation systematically corrupts attribution signals through aliasing, ringing, and bound…
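The ringing mentioned above can be reproduced in one dimension. The sketch below makes two stand-in choices. It uses the Keys cubic convolution kernel (a = -0.5) as the 1D analogue of bicubic interpolation. It also uses a hypothetical `redistribute` helper that splits each coarse cell's value evenly among its sub-cells. This is only an illustration of interpolation versus redistribution, not the authors' method, and it does not cover the aliasing or boundary effects the abstract also lists.

```python
import math

def keys_kernel(x, a=-0.5):
    """Keys cubic convolution kernel: the 1D building block of bicubic interpolation."""
    x = abs(x)
    if x <= 1:
        return (a + 2) * x**3 - (a + 3) * x**2 + 1
    if x < 2:
        return a * x**3 - 5 * a * x**2 + 8 * a * x - 4 * a
    return 0.0

def cubic_upsample(signal, factor):
    """Interpolate: re-evaluate a smooth curve through the samples at finer positions."""
    n = len(signal)
    out = []
    for i in range(n * factor):
        # Half-pixel-aligned source coordinate of output sample i.
        src = (i + 0.5) / factor - 0.5
        base = math.floor(src)
        value = 0.0
        for k in range(-1, 3):
            j = min(max(base + k, 0), n - 1)  # replicate edge samples at the borders
            value += signal[j] * keys_kernel(src - (base + k))
        out.append(value)
    return out

def redistribute(signal, factor):
    """Hypothetical redistribution: split each coarse cell's attribution evenly over its sub-cells."""
    return [v / factor for v in signal for _ in range(factor)]

attribution = [0.0, 0.0, 1.0, 0.0, 0.0]  # a single non-negative attribution peak
interp = cubic_upsample(attribution, 4)
redist = redistribute(attribution, 4)

print(min(interp))                    # negative: ringing (undershoot) around the peak
print(sum(redist), sum(attribution))  # totals match: attribution mass is conserved
```

Interpolation treats the map as samples of a smooth function to be re-evaluated. Redistribution instead treats each value as a quantity to be divided among finer cells. For that reason, only the redistribution keeps the total fixed and cannot produce negative values from a non-negative input.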

Authors: Vincenzo Buono, Peyman Sheikholharam Mashhadi, Mahmoud Rahat