Power Analysis for Prediction-Powered Inference

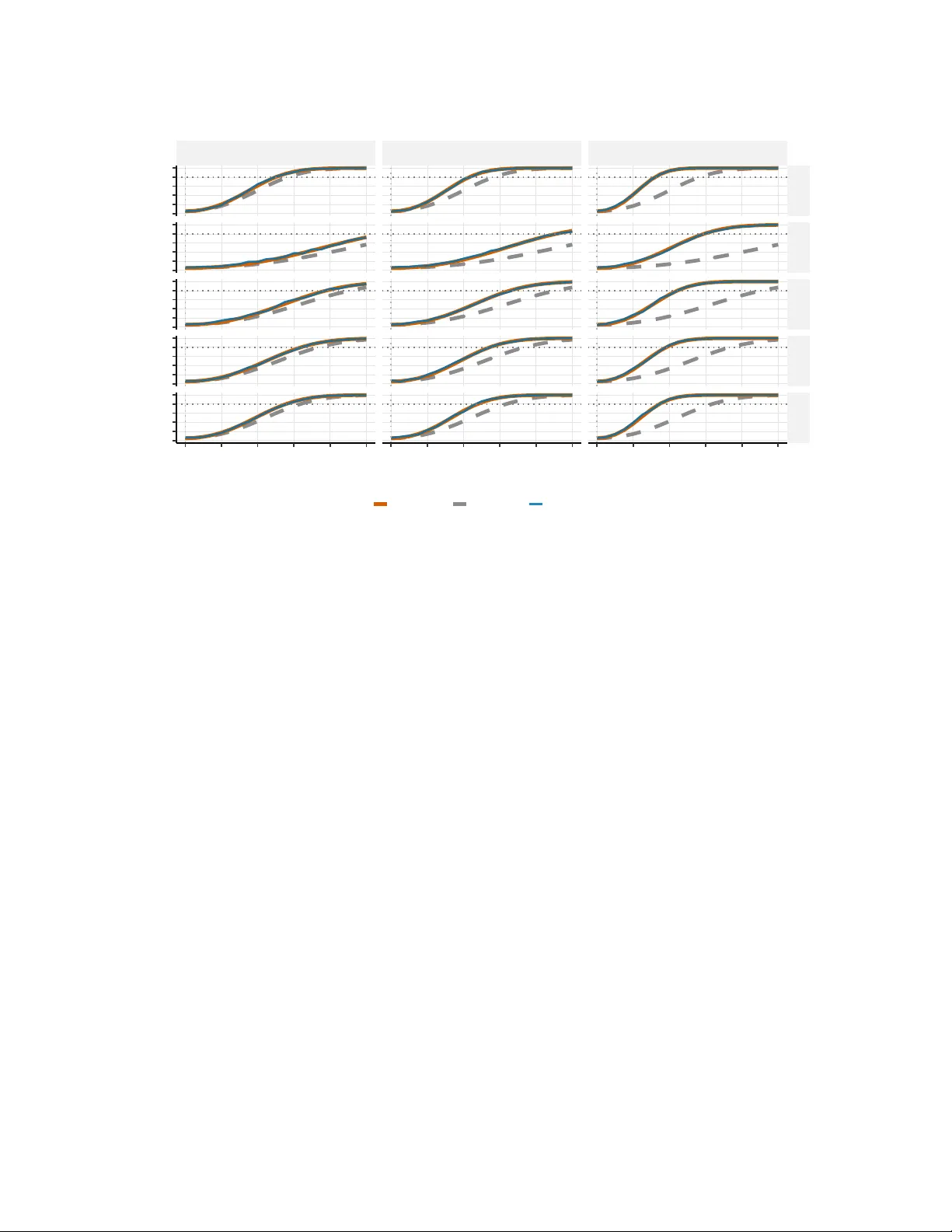

Modern studies increasingly leverage outcomes predicted by artificial intelligence and machine learning (AI/ML) models, and recent work, such as prediction-powered inference (PPI), has developed valid downstream statistical inference procedures. Howe…

Authors: Yiqun T. Chen, Moran Guo, Shengy Li