The PokeAgent Challenge: Competitive and Long-Context Learning at Scale

We present the PokeAgent Challenge, a large-scale benchmark for decision-making research built on Pokémon's multi-agent battle system and expansive role-playing game (RPG) environment. Partial observability, game-theoretic reasoning, and long-horizon…

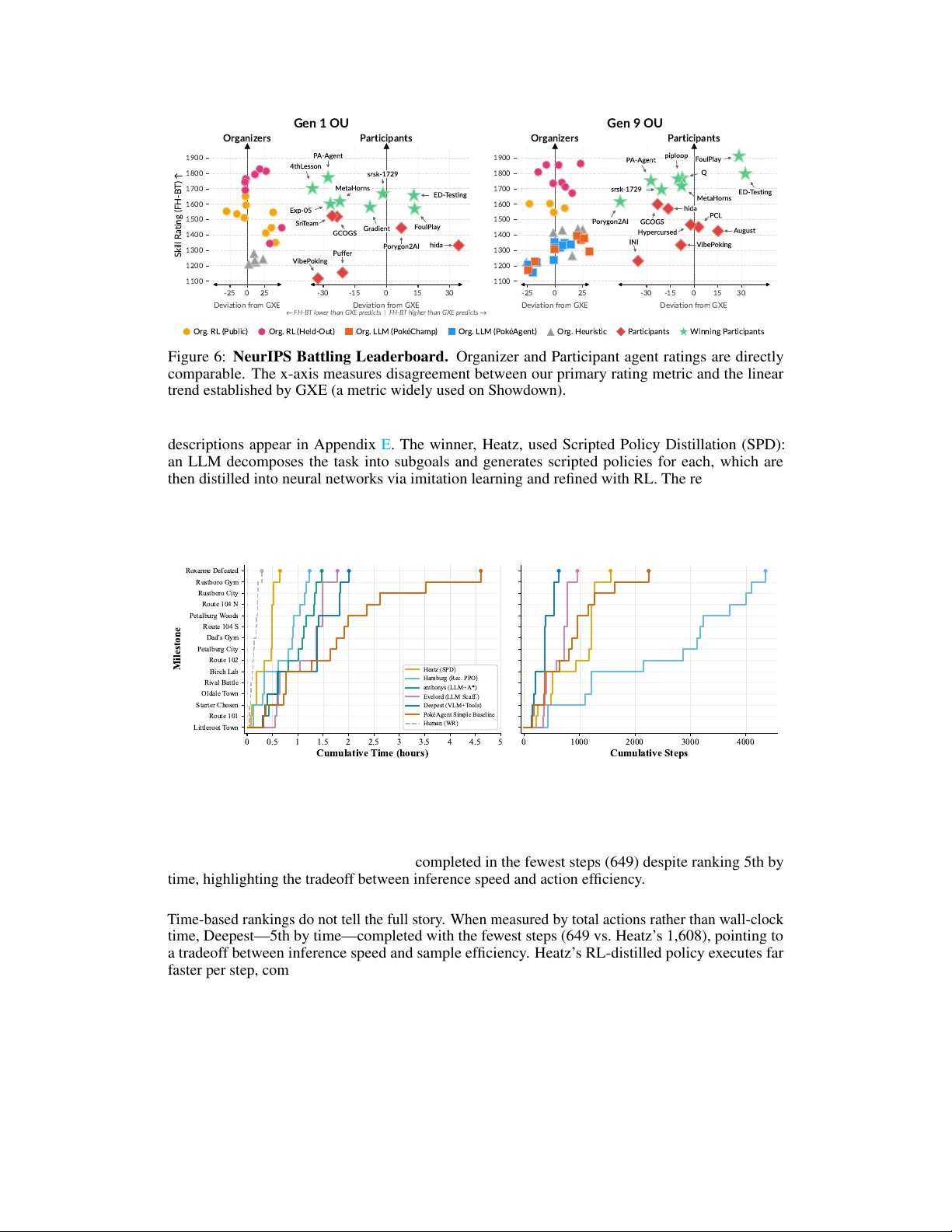

Authors: Seth Karten, Jake Grigsby, Tersoo Upaa