RSGen: Enhancing Layout-Driven Remote Sensing Image Generation with Diverse Edge Guidance

Diffusion models have significantly mitigated the impact of annotated data scarcity in remote sensing (RS). Although recent approaches have successfully harnessed these models to enable diverse and controllable Layout-to-Image (L2I) synthesis, they s…

Authors: Xianbao Hou, Yonghao He, Zeyd Boukhers

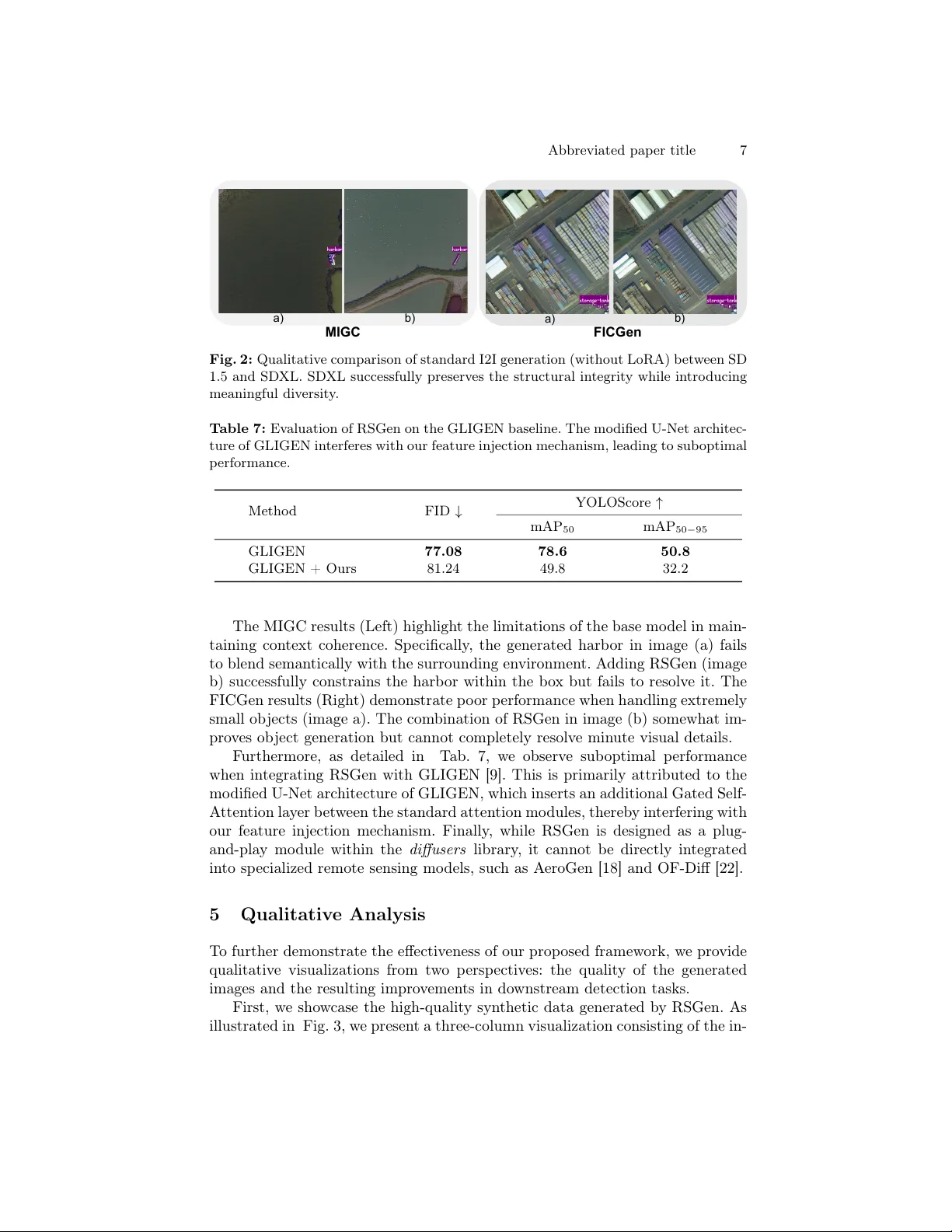

Xianbao Hou 1,2,⋆, Yonghao He 2,⋆,†, Zeyd Boukhers 3, John See 4, Hu Su 5, Wei Sui 2, and Cong Yang 1

1 School of Future Science and Engineering, Soochow University, Suzhou, China
2 D-Robotics, Beijing, China
3 Fraunhofer Institute for Applied Information Technology, Sankt Augustin, Germany
4 School of Mathematical and Computing Sciences, Heriot-Watt University Malaysia, Putrajaya, Malaysia
5 Institute of Automation, Chinese Academy of Sciences, Beijing, China

xbhou2024@stu.suda.edu.cn, wei.sui@d-robotics.cc, cong.yang@suda.edu.cn

⋆ Equal contribution. † Project leader. Corresponding authors.

[Figure 1 panels: CC-Diff, MIGC, FICGen; (a) original, (b) + RSGen (ours)]

Fig. 1: Visualization of controllability for existing L2I methods (MIGC [38], CC-Diff [35], FICGen [24]) and the same methods equipped with RSGen. While the original methods (a) struggle to adhere to the specified bounding boxes, integrating our module (b) significantly enhances instance alignment and control precision.

Abstract. Diffusion models have significantly mitigated the impact of annotated data scarcity in remote sensing (RS). Although recent approaches have successfully harnessed these models to enable diverse and controllable Layout-to-Image (L2I) synthesis, they still suffer from limited fine-grained control and fail to strictly adhere to bounding box constraints. To address these limitations, we propose RSGen, a plug-and-play framework that leverages diverse edge guidance to enhance layout-driven RS image generation.
Specifically, RSGen employs a progressive enhancement strategy: 1) it first enriches the diversity of edge maps composited from retrieved training instances via Image-to-Image generation; and 2) subsequently utilizes these diverse edge maps as conditioning for existing L2I models to enforce pixel-level control within bounding boxes, ensuring the generated instances strictly adhere to the layout. Extensive experiments across three baseline models demonstrate that RSGen significantly boosts the capabilities of existing L2I models. For instance, with CC-Diff on the DOTA dataset for oriented object detection, we achieve remarkable gains of +9.8/+12.0 in YOLOScore (mAP50/mAP50-95) and +1.6 in mAP on the downstream detection task. Our code will be publicly available: https://github.com/D-Robotics-AI-Lab/RSGen

Keywords: Layout-to-Image · Remote Sensing · Object Detection

1 Introduction

High-quality remote sensing (RS) data is crucial for improving downstream detection tasks, going beyond specific algorithmic enhancements [1]. As a result, many studies have investigated the potential of augmenting training sets with additional generated data [22, 23, 30, 35]. Traditional methods mainly use text prompts to control the semantics of the generated images [11, 16]. However, a significant limitation of these approaches is that the generated data often requires manual annotation or additional processing to be effectively used. To mitigate this problem, some studies have focused on enhancing controllability by introducing dense guidance [22, 31]. Unfortunately, these strategies often compromise the diversity of the generated instances.

Recently, layout-to-image (L2I) generation [25, 37, 38] has been introduced to achieve a balance between diversity and controllability by using specified object bounding boxes as spatial conditions.
While these methods allow for control at the box level and enhance overall diversity, the precision of this control is still limited. As a result, there is often a misalignment between the generated instances and their corresponding bounding boxes (Fig. 1 (a)). Consequently, even though the generated images may look visually realistic, the corresponding annotations tend to be inaccurate, which reduces their effectiveness for training purposes. In addition to issues with spatial misalignment, current L2I methods do not fully utilize the intrinsic information present in the training samples. They mainly depend on bounding boxes and class labels, neglecting the finer edge details that can contribute to better results.

To address these issues, we introduce RSGen. RSGen consists of two modules: Edge2Edge and L2I FGControl (Frequency-Gated Control with Spatially Gated Injection). The rationale behind RSGen is to incorporate edge maps as auxiliary conditions alongside box-level guidance to achieve pixel-level precision. This ensures that the generated instances align strictly with the specified bounding boxes and improves the quality and reliability of the resulting annotations, as demonstrated in Fig. 1 (b). Specifically, Edge2Edge retrieves Holistically-Nested Edge Detection (HED) [28] edge maps conditioned on the input boxes and performs Image-to-Image (I2I) generation with an SDXL [17] model fine-tuned via Low-Rank Adaptation (LoRA) [7], where diversity is promoted by varying seeds and text prompts for downstream data augmentation [3, 6]. Furthermore, the L2I FGControl module separates structure from semantics. FGControl extracts high-frequency structural residual features by filtering out low-frequency components, and then injects these residuals only within the bounding boxes via a spatially gated mechanism to keep the guidance spatially confined.
Experiments conducted on three baselines (MIGC [38], CC-Diff [35], FICGen [24]) show consistent improvements in YOLOScore and mean Average Precision (mAP). For example, with CC-Diff on the DIOR-RSVG [33] dataset, we observe enhancements of +3.3/+6.5 in YOLOScore (mAP50/mAP50-95), along with a +0.3 increase in mAP. The improvements are even more significant on the DOTA-v1.0 [26] dataset, which focuses on oriented object detection, producing notable increases of +9.8/+12.0 in YOLOScore and +1.6 in mAP.

In summary, our key contributions are as follows:

- We propose RSGen, a plug-and-play framework that enhances L2I models with fine-grained edge guidance. By providing pixel-level control, RSGen fully harnesses the potential of L2I generation.
- To tackle the challenge of maintaining generation diversity while utilizing strong pixel-level control, we introduce Edge2Edge. This approach generates diverse edge priors at the source using varied prompts and seeds. Following this, FGControl incorporates these edges as guidance, effectively separating structure from semantics. This allows for precise control through spatial and frequency gating mechanisms.
- Extensive experiments demonstrate the effectiveness and generalizability of RSGen. It significantly improves spatial alignment precision and enhances downstream detection performance for both horizontal bounding boxes (HBB) and oriented bounding boxes (OBB).

2 Related Work

This section reviews the literature that forms the basis of our method, focusing on two areas: L2I generation and generative data augmentation.

2.1 Layout-to-Image Generation

L2I generation aims to create images based on input bounding boxes and category labels. Early training-free approaches [2, 9, 27] intervene in the attention mechanisms of diffusion models [17, 20] to restrict generation to specific regions, but they suffer from limited controllability.
To address these issues, subsequent training-based methods explicitly integrate layout guidance into the model architecture. For example, GLIGEN [14] introduces gated attention to combine spatial information with visual features, achieving strong controllability. In remote sensing, AeroGen [23] advances this approach by enabling the generation of oriented bounding boxes, while CC-Diff [35] enhances coherence between foreground instances and backgrounds. OF-Diff [30] utilizes prior masks for improved control, but acquiring these masks can be challenging compared to edge maps, especially for amorphous categories like golf courses. Furthermore, relying solely on simple spatial transformations can limit diversity.

RSGen addresses these limitations in two ways. First, it employs edge guidance that is robust to boundary ambiguity, effectively managing amorphous categories. Second, it utilizes a diffusion model to enhance the diversity of edge priors. By leveraging these diverse edges, our method guides the generation process to achieve both high diversity and precise structural control.

2.2 Generative Data Augmentation

Generative data augmentation has become a popular strategy for addressing data scarcity during model training. By leveraging the generative capabilities of diffusion models, this approach generates novel samples to enrich existing datasets, and it is widely adopted across tasks such as classification [8], object detection [23, 30], and segmentation [36]. Some methods [3, 6] employ multi-stage, training-free pipelines, which efficiently avoid the need for model training. However, these methods incur high computational costs due to complex post-processing requirements. In contrast, L2I methods [35] fine-tune diffusion models to generate instances directly within bounding boxes, which reduces complexity compared to multi-stage processing.
This approach facilitates a streamlined augmentation pipeline where generated samples are directly merged into the training dataset. Occasionally, a filtering step is incorporated to ensure quality. Similarly, our RSGen directly augments the training set, but keeps the base L2I model frozen and only trains the lightweight FGControl module and LoRA [7], resulting in minimal resource consumption.

3 Method

In this section, we start by reviewing the fundamentals of Latent Diffusion Models (LDMs) [20]. Next, we provide a detailed overview of the RSGen framework (Fig. 2), which consists of two key components: the Edge2Edge module, designed for generating diverse edge maps, and the L2I FGControl module, which incorporates edge guidance to ensure accurate layout alignment. Together, these components address the challenges of limited diversity and spatial misalignment in remote sensing image generation.

[Figure 2 diagram: (a) Edge2Edge: training dataset, reference edge, LoRA, SDXL U-Net steps 1 to N, Scale-Balanced Region Attention (attention map, region loss, latent guidance), generated edge; (b) L2I FGControl: global prompt ("This is an aerial image of…"), layout and class (CLS) phrase tokens, CLIP, L2I model, FGControl with high-pass filter and spatially gated control residual; trainable and frozen components are marked]

Fig. 2: Overview of RSGen, which consists of the Edge2Edge module (a) and the L2I FGControl module (b), where "CLS" denotes the class label. The Edge2Edge module enhances the diversity of retrieved edge maps through an I2I process. Subsequently, these diverse edges and layout inputs guide the L2I FGControl module, which interacts with the base L2I model to achieve precise pixel-level control. Our framework significantly increases structural diversity while ensuring fine-grained spatial alignment.
3.1 Preliminary

Denoising Diffusion Probabilistic Models [5] synthesize images by iteratively removing Gaussian noise directly in pixel space. In contrast, LDMs [20] significantly accelerate this process within a compressed latent space constructed by a Variational Autoencoder (VAE) [12]. Specifically, an input image $x$ is first encoded into a latent representation $z_0 = \mathcal{E}(x)$ by a pre-trained VAE encoder $\mathcal{E}$. Gaussian noise is then injected into $z_0$ to yield a noisy state $z_t = \alpha_t z_0 + \sigma_t \epsilon$, with $\epsilon \sim \mathcal{N}(0, I)$, where $\alpha_t$ and $\sigma_t$ are coefficients determined by the noise schedule. A denoising U-Net [21] $\epsilon_\theta$ is optimized to estimate the added noise $\epsilon$ conditioned on the timestep $t$ ($t \in \{1, \dots, T\}$) and auxiliary context $c$ (e.g., layouts and text). The LDM loss, denoted as $\mathcal{L}_{LDM}$, minimizes the Mean Squared Error (MSE) between the predicted noise and the actual noise:

$$\mathcal{L}_{LDM} = \mathbb{E}_{z_0,\, \epsilon \sim \mathcal{N}(0, I),\, t,\, c} \left[ \lVert \epsilon - \epsilon_\theta(z_t, t, c) \rVert_2^2 \right]. \tag{1}$$

3.2 Edge2Edge

As highlighted in Fig. 2 (a), we construct a database of reference edges using HED [28] and retrieve the optimal candidates based on the class and aspect ratio of each input bounding box. The retrieved edges are then assembled into a composite edge map, which serves as the input for a fine-tuned SDXL [17] model within an I2I process. To ensure that the generated edge map aligns with the specified bounding boxes, we incorporate a novel Scale-Balanced Region Attention mechanism. Additionally, by utilizing prompts derived from input instance classes and various random seeds, this module generates a diverse edge map, as illustrated in Fig. 3.

Fig. 3: Visualization of diverse edge maps generated by the Edge2Edge module. Our method employs distinct random seeds to generate varied structural details within the specified bounding boxes, significantly enhancing the diversity of the structural priors.

LoRA Fine-tuning and Scale-Balanced Region Attention. The base SDXL model is fine-tuned via LoRA [7] to align with the visual domain of HED edges. By utilizing composite maps assembled from retrieved references as input, the model parameters are optimized to shift the distribution toward the target HED style. This parameter-efficient strategy ensures that the generated edges maintain high fidelity to the desired structural patterns without the computational cost of full-parameter training.

[Figure 4 panels: ControlNet, ControlNet-XS, FGControl]

Fig. 4: Comparison of ControlNet [34], ControlNet-XS [32], and our FGControl. Standard global control methods suffer from feature entanglement, causing background chaos. Conversely, FGControl strictly confines high-frequency structural guidance within the layout bounding boxes, achieving fine-grained local control without interfering with the global semantic synthesis.

However, although the fine-tuned SDXL model captures the visual characteristics of HED edges, standard I2I generation still lacks explicit spatial constraints. This limitation leads to two critical issues: 1) Semantic Misalignment, where a single global prompt fails to restrict semantic attributes to their corresponding bounding boxes; and 2) Boundary Overflow, where generated structural edges drift beyond the limits of the input layout boxes. Such unconstrained generation produces noisy priors that significantly degrade the performance of the subsequent L2I FGControl module.

Inspired by training-free layout control methods [2, 27], we propose Scale-Balanced Region Attention, which intervenes in the U-Net [21] denoising process to enforce area-aware constraints, effectively mitigating the optimization bias toward larger bounding boxes.

(i) Latent Guidance via Region Loss.
In the early timesteps of the denoising process, we perform iterative latent updates to align the semantic concepts with their target spatial regions. To achieve this, we construct a comprehensive text prompt by concatenating the class names of all layout instances into the format: "hed edge map. [class 1], [class 2]...". Subsequently, using the CLIP [18] text encoder, we tokenize the prompt and identify the specific token indices $K_i$ corresponding to the class name of each bounding box $b_i$.

During the U-Net forward pass, we extract both cross-attention and self-attention maps from designated low-resolution layers where layout features are concentrated. For cross-attention, we obtain the aggregated spatial attention map $A_i \in \mathbb{R}^{H \times W}$ ($H$, $W$ are the latent dimensions) for instance $i$ by summing over all tokens $k \in K_i$ and averaging across all attention heads. For self-attention, $A_i$ is directly derived from the spatial region interactions without text tokens.

For regional alignment, let $M_i^{fg} \in \{0, 1\}^{H \times W}$ be the binary mask of bounding box $b_i$, and $M^{bg} \in \{0, 1\}^{H \times W}$ be the global background mask. We compute the mean foreground activation ($A_i^{fg}$) and background leakage ($A_i^{bg}$):

$$A_i^{fg} = \frac{\sum_{x,y} A_i^{(x,y)} \odot M_i^{fg,(x,y)}}{\sum_{x,y} M_i^{fg,(x,y)} + \epsilon}, \qquad A_i^{bg} = \frac{\sum_{x,y} A_i^{(x,y)} \odot M^{bg,(x,y)}}{\sum_{x,y} M^{bg,(x,y)} + \epsilon}, \tag{2}$$

where $\odot$ denotes element-wise multiplication, $(x, y)$ represents the spatial pixel coordinates, and $\epsilon = 10^{-6}$ is a small constant. The region loss $\mathcal{L}_{reg}$ is an Intersection over Union (IoU)-inspired objective that maximizes the foreground response and penalizes background leakage. Computed per step and averaged across the targeted layers, it is defined as:

$$\mathcal{L}_{reg} = \sum_{i=1}^{N} \left( 1 - \frac{A_i^{fg}}{A_i^{fg} + N \cdot A_i^{bg}} \right)^{2}, \tag{3}$$

where $N$ is the total number of bounding boxes.
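Eqs. (2) and (3) can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `region_loss`, the array shapes, and the small guard added to the denominator of the ratio are choices made here, and the background mask is assumed to be the complement of all boxes. In a real pipeline the loss would need to be written in an autodiff framework, since its gradient with respect to the latent is what drives the update.

```python
import numpy as np

EPS = 1e-6  # small constant from Eq. (2)


def region_loss(attn_maps, fg_masks, bg_mask):
    """IoU-inspired region loss of Eq. (3), illustrative version.

    attn_maps: (N, H, W) aggregated attention map A_i for each instance
    fg_masks:  (N, H, W) binary box masks M_i^fg
    bg_mask:   (H, W)    binary global background mask M^bg (assumed complement of all boxes)
    """
    n = attn_maps.shape[0]
    # Mean foreground activation per instance (Eq. 2, left).
    fg = (attn_maps * fg_masks).sum(axis=(1, 2)) / (fg_masks.sum(axis=(1, 2)) + EPS)
    # Mean background leakage per instance (Eq. 2, right); bg_mask broadcasts over N.
    bg = (attn_maps * bg_mask).sum(axis=(1, 2)) / (bg_mask.sum() + EPS)
    # Background leakage is scaled by N as in Eq. (3).
    # The extra EPS is an assumption added here to avoid 0/0; it is not part of Eq. (3).
    ratio = fg / (fg + n * bg + EPS)
    return float(((1.0 - ratio) ** 2).sum())
```

With attention concentrated entirely inside each box the loss is close to 0, and with attention lying entirely on the background it approaches N, which is the behaviour the objective is meant to penalize.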
Crucially, scaling background leakage by $N$ counters the area imbalance between instances and the global background, preventing it from dominating optimization. With this loss, we compute its gradient with respect to the input noisy latent $z_t$ and perform a gradient descent update: $z_t \leftarrow z_t - \lambda \nabla_{z_t} \mathcal{L}_{reg}$, where $\lambda$ is a step size dynamically decreasing from 8 to 2. This updated latent in turn shapes the subsequent attention maps. Since the spatial Query features ($Q$) in the cross-attention layers are directly projected from the latent $z_t$, shifting $z_t$ along the negative gradient geometrically translates the high-activation visual features. When the modified $z_t$ is fed back into the U-Net, the resulting attention maps naturally concentrate the semantic response within the bounding boxes.

(ii) Region-Masked Attention. While the early timesteps establish the global layout via latent updates, the later steps require strict spatial confinement. To prevent boundary overflow, the attention computation is modified by introducing a masking matrix $M$ to both cross-attention and self-attention layers. Based on the input layout, $M_{ij}$ is set to 0 if the query-key pair $(i, j)$ aligns with the same instance, and $-\infty$ otherwise. The attention score is then reformulated:

$$\mathrm{Attention}(Q, K, V) = \mathrm{Softmax}\left( \frac{QK^{T}}{\sqrt{d}} + M \right) V. \tag{4}$$

By adding this large negative mask before the Softmax operation, information flow between unrelated regions is blocked. The design intentionally forces the model to focus on adhering to the strict edge constraints within the specified bounding boxes.

3.3 L2I FGControl

To translate the diverse edge priors into precise pixel-level control, we introduce the L2I FGControl module (Fig. 2 (b)). While retaining the lightweight efficiency of ControlNet-XS [32], FGControl fundamentally addresses the issues of standard global injections, which often cause background chaos and overwhelming structural control in L2I tasks, as shown in Fig. 4. To explicitly decouple structure from semantics, FGControl incorporates the generated edge maps as auxiliary conditions and utilizes a high-pass filter to extract high-frequency structural residuals. Through a spatially gated mechanism, these residuals are explicitly injected into the base model. This interaction ensures that the pixel-level structural guidance is strictly confined within the layout bounding boxes without affecting the background.

Table 1: Quantitative comparison of baseline models with and without our proposed RSGen on DIOR-RSVG and DOTA datasets. Models equipped with RSGen achieve substantial improvements in layout consistency (YOLOScore) alongside highly competitive generation fidelity (FID).

| Method | DIOR-RSVG (HBB) FID ↓ | DIOR-RSVG (HBB) YOLOScore mAP50 ↑ | DIOR-RSVG (HBB) YOLOScore mAP50-95 ↑ | DOTA (OBB) FID ↓ | DOTA (OBB) YOLOScore mAP50 ↑ | DOTA (OBB) YOLOScore mAP50-95 ↑ |
|---|---|---|---|---|---|---|
| MIGC [38] | 79.32 | 63.2 | 38.4 | 66.80 | 53.0 | 30.0 |
| + Ours | 85.04 | 68.7 | 45.8 | 68.07 | 55.1 | 37.7 |
| CC-Diff [35] | 66.75 | 66.8 | 41.2 | 47.72 | 57.7 | 34.0 |
| + Ours | 68.12 | 70.1 | 47.7 | 46.86 | 67.5 | 46.0 |
| FICGen [24] | 74.26 | 64.9 | 39.7 | 48.46 | 74.0 | 49.3 |
| + Ours | 73.26 | 65.7 | 42.2 | 48.56 | 75.2 | 53.2 |

(i) Frequency Gated Structure Decoupling. The fundamental goal of FGControl is to provide precise geometric edge guidance without interfering with the semantic synthesis of the base model. Since structural edges naturally correspond to high-frequency features, we propose a frequency-aware decoupling strategy to explicitly extract sharp edge features. Given a control residual $h_{res}$ from the control branch, we define the intermediate residual map as $\Delta h = \mathrm{ZeroConv}(h_{res})$. We then apply a Fast Fourier Transform ($\mathcal{F}$) and a high-pass filter $\mathcal{H}$ to extract high-frequency structural edges:

$$\Delta h_{high} = \mathcal{F}^{-1}\left( \mathcal{F}(\Delta h) \odot \mathcal{H} \right). \tag{5}$$

Specifically, $\mathcal{H}$ zeroes out a central $\frac{H}{d} \times \frac{W}{d}$ low-frequency band ($d = 16$).
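The high-pass step of Eq. (5) can be illustrated with NumPy's FFT routines. The sketch below is an assumption-laden approximation rather than the paper's code: the function name `high_pass_residual` is hypothetical, the input is a single real-valued 2D map, and the low-frequency band is defined symmetrically around the zero frequency, so its width is only roughly H/d by W/d. The exact band shape used by the authors is not specified in the text.

```python
import numpy as np


def high_pass_residual(delta_h, d=16):
    """Zero a low-frequency band of a 2D map and return the high-frequency part.

    delta_h: (H, W) real-valued residual map
    d:       band divisor; the removed band spans roughly H/d x W/d frequencies
    """
    h, w = delta_h.shape
    spectrum = np.fft.fft2(delta_h)

    # Integer frequency indices in FFT order (0 is the DC component).
    ky = np.fft.fftfreq(h) * h
    kx = np.fft.fftfreq(w) * w

    # Symmetric low-frequency band around DC (assumed shape of the filter).
    low_band = (np.abs(ky)[:, None] <= (h // d) / 2) & (np.abs(kx)[None, :] <= (w // d) / 2)
    high_pass = np.where(low_band, 0.0, 1.0)

    # The mask is symmetric in frequency, so the inverse transform of a real
    # input is real up to floating-point error; drop the residual imaginary part.
    return np.fft.ifft2(spectrum * high_pass).real
```

A constant map, which contains only the zero frequency, is mapped to zeros, while a pixel-level checkerboard, which lives at the highest frequency, passes through unchanged.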
We then apply soft thresholding ($\tau = 0.05$) to eliminate negligible noise, yielding the purified structural residual $\Delta h_{str}$:

$$\Delta h_{str} = \mathrm{sgn}(\Delta h_{high}) \odot \max\left( |\Delta h_{high}| - \tau,\; 0 \right). \tag{6}$$

By completely discarding the low-frequency features, FGControl strictly dictates structural layouts while the base model governs the semantic content.

(ii) Spatially Gated Injection. Beyond frequency decoupling, strict spatial confinement is imperative to prevent structural artifacts from leaking into the background. For each instance, we dynamically resize its bounding box mask $M^{fg}$ to match the spatial resolution of the current U-Net [21] layer. A spatially gated mechanism is then formulated by explicitly multiplying the purified high-frequency residuals with this mask. The final injected residual is formulated as:

$$\Delta h_{final} = \Delta h_{str} \odot M^{fg}. \tag{7}$$

Consequently, the base L2I model receives these precise structural constraints exclusively within the layout regions, achieving fine-grained pixel-level alignment while leaving the background generation completely unaffected.

4 Experiments

In this section, we conduct comprehensive experiments to assess the effectiveness and generalization of RSGen. We incorporate our framework into various L2I baselines and evaluate its performance across a range of remote sensing datasets, focusing on both the quality of generation and its application in downstream object detection tasks. Additional implementation details, efficiency analysis, supplementary experiments, and limitations are provided in the Appendix.

4.1 Experimental Settings

Datasets. Following CC-Diff [35], we evaluate our proposed RSGen on two widely used remote sensing datasets: DIOR-RSVG [33] and DOTA-v1.0 [26]. Constructed based on the large-scale DIOR [13] dataset, DIOR-RSVG provides HBB annotations exclusively, serving as our primary benchmark for horizontal object generation. In contrast, DOTA-v1.0 is a challenging dataset comprising 15 categories, featuring dense scenes and small objects. We crop the images from DOTA to 512 × 512 following CC-Diff. Furthermore, we utilize this dataset to conduct a comprehensive dual verification for both HBB and OBB detection.

Benchmarks. We evaluate our method across three L2I generation models originally designed for distinct domains: MIGC [38] for natural images, CC-Diff for remote sensing, and FICGen [24] for degraded scenes.

Implementation. We train CC-Diff and FICGen following their official settings, while MIGC is fine-tuned from its official weights under CC-Diff's settings, except that training is shortened to 50 epochs. For FGControl, we freeze the base L2I models. Images are resized to 512 × 512, and the module is optimized for 75,000 steps with a batch size of 8 and a fixed learning rate of 8e-5.

Metrics. To comprehensively evaluate the performance of RSGen, we assess the generated images from three distinct perspectives: (1) Fidelity. We utilize the Fréchet Inception Distance (FID) [4] to assess the perceptual quality of the generated images. FID measures the distributional distance between generated and real features, providing a robust evaluation of contextual coherence. (2) Layout Consistency. The YOLOScore [15] is employed to quantify the alignment between generated instances and spatial layouts. Specifically, we fine-tune a YOLOv8 detector [10] for HBB and a YOLOv8-OBB detector for OBB. The precision on the generated images directly reflects the control capability. (3) Trainability. To evaluate the effect of RSGen for data augmentation, we mix synthetic images with real training data to train downstream detectors. We employ Faster R-CNN [19] for HBB tasks (reporting mAP, mAP50, mAP75) and Oriented Faster R-CNN [29] for OBB tasks (reporting mAP50, mAP75).

Table 2: Comparison of downstream object detection performance. By mixing synthetic and real data at a 1:1 ratio, models trained with data generated by RSGen achieve overall accuracy improvements across both HBB and OBB settings.

| Method | DIOR-RSVG (HBB) mAP | DIOR-RSVG (HBB) mAP50 | DIOR-RSVG (HBB) mAP75 | DOTA (HBB) mAP | DOTA (HBB) mAP50 | DOTA (HBB) mAP75 | DOTA (OBB) mAP50 | DOTA (OBB) mAP75 |
|---|---|---|---|---|---|---|---|---|
| MIGC [38] | 54.3 | 78.9 | 59.8 | 38.3 | 64.5 | 39.2 | 53.55 | 19.68 |
| + Ours | 55.0 | 78.7 | 61.1 | 38.9 | 64.5 | 40.5 | 53.19 | 21.92 |
| CC-Diff [35] | 54.7 | 78.4 | 60.1 | 37.4 | 63.2 | 38.6 | 54.80 | 20.11 |
| + Ours | 55.0 | 78.8 | 60.7 | 39.0 | 64.2 | 40.4 | 54.92 | 21.65 |
| FICGen [24] | 54.5 | 78.7 | 60.7 | 39.2 | 64.8 | 40.8 | 55.81 | 22.66 |
| + Ours | 54.6 | 78.8 | 61.1 | 39.5 | 64.8 | 41.4 | 55.93 | 23.03 |

Table 3: Comparison of downstream object detection performance under different real-to-synthetic data ratios. All experiments are conducted on the DOTA dataset utilizing the CC-Diff [35] baseline.

| Method | Ratio (Real:Syn) | DOTA (HBB) mAP | DOTA (HBB) mAP50 | DOTA (HBB) mAP75 | DOTA (OBB) mAP50 | DOTA (OBB) mAP75 |
|---|---|---|---|---|---|---|
| CC-Diff [35] | 1:3 | 39.0 | 65.2 | 39.8 | 55.72 | 21.11 |
| + Ours | 1:3 | 39.0 | 64.2 | 40.0 | 56.07 | 24.36 |
| CC-Diff [35] | 1:2 | 38.8 | 65.4 | 39.7 | 54.75 | 21.44 |
| + Ours | 1:2 | 39.3 | 64.4 | 41.2 | 55.98 | 22.34 |
| CC-Diff [35] | 1:1 | 37.4 | 63.2 | 38.6 | 54.80 | 20.11 |
| + Ours | 1:1 | 39.0 | 64.2 | 40.4 | 54.92 | 21.65 |
| CC-Diff [35] | 0:1 | 16.2 | 30.9 | 14.7 | 22.07 | 9.49 |
| + Ours | 0:1 | 21.9 | 38.6 | 21.3 | 33.24 | 12.16 |

4.2 Main Results

Observation 1: RSGen significantly enhances layout consistency with only marginal fluctuations in generation fidelity. As shown in Tab. 1, we evaluate the effectiveness by integrating RSGen into three baselines (MIGC, CC-Diff, and FICGen). While the enforcement of strict structural edge constraints leads to slight increases in FID in certain cases (e.g., MIGC on DIOR-RSVG), the overall quality remains highly competitive, and in some instances (e.g., CC-Diff on DOTA), the FID even improves.
Specifically, whether evaluated under the HBB setting on DIOR-RSVG or the OBB setting on DOTA, the models equipped with our module achieve substantial improvements in YOLOScore compared to their original counterparts. Notably, the improvements in the comprehensive mAP50-95 metric are even more pronounced than those in mAP50. For instance, integrating RSGen into CC-Diff yields remarkable mAP50-95 gains of +6.5 on DIOR-RSVG and +12.0 on DOTA. Such substantial increases strongly indicate that our method significantly enhances the fine-grained control precision within the bounding boxes. This consistent performance boost across distinct baselines confirms that our module effectively trades negligible variances in global image distribution for precise, pixel-level layout alignment.

Observation 2: RSGen significantly boosts the accuracy of downstream object detection tasks. Following the experimental settings of CC-Diff, we mix the synthetic images generated by our method with the real training data at a 1:1 ratio. As shown in Tab. 2, integrating RSGen into the base models leads to broad increases in the standard mAP under the HBB setting on both the DIOR-RSVG and DOTA datasets. Notably, when comparing different IoU thresholds, the improvements in mAP75 are consistently more pronounced than those in mAP50. For example, adding RSGen to CC-Diff on the DOTA dataset yields a substantial +1.8 gain in mAP75, compared to a +1.0 gain in mAP50. To provide a deeper comparison, we further evaluate the models under the more challenging OBB setting on DOTA. In this stringent scenario, the gains in mAP50 are relatively marginal; for instance, CC-Diff equipped with our module only shows a slight +0.12 increase over the baseline. However, the performance boost primarily manifests in the stricter mAP75 metric, where it achieves a notable +1.54 improvement.
These outsized gains at higher IoU thresholds across both HBB and OBB tasks demonstrate that our method provides substantially stronger and more precise pixel-level control capabilities.

Observation 3: RSGen consistently improves downstream detection performance across various synthetic data ratios. To further validate the robustness and scalability of our method, we conduct extended experiments using the CC-Diff baseline on the DOTA dataset. Specifically, we train the detectors under both HBB and OBB settings using different real-to-synthetic data mixing ratios, including 1:3, 1:2, and purely synthetic data (0:1). As reported in Tab. 3, the evaluation across different data mixing proportions reveals several insightful trends. First, under the HBB setting, simply increasing the proportion of synthetic data from 1:2 to 1:3 does not yield continuous performance gains. However, our method at a minimal 1:1 ratio (mAP 39.0, mAP75 40.4) matches or exceeds the performance of the baseline trained with a heavily augmented 1:3 ratio (mAP 39.0, mAP75 39.8). This comparison highlights the data efficiency of our method, demonstrating that high-quality, strictly aligned instances can saturate the HBB detection performance with much less data volume.

[Figure 5 panels: w/o Edge2Edge, w/ Edge2Edge]

Fig. 5: Qualitative comparison of generated instances with and without the Edge2Edge module. Incorporating the Edge2Edge module introduces rich structural variations, significantly enhancing both the structural and overall diversity of the generated instances within the specified bounding boxes.

Conversely, under the OBB setting, increasing the synthetic data ratio yields sustained improvements. Compared to the baseline, RSGen boosts mAP50 (e.g., +1.23 at the 1:2 ratio), while the gains are even more pronounced in the stricter mAP75, peaking at 24.36 (+3.25) under the 1:3 ratio.
This shows that for complex oriented object detection tasks, our fine-grained control ensures strict spatial alignment, providing highly accurate annotations for detector training. In the extreme purely synthetic setting, incorporating our module leads to substantial performance gains over the baseline. Specifically, the detector achieves increases of +5.7 in mAP and +6.6 in mAP75 for HBB, alongside a +11.17 surge in mAP50 and +2.67 in mAP75 for OBB. Ultimately, these results confirm that RSGen generates highly accurate and structurally reliable instances, maximizing both training efficiency and fine-grained spatial alignment for downstream applications.

4.3 Ablation Study

Effect of the Edge2Edge Module. Edge2Edge enriches structural diversity, as qualitatively shown in Fig. 5. As reported in Tab. 4, it improves the YOLOScore (+0.6 in mAP50), particularly in the comprehensive mAP50-95 metric (+0.8).

Table 4: Ablation of the Edge2Edge module on DIOR-RSVG. While maintaining nearly identical generation fidelity (FID), the module boosts layout consistency (YOLOScore), particularly at the stricter IoU threshold.

| Method | FID ↓ | YOLOScore mAP50 ↑ | YOLOScore mAP50-95 ↑ |
|---|---|---|---|
| w/o Edge2Edge | 68.40 | 69.5 | 46.9 |
| w/ Edge2Edge | 68.12 | 70.1 | 47.7 |

Table 5: Cross-dataset validation. Models are trained on DIOR-RSVG and evaluated on DOTA. The Edge2Edge module improves AP across most categories, demonstrating its strong generalization capability, which is crucial for practical applications.

| Method | vehicle | ship | basketballcourt | groundtrackfield | harbor | tenniscourt | airplane |
|---|---|---|---|---|---|---|---|
| w/o Edge2Edge | 6.8 | 8.3 | 14.9 | 13.6 | 9.6 | 42.6 | 8.2 |
| w/ Edge2Edge | 7.1 | 8.3 | 15.5 | 13.5 | 10.2 | 44.6 | 8.4 |

Crucially, the FID remains nearly identical (68.12 and 68.40), demonstrating that diverse structural priors effectively enhance instance-layout alignment and realism without compromising the generation fidelity of the base model.
To verify that the structural variations introduce meaningful diversity rather than noise, we conduct a cross-dataset generalization test by training on DIOR-RSVG and evaluating on DOTA. As shown in Tab. 5, under this challenging setting, the model equipped with Edge2Edge outperforms the baseline in most categories. These results confirm that the structural variations introduced by Edge2Edge enhance cross-dataset generalization, highlighting its robustness across domains and its importance for practical applications.

Effect of the FGControl Module. To validate the design of the FGControl module, we progressively ablate its key components. As shown in Tab. 6, our baseline model is ControlNet-XS [32], a typical global control mechanism. However, this global injection forces structural conditions into background regions, causing severe background confusion and degraded image quality.

By introducing the Spatially Gated mechanism, we confine the residual features within the target bounding boxes, which effectively eliminates background noise and improves generation fidelity (FID 112.43 to 64.38). Finally, the high-pass filter decouples structural guidance from semantic features. Despite a slight FID increase due to intensified structural focus, the complete FGControl module achieves the highest layout consistency (mAP50-95 46.9), demonstrating its distinct advantage in enabling precise, fine-grained control while preserving background coherence.

Table 6: Ablation of the FGControl module on the DIOR-RSVG dataset. The spatially gated mechanism resolves background confusion, while the high-pass filter further decouples features to achieve the highest layout consistency.

| Method | FID ↓ | YOLOScore mAP50 ↑ | YOLOScore mAP50-95 ↑ |
|---|---|---|---|
| Baseline (Global Control) | 112.43 | 62.1 | 45.5 |
| + Spatially Gated | 64.38 | 68.7 | 45.7 |
| + High-pass Filter (Full) | 68.40 | 69.5 | 46.9 |

5 Conclusion

In this paper, we introduced RSGen, a novel plug-and-play framework designed to resolve the misalignment between generated instances and given bounding boxes in L2I generation. Within this framework, the Edge2Edge module enriches the structural diversity of the instances and significantly boosts the generalization capability of the model. Building upon this, the L2I FGControl module leverages these diverse edge priors to achieve precise, pixel-level layout control.

References

1. Chen, Z., Chen, K., Lin, W., See, J., Yu, H., Ke, Y., Yang, C.: Piou loss: Towards accurate oriented object detection in complex environments. In: European conference on computer vision. pp. 195–211. Springer (2020)
2. Dahary, O., Patashnik, O., Aberman, K., Cohen-Or, D.: Be yourself: Bounded attention for multi-subject text-to-image generation. In: European Conference on Computer Vision. pp. 432–448. Springer (2024)
3. Fan, C., Zhu, M., Chen, H., Liu, Y., Wu, W., Zhang, H., Shen, C.: Divergen: Improving instance segmentation by learning wider data distribution with more diverse generative data. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 3986–3995 (2024)
4. Heusel, M., Ramsauer, H., Unterthiner, T., Nessler, B., Hochreiter, S.: Gans trained by a two time-scale update rule converge to a local nash equilibrium. Advances in neural information processing systems 30 (2017)
5. Ho, J., Jain, A., Abbeel, P.: Denoising diffusion probabilistic models. Advances in neural information processing systems 33, 6840–6851 (2020)
6. Hou, X., He, Y., Boukhers, Z., See, J., Su, H., Sui, W., Yang, C.: Instada: Augmenting instance segmentation data with dual-agent system. arXiv preprint arXiv:2509.02973 (2025)
7. Hu, E.J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., Chen, W.: LoRA: Low-rank adaptation of large language models.
In: International Conference on Learning Representations (2022), https://openreview.net/forum?id=nZeVKeeFYf9
8. Islam, K., Zaheer, M.Z., Mahmood, A., Nandakumar, K.: Diffusemix: Label-preserving data augmentation with diffusion models. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 27621–27630 (2024)
9. Jia, C., Luo, M., Dang, Z., Dai, G., Chang, X., Wang, M., Wang, J.: Ssmg: Spatial-semantic map guided diffusion model for free-form layout-to-image generation. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 38, pp. 2480–2488 (2024)
10. Jocher, G., Chaurasia, A., Qiu, J.: Ultralytics yolo (2023), https://github.com/ultralytics/ultralytics
11. Khanna, S., Liu, P., Zhou, L., Meng, C., Rombach, R., Burke, M., Lobell, D.B., Ermon, S.: Diffusionsat: A generative foundation model for satellite imagery. In: The Twelfth International Conference on Learning Representations (2024), https://openreview.net/forum?id=I5webNFDgQ
12. Kingma, D.P., Welling, M.: Auto-encoding variational bayes. arXiv preprint arXiv:1312.6114 (2013)
13. Li, K., Wan, G., Cheng, G., Meng, L., Han, J.: Object detection in optical remote sensing images: A survey and a new benchmark. ISPRS journal of photogrammetry and remote sensing 159, 296–307 (2020)
14. Li, Y., Liu, H., Wu, Q., Mu, F., Yang, J., Gao, J., Li, C., Lee, Y.J.: Gligen: Open-set grounded text-to-image generation. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 22511–22521 (2023)
15. Li, Z., Wu, J., Koh, I., Tang, Y., Sun, L.: Image synthesis from layout with locality-aware mask adaption. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 13819–13828 (2021)
16. Liu, C., Chen, K., Zhao, R., Zou, Z., Shi, Z.: Text2earth: Unlocking text-driven remote sensing image generation with a global-scale dataset and a foundation model. IEEE Geoscience and Remote Sensing Magazine (2025)
17. Podell, D., English, Z., Lacey, K., Blattmann, A., Dockhorn, T., Müller, J., Penna, J., Rombach, R.: SDXL: Improving latent diffusion models for high-resolution image synthesis. In: The Twelfth International Conference on Learning Representations (2024), https://openreview.net/forum?id=di52zR8xgf
18. Radford, A., Kim, J.W., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., Sastry, G., Askell, A., Mishkin, P., Clark, J., et al.: Learning transferable visual models from natural language supervision. In: International conference on machine learning. pp. 8748–8763. PMLR (2021)
19. Ren, S., He, K., Girshick, R., Sun, J.: Faster r-cnn: Towards real-time object detection with region proposal networks. IEEE transactions on pattern analysis and machine intelligence 39(6), 1137–1149 (2016)
20. Rombach, R., Blattmann, A., Lorenz, D., Esser, P., Ommer, B.: High-resolution image synthesis with latent diffusion models. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 10684–10695 (2022)
21. Ronneberger, O., Fischer, P., Brox, T.: U-net: Convolutional networks for biomedical image segmentation. In: International Conference on Medical image computing and computer-assisted intervention. pp. 234–241. Springer (2015)
22. Tang, D., Cao, X., Hou, X., Jiang, Z., Liu, J., Meng, D.: Crs-diff: Controllable remote sensing image generation with diffusion model. IEEE Transactions on Geoscience and Remote Sensing (2024)
23. Tang, D., Cao, X., Wu, X., Li, J., Yao, J., Bai, X., Jiang, D., Li, Y., Meng, D.: Aerogen: Enhancing remote sensing object detection with diffusion-driven data generation. In: Proceedings of the Computer Vision and Pattern Recognition Conference. pp. 3614–3624 (2025)
24. Wang, W., Zhao, Y., Ma, M., Liu, M., Jiang, Z., Chen, Y., Li, J.: Ficgen: Frequency-inspired contextual disentanglement for layout-driven degraded image generation. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 19097–19107 (2025)
25. Wang, X., Darrell, T., Rambhatla, S.S., Girdhar, R., Misra, I.: Instancediffusion: Instance-level control for image generation. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 6232–6242 (2024)
26. Xia, G.S., Bai, X., Ding, J., Zhu, Z., Belongie, S., Luo, J., Datcu, M., Pelillo, M., Zhang, L.: Dota: A large-scale dataset for object detection in aerial images. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 3974–3983 (2018)
27. Xie, J., Li, Y., Huang, Y., Liu, H., Zhang, W., Zheng, Y., Shou, M.Z.: Boxdiff: Text-to-image synthesis with training-free box-constrained diffusion. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 7452–7461 (2023)
28. Xie, S., Tu, Z.: Holistically-nested edge detection. In: Proceedings of the IEEE international conference on computer vision. pp. 1395–1403 (2015)
29. Xie, X., Cheng, G., Wang, J., Yao, X., Han, J.: Oriented r-cnn for object detection. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 3520–3529 (2021)
30. Ye, Z., Ma, S., Yang, J., Yang, X., Gong, Z., Yang, X., Wang, H.: Object fidelity diffusion for remote sensing image generation. In: The Fourteenth International Conference on Learning Representations (2026), https://openreview.net/forum?id=ngfIm9aPsH
31. Yuan, Z., Hao, C., Zhou, R., Chen, J., Yu, M., Zhang, W., Wang, H., Sun, X.: Efficient and controllable remote sensing fake sample generation based on diffusion model. IEEE Transactions on Geoscience and Remote Sensing 61, 1–12 (2023)
32. Zavadski, D., Feiden, J.F., Rother, C.: Controlnet-xs: Rethinking the control of text-to-image diffusion models as feedback-control systems. In: European Conference on Computer Vision. pp. 343–362. Springer (2024)
33. Zhan, Y., Xiong, Z., Yuan, Y.: Rsvg: Exploring data and models for visual grounding on remote sensing data. IEEE Transactions on Geoscience and Remote Sensing 61, 1–13 (2023). https://doi.org/10.1109/TGRS.2023.3250471
34. Zhang, L., Rao, A., Agrawala, M.: Adding conditional control to text-to-image diffusion models. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 3836–3847 (2023)
35. Zhang, M., Liu, Y., Liu, Y., Zhao, Y., Ye, Q.: Cc-diff: enhancing contextual coherence in remote sensing image synthesis. arXiv preprint arXiv:2412.08464 (2024)
36. Zhao, H., Sheng, D., Bao, J., Chen, D., Chen, D., Wen, F., Yuan, L., Liu, C., Zhou, W., Chu, Q., et al.: X-paste: Revisiting scalable copy-paste for instance segmentation using clip and stablediffusion. In: International Conference on Machine Learning. pp. 42098–42109. PMLR (2023)
37. Zheng, G., Zhou, X., Li, X., Qi, Z., Shan, Y., Li, X.: Layoutdiffusion: Controllable diffusion model for layout-to-image generation. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 22490–22499 (2023)
38. Zhou, D., Li, Y., Ma, F., Zhang, X., Yang, Y.: Migc: Multi-instance generation controller for text-to-image synthesis. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 6818–6828 (2024)

RSGen: Enhancing Layout-Driven Remote Sensing Image Generation with Diverse Edge Guidance

Supplementary Material

1 Implementation Details

This section provides additional implementation details. We first describe the basic experimental configuration. Then we present the training settings for LoRA [7] and the YOLOv8 [8] model.
After that, we introduce the evaluation for the Fréchet Inception Distance (FID) [6] and the cross-validation details used in our experiments. Finally, we provide the detailed training configurations for MMDetection [1] and MMRotate [28].

1.1 Experimental Configuration

We implement the training for the baseline models (CC-Diff [26], MIGC [27], and FICGen [19]) as well as our FGControl module using PyTorch 2.1.0 and CUDA 12.1.0 on 8 NVIDIA H20 GPUs. The training follows the official settings of the respective methods. To ensure a fair comparison, we train FICGen for 100 epochs in our main experiments. We provide the evaluation of FICGen under its official 300-epoch training setting in the Additional Experimental Results section. Furthermore, we evaluate all metrics, including FID and YOLOScore [10], using 8 NVIDIA L20 GPUs with PyTorch 2.5.1 and CUDA 12.4.

1.2 LoRA Training Details

We fine-tune the SDXL [13] base model using the standard Low-Rank Adaptation (LoRA). The training dataset consists of the composited edge maps detailed in the main text. We freeze the text encoders and exclusively train the U-Net parameters [16]. We set the LoRA rank (dimension) to 64 and the network alpha to 8. The input training images are processed at a resolution of 1024 × 1024. For the optimization, we utilize the AdamW [12] optimizer with a learning rate of 0.0004, managed by a cosine learning rate scheduler with a warmup phase. The training is conducted for a total of 7,500 steps with a batch size of 4 and 4 gradient accumulation steps. Furthermore, we employ FP16 mixed precision to accelerate the process and apply Min-SNR [4] weighting with a gamma value of 5 to improve convergence stability.

1.3 YOLOScore Training Details

We train distinct YOLO detectors tailored to the bounding box formats of each dataset.
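As a reference for the Min-SNR weighting mentioned in Section 1.2, the sketch below computes the per-timestep loss weight min(SNR, γ)/SNR with γ = 5. It assumes an ε-prediction objective and a linear β schedule with 1,000 steps, neither of which is specified in the text; the function names and schedule constants are hypothetical and serve only to illustrate the formula.

```python
def linear_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule.

    The schedule itself is an assumption made for illustration only.
    """
    alpha_bars = []
    running = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def snr(alpha_bar):
    """Signal-to-noise ratio of a noised sample: alpha_bar / (1 - alpha_bar)."""
    return alpha_bar / (1.0 - alpha_bar)


def min_snr_weight(snr_value, gamma=5.0):
    """Min-SNR loss weight for epsilon-prediction: min(SNR, gamma) / SNR."""
    if snr_value <= 0.0:
        raise ValueError("SNR must be positive")
    return min(snr_value, gamma) / snr_value


if __name__ == "__main__":
    weights = [min_snr_weight(snr(ab)) for ab in linear_alpha_bars()]
    # Low-noise (early) timesteps are down-weighted; high-noise ones keep weight 1.
    print(f"t=0: {weights[0]:.6f}, t=999: {weights[-1]:.6f}")
```

The clamp leaves timesteps with SNR at or below γ untouched (weight 1) and down-weights the low-noise timesteps, whose SNR is far above γ, so they do not dominate the loss.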
For the DIOR-RSVG [24] dataset, which relies on horizontal bounding boxes (HBB), we employ the YOLOv8 Nano (yolov8n). Conversely, for the DOTA-v1.0 [20] and the HRSC2016 [11] datasets (detailed in the Additional Experimental Results section), which require oriented bounding boxes (OBB), we utilize the YOLOv8 Medium OBB (yolov8m-obb). Across all datasets, models are trained on their respective train splits for 50 epochs using a batch size of 16 and an input image resolution of 512 × 512.

To evaluate layout consistency, images generated from specific spatial layouts are assessed against the corresponding ground-truth annotations. Because test set annotations are unavailable for DIOR-RSVG and DOTA-v1.0, we utilize their val sets for both generation and evaluation. For HRSC2016, we use the test set for both generation and evaluation. During these validation phases, we adjust the parameters to align with the settings of CC-Diff: the DIOR-RSVG evaluation image size is scaled to 800 × 800, while DOTA-v1.0 and HRSC2016 are maintained at 512 × 512, all with a batch size of 16.

1.4 FID Computation

The FID is computed using a pre-trained Inception-v3 network [17]. The real images from the DIOR-RSVG dataset are resized to 800 × 800, while images from both the DOTA-v1.0 and HRSC2016 datasets are resized to 512 × 512.

1.5 Cross-Validation

To evaluate generalization, models trained on DIOR-RSVG are tested on DOTA-v1.0. We align the differing category sets by mapping shared DOTA categories to their DIOR-RSVG equivalents (e.g., merging "small-vehicle" and "large-vehicle" into "vehicle"). Annotations for unshared categories are filtered out to ensure a standardized and fair evaluation.

1.6 MMDetection and MMRotate Training Details

To evaluate downstream object detection performance, all models are implemented using mmcv-full 1.7.2, MMDetection 2.28.2, and MMRotate 0.3.4.
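The category alignment described in Section 1.5 amounts to a label remapping followed by a filter. The sketch below illustrates one way to do this. Apart from the "small-vehicle"/"large-vehicle" merge stated in Section 1.5, the DOTA label names, the remaining mapping entries, and the annotation format (a list of dicts with a `category` key) are illustrative assumptions, not the authors' implementation.

```python
from typing import Dict, List, Optional

# DOTA label -> DIOR-RSVG label. Only the vehicle merge is stated in the text;
# the other entries are assumed here for illustration.
DOTA_TO_DIOR: Dict[str, str] = {
    "small-vehicle": "vehicle",
    "large-vehicle": "vehicle",
    "ship": "ship",
    "harbor": "harbor",
    "plane": "airplane",
    "tennis-court": "tenniscourt",
    "basketball-court": "basketballcourt",
    "ground-track-field": "groundtrackfield",
}


def remap_category(dota_label: str) -> Optional[str]:
    """Return the DIOR-RSVG label for a DOTA label, or None if unshared."""
    return DOTA_TO_DIOR.get(dota_label)


def align_annotations(annotations: List[dict]) -> List[dict]:
    """Remap shared categories and drop annotations of unshared ones.

    Each annotation is a dict with at least a "category" key (hypothetical
    format). The input list is left unmodified.
    """
    aligned = []
    for ann in annotations:
        target = remap_category(ann["category"])
        if target is None:
            continue  # unshared category: filtered out of the evaluation
        aligned.append({**ann, "category": target})
    return aligned


if __name__ == "__main__":
    sample = [
        {"category": "small-vehicle", "bbox": [0, 0, 10, 10]},
        {"category": "large-vehicle", "bbox": [5, 5, 30, 20]},
        {"category": "helicopter", "bbox": [1, 1, 8, 8]},
    ]
    print(align_annotations(sample))
```

Applying the same mapping to both ground truth and predictions keeps the evaluation restricted to the shared label space.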
For HBB tasks on the DIOR-RSVG and DOTA datasets, we utilize Faster R-CNN [14], resizing input images to 800 × 800 and applying standard data augmentations such as random horizontal flipping with a 0.5 probability. For OBB detection, architectures and angle representations are tailored to the specific datasets. Specifically, for DOTA-v1.0, we train a Rotated Faster R-CNN [21] with a ResNet-50 backbone [5] using the le90 angle definition and a 1024 × 1024 image resolution. Conversely, for the HRSC2016 dataset, we employ S2ANet [3] with a ResNet-50 backbone, utilizing the le135 angle definition and an 800 × 800 resolution. Both OBB configurations incorporate comprehensive spatial augmentations, including random horizontal, vertical, and diagonal flipping.

2 Efficiency Analysis

In this section, we provide a detailed efficiency analysis of the RSGen framework. First, we report the training time required for the FGControl module and fine-tuning the base model via LoRA. Subsequently, we evaluate the inference efficiency in terms of latency and peak memory utilization upon integrating our proposed modules.

Table 1: Comparison of training times. Both FGControl and LoRA fine-tuning require significantly less time than the base L2I model.

| Component | Time |
|---|---|
| Base Model | 14h 43m |
| FGControl (Ours) | 8h 31m |
| LoRA (Ours) | 4h 40m |

Table 2: Inference efficiency on the HRSC dataset. While the Edge2Edge module incurs computational costs, adding FGControl introduces negligible overhead.

| Model Config. | VRAM (GB) | Latency (s) |
|---|---|---|
| Base Model | 10.18 | 9.58 |
| + FGControl | 10.19 | 10.16 |
| Edge2Edge | 46.05 | 41.04 |

2.1 Training Time

To demonstrate the training efficiency of the proposed RSGen framework, we compare the training overhead of our modules against the base layout-to-image (L2I) model. As detailed in Tab. 1, training the base model requires approximately 14 hours and 43 minutes.
In contrast, optimizing the lightweight FGControl module takes only 8 hours and 31 minutes. Furthermore, fine-tuning the SDXL model via LoRA for the Edge2Edge module is highly efficient, requiring approximately 4 hours and 40 minutes. These results indicate that our plug-and-play framework significantly enhances fine-grained layout control without introducing a prohibitive training burden.

2.2 Inference Efficiency

As shown in Tab. 2, evaluations on the HRSC dataset reveal that integrating the lightweight FGControl module adds virtually no burden: VRAM consumption increases by a mere 0.01 GB, and latency rises by only 0.58 seconds per image. Furthermore, while the Edge2Edge module (operating at 50 steps with a 0.6 denoising strength) requires 46.05 GB of VRAM and approximately 41 seconds per image, it serves strictly as a one-time offline data augmentation step, introducing zero overhead during downstream inference.

3 Additional Experimental Results

This section provides further experimental evidence to validate the proposed RSGen framework, including its generalization on the HRSC2016 dataset, effectiveness on performance-saturated models, additional ablation studies on core modules, and justification for the selection of SDXL.

Table 3: Performance comparison on the HRSC2016 dataset. Equipped with RSGen, the base model achieves superior layout consistency and downstream detection performance in complex backgrounds.

| Method | FID ↓ | YOLOScore (OBB) mAP50 ↑ | YOLOScore (OBB) mAP50-95 ↑ | mAP ↑ |
|---|---|---|---|---|
| Ori (Real Data Only) | - | - | - | 37.71 |
| FICGen | 101.27 | 89.8 | 57.1 | 36.55 |
| FICGen + Ours | 99.85 | 90.8 | 61.2 | 38.39 |

Table 4: Evaluation on a performance-saturated model. Even when the base FICGen model is trained for 300 epochs to reach its performance bottleneck, integrating RSGen provides further improvements in layout consistency.

| Method | FID ↓ | YOLOScore (OBB) mAP50 ↑ | YOLOScore (OBB) mAP50-95 ↑ |
|---|---|---|---|
| FICGen | 41.23 | 77.2 | 52.8 |
| FICGen + Ours | 41.41 | 77.2 | 54.8 |

3.1 Results on the HRSC2016 Dataset

To evaluate the generalization capability of the proposed RSGen framework, we conduct experiments on the HRSC2016 dataset. The dataset focuses on ship detection in satellite images characterized by highly complex backgrounds. Using FICGen as the base L2I model, we assess FID, YOLOScore, and mAP. As shown in Tab. 3, integrating RSGen simultaneously improves FID (from 101.27 to 99.85) and YOLOScore, specifically achieving a +4.1 gain in mAP50-95. Crucially, evaluating the downstream detection performance reveals that simply augmenting the training set with FICGen degrades performance to 36.55 mAP, compared to 37.71 mAP when using solely real data. In contrast, incorporating RSGen significantly elevates generated data quality, reversing this degradation and boosting the overall mAP to 38.39. This confirms that our fine-grained control preserves structural integrity, enabling substantial gains for detectors even under severe background interference.

3.2 Improving Upon Performance-Saturated Model

To investigate the effectiveness of our framework when applied to a model with strong inherent control capabilities, we evaluate RSGen on a baseline that has reached performance saturation. Specifically, FICGen is utilized as the base L2I model and trained on the DOTA dataset. The baseline is trained for 300 epochs to ensure its performance reaches saturation. FID and YOLOScore are then assessed under the OBB setting. As shown in Tab. 4, while the FID experiences a negligible increase (from 41.23 to 41.41), integrating RSGen yields a substantial +2.0 gain in the mAP50-95 metric, alongside a stable mAP50. The improvement demonstrates that our fine-grained edge guidance effectively elevates the generation precision and overall performance ceiling, even for fully trained models.

3.3 Additional Ablation Studies

Table 5: Ablation study on different control modules. Compared to ControlNet and ControlNet-XS, our FGControl achieves superior layout consistency and fidelity.

| Method | FID ↓ | YOLOScore mAP50 ↑ | YOLOScore mAP50-95 ↑ |
|---|---|---|---|
| ControlNet | 128.04 | 50.5 | 37.5 |
| ControlNet-XS | 112.43 | 62.1 | 45.5 |
| FGControl (Ours) | 68.40 | 69.5 | 46.9 |

Table 6: Ablation on Scale-Balanced Region Attention. Compared to standard SDXL I2I generation and Be Yourself, our method achieves the best layout consistency and generation fidelity.

| Method | FID ↓ | YOLOScore mAP50 ↑ | YOLOScore mAP50-95 ↑ |
|---|---|---|---|
| SDXL I2I | 69.18 | 67.3 | 44.7 |
| Be Yourself | 68.44 | 69.3 | 46.9 |
| Ours | 68.12 | 70.1 | 47.7 |

Ablation on Control Modules. To evaluate the proposed FGControl, we compare it against the established global control mechanisms ControlNet [25] and ControlNet-XS [23]. As detailed in Tab. 5, FGControl outperforms these global approaches across all metrics. Specifically, our module achieves a highly competitive FID of 68.40 and a YOLOScore (mAP50-95) of 46.9. Our approach yields a substantial improvement in generation fidelity alongside consistent gains in spatial alignment accuracy. This confirms that confining high-frequency structural guidance within the layout bounding boxes achieves fine-grained local control without interfering with the global semantic generation.

Ablation on Scale-Balanced Region Attention. We evaluate the proposed Scale-Balanced Region Attention within the Edge2Edge module to assess its role in generating structural priors. The generation process utilizes 50 inference steps with a denoising strength of 0.6. To ensure a fair comparison, we adopt the hyperparameters established by Be Yourself [2].
Specifically, we utilize a dynamic step size that decays from 8 to 2, and we scale the maximum guidance iterations per step to 9 (proportionally adjusted from the original 15 to account for our 0.6 denoising strength).

As detailed in Tab. 6, standard SDXL I2I generation yields a low layout consistency (mAP50-95 of 44.7) due to a lack of explicit spatial constraints, leading to semantic misalignment and boundary overflow. While Be Yourself introduces spatial guidance and improves the mAP50-95 to 46.9, it suffers from an optimization bias that favors larger bounding boxes. Our area constraints mitigate this bias during latent updates, boosting mAP50-95 to 47.7 while securing the best FID (68.12). These results demonstrate that our method ensures more balanced and precise spatial control across objects of varying scales, which provides the downstream FGControl module with accurate structural priors.

3.4 SDXL Selection

To justify the foundational model choice for the Edge2Edge module, we qualitatively compare the generation capabilities of SD 1.5 [15] and SDXL. For a fair evaluation, we conduct standard I2I generation using both models.

Fig. 1: Qualitative comparison of standard I2I generation (without LoRA) between SD 1.5 and SDXL (panels: SD1.5, SDXL, GT). SDXL successfully preserves the structural integrity while introducing meaningful diversity.

As illustrated in Fig. 1, SD 1.5 struggles to preserve the structural integrity of the original instances. It frequently fails to maintain the basic shape of the airplane and introduces noticeable noise. In contrast, SDXL successfully preserves the accurate structural contours of the airplane while concurrently introducing meaningful structural diversity.
The qualitative comparison demonstrates that SDXL possesses a superior inherent understanding of complex structures, making it the most suitable and robust foundation for generating high-quality edge priors in our framework.

4 Limitations and Long-tail Cases

Despite its robust performance, our method has certain limitations. As an auxiliary plug-and-play module, RSGen maximizes the layout consistency of existing L2I models but cannot fundamentally alter the inherent generative limits of the base model. Fig. 2 illustrates specific instances across different base models.

Fig. 2: Limitation cases for MIGC (left) and FICGen (right). In each pair, (a) is the output of the base model and (b) is the output of the base model with RSGen.

Table 7: Evaluation of RSGen on the GLIGEN baseline. The modified U-Net architecture of GLIGEN interferes with our feature injection mechanism, leading to suboptimal performance.

| Method | FID ↓ | YOLOScore mAP50 ↑ | YOLOScore mAP50-95 ↑ |
|---|---|---|---|
| GLIGEN | 77.08 | 78.6 | 50.8 |
| GLIGEN + Ours | 81.24 | 49.8 | 32.2 |

The MIGC results (left) highlight the limitations of the base model in maintaining context coherence. Specifically, the generated harbor in image (a) fails to blend semantically with the surrounding environment. Adding RSGen (image b) successfully constrains the harbor within the box but does not resolve this contextual mismatch. The FICGen results (right) demonstrate poor performance when handling extremely small objects (image a). Adding RSGen (image b) somewhat improves object generation but cannot completely resolve minute visual details.

Furthermore, as detailed in Tab. 7, we observe suboptimal performance when integrating RSGen with GLIGEN [9].
This is primarily attributed to the modified U-Net architecture of GLIGEN, which inserts an additional Gated Self-Attention layer between the standard attention modules, thereby interfering with our feature injection mechanism. Finally, while RSGen is designed as a plug-and-play module within the diffusers library, it cannot be directly integrated into specialized remote sensing models, such as AeroGen [18] and OF-Diff [22].

5 Qualitative Analysis

To further demonstrate the effectiveness of our proposed framework, we provide qualitative visualizations from two perspectives: the quality of the generated images and the resulting improvements in downstream detection tasks.

First, we showcase the high-quality synthetic data generated by RSGen. As illustrated in Fig. 3, we present a three-column visualization consisting of the input spatial layouts, the diverse edge maps generated by our Edge2Edge module, and the final images generated by the L2I model. These results demonstrate that our framework produces highly realistic remote sensing data that strictly adheres to the given spatial constraints with pixel-level precision, successfully capturing both global layouts and fine-grained structural details. Notably (Fig. 3, fifth row), the decoupling mechanism in FGControl naturally reduces reliance on guidance when edge maps are blurred. This prevents instance generation failure and ensures robust spatial alignment despite low-quality edges.

Second, we present visual comparisons of detection performance to highlight the practical benefits of our framework, with FICGen serving as the base model. As shown in Fig. 4, we perform HBB detection on the DIOR-RSVG dataset and OBB detection on the DOTA dataset.
The visualization confirms that augmenting the training set with our generated data significantly enhances bounding box precision and overall recognition capability.

Fig. 3: Visualization of the RSGen image generation process (columns: Layout, Edge, Ours). Images generated by RSGen demonstrate the ability of the framework to maintain strict spatial alignment and high visual fidelity.

Fig. 4: Visual comparison of detection results on DIOR-RSVG (HBB) and DOTA (OBB) datasets using FICGen (panels: FICGen, FICGen + RSGen). Models trained with our augmented data exhibit higher localization precision and improved recognition of objects.

References

1. Chen, K., Wang, J., Pang, J., Cao, Y., Xiong, Y., Li, X., Sun, S., Feng, W., Liu, Z., Xu, J., Zhang, Z., Cheng, D., Zhu, C., Cheng, T., Zhao, Q., Li, B., Lu, X., Zhu, R., Wu, Y., Dai, J., Wang, J., Shi, J., Ouyang, W., Loy, C.C., Lin, D.: MMDetection: Open mmlab detection toolbox and benchmark. arXiv preprint (2019)
2. Dahary, O., Patashnik, O., Aberman, K., Cohen-Or, D.: Be yourself: Bounded attention for multi-subject text-to-image generation. In: European Conference on Computer Vision. pp. 432–448. Springer (2024)
3. Han, J., Ding, J., Li, J., Xia, G.S.: Align deep features for oriented object detection. IEEE transactions on geoscience and remote sensing 60, 1–11 (2021)
4. Hang, T., Gu, S., Li, C., Bao, J., Chen, D., Hu, H., Geng, X., Guo, B.: Efficient diffusion training via min-snr weighting strategy. In: Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV). pp. 7441–7451 (October 2023)
5. He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 770–778 (2016)
6. Heusel, M., Ramsauer, H., Unterthiner, T., Nessler, B., Hochreiter, S.: Gans trained by a two time-scale update rule converge to a local nash equilibrium. Advances in neural information processing systems 30 (2017)
7. Hu, E.J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., Chen, W.: LoRA: Low-rank adaptation of large language models. In: International Conference on Learning Representations (2022), https://openreview.net/forum?id=nZeVKeeFYf9
8. Jocher, G., Chaurasia, A., Qiu, J.: Ultralytics yolo (2023), https://github.com/ultralytics/ultralytics
9. Li, Y., Liu, H., Wu, Q., Mu, F., Yang, J., Gao, J., Li, C., Lee, Y.J.: Gligen: Open-set grounded text-to-image generation. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 22511–22521 (2023)
10. Li, Z., Wu, J., Koh, I., Tang, Y., Sun, L.: Image synthesis from layout with locality-aware mask adaption. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 13819–13828 (2021)
11. Liu, Z., Wang, H., Weng, L., Yang, Y.: Ship rotated bounding box space for ship extraction from high-resolution optical satellite images with complex backgrounds. IEEE geoscience and remote sensing letters 13(8), 1074–1078 (2016)
12. Loshchilov, I., Hutter, F.: Decoupled weight decay regularization. arXiv preprint arXiv:1711.05101 (2017)
13. Podell, D., English, Z., Lacey, K., Blattmann, A., Dockhorn, T., Müller, J., Penna, J., Rombach, R.: SDXL: Improving latent diffusion models for high-resolution image synthesis. In: The Twelfth International Conference on Learning Representations (2024), https://openreview.net/forum?id=di52zR8xgf
14. Ren, S., He, K., Girshick, R., Sun, J.: Faster r-cnn: Towards real-time object detection with region proposal networks. IEEE transactions on pattern analysis and machine intelligence 39(6), 1137–1149 (2016)
15. Rombach, R., Blattmann, A., Lorenz, D., Esser, P., Ommer, B.: High-resolution image synthesis with latent diffusion models. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 10684–10695 (2022)
16. Ronneberger, O., Fischer, P., Brox, T.: U-net: Convolutional networks for biomedical image segmentation. In: International Conference on Medical image computing and computer-assisted intervention. pp. 234–241. Springer (2015)
17. Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 2818–2826 (2016)
18. Tang, D., Cao, X., Wu, X., Li, J., Yao, J., Bai, X., Jiang, D., Li, Y., Meng, D.: Aerogen: Enhancing remote sensing object detection with diffusion-driven data generation. In: Proceedings of the Computer Vision and Pattern Recognition Conference. pp. 3614–3624 (2025)
19. Wang, W., Zhao, Y., Ma, M., Liu, M., Jiang, Z., Chen, Y., Li, J.: Ficgen: Frequency-inspired contextual disentanglement for layout-driven degraded image generation. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 19097–19107 (2025)
20. Xia, G.S., Bai, X., Ding, J., Zhu, Z., Belongie, S., Luo, J., Datcu, M., Pelillo, M., Zhang, L.: Dota: A large-scale dataset for object detection in aerial images. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 3974–3983 (2018)
21. Xie, X., Cheng, G., Wang, J., Yao, X., Han, J.: Oriented r-cnn for object detection. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 3520–3529 (2021)
22. Ye, Z., Ma, S., Yang, J., Yang, X., Gong, Z., Yang, X., Wang, H.: Object fidelity diffusion for remote sensing image generation. In: The Fourteenth International Conference on Learning Representations (2026), https://openreview.net/forum?id=ngfIm9aPsH
23. Zavadski, D., Feiden, J.F., Rother, C.: Controlnet-xs: Rethinking the control of text-to-image diffusion models as feedback-control systems. In: European Conference on Computer Vision. pp. 343–362. Springer (2024)
24. Zhan, Y., Xiong, Z., Yuan, Y.: Rsvg: Exploring data and models for visual grounding on remote sensing data. IEEE Transactions on Geoscience and Remote Sensing 61, 1–13 (2023). https://doi.org/10.1109/TGRS.2023.3250471
25. Zhang, L., Rao, A., Agrawala, M.: Adding conditional control to text-to-image diffusion models. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 3836–3847 (2023)
26. Zhang, M., Liu, Y., Liu, Y., Zhao, Y., Ye, Q.: Cc-diff: enhancing contextual coherence in remote sensing image synthesis. arXiv preprint arXiv:2412.08464 (2024)
27. Zhou, D., Li, Y., Ma, F., Zhang, X., Yang, Y.: Migc: Multi-instance generation controller for text-to-image synthesis. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 6818–6828 (2024)
28. Zhou, Y., Yang, X., Zhang, G., Wang, J., Liu, Y., Hou, L., Jiang, X., Liu, X., Yan, J., Lyu, C., Zhang, W., Chen, K.: Mmrotate: A rotated object detection benchmark using pytorch. In: Proceedings of the 30th ACM International Conference on Multimedia (2022)
