SAGE: Multi-Agent Self-Evolution for LLM Reasoning

Yulin Peng¹, Xinxin Zhu¹,², Chenxing Wei¹,², Nianbo Zeng¹,², Leilei Wang¹,², Ying Tiffany He¹, F. Richard Yu³
¹ College of Computer Science and Software Engineering, Shenzhen University, China
² Guangdong Laboratory of Artificial Intelligence and Digital Economy (SZ), China
³ School of Information Technology, Carleton University, Canada

Abstract

Reinforcement learning with verifiable rewards improves reasoning in large language models (LLMs), but many methods still rely on large human-labeled datasets. While self-play reduces this dependency, it often lacks explicit planning and strong quality control, limiting stability in long-horizon multi-step reasoning. We present SAGE (Self-evolving Agents for Generalized reasoning Evolution), a closed-loop framework in which four agents (Challenger, Planner, Solver, and Critic) co-evolve from a shared LLM backbone using only a small seed set. The Challenger continuously generates increasingly difficult tasks; the Planner converts each task into a structured multi-step plan; and the Solver follows the plan to produce an answer, whose correctness is determined by external verifiers. The Critic scores and filters both generated questions and plans to prevent curriculum drift and maintain training signal quality, enabling stable self-training. Across mathematics and code-generation benchmarks, SAGE delivers consistent gains across model scales, improving the Qwen-2.5-7B model by 8.9% on LiveCodeBench and 10.7% on OlympiadBench.

1 Introduction

Large language models (LLMs) have achieved remarkable advances in reasoning tasks such as mathematics and coding through reinforcement learning (RL) techniques (Guo et al., 2025; Sheng et al., 2025; Sun et al., 2024).
However, these methods often depend on large-scale human-curated datasets for verifiable rewards, posing scalability challenges and limiting autonomous adaptation as models approach superhuman capabilities (Zhao et al., 2025a; Chen et al., 2025).

Recent efforts have explored self-play and multi-agent frameworks to enable self-evolution without extensive external data. For instance, self-play paradigms like SPIRAL (Liu et al., 2025) and Absolute Zero (Zhao et al., 2025a) leverage verifiable environments for autonomous improvement, while multi-agent systems such as MARS (Yuan et al., 2025) and MAE (Chen et al., 2025) facilitate collaborative reasoning through role specialization. Despite these advances, existing approaches struggle with open-ended domains lacking robust verification and often fail to integrate planning for complex, multi-step tasks (Huang et al., 2025; Gao et al., 2025; Yue et al., 2025).

Figure 1: Overview of the SAGE framework. Four specialized agents (Challenger, Planner, Solver, and Critic) interact through quality filtering and format validation to enable closed-loop self-evolution. [Diagram labels: Proposed Questions, Format Check, Pass Rate, Quality Check, Questions + Plans.]

To address these gaps, we propose SAGE (Self-evolving Agents for Generalized reasoning Evolution), a closed-loop multi-agent framework that enables LLMs to co-evolve in verifiable domains like math and coding using only minimal seed examples. As illustrated in Figure 1, SAGE instantiates four specialized agents: a Challenger for task generation, a Planner for strategy outlining, a Solver for solution execution, and a Critic for quality assessment and format calibration.
These agents interact adversarially, with the Challenger rewarded for difficulty and the Solver optimized via verifier-based correctness, forming a self-rewarding cycle trained end-to-end using task-relative policy gradients.

Through experiments on mathematics and coding benchmarks, SAGE demonstrates significant performance gains, outperforming baselines trained on human-curated datasets in sample efficiency and generalization. We outline our contributions as follows:

• We design a scalable multi-agent framework for self-evolving LLMs in reasoning tasks.
• We propose a dual-role Critic mechanism that scores the quality of generated tasks and plans and calibrates output format, while correctness is left to external verifiers.
• We provide empirical evidence of effective co-evolution in math and code domains under few-example settings.

2 Related Work

Reinforcement Learning for LLM Reasoning. Early work applied RL (e.g., PPO (Schulman et al., 2017)) to language tasks, but recent research focuses on reinforcement learning with verifiable rewards (RLVR) for reasoning (Wan et al., 2025). For example, DeepSeek-R1 (Guo et al., 2025) shows that RLVR can extend an LLM's reasoning capabilities on math by training from correctness signals. WebAgent-R1 (Wei et al., 2025) is an end-to-end multi-turn RL framework that significantly boosts web navigation success using binary success rewards. Critic-free RL variants (e.g., GRPO (Guo et al., 2025)) reduce training overhead, but typically still rely on human-curated or grounded environments. Recent work has systematically characterized agentic RL for LLMs, emphasizing capabilities like planning and self-improvement (Zhang et al., 2025; Wen et al., 2025; Wu et al., 2025). In contrast, SAGE learns from self-generated, verifiable tasks with little external data.

Multi-Agent LLM Systems. LLM-based multi-agent frameworks facilitate complex tasks via role specialization. MetaGPT (Hong et al., 2024) encodes human-like workflows into a multi-agent assembly line, breaking down large tasks into subtasks among collaborating agents. CAMEL (Li et al., 2023) uses inception prompting to guide a society of role-playing agents, enabling the study of cooperative behaviors in instruction-following tasks. MARS (Yuan et al., 2025) introduces a reinforcement learning framework in which multi-agent self-play enhances strategic reasoning capabilities across cooperative and competitive tasks. These systems demonstrate that coordinating multiple LLM agents can enhance performance on complex tasks (Zhao et al., 2025b; Zhu et al., 2025). MARFT (Liao et al., 2025) applies multi-agent reinforcement fine-tuning to optimize LLM-based systems, and MALT (Motwani et al., 2025) divides reasoning into generation, verification, and refinement steps using heterogeneous agents. SAGE extends this line by instantiating distinct agents (Challenger, Planner, Solver, Critic) within one LLM and jointly training them with shared feedback.

Self-Play and Self-Evolving Agents. Recent works explore self-play and self-evolution to improve LLMs autonomously. The SPIRAL framework (Liu et al., 2025) shows that self-play on zero-sum games can automatically induce generalizable reasoning strategies without human data. Absolute Zero (Zhao et al., 2025a) generates its own coding problems and uses a code executor as a verifier to self-critique and solve them, achieving strong math and coding reasoning without external data. Agentic Self-Learning (Sun et al., 2025) is a closed-loop framework unifying task generation, policy execution, and reward modelling for LLM agents in search environments. Additional approaches include AgentEvolver (Zhai et al., 2025), which enables efficient self-evolution through curiosity-driven task generation and experience reuse, and Agent0 (Xia et al., 2025), which unleashes self-evolving agents via tool-integrated reasoning in a co-evolutionary curriculum-executor loop. While prior work has explored various components of self-evolving agents such as planning and task generation (Gao et al., 2025; Fang et al., 2025; Belle et al., 2025), SAGE is distinguished by integrating planning and critic roles to decompose reasoning and by jointly training all agents for improved stability and depth in math and code domains.

Figure 2: The SAGE training pipeline. (1) The Challenger generates questions from reference examples, filtered by the Critic for quality; (2) verified questions expand the dataset; (3) sampled questions are processed by the Planner and Solver to produce solutions; (4) all agents are jointly updated using Task-Relative REINFORCE++ with per-role advantage normalization. [Diagram labels: Reference Questions, Generated Questions, Verified Questions, Add to Dataset, Sample from Dataset, Generated Plans, Question-Plan Pairs; Challenger, Planner, and Solver are each marked "Optimized by Task-Relative REINFORCE++".]

3 Preliminaries

Multi-Agent Reasoning in Verifiable Domains. Let $M_\theta$ denote an LLM parameterized by $\theta$. In role-based multi-agent reasoning, multiple agents share a backbone model but are conditioned on different role instructions (e.g., proposer, planner, solver, evaluator) to enhance robustness via collaboration and decomposition (Du et al., 2023; Liang et al., 2024). For a question $q$, agents produce structured answers $a$.
In verifiable domains (mathematics, programming), a domain-specific verifier $V_{gt}(q, a, v) \in [0, 1]$ evaluates answer correctness given a reference $v$ (ground-truth answer or unit tests), enabling automatic reward computation without human annotation.

Policy Gradient Optimization. To enable self-evolution, we frame agent optimization as reinforcement learning, maximizing

$$J(\theta) = \mathbb{E}_{q \sim \mathcal{D},\, o \sim \pi_\theta}\left[ R(q, o) \right],$$

where $\mathcal{D}$ is the task distribution, $R$ is the reward signal, and $o$ is the output. REINFORCE++ (Hu et al., 2025) is a critic-free method that computes the advantage as

$$A^{t}_{q,o} = r(q, o) - \beta_{kl} \sum_{i=t}^{T} \mathrm{KL}(\pi_\theta \,\|\, \pi_{ref})_i,$$

with a KL penalty to a reference policy, and applies global-batch normalization: $A_{norm} = (A - \mu_B) / (\sigma_B + \epsilon)$. This stabilizes training and improves robustness across prompt distributions. To coordinate multiple agents with heterogeneous objectives, we adopt Task-Relative REINFORCE++ (Huang et al., 2025), which applies per-role advantage normalization:

$$A^{role}_{norm} = \frac{r - \mu_{role}}{\sigma_{role} + \epsilon}, \tag{1}$$

where $\mu_{role}$ and $\sigma_{role}$ are the mean and standard deviation computed over the corresponding role-specific batch.

4 The SAGE Framework

SAGE is a fully automated, self-iterative evolution framework requiring only a small seed set with automatic verification signals. SAGE instantiates four agents from a shared LLM backbone $M_\theta$: (1) the Challenger generates challenging tasks with verifiers; (2) the Planner produces solution plans; (3) the Solver outputs final answers; and (4) the Critic evaluates quality and format compliance. These agents engage in continuous co-evolution, with the training workflow illustrated in Figure 2.

In verifiable domains such as mathematics and programming, SAGE forms a closed-loop pipeline (challenge–plan–solve–criticize) that combines multi-agent interactions with verifier-based reward signals.
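To make the per-role advantage normalization of Eq. 1 concrete, here is a minimal Python sketch that groups scalar rewards by role and standardizes each reward against its own role's batch statistics. The function name `per_role_normalize`, the role labels, the use of the population standard deviation, and the value of `eps` are assumptions made for illustration; the sketch omits the KL term and is not the authors' implementation.

```python
from collections import defaultdict
from statistics import fmean, pstdev


def per_role_normalize(rewards, roles, eps=1e-6):
    """Standardize each reward with the mean/std of its own role batch (Eq. 1).

    rewards: list of floats, one per sampled output.
    roles:   list of role labels (hypothetical names), same length as rewards.
    """
    # Collect the rewards that belong to each role.
    by_role = defaultdict(list)
    for reward, role in zip(rewards, roles):
        by_role[role].append(reward)

    # Assumption: population standard deviation over the role-specific batch.
    stats = {role: (fmean(vals), pstdev(vals)) for role, vals in by_role.items()}

    # Apply (r - mu_role) / (sigma_role + eps) to every reward.
    return [
        (reward - stats[role][0]) / (stats[role][1] + eps)
        for reward, role in zip(rewards, roles)
    ]


if __name__ == "__main__":
    rewards = [0.2, 0.8, 0.9, 0.5]
    roles = ["solver", "solver", "challenger", "challenger"]
    print(per_role_normalize(rewards, roles))
```

Normalizing within each role keeps a role whose rewards are systematically larger (or noisier) from dominating the shared-backbone update, which appears to be the motivation for the per-role variant.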
The Challenger and Solver co-evolve adversarially: the Solver is rewarded for verified correctness, while the Challenger receives difficulty rewards when the Solver fails under verification, pushing the curriculum toward harder yet still solvable tasks. Quality filtering and verifier validation are applied to prevent dataset degradation and improve training stability.

4.1 Reward Design and Normalization

Format reward. Across phases, SAGE applies a format reward $r_f \in [0, 1]$ to stabilize self-training by enforcing required tags. In practice, $r_f$ is a soft score (not strictly binary): missing tags yield low reward, redundant tags may receive partial credit, and empty outputs fall back to a neutral value (e.g., 0.5).

Score normalization. The Critic outputs scalar scores, typically on a 1–10 scale inside tags, which are normalized to $[0, 1]$ by

$$\mathrm{Norm}(s) = \begin{cases} s, & 0 \le s \le 1, \\ \dfrac{s - 1}{9}, & 1 < s \le 10, \\ 0.5, & \text{otherwise.} \end{cases} \tag{2}$$

4.2 Challenger Agent Training

The Challenger proposes verifiable tasks to drive the Solver's learning. During training, the Challenger policy $\pi_c$ is prompted with reference problems sampled from a small human-curated seed set $\mathcal{D}$ (about 500 examples across datasets), where each seed item includes a problem statement and its verifier (ground-truth answer or executable tests). Given a reference item $(q_{ref}, v_{ref})$, the Challenger generates a new problem $q$ and an associated verifier $v$ in a constrained format:

$$(q, v) \sim \pi_c(\cdot \mid q_{ref}, v_{ref}; \theta), \tag{3}$$

where $\theta$ represents the shared LLM parameters.

Composite reward. The Challenger receives (i) a quality score $s_q \in [0, 1]$ from the Critic (clarity, relevance, well-formedness), (ii) a difficulty reward computed from the Solver's verified success rate, and (iii) a format reward. Concretely, we estimate the Solver's success rate by sampling $N_s$ answers and verifying them with $V_{gt}$:

$$a_j \sim \pi_s(\cdot \mid q; \theta),\ j = 1, \dots, N_s, \qquad \bar{s}_{gt}(q, v) = \frac{1}{N_s} \sum_{j=1}^{N_s} V_{gt}(q, a_j, v), \qquad r_d(q, v) = 1 - \bar{s}_{gt}(q, v). \tag{4}$$

Here, $V_{gt}(q, a, v) \in [0, 1]$ denotes the domain-specific verifier (e.g., exact-match/symbolic grading for math or test pass rate for code), and $\pi_s$ denotes the Solver policy (formally introduced in Section 4.4). The Challenger reward is computed as

$$r_c(q, v) = \tfrac{1}{3} s_q(q) + \tfrac{1}{3} r_d(q, v) + \tfrac{1}{3} r_f(o_c), \tag{5}$$

where $o_c$ (resp. $o_p$, $o_s$, $o_{cr}$) denotes the raw textual output of the Challenger (resp. Planner, Solver, Critic).
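The score normalization of Eq. 2 and the Challenger reward of Eqs. 4 and 5 can be sketched in a few lines of Python. The function names below are hypothetical, the verifier outputs are passed in as plain numbers in [0, 1] rather than produced by a real verifier, and the sketch is meant only as a reading aid for the formulas, not as the authors' code.

```python
def norm_score(s):
    """Map a raw Critic score to [0, 1] following the three branches of Eq. 2."""
    if 0.0 <= s <= 1.0:
        return float(s)          # already normalized
    if 1.0 < s <= 10.0:
        return (s - 1.0) / 9.0   # rescale the 1-10 scale
    return 0.5                   # out-of-range scores fall back to neutral


def difficulty_reward(verifier_scores):
    """Eq. 4: one minus the mean verified success over N_s sampled answers."""
    mean_success = sum(verifier_scores) / len(verifier_scores)
    return 1.0 - mean_success


def challenger_reward(s_q, r_d, r_f):
    """Eq. 5: equal-weight mix of quality, difficulty, and format rewards."""
    return (s_q + r_d + r_f) / 3.0


if __name__ == "__main__":
    s_q = norm_score(7)                               # Critic gave 7 out of 10
    r_d = difficulty_reward([1.0, 0.0, 0.0, 1.0])     # Solver passed 2 of 4 samples
    print(challenger_reward(s_q, r_d, r_f=1.0))
```

One detail worth noticing when reading Eq. 2 literally: a raw score of exactly 1 falls into the first branch and is returned unchanged as 1.0, whereas a score just above 1 maps close to 0.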
Algorithm 1: Training Process of SAGE

Require: base LLM π_base, iterations T, thresholds α, β, sample size N_s
    Init agents π_c, π_p, π_s, π_cr from π_base
    Init dataset D ← D_0 (each item has a verifier)
    for t = 1 to T do
        ▷ (1) Challenge Phase
        Sample (q_ref, v_ref) ∼ D
        (q_t, v_t) ← π_c(· | q_ref, v_ref)
        s_q ← Norm(π_cr(q_t)); validate v_t
        Sample a_j ∼ π_s(· | q_t) for j = 1, ..., N_s
        s̄_gt ← (1/N_s) Σ_{j=1..N_s} V_gt(q_t, a_j, v_t); r_d ← 1 − s̄_gt
        if s_q ≥ α and v_t valid then
            D ← D ∪ {(q_t, v_t)}; r_c ← (1/3) s_q + (1/3) r_d + (1/3) r_f(o_c)
        else
            r_c ← (1/2) s_q + (1/2) r_f(o_c)
        end if
        ▷ (2) Plan–Solve Phase
        Sample (q, v) ∼ D; p_t ← π_p(· | q)
        s_p ← Norm(π_cr(q, p_t))
        if s_p ≥ β then
            a_t ← π_s(· | q, p_t; θ); s̃_p ← s_p
        else
            a_t ← π_s(· | q, ∅; θ); s̃_p ← 0
        end if
        s_gt ← V_gt(q, a_t, v)
        r_p ← λ_plan s_p + λ_f r_f(o_p)
        r_s ← w_p s̃_p + w_c s_gt + w_f r_f(o_s)
        r_cr ← r_f(o_cr)
        ▷ (3) Joint Update
        Update π_c, π_p, π_s, π_cr using r_c, r_p, r_s, r_cr
    end for

Quality filtering and difficulty suppression. To prevent dataset degradation, we filter low-quality questions with a threshold $\alpha$ (in this paper, $\alpha = 0.7$), and also validate the generated verifier (e.g., parsable and executable for code tests). Only candidates that satisfy both criteria are added to $\mathcal{D}$. Moreover, for $s_q < \alpha$, we suppress the difficulty term to avoid rewarding "hard" but ill-posed tasks and use

$$r_c(q, v) = \tfrac{1}{2} s_q(q) + \tfrac{1}{2} r_f(o_c). \tag{6}$$

This stabilizes long-horizon self-training and mitigates reward collapse.

4.3 Planner Agent Training

The Planner $\pi_p$ generates a structured plan $p$ for a given question $q$, encapsulated in tags. The Critic evaluates the plan quality to produce a normalized score $s_p \in [0, 1]$:

$$p \sim \pi_p(\cdot \mid q; \theta), \qquad s_p = \mathrm{Norm}\big(\mathrm{Critic}(q, p)\big). \tag{7}$$

If $s_p$ meets a gating threshold (in this paper, $\beta = 0.3$), the plan is provided to the Solver; otherwise, the Solver answers directly.

For optimizing the Planner, we use a composite reward that combines plan quality and format compliance:

$$r_p = \lambda_{plan}\, s_p + \lambda_f\, r_f(o_p), \tag{8}$$

where $\lambda_{plan}$ and $\lambda_f$ are weighting coefficients (we use $\lambda_{plan} = \lambda_f = 0.5$ by default).

4.4 Solver Agent Training

The Solver agent is tasked with generating final answers based on the given question $q$ and the plan $p$ (if the plan passes Critic gating). The Solver policy $\pi_s$ produces an answer $a$, typically wrapped in tags or Markdown blocks:

$$a \sim \pi_s(\cdot \mid q, \tilde{p}; \theta), \qquad \tilde{p} = \begin{cases} p, & s_p \ge \beta, \\ \emptyset, & s_p < \beta. \end{cases} \tag{9}$$

Verifier-based composite reward (plan, correctness, format). Solver correctness is computed by automatic verification in the target domain (symbolic/metric-based grading for math, or execution-/test-based validation for code), yielding $s_{gt} \in [0, 1]$. We combine plan quality, verified correctness, and format adherence as

$$\tilde{s}_p = \begin{cases} s_p, & s_p \ge \beta, \\ 0, & s_p < \beta, \end{cases} \tag{10}$$

$$r_s = w_p\, \tilde{s}_p + w_c\, s_{gt} + w_f\, r_f(o_s), \qquad w_p + w_c + w_f = 1. \tag{11}$$

In this paper, we use $(w_p, w_c, w_f) = (0.2, 0.6, 0.2)$ as the default setting. If the plan score is unavailable (e.g., when the planning module is disabled), we fall back to a simpler mixture of verified correctness and format (e.g., $\tfrac{1}{2} s_{gt} + \tfrac{1}{2} r_f$) to maintain robustness.

In adversarial interaction with the Challenger, Solver failures under ground-truth verification contribute to the Challenger's difficulty reward (Eq. 5), forming a co-evolutionary loop that progressively pushes the curriculum toward harder yet solvable problems.

4.5 Critic: Scoring and Format Calibration

The Critic provides two types of signals: (1) soft format rewards $r_f \in [0, 1]$ by checking required tags, and (2) quality scores for Challenger questions ($s_q$) and Planner plans ($s_p$), normalized via Eq. 2.
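The plan gating of Section 4.4 (Eqs. 9 and 10) and the Planner and Solver rewards (Eqs. 8 and 11) can be illustrated with the short Python sketch below. The function names and the use of `None` to stand for the empty plan are assumptions for this example; the default weights and the threshold are the values reported in the text.

```python
def gate_plan(plan, s_p, beta=0.3):
    """Eqs. 9-10: pass the plan on only if its Critic score reaches beta.

    Returns (plan_or_None, gated_score); None stands for the empty plan.
    """
    if s_p >= beta:
        return plan, s_p
    return None, 0.0


def planner_reward(s_p, r_f, lam_plan=0.5, lam_f=0.5):
    """Eq. 8: the Planner is rewarded with the ungated plan score plus format."""
    return lam_plan * s_p + lam_f * r_f


def solver_reward(s_p_gated, s_gt, r_f, weights=(0.2, 0.6, 0.2)):
    """Eq. 11: weighted mix of gated plan score, verified correctness, format."""
    w_p, w_c, w_f = weights
    if abs(w_p + w_c + w_f - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return w_p * s_p_gated + w_c * s_gt + w_f * r_f


if __name__ == "__main__":
    plan, s_tilde = gate_plan("1. Restate. 2. Compute. 3. Check.", s_p=0.25)
    # The plan is rejected (0.25 < 0.3), so the Solver answers without it.
    print(plan, s_tilde, solver_reward(s_tilde, s_gt=1.0, r_f=1.0))
```

With the default weights, a Solver that answers correctly in the right format but without an accepted plan can reach at most 0.8, so the remaining 0.2 acts as an incentive tied to plan quality.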
Importantly, in the verifiable setting, correctness is determined by the external verifier $V_{gt}$ rather than the Critic.

The Critic policy $\pi_{cr}$ outputs a scalar score deterministically:

$$s \sim \pi_{cr}(\cdot \mid x; \theta), \tag{12}$$

where $x \in \{(q, \cdot), (q, p)\}$ denotes the evaluation context (either a question alone or a question-plan pair). Optionally, we calibrate the Critic with a lightweight format-consistency objective

$$r_{cr} = r_f(o_{cr}), \tag{13}$$

which reduces parsing failures and improves the stability of downstream reward computation.

4.6 Multi-Agent Co-Training

A training step in SAGE comprises: (1) a Challenger Phase to generate verifiable candidate tasks and expand $\mathcal{D}$ with quality-and-verifier filtering; (2) a Plan–Solve Phase in which the Planner generates a single plan scored by the Critic and the Solver is optimized using the verifier-based reward in Eq. 11; (3) a Critic Phase (optional) for format calibration; and (4) a Synchronized Update that jointly updates the shared backbone using Task-Relative REINFORCE++ with per-role advantage normalization (see Section 3).

5 Experiments

5.1 Experimental Setup

Training details. Our framework is implemented on top of VeRL (Sheng et al., 2025), and we evaluate it using the Qwen2.5-3B-Instruct, Qwen2.5-7B-Instruct, and Qwen3-4B-Base models (Yang et al., 2025b,a). All agents are initialized from their corresponding base models. We apply LoRA (Hu et al., 2021) with rank 128 and a learning rate of 3e-6. Additional hyperparameter settings are provided in Table 4.

Baseline Methods. To comprehensively assess the effectiveness of the proposed SAGE framework, we conduct experiments on several representative foundation models, including Qwen2.5-3B-Instruct, Qwen2.5-7B-Instruct, and Qwen3-4B-Base. For each model, we report results for both the original checkpoint and the corresponding variant fine-tuned with SAGE. In addition, we include Absolute-Zero-Reasoning (AZR) (Zhao et al., 2025a) and Multi-Agent Evolve (MAE) (Chen et al., 2025) as alternative training baselines. Specifically, each model is trained for 200 steps under AZR. For MAE, we adopt the half-reference setting and train each model for 200 steps.

| Method | HEval+ | MBPP+ | LCB v1-5 | GSM8K | Math | AI24 | AI25 | AMC | Olympiad | C Avg. | M Avg. | O Avg. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Qwen-2.5-3B-Instruct | | | | | | | | | | | | |
| Base Model | 68.3 | 60.6 | 12.0 | 84.6 | 60.4 | 3.3 | 6.7 | 40.0 | 28.0 | 46.9 | 37.2 | 40.4 |
| AZR | 68.9 | 61.4 | 15.0 | 81.2 | 62.4 | 3.3 | 3.3 | 35.0 | 28.9 | 48.4 | 35.7 | 39.9 |
| MAE | 68.3 | 61.1 | 15.9 | 82.2 | 65.8 | 3.3 | 3.3 | 32.5 | 32.5 | 48.4 | 36.6 | 40.5 |
| SAGE | 68.9 | 62.4 | 16.9 | 85.5 | 66.2 | 6.7 | 6.7 | 35.0 | 29.8 | 49.4 | 38.3 | 42.0 |
| Qwen-2.5-7B-Instruct | | | | | | | | | | | | |
| Base Model | 73.2 | 65.3 | 17.5 | 91.7 | 75.1 | 13.3 | 6.7 | 57.5 | 28.0 | 52.0 | 45.4 | 47.6 |
| AZR | 71.3 | 69.1 | 25.3 | 92.8 | 76.2 | 10.0 | 13.3 | 50.0 | 38.5 | 55.2 | 46.8 | 49.6 |
| MAE | 76.2 | 65.3 | 23.3 | 91.7 | 76.2 | 13.3 | 13.3 | 42.5 | 32.7 | 54.9 | 45.0 | 48.3 |
| SAGE | 76.2 | 64.0 | 26.4 | 92.2 | 74.7 | 13.3 | 13.3 | 52.5 | 38.7 | 55.5 | 47.5 | 50.1 |
| Qwen-3-4B-Base | | | | | | | | | | | | |
| Base Model | 76.8 | 65.3 | 21.5 | 94.5 | 87.0 | 16.7 | 13.3 | 77.5 | 49.0 | 54.5 | 56.3 | 55.7 |
| AZR | 74.4 | 65.0 | 26.1 | 89.3 | 76.2 | 10.0 | 13.3 | 50.0 | 41.5 | 55.2 | 46.7 | 49.5 |
| MAE | 76.2 | 65.3 | 24.2 | 94.5 | 92.0 | 13.3 | 10.0 | 70.0 | 43.7 | 55.2 | 53.9 | 54.4 |
| SAGE | 75.6 | 62.4 | 30.6 | 94.3 | 91.0 | 16.7 | 10.0 | 75.0 | 47.9 | 56.2 | 55.8 | 55.9 |

Table 1: Main results on reasoning benchmarks. Comparison of post-training methods across three model scales. We report pass@1 accuracy (%) on code generation (HumanEval+, MBPP+, LiveCodeBench) and mathematical reasoning (GSM8K, MATH, AIME 2024, AIME 2025, AMC, and OlympiadBench). C Avg., M Avg., and O Avg. denote the mean scores over code, math, and all benchmarks. SAGE achieves the best overall performance across all three model backbones.

Training and Evaluation Datasets. Our training set comprises 500 instances sampled from MATH (Hendrycks et al., 2021a), GSM8K (Cobbe et al., 2021), HumanEval (Chen et al., 2021), and MBPP (Austin et al., 2021), with detailed statistics in Appendix B.
We evaluate on two domains: (1) Mathematical Reasoning: GSM8K and MATH (in-distribution, ID), along with four competition-level benchmarks, AIME'24, AIME'25, OlympiadBench (He et al., 2024), and AMC'23 (Hendrycks et al., 2021b), as out-of-distribution (OOD) tests. (2) Code Generation: HumanEval+ and MBPP+ evaluated via EvalPlus (Liu et al., 2023) (ID), and LiveCodeBench (Jain et al., 2024) v1–v5 (May 2023–February 2025) for OOD assessment. We report accuracy (pass@1) based on greedy decoding across all benchmarks.

5.2 Main Results

Table 1 presents the performance of SAGE and baseline methods across code generation and mathematical reasoning benchmarks on three model backbones.

Consistent Improvements Across Model Scales. SAGE achieves the highest Overall Avg. on both Qwen-2.5-3B-Instruct (42.0%) and Qwen-2.5-7B-Instruct (50.1%), outperforming all baselines including AZR and MAE. On the 3B model, SAGE improves upon the base model by 1.6% overall, with notable gains on in-distribution benchmarks (GSM8K: 84.6% → 85.5%; MATH: 60.4% → 66.2%). Similarly, on the 7B model, SAGE yields a 2.5% improvement over the base model in Overall Avg., demonstrating consistent effectiveness across model scales.

| Backbone | Method | ID Avg. | OOD Avg. |
|---|---|---|---|
| Qwen-2.5-3B | Base Model | 68.4 | 18.0 |
| Qwen-2.5-3B | AZR | 68.5 | 17.1 |
| Qwen-2.5-3B | MAE | 69.4 | 17.5 |
| Qwen-2.5-3B | SAGE | 70.8 | 19.0 |
| Qwen-2.5-7B | Base Model | 76.3 | 24.6 |
| Qwen-2.5-7B | AZR | 77.4 | 27.4 |
| Qwen-2.5-7B | MAE | 76.8 | 25.0 |
| Qwen-2.5-7B | SAGE | 77.4 | 28.8 |
| Qwen-3-4B | Base Model | 80.9 | 35.6 |
| Qwen-3-4B | AZR | 76.2 | 28.2 |
| Qwen-3-4B | MAE | 82.0 | 32.2 |
| Qwen-3-4B | SAGE | 80.8 | 36.0 |

Table 2: ID and OOD generalization comparison. SAGE consistently improves OOD performance (+4.2% on 7B) without sacrificing in-distribution accuracy.

Strong Out-of-Distribution Generalization. A key strength of SAGE lies in its generalization to out-of-distribution benchmarks. As shown in Table 2, SAGE achieves the best or near-best OOD Avg. across all three backbones (19.0%, 28.8%, and 36.0%, respectively), while maintaining competitive ID Avg. scores.
This balanced improvement is particularly evident on Qwen-2.5-7B, where SAGE improves OOD Avg. by 4.2% over the base model while preserving strong in-distribution performance. On LiveCodeBench specifically, SAGE achieves the best performance across all three backbones (16.9%, 26.4%, and 30.6%), substantially outperforming both base models and other post-training methods. For mathematical reasoning, SAGE maintains competitive performance on competition-level benchmarks such as OlympiadBench, where it achieves 38.7% (+10.7% over base) on Qwen-2.5-7B.

Comparison with Baselines. While AZR and MAE show improvements on certain individual benchmarks, they exhibit inconsistent gains and occasional performance degradation. For instance, AZR on Qwen-3-4B-Base leads to a significant drop in Math Avg. (56.3% → 46.7%). In contrast, SAGE maintains more balanced improvements across both domains without sacrificing performance on any benchmark group.

Results on Qwen-3-4B. On this stronger backbone, the base model already achieves high performance (Overall Avg. 55.7%). Nevertheless, SAGE attains the highest Code Avg. (56.2%) and remains competitive overall (55.9%), with particularly strong gains on LiveCodeBench (21.5% → 30.6%, +9.1%). This suggests that SAGE continues to provide meaningful improvements even when applied to capable base models.

| Method | HEval+ | MBPP+ | LCB v1-5 | GSM8K | Math | AI24 | AI25 | AMC | Olympiad | C Avg. | M Avg. | O Avg. |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| SAGE (full implementation) | 68.9 | 62.4 | 16.9 | 85.5 | 66.2 | 6.7 | 6.7 | 35.0 | 29.8 | 49.4 | 38.3 | 42.0 |
| SAGE (w/o challenger training) | 66.5 | 61.3 | 9.0 | 86.7 | 65.5 | 0.0 | 3.3 | 35.0 | 28.0 | 45.6 | 36.4 | 39.5 |
| SAGE (w/o solver training) | 67.7 | 64.3 | 9.0 | 81.2 | 60.4 | 3.3 | 0.0 | 30.0 | 28.0 | 47.0 | 33.8 | 38.2 |
| SAGE (w/o critic training) | 66.5 | 53.7 | 14.1 | 86.0 | 65.9 | 3.3 | 6.7 | 40.0 | 27.4 | 44.8 | 38.2 | 40.4 |

Table 3: Ablation study of SAGE components on Qwen-2.5-3B. We evaluate the impact of removing individual agent training while keeping other components active.
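As a worked check of how the aggregate columns relate to the per-benchmark scores, the sketch below recomputes C Avg., M Avg., O Avg., ID Avg., and OOD Avg. for the Qwen-2.5-3B SAGE row of Table 1. It assumes that each aggregate is an unweighted mean and that the ID/OOD split follows the dataset description in Section 5.1 (HumanEval+, MBPP+, GSM8K, MATH as ID; the rest as OOD); the dictionary keys and the helper name `mean_over` are made up for this example.

```python
# Per-benchmark pass@1 scores for SAGE on Qwen-2.5-3B-Instruct (Table 1).
SAGE_3B = {
    "HEval+": 68.9, "MBPP+": 62.4, "LCB": 16.9,
    "GSM8K": 85.5, "MATH": 66.2, "AIME24": 6.7,
    "AIME25": 6.7, "AMC": 35.0, "Olympiad": 29.8,
}

# Benchmark groups (assumed from the text of Section 5.1).
CODE = ["HEval+", "MBPP+", "LCB"]
MATH = ["GSM8K", "MATH", "AIME24", "AIME25", "AMC", "Olympiad"]
ID = ["HEval+", "MBPP+", "GSM8K", "MATH"]
OOD = ["LCB", "AIME24", "AIME25", "AMC", "Olympiad"]


def mean_over(row, keys):
    """Unweighted mean of the selected benchmark scores."""
    return sum(row[k] for k in keys) / len(keys)


if __name__ == "__main__":
    for name, keys in [("C Avg.", CODE), ("M Avg.", MATH),
                       ("O Avg.", CODE + MATH), ("ID Avg.", ID),
                       ("OOD Avg.", OOD)]:
        print(f"{name}: {mean_over(SAGE_3B, keys):.2f}")
```

For this row the recomputed means agree with the reported 49.4, 38.3, 42.0, 70.8, and 19.0 up to rounding to one decimal place. Running the same function on other rows is a cheap consistency check between Tables 1 and 2.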
5.3 Ablation Studies and Analyses

Ablation Study. To understand the contribution of each agent, we conduct ablation experiments by selectively disabling the training of individual roles while keeping the remaining components active. As shown in Table 3, the full SAGE implementation achieves the highest overall average (42.0%), and removing any single agent leads to performance degradation.

Disabling Challenger training results in a notable drop in code benchmarks, particularly on LiveCodeBench (16.9% → 9.0%), indicating that curriculum generation is essential for out-of-distribution generalization. Similarly, removing Solver training causes the largest overall decline (O Avg. 38.2%), with substantial drops on both GSM8K (85.5% → 81.2%) and MATH (66.2% → 60.4%), confirming that the Solver is the primary driver of reasoning capability. Interestingly, excluding Critic training yields competitive math performance (M Avg. 38.2%) but degrades code benchmarks (C Avg. 44.8%), suggesting that the Critic's quality filtering is more critical for code generation, where output format and correctness are tightly coupled.

These results validate that all three trainable agents contribute complementarily to SAGE's overall effectiveness, with the Challenger–Solver interaction forming the core co-evolutionary loop and the Critic providing essential quality control.

Figure 3: Training dynamics on Qwen-2.5-3B. The Challenger steadily expands the question pool (bars) throughout training, while validation accuracy (line) reaches peak performance around step 100–120 before gradual decline, suggesting potential over-specialization on the self-generated curriculum.

Training Dynamics Analysis. To gain deeper insights into the self-evolution process, we analyze the training dynamics of SAGE on Qwen-2.5-3B-Instruct, as shown in Figure 3.
The validation accuracy (line) exhibits a characteristic learning curve. During the initial phase (steps 0–80), the model demonstrates rapid improvement from 29.1% to 65.8%, reflecting efficient knowledge acquisition from the multi-agent co-evolutionary training. The accuracy reaches its peak of 69.5% around step 100–140, representing the optimal balance between task difficulty and model capability. Beyond this point, we observe a gradual decline to 61.6% by step 240, suggesting that prolonged training may lead to over-specialization on the self-generated curriculum. This motivates our choice of reporting results around step 100 in the main experiments.

Figure 4: Qualitative case study. The Challenger generates a math word problem, the Planner decomposes it into structured steps, the Solver executes the plan to produce the final answer, and the Critic provides quality scores for both the question and the plan. The panel contents are:

Question Generated by Challenger Agent:
    The hospital has 11 doctors and 18 nurses. If 5 doctors and 2 nurses quit, how many doctors and nurses are left?

Question Judged by Critic Agent:
    7</score>

Plan Generated by Planner Agent:
    1. Determine the initial number of doctors and nurses.
    2. Calculate the remaining doctors after the doctors quit.
    3. Calculate the remaining nurses after the nurses quit.
    4. Sum the remaining doctors and nurses to get the total number left.

Answer Output by Solver Agent:
    1. Let's determine the initial numbers:
       - Initial number of doctors: 11
       - Initial number of nurses: 18
    2. Now, let's account for the doctors who quit:
       - Number of doctors who quit: 5
       - Remaining doctors: 11 - 5 = 6
    3. Next, let's account for the nurses who quit:
       - Number of nurses who quit: 2
       - Remaining nurses: 18 - 2 = 16
    4. Finally, we need to find the total number remaining:
       - Total remaining doctors and nurses: 6 doctors + 16 nurses = 22 people.
    So, after 5 doctors and 2 nurses quit, the hospital has 22 people left (6 doctors and 16 nurses). So the answer is \boxed{22}.

Plan Judged by Critic Agent:
    Let's evaluate the proposed plan step-by-step against the given rubric:
    1. **Clarity**: The plan is clear and well-structured. Each step is straightforward and easy to understand.
    2. **Completeness**: The plan covers all necessary steps to solve the problem. It correctly identifies the initial numbers, calculates the reductions, and sums the remaining personnel.
    3. **Correctness**: The reasoning and approach are logically sound. Subtracting the number of doctors and nurses who quit from their initial counts will indeed give the correct remaining numbers.
    4. **Feasibility**: Following this plan will lead to a correct solution. The calculations are straightforward and do not involve any logical errors.
    5. **Efficiency**: The approach is reasonably efficient as it avoids unnecessary steps. Only the relevant arithmetic operations are performed.
    Given these evaluations, the plan meets all the criteria effectively and efficiently.
    <score>8</score>

Meanwhile, the cumulative number of valid questions (bars) grows steadily throughout training, expanding from 1,136 to 20,532 by step 250, an 18-fold increase from the seed set.
Notably, the growth rate accelerates around step 120–130, coinciding with peak validation accuracy, suggesting that a well-trained Challenger produces questions that pass the quality threshold $\alpha = 0.7$ at an increasing rate. The continued growth of the question pool despite declining accuracy after step 120 suggests that increased quantity alone does not ensure better performance, highlighting the importance of curriculum diversity and difficulty calibration. Nevertheless, this trend demonstrates SAGE's ability to autonomously scale its training data without human intervention.

Qualitative Analysis. Figure 4 illustrates the collaborative reasoning process of SAGE. The Challenger generates a well-formed arithmetic problem involving subtraction across two categories. The Planner decomposes this into four sequential steps, progressing from initial value identification to final summation. Guided by this structured plan, the Solver executes each step systematically and arrives at the correct answer. The Critic evaluates both outputs, assigning scores of 7 and 8 based on clarity, completeness, and logical soundness. This example highlights how role specialization enables effective division of labor: task generation, strategic planning, solution execution, and quality assessment operate as distinct yet coordinated functions within a unified training loop.

6 Conclusion

We introduce SAGE, a multi-agent self-evolution framework in which four specialized agents (Challenger, Planner, Solver, and Critic) co-evolve through adversarial yet collaborative dynamics. Starting from minimal seed examples, SAGE autonomously expands its training curriculum while maintaining quality via critic-based filtering. Experiments demonstrate consistent improvements across model scales, with strong out-of-distribution generalization on competition-level benchmarks.
These results highlight a scalable and effective pathway for evolving capable reasoning agents while reducing dependency on human-curated supervision.

7 Limitations

Our work has several limitations. First, SAGE operates in verifiable domains where correctness can be automatically determined through ground-truth answers or executable tests. Extending the framework to open-ended tasks with subjective evaluation criteria, potentially through learned reward models, remains an interesting direction for future work. Second, although SAGE significantly reduces reliance on large-scale annotations, it still requires a small seed set (500 examples) to bootstrap the self-evolution process. Investigating strategies to further minimize seed requirements could broaden applicability to extremely low-resource scenarios. Third, our evaluation focuses on mathematical reasoning and code generation benchmarks. Future exploration of other structured reasoning domains, such as logical reasoning or scientific problem solving, could offer valuable insights and validate the generalizability of our multi-agent architecture. Additionally, as with standard self-training approaches, monitoring training dynamics and applying early stopping is advisable to ensure optimal performance.

References

Jacob Austin, Augustus Odena, Maxwell Nye, Maarten Bosma, Henryk Michalewski, David Dohan, Ellen Jiang, Carrie Cai, Michael Terry, Quoc Le, and Charles Sutton. 2021. Program Synthesis with Large Language Models. Preprint,

Nikolas Belle, Dakota Barnes, Alfonso Amayuelas, Ivan Bercovich, Xin Eric Wang, and William Wang. 2025. Agents of change: Self-evolving llm agents for strategic planning.
Preprint,

Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, Alex Ray, Raul Puri, Gretchen Krueger, Michael Petrov, Heidy Khlaaf, Girish Sastry, Pamela Mishkin, Brooke Chan, Scott Gray, and 39 others. 2021. Evaluating Large Language Models Trained on Code. Preprint,

Yixing Chen, Yiding Wang, Siqi Zhu, Haofei Yu, Tao Feng, Muhan Zhang, Mostofa Patwary, and Jiaxuan You. 2025. Multi-Agent Evolve: LLM Self-Improve through Co-evolution. Preprint, arXiv:2510.23595.

Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. 2021. Training Verifiers to Solve Math Word Problems. Preprint,

Yilun Du, Shuang Li, Antonio Torralba, Joshua B. Tenenbaum, and Igor Mordatch. 2023. Improving Factuality and Reasoning in Language Models through Multiagent Debate. Preprint,

Jinyuan Fang, Yanwen Peng, Xi Zhang, Yingxu Wang, Xinhao Yi, Guibin Zhang, Yi Xu, Bin Wu, Siwei Liu, Zihao Li, Zhaochun Ren, Nikos Aletras, Xi Wang, Han Zhou, and Zaiqiao Meng. 2025. A Comprehensive Survey of Self-Evolving AI Agents: A New Paradigm Bridging Foundation Models and Lifelong Agentic Systems. Preprint,

Huan-ang Gao, Jiayi Geng, Wenyue Hua, Mengkang Hu, Xinzhe Juan, Hongzhang Liu, Shilong Liu, Jiahao Qiu, Xuan Qi, Yiran Wu, Hongru Wang, Han Xiao, Yuhang Zhou, Shaokun Zhang, Jiayi Zhang, Jinyu Xiang, Yixiong Fang, Qiwen Zhao, Dongrui Liu, and 8 others. 2025. A Survey of Self-Evolving Agents: On Path to Artificial Super Intelligence. Preprint,

Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, Xiaokang Zhang, Xingkai Yu, Yu Wu, Z. F. Wu, Zhibin Gou, Zhihong Shao, Zhuoshu Li, Ziyi Gao, Aixin Liu, and 175 others. 2025.
Deepseek-r1 incentivizes reasoning in llms through reinforcement learning. Nature, 645:633–638.

Chaoqun He, Renjie Luo, Yuzhuo Bai, Shengding Hu, Zhen Leng Thai, Junhao Shen, Jinyi Hu, Xu Han, Yujie Huang, Yuxiang Zhang, Jie Liu, Lei Qi, Zhiyuan Liu, and Maosong Sun. 2024. OlympiadBench: A Challenging Benchmark for Promoting AGI with Olympiad-Level Bilingual Multimodal Scientific Problems. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 3828–3850, Bangkok, Thailand. Association for Computational Linguistics.

Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. 2021a. Measuring Massive Multitask Language Understanding. Preprint,

Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. 2021b. Measuring mathematical problem solving with the math dataset. In Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks, volume 1.

Sirui Hong, Mingchen Zhuge, Jiaqi Chen, Xiawu Zheng, Yuheng Cheng, Ceyao Zhang, Jinlin Wang, Zili Wang, Steven Ka Shing Yau, Zijuan Lin, Liyang Zhou, Chenyu Ran, Lingfeng Xiao, Chenglin Wu, and Jürgen Schmidhuber. 2024. MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework. Preprint,

Edward J. Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. 2021. LoRA: Low-Rank Adaptation of Large Language Models. Preprint,

Jian Hu, Jason Klein Liu, Haotian Xu, and Wei Shen. 2025. REINFORCE++: Stabilizing Critic-Free Policy Optimization. Preprint,

Chengsong Huang, Wenhao Yu, Xiaoyang Wang, Hongming Zhang, Zongxia Li, Ruosen Li, Jiaxin Huang, Haitao Mi, and Dong Yu. 2025. R-zero: Self-evolving reasoning llm from zero data.
Preprint,

Naman Jain, King Han, Alex Gu, Wen-Ding Li, Fanjia Yan, Tianjun Zhang, Sida Wang, Armando Solar-Lezama, Koushik Sen, and Ion Stoica. 2024. LiveCodeBench: Holistic and Contamination Free Evaluation of Large Language Models for Code. Preprint,

Guohao Li, Hasan Hammoud, Hani Itani, Dmitrii Khizbullin, and Bernard Ghanem. 2023. Camel: Communicative agents for "mind" exploration of large language model society. In Advances in Neural Information Processing Systems, volume 36, pages 51991–52008. Curran Associates, Inc.

Tian Liang, Zhiwei He, Wenxiang Jiao, Xing Wang, Rui Wang, Yujiu Yang, Zhaopeng Tu, and Shuming Shi. 2024. Encouraging Divergent Thinking in Large Language Models through Multi-Agent Debate. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 17889–17904, Miami, Florida. Association for Computational Linguistics.

Junwei Liao, Muning Wen, Jun Wang, and Weinan Zhang. 2025. MARFT: Multi-Agent Reinforcement Fine-Tuning. Preprint, arXiv:2504.16129.

Bo Liu, Leon Guertler, Simon Yu, Zichen Liu, Penghui Qi, Daniel Balcells, Mickel Liu, Cheston Tan, Weiyan Shi, Min Lin, Wee Sun Lee, and Natasha Jaques. 2025. SPIRAL: Self-Play on Zero-Sum Games Incentivizes Reasoning via Multi-Agent Multi-Turn Reinforcement Learning. Preprint,

Jiawei Liu, Chunqiu Steven Xia, Yuyao Wang, and Lingming Zhang. 2023. Is Your Code Generated by ChatGPT Really Correct? Rigorous Evaluation of Large Language Models for Code Generation. In Advances in Neural Information Processing Systems, volume 36, pages 21558–21572. Curran Associates, Inc.

Sumeet Ramesh Motwani, Chandler Smith, Rocktim Jyoti Das, Rafael Rafailov, Ivan Laptev, Philip H. S. Torr, Fabio Pizzati, Ronald Clark, and Christian Schroeder de Witt. 2025. MALT: Improving Reasoning with Multi-Agent LLM Training.
Preprint,

John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. 2017. Proximal Policy Optimization Algorithms. Preprint,

Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. 2025. HybridFlow: A Flexible and Efficient RLHF Framework. In Proceedings of the Twentieth European Conference on Computer Systems, pages 1279–1297, Rotterdam, The Netherlands. ACM.

Chuanneng Sun, Songjun Huang, and Dario Pompili. 2024. Llm-based multi-agent reinforcement learning: Current and future directions. Preprint,

Wangtao Sun, Xiang Cheng, Jialin Fan, Yao Xu, Xing Yu, Shizhu He, Jun Zhao, and Kang Liu. 2025. Towards Agentic Self-Learning LLMs in Search Environment. Preprint,

Ziyu Wan, Yunxiang Li, Xiaoyu Wen, Yan Song, Hanjing Wang, Linyi Yang, Mark Schmidt, Jun Wang, Weinan Zhang, Shuyue Hu, and Ying Wen. 2025. Rema: Learning to meta-think for llms with multi-agent reinforcement learning. Preprint,

Zhepei Wei, Wenlin Yao, Yao Liu, Weizhi Zhang, Qin Lu, Liang Qiu, Changlong Yu, Puyang Xu, Chao Zhang, Bing Yin, Hyokun Yun, and Lihong Li. 2025. WebAgent-R1: Training Web Agents via End-to-End Multi-Turn Reinforcement Learning. Preprint,

Xumeng Wen, Zihan Liu, Shun Zheng, Shengyu Ye, Zhirong Wu, Yang Wang, Zhijian Xu, Xiao Liang, Junjie Li, Ziming Miao, Jiang Bian, and Mao Yang. 2025. Reinforcement learning with verifiable rewards implicitly incentivizes correct reasoning in base llms. Preprint,

Rong Wu, Xiaoman Wang, Jianbiao Mei, Pinlong Cai, Daocheng Fu, Cheng Yang, Licheng Wen, Xuemeng Yang, Yufan Shen, Yuxin Wang, and Botian Shi. 2025. EvolveR: Self-Evolving LLM Agents through an Experience-Driven Lifecycle. Preprint,

Peng Xia, Kaide Zeng, Jiaqi Liu, Can Qin, Fang Wu, Yiyang Zhou, Caiming Xiong, and Huaxiu Yao. 2025.
Agent0: Unleashing Self-Evolving Agents from Zero Data via Tool-Integrated Reasoning. Preprint,

An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, Chujie Zheng, Dayiheng Liu, Fan Zhou, Fei Huang, Feng Hu, Hao Ge, Haoran Wei, Huan Lin, Jialong Tang, and 41 others. 2025a. Qwen3 technical report. Preprint,

An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, Huan Lin, Jian Yang, Jianhong Tu, Jianwei Zhang, Jianxin Yang, Jiaxi Yang, Jingren Zhou, Junyang Lin, Kai Dang, and 23 others. 2025b. Qwen2.5 Technical Report. Preprint,

Huining Yuan, Zelai Xu, Zheyue Tan, Xiangmin Yi, Mo Guang, Kaiwen Long, Haojia Hui, Boxun Li, Xinlei Chen, Bo Zhao, Xiao-Ping Zhang, Chao Yu, and Yu Wang. 2025. MARSHAL: Incentivizing Multi-Agent Reasoning via Self-Play with Strategic LLMs. Preprint,

Yang Yue, Zhiqi Chen, Rui Lu, Andrew Zhao, Zhaokai Wang, Yang Yue, Shiji Song, and Gao Huang. 2025. Does reinforcement learning really incentivize reasoning capacity in llms beyond the base model? Preprint,

Yunpeng Zhai, Shuchang Tao, Cheng Chen, Anni Zou, Ziqian Chen, Qingxu Fu, Shinji Mai, Li Yu, Jiaji Deng, Zouying Cao, Zhaoyang Liu, Bolin Ding, and Jingren Zhou. 2025. AgentEvolver: Towards Efficient Self-Evolving Agent System. Preprint,

Guibin Zhang, Hejia Geng, Xiaohang Yu, Zhenfei Yin, Zaibin Zhang, Zelin Tan, Heng Zhou, Zhongzhi Li, Xiangyuan Xue, Yijiang Li, Yifan Zhou, Yang Chen, Chen Zhang, Yutao Fan, Zihu Wang, Songtao Huang, Yue Liao, Hongru Wang, Mengyue Yang, and 6 others. 2025. The Landscape of Agentic Reinforcement Learning for LLMs: A Survey. Preprint,

Andrew Zhao, Yiran Wu, Yang Yue, Tong Wu, Quentin Xu, Yang Yue, Matthieu Lin, Shenzhi Wang, Qingyun Wu, Zilong Zheng, and Gao Huang. 2025a. Absolute zero: Reinforced self-play reasoning with zero data.
Preprint,

Yujie Zhao, Lanxiang Hu, Yang Wang, Minmin Hou, Hao Zhang, Ke Ding, and Jishen Zhao. 2025b. Stronger-mas: Multi-agent reinforcement learning for collaborative llms. Preprint, arXiv:2510.11062.

Guobin Zhu, Rui Zhou, Wenkang Ji, and Shiyu Zhao. 2025. Lamarl: Llm-aided multi-agent reinforcement learning for cooperative policy generation. Preprint,

A Hyperparameter Settings

Table 4: Training Hyperparameters of our experiments.

| Hyperparameter | Value |
| --- | --- |
| **Training Configuration** | |
| Batch Size | 128 |
| Learning Rate | 3 × 10⁻⁶ |
| Training Steps | 200 |
| **Generation Settings** | |
| Maximum Prompt Length | 8192 |
| Maximum Response Length | 8192 |
| Challenger Temperature | 0.6 |
| Planner Temperature | 0.6 |
| Solver Temperature | 0.6 |
| Critic Temperature | 0.1 |
| **Algorithm Settings** | |
| Learning Algorithm | Task-Relative REINFORCE++ |
| KL Regularization | Disabled |
| **LoRA Configuration** | |
| LoRA Rank | 128 |
| LoRA Alpha | 256 |
| LoRA Dropout | 0.95 |
| Target Modules | q_proj, k_proj, v_proj, o_proj, gate_proj, up_proj, down_proj |

B Training Data Composition

Table 5 presents the composition of the 500 training instances sampled from four benchmark datasets. These samples are drawn from the official training splits and serve as the foundation for our training procedure.

Table 5: Distribution of Training Samples Across Benchmarks

| Benchmark | Count |
| --- | --- |
| MATH (Hendrycks et al., 2021a) | 156 |
| GSM8K (Cobbe et al., 2021) | 148 |
| HumanEval (Chen et al., 2021) | 87 |
| MBPP (Austin et al., 2021) | 109 |
| Total | 500 |

C Prompts for Agents

Here, we list the prompt of each agent as follows.

Challenger Agent Prompt

Role: Task Designer Agent

Description: You are a task generation specialist. Your goal is to create a single, high-quality evaluation task that challenges complex reasoning abilities.

Design Constraints:
- Self-contained with clear problem statement
- Non-trivial: requires multiple reasoning steps or constraint satisfaction
- Deterministic or tightly bounded (avoid subjective judgment)
- Culturally neutral, no real-time data dependency
- Difficult but solvable

Avoid:
- Trivia or opinion-based prompts
- Ambiguous success criteria
- Web-dependent or time-sensitive content
- Unsolvable or ill-defined problems

Respond using: [Your generated task here]

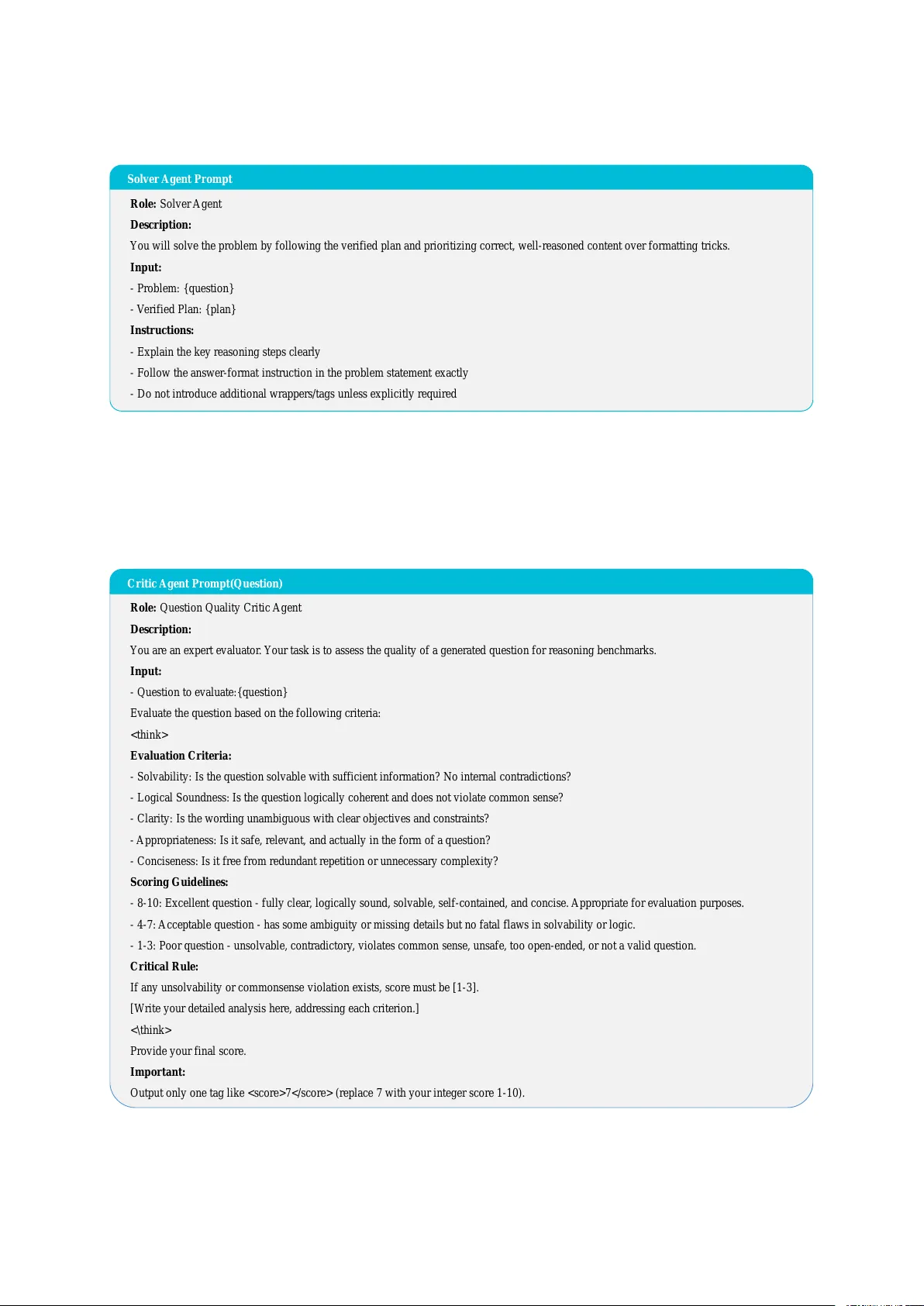

Figure 5: The prompt of the Challenger Agent.

Planner Agent Prompt

Role: Planner Agent

Description: You will review the user problem and propose a concise plan that a solver can follow.

Problem: {question}

Respond using:
1. ...
2. ...

Figure 6: The prompt of the Planner Agent.

Solver Agent Prompt

Role: Solver Agent

Description: You will solve the problem by following the verified plan and prioritizing correct, well-reasoned content over formatting tricks.

Input:
- Problem: {question}
- Verified Plan: {plan}

Instructions:
- Explain the key reasoning steps clearly
- Follow the answer-format instruction in the problem statement exactly
- Do not introduce additional wrappers/tags unless explicitly required

Figure 7: The prompt of the Solver Agent.

Critic Agent Prompt (Question)

Role: Question Quality Critic Agent

Description: You are an expert evaluator. Your task is to assess the quality of a generated question for reasoning benchmarks.

Input:
- Question to evaluate: {question}

Evaluate the question based on the following criteria:

Evaluation Criteria:
- Solvability: Is the question solvable with sufficient information? No internal contradictions?
- Logical Soundness: Is the question logically coherent and does not violate common sense?
- Clarity: Is the wording unambiguous with clear objectives and constraints?
- Appropriateness: Is it safe, relevant, and actually in the form of a question?
- Conciseness: Is it free from redundant repetition or unnecessary complexity?

Scoring Guidelines:
- 8-10: Excellent question - fully clear, logically sound, solvable, self-contained, and concise. Appropriate for evaluation purposes.
- 4-7: Acceptable question - has some ambiguity or missing details but no fatal flaws in solvability or logic.
- 1-3: Poor question - unsolvable, contradictory, violates common sense, unsafe, too open-ended, or not a valid question.

Critical Rule: If any unsolvability or common sense violation exists, score must be [1-3].

[Write your detailed analysis here, addressing each criterion.] `<\think>`

Provide your final score.

Important: Output only one tag like `<score>7</score>` (replace 7 with your integer score 1-10).

Figure 8: The prompt of the Critic Agent (question).

Critic Agent Prompt (Plan)

Role: Plan Critic Agent

Description: You are an expert evaluator. Your task is to assess the quality of a proposed plan for solving a problem.

Input:
- Problem: {question}
- Proposed Plan: {plan}

Evaluate the plan based on the following criteria:

Evaluation Criteria:
- Clarity: Is the plan clear, structured, and easy to follow?
- Completeness: Does it cover all necessary steps to solve the problem?
- Correctness: Is the reasoning and approach logically sound?
- Feasibility: Can following this plan lead to a correct solution?
- Efficiency: Is the approach reasonably efficient, avoiding unnecessary steps?

Scoring Guidelines:
- 8-10: Excellent plan - clear, complete, logically sound, and feasible. Following it should lead to a correct solution.
- 4-7: Acceptable plan - has some gaps or minor issues but the general direction is correct.
- 1-3: Poor plan - unclear, incomplete, logically flawed, or unlikely to lead to a correct solution.

[Write your detailed analysis here, addressing each criterion.]

Provide your final score.

Important: Output only one tag like `<score>7</score>` (replace 7 with your integer score 1-10).

Figure 9: The prompt of the Critic Agent (plan).

Critic Agent Prompt (Answer)

Role: Solution Quality Critic Agent

Description: You are an expert evaluator. Your task is to assess the quality of a generated solution to a given question or problem.

Input:
- Question: {question}
- Generated Solution: {answer}

Evaluate the solution based on the following criteria:

Evaluation Criteria:
- Accuracy: Is the solution factually correct with no errors in reasoning, arithmetic, units, or assumptions?
- Completeness: Does it fully address the question with all necessary steps and derivations?
- Coherence: Is the reasoning logical and free from contradictions or hallucinations?
- Conciseness: Is the answer direct without meaningless repetition, rambling, or filler?
- Instruction Following: Does the solution follow any explicit formatting or structural requirements?

Scoring Guidelines:
- 8-10: Excellent solution - entirely correct, complete, logically sound, concise, and follows all instructions.
- 4-7: Acceptable solution - generally on-topic and partially correct, but has omissions or clarity issues (no factual errors).
- 1-3: Poor solution - contains any factual/logic/calculation error, hallucinated content, excessive repetition, or severe irrelevance.

Critical Rules:
- Any factual error (arithmetic, reasoning, common sense, units, invalid assumptions) → score must be [1-3]
- Hallucinated references, fabricated data, or unsupported claims → score must be [1-3]
- Meaningless repetition or excessive rambling → score must be [1-3]

[Write your detailed analysis here, addressing each criterion. If any critical issue exists, note that the score must be [1-3].] `<\think>`

Provide your final score.

Important: Output only one tag like `<score>7</score>` (replace 7 with your integer score 1-10).

Figure 10: The prompt of the Critic Agent (answer).
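The Critic prompts above ask for a single `<score>` tag holding an integer from 1 to 10, and the training-dynamics discussion refers to a quality threshold α = 0.7. The following is a minimal sketch of how such a response could be parsed and filtered. The function names, the choice to read the last tag in the response, and the division of the score by 10 before comparing it with α are illustrative assumptions, not the authors' implementation.

```python
import re
from typing import Optional

# Matches the tag format requested in the Critic prompts, e.g. "<score>7</score>".
SCORE_TAG = re.compile(r"<score>\s*(\d+)\s*</score>")

# Threshold value taken from the paper; how it is applied here is an assumption.
ALPHA = 0.7


def parse_score(critic_output: str) -> Optional[int]:
    """Return the integer from the last <score> tag, or None if missing or outside 1-10."""
    matches = SCORE_TAG.findall(critic_output)
    if not matches:
        return None
    score = int(matches[-1])
    return score if 1 <= score <= 10 else None


def passes_quality(critic_output: str, alpha: float = ALPHA) -> bool:
    """Keep an item only if its score, rescaled to [0, 1] (assumed), reaches alpha."""
    score = parse_score(critic_output)
    return score is not None and score / 10 >= alpha


if __name__ == "__main__":
    response = "The plan meets all the criteria. <score>8</score>"
    print(parse_score(response), passes_quality(response))
```

Under these assumptions, the plan score of 8 shown in Figure 4 would pass the filter, while a response with no tag or an out-of-range value would be rejected rather than silently accepted.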
