Mechanistic Foundations of Goal-Directed Control

Mechanistic interpretability has transformed the analysis of transformer circuits by decomposing model behavior into competing algorithms, identifying phase transitions during training, and deriving closed-form predictions for when and why strategies…

Authors: Alma Lago

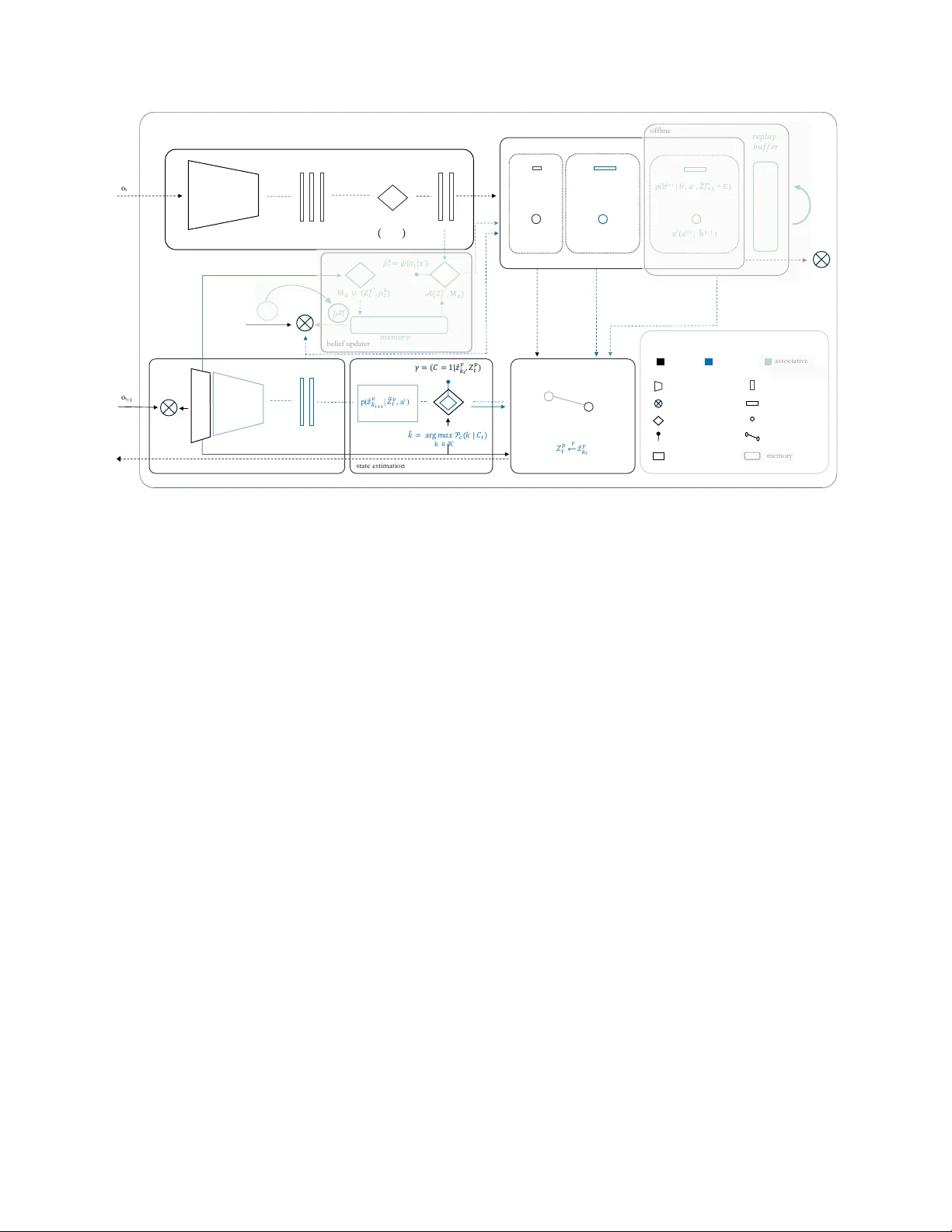

Alma Lago †,‡
† Cajal Neuroscience Center (CNC), CSIC
‡ Department of Computer Science and Artificial Intelligence, University of the Basque Country (UPV/EHU)
alma.lago@csic.es

Abstract

Mechanistic interpretability has transformed the analysis of transformer circuits by decomposing model behavior into competing algorithms, identifying phase transitions during training, and deriving closed-form predictions for when and why strategies shift. However, this program has remained largely confined to sequence-prediction architectures, leaving embodied control systems without comparable mechanistic accounts. Here we extend this framework to sensorimotor-cognitive development, using infant motor learning as a model system. We show that foundational inductive biases give rise to causal control circuits, with learned gating mechanisms converging toward theoretically motivated uncertainty thresholds. The resulting dynamics reveal a clean phase transition in the arbitration gate whose commitment behavior is well described by a closed-form exponential moving-average surrogate. We identify context window k as the critical parameter governing circuit formation: below a minimum threshold (k ≤ 4) the arbitration mechanism cannot form; above it (k ≥ 8), gate confidence scales asymptotically as log k. A two-dimensional phase diagram further reveals task-demand-dependent route arbitration consistent with the prediction that prospective execution becomes advantageous only when prediction error remains within the task tolerance window. Together, these results provide a mechanistic account of how reactive and prospective control strategies emerge and compete during learning. More broadly, this work sharpens mechanistic accounts of cognitive development and provides principled guidance for the design of interpretable embodied agents.
Keywords: agency; control; embodied AI; cognitive development; inductive biases; mechanistic interpretability

Introduction

"The issue is not complexity but control" (Michael Tomasello [1])

Living organisms exhibit an extraordinary capacity to adapt. This suggests that the ingredients of behavioral control are deeply rooted in life’s evolutionary fabric [2, 3]. By contrast, embodied artificial systems grow in sophistication [4] but lack the resilience and context-sensitivity of biological ones [5, 6]. Understanding how such control emerges and scales remains a central challenge [1, 7–11].

In humans, unlike precocial species [12], control unfolds over an unusually extended developmental window [13]. This prolonged trajectory is well documented across developmental psychology and neuroscience [14], offering a natural opportunity to isolate the computational building blocks of control as they consolidate over time. Like other vertebrates, human infants possess hardwired sensorimotor organization reflecting foundational inductive biases implemented through conserved subcortical circuits [15]. These built-in structures constrain early behavior, while more flexible control strategies emerge through learning. Understanding how such strategies arise from shared architectural constraints requires analytical tools capable of decomposing learned behavior into interpretable computational primitives.

Mechanistic interpretability has transformed understanding of transformer circuits by decomposing model behavior into competing algorithms, identifying phase transitions across training, and deriving closed-form predictions of when and why strategies shift [16–19]. This program has remained largely confined to sequence-prediction architectures, leaving embodied control systems without comparable mechanistic accounts. Here we extend it to sensorimotor–cognitive development, using infant motor learning as a model system.
We show that foundational inductive biases give rise to causal control circuits, with learned gating mechanisms converging toward theoretically motivated uncertainty thresholds. The resulting phase diagrams provide a mechanistic account of how reactive and prospective control strategies emerge during learning, extending this program beyond attention circuits to modular embodied control architectures.

[Figure 1 image. Panel titles: 1. pre-motor causal learning; 2. sensorimotor mastery; 3. autonomous behavior. Legend: M = motor, S = sensory, C = control, G = goal/desire, h = event codes, b = belief. Panel (d): developmental timeline (approximate), birth to 21 months, with a transition zone; axes labeled "temporal & hierarchical depth" and "degree of agency".]

Figure 1: A parsimonious computational model of infant sensorimotor–cognitive development. The architecture unfolds through three consolidation routes: (a) pre-motor causal learning, where causal representations (h∗) form early, even as motor commands are issued in a weakly supervised loop; (b) sensorimotor mastery, where a transition function (T) enables coherent sensory integration and prediction-based control; and (c) autonomous behavior, where previously learned components consolidate into self-regulated behavior, with belief (b) tracking internal goals. All stages share the same basic modules, sensory (S), control (C), and motor (M), but differ in representational depth and internal coordination. These correspond to reactive (black), prospective (blue), and associative (green) routes shown below.
(d) Developmental timeline maps architectural transitions to infant motor milestones, alongside the emergence of three control modes. The nervous system shifts from predominantly afferent processing (birth to ≈ 2 months, sensory input shapes behavior) through a transition zone (≈ 2–4 months) to predominantly efferent processing (≈ 4 months onward, goal-directed action).

A mechanistic model of infant sensorimotor–cognitive development

The unique developmental trajectory of the human brain offers a window into how agentive control emerges. Unlike precocial species [12], humans delay the maturation of core neural systems, preserving plasticity and prolonging the co-development of sensorimotor and cognitive structures [13, 20, 21]. This extended timeline enables a gradual layering of control mechanisms, which eventually consolidate into self-regulated, goal-directed behavior [22, 23].

This layering is dominated by subcortical loops, evolutionarily conserved structures that provide stable control before cortical systems mature. The reactive and prospective routes formalized here are implemented by this subcortical machinery, conserved across vertebrates [24] and predating neocortical specialization. These circuits drive goal-directed behavior from early development. Their dynamics support the shift toward more flexible cortical control, and their efficiency predicts later cognitive outcomes [25]. The associative route recruits cortical structures, though not necessarily the six-layered neocortex unique to mammals, and is deferred to future work.

Traditionally, infant development has been framed in terms of discrete stages, beginning with Piaget’s sensorimotor period, where intelligence emerges through action [26, 27]. More recent agency-based accounts have reframed early infancy as a phase of goal-directed control from the outset [28], emphasizing the structured nature of infant interaction.
Building on and enriching these views, we propose a parsimonious developmental computational trajectory in which three consolidation routes jointly support the emergence of minimal agentive organization (Fig. 1a–c). Each route introduces specific control mechanisms that stabilize over time. These routes are neither strictly sequential nor mutually exclusive; they likely co-exist and compete throughout development, with dominance shifting based on reliability and contextual demands [29, 30].

Early behavior is predominantly reactive [31]. Infants issue motor commands and receive fragile feedback, initiating a basic sensorimotor loop. Through repetition, they begin to link motor acts with immediate outcomes, forging primitive action–effect codes [32] on the scaffold of an emerging body schema [33]. At this stage, control is unstable, but the system begins to stabilize action selection through repeated contingencies [34–38]. Contingency learning, such as actions producing reinforcing effects [39, 40], underlies the extraction of causal structure [41]. Action-effect anticipation, however, follows a distinct trajectory. Infants can predict sensory outcomes before those associations are reliably used for action selection, revealing a developmental dissociation between prediction and control [42] (Fig. 1a).

With experience, causal regularities are accumulated and internalized as a contingency evidence bundle, a compact internal structure mapping actions to expected outcomes. The bundle gives rise to the operation of a transition function, supporting internal simulation of action outcomes from self-induced sensorimotor contingencies. This marks the onset of short-horizon predictive control. The infant can now anticipate not just immediate effects, but sequences of events over time [43–45].
Importantly, this is not planning in the full sense, but a consequence of deeper temporal structure in predictive modeling, supported by the ability to internally simulate transitions within discrete episodes [46–49]. The system shifts from reactive responses to prospective control, gradually acquiring a self-causal model of unfolding interaction dynamics (Fig. 1b).

As the internal transition function becomes robust, learned patterns are consolidated through offline processes [50]. Infant sleep appears to support this transition, with changes in sleep architecture reflecting the emergence of new motor skills through altered movement dynamics and sleep oscillations [51–56]. These consolidation processes help reorganize recent experiences and promote generalization across stimuli and contexts [57–61]. Learned policies can thus be applied flexibly in novel situations. By the end of the first year, infants adapt actions to previously unseen objects from familiar categories, indicating a reuse of value-guided strategies beyond exact exemplars [62]. This rapid transfer is likely mediated by stimulus–stimulus (S–S) associations [63], which, upon the inference of a novel, stable goal, trigger a distributed decision mechanism for policy retrieval, affordance estimation, and goal commitment or inhibition.

The agent begins to regulate its own behavior, a transition defined by the capacity for selective decision-making [28, 64]. This self-regulation involves selecting goals, redirecting strategies, and operating independently of immediate feedback, thereby marking the emergence of minimal autonomy [65]. Through associative learning, the agent not only predicts outcomes but organizes behavior around internally inferred goals (Fig. 1c). The consequences of these self-directed choices are subsequently refined through interaction with the environment, reinforcing relevant strategies and updating internal beliefs.
Over time, repeated goal–action–outcome cycles are consolidated into stable memory structures, enabling more abstract generalization and supporting emerging causal understanding [66–69].

The overall arc of this process is reflected in a developmental timeline (Fig. 1d) that maps architectural transitions to infant motor milestones, alongside the emergence of three control modes. The nervous system shifts from predominantly afferent processing (birth–≈ 2 months), where sensory feedback from spontaneous motor exploration sculpts developing circuits through bottom-up signals [70, 71], through a transition zone (≈ 2–4 months) as network organization shifts and activity sparsifies, to predominantly efferent processing (≈ 4 months onward), where maturing subcortical machinery enables goal-directed control.

Methods

Experimental paradigm

We instantiate a minimal closed-loop environment with two dots. One represents a visible goal; the other, a self-controlled cursor. These are linked through a hidden actuator, a two-joint arm sensed only via proprioception, as described in Fig. 2. Each time the goal resets, the system assumes no external dynamics beyond those produced by its own actions. This formulation defines an interactive environment of minimal complexity, permitting mechanistic analysis of the underlying control circuitry.

Task demand parameterizes the precision–efficiency trade-off by jointly varying the tolerance radius (acceptable positional error at goal) and the goal distance. Tighter tolerance and shorter distance increase precision requirements while reducing the trajectory length available for error correction, and vice versa. Together with training duration, this defines a two-dimensional experimental space spanning learning progress and task precision. Geometric intuition for tolerance radius and trajectory structure, together with implementation details, is provided in Appendix A.
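To make the setup concrete, the following is a minimal sketch of the environment geometry, not the implementation used in this work (that is specified in Appendix A). It assumes a planar two-joint arm whose end-effector is projected onto the horizontal axis to give the cursor position, and a linear mapping from a scalar task demand in [0, 1] to tolerance radius and goal distance. The function names, link lengths, and numeric ranges are all illustrative assumptions.

```python
import math


def cursor_x(theta1, theta2, l1=1.0, l2=1.0):
    """Horizontal end-effector position of a planar two-joint arm.

    theta1 is the first joint angle and theta2 the second joint angle
    measured relative to the first link (radians). Link lengths l1 and l2
    are assumed values, not taken from the paper.
    """
    return l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)


def task_params(demand, tol_range=(0.20, 0.02), dist_range=(1.0, 0.2)):
    """Map a task demand in [0, 1] to (tolerance radius, goal distance).

    Higher demand gives a tighter tolerance and a shorter distance, as
    described in the text. The linear interpolation and both ranges are
    hypothetical choices made for illustration.
    """
    if not 0.0 <= demand <= 1.0:
        raise ValueError("demand must lie in [0, 1]")
    tol = (1.0 - demand) * tol_range[0] + demand * tol_range[1]
    dist = (1.0 - demand) * dist_range[0] + demand * dist_range[1]
    return tol, dist


def goal_reached(cursor, goal, tolerance):
    """True when the cursor lies within the tolerance radius of the goal."""
    return abs(cursor - goal) <= tolerance


if __name__ == "__main__":
    tol, dist = task_params(0.5)
    x = cursor_x(math.pi / 3, -math.pi / 6)
    print(f"tolerance={tol:.3f}, distance={dist:.3f}, cursor_x={x:.3f}")
    print("reached:", goal_reached(x, goal=x + 0.5 * tol, tolerance=tol))
```

Because the agent observes only the cursor and goal, the joint angles passed to `cursor_x` stand in for the hidden actuator state that it must infer through proprioception.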
Design rationale

The goal position is randomized on every trial to eliminate spatial predictability, preventing learning biases associated with fixed targets and encouraging the agent to develop generalized control strategies. Movement is restricted to a horizontal line. The mapping between the agent’s motor commands and the cursor is indirect, depending on a hidden actuator chain. The agent must first infer and master an internal model of its own body before solving the external task.

[Figure 2 image. Labels: end-effector, actuator, elbow joint (hidden); cursor, goal (observable); horizontal axis x.]

Figure 2: Experimental interface. The protocol is framed as a continuous control problem. The observable scene (goal and cursor) and hidden actuation (elbow joint) enforce a decoupled perception–action loop. The agent drives its motor command to reduce the gap between goal and cursor over time. The goal resets to a new random position on every trial. Task demand jointly modulates target distance and tolerance radius. Varying this parameter alongside training duration allows exploration of distinct control regimes (visualized in Appendix A).

[Figure 3 image. Recoverable labels: object-centric perception, body schema, visual decoder, control unit, state estimation, belief updater; offline and online pathways. Graphic primitives legend: encoder/decoder, error, decision gate, transition [Δ], slot, event, motor command, graph memory. Routes: reactive, prospective, associative.]

Figure 3: MAIA overview. Three consolidation routes organize control: reactive (black), prospective (blue), and associative (green, deferred). As learning progresses, uncertainty gates arbitrate route transitions based on accumulated confidence. Foundational inductive biases (object-centric perception, event-based representations, body schema) provide structural constraints that enable interpretable control pathways.

Cognitive architecture

MAIA (Minimal Agentic Inference Architecture) is a modular control architecture organized into three consolidation routes (Fig. 3). Behavior emerges from the interplay of top-down predictions and bottom-up feedback, with each route adding temporal and hierarchical depth to the agent’s control repertoire.

Route transitions are arbitrated by uncertainty-gated attention mechanisms. The contingency gate integrates prediction error over a temporal context window of k steps, producing a confidence signal that governs the transition from reactive to prospective control. Slot commitment is enforced via temperature-scaled softmax at temperature τ. The affordability gate mediates the transition to allostatic control based on similarity to stored goal representations. Both gates are implemented as two-layer attention modules; full specifications are provided in Appendix B.

Foundational inductive biases, including object-centric perception, event-based representations, and relational body schema, constrain the hypothesis space and provide anchor points for control, enabling tractable learning from high-dimensional input and causally traceable control pathways [72].
These design choices follow principles of parsimony and transparency, favoring interpretable structure over post hoc explanation [73, 74].

Results

The contingency gate must solve two sequential problems: first, identify which visual slot is under control (controllability); second, determine when to use prospective versus reactive control (arbitration). We evaluate these through complementary experiments.

Gate learning dynamics. We examined whether the gate learns controllability by measuring temporal commitment dynamics at fixed task demand across context windows k ∈ {1, 2, 4, 8, 16, 32, 64, 128}. During warm exploration (τ = 10), confidence remains at chance (0.5) across all k. Following temperature collapse (τ → 0.001), commitment emerges with dynamics well approximated by an exponential moving average:

$$c(t) = c_\infty - (c_\infty - 0.5)\, e^{-t/k}.$$

The quality of this approximation varies with k. Window size k = 32 exhibits both the tightest EMA fit and the clearest two-phase structure, coinciding with optimal arbitration performance (∆ = 0.192, Figure 4). For smaller k, commitment fails to stabilize; for larger k, dynamics saturate without qualitative change. This regime-dependent structure is analogous to phase transitions during induction head formation [75–77].

Context window governs arbitration emergence. Having established that the gate learns controllability, we next asked whether context window also governs task-demand arbitration. Phase diagrams across k ∈ {1, 2, 4, 8, 16, 32} reveal a sharp threshold (Figure 4): for k ≤ 4, no arbitration structure forms (∆ < 0.01); at k = 8 structure emerges weakly; at k = 32 the phase diagram is fully resolved (∆ = 0.192), with prospective control dominating at low task demand and reactive control at high demand. This threshold aligns with the theoretical prediction: the gate requires $k \geq K_{\text{steps}} \approx 10$ to represent the full prospective trajectory.
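The two ingredients of the commitment dynamics described above can be illustrated with a short sketch. It is not the gate itself (the gate is a learned two-layer attention module specified in Appendix B); it only shows, under stated assumptions, how a temperature-scaled softmax over two slots sits near chance at τ = 10 and commits at τ = 0.001, and how the closed-form surrogate c(t) compares with a recursive EMA using α = 1/k. The slot scores, the asymptote `c_inf = 0.9`, and all function names are hypothetical.

```python
import math


def softmax(scores, tau):
    """Temperature-scaled softmax, stabilized by subtracting the max logit."""
    logits = [s / tau for s in scores]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ema_surrogate(t, k, c_inf, c0=0.5):
    """Closed-form surrogate c(t) = c_inf - (c_inf - c0) * exp(-t / k)."""
    return c_inf - (c_inf - c0) * math.exp(-t / k)


def ema_recursive(steps, k, c_inf, c0=0.5):
    """Discrete EMA with alpha = 1/k driven toward a constant target c_inf."""
    alpha = 1.0 / k
    c = c0
    trajectory = [c]
    for _ in range(steps):
        c = (1.0 - alpha) * c + alpha * c_inf
        trajectory.append(c)
    return trajectory


if __name__ == "__main__":
    scores = [1.0, 0.0]  # hypothetical evidence for two candidate slots
    print("warm (tau=10):   ", softmax(scores, tau=10.0))
    print("cold (tau=0.001):", softmax(scores, tau=0.001))

    k, c_inf = 32, 0.9  # c_inf is an illustrative asymptote, not a reported value
    traj = ema_recursive(200, k, c_inf)
    gap = max(abs(c - ema_surrogate(t, k, c_inf)) for t, c in enumerate(traj))
    print(f"max |recursive - closed form| over 200 steps: {gap:.4f}")
```

The recursive form decays as (1 - 1/k)^t while the surrogate decays as e^{-t/k}, so the two agree closely for moderate and large k and diverge for very small windows.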
[Figure 4 image. Legend: reactive, prospective.]

Figure 4: Context window threshold governs arbitration emergence. Phase diagrams across k ∈ {1, 2, 4, 8, 16, 32} show gate confidence over training epochs (x-axis) and task demand (y-axis). For k ≤ 4, no arbitration structure forms; gates remain at chance across all conditions (white/gray). At k = 8 structure begins to emerge; at k = 32 the phase diagram is fully resolved, with prospective control (blue) dominating at low task demand and reactive control (gray) at high demand. This progression reveals k as the critical architectural parameter governing circuit formation, consistent with the theoretical threshold $k \geq K_{\text{steps}} \approx 10$.

Figure 5: Gate learning dynamics and EMA structure. Confidence trajectories across context windows k (solid lines) are compared to exponential moving average predictions (dashed lines). During warm exploration, confidence remains at chance (0.5) across all k. Following temperature collapse (τ → 0.001), commitment emerges with k-dependent dynamics; agreement with the EMA surrogate is strongest at k = 32 (⋆, bold), where a clear two-phase structure emerges. Beyond this regime, asymptotic confidence saturates (∆ ≈ 0.198 for k ∈ {64, 128, 256}; see Appendix C), consistent with diminishing returns from additional context.

EMA as diagnostic. The EMA captures the temporal structure of commitment dynamics, providing a useful diagnostic of circuit formation. However, when used as a replacement for the gate (fixed EMA; α = 1/k), it fails to produce any task-demand-dependent structure, remaining at chance (0.50 ± 0.001; ∆ = 0.0006). The resulting phase diagram is uniform, indicating that while the EMA captures temporal integration, it lacks the state-dependent mechanism required for arbitration. Thus, the EMA characterizes the dynamics but does not implement the function.

Discussion

Goal-directed behavior originates in the regulation of organismic needs [78].
Physiological drives arise from homeostatic imbalances and motivate actions that restore internal stability [79]. Effective control, however, requires more than immediate feedback: the agent must learn the causal consequences of its actions and regulate behavior with respect to anticipated outcomes [78, 80]. These capabilities enable prospective evaluation and allostatic control [81], allowing behavior to be guided by internally generated goals rather than fixed stimulus–response patterns. These requirements distinguish goal-directed control from habitual or reflexive action [82] and motivate a control-theoretic decomposition of the minimal organizational requirements for agency. Figure 6 situates the present work within this decomposition.

Conclusion

We extended the mechanistic interpretability program to embodied control architectures using infant motor learning as a model system. Two sequential results establish the mechanism. First, the gate learns slot controllability, with commitment dynamics well approximated by an EMA (a closed-form surrogate which, as the ablation confirms, cannot itself arbitrate), and with context window k governing the rate and quality of commitment. Second, the same gate, when trained with task-demand variation, learns to arbitrate between prospective and reactive control, a result not implied by the first. Context window k, not network depth, is the critical architectural parameter: for k ≤ 4 no arbitration forms; for k ≥ 8 arbitration structure emerges, and by k = 32 the phase diagram is fully resolved, with the threshold analytically predicted by the prospective execution horizon $K_{\text{steps}} \approx 10$. These results establish phase diagrams as diagnostic tools for developmental control systems and connect mechanistic interpretability to embodied agency, sharpening mechanistic accounts of cognitive development and providing principled guidance for interpretable embodied agent design.
Acknowledgements

This research has been partially funded by the DEEPSELF project (467045002 DFG SPP The Active Self). Portions of the code were developed with assistance from Claude (Anthropic). All scientific content, analysis, and conclusions are the authors’ own.

[Figure 6 image. Layers: simulation layer, decision layer, regulatory layer, representation layer (categories & values). Labels: Symbolic (semantic labeling, narrative synthesis, formal reasoning); Executive (non-linguistic planning, problem-solving, working memory); Agentic (allostasis, self-regulated policy retrieval, inhibitory control (GO/NO-GO)); Physiological (homeostasis, universal regulatory process to stay alive).]

Figure 6: A control-theoretic decomposition of goal-directed behavior. Three functional layers organize embodied control: the regulatory layer, the decision layer, and the simulation layer. Orthogonal to this hierarchy, the symbolic system grounds implicit agentic categories into explicit representations, enabling semantic labeling, narrative synthesis, and formal reasoning. This work specifies the mechanistic foundations of the reactive and prospective routes within the decision layer. The associative route and its interface with the symbolic system are deferred to future work.

References

[1] Michael Tomasello. The evolution of agency: Behavioral organization from lizards to humans. MIT Press, 2022.
[2] Pamela Lyon, Fred Keijzer, Detlev Arendt, and Michael Levin. Reframing cognition: getting down to biological basics, 2021.
[3] Paul Cisek. Evolution of behavioural control from chordates to primates. Philosophical Transactions of the Royal Society B, 377(1844):20200522, 2022.
[4] Anthony Zador, Sean Escola, Blake Richards, Bence Ölveczky, Yoshua Bengio, Kwabena Boahen, Matthew Botvinick, Dmitri Chklovskii, Anne Churchland, Claudia Clopath, et al. Catalyzing next-generation artificial intelligence through neuroai.
Nature communications, 14(1):1597, 2023.
[5] Josh Bongard, Victor Zykov, and Hod Lipson. Resilient machines through continuous self-modeling. Science, 314(5802):1118–1121, 2006.
[6] Sam Kriegman, Douglas Blackiston, Michael Levin, and Josh Bongard. A scalable pipeline for designing reconfigurable organisms. Proceedings of the National Academy of Sciences, 117(4):1853–1859, 2020.
[7] Tony J Prescott, Peter Redgrave, and Kevin Gurney. Layered control architectures in robots and vertebrates. Adaptive Behavior, 7(1):99–127, 1999.
[8] Stuart P Wilson and Tony J Prescott. Scaffolding layered control architectures through constraint closure: insights into brain evolution and development. Philosophical Transactions of the Royal Society B: Biological Sciences, 377(1844), 2022.
[9] Tony J Prescott and Alejandro Jimenez-Rodriguez. Understanding the layered brain architecture for motivation: dynamical systems, computational neuroscience, and robotic approaches. In Psychology of Learning and Motivation, volume 82, pages 45–96. Elsevier, 2025.
[10] Giovanni Pezzulo, Thomas Parr, and Karl Friston. The evolution of brain architectures for predictive coding and active inference. Philosophical Transactions of the Royal Society B, 377(1844):20200531, 2022.
[11] Paul Cisek and Benjamin Y Hayden. Neuroscience needs evolution, 2022.
[12] Birgit Szabo, Daniel W A Noble, Richard W Byrne, David S Tait, and Martin J Whiting. Precocial juvenile lizards show adult level learning and behavioural flexibility. Animal Behaviour, 154:75–84, 2019.
[13] Mehmet Somel, Henriette Franz, Zheng Yan, Anna Lorenc, Song Guo, Thomas Giger, Janet Kelso, Birgit Nickel, Michael Dannemann, Sabine Bahn, et al. Transcriptional neoteny in the human brain. Proceedings of the National Academy of Sciences, 106(14):5743–5748, 2009.
[14] Philippe Rochat. The infant’s world. Harvard University Press, 2009.
[15] Sten Grillner and Brita Robertson.
The basal ganglia over 500 million years. Current Biology, 26(20):R1088–R1100, 2016.
[16] Nelson Elhage, Neel Nanda, Catherine Olsson, Tom Henighan, Nicholas Joseph, Ben Mann, Amanda Askell, Yuntao Bai, Anna Chen, Tom Conerly, et al. A mathematical framework for transformer circuits. Transformer Circuits Thread, 1(1):12, 2021.
[17] Chris Olah, Nick Cammarata, Ludwig Schubert, Gabriel Goh, Michael Petrov, and Shan Carter. Zoom in: An introduction to circuits. Distill, 5(3):e00024–001, 2020.
[18] Core Francisco Park, Ekdeep Singh Lubana, Itamar Pres, and Hidenori Tanaka. Competition dynamics shape algorithmic phases of in-context learning. arXiv preprint arXiv:2412.01003, 2024.
[19] Daniel Wurgaft, Ekdeep Singh Lubana, Core Francisco Park, Hidenori Tanaka, Gautam Reddy, and Noah D Goodman. In-context learning strategies emerge rationally. arXiv preprint, 2025.
[20] Aida Gómez-Robles and Chet C Sherwood. Human brain evolution how the increase of brain plasticity made us a cultural species. MÉTODE Science Studies Journal, (7):35–43, 2017.
[21] Sabina Kanton, Michael James Boyle, Zhisong He, Malgorzata Santel, Anne Weigert, Fátima Sanchís-Calleja, Patricia Guijarro, Leila Sidow, Jonas Simon Fleck, Dingding Han, et al. Organoid single-cell genomic atlas uncovers human-specific features of brain development. Nature, 574(7778):418–422, 2019.
[22] Yuko Munakata, Hannah R Snyder, and Christopher H Chatham. Developing cognitive control: Three key transitions. Current directions in psychological science, 21(2):71–77, 2012.
[23] Karen E Adolph and John M Franchak. The development of motor behavior. Wiley Interdisciplinary Reviews: Cognitive Science, 8(1-2):e1430, 2017.
[24] Paul Cisek. Resynthesizing behavior through phylogenetic refinement. Attention, perception, & psychophysics, 81(7):2265–2287, 2019.
[25] Huili Sun, Rongtao Jiang, Wei Dai, Alexander J Dufford, Stephanie Noble, Marisa N Spann, Shi Gu, and Dustin Scheinost.
Network controllability of structural connectomes in the neonatal brain. Nature communications, 14(1):5820, 2023.
[26] Jean Piaget, Margaret Cook, et al. The origins of intelligence in children, volume 8. International universities press New York, 1952.
[27] Jean Piaget. The psychology of intelligence. Routledge, 2005.
[28] Michael Tomasello. Agency and Cognitive Development. Oxford University Press, 2024.
[29] Nathaniel D Daw, Yael Niv, and Peter Dayan. Uncertainty-based competition between prefrontal and dorsolateral striatal systems for behavioral control. Nature neuroscience, 8(12):1704–1711, 2005.
[30] Samuel J Gershman. 17 reinforcement learning and causal models. The Oxford handbook of causal reasoning, page 295, 2017.
[31] Esther Thelen, Daniela Corbetta, Kathi Kamm, John P Spencer, Klaus Schneider, and Ronald F Zernicke. The transition to reaching: Mapping intention and intrinsic dynamics. Child development, 64(4):1058–1098, 1993.
[32] Bernhard Hommel, Jochen Müsseler, Gisa Aschersleben, and Wolfgang Prinz. The theory of event coding (tec): A framework for perception and action planning. Behavioral and brain sciences, 24(5):849–878, 2001.
[33] Shaun Gallagher. How the body shapes the mind. Clarendon press, 2006.
[34] Jürgen Konczak and Johannes Dichgans. The development toward stereotypic arm kinematics during reaching in the first 3 years of life. Experimental brain research, 117(2):346–354, 1997.
[35] Sheila Schneiberg, Heidi Sveistrup, Bradford McFadyen, Patricia McKinley, and Mindy F Levin. The development of coordination for reach-to-grasp movements in children. Experimental Brain Research, 146(2):142–154, 2002.
[36] Neil E Berthier and Rachel Keen. Development of reaching in infancy. Experimental brain research, 169(4):507–518, 2006.
[37] HM Lee, A Bhat, JP Scholz, and JC Galloway. Toy-oriented changes during early arm movements: Iv: shoulder–elbow coordination.
Infant Behavior and Development, 31(3):447–469, 2008.
[38] Mei-Hua Lee, Rajiv Ranganathan, and Karl M Newell. Changes in object-oriented arm movements that precede the transition to goal-directed reaching in infancy. Developmental Psychobiology, 53(7):685–693, 2011.
[39] Carolyn Kent Rovee and David T Rovee. Conjugate reinforcement of infant exploratory behavior. Journal of experimental child psychology, 8(1):33–39, 1969.
[40] Hama Watanabe and Gentaro Taga. General to specific development of movement patterns and memory for contingency between actions and events in young infants. Infant Behavior and Development, 29(3):402–422, 2006.
[41] Andrew N Meltzoff. Infants’ causal learning: Intervention, observation, imitation. 2007.
[42] Stephan A Verschoor, Michiel Spapé, Szilvia Biro, and Bernhard Hommel. From outcome prediction to action selection: developmental change in the role of action–effect bindings. Developmental Science, 16(6):801–814, 2013.
[43] Claes von Hofsten and Karin Lindhagen. Observations on the development of reaching for moving objects. Journal of experimental child psychology, 28(1):158–173, 1979.
[44] Claes von Hofsten. Catching skills in infancy. Journal of Experimental Psychology: Human Perception and Performance, 9(1):75, 1983.
[45] Jacqueline Fagard, Elizabeth Spelke, and Claes von Hofsten. Reaching and grasping a moving object in 6-, 8-, and 10-month-old infants: Laterality and performance. Infant Behavior and Development, 32(2):137–146, 2009.
[46] Jacqueline Fagard. Linked proximal and distal changes in the reaching behavior of 5-to 12-month-old human infants grasping objects of different sizes. Infant Behavior and Development, 23(3-4):317–329, 2000.
[47] David C Witherington. The development of prospective grasping control between 5 and 7 months: A longitudinal study. Infancy, 7(2):143–161, 2005.
[48] Tracy M Barrett, Emily Traupman, and Amy Needham.
Infants’ visual anticipation of object structure in grasp planning. Infant Behavior and Development , 31(1):1–9, 2008. [49] Claes von Hofsten and Katarina Johansson. Planning to reach for a rotating rod: Dev elopmental aspects. Zeitschrift für Entwicklungspsyc hologie und pädagogisc he Psychologie , 41(4):207–213, 2009. [50] Sangyoon Y K o, Y iming Rong, Adam I Ramsaran, Xiaoyu Chen, Asim J Rashid, Andre w J Mocle, Jagroop Dhaliwal, Ankit A wasthi, Axel Guskjolen, Sheena A Josselyn, et al. Systems consolidation reorganizes hippocampal engram circuitry . Nature , pages 1–9, 2025. [51] Anat Scher and Dina Cohen. V . sleep as a mirror of de velopmental transitions in inf ancy: The case of crawling. Monographs of the Society for Researc h in Child Development , 80(1):70–88, 2015. [52] Osnat Atun-Einy and Anat Scher . Sleep disruption and motor dev elopment: Does pulling-to-stand impacts sleep–wak e regulation? Infant behavior and development , 42:36–44, 2016. [53] Manuela Friedrich, Matthias Mölle, Angela D Friederici, and Jan Born. The reciprocal relation between sleep and memory in infancy: Memory-dependent adjustment of sleep spindles and spindle-dependent impro vement of memories. Developmental Science , 22(2):e12743, 2019. [54] Elliott Gray Johnson, Janani Prabhakar , Lindsey N Mooney , and Simona Ghetti. Neuroimaging the sleeping brain: Insight on memory functioning in infants and toddlers. Infant Behavior and Development , 58:101427, 2020. [55] Anna-Liisa Satomaa, Tiina Mäk elä, Outi Saarenpää-Heikkilä, Anneli Kylliäinen, Eero Huupponen, and Sari-Leena Himanen. Slow-w ave activity and sigma acti vities are associated with psychomotor dev elopment at 8 months of age. Sleep , 43(9):zsaa061, 2020. [56] Aaron DeMasi, Melissa N Hor ger, Anat Scher , and Sarah E Berger . Infant motor development predicts the dynamics of mov ement during sleep. Infancy , 28(2):367–387, 2023. [57] Amanda R T arullo, Peter D Balsam, and William P Fifer . Sleep and infant learning. 
Infant and child development , 20(1):35–46, 2011. [58] Manuela Friedrich, Ines W ilhelm, Jan Born, and Angela D Friederici. Generalization of word meanings during infant sleep. Natur e communications , 6(1):6004, 2015. [59] Sabine Seehagen, Carolin Konrad, Jane S Herbert, and Silvia Schneider . T imely sleep facilitates declarative memory consolidation in infants. Pr oceedings of the National Academy of Sciences , 112(5):1625–1629, 2015. [60] M Friedrich, M Mölle, AD Friederici, and J Born. Sleep-dependent memory consolidation in inf ants protects new episodic memories from existing semantic memories. nat. commun. 11, 1298, 2020. [61] Zhilin Peng, Ruoying Zheng, Xiaoqing Hu, and Dandan Zhang. The role of sleep in consolidating memory of learning in infants and toddlers. Advances in Psychological Science , 2024. [62] Clay Mash, Marc H Bornstein, and Abhilasha Banerjee. Development of object control in the first year: Emerging cate gory discrimination and generalization in infants’ adapti ve selection of action. Developmental psycholo gy , 50(2):325, 2014. [63] Robert Rescorla. P avlovian second-order conditioning (psychology re vivals): studies in associative learning . Psychology Press, 2014. [64] Adele Diamond. Developmental time course in human infants and inf ant monkeys, and the neural bases of, inhibitory control in reaching a. Annals of the New Y ork Academy of Sciences , 608(1):637–676, 1990. [65] Richard M Ryan and Edw ard L Deci. Intrinsic and e xtrinsic motiv ations: Classic definitions and ne w directions. Contempor ary educational psychology , 25(1):54–67, 2000. [66] Charles A Nelson. The neurobiological basis of early memory dev elopment. The development of memory in childhood , pages 41–82, 1997. [67] Charles A Nelson. The nature of early memory . Pr eventive medicine , 27(2):172–179, 1998. [68] Harlene Hayne, Rachel Barr , and Jane Herbert. The effect of prior practice on memory reacti vation and generalization. 
Child Development , 74(6):1615–1627, 2003. [69] Lillian Behm, Nicholas B T urk-Browne, and Melissa M Kibbe. The ubiquity of episodic-like memory during infancy . T r ends in Cognitive Sciences , 2025. [70] Mijna Hadders-Algra. Early human motor dev elopment: From variation to the ability to vary and adapt. Neur oscience & Biobehavioral Reviews , 90:411–427, 2018. [71] Mijna Hadders-Algra. Neural substrate and clinical significance of general movements: an update. Developmental Medicine & Child Neur ology , 60(1):39–46, 2018. [72] Anirudh Goyal and Y oshua Bengio. Inductive biases for deep learning of higher -level cognition. Pr oceedings of the Royal Society A , 478(2266):20210068, 2022. 8 [73] Cynthia Rudin. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Natur e machine intelligence , 1(5):206–215, 2019. [74] Leonard Bereska and Efstratios Gavv es. Mechanistic interpretability for ai safety–a revie w . arXiv preprint , 2024. [75] Catherine Olsson, Nelson Elhage, Neel Nanda, Nicholas Joseph, Nova DasSarma, T om Henighan, Ben Mann, Amanda Askell, Y untao Bai, Anna Chen, et al. In-context learning and induction heads. arXiv pr eprint arXiv:2209.11895 , 2022. [76] Ezra Edelman, Nikolaos Tsili vis, Benjamin L Edelman, Eran Malach, and Surbhi Goel. The ev olution of statistical induction heads: In-context learning marko v chains. Advances in neural information pr ocessing systems , 37:64273–64311, 2024. [77] Sara Kangaslahti, Elan Rosenfeld, and Naomi Saphra. Hidden breakthroughs in language model training. arXiv pr eprint arXiv:2506.15872 , 2025. [78] Bernard W Balleine and Anthony Dickinson. Goal-directed instrumental action: contingency and incenti ve learning and their cortical substrates. Neur opharmacology , 37(4-5):407–419, 1998. [79] Hans-Rudolf Berthoud. Mind versus metabolism in the control of food intak e and energy balance. Physiology & behavior , 81(5):781–793, 2004. 
[80] Anthony Dickinson and Bernard Balleine. Motivational control of goal-directed action. Animal learning & behavior, 22(1):1–18, 1994.
[81] Peter Sterling. Allostasis: a model of predictive regulation. Physiology & behavior, 106(1):5–15, 2012.
[82] Paul FMJ Verschure, Cyriel MA Pennartz, and Giovanni Pezzulo. The why, what, where, when and how of goal-directed choice: neuronal and computational principles. Philosophical Transactions of the Royal Society B: Biological Sciences, 369(1655):20130483, 2014.
[83] Ruben S van Bergen and Pablo Lanillos. Object-based active inference. In International Workshop on Active Inference, pages 50–64. Springer, 2022.
[84] Ruben Van Bergen, Justus Hübotter, Alma Lago, and Pablo Lanillos. Object-centric proto-symbolic behavioural reasoning from pixels. Neural Networks, page 108407, 2025.
[85] Christian Gumbsch, Martin V Butz, and Georg Martius. Sparsely changing latent states for prediction and planning in partially observable domains. Advances in Neural Information Processing Systems, 34:17518–17531, 2021.

A Goal-tracking environment and task-demand parameterization

The environment is a minimal 1D cursor-to-goal tracking task rendered as a 64 × 64 RGB scene. The observation contains a red cursor and a white goal on a black background, with motion restricted to the horizontal axis. Actions are continuous scalar displacements applied to the cursor, while the goal remains fixed within each episode and is resampled only after successful arrival. An episode terminates when the cursor enters the arrival zone centered on the goal.
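The episode logic described above can be illustrated with a minimal sketch. This is not the released environment: the class name `CursorGoalEnv`, the single-pixel markers, the default arrival radius of 3 px, the uniform sampling over the full width, and the clamping of the cursor to the frame are all assumptions made for illustration. Only the 64 × 64 RGB scene, the colours, the scalar horizontal action, and termination on arrival come from the text.

```python
import random
from dataclasses import dataclass, field

WIDTH = HEIGHT = 64  # scene size stated in the text
RED, WHITE, BLACK = (255, 0, 0), (255, 255, 255), (0, 0, 0)


@dataclass
class CursorGoalEnv:
    """Hypothetical minimal 1D cursor-to-goal task (not the released code)."""

    tolerance: float = 3.0   # assumed arrival radius in px
    row: int = HEIGHT // 2   # assumed row on which both markers are drawn
    rng: random.Random = field(default_factory=lambda: random.Random(0))
    cursor_x: float = 0.0
    goal_x: float = 0.0

    def reset(self):
        # Resample until the cursor starts outside the arrival zone.
        while True:
            self.cursor_x = self.rng.uniform(0, WIDTH - 1)
            self.goal_x = self.rng.uniform(0, WIDTH - 1)
            if abs(self.cursor_x - self.goal_x) > self.tolerance:
                return self.render()

    def step(self, action):
        # Continuous scalar displacement along the horizontal axis only;
        # clamping to the frame is an assumption of this sketch.
        self.cursor_x = min(max(self.cursor_x + action, 0.0), WIDTH - 1.0)
        done = abs(self.cursor_x - self.goal_x) <= self.tolerance
        return self.render(), done

    def render(self):
        # Black background, white goal, red cursor (cursor drawn last).
        frame = [[BLACK] * WIDTH for _ in range(HEIGHT)]
        frame[self.row][round(self.goal_x)] = WHITE
        frame[self.row][round(self.cursor_x)] = RED
        return frame
```

A controller that always moves one pixel toward the goal terminates the episode once the cursor is within the arrival radius; the goal stays fixed until `reset()` is called again.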
Task-demand parameterization. The environment exposes a scalar task-demand parameter $d \in [0, 1]$, which jointly controls the initial cursor-to-goal distance and the arrival tolerance:

$$\text{goal distance} = d_{\max}(1 - d), \qquad \text{tolerance radius} = r_{\max}(1 - d),$$

where $d_{\max} = 28$ px (the minimum guaranteed $d_{\text{avail}}$ across all cursor positions; actual separation reaches up to 54 px) and $r_{\max} = 5$ px. Step velocity is coupled to tolerance radius as $v = \operatorname{clip}(r, 0.5, 3.0)$ px/step, ensuring precise arrival remains geometrically feasible across the full demand range.

Distance bands. Goal positions are sampled from three contiguous, non-overlapping distance bands anchored at $d \in \{0.0, 0.5, 1.0\}$, with linear interpolation between anchors:

| $d$ range | Distance band (px) | Regime |
|---|---|---|
| $[0.0, 0.5]$ | $[24, d_{\text{avail}}]$ | long reach, loose arrival |
| $0.5$ | $[11, 24]$ | intermediate regime |
| $[0.5, 1.0]$ | $[1, 11]$ | short reach, tight arrival |

where $d_{\text{avail}} = \max(c_x - g_{\min},\, g_{\max} - c_x)$ is the maximum available separation given current cursor position $c_x$ and goal boundary constraints $[g_{\min}, g_{\max}] = [6, 57]$ px. At low demand, the cursor traverses long distances with relatively predictable movement and coarse corrections suffice for success. At high demand, the goal is nearby but the tolerance is narrow, placing greater emphasis on precise final positioning and leaving less margin for corrective error. The parameterization thus defines a compact experimental space in which training duration and task demand can be varied independently.

Rendering and implementation details. The environment supports three velocity modes: bounded (actions clipped to $\pm v$), fixed (magnitude always $v$, sign from action), and free (unclipped). All experiments use bounded mode. The goal frame is pre-rendered and cached between resets; only the cursor is redrawn per step, keeping rendering lightweight and deterministic.
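As a rough illustration of the task-demand parameterization and the three velocity modes described above, the sketch below implements the stated formulas directly. The function names are hypothetical, the interpolation between distance bands is deliberately left out because the text does not fully specify it, and mapping a zero action to $+v$ in fixed mode is an assumption.

```python
import math

D_MAX = 28.0               # px, maximum goal distance stated in the text
R_MAX = 5.0                # px, maximum tolerance radius stated in the text
G_MIN, G_MAX = 6.0, 57.0   # px, goal boundary constraints stated in the text


def clip(x, lo, hi):
    """Clamp x to the closed interval [lo, hi]."""
    return max(lo, min(hi, x))


def task_params(d):
    """Map task demand d in [0, 1] to (goal distance, tolerance radius, velocity)."""
    if not 0.0 <= d <= 1.0:
        raise ValueError("task demand must lie in [0, 1]")
    distance = D_MAX * (1.0 - d)
    radius = R_MAX * (1.0 - d)
    velocity = clip(radius, 0.5, 3.0)  # v = clip(r, 0.5, 3.0) px/step
    return distance, radius, velocity


def available_separation(cursor_x):
    """d_avail = max(c_x - g_min, g_max - c_x)."""
    return max(cursor_x - G_MIN, G_MAX - cursor_x)


def apply_velocity_mode(action, v, mode="bounded"):
    """Turn a raw scalar action into a displacement under one velocity mode."""
    if mode == "bounded":
        return clip(action, -v, v)
    if mode == "fixed":
        # Assumption: an action of exactly 0 is treated as positive.
        return math.copysign(v, action)
    if mode == "free":
        return action
    raise ValueError(f"unknown velocity mode: {mode}")
```

For example, `task_params(0.5)` gives a 14 px reach, a 2.5 px tolerance radius, and a 2.5 px/step velocity, while `apply_velocity_mode(10.0, 2.5)` clips a large action to 2.5 px in bounded mode.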
Anti-aliased and sharp-pixel rendering modes are both supported; sharp pixels (cv2.LINE_8) are used in all reported experiments for exact pixel consistency. An interactive interface (env/interactive.py) allows keyboard control of the cursor and live adjustment of task demand via +/- keys. The interface displays the current arrival zone, distance to goal, step size, and task-demand level in real time.¹

Figure 7: Geometric intuition for the task-demand parameter. Representative configurations across increasing $d$. The yellow band denotes the tolerance radius around the goal; the cyan arrow indicates cursor-to-goal distance. As $d$ increases, the arrival zone narrows, the reach distance shortens, and step velocity decreases accordingly. Together these coupled changes define a progression from long-reach, permissive control to short-range, precision-constrained control.

¹ Code and interactive demo are available at https://github.com/almalago/goal-tracking-env.

B MAIA implementation details

B.1 Foundational inductive biases

The architecture embeds three structural constraints that enable tractable learning from high-dimensional input.

B.1.1 Object-Centric perception.

We assume a stable, trained object-centric perceptual encoder backbone that parses raw sensory input into structured latents. Given the current observation $o_t$, an inference network returns $K$ probabilistic slots:

$$q(Z^v_t \mid o_t) = \prod_{i=1}^{K} q(z^v_i \mid o_t), \qquad Z^v_t = \{z^v_1, \dots, z^v_K\},$$

where each slot $z^v_i$ encodes one scene element (appearance/geometry), and one slot absorbs the background. This structured representation follows the self-supervised, probabilistic framework of recent object-centric models [83, 84]. This encoder acts as the perceptual backbone across routes, enabling downstream inference and control to operate over a factored scene decomposition.

B.1.2 Event-based representations.
The controller is implemented by an RNN that outputs the action given a structured latent representation formed by the dynamics of the object-encoded representations: given an event code state $h_t$, it generates action $a_t$. This latent is sparsely updated through a stochastic gate, yielding interpretable, piecewise-stable dynamics aligned with events. At each step, the controller receives

$$x_t = \left[ Z^{v*}_t,\; h_{t-1} \right]$$

and applies a GateL0RD cell [85] composed of: (i) a recommendation network $r$, (ii) a gating network $g$, and (iii) an output projection $p$, which acts directly as the policy head.

RNN update.

$$
\begin{aligned}
s_t &\sim \mathcal{N}\!\left( g(x_t, h_{t-1}),\; \sigma^2 I \right) \\
\Lambda_t &= \operatorname{ReTanh}(s_t) \\
\Theta_t &= \operatorname{Heaviside}(\Lambda_t) \qquad \left( L_0 \text{ reg.: } \mathcal{L}_{\text{gate}} = \lambda\, \mathbb{E}[\Theta_t] \right) \\
h_t &= \Lambda_t \odot r(x_t, h_{t-1}) + (1 - \Lambda_t) \odot h_{t-1} \\
a_t &= p([x_t, h_t])
\end{aligned}
$$

The output network $p$ provides probabilistic action parameters $(\mu, \log \sigma)$.

B.1.3 Relational body schema.

We represent the limb as a directed kinematic chain (graph) with nodes $N$, such as elbow ($n_1$) and wrist ($n_2$). Each node maintains a proprioceptive latent:

$$Z^p_t = \left\{ z^n_t = \left( \mu^n_t,\; \log (\sigma^n_t)^2 \right) \;\middle|\; n \in N \right\},$$

where $\mu$ and $\sigma^2$ encode the mean and variance of each joint’s estimated state. The stacked vector $\tilde{Z}^p_t = \xi(Z^p_t)$ serves as input to downstream modules. The motor command $a_t$ directly updates the proximal node (e.g., the elbow):

$$\mu^{n_1}_t = \mu^{n_1}_{t-1} + a_t, \qquad \log (\sigma^{n_1}_t)^2 = \log (\sigma^{n_1}_{t-1})^2 + \phi(\lVert a_t \rVert),$$

where $\phi(\cdot) \geq 0$ increases uncertainty with command magnitude. The updated state of $n_1$ propagates distally to downstream nodes (e.g., wrist) via learned edge-conditioned transformations $W_{ij}(e_{ij})$, which encode link-specific attributes like length. This models a feed-forward kinematic chain, and scales naturally to longer limbs or branching structures, ensuring that the output wrist state reflects both the current action and upstream uncertainty.
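The two update rules in B.1.2 and B.1.3 can be sketched in a few lines of plain Python. This is an illustrative sketch under stated assumptions, not the MAIA implementation: `g` and `r` are caller-supplied callables standing in for the learned gating and recommendation networks, ReTanh is taken as $\max(0, \tanh(s))$, the action is a scalar, each edge transformation $W_{ij}(e_{ij})$ is replaced by a hypothetical linear `(gain, offset)` pair with standard linear-Gaussian variance propagation, and $\phi$ defaults to an arbitrary linear growth.

```python
import math
import random


def retanh(s):
    """ReTanh(s) = max(0, tanh(s)); exactly zero for non-positive inputs."""
    return max(0.0, math.tanh(s))


def gated_update(x, h_prev, g, r, sigma=0.0, rng=random):
    """One sparse update of the event-code latent h (equations in B.1.2).

    g and r are stand-ins for the learned gating and recommendation
    networks; each must return one value per latent dimension.
    """
    gate_mean = g(x, h_prev)
    candidate = r(x, h_prev)
    h_new, theta = [], []
    for m, c, h_old in zip(gate_mean, candidate, h_prev):
        s = rng.gauss(m, sigma) if sigma > 0 else m   # s_t ~ N(g(.), sigma^2 I)
        lam = retanh(s)                               # Lambda_t
        theta.append(1.0 if lam > 0 else 0.0)         # Theta_t = Heaviside(Lambda_t)
        h_new.append(lam * c + (1.0 - lam) * h_old)   # gated interpolation
    return h_new, theta


def update_chain(proximal, action, links, phi=lambda mag: 0.1 * mag):
    """Apply a scalar command to the proximal node and propagate distally (B.1.3).

    proximal: (mu, log_var) of the first joint, e.g. the elbow.
    links: one hypothetical (gain, offset) pair per edge, a linear stand-in
           for the learned edge-conditioned transformation W_ij(e_ij).
    phi: assumed non-negative uncertainty growth with command magnitude.
    """
    mu, log_var = proximal
    chain = [(mu + action, log_var + phi(abs(action)))]
    for gain, offset in links:
        mu_parent, lv_parent = chain[-1]
        # Linear-Gaussian propagation: variance scales with gain squared.
        chain.append((gain * mu_parent + offset,
                      lv_parent + 2.0 * math.log(abs(gain))))
    return chain
```

Averaging the returned `theta` values over a batch gives the quantity penalised by the $L_0$ regulariser, so latent dimensions whose gate pre-activation stays non-positive keep their previous value and incur no penalty.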
B.2 Contingency gate

The gate implements slot commitment via attention over a sliding context window of $k$ steps:

$$\alpha_i = \operatorname{softmax}\!\left( \frac{\left( W_Q [a_t, td_t] \right)^{\top} \left( W_K [a_{t-i}, td_{t-i}] \right)}{\sqrt{d}} \right), \qquad c_t = \sigma\!\left( w^{\top} \sum_{i=0}^{k-1} \alpha_i W_V z_{t-i} \right)$$

Query: current (action, task demand). Keys: buffered (action, task demand). Values: slot latents $[z_{\text{cursor}}, z_{\text{goal}}]$. We ablate attention depth $N_L \in \{1, 2, 3\}$; results are in Table 2.

B.3 Training

The gate is trained with Adam using a two-phase schedule (warm-up with no gradients, then a cold phase with updates). Figure 5 uses hard slot selection via Gumbel-softmax and a cross-entropy loss; Figure 4 uses BCE toward $P_{\text{pro}}(td)$ with no hard selection. Both use oracle actions and episode-level updates.

C Supplementary results

C.1 Context window sweep

Table 1: Context window threshold and saturation behavior. Range $\Delta$ measures phase separation strength. ⋆ denotes the selected configuration ($k = 32$); larger $k$ saturates with negligible gain.

| $k$ | $\Delta$ (range) | Status |
|---|---|---|
| 1 | 0.002 | Dead |
| 2 | 0.004 | Dead |
| 4 | 0.010 | Dead |
| 8 | 0.045 | Emerging |
| 16 | 0.113 | Partial |
| 32 | 0.192 | ⋆ |
| 64 | ≈ 0.198 | Saturated |
| 128 | ≈ 0.198 | Saturated |
| 256 | ≈ 0.198 | Saturated |

C.2 Attention depth ablation

Table 2: Gate discrimination strength across attention depths at $k = 32$. Separation $\Delta$ is defined as cursor confidence at td=0 minus td=0.88, quantifying how strongly the gate arbitrates between prospective and reactive control. Increasing depth beyond $N_L = 2$ does not materially change separation, indicating that context window $k$, rather than network depth, is the critical architectural parameter. $N_L = 2$ (⋆) was selected for all experiments; the gain at $N_L = 3$ is within seed variance.

| Depth ($N_L$) | Separation ($\Delta$) |
|---|---|
| 1 | 0.164 |
| 2 | 0.192 ⋆ |
| 3 | 0.195 |

Unlike Olsson et al. [75], where depth is a hard architectural floor (induction heads cannot form in a single-layer model), our gate achieves partial discrimination at $N_L = 1$ (0.164), with performance plateauing from $N_L = 2$ onward (0.192 → 0.195).
Context window $k$, not depth, is the binding constraint.
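To make the role of the context window concrete, the single-layer contingency gate of B.2 can be sketched as plain attention over a buffer of the last $k$ steps. The sketch assumes a scalar action, that $\sigma$ denotes the logistic sigmoid, that buffers are ordered newest first (index $i$ corresponds to step $t - i$), and that all weight matrices are hand-supplied nested lists rather than learned parameters; the function names are hypothetical.

```python
import math


def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def contingency_gate(actions, demands, slots, W_Q, W_K, W_V, w):
    """Return (c_t, alpha) for a buffer of k steps, newest entry first.

    actions, demands: length-k lists of scalars (a_{t-i}, td_{t-i}).
    slots: length-k list of value vectors z_{t-i} = [z_cursor, z_goal].
    """
    query = matvec(W_Q, [actions[0], demands[0]])   # current (action, task demand)
    scale = math.sqrt(len(query))                   # sqrt(d)
    scores = [dot(query, matvec(W_K, [a, td])) / scale
              for a, td in zip(actions, demands)]
    alpha = softmax(scores)                         # attention over the window
    values = [matvec(W_V, z) for z in slots]
    pooled = [sum(a_i * v[j] for a_i, v in zip(alpha, values))
              for j in range(len(w))]
    logit = dot(w, pooled)
    return 1.0 / (1.0 + math.exp(-logit)), alpha    # c_t = sigmoid(...)
```

With $k = 1$ the softmax is trivially 1 and the gate sees only the current step, which is one way to read the "Dead" rows of Table 1: the window is too short for the attention weights to carry any information about action-outcome contingency.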
