Masked BRep Autoencoder via Hierarchical Graph Transformer


Authors: Yifei Li, Kang Wu, Wenming Wu

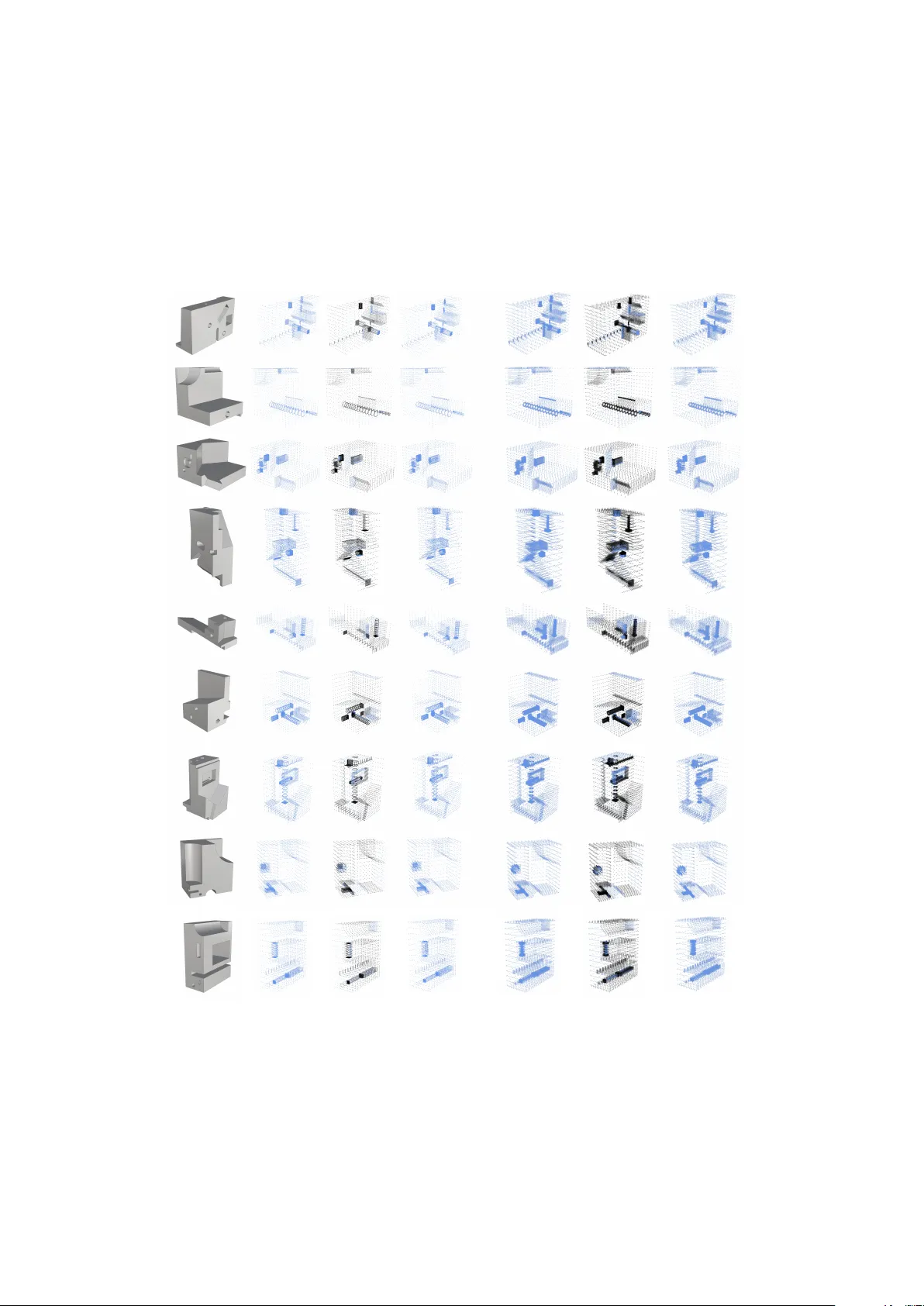

Yifei Li (a), Kang Wu (a), Wenming Wu (b), Xiao-Ming Fu (a)
(a) University of Science and Technology of China; (b) Hefei University of Technology

Abstract

We introduce a novel self-supervised learning framework that automatically learns representations from input computer-aided design (CAD) models for downstream tasks, including part classification, modeling segmentation, and machining feature recognition. To train our network, we construct a large-scale, unlabeled dataset of boundary representation (BRep) models. The success of our algorithm relies on two key components. The first is a masked graph autoencoder that reconstructs randomly masked geometries and attributes of BReps, which drives representation learning and improves generalization. The second is a hierarchical graph Transformer architecture that fuses global and local learning: a cross-scale mutual attention block models long-range geometric dependencies, and a graph neural network block aggregates local topological information. After training the autoencoder, we replace its decoder with a task-specific network trained on a small amount of labeled data for downstream tasks. We conduct experiments on various tasks and achieve high performance even with a small amount of labeled data, demonstrating the practicality and generalizability of our model. Compared with other methods, our model performs significantly better on downstream tasks given the same amount of training data, particularly when the training data is very limited.

Keywords: CAD Models, Representation learning, Hierarchical Graph Transformer

1. Introduction

As a core component of modern digital industrial manufacturing, computer-aided design (CAD) enhances process efficiency by using computers to create, modify, analyze, and optimize product models, thereby laying a crucial foundation across industries.
In addition to creating models, CAD involves a range of critical tasks, such as machining feature recognition, face segmentation, and shape classification [1, 2, 3, 4, 5, 6, 7, 8, 9]. Machine learning for processing CAD models has emerged as a leading research focus, driven by the superior generalizability and efficiency of learned models compared with traditional handcrafted methods [10, 11]. In particular, since boundary representations (BReps) are the dominant representation for CAD models due to their precise geometric description, we focus on learning algorithms for BReps.

However, the practical value of learning-based methods is limited by the inherent characteristics of CAD data. A key characteristic is the scarcity of models, as they often contain sensitive commercial intellectual property that enterprises closely guard. Hence, constructing large-scale, high-quality labeled datasets is challenging, which in turn limits the performance of learning algorithms. To address this limitation, a viable approach is to perform representation learning on large-scale unlabeled datasets, enabling artificial intelligence (AI) models to automatically extract meaningful features for downstream tasks and thereby improving their performance with limited labeled data. However, this approach faces two main challenges. First, the combination of discrete topology with continuous geometry in BReps makes them difficult to process effectively. Second, the irregular, hierarchical structure of BRep graphs hinders understanding and analysis.

Many learning methods have been proposed for processing CAD models [12, 4, 13, 8, 14, 10, 5, 6]. Some require a large amount of annotated data [14, 8, 12, 4, 13] and are limited to specific downstream tasks, such as feature recognition and surface segmentation. Other works [10, 5, 6, 15] train on unlabeled data, but their graph neural network (GNN) encoders only aggregate local information, ignoring global dependencies.
Recently, Transformer-based architectures have emerged to address this limitation [12, 8]. In particular, BRepFormer [9] successfully applies generic graph Transformers to capture long-range global dependencies. However, their flat, single-level attention is ill-suited to the extreme scale variations in CAD models (e.g., large planes vs. small fillets) and is easily overwhelmed by the severe data redundancy introduced by dense geometric sampling (e.g., planar regions require fewer samples), leading to inefficient representation learning.

In this paper, we introduce a self-supervised learning framework for acquiring effective representations of BReps from a large-scale, unlabeled dataset. It has two essential technical components. First, the framework builds on the masked autoencoder [16]. Representing each BRep as a geometric attributed adjacency graph (gAAG) [4], it learns to reconstruct randomly masked face/edge geometries and their attributes from input CAD models while keeping the graph topology unchanged, which enables effective representation learning. Second, we propose a hierarchical graph Transformer tailored to BReps as the core encoder; it combines global and local learning to handle the heterogeneity and extreme scale variations of practical BRep data. Its architecture centers on a progressive, coarse-to-fine cross-attention module that processes CAD face geometries via dual-resolution UV grids to capture long-range geometric dependencies, followed by a message-passing neural network (MPNN) that aggregates local topological information. Coupled with the self-supervised masked reconstruction task, this progressive abstraction guides the encoder to distill essential CAD topologies and geometries rather than merely memorizing raw sampling coordinates.
Extensive experiments on a collection of 283,018 models demonstrate that our pre-trained hierarchical encoder achieves superior generalization. It significantly outperforms existing representative methods across multiple downstream tasks, especially under highly limited supervision (e.g., 0.1% labeled data).

2. Related Work

Learning for BRep. The diverse geometries and complex topologies of BReps pose significant challenges for neural network learning. Some methods [17, 18, 3, 2, 19, 20] circumvent these challenges by converting BRep models into alternative 3D representations, such as point clouds, voxels, or meshes, but fail to exploit the topological information inherent in BReps. A more faithful approach treats a BRep as a structured graph [21, 11, 22, 23], where each BRep face is represented as a node and each BRep edge as a graph edge. UVNet [10] samples geometric signals from both surfaces and curves to convert BRep models into graphs and applies a GNN to aggregate information across the BRep topology. Owing to its effectiveness in handling BRep geometry and topology, UVNet has inspired a series of follow-up works [24, 1, 25, 26, 4, 12, 27, 13, 28]. Among them, Wu et al. [4] incorporate attributes to improve performance. Zou et al. [8] leverage Transformer-based attention to capture long-range dependencies across the topology, but they do not use graph-structured message passing to aggregate neighborhood information. Moreover, BRepMFR [12] and BRepFormer [9] introduce graph Transformers for global interactions. However, applying single-level attention uniformly remains inherently challenging given the extreme scale variations of CAD models, as it struggles to decouple macroscopic topologies from dense microscopic details.
To address this, we propose a hierarchical architecture featuring a cross-scale mutual attention block that establishes a dual-resolution information bottleneck, explicitly decoupling macro-anchors from micro-features. Finally, an MPNN aggregates local graph topology, combining global hierarchical abstraction with local message passing for robust representation learning.

[Figure 1 panel labels: Face geometry, Face attribute, Edge geometry, Edge attribute; Mask; BRep Encoder; Graph Encoder; Graph Decoder; BRep Decoder; Feature Loss; Geometry Loss; MAE; Small Labeled Dataset; Pretrained Encoder; Downstream Task Head; logits; Part Classification; Modeling Segmentation; Machining Feature Recognition (Pocket, Hole, Chamfer).]

Figure 1: Given a CAD model, our method first applies a BRep encoder to construct a gAAG from the extracted BRep information. A graph encoder then updates the graph features with both global and local information. During pre-training, we train an MAE by reconstructing randomly masked faces and edges for BRep representation learning (upper part of the figure). In the fine-tuning stage, we train a new network, formed by attaching a task-specific head to the encoder, using a small amount of labeled data and task-specific loss functions (lower part of the figure).

Self-supervised learning for CAD models. Self-supervised learning is especially valuable for CAD models, for which publicly available, well-annotated datasets are scarce. Jones et al. [5] propose a reconstruction-based self-supervised learning method that, while effective in few-shot settings, is limited to CAD models composed of basic geometric primitives. BRep-BERT [6] converts BRep entities into discrete tokens via a GNN tokenizer and trains a Transformer with a masked entity modeling task.
However, this discretization step introduces a significant bottleneck by compressing geometries and topologies into a limited number of token IDs. BRepGen [29] uses a hierarchical tree representation to encode BRep models into a latent space, which cannot be effectively used for downstream tasks. Zhang et al. [17] operate on point clouds, which inherently lack topological and detailed geometric information. Our method builds upon the masked autoencoder (MAE) [16] framework. Closely related to our work, Yao et al. [15] successfully apply MAE to BRep models by masking latent features and employing an MPNN. In contrast, we enforce a stricter information bottleneck by directly masking raw geometric inputs, and we use a hierarchical graph Transformer (GT) to capture long-range global dependencies before local topology aggregation.

Graph Transformer. Unlike traditional GNNs, which rely solely on local message passing, GTs also use attention mechanisms to capture long-range dependencies, making them powerful architectures for learning expressive and flexible representations of graph-structured data. Most prior work centers on molecular property prediction, where input graphs represent chemical compounds; examples include Graph-BERT [30], GROVER [31], GraphiT [32], GraphTrans [33], SAN [34], Graphormer [35], and SGFormer [36]. While GTs have shown immense potential, their direct application to BRep data requires careful architectural design. Although pioneering works [12, 9] have introduced GTs to BReps, they predominantly inherit flat attention structures from general-purpose GTs, which limits their effectiveness on complex CAD topologies. To bridge this gap, we propose a hierarchical GT encoder tailored to BReps.
By integrating a dual-resolution information bottleneck with local message passing, our architecture overcomes the limitations of flat attention, enabling expressive and transferable representation learning across diverse downstream tasks.

3. Method

Our proposed self-supervised BRep representation learning method consists of two parts: BRep information extraction (Sec. 3.1) and pre-training (Sec. 3.2). For pre-training, we employ the MAE framework to reconstruct the masked geometries in the input models and propose a tailored hierarchical graph Transformer encoder with a cross-scale mutual attention block. After pre-training, we attach a task-specific network to our encoder to form a new network and train it with a small amount of labeled data for various downstream tasks (Sec. 3.3). The pipeline of our method is shown in Fig. 1.

[Figure 2 panel labels: Face geometry, Face attribute, Edge geometry, Edge attribute; 2DCNN, 1DCNN, and MLP branches; C: Concat; 256D and 128D embeddings.]

Figure 2: Our BRep encoder extracts and fuses geometric and attribute features into a unified gAAG representation via parallel CNN and MLP branches.

3.1. BRep information extraction

To address extreme scale variations (e.g., large planes vs. small fillets) and reduce surface data redundancy (e.g., planar regions that require fewer sample points) in CAD models, we propose a dual-resolution geometry extraction strategy that explicitly separates global shape from local detail.

Extracting face information. For each face, to capture both its local and global geometric information, we perform UV parameterization [10] and uniformly sample grids at two different resolutions: 3 × 3 for global semantic guidance and 13 × 13 for fine-grained local details. We extract the coordinates (3D), normal (3D), and trimming indicator (1D) of each grid point to form a 7D feature as the face geometric information.
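To make the dual-resolution sampling concrete, the following is a minimal sketch, assuming a toy analytic face (a quarter cylinder) in place of a real trimmed BRep surface; in practice the points, normals, and trimming flags would come from a CAD kernel's surface evaluator, as in UVNet [10]. The helper names `sample_uv_grid`, `cylinder`, and `cylinder_normal`, and the optional `trim` predicate, are hypothetical.

```python
import numpy as np


def sample_uv_grid(surface, normal, u_range, v_range, n, trim=None):
    """Sample an n x n UV grid; return an (n, n, 7) array of
    xyz (3) + unit normal (3) + trimming indicator (1) per grid point."""
    us = np.linspace(u_range[0], u_range[1], n)
    vs = np.linspace(v_range[0], v_range[1], n)
    uu, vv = np.meshgrid(us, vs, indexing="ij")  # (n, n) parameter values
    xyz = surface(uu, vv)                        # (n, n, 3)
    nrm = normal(uu, vv)
    nrm = nrm / np.linalg.norm(nrm, axis=-1, keepdims=True)
    if trim is None:
        inside = np.ones((n, n, 1))              # untrimmed face: every point is inside
    else:
        inside = trim(uu, vv)[..., None].astype(float)  # 1 inside the trim loop, 0 outside
    return np.concatenate([xyz, nrm, inside], axis=-1)


# Hypothetical toy face: a quarter cylinder of radius 2 and height 1.
def cylinder(u, v, r=2.0):
    return np.stack([r * np.cos(u), r * np.sin(u), v], axis=-1)


def cylinder_normal(u, v):
    return np.stack([np.cos(u), np.sin(u), np.zeros_like(u)], axis=-1)


u_rng, v_rng = (0.0, np.pi / 2), (0.0, 1.0)
coarse = sample_uv_grid(cylinder, cylinder_normal, u_rng, v_rng, n=3)  # global guidance
fine = sample_uv_grid(cylinder, cylinder_normal, u_rng, v_rng, n=13)   # local detail
print(coarse.shape, fine.shape)  # (3, 3, 7) (13, 13, 7)

# A circular hole in parameter space marks the central fine-grid points as trimmed away.
holed = sample_uv_grid(cylinder, cylinder_normal, u_rng, v_rng, n=13,
                       trim=lambda u, v: (u - np.pi / 4) ** 2 + (v - 0.5) ** 2 > 0.04)
```

The 3 × 3 grid keeps only nine samples per face regardless of the face's size, so it summarizes shape coarsely, while the 13 × 13 grid retains enough samples to resolve small features and trimming boundaries.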
The face attribute for each grid point is a 16D vector that contains the face type (6D), area (1D), centroid (3D), and bounding box (6D).

Extracting edge information. Similar to the node feature construction, we uniformly sample 13 points on each BRep edge to extract edge information. The edge geometric information is a 12D vector containing the coordinates (3D), tangent (3D), and the normals of the two incident faces (6D), while the edge attribute is a 9D vector with the edge type (5D), length (1D), and convexity (3D).

3.2. Pre-training

3.2.1. Encoder

Our encoder has two parts: (1) a BRep encoder and (2) a graph encoder.

BRep encoder. Raw BRep models consist of highly heterogeneous data that intertwine continuous geometric and discrete topological information. To process such heterogeneous data systematically while preserving its structural integrity, we project the BRep into a unified latent graph space via a gAAG [4]. In this formulation, the gAAG nodes represent the extracted face features, while the graph edges represent the BRep topological edge features. Specifically, our BRep encoder employs five parallel branches to independently embed the geometries and attributes (Fig. 2). For faces, two 2D CNNs encode the two face-geometry grids, and an MLP encodes the face attributes. Each face-geometry embedding is a 256D vector, while the attribute embedding is a 128D vector. We then concatenate each face-geometry vector (one per resolution) with the corresponding attribute vector and feed the result into another MLP, producing two decoupled 256D embeddings, denoted as $f_i^{\text{low}}$ and $f_i^{\text{high}}$, which serve as the node features of the gAAG. Similarly, the remaining two branches, a 1D CNN and an MLP, map the geometries and attributes of BRep edges to 256D and 128D vectors, respectively, which are then concatenated and fused into a unified 256D embedding $e_i$ that serves as the graph edge feature. Details are provided in the supplementary material.
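The feature sizes listed above can be checked with a short sketch. It assumes one-hot encodings for face type, edge type, and convexity, a three-way convexity label, and a min/max-corner layout for the bounding box; none of these encodings is specified in the text, and the helper names are hypothetical.

```python
# Hypothetical feature layouts; the one-hot encodings and bounding-box layout are assumptions.
NUM_FACE_TYPES, NUM_EDGE_TYPES = 6, 5
CONVEXITY = ("convex", "concave", "smooth")  # assumed 3-way convexity label


def one_hot(index, size):
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


def face_attribute(face_type, area, centroid, bbox):
    """16D: type (6) + area (1) + centroid (3) + bounding box as min/max corners (6)."""
    assert len(centroid) == 3 and len(bbox) == 6
    return one_hot(face_type, NUM_FACE_TYPES) + [area] + list(centroid) + list(bbox)


def edge_attribute(edge_type, length, convexity):
    """9D: type (5) + length (1) + convexity (3)."""
    return (one_hot(edge_type, NUM_EDGE_TYPES) + [length]
            + one_hot(CONVEXITY.index(convexity), len(CONVEXITY)))


FACE_GEOM_DIM = 3 + 3 + 1      # xyz + normal + trimming indicator, per grid point
EDGE_GEOM_DIM = 3 + 3 + 3 + 3  # xyz + tangent + normals of both incident faces, per sample

# BRep-encoder widths from the text: a 256D geometry embedding and a 128D attribute
# embedding are concatenated (384D) and fused by an MLP into a 256D node/edge feature.
GEOM_EMB, ATTR_EMB, FUSED_EMB = 256, 128, 256
CONCAT_DIM = GEOM_EMB + ATTR_EMB

fa = face_attribute(face_type=0, area=1.5, centroid=(0.5, 0.5, 0.0), bbox=(0, 0, 0, 1, 1, 0))
ea = edge_attribute(edge_type=2, length=0.8, convexity="concave")
print(len(fa), len(ea), FACE_GEOM_DIM, EDGE_GEOM_DIM, CONCAT_DIM, FUSED_EMB)
# 16 9 7 12 384 256
```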
[Figure 3 panels: left, the graph encoder (two CSMA blocks followed by an MPNN) mapping $F^{(0)}_{\text{low}}$, $F^{(0)}_{\text{high}}$, and $E$ to $F^{(2)}_{\text{low}}$ and $F^{(2)}_{\text{high}}$; right, the k-th CSMA block with CA and SA layers and their Q and K,V inputs.]

Figure 3: Left: The overall architecture of the graph encoder, processing multi-resolution face features $F_{\text{low}}$, $F_{\text{high}}$, and edge features $E$. Right: The internal structure of the k-th CSMA block. CA and SA denote cross-attention and self-attention layers, respectively, while Q, K, and V represent queries, keys, and values.

Graph encoder. Standard GNNs suffer from over-smoothing [37] and fail to capture long-range geometric dependencies. At the same time, directly applying standard Transformers to dense BRep samplings is inefficient, as the attention mechanism can easily be overwhelmed by redundant local details. To address this, our hierarchical graph Transformer encoder combines a cross-scale mutual attention (CSMA) module that captures global geometric structures with an MPNN [4] that enforces local topological connections (Fig. 3, left).

• Cross-Scale Mutual Attention. To process features efficiently, we group node features by grid resolution into low-resolution features $F_{\text{low}}$ and high-resolution features $F_{\text{high}}$ [38], along with edge features $E$. To make the network focus on key geometric changes rather than being distracted by large, flat surfaces, our CSMA block uses sparse, low-resolution features to query and extract essential details from dense, high-resolution features (Fig. 3, right):

$$F^{(k)}_{\text{low}} = \mathrm{SA}\Big(\mathrm{CA}\big(F^{(k-1)}_{\text{low}},\, F^{(k-1)}_{\text{high}}\big)\Big), \tag{1}$$

$$F^{(k)}_{\text{high}} = \mathrm{CA}\Big(\mathrm{CA}\big(F^{(k-1)}_{\text{high}},\, F^{(k)}_{\text{low}}\big),\, E\Big), \tag{2}$$

where CA is multi-head cross-attention (queries from the first argument, keys/values from the second), and SA is self-attention. With $F^{(0)}_{\text{low}} = F_{\text{low}}$ and $F^{(0)}_{\text{high}} = F_{\text{high}}$, the updated $F^{(k)}_{\text{low}}$ aggregates global context that guides the refinement of $F^{(k)}_{\text{high}}$ together with the explicit topological edge features $E$.
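Eqs. (1) and (2) can be illustrated with a simplified NumPy sketch. It is not the authors' implementation: it uses single-head attention with random, untrained projections, adds a residual connection (an assumption), and omits normalization and feed-forward layers. Each face contributes one low-resolution and one high-resolution token, matching the per-face embeddings $f_i^{\text{low}}$ and $f_i^{\text{high}}$; the class names `Attention` and `CSMABlock` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


class Attention:
    """Single-head scaled dot-product attention with random, untrained projections."""

    def __init__(self, dim):
        scale = 1.0 / np.sqrt(dim)
        self.wq, self.wk, self.wv = (rng.standard_normal((dim, dim)) * scale for _ in range(3))

    def __call__(self, queries, context):
        # Queries come from the first argument, keys/values from the second (as for CA).
        q, k, v = queries @ self.wq, context @ self.wk, context @ self.wv
        weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))  # (n_queries, n_context)
        return queries + weights @ v  # residual connection (an assumption)


class CSMABlock:
    """One cross-scale mutual attention block following Eqs. (1) and (2)."""

    def __init__(self, dim):
        self.ca_low = Attention(dim)   # Eq. (1): low-res queries, high-res keys/values
        self.sa_low = Attention(dim)   # Eq. (1): self-attention over the result
        self.ca_high = Attention(dim)  # Eq. (2): high-res queries, updated low-res keys/values
        self.ca_edge = Attention(dim)  # Eq. (2): face queries, edge keys/values

    def __call__(self, f_low, f_high, e):
        h = self.ca_low(f_low, f_high)
        f_low_new = self.sa_low(h, h)  # SA is attention of a set over itself
        f_high_new = self.ca_edge(self.ca_high(f_high, f_low_new), e)
        return f_low_new, f_high_new


# Toy BRep: 10 faces (one low- and one high-res token each) and 24 edges, width 16.
n_faces, n_edges, dim = 10, 24, 16
f_low = rng.standard_normal((n_faces, dim))
f_high = rng.standard_normal((n_faces, dim))
edges = rng.standard_normal((n_edges, dim))

for block in (CSMABlock(dim), CSMABlock(dim)):  # two stacked blocks, as in the text
    f_low, f_high = block(f_low, f_high, edges)

print(f_low.shape, f_high.shape)  # (10, 16) (10, 16)
```

The block is asymmetric: the low-resolution stream first gathers detail from the high-resolution stream and exchanges it globally through self-attention, and only then does the high-resolution stream read back that summary and the edge features.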
We stack two such CSMA blocks so that the overall structure and local details are fully integrated. This asymmetric bottleneck prevents the network from being overwhelmed by redundant flat surfaces and avoids the over-smoothing typical of GNNs, ensuring that crucial local details are retained and integrated into the global context.

• Local Topological Message Passing. Although the CSMA blocks capture the overall structure, BRep faces are connected to one another through shared local edges. To explicitly model these precise neighborhood relationships, we pass the updated face features $F^{(2)}_{\text{high}}$ and edge features $E$ into an MPNN:

$$F_{\text{latent}},\, E_{\text{latent}} = \mathrm{MPNN}\big(F^{(2)}_{\text{high}},\, E;\, G_{\text{adj}}\big), \tag{3}$$

where $G_{\text{adj}}$ is the explicit adjacency graph. By placing this local MPNN after the global Transformer, our encoder combines the overall 3D geometric structure with precise face-to-face connections. Detailed configurations are provided in the supplementary material.

3.2.2. Two-Stage Decoder

Reconstructing heavily masked BRep models in a single step is highly challenging. Instead of directly predicting raw 3D geometries and attributes from the compressed latent space, we design a two-stage decoding strategy: a graph decoder followed by a BRep decoder.

1. The graph decoder first reconstructs the intermediate node and edge features within the graph space.
2. The BRep decoder then maps these recovered features back to explicit geometries and attributes.

This two-stage breakdown not only eases reconstruction but also allows the intermediate graph features to serve as additional supervision signals, thereby stabilizing the pre-training process.

Graph decoder.
To first recover the intermediate node and edge features from the masked context, the graph decoder employs an MPNN with an architecture symmetric to the graph encoder:

$$F_{\text{recon}},\, E_{\text{recon}} = \mathrm{MPNN}\big(F_{\text{latent}},\, E_{\text{latent}};\, G_{\text{adj}}\big), \tag{4}$$

where $F_{\text{recon}}$ and $E_{\text{recon}}$ represent the reconstructed graph node and edge features, respectively. During training, these features are directly supervised by the features that the BRep encoder extracts from the unmasked BRep.

BRep decoder. The BRep decoder then uses four parallel branches to map the recovered graph features back to explicit geometric and attribute details. For the continuous geometric structures, we employ FoldingNet [39] branches, which are naturally suited to deforming latent representations into 3D surfaces (7D face geometry) and curves (12D edge geometry). In parallel, two standard MLPs regress the 16D face attributes and 9D edge attributes. Detailed layer-wise configurations are provided in the supplementary material.

3.2.3. Masked Pre-training

To learn robust BRep representations, we formulate a self-supervised masked autoencoding task. Instead of masking latent features [15], we randomly mask the raw geometries and attributes of input faces and edges at a high ratio (e.g., 70%). This strict input-level corruption forces the network to infer missing structures from the surviving global context, preventing trivial local shortcuts. Specifically, we reconstruct the edge features and only the high-resolution face features, along with their original geometric information. Since higher-resolution grids encapsulate richer geometric information, reconstructing them enables the model to capture small geometric structures accurately. Our objective includes five loss terms that provide comprehensive supervision across both latent features and explicit geometries.
It comprises a latent feature alignment loss $\mathcal{L}_{\text{feat}}$, which penalizes the divergence between the reconstructed graph features and the target features extracted from the original unmasked BRep, and four explicit reconstruction losses ($\mathcal{L}^{\text{face}}_{\text{geom}}$, $\mathcal{L}^{\text{face}}_{\text{attr}}$, $\mathcal{L}^{\text{edge}}_{\text{geom}}$, and $\mathcal{L}^{\text{edge}}_{\text{attr}}$) for face/edge geometries and attributes. A stop-gradient operation is applied to the target branch of $\mathcal{L}_{\text{feat}}$ to prevent representation collapse and ensure that the network learns meaningful semantics. Detailed formulations are provided in the supplementary material. We optimize the loss functions over all faces and edges to ensure that our network accurately recovers the masked information.

3.3. Fine-tuning

After pre-training, we attach a task-specific head to the pre-trained encoder for each downstream task. The entire network is then fine-tuned with task-specific loss functions on a small amount of labeled data from the target dataset. Details for each downstream task are provided in the supplementary material.

[Figure 4 panel labels, repeated for each example: Input, Sampling, Masked, Reconstruction.]

Figure 4: Four reconstruction examples. For each example, the sequence from left to right shows: (1) the input BRep model, (2) the full surface point cloud, (3) the masked input, where black denotes masked faces and blue denotes unmasked faces, and (4) the reconstructed point cloud.

4. Experiments

All experiments are implemented in PyTorch and executed on a server equipped with a single NVIDIA A800 GPU. We first perform self-supervised pre-training, followed by task-specific evaluation on three downstream benchmarks: (1) machining feature recognition, (2) modeling segmentation, and (3) part classification. Unless otherwise specified, all results are obtained under identical hardware configurations, and the best results are shown in bold.

4.1. Datasets and Data Isolation Protocol

To rigorously evaluate our method and prevent potential data leakage (i.e., data contamination), we enforce a strict data-isolation protocol across all experiments. Specifically, the CAD models are randomly split into training, validation, and testing subsets according to the task: a 70%/15%/15% split is used for pre-training, modeling segmentation, and classification, while an 80%/10%/10% split is adopted for machining feature recognition. The testing sets are strictly held out and remain unseen during both self-supervised pre-training and downstream fine-tuning, ensuring a fair evaluation.

Utilized benchmarks. Our experiments use the following benchmarks:

• MFInstSeg [4] (62,495 models): per-face machining-feature labels; 25 classes.
• MFCAD++ [14] (59,665 models): per-face machining-feature labels; 25 classes.
• CADSynth [12] (100,000 models): per-face machining-feature labels; 25 classes.
• Fusion 360 Gallery Segmentation [11] (35,858 models): per-face modeling-operation labels; 8 classes.
• SolidLetter [10] (96,861 models): alphabetic CAD models with shape-level labels; 26 classes.

Pre-training corpus. Following our isolation protocol, the pre-training dataset is constructed by merging only the training splits (70%) of MFInstSeg, MFCAD++, CADSynth, Fusion 360 Gallery, and a 25,000-sample subset of SolidLetter. While the full collection of these datasets (counting SolidLetter through its 25,000-sample subset) amounts to 283,018 models, this sanitized pre-training corpus comprises exactly 198,113 unique, unlabeled CAD models, ensuring isolation from all downstream testing data.

Downstream adaptation. For downstream tasks, we use the training and validation splits of the respective target datasets for fine-tuning, and report all final metrics exclusively on the isolated testing splits.
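The corpus sizes above can be cross-checked with a few lines of arithmetic. The 283,018 total is reproduced only when SolidLetter is counted through its 25,000-model subset, and applying the 70% training fraction to the pooled total gives the stated 198,113. How the per-dataset splits were rounded is not stated, so this is a consistency check rather than the authors' procedure.

```python
# Dataset sizes listed in Sec. 4.1; SolidLetter contributes only a 25,000-model subset.
collection = {
    "MFInstSeg": 62_495,
    "MFCAD++": 59_665,
    "CADSynth": 100_000,
    "Fusion 360 Gallery Segmentation": 35_858,
    "SolidLetter (subset)": 25_000,
}
TRAIN_FRACTION = 0.70  # only the training split of each dataset enters pre-training

total = sum(collection.values())
pretrain = round(total * TRAIN_FRACTION)  # applied to the pooled total (an assumption)
print(total, pretrain)  # 283018 198113

# Counting the full SolidLetter dataset instead would give a different total.
full_solidletter_total = total - 25_000 + 96_861
print(full_solidletter_total)  # 354879
```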
[Figure 5 labels: 6-sides passage, Triangular passage, Rectangular pocket, Horizontal circular end blind slot, O-ring, Circular blind step, Vertical circular end blind slot, Triangular blind step, Rectangular passage.]

Figure 5: Machining feature recognition under the fully supervised setting. Our method accurately predicts various machining features, such as passages, pockets, slots, and steps.

4.2. Pre-training

In the pre-training stage, we use the AdamW optimizer with a batch size of 128 and an initial learning rate of $10^{-4}$, which follows a cosine decay schedule. Pre-training is conducted from scratch for 100 epochs, with 70% of faces and edges randomly masked in each sample. Fig. 4 shows four examples of face-coordinate reconstruction: our algorithm correctly reconstructs the geometry of the masked BRep faces, which indicates that it learns an effective BRep representation. See the supplementary material for more reconstruction results.

4.3. Comparisons

We use face-level accuracy (Acc) and mean intersection over union (mIoU) to evaluate performance on machining feature recognition and modeling segmentation. For part classification, we report shape-level accuracy only. The formulas for Acc and mIoU are given in the supplementary material.

4.3.1. Machining feature recognition

We evaluate our method on per-face machining-feature recognition (25 classes) using MFInstSeg, MFCAD++, and CADSynth. We compare against the fully supervised baselines AAGNet [4] and the recent state-of-the-art BRepFormer [9], using their official implementations, as well as a recent self-supervised pre-training method, BRepMAE [15]. All datasets follow our 80/10/10 isolation protocol. To assess few-shot performance, we fine-tune the network under varying supervision ratios (0.1%–100%). We use the AdamW optimizer for 200 epochs, with learning rates of $10^{-4}$ for the task-specific head and $10^{-5}$ for the pre-trained encoder.
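The pre-training settings of Sec. 4.2 and the fine-tuning learning rates of Sec. 4.3.1 can be sketched with the standard library. The sketch assumes that faces and edges are masked independently with the same 70% ratio and that the cosine schedule decays to zero over the 100 epochs; neither detail is stated above, and `random_mask` and `cosine_lr` are hypothetical helpers.

```python
import math
import random


def random_mask(num_items, ratio=0.7, seed=None):
    """Return sorted indices of the faces (or edges) to hide in one BRep sample."""
    rnd = random.Random(seed)
    num_masked = int(round(num_items * ratio))
    return sorted(rnd.sample(range(num_items), num_masked))


def cosine_lr(epoch, total_epochs=100, base_lr=1e-4, min_lr=0.0):
    """Cosine decay from base_lr at epoch 0 to min_lr at total_epochs."""
    progress = min(epoch, total_epochs) / total_epochs
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


# Fine-tuning rates from Sec. 4.3.1: smaller for the pre-trained encoder than for the new head.
FINETUNE_LR = {"task_head": 1e-4, "encoder": 1e-5}

# Independent masks for the faces and edges of a toy BRep with 20 faces and 48 edges.
masked_faces = random_mask(20, seed=0)
masked_edges = random_mask(48, seed=1)
print(len(masked_faces), len(masked_edges))  # 14 34
print([cosine_lr(e) for e in (0, 25, 50, 75, 100)])
```

In PyTorch, the two fine-tuning rates would typically be expressed as parameter groups, e.g. `torch.optim.AdamW([{"params": encoder.parameters(), "lr": 1e-5}, {"params": head.parameters(), "lr": 1e-4}])` with hypothetical modules `encoder` and `head`, and `torch.optim.lr_scheduler.CosineAnnealingLR` with `T_max=100` yields the same per-epoch decay as `cosine_lr`.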
As shown in Table 1, while the fully supervised BRepFormer performs strongly under full supervision (100%), training Transformers from scratch with extremely limited data (e.g., 0.1%) is inherently challenging and leads to a drastic performance drop (e.g., 33.43% Acc and 7.47% mIoU on MFInstSeg). In contrast, pre-training methods effectively alleviate this issue: our method maintains 88.75% Acc and 66.38% mIoU on MFInstSeg at the 0.1% setting. Note that our method is pre-trained only on our 198K sanitized corpus, whereas the BRepMAE results are taken from the original paper, which uses a substantially larger pre-training corpus of 250K models. Despite using less unlabeled data, our method is more data-efficient, outperforming BRepMAE in almost all low-data settings (e.g., 96.19% vs. 94.75% Acc on MFInstSeg at 0.5%, and 89.19% vs. 86.56% Acc on MFCAD++ at 0.1%); BRepMAE is ahead only on MFInstSeg at 0.1% (88.81% vs. 88.75% Acc and 67.63% vs. 66.38% mIoU). This indicates that our hierarchical MAE pre-training extracts more transferable and robust representations. Furthermore, under full supervision, our method remains highly competitive with the fully supervised BRepFormer (e.g., 99.53% vs. 99.62% Acc on MFInstSeg), indicating that strong few-shot generalization does not come at the cost of the model's overall representational capacity. Figure 5 shows representative machining feature recognition results.

Table 1: Comparison across MFInstSeg, MFCAD++, and CADSynth under varying supervision ratios. Accuracy (Acc, %) and mIoU (%) are reported.

| Dataset | Metric | Method | 0.1% | 0.5% | 1% | 1.5% | 2% | 3% | 100% |
|---|---|---|---|---|---|---|---|---|---|
| MFInstSeg | Acc | AAGNet | 46.49 | 76.62 | 85.19 | 90.30 | 92.31 | 92.37 | 99.13 |
| MFInstSeg | Acc | BRepFormer | 33.43 | 86.14 | 91.23 | 95.77 | 96.51 | 97.64 | **99.62** |
| MFInstSeg | Acc | BRepMAE | **88.81** | 94.75 | 96.99 | 97.44 | 97.66 | 98.20 | 99.57 |
| MFInstSeg | Acc | Ours | 88.75 | **96.19** | **97.31** | **97.67** | **97.92** | **98.26** | 99.53 |
| MFInstSeg | mIoU | AAGNet | 34.01 | 67.51 | 77.50 | 84.64 | 86.76 | 87.32 | 98.36 |
| MFInstSeg | mIoU | BRepFormer | 7.47 | 62.66 | 73.89 | 86.17 | 88.25 | 91.85 | **98.75** |
| MFInstSeg | mIoU | BRepMAE | **67.63** | 83.38 | 90.39 | 91.33 | 91.93 | 93.59 | 98.60 |
| MFInstSeg | mIoU | Ours | 66.38 | **87.21** | **90.81** | **91.74** | **92.60** | **93.86** | 98.47 |
| MFCAD++ | Acc | AAGNet | 51.22 | 81.47 | 87.38 | 90.64 | 91.64 | 94.42 | 99.27 |
| MFCAD++ | Acc | BRepFormer | 32.95 | 83.92 | 92.49 | 95.82 | 96.93 | 97.70 | **99.71** |
| MFCAD++ | Acc | BRepMAE | 86.56 | 94.39 | 96.68 | 97.18 | 97.21 | 97.91 | 99.59 |
| MFCAD++ | Acc | Ours | **89.19** | **95.94** | **97.34** | **97.60** | **97.97** | **98.36** | 99.61 |
| MFCAD++ | mIoU | AAGNet | 38.23 | 71.88 | 80.66 | 84.62 | 86.28 | 90.91 | 98.65 |
| MFCAD++ | mIoU | BRepFormer | 6.36 | 58.02 | 77.80 | 86.80 | 90.00 | 92.56 | **99.27** |
| MFCAD++ | mIoU | BRepMAE | 65.75 | 83.35 | 89.78 | 91.41 | 91.87 | 93.63 | 98.82 |
| MFCAD++ | mIoU | Ours | **67.29** | **86.26** | **90.97** | **92.12** | **93.25** | **94.57** | 99.03 |
| CADSynth | Acc | AAGNet | 58.53 | 87.36 | 95.58 | 96.08 | 97.01 | 97.14 | 99.66 |
| CADSynth | Acc | BRepFormer | 59.34 | 65.60 | 97.49 | 98.33 | 98.65 | 98.73 | **99.88** |
| CADSynth | Acc | BRepMAE | 93.63 | 96.84 | 98.36 | 98.78 | 99.08 | 99.20 | 99.78 |
| CADSynth | Acc | Ours | **93.82** | **98.54** | **98.91** | **99.25** | **99.29** | **99.42** | 99.86 |
| CADSynth | mIoU | AAGNet | 47.17 | 81.04 | 92.40 | 93.42 | 94.92 | 94.61 | 99.37 |
| CADSynth | mIoU | BRepFormer | 10.97 | 82.61 | 89.84 | 93.04 | 94.38 | 94.49 | **99.51** |
| CADSynth | mIoU | BRepMAE | 78.40 | 88.91 | 92.91 | 94.70 | 96.26 | 96.50 | 99.06 |
| CADSynth | mIoU | Ours | **79.29** | **93.72** | **95.53** | **96.85** | **97.03** | **97.55** | 99.43 |

4.3.2. Modeling segmentation

We evaluate our pre-trained encoder on the Fusion 360 Gallery Segmentation dataset (8 classes). We compare against BRep-BERT [6], BRT [8], and BRepFormer [9] under 10-shot, 20-shot, and full-data settings, using their official implementations or reported results. The dataset is split into 70% training, 15% validation, and 15% testing. As shown in Table 2, our method achieves the highest performance across all settings. Under limited supervision (10-shot and 20-shot), fully supervised approaches such as BRT and BRepFormer degrade, as expected for models trained from scratch. Benefiting from the pre-trained representations, our model reaches 72.33% Acc and 34.63% mIoU in the 10-shot scenario, demonstrating strong few-shot generalization and substantially exceeding the self-supervised baseline BRep-BERT (59.26% Acc).
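Tables 1 and 2 report face-level Acc and mIoU, whose exact formulas are deferred to the supplementary material. The following minimal sketch uses the standard definitions; pooling the counts over all test faces and skipping classes absent from both predictions and ground truth are assumptions here, and the function names are hypothetical.

```python
def face_accuracy(pred, gt):
    """Fraction of faces whose predicted label matches the ground-truth label."""
    assert len(pred) == len(gt) > 0
    return sum(p == g for p, g in zip(pred, gt)) / len(gt)


def mean_iou(pred, gt, num_classes):
    """Mean over classes of TP / (TP + FP + FN), with counts pooled over all faces.
    Classes absent from both predictions and ground truth are skipped (an assumption)."""
    ious = []
    for c in range(num_classes):
        tp = sum(p == c and g == c for p, g in zip(pred, gt))
        fp = sum(p == c and g != c for p, g in zip(pred, gt))
        fn = sum(p != c and g == c for p, g in zip(pred, gt))
        if tp + fp + fn > 0:
            ious.append(tp / (tp + fp + fn))
    return sum(ious) / len(ious)


# Six faces, three classes: two faces are mislabeled.
gt = [0, 0, 1, 1, 2, 2]
pred = [0, 1, 1, 1, 2, 0]
print(face_accuracy(pred, gt))            # 0.6666666666666666
print(mean_iou(pred, gt, num_classes=3))  # mean of 1/3, 2/3, and 1/2
```

Because mIoU weights every class equally, errors on rare classes lower it more than they lower face-level accuracy, which dominates when a few classes cover most faces.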
Furthermore, while the fully supervised BRepFormer excels at machining feature recognition, its flat, single-level attention mechanism faces inherent challenges with the extreme scale variations of CAD surfaces. In contrast, our hierarchical architecture, with the CSMA bottleneck and local message passing, integrates global geometric context with crucial local details. Consequently, our method not only excels in few-shot scenarios but also achieves the highest accuracy (97.02%) and mIoU (86.75%) under full supervision (see Fig. 6).

Table 2: Performance comparison on Fusion 360 Gallery Segmentation under different levels of supervision. The k-shot setting denotes using k labeled training samples in total. Accuracy (Acc, %) and mIoU (%) are reported.

| Model | 10-shot Acc | 10-shot mIoU | 20-shot Acc | 20-shot mIoU | Full Acc | Full mIoU |
|---|---|---|---|---|---|---|
| BRep-BERT | 59.26 | 19.02 | 62.30 | 24.20 | 95.14 | 82.88 |
| BRT | 54.98 | 13.54 | 56.69 | 14.99 | 94.48 | 79.23 |
| BRepFormer | 50.24 | 12.31 | 51.75 | 13.38 | 94.02 | 78.73 |
| Ours | **72.33** | **34.63** | **74.31** | **38.22** | **97.02** | **86.75** |

[Figure 6 legend: ExtrudeSide, ExtrudeEnd, CutSide, CutEnd, Fillet, Chamfer, RevolveSide, RevolveEnd.]

Figure 6: Examples of modeling segmentation results on the Fusion 360 Gallery segmentation dataset under the fully supervised setting. Our model accurately predicts per-face labels across 8 operation types, with a distinct color for each.

4.3.3. Part classification

We evaluate our pre-trained encoder on the part classification task using the SolidLetter dataset, which consists of CAD models labeled across 26 alphabetic classes. We compare our method with strong baselines: BRep-BERT [6], BRT [8], and BRepFormer [9]. For BRep-BERT, we report results from the original paper, as its code is not publicly available. For BRT and BRepFormer, we adopt their official implementations and follow the recommended hyperparameter settings. To assess performance under different levels of supervision, we conduct experiments in three
settings: 10-shot, 20-shot, and full-data. In the few-shot settings, 10 or 20 labeled examples per class are sampled for training. The dataset is split in a class-balanced manner: for each letter class, 70% of the models are used for training, 15% for validation, and 15% for testing. All models are trained using the same experimental setup as described previously.

Our method achieves state-of-the-art performance on the SolidLetter benchmark at all supervision levels. Under the 10-shot and 20-shot settings, our model achieves 82.51% and 89.58% accuracy, respectively, outperforming by substantial margins the self-supervised baseline BRep-BERT (68.71% and 75.92%) as well as the fully supervised approaches BRepFormer (76.53% and 84.25%) and BRT (54.84% and 62.69%) (see Fig. 7, right). Notably, even with very limited labeled data, our method maintains high classification accuracy, demonstrating strong generalization and data efficiency. Under full supervision, our method still yields the best performance, achieving 97.91% accuracy and slightly surpassing BRep-BERT (97.76%), BRepFormer (97.59%), and BRT (97.35%).

We further evaluate the cross-dataset generalization of our model by removing SolidLetter samples from the pre-training corpus (denoted as Ours*). As shown in Table 3, even without exposure to the target dataset during pre-training, our method achieves 81.83% and 88.47% accuracy in the 10-shot and 20-shot settings, respectively, substantially outperforming all baselines, including the strong BRepFormer. Under full supervision, Ours* attains 97.78%, closely matching the best result. These findings indicate that our pre-trained encoder is robust and transfers well across different geometric domains.

4.4. Ablation study

To isolate the contributions of our core designs, we conduct ablation studies on MFInstSeg (Table 4) along five aspects. Additional ablations are detailed in the supplementary material.

1.
Dual-Resolution Information Bottleneck. We compare our dual-resolution (3 × 3 and 13 × 13) CSMA block against Single Res. (13 × 13 only) and Triple Res. (adding 7 × 7). Single Res. consistently lags behind our method (e.g., 95.19% vs. 97.31% at 1% data), indicating that the lack of a sparse global anchor limits representation capability. Triple Res. also slightly underperforms our approach (e.g., 99.42% vs. 99.53% at 100%), suggesting that an intermediate resolution introduces mild attention dilution. Thus, among the tested configurations, our extreme coarse-to-fine bottleneck provides the best balance for feature compression.
2. Necessity of Masked Modeling. Pre-training with simple autoencoding (0% mask ratio, w/o Masking) causes a drastic performance collapse in low-data regimes, with accuracy dropping to 66.41% at 0.1% supervision. This gap of more than 20 percentage points (66.41% vs. 88.75%) confirms that high-ratio masking is essential: it forces the network to infer missing geometries from context rather than simply memorizing local features.

Figure 7: Performance comparison with BRT under the 10-shot setting (panels: input model, ground truth, ours, BRT). Left: the segmentation task, where our method predicts face labels more accurately; colors denote the classes ExtrudeSide, ExtrudeEnd, CutSide, CutEnd, Fillet, Chamfer, RevolveSide, and RevolveEnd. Right: the part classification task, where our method correctly identifies all shapes as n (class 13), while BRT misclassifies the last two.

Table 3: Comparison on SolidLetter classification under different levels of supervision. The k-shot setting denotes using k labeled training samples for each class. "Ours*" denotes our model pre-trained without SolidLetter data. Accuracy (Acc, %) is reported.

Model        10-shot Acc  20-shot Acc  Full Acc
BRep-BERT    68.71        75.92        97.76
BRT          54.84        62.69        97.35
BRepFormer   76.53        84.25        97.59
Ours*        81.83        88.47        97.78
Ours         82.51        89.58        97.91

3. Architectural Routing (Global to Local).
Reversing our architecture to apply the MPNN before the Transformer (MPNN first) significantly degrades performance (e.g., 76.39% vs. 88.75% at 0.1%). This suggests that establishing a global, coarse-to-fine geometric context before local topological message passing is a better inductive bias for BRep data.
4. Representation Transferability. When the pre-trained encoder is frozen and only the downstream MLP head is updated (Frozen encoder), the model still achieves 82.01% accuracy at 0.1% supervision. This strong performance with frozen representations indicates that our pre-training framework extracts high-quality, task-agnostic geometric features.
5. Masking Strategies. Instead of masking the inputs, we randomly mask the gAAG features after the BRep encoder (Mask gAAG). This consistently underperforms our default (e.g., 78.63% vs. 88.75% at 0.1%), confirming that input-level masking better guides pre-training.

5. Conclusion

We propose the Masked BRep Autoencoder, a novel self-supervised framework for CAD representation learning. Our method effectively captures both the geometric and topological structures of BRep models by directly reconstructing the raw geometries and attributes of masked faces and edges, which establishes a rigorous information bottleneck. With a hierarchical architecture that integrates global geometric context via the cross-scale mutual attention (CSMA) bottleneck and preserves crucial local details through explicit local message passing, our encoder learns rich, transferable representations. Extensive experiments on machining feature recognition, modeling segmentation, and part classification benchmarks demonstrate the effectiveness and versatility of our approach. Notably, our method achieves strong performance with very limited supervision and remains competitive under full-data settings. These results highlight the practicality and generalizability of our framework for a wide range of downstream tasks.
In the future, as larger datasets become available, our framework has the potential to scale to broader applications, such as BRep generative models.

Table 4: Ablation study on MFInstSeg under various supervision ratios. The core architectural and pre-training designs are evaluated.

Configuration               0.1%   0.5%   1%     1.5%   2%     3%     100%
Single Res. (13 × 13 only)  85.83  93.14  95.19  95.88  97.16  97.42  99.27
Triple Res. (w/ 7 × 7)      86.41  94.46  96.40  97.04  97.38  97.78  99.42
w/o Masking (0% Mask)       66.41  84.19  92.99  94.20  95.33  95.96  99.43
MPNN first                  76.39  92.10  94.95  95.62  96.12  96.41  99.06
Mask gAAG                   78.63  92.98  94.36  95.11  95.75  96.48  99.16
Frozen encoder              82.01  92.71  94.70  95.74  96.15  96.56  98.64
Ours (Default)              88.75  96.19  97.31  97.67  97.92  98.26  99.53

Limitations. Although our exhaustive dual-resolution sampling is crucial for capturing fine-grained details, it inherently increases the computational and storage overhead during data preprocessing. Consequently, scaling this face-level representation to massive industrial assemblies introduces significant memory bottlenecks, restricting the maximum sequence length during training.

References

[1] Y. Shi, Y. Zhang, R. Harik, Manufacturing feature recognition with a 2d convolutional neural network, CIRP Journal of Manufacturing Science and Technology 30 (2020) 36–57.
[2] H. Zhang, S. Zhang, Y. Zhang, J. Liang, Z. Wang, Machining feature recognition based on a novel multi-task deep learning network, Robotics and Computer-Integrated Manufacturing 77 (2022) 102369.
[3] X. Yao, D. Wang, T. Yu, C. Luan, J. Fu, A machining feature recognition approach based on hierarchical neural network for multi-feature point cloud models, Journal of Intelligent Manufacturing 34 (6) (2023) 2599–2610.
[4] H. Wu, R. Lei, Y. Peng, L. Gao, Aagnet: A graph neural network towards multi-task machining feature recognition, Robotics and Computer-Integrated Manufacturing 86 (2024) 102661.
[5] B. T. Jones, M. Hu, M. Kodnongbua, V.
G. Kim, A. Schulz, Self-supervised representation learning for cad, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2023, pp. 21327–21336.
[6] Y. Lou, X. Li, H. Chen, X. Zhou, Brep-bert: Pre-training boundary representation bert with sub-graph node contrastive learning, in: Proceedings of the 32nd ACM International Conference on Information and Knowledge Management, 2023, pp. 1657–1666.
[7] J. Hou, C. Luo, F. Qin, Y. Shao, X. Chen, Fus-gcn: Efficient b-rep based graph convolutional networks for 3d-cad model classification and retrieval, Advanced Engineering Informatics 56 (2023) 102008.
[8] Q. Zou, L. Zhu, Bringing attention to cad: Boundary representation learning via transformer, Computer-Aided Design (2025) 103940.
[9] Y. Dai, X. Huang, Y. Bai, H. Guo, H. Gan, L. Yang, Y. Shi, Brepformer: Transformer-based b-rep geometric feature recognition, in: Proceedings of the 2025 International Conference on Multimedia Retrieval, 2025, pp. 155–163.
[10] P. K. Jayaraman, A. Sanghi, J. G. Lambourne, K. D. Willis, T. Davies, H. Shayani, N. Morris, Uv-net: Learning from boundary representations, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 11703–11712.
[11] J. G. Lambourne, K. D. Willis, P. K. Jayaraman, A. Sanghi, P. Meltzer, H. Shayani, Brepnet: A topological message passing system for solid models, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2021, pp. 12773–12782.
[12] S. Zhang, Z. Guan, H. Jiang, X. Wang, P. Tan, Brepmfr: Enhancing machining feature recognition in b-rep models through deep learning and domain adaptation, Computer Aided Geometric Design 111 (2024) 102318.
[13] X. Zheng, H. Chen, F. He, X. Liu, Sfrgnn-da: An enhanced graph neural network with domain adaptation for feature recognition in structural parts machining, Journal of Manufacturing Systems 81 (2025) 16–33.
[14] A. R. Colligan, T.
T. Robinson, D. C. Nolan, Y. Hua, W. Cao, Hierarchical cadnet: Learning from b-reps for machining feature recognition, Computer-Aided Design 147 (2022) 103226.
[15] C. Yao, K. Wu, Z. Zheng, S. Xing, X.-M. Fu, Brepmae: Self-supervised masked brep autoencoders for machining feature recognition, arXiv preprint arXiv:2602.22701 (2026).
[16] K. He, X. Chen, S. Xie, Y. Li, P. Dollár, R. Girshick, Masked autoencoders are scalable vision learners, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 16000–16009.
[17] H. Zhang, W. Wang, S. Zhang, Z. Wang, Y. Zhang, J. Zhou, B. Huang, Point cloud self-supervised learning for machining feature recognition, Journal of Manufacturing Systems 77 (2024) 78–95.
[18] R. Lei, H. Wu, Y. Peng, Mfpointnet: A point cloud-based neural network using selective downsampling layer for machining feature recognition, Machines 10 (12) (2022) 1165.
[19] H.-X. Guo, Y. Liu, H. Pan, B. Guo, Implicit conversion of manifold b-rep solids by neural halfspace representation, ACM Transactions on Graphics (TOG) 41 (6) (2022) 1–15.
[20] Z. Zhang, P. Jaiswal, R. Rai, Featurenet: Machining feature recognition based on 3d convolution neural network, Computer-Aided Design 101 (2018) 12–22.
[21] B. Jones, D. Hildreth, D. Chen, I. Baran, V. G. Kim, A. Schulz, Automate: A dataset and learning approach for automatic mating of cad assemblies, ACM Transactions on Graphics (TOG) 40 (6) (2021) 1–18.
[22] S. Bian, D. Grandi, K. Hassani, E. Sadler, B. Borijin, A. Fernandes, A. Wang, T. Lu, R. Otis, N. Ho, et al., Material prediction for design automation using graph representation learning, in: International Design Engineering Technical Conferences and Computers and Information in Engineering Conference, Vol. 86229, American Society of Mechanical Engineers, 2022, p. V03AT03A001.
[23] K. D. Willis, P. K. Jayaraman, H. Chu, Y. Tian, Y. Li, D. Grandi, A. Sanghi, L. Tran, J. G. Lambourne, A.
Solar-Lezama, et al., Joinable: Learning bottom-up assembly of parametric cad joints, in: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 15849–15860.
[24] H. Zhang, W. Wang, S. Zhang, Y. Zhang, J. Zhou, Z. Wang, B. Huang, R. Huang, A novel method based on deep reinforcement learning for machining process route planning, Robotics and Computer-Integrated Manufacturing 86 (2024) 102688.
[25] F. Ning, Y. Shi, M. Cai, W. Xu, Part machining feature recognition based on a deep learning method, Journal of Intelligent Manufacturing 34 (2) (2023) 809–821.
[26] X. Liu, Y. Li, T. Deng, P. Wang, K. Lu, J. Chen, D. Yang, A supervised community detection method for automatic machining region construction in structural parts nc machining, Journal of Manufacturing Systems 62 (2022) 367–376.
[27] P. Wang, W.-A. Yang, Y. You, A hybrid learning framework for manufacturing feature recognition using graph neural networks, Journal of Manufacturing Processes 85 (2023) 387–404.
[28] Z. Li, X. Tong, P. Shi, M. Cai, F. Han, Classification of non-standard mechanical parts with graph convolutional networks, Engineering Applications of Artificial Intelligence 153 (2025) 110879.
[29] X. Xu, J. Lambourne, P. Jayaraman, Z. Wang, K. Willis, Y. Furukawa, Brepgen: A b-rep generative diffusion model with structured latent geometry, ACM Transactions on Graphics (TOG) 43 (4) (2024) 1–14.
[30] J. Zhang, H. Zhang, C. Xia, L. Sun, Graph-bert: Only attention is needed for learning graph representations, arXiv preprint arXiv:2001.05140 (2020).
[31] Y. Rong, Y. Bian, T. Xu, W. Xie, Y. Wei, W. Huang, J. Huang, Self-supervised graph transformer on large-scale molecular data, Advances in Neural Information Processing Systems 33 (2020).
[32] G. Mialon, D. Chen, M. Selosse, J. Mairal, Graphit: Encoding graph structure in transformers (2021).
[33] Z. Wu, P. Jain, M. Wright, A. Mirhoseini, J. E. Gonzalez, I.
Stoica, Representing long-range context for graph neural networks with global attention, in: M. Ranzato, A. Beygelzimer, Y. Dauphin, P. Liang, J. W. Vaughan (Eds.), Advances in Neural Information Processing Systems, Vol. 34, Curran Associates, Inc., 2021, pp. 13266–13279.
[34] D. Kreuzer, D. Beaini, W. L. Hamilton, V. Létourneau, P. Tossou, Rethinking graph transformers with spectral attention, in: A. Beygelzimer, Y. Dauphin, P. Liang, J. W. Vaughan (Eds.), Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=huAdB-Tj4yG
[35] C. Ying, T. Cai, S. Luo, S. Zheng, G. Ke, D. He, Y. Shen, T.-Y. Liu, Do transformers really perform bad for graph representation?, arXiv preprint arXiv:2106.05234 (2021).
[36] Q. Wu, W. Zhao, C. Yang, H. Zhang, F. Nie, H. Jiang, Y. Bian, J. Yan, Sgformer: Simplifying and empowering transformers for large-graph representations, Advances in Neural Information Processing Systems 36 (2023) 64753–64773.
[37] W. Zhang, Z. Sheng, Y. Jiang, Y. Xia, J. Gao, Z. Yang, B. Cui, Evaluating deep graph neural networks, arXiv preprint arXiv:2108.00955 (2021).
[38] I. Cho, Y. Yoo, S. Jeon, S. J. Kim, Representing 3d shapes with 64 latent vectors for 3d diffusion models, in: Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2025, pp. 28556–28566.
[39] Y. Yang, C. Feng, Y. Shen, D. Tian, Foldingnet: Point cloud auto-encoder via deep grid deformation, in: Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 206–215.

Appendix A. BRep Encoder Details

Appendix A.1. Input attribute

The set of attribute information defined for each face is as follows:
• Type (6D): One-hot vector indicating the surface type (plane, cylinder, cone, sphere, torus, or NURBS).
• BBox (6D): The (x, y, z) coordinates of the two diagonal vertices of the axis-aligned bounding box (AABB).
• Centroid (3D): The (x, y, z) coordinates of the face's centroid.
• Area (1D): The total area of the face.
Together, these form a 16D face attribute vector (6 + 6 + 3 + 1).

Similarly, the set of attribute information defined for each edge is:
• Type (5D): One-hot vector indicating the curve type (Line, Circle, Ellipse, BSplineCurve, or Other).
• Length (1D): The total length of the edge.
• Convexity (3D): One-hot vector indicating the edge's convexity (concave, convex, or smooth).
Together, these form a 9D edge attribute vector (5 + 1 + 3).

Appendix A.2. Architecture of BRep encoder

Our BRep encoder consists of 7 modules in total:
• Two 2D CNNs for face geometry (one for each resolution). These are separate instances that both use the same architecture (Table A.5).
• One 1D CNN for edge geometry (Table A.6).
• One MLP for face attributes (Table A.7).
• One MLP for edge attributes (Table A.8).
• One MLP for face feature fusion (Table A.9).
• One MLP for edge feature fusion (Table A.9).

Appendix B. Hierarchical Graph Transformer Details

Appendix B.1. Architecture of Cross-Scale Mutual Attention (CSMA) Block

The CSMA block is built from two distinct components:
• Self-Attention Block (SA): Our SA block is a standard Transformer encoder layer. It uses a Post-LayerNorm design and consists of one Multi-Head Attention module followed by a Feed-Forward Network (FFN).
• Cross-Attention Block (CA): Our CA block is a simplified module designed only for feature aggregation. It consists of a single Multi-Head Attention module.
The key hyperparameters used to configure both SA and CA blocks are shared and detailed in Table B.10.

Table A.5: Architecture of the 2D CNN for face geometry. The same architecture is applied to both face sampling resolutions. The input grid size is denoted as N × N, where N ∈ {3, 13}. The convolution kernel size is k, the padding is p, and the number of groups for GroupNorm is G.
Operator              Input Shape   Output Shape
Conv2D, k = 3, p = 1  7 × N × N     64 × N × N
GroupNorm, G = 8      64 × N × N    64 × N × N
ReLU                  64 × N × N    64 × N × N
Conv2D, k = 3, p = 1  64 × N × N    128 × N × N
GroupNorm, G = 16     128 × N × N   128 × N × N
ReLU                  128 × N × N   128 × N × N
Conv2D, k = 3, p = 1  128 × N × N   256 × N × N
GroupNorm, G = 32     256 × N × N   256 × N × N
ReLU                  256 × N × N   256 × N × N
AdaptiveAvgPool2D     256 × N × N   256 × 1 × 1
Flatten               256 × 1 × 1   256

Table A.6: Architecture of the 1D CNN for edge geometry. The input is a sequence of 13 points sampled along the edge, each represented by a 12D vector. The convolution kernel size is k, the padding is p, and the number of groups for GroupNorm is G.

Operator              Input Shape   Output Shape
Conv1D, k = 3, p = 1  12 × 13       64 × 13
GroupNorm, G = 8      64 × 13       64 × 13
ReLU                  64 × 13       64 × 13
Conv1D, k = 3, p = 1  64 × 13       256 × 13
GroupNorm, G = 32     256 × 13      256 × 13
ReLU                  256 × 13      256 × 13
AdaptiveAvgPool1D     256 × 13      256 × 1
Flatten               256 × 1       256

Appendix B.2. Architecture of MPNN Block

We adopt a Message Passing Neural Network (MPNN) block to propagate information across the gAAG topology. The update consists of three steps: edge update, message aggregation, and node update. Denoting $f_i^{(l)}$ as the feature of node $i$ at layer $l$, and $e_{ji}^{(l)}$ as the feature of the edge from node $j$ to node $i$, the update is defined as follows:
• Edge update: The edge feature is updated by combining the features of its incident nodes with the current edge embedding:
$$ e_{ji}^{(l+1)} = \mathrm{MLP}\left( f_j^{(l)} \,\|\, f_i^{(l)} \,\|\, e_{ji}^{(l)} \right), \quad l = 0, 1, \ldots, L-1, \tag{B.1} $$
where $\|$ denotes vector concatenation, and $L$ is the total number of MPNN layers. In our implementation, we use $L = 2$. The input dimension of the MLP is 256 × 3, followed by a hidden layer of dimension 256 with ReLU activation and an output layer of dimension 256.

Table A.7: Architecture of the MLP for face attributes.
Operator   Input Shape  Output Shape
Linear     16           128
LayerNorm  128          128
GELU       128          128
Linear     128          128

Table A.8: Architecture of the MLP for edge attributes.

Operator   Input Shape  Output Shape
Linear     9            128
LayerNorm  128          128
GELU       128          128
Linear     128          128

• Message aggregation: Node $i$ aggregates messages from its neighbors using a graph attention network (GAT):
$$ \tilde{f}_i^{(l+1)} = \mathrm{GAT}\left( f_i^{(l)}, \left\{ \left( f_j^{(l)}, e_{ji}^{(l+1)} \right) \,\middle|\, j \in \mathcal{N}(i) \right\} \right), \tag{B.2} $$
where $\mathcal{N}(i)$ is the set of indices of all neighbor nodes of the $i$-th node.
• Node update: The aggregated message is passed through a two-layer feed-forward network (FFN), followed by a residual addition and layer normalization:
$$ f_i^{(l+1)} = \mathrm{LayerNorm}\left( f_i^{(l)} + \mathrm{FFN}\left( \tilde{f}_i^{(l+1)} \right) \right). $$
The FFN consists of two linear layers with a GELU activation. The input and output dimensions are both 256, and the hidden layer has a dimension of 512.

Appendix C. BRep Decoder Details

The BRep decoder consists of four parallel branches that reconstruct the face and edge information from latent graph features. Specifically:
• Face Geometry Branch: This branch employs a two-stage FoldingNet decoder built from MLPs to reconstruct the 7D geometric features of each face sampling point, including 3D coordinates, 3D normals, and a 1D trimming indicator. In the first stage, a 2D grid point (u, v) is concatenated with the 256D reconstructed face feature and passed through a three-layer MLP to generate a 64D intermediate feature. In the second stage, this 64D feature is concatenated with the same 256D face feature and further processed by another three-layer MLP to produce the final 7D output. The detailed architecture is summarized in Table C.11.
• Face Attribute Branch: This branch is a single MLP that regresses the 16D face attributes, including face type, area, centroid, and bounding box information. See Table C.12 for details.
• Edge Geometry Branch: Similar to the face geometry branch, this decoder employs a two-stage FoldingNet structure to reconstruct the 12D geometric features of each edge sampling point, including 3D coordinates, tangent vectors, and incident face normals. The first stage takes the edge feature concatenated with a 1D curve parameter u and produces a 64D intermediate representation. The second stage concatenates this representation with the edge feature again and decodes it into the final 12D output. See Table C.13 for details.
• Edge Attribute Branch: A lightweight MLP regresses the 9D edge attributes, including edge type, length, and convexity. See Table C.14 for details.

Table A.9: Architecture of the MLP for feature fusion. This network fuses the geometric features (256D) and attribute features (128D) into a final 256D vector. The input shape is the concatenated dimension (256 + 128 = 384).

Operator   Input Shape  Output Shape
Linear     384          256
LayerNorm  256          256
GELU       256          256
Linear     256          256

Table B.10: Hyperparameters for our Transformer blocks.

Hyperparameter             Value
Embedding Dimension        256
Number of Attention Heads  8
FFN Hidden Dimension       1024
FFN Activation             GELU
Dropout Rate               0.3

Appendix D. Losses for pre-training

We define our pre-training loss function as follows:
$$ \mathcal{L} = \lambda_1 \mathcal{L}_{\text{feat}} + \lambda_2 \mathcal{L}_{\text{face}}^{\text{geom}} + \lambda_3 \mathcal{L}_{\text{face}}^{\text{attr}} + \lambda_4 \mathcal{L}_{\text{edge}}^{\text{geom}} + \lambda_5 \mathcal{L}_{\text{edge}}^{\text{attr}}, \tag{D.1} $$
where $\mathcal{L}_{\text{feat}}$ supervises the reconstruction of the graph node and edge features that our BRep encoder produces for the masked faces and edges, and $\mathcal{L}_{\text{face}}^{\text{geom}}$, $\mathcal{L}_{\text{face}}^{\text{attr}}$, $\mathcal{L}_{\text{edge}}^{\text{geom}}$, and $\mathcal{L}_{\text{edge}}^{\text{attr}}$ measure the difference between the input BRep information and the reconstructed information. In our experiments, we set $\lambda_1 = 1$, $\lambda_2 = 1$, $\lambda_3 = 0.3$, $\lambda_4 = 0.5$, and $\lambda_5 = 0.3$.

Feature term. The feature term ensures that the face and edge features obtained through the graph decoder are consistent with the ground truth.
Therefore, we adopt the mean squared error (MSE) to measure the difference:
$$ \mathcal{L}_{\text{feat}} = \frac{1}{|\mathcal{M}_f|} \sum_{i \in \mathcal{M}_f} \left\| F^{m}_{\text{recon},i} - F^{t}_{\text{high},i} \right\|_2^2 + \frac{1}{|\mathcal{M}_e|} \sum_{i \in \mathcal{M}_e} \left\| E^{m}_{\text{recon},i} - E^{t}_{\text{high},i} \right\|_2^2, \tag{D.2} $$
where $\mathcal{M}_f$ and $\mathcal{M}_e$ are the sets of masked faces and edges, respectively, and $|\mathcal{M}_f|$ and $|\mathcal{M}_e|$ are their cardinalities. $F^{m}_{\text{recon},i}$ and $E^{m}_{\text{recon},i}$ are the face and edge features reconstructed by our decoder. $F^{t}_{\text{high},i}$ and $E^{t}_{\text{high},i}$ are the ground truth, generated by the BRep encoder from the original geometric information of the masked faces and edges.

Table C.11: Architecture of the FoldingNet decoder for face geometry.

Operator  Input Shape  Output Shape
Linear    256 + 2      512
ReLU      512          512
Linear    512          512
ReLU      512          512
Linear    512          64
Linear    256 + 64     512
ReLU      512          512
Linear    512          512
ReLU      512          512
Linear    512          7

Table C.12: Architecture of the MLP decoder for face attribute information.

Operator   Input Shape  Output Shape
Linear     256          256
LayerNorm  256          256
GELU       256          256
Linear     256          16

Face geometry term. The face geometric information of each sampling point is a 7D vector $(p_i, n_i, \tau_i)$, where $p_i$ is its coordinate, $n_i$ is its face normal, and $\tau_i$ indicates whether the point is trimmed ($\tau_i = 0$ means it is trimmed and $\tau_i = 1$ means it is retained). Because these components have different physical meanings, we compute them with separate loss functions and weights:
$$ \mathcal{L}_{\text{face}}^{\text{geom}} = \alpha_1 \mathcal{L}_{\text{face}}^{\text{coord}} + \alpha_2 \mathcal{L}_{\text{face}}^{\text{norm}} + \alpha_3 \mathcal{L}_{\text{face}}^{\text{trim}}, \tag{D.3} $$
where
$$ \mathcal{L}_{\text{face}}^{\text{coord}} = \frac{1}{|\mathcal{P}_f|} \sum_{i \in \mathcal{P}_f} \left\| \hat{p}_i - p_i \right\|_2^2, \tag{D.4} $$
$$ \mathcal{L}_{\text{face}}^{\text{norm}} = \frac{1}{|\mathcal{P}_f|} \sum_{i \in \mathcal{P}_f} \left\| \hat{n}_i - n_i \right\|_2^2, \tag{D.5} $$
$$ \mathcal{L}_{\text{face}}^{\text{trim}} = \frac{1}{|\mathcal{P}_f|} \sum_{i \in \mathcal{P}_f} \ell_{\text{bce}}\left( \hat{\tau}_i, \tau_i \right). \tag{D.6} $$
$\mathcal{P}_f$ is the set of sampling points at the highest resolution on all BRep faces, and $|\mathcal{P}_f|$ is its cardinality. $\hat{p}_i$, $\hat{n}_i$, and $\hat{\tau}_i$ are the predicted coordinate, normal, and trimming indicator of these sampling points, respectively.
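To make the per-component weighting of Eqs. (D.3) to (D.6) concrete, the face geometry term can be sketched in plain Python as follows, using the binary cross-entropy of Eq. (D.7) and the weights used in our experiments ($\alpha_1 = 1$, $\alpha_2 = 0.5$, $\alpha_3 = 0.3$). This is a minimal illustration rather than our training code: the flat 7-tuple point layout, the helper names `sq_dist`, `bce`, and `face_geom_loss`, and the clamping constant `eps` are all hypothetical choices, and a practical implementation would operate on batched tensors.

```python
import math


def sq_dist(a, b):
    """Squared Euclidean distance ||a - b||_2^2 between equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def bce(tau_hat, tau, eps=1e-7):
    """Binary cross-entropy of Eq. (D.7); eps (an assumption) clamps tau_hat away from 0 and 1."""
    t = min(max(tau_hat, eps), 1.0 - eps)
    return -(tau * math.log(t) + (1.0 - tau) * math.log(1.0 - t))


def face_geom_loss(pred, target, alpha=(1.0, 0.5, 0.3)):
    """Weighted face geometry loss of Eqs. (D.3)-(D.6).

    pred, target: equal-length lists of hypothetical 7-tuples
    (px, py, pz, nx, ny, nz, tau), one per sampling point in P_f.
    The predicted tau is a probability; the target tau is 0 (trimmed) or 1 (retained).
    """
    if not pred or len(pred) != len(target):
        raise ValueError("pred and target must be non-empty and equally long")
    n = len(pred)
    # Eq. (D.4): mean squared L2 error of the 3D coordinates.
    coord = sum(sq_dist(p[0:3], t[0:3]) for p, t in zip(pred, target)) / n
    # Eq. (D.5): mean squared L2 error of the 3D normals.
    normal = sum(sq_dist(p[3:6], t[3:6]) for p, t in zip(pred, target)) / n
    # Eq. (D.6): mean BCE of the trimming indicator.
    trim = sum(bce(p[6], t[6]) for p, t in zip(pred, target)) / n
    a1, a2, a3 = alpha
    return a1 * coord + a2 * normal + a3 * trim


if __name__ == "__main__":
    # Perfect coordinates and normals, maximally uncertain trimming prediction:
    # only the trimming term contributes, giving alpha_3 * ln 2.
    target = [(0, 0, 0, 0, 0, 1, 1), (1, 0, 0, 0, 0, 1, 0)]
    pred = [(0, 0, 0, 0, 0, 1, 0.5), (1, 0, 0, 0, 0, 1, 0.5)]
    print(face_geom_loss(pred, target))  # 0.3 * ln 2, approximately 0.2079
```

Averaging each term over all points of $\mathcal{P}_f$ (rather than only masked points) follows the definition above, where the explicit geometry losses cover the whole model; separate weights keep the bounded BCE term from being dominated by the coordinate and normal errors, or vice versa.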
$\ell_{\text{bce}}$ is the binary cross-entropy (BCE) function, defined as follows:
$$ \ell_{\text{bce}}(\hat{\tau}, \tau) = -\left[ \tau \log \hat{\tau} + (1 - \tau) \log(1 - \hat{\tau}) \right]. \tag{D.7} $$
In our experiments, we set $\alpha_1 = 1$, $\alpha_2 = 0.5$, and $\alpha_3 = 0.3$.

Table C.13: Architecture of the FoldingNet decoder for edge geometry.

Operator  Input Shape  Output Shape
Linear    256 + 1      512
ReLU      512          512
Linear    512          512
ReLU      512          512
Linear    512          64
Linear    256 + 64     512
ReLU      512          512
Linear    512          512
ReLU      512          512
Linear    512          12

Table C.14: Architecture of the MLP decoder for edge attributes.

Operator   Input Shape  Output Shape
Linear     256          256
LayerNorm  256          256
GELU       256          256
Linear     256          9

Face attribute term. The face attribute information is recovered from the graph node features by an MLP. We use the following loss function to encourage the predicted face attributes to be close to the ground-truth values:
$$ \mathcal{L}_{\text{face}}^{\text{attr}} = \frac{1}{|\mathcal{F}|} \sum_{i \in \mathcal{F}} \left\| \hat{a}^{\text{face}}_i - a^{\text{face}}_i \right\|_2^2, \tag{D.8} $$
where $\mathcal{F}$ is the set of BRep faces and $|\mathcal{F}|$ is its cardinality. $\hat{a}^{\text{face}}_i$ and $a^{\text{face}}_i$ are the predicted and original face attribute vectors, respectively.

Edge geometry term. Since edges carry no trimming information, we use the MSE over the entire 12D edge information:
$$ \mathcal{L}_{\text{edge}}^{\text{geom}} = \frac{1}{|\mathcal{P}_e|} \sum_{i \in \mathcal{P}_e} \left\| \hat{c}_i - c_i \right\|_2^2, \tag{D.9} $$
where $\mathcal{P}_e$ is the set of sampling points on all BRep edges and $|\mathcal{P}_e|$ is the number of edge sampling points. $\hat{c}_i$ and $c_i$ are the predicted and original edge geometric information, respectively.

Edge attribute term. Analogous to the face attribute term, our edge attribute loss function is defined as follows:
$$ \mathcal{L}_{\text{edge}}^{\text{attr}} = \frac{1}{|\mathcal{E}|} \sum_{i \in \mathcal{E}} \left\| \hat{a}^{\text{edge}}_i - a^{\text{edge}}_i \right\|_2^2, \tag{D.10} $$
where $\mathcal{E}$ is the set of BRep edges and $|\mathcal{E}|$ is its cardinality. $\hat{a}^{\text{edge}}_i$ and $a^{\text{edge}}_i$ are the predicted and original 9D BRep edge attribute vectors, respectively.

Appendix E.
Fine-tuning Details

Our pre-trained encoder is adapted to downstream tasks by attaching a small, task-specific head (see Table E.15) and fine-tuning on a small labeled dataset with task-specific losses.

Loss for machining feature recognition. For the machining feature recognition task, the task-specific head takes each face's feature $f_i^{\text{latent}} = (F^{\text{latent}})_i$, the $i$-th row of $F^{\text{latent}}$, as input and outputs a vector $z_i^{\text{mach}} \in \mathbb{R}^{C_{\text{mach}}}$, where the constant $C_{\text{mach}}$ is the number of machining feature classes. We use the following loss function to train our network:
$$ \mathcal{L}_{\text{mach}} = \frac{1}{N_f} \sum_{i=1}^{N_f} \ell_{\text{ce}}\left( z_i^{\text{mach}}, y_i^{\text{mach}} \right), \tag{E.1} $$
where $N_f$ is the number of faces in the BRep model and $y_i^{\text{mach}}$ is a one-hot vector representing the ground truth. $\ell_{\text{ce}}$ is the multiclass cross-entropy function:
$$ \ell_{\text{ce}}(z, y) = -\sum_{i=1}^{C} y_i \log \frac{\exp(z_i)}{\sum_{j=1}^{C} \exp(z_j)}, \tag{E.2} $$
where $C$ is the dimension of $z$ and $y$, i.e., the number of classes.

Loss for modeling segmentation. As in the machining feature recognition task, the input of our task-specific head is $f_i^{\text{latent}}$, and the output is $z_i^{\text{seg}}$. Our modeling segmentation loss function is defined as follows:
$$ \mathcal{L}_{\text{seg}} = \frac{1}{N_f} \sum_{i=1}^{N_f} \ell_{\text{ce}}\left( z_i^{\text{seg}}, y_i^{\text{seg}} \right), \tag{E.3} $$
where $z_i^{\text{seg}} \in \mathbb{R}^{C_{\text{seg}}}$ is the output of our network, $y_i^{\text{seg}}$ is the ground truth, and $C_{\text{seg}}$ is the number of segmentation classes.

Loss for classification. Since the classification task involves classifying the entire BRep, we employ a global max pooling layer to aggregate the BRep face features $F^{\text{latent}}$ into the BRep feature $B^{\text{latent}}$:
$$ (B^{\text{latent}})_j = \max_{1 \le i \le N_f} (F^{\text{latent}})_{i,j}. \tag{E.4} $$
We then feed $B^{\text{latent}}$ into the task-specific head to generate an output vector $z^{\text{cls}} \in \mathbb{R}^{C_{\text{cls}}}$, where $C_{\text{cls}}$ is the number of CAD classes. The loss function is as follows:
$$ \mathcal{L}_{\text{cls}} = \ell_{\text{ce}}\left( z^{\text{cls}}, y^{\text{cls}} \right), \tag{E.5} $$
where $y^{\text{cls}}$ is the ground-truth one-hot vector.

Appendix F. Evaluation metrics

Accuracy.
Accuracy is defined as the ratio of correctly predicted labels to the total number of labels:
$$ \mathrm{Acc} = \frac{N_{\text{cor}}}{N_{\text{total}}}, \tag{F.1} $$
where $N_{\text{cor}}$ is the number of correctly classified faces or shapes, and $N_{\text{total}}$ is the total number of labels in the evaluation set (e.g., the total number of faces for face-level tasks, or the total number of models for classification).

Table E.15: Architecture of the downstream task head. It consists of three linear layers, with GELU activation and dropout (rate = 0.3) applied after each hidden layer. C denotes the number of output classes. The input is the encoded feature vector produced by our pre-trained encoder. For machining feature recognition and modeling segmentation, each face's feature is fed independently. For part classification, global max pooling is applied over all face features to obtain the input.

Operator  Input Shape  Output Shape
Linear    256          1024
GELU      1024         1024
Dropout   1024         1024
Linear    1024         256
GELU      256          256
Dropout   256          256
Linear    256          C

Mean intersection over union. To evaluate per-face prediction quality, especially under class imbalance, we use the mean intersection over union (mIoU). For a given class $c$, let $A_c$ be the set of faces predicted as class $c$ and $B_c$ the set of faces whose ground-truth label is $c$. The IoU for class $c$ is defined as:
$$ \mathrm{IoU}_c = \frac{|A_c \cap B_c|}{|A_c \cup B_c|}. \tag{F.2} $$
The overall mIoU is the average over all $N_c$ classes:
$$ \mathrm{mIoU} = \frac{1}{N_c} \sum_{c=1}^{N_c} \mathrm{IoU}_c. \tag{F.3} $$
This metric measures the overlap between predicted and true labels and reflects both precision and recall at the class level.

Appendix G. Pre-training Convergence Analysis

To detail the pre-training optimization process, we visualize the convergence curves of the reconstruction losses. As shown in Fig. G.8, the y-axis represents the logarithmic loss value (log(Loss)), and the x-axis represents the training epochs.
The figure plots the total loss $\mathcal{L}$ alongside its five individual components: latent feature $\mathcal{L}_{\text{feat}}$, face geometry $\mathcal{L}_{\text{face}}^{\text{geom}}$, face attribute $\mathcal{L}_{\text{face}}^{\text{attr}}$, edge geometry $\mathcal{L}_{\text{edge}}^{\text{geom}}$, and edge attribute $\mathcal{L}_{\text{edge}}^{\text{attr}}$. Throughout the training process, all loss components decrease steadily. In the later epochs, all curves gradually plateau. This consistent trend indicates that the parallel decoding branches converge stably without exhibiting divergence during the masked autoencoding task.

Appendix H. Computational Cost Analysis

To provide details on the computational cost of the proposed method, we report the model parameters, floating-point operations (FLOPs), inference latency, and throughput. The profiling is conducted using CAD models with an average of 30 faces on a single NVIDIA A800 GPU, consistent with the hardware setup described in the main paper. As shown in Table H.16, we report the metrics separately for the self-supervised pre-training stage (comprising the full masked autoencoder architecture) and the downstream task stage (comprising the pre-trained encoder and the task-specific network).

Figure G.8: The pre-training loss convergence curves (x-axis: epoch, 0 to 80; y-axis: log(Loss)). The y-axis denotes the logarithmic loss, showing the total loss and its five individual components (feat, face geom, face attr, edge geom, edge attr) over the training epochs.

Table H.16: Computational cost profiling for the pre-training and downstream stages. Profiling is based on CAD models with an average of 30 faces.

Stage         Parameters (M)  FLOPs (G)  Latency (ms)  Throughput (models/s)
Pre-training  8.15            20.98      25.99         38.47
Downstream    6.09            5.26       10.42         96.01

Pre-training Cost: During the pre-training stage, the full architecture contains 8.15M parameters and requires 20.98G FLOPs per forward pass.
The average latency is 25.99 ms per model, corresponding to a throughput of 38.47 models per second; this is a one-time computational cost.

Downstream Cost: For downstream tasks, the pre-training decoder is replaced by task-specific networks tailored to the respective applications. The resulting downstream network comprises 6.09M parameters and requires 5.26 GFLOPs. The latency in this stage is 10.42 ms per model, with a throughput of 96.01 models per second.

Scalability Considerations: These profiling results are based on typical CAD models averaging 30 faces. When the framework is extended to large industrial assemblies with significantly more faces, the memory footprint and inference latency will increase accordingly.

Appendix I. Additional Ablation Studies

Due to the page limit of the main text, we provide additional ablation studies in this section to further validate our pre-training configurations and loss designs. All experiments in this section are conducted on the MFInstSeg dataset, following the same training protocol as described in the main paper. The quantitative results are summarized in Table I.17.

Table I.17: Additional ablation studies on MFInstSeg under various supervision ratios (accuracy, %).

| Configuration | 0.1% | 0.5% | 1% | 1.5% | 2% | 3% | 100% |
|---|---|---|---|---|---|---|---|
| w/o Mask attr | 84.48 | 94.15 | 95.42 | 96.34 | 96.60 | 97.20 | 99.41 |
| Feature all | 76.77 | 90.86 | 93.79 | 94.67 | 95.72 | 96.56 | 99.33 |
| Geometry masked | 78.03 | 91.14 | 93.91 | 95.23 | 95.81 | 96.71 | 99.37 |
| Mask ratio (50%) | 86.99 | 96.02 | 97.18 | 97.43 | 97.60 | 98.02 | 99.49 |
| Mask ratio (60%) | 87.41 | 96.12 | 97.19 | 97.46 | 97.73 | 98.05 | 99.51 |
| Mask ratio (80%) | 87.01 | 95.66 | 96.86 | 97.37 | 97.62 | 97.96 | 99.46 |
| Ours (Default) | 88.75 | 96.19 | 97.31 | 97.67 | 97.92 | 98.26 | 99.53 |

1. Masking Attributes vs. Geometry Only. In our default setting, we mask both the raw geometries and the discrete attributes. To validate this choice, we test a configuration in which only geometric information is masked, while attribute information remains fully visible to the network (w/o Mask attr). As shown in Table I.17, this results in a noticeable performance drop (e.g., from 88.75% to 84.48% at the 0.1% supervision level). This indicates that keeping attributes visible provides the network with a trivial shortcut to infer local semantics, thereby weakening the information bottleneck and hindering the learning of deep, transferable representations.

2. Supervision Domains for Loss Functions. We ablate the regions over which our pre-training losses are computed to justify the asymmetric design between feature and geometry supervision:

• Feature all: By default, the latent feature loss ($\mathcal{L}_{\mathrm{feat}}$) is computed only on the masked entities. If we force the network to compute this loss on all entities (both masked and unmasked), the accuracy drops drastically to 76.77% at 0.1% supervision. This is because unmasked features are already exposed to the encoder; supervising them encourages a trivial identity mapping, which distracts the network from its primary task of inferring the missing structures.

• Geometry masked: Conversely, our default explicit geometry losses ($\mathcal{L}_{\mathrm{face}}^{\mathrm{geom}}$, $\mathcal{L}_{\mathrm{edge}}^{\mathrm{geom}}$) are computed over the entire BRep model. If we restrict the geometry loss to only the masked regions (Geometry masked), performance drops to 78.03% at 0.1% supervision. Reconstructing the full geometry acts as a strong global regularizer, ensuring that the decoder maintains overall shape consistency and spatial coherence rather than optimizing masked patches in isolation.

3. Sensitivity to Masking Ratios. We also evaluate the sensitivity of our framework to different pre-training masking ratios (Mask ratio 50%, Mask ratio 60%, and Mask ratio 80%).
The results indicate that while our architecture remains relatively robust across masking regimes, our default configuration (a 70% masking ratio) yields the best and most balanced representations, consistently achieving the highest accuracy across all low-data settings.

Appendix J. Additional Visualization Results

To provide a more comprehensive qualitative evaluation, we present additional visualization results in this section. Fig. J.9 displays more reconstruction examples, specifically visualizing face coordinates and face normals, which further demonstrates the effectiveness of our masked pre-training strategy. For downstream tasks, we show extended results for machining feature recognition in Fig. J.10 and modeling segmentation in Fig. J.11. These visualizations confirm that our pre-trained encoder learns robust and generalizable representations for diverse CAD tasks.

Figure J.9: More reconstruction examples with face coordinates and face normals. [Columns: Input; Coordinate (Sampling, Masked, Reconstruction); Normal (Sampling, Masked, Reconstruction).]

Figure J.10: More results of machining feature recognition. [Eight examples, each showing the input model and five recognized features, e.g., triangular passage, 6-sides pocket, 2-sides through step, chamfer, through hole, blind hole, and O-ring.]
Figure J.11: More results of modeling segmentation.
