Gradient Atoms: Unsupervised Discovery, Attribution and Steering of Model Behaviors via Sparse Decomposition of Training Gradients

Training data attribution (TDA) methods ask which training documents are responsible for a model behavior. However, models often learn broad concepts shared across many examples. Moreover, existing TDA methods are supervised -- they require a predefi…

Authors: J Rosser

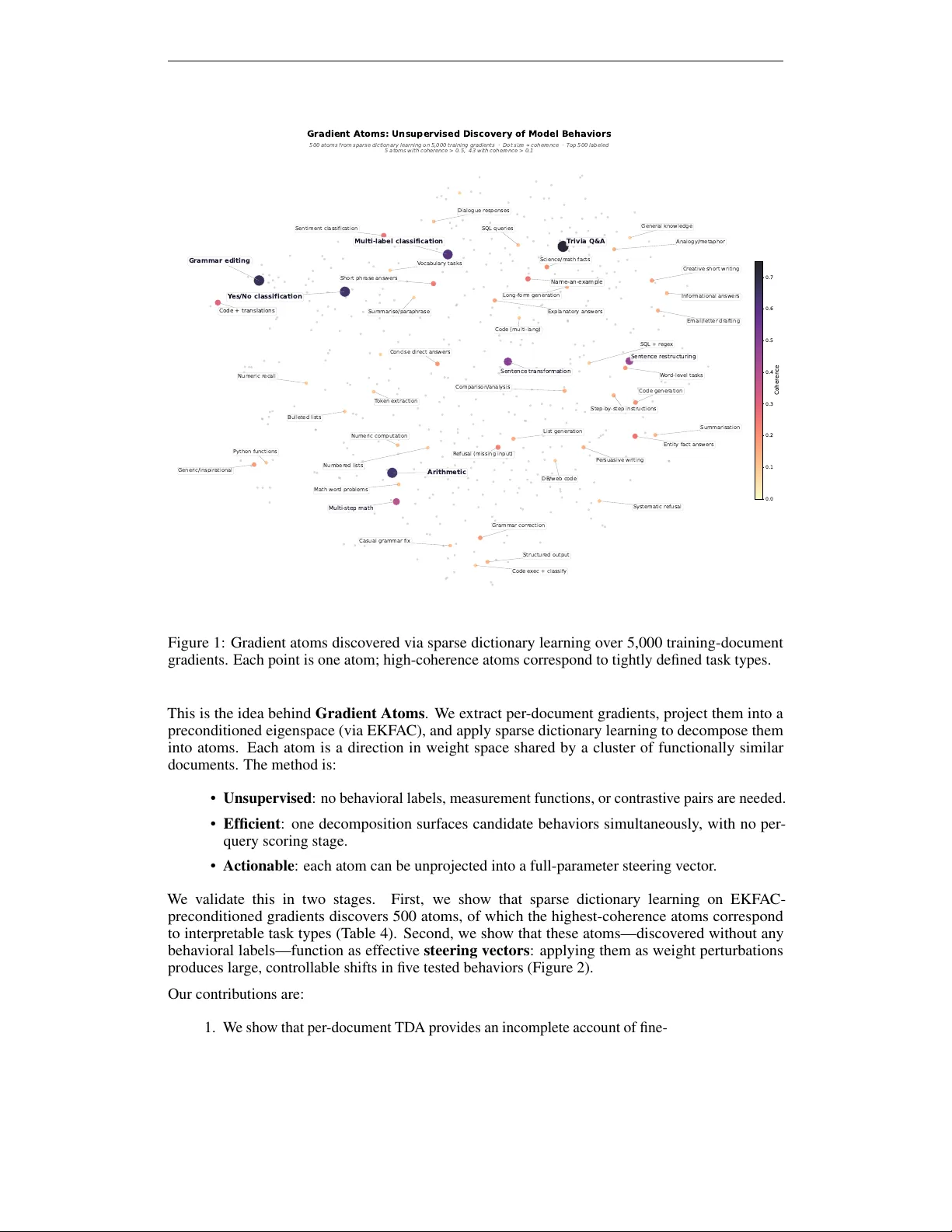

Gradient Atoms G R A D I E N T A T O M S : U N S U P E RV I S E D D I S C O V E RY , A T T R I B U T I O N A N D S T E E R I N G O F M O D E L B E H A V I O R S V I A S P A R S E D E C O M - P O S I T I O N O F T R A I N I N G G R A D I E N T S J Rosser FLAIR, Univ ersity of Oxford jrosser@robots.ox.ac.uk A B S T R AC T T raining data attribution (TD A) methods ask which training documents are respon- sible for a model behavior . Howe ver , models often learn broad concepts shared across many examples. Moreov er , existing TD A methods are supervised—they require a predefined query behavior , then score ev ery training document against it—making them both expensi ve and unable to surf ace behaviors the user did not think to ask about. W e present Gradient Atoms , an unsupervised method that decomposes per-document training gradients into sparse components (“atoms”) via dictionary learning in a preconditioned eigenspace. Each atom captures a shared update direction induced by a cluster of functionally similar documents, directly recovering the collecti ve structure that per-document methods do not address. Among 500 discov ered atoms, the highest-coherence ones recover in- terpretable task-type behaviors—refusal, arithmetic, yes/no classification, trivia QA—without any behavioral labels. These atoms double as effecti ve steering vectors: applying them as weight-space perturbations produces large, controllable shifts in model behavior (e.g., b ulleted-list generation 33% → 94%; systematic refusal 50% → 0%). The method requires no query–document scoring stage, and scales independently of the number of query beha viors of interest. Code is av ailable at https://github.com/jrosseruk/gradient_atoms . 1 I N T RO D U C T I O N T raining data attribution (TD A) attributes a model’ s behavior to the documents it was trained on (K oh & Liang, 2017; Grosse et al., 2023; Bae et al., 2024). Per-document attribution has proven valuable for identifying influential e xamples, debugging predictions, and curating datasets. Howe ver , per-document scoring captures only part of what training teaches. A model that learns to perform arithmetic during fine-tuning does so not because of an y single arithmetic example, but because many arithmetic e xamples induce a shared update direction across weights. More broadly , Ruis et al. (2024) sho w that fine-tuning primarily instils pr ocedural capabilities—task-lev el strategies such as classification, editing, or code generation—that emerge from the collecti ve gradient signal of functionally similar documents. Standard TD A also faces a practical bottleneck: it is supervised , requiring the user to specify a query behavior before scoring documents against it. This demands (1) knowing what behaviors to look for in adv ance, and (2) an O ( N ) scoring pass per query . For influence-function methods like EK- F A C (Grosse et al., 2023; Bae et al., 2024), attrib uting Q behaviors costs O ( Q × N ) query–document comparisons. For a comprehensi ve audit of learned behaviors, this is prohibiti vely expensi ve. W e address both gaps with a dif ferent question. Instead of asking “which document caused this behavior?”, we ask: what are the shared update directions that clusters of documents jointly induce? 1 Gradient Atoms 500 atoms from sparse dictionary learning on 5,000 training gradients · Dot size coherence · T op 500 labeled 5 atoms with coherence > 0.5, 43 with coherence > 0.1 Gradient Atoms: Unsupervised Discovery of Model Behaviors T rivia Q&A Grammar editing Y es/No classification Arithmetic Multi-label classification Sentence transfor mation Sentence r estructuring Multi-step math Code + translations Name-an-e xample Sentiment classification Entity fact answers Short phrase answers R efusal (missing input) Science/math facts Code generation Grammar cor r ection Concise dir ect answers Generic/inspirational W or d-level tasks Cr eative short writing Explanatory answers L ong-for m generation Comparison/analysis Step-by -step instructions List generation Email/letter draf ting P ersuasive writing Structur ed output Analogy/metaphor Infor mational answers Summarisation Dialogue r esponses Math wor d pr oblems Numeric computation Casual grammar fix SQL queries Systematic r efusal T ok en e xtraction Python functions Bulleted lists Number ed lists SQL + r ege x Code e x ec + classif y Code (multi-lang) DB/web code V ocabulary tasks Summarise/paraphrase Numeric r ecall General knowledge 0.0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 Coher ence Figure 1: Gradient atoms discovered via sparse dictionary learning over 5,000 training-document gradients. Each point is one atom; high-coherence atoms correspond to tightly defined task types. This is the idea behind Gradient Atoms . W e extract per-document gradients, project them into a preconditioned eigenspace (via EKF A C), and apply sparse dictionary learning to decompose them into atoms. Each atom is a direction in weight space shared by a cluster of functionally similar documents. The method is: • Unsupervised : no behavioral labels, measurement functions, or contrastiv e pairs are needed. • Efficient : one decomposition surfaces candidate behaviors simultaneously , with no per- query scoring stage. • Actionable : each atom can be unprojected into a full-parameter steering vector . W e validate this in two stages. First, we show that sparse dictionary learning on EKF A C- preconditioned gradients discovers 500 atoms, of which the highest-coherence atoms correspond to interpretable task types (T able 4). Second, we show that these atoms—discovered without any behavioral labels—function as effecti ve steering vectors : applying them as weight perturbations produces large, controllable shifts in fi ve tested behaviors (Figure 2). Our contributions are: 1. W e sho w that per-document TD A provides an incomplete account of fine-tuning and propose a richer unit of analysis: shared update directions in gradient space. 2. W e introduce Gradient Atoms, an unsupervised method that discov ers candidate model behaviors from training gradients alone, without supervision or per -query scoring. 3. W e demonstrate that discovered atoms function as ef fectiv e steering vectors, producing lar ge controllable shifts in model behavior without an y behavioral labels. 2 Gradient Atoms 2 R E L A T E D W O R K Sev eral concurrent works share our core insight that gradient similarity reflects functional similarity among training examples. GradientSpace (Sridharan et al., 2025) clusters LoRA gradients to identify “latent skills” but uses them to train specialised expert routers, not for interpretability or steering. Mode-Conditioning (W u et al., 2025) confirms that gradient clustering reliably reco vers functional groupings (98.7% F1), b ut applies this to test-time compute allocation. ELR OND (Skier ´ s et al., 2026) is philosophically closest: they decompose per-sample gradients into steerable directions via PCA and sparse autoencoders, but in dif fusion models rather than LLMs. Our contribution combines: (1) operating on training gradients rather than inference activ ations, (2) being fully unsupervised with no per-query scoring, and (3) the disco vered atoms directly functioning as steering vectors for LLMs. Standard TD A methods (K oh & Liang, 2017; Grosse et al., 2023; Bae et al., 2024) require a predefined query and O ( Q × N ) query–document comparisons; atoms complement this with unsupervised discov ery . In the activ ation-space literature, SAEs (Nanda et al., 2023) and gradient-informed variants (Olmo et al., 2025; Shu et al., 2025) decompose activ ations at inference time; atoms decompose what the model learned during training, across all layers simultaneously . Extended discussion is in Section A. 3 M E T H O D Consider a language model with parameters θ fine-tuned on a dataset of N input–output pairs { x 1 , . . . , x N } . Each document x i induces a gradient g i = ∇ θ L CE ( θ ; x i ) ∈ R d , where d is the number of trainable parameters. The gradient g i is the direction the model’ s weights would move to improv e on document i . Gradient Atoms decomposes these per -document gradients into sparse components; the highest-quality components isolate interpretable learned behaviors. The pipeline has fiv e steps. 3 . 1 P E R - D O C U M E N T G R A D I E N T E X T R A C T I O N For each training document x i , we compute the gradient of cross-entropy loss with respect to all trainable parameters, producing a gradient matrix G ∈ R N × d . Documents that require similar computations tend to produce similar gradient vectors. 3 . 2 E K FAC P R O J E C T I O N A N D P R E C O N D I T I O N I N G The raw gradient space is anisotropic—some directions hav e high curvature (small weight changes cause large loss changes) and others ha ve low curvature. W ithout correction, any decomposition is dominated by high-curv ature directions, drowning out semantic structure. W e use the EKF AC eigendecomposition (Grosse et al., 2023; Bae et al., 2024) of the approximate Fisher information matrix to correct for this. For each module m with eigen vectors Q m and eigen values λ m , we project each gradient into the top- k eigen vectors and precondition: ˜ g ( m ) i = Q ( k ) ⊤ m g ( m ) i , ˆ g ( m ) i = ˜ g ( m ) i q λ ( k ) m + ϵ (1) After concatenating across all M modules, the projected gradient is ˆ g i ∈ R k total where k total = k × M . This makes the space approximately isotropic: a unit step in any direction corresponds to a roughly equal change in loss, encouraging atoms to capture functionally distinct directions rather than curvature artif acts. 3 . 3 S PA R S E D I C T I O N A RY L E A R N I N G W e normalize each projected gradient to unit norm (so atoms reflect direction, not magnitude) and apply sparse dictionary learning to decompose: ˆ g i ≈ K X j =1 α ij d j (2) 3 Gradient Atoms where D = [ d 1 , . . . , d K ] ∈ R K × k total are the atoms and α ij are sparse coefficients—most are zero, so each document is explained by a few atoms. The sparsity penalty encourages each atom to capture a single pattern rather than blending multiple unrelated behaviors. 3 . 4 C O H E R E N C E S C O R I N G For each atom j , we identify its activ ating documents—those with non-zero coefficient α ij —and compute a coherence score ov er the top- n activ ating documents S j : coherence ( j ) = 1 |S j | ( |S j | − 1) X a = b ∈S j cos( g a , g b ) (3) where g a , g b are the raw (unprojected, full d -dimensional) gradients. High coherence suggests the atom has found a shared computational motif in the original weight space, rather than an artifact of the projection. 3 . 5 U N P RO J E C T I O N T O S T E E R I N G V E C T O R S Any atom can be con verted back to a full parameter -space vector by rev ersing the projection: v j = unproject ( d j ) ∈ R d (4) This vector can be applied as a weight-space perturbation θ new = θ ± α · v j , analogous to curvature- aware model editing (Ikram et al., 2026). The key dif ference is that v j was discov ered unsupervised from the training data, rather than deriv ed from a hand-crafted measurement function or contrastive pair . 4 E X P E R I M E N T S 4 . 1 S E T U P Model. W e use Gemma-3 4B IT fine-tuned via LoRA (rank 8) on the q proj and v proj matrices across all 34 layers, yielding 2.2M trainable parameters across 136 LoRA modules. Dataset. The model is fine-tuned on 5,000 instruction–response pairs sampled from a general- purpose SFT mixture cov ering arithmetic, grammar correction, classification, code generation, QA, creati ve writing, and other tasks. EKF AC factors (eigendecomposition of the approximate Fisher) are computed on the full training set. Gradient Atoms pipeline. W e extract per-document gradients for all 5,000 training examples, project via EKF A C into 6,800 dimensions (50 eigencomponents × 136 modules, a 328 × reduction), and run MiniBatchDictionaryLearning (scikit-learn) with K = 500 atoms and sparsity penalty α = 0 . 1 . Coherence is computed ov er the top-20 acti v ating documents per atom using the raw 2.2M-dimensional gradients. 4 . 2 A T O M D I S C OV E RY From 500 atoms: 5 hav e coherence > 0 . 5 , 43 hav e coherence > 0 . 1 , and 457 hav e coherence < 0 . 1 . Figure 1 shows the distrib ution. The top 5 atoms (coherence > 0 . 5 ) are: short factual QA (0.725), grammar editing (0.672), yes/no classification (0.647), simple arithmetic (0.643), and multi-category classification (0.614). The full top 50 are listed in T able 4. Atoms capture task types, not topics. The decomposition clusters training data by how the model responds (arithmetic, classification, editing, code) rather than what it responds about (science, history , culture). This is consistent with the procedural-knowledge hypothesis of Ruis et al. (2024) discussed in Section 1, and confirms that gradient structure is organised around shared computational strategies. High coherence = stereotyped tasks. The top-5 atoms are all formulaic task types whose activ ating documents hav e highly similar gradients, suggesting similar computational pathways. 4 Gradient Atoms Multiple granularities. Grammar correction appears three times (ranks 2, 17, 36) and code generation fiv e times (ranks 16, 37, 40, 45, 46), at decreasing coherence. The dictionary finds sub-clusters that may reflect different sentence comple xity levels or programming language f amilies. Format atoms. Bulleted lists (#469) and numbered lists (#299) are separate atoms, suggesting the model uses distinct weight pathways for these formatting patterns. Refusal is discoverable. T wo atoms (#52, #161) capture the model’ s tendency to reply “Please provide the input” when task instructions lack content—a behavior learned from training data that appears to be separable from other behaviors in gradient space. Effect of sparsity penalty . T able 1 shows the ef fect of α . At α = 0 . 01 , atoms are too dense (median ∼ 2500 docs each), blending unrelated patterns. At α = 0 . 1 , atoms are selecti ve ( ∼ 100 docs each). At α = 1 . 0 , the penalty ov erwhelms reconstruction and all coef ficients are zero. T able 1: Ef fect of sparsity penalty α on atom quality . α Docs per atom (median) Atoms coh > 0 . 5 Atoms coh > 0 . 1 0.01 ∼ 2500 3 ∼ 20 0.1 ∼ 100 5 43 1.0 0 0 0 4 . 3 B E H A V I O R A L S T E E R I N G A ke y test of whether gradient atoms capture genuine computational structure is whether they can steer model beha vior when applied as weight-space perturbations. W e select fiv e atoms spanning a range of coherence scores and behavioral types, unproject each to a full LoRA parameter-space vector , and apply perturbations θ new = θ ± α · v j with α ∈ { 0 . 5 , 1 . 0 , 2 . 0 , 5 . 0 , 10 . 0 } in both directions. Since dictionary learning assigns atom signs arbitrarily , we test both. For each atom, we design 100 e valuation questions ( ∼ 60 that naturally in vite the tar get behavior , ∼ 40 neutral controls) and measure behavior with a re gex detector: • Y es/No (#415): first line starts with Y es/No/True/F alse • Code (#64): response contains a fenced code block ( ‘‘‘ ) • Refusal (#161): matches clarification-seeking patterns • Bullets (#469): ≥ 2 lines starting with - , * , or • • Numbered (#299): ≥ 2 lines starting with \ d+[.)] Each steered adapter is served via vLLM alongside the clean baseline, with all 11 v ariants (5 alphas × 2 signs + baseline) loaded simultaneously as LoRA modules. 0.5 1 2 5 10 0 20 40 60 80 100 % Detected base=39% #415 Y es/No Classification (coh=0.647) 0.5 1 2 5 10 0 20 40 60 80 100 base=42% #64 Code Generation (coh=0.201) 0.5 1 2 5 10 0 20 40 60 80 100 base=50% #161 Systematic Refusal (coh=0.111) 0.5 1 2 5 10 0 20 40 60 80 100 base=33% #469 Bulleted List Generation (coh=0.103) 0.5 1 2 5 10 0 20 40 60 80 100 base=58% #299 Numbered List Generation (coh=0.103) Behavioral Atom Steering: Alpha Sweep (Both Directions) T o w a r d ( v ) A w a y ( + v ) Figure 2: Behavioral steering via unsupervised gradient atoms. Red bars show the “to ward” direction ( θ − α v ), blue bars show “a way” ( θ + α v ), and the dashed line marks the clean baseline. Four of fi ve atoms produce large, monotonic steering ef fects in at least one direction. Results are shown in Figure 2 and T able 2. All fiv e atoms steer behavior in at least one direction, and four produce large ef fects ( > 14pp): 5 Gradient Atoms T able 2: Beha vioral atom steering results. For each atom, we report the baseline detection rate and the best increase/decrease achie ved across all alpha v alues and both directions. ∆ is the change in percentage points from baseline. Atom Behavior Coh. Base Best ↑ ∆ ↑ Best ↓ ∆ ↓ #415 Y es/No Classification 0.647 39% 51% + 12pp 0% − 39pp #64 Code Generation 0.201 42% 58% + 16pp 28% − 14pp #161 Systematic Refusal 0.111 50% 55% + 5pp 0% − 50pp #469 Bulleted Lists 0.103 33% 94% + 61pp 0% − 33pp #299 Numbered Lists 0.103 58% 59% + 1pp 8% − 50pp • Bulleted lists (#469) is the strongest: steering increases bullet usage from 33% to 94% ( + 61pp) and suppresses it to 0%. The ef fect is monotonic with alpha in both directions. • Refusal (#161) is completely suppressible: from 50% baseline to 0% at α = 5 . The steered model responds “Okay . ” to underspecified prompts instead of asking for clarification. The rev erse direction modestly increases refusal ( + 5pp) and makes the model more verbose, suggesting this atom captures a terse/verbose dimension. • Code generation (#64) increases from 42% to 58% ( + 16pp) or decreases to 28% ( − 14pp). The sign is flipped relativ e to the Newton step con vention, confirming that atom signs from dictionary learning are arbitrary . • Y es/No (#415) shows moderate amplification ( + 12pp) but strong suppression ( − 39pp to 0%). At high alpha, the model loses coherence, establishing the upper bound of useful perturbation. • Numbered lists (#299) is asymmetric: easily suppressed (58% → 8%) but not amplifiable ( + 1pp), likely due to a ceiling ef fect. 5 D I S C U S S I O N Suppression appears easier than amplification. All fiv e atoms suppress their target behavior to near zero, b ut only two (b ullets, code) achiev e substantial amplification. One interpretation is that suppressing a beha vior requires disrupting a single computational pathway , while amplifying requires strengthening it against many competing alternati ves. Coherence does not necessarily pr edict steerability . Atom #469 (coherence 0.103) produces the largest steering ef fect ( + 61pp), while #415 (coherence 0.647) giv es only + 12pp. Coherence measures gradient alignment among acti vating documents, but steerability also depends on how much the model’ s default beha vior already saturates the target pathw ay . Limitations. Only 43 of 500 atoms ha ve coherence > 0 . 1 , the majority are noise or capture o verly broad mixtures. The instruction-follo wing training data means atoms recover task types rather than fine-grained semantic preferences; more naturalistic data might yield different atoms. The 6,800-dim EKF A C projection discards information, and 5,000 documents may not cov er rare behaviors. Our rege x-based e v aluation measures surface formatting rather than deeper beha vioral changes. 6 C O N C L U S I O N W e presented Gradient Atoms, an unsupervised method that discov ers what a fine-tuning dataset teaches a model by decomposing training gradients into sparse components. The method addresses a gap in standard training data attrib ution: rather than scoring indi vidual documents against a known behavior , it recov ers the shared update directions that clusters of documents jointly induce—the collecti ve structure through which procedural capabilities are acquired. The highest-coherence atoms recov er interpretable task-type behaviors without supervision, and function as effecti ve steering vectors, connecting unsupervised beha vior discovery with controllable model editing. Future directions include composing multiple atoms for simultaneous multi-behavior steering, scaling the dictionary to 1,000+ atoms, cross-model comparison to identify shared vs. adapter-specific behaviors, and de veloping principled methods for alpha selection. 6 Gradient Atoms A C K N O W L E D G E M E N T S J Rosser is supported by the EPSRC centre for Doctoral Training in Autonomous and Intelligent Machines and Systems EP/Y035070/1. Special thanks to the London Initiative for Safe AI and Arcadia Impact for providing workspace. R E F E R E N C E S Juhan Bae, W u Lin, Jonathan Lorraine, and Roger Grosse. T raining data attribution via approximate unrolled differentiation, 2024. URL . RD WS Cook et al. Residuals and influence in regression. 1982. Roger Grosse, Juhan Bae, Cem Anil, Nelson Elhage, Alex T amkin, Amirhossein T ajdini, Benoit Steiner , Dustin Li, Esin Durmus, Ethan Perez, et al. Studying large language model generalization with influence functions. arXiv pr eprint arXiv:2308.03296 , 2023. Zarif Ikram, Arad Firouzkouhi, Stephen T u, Mahdi Soltanolkotabi, and P aria Rashidinejad. Crispedit: Lo w-curvature projections for scalable non-destructiv e llm editing, 2026. URL https://arxiv. org/abs/2602.15823 . Pang W ei Koh and Percy Liang. Understanding black-box predictions via influence functions. In International confer ence on machine learning , pp. 1885–1894. PMLR, 2017. Neel Nanda, Lawrence Chan, T om Lieberum, Jess Smith, and Jacob Steinhardt. Progress measures for grokking via mechanistic interpretability . arXiv preprint , 2023. Jeffre y Olmo, Jared Wilson, Max Forsey , Bryce Hepner, Thomas V in Howe, and David Wing ate. Features that make a dif ference: Le veraging gradients for improv ed dictionary learning, 2025. URL . Laura Ruis, Maximilian Mozes, Juhan Bae, Siddhartha Rao Kamalakara, Dwarak T alupuru, Acyr Locatelli, Robert Kirk, T im Rockt ¨ aschel, Edward Grefenstette, and Max Bartolo. Procedural knowl- edge in pretraining dri ves reasoning in lar ge language models. arXiv pr eprint arXiv:2411.12580 , 2024. Dong Shu, Xuansheng W u, Haiyan Zhao, Mengnan Du, and Ninghao Liu. Beyond input activ ations: Identifying influential latents by gradient sparse autoencoders, 2025. URL https://arxiv. org/abs/2505.08080 . Pa weł Skier ´ s, T omasz Trzci ´ nski, and Kamil Deja. Elrond: Exploring and decomposing intrinsic capabilities of diffusion models, 2026. URL . Shrihari Sridharan, Deepak Ravikumar , Anand Raghunathan, and Kaushik Roy . Gradientspace: Unsupervised data clustering for improved instruction tuning, 2025. URL https://arxiv. org/abs/2512.06678 . George W ang and Daniel Murfet. Patterning: The dual of interpretability , 2026. URL https: //arxiv.org/abs/2601.13548 . Chen Henry W u, Sachin Goyal, and Aditi Raghunathan. Mode-conditioning unlocks superior test-time scaling, 2025. URL . 7 Gradient Atoms A E X T E N D E D R E L A T E D W O R K T raining data attribution. Influence functions (Cook et al., 1982; K oh & Liang, 2017) estimate the effect of remo ving a training example on predictions. Grosse et al. (2023) scaled these to LLMs via EKF A C, and Bae et al. (2024) improved accurac y with approximate unrolled differentiation. All such methods are supervised—requiring a query beha vior and O ( N ) scoring per query . Gradient Atoms complements these approaches by discov ering candidate behaviors without a predefined query . Gradient-based clustering. GradientSpace (Sridharan et al., 2025) clusters LoRA gradients via online SVD to identify “latent skills, ” sharing our core insight that gradient similarity reflects functional similarity . Howe ver , they use clusters to train specialised LoRA experts with a router , not for interpretability or steering. Their SVD + k-means yields routing labels; our sparse dictionary learning produces individually s teerable atoms. Mode-Conditioning (W u et al., 2025) independently confirms that gradient clustering recovers functional groupings (98.7% F1), applying this to test-time compute allocation. Gradient decomposition in diffusion models. ELROND (Skier ´ s et al., 2026) decomposes per- sample gradients via PCA and sparse autoencoders into steerable directions for visual attribute control—the diffusion-model analogue of our approach. Ke y differences: they decompose gradients within a single prompt’ s realisations rather than across the full training set, and target visual attributes rather than LLM behaviors. Activation-space interpretability . SAEs decompose single-layer activ ations into monosemantic features (Nanda et al., 2023); gradient-informed v ariants (g-SAEs (Olmo et al., 2025), GradSAE (Shu et al., 2025)) use gradients to improve feature selection. Both still decompose acti v ations—we decompose the gradients themselves, across all layers simultaneously . W ang & Murfet (2026) frame the theoretical dual of interpretability (behavior → training causes); Gradient Atoms provides a practical mechanism that additionally discov ers behaviors without a query . Model editing and pr ocedural knowledge. Prior steering methods require a kno wn concept with measurement functions or contrasti ve pairs (Ikram et al., 2026); Gradient Atoms discov ers steering directions unsupervised. Our finding that atoms capture task types rather than topics is consistent with Ruis et al. (2024), who sho w procedural knowledge dri ves what models e xtract from training data. B C O M P U T A T I O NA L D E TA I L S T able 3: Computational cost of the Gradient Atoms pipeline. Step Resources T ime Gradient extraction 8 × A100 40GB 170s EKF A C projection CPU, 16GB RAM ∼ 5 min Dictionary learning ( α = 0 . 1 ) CPU, 32GB RAM ∼ 15 min Coherence computation CPU, 8GB RAM ∼ 5 min T otal ∼ 25 min Model: Gemma-3 4B IT , LoRA rank 8 ( q proj + v proj ), 2.2M parameters, 136 modules across 34 layers. EKF A C factors computed separately on the full training set. C F U L L A T O M T A B L E 8 Gradient Atoms T able 4: T op 50 gradient atoms ranked by coherence score. Each atom was characterised by manual inspection of its top-20 activ ating documents. Rank Atom Coherence Acti ve Docs Description 1 #348 0.725 139 Short factual Q&A—tri via with one-word/numeric answers 2 #328 0.672 110 Grammar and sentence editing 3 #415 0.647 156 Y es/No/True/F alse binary classification 4 #458 0.643 124 Simple arithmetic 5 #498 0.614 176 Multi-category classification and labeling 6 #358 0.499 88 Sentence transformation (voice, tense, translation) 7 #2 0.463 206 Sentence restructuring (questions, passi ve/acti ve) 8 #451 0.395 182 Multi-step arithmetic and unit con versions 9 #484 0.298 49 Mixed technical (code + translations + set ops) 10 #319 0.262 180 “Name an example of X”—single-entity retrie val 11 #430 0.258 150 Sentiment and text classification 12 #425 0.257 215 Single-entity factual answers 13 #363 0.238 146 Short phrase answers to open questions 14 #52 0.230 57 “Please provide the input”—refusal on missing input 15 #364 0.205 158 Science and math fact answers 16 #64 0.201 25 Code generation (Python, JS, C++, HTML) 17 #303 0.189 49 Grammar correction on short sentences 18 #394 0.188 144 Concise direct answers (mixed tasks) 19 #488 0.187 168 Short inspirational/generic responses 20 #477 0.185 227 W ord-lev el tasks (synonyms, anton yms, rhymes) 21 #376 0.176 97 Creati ve short-form writing 22 #136 0.165 161 Multi-sentence explanatory answers 23 #66 0.154 45 Long-form generation (essays, paragraphs) 24 #457 0.152 83 Comparison and analysis tasks 25 #256 0.152 50 Step-by-step instructions and ho w-to guides 26 #265 0.151 118 List generation (brainstorming, idea lists) 27 #224 0.149 31 Email and letter drafting 28 #446 0.146 86 Persuasi ve/ar gumentati ve writing 29 #294 0.142 50 Data extraction and structured output 30 #419 0.142 78 Analogy and metaphor reasoning 31 #359 0.137 211 Neutral informational answers 32 #306 0.136 118 Summarisation 33 #181 0.119 87 Dialogue and con versational responses 34 #72 0.118 37 Math word problems 35 #445 0.117 69 Numeric computation (GCF , LCM, time) 36 #465 0.116 70 Grammar correction on casual sentences 37 #231 0.115 21 SQL queries and structured code 38 #161 0.111 47 Systematic refusal on unclear input 39 #325 0.106 143 Single-word/token e xtraction from input 40 #61 0.105 21 Python utility function implementations 41 #469 0.103 143 Bulleted list generation 42 #299 0.103 46 Numbered list generation 43 #67 0.102 9 SQL + rege x + technical expressions 44 #180 0.100 52 Mixed code e xecution and classification 45 #381 0.097 79 Code generation (broad, multi-language) 46 #428 0.096 81 Database/web code (SQL, HTML, CSS, APIs) 47 #48 0.095 56 Single-word v ocabulary tasks (fill-in-blank, plurals) 48 #475 0.088 83 Summarisation and paraphrasing 49 #233 0.087 46 Numeric/factual recall with approximation 50 #172 0.084 129 General kno wledge Q&A 9

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment