ASAP: Attention-Shift-Aware Pruning for Efficient LVLM Inference

While Large Vision-Language Models (LVLMs) demonstrate exceptional multi-modal capabilities, the quadratic computational cost of processing high-resolution visual tokens remains a critical bottleneck. Though recent token reduction strategies attempt …

Authors: Surendra Pathak, Bo Han

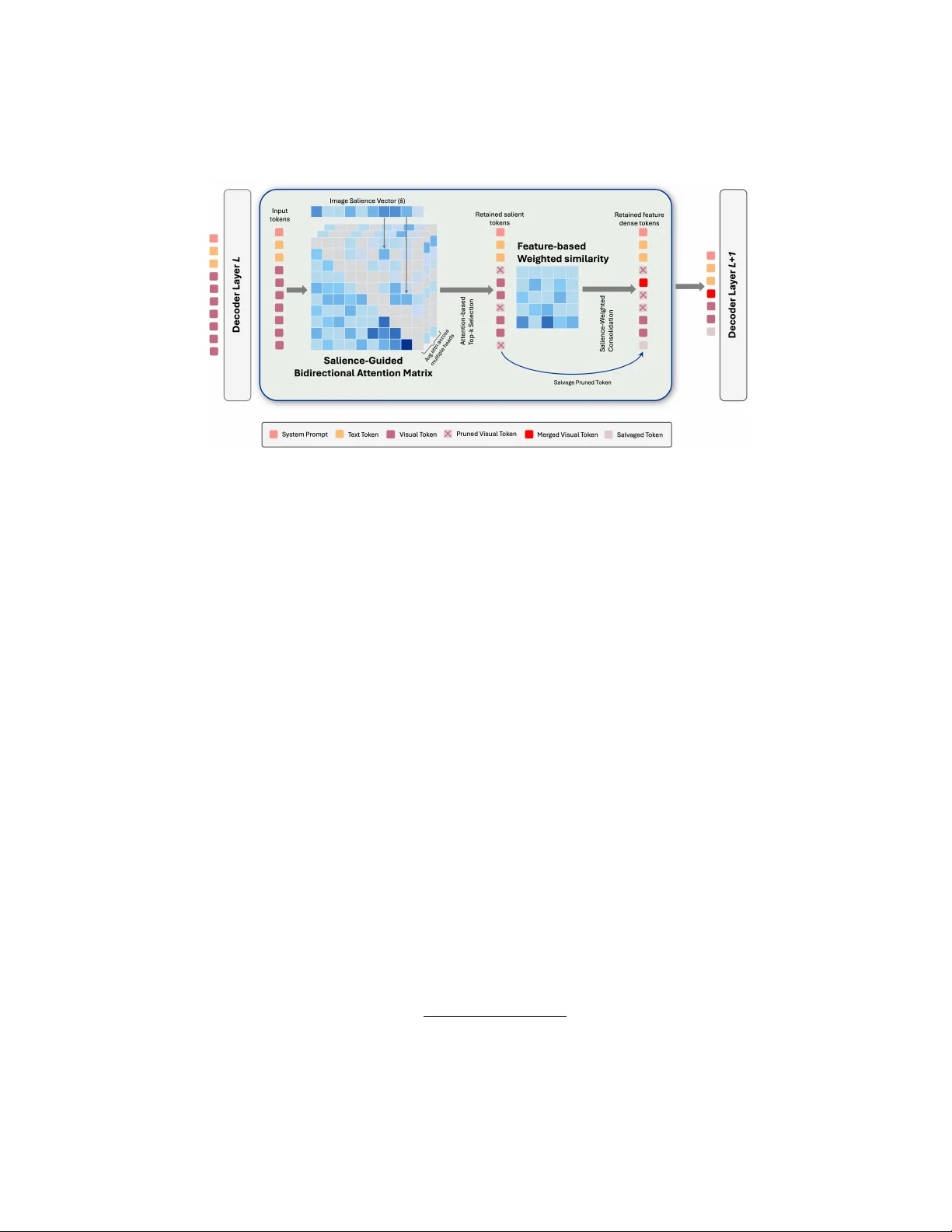

Surendra Pathak and Bo Han
George Mason University, Fairfax, VA, USA
{spathak8, bohan}@gmu.edu

Abstract. While Large Vision-Language Models (LVLMs) demonstrate exceptional multi-modal capabilities, the quadratic computational cost of processing high-resolution visual tokens remains a critical bottleneck. Though recent token reduction strategies attempt to accelerate inference, such methods inadequately exploit attention values and fail to address token redundancy. More critically, they overlook the “attention shift” phenomenon inherent in LVLMs, which skews token attention scores. In this work, we propose ASAP, a novel training-free, KV-Cache-compatible pruning recipe that comprehensively addresses these limitations. First, we mitigate the attention shift by utilizing a dynamic bidirectional soft attention mask, ensuring the selection of genuinely informative tokens rather than naive attention-based selection. Second, we posit that high semantic redundancy within the token set degrades performance. We therefore introduce a weighted soft merging component that merges semantically similar tokens, preserving only the most feature-dense visual patches for subsequent layers. ASAP achieves virtually lossless compression of visual context, retaining 99.02% of the original LLaVA-NeXT-7B performance while aggressively slashing computational FLOPs by ∼80%.

Keywords: Large Vision-Language Models · Efficient Inference

1 Introduction

Large Language Models (LLMs) have emerged as the dominant paradigm for a wide range of natural language processing tasks, demonstrating remarkable capabilities in reasoning, generation, and understanding [4, 14, 24, 43, 44]. This success has catalyzed a shift toward the development of multimodal systems such as Large Vision Language Models (LVLMs) [1, 6, 13, 21, 28, 30, 41, 59].
By integrating visual encoders with LLM backbones, LVLMs have achieved unprecedented performance on challenging vision-and-language tasks, including visual question answering (VQA), image captioning, and multimodal reasoning. However, the adoption of these models is constrained by their enormous computational demands. In particular, the number of visual tokens increases drastically in LVLMs, introducing a computational burden that scales massively with sequence length. For example, while LLaVA-1.5 converts an image into 576 tokens, LLaVA-NeXT partitions a high-resolution image into four tiles, resulting in a total sequence of ∼2,304 visual tokens. The abundance of visual tokens becomes a bottleneck for the Transformer [45] during inference, since the computational burden scales quadratically with the sequence length. This challenge has sparked a surge of research toward efficient inference, with token pruning as a promising candidate solution.

Fig. 1: ASAP integration within the LVLM architecture. The plug-and-play pruning module is embedded directly within the language model backbone, operating between standard decoder layers to compress the visual token sequence during the inference forward pass.

Existing token pruning methods for LVLMs fall into two categories. The first comprises techniques that prune tokens within the vision encoder [3, 36, 50, 56]. These methods typically use ViT attention or feature values to identify candidate tokens for pruning or merging before they are processed by the LLM backbone. However, this approach is compromised by its text-agnostic nature: pruning decisions are made in a contextual vacuum, without information about the user’s query for the image, which may lead to degraded model performance and unexpected model responses.
The second category of methods performs pruning within the multimodal LLM backbone [10, 48, 51, 58]. These techniques can access text-vision cross-attention values to perform context-aware, prompt-guided token pruning. Although these techniques can leverage users’ prompts, extant works overlook the critical “attention shift” phenomenon inherent to LVLMs. Due to the distance decay property inherent to Rotary Position Embeddings (RoPE) [39], later visual tokens receive high attention scores because of architectural artifacts rather than the token’s semantic content. Thus, techniques that equate attention values directly with token salience may be affected by this phenomenon, yielding sub-optimal pruning outcomes. While some approaches attempt to circumvent this artifact by completely removing RoPE [16], such structural modifications strip the model of essential positional priors, degrading its spatial reasoning and accuracy. In this work, we present a fresh perspective to mitigate the attention shift by relaxing the model’s standard causal attention. Specifically, we use a soft bidirectional attention mechanism that allows earlier visual tokens to “peek” at the later ones, aggregating context for holistic 2D scene comprehension.

We postulate that an effective pruning strategy must address two distinct issues: the positional bias of attention scores and the inherent redundancy of visual information. An image may contain regions of low semantic density (e.g., sky, ocean, or forest) that are represented by numerous redundant patch tokens. We argue that an effective pruner must not only select a sparse set of tokens but also ensure that they are feature-dense.
To this end, we propose ASAP, a training-free token pruning framework that maximizes computational savings while maintaining full compatibility with the key-value (KV) cache and multi-turn conversations. ASAP consists of three major components. The first component performs attention-shift-aware pruning by leveraging bidirectional attention values, mitigating the influence of the attention shift. The second component eliminates redundant tokens by employing a weighted feature-based similarity metric, thereby preserving a feature-dense token set. Finally, the third component maximizes information retention by salvaging high-salience tokens from the pruned pool to fill the budget slots vacated during the redundancy elimination phase. Unlike prior works, ASAP structurally mitigates attention shift and utilizes a comprehensive three-stage framework for effective token pruning.

Our experimental results demonstrate the superiority of ASAP. When applied to the LLaVA-1.5 [31] and LLaVA-NeXT [30] models, our method achieves lossless compression, surpassing existing baselines [2, 10, 36, 58]. Notably, ASAP retains 99.02% of the vanilla LLaVA-NeXT-7B model’s performance while using only 21.19% of the original FLOPs, and even achieves supra-vanilla performance on a few benchmarks (e.g., MMBench [32]). This work demonstrates that a carefully designed pruning strategy, aware of both shift characteristics and redundancy, can deliver substantial efficiency gains without compromising performance.

Our contributions are summarized as follows:

– We systematically identify and attribute the attention shift artifact in LVLMs to the distance decay property of RoPE.
– To our knowledge, we are the first to structurally mitigate the attention shift artifact, a critical failure point for attention-based token pruning in LVLMs, by employing a soft bidirectional attention mechanism.
– We propose ASAP, a novel, training-free pruning framework that co-designs an attention-shift-aware pruning module and a feature-based, weighted redundancy elimination mechanism. Furthermore, ASAP maintains full compatibility with standard KV caching and supports multi-turn conversational inference.
– We conduct extensive experiments, demonstrating that ASAP outperforms existing baselines. ASAP retains > 99% of vanilla accuracy while reducing FLOPs by ∼80%, compared to the standard LLaVA-NeXT-7B model.

2 Related Work

2.1 Large Vision Language Models

The remarkable capabilities of LLMs [4, 14, 24, 43, 44] on diverse tasks such as question answering, reasoning, and coding have inspired their extension to multimodal data, such as images and videos, in LVLMs [5, 29, 31]. These LVLMs usually consist of an LLM backbone and a visual encoder [15, 34, 40, 54] that embeds visual tokens before passing them to an alignment module, which aligns them with text embeddings. The capabilities of more recent LVLMs [1, 6, 13, 21, 28, 30, 41, 59] have been extended to support high-resolution image and video data, resulting in a large number of visual tokens. For example, LLaVA-NeXT [30] converts a high-resolution image into 4 × 4 tiles, resulting in a sequence of ∼2,304 visual tokens. Similarly, LLaVA-OneVision [26] encodes the image into 384 × 384 tiles, producing up to 8,748 tokens, and InternVL2 [12] partitions an image into 448 × 448 tiles, resulting in up to 10,240 tokens. However, the massive visual token count incurs a computational burden that scales quadratically, constraining the adaptation of LVLMs.
Thus, efficient inference strategies that save computational resources have become increasingly essential.

2.2 Token Pruning in LVLM/ViT

Token compression has emerged as a potential solution to the $O(N^2)$ computational complexity of the self-attention mechanism in LVLMs. Token compression can be achieved by either pruning irrelevant tokens or merging semantically similar tokens. These strategies were first extensively studied in the context of Vision Transformers (ViTs) [7, 8, 11, 17, 18, 22, 25, 35, 46] before the era of LVLMs. For example, ToMe [7] introduced a bipartite soft matching algorithm to merge tokens based on feature similarity, while EViT [27] utilized attention scores between the [CLS] token and visual tokens as a proxy of token importance. In the LVLM domain, these concepts have been adapted but face new challenges.

In LVLMs, techniques such as LLaVA-Mini [57], LLaVolta [9], and others [52, 55] integrate pruning mechanisms but require an additional training phase. These approaches incur an additional computational burden, which undermines the primary goal of efficiency and limits their “plug-and-play” applicability. Consequently, training-free, plug-and-play approaches have received more attention recently. These methods can be categorized into several subgroups. The first group, which includes LLaVA-PruMerge [36], VisionZip [50], VisPruner [56], and HiRED [3], extends the ViT-era logic, relying on the attention scores between a [CLS] token and the visual tokens. However, these pruning strategies rely on the [CLS] token, which is present only in specific architectures, thereby limiting adaptability to different LVLMs. Another line of training-free work, including FastV [10], PyramidDrop [48], SparseVLM [58], and FitPrune [51], leverages text-visual cross-attention scores to identify salient tokens.
However, these techniques suffer from the critical attention shift phenomenon inherent to LVLMs [38]. Due to positional embeddings like RoPE, later tokens receive high attention scores because of architectural functions rather than the token’s semantic content. Our work structurally addresses this limitation, enabling an efficient pruning recipe that rectifies naive attention-based selection.

3 Motivation

To understand the limitations of the current visual token pruning methods, we investigate attention value patterns within the LLM backbones of LVLMs.

(a) How many cars are there in the parking lot? FastV: There are two cars in the parking lot. ✗
(b) How many cars are there in the parking lot? ASAP: There are three cars in the parking lot. ✓

Fig. 2: Qualitative comparison of token selection. Retained tokens (top 128) are visible, while dropped tokens are darkened. FastV demonstrates a bottom spatial bias, missing the distant third car. ASAP successfully mitigates this bias, preserving critical background features to accurately count all cars.

Our analysis reveals a pronounced spatial bias in which bottom visual tokens consistently receive disproportionately high attention scores across benchmarks, independent of underlying feature salience. Figure 2 illustrates a representative case: when prompted to count cars, a naive attention-based pruning strategy retains the bottom-most patches, discarding background features and consequently missing the distant third car entirely. This demonstrates that relying on uncalibrated, raw attention scores for token selection is fundamentally flawed. This attention shift is attributed to RoPE, a relative positional encoding mechanism that is used in popular LLMs (e.g., LLaMA [43], Qwen [4], etc.).
RoPE implicitly imposes a distance penalty on tokens positioned further from the text query, inadvertently suppressing early visual features. This suppression underscores the critical need to mitigate positional bias for holistic scene comprehension. In the remainder of this section, we formally attribute this attention shift to the RoPE distance decay mechanism and subsequently propose an architectural approach to mitigate it, restoring balanced visual information routing.

3.1 Explaining Attention Shift: The RoPE Decay

The discussion above established the prevalence of attention shift in LVLMs. We attribute this bias not to semantic relevance, but to a structural artifact of RoPE. For a query at position $m$ and a key at position $n$, RoPE computes their pre-softmax attention score as $s(m, n) = x_m^\top W_q^\top R_{\Theta, m-n} W_k x_n$. This formulation inherently imposes a distance decay penalty via the relative rotation matrix $R_{\Theta, m-n}$, implicitly favoring tokens that are positionally closer to the query.

In LVLMs, a 2D image is often flattened into a long 1D token sequence during computation. However, this leads to a massive positional gap between the image tokens and the text query. The initial visual tokens (top-left of the image) are positioned significantly further away from the subsequent text queries than the terminal tokens (bottom-right). Due to this larger distance, RoPE unfairly penalizes the early visual features. The distance decay penalty is suitable for LLMs such as Llama [44], where distant previous text has less importance in subsequent text generation. However, this becomes a limitation in LVLMs, where distant visual features are unfairly penalized.

3.2 Revisiting Causal Attention for LVLMs

Beyond the positional bias induced by RoPE, information routing in LVLMs is fundamentally constrained by the standard attention masking strategy.
A causal mask $M^{\text{causal}} \in \mathbb{R}^{N \times N}$ is additively applied to the pre-softmax attention logits $S$ prior to softmax normalization:

$$A = \mathrm{Softmax}\left(S + M^{\text{causal}}\right). \tag{1}$$

Because this mask is strictly defined as $M^{\text{causal}}_{i,j} = 0$ for $i \ge j$ and $M^{\text{causal}}_{i,j} = -\infty$ for $i < j$, the attention weight $A_{i,j}$ for any subsequent patch ($j > i$) is driven exactly to zero. By masking future value vectors, this unidirectional constraint ensures the autoregressive property necessary for next-token prediction. This sequential inductive bias is highly effective for natural language tasks, as text inherently unfolds along a temporal axis where historical context predominantly dictates subsequent semantics. However, directly inheriting this masking strategy in LVLMs introduces a critical misalignment, given that images are intrinsically non-sequential, two-dimensional spatial representations. Visual features are structurally interdependent, where the semantics of an early patch frequently depend on the global context of surrounding patches. Consequently, enforcing strict causality artificially fragments these spatial dependencies along an arbitrary 1D serialization axis, depriving early visual tokens of the global receptive field. This necessitates bidirectional information flow for comprehensive scene understanding.

4 Methodology

Motivated by the structural limitations observed in Section 3, we propose a bidirectional attention strategy to allow unrestricted information flow among visual tokens. Specifically, we relax the strict unidirectional constraint of the standard causal attention exclusively for the visual tokens, while preserving standard autoregressive constraints for all subsequent text tokens. While recent uniform bidirectional masks [42, 49] relax these constraints, their static nature inadvertently broadcasts noise from uninformative background features.
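The contrast between the causal mask of Eq. (1) and a uniform bidirectional relaxation over the visual span can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the implementation of [42, 49] or of ASAP: the helper names (`causal_mask`, `uniform_bidirectional_mask`, `attend`) and the toy sequence of three visual tokens followed by one text token are hypothetical.

```python
import math

NEG_INF = float("-inf")


def softmax(row):
    """Numerically stable softmax; entries equal to -inf receive weight 0."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def causal_mask(n):
    """M_causal of Eq. (1): 0 for i >= j, -inf for i < j."""
    return [[0.0 if i >= j else NEG_INF for j in range(n)] for i in range(n)]


def uniform_bidirectional_mask(n, visual):
    """Causal mask, except that every visual query sees every visual key.

    `visual` holds the indices of the image span (an assumption of this sketch).
    """
    visual = list(visual)
    mask = causal_mask(n)
    for i in visual:
        for j in visual:
            mask[i][j] = 0.0
    return mask


def attend(logits, mask):
    """A = Softmax(S + M), applied row by row."""
    return [
        softmax([s + m for s, m in zip(s_row, m_row)])
        for s_row, m_row in zip(logits, mask)
    ]


# Toy sequence: indices 0..2 are visual tokens, index 3 is a text token.
logits = [[0.0] * 4 for _ in range(4)]
print(attend(logits, causal_mask(4))[0])
print(attend(logits, uniform_bidirectional_mask(4, range(3)))[0])
```

Under the causal mask the first visual token can attend only to itself, whereas the uniform relaxation spreads its attention evenly over the whole image span, salient or not, while the text row is unchanged.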
To overcome this, we propose a dynamic, targeted masking strategy to optimize visual information flow.

4.1 Salience-Guided Bidirectional Attention

Motivated by our prior findings in Section 3, we propose a Salience-Guided Bidirectional Mask (SG-BiMask) that dynamically relaxes the causal constraint for visual tokens to overcome the spatial-temporal discrepancy of strict autoregression. Rather than uniformly blinding queries to future keys, our approach introduces a target-dependent, additive penalty matrix $M^{\text{bidir}}$ that scales the visibility of a future patch based on its underlying structural importance.

We define $W = \frac{QK^\top}{\sqrt{d}} \in \mathbb{R}^{N \times N}$ as the pre-softmax alignment matrix computed during the standard forward pass. To extract the structural salience of each visual token without introducing auxiliary $O(N^2)$ computation, we repurpose the in-flight pre-softmax alignment matrix $W$. Let $\mathcal{I}$ denote the set of indices corresponding to the visual patches. We formulate the raw attention mass $s_j$ of a target key token $j \in \mathcal{I}$ as its summed alignment with all visual queries $i \in \mathcal{I}$:

$$s_j = \sum_{i \in \mathcal{I}} W_{i,j}. \tag{2}$$

Because pre-softmax logits can operate in arbitrary magnitude spaces depending on the layer depth and model architecture (often resulting in deeply negative absolute values), analyzing them natively obscures their relative importance. To project these raw logits into a bounded salience distribution $\hat{s}_j \in [0, 1]$ while preserving the relative variance learned by the attention mechanism, we apply Min-Max normalization directly to the raw attention mass:

$$\hat{s}_j = \frac{s_j - s_{\min}}{s_{\max} - s_{\min} + \epsilon}, \tag{3}$$

where $s_{\min}$ and $s_{\max}$ represent the minimum and maximum floored masses across the image span, and $\epsilon = 10^{-6}$ ensures numerical stability. This normalized salience explicitly dictates the bidirectional leak budget for each token.
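As a concrete reading of Eqs. (2) and (3), the following sketch computes the raw attention mass and its Min-Max normalized salience from a small alignment matrix. It is a minimal illustration in plain Python: the function names and the toy matrix are hypothetical, and the flooring of the masses that the text alludes to is not modeled because its definition is not given here.

```python
def raw_attention_mass(W, visual):
    """Eq. (2): s_j = sum over visual queries i of W[i][j], for each visual key j."""
    return {j: sum(W[i][j] for i in visual) for j in visual}


def normalized_salience(W, visual, eps=1e-6):
    """Eq. (3): Min-Max normalization of the raw masses over the image span."""
    s = raw_attention_mass(W, visual)
    s_min, s_max = min(s.values()), max(s.values())
    return {j: (s[j] - s_min) / (s_max - s_min + eps) for j in visual}


# Toy pre-softmax logits for three visual tokens (deeply negative values are fine).
W = [
    [-4.0, -2.0, 0.0],
    [-4.0, -2.0, 0.0],
    [-4.0, -2.0, 0.0],
]
visual = [0, 1, 2]
print(raw_attention_mass(W, visual))
print(normalized_salience(W, visual))
```

Only the column sums over the image span are needed, so the salience reuses the logits that the forward pass has already produced; because of the $\epsilon$ in the denominator, the most salient token lands marginally below 1 rather than exactly at 1.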
We define the attention discount factor $\lambda_j = \lambda_{\max} \cdot \hat{s}_j$, where $\lambda_{\max} \in (0, 1]$ is a configurable hyperparameter governing the maximum allowable forward visibility. Because the mask is additively combined with the logits prior to the exponential softmax operation, this scalar must be projected into log-space. The proposed bidirectional mask $M^{\text{bidir}}$ is formally defined to relax the causal constraint by overwriting the $-\infty$ values in the upper triangle ($i < j$) of the image span with our salience-dependent penalty:

$$M^{\text{bidir}}_{i,j} = \begin{cases} 0 & \text{if } i \ge j \\ \ln\left(\max(\lambda_j, \epsilon)\right) & \text{if } i < j \end{cases}. \tag{4}$$

By replacing $M^{\text{causal}}$ with $M^{\text{bidir}}$, the final attention computation becomes:

$$A = \mathrm{Softmax}\left(W + M^{\text{bidir}}\right). \tag{5}$$

For any previous token $i$ attending to a future target $j$, the exponent rule resolves the additive log-penalty as a scalar on the unnormalized probability: $\exp(W_{i,j} + \ln(\lambda_j)) = \lambda_j \cdot \exp(W_{i,j})$. Consequently, highly salient structural anchors are permitted to broadcast their features backward through the sequence with minimal attenuation, while non-informative background patches are strongly suppressed. By relaxing 1D temporal constraints for salient visual anchors, this mechanism aligns the sequential priors of LLM generation with the spatial concurrency required for holistic 2D scene comprehension.

Fig. 3: The two-stage ASAP architecture. After decoder layer $L$, a salience-guided bidirectional matrix retains the top-$k$ salient visual tokens. A feature-based similarity module then consolidates redundant tokens via salience-weighted merging and a salvage mechanism. This dual-filtering pipeline passes a compact, feature-dense visual set to layer $L + 1$, strictly preserving all text and system prompts.
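The construction of Eq. (4) can be sketched as follows. This is a minimal illustration rather than the authors' code: the function name `sg_bimask`, the value `lam_max=0.5`, and the toy salience values are assumptions, and entries above the diagonal that fall outside the image span are kept at $-\infty$, following the statement that only the upper triangle of the image span is overwritten.

```python
import math


def sg_bimask(n, visual, salience, lam_max=0.5, eps=1e-6):
    """Additive mask of Eq. (4) for a sequence of length n.

    visual   : indices of the image span (assumed known)
    salience : dict mapping each visual index j to its normalized salience in [0, 1]
    lam_max  : maximum forward visibility; 0.5 is an illustrative value only
    """
    visual = set(visual)
    # Start from the causal mask: 0 on and below the diagonal, -inf above it.
    mask = [[0.0 if i >= j else float("-inf") for j in range(n)] for i in range(n)]
    for i in visual:
        for j in visual:
            if i < j:
                lam_j = lam_max * salience[j]
                # Log-space penalty; eps keeps the logarithm finite when salience is 0.
                mask[i][j] = math.log(max(lam_j, eps))
    return mask


# Three visual tokens (0..2) followed by one text token (3).
salience = {0: 1.0, 1: 0.0, 2: 0.5}
M = sg_bimask(4, [0, 1, 2], salience, lam_max=0.5)

# The additive log-penalty acts as a multiplicative discount after exponentiation.
w = -1.3
print(math.exp(w + M[1][2]), 0.25 * math.exp(w))
```

In this toy case the future token with zero salience is visible only with weight $\epsilon$, the token with salience 0.5 is discounted by $\lambda_j = 0.25$, and the text token remains fully causal.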
4.2 Salience-Weighted Consolidation and Budget Reallocation

While the ASAP mask successfully preserves high-impact visual anchors, it inherently leads to the retention of semantically redundant patches (e.g., adjacent patches of a uniform background). Directly applying standard reduction algorithms treats all features uniformly during aggregation [7]. In the context of LVLMs, this cardinality-based averaging risks severe feature dilution, blurring the salient visual patches isolated by our bidirectional mask [47].

To optimize the informational density of our predefined token retention budget (defined in Sections 5.2, 5.3, and 5.4, respectively), we introduce a Salience-Weighted Consolidation mechanism. This module couples feature redundancy with the structural importance scores ($\hat{s}$) extracted during the Salience-Guided Bidirectional Masking phase in Section 4.1.

Given the preserved token set $\mathcal{I}_{\text{keep}}$, we identify semantic redundancy by computing the pairwise cosine similarity $S_{i,j}$ between hidden states. Rather than applying a naive average or summation, we aggregate the features using a convex combination weighted by the normalized attention mass $\hat{s}$ derived from Equation 3:

$$h_i \leftarrow \frac{\hat{s}_i h_i + \sum_{j \in \mathcal{I}_{\text{src}}(i)} \hat{s}_j h_j}{\hat{s}_i + \sum_{j \in \mathcal{I}_{\text{src}}(i)} \hat{s}_j}. \tag{6}$$

To prevent cyclic dependencies during aggregation, we introduce a strict salience-anchored routing: a source token $j$ is absorbed by a destination token $i$ if and only if $S_{i,j} > t$ and $\hat{s}_i \ge \hat{s}_j$ (breaking ties by sequence index). This directional mapping establishes disjoint source sets $\mathcal{I}_{\text{src}}(i)$, guaranteeing that feature consolidation strictly flows toward the most structurally significant anchors.
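The routing rule and the convex combination of Eq. (6) can be sketched as below. This is one possible reading under stated assumptions, not the authors' implementation: the text does not say how a source that is similar to several anchors is assigned, so this sketch greedily visits tokens from most to least salient and lets the first matching anchor absorb it. The function names, the threshold value, and the two-dimensional toy features are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def consolidate(hidden, salience, t=0.9):
    """Salience-anchored merging.

    hidden   : dict index -> feature vector (the preserved token set)
    salience : dict index -> normalized salience from Eq. (3)
    Returns (merged anchor features, disjoint source sets per anchor).
    """
    # Most salient first; ties broken by sequence index.
    order = sorted(hidden, key=lambda i: (-salience[i], i))
    absorbed, sources = set(), {}
    for i in order:
        if i in absorbed:
            continue
        sources[i] = []
        for j in order:
            # Skip the anchor itself, tokens already merged, and earlier anchors.
            if j == i or j in absorbed or j in sources:
                continue
            if cosine(hidden[i], hidden[j]) > t:
                sources[i].append(j)
                absorbed.add(j)
    merged = {}
    for i, src in sources.items():
        total = salience[i] + sum(salience[j] for j in src)
        if not src or total == 0.0:
            merged[i] = list(hidden[i])  # nothing to fuse, or all weights are zero
            continue
        merged[i] = [
            (salience[i] * hidden[i][d] + sum(salience[j] * hidden[j][d] for j in src)) / total
            for d in range(len(hidden[i]))
        ]
    return merged, sources


hidden = {0: [1.0, 0.0], 1: [0.8, 0.6], 2: [0.0, 1.0]}
salience = {0: 0.75, 1: 0.25, 2: 0.5}
merged, sources = consolidate(hidden, salience, t=0.7)
print(sources)
print(merged)
```

In the toy example token 1 is absorbed by the more salient token 0, and the fused feature stays close to the anchor because the anchor carries three times the weight of the source.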
This formulation ensures that when redundant tokens are fused, the resulting latent representation is heavily anchored by the patch that the previous module deemed most structurally critical, preventing feature dilution.

The fusion of $a := \left|\bigcup_i \mathcal{I}_{\text{src}}(i)\right|$ source tokens vacates $a$ positions within the $k$-token budget. To optimize information retention, we reallocate these available slots to the top $a$ tokens from the previously pruned pool, ranked by their attention mass $s_j$. Consequently, this integration of salience-driven fusion and candidate recovery optimizes the fixed $k$-token budget, ensuring that the final sequence maximizes semantic diversity rather than merely truncating spatial representation.

5 Experiments

In this section, we present the results of our experiments that demonstrate the effectiveness and superiority of our framework compared to existing baselines. Importantly, we establish the efficacy of ASAP in mitigating the visual attention shift induced by RoPE decay.

5.1 Experimental Setup

Datasets. We conducted our experiments on several commonly used benchmarks for different tasks, including visual question answering, object recognition, and optical character recognition. In particular, we assessed ASAP’s results on VQA v2 [20], GQA [23], ScienceQA [33], TextVQA [37], MME [19], MMBench [32], MMBench-CN [32], and MMVet [53]. VQA v2 assesses foundational, open-ended visual question answering, while GQA evaluates complex compositional reasoning from scene graph-based structures. TextVQA and ScienceQA assess fine-grained, specialized reasoning. TextVQA measures OCR-based reasoning on text within images, while ScienceQA tests complex scientific reasoning on diagrams.
MME, MMVet, and MMBench are comprehensive evaluation benchmarks where MME assesses broad perception and reasoning across 14 sub-tasks, MMVet evaluates intricate real-world reasoning challenges across 6 capabilities and 16 tasks, and both MMBench and MMBench-CN measure fine-grained perception and logic. All our experiments follow the default settings and metrics for each benchmark.

Implementation details. We implemented ASAP on different LVLMs, namely LLaVA-1.5-13B [31] and LLaVA-NeXT (LLaVA-1.6-7B) [30], to demonstrate the effectiveness of our pruning recipe across different models. LLaVA-1.5-13B encodes a standard 336 × 336 image using 576 visual tokens, supporting low-resolution images. On the other hand, LLaVA-NeXT supports high-resolution images by partitioning an image into 4 × 4 tiles, generating up to 2,880 tokens. This 4× increase in token count over LLaVA-1.5 presents a significant computational bottleneck, making it an ideal testbed for our pruning framework. In all our experiments, we apply ASAP once, at layer $k = 2$ of the corresponding backbone LLM.

Baselines. We compare ASAP with existing token pruning and merging techniques to assess the effectiveness of our strategy. For a comprehensive comparison, we selected a diverse set of baselines, including classic and recent methods, techniques based on either attention or feature similarity, and approaches that operate within either the LLM backbone or the vision encoder. For a fair comparison, all the selected methods are plug-and-play techniques that can be integrated into existing LVLMs without additional training. The selected baselines include FastV [10], LLaVA-PruMerge+ [36], SparseVLM [58], DivPrune [2], and VisPruner [56]. FastV and SparseVLM are text-aware pruning strategies that prune visual tokens in the LLM backbone.
In contrast to attention-based top-$k$ selection in FastV, SparseVLM selects text raters and adaptively prunes visual tokens. LLaVA-PruMerge+ and VisPruner leverage [CLS] attention to determine salient visual tokens in the image encoder before passing them to the LLM backbone. In contrast, DivPrune formulates token pruning as a “Max-Min Diversity Problem” (MMDP) to select a subset of tokens that maximizes feature distance.

5.2 Main Results

We evaluated ASAP against leading training-free baselines on diverse image understanding tasks. Our evaluation was conducted at two distinct computational budgets, corresponding to ∼32.99% and ∼21.89% of the vanilla LLaVA-1.5-7B’s total FLOPs. The procedure to obtain the theoretical FLOPs is outlined in Appendix B. We selected these budgets to assess ASAP’s efficiency-to-accuracy tradeoff at moderate and aggressive pruning levels, respectively. To normalize comparisons across all benchmarks, we report an average performance metric, where scores are scaled relative to the vanilla model.

ASAP establishes impressive results in training-free pruning, demonstrating a superior trade-off between computational cost and model performance. As detailed in Table 1, our method is highly effective across diverse pruning budgets. At a high-efficiency setting (retaining 128 tokens at a 20.90% FLOPs ratio), our method retains 97.10% of the vanilla model’s average performance. Under this extreme compression, ASAP sees minimal degradation on benchmarks such as MMBench (66.18 vs. 67.71) and MMBench-CN (62.46 vs. 63.60) while robustly maintaining performance on TextVQA (58.88 vs. 61.19).

At a more moderate 31.88% FLOPs ratio (retaining 192 tokens), our model retains an impressive 98.68% of the original average performance, further validating the framework’s efficacy.
At this budget, ASAP even surpasses the vanilla model on several benchmarks, outperforming the full LLaVA-1.5-13B model on MME (1546.40 vs. 1529.93), MMBench (68.29 vs. 67.71), and SQA (72.89 vs. 71.6).

Compared to existing methods, ASAP consistently outperforms the strongest prior baseline, DivPrune [2]. ASAP beats DivPrune’s average performance retention by 1.08 percentage points at the 128-token budget (97.10% vs. 96.02%) and by 2.00 percentage points at the 192-token budget (98.68% vs. 96.68%), confirming its superiority in optimizing the efficiency-to-accuracy frontier.

Table 1: Performance comparison of ASAP with other pruning strategies on LLaVA-1.5-13B [31]. Ratio represents the FLOPs ratio (percentage of vanilla FLOPs retained). Avg refers to the average percentage performance across all benchmarks compared to the vanilla model. Best scores within each token budget are highlighted in bold.

| Method | FLOPs | Ratio (%) | VQAv2 | GQA | SQA | TextVQA | MME | MMB | MMB-CN | Avg |
|---|---|---|---|---|---|---|---|---|---|---|
| LLaVA-1.5-13B | 3.817 | 100 | 80.0 | 63.25 | 71.6 | 61.19 | 1529.93 | 67.71 | 63.60 | 100 |
| Retain 192 tokens (↓ 66.67%) | | | | | | | | | | |
| FastV [ECCV’24] | 1.259 | 32.99 | 75.42 | 58.67 | 70.64 | 57.23 | 1442.31 | 66.43 | 61.05 | 95.37 |
| PruMerge+ [ICCV’25] | 1.253 | 32.83 | 76.97 | 59.72 | 71.10 | 57.12 | 1451.31 | 66.32 | 62.54 | 95.94 |
| SparseVLM [ICML’25] | 1.253 | 32.83 | 78.31 | 57.80 | **73.08** | 51.93 | 1485.27 | 67.61 | 61.77 | 95.75 |
| DivPrune [CVPR’25] | 1.253 | 32.83 | 78.42 | 59.12 | **73.08** | 58.84 | 1482.01 | 65.95 | 59.02 | 96.68 |
| ASAP (Ours) | 1.253 | 31.88 | **79.46** | **62.43** | 72.89 | **60.69** | **1546.40** | **68.29** | **63.40** | **98.68** |
| Retain 128 tokens (↓ 77.78%) | | | | | | | | | | |
| FastV [ECCV’24] | 0.836 | 21.898 | 74.34 | 57.19 | 70.32 | 56.66 | 1411.30 | 64.15 | 60.37 | 93.72 |
| PruMerge+ [ICCV’25] | 0.833 | 21.801 | 75.78 | 57.32 | 71.89 | 56.50 | 1440.38 | 64.63 | 61.51 | 94.91 |
| SparseVLM [ICML’25] | 0.833 | 21.801 | 76.18 | 57.85 | **73.97** | 53.15 | 1470.43 | 66.09 | 61.51 | 95.55 |
| DivPrune [CVPR’25] | 0.833 | 21.801 | 76.12 | **58.82** | 72.78 | 57.95 | 1460.01 | 65.81 | 60.43 | 96.02 |
| ASAP (Ours) | 0.797 | 20.901 | **76.26** | 58.76 | 72.89 | **58.88** | **1481.80** | **66.18** | **62.46** | **97.10** |
5.3 Results on High-Resolution Images T o v alidate the generalizabilit y of ASAP , we in tegrated it into LLaV A-NeXT, a model designed for high-resolution vision tasks. LLaV A-NeXT partitions in- put images into 4 × 4 tiles, resulting in a large and computationally exp ensive sequence of up to ∼ 2 , 880 visual tokens. While this tiling approach enhances p erformance on fine-grained tasks, it introduces significant tok en redundancy , making it an ideal testbed for our method. W e ev aluated ASAP on LLaV A- NeXT using tw o distinct retention budgets of 33.33% and 22.23%, as previously . In addition to F astV, w e use Vispruner [56] as a baseline o ver here since its LLaV A NeXT implementation is av ailable publicly . As shown in T able 2, at the 33.33% budget ( ∼ 960 tokens), our metho d achiev es p erformance that is compa- rable to, and in some cases surpasses, the v anilla LLaV A-NeXT mo del. Moreov er, with a more aggressive pruning rate retaining only 22.23% ( ∼ 640 tokens) of the original tokens, ASAP’s performance degrades by only ∼ 0.98 p oin ts on a ver- age, demonstrating robust high-compression p erformance. F urthermore, ASAP establishes a n ew state-of-the-art, outp erforming the VisPruner [56] baseline by 1.80% and 2.32% at the 33.33% and 22.23% reten tion budgets, resp ectively . 12 P athak et al. T able 2: Performance comparison of ASAP with other pruning strategies on LLaV A-NeXT-7B [30]. A vg refers to the av erage p ercentage performance across all b enc hmarks compared to the v anilla mo del. Best scores are highlighted in red . 
| Method | FLOPs | VQA^V2 | GQA | VQA^Text | MMB | MMVet | Avg |
|---|---|---|---|---|---|---|---|
| LLaVA-NeXT-7B | 20.825 | 81.2 | 62.9 | 59.6 | 65.8 | 40.0 | 100.0 |
| Retain 960 tokens (↓ 66.67%) | | | | | | | |
| FastV [ECCV’24] | 6.477 | 80.33 | 61.98 | 59.32 | 65.12 | 39.4 | 98.89 |
| VisPruner [ICCV’25] | 6.458 | 80.0 | 62.20 | 58.81 | 65.29 | 38.4 | 98.26 |
| ASAP (Ours) | 6.258 | 81.19 | 62.29 | 59.67 | 66.41 | 40.1 | 100.06 |
| Retain 640 tokens (↓ 77.78%) | | | | | | | |
| FastV [ECCV’24] | 4.260 | 77.81 | 61.04 | 58.08 | 64.37 | 37.6 | 96.43 |
| VisPruner [ICCV’25] | 4.252 | 79.82 | 61.22 | 58.34 | 65.29 | 36.3 | 96.70 |
| ASAP (Ours) | 4.144 | 79.91 | 61.17 | 58.98 | 65.35 | 40.48 | 99.02 |

5.4 Results on Different LVLM Architecture

To further validate the generalizability of ASAP, we integrated it into InternVL 2.5 [12], a modern model architecture designed for high-resolution vision tasks. Similar to other high-resolution architectures, InternVL employs a dynamic tiling strategy that generates a massive sequence of visual tokens. While this enhances performance on fine-grained tasks, it also introduces significant token redundancy, making it an ideal testbed for our method. Since InternVL 2.5 implementations of the other baselines are not publicly available, we use FastV as the baseline here. As shown in Table 3, at a FLOPs ratio of 31.56% (∼510 tokens), ASAP maintains the model’s performance, experiencing a minimal average degradation of only 1.24 points compared to the vanilla model while reducing the computational cost from 12.575 to 3.969 TFLOPs. Moreover, ASAP remains robust at a more aggressive FLOPs ratio of 21.17% (∼340 tokens), retaining 95.69% of the vanilla model’s average performance. Furthermore, ASAP outperforms the FastV [10] baseline in average benchmark score by 1.59% and 0.67% at the 31.56% and 21.17% retention budgets, respectively. These results establish the generalizability of the ASAP pruning framework to modern LVLM architectures.
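The Avg column is described only as the average percentage performance relative to the vanilla model. The sketch below shows one plausible reading of that metric, assuming it is the unweighted mean of per-benchmark score ratios (an assumption; the exact rounding convention is not stated). It uses the InternVL 2.5 8B, FastV, and ASAP rows at the ∼510-token budget from Table 3; the function name `average_retention` is illustrative and not taken from the paper.

```python
def average_retention(pruned, vanilla):
    """Unweighted mean of per-benchmark pruned/vanilla ratios, in percent."""
    if pruned.keys() != vanilla.keys():
        raise ValueError("pruned and vanilla must cover the same benchmarks")
    ratios = [pruned[name] / vanilla[name] for name in vanilla]
    return 100.0 * sum(ratios) / len(ratios)


# Scores copied from Table 3 (InternVL 2.5 8B, ~510 retained tokens).
vanilla = {"GQA": 63.40, "SQA-IMG": 97.81, "POPE": 90.59,
           "MME": 1696.26, "MMB-CN": 82.60}
asap_510 = {"GQA": 62.31, "SQA-IMG": 97.07, "POPE": 90.08,
            "MME": 1645.32, "MMB-CN": 82.47}
fastv_510 = {"GQA": 61.44, "SQA-IMG": 96.03, "POPE": 89.10,
             "MME": 1614.42, "MMB-CN": 80.32}

asap_avg = average_retention(asap_510, vanilla)
fastv_avg = average_retention(fastv_510, vanilla)
print(f"ASAP  Avg: {asap_avg:.2f}")
print(f"FastV Avg: {fastv_avg:.2f}")
print(f"Gap      : {asap_avg - fastv_avg:.2f} points")
```

Under this assumption the two rows come out at roughly 98.76 and 97.17, in line with the Avg values reported in Table 3, which suggests the unweighted ratio mean is at least a close approximation of the reported metric.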
Additionally, ASAP’s SG-BiMask component is orthogonal to existing attention-based pruning methods and can be integrated into them to increase their pruning effectiveness. The accuracy gain from integrating SG-BiMask into attention-based pruning is demonstrated in Appendix C.1.

Table 3: Performance comparison of ASAP with other pruning strategies on InternVL 2.5 8B. Avg refers to the average percentage performance across all benchmarks compared to the vanilla model. Best scores within each token budget are highlighted in red.

| Method | FLOPs | Ratio (%) | GQA | SQA^IMG | POPE | MME | MMB^CN | Avg |
|---|---|---|---|---|---|---|---|---|
| InternVL 2.5 8B | 12.575 | 100.0 | 63.40 | 97.81 | 90.59 | 1696.26 | 82.60 | 100.00 |
| Retain 510 tokens | | | | | | | | |
| FastV [ECCV’24] | 3.969 | 31.56 | 61.44 | 96.03 | 89.10 | 1614.42 | 80.32 | 97.17 |
| ASAP (Ours) | 3.969 | 31.56 | 62.31 | 97.07 | 90.08 | 1645.32 | 82.47 | 98.76 |
| Retain 340 tokens | | | | | | | | |
| FastV [ECCV’24] | 2.663 | 21.17 | 59.17 | 91.51 | 88.47 | 1603.51 | 79.33 | 95.02 |
| ASAP (Ours) | 2.663 | 21.17 | 60.00 | 92.36 | 89.00 | 1596.91 | 80.15 | 95.69 |

5.5 Ablation Study

Ablation of framework modules. In this section, we conduct a systematic ablation study to decompose the ASAP framework and quantify the contributions of its core modules. All experiments are performed on the LLaVA-1.5-7B model, with a pruning budget of ∼128 tokens. The performance is evaluated on the TextVQA and MME benchmarks, as they effectively measure fine-grained reasoning and multimodal comprehension. The ablation is structured as an incremental analysis, and the results are summarized in Figure 4.

Baseline (FastV): As a starting point, we evaluate the baseline FastV method, which prunes tokens naively using standard causal Top-k selection. Because it lacks bidirectional spatial awareness, this approach achieves base scores of 53.05 on TextVQA and 1282.81 on MME.

Fig. 4: Ablation of ASAP modules on TextVQA and MME. FastV prunes naively using Top-k selection.
Bidirectional uses Salience-Guided Bidirectional attention values. ASAP adds Salience-Weighted Consolidation and Budget Reallocation. The progressive gains confirm that combining attention-driven selection with feature consolidation maximizes semantic density.

Bidirectional (Module 1): Next, we replace the standard causal attention with our Salience-Guided Bidirectional attention values for token selection. This provides a significant performance leap, yielding an absolute increase of +3.23 on TextVQA (to 56.28) and +92.50 on MME (to 1375.31) over FastV. This validates the hypothesis that relaxing strict causal constraints and permitting bidirectional information flow allows the model to better identify and retain structurally critical visual anchors.

ASAP (Full Framework): Finally, we integrate the Salience-Weighted Consolidation and Budget Reallocation modules to form the complete ASAP architecture. Instead of merely discarding unselected tokens, this stage merges semantically redundant features using salience weights and salvages top-ranked pruned tokens to fill the newly freed budget slots. This optimization of the token budget yields an additional performance increase of +1.13 on TextVQA (reaching 57.41) and +96.85 on MME (reaching 1472.16).

Table 4: System efficiency comparison on LLaVA-1.5-7B. We evaluate the computational cost and memory footprint during the prefill phase.

| Method | # Token | FLOPs (T) | Perception score | Storage (MB) | GPU Memory (GB) | CUDA Time (ms) |
|---|---|---|---|---|---|---|
| LLaVA-1.5-7B | 576 | 3.817 | 1506.46 | 321.39 | 14.33 | 38.27 |
| Retain 192 tokens | | | | | | |
| FastV [ECCV’24] | 192 | 1.259 | 1437.45 | 129.73 | 13.98 | 33.65 |
| ASAP (Ours) | 192 | 1.259 | 1466.19 | 116.64 | 13.96 | 35.13 |
| Retain 128 tokens | | | | | | |
| FastV [ECCV’24] | 128 | 0.836 | 1365.435 | 97.33 | 13.97 | 31.45 |
| ASAP (Ours) | 128 | 0.836 | 1417.524 | 82.14 | 13.96 | 33.45 |
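The consolidation and budget-reallocation stage of the full framework is described here only in prose. The following is a rough sketch of how such a stage could look, not the authors' implementation: the greedy merge order, the cosine-similarity test, the threshold `tau`, and the names `cosine` and `consolidate` are all assumptions introduced for illustration. It keeps the top-`budget` tokens by salience, folds near-duplicate selected tokens together with a salience-weighted average, and refills the slots freed by merging with the highest-ranked pruned tokens.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def consolidate(features, salience, budget, tau=0.9):
    """Hypothetical salience-weighted merge followed by budget refill."""
    if len(features) != len(salience):
        raise ValueError("features and salience must have the same length")
    if any(s <= 0 for s in salience):
        raise ValueError("salience scores are assumed to be strictly positive")

    # Rank tokens by salience; the top `budget` are selected, the rest pruned.
    order = sorted(range(len(features)), key=lambda i: salience[i], reverse=True)
    selected, pruned = order[:budget], order[budget:]

    kept = []  # each entry: [feature vector, accumulated salience]
    for i in selected:
        for slot in kept:
            if cosine(slot[0], features[i]) >= tau:
                # Redundant token: merge into the existing slot, weighting by salience.
                total = slot[1] + salience[i]
                slot[0] = [(slot[1] * a + salience[i] * b) / total
                           for a, b in zip(slot[0], features[i])]
                slot[1] = total
                break
        else:
            kept.append([list(features[i]), salience[i]])

    # Budget reallocation: fill freed slots with the top-ranked pruned tokens.
    for i in pruned:
        if len(kept) >= budget:
            break
        kept.append([list(features[i]), salience[i]])
    return [feature for feature, _ in kept]


# Toy example: tokens 0 and 1 are near-duplicates, so one slot is freed
# and the best pruned token (index 3) is salvaged.
tokens = [[1.0, 0.0], [0.99, 0.01], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
scores = [0.40, 0.30, 0.20, 0.08, 0.02]
merged = consolidate(tokens, scores, budget=3)
print(len(merged), merged)
```

In this toy run the output still contains three tokens: a merged representative of the two near-duplicates, the distinct third token, and one salvaged token that plain top-k selection would have discarded.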
Overall, the progressive gains observed across these stages confirm that combining bidirectional attention-driven selection with salience-weighted feature consolidation maximizes semantic density under a strict token budget.

5.6 Efficiency Analysis

To evaluate the system efficiency of our approach, we compare FLOPs, KV cache storage, GPU memory, and CUDA latency, alongside task accuracy, against FastV [10] on the LLaVA-1.5-7B [31] architecture. As shown in Table 4, reducing the visual token count to 128 yields a substantial decrease in computational cost, dropping the required operations from 3.817 to 0.836 TFLOPs. Under equivalent token retention budgets, our method consistently achieves higher accuracy than FastV while simultaneously reducing the KV cache storage footprint. For instance, at the 128-token budget, our approach improves the perception score by over 52 points (1417.524 vs. 1365.435) while requiring approximately 15% less storage space than FastV (82.14 MB vs. 97.33 MB). Although our dynamic token selection mechanism introduces a marginal inference overhead of roughly two milliseconds (33.45 ms vs. 31.45 ms), it provides a better balance between memory efficiency and downstream model performance.

6 Conclusion

In this work, we introduced ASAP, a novel, training-free token pruning framework that establishes a new state-of-the-art efficiency-to-accuracy trade-off for LVLMs. By systematically mitigating RoPE-induced spatial biases and consolidating redundant visual features, ASAP overcomes the critical bottlenecks of existing causal pruning methods. Through extensive experimentation across a wide array of challenging benchmarks, we demonstrated that our approach consistently outperforms established baselines. Notably, ASAP achieves virtually lossless compression, retaining ∼99.02% of LLaVA-NeXT-7B’s performance while reducing computational FLOPs by ∼80%.
References

1. Achiam, J., Adler, S., Agarwal, S., Ahmad, L., Akkaya, I., Aleman, F.L., Almeida, D., Altenschmidt, J., Altman, S., Anadkat, S., et al.: Gpt-4 technical report. arXiv preprint arXiv:2303.08774 (2023)
2. Alvar, S.R., Singh, G., Akbari, M., Zhang, Y.: Divprune: Diversity-based visual token pruning for large multimodal models. In: Proceedings of the Computer Vision and Pattern Recognition Conference. pp. 9392–9401 (2025)
3. Arif, K.H.I., Yoon, J., Nikolopoulos, D.S., Vandierendonck, H., John, D., Ji, B.: Hired: Attention-guided token dropping for efficient inference of high-resolution vision-language models. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 39, pp. 1773–1781 (2025)
4. Bai, J., Bai, S., Chu, Y., Cui, Z., Dang, K., Deng, X., Fan, Y., Ge, W., Han, Y., Huang, F., et al.: Qwen technical report. arXiv preprint arXiv:2309.16609 (2023)
5. Bai, J., Bai, S., Yang, S., Wang, S., Tan, S., Wang, P., Lin, J., Zhou, C., Zhou, J.: Qwen-vl: A frontier large vision-language model with versatile abilities. arXiv preprint arXiv:2308.12966 1(2), 3 (2023)
6. Bai, S., Chen, K., Liu, X., Wang, J., Ge, W., Song, S., Dang, K., Wang, P., Wang, S., Tang, J., et al.: Qwen2.5-vl technical report. arXiv preprint (2025)
7. Bolya, D., Fu, C.Y., Dai, X., Zhang, P., Feichtenhofer, C., Hoffman, J.: Token merging: Your vit but faster. arXiv preprint arXiv:2210.09461 (2022)
8. Cao, Q., Paranjape, B., Hajishirzi, H.: Pumer: Pruning and merging tokens for efficient vision language models. arXiv preprint arXiv:2305.17530 (2023)
9. Chen, J., Ye, L., He, J., Wang, Z.Y., Khashabi, D., Yuille, A.: Efficient large multi-modal models via visual context compression. Advances in Neural Information Processing Systems 37, 73986–74007 (2024)
10.
Chen, L., Zhao, H., Liu, T., Bai, S., Lin, J., Zhou, C., Chang, B.: An image is worth 1/2 tokens after layer 2: Plug-and-play inference acceleration for large vision-language models. In: European Conference on Computer Vision. pp. 19–35. Springer (2024)
11. Chen, M., Shao, W., Xu, P., Lin, M., Zhang, K., Chao, F., Ji, R., Qiao, Y., Luo, P.: Diffrate: Differentiable compression rate for efficient vision transformers. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 17164–17174 (2023)
12. Chen, Z., Wang, W., Cao, Y., Liu, Y., Gao, Z., Cui, E., Zhu, J., Ye, S., Tian, H., Liu, Z., et al.: Expanding performance boundaries of open-source multimodal models with model, data, and test-time scaling. arXiv preprint (2024)
13. Cheng, Z., Leng, S., Zhang, H., Xin, Y., Li, X., Chen, G., Zhu, Y., Zhang, W., Luo, Z., Zhao, D., et al.: Videollama 2: Advancing spatial-temporal modeling and audio understanding in video-llms. arXiv preprint arXiv:2406.07476 (2024)
14. Chiang, W.L., Li, Z., Lin, Z., Sheng, Y., Wu, Z., Zhang, H., Zheng, L., Zhuang, S., Zhuang, Y., Gonzalez, J.E., et al.: Vicuna: An open-source chatbot impressing gpt-4 with 90%* chatgpt quality. See https://vicuna.lmsys.org (accessed 14 April 2023) 2(3), 6 (2023)
15. Dosovitskiy, A.: An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 (2020)
16. Endo, M., Wang, X., Yeung-Levy, S.: Feather the throttle: Revisiting visual token pruning for vision-language model acceleration. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 22826–22835 (2025)
17. Fayyaz, M., Koohpayegani, S.A., Jafari, F.R., Sengupta, S., Joze, H.R.V., Sommerlade, E., Pirsiavash, H., Gall, J.: Adaptive token sampling for efficient vision transformers. In: European conference on computer vision. pp. 396–414. Springer (2022)
18.
Feng, Z., Zhang, S.: Efficient vision transformer via token merger. IEEE Transactions on Image Processing 32, 4156–4169 (2023)
19. Fu, C., Chen, P., Shen, Y., Qin, Y., Zhang, M., Lin, X., Qiu, Z., Lin, W., Yang, J., Zheng, X., Li, K., Sun, X., Ji, R.: Mme: A comprehensive evaluation benchmark for multimodal large language models. ArXiv abs/2306.13394 (2023), https://api.semanticscholar.org/CorpusID:259243928
20. Goyal, Y., Khot, T., Summers-Stay, D., Batra, D., Parikh, D.: Making the v in vqa matter: Elevating the role of image understanding in visual question answering. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 6904–6913 (2017)
21. Guo, Z., Xu, R., Yao, Y., Cui, J., Ni, Z., Ge, C., Chua, T.S., Liu, Z., Huang, G.: Llava-uhd: an lmm perceiving any aspect ratio and high-resolution images. In: European Conference on Computer Vision. pp. 390–406. Springer (2024)
22. Haurum, J.B., Escalera, S., Taylor, G.W., Moeslund, T.B.: Which tokens to use? investigating token reduction in vision transformers. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 773–783 (2023)
23. Hudson, D.A., Manning, C.D.: Gqa: A new dataset for real-world visual reasoning and compositional question answering. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 6700–6709 (2019)
24. Jiang, A., Sablayrolles, A., Mensch, A., Bamford, C., Chaplot, D., Casas, D., Bressand, F., Lengyel, G., Lample, G., Saulnier, L., et al.: Mistral 7b. arxiv 2023. arXiv preprint arXiv:2310.06825 (2024)
25. Kim, M., Gao, S., Hsu, Y.C., Shen, Y., Jin, H.: Token fusion: Bridging the gap between token pruning and token merging. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision. pp. 1383–1392 (2024)
26.
Li, B., Zhang, Y., Guo, D., Zhang, R., Li, F., Zhang, H., Zhang, K., Zhang, P., Li, Y., Liu, Z., et al.: Llava-onevision: Easy visual task transfer. arXiv preprint arXiv:2408.03326 (2024)
27. Liang, Y., Ge, C., Tong, Z., Song, Y., Wang, J., Xie, P.: Not all patches are what you need: Expediting vision transformers via token reorganizations. arXiv preprint arXiv:2202.07800 (2022)
28. Lin, B., Ye, Y., Zhu, B., Cui, J., Ning, M., Jin, P., Yuan, L.: Video-llava: Learning united visual representation by alignment before projection. In: Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing. pp. 5971–5984 (2024)
29. Liu, H., Li, C., Li, Y., Lee, Y.J.: Improved baselines with visual instruction tuning. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 26296–26306 (2024)
30. Liu, H., Li, C., Li, Y., Li, B., Zhang, Y., Shen, S., Lee, Y.J.: Llavanext: Improved reasoning, ocr, and world knowledge (2024)
31. Liu, H., Li, C., Wu, Q., Lee, Y.J.: Visual instruction tuning. Advances in neural information processing systems 36, 34892–34916 (2023)
32. Liu, Y., Duan, H., Zhang, Y., Li, B., Zhang, S., Zhao, W., Yuan, Y., Wang, J., He, C., Liu, Z., et al.: Mmbench: Is your multi-modal model an all-around player? In: European conference on computer vision. pp. 216–233. Springer (2024)
33. Lu, P., Mishra, S., Xia, T., Qiu, L., Chang, K.W., Zhu, S.C., Tafjord, O., Clark, P., Kalyan, A.: Learn to explain: Multimodal reasoning via thought chains for science question answering. Advances in Neural Information Processing Systems 35, 2507–2521 (2022)
34. Radford, A., Kim, J.W., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., Sastry, G., Askell, A., Mishkin, P., Clark, J., et al.: Learning transferable visual models from natural language supervision. In: International conference on machine learning. pp.
8748–8763. PMLR (2021)
35. Rao, Y., Zhao, W., Liu, B., Lu, J., Zhou, J., Hsieh, C.J.: Dynamicvit: Efficient vision transformers with dynamic token sparsification. Advances in neural information processing systems 34, 13937–13949 (2021)
36. Shang, Y., Cai, M., Xu, B., Lee, Y.J., Yan, Y.: Llava-prumerge: Adaptive token reduction for efficient large multimodal models. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 22857–22867 (2025)
37. Singh, A., Natarajan, V., Shah, M., Jiang, Y., Chen, X., Batra, D., Parikh, D., Rohrbach, M.: Towards vqa models that can read. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 8317–8326 (2019)
38. Su, J., Ahmed, M., Lu, Y., Pan, S., Bo, W., Liu, Y.: Roformer: Enhanced transformer with rotary position embedding. Neurocomputing 568, 127063 (2024)
39. Su, J., Lu, Y., Pan, S., Murtadha, A., Wen, B., Liu, Y.: Roformer: Enhanced transformer with rotary position embedding (2023), https://arxiv.org/abs/2104.09864
40. Sun, Q., Fang, Y., Wu, L., Wang, X., Cao, Y.: Eva-clip: Improved training techniques for clip at scale. arXiv preprint arXiv:2303.15389 (2023)
41. Team, G., Georgiev, P., Lei, V.I., Burnell, R., Bai, L., Gulati, A., Tanzer, G., Vincent, D., Pan, Z., Wang, S., et al.: Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv preprint arXiv:2403.05530 (2024)
42. Tian, X., Zou, S., Yang, Z., Zhang, J.: Identifying and mitigating position bias of multi-image vision-language models. In: Proceedings of the Computer Vision and Pattern Recognition Conference. pp. 10599–10609 (2025)
43. Touvron, H., Lavril, T., Izacard, G., Martinet, X., Lachaux, M.A., Lacroix, T., Rozière, B., Goyal, N., Hambro, E., Azhar, F., et al.: Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971 (2023)
44.
Touvron, H., Martin, L., Stone, K., Albert, P., Almahairi, A., Babaei, Y., Bashlykov, N., Batra, S., Bhargava, P., Bhosale, S., et al.: Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288 (2023)
45. Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., Polosukhin, I.: Attention is all you need. Advances in neural information processing systems 30 (2017)
46. Wei, S., Ye, T., Zhang, S., Tang, Y., Liang, J.: Joint token pruning and squeezing towards more aggressive compression of vision transformers. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 2092–2101 (2023)
47. Wen, Z., Gao, Y., Wang, S., Zhang, J., Zhang, Q., Li, W., He, C., Zhang, L.: Stop looking for “important tokens” in multimodal language models: Duplication matters more. In: Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing. pp. 9961–9980 (2025)
48. Xing, L., Huang, Q., Dong, X., Lu, J., Zhang, P., Zang, Y., Cao, Y., He, C., Wang, J., Wu, F., et al.: Pyramiddrop: Accelerating your large vision-language models via pyramid visual redundancy reduction. arXiv preprint arXiv:2410.17247 (2024)
49. Xing, Y., Li, Y., Laptev, I., Lu, S.: Mitigating object hallucination via concentric causal attention. Advances in neural information processing systems 37, 92012–92035 (2024)
50. Yang, S., Chen, Y., Tian, Z., Wang, C., Li, J., Yu, B., Jia, J.: Visionzip: Longer is better but not necessary in vision language models. In: Proceedings of the Computer Vision and Pattern Recognition Conference. pp. 19792–19802 (2025)
51. Ye, W., Wu, Q., Lin, W., Zhou, Y.: Fit and prune: Fast and training-free visual token pruning for multi-modal large language models. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 39, pp. 22128–22136 (2025)
52.
Ye, X., Gan, Y., Ge, Y., Zhang, X.P., Tang, Y.: Atp-llava: Adaptive token pruning for large vision language models. In: Proceedings of the Computer Vision and Pattern Recognition Conference. pp. 24972–24982 (2025)
53. Yu, W., Yang, Z., Li, L., Wang, J., Lin, K., Liu, Z., Wang, X., Wang, L.: Mm-vet: Evaluating large multimodal models for integrated capabilities. arXiv preprint arXiv:2308.02490 (2023)
54. Zhai, X., Mustafa, B., Kolesnikov, A., Beyer, L.: Sigmoid loss for language image pre-training. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 11975–11986 (2023)
55. Zhang, J., Meng, D., Zhang, Z., Huang, Z., Wu, T., Wang, L.: p-mod: Building mixture-of-depths mllms via progressive ratio decay. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 3705–3715 (2025)
56. Zhang, Q., Cheng, A., Lu, M., Zhang, R., Zhuo, Z., Cao, J., Guo, S., She, Q., Zhang, S.: Beyond text-visual attention: Exploiting visual cues for effective token pruning in vlms. In: Proceedings of the IEEE/CVF International Conference on Computer Vision. pp. 20857–20867 (2025)
57. Zhang, S., Fang, Q., Yang, Y., Feng, Y.: Llava-mini: Efficient image and video large multimodal models with one vision token. In: Yue, Y., Garg, A., Peng, N., Sha, F., Yu, R. (eds.) International Conference on Representation Learning. vol. 2025, pp. 53285–53310 (2025), https://proceedings.iclr.cc/paper_files/paper/2025/file/8400a2ec9ba85bfdb41eb2a584c0876d-Paper-Conference.pdf
58. Zhang, Y., Fan, C.K., Ma, J., Zheng, W., Huang, T., Cheng, K., Gudovskiy, D., Okuno, T., Nakata, Y., Keutzer, K., et al.: Sparsevlm: Visual token sparsification for efficient vision-language model inference. arXiv preprint (2024)
59. Zhang, Y., Wu, J., Li, W., Li, B., Ma, Z., Liu, Z., Li, C.: Video instruction tuning with synthetic data.
arXiv preprint arXiv:2410.02713 (2024)

A Appendix

B ASAP Efficiency Evaluation

Presenting only the average number of retained tokens to report pruning efficiency may be insufficient, as it fails to account for the heterogeneous nature of modern pruning strategies. To provide a more standardized and accurate measure of computational cost, we adopt theoretical FLOPs as our primary efficiency metric, a practice consistent with prior work [10, 48]. For our evaluation, we calculate the FLOPs attributable to the most computationally intensive components of the LLM backbone: the multi-head attention (MHA) and feed-forward network (FFN) modules. Additionally, we only consider the computation required for the image tokens. The theoretical FLOPs at a single LLM layer are $4vd^2 + 2v^2d + 3vdm$, where $v$ is the total number of image tokens in that layer, $d$ is the hidden state size, and $m$ is the intermediate size of the FFN. If the tokens are pruned repeatedly at multiple layers, the total FLOPs are obtained by:

$$\Omega(\hat{v}) = \sum_{n=0}^{S-1} L_n \times \left(4 v_n d^2 + 2 v_n^2 d + 3 v_n d m\right) \quad \text{s.t.} \quad v_n = \lceil \lambda_n v \rceil, \; n = 0, 1, \ldots, S-1 \tag{7}$$

where tokens are pruned $S$ times with respective pruning ratios $\lambda_n$, and $L_n$ is the number of LLM decoder layers that operate on $v_n$ tokens. The FLOPs ratio is then given by:

$$\eta = \frac{\Omega(\hat{v})}{L \times \left(4vd^2 + 2v^2d + 3vdm\right)} \tag{8}$$

where $L$ denotes the total number of LLM decoder layers, so that $L \times (4vd^2 + 2v^2d + 3vdm)$ represents the FLOPs without pruning.

Fig. 5: Impact of integrating SG-BiMask in existing pruning frameworks. Our SG-BiMask component consistently improves performance when integrated with the standard causal attention baseline (FastV) across all evaluated benchmarks.

C Additional Experiments

In this section, we present additional experimental results of our ASAP pruning framework to provide further insights on the framework’s performance.
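As a supplementary check on the FLOPs accounting of Appendix B (Eqs. 7 and 8), the sketch below evaluates the formulas numerically. The backbone shape used here (hidden size 4096, FFN intermediate size 11008, 32 decoder layers, 576 image tokens) is an assumption corresponding to a LLaVA-1.5-7B-style model and is not stated in this appendix; the function names are illustrative. Each stage is passed as an `(L_n, v_n)` pair, so the ceiling in Eq. 7 is assumed to have been applied already.

```python
def layer_flops(v, d, m):
    """Per-layer FLOPs for v image tokens: 4*v*d^2 + 2*v^2*d + 3*v*d*m."""
    return 4 * v * d**2 + 2 * v**2 * d + 3 * v * d * m


def total_flops(stages, d, m):
    """Eq. (7): sum over stages, where each stage is a (L_n, v_n) pair."""
    return sum(layers * layer_flops(tokens, d, m) for layers, tokens in stages)


def flops_ratio(stages, v, d, m):
    """Eq. (8): pruned FLOPs divided by the FLOPs of the unpruned model."""
    num_layers = sum(layers for layers, _ in stages)
    return total_flops(stages, d, m) / (num_layers * layer_flops(v, d, m))


# Assumed backbone shape (LLaVA-1.5-7B style); not given in the appendix.
D, M, LAYERS, V = 4096, 11008, 32, 576

# Single-stage pruning (S = 1): every decoder layer sees the reduced token set.
vanilla_tflops = total_flops([(LAYERS, V)], D, M) / 1e12
pruned_192_tflops = total_flops([(LAYERS, 192)], D, M) / 1e12
ratio_192 = flops_ratio([(LAYERS, 192)], V, D, M)

print(f"vanilla   : {vanilla_tflops:.3f} TFLOPs")
print(f"192 tokens: {pruned_192_tflops:.3f} TFLOPs ({100 * ratio_192:.2f}% of vanilla)")
```

With these assumed dimensions the formula gives about 3.817 TFLOPs for 576 tokens and about 1.253 TFLOPs (roughly 32.83%) for 192 tokens, which agrees with the corresponding FLOPs and ratio entries reported in the tables. A multi-stage schedule can be expressed by passing several `(L_n, v_n)` pairs whose layer counts sum to the model depth.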
C.1 SG-BiMask as a Plug-and-Play Component

To demonstrate its modularity, we designed SG-BiMask as a lightweight, plug-and-play component capable of seamlessly upgrading existing LVLM inference pipelines. We empirically validate this versatility by directly integrating it into FastV [10], a widely adopted baseline that conventionally relies on strict causal attention for top-k token pruning. For this experiment, we used the LLaVA-1.5-7B model with a pruning budget of ∼128 tokens (FLOPs: 0.797). As Figure 5 illustrates, augmenting FastV with our bidirectional mask yields consistent performance gains across multiple benchmarks. By allowing visual tokens to attend to one another bidirectionally, regardless of their 1D sequence position, this drop-in module produces a richer, spatially aware representation prior to compression.
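The difference between strict causal attention and bidirectional attention among visual tokens can be made concrete with a small mask-construction sketch. This is a deliberately simplified, hard (binary) mask and not the SG-BiMask itself, which the paper describes as a dynamic, salience-guided soft mask; the function name `build_mask` and the token layout are hypothetical. It only illustrates lifting the causal constraint inside the visual span while leaving all other positions causal.

```python
def build_mask(seq_len, vis_start, vis_end, bidirectional_visual):
    """Boolean attention mask; mask[q][k] is True if query q may attend to key k.

    Visual tokens occupy positions vis_start <= i < vis_end.
    """
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            causal = k <= q  # standard decoder rule: look only at the past
            both_visual = (vis_start <= q < vis_end) and (vis_start <= k < vis_end)
            row.append(causal or (bidirectional_visual and both_visual))
        mask.append(row)
    return mask


# Toy layout: 1 system token, 3 visual tokens (positions 1..3), 2 text tokens.
causal = build_mask(6, 1, 4, bidirectional_visual=False)
bidir = build_mask(6, 1, 4, bidirectional_visual=True)

for name, m in (("causal", causal), ("bidirectional-visual", bidir)):
    print(name)
    for row in m:
        print("".join("1" if allowed else "." for allowed in row))
```

In the causal mask the first visual token cannot see later visual tokens, so attention scores used for top-k selection depend on 1D position. In the second mask every visual token sees the whole visual span, while the system and text tokens keep their causal view.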
