LLM-Guided Reinforcement Learning for Audio-Visual Speech Enhancement

In existing Audio-Visual Speech Enhancement (AVSE) methods, objectives such as Scale-Invariant Signal-to-Noise Ratio (SI-SNR) and Mean Squared Error (MSE) are widely used; however, they often correlate poorly with perceptual quality and provide limit…

Authors: Chih-Ning Chen, Jen-Cheng Hou, Hsin-Min Wang

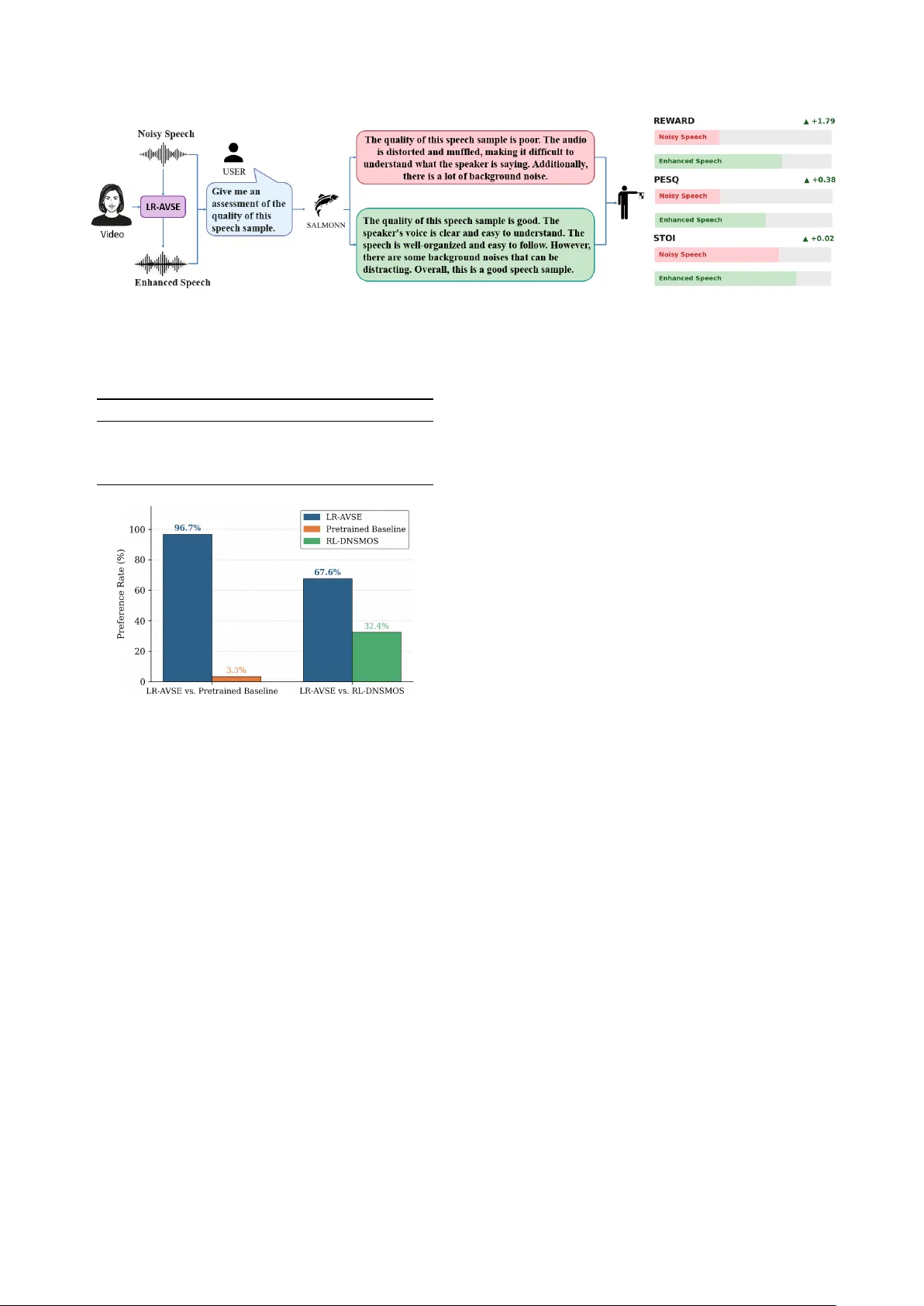

LLM-Guided Reinf or cement Learning f or A udio-V isual Speech Enhancement Chih-Ning Chen 1 , J en-Cheng Hou 2 , Hsin-Min W ang 2 , Shao-Y i Chien 1 , Y u Tsao 2 , ∗∗ , F an-Gang Zeng 3 1 Department of Electrical Engineering, National T aiwan Uni v ersity , T aiwan 2 Research Center for Information T echnology Innov ation, Academia Sinica, T aiw an 3 Center for Hearing Research, Uni versity of California Irvine, USA { ning, sychien } @media.ntu.ee.edu.tw, { jchou, whm, yu.tsao } @citi.sinica.edu.tw, fzeng@uci.edu Abstract In existing Audio-V isual Speech Enhancement (A VSE) meth- ods, objectiv es such as Scale-In v ariant Signal-to-Noise Ratio (SI-SNR) and Mean Squared Error (MSE) are widely used; howe ver , they often correlate poorly with perceptual quality and provide limited interpretability for optimization. This work pro- poses a reinforcement learning–based A VSE framework with a Large Language Model (LLM)-based interpretable rew ard model. An audio LLM generates natural language descriptions of enhanced speech, which are con verted by a sentiment analy- sis model into a 1–5 rating score serving as the PPO reward for fine-tuning a pretrained A VSE model. Compared with scalar metrics, LLM-generated feedback is semantically rich and ex- plicitly describes improv ements in speech quality . Experiments on the 4th COG-MHEAR A VSE Challenge (A VSEC-4) dataset show that the proposed method outperforms a supervised base- line and a DNSMOS-based RL baseline in PESQ, ST OI, neural quality metrics, and subjectiv e listening tests. Index T erms : speech enhancement, reinforcement learning, human feedback, speech quality , LLM 1. Introduction In real-world environments, speech is often corrupted by back- ground noise. Speech Enhancement (SE) techniques are there- fore widely used to suppress noise and improve speech qual- ity and intelligibility . Compared to con v entional SE, Audio- V isual Speech Enhancement (A VSE) additionally incorporates visual information, providing complementary cues that en- hance denoising performance [1, 2, 3, 4]. Prior studies have demonstrated that integrating visual modality significantly im- prov es ov erall performance [5, 6, 7, 8]. Howe ver , most A VSE systems are trained using con ventional objectiv es such as Scale-In variant Signal-to-Noise Ratio (SI-SNR) [9] and Mean Squared Error (MSE). Although effectiv e for optimization, these objectiv es do not necessarily align with human subjecti ve perception, creating a gap between training targets and actual listening experience. Recent Generative Adversarial Network (GAN)-based ap- proaches attempt to incorporate e valuation metrics directly into training. MetricGAN [10, 11] designs a learnable discriminator to approximate and optimize Perceptual Evaluation of Speech Quality (PESQ) [12], while CMGAN [13] refines both mag- nitude and phase to improve speech naturalness. Despite the widespread use of metrics such as PESQ, Short-T ime Objec- tiv e Intelligibility (STOI) [14], and Scale-Inv ariant Signal-to- Distortion Ratio (SI-SDR) [9], higher scores do not always cor- respond to better perceiv ed quality . Improvements in SI-SNR, ** indicates the corresponding author . for e xample, may still introduce artifacts or unnatural distor- tions not fully captured by objectiv e measures. The emergence of Large Language Models (LLMs) [15] provides new oppor- tunities for perceptual assessment. Recent audio LLMs demon- strate strong capabilities in evaluating speech quality [16, 17]. Giv en a prompt, such models generate natural language descrip- tions addressing clarity , noise, and distortion [18]. These tex- tual ev aluations complement traditional metrics and can be con- verted into numerical scores via sentiment analysis, enabling their integration into training objecti ves. In this work, we propose an LLM-based reinforcement learning framework for A VSE, termed LR-A VSE. A pretrained LLM generates perceptually aligned speech quality assessments that serve as reward signals during optimization. Compared with prior RL-based SE approaches—such as using sound qual- ity measures [19], automatic speech recognition performance [20], predicted MOS of NISQA [21, 22], or direct preference optimization with a neural MOS predictor [23]—our method introduces LLM-generated natural language descriptions as re- wards, extending perceptual alignment to the audio-visual set- ting while providing interpretability be yond scalar metrics. T o our knowledge, the proposed LR-A VSE is the first framew ork to conv ert LLM-generated descriptive e valuations into reward signals for A VSE optimization. This enables train- ing guided by explicit, human-interpretable explanations. The LLM remains frozen during training to ensure a stable reward criterion. Unlike concurrent work [23], which relies solely on scalar-valued objectiv es, our approach first produces tex- tual quality descriptions and then con verts them into numeri- cal scores, preserving the semantic basis of ev aluation. Beyond optimizing scores, this design enhances interpretability: the te x- tual feedback clarifies why a sample is rated higher , such as im- prov ed clarity , reduced noise, or diminished distortion. By in- corporating natural language quality feedback into SE training, our framework moves beyond black-box numerical optimiza- tion tow ard perceptually grounded and explainable learning. 2. Methodology This section describes in detail the technical architecture of the proposed LR-A VSE approach. W e first introduce the con ven- tional A VSE model as the base architecture, then reformulate the SE problem as a reinforcement learning problem, and de- scribe the RL-based policy optimization strategy and reward model design. The ov erall system pipeline and architecture are illustrated in Figure 1. 2.1. The A VSE Model The A VSE model in our framew ork is an en- coder–separator–decoder architecture adopted in the A VSE4 Figure 1: The training procedur e of the proposed LR-A VSE frame work. Figure 2: Pipeline of the LLM-based interpr etable re ward gen- eration. model [24]. Let f θ ( · ) denote the neural network with param- eters θ , which takes audio and visual inputs and produces the enhanced speech. The noisy speech wa veform is denoted as x ∈ R C × T , where C denotes the number of channels and T denotes the temporal length. The corresponding visual input is represented as v ∈ R 1 × F × H × W , where F is the number of frames, H and W denote the height and width of each video frame, respec- tiv ely . First, the encoder transforms the time-domain signal into a high-dimensional representation: W = ReLU(Con v1D( x )) , W ∈ R B × N × K , (1) where N is the channel size, K is the length, and B is the batch size. In the visual branch, visual features are extracted from the video via VisualF rontend( · ) : V = VisualF rontend( v ) , V ∈ R B × D v × T v , (2) where D v denotes the visual feature dimension, and T v denotes the temporal length. The separator adopts a T emporal Con volutional Network (TCN) architecture [25]. The visual features are first processed by depthwise temporal conv olution and pointwise con volution, then fused with the audio features and fed into the TCN, which ultimately predicts a time-domain mask, ˆ M ∈ R B × C × N × K . The decoder applies the predicted mask to the encoder’ s audio features to reconstruct the corresponding time-domain wa veform ˆ y ∈ R C × T : ˆ y = Deco der( W ⊙ ˆ M ) . (3) During training, the baseline uses SI-SNR as the optimiza- tion objectiv e. Given the clean speech s and the model’ s esti- mated speech ˆ y , the loss function is defined as: L SI-SNR = − SI - SNR( s, ˆ y ) . (4) 2.2. SE as a Reinfor cement Learning Problem W e define the supervised fine-tuned model as π base θ : S → A , where S denotes the state space, encompassing all possible noisy waveform distributions and thus constituting a continuous space, and A denotes the action space, comprising all possible mask distributions. In this formulation, the predicted mask ˆ M is interpreted as the action. Since the original mask output is deterministic, we inject Gaussian noise into the mask to satisfy the stochasticity requirement of reinforcement learning, yielding the RL mask ˆ M RL : ˆ M RL = f θ ( x, v ) + n, n ∼ N (0 , σ 2 ) , (5) where σ is the standard deviation controlling the degree of stochasticity , which determines the magnitude of noise added to the mask. The RL-optimized polic y is denoted as π RL θ , while the orig- inal pretrained policy is denoted as π base θ . 2.3. Reward Model Design As illustrated in Figures 1 and 2, the A VSE model outputs en- hanced speech, which is ev aluated by the reward model to up- date the parameters jointly with the Proximal Policy Optimiza- tion (PPO) loss [26, 27] and SI-SNR loss. W e employ a speech- language reward model r ϕ ( · ) , which uses an LLM to generate textual descriptions from speech, followed by a sentiment anal- ysis model that produces a 1–5 sentiment score. T o stabilize training, we use relativ e improv ement as the rew ard. Let ˆ y RL be the output of π RL θ and ˆ y base be the output of π base θ . The reward is computed as: R = r ϕ ( ˆ y RL ) − r ϕ ( ˆ y base ) , (6) where r ϕ ( ˆ y ) = Sentimen t analysis(LLM( ˆ y )) . (7) The natural language descriptions generated by the LLM, such as “the speech is clear but still has slight background noise” or “the denoising ef fect is good but with a slight sense of distortion”, provide interpretability for the reward, making the training process more than mere score optimization. T o validate the effecti veness of the LLM-based inter- pretable reward, we also implement a comparativ e method that uses the predicted MOS score of DNSMOS as the reward. DNSMOS [28, 29] is a pretrained speech quality assessment model that directly predicts MOS scores for speech. In this comparativ e setting, Equation (7) becomes: r ϕ ( ˆ y ) = DNSMOS( ˆ y ) , (8) while the remaining training procedure stays identical. This allows us to quantify the adv antage of the LLM-based inter- pretable rew ard over a con ventional scalar reward. The ov erall optimization objectiv e is: L ( ϕ ) = R − β · KL π RL ϕ ( ˆ y | x ) , π base ϕ ( ˆ y | x ) , (9) where R is the relativ e reward from Equation (6), and β controls the KL div ergence between the RL polic y and the base polic y to prev ent the model from deviating excessi vely from the original strategy . Policy updates are based on PPO [26], but we further sim- plify its structure. Since each episode consists of a single-step decision and the relative re ward already embeds baseline infor- mation, we replace the con ventional advantage function A t with L ( ϕ ) from Equation (9), eliminating the need for an additional critic network. The PPO clip loss is defined as: L clip ( ϕ ) = E x ∼D " − min π RL ϕ ( ˆ y | x ) π RL ϕ − ( ˆ y | x ) L ( ϕ ) , clip π RL ϕ ( ˆ y | x ) π RL ϕ − ( ˆ y | x ) , 1 − ϵ, 1 + ϵ L ( ϕ ) !# , (10) where ϵ controls the range of the policy update step, ϕ − denotes the parameters from the previous iteration, and D is the train- ing dataset used for supervised fine-tuning to obtain the initial pretrained policy π base θ . During the fine-tuning stage, we additionally incorporate the original pretraining loss to stabilize the model. The final total loss function is defined as: L total = L clip + γ L SI - SNR , (11) where γ balances the RL objectiv e and the original SI-SNR SE objectiv e. 3. Experimental Setup 3.1. Dataset W e evaluate the proposed LR-A VSE method on the 4th COG-MHEAR Audio-V isual Speech Enhancement Challenge (A VSEC-4) dataset [24]. The training set contains 34,524 scenes, with target speakers dra wn from 605 TED/TEDx speak- ers in the LRS3 [ 30] dataset. Noise sources include 405 compet- ing speakers and 7,346 noise files spanning 15 noise categories, including domestic noises (from CEC1 [31]), freesound clips (from DNS v2 [32]), and music (from MedleyDB [33]). The validation set contains 3365 scenes with 85 target speakers; noise sources include 30 competing speakers and 1,825 noise files. The evaluation set contains 3,180 scenes, and the noises are a subset of those present in the training set. Signal-to-noise ratios range from − 18 dB to +6 . 55 dB. Unlike the simulated room impulse responses used in the training data, the test set employs real recorded room impulse responses cap- tured in three conference rooms at distances of 1 to 2 meters, which poses an additional challenge for model generalization. All speech signals are downsampled to 16 kHz with a bit depth of 16. Impulse responses are 6th-order (49-channel) am- bisonics signals downsampled to 16 kHz. In this work, we con- duct experiments using binaural signals. Each scene provides silent video, target speech, and their mix ed audio. 3.2. T raining The training procedure consists of two stages. In the pretrain- ing stage, we initialize the model with the pretrained weights provided by the A VSE Challenge 4 organizers. In the RL fine- tuning stage, the hyperparameters are set as follo ws: σ = 0 . 05 , PPO clip range ϵ = 0 . 1 , β = 0 . 0001 , SI-SNR loss weight γ = 1 . 0 , and learning rate is set to 0 . 001 . For the LLM, we adopt SALMONN [34], which has been fine-tuned for speech-related tasks, particularly speech quality understanding, and is therefore capable of generating semanti- cally meaningful quality-related descriptions from speech. Dur- ing training, we use one of the prompts of ficially recommended by SALMONN: “Giv e me an assessment of the quality of this speech sample, ” to guide the model in producing natural lan- guage ev aluations of speech quality . The generated textual descriptions are then fed into a sen- timent analysis model, specifically BER T [35, 36], which con- verts them into quality scores on a scale of 1 to 5, serving as the final rew ard signal. 3.3. Baselines W e compare our method against two baselines. All models are built upon the same pretrained A VSE backbone, which adopts an encoder–separator–decoder architecture and is ini- tially trained with supervised learning using SI-SNR as the ob- jectiv e function. The pretrained model and weights are offi- cially provided by the 4th COG-MHEAR Audio-V isual Speech Enhancement Challenge (A VSEC-4). Reinforcement learning fine-tuning is then applied on top of this pretrained model following the reinforcement learning from human feedback (RLHF) paradigm, where a reward model provides feedback to guide policy optimization via PPO. • Pretrained Baseline : This model uses the A VSEC-4 pro- vided pretrained weights and has been trained solely with supervised learning using SI-SNR as the optimization objec- tiv e, without an y RL fine-tuning. It represents the conv en- tional supervised A VSE approach. • RL-DNSMOS : This baseline follows the same RL fine-tuning pipeline as our method, but replaces the SALMONN+BER T reward model with DNSMOS. DNS- MOS is a pretrained speech quality assessment model that directly outputs MOS predictions on a scale of 1 to 5. This comparison allows us to quantify the advantage of the LLM-based interpretable reward over a con v entional scalar rew ard. For fair comparison, RL-DNSMOS uses the same PPO hyperparameters ( σ = 0 . 05 , ϵ = 0 . 1 , β = 0 . 0001 , γ = 1 . 0 ) and training procedure as our proposed method. 4. Results 4.1. Objective Quality Metrics As shown in T able 1, ev aluated on the test set, LR-A VSE achiev es a PESQ of 1.57, outperforming the Pretrained Base- line at 1.45 and RL-DNSMOS at 1.54. Regarding the NISQA- predicted MOS, LR-A VSE reaches 1.29, significantly outper- forming the Noisy input at 0.97 and the Pretrained Baseline at 0.99. On the VQscore [37] and SpeechBER TScore (S-BER T) [38, 39, 40] metrics, LR-A VSE obtains scores of 0.62 and 0.57, respectiv ely , achieving the best overall performance among all compared methods. A comparison with RL-DNSMOS further highlights the advantage of the LLM-based interpretable re- ward: although both methods share the same RL fine-tuning framew ork, the LLM-based interpretable reward outperforms the DNSMOS-based re ward on PESQ, STOI and neural speech quality assessment scores, indicating that natural language de- scriptions provide richer quality information that helps the model learn more nuanced enhancement strategies. Figure 3: LR-A VSE inference with SALMONN. Rewards fr om textual descriptions align with PESQ and STOI, demonstrating LR-A VSE’ s interpr etability . T able 1: Objective r esults on the A VSEC-4 test set. Method PESQ STOI NISQA VQscore S-BER T Noisy 1.31 0.55 0.97 0.58 0.55 Baseline 1.45 0.48 0.99 0.61 0.54 RL-DNSMOS 1.54 0.57 1.15 0.62 0.56 LR-A VSE 1.57 0.58 1.29 0.62 0.57 Figure 4: A/B prefer ence test results on the A VSEC-4 test set. 4.2. Subjective Evaluation T o subjectively evaluate speech quality , we conducted an A/B preference test. A total of 21 participants were recruited for the experiment. T w o comparison conditions were designed: LR-A VSE vs. Pretrained Baseline and LR-A VSE vs. RL- DNSMOS. Each comparison included 10 utterances. As shown in Fig. 4, LR-A VSE achieved a 96.7% preference rate over the Pretrained Baseline. When compared with RL-DNSMOS, LR- A VSE still obtained a 67.6% preference rate. Based on the subjecti ve ev aluation results, the effecti veness of the proposed method is further validated. Through the LLM- based interpretable reward mechanism, the frame work not only improv es speech quality performance but also provides a more interpretable foundation for the model optimization process. 4.3. Interpr etable Reward Analysis Figure 3 presents the natural language descriptions generated by SALMONN for noisy speech and enhanced speech, respec- tiv ely . For the noisy speech, SALMONN described it as “dis- torted and muffled, ” “difficult to understand, ” and containing “a lot of background noise. ” After enhancement using our proposed method, the speech is described as “clear and easy to understand” and “well-organized. ” Although “some back- ground noises” are still mentioned, it is o verall characterized as a “good speech sample. ” The corresponding re ward increases by ∆ +1.79, while PESQ improves by ∆ +0.38 and STOI by ∆ +0.02. These results show a consistent improvement trend across the three metrics—Re ward, PESQ, and ST OI—thereby v alidat- ing the core hypothesis of this study: the rew ard deriv ed from LLM-generated natural language descriptions exhibits a posi- tiv e correlation with conv entional objective metrics. Compared to traditional approaches that rely solely on a single numer- ical score to assess speech quality , the descriptions provided by SALMONN offer more detailed insights into the aspects of improv ement, such as enhanced intelligibility , reduced distor- tion, and decreased background noise. Such feedback allows for a clearer understanding of how the model improves speech quality , making the ev aluation process more intuitive and inter- pretable. 5. Discussion Our LLM-based re ward demonstrates clear adv antages in inter - pretability; howe ver , the current choice of LLM remains rela- tiv ely limited. When generating natural language descriptions, the produced sentences tend to follow fixed patterns—for ex- ample, repeating phrases such as “The quality of this speech sample is poor/good” or “The audio is distorted and muffled. ” These repetitiv e descriptions impose a certain constraint on our proposed LLM-based reward, potentially making it difficult to capture subtle differences in speech quality . Future research directions may include: (1) adopting more advanced LLMs as the reward model, as models with stronger capabilities can generate richer vocab ulary and more nuanced natural language descriptions of speech quality differences; (2) more careful prompt engineering during training—for instance, using more detailed prompts such as “Please ev aluate the speech in terms of clarity , noise lev el, timbral naturalness, and loudness stability” to guide the model tow ard producing more structured and fine-grained descriptions. 6. Conclusion This paper proposes LR-A VSE, which, to the best of our kno wl- edge, is the first framework that lev erages reinforcement learn- ing from LLM feedback for A VSE. The proposed LLM-based interpretable reward achie ves notable improvements over the supervised-trained baseline and DNSMOS-based RL A VSE systems across PESQ, STOI, neural speech quality assessment metrics, and subjective listening tests. Furthermore, unlike con ventional black-box training paradigms that rely solely on single numerical values, the proposed method provides inter- pretability . Even with current limitations of LLMs, the natural language form of the LLM-based interpretable re ward can ef- fectiv ely guide the model tow ard continuous improvement. Fu- ture work will explore the use of more adv anced LLMs, im- prov ed prompt design strategies, and the extension of the pro- posed frame work to a broader range of speech processing tasks. 7. Generative AI Use Disclosure Generativ e AI was used only for editing and polishing this manuscript. 8. References [1] A. Ephrat, I. Mosseri, O. Lang, T . Dekel, K. W ilson, A. Hassidim, W . T . Freeman, and M. Rubinstein, “Looking to listen at the cock- tail party: A speaker-independent audio-visual model for speech separation, ” arXiv preprint , 2018. [2] A. Gabbay , A. Shamir, and S. Peleg, “V isual speech enhance- ment, ” arXiv preprint , 2017. [3] M. Gogate, K. Dashtipour, A. Adeel, and A. Hussain, “Cochleanet: A robust language-independent audio-visual model for real-time speech enhancement, ” Information Fusion , vol. 63, pp. 273–285, 2020. [4] D. Michelsanti, Z.-H. T an, S.-X. Zhang, Y . Xu, M. Y u, D. Y u, and J. Jensen, “ An overvie w of deep-learning-based audio-visual speech enhancement and separation, ” IEEE/A CM T ransactions on Audio, Speech, and Language Pr ocessing , vol. 29, pp. 1368–1396, 2021. [5] J.-C. Hou, S.-S. W ang, Y .-H. Lai, Y . Tsao, H.-W . Chang, and H.-M. W ang, “ Audio-visual speech enhancement using multi- modal deep conv olutional neural networks, ” IEEE Tr ansactions on Emerging T opics in Computational Intelligence , vol. 2, no. 2, pp. 117–128, 2018. [6] M. Sade ghi, S. Leglai ve, X. Alameda-Pineda, L. Girin, and R. Ho- raud, “ Audio-visual speech enhancement using conditional varia- tional auto-encoders, ” IEEE/A CM T ransactions on Audio, Speech, and Language Processing , v ol. 28, pp. 1788–1800, 2020. [7] V . A. Kalkhorani, C. Y u, A. Kumar , K. T an, B. Xu, and D. W ang, “ A v-crossnet: an audiovisual complex spectral mapping network for speech separation by leveraging narrow-and cross-band mod- eling, ” IEEE Journal of Selected T opics in Signal Pr ocessing , 2025. [8] N. Saleem, A. Hussain, K. Dashtipour , E. Sheikh, A. Sheikh, T . Arslan, and A. Hussain, “V iseme-gated multilayer cross- attentional feature fusion for cognitiv ely-inspired multimodal speech enhancement, ” IEEE T ransactions on Audio, Speech and Language Processing , v ol. 34, pp. 469–481, 2025. [9] J. Le Roux, S. Wisdom, H. Erdogan, and J. R. Hershey , “SDR– half-baked or well done?” in ICASSP 2019-2019 IEEE Interna- tional Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2019, pp. 626–630. [10] S.-W . Fu, C.-F . Liao, Y . Tsao, and S.-D. Lin, “MetricGAN: Gen- erativ e adversarial networks based black-box metric scores opti- mization for speech enhancement, ” in International Confer ence on Machine Learning . PmLR, 2019, pp. 2031–2041. [11] S.-W . Fu, C. Y u, T .-A. Hsieh, P . Plantinga, M. Ravanelli, X. Lu, and Y . Tsao, “MetricGAN+: An improved version of metric- gan for speech enhancement, ” arXiv preprint , 2021. [12] A. W . Rix, J. G. Beerends, M. P . Hollier, and A. P . Hekstra, “Per- ceptual ev aluation of speech quality (PESQ)-a new method for speech quality assessment of telephone networks and codecs, ” in 2001 IEEE international confer ence on acoustics, speech, and signal pr ocessing . Pr oceedings (Cat. No. 01CH37221) , vol. 2. IEEE, 2001, pp. 749–752. [13] R. Cao, S. Abdulatif, and B. Y ang, “CMGAN: Conformer- based metric gan for speech enhancement, ” arXiv preprint arXiv:2203.15149 , 2022. [14] C. H. T aal, R. C. Hendriks, R. Heusdens, and J. Jensen, “ A short- time objectiv e intelligibility measure for time-frequency weighted noisy speech, ” in 2010 IEEE international conference on acous- tics, speech and signal processing . IEEE, 2010, pp. 4214–4217. [15] T . Brown, B. Mann, N. Ryder , M. Subbiah, J. D. Kaplan, P . Dhari- wal, A. Neelakantan, P . Shyam, G. Sastry , A. Askell et al. , “Lan- guage models are few-shot learners, ” Advances in neural informa- tion pr ocessing systems , vol. 33, pp. 1877–1901, 2020. [16] R. E. Zezario, S. M. Siniscalchi, H.-M. W ang, and Y . Tsao, “ A study on zero-shot non-intrusive speech assessment using large language models, ” in ICASSP 2025-2025 IEEE Interna- tional Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2025, pp. 1–5. [17] C. Chen, Y . Hu, S. W ang, H. W ang, Z. Chen, C. Zhang, C.- H. H. Y ang, and E. S. Chng, “ Audio large language models can be descriptive speech quality ev aluators, ” arXiv pr eprint arXiv:2501.17202 , 2025. [18] S. W ang, W . Y u, X. Chen, X. Tian, J. Zhang, L. Lu, Y . Tsao, J. Y a- magishi, Y . W ang, and C. Zhang, “Qualispeech: A speech quality assessment dataset with natural language reasoning and descrip- tions, ” in Pr oceedings of the 63rd Annual Meeting of the Asso- ciation for Computational Linguistics (V olume 1: Long P apers) , 2025, pp. 23 588–23 609. [19] Y . K oizumi, K. Niwa, Y . Hioka, K. K obayashi, and Y . Haneda, “Dnn-based source enhancement self-optimized by reinforcement learning using sound quality measurements, ” in 2017 IEEE Inter- national Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2017, pp. 81–85. [20] Y .-L. Shen, C.-Y . Huang, S.-S. W ang, Y . Tsao, H.-M. W ang, and T .-S. Chi, “Reinforcement learning based speech enhancement for robust speech recognition, ” in ICASSP 2019-2019 IEEE Interna- tional Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2019, pp. 6750–6754. [21] G. Mittag, B. Naderi, A. Chehadi, and S. M ¨ oller , “NISQA: A deep cnn-self-attention model for multidimensional speech quality prediction with cro wdsourced datasets, ” arXiv preprint arXiv:2104.09494 , 2021. [22] A. Kumar , A. Perrault, and D. S. W illiamson, “Using rlhf to align speech enhancement approaches to mean-opinion quality scores, ” in ICASSP 2025-2025 IEEE International Conference on Acous- tics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2025, pp. 1–5. [23] H. Li, N. Hou, Y . Hu, J. Y ao, S. M. Siniscalchi, and E. S. Chng, “ Aligning generative speech enhancement with human preferences via direct preference optimization, ” arXiv preprint arXiv:2507.09929 , 2025. [24] CogMhear, “ A vse challenge baseline model, ” https://github.com/ cogmhear/avse challenge, 2024, accessed: 2026-02-19. [25] Y . Luo and N. Mesgarani, “Conv-tasnet: Surpassing ideal time– frequency magnitude masking for speech separation, ” IEEE/ACM transactions on audio, speech, and language processing , vol. 27, no. 8, pp. 1256–1266, 2019. [26] J. Schulman, F . W olski, P . Dhariwal, A. Radford, and O. Klimov , “Proximal policy optimization algorithms, ” arXiv pr eprint arXiv:1707.06347 , 2017. [27] L. Ouyang, J. Wu, X. Jiang, D. Almeida, C. W ainwright, P . Mishkin, C. Zhang, S. Agarwal, K. Slama, A. Ray et al. , “T rain- ing language models to follo w instructions with human feedback, ” Advances in neural information pr ocessing systems , vol. 35, pp. 27 730–27 744, 2022. [28] C. K. Reddy , V . Gopal, and R. Cutler , “DNSMOS: A non-intrusi ve perceptual objective speech quality metric to ev aluate noise sup- pressors, ” in ICASSP 2021-2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) . IEEE, 2021, pp. 6493–6497. [29] ——, “DNSMOS p. 835: A non-intrusiv e perceptual objectiv e speech quality metric to ev aluate noise suppressors, ” in ICASSP 2022-2022 IEEE international confer ence on acoustics, speech and signal processing (ICASSP) . IEEE, 2022, pp. 886–890. [30] T . Afouras, J. S. Chung, and A. Zisserman, “Lrs3-ted: a large-scale dataset for visual speech recognition, ” arXiv preprint arXiv:1809.00496 , 2018. [31] Clarity Challenge, “Clarity enhancement challenge 1 (CEC1), ” https://github .com/claritychallenge/clarity/tree/main/recipes/ cec1, 2021, accessed: 2024. [32] Microsoft, “Deep noise suppression (DNS) challenge, ” https:// github .com/microsoft/DNS- Challenge, 2020, accessed: 2024. [33] H. Bitteur, J. Salamon, E. J. Humphrey , and J. P . Bello, “Med- leyDB: A multitrack dataset for annotation-intensi ve MIR re- search, ” https://medle ydb .weebly .com/, 2014, accessed: 2024. [34] C. T ang, W . Y u, G. Sun, X. Chen, T . T an, W . Li, L. Lu, Z. Ma, and C. Zhang, “Salmonn: T o wards generic hearing abilities for large language models, ” arXiv preprint , 2023. [35] Y . Peirsman, “BER T base multilingual un- cased sentiment, ” https://huggingface.co/nlpto wn/ bert- base- multilingual- uncased- sentiment, 2020, accessed: 2024. [36] J. Devlin, M.-W . Chang, K. Lee, and K. T outanov a, “Bert: Pre- training of deep bidirectional transformers for language under- standing, ” in Pr oceedings of the 2019 confer ence of the North American chapter of the association for computational linguis- tics: human language technologies, volume 1 (long and short pa- pers) , 2019, pp. 4171–4186. [37] S.-W . Fu, K.-H. Hung, Y . Tsao, and Y .-C. F . W ang, “Self- supervised speech quality estimation and enhancement using only clean speech, ” arXiv preprint , 2024. [38] J. Shi, H. jin Shim, J. T ian, S. Arora, H. W u, D. Petermann, J. Q. Y ip, Y . Zhang, Y . T ang, W . Zhang, D. S. Alharthi, Y . Huang, K. Saito, J. Han, Y . Zhao, C. Donahue, and S. W atanabe, “VERSA: A versatile ev aluation toolkit for speech, audio, and music, ” in 2025 Annual Conference of the North American Chapter of the Association for Computational Linguistics – System Demonstration T rac k , 2025. [Online]. A vailable: https://openrevie w .net/forum?id=zU0hmbnyQm [39] J. Shi, J. Tian, Y . W u, J.-W . Jung, J. Q. Yip, Y . Masuyama, W . Chen, Y . W u, Y . T ang, M. Baali, D. Alharthi, D. Zhang, R. Deng, T . Sri vasta va, H. W u, A. Liu, B. Raj, Q. Jin, R. Song, and S. W atanabe, “Espnet-codec: Comprehensive training and e v alua- tion of neural codecs for audio, music, and speech, ” in 2024 IEEE Spoken Language T echnology W orkshop (SLT) , 2024, pp. 562– 569. [40] T . Saeki, S. Maiti, S. T akamichi, S. W atanabe, and H. Saruwatari, “Speechbertscore: Reference-a ware automatic ev aluation of speech generation leveraging nlp evaluation metrics, ” arXiv pr eprint arXiv:2401.16812 , 2024.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment