OrigamiBench: An Interactive Environment to Synthesize Flat-Foldable Origamis

Building AI systems that can plan, act, and create in the physical world requires more than pattern recognition. Such systems must understand the causal mechanisms and constraints governing physical processes in order to guide sequential decisions. T…

Authors: Naaisha Agarwal, Yihan Wu, Yichang Jian

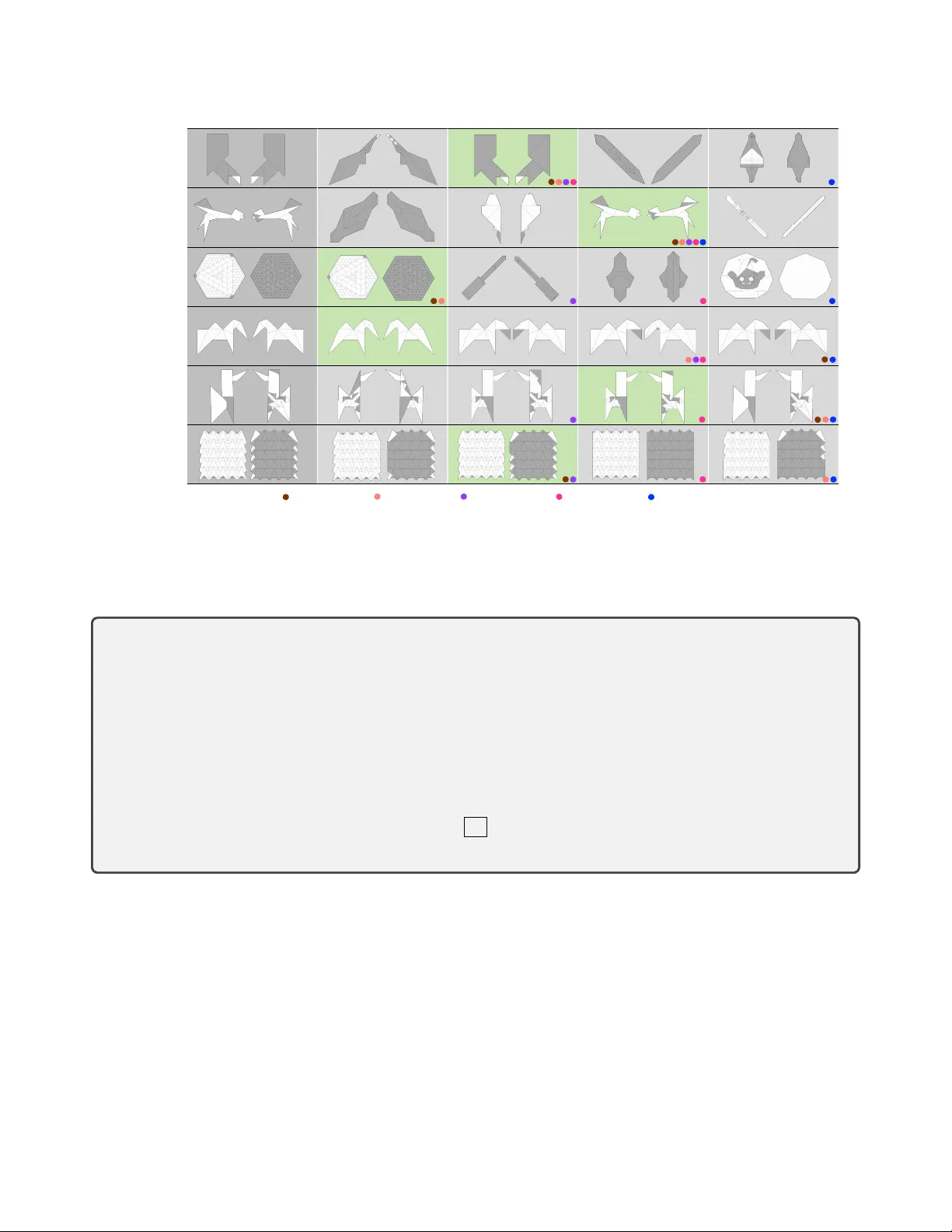

Naaisha Agarwal*
Independent Researcher
naaishaagarwal@gmail.com

Yihan Wu*, Yichang Jian*, Yifei Peng, Yao-Xiang Ding
State Key Lab of CAD&CG, Zhejiang University
pengyf@zju.edu.cn, {mrwuyh0327,mtdickens1998,dingyx.gm}@gmail.com

Nishad Mansoor
Computer Science, Northeastern University
mansoor.n@northeastern.edu

Yikuan Hu, Mohan Li, Wang-Zhou Dai
National Key Laboratory for Novel Software Technology, Nanjing University
{huyk,limh,daiwz}@lamda.nju.edu.cn

Emanuele Sansone
CSAIL / ESAT, MIT / KU Leuven
esansone@mit.edu

* Shared first author

Abstract

Building AI systems that can plan, act, and create in the physical world requires more than pattern recognition. Such systems must understand the causal mechanisms and constraints governing physical processes in order to guide sequential decisions. This capability relies on internal representations, analogous to an internal language model, that relate observations, actions, and resulting environmental changes. However, many existing benchmarks treat visual perception and programmatic reasoning as separate problems, focusing either on visual recognition or on symbolic tasks. The domain of origami provides a natural testbed that integrates these modalities. Constructing shapes through folding operations requires visual perception, reasoning about geometric and physical constraints, and sequential planning, while remaining sufficiently structured for systematic evaluation. We introduce OrigamiBench, an interactive benchmark in which models iteratively propose folds and receive feedback on physical validity and similarity to a target configuration. Experiments with modern vision–language models show that scaling model size alone does not reliably produce causal reasoning about physical transformations.
Models fail to generate coherent multi-step folding strategies, suggesting that visual and language representations remain weakly integrated.

1. Introduction

Building AI systems that can reliably act in the physical world requires more than pattern recognition. Models must develop a causal understanding of physical processes while making sequential decisions under geometric and physical constraints. Evaluating such abilities therefore requires benchmarks that jointly test visual perception and structured reasoning about physical transformations.

Existing benchmarks typically evaluate perception or reasoning in isolation. Visual question answering benchmarks [10, 18] focus on visual understanding but often lack structured reasoning constraints, while symbolic benchmarks emphasize programmatic reasoning with little perceptual grounding [5, 6, 14, 19, 21]. More recent work explores hybrid benchmarks in physics-based simulation environments [3, 15]. However, designing such benchmarks remains challenging due to the tension between achieving realistic physical environments and maintaining the structured representations needed for systematic evaluation and symbolic supervision.

Origami synthesis provides a domain that naturally captures structured physical transformations grounded in visual perception. In origami, a flat sheet of paper is transformed into a target 2D shape through a sequence of folds constrained by geometric validity and foldability [1]. Despite relying on a single primitive action, the fold, origami can generate highly complex structures, making it a compact domain for reasoning about geometric transformations. The folding process also reflects recursive composition, a key concept in programming and formal reasoning [8], while the open-ended design space supports the evaluation of generalization and creative problem solving.
To leverage these properties, we introduce OrigamiBench, an interactive benchmark for evaluating whether AI models can reason about geometric transformations while synthesizing shapes through folding operations. In OrigamiBench, models iteratively propose folds and receive feedback on physical validity and similarity to a target shape, requiring the integration of perception, constraint reasoning, and multi-step planning. Experiments with modern vision–language models reveal three key findings: scaling model size alone does not reliably produce causal reasoning about transformations, models struggle to generate coherent multi-step folding plans, and visual and language representations remain weakly integrated.

2. OrigamiBench

2.1. Data Organization

The origami dataset comprises 366 distinct origami designs collected from the publicly available online resource associated with the "Flat-Folder" project [2]. Each design is encoded in the .fold file format [1, 7], a standardized data structure for representing origami crease patterns. The .fold format captures four components of an origami model: vertex coordinates (vertices_coords), edge connectivity (edges_vertices), fold type assignments (edges_assignment), and face definitions (faces_vertices). For visual inspection, we developed a rendering pipeline that converts each .fold file into SVG and PNG image representations of the corresponding folded configurations.

Each sample in the dataset is organized along two dimensions: semantic category and complexity level. The semantic category captures the representational type of the origami model, with classes including roles, animals, plants, geometry, and so on. The complexity level (Easy, Medium, or Hard) is determined by the structural complexity of the crease pattern, primarily based on the counts of vertices and crease lines.
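To make the vertex and crease counts mentioned above concrete, the following is a minimal sketch of how they could be read from a .fold document. It is not the benchmark's own loader: the function name `summarize_fold` and the example crease pattern are hypothetical, and the sketch assumes only that a .fold file is JSON containing the four fields listed above, with `M`, `V`, and `B` used as edge assignments for mountain, valley, and boundary edges.

```python
import json
from collections import Counter


def summarize_fold(fold_text: str) -> dict:
    """Count vertices, edges, faces, and creases by assignment in a FOLD document.

    Hypothetical helper for illustration; missing fields are treated as empty.
    """
    data = json.loads(fold_text)
    counts = Counter(data.get("edges_assignment", []))
    return {
        "n_vertices": len(data.get("vertices_coords", [])),
        "n_edges": len(data.get("edges_vertices", [])),
        "n_faces": len(data.get("faces_vertices", [])),
        "n_mountain": counts.get("M", 0),
        "n_valley": counts.get("V", 0),
        "n_boundary": counts.get("B", 0),
    }


# Hypothetical example: a unit square with one valley crease along a diagonal.
EXAMPLE_FOLD = json.dumps({
    "vertices_coords": [[0, 0], [1, 0], [1, 1], [0, 1]],
    "edges_vertices": [[0, 1], [1, 2], [2, 3], [3, 0], [0, 2]],
    "edges_assignment": ["B", "B", "B", "B", "V"],
    "faces_vertices": [[0, 1, 2], [0, 2, 3]],
})

summary = summarize_fold(EXAMPLE_FOLD)
print(summary)
```

In this sketch the crease count is the number of edges assigned `M` or `V`; boundary edges are counted separately because they belong to the sheet outline rather than to the folding pattern.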
This two-dimensional organization allows us to evaluate model performance across different semantic domains and complexity levels. The full taxonomy of these semantic and complexity classes is detailed in Appendix B.

2.2. Environment

We introduce an interactive environment to evaluate vision–language models (VLMs) on geometric reasoning and sequential planning through the task of origami design. The agent must incrementally fold a blank sheet into a target shape, capturing the physical constraints of real-world origami.

Environment Workflow. The environment operates as a closed-loop system. Each episode starts from a blank paper and a target origami. In the loop, each interaction follows a strict sequence: the environment presents a multimodal input; the agent responds with a structured command; and the environment validates and executes it, subsequently updating its state to produce the next input.

State, Observation and Action. The environment maintains a precise internal state, implemented as a CreasePattern object, which is the complete geometric representation of the task and can be serialized to a .fold file. At each step, the agent receives a structured observation based on this state. In practice, this is delivered as a multimodal prompt that includes few-shot examples followed by the current task context: three visual inputs as PNG images (the target folded shape, the current folded state, and the 2D crease pattern) along with binary feedback on the feasibility of the previous action. The detailed prompt design can be found in the Supplementary. To update the state, the agent must output a single, structured action. This action is a JSON object that precisely defines a new crease to be added:

A_t = { action: "add_crease", edge_vertices: [v_i, v_j], assignment: m }

where v_i and v_j are vertex indices and m ∈ {M, V} denotes a Mountain or Valley fold.

Action Validation and Execution.
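A structural check on the action object defined above might look like the following sketch. It is an illustration under stated assumptions, not the benchmark's validator: the function name `validate_action`, the error messages, and the requirement that both indices refer to existing, distinct vertices are hypothetical additions. Geometric feasibility is not covered here, since that is delegated to the Flat-Folder solver.

```python
import json

# Assignments allowed by the action definition: Mountain or Valley.
VALID_ASSIGNMENTS = {"M", "V"}


def validate_action(raw: str, n_vertices: int) -> tuple[bool, str]:
    """Check the structure of an add_crease action (hypothetical helper).

    Returns (is_valid, message). Only the JSON structure is checked.
    """
    try:
        action = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"
    if not isinstance(action, dict):
        return False, "action must be a JSON object"
    if action.get("action") != "add_crease":
        return False, "field 'action' must be 'add_crease'"

    edge = action.get("edge_vertices")
    if not (isinstance(edge, list) and len(edge) == 2):
        return False, "field 'edge_vertices' must list two vertex indices"
    for v in edge:
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(v, int) or isinstance(v, bool):
            return False, "vertex indices must be integers"
        if not 0 <= v < n_vertices:
            return False, "vertex index out of range"
    if edge[0] == edge[1]:
        return False, "crease endpoints must differ"

    assignment = action.get("assignment")
    if not isinstance(assignment, str) or assignment not in VALID_ASSIGNMENTS:
        return False, "field 'assignment' must be 'M' or 'V'"
    return True, "ok"


ok_result = validate_action(
    '{"action": "add_crease", "edge_vertices": [0, 2], "assignment": "V"}', 4
)
bad_result = validate_action(
    '{"action": "add_crease", "edge_vertices": [0, 9], "assignment": "V"}', 4
)
print(ok_result, bad_result)
```

Returning a message alongside the boolean is one possible design choice; the text specifies only binary feasibility feedback to the agent.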
Upon receiving an action, the environment performs a two-stage validation and, if the action is valid, updates the state and renders new observations. First, the action's JSON structure is validated against the required schema. Next, geometric feasibility is validated by the Flat-Folder solver, which checks compliance with Maekawa's and Kawasaki's theorems and searches for valid folded states. The action is valid only if the solver discovers at least one feasible solution. For a valid action, the internal CreasePattern state is updated, and then the rendering pipeline generates the new Current Folded State and Current Crease Pattern images.¹

¹ The action validator and the execution engine are built on Flat-Folder from https://github.com/origamimagiro/flat-folder.

[Figure 1 panels. Data: crease patterns and rendered origami at Easy, Medium, and Hard complexity. Environment: Current State (origami, crease), VLM Output, e.g. { "action": "add_creases", "creases": [ { "p1": [0, 2.5], "p2": [2.5, 0], "assignment": "V" }, { "p1": [7.5, 10], "p2": [10, 7.5], "assignment": "V" } ] }, Action Validator, Execution Engine, Rendering, Next State (origami, crease), Target State (origami, hidden crease), Metrics. Tasks: Task 1, One-Step Multiple Choice Eval ("Which candidate is obtained by performing exactly one additional fold on the 'Reference' origami?", Reference and candidates A to D); Task 2, Full-Step Interactive Eval (Target, Steps 1 to 5).]

Figure 1. Visual summary of OrigamiBench. In the top-left corner (data), examples of crease patterns (.fold) from the animal class are shown together with their corresponding rendered origami images (PNG), ordered by increasing complexity. In the top-right (environment), we illustrate a single-step transition in the execution environment. The state consists of a crease pattern, where blue and red lines denote mountain and valley folds, respectively, along with its rendered origami.
After receiving the output from the VLM, the execution engine performs a foldability check for the given action; if successful, it generates a new state. The VLM observes as input the initial prompt, the current environment state, and the target origami. Once the simulation is complete, the final state is compared against the target state using the proposed metrics, also leveraging the hidden target crease pattern. Finally, at the bottom (tasks), the two evaluation tasks are shown from the model's perspective, highlighting the inputs and the corresponding desired outputs.

2.3. Evaluation Tasks & Metrics

OrigamiBench is organized around two tasks: a one-step evaluation and a full-step evaluation. The first task assesses a model's ability to (i) perceive a folded origami image, (ii) understand the geometric transformation induced by a single folding operation, and (iii) predict the outcome of applying that fold to the original origami state. By focusing on the effect of a single fold, this task evaluates how well the model captures and manipulates the geometric structure of origami, an essential skill for supporting more complex reasoning and planning tasks. We formulate this task as a multiple-choice classification problem, referred to as the one-step multiple-choice evaluation. Specifically, the model is given a Reference origami state along with four candidate states, and must identify which candidate can be obtained by applying exactly one additional fold to the reference state. The distractors include both semantically different origami configurations and states that are more than one fold away from the reference, thereby requiring the model to reason about folding operations rather than relying on superficial visual similarity.

To disentangle associative perceptual matching from causal understanding of folding transitions, we evaluate this one-step task in two variants.
In the Associative setting, distractors are sampled from unrelated origamis, making the task closer to visual similarity discrimination. In the Causal setting, distractors are drawn from the same foldable sequence as the reference, so candidates can be visually similar and differ only by subtle but causally critical fold-induced changes. An example of this task is shown in Figure 1. The model's response is validated through a straightforward comparison with the correct candidate, where the accuracy is calculated as the percentage of correct selections out of 225 trials.

The second task, referred to as full-step interactive evaluation, is designed to assess the planning capabilities of VLMs. In this setting, models interact with the environment to synthesize target origami shapes starting from a blank crease state, as illustrated in Figure 1. Performance is evaluated using three metrics: (i) Query Efficiency (QE), defined as the percentage of fold steps that contribute to the final origami; (ii) Geometric Similarity (GS), computed using Intersection over Union to quantify the geometric overlap between the model-generated origami and the target shape; and (iii) Semantic Similarity (SS), measured as the cosine similarity between the vector embeddings, obtained from a fine-tuned CLIP model, of the model's folded origami and the target. Further details are available in Appendix D.

Table 1. Accuracy of different VLMs on the one-step multiple-choice evaluation task. Accuracy is defined as the proportion of correctly solved tasks out of the total number of instances. The Associative version contains 393 instances, where distractors are generated by randomly selecting origamis other than the reference example. The Causal version contains 352 instances and incorporates distractors drawn from the same foldable sequence as the reference origami, increasing the task difficulty.

| Model | Associative | Causal |
|---|---|---|
| Random Guessing | 25.00% | 25.00% |
| Qwen3-VL-32B | 96.18% | 43.37% |
| Qwen3-VL-8B | 95.67% | 38.92% |
| Qwen2.5-VL-32B | 51.65% | 25.85% |
| Qwen2.5-VL-7B | 51.40% | 30.97% |
| InternVL 2.5 8B | 53.44% | 25.57% |
| MiniCPM-V 2.6 | 30.28% | 27.84% |

3. Experimental Results

Both evaluations examine the ability of VLMs to understand and reason about origami structures, but they adopt different task formulations. One evaluates one-step multiple-choice reasoning, focusing on perception and causal understanding of folding actions, while the other emphasizes full-step interactive synthesis to assess whether models can progressively construct valid origami structures.

One-Step Multiple-Choice Evaluation: Associative Matching vs. Causal Understanding. This evaluation serves as a diagnostic probe of whether VLMs can reason about the geometric effect of a single fold, a prerequisite for the multi-step synthesis task evaluated in the interactive setting. Importantly, strong performance may arise either from associative visual–perceptual similarity or from causal reasoning about which state can be reached through exactly one additional fold. By contrasting the Associative and Causal variants, we test whether models move beyond superficial similarity and correctly infer the next-step transition. Table 1 summarizes model performance on this task, while Figure 2 in Appendix C shows representative examples.

On the Associative version of the task, where models only need to match visual patterns between the input and candidate outputs, the Qwen3-VL models achieve remarkably high performance. Qwen3-VL-32B attains the highest accuracy at 96.18%, closely followed by Qwen3-VL-8B at 95.67%.
In contrast, earlier or smaller vision-language models perform substantially worse: InternVL 2.5 8B and Qwen2.5-VL-32B obtain 53.44% and 51.65%, respectively, while Qwen2.5-VL-7B achieves 51.40%. MiniCPM-V 2.6 performs the worst among the evaluated models with 30.28%, though it still slightly surpasses random guessing (25%). These results suggest that recent VLMs, particularly the Qwen3-VL series, are highly capable of capturing visual associations and recognizing local geometric correspondences between folding states.

Performance drops substantially in the Causal version of the task, where models must infer the underlying folding operation rather than rely on surface-level similarity. In this setting, Qwen3-VL-32B still achieves the best performance at 43.37%, followed by Qwen3-VL-8B at 38.92%. The remaining models cluster much closer to random chance: Qwen2.5-VL-7B reaches 30.97%, MiniCPM-V 2.6 achieves 27.84%, Qwen2.5-VL-32B achieves 25.85%, and InternVL 2.5 8B obtains 25.57%, all only marginally above the 25% random baseline. The large gap between Associative and Causal performance indicates that current VLMs struggle to move beyond pattern matching toward genuine causal reasoning about geometric transformations. Interestingly, the results suggest that scaling model size alone may not be sufficient to elicit causal reasoning: for example, Qwen2.5-VL-32B (25.85%) scores below Qwen2.5-VL-7B (30.97%) on the Causal variant. One possible explanation is the weak integration between language and vision representations: symbolic concepts and rules provided in the prompt may not be effectively grounded in the visual perceptual representations used by the model.

Full-Step Interactive Evaluation: Planning for Origami Synthesis. We conduct a preliminary study to investigate whether VLMs can interact with the environment and plan sequences of actions to synthesize target origami structures. Due to time and resource constraints, we evaluate a subset of models.
Specifically, we select the smallest models that achieve performance significantly above the random baseline on the causal task, namely Qwen2.5-VL-7B and Qwen3-VL-8B. This choice is motivated by the observation that, without an understanding of causal folding mechanisms, models are unlikely to perform meaningful planning and therefore cannot solve the full synthesis problem. Results are shown in Table 2. While all models can interact with the environment and produce syntactically valid actions, as indicated by the high QE scores, they struggle to compose actions into coherent multi-step plans. In practice, this often results in the synthesis of very simple origami structures, as illustrated in Figure 4 of Appendix D.

Table 2. Performance on the full-step interactive evaluation task with mean and standard deviation for three different metrics. Additionally, time, measured in seconds per folding step, is reported.

| Model | QE (10 steps) | GS (10 steps) | SS (10 steps) | QE (25 steps) | GS (25 steps) | SS (25 steps) | Time (s/step) |
|---|---|---|---|---|---|---|---|
| Qwen3-VL-8B | 0.69 ± 0.24 | 0.03 ± 0.07 | 0.21 ± 0.28 | 0.68 ± 0.23 | 0.05 ± 0.12 | 0.23 ± 0.30 | 75 |
| Qwen2.5-VL-7B | 0.85 ± 0.02 | 0.18 ± 0.24 | 0.26 ± 0.33 | 0.87 ± 0.05 | 0.15 ± 0.22 | 0.26 ± 0.33 | 45 |

4. Future Work

Our results suggest that scaling improves performance in associative settings but remains insufficient for fine-grained, causally grounded understanding of fold transitions, indicating that training data composition and visual grounding mechanisms may play a more critical role. Building on this insight, a promising direction is to strengthen the coupling between language, perception, and geometry by introducing explicit intermediate state representations (e.g., crease graphs, vertex–edge structures, and layer ordering) and training models to jointly predict states and actions.
Another direction is to leverage the closed-loop simulator not only as an evaluation platform but also as a learning environment, enabling execution-based supervision and reinforcement or interactive learning with rewards derived from foldability and geometric validity. Finally, we plan to extend diagnostic analyses through challenge splits involving more complex crease patterns and tighter geometric constraints, and to design curricula that emphasize hard-negative discrimination within the same folding sequence to better promote causal understanding.

5. Acknowledgements

This work received funding from the European Research Council (ERC) under the Horizon Europe research and innovation programme (MSCA-GF grant agreement N° 101149800), National Natural Science Foundation of China (62206245), and Jiangsu Science Foundation Leading-edge Technology Program (BK20232003). The authors would like to thank Angel Patricio, Yifei Jin at MIT and Vincenzo Collura at the University of Luxembourg for initial discussion on the benchmark. Additionally, the authors would like to thank Armando Solar-Lezama and his lab for giving access to additional computational resources.

References

[1] Hugo A Akitaya, Jun Mitani, Yoshihiro Kanamori, and Yukio Fukui. Generating Folding Sequences from Crease Patterns of Flat-Foldable Origami. In ACM SIGGRAPH, 2013.

[2] Hugo A Akitaya, Erik D Demaine, and Jason S Ku. Computing Flat-Folded States. In International Meeting on Origami in Science, Mathematics and Education, 2024.

[3] Daniel M Bear, Elias Wang, Damian Mrowca, Felix J Binder, Hsiao-Yu Fish Tung, R T Pramod, Cameron Holdaway, Sirui Tao, Kevin Smith, Fan-Yun Sun, et al. Physion: Evaluating physical prediction from vision in humans and machines. In NeurIPS, 2021.

[4] Anoop Cherian, Kuan-Chuan Peng, Suhas Lohit, Kevin A Smith, and Joshua B Tenenbaum. Are Deep Neural Networks SMARTer than Second Graders? In CVPR, 2023.
[5] François Chollet. On the Measure of Intelligence. arXiv, 2019.

[6] François Chollet. Abstraction and Reasoning Corpus for Artificial General Intelligence (ARC-AGI), 2026.

[7] Erik D Demaine, Jason S Ku, and Robert J Lang. A New File Standard to Represent Folded Structures. In Fall Workshop Computational Geometry, 2016.

[8] Jeremy Gibbons. Origami Programming. Mathematics in Computer Science, 3(2):247–268, 2003.

[9] ITU-R. Recommendation ITU-R BT.601: Studio encoding parameters of digital television for standard 4:3 and wide-screen 16:9 aspect ratios. Technical Report BT.601, International Telecommunication Union, Geneva, Switzerland, 2011.

[10] Justin Johnson, Bharath Hariharan, Laurens Van Der Maaten, Li Fei-Fei, C Lawrence Zitnick, and Ross Girshick. CLEVR: A Diagnostic Dataset for Compositional Language and Elementary Visual Reasoning. In CVPR, 2017.

[11] Prannay Khosla, Piotr Teterwak, Chen Wang, Aaron Sarna, Yonglong Tian, Phillip Isola, Aaron Maschinot, Ce Liu, and Dilip Krishnan. Supervised Contrastive Learning. NeurIPS, 33:18661–18673, 2020.

[12] Daixian Liu, Jiayi Kuang, Yinghui Li, Yangning Li, Di Yin, Haoyu Cao, Xing Sun, Ying Shen, Hai-Tao Zheng, Liang Lin, et al. TangramPuzzle: Evaluating Multimodal Large Language Models with Compositional Spatial Reasoning. arXiv, 2026.

[13] Liang Ma, Jiajun Wen, Min Lin, Rongtao Xu, Xiwen Liang, Bingqian Lin, Jun Ma, Yongxin Wang, Ziming Wei, Haokun Lin, et al. PhyBlock: A Progressive Benchmark for Physical Understanding and Planning via 3D Block Assembly. In NeurIPS, 2025.

[14] Weili Nie, Zhiding Yu, Lei Mao, Ankit B Patel, Yuke Zhu, and Anima Anandkumar. Bongard-LOGO: A New Benchmark for Human-Level Concept Learning and Reasoning. In NeurIPS, 2020.

[15] Ava Pun, Kangle Deng, Ruixuan Liu, Deva Ramanan, Changliu Liu, and Jun-Yan Zhu. Generating Physically Stable and Buildable Brick Structures from Text. In ICCV, 2025.
[16] Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. Learning Transferable Visual Models from Natural Language Supervision. In ICML, pages 8748–8763, 2021.

[17] Kexian Tang, Junyao Gao, Yanhong Zeng, Haodong Duan, Yanan Sun, Zhening Xing, Wenran Liu, Kaifeng Lyu, and Kai Chen. LEGO-Puzzles: How Good Are MLLMs at Multi-Step Spatial Reasoning? arXiv, 2025.

[18] Rui Xu, Dakuan Lu, Zicheng Zhao, Xiaoyu Tan, Xintao Wang, Siyu Yuan, Jiangjie Chen, and Yinghui Xu. ORIGAMISPACE: Benchmarking Multimodal LLMs in Multi-Step Spatial Reasoning with Mathematical Constraints. In NeurIPS, 2025.

[19] Kaiyu Yang, Aidan Swope, Alex Gu, Rahul Chalamala, Peiyang Song, Shixing Yu, Saad Godil, Ryan J Prenger, and Animashree Anandkumar. LeanDojo: Theorem Proving with Retrieval-Augmented Language Models. In NeurIPS, 2023.

[20] Aimen Zerroug, Mohit Vaishnav, Julien Colin, Sebastian Musslick, and Thomas Serre. A Benchmark for Compositional Visual Reasoning. In NeurIPS, 2022.

[21] Chi Zhang, Feng Gao, Baoxiong Jia, Yixin Zhu, and Song-Chun Zhu. RAVEN: A Dataset for Relational and Analogical Visual Reasoning. In CVPR, 2019.

A. Related Work

Recent advances in Multimodal Large Language Models (MLLMs) have demonstrated impressive capabilities in pattern recognition and language understanding. However, evaluating an MLLM's ability to solve complex, physically-grounded problems through sequential decision-making remains a significant challenge. To systematically assess this capability, we survey existing benchmarks along two primary lines of work.

A prevalent approach to evaluating geometric and physical reasoning is to reduce it to a question-answering, classification, or pattern completion task. This paradigm simplifies the rich, continuous nature of real-world problems into discrete selections.
For example, in the specific domain of origami, ORIGAMISPACE assesses origami knowledge through multiple-choice questions and code completion [18]. In broader visual reasoning, Bongard-LOGO frames concept learning as binary classification from examples [14], and the Compositional Visual Relations (CVR) benchmark tests relational understanding through compositional visual question answering [20]. For more abstract cognitive skills, benchmarks like RAVEN [21] and ARC [5] extend this static evaluation to abstract pattern completion (in RAVEN's matrices) and rule-based output generation (for ARC's grid tasks), while CLEVR reduces compositional 3D reasoning to static visual question answering [10]. Even problems that inherently require multi-step reasoning, such as the multimodal puzzles in SMART-101 [4], the tangram assembly tasks in TangramPuzzle [12], and the LEGO construction sequences in LEGO-Puzzles [17], are evaluated based solely on the final answer. While effective for testing cognitive skills, this reduction separates the task from the core challenge of geometric manipulation, as the agent never interacts with or alters the geometric state itself.

A second line of work aims to engage more directly with physical-world constraints or generative tasks. However, these approaches often operate in an open loop: the model generates a final output (e.g., a structure, a prediction) in a single, non-interactive pass. This paradigm evaluates the correctness or quality of the end product but fails to capture the generative and decision-making process required to arrive at it in a dynamic setting. For example, while BRICKGPT generates physically stable brick assembly structures from text descriptions, its evaluation focuses on the integrity of the generated structure rather than extending to the feasibility of the step-by-step construction sequence [15].
Similarly, Physion evaluates the ability to predict physical dynamics from videos, a task of observational understanding, but does not require or test any capacity for goal-directed physical intervention to alter those outcomes [3]. While capturing aspects of physical plausibility or creative generation, this open-loop paradigm still fails to evaluate the core of interactive problem-solving: the ability to plan, execute, and adapt a sequence of actions within an environment that provides continuous feedback.

In contrast, recent benchmarks in AI evaluation increasingly emphasize interactive, closed-loop paradigms that tightly couple high-level goals with actionable sequences. For example, in mathematical theorem proving, LeanDojo frames proof construction as stepwise interaction within a symbolic environment [19]; in general intelligence assessment, ARC-AGI-3 aims to test long-horizon planning through instruction-free and game-like environments; and in embodied physical reasoning, PhyBlock introduces a progressive 3D block-assembly benchmark with an Activity-on-Vertex network for fine-grained planning diagnosis [13]. Following this interactive, closed-loop framework, we introduce OrigamiBench, a benchmark that extends this paradigm to the domain of origami. This domain presents a challenging task that demands reasoning in a continuous geometric space while following physical constraints. The task requires an agent to generate a sequence of precise and executable folding actions within the environment.

B. Dataset Organization

The OrigamiBench dataset comprises 366 entries, covering a diverse range of origami models. The data are categorized along two dimensions: semantic type and complexity level. Specifically, complexity is determined by the sum of the number of vertices and edges, with thresholds set at 300 and 800 (Easy: 0-300, Medium: 301-800, Hard: > 800).
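The thresholds above can be written as a small classification rule. The following is a minimal sketch, assuming only what the text states: the score is the number of vertices plus the number of edges, with boundaries at 300 and 800. The function and constant names are hypothetical.

```python
# Thresholds stated in the text: Easy 0-300, Medium 301-800, Hard above 800.
EASY_MAX = 300
MEDIUM_MAX = 800


def complexity_level(n_vertices: int, n_edges: int) -> str:
    """Map a crease pattern's vertex and edge counts to a complexity level."""
    total = n_vertices + n_edges
    if total <= EASY_MAX:
        return "Easy"
    if total <= MEDIUM_MAX:
        return "Medium"
    return "Hard"


# Hypothetical counts, chosen to sit on either side of each threshold.
levels = [
    complexity_level(100, 200),  # total 300
    complexity_level(100, 201),  # total 301
    complexity_level(300, 500),  # total 800
    complexity_level(300, 501),  # total 801
]
print(levels)
```

The counts would typically come from the lengths of the `vertices_coords` and `edges_vertices` arrays of the corresponding .fold file.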
Table 3 presents all classified data, including each model's name, semantic category, and complexity level. Table 4 provides a statistical overview of the dataset's composition, detailing the distribution of the entries across the semantic categories and complexity levels.

Table 3. Dataset Structure

| Name | Semantic | Complexity |
|---|---|---|
| 010 chan Beaver | animals-aquatic | Easy |
| 065 komatsu Dolphin | animals-aquatic | Easy |
| 030 tanakaoripa fish base | animals-aquatic | Medium |
| 129 komatsu Shore Crab | animals-aquatic | Medium |
| 221 winston Cardinal Tetra | animals-aquatic | Medium |
| 226 kamiya Hermit Crab | animals-aquatic | Medium |
| 278 ku Crab (base) | animals-aquatic | Medium |
| 362 nakamura Tuna | animals-aquatic | Medium |
| 020 imai Taiyaki Fish | animals-aquatic | Hard |
| 037 imai Shrimp v2 | animals-aquatic | Hard |
| 077 ku Lobster 1-8b | animals-aquatic | Hard |
| 162 imai Carp | animals-aquatic | Hard |
| 239 imai Squilla Mantis Shrimp | animals-aquatic | Hard |
| 279 ku Crab (shaped) | animals-aquatic | Hard |
| 299 brandon Japanese Spiny Lobster (jsl) | animals-aquatic | Hard |
| 300 imai Japanese Spiny Lobster v2 | animals-aquatic | Hard |
| 313 maeng Shrimp | animals-aquatic | Hard |
| 329 maeng Lobster | animals-aquatic | Hard |
| 355 nakamura Octopus | animals-aquatic | Hard |
| 016 tanakaoripa enarc-crane final | animals-bird | Easy |
| 082 traditionaloripa 4 Birdbase | animals-bird | Easy |
| 096 komatsu Horned Owl | animals-bird | Easy |
| 158 katsuta Sparrow | animals-bird | Easy |
| 163 kei Party Parrot | animals-bird | Easy |
| 236 kamiya Crane | animals-bird | Easy |
| 244 komatsuoripa Smallbird Base | animals-bird | Easy |
| 003 ku Triangle Bird | animals-bird | Medium |
| 089 traditionaloripa 9 Birdbase | animals-bird | Medium |
| 136 katsuta Owl | animals-bird | Medium |
| 159 kamiya Little Bird | animals-bird | Medium |
| 160 komatsu Little Bird | animals-bird | Medium |
| 173 imai Canabn | animals-bird | Hard |
| 331 gyosh Condor v1 | animals-bird | Hard |
| 018 komatsu Butterfly Base | animals-insects | Easy |
| 281 kamiya Long-Headed Locust | animals-insects | Easy |
| 064 tanakaoripa Butterfly Base | animals-insects | Medium |
| 147 kamiya Butterfly NS | animals-insects | Medium |
| 261 lang Post Generic Beetle Opus 696 | animals-insects | Medium |
| 339 haruka Beetle | animals-insects | Medium |
| 029 imai Swallowtail Butterfly v2 | animals-insects | Hard |
| 046 imai Prosopocoilus Inclinatus v3-1 | animals-insects | Hard |
| 053 imai Leaf Insect v2 | animals-insects | Hard |
| 085 imai Flying Grasshopper | animals-insects | Hard |
| 095 imai Mantis v2-8 | animals-insects | Hard |
| 104 imai Metallifer | animals-insects | Hard |
| 108 ryan Titan Beetle | animals-insects | Hard |
| 112 imai Cicada v1 | animals-insects | Hard |
| 119 kamiya Wasp | animals-insects | Hard |
| 120 imai Forest Scorpion | animals-insects | Hard |
| 134 table Scorpion | animals-insects | Hard |
| 142 ku Butterfly | animals-insects | Hard |
| 149 imai Chilean Stag Beetle | animals-insects | Hard |
| 175 shuki Dragonfly | animals-insects | Hard |
| 195 kamiya Butterfly TH | animals-insects | Hard |
| 198 imai Cetoniinae | animals-insects | Hard |
| 214 bodo Ant | animals-insects | Hard |
| 219 imai Copris Ochus | animals-insects | Hard |
| 234 bodo Dragonfly | animals-insects | Hard |
| 252 imai Chalcosoma Chiron | animals-insects | Hard |
| 256 bodo Ladybug | animals-insects | Hard |
| 260 imai Lucanus Maculifemoratus | animals-insects | Hard |
| 273 kamiya Cyclomantis Metalifer | animals-insects | Hard |
| 283 imai Dragonfly | animals-insects | Hard |
| 285 maeng Cyclommatus Elaphus | animals-insects | Hard |
| 291 imai Chalcosoma Caucasus | animals-insects | Hard |
| 306 maeng Weta | animals-insects | Hard |
| 310 imai Dorcus hopei binodulosus | animals-insects | Hard |
| 314 minh Butterfly | animals-insects | Hard |
| 321 maeng Dicranocephalus wallichii | animals-insects | Hard |
| 333 imai Violin Beetle | animals-insects | Hard |
| 336 maeng Mantis | animals-insects | Hard |
| 343 maeng Orchid Mantis | animals-insects | Hard |
| 350 maeng Sitophilus oryzae v2 | animals-insects | Hard |
| 045 traditionaloripa Pig | animals-mammals | Easy |
| 048 komatsu Dutch Rabbit | animals-mammals | Easy |
| 055 komatsu Sheep | animals-mammals | Easy |
| 113 komatsu Fox | animals-mammals | Easy |
| 124 xiao Horse | animals-mammals | Easy |
| 135 xiao Lazy Tiger | animals-mammals | Easy |
| 138 komatsu Cat | animals-mammals | Easy |
| 141 kei kitsune | animals-mammals | Easy |
| 144 xiao Bull | animals-mammals | Easy |
| 183 komatsu Panda | animals-mammals | Easy |
| 207 komatsu Horse | animals-mammals | Easy |
| 212 shuki Bear Cub | animals-mammals | Easy |
| 218 kei Tanuki | animals-mammals | Easy |
| 227 komatsu Rabbit | animals-mammals | Easy |
| 259 kei Father Cat | animals-mammals | Easy |
| 327 origami van Alpaca v1 | animals-mammals | Easy |
| 338 origami van Mouse | animals-mammals | Easy |
| 359 fung I Heart Cat v2 (base) | animals-mammals | Easy |
| 005 ku Kitten | animals-mammals | Medium |
| 011 ku Bull | animals-mammals | Medium |
| 034 fung Rabbit Head v4 | animals-mammals | Medium |
| 081 komatsu Lion | animals-mammals | Medium |
| 105 komatsu Rhino | animals-mammals | Medium |
| 146 katsuta Lazy Cat | animals-mammals | Medium |
| 148 komatsu Wolf | animals-mammals | Medium |
| 171 komatsu Macaque | animals-mammals | Medium |
| 196 komatsu Giraffe | animals-mammals | Medium |
| 211 luibobby Rabbit | animals-mammals | Medium |
| 217 komatsu Hippopotamus | animals-mammals | Medium |
| 225 xiao Chipmunk | animals-mammals | Medium |
| 230 fung Rabbit Head v3 | animals-mammals | Medium |
| 237 komatsu Tiger | animals-mammals | Medium |
| 243 luibobby Giraffe | animals-mammals | Medium |
| 248 komatsu Dog | animals-mammals | Medium |
| 271 xiao Raccoon | animals-mammals | Medium |
| 337 ku Rabbit | animals-mammals | Medium |
| 347 morisawa Raccoon | animals-mammals | Medium |
| 353 fukuroi Dalmatian | animals-mammals | Medium |
| 360 fung I Heart Cat v2 (shaped) | animals-mammals | Medium |
| 123 shuki Bison | animals-mammals | Hard |
| 143 shuki Asian Elephant | animals-mammals | Hard |
| 154 shuki Camel | animals-mammals | Hard |
| 204 bodo Tiger | animals-mammals | Hard |
| 004 traditional Crane | animals-others | Easy |
| 021 chan Simple Dragon | animals-others | Easy |
| 070 ku Pygmy Jerboa | animals-others | Easy |
| 073 komatsu Squirrel | animals-others | Easy |
| 083 kei Cheems Meme | animals-others | Easy |
| 235 xiao Tiny Dragon | animals-others | Easy |
| 040 komatsu Gentle Dragon | animals-others | Medium |
| 042 furutaoripa katatsumuri (snail) | animals-others | Medium |
| 101 ku Turkey 2-1 | animals-others | Medium |
| 137 kamiya Deinonychus | animals-others | Medium |
| 180 kamiya Pheonix v2-0 | animals-others | Medium |
| 289 kamiya Kentrosaurus | animals-others | Medium |
| 311 ku HJ Dragon | animals-others | Medium |
| 351 ku Three Headed Dragon | animals-others | Medium |
| 050 ku Ryu Zin Jr | animals-others | Hard |
| 057 brandon Dragon HP | animals-others | Hard |
| 059 bodo Leopard Frog | animals-others | Hard |
| 068 bodo Sarcosuchus | animals-others | Hard |
| 132 shuki Giganotosaurus v4 | animals-others | Hard |
| 139 imai Red Headed Centipede | animals-others | Hard |
| 140 imai Red Headed Centipede 40 | animals-others | Hard |
| 181 kamiya Pheonix v3-0 | animals-others | Hard |
| 182 kamiya Pheonix v3-5 | animals-others | Hard |
| 200 shuki Zoanoid Dragon v1 | animals-others | Hard |
| 201 shuki Zoanoid Dragon v2 | animals-others | Hard |
| 209 imai Whip Spider | animals-others | Hard |
| 229 imai Scorpion v2 | animals-others | Hard |
| 250 juan Velociraptor | animals-others | Hard |
| 268 imai Tarantula | animals-others | Hard |
| 277 maeng Camel Spider | animals-others | Hard |
| 332 arisawa Dragon 2017 | animals-others | Hard |
| 366 imai Vinegaroon v2 | animals-others | Hard |
| 344 ku Lizard | animals-reptiles | Medium |
| 066 brandon Rattlesnake HP | animals-reptiles | Hard |
| 240 juan Galapagos Tortoise | animals-reptiles | Hard |
| 265 kamiya Loggerhead Sea Turtle | animals-reptiles | Hard |
| 266 kamiya Turtle | animals-reptiles | Hard |
| 342 kei Rain Frog | animals-reptiles | Hard |
| 071 imai Sailor Suit | clothing | Medium |
| 013 mitanioripa Medal | decorations | Easy |
| 130 fung Christmas Tree v2 | decorations | Easy |
| 028 chan The Universal Symbol | decorations | Medium |
| 199 fung Classic Snowman v1 | decorations | Medium |
| 131 ku Christmas Tree | decorations | Hard |
| 161 tanaka star | decorations | Hard |
| 009 ku Unassigned Triangle Pleat | geometry | Easy |
| 015 ku Iso-Area Half Square | geometry | Easy |
| 043 ku Quad Overlap | geometry | Easy |
| 054 tanakaoripa Hexagon | geometry | Easy |
| 076 kei Fibonacci Vortex | geometry | Easy |
| 091 ku 4 Stripes 1 | geometry | Easy |
092 ku 4
Stripes 2 geometry Easy 093 ku 4 Stripes 3 geometry Easy 098 RSorigami Inside Out geometry Easy 125 bro wn seamless4x4 geometry Easy 292 tanaka Aperiodic Monotile 1 geometry Easy 293 bodo Aperiodic Monotile 1 geometry Easy 294 ku Aperiodic Monotile 1 geometry Easy 295 tanaka Aperiodic Monotile 2 geometry Easy 296 ku Aperiodic Monotile 2 geometry Easy 297 tanaka Aperiodic Monotile 3 geometry Easy 325 bernhayes Unassigned 45° Crossover geometry Easy 049 lang Egg13-3 T essellation geometry Medium 086 miwu 5x5 Flippable Pixel Grid 1 geometry Medium 087 miwu 5x5 Flippable Pixel Grid 2 geometry Medium 094 ku 4 Stripes 4 geometry Medium 164 ku Pleats Exponential geometry Medium 191 fish Arro whead T essellation geometry Medium 361 lang Squaring the Circle geometry Medium 027 ku 8x8 Flippable Pixel Grid geometry Hard 031 boice Cat’ s Eye T essellation geometry Hard 033 lang Hyperbolic Limit geometry Hard 072 tanakaoripa Infinity 2 geometry Hard 145 beber Hexagonal T essellation of Dodecagons #1 geometry Hard 157 beber Square T essellation of Dodecagons #3 geometry Hard 167 fish Desert T essellation geometry Hard 169 beber Hexagonal T essellation of Dodecagons #2 geometry Hard 179 beber Rotated 3-4-6-4 geometry Hard 184 tanaka notitle geometry Hard 192 beber Dodecagonˆ2 geometry Hard 202 beber Hexagonal T essellation of Dodecagons #3 geometry Hard 213 beber fractal32 geometry Hard 222 beber Penrose Triangle #2 geometry Hard 231 beber Dodecagonˆ3 geometry Hard 242 beber om geometry Hard 254 beber Menger Sponge Level 2 I geometry Hard 255 beber Menger Sponge Level 2 II geometry Hard 262 beber Square T essellation of Dodecagons #2 geometry Hard 269 beber Penrose+ geometry Hard 276 beber Dodecagonˆ3-4 geometry Hard 284 beber Sierpinski-Penrose Triangle #2 geometry Hard 364 luibobby Cross with hydrangea tessellation geometry Hard 197 kei Pray gestures Easy 290 kei Hand gestures Medium 002 traditional Kabuto item Easy 052 traditionaloripa House item Easy 067 traditionaloripa 
damashi bune item Easy 187 fung Heart in a House (Flat) v1 item Easy 341 jared Bitcoin item Easy 017 jorge T ato Coaster item Medium Continued on next page 11 T able 3 – continued from previous page Name Semantic Complexity 106 kei 7 Segment Display (of f) item Medium 107 kei 7 Segment Display (on) item Medium 330 ku F16 item Medium 346 origami van BattleT ank item Medium 349 kei Umbrella 2022 item Medium 177 chan Mortal Kombat Emblem item Hard 232 luibobby MLG Glasses item Hard 298 kei TIE Interceptor (Star W ars) item Hard 307 kei Arc Trooper (Star W ars) item Hard 012 ku 4x4 Grid Unassigned others Easy 026 furutaoripa orihazuru others Easy 035 tachioripa kamehameha others Easy 038 traditionaloripa Y akko others Easy 056 tachioripa Hoodman others Easy 062 furutaoripa houou others Easy 069 kei Disappearing Contrails others Easy 074 miuraoripa Miura-ori others Easy 088 komatsu Papillion others Easy 117 tanaka Dice 15 others Easy 122 kei jitome others Easy 156 xiao Makima others Easy 174 kei Steps others Easy 186 kei Sus others Easy 223 luibobby Discord others Easy 282 kei Circus others Easy 301 boxhard Assigned Crossover others Easy 302 boxhard Assigned Clause others Easy 303 boxhard Assigned Split others Easy 316 boxhard Unassigned Clause others Easy 317 boxhard Unassigned Crossover others Easy 318 boxhard Unassigned Split others Easy 348 nakamura Cheat Dice others Easy 041 lang 5-Fold 2-Layer W eave others Medium 047 tanakaoripa Infinity others Medium 060 ku 1-Piece Star Atarbus others Medium 061 kei koishi others Medium 115 tanaka Dice 13 others Medium 116 tanaka Dice 8 others Medium 133 txst himeno others Medium 205 xiao Morg an others Medium 228 kei seigaiha others Medium 253 luibobby Reddit others Medium 267 kei Plat others Medium 272 juhobodo Poser others Medium 324 stebleton Hammersley’ s See Saw others Medium 328 kei Useless Resistance others Medium 358 ku 13 Stripes others Medium 019 lang Rings8 others Hard 022 boice Sword Plate Armor 40 others Hard 024 
komatsu Girl-bp-01 others Hard 025 lang Golden W eave others Hard 039 boice Ara Ara others Hard 063 imai Japanese Drone v2 others Hard 100 kamiya Ryujin 2-1 others Hard Continued on next page 12 T able 3 – continued from previous page Name Semantic Complexity 172 tanaka irt others Hard 188 boicehanke Square T wist Samurai others Hard 233 tanaka equalwidth others Hard 270 ku HJ Rex others Hard 275 imai Sushi others Hard 304 boxhard Assigned Solvable others Hard 308 dequin Igris others Hard 319 boxhard Unassigned Solvable others Hard 354 lang rings4 others Hard 121 komatsuoripa rafflesia plants Easy 078 imai Maple Leaf v1 plants Medium 079 imai Maple Leaf v2 plants Medium 080 imai Maple Leaf v3 plants Medium 287 ku Four Leaf Clover plants Medium 334 jared Green Leaf plants Medium 103 koh Footballer roles Easy 114 kei Kirby roles Easy 118 katsuta Fox W edding Cub roles Easy 128 kamiya Sleipnir roles Easy 216 kamiya Minotaur roles Easy 315 kei Marnie (Pokemon) roles Easy 014 ku HJ Girl roles Medium 090 kei Man roles Medium 099 katsuta Fox W edding Bride roles Medium 109 yty Pochita roles Medium 110 katsuta Fox W edding Groom roles Medium 111 kamiya W izard roles Medium 127 katsuta Unicorn roles Medium 150 kei Sailor Moon roles Medium 168 xiao Mermaid roles Medium 170 kamiya Bahamut roles Medium 194 xiao DaJi roles Medium 206 kamiya Di vine Boar roles Medium 210 fung Angel v1 roles Medium 215 xiao Miku 2021 roles Medium 220 fung Portrait of an Origamist v1 roles Medium 224 bodo Buf f doge roles Medium 246 xiao Surfing Girl roles Medium 249 kei Poyoyo roles Medium 258 kamiya Cait Sith roles Medium 263 ku Angel roles Medium 264 xiao Y uMeiRen roles Medium 280 xiao L ying Girl roles Medium 288 xiao Skating Girl roles Medium 312 kamiya Kzinssie T ype 2 roles Medium 320 kamiya Unicorn roles Medium 323 chan M.O.D.O.K. 
roles Medium 356 kei Dodoco (Genshin Impact) roles Medium 365 ku Nazgul v8.1 roles Medium 032 komatsuoripa Minotaur roles Hard 044 kei Angel roles Hard 075 bodo Dwarf roles Hard 126 yuchao Bing Dwen Dwen roles Hard Continued on next page 13 T able 3 – continued from previous page Name Semantic Complexity 155 fish Man Bat roles Hard 165 shuki Guyver Unit III roles Hard 166 shuki Guyver Unit I roles Hard 176 fish Hephaetus roles Hard 185 imai Jack Skellington roles Hard 189 shuki Ev angelion Unit 01 2009 roles Hard 190 shuki Ev angelion Unit 01 2013 roles Hard 193 chan Spider Man roles Hard 203 hanke Archangel Michael roles Hard 208 kei General Griev ous roles Hard 238 kei Danbo roles Hard 241 fung Amabie v2 roles Hard 245 bodo Batman on his Bike roles Hard 247 kamiya T enma H-7 roles Hard 274 kei Keqing (Genshin Impact) roles Hard 286 carrotkei Keied Keqing roles Hard 305 kamiya W inged Kirin roles Hard 309 xiaokei Keied Raiden Shogun roles Hard 322 kei Cerberus (Helltaker) roles Hard 326 imai Flying Mantis roles Hard 335 kei Hatsune Miku roles Hard 340 imai ne w Flying Mantis roles Hard 345 haruka A yanami Rei 3.0 roles Hard 352 haruka V enusaur roles Hard 363 kei Hatoba Tsugu (Vtuber) roles Hard 006 ku Thirds Pinwheel toys Easy 007 ku Pinwheel Pockets toys Easy 008 ku Robot toys Easy 058 ku Color-change Pinwheel 1 toys Easy 084 ku 4x4 Checkerboard toys Easy 102 traditionaloripa Pinwheel toys Easy 151 ku Iso-Area Throwing Star 1 toys Easy 153 ku Iso-Area Throwing Star 3 toys Easy 178 xiao T eddy Bear to ys Easy 257 xiao Fairy Doll toys Easy 152 ku Iso-Area Throwing Star 2 toys Medium 251 fung K okeshi Doll v4 toys Medium 036 ku Checkerboard 26x26 toys Hard 357 chan 8x8 Checkerboard toys Hard 001 traditional Sailboat vehicles Easy 023 itagaki Motorcycle v ehicles Hard 051 kei CP PLZ words Easy 097 kei CP THX words Medium C. One-Step Multiple Choice Evaluation C.1. 
One-Step Data Generation W e construct the one-step multiple-choice dataset using all 366 entries from OrigamiBench. For each model, we first generate the cor- responding one-step crease pattern sequence using the Creasy tool on github (https://github.com/xk evio/Creasy). The crease patterns are then rendered in our en vironment to obtain the corresponding folded results, which serve as the ground-truth outputs. T o build the multiple-choice setting, we randomly sample folded results from other tasks as distractors and combine them with the correct folded result to form candidate answer sets. Each ev aluation instance therefore consists of the folded file from the previous step as 14 T able 4. OrigamiBench Dataset Statistics by Semantic Category and Comple xity Le vel Semantic Category Easy Medium Hard TO T AL animal-aquatic 2 6 11 19 animal-bird 7 5 2 14 animal-insects 2 4 34 40 animal-mammals 18 21 4 43 animal-reptiles 0 1 5 6 animal-others 6 8 18 32 clothing 0 1 0 1 decorations 2 2 2 6 geometry 17 7 23 47 gestures 1 1 0 2 item 5 6 4 15 plants 1 5 0 6 roles 6 28 29 63 toys 10 2 2 14 vehicles 1 0 1 2 words 1 1 0 2 others 23 15 16 54 TO T AL 102 113 151 366 input and several candidate folded outputs, where the model is required to select the correct next-step folded configuration. In total, we construct 255 ev aluation instances for the one-step multiple-choice ev aluation. C.2. Prompt The one-step task is formulated as a multiple-choice question in which, gi ven a “Reference” origami (current state), the model must select the candidate produced by exactly one additional fold, while the remaining candidates serv e as randomly sampled distractors. Prompt for Associativ e T ask Y ou are an intelligent agent capable of understanding 2D geometry , spatial relationships, topological transformations, and origami crease mechanics. Y our task is to sequentially analyze a 2D origami folding process and logically deduce the correct immediate next state from a set of options. 

IMPORTANT: Your output is parsed by a strict regex parser. If your format is incorrect, the answer will be rejected.

================================
TASK OVERVIEW
================================
You are provided with a sequence of five images. The first four are candidate options (A, B, C, D), and the final image is the Reference state. For every image, the left side displays the FRONT view of the origami, and the right side displays the BACK view.

Your objective is to identify which of the four options represents the exact state obtained from the Reference by performing EXACTLY ONE forward fold operation.

================================
HOW TO APPROACH THE TASK
================================
• Analyze the Reference State: Examine the visible outer-layer creases, straight edges, raw paper boundaries, and flaps on BOTH the front and back views. Build a mental 3D model of the current layer ordering.
• Identify the Discrepancies: Compare each option (A, B, C, D) directly against the Reference state. Identify exactly what parts of the Reference state are identically preserved and what parts have changed (e.g., a flap has moved, new faces are exposed, edges are realigned). Think about the geometry of the shape, not superficial visual similarity.
• Filter out "Non-Immediate" Steps (Rank by Dependency & Timeline):
  – Reject "Unfolds" (Backward steps): Does the option lack a crease present in the Reference, or show a flap unfolded compared to the Reference? If it represents a step backward in time, eliminate it.
  – Reject "Skips" (Multiple steps): Does the option show multiple distinct flaps moving independently, or a complex structural change spanning disconnected regions? The correct candidate must be strictly EXACTLY ONE forward fold. If it requires two or more sequential independent folds, eliminate it.
• Reverse Engineer the Operation: For the remaining viable options, determine the exact physical action required (e.g., mountain fold, valley fold, squash fold, reverse fold). Ask yourself:
  – Where does the fold originate? Is there a pre-crease (dashed/dotted line) in the Reference state that serves as the hinge?
  – What specific polygonal region must be isolated and moved?
  – Does this single fold leave the rest of the origami structure completely unchanged?
• Verify Physical Foldability (Rank by Irreversibility):
  – Layer Legality: Does the action respect current layer ordering? Will this fold force solid paper to clip through itself?
  – Dual-View Consistency (Front/Back): A fold on the outer layer (front) strictly implies a corresponding geometric change on the reverse side (back). Eliminate options where the front and back geometries contradict each other or violate physical paper volume.
• Mental Simulation: Mentally simulate the fold from the Reference. What layers move? What rotates? Does it land EXACTLY on your chosen option?

================================
IMAGE LEGEND
================================
Images are provided in a fixed order:
1. Option A (Front & Back)
2. Option B (Front & Back)
3. Option C (Front & Back)
4. Option D (Front & Back)
5. Reference State (Front & Back)

================================
STRICT RULES
================================
• You MUST evaluate both the FRONT and BACK views simultaneously. A perfectly matching front with an inconsistent back is a wrong answer.
• You MUST correctly identify and reject backward steps and skipped steps.
• Do NOT choose purely by "most visually similar". A visually identical silhouette might harbor illegal layer-swaps, while a correct single fold can significantly alter the silhouette.
• Do NOT output your reasoning text. ONLY output the final format.

================================
OUTPUT CONTRACT (CRITICAL)
================================
Your final output MUST strictly consist of EXACTLY ONE line containing the format below and NOTHING ELSE:

X

where X is your chosen option: A, B, C, or D. Do not append extra text or punctuation.

Prompt for Causal Task

You are an intelligent agent capable of understanding 2D geometry, spatial relationships, topological transformations, and origami crease mechanics. Your task is to sequentially analyze a 2D origami folding process and logically deduce the correct immediate next state from a set of options.

IMPORTANT: Your output is parsed by a strict regex parser. If your format is incorrect, the answer will be rejected.

================================
TASK OVERVIEW
================================
You are provided with a sequence of five images. The first four are candidate options (A, B, C, D), and the final image is the Reference state. For every image, the left side displays the FRONT view of the origami, and the right side displays the BACK view. All images come from the exact same continuous folding sequence.

Your objective is to identify which of the four options represents the exact state obtained from the Reference by performing EXACTLY ONE forward fold operation.

================================
HOW TO APPROACH THE TASK
================================
• Analyze the Reference State: Examine the visible outer-layer creases, straight edges, raw paper boundaries, and flaps on BOTH the front and back views. Build a mental 3D model of the current layer ordering.
• Identify the Discrepancies: Compare each option (A, B, C, D) directly against the Reference state. Identify exactly what parts of the Reference state are identically preserved and what parts have changed (e.g., a flap has moved, new faces are exposed, edges are realigned). Think about the geometry of the shape, not superficial visual similarity.
• Filter out "Non-Immediate" Steps (Rank by Dependency & Timeline):
  – Reject "Unfolds" (Backward steps): Does the option lack a crease present in the Reference, or show a flap unfolded compared to the Reference? If it represents a step backward in time, eliminate it.
  – Reject "Skips" (Multiple steps): Does the option show multiple distinct flaps moving independently, or a complex structural change spanning disconnected regions? The correct candidate must be strictly EXACTLY ONE forward fold. If it requires two or more sequential independent folds, eliminate it.
• Reverse Engineer the Operation: For the remaining viable options, determine the exact physical action required (e.g., mountain fold, valley fold, squash fold, reverse fold). Ask yourself:
  – Where does the fold originate? Is there a pre-crease (dashed/dotted line) in the Reference state that serves as the hinge?
  – What specific polygonal region must be isolated and moved?
  – Does this single fold leave the rest of the origami structure completely unchanged?
• Verify Physical Foldability (Rank by Irreversibility):
  – Layer Legality: Does the action respect current layer ordering? Will this fold force solid paper to clip through itself?
  – Dual-View Consistency (Front/Back): A fold on the outer layer (front) strictly implies a corresponding geometric change on the reverse side (back). Eliminate options where the front and back geometries contradict each other or violate physical paper volume.
• Mental Simulation: Mentally simulate the fold from the Reference. What layers move? What rotates? Does it land EXACTLY on your chosen option?

================================
IMAGE LEGEND
================================
Images are provided in a fixed order:
1. Option A (Front & Back)
2. Option B (Front & Back)
3. Option C (Front & Back)
4. Option D (Front & Back)
5. Reference State (Front & Back)

================================
STRICT RULES
================================
• You MUST evaluate both the FRONT and BACK views simultaneously. A perfectly matching front with an inconsistent back is a wrong answer.
• You MUST correctly identify and reject backward steps and skipped steps.
• Do NOT choose purely by "most visually similar". A visually identical silhouette might harbor illegal layer-swaps, while a correct single fold can significantly alter the silhouette.
• Do NOT output your reasoning text. ONLY output the final format.

================================
OUTPUT CONTRACT (CRITICAL)
================================
Your final output MUST strictly consist of EXACTLY ONE line containing the format below and NOTHING ELSE:

X

where X is your chosen option: A, B, C, or D. Do not append extra text or punctuation.

C.3. Qualitative Results

Some examples are shown in Figure 2.

[Figure 2: grid of task examples. Columns: Reference, Option A, Option B, Option C, Option D. Rows: Associative Easy, Associative Medium, Associative Hard, Causal Easy, Causal Medium, Causal Hard. Model legend: Qwen3-VL-32B, Qwen3-VL-8B, Qwen2.5-VL-7B, InternVL 2.5 8B, MiniCPM-V 2.6.]

Figure 2. Examples for the one-step task. The top three rows show Associative task examples and the bottom three rows show Causal task examples; within each group, the three examples have increasing complexity (Easy, Medium, Hard). Each task requires choosing the next folding step of the reference origami (first column) from four candidate options (A–D). The correct answer is highlighted with a green background. Coloured circles at the bottom-right corner of each option indicate the predictions made by each model.

D. Full-Step Interactive Evaluation

D.1. Evaluation Metric: IoU-Based Geometric Similarity

We adopt the Intersection over Union (IoU) of shape masks as a geometric metric for measuring structural similarity between origami models.
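
For reference, the IoU of two binary masks is the number of pixels set in both masks divided by the number of pixels set in at least one of them. The following is a minimal sketch of that ratio only, assuming both masks are already aligned to the same dimensions and given as 2D nested sequences of 0/1 values; the function name `mask_iou` and the convention of returning 0.0 for two empty masks are illustrative assumptions, not part of the paper's pipeline.

```python
def mask_iou(mask_a, mask_b):
    """IoU of two same-sized binary masks given as 2D sequences of 0/1.

    Hypothetical helper for illustration; not the authors' implementation.
    """
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of rows")
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        if len(row_a) != len(row_b):
            raise ValueError("masks must have the same number of columns")
        for pixel_a, pixel_b in zip(row_a, row_b):
            a, b = bool(pixel_a), bool(pixel_b)
            intersection += a and b  # pixel is foreground in both masks
            union += a or b          # pixel is foreground in at least one
    # Assumption: two empty masks are scored 0.0 rather than raising.
    return intersection / union if union else 0.0


# Top half vs. left half of a 4x4 grid: intersection 4 px, union 12 px.
top_half = [[1, 1, 1, 1], [1, 1, 1, 1], [0, 0, 0, 0], [0, 0, 0, 0]]
left_half = [[1, 1, 0, 0], [1, 1, 0, 0], [1, 1, 0, 0], [1, 1, 0, 0]]
print(mask_iou(top_half, left_half))
```

A 2D NumPy array can be passed in the same way, since it iterates row by row; a vectorised version would be preferable for 512 × 512 images.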
IoU focuses solely on the silhouette contour of each origami, remaining invariant to colour, texture, and rendering style, and directly captures the degree of overlap between the projected shapes of two models.

The evaluation pipeline proceeds as follows. Given two FOLD files, each is first passed through the rendering pipeline (FOLD → SVG → PNG) to produce a 512 × 512 RGB raster image, as illustrated in Figure 3. Each image is then converted to grayscale using the weighted formula $0.299R + 0.587G + 0.114B$ [9], and foreground pixels are extracted by applying a brightness threshold of $< 250$. OpenCV contour detection is subsequently used to locate the largest external contour, which is flood-filled to produce a binary mask $M \in \{0, 1\}^{H \times W}$. After aligning the two masks to the same spatial dimensions, the IoU score is computed as:

$$\mathrm{IoU}(M_A, M_B) = \frac{|M_A \cap M_B|}{|M_A \cup M_B|} \tag{1}$$

where $|M_A \cap M_B|$ is the pixel count of the intersection and $|M_A \cup M_B|$ is the pixel count of the union. The score lies in $[0, 1]$; a value approaching 1 indicates that the two origami models are highly congruent in their projected shape.

Figure 3. Original rendered image (left) and its corresponding binary mask (right).

D.2. Evaluation Metric: CLIP-Based Semantic Similarity

D.2.1. Fine-Tuning the CLIP Model

The CLIP model used in this work is initialised from OpenAI CLIP [16] ViT-B/16 and domain-fine-tuned on 244 origami images spanning 15 categories (90/10 train/validation split). The original InfoNCE loss relies on image–text pairs; since our objective is to improve the class discriminability of image embeddings, we replace it with the Supervised Contrastive Loss (SupCon) [11]:

$$\mathcal{L} = -\frac{1}{N} \sum_{\substack{i=1 \\ |P(i)| > 0}}^{N} \frac{1}{|P(i)|} \sum_{p \in P(i)} \log \frac{\exp(z_i \cdot z_p / \tau)}{\sum_{k \neq i} \exp(z_i \cdot z_k / \tau)} \tag{2}$$

where $z_i$ is the L2-normalised image embedding, $P(i)$ is the set of in-batch positives (same class, excluding self), and $\tau = 0.07$ is the temperature. The loss acts only on the image encoder; the text pathway is frozen throughout training. A class-balanced sampler ensures that every batch contains at least two images per category, guaranteeing valid positive pairs. The key hyperparameters are listed in Table 5.

| Hyperparameter | Value |
|---|---|
| Base model | CLIP ViT-B/16 |
| Optimiser | AdamW |
| Learning rate | $5 \times 10^{-6}$ |
| Weight decay | $1 \times 10^{-3}$ |
| Batch size | 32 |
| Training epochs | 100 |
| LR schedule | Cosine Annealing |
| Gradient clipping | max norm = 1.0 |

Table 5. Key hyperparameters for CLIP fine-tuning.

D.2.2. Evaluation Protocol

The projected images of two origami models are passed independently through the fine-tuned CLIP image encoder to obtain their respective L2-normalised embedding vectors; cosine similarity between the two vectors is used as the similarity score:

$$s = z_A \cdot z_B, \qquad z = \frac{f_\theta(x)}{\lVert f_\theta(x) \rVert_2} \tag{3}$$

where $f_\theta$ is the fine-tuned CLIP image encoder, $x$ is the origami projection image, and $s \in [-1, 1]$. A score approaching 1 indicates high semantic similarity in the embedding space, while a score near 0 or below indicates a significant semantic difference.

D.2.3. Fine-Tuned Model Qualitative Results

To quantify the effect of fine-tuning on CLIP, all 244 origami images are passed through the CLIP image encoder at each training epoch to obtain embedding vectors. A 244 × 244 pairwise cosine similarity matrix is then constructed and partitioned into intra-class pairs and inter-class pairs according to category labels. The mean cosine similarities $\mu_{\text{intra}}$ and $\mu_{\text{inter}}$ are computed for each partition, and their difference $\Delta = \mu_{\text{intra}} - \mu_{\text{inter}}$ serves as the primary measure of class separability in embedding space: a larger $\Delta$ indicates that images of the same class are more tightly clustered while images of different classes are pushed further apart.

Table 6 reports the results across selected training epochs. The pretrained CLIP baseline yields $\mu_{\text{inter}} = 0.861$, nearly identical to $\mu_{\text{intra}} = 0.880$, with a separation of only $\Delta = 0.019$, indicating that the generic model provides almost no discriminative structure for origami categories. After 25 epochs of fine-tuning, $\mu_{\text{inter}}$ drops sharply to 0.243 and $\Delta$ rises to 0.651, demonstrating that the SupCon loss rapidly pushes inter-class embeddings apart in the early stages of training. As training continues, $\mu_{\text{intra}}$ increases steadily while $\mu_{\text{inter}}$ decreases further, reaching $\Delta = 0.793$ at epoch 75. By epoch 100, $\Delta = 0.799$, with diminishing marginal gains; notably, $\mu_{\text{intra}}$ decreases slightly from 0.9254 to 0.9243, suggesting that later-stage optimization primarily acts on inter-class separation rather than further intra-class compactness.

Table 6. Intra-class and inter-class cosine similarity at selected training epochs

| Training Epoch | $\mu_{\text{intra}}$ ↑ | $\mu_{\text{inter}}$ ↓ | $\Delta$ ↑ |
|---|---|---|---|
| Original CLIP | 0.8800 | 0.8608 | 0.0192 |
| Epoch 25 | 0.8934 | 0.2427 | 0.6506 |
| Epoch 50 | 0.9190 | 0.1694 | 0.7496 |
| Epoch 75 | 0.9254 | 0.1320 | 0.7934 |
| Epoch 100 | 0.9243 | 0.1254 | 0.7989 |

D.3. Prompt

The prompt is based on chain-of-thought reasoning and a few-shot example of a full origami sequence.

Prompt

You are an intelligent agent capable of understanding 2D geometry, spatial relationships, and origami crease patterns. Your task is to sequentially design a 2D origami by interacting with an environment through precise crease actions. You must perform the reasoning steps internally. Do not output the reasoning. Only output the final action.

IMPORTANT: Your output is parsed by a strict JSON parser. If your JSON is incomplete, malformed, or truncated, the action will be rejected.

================================
TASK OVERVIEW
================================
You must transform a blank or partially creased square paper into a target origami shape. The task is completed through a sequence of crease additions.

At each timestep:
- You observe the target origami.
- You observe the current origami produced by the existing crease pattern.
- You observe the current crease pattern.
- You receive feedback on whether the previous action was foldable.

Your objective is to iteratively improve the crease pattern so that the folded result matches the target origami.

================================
HOW TO APPROACH THE TASK
================================
• Before giving the final output, you must carefully complete each of these parts in order to arrive at the most accurate outcome and therefore get the highest score.
  – Identify which parts of the current crease pattern align with which parts of the current folded origami.
  – Identify which parts of the target folded origami are present in the current folded origami and which parts of the target folded origami are missing. Think about the geometry of the shape, not specific creases.
• Identify the discrepancy that would make the most impact. This one change should bring you the closest to the target origami of all the steps. Some examples include: missing flap, flap too short / too wide, incorrect layer ordering, incorrect angle, volume vs. flat issue. Rank these discrepancies by Irreversibility (wrong layer order is more critical than slight angle) and Dependency (some features require others to exist first) in order to identify which action to take first.
• Take that discrepancy and reverse engineer what needs to be done. Make sure to keep these considerations in mind:
  – Where does that flap originate on the paper?
  – What polygonal region must be isolated in the CP?
  – Is the issue length, width, or angle? You could use:
    * a parallel crease (length control)
    * a bisector / angular crease
    * a re-layering crease
• Make sure to keep physical foldability in mind. The action added to the crease pattern should respect the physics of real-life paper folding.
Some considerations:
  – Flat-foldability
    * Kawasaki at the vertices
    * Maekawa (M–V parity)
  – Layer legality
    * Will this crease force paper through itself?
  – Crease interaction
    * Does this new crease terminate cleanly?
    * Does it propagate across existing creases?
• Based on these considerations (the biggest discrepancy, reverse engineering the fold, and the physical constraints), identify where to draw the fold and what assignment to give it.
  – You have a scale of (0,0) to (10,10) where (0,0) is the bottom left corner of the crease pattern and (10,10) is the top right, similar to the coordinate plane.
  – Match up the area that you determined the fold originates from to the part of the paper you want it to start and end.
  – Decide whether it should be a mountain (folded downward) or a valley (folded upward) fold.
• Before finalizing the fold, simulate the fold mentally and think about what will be affected. What layers move? What rotates? What new faces appear? Does this bring you closer to the target origami? Carefully consider these questions, and if it does not bring you closer to the target origami, restart your process to identify the best move.
• After ensuring that you have found the best action, only then should you give your output. Make sure to follow the rules as explained below.

================================
IMAGE LEGEND
================================
Images are provided in a fixed order:
1. Target Origami (Folded)
2. Current Origami (Folded)
3. Current Crease Pattern

================================
OUTPUT CONTRACT (CRITICAL)
================================
You MUST output EXACTLY ONE valid JSON object and NOTHING else.

Before emitting your answer, you must ensure:
- The JSON is syntactically complete.
- All arrays and objects are closed.
- The final character of your response is `}`.
- The JSON can be parsed without errors.
If you cannot output a COMPLETE valid JSON object, you must choose a simpler action (e.g., a single-crease action instead of a multi-crease action).

================================
ACTION SCHEMAS
================================

SINGLE-CREASE ACTION (preferred when possible):

    {
      "action": "add_crease",
      "p1": [x1, y1],
      "p2": [x2, y2],
      "assignment": "M" | "V"
    }

MULTI-CREASE actions are permitted only when:
- A single crease would violate flat-foldability, OR
- A symmetric or continuous structure requires multiple creases to remain legal

    {
      "action": "add_creases",
      "creases": [
        { "p1": [x1, y1], "p2": [x2, y2], "assignment": "M" | "V" },
        { "p1": [x3, y3], "p2": [x4, y4], "assignment": "M" | "V" }
      ]
    }

================================
GEOMETRIC CONSTRAINTS
================================

- Paper bounds: (0, 0) bottom-left to (10, 10) top-right
- All coordinates must lie within this range
- Coordinates may be real-valued
- "M" = mountain fold
- "V" = valley fold
- New creases must not be collinear with, coincident with, or trivially offset from existing creases unless explicitly required.

================================
STRICT RULES
================================

- Output EXACTLY ONE JSON object
- Do NOT include explanations, comments, or text
- Do NOT output partial JSON
- Do NOT repeat identical actions
- Prefer SINGLE-CREASE actions unless multiple creases are strictly necessary
- Every action must either directly improve similarity to the target folded form or enable a future action that does so.
- A crease that does not immediately change the folded form is allowed if it enables future foldability or structural alignment.
================================
FEW-SHOT EXAMPLE
================================

EXAMPLE TARGET ORIGAMI (Folded):
EXAMPLE STARTING CURRENT ORIGAMI (Folded):
EXAMPLE STARTING CREASE PATTERN:

================================

STEP 1: ACTION TAKEN:

    {
      "action": "add_creases",
      "creases": [
        { "p1": [0, 5], "p2": [5, 0], "assignment": "M" },
        { "p1": [5, 10], "p2": [10, 5], "assignment": "M" }
      ]
    }

STEP 2: ACTION TAKEN:

    {
      "action": "add_creases",
      "creases": [
        { "p1": [0, 7.5], "p2": [7.5, 0], "assignment": "V" },
        { "p1": [2.5, 10], "p2": [10, 2.5], "assignment": "V" }
      ]
    }

STEP 3: ACTION TAKEN:

    {
      "action": "add_creases",
      "creases": [
        { "p1": [0, 2.5], "p2": [2.5, 0], "assignment": "V" },
        { "p1": [7.5, 10], "p2": [10, 7.5], "assignment": "V" }
      ]
    }

STEP 4: ACTION TAKEN:

    {
      "action": "add_creases",
      "creases": [
        { "p1": [0, 8.75], "p2": [8.75, 0], "assignment": "M" },
        { "p1": [1.25, 10], "p2": [10, 1.25], "assignment": "M" }
      ]
    }

STEP 5: ACTION TAKEN:

    {
      "action": "add_creases",
      "creases": [
        { "p1": [5, 0], "p2": [6.25, 1.25], "assignment": "M" },
        { "p1": [6.25, 1.25], "p2": [6.875, 1.875], "assignment": "V" },
        { "p1": [6.875, 1.875], "p2": [8.125, 3.125], "assignment": "M" },
        { "p1": [8.125, 3.125], "p2": [8.75, 3.75], "assignment": "V" },
        { "p1": [8.75, 3.75], "p2": [10, 5], "assignment": "M" }
      ]
    }

RESULTING CREASE PATTERN:
RESULTING ORIGAMI (Folded):

FINAL COMPARISON
Similarity to target: high
Foldability: true

================================
CURRENT TASK
================================

TARGET ORIGAMI (Folded):
CURRENT ORIGAMI (Folded):
CURRENT CREASE PATTERN:
Foldability Indicator: foldability

Your next response MUST be ONE COMPLETE, VALID JSON ACTION.
Before emitting the JSON, verify that the proposed crease does not obviously worsen any previously correct feature. Only output the action after you have carefully followed the steps given above on how to approach the task.

D.4. Qualitative Results

Some examples are shown in Figure 4.
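The output contract in the prompt above (exactly one JSON object, one of two action schemas, coordinates within the 0 to 10 paper bounds, assignments "M" or "V") is mechanical enough to check before a response is passed to the environment. The following Python sketch shows one way this could be done. The names `validate_action` and `_check_crease` are hypothetical and are not taken from the OrigamiBench code; the sketch covers only the schema and the paper bounds, not foldability or the rule against collinear creases.

```python
import json

PAPER_MIN, PAPER_MAX = 0.0, 10.0  # paper bounds stated in the prompt


def _is_number(value):
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def _check_crease(crease):
    """Return a list of problems found in one crease dict (hypothetical helper)."""
    problems = []
    for key in ("p1", "p2"):
        point = crease.get(key)
        if not (isinstance(point, list) and len(point) == 2
                and all(_is_number(c) for c in point)):
            problems.append(f"{key} must be a list of two numbers")
        elif not all(PAPER_MIN <= c <= PAPER_MAX for c in point):
            problems.append(f"{key} lies outside the paper bounds")
    if crease.get("assignment") not in ("M", "V"):
        problems.append("assignment must be 'M' or 'V'")
    return problems


def validate_action(raw):
    """Parse a model response; return (action, problems). Empty problems means valid."""
    try:
        action = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(action, dict):
        return None, ["top-level value must be a JSON object"]

    kind = action.get("action")
    if kind == "add_crease":
        creases = [action]  # the single crease is stored on the action itself
    elif kind == "add_creases":
        creases = action.get("creases")
        if not isinstance(creases, list) or not creases:
            return action, ["'creases' must be a non-empty list"]
    else:
        return action, [f"unknown action: {kind!r}"]

    problems = []
    for i, crease in enumerate(creases):
        if not isinstance(crease, dict):
            problems.append(f"crease {i}: must be an object")
            continue
        problems += [f"crease {i}: {p}" for p in _check_crease(crease)]
    return action, problems
```

Returning a list of problems rather than raising on the first error is a design choice made here so that a truncated or malformed response can be logged in full; a real harness might instead reject the action immediately.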
[Figure 4 image. Panel labels: Folding Steps (10, 25); Qwen2.5-VL 7B; Qwen3-VL 8B; Target.]

Figure 4. Examples of final origamis generated by the models for easy, medium, and hard targets after 10 and 25 inference steps. Both models produce origamis with only 1 or 2 fold actions, thus failing to construct a folding action plan. This is mainly due to the lack of causal understanding of an action as observed in the One-Step Multiple Choice evaluation.
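For reference, the two local flat-foldability conditions that the prompt names (Kawasaki at the vertices and Maekawa's M–V parity) can be stated concretely. At an interior vertex of a crease pattern, Kawasaki's condition requires an even number of creases whose alternating sector angles sum to the same value, and Maekawa's condition requires the numbers of mountain and valley creases to differ by exactly two. The Python sketch below checks both at a single vertex. It is an illustration only: the function names are hypothetical, it is not the benchmark's foldability checker, it applies to interior vertices and not to vertices on the paper boundary, and it does not address layer ordering.

```python
import math


def sector_angles(vertex, neighbors):
    """Angles (radians) between consecutive creases around an interior vertex.

    `neighbors` holds the far endpoints of the creases that meet at `vertex`.
    """
    vx, vy = vertex
    directions = sorted(math.atan2(y - vy, x - vx) for x, y in neighbors)
    n = len(directions)
    # The modulo handles the wrap-around sector between the last and first crease.
    return [(directions[(i + 1) % n] - directions[i]) % (2 * math.pi)
            for i in range(n)]


def satisfies_kawasaki(vertex, neighbors, tol=1e-9):
    """True if the alternating sums of sector angles agree (even degree required)."""
    if len(neighbors) == 0 or len(neighbors) % 2 != 0:
        return False
    angles = sector_angles(vertex, neighbors)
    return abs(sum(angles[0::2]) - sum(angles[1::2])) <= tol


def satisfies_maekawa(assignments):
    """True if mountain and valley counts at the vertex differ by exactly two."""
    return abs(assignments.count("M") - assignments.count("V")) == 2


# Four creases meeting at right angles in the centre of the 10 x 10 sheet.
center = (5, 5)
plus_shape = [(10, 5), (5, 10), (0, 5), (5, 0)]
print(satisfies_kawasaki(center, plus_shape))
print(satisfies_maekawa(["M", "M", "M", "V"]))
```

Both conditions are necessary for a vertex to fold flat, but passing them at every vertex does not by itself guarantee that the whole pattern folds without self-intersection, which is why the prompt lists layer legality as a separate consideration.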
