DUCTILE: Agentic LLM Orchestration of Engineering Analysis in Product Development Practice

Engineering analysis automation in product development relies on rigid interfaces between tools, data formats and documented processes. When these interfaces change, as they routinely do as the product evolves in the engineering ecosystem, the automa…

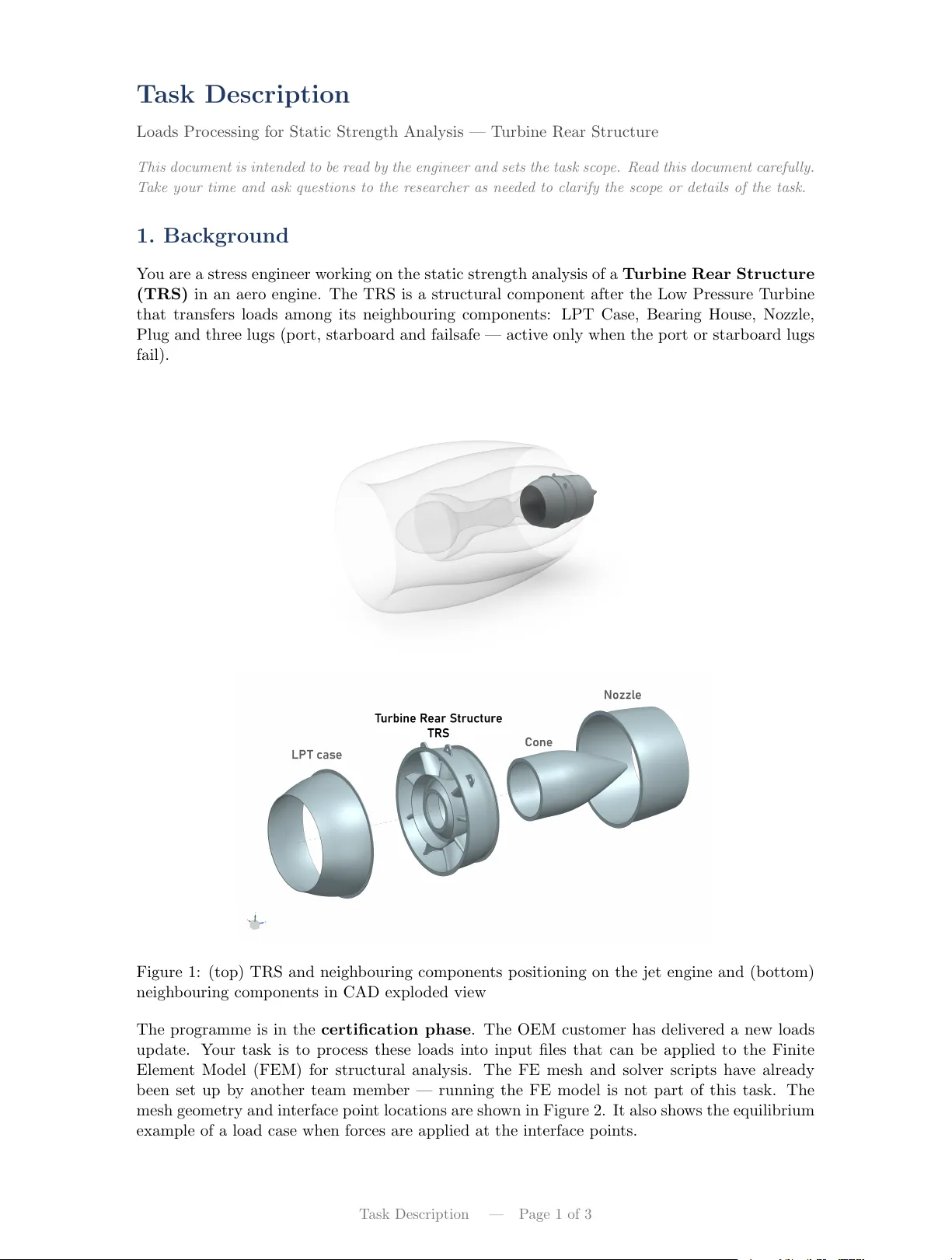

Authors: Alejandro Pradas-Gomez, Arindam Brahma