AOI: Turning Failed Trajectories into Training Signals for Autonomous Cloud Diagnosis

Large language model (LLM) agents offer a promising data-driven approach to automating Site Reliability Engineering (SRE), yet their enterprise deployment is constrained by three challenges: restricted access to proprietary data, unsafe action execut…

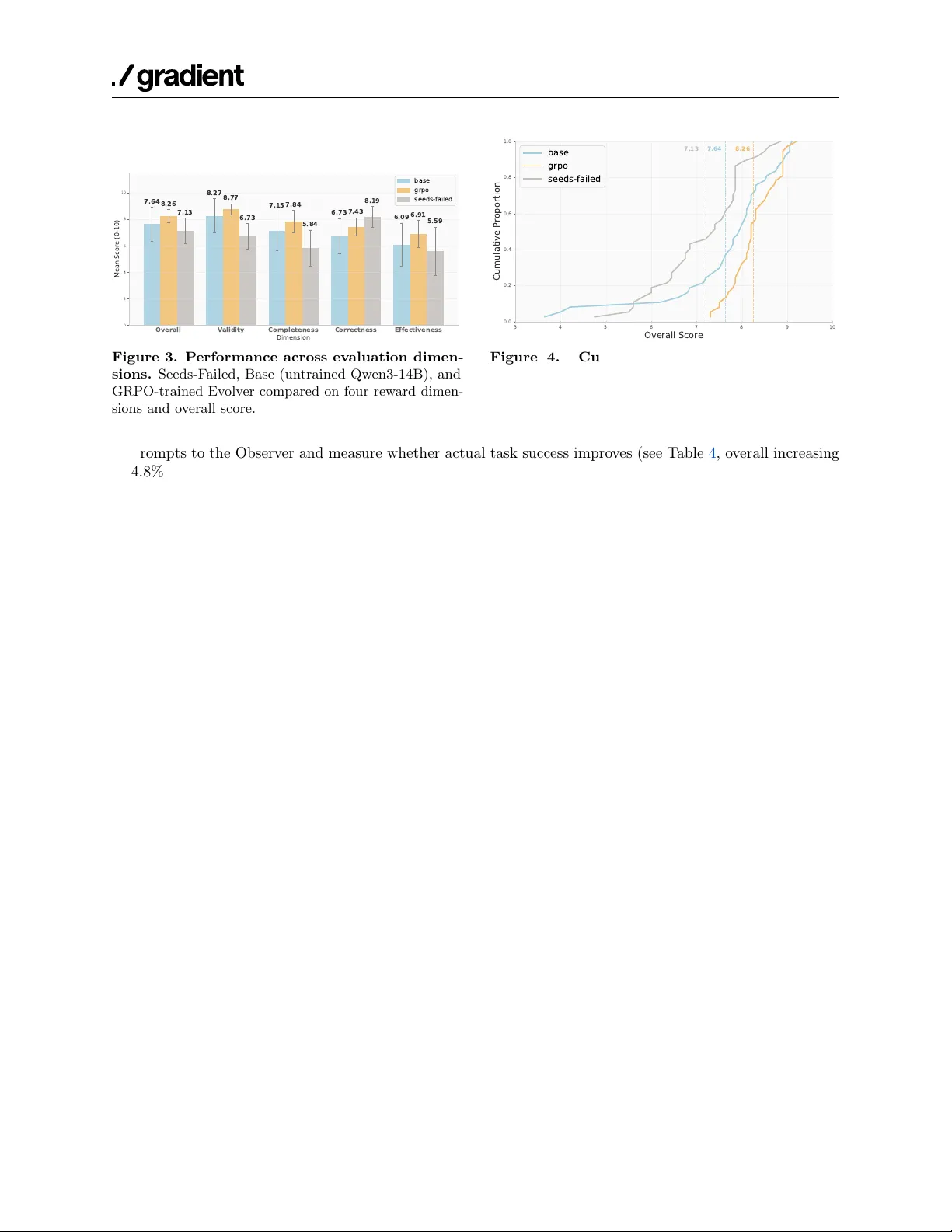

Authors: Pei Yang, Wanyi Chen, Asuka Yuxi Zheng