Closed-Loop Action Chunks with Dynamic Corrections for Training-Free Diffusion Policy

Diffusion-based policies have achieved remarkable results in robotic manipulation but often struggle to adapt rapidly in dynamic scenarios, leading to delayed responses or task failures. We present DCDP, a Dynamic Closed-Loop Diffusion Policy framewo…

Authors: Pengyuan Wu, Pingrui Zhang, Zhigang Wang

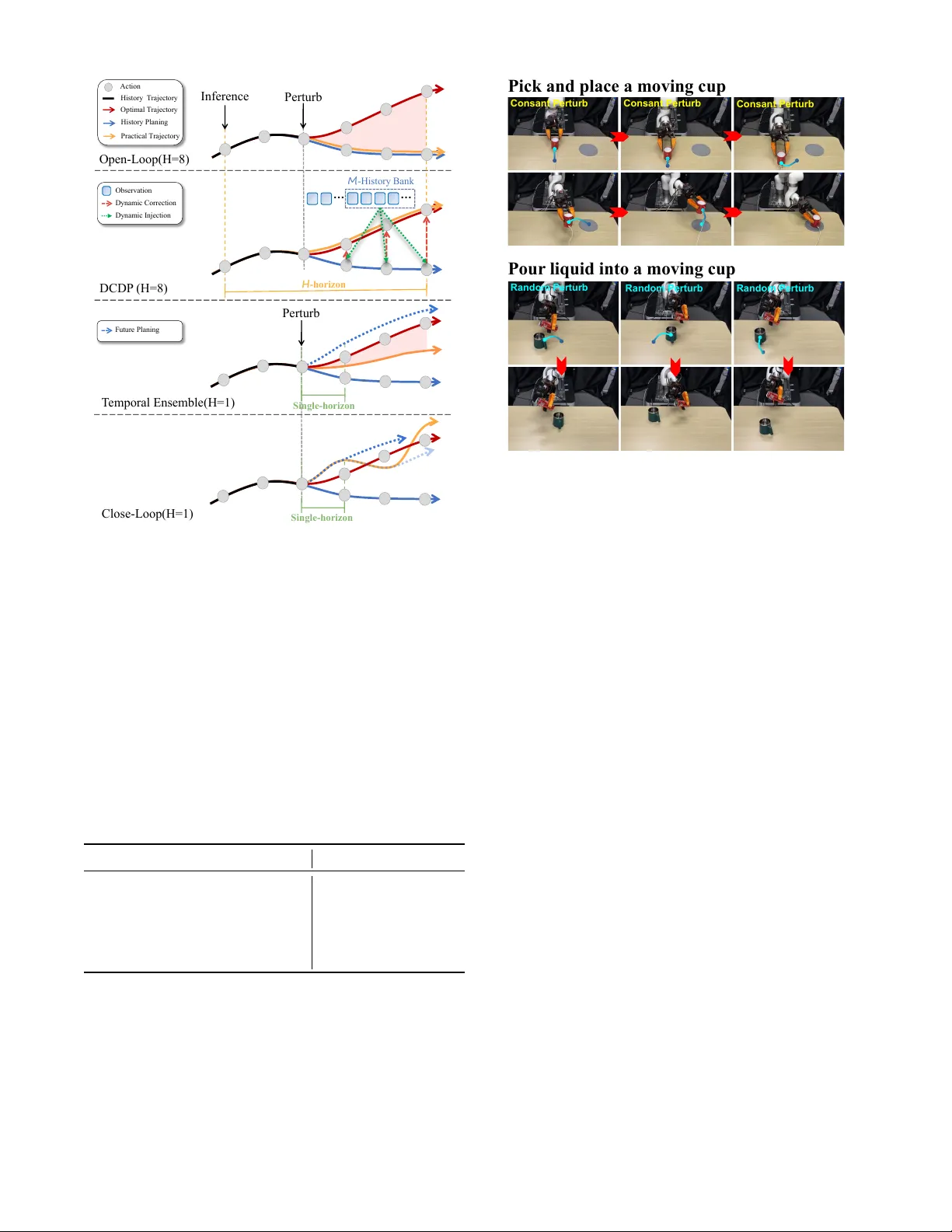

Closed-Loop Action Chunks with Dynamic Corr ections f or T raining-Fr ee Diffusion P olicy Pengyuan W u 1 , 2 ∗ , Pingrui Zhang 2 , 3 ∗ , Zhigang W ang 2 , Dong W ang 2 , Bin Zhao 2 , 4 B , Xuelong Li 5 , F ellow ,IEEE Abstract — Diffusion-based policies hav e achieved r emarkable results in robotic manipulation but often struggle to adapt rapidly in dynamic scenarios, leading to delayed responses or task failures. W e present DCDP , a Dynamic Closed-Loop Diffusion Policy framework that integrates chunk-based action generation with real-time correction. DCDP integrates a self- supervised dynamic feature encoder , cross-attention fusion, and an asymmetric action encoder -decoder to inject envir onmental dynamics befor e action execution, achieving real-time closed- loop action correction and enhancing the system’s adaptability in dynamic scenarios. In dynamic PushT simulations, DCDP impro ves adaptability by 19% without retraining while requir - ing only 5% additional computation. Its modular design enables plug-and-play integration, achieving both temporal coherence and real-time responsiveness in dynamic robotic scenarios, including real-world manipulation tasks. The project page is at: https://github.com/wupengyuan/dcdp I . I N T R O D U C T I O N In recent years, diffusion policies ha ve achiev ed remark- able results in robotic manipulation tasks [1], [2], [3]. These methods typically reason over action chunks to capture non- Markovian dependencies, reducing compounding errors in sequential prediction and enabling coherent long-horizon action generation [4], [5]. Howe ver , achieving efficient robotic manipulation in dy- namic en vironments requires more than long-term planning; the policy must also be capable of promptly responding to rapid en vironmental changes. This dual demand poses a significant challenge to existing approaches: the policy must generate coherent action sequences ov er extended time horizons while simultaneously percei ving and adapting to external disturbances or target motions during execution. W ithout such responsiv eness, open-loop execution often leads to delays or task failures. In typical scenarios, such as grasping moving objects, the lack of sufficient reactivity can drastically reduce success rates. Generally , the limitations of existing approaches can be summarized as follows: (1) Open-loop action generation. Action chunks are fully generated prior to ex ecution, lacking the capability to dynamically adjust subsequent actions based on the latest observations. This sev erely restricts respon- siv eness in dynamic environments. (2) Insufficient temporal modeling. The inference process often relies on single-frame 1 Zhejiang Univ ersity . 2 Shanghai Artificial Intelligence Laboratory . 3 Fudan University . 4 Northwestern Polytechnical University . 5 Institute of Artificial Intelligence, China T elecom Corp Ltd. Emails: { pengyuanwu0810, zprzpr121 } @gmail.com ∗ Equal contribution. B Corresponding author. Open - Loop Action C hunking Closed - Loop Action C hunking Closed - Loop Acti on Chunking w it h Dy nam ic Correct ion H - ho ri zo n H - ho ri zo n Si ng l e - h o ri zo n M - H i s t o ry Ban k … … A ct i on O bse r v at i on D y nam i c C or r ect i on Traj ect or y Fig. 1. Comparison of the Open-Loop Diffusion Policy , Closed- Loop Diffusion Policy , and Our Closed-Loop with Dynamic Correction Diffusion Policy . The orange line depicts the action chunking prediction of length H for the Open-Loop Diffusion Policy . Meanwhile, the green line denotes the Closed-Loop Diffusion Policy , which employs single-step inference to achieve closed-loop control. Howe ver , this approach incurs high latency and requires frequent re-planning. By contrast, our Dynamic Correction Closed-Loop Diffusion Policy (as indicated by the red line ) lev erages information from a length- M History Bank to perform lightweight, fast corrections at each inference step, thereby achie ving closed-loop control. or a small number of static observ ations, failing to fully exploit continuous temporal information, which in turn limits adaptability to environmental changes. T o address the above issues, previous studies hav e ex- plored two primary avenues for improv ement. The first increases inference frequency by shortening the prediction horizon or reducing denoising steps to enhance system responsiv eness [6], [1], [4]. Howe ver , these approaches gen- erally compromise action generation quality , and e xcessiv ely frequent updates may disrupt action sequence continuity , thereby weakening task ex ecution stability . The second av- enue employs temporal ensemble or bidirectional decoding to achiev e closed-loop execution by integrating multi-step in- ferred action sequences [7], [8]. Ne vertheless, these methods face two main limitations: they are either constrained by his- torical inference information, hindering true real-time closed- loop execution, or they require sampling multiple action sequences within a single inference step for decoding and selection, which incurs substantial computational ov erhead. Facing these challenges, this paper proposes a closed-loop action-chunking policy framework that integrates long-term planning with real-time dynamic correction. The framew ork lev erages the strengths of diffusion policies in long-horizon planning, while incorporating a lightweight dynamic feature module that injects high-frequency environmental informa- tion into action generation. In this way , closed-loop execution and dynamic responsiv eness are achiev ed at every time step. Specifically , we first design a self-supervised dynamic feature encoder to extract information about en vironmental changes. This encoder collects recent observ ation images within a sliding window and applies self-supervised con- trastiv e learning on their differential features, thereby cap- turing high-frequency dynamics. T o further enhance tempo- ral awareness, we introduce cross-attention and temporal- attention modules, which strengthen feature modeling in dy- namic scenes. In addition, we develop an asymmetric action encoder that compresses raw action sequences into latent representations and reconstructs them using dynamic feature information. By enforcing reconstruction loss together with a KL div ergence constraint, the decoder is compelled to rely on recent dynamic observations, thereby improving the adaptability of action generation. Generally , the training and inference stages of the pro- posed method can be illustrated as follows: Stage 1 (Training):Using labeled data, the asymmetric action encoder and the self-supervised dynamic feature en- coder are trained end-to-end. Reconstruction loss and KL div ergence are employed to guide the decoder to attend to dynamic features. Stage 2 (Inference):The pretrained dif fusion policy gener- ates complete action chunks to ensure long-term action con- sistency . Simultaneously , the dynamic feature module contin- uously extracts en vironmental change information through a sliding window and jointly decodes it with the action latent representations, enabling real-time correction of actions at each time step. Because the module updates at the same frequency as the action execution, it can significantly en- hance adaptability to dynamic en vironments while maintain- ing overall action coherence. It is worth emphasizing that this approach does not require any retraining of the original diffusion policy . By simply inserting the dynamic correction module during inference, the framework substantially improves responsiv eness and robustness in dynamic scenarios. Moreover , the framework is highly modular and compatible, allowing seamless integra- tion with various action-chunk-based policies and providing a plug-and-play solution to balance long-term planning with real-time closed-loop control. W e ev aluated the DCDP method on the dynamic PushT simulation task and two real-world tasks. The results demon- strate that DCDP can effecti vely mitigate the adaptability limitations of Diffusion Policy in dynamic scenarios without requiring retraining. Compared with the original inference method, it achieved a 19% improvement in success rate with only 5% additional computational overhead. Furthermore, in static scenarios, its ef ficient closed-loop characteristic further improv ed task success. In summary , the contributions of this work are as follo ws: • Dynamic Closed-Loop Framework : Integrates long- horizon planning with real-time correction, preserving Dif- fusion Policy consistency while enabling fast responses to en vironmental changes. • Dynamic Featur e Extraction and Action Correction : Lightweight temporal attention module learns environmen- tal dynamics and fuses them with latent actions, allowing flexible adaptation to perturbations and moving targets. • T raining-Free and Modular Design : Enhances dynamic adaptability without retraining Diffusion Policy; modular design supports plug-and-play integration with various action-chunk strategies for long-term planning and real- time control. I I . R E L AT E D W O R K A. Behavior Cloning Recent advancements in data collection and benchmark dev elopment, both in simulated and real-world environ- ments [9], [10], [11], [12], hav e significantly contributed to the progress of robotics. Imitation learning, particularly through expert demonstrations, has emerged as a piv otal driv er in adv ancing robotic capabilities [13], [14], [15], [16], [17], [18], [19], [20]. Among the various techniques in imitation learning, Generativ e Behavior Cloning has g arnered significant attention due to its ability to effecti vely model the distribution of expert demonstrations. This approach not only simplifies the learning process but has also shown strong empirical success in real-world applications [21], [22], [23]. Recently , a Behavior Cloning method incorporating action chunking has been proposed [1], [24], [8]. This technique effecti vely manages temporal dependencies by predicting sequences of continuous actions. Howe ver , the process of inferring action chunks often depends on single-frame or a limited number of frame observations, which fails to fully leverage the continuous temporal information present in the data. In contrast, our approach exploits previously underutilized temporal cues in the observ ations and integrates a fast policy to inject these signals into a slower diffusion policy , facilitating online policy correction. B. Closed-Loop Action Chunks The dif fusion policy generates high-quality action through iterativ e refinement from Gaussian noise, resulting in sig- nificant improvements in robotic manipulation [1], [2], [5]. Howe ver , the re-planning frequency of the diffusion policy is limited, and the execution of an action chunking process remains open-loop. The poor performance of the diffusion policy in rapidly changing en vironments is also attributed to the open-loop nature of action chunking, which prev ents it from receiving timely feedback and responding dynami- cally . T o address this, some approaches [25], [26], [27] aim to incorporate high-frequency policies, injecting additional information during the execution of an action chunk to enable closed-loop control. W e also adopt a closed-loop scheme, enhancing the dynamic capabilities of the diffusion policy by fully utilizing the memory and dif ferential dynamic information within the closed-loop action chunks. C. Dynamic Manipulation In robot-object interactions, objects are often in motion, such as when handling items on an industrial conv eyor belt or interacting with dynamic objects like a soccer ball [28], a badminton shuttlecock [29], or a table tennis ball [30] during sports activities. Howe ver , there is currently no dedicated real or simulated dataset specifically designed to train robots for adapting to such dynamic scenarios. T o address dynamic challenges, some approaches [31], [32] integrate Model Pre- dictiv e Control (MPC) [33] to achiev e real-time performance. While effecti ve in structured en vironments, these methods tend to generalize poorly when the motion sequences of the manipulated objects become more uncertain. Addition- ally , some methods [34], [35], [36] combine reinforcement learning to explore dynamic capabilities. Ho wev er, these approaches do not fully lev erage the action expert data deriv ed from imitation learning, as discussed in Section II- A. In contrast, our approach injects dynamic correction information into the dif fusion policy , which has been trained through imitation learning, in a training-free manner during the second-stage dynamic scene ev aluation. This method eliminates the need for additional training of the action model and enhances the model’ s ability to operate ef fectively in dynamic environments. I I I . M E T H O D A. Preliminaries Diffusion policy with action chunking. Let A t : t + H − 1 : = [ a t , a t + 1 , . . . , a t + H − 1 ] denote a horizon- H action chunk con- ditioned on the current observ ation o t . W e instantiate the slow policy as a conditional diffusion model, π s ( A t : t + H − 1 | o t ) , which transforms Gaussian noise into A t : t + H − 1 via an observation-conditioned denoiser ε θ s . During dynamic ev aluation we keep the parameters θ s fixed (training-free), reusing the weights learned on a static dataset. While π s promotes temporal coherence across the chunk, it operates open-loop within the chunk and thus cannot promptly react to object motion in rapidly changing scenes (Please see Figure 2 Stage 2). Dynamics-aware policy . W e maintain a history bank of the most recent M observations (Figure 2 Stage 1), O t − M + 1: t : = [ O t − M + 1 , . . . , O t ] . A fast policy extracts dynamics-aware, memory features from this history , F M = π f ( O t − M + 1: t ) . Joint policy f or dynamic evaluation. At dynamic e valua- tion time, we fuse the slow and fast pathways through a joint policy π c ( A ′ t : t + H − 1 | o t , F M ) , where A ′ t : t + H − 1 is the action chunk after online dynamic correction, jointly predicted from the current observation o t and the features F M . In the following, Section III-B details the training of the fast dynamic aware policy π f , and Section III-C explains how its features condition the diffusion policy to yield corrected action chunks in dynamic en vironments. B. Stage 1: F ast Dynamic A ware P olicy Training This section details the Stage 1 training procedure of the Fast Dynamic-A ware Policy , as illustrated in Figure 2 (left). W e use a History Bank to store a sliding windo w of observations of size M , denoted as O t − M + 1: t . The His- tory Bank is then used in conjunction with the computed differential features for cross-attention, aiming to capture dynamic information, while utilizing frame-wise temporal attention to learn the sequential history (see Section III-B.1). Additionally , we compute a self-supervised loss using the differential features for the output of the fast dynamic aware policy , and train the model accordingly (see Section III-B.5). T o ensure that the Fast Dynamic A ware Policy training fully lev erages expert data, we employ a lightweight V ariational Autoencoder for action prediction training on pushT data. In this case, the output of the Fast Dynamic A ware Policy is jointly used with o t to predict the action and compute the loss for backpropagation (see Section III-B.6). 1) History Bank Memory Learning : W e propose a Dy- namic Feature Extractor, which is designed to capture both temporal dependencies and dynamic changes in the environ- ment. The system relies on a sliding windo w of the most recent observations, stored in the History Bank , to extract dynamic features using a combination of con volutional layers and attention mechanisms. Let O t − M + 1: t : = [ O t − M + 1 , O t − M + 2 , . . . , O t ] represent the history bank containing the most recent M observations. These observ ations are fed into the feature extractor to capture spatial and temporal dependencies. Initially , the input O t − M + 1: t is processed through a pre-trained ResNet18 backbone to extract spatial features. Let the e xtracted feature map be denoted by: X spatial = ResNet ( O t − M + 1: t ) , (1) where X spatial ∈ R M × C × H f × W f ; here M is the number of frames, and C , H f , W f denote the channel, height, and width of the feature maps, respectiv ely . 2) Diff erential F eature Computation : T o capture the dynamic changes between consecutiv e frames, we compute the differential feature ∆ X t as: ∆ X t = X t + 1 − X t , (2) where X t represents the extracted feature map for frame t . W e scale the differential by a learnable parameter α : D t = α · ( X t + 1 − X t ) , (3) where D t ∈ R ( M − 1 ) × C ′ × H f × W f represents the differential fea- tures for the sliding window . This operation allows the model to capture temporal dynamics between adjacent frames. 3) T emporal Attention : The T emporal Attention mech- anism is designed to capture dependencies across the time dimension in a sequence of frames. It enables the model to learn temporal relationships between frames, allowing it to focus on the most rele vant time steps and capture long-range temporal dependencies. Giv en frame features X spatial ∈ R M × C ′ × H f × W f , the model computes, for each time step t , the query ( Q t ) , key ( K t ′ ) , Stage 1 V AE Enco der V AE Decod er Stage 2 In fer ence T ime Dir ec tion T = t … T = t+ n ~ t+n +M … O bserv a tions … F ast D yn ami c A war e p o l i cy … T em po ra l A ttenti o n F us i o n C r o s s A ttenti o n Query Key V a l ue R es N et T = t+ H T = t+ H+n ~ t+ H+n + M Fa st D yn ami c A w are po l i cy D eno i s i ng U - N et Action Chunk ing … Action Chunk ing w ith Dy na m ic Co rr ection V AE E n c od e r V AE De c od e r … … Fa st D yn ami c A w are po l i cy D eno i s i ng U - N et Action Chunk ing V AE E n c od e r V AE De c od e r W a i ti n g fo r the dy na m i c co rr ecti o n … Fig. 2. Overview of the DCDP . Our method adopts a two-stage framework. In Stage 1 (left panel of the figure), we train the Fast Dynamic-A ware Policy and a variational autoencoder (V AE). In Stage 2 (right panel of the figure), we apply training-free, per-step action corrections using the aforementioned Fast Dynamic-A ware Policy; the corrected actions are then decoded by the V AE decoder . and value ( V t ′ ) , obtained from X spatial via learned linear projections. The attention scores are then computed by taking the dot product between the query for time step t and the key for time step t ′ , with the attention score Attn t , t ′ giv en by: Attn t , t ′ = SoftMax ( Q t · K T t ′ √ D ) , (4) where D denotes the dimensionality of the query and key vectors; the score quantifies temporal similarity between time steps t and t ′ and is scaled by 1 / √ D to stabilize gradients during training. The attention scores are then normalized using the softmax function to con vert them into a probability distribution. Finally , the attention scores are applied to the values V t ′ , producing the attended output for time step t . The output is the weighted sum of the values across all time steps, allowing the model to focus on the most important frames. The attended features for time step t are computed as: X attended , t = ∑ t ′ Attn t , t ′ · V t ′ . (5) The final attended features are then passed through a linear projection to produce the output tensor X temporal . The T emporal Attention mechanism effecti vely learns long-range temporal dependencies by focusing on rele vant time steps, making it especially po werful for modeling dynamic en vironments where the relationships between past and future frames are crucial for accurate predictions. 4) Fusion Cross-Attention : T o relate dynamic features to the observation history , we apply cross-attention to fuse the dif ferential feature D t with temporal context from the history bank X temporal . Given queries Q t from X temporal and keys/v alues K t , V t from D t , the attention output is Attn ( Q t , K t , V t ) = softmax Q t K ⊤ t √ d k V t , (6) where d k is the key dimensionality . This cross-attention aligns temporal context with dif ferential cues, enabling the model to attend to dynamic changes o ver time. Let F M denote the fused representation that summarizes both historical memory and dynamic variation. 5) Self-Supervised with Differential : In this section, we present a self-supervised learning scheme for the dynamic feature extractor that leverages frame-to-frame differentials to model temporal change, removing the need for manual labels. During training, at step M the extractor produces predicted dynamic features F M , while the history bank provides dif fer- ential targets D M − 1 (Sec. III-B.1). The model is conditioned on preceding frames, and D M − 1 serves as supervision. T o align predictions with observed changes, we minimize the KL diver gence between normalized features: L diff = T ∑ t = 1 KL softmax ( F ( t ) M ) ∥ softmax ( D ( t ) M − 1 ) . (7) This objective encourages the representation to capture tem- poral dynamics without manual annotations. 6) V ariational Autoencoder : W e utilize a modified V ari- ational A utoencoder (V AE) to predict future actions. The model consists of an encoder that processes the action sequence and a decoder that generates the predicted future actions conditioned on dynamic temporal features. This architecture allo ws the model to learn a compact latent representation that captures the essential temporal dynamics between the current and future actions. Encoder and Latent Space Representation. The en- coder network takes an action sequence A t as input, and outputs the mean ( µ ) and log-variance (log ( σ 2 ) ) of a Gaussian distribution, which parameterizes the approximate posterior distribution q ( z | A t ) over the latent variable z : q ( z | A t ) = N ( µ , σ 2 ) . (8) This distribution is used to capture the underlying factors of variation in the action sequence. The latent vector z is then sampled from this distribution using the reparameterization trick to allow for backpropagation through the stochastic sampling process: z = µ + σ · ε , (9) where ε ∼ N ( 0 , I ) is random noise, and σ = e xp 1 2 log ( σ 2 ) is the standard deviation deriv ed from the log-variance. Decoder and Action Prediction. The decoder generates the predicted future actions ˆ A t , conditioned on both the latent vector z and the dynamic features F M of the action sequence. These dynamic features F M capture the temporal dependen- cies of the en vironment, which are used as additional context for action prediction. The decoder reconstructs the action sequence using a recurr ent neural network (RNN) that takes in the latent vector and the temporal context to output the predicted action sequence: p ( A t | z , F M ) = N ( ˆ A t , σ 2 decoder ) . (10) Here, ˆ A t is the predicted action, and σ decoder is the standard deviation of the predicted distribution. 7) Loss : The training objectiv e of the V AE is a combi- nation of the reconstruction loss and the KL divergence regularization. The reconstruction loss is computed as: L recon = MSELoss ( ˆ A t , A t ) . (11) The KL diver gence is computed as: L KL = − 1 2 d ∑ i = 1 1 + log ( σ 2 i ) − µ 2 i − σ 2 i , (12) Where µ i and σ i are the mean and standard deviation of the posterior for the i -th latent dimension. The differential loss L diff is computed using the KL diver gence as mentioned abov e. Thus, the total loss function is the weighted sum of the reconstruction loss, the KL diver gence, and the differential loss: L total = L recon + λ KL L KL + λ diff L diff , (13) where λ KL and λ diff are hyperparameters controlling the relativ e importance of each term. The forward pass in volv es the action sequence A t and the temporal conditional features F M , and the V AE model outputs the reconstructed actions ˆ A t , along with the mean µ and log-variance log ( σ 2 ) from the encoder . The total loss is then backpropagated to update the model parameters. C. Stage 2: Dynamic Injection for Training-F ree Diffusion P olicy During dynamic e v aluation, we maintain a sliding win- dow of length M over the observations, O t − M + 1: t = [ o t − M + 1 , . . . , o t ] . W e then compute dynamics-aware features online using the Stage-1 Fast Dynamic-A ware extractor π f : F t = π f ( O t − M + 1: t ) ∈ R d f . (14) A frozen slo w diffusion policy produces a horizon- H open- loop action chunk conditioned on the current observation, A t : t + H − 1 ∼ π s ( · | o t ) , A t : t + H − 1 = [ a t , . . . , a t + H − 1 ] . (15) For feature injection, we first apply elementwise normal- ization using known bounds a min , a max : ˜ A t : t + H − 1 = 2 A t : t + H − 1 − a min a max − a min − 1 , (16) where all operations are elementwise and 1 denotes an all- ones tensor matching the shape of A t : t + H − 1 . W e then encode this chunk into a latent vector with the frozen V AE encoder E trained in Stage 1: z t = E ˜ A t : t + H − 1 ∈ R d z . (17) W ithin each chunk, execution proceeds in a closed loop. For each step s ∈ { 0 , . . . , H − 1 } , we refresh the history window and recompute features: F t + s = π f ( O t + s − M + 1: t + s ) . (18) W e then decode a dynamically corrected action with the frozen V AE decoder D trained in Stage 1, conditioned on a step embedding e s : ˆ a t + s = D ( z t , F t + s , e s ) . (19) Aggregating ov er steps yields the closed-loop chunk: ˆ A ′ t : t + H − 1 = [ D ( z t , F t , e 0 ) , . . . , D ( z t , F t + H − 1 , e H − 1 ) ] . (20) Equiv alently , this induces a deterministic joint closed-loop policy π c ( ˆ a t + s | o t , O t + s − M + 1: t + s ) = δ ( ˆ a t + s − D ( E ( norm ( π s ( o t ))) , π f ( O t + s − M + 1: t + s ) , e s )) (21) where norm ( · ) denotes the linear normalization above and δ ( · ) is a Dirac delta indicating a deterministic mapping. After s = H − 1, we replan by resampling a new chunk from π s with the latest observ ation (receding-horizon), while keeping all modules frozen; thus, the entire Stage-2 procedure is training-fr ee . The continual injection of F t + s provides fine- grained online corrections to the open-loop diffusion chunk, improving responsi veness and robustness in rapidly changing scenes. I V . E X P E R I M E N T In this section, we ev aluate the proposed algorithm on the PushT task in dynamic scenarios. First, under static settings, we ev aluate the effect of the method on task success rate and compare it with the standard Diffusion Policy inference strategy . Then, in dynamic scenarios, we further in vestigate the robustness and generalization of the algorithm, and conduct ablation studies to validate the effecti veness of each design module. Finally , we measure the inference latency of the algorithm, demonstrate its lightweight nature, and show that it can support high-frequency closed-loop control and real-time deployment. A. Experimental Settings For the PushT task, we employ a Dif fusion Policy trained from human demonstrations as the basic control strategy . During inference, we set the batch size to N = 50, meaning that 50 initial poses are randomly generated in the simulation en vironment and kept fixed throughout the experiments. For each initial condition, we conduct rollouts in the en vironment and ev aluate the performance of each method in terms of task success rate and inference latency . The simulation terminates either when the maximum number of steps T max = 300 is reached, or earlier if the overlap ratio σ between the object and the target position exceeds 95%. The simulation environment operates at 10 Hz. The model predicts the next 16 actions in a single inference step and ex ecutes the first 8 actions in open-loop mode. Baselines : W e consider three representativ e existing infer- ence methods as baselines for comparison: • Original Open-loop(H=8): Execute an entire action chunk at each inference step. • Original Closed-loop(H=1): Execute only the most recent action at each inference step. • T emporal Ensemble [23](H=1): At each overlapping step, we average the ne w prediction a with the previous predic- tion ˆ a to produce smoother action chunks: a t = λ a t + ( 1 − λ ) ˆ a t , with λ set to 0.5. H denotes that the Diffusion Policy model is inferred every H time steps. Perturbations: T o systematically ev aluate the robustness and generalization of the proposed method under dynamic conditions, we introduce two types of perturbations in sim- ulation: • Constant-direction Perturbations: During each ex ecution step, an offset of fixed magnitude and direction is applied to the object to emulate a sustained external force. • Random-direction Perturbations: During each execution step, an of fset with fixed magnitude and randomly varying direction is applied, where the direction is resampled every N = 50 steps. Static Co n stan t Perturb atio n s Ran d o m Perturb atio n s Fig. 3. Different types of perturbations are considered, where the random perturbation updates its direction at certain time steps. Dataset : This study employs a publicly av ailable human demonstration dataset consisting of 200 trajectories, where the blocks are free from external Perturbations. Model T raining : Our method was trained from scratch on a single NVIDIA A800 80GB GPU. For comparison, as a baseline, the Dif fusion Policy is trained with default parameters for 500 epochs to ensure conv ergence. Inference Latency Measurement : T o ensure a fair ev al- uation of inference efficienc y , we measure all inference latencies on a single NVIDIA R TX 4090 GPU 24GB under identical hardware and software configurations. B. Quantitative Analysis T able I presents a comparison of task success rates be- tween the proposed method and se veral baselines in both static scenarios and dynamic scenarios under varying Pertur- bations. T ABLE I S U CC E S S R ATE S A CR OS S T H R EE L E VE L S O F P E RTU R BATI O N I N T EN S I TY S H OW T H A T T H E P R OP O S ED D C DP M E TH O D O U T PE R F OR M S A L L BA S E L IN E M E T HO D S . Methods Static Constant Perturbations Random Perturbations Open-Loop 88.4 58.2 52.8 Close-Loop 84.6 76.1 61.6 T emporal Ensemble 81.0 65.8 57.3 DCDP 92.5 77.6 71.9 T able II shows the av erage single-step latency for each method, highlighting the real-time and computational im- prov ements of our method. T ABLE II C O MPA R IS O N O F P E R - S T EP I N FE R E N CE L A T E N CY . D C DP A D DS O N L Y 5 % OV E RH E A D I N C L O S ED - L O OP E X E C UT I O N , S U B STA N TI A L L Y L OW E R T H AN O T HE R C L O SE D - L OO P M E T HO D S . Methods OL(H=8) CL(H=1) TE(H=1) DCDP(H=8) Delay (ms) 7.05 53.60 53.74 7.39 W e observe that the original closed-loop policy outper- forms the open-loop policy in terms of task success rate under dynamic scenarios, whereas it exhibits a marked performance drop in static scenarios. W e speculate that this is because, as a diffusion model, Diffusion Policy replans the entire action sequence during each inference step, which results in discontinuity between consecuti ve actions. This may impair its ability to reproduce long-term coordination in human demonstrations, thus reducing the task success rate. In contrast, DCDP operates at a lower replanning frequency , exploiting the long-term planning ability of the dif fusion policy while integrating recent observ ations for rapid closed- loop control, resulting in notable improvements in both static and dynamic tasks. In addition, the inference latency data sho w that our lightweight model has clear advantages in real-time per- formance, with lower latency than the direct closed-loop strategy and supporting high-frequency closed-loop control … … H - ho ri zo n Per tu rb Infe ren ce M - H i s t o ry Ban k Op en - Loop(H =8) DCD P (H=8) Sing l e - h o ri zo n Si ng l e - h o ri zo n Close - Loop(H= 1) T emporal E nsem ble (H=1) O bse r v at i on D y nam i c Cor r ec t i on A ct i on H i st or y Traj ect or y O pt i m al Traj ect or y H i st or y P l ani ng P r act i cal Traj ect or y D y nam i c I nj ect i on F ut ur e P l ani ng Per turb Fig. 4. V isualization of how various inference strategies respond to perturbations. C. Ablation Study T o assess the necessity and effecti veness of each module in the proposed architecture, we conduct ablation studies to analyze their relative contributions to model performance. Specifically , we conducted ablation studies on the self- supervised dynamic feature extraction module. Ke y compo- nents were sequentially remov ed, and Stage 1 was retrained after each remov al. Training parameters and ev aluation met- rics were kept the same as in the original experiment. T ABLE III T A S K S U C CE S S R A T E S A F TE R A B L A T I N G I N D IV I D UA L M O DU L E S , D E MO N S T RATI N G T H A T E AC H M O D UL E I N D C D P I S E FF E CT I V E . T A SSD DCA SR static SR dynamic 88.40 58.20 ✓ ✓ 92.05 73.59 ✓ ✓ 92.31 73.50 ✓ ✓ 93.36 68.23 ✓ ✓ ✓ 92.50 77.60 T able III presents the results of the ablation experi- ments. T A refers to temporal attention, SSD refers to self- supervised dif f loss, DCA refers to diff cross attention, and SR static , SR dynamic , denotes success rate (%). W e selected static scenarios and constant-direction perturbations as e val- uation metrics. The results demonstrate that all proposed modules make positiv e contributions to system performance. Co n sa n t P er t u r b Co n sa n t P er t u r b Co n sa n t P er t u r b R and o m P er t u r b R and o m P er t u r b R and o m P er t u r b Pi ck and place a moving c up Pour li qu id i nto a moving cup Fig. 5. The two tasks comprise two types of perturbations: constant- direction and random-direction. These perturbations were applied exclu- siv ely to the components highlighted in the figure. D. Real W orld Application T o validate the effecti veness of the proposed algorithm in real-world scenarios, we select two representativ e manipula- tion tasks for ev aluation: • Pick-and-place of a mo ving cup, corresponding to the constant-direction perturbation scenario, which examines the algorithm’ s robustness under continuous perturbations; • Pouring liquid into a moving cup, corresponding to the random-direction perturbation scenario, which ev aluates the algorithm’ s adaptability to uncertain perturbations. W e employed the UMI [22] gripper to perform the tasks and utilized the publicly av ailable FastUMI dataset, repro- ducing its scenarios to construct a real-world ev aluation platform. In the real-world task tests, we adopted the PushT dy- namic task ev aluation design and implemented constant- and random perturbations. Constant-direction perturbations were applied in the ”picking and placing a moving cup” task, in which the cup moved along a single direction prior to grasping. Random perturbations were applied in the ”pouring liquid into a moving cup” task, in which the cup’ s movement direction was random, requiring the robotic arm to pour liquid accurately into it. The results indicate that, compared with the original inference method, DCDP exhibits significantly enhanced adaptability in dynamic environments and can handle a range of dynamic scenarios. V . C O N C L U S I O N W e propose DCDP , a training-free closed-loop action- chunk framework that injects high-frequency dynamic fea- tures into a pretrained diffusion policy for real-time correc- tion. Key elements include a self-supervised dynamic feature encoder , cross/temporal attention for temporal awareness, and an asymmetric action encoder/decoder that decodes frozen diffusion chunks with updated context. Since cor- rection happens at inference without retraining, DCDP is a lightweight, plug-and-play balance between long-horizon planning and responsi ve control. Current limits include ev al- uation on a single simulated task and small data; future work should test div erse tasks, real hardware, and adaptiv e scheduling with multi-modal dynamics. AC K N OW L E D G M E N T This work was supported by the Shanghai AI Labora- tory , the National Key Research and Dev elopment Project (2024YFC3015503), the National Natural Science Founda- tion of China (62376222), and the Natural Science Basic Research Program of Shaanxi (2025JC-TBZC-07). R E F E R E N C E S [1] C. Chi, S. Feng, Y . Du, Z. Xu, E. Cousineau, B. Burchfiel, and S. Song, “Diffusion Policy: V isuomotor Policy Learning via Action Diffusion, ” in Robotics: Science and Systems XIX . Robotics: Science and Systems Foundation, July 2023. [2] M. Reuss, M. Li, X. Jia, and R. Lioutikov , “Goal-conditioned imi- tation learning using score-based diffusion policies, ” arXiv preprint arXiv:2304.02532 , 2023. [3] Y . Liu, W . C. Shin, Y . Han, Z. Chen, H. Ravichandar , and D. Xu, “Immimic: Cross-domain imitation from human videos via mapping and interpolation, ” 2025. [4] M. Janner , Y . Du, J. B. T enenbaum, and S. Levine, “Plan- ning with diffusion for flexible behavior synthesis, ” arXiv preprint arXiv:2205.09991 , 2022. [5] T . Pearce, T . Rashid, A. Kanervisto, D. Bignell, M. Sun, R. Georgescu, S. V . Macua, S. Z. T an, I. Momennejad, K. Hofmann, et al. , “Imitating human behaviour with diffusion models, ” arXiv pr eprint arXiv:2301.10677 , 2023. [6] A. Prasad, K. Lin, J. W u, L. Zhou, and J. Bohg, “Consistency policy: Accelerated visuomotor policies via consistency distillation, ” arXiv pr eprint arXiv:2405.07503 , 2024. [7] Y . Liu, J. I. Hamid, A. Xie, Y . Lee, M. Du, and C. Finn, “Bidirectional decoding: Improving action chunking via closed-loop resampling, ” International Conference on Learning Representations (ICLR) , 2025. [8] A. George and A. B. Farimani, “One act play: Single demonstration behavior cloning with action chunking transformers, ” arXiv preprint arXiv:2309.10175 , 2023. [9] Q. V uong, S. Levine, H. R. W alke, K. Pertsch, A. Singh, R. Doshi, C. Xu, J. Luo, L. T an, D. Shah, et al. , “Open x-embodiment: Robotic learning datasets and rt-x models, ” in T owards Generalist Robots: Learning P aradigms for Scalable Skill Acquisition@ CoRL2023 , 2023. [10] A. Khazatsky , K. Pertsch, S. Nair, A. Balakrishna, S. Dasari, S. Karam- cheti, S. Nasiriany , M. K. Srirama, L. Y . Chen, K. Ellis, et al. , “Droid: A large-scale in-the-wild robot manipulation dataset, ” arXiv preprint arXiv:2403.12945 , 2024. [11] H. R. W alke, K. Black, T . Z. Zhao, Q. V uong, C. Zheng, P . Hansen- Estruch, A. W . He, V . Myers, M. J. Kim, M. Du, et al. , “Bridgedata v2: A dataset for robot learning at scale, ” in Conference on Robot Learning . PMLR, 2023, pp. 1723–1736. [12] P . Zhang, X. Gao, Y . W u, K. Liu, D. W ang, Z. W ang, B. Zhao, Y . Ding, and X. Li, “Moma-kitchen: A 100k+ benchmark for affordance- grounded last-mile navigation in mobile manipulation, ” in Proceed- ings of the IEEE/CVF International Conference on Computer V ision (ICCV) , October 2025, pp. 6315–6326. [13] F . T orabi, G. W arnell, and P . Stone, “Behavioral cloning from obser- vation, ” arXiv preprint , 2018. [14] M. J. Kim, K. Pertsch, S. Karamcheti, T . Xiao, A. Balakrishna, S. Nair, R. Rafailov , E. Foster , G. Lam, P . Sanketi, et al. , “Open- vla: An open-source vision-language-action model, ” arXiv preprint arXiv:2406.09246 , 2024. [15] S. Liu, L. W u, B. Li, H. T an, H. Chen, Z. W ang, K. Xu, H. Su, and J. Zhu, “Rdt-1b: a diffusion foundation model for bimanual manipulation, ” arXiv pr eprint arXiv:2410.07864 , 2024. [16] K. Black, N. Brown, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusai, L. Groom, K. Hausman, B. Ichter, et al. , “ π 0 : A vision- language-action flow model for general robot control, ” arXiv pr eprint arXiv .2410.24164 , 2024. [17] H. Liang, X. Chen, B. W ang, M. Chen, Y . Liu, Y . Zhang, Z. Chen, T . Y ang, Y . Chen, J. Pang, et al. , “Mm-act: Learn from multimodal parallel generation to act, ” arXiv preprint , 2025. [18] S. Y ang, H. Li, Y . Chen, B. W ang, Y . Tian, T . W ang, H. W ang, F . Zhao, Y . Liao, and J. Pang, “Instructvla: V ision-language-action instruction tuning from understanding to manipulation, ” arXiv pr eprint arXiv:2507.17520 , 2025. [19] X. Chen, Y . Chen, Y . Fu, N. Gao, J. Jia, W . Jin, H. Li, Y . Mu, J. Pang, Y . Qiao, et al. , “Internvla-m1: A spatially guided vision- language-action framew ork for generalist robot policy , ” arXiv preprint arXiv:2510.13778 , 2025. [20] P . Zhang, Y . Su, P . Wu, D. An, L. Zhang, Z. W ang, D. W ang, Y . Ding, B. Zhao, and X. Li, “Cross from left to right brain: Adaptiv e text dreamer for vision-and-language navigation, ” 2025. [21] A. Brohan, N. Brown, J. Carbajal, Y . Chebotar, J. Dabis, C. Finn, K. Gopalakrishnan, K. Hausman, A. Herzog, J. Hsu, et al. , “Rt-1: Robotics transformer for real-world control at scale, ” arXiv preprint arXiv:2212.06817 , 2022. [22] C. Chi, Z. Xu, C. P an, E. Cousineau, B. Burchfiel, S. Feng, R. T edrake, and S. Song, “Univ ersal manipulation interface: In-the-wild robot teaching without in-the-wild robots, ” arXiv preprint , 2024. [23] T . Z. Zhao, V . Kumar , S. Levine, and C. Finn, “Learning fine-grained bimanual manipulation with low-cost hardware, ” arXiv pr eprint arXiv:2304.13705 , 2023. [24] G. Swamy , S. Choudhury , D. Bagnell, and S. Wu, “Causal imitation learning under temporally correlated noise, ” in International Confer- ence on Machine Learning . PMLR, 2022, pp. 20 877–20 890. [25] H. Xue, J. Ren, W . Chen, G. Zhang, Y . Fang, G. Gu, H. Xu, and C. Lu, “Reactiv e diffusion policy: Slow-fast visual-tactile policy learning for contact-rich manipulation, ” arXiv preprint , 2025. [26] X. Y e, R. H. Y ang, J. Jin, Y . Li, and A. Rasouli, “Ra-dp: Rapid adaptiv e diffusion policy for training-free high-frequency robotics replanning, ” arXiv preprint arXiv:2503.04051 , 2025. [27] L. X. Shi, Z. Hu, T . Z. Zhao, A. Sharma, K. Pertsch, J. Luo, S. Levine, and C. Finn, “Y ell at your robot: Improving on-the-fly from language corrections, ” arXiv pr eprint arXiv:2403.12910 , 2024. [28] A. Labiosa, Z. W ang, S. Agarwal, W . Cong, G. Hemkumar, A. N. Harish, B. Hong, J. Kelle, C. Li, Y . Li, et al. , “Reinforcement learning within the classical robotics stack: A case study in robot soccer, ” in 2025 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2025, pp. 14 999–15 006. [29] Z. Shi, X. Zhang, C. Zhu, H. W ang, J. Y an, F . Y ang, and D. Xuan, “Mv-bmr: A real-time motion and vision sensing integration based agile badminton robot, ” Information Fusion , p. 103337, 2025. [30] Z. Su, B. Zhang, N. Rahmanian, Y . Gao, Q. Liao, C. Regan, K. Sreenath, and S. S. Sastry , “Hitter: A humanoid table ten- nis robot via hierarchical planning and learning, ” arXiv preprint arXiv:2508.21043 , 2025. [31] N. Hansen, X. W ang, and H. Su, “T emporal difference learning for model predictive control, ” arXiv preprint , 2022. [32] T . Salzmann, E. Kaufmann, J. Arrizabalaga, M. Pav one, D. Scara- muzza, and M. Ryll, “Real-time neural mpc: Deep learning model predictiv e control for quadrotors and agile robotic platforms, ” IEEE Robotics and Automation Letters , vol. 8, no. 4, pp. 2397–2404, 2023. [33] J. B. Rawlings, D. Q. Mayne, M. Diehl, et al. , Model predictive contr ol: theory, computation, and design . Nob Hill Publishing Madison, WI, 2020, vol. 2. [34] Y . Zhang, T . Liang, Z. Chen, Y . Ze, and H. Xu, “Catch it! learning to catch in flight with mobile dexterous hands, ” in 2025 IEEE International Conference on Robotics and Automation (ICRA) . IEEE, 2025, pp. 14 385–14 391. [35] H. Zhang, S. Christen, Z. Fan, O. Hilliges, and J. Song, “Graspxl: Generating grasping motions for div erse objects at scale, ” in Eur opean Confer ence on Computer V ision . Springer , 2024, pp. 386–403. [36] Y . Zhang, M. Xu, X. Bai, K. Chen, P . Zhang, Y . Xiang, and M. Zhang, “Instruction anchors: Dissecting the causal dynamics of modality arbitration, ” arXiv pr eprint arXiv:2602.03677 , 2026.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment