A Novel Evolutionary Method for Automated Skull-Face Overlay in Computer-Aided Craniofacial Superimposition

Craniofacial Superimposition is a forensic technique for identifying skeletal remains by comparing a post-mortem skull with ante-mortem facial photographs. A critical step in this process is Skull-Face Overlay (SFO). This stage involves aligning a 3D…

Authors: Práxedes Martínez-Moreno, Andrea Valsecchi, Pablo Mesejo

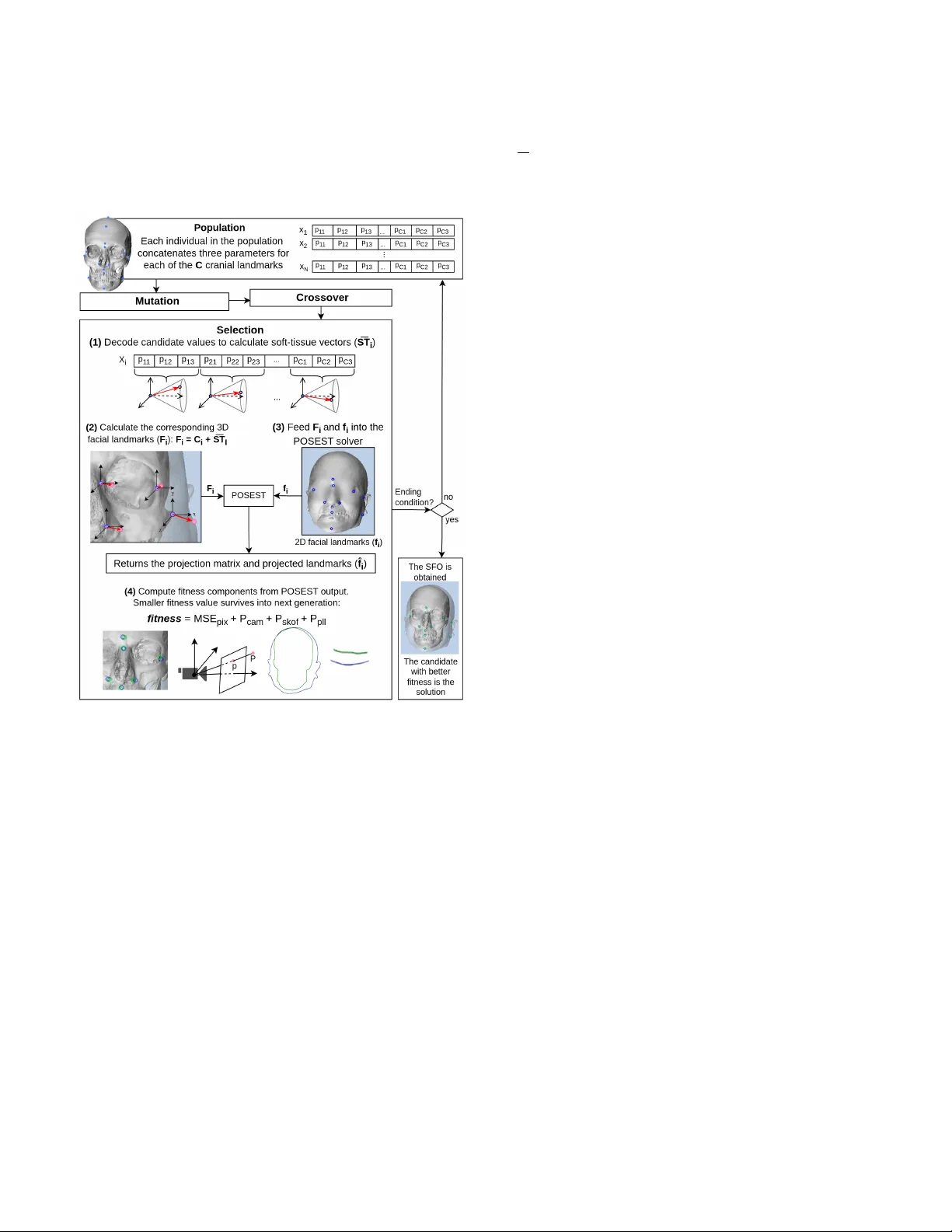

Práxedes Martínez-Moreno*, Andrea Valsecchi*, Pablo Mesejo, Pilar Navarro-Ramírez, Valentino Lugli, and Sergio Damas

This work has been submitted to the IEEE for possible publication. Copyright may be transferred without notice, after which this version may no longer be accessible.

Abstract: Craniofacial Superimposition is a forensic technique for identifying skeletal remains by comparing a post-mortem skull with ante-mortem facial photographs. A critical step in this process is Skull-Face Overlay (SFO). This stage involves aligning a 3D skull model with a 2D facial image, typically guided by the correspondence between cranial and facial landmarks. However, its accuracy is undermined by individual variability in soft-tissue thickness, introducing significant uncertainty into the overlay. This paper introduces Lilium, an automated evolutionary method to enhance the accuracy and robustness of SFO. Lilium explicitly models soft-tissue variability using a 3D cone-based representation whose parameters are optimized via a Differential Evolution algorithm. The method enforces anatomical, morphological, and photographic plausibility through a combination of constraints: landmark matching, camera parameter consistency, head pose alignment, skull containment within facial boundaries, and region parallelism. This emulation of the usual forensic practitioners' approach leads Lilium to outperform the state-of-the-art method in terms of both accuracy and robustness.

Index Terms: Forensic human identification, craniofacial superimposition, skull-face overlay, 3D pose estimation, 3D-2D image registration, evolutionary optimization

I. INTRODUCTION

Craniofacial Superimposition (CFS) is a forensic technique used to aid identification when dealing with skeletal remains [1].
CFS has been applied to support the identification in war, crime, and nature casualties, among others [2]. In such scenarios, the application of well-known identification techniques (such as fingerprints or DNA) is often unfeasible due to the lack of either ante-mortem or post-mortem crucial information [3]. The technique assists forensic practitioners by comparing one or more ante-mortem facial photographs with a recovered post-mortem skull. In order to achieve this, the projection of the latter onto the available facial images is performed.

Práxedes Martínez-Moreno and Pablo Mesejo are with the Department of Computer Science and Artificial Intelligence, University of Granada, Spain and the Andalusian Research Institute in Data Science and Computational Intelligence, University of Granada, Granada, Spain (e-mail: praxedesmm@ugr.es; pmesejo@ugr.es). Andrea Valsecchi, Pilar Navarro-Ramírez, and Valentino Lugli are with Panacea Cooperative Research S. Coop., Ponferrada, Spain (e-mail: valsecchi.andrea@gmail.com; pilarnavar98@gmail.com; valentinolugli@gmail.com). Sergio Damas is with the Department of Software Engineering, University of Granada, Spain and the Andalusian Research Institute in Data Science and Computational Intelligence, University of Granada, Granada, Spain (e-mail: sdamas@ugr.es).
* These authors contributed equally to this work.

Three stages are distinguished in the CFS process [4], [5] (represented in Figure 1):

1) Acquisition and processing of the post-mortem skull and ante-mortem facial photograph(s), together with the location of anatomical landmarks. These landmarks fall into two categories: craniometric landmarks (hereafter “cranial landmarks”), which are anatomically defined points on the skull; and cephalometric landmarks (hereafter “facial landmarks”), which are corresponding points located on the face.
   The interested reader is referred to [6] for the location and name of the cranial and facial landmarks typically used in CFS. The correspondence between a cranial and a facial landmark is not exact due to the presence of soft tissue, which surrounds and protects the body structures and organs.
2) Skull-Face Overlay (SFO), which aims to achieve the most accurate alignment of a 3D skull model with a facial photograph, often by matching corresponding cranial and facial landmarks. When multiple photographs are available, separate SFO processes are conducted.
3) Decision-making, which is performed by the practitioner assessing the degree of anatomical and morphological correspondence based on the SFO to determine whether the skull and facial photograph(s) belong to the same individual.

Fig. 1. Overview of the CFS process comprising three main stages.

Despite its extensive applicability and significant value, the CFS technique remains intricate and challenging to perform [6]. Historically, one of the main drawbacks of this technique has been its reliance on non-standardized methods [7]–[9]. The MEPROCS European project¹ was developed to overcome this challenge, with its results and best practice guidelines presented in [10]. Despite these efforts, the three stages of CFS are still performed manually in most forensic laboratories. The SFO stage, in particular, remains highly complex and time-consuming, often requiring hours of trial and error to achieve an optimal alignment between the skull and facial photograph [4], [8].

Our work specifically contributes to the second stage of the CFS technique: the SFO process. This task can be framed as a 3D-2D Image Registration (IR) problem, where the goal is to align two different objects: a 3D skull model with a 2D facial photograph [11]. IR aims to identify the transformation (e.g., rotation and translation, among others) that superimposes two or more images captured under distinct conditions [12].
In the CFS context, IR is a challenging optimization problem. Evolutionary Algorithms (EAs) have emerged as a promising solution due to their global optimization capabilities, enabling robust searches even in complex and ill-defined problems [11], [13]–[15]. The SFO process thus involves determining the ideal skull pose (its position and orientation in three-dimensional space) and camera settings to ensure that the projected cranial landmarks align with their facial counterparts, accounting for the soft tissue between every pair of landmarks.

SFO also faces difficulties due to subjectivity and incomplete information. Accurately pinpointing certain landmarks can be highly subjective [16], [17]. Several factors further complicate this process, such as variations in the mandible articulation, the pose of the subject in the photo, occlusions from hair, beards, glasses, or clothing, and overall image quality. Regarding the skull, structural damage or missing parts can hinder the accurate location of landmarks. Furthermore, the specific thickness of the soft tissue separating homologous cranial and facial landmarks for an individual remains unknown. Although general estimates based on population studies are available [18], and some studies have attempted to predict soft-tissue thickness using skull morphology and biological profile [19], significant uncertainties continue to exist [20].

The current state-of-the-art method in automated SFO, namely POSEST-SFO, was introduced in [20]. It is recognized for its high performance and accuracy. However, unlike earlier approaches [11], this method does not directly model important sources of uncertainty, such as the aforementioned estimation of soft-tissue thickness. Instead, it requires (as input) 3D vectors connecting cranial and facial landmarks for the individual under study. This is unrealistic in actual casework. Moreover, only one such vectorial study exists [21].
In this paper, we introduce Lilium, an automated evolutionary method [22] for the SFO stage of CFS. Lilium explicitly handles uncertainty regarding soft tissue to improve the alignment of cranial and facial landmarks. The method considers for the first time the anatomical and morphological correspondence between the skull and face, as well as the camera settings and head pose, to ensure that the overlays are plausible. Bilateral landmarks are optimized jointly to enforce consistency and prevent asymmetrical distortions. To quantitatively validate Lilium, we simulate controlled SFO cases providing exact ground-truth data, enabling rigorous comparisons. These innovations allow for robust SFOs across various scenarios with increasing levels of difficulty. In essence, our contribution makes the automatic overlapping process more analogous to the methodology employed by a forensic anthropologist, and allows us to yield both state-of-the-art and more explainable results in this challenging problem.

¹ https://cordis.europa.eu/project/id/285624/es

II. OUR PROPOSAL: LILIUM

A. From Camera Calibration and 3D Pose Estimation to SFO

Registering a 3D skull model with a 2D facial photograph involves determining the relative position and orientation between the head and the camera. This task hinges on both the pose of the camera and its intrinsic parameters (e.g., focal length, principal point, and lens distortion). “Camera calibration” refers to the process of identifying these parameters, typically achieved by capturing images of an object with known dimensions or multiple views of an unknown object. The “Perspective-n-Point” (PnP) problem, instead, entails computing the pose of the camera based on a set of n known 3D points and their corresponding projections onto the image [23].
In many cases, image formation is approximated by the pinhole model, reducing complexity to just the focal length and thereby merging calibration and pose estimation into what is known as the PnP+f problem, where f stands for focal length [20]. Existing methods, such as RCGA [8] and RCGA-C [11], leverage EAs to tackle this challenge through iterative and stochastic optimization. In contrast, an algebraic approach was introduced that finds an optimal solution by solving polynomial equations for n ≥ 4 [24]. These equations relate the distances between the n points before and after their projection. This technique was later integrated into a RANSAC framework [25] with an additional local optimization step, resulting in the POSEST algorithm [26].

Extending these developments, POSEST-SFO resolves the SFO problem taking into account the presence of the soft tissue, representing the state-of-the-art automatic SFO method. However, it does not model the uncertainty related to soft tissue. Rather, the vectors indicating its direction at each landmark are provided as input, and their lengths are fixed at the average soft-tissue depths reported in [18]. Once this adjustment is made, POSEST is applied for the PnP+f computation [20].

B. Soft-Tissue Modeling with 3D Cones

In the SFO process, RCGA-C models soft-tissue uncertainty using fuzzy sets shaped like a 3D cone. Our method, Lilium, adopts a cone-based representation that builds on the core idea introduced in RCGA-C [11], but simplifies it significantly. Instead of relying on fuzzy membership functions to define soft-tissue uncertainty, we use a geometric 3D cone whose key elements are defined as follows:

• The cone vertex is positioned at the 3D cranial landmark.
• The axis of the cone is approximated by the expected direction of soft-tissue growth at the cranial landmark. This rough direction is derived from anatomical or empirical data gathered by forensic practitioners [18], [21].
• The height of the cone corresponds to the landmark's maximum soft-tissue depth, derived from a statistical population study we conducted for this work. To ensure a broader sample, this study involved our core dataset (Section III-A) supplemented by data from six additional CBCT scans².
• The volume of the cone represents the spatial region where the corresponding 3D facial landmark is expected to be located.

Fig. 2. Cone-based soft-tissue modeling approach for 3D facial landmark estimation. The volume defines an anatomically plausible conical search region with a 40° angular aperture, originating from a cranial landmark (left). The soft-tissue depth ($\|ST_i\|$) is calculated as a linear interpolation between population-based minimum ($minP_i$) and maximum ($maxP_i$) values using the parameter $p_1$ (second from left). The angular aperture ($\alpha$) of the cone is scaled by $p_2$, and the rotation angle ($\theta$) about the cranial vector is controlled by $p_3$, which allows rotation across the entire 360° range (third and fourth from left, respectively). Together, these parameters define a constrained 3D region where the facial landmark is likely to be found.

In our approach, the 3D facial landmarks are sampled within the cones using three parameters that define a soft-tissue vector ($ST_i$). This vector represents the displacement between a 3D cranial landmark ($C_i$) and the corresponding 3D facial landmark ($F_i$), expressed as $ST_i = F_i - C_i$. Figure 2 illustrates the overall sampling process. The parameters used to define a soft-tissue vector are:

• $p_1$: Adjusts the length of the vector originating from the cranial landmark, determining the soft-tissue depth.
• $p_2$: Controls the angular aperture from the axis of the cone, capturing natural variation in the direction of soft-tissue growth. In our study, this angle ranges from 0° to 40°.
  This choice is supported by a preliminary analysis, which indicated that deviations within this range yield anatomically consistent landmark placements. Moreover, this aligns with prior work such as RCGA-C-45 [11], which demonstrated superior performance.
• $p_3$: Modifies the rotation angle about the axis of the cone, introducing circumferential variation in the soft-tissue vector. It can take any value in the range [0°, 360°].

These three parameters take normalized values within [0, 1], which are scaled to the physical ranges given above. The proposed representation thus provides a biologically plausible region for each 3D facial landmark, reduces model complexity, and offers a more transparent and interpretable alternative.

² These six subjects were excluded from our experiments because they lacked the complete forehead region, which is essential for our proposal.

C. Evolutionary Optimization of Soft-Tissue Modeling

In order to solve the soft-tissue modeling problem within the anatomically constrained 3D cone space, we employ the Differential Evolution (DE) algorithm, which is a population-based, stochastic optimizer well suited for non-linear, non-convex search spaces with mixed continuous parameters. Specifically, we use the rand/1/exp variant, as its inherent tunable balance between exploration (through mutation) and exploitation (through crossover) is crucial for navigating the intricate landscape of soft-tissue deformation [27]. While RCGA-C optimizes twelve parameters of the 3D-to-2D geometric transformation that projects the skull onto the photograph, Lilium optimizes three parameters per landmark ($p_1$, $p_2$, and $p_3$) that determine the estimated 3D location of each facial landmark. Then, POSEST is used to compute the pose of the skull based on these estimated 3D landmarks and those from the facial image. The overall DE workflow is illustrated in Figure 3.
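To make the cone parameterization of Section II-B concrete, the following minimal sketch decodes one triple ($p_1$, $p_2$, $p_3$) into a soft-tissue vector and a 3D facial landmark. It is an illustration under stated assumptions, not the authors' implementation: the function name `decode_soft_tissue`, its argument names, and the choice of the reference direction perpendicular to the cone axis (from which the rotation angle is measured) are hypothetical, since the text does not specify them. The depth interpolation follows the description in the Fig. 2 caption.

```python
import numpy as np

def decode_soft_tissue(cranial, axis, min_depth, max_depth, p1, p2, p3,
                       max_aperture_deg=40.0):
    """Map normalized cone parameters in [0, 1] to a 3D facial landmark.

    Hypothetical helper: names and the perpendicular reference frame
    are assumptions made for illustration only.
    """
    c = np.asarray(cranial, dtype=float)
    a = np.asarray(axis, dtype=float)
    a = a / np.linalg.norm(a)  # unit cone axis

    # Build an arbitrary orthonormal pair (u, v) perpendicular to the axis.
    helper = np.array([1.0, 0.0, 0.0]) if abs(a[0]) < 0.9 else np.array([0.0, 1.0, 0.0])
    u = np.cross(a, helper)
    u /= np.linalg.norm(u)
    v = np.cross(a, u)

    # p1: linear interpolation between population-based min and max depth.
    depth = min_depth + p1 * (max_depth - min_depth)
    # p2: deviation from the axis, scaled to [0, 40] degrees.
    alpha = np.deg2rad(p2 * max_aperture_deg)
    # p3: rotation about the axis, scaled to [0, 360] degrees.
    theta = np.deg2rad(p3 * 360.0)

    direction = np.cos(alpha) * a + np.sin(alpha) * (np.cos(theta) * u + np.sin(theta) * v)
    soft_tissue = depth * direction      # ST_i
    return c + soft_tissue, soft_tissue  # F_i = C_i + ST_i
```

With $p_2 = 0$ the decoded landmark lies exactly on the cone axis, and with $p_2 = 1$ the soft-tissue vector forms a 40° angle with it; in both cases the vector length equals the interpolated depth.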
The genotype for each individual in the DE population is defined as a vector $x$ that concatenates the three cone parameters for each of the $C$ cranial landmarks. In the case of paired bilateral landmarks, right and left counterparts tend to exhibit a strong correlation in thickness [28]. To model this anatomical correspondence, symmetry is encouraged through a hybrid strategy. For each bilateral pair, the three cone parameters of one landmark are generated via the usual sampling process, while those of its symmetrical counterpart are computed as a weighted combination: 90% drawn from the sampled values of the first landmark and 10% drawn from an independent sample. This approach preserves the expected correlation between bilateral landmarks, while allowing for sufficient variability to reproduce realistic anatomical differences. Note that the global transformation of RCGA-C cannot capture this level of detail.

Therefore, each candidate solution in the DE population takes the form $x = (p_{11}, p_{12}, p_{13}, \ldots, p_{C1}, p_{C2}, p_{C3})$, where $p_{i1}$, $p_{i2}$, and $p_{i3}$ modify the depth, angular aperture, and rotation angle of the $i$-th cone, respectively. At the beginning, this population is initialized with random values drawn from the range [0, 1]. In each generation, every member of the population gives rise to a trial vector through a two-step process: first, three distinct individuals are chosen at random and their differences are linearly combined to form a mutant vector; then, the exponential crossover process combines the mutant with the original individual at a given probability. A composite fitness score is then evaluated for every trial alongside its parent, and the vector with better fitness survives into the next generation.

Fig. 3. This figure illustrates the Lilium pipeline. The process begins with the random initialization of a solution population, which is then iteratively refined by the DE algorithm through mutation, crossover, and selection. A key element in this refinement is Lilium's composite fitness function (see Section II-C). This iterative process continues until the defined stopping criterion is met, at which point the vector with the best fitness score represents the optimal SFO solution.

In order to assess the quality of a DE individual $x$, we first follow this pipeline (also illustrated in Figure 3): for each cranial landmark $C_i$, we decode its soft-tissue vector $ST_i$ from its parameters ($p_{i1}$, $p_{i2}$, and $p_{i3}$) inside the 3D cone. Subsequently, these soft-tissue vectors are used to generate 3D facial landmarks by computing $F_i = C_i + ST_i$ for all $i$. Finally, for pose estimation, the 3D-2D correspondences $(F_i, f_i)$ are fed into the POSEST solver, where $f_i$ is the matching 2D landmark previously pinpointed in the facial image. POSEST thus returns the projection matrix $P$ (including camera intrinsic and extrinsic parameters) and the projected points $\hat{f}_i = P(F_i)$.

The quality of a candidate is thus defined by combining: (1) the accuracy of the matching between every projected landmark $\hat{f}_i$ and its corresponding facial landmark in the photograph $f_i$, and (2) anatomical, morphological, and photographic plausibility penalties. Lower fitness indicates a better SFO. The fitness of a candidate for a specific facial photograph is calculated as

$$\mathit{fitness} = \mathrm{MSE}_{pix} + P_{cam} + P_{skof} + P_{pll},$$

where:

• $\mathrm{MSE}_{pix}$ is the Mean Squared Error (MSE) in pixels between the projected 3D facial landmarks and their corresponding 2D points in the photograph: $\mathrm{MSE}_{pix} = \frac{1}{N} \sum_{i} \|\hat{f}_i - f_i\|^2$.
• $P_{cam}$ is a penalty related to the camera intrinsic and extrinsic parameters, as well as the pose difference relative to the one shown in the photograph ($\Delta\beta$). This term is outlined in Section II-D.
• $P_{skof}$ is a penalty applied when the skull projection extends beyond the boundaries of the face (detailed in Section II-E). The name “skof” is a shorthand for skull-outside-face.
• $P_{pll}$ is a term that quantifies the lack of parallelism between the skull and facial chin-jaw or forehead regions (explained in Section II-F).

The cycle of mutation, crossover, and selection is repeated across the population until the stopping criterion is met, ensuring exploration while preserving high-quality solutions. The best individual in terms of fitness yields the optimized soft-tissue parameters, which lead to the final SFO. Note that the goal is to achieve SFOs in which the penalty terms are zero or close to zero, indicating no violations of anatomical, morphological, or geometric plausibility. Therefore, while fitness serves as a guide during optimization, its actual value is secondary to ensuring that all constraints are satisfied.

The stopping criterion was determined through a preliminary empirical analysis of convergence behavior. For all compared methods (see Section III-B), the median relative improvement in best-so-far fitness rapidly decreased during early evolution and plateaued around 500 generations, with only marginal gains thereafter. Approximately 500 seconds captured nearly all meaningful improvements while avoiding unnecessary computational cost. To ensure sufficient exploration without favoring faster methods, a maximum of 750 generations was also imposed. Execution was therefore terminated upon reaching either 500 seconds or 750 generations.

D. Camera Parameters Criterion

In order to guarantee that the estimated projection fulfills realistic photographic constraints, we introduce a camera-parameter penalty term, $P_{cam}$. This term accounts for focal length ($f_x$), subject-to-camera distance (SCD), and the angular deviation between the inferred and reference head poses ($\Delta\beta$).
The values of $f_x$, SCD, and pose angles are obtained through a dual estimation strategy. On the one hand, independent estimates (referred to as “a priori information”) are derived directly from the input image using machine learning techniques. For instance, SCD can be estimated using methods such as the CNN-based approach introduced by Bermejo et al. [29]. Inference of head pose can be facilitated by employing methods similar to those outlined by Chun et al. [30]. The focal length can be obtained either from the EXIF metadata of the image or via monocular camera calibration, such as the deep learning approach proposed by Workman et al. [31]. Each estimated quantity is associated with an admissible interval that reflects its expected range of variation. These intervals are denoted as $f_x \in [ap_{f_x,\min}, ap_{f_x,\max}]$ and $\mathrm{SCD} \in [ap_{\mathrm{SCD},\min}, ap_{\mathrm{SCD},\max}]$, while pose consistency is constrained by a maximum angular deviation $\beta_{tol}$.

On the other hand, the same parameters are also extracted from the output of the POSEST solver. These values are evaluated against the defined admissible intervals. Any parameter deviating beyond its expected range is penalized within the fitness function. The penalty term is defined as

$$P_{cam} = \mathbb{1}_{\{f_x = 0\}}\, C_\infty + \max(0,\, ap_{f_x,\min} - f_x)^2 + \max(0,\, f_x - ap_{f_x,\max})^2 + \max(0,\, ap_{\mathrm{SCD},\min} - \mathrm{SCD})^2 + \max(0,\, \mathrm{SCD} - ap_{\mathrm{SCD},\max})^2 + [\max(0,\, \Delta\beta - \beta_{tol})]^2,$$

where the term $\mathbb{1}_{\{f_x = 0\}}\, C_\infty$ introduces a large penalty if the estimated $f_x$ collapses to zero, indicating an invalid projection. The squared penalty terms ensure that the penalty for leaving the permissible intervals for $f_x$ and SCD grows quadratically with the size of the deviation, and is zero inside the intervals. Similarly, if the angular difference $\Delta\beta$ between the optimized and image-inferred pose exceeds the tolerance $\beta_{tol}$, the penalty increases quadratically.
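As an illustration, the camera penalty defined above can be written as a small function. This is a sketch under stated assumptions rather than the authors' code: the function and argument names are hypothetical, and the value used for the large constant $C_\infty$ (`c_inf`) is arbitrary, since the text does not report it.

```python
def camera_penalty(fx, scd, delta_beta, fx_range, scd_range, beta_tol, c_inf=1e12):
    """Sketch of P_cam: zero inside the admissible intervals, quadratic outside.

    fx_range and scd_range are (min, max) tuples of the a priori intervals.
    c_inf stands in for C_inf; its value here is an assumption.
    """
    def below(value, lower):
        # Squared shortfall when the value is under the lower bound.
        return max(0.0, lower - value) ** 2

    def above(value, upper):
        # Squared excess when the value is over the upper bound.
        return max(0.0, value - upper) ** 2

    penalty = c_inf if fx == 0 else 0.0  # degenerate projection
    penalty += below(fx, fx_range[0]) + above(fx, fx_range[1])
    penalty += below(scd, scd_range[0]) + above(scd, scd_range[1])
    penalty += max(0.0, delta_beta - beta_tol) ** 2
    return penalty
```

For example, with hypothetical intervals `fx_range=(950, 1050)` and `scd_range=(1.8, 2.2)` and a tolerance of 10°, a solution with `fx=1060`, `scd=2.0`, and `delta_beta=12` exceeds the focal bound by 10 and the pose tolerance by 2, so the penalty is 10² + 2² = 104.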
In practice, if POSEST does not find a solution or $f_x$ exceeds a predefined realistic limit, a significantly large penalty is applied. This realistic limit is based on real-world camera lens capabilities and is set to 10,000 in our study. Finally, by incorporating $P_{cam}$ into the overall fitness function, we ensure that the final alignment is consistent with physically feasible camera and head-pose parameters.

E. Skull-Outside-Face Criterion

In order to promote anatomically plausible alignments, we introduce the $P_{skof}$ term, which penalizes configurations in which the projected skull contour extends beyond the visible facial boundary in the image. The computation proceeds as follows: first, the full facial contour is extracted from the input image, and the skull is projected onto the image plane using the projection matrix returned by POSEST. The projected skull region is then rasterized to produce a binary mask. The penalty is based on the number of pixels in the skull mask that fall outside the facial mask. Formally, letting $\mathcal{S}$ denote the set of pixels occupied by the projected skull and $\mathcal{F}$ the facial region, the penalty is given by $P_{skof} = 100 \times |\mathcal{S} \setminus \mathcal{F}|$, where $|\cdot|$ denotes the set cardinality, i.e., the number of pixels. The scaling factor of 100 emphasizes the impact of anatomically implausible configurations and ensures that they are strongly penalized in the optimization process. By incorporating $P_{skof}$ into the overall fitness function, the method steers the optimization towards alignments in which the skull remains spatially enclosed by the facial region.

F. Parallelism Criterion: Chin-Jaw and Forehead Curves

Finally, the $P_{pll}$ term aims to enforce morphological consistency by evaluating the alignment between the projected cranial region and the corresponding facial region in the image.
In our proposal, the parallelism metric is applied according to the facial pose in the image: for frontal poses, the chin and jaw regions are analyzed, while for lateral poses the forehead region is examined. For that purpose, we first extract the relevant contour curves via a three-stage pipeline:

1) Mesh Segmentation. The region of interest is isolated. For the forehead region, we rely on two anatomical landmarks: glabella and metopion [32]. We use metopion as a reference to define and refine the forehead curve due to its standardized anatomical basis, despite some location imprecision. To extract the region, we place two cutting planes (both parallel to the Frankfurt plane [32]) so that one plane passes through glabella and the other through metopion. Everything between these planes is automatically cropped as the forehead mesh. For the chin-jaw region, a similar approach is applied using anatomical references. Segmentation is performed by placing one horizontal cutting plane through the infradentale and two vertical planes through the mental foramina for skull meshes, or through the cheilion landmarks for facial meshes (see the mesh segmentation panel in Figure 4).
2) Curve Detection. The boundary of the region is extracted from the segmented mesh by projecting and rendering it, and then applying standard image-processing techniques to isolate the contour. In the case of the forehead, the raw curve is further constrained by discarding all points outside the vertical span defined by the y-coordinates of the glabella and metopion landmarks.
3) Curve Refinement. The detected contour is then subjected to a cleaning process, whereby isolated pixels are removed, and the remaining ones are sorted. Any disconnected fragments are joined by straight segments to yield a single continuous path.
   Finally, to make skull and face curves directly comparable, each is trimmed at the point where the averaged normal (computed over the terminal 20% of its length) intersects its counterpart (see the curve refinement panel in Figure 4).

Fig. 4. Contour extraction for the parallelism term $P_{pll}$. The regions of interest are isolated from the skull and the facial meshes using anatomically defined cutting planes (top). The boundary of each segmented region is extracted, cleaned, ordered, and merged into a single continuous curve. The curves are then trimmed using averaged endpoint normals to ensure consistent and comparable extents between skull and face (bottom).

Using the refined curves, the parallelism metric is computed. First, for each pixel on the cranial curve, the closest pixel on the facial curve is found, and vice versa for any unpaired facial pixels. Then, the distances between the paired points are recorded. Let $\{(s_i, p_i, d_i)\}_{i=1}^{N}$ be the list of matched skull-curve points $s_i$ and face-curve points $p_i$, with Euclidean distance $d_i = \|s_i - p_i\|$. The parallelism metric is thus computed as

$$P_{pll} = \Delta d + P_{conv} + P_{int},$$

where:

• $\Delta d$ is the base parallelism metric, which represents the spread of the distances: $\Delta d = \max_i d_i - \min_i d_i$.
• $P_{conv}$ is a convergence penalty applied when the distances between the two curves at their endpoints deviate significantly from the overall mean. Let $\bar{d} = \frac{1}{N} \sum_{i=1}^{N} d_i$ be the mean of all pairwise distances. Denote by $d_1$ and $d_N$ the distances between the first matched pair $(s_1, p_1)$ and the last matched pair $(s_N, p_N)$, respectively, and define $\delta_1 = d_1 - \bar{d}$ and $\delta_N = d_N - \bar{d}$. If $\delta_e > 0.25\,\bar{d}$ for either $e \in \{1, N\}$, a penalty is incurred according to

$$P_{conv} = \begin{cases} 2(\delta_1 + \delta_N) & \text{if both ends converge,} \\ 4\,\delta_e & \text{if only one end converges,} \\ 0 & \text{otherwise.} \end{cases}$$

• $P_{int}$ is an intersection penalty that quantifies any contour-crossing between skull and face.
  After rasterizing both curves, the skull pixels lying on the wrong side of the face boundary are identified. Each violation contributes a fixed penalty of 1,000 to the total score. In frontal facial poses, a violation occurs whenever a paired point satisfies $s_i.y \geq p_i.y$, meaning the skull jaw contour lies below the facial chin contour. In lateral poses, the condition depends on the viewing direction:

$$\begin{cases} s_i.x \leq p_i.x & \text{if the subject is looking left,} \\ s_i.x \geq p_i.x & \text{if the subject is looking right.} \end{cases}$$

  The total directional penalty is proportional to the number of such violations and is expressed as $P_{int} = 1{,}000 \sum_{i=1}^{N} \mathrm{penalized}(s_i, p_i)$, where

$$\mathrm{penalized}(s_i, p_i) = \begin{cases} 1 & \text{if } (s_i, p_i) \text{ violates the condition,} \\ 0 & \text{otherwise.} \end{cases}$$

A low $P_{pll}$ thus indicates anatomical alignment between skull and face in the region of interest.

III. EXPERIMENTAL STUDY

A. Dataset

Unlike many established forensic methods, the CFS approach does not rely on ground-truth data, that is, there is no infallible procedure that can yield a perfect, indisputable SFO [10]. Moreover, real forensic data are often incomplete and scarce, limiting their availability for research purposes. To overcome these challenges, controlled environments can be utilized to gather data and create synthetic identification scenarios. Building on the framework introduced in [20], we replace real facial photographs with renderings generated from 3D facial models. The process is illustrated in Figure 5.

Our objective is to produce fully specified, error-controlled identification cases in which the corresponding ground-truth solution is known exactly.
For each case, we begin by extracting from the CT scan: the 3D skull mesh; the coordinates of cranial landmarks; and the associated soft-tissue vectors, which together define the 3D facial landmark positions (see Section II-B). Then, rather than acquiring a conventional photograph and manually annotating 2D facial landmarks ($f$), we render a synthetic image from a 3D facial model. In this model, the 3D facial landmarks are already precisely defined. By applying a known projection $P$ (determined by the position, orientation, and intrinsic parameters of the camera) we ensure that $f_i = P(C_i + ST_i) = P(F_i)$, thereby removing all subjectivity and 2D landmark-location error. This rendering approach permits unlimited variation in subject pose and camera parameters, enabling the creation of large, diverse datasets for comprehensive algorithm testing. To emulate landmark-location variability, we introduce small, random perturbations $E_i$ to each ideal 2D landmark: $f'_i = f_i + E_i$, which mimics the typical inaccuracies of manual annotation [16]. Finally, as part of this data generation process, the facial chin-jaw and forehead regions are segmented and their contour curves are automatically detected and incorporated into the dataset. The methodology is equivalent to that delineated in Section II-F.

Our study involves a total of 17 CT scans (10 male and 7 female subjects) obtained from the New Mexico Decedent Image Database (NMDID) [33]. The ages of the subjects ranged from 20 to 88 years, with an average age of 42 years. For each subject, we generated 30 frontal and 30 lateral SFO images, resulting in a total of 17,340 superimpositions (17 × 60 = 1,020 images, each overlaid with all 17 skulls). This dataset significantly surpasses those used in previous experimental studies on automatic SFO [8], [16], [20], [34], [35]. Key parameters for each generated image, including the pose of the camera, focal length, and image size, were randomly selected to reflect a diverse range of realistic scenarios.
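The generation of ground-truth and perturbed 2D landmarks described above, $f_i = P(C_i + ST_i)$ and $f'_i = f_i + E_i$, can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the function names are hypothetical, $P$ is assumed to be a 3×4 pinhole projection matrix, and the perturbations $E_i$ are assumed to be drawn uniformly and independently per axis, a distribution the text does not specify.

```python
import numpy as np

def project(P, points_3d):
    """Apply a 3x4 projection matrix to Nx3 points and return Nx2 pixels."""
    pts = np.asarray(points_3d, dtype=float)
    homogeneous = np.hstack([pts, np.ones((len(pts), 1))])
    projected = homogeneous @ P.T
    return projected[:, :2] / projected[:, 2:3]  # perspective division

def synthetic_landmarks(P, cranial, soft_tissue, max_noise_px=0.0, rng=None):
    """Return (ideal, perturbed) 2D facial landmarks for one rendered image.

    Hypothetical helper: uniform per-axis noise is an assumption.
    """
    rng = np.random.default_rng() if rng is None else rng
    facial_3d = np.asarray(cranial, dtype=float) + np.asarray(soft_tissue, dtype=float)
    ideal = project(P, facial_3d)                                # f_i = P(F_i)
    noise = rng.uniform(-max_noise_px, max_noise_px, size=ideal.shape)  # E_i
    return ideal, ideal + noise                                  # f'_i = f_i + E_i
```

Passing a seeded generator, e.g. `rng=np.random.default_rng(0)`, makes the perturbed landmarks reproducible across runs, which is convenient when the same synthetic case must be presented to several methods.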
The choice of landmarks varied across subjects, as some 3D skull models lacked sufficient detail in specific areas. Landmarks that were not visible due to the pose of the subject were excluded from the SFO process. The cranial landmarks involved were: left/right (L/R) ectoconchion, L/R gonion, prosthion, glabella, bregma, metopion, L/R dacryon, L/R zygion, L/R alare, L/R frontotemporale, nasion, pogonion, gnathion, and subspinale. The facial ones were: L/R exocanthion, L/R gonion, labiale superius, L/R endocanthion, L/R zygion, L/R alare, L/R frontotemporale, nasion, pogonion, gnathion, subnasale, bregma, glabella, and metopion.

Fig. 5. Real facial photographs are replaced by synthetic renderings generated from 3D facial models, where landmarks are precisely defined. These 3D landmarks are projected into 2D using known camera parameters, eliminating annotation errors. This visualization is based on a synthetic face model used solely for illustrative purposes. The underlying 3D model is “Female head sculpt” by riceart (https://skfb.ly/I6rZ), licensed under Creative Commons Attribution 4.0 (https://creativecommons.org/licenses/by/4.0/).

B. Experimental Settings

In order to systematically assess the robustness and effectiveness of our approach, we compared it against the state-of-the-art POSEST-SFO algorithm across three experiments of increasing complexity (A–C). The base configuration of our method, Lilium, relies on two fundamental components: the $MSE_{pix}$ and $P_{cam}$ terms. From this baseline, we progressively added the $P_{skof}$ and $P_{pll}$ constraints, both separately and in combination, to evaluate the specific contribution of each proposed criterion. The experiments are defined by the complexity of the scenario:

• Exp. A (Ideal Scenario): No noise was applied to either the 2D facial landmarks or the soft-tissue vectors.
• Exp. B (Landmark Noise): Random noise of up to ±5 pixels was added to 2D landmark locations to simulate typical localization errors [16], [17].
• Exp. C (Realistic Scenario): Soft-tissue vector directions were perturbed by up to 30° to simulate estimation uncertainty. This perturbation magnitude represents a deliberately challenging condition, designed to evaluate the model's robustness under substantial directional noise. In addition, landmark noise was applied concurrently to approximate the most realistic scenario.

Since our synthetic data provides known ground-truth values for camera parameters and head pose, we used them to define the acceptance intervals: ±5% for $f_x$, ±10% for SCD, and a maximum deviation of 10° for head pose (see Section II-D).

We evaluate identification accuracy using a ranking-based metric [20]. For a given facial photograph, a superimposition is performed with every skull in our database. The resulting SFOs are then sorted based on their error metric: the mean pixel-wise projection error for POSEST-SFO and the fitness value for Lilium. The rank of the true matching skull serves as our key performance indicator; a lower rank signifies a higher identification capacity.

Moreover, we also report the back-projection error (BPE), which is computed as the mean 3D point-to-line distance in mm between each ground-truth 3D facial landmark and the back-projection ray induced by the estimated projection [20]. Facial landmarks not visible in the image due to pose are excluded from estimation but included in the BPE computation for evaluation. Lower BPE values indicate a smaller mean 3D displacement of the facial landmarks caused by using the estimated projection instead of the ground-truth projection.

To account for the stochastic nature of DE, all experiments were repeated five times. In Exp. C, an additional source of variability is introduced through the use of five distinct sets of perturbed soft-tissue vectors, resulting in a total of 25 executions. For every experiment, reported results correspond to the mean ranks and BPE values computed over all associated executions. By contrast, POSEST-SFO is a deterministic method and was therefore executed only once per input instance.

Finally, we assess the quality of the resulting SFOs by means of a metric derived from the skull-outside-face criterion described in Section II-E. We repurposed $P_{skof}$ as an a posteriori evaluation measure to enable a fair comparison between different SFO methods. The quality score is defined as the percentage of SFOs in which at least one skull pixel falls outside the facial mask. Lower percentages indicate higher-quality, anatomically consistent overlays, whereas higher values correspond to poorer alignments. Since several methods are evaluated under multiple runs or across different datasets, we report worst-case performance in terms of the percentage of implausible SFOs to explicitly account for variability and to assess robustness under adverse conditions.

All experiments were conducted on a supercomputer delivering 4.36 PetaFLOPS across 357 nodes, totaling 22,848 Intel Xeon Ice Lake 8352Y cores.

C. Results and Discussion

Table I reports the mean frontal and lateral ranks and BPE values, along with runtime, for all methods across the three experimental scenarios. Table II reports the statistical significance of the differences between POSEST-SFO and the Lilium variant with the most competitive mean rank or BPE.

In ideal conditions (Exp. A), POSEST-SFO obtains the lowest mean frontal rank (1.18) and the lowest frontal error (1.078 mm). Among the remaining methods, Lilium $P_{skof}$ follows, with a mean rank of 1.412 and a comparable frontal BPE (1.084 mm).
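For readers cross-checking the rank and BPE figures quoted in this comparison, the following minimal sketch shows one way to compute both quantities as they are defined in Section III-B. The function names, the representation of a back-projection ray as an origin plus a direction, and the toy values are illustrative assumptions, not the authors' implementation.

```python
import math

def point_to_ray_distance(point, origin, direction):
    """Distance from a 3D point to the line through `origin` along `direction`."""
    v = [p - o for p, o in zip(point, origin)]
    norm = math.sqrt(sum(d * d for d in direction))
    u = [d / norm for d in direction]              # unit direction of the ray
    t = sum(a * b for a, b in zip(v, u))           # projection of v onto the ray
    perp = [a - t * b for a, b in zip(v, u)]       # component orthogonal to the ray
    return math.sqrt(sum(c * c for c in perp))

def back_projection_error(landmarks, rays):
    """Mean point-to-line distance between ground-truth 3D facial landmarks
    and the back-projection rays (origin, direction) of an estimated projection."""
    dists = [point_to_ray_distance(p, o, d) for p, (o, d) in zip(landmarks, rays)]
    return sum(dists) / len(dists)

def rank_of_true_skull(errors, true_id):
    """1-based position of the true skull after sorting candidates by error.
    Ties are broken by insertion order (an assumption of this sketch)."""
    ordered = sorted(errors, key=errors.get)
    return ordered.index(true_id) + 1

# Toy data in millimetres: two landmarks, both rays leave the camera centre along +z.
landmarks = [(0.0, 3.0, 10.0), (4.0, 0.0, 20.0)]
rays = [((0.0, 0.0, 0.0), (0.0, 0.0, 1.0)),
        ((0.0, 0.0, 0.0), (0.0, 0.0, 2.0))]
bpe = back_projection_error(landmarks, rays)       # distances 3 and 4, mean 3.5

# Hypothetical per-skull error values for one photograph.
errors = {"skull_a": 2.1, "skull_b": 1.4, "skull_c": 3.0}
rank = rank_of_true_skull(errors, "skull_a")       # second-lowest error, rank 2
```

A rank of 1 means that the true skull produced the lowest error among all candidates, so mean ranks close to 1 in Table I correspond to near-perfect identification.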
The Wilcoxon signed-rank test failed to detect a difference in frontal BPE between Lilium $P_{skof}$ and POSEST-SFO ($p > 0.999$), suggesting that enforcing skull containment alone is sufficient to approach the geometric accuracy of the state-of-the-art method under ideal conditions. In lateral alignment, Lilium $P_{skof}$+$P_{pll}$ outperforms POSEST-SFO in terms of rank (1.1 vs 1.135), although the Wilcoxon signed-rank test failed to detect a difference ($p = 0.201$), while exhibiting a marginally lower BPE (2.099 mm vs 2.158 mm), indicating comparable 3D alignment accuracy. Notably, Lilium $P_{skof}$ achieves the lowest lateral BPE overall (1.894 mm).

TABLE I
MEAN RANK, BPE, AND COMPUTATIONAL TIME RESULTS FROM EXPS. A–C. BOLD INDICATES THE BEST VALUE IN EACH COLUMN FOR EACH EXPERIMENT.

| Exp. | Method | Rank (Frontal) | Rank (Lateral) | BPE Frontal (mm) | BPE Lateral (mm) | Time (s) |
|---|---|---|---|---|---|---|
| A (Ideal) | POSEST-SFO | **1.18** | 1.135 | **1.078** | 2.158 | **0.014** |
| A (Ideal) | Lilium | 1.552 | 1.883 | 1.15 | 1.998 | 220.286 |
| A (Ideal) | Lilium ($P_{pll}$) | 1.632 | 1.259 | 1.229 | 2.223 | 515.921 |
| A (Ideal) | Lilium ($P_{skof}$) | 1.412 | 1.34 | 1.084 | **1.894** | 386.235 |
| A (Ideal) | Lilium ($P_{skof}$+$P_{pll}$) | 1.451 | **1.1** | 1.132 | 2.099 | 537.961 |
| B (Landm. Noise) | POSEST-SFO | **1.502** | 1.424 | 1.861 | 2.998 | **0.018** |
| B (Landm. Noise) | Lilium | 1.672 | 1.626 | 2.296 | 2.769 | 222.439 |
| B (Landm. Noise) | Lilium ($P_{pll}$) | 1.693 | 1.257 | 1.811 | 2.599 | 515.432 |
| B (Landm. Noise) | Lilium ($P_{skof}$) | 1.625 | 1.271 | 2.07 | 2.539 | 387.511 |
| B (Landm. Noise) | Lilium ($P_{skof}$+$P_{pll}$) | 1.576 | **1.111** | **1.679** | **2.499** | 536.965 |
| C (Realistic) | POSEST-SFO | 2.147 | 2.094 | 2.328 | 4.103 | **0.017** |
| C (Realistic) | Lilium | 1.909 | 1.781 | 2.608 | 3.311 | 223.757 |
| C (Realistic) | Lilium ($P_{pll}$) | 1.95 | 1.374 | 2.174 | 2.92 | 512.609 |
| C (Realistic) | Lilium ($P_{skof}$) | 1.899 | 1.341 | 2.299 | 2.87 | 385.442 |
| C (Realistic) | Lilium ($P_{skof}$+$P_{pll}$) | **1.897** | **1.216** | **1.967** | **2.748** | 535.077 |

Under noisy or perturbed conditions (Exps. B and C), the advantage of combining $P_{skof}$ and $P_{pll}$ in Lilium becomes evident. In Exp. B, for frontal alignments, Lilium $P_{skof}$ + $P_{pll}$ achieves a mean rank very close to POSEST-SFO (1.576 vs. 1.502), with no statistically significant differences detected ($p > 0.999$), while exhibiting a lower frontal BPE (1.679 mm vs 1.861 mm, $p < 0.0001$), indicating that anatomical constraints improve geometric accuracy even under landmark noise. In lateral poses, the fully constrained Lilium achieves a significantly lower rank (1.111 vs 1.424, $p < 0.0001$) and BPE (2.499 mm vs 2.998 mm, $p = 0.0001$) than POSEST-SFO, demonstrating that the combination of priors stabilizes optimization under noisy conditions. In Exp. C (realistic scenario), Lilium $P_{skof}$ + $P_{pll}$ significantly outperforms POSEST-SFO in both frontal (1.897 vs 2.147, $p < 0.0001$) and lateral (1.216 vs 2.094, $p < 0.0001$) poses, with lower BPEs (1.967 vs 2.328 mm frontal, 2.748 vs 4.103 mm lateral, both $p < 0.0001$).

A consistent trend observed across all experiments was the superior identification performance of lateral poses compared to frontal ones. Lateral views offer a more constrained and discernible profile of features like the jawline, forehead, and nose, providing less ambiguous cues for alignment. Frontal poses, conversely, are more challenging due to the potential for bilateral landmarks to compensate for each other and the risk of landmark coplanarity, which can lead to multiple 3D poses producing the same 2D projection. Note that the BPE values show the opposite tendency, being consistently smaller in frontal views. This is because in frontal views, most facial landmarks are fully visible, facing the camera directly. In lateral poses, by contrast, some landmarks are occluded, reducing the effective information available for accurately recovering the 3D configuration.

The superior performance of POSEST-SFO in frontal poses, particularly in Exp. A and to some extent in Exp. B, can be attributed to the fact that it relies on ground-truth soft-tissue directional information. Under noise-free or low-noise conditions, this access to precise orientation data effectively acts as an oracle, giving POSEST-SFO an advantage that is not available in realistic forensic casework.
In contrast, Lilium leverages anatomical priors to provide robust guidance, allowing it to maintain strong identification performance even in challenging scenarios.

TABLE II
STATISTICAL COMPARISON ACROSS FRONTAL AND LATERAL POSES (EXPS. A–C). SIGNIFICANCE ASSESSED VIA WILCOXON SIGNED-RANK TEST. BOLD INDICATES p < 0.05 AND BETTER (LOWER) VALUES.

| Exp. | Pose | Rank: Lilium | Rank: POSEST | Rank: p | BPE (mm): Lilium | BPE (mm): POSEST | BPE: p |
|---|---|---|---|---|---|---|---|
| A | Frontal | 1.412 | **1.18** | **< 0.0001** | 1.084 | **1.078** | > 0.999 |
| A | Lateral | **1.1** | 1.135 | 0.201 | **1.894** | 2.158 | **0.001** |
| B | Frontal | 1.576 | **1.502** | > 0.999 | **1.679** | 1.861 | **< 0.0001** |
| B | Lateral | **1.111** | 1.424 | **< 0.0001** | **2.499** | 2.998 | **0.0001** |
| C | Frontal | **1.897** | 2.147 | **< 0.0001** | **1.967** | 2.328 | **< 0.0001** |
| C | Lateral | **1.216** | 2.094 | **< 0.0001** | **2.748** | 4.103 | **< 0.0001** |

Regarding the worst-case SFO quality, Table III reports the results across experiments. In Exp. A, Lilium $P_{skof}$ produces anatomically implausible SFOs in 19.47% of cases, with only 3.03% of skull pixels lying outside the facial region. Under noisier and more realistic conditions (Exps. B and C), Lilium $P_{skof}$ + $P_{pll}$ further improves robustness, limiting implausible overlays to 17.26–19.83% of cases with similarly low outside-pixel ratios (2.84–3.18%). Note that the number of positive SFOs (the skull and face originate from the same subject) classified as implausible remains minimal across all experiments. This indicates that positive pairs are rarely rejected due to anatomical violations. Furthermore, manual inspection reveals that the few implausible Lilium cases are typically due to open-mouth expressions or minor mesh cropping below the chin, rather than genuine anatomical inconsistencies. In contrast, POSEST-SFO exhibits a substantially higher incidence of anatomically implausible overlays across all experiments, affecting 70.51–78.29% of cases and showing larger proportions of skull pixels outside the facial region (3.98–5.08%).
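The quality score behind these percentages can be sketched as follows. This is a minimal illustration that assumes the projected skull and the facial region are available as binary masks of equal size (nested lists of 0/1 values). The function names and toy masks are hypothetical, and the sketch does not reproduce how Table III aggregates the average proportion of outside pixels.

```python
def outside_fraction(skull_mask, face_mask):
    """Fraction of skull pixels that do not overlap a pixel of the facial mask."""
    skull_pixels = 0
    outside = 0
    for skull_row, face_row in zip(skull_mask, face_mask):
        for s, f in zip(skull_row, face_row):
            if s:
                skull_pixels += 1
                if not f:
                    outside += 1
    return outside / skull_pixels if skull_pixels else 0.0

def implausible_percentage(overlays):
    """Percentage of overlays (skull_mask, face_mask) with at least one
    skull pixel outside the facial mask."""
    flagged = sum(1 for skull, face in overlays if outside_fraction(skull, face) > 0.0)
    return 100.0 * flagged / len(overlays)

# Toy 3x4 masks: the face occupies the two middle columns.
face = [[0, 1, 1, 0],
        [0, 1, 1, 0],
        [0, 1, 1, 0]]
skull_inside = [[0, 1, 1, 0],
                [0, 1, 0, 0],
                [0, 0, 0, 0]]   # every skull pixel lies within the face
skull_leaking = [[0, 1, 1, 1],
                 [0, 1, 0, 0],
                 [0, 0, 0, 0]]  # one of four skull pixels lies outside

overlays = [(skull_inside, face), (skull_leaking, face)]
score = implausible_percentage(overlays)   # one of two overlays flagged: 50.0
```

Because a single outside pixel is enough to flag an overlay, the percentage of implausible SFOs is a strict criterion, which is why the per-overlay fraction of outside pixels is reported alongside it.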
These results demonstrate that low mean-rank or BPE performance alone does not guarantee anatomically realistic SFOs and highlight the importance of explicitly incorporating anatomical plausibility criteria. Qualitative evaluation of SFOs from the most challenging scenario (Exp. C, Figure 6) confirms these trends. POSEST-SFO results show visible misalignment and anatomical inconsistencies, whereas Lilium $P_{skof}$ + $P_{pll}$ generates overlays that maintain correct landmark positions while respecting craniofacial morphology.

Fig. 6. Representative SFO results from Exp. C across models for multiple subjects. Each column shows a different model; the last is ground truth. Blue circles mark facial landmarks in the image; green dots are projected 3D estimates.

TABLE III
WORST-CASE IMPLAUSIBLE SFO METRICS ACROSS EXPERIMENTS. REPORTED COUNTS CORRESPOND TO THE NUMBER OF IMPLAUSIBLE SFOS OUT OF 17,340 CASES. BOLD INDICATES BETTER (LOWER) VALUES.

| Exp. | Method | Implausible SFOs (%) | Avg pixels outside (%) | Positive (n) | Negative (n) |
|---|---|---|---|---|---|
| A | POSEST-SFO | 70.51 | 3.98 | 320 | 11,902 |
| A | Lilium $P_{skof}$ | **19.47** | **3.03** | **18** | **3,354** |
| B | POSEST-SFO | 72.6 | 4.51 | 460 | 12,122 |
| B | Lilium $P_{skof}$ + $P_{pll}$ | **17.26** | **3.18** | **37** | **2,952** |
| C | POSEST-SFO | 78.29 | 5.08 | 634 | 12,937 |
| C | Lilium $P_{skof}$ + $P_{pll}$ | **19.83** | **2.84** | **48** | **3,387** |

Regarding computational efficiency, POSEST-SFO is extremely fast, with mean runtimes of 0.014–0.018 s per SFO (Table I). Lilium's runtime scales with optimization complexity, reaching roughly nine minutes per SFO (about 536–538 s) for the fully constrained configuration. For forensic applications, this additional computation is justified, as manual superimposition can take hours, while Lilium provides both automated support for identification and anatomically plausible results.

IV. CONCLUSION

The SFO stage within CFS presents significant challenges, primarily due to the complexities of aligning a 3D skull with a 2D facial photograph while accounting for soft-tissue uncertainty. This work has introduced Lilium, an innovative automated evolutionary method designed to address this challenge systematically. Lilium explicitly models soft-tissue uncertainty through a novel 3D cone-based representation, with its parameters optimized via a DE algorithm.

A key strength of our method is its comprehensive fitness function, which guides the optimization process by minimizing landmark projection error while simultaneously penalizing solutions that violate crucial plausibility constraints: (1) unrealistic camera settings ($f_x$ and SCD); (2) inconsistencies between the estimated and image-inferred head poses; (3) SFOs where the projected skull extends beyond the facial boundaries ($P_{skof}$ term); and (4) lack of parallelism between skull and facial regions ($P_{pll}$ term). Furthermore, Lilium promotes anatomical accuracy by employing a hybrid strategy for the joint optimization of bilateral landmarks, encouraging symmetry while preserving realistic anatomical variation. These innovations enable the generation of robust and accurate overlays, making the automated SFO process more analogous to the work conducted by forensic anthropologists.

To validate the proposed methodology, we compared it against the state-of-the-art POSEST-SFO algorithm through three experiments. Each experiment comprised 17,340 superimpositions per run and introduced a distinct noise profile to simulate varying levels of forensic complexity. Across the three scenarios, Lilium with combined constraints consistently achieved robust performance. In the ideal, noise-free frontal setting (Exp. A), POSEST-SFO attained lower ranks.
Nonetheless, the Wilcoxon signed-rank test failed to detect a difference in BPE in frontal scenarios between POSEST-SFO and Lilium $P_{skof}$, indicating comparable geometric accuracy. Under noisy or perturbed conditions (Exps. B and C), Lilium $P_{skof}$ + $P_{pll}$ demonstrated superior robustness. In Exp. B, although POSEST-SFO obtained a slightly lower mean rank in frontal poses (1.502 vs 1.576), the Wilcoxon signed-rank test failed to detect a difference, whereas Lilium achieved significantly lower BPE values. Regarding lateral poses, Lilium significantly outperformed POSEST-SFO in both rank (1.111 vs 1.424) and BPE (2.499 mm vs 2.998 mm). In the most realistic scenario (Exp. C), Lilium clearly outperformed POSEST-SFO in both frontal (1.897 vs 2.147) and lateral (1.216 vs 2.094) ranks, accompanied by significantly lower BPEs in both poses (frontal: 1.967 mm vs 2.328 mm; lateral: 2.748 mm vs 4.103 mm).

Regarding the SFO quality evaluation, Lilium $P_{skof}$+$P_{pll}$ and Lilium $P_{skof}$ substantially improved anatomical plausibility, reducing worst-case implausible overlays from 70.51–78.29% with POSEST-SFO to only 17.26–19.83% of cases, while also limiting skull pixels outside the face to 2.84–3.18%. Qualitative results from Exp. C confirmed that fully constrained Lilium generates visually coherent and anatomically consistent overlays, balancing identification accuracy with morphological fidelity. Although POSEST-SFO offers faster execution times, this comes at the expense of anatomical reliability. Lilium incurs a higher computational cost, but this remains acceptable in forensic practice, where the manual superimposition process typically takes several hours.

In summary, this study demonstrates that leveraging anatomical and morphological criteria substantially enhances SFO performance, offering crucial robustness to noise. Future work will focus on several complementary directions.
First, we plan to refine the modeling of soft-tissue uncertainty by exploring irregular 3D regions dynamically adapted to direction-specific variability. Second, an important line of future investigation will involve extending validation to larger and more diverse datasets, including real forensic imagery, in order to better characterize these sources of uncertainty. Third, from a computational perspective, future developments will investigate performance optimization strategies, including GPU-accelerated rendering, as well as parallel implementations of the optimization pipeline. Finally, we intend to integrate additional anatomical constraints to further improve robustness and to support broader acceptance of the method within the forensic science community.

ACKNOWLEDGMENTS

This publication is part of the R&D&I project PID2024-156434NB-I00 (CONFIA2), funded by MICIU/AEI/10.13039/501100011033 and ERDF, EU. Miss Martínez-Moreno is supported by grant PRE2022-102029 funded by MICIU/AEI/10.13039/501100011033 and by FSE+. Dr. Valsecchi's work is supported by Red.es under grant Skeleton-ID2.0 (2021/C005/00141299). This contribution made use of the Free Access Decedent Database, funded by the National Institute of Justice under grant number 2016-DN-BX-0144. Moreover, the authors would like to thank R. Guerra, M.A. Guativonza, and F. Navarro for their valuable contributions and support throughout the development of this work.

Dr. Valsecchi, Dr. Mesejo, Miss Martínez-Moreno, and Prof. Damas, among other researchers who did not collaborate in the current study, are coinventors with different percentages of ownership of an invention titled “Method for forensic identification by automatically comparing a 3D model of a skull with one or more photos of a face”, for which a patent application has been filed in the US (No. US 2024/0320911 A1) among other countries.

REFERENCES

[1] M. Yoshino, “Craniofacial superimposition,” in Craniofacial Identification, C. Wilkinson and C. Rynn, Eds. University Press, Cambridge, 2012, pp. 238–253.
[2] S. Blau and D. Ubelaker, “Handbook of forensic anthropology and archaeology,” in Handbook of Forensic Anthropology and Archaeology. Walnut Creek: West Coast Press, 2009, pp. 141–149.
[3] A. Leggio, G. Iacobellis, C. Salzillo, and L. Innamorato, “The faceless enigma: Craniofacial superposition reveals identity concealed by decomposition, solving a judicial case,” Forensic Sciences, vol. 5, no. 4, 2025.
[4] S. Damas et al., “Forensic identification by computer-aided craniofacial superimposition: a survey,” ACM Comput. Surv., vol. 43, no. 4, pp. 1–27, 2011.
[5] M. I. Huete, O. Ibáñez, C. Wilkinson, and T. Kahana, “Past, present, and future of craniofacial superimposition: Literature and international surveys,” Leg. Med., vol. 17, no. 4, pp. 267–278, 2015.
[6] K. Burns, Forensic Anthropology Training Manual. Prentice-Hall, 2007.
[7] S. Damas et al., “Study on the performance of different craniofacial superimposition approaches (II): Best practices proposal,” Forensic Sci. Int., vol. 257, pp. 504–508, 2015.
[8] O. Ibáñez, O. Cordón, S. Damas, and J. Santamaría, “Modeling the skull–face overlay uncertainty using fuzzy sets,” IEEE Trans. Fuzzy Syst., vol. 19, no. 5, pp. 946–959, 2011.
[9] Ibáñez et al., “MEPROCS framework for craniofacial superimposition: Validation study,” Leg. Med., vol. 23, pp. 99–108, 2016.
[10] S. Damas, O. Cordón, and O. Ibáñez, Handbook on Craniofacial Superimposition: The MEPROCS Project, 1st ed. Springer, 2020.
[11] B. R. Campomanes-Álvarez, O. Ibáñez, C. Campomanes-Álvarez, S. Damas, and O. Cordón, “Modeling facial soft tissue thickness for automatic skull-face overlay,” IEEE Trans. Inf. Forensics Secur., vol. 10, no. 10, pp. 2057–2070, 2015.
[12] B. Zitová and J. Flusser, “Image registration methods: a survey,” Image Vis. Comput., vol. 21, no. 11, pp. 977–1000, 2003.
[13] S. Damas, O. Cordón, and J. Santamaría, “Medical image registration using evolutionary computation: An experimental survey,” IEEE Comput. Intell. Mag., vol. 6, no. 4, pp. 26–42, 2011.
[14] J. Santamaría, O. Cordón, and S. Damas, “A comparative study of state-of-the-art evolutionary image registration methods for 3D modeling,” Comput. Vis. Image Underst., vol. 115, no. 9, pp. 1340–1354, 2011.
[15] B. R. Campomanes-Álvarez et al., “Computer vision and soft computing for automatic skull–face overlay in craniofacial superimposition,” Forensic Sci. Int., vol. 245, pp. 77–86, 2014.
[16] B. R. Campomanes-Álvarez, O. Ibáñez, F. Navarro, I. Alemán, O. Cordón, and S. Damas, “Dispersion assessment in the location of facial landmarks on photographs,” Int. J. Leg. Med., vol. 129, no. 1, pp. 227–236, 2015.
[17] M. Cummaudo et al., “Pitfalls at the root of facial assessment on photographs: A quantitative study of accuracy in positioning facial landmarks,” Int. J. Leg. Med., vol. 127, no. 3, pp. 699–706, 2013.
[18] C. N. Stephan and E. K. Simpson, “Facial soft tissue depths in craniofacial identification (Part I): An analytical review of the published adult data,” J. Forensic Sci., vol. 53, no. 6, pp. 1257–1272, 2008.
[19] P. Guyomarc'h, B. Dutailly, J. Charton, F. Santos, P. Desbarats, and H. Coqueugniot, “Anthropological facial approximation in three dimensions (AFA3D): Computer-assisted estimation of the facial morphology using geometric morphometrics,” J. Forensic Sci., vol. 59, 2014.
[20] A. Valsecchi, S. Damas, and O. Cordón, “A robust and efficient method for skull-face overlay in computerized craniofacial superimposition,” IEEE Trans. Inf. Forensics Secur., vol. 13, no. 8, pp. 1960–1974, 2018.
[21] F. Navarro, R. Martos, S. Damas, and I. Alemán, “A 3D vector-based approach to facial soft tissue–cranial relationships for forensic identification in the Spanish population,” Forensic Science International, vol. 380, p. 112802, 2026.
[22] A. E. Eiben and J. E. Smith, Introduction to Evolutionary Computing. Springer, 2015.
[23] R. Hartley and A. Zisserman, Multiple View Geometry in Computer Vision. Cambridge University Press, 2003.
[24] M. Bujnak, Z. Kukelova, and T. Pajdla, “A general solution to the P4P problem for camera with unknown focal length,” in IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2008, pp. 1–8.
[25] M. A. Fischler and R. C. Bolles, “Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography,” Commun. ACM, vol. 24, no. 6, pp. 381–395, 1981.
[26] M. Lourakis and X. Zabulis, “Model-based pose estimation for rigid objects,” in International Conference on Computer Vision Systems. Springer, 2013, pp. 83–92.
[27] R. Storn and K. Price, “Differential evolution–a simple and efficient heuristic for global optimization over continuous spaces,” J. Glob. Optim., vol. 11, no. 4, pp. 341–359, 1997.
[28] J.-H. Chung, H.-T. Chen, W.-Y. Hsu, G.-S. Huang, and K.-P. Shaw, “A CT-scan database for the facial soft tissue thickness of Taiwan adults,” Forensic Sci. Int., vol. 253, pp. 132.e1–132.e11, 2015.
[29] E. Bermejo, E. Fernandez-Blanco, A. Valsecchi, P. Mesejo, O. Ibáñez, and K. Imaizumi, “FacialSCDnet: A deep learning approach for the estimation of subject-to-camera distance in facial photographs,” Expert Syst. Appl., vol. 210, p. 118457, 2022.
[30] S. Chun and J. Y. Chang, “6DoF head pose estimation through explicit bidirectional interaction with face geometry,” in European Conference on Computer Vision (ECCV), 2025, pp. 146–163.
[31] S. Workman, C. Greenwell, M. Zhai, R. Baltenberger, and N. Jacobs, “DeepFocal: A method for direct focal length estimation,” in IEEE International Conference on Image Processing (ICIP), 2015, pp. 1369–1373.
[32] J. Caple and C. N. Stephan, “A standardized nomenclature for craniofacial and facial anthropometry,” Int. J. Leg. Med., vol. 130, no. 3, pp. 863–879, 2016.
[33] H. J. H. Edgar, S. Daneshvari Berry, E. Moes, N. L. Adolphi, P. Bridges, and K. B. Nolte, “New Mexico decedent image database,” 2020.
[34] O. Ibáñez, F. Cavalli, B. R. Campomanes-Álvarez, C. Campomanes-Álvarez, A. Valsecchi, and M. I. Huete, “Ground truth data generation for skull–face overlay,” Int. J. Leg. Med., vol. 129, no. 3, pp. 569–581, 2015.
[35] O. Ibáñez, O. Cordón, S. Damas, and J. Santamaría, “An advanced scatter search design for skull-face overlay in craniofacial superimposition,” Expert Syst. Appl., vol. 39, no. 1, pp. 1459–1473, 2012.
