Evaluating Agentic Optimization on Large Codebases

Large language model (LLM) coding agents increasingly operate at the repository level, motivating benchmarks that evaluate their ability to optimize entire codebases under realistic constraints. Existing code benchmarks largely rely on synthetic task…

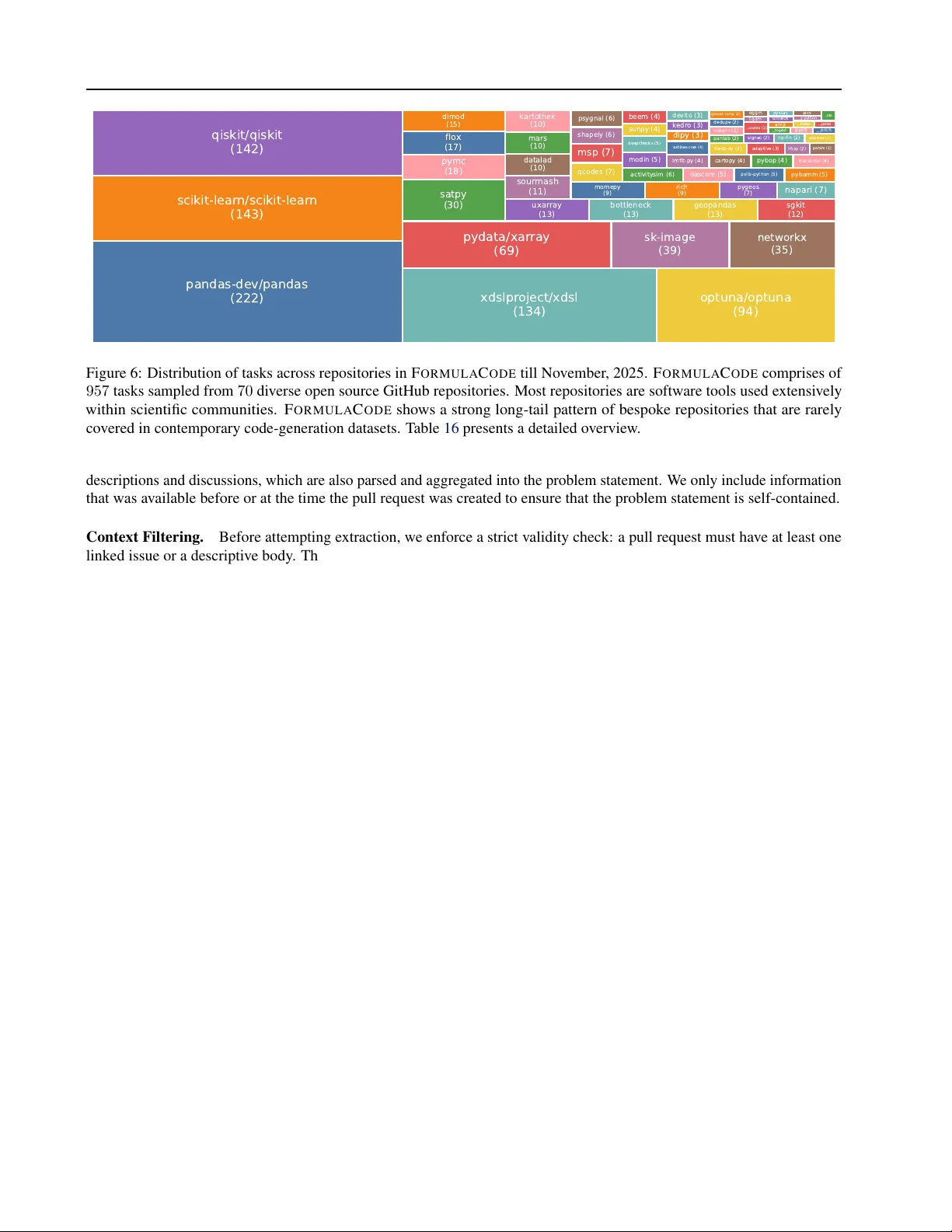

Authors: Atharva Sehgal, James Hou, Akanksha Sarkar