FEEL (Force-Enhanced Egocentric Learning): A Dataset for Physical Action Understanding

We introduce FEEL (Force-Enhanced Egocentric Learning), the first large-scale dataset pairing force measurements gathered from custom piezoresistive gloves with egocentric video. Our gloves enable scalable data collection, and FEEL contains approxima…

Authors: Eadom Dessalene, Botao He, Michael Maynord

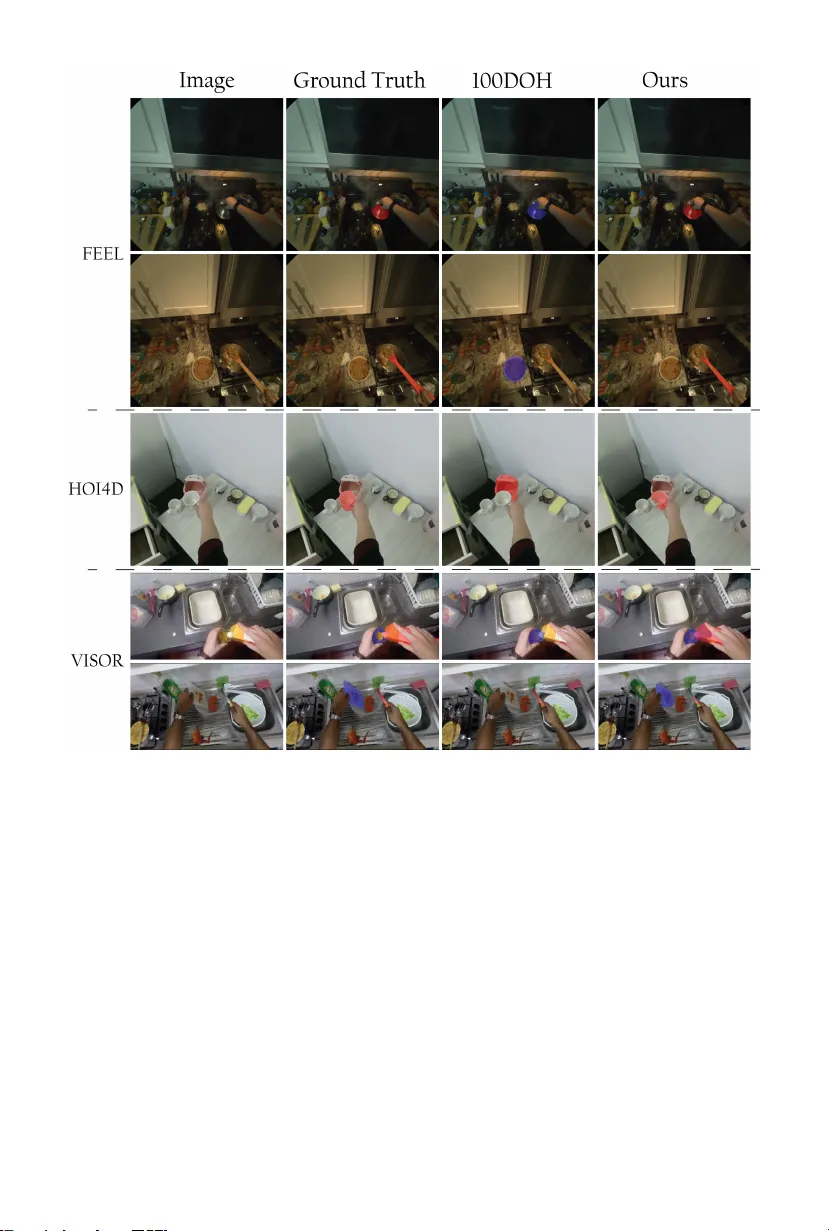

FEEL (F orce-Enhanced Ego cen tric Learning): A Dataset for Ph ysical A ction Understanding Eadom Dessalene, Botao He, Mic hael Maynord, Y onatan T ussa, Pa v an Man tripragada, Yianni Karabatis, Nirupam Roy, and Yiannis Aloimonos Univ ersity of Maryland, College Park, USA Email: edessale@umd.edu https://www.cs.umd.edu/~edessale/feel Fig. 1: FEEL pairs ego centric video with force measurements to capture the physical causes—not just visual effects—of hand-ob ject interaction. Abstract. W e introduce FEEL (F orce-Enhanced Egocentric Learning), the first large-scale dataset pairing force measurements gathered from custom piezoresistive glov es with ego centric video. Our glov es enable scalable data collection, and FEEL con tains approximately 3 million force-sync hronized frames of natural unscripted manipulation in kitchen en vironments, with ∼ 45% of frames inv olving hand-ob ject contact. Be- cause force is the underlying cause that drives physical interaction, it is a critical primitive for ph ysical action understanding. W e demonstrate the utilit y of force for physical action understanding through application of FEEL to tw o families of tasks: (1) con tact understanding , where we join tly perform temporal con tact segmen tation and pixel-lev el con tacted ob ject segmentation; and, (2) action representation learning , where force prediction serves as a self-sup ervised pretraining ob jectiv e for video bac kb ones. W e achiev e state-of-the-art temporal contact segmentation results and comp etitive pixel-lev el segmentation results without any need for manual contacted ob ject segmentation annotations. F urthermore we demonstrate that action representation learning with FEEL improv es transfer p erformance on action understanding tasks without any man- ual lab els ov er EPIC-Kitchens, SomethingSomething-V2, EgoExo4D and Meccano. 2 Dessalene et al. Fig. 2: F orces reveal in teraction dynamics. Representativ e sequences showing how force measurements disambiguate physical interactions. Left: Grasping and lifting a pan exhibits distinct force signatures with an onset at the end of the reach and a spike as the pan is lifted. Righ t: Stirring produces rh ythmic force patterns reflecting cyclic manipulation. 1 In tro duction Ph ysical manipulation actions are defined by the forces the hands exert on the w orld. F or this reason, force should not b e treated as a p eripheral signal, but as a primitive for physical action understanding. Vision, in contrast, primarily measures motion and appearance — observ able effects of action but not causal in themselves. Learning actions from video alone leav es the underlying action structure implicit in a high dimensional stream of pixels - incorp orating force turns the hidden action structure into an intrinsically lo wer dimensional learnable signal. Ego cen tric video datasets hav e enabled ma jor progress by fo cusing on the visual mo dalit y for action understanding tasks. This data is useful, but it lac ks a mo dalit y directly capturing the causal dynamics of action: force. F orce is absent from existing datasets largely b ecause it cannot be reliably obtained through man ual lab eling. The physical resp onse of ob jects to forces applied are often v ery subtle and difficult for annotators to recognize directly from visual changes. This difficult y includes annotating the making and breaking of contact. F or illustration, see Figure 3 for a case where app earance is inconclusiv e while force disam biguates. In order to address the shortcomings of existing datasets, we introduce FEEL (F orce-Enhanced Ego cen tric Learning). FEEL is built around a custom force sensing glov e (see Figure 4 ) consisting of 5 Piezoresistive sensors mounted to the pads of the fingers, as well as a long Piezoresistive sensor mounted to the palm. This glov e when worn provides a stream of measurements of the forces imparted b y hands. W e record this stream alongside egocentric captures collected with Meta Aria glasses [ 15 ]. The result is time-sync hronized ego cen tric video, audio, and force sensing of natural, unscripted, unstructured manipulation. See Figure 2 for illustration of sync hronized video and force sensing streams. Contact is deriv ed from force exchange, yielding temp orally precise p er-frame con tact state and boundaries (see Figure 3 ). A k ey b enefit to force-derived contact lab els as FEEL 3 Fig. 3: Con tact detection from force measuremen ts. Despite visual similarity across frames, force measurements precisely identify con tact b oundaries. T op: Raw sensor forces (pinky and middle finger dominate this grasp). Middle: Consolidated force with dual thresholds—ab o ve C threshold indicates con tact, b elow NC threshold indicates non-contact, b et ween is am biguous (excluded). F orces enable scalable contact sup ervision without manual annotation of ambiguous visual transitions. opp osed to manual annotation of contact lab els o ver RGB frames is scalability . W e fo cus on kitchens, which provide a broad range of interaction/action types. FEEL contains ∼ 3 M frames o ver ∼ 27 hours of dense manipulation, with ∼ 45% of frames in volving contact. Using force as sup ervision pro vides a low-dimensional physically grounded signal that constrains how action is extracted from high-dimensional pixel space. Along these lines we dev elop learning metho ds leveraging forces as sup ervision for the following tasks: Contact Understanding and Action Representation Learning (see Figure 5 ). In the Contact Understanding task we leverage our force signal in the mo deling of con tact in ego cen tric video. This task is defined as join tly: (1) temp oral con tact segmentation - classifying the p er-frame contact state o ver time for eac h hand; and, (2) pixel-level segmentation - segmen ting the ob ject(s) in con tact with each hand. Our force measurements provide temp orally precise sup ervision for the onset and offset of contact, enabling the learning of sharp in teraction b oundaries which would b e difficult to learn ov er imprecise con tact annotations (see the Supplemen tary Materials for examples). In the A ction Representation Learning task, we use FEEL to demonstrate the utility of force for learning action representations ov er video. T raining to 4 Dessalene et al. predict forces encourages the net work to infer ph ysically grounded action structure from video. W e ev aluate transfer of the resulting representations to the task of action recognition. W e ev aluate on established b enchmarks, including EPIC- Kitc hens, Ego-Exo4D, Something-Something V2, and Meccano. The primary con tributions of this work are as follows: 1. Release of FEEL: W e release the largest ego cen tric force–video dataset, capturing the physical causes - not just the visual effects - of hand-ob ject manipulation. FEEL consists of approximately 3 million frames collected during natural, unscripted manipulation. 2. Scalable force-deriv ed contact lab els: W e derive p er-frame contact states, releasing 1 . 35 M frames of hands and ob jects in con tact (along with ob ject segmen tations) and 1 . 65 M frames of hands and ob jects not in contact. 3. Self-sup ervised force pre-training pip eline A demonstration of the b enefits of training force-aw are video bac kb ones for downstream tasks. F orce- a ware pretraining ov er FEEL impro ves downstream action recognition across nearly all datasets. 4. A new state-of-the-art con tact estimation mo del: W e train a mo del to join tly p erform temporal contact segmentation and pixel-level ob ject-in- con tact segmentation ov er still images. Our mo del ac hieves state-of-the-art temp oral contact detection across all three b enchmarks. The rest of this pap er is structured as follows: In Section 2 we co ver related w ork, in Section 3 we detail our metho d, in Section 4 we co ver ev aluations, and in Section 5 w e discuss and conclude. 2 Related W ork Con tact Understanding The predominant paradigm for contact understanding relies on large, man ually annotated datasets o ver images [ 7 , 8 , 26 , 32 , 40 ] or video [ 8 , 10 , 13 , 23 ], which incur significant annotation cost and label noise. Some w orks mitigate this through semi-automatic segmen tation workflo ws that propagate manual annotations using off-the-shelf video to ols [ 10 , 13 ]. Alternative approac hes collect data in controlled lab settings with motion capture to precisely trac k hands and ob jects in 3D [ 4 , 16 , 39 ], or sidestep real-world collection entirely via syn thetic data [ 20 ]. F orce Understanding Imbuing vision mo dels with physical understanding remains highly challenging and underexplored [ 14 , 17 , 29 , 44 ]. Due to the scarcity of real-w orld force data, most vision-based approaches rely on simulation [ 14 , 21 , 44 ]. In rob otics, a gro wing b o dy of w ork addresses force-aw are visual representations through real-world data collection [ 9 , 11 , 42 ], though these are confined to simple manipulation tasks suc h as pick-and-place. The closest work to ours is [ 38 ]; our dataset is o ver 5 × larger and we explore tasks un-explored within [ 38 ]. Applications of F orce and Con tact Understanding Reliably inferring ph ysical hand-ob ject interactions unlo c ks a range of important downstream tasks. Con tact understanding has b een shown to impro ve action recognition [ 13 , 23 , 34 ] FEEL 5 and to enable rob ots to learn manipulation tra jectories by parsing con tacts b et w een hands and ob jects from human video [ 27 , 36 , 37 ]. F orce understanding has seen comparatively fewer applications: [ 1 ] trains force-a ware rob ot p olicies on a small dataset of simplistic tasks, and [ 38 ] successfully demonstrates that forces improv e grasp classification p erformance. W e are the first to demonstrate zero-shot transfer of our learned representations ov er our force dataset for b oth con tact understanding and action representation learning. 3 Metho ds W e introduced tw o families of learning tasks that leverage force as sup ervision: con tact understanding and action represen tation learning across mo dal- ities. Con tact understanding jointly addresses temporal contact segmentation (p er-frame contact state classification) and pixel-level segmen tation of ob jects in con tact. F or action understanding, we use force prediction as a pretraining ob jec- tiv e to learn representations that are more physically grounded than app earance based features alone. Figure 5 illustrates our approac h: we train mo dalit y-sp ecific bac kb ones on image and video inputs with force prediction as a self-sup ervised pretraining ob jective. The remainder of this section is organized as follows: Section 3.1 describ es our data collection pip eline and the custom force-sensing hardware; Section 3.2 details con tact understanding; Section 3.3 describ es action representation learning. The a v ailability of synchronized force measurements as a new supervisory mo dality enables both the scalable deriv ation of contact annotations and the learning of ph ysically grounded action representations — applications that would b e difficult or imp ossible with vision alone. Fig. 4: F orce-sensing glo ve hardware. Leftmost: P alm-facing views of the left and righ t glo ves, showing the six piezoresistive sensors ( red ) mounted at each fingertip and across the palm, the Arduino micro controller case ( blue ) mounted at the wrist, and the on/off switch ( green ). Middle and righ t: Side and in-use views demonstrating the glo ve’s lo w-profile form factor during natural ob ject manipulation. 6 Dessalene et al. Fig. 5: Learning contacts and action from forces. (a) Contact Understanding Image netw ork trained with force-derived contact labels p erforms con tact detection and segmen tation (red mask) for each hand (L/R). (b) A ction Representation Learning Video netw ork predicts p er-hand forces from clips for pretraining. The video backbone is transferred to action recognition after discarding force heads. No manual contact or action lab els required during pre-training. 3.1 Dataset Collection T o obtain synchronized visual, acoustic, and force streams, we developed a ligh tw eight sensing system combining a custom piezoresistive glov e with Meta Aria glasses for ego cen tric capture. Hardw are Our sensing system combines tw o synchronized comp onents: a custom piezoresistive force glov e and Meta’s Pro ject Aria glasses (Gen 1) . This pairing enables simultaneous capture of manipulation forces, ego centric visual streams, and spatial audio. F orce Glo ve The force sensing comp onen t consists of six piezoresistive sensors (Flexiforce A301, T ekscan) mounted on a glov e - one sensor p er fingertip and a sensor strip mounted on the palm. See Figure 4 for the glov e we construct and use for data collection. Each A301 sensor measures forces up to 45 N (approximately 10 lbs). The sensors connect to an Arduino Nano micro controller mounted in a case enclosure that also houses a 3 . 3 V Lithium Polymer (LiPo) battery and toggle switc h for p o wering the device on and off to start and stop recordings. Pro ject Aria Glasses F or ego cen tric visual stream and spatial audio capture, w e use Meta’s Pro ject Aria device. The device captures synchronized multimodal streams: a forward-facing RGB camera ( 1440 × 1440 pixels), tw o mono c hrome scene cameras ( 640 × 480 pixels) p ositioned on the left and right temples for wide p eripheral co verage, a 7-microphone arra y for spatial audio, and dual IMUs sampled at 800 Hz and 1000 Hz. Sensor Calibration and Sync hronization Synchronizing the force glov es and Aria glasses requires aligning tw o indep enden t data streams op erating on separate internal clo c ks. See the Supplemen tary Materials for the three-step calibration pro cedure employ ed at the start of each recording session. FEEL 7 T able 1: Comparison of commonly used hand-ob ject in teraction datasets. Mo dalit y co des: V=video, A=audio, D=depth, F=force, E=ey e tracking. Contact annotation for Ego4D (FHO) is partial (only a single critical frame is annotated out of each sequence). FEEL is the only real-world (Real) ego centric dataset providing b oth contact annotations and force measuremen ts — all other datasets with force lack con tact labels or real-world capture, and all datasets with contact lack force. Dataset View Scale Real Mo dalities Con tact F orces EgoHands [ 22 ] Ego 1.5 hrs ✓ V × × Arctic [ 16 ] Both 4 hrs ✓ V/D ✓ × VISOR [ 10 ] Ego 36 hrs ✓ V/A ✓ × HOI4D [ 24 ] Ego 45 hrs ✓ V/D ✓ × Ego4D (FHO) [ 18 ] Ego 120 hrs ✓ V/A 1/2 × 100DOH [ 32 ] Both - ✓ V ✓ × Zhang et al. [ 43 ] Neither 1 hour × V/F × ✓ Ehsani et al. [ 14 ] Exo 0.5 hrs × V/F ✓ ✓ Op enT ouc h. [ 38 ] Ego 5 hrs ✓ V/A/F/E ✓ ✓ FEEL (Ours) Ego 27 hrs ✓ V/A/F/E ✓ ✓ Data Processing Ego cen tric P ose and Hand T rac king W e leverage Meta’s Mac hine Perception Services (MPS) to extract foundational geometric and kinematic information from the Aria recordings. MPS pro cesses the side camera streams and IMU data through a visual-inertial o dometry pipeline to pro duce high-frequency (1 kHz) 6-DoF camera tra jectories. Additionally , MPS p erforms 3D hand tracking on the side camera imagery , recov ering hand keypoints for each frame in the recordings. A dditional Pro cessing T o arriv e at nouns of ob jects of interaction, w e adopt a w eak lab eling strategy: for each recording session, we partition the video into 2 -min ute temp oral windows and select 8 uniformly sampled frames within each windo w. Over those frames we annotate the set of ob ject nouns in teracted with, without sp ecifying temp oral order or precise timing. W e further compute dense geometric cues to supp ort contacted ob ject segmen tation (see Section 3.2 ): scaled depth maps are predicted (in meters) using MLDepthPro [ 3 ], and optical flow fields are densely estimated b et ween consecutive frames using SEA-RAFT [ 41 ]. 3.2 Con tact Understanding Prior approaches to contact understanding [ 10 , 32 ] dep end on human annotators to lab el con tact even ts from RGB frames—a pro cess that is b oth lab or-in tensive and difficult to scale. Our approach instead exploits direct physical measure- men ts - which, among other adv an tages, enable us to determine contact states and temp orally lo calize contacted ob jects without manual annotation. F rom these force-derived contact states, we introduce a w eakly sup ervised metho d for generating pseudolab el segmen tations of contacted ob jects. This physically 8 Dessalene et al. grounded sup ervision enables training paradigms that would not b e p ossible with vision-only approac hes. Con tact Detection Ra w piezoresistive sensors exhibit substantial baseline drift and high-frequency noise that preclude simple thresholding for con tact detection. W e apply a multi-stage filtering pip eline to eac h sensor: outlier remov al via Hamp el filtering, Gaussian smo othing, time-v arying baseline estimation via rolling percentile, baseline subtraction with negative clipping, and exclusion of regions with abrupt baseline changes or high RMS magnitudes. W e then normalize eac h sensor by its 99 . 5 th p ercen tile v alue and compute the geometric mean across all six sensors to pro duce a unified force signal robust to individual sensor failures. F rames where this signal exceeds an upp er threshold indicate con tact, frames b elo w a low er threshold indicate non-contact, and frames b et ween thresholds are excluded as ambiguous regions. F or full details see the Supplementary Materials. Segmen tation Pseudolab el Generation W e develop a weakly sup ervised approac h to generate pseudo-lab els for spatially segmenting ob jects in contact, eliminating the need for man ual ob ject segmen tation annotations. The inputs to our segmentation pip eline are: (i) frames designated as contain- ing contact b y our force-based detection system; (ii) a set of ob ject mask p roposals pro duced b y a set of concept prompts fed to SAM3 (the set of concepts are the only w eak lab els pro vided, pro duced as p er Section 3.1 ); (iii) pre-computed optical flow fields using SEA-RAFT; (iv) camera intrinsics/extrinsics from MPS; and (v) pre-computed depth maps. The pro cess by which these inputs are deriv ed is describ ed in Section 3.1 . Giv en these inputs, we generate contacted-ob ject pseudo-labels by: (1) ex- tracting the masked optical flo w for each ob ject prop osal; (2) computing the fundamen tal matrix b etw een the current frame and the next frame using the Aria Machine Perception Services (MPS) provided intrinsics/extrinsics; (3) ev al- uating the Sampson epip olar error [ 25 ] within each mask using the masked flo w and fundamental matrix (where higher error indicates stronger violations of the static-scene (epipolar) constrain t due to ob ject motion or manipulation); (4) selecting the prop osal with the maxim um mean epip olar error; and (5) accepting this prop osal as the con tacted ob ject if and only if at least a set num b er of pixels b elonging to the mask lie within a 3D distance threshold of the 3D hand cen troid (ensuring that the selected high-motion region is spatially pro ximate to the manipulating hand). W e hav e 500 K images with binary con tact pseudolabels, 180 K of which contain binary segmentations of the ob ject(s) in con tact. F or full details see Supplemen tary Materials. T raining T o demonstrate the utility of FEEL, w e train a contact understand- ing mo del for comparison against state of the art vision netw orks trained against large datasets of manually collected contact annotations. As illustrated in the first ro w of Figure 5 , w e train this netw ork to pro cess a single R GB image and pro duce tw o prediction heads p er hand (left and righ t): (1) a binary con tact classifier indicating whether the hand is in contact with an ob ject, and (2) a segmen tor of the ob ject in contact with that hand. W e sup ervise the devoted net work head for each hand only when that hand is at least partially visible in the FEEL 9 T able 2: T emp oral contact detection results ev aluated on the v alidation sets of FEEL, EPIC-VISOR, and HOI4D. W e emphasize that while our mo del was only trained ov er 1 dataset (FEEL), the 100DOH mo del and VISOR mo del were b oth trained ov er the EPIC-VISOR dataset [ 10 ]. BC represen ts "Binary contact detection" - a prediction is correct iff it captures the binary contact state b etw een the hand and the ob ject, irresp ectiv e of which predicted hand side is inv olved. BSC represents "Binary Signed Con tact" - a prediction is correct iff it captures b oth the binary contact state of b et ween the hand and the ob ject, as well as the hand side in volv ed. Metho d Sup. T raining Set # HOI4D VISOR FEEL BC BSC BC BSC BC BSC Depth [ 3 ] Self 5 66.6 66.0 52.8 39.9 48.6 30.0 HOIRef [ 2 ] F ull 2 (inc. VISOR) 52.1 50.8 55.4 53.7 59.6 57.2 VISOR [ 10 ] F ull 1 (VISOR) 55.3 55.0 66.3 65.2 45.2 40.2 100DOH [ 32 ] F ull 2 (inc. VISOR) 90.0 80.5 81.3 71.9 49.0 40.0 Ours Self 1 (inc. FEEL) 82.2 81.3 87.3 79.7 95.6 88.9 frame; netw ork heads corresp onding to out-of-view hands receive no sup ervision. F or data augmentation, w e apply random crops and random horizontal flips. When flipping, w e mirror b oth the input image and the target outputs in order to main tain corresp ondence. 3.3 Learning F orces from Video T raditionally , video netw orks are pretrained on large-scale datasets of lab eled images and videos (e.g., ImageNet [ 12 ] and Kinetics [ 6 ]), whic h provide strong app earance- and motion-level priors but little direct sup ervision ab out the un- derlying physical interaction; in contrast, we use FEEL’s physically grounded force signals to pretrain video mo dels to capture force dynamics (see Figure 5 ) and transfer these representations to downstream action understanding tasks. W e formulate video-to-force prediction as a self-sup ervised pretraining task, then transfer the learned representations to downstream action recognition b enc h- marks. During the video-to-force pretraining, we only select clips for which at least one hand is visible for 50% of the frames, ensuring that a training signal is reflected in the input images. 4 Exp erimen ts W e ev aluate FEEL across tw o families of tasks: (1) contact understanding , where we p erform temp oral contact detection and pixel-level ob ject segmentation, and (2) action representation learning , where force prediction serves as a self-sup ervised pretraining ob jective for video backbones. See Figure 5 for a depiction of the mo deling of b oth tasks. 10 Dessalene et al. T able 3: Spatial contact segmentation results ev aluated on the v alidation sets of FEEL, EPIC-VISOR, and HOI4D. W e emphasize that while our mo del w as only trained o ver 1 dataset (FEEL), the 100DOH mo del and VISOR mo del were both trained o ver the EPIC-VISOR dataset. As the 100DOH mo del pro duces b ounding b o xes (not segmen tations), w e employ in addition a SAM mo del (trained ov er Internet-scale data) to segment the 100DOH model predictions. Results reported as intersection-o ver-union (IOU) ↑ . Metho d Sup. T raining Set # Dataset HOI4D VISOR FEEL MO VES [ 19 ] W eak N/A - - 0.309 SAM3 [ 5 ] F ull Comp. 0.201 0.085 0.030 VISOR [ 10 ] F ull 1 (inc. VISOR) 0.502 0.402 0.111 100DOH [ 32 ]+SAM [ 5 ] F ull Comp. (inc. VISOR) 0.765 0.443 0.218 Ours W eak 1 (FEEL) 0.751 0.399 0.625 Fig. 6: T emp oral con tact detection. A representativ e sequence showing our mo del’s con tact predictions ov er time as a hand reac hes to ward and grasps a mug. The model correctly predicts no contact during the approach phase and transitions to contact precisely at the moment of grasp. 4.1 Con tact Understanding T ask: Contact understanding jointly addresses temp oral contact segmentation (p er-frame contact state classification) and pixel-level segmentation of ob jects in con tact. The mo del takes an image as input and pro duces a prediction as to the binary contact state of each hand as w ell as a pixel-level segmentation of the con tacted ob ject for eac h hand. See Figure 5 . Mo del and implementation: T raditional contact understanding mo dels [ 32 ] are complex to train due to the non-differentiable op erations that happ en along the w ay , along with the additional h yp erparameters introduced. F or simplicity of implemen tation, we train a Dense Prediction T ransformer (DPT) [ 30 ] on top of frozen DINOv3 [ 35 ] features. The image is fed as input to DINO, which pro duces feature maps subsequently fed into DPT, which pro duces t wo sets of outputs: one set of outputs as a binary logit based on the contact detected for each of the left and righ t hand; and a t wo-c hannel segmentation map devoted to the left and right hand, returned at the input resolution of the image. F or further implemen tation details, see the Supplementary Materials. FEEL 11 Baselines The contact detections we learn ov er are derived in a fully self- sup ervised fashion; the con tact segmentations we learn o ver are deriv ed in a w eakly-sup ervised fashion, as the pseudolab el generation algorithm is provided as input the ob ject categories of all ob jects interacted with throughout the recording session. A t no p oin t is our training dep endent on manually provided ob ject segmen tations. As suc h, w e compare against other (1) self-supervised approaches, (2) w eakly-sup ervised approac hes, and (3) fully-sup ervised approaches. Con tact detection baselines. T o our knowledge, there is no commonly used metho d for learning to detect contact in a self-sup ervised fashion. Therefore we in tro duce a simple zero-shot baseline combining recen t adv ances in depth and segmen tation netw orks. W e compute segmentations of the b ody , and from the depth map of the scene compute a signed distance field of the scene with resp ect to each of the t wo hands. If the n umber of pixels where the distance d ( x, y ) for all pixels ( x, y ) exceeds a threshold, the contact prediction is p ositive, and vice-v ersa. W e also compare against three fully-sup ervised baselines: HOIRef [ 2 ], EPIC-VISOR [ 10 ], and 100DOH [ 32 ]. See T able 2 for results. Con tact segmentation baselines. As for comparisons to other contact segmen- tation approac hes, the closest self-sup ervised and weakly-supervised comparisons are [ 19 ] and [ 33 ], but their metho ds are not publicly av ailable. W e instead com- pare against a reimplementation of the method for generating ground truth pseudolab els pro duced within [ 19 ] - we compare our netw ork’s segmen tation pre- dictions directly against those pseudolab els. W e also compare against SAM with its concept prompting, as well as t wo fully-sup ervised baselines: EPIC-VISOR and 100DOH. See T able 3 for results. Ev aluation Datasets W e ev aluate our metho d as well as other metho d ov er the con tact pseudolab els b elonging to FEEL, as well as zero-shot transfer o ver t wo p opular ego cen tric video datasets that con tain contact annotations - EPIC VISOR [ 10 ] and HOI4D [ 24 ]. 4.2 A ction Represen tation Learning from Video A ction representation learning inv olves the exploring of pretraining ob jectiv es for video backbones b efore transferring of action representations for downstream tasks. When pre-training ov er FEEL, the mo del tak es a video as input and pro duces force predictions. See Figure 5 . W e then transfer those learned repre- sen tations to the task of action recognition. He re action recognition takes video as input, and pro duces a distribution ov er action categories as output. Due to computational constraints, rather than jointly pretraining on K710/Ego4D and FEEL, we instead initialize from K710 and Ego4D pretrained w eights, fine- tune o ver FEEL’s force data, and transfer the resulting force-aw are representations to action recognition. Do wnstream A ction Recognition F orces are most impactful for the understanding of physic al actions p erformed in video. Therefore, after pretraining these video net works ov er FEEL, we fo cus our ev aluation o ver downstream datasets centered on manipulation betw een hands and ob jects. The datasets 12 Dessalene et al. Fig. 7: Qualitative contact segmen tation results. Each row shows an input image, ground truth, 100DOH prediction, and our prediction across FEEL, HOI4D, and VISOR. Righ t and left hand contacts are sho wn in red and blue resp ectiv ely . Our mo del correctly iden tifies hand side (row 1), av oids confusing proximit y for contact (rows 2-3), and handles simultaneous tw o-hand contacts (rows 4-5). Where our mo del pro duces partial segmen tations (ro w 3), 100DOH (combined with SAM) confidently segments the wrong ob ject entirely . w e ev aluate ov er are EPIC-Kitchens-100, SomethingSomething-V2, and Ego- Exo4D. W e ev aluate each model o ver the task of action recognition - in the case of EPIC-Kitchens, predicting verbs and nouns , whereas in Ego-Exo4D, SomethingSomething-V2 and Meccano the task is to predict actions . F or results, see T able 1 . FEEL 13 T able 4: A ction recognition results across all b enc hmarks. T op-1 accuracy for frozen and unfrozen settings across EPIC-Kitc hens (verb/noun), SSV2, Ego-Exo4D, and Meccano. F or each mo del, we compare standard pretraining (K710 for Hiera-B, Ego4D for EgoVideo-B) against the same weigh ts further pretrained on FEEL’s force data. Bold denotes the b etter result within eac h mo del pair. Mo dels pre-trained ov er FEEL’s data generally outp erform the mo dels pre-trained solely o ver Kinetics and Ego4D. W e particularly observe this in the frozen ev aluation setting compared to the unfrozen, end-to-end training. Mo del Pretrain EPIC-Kitc hens SSV2 Ego-Exo4D Meccano V erb Noun A ction A ction A ction F r ozen Hiera-B K710 41.65 15.12 36.08 13.11 26.42 Hiera-B Ours 46.50 21.38 39.04 20.83 29.57 EgoVideo-B Ego4D 59.24 29.42 49.61 26.00 36.59 EgoVideo-B Ours 62.00 30.11 54.34 27.75 38.23 Unfr ozen Hiera-B K710 68.34 48.18 68.88 35.25 38.52 Hiera-B Ours 68.24 49.08 70.01 41.19 41.13 EgoVideo-B Ego4D 70.99 55.67 74.55 40.92 48.99 EgoVideo-B Ours 71.34 55.60 74.91 40.75 50.01 5 Discussion T emp oral Contact Detection Qualitative results sho w in Figure 6. Note that our metho d accurately lo calizes the precise p oin t in time at which contact is established, and is not confounded by mere hand / ob ject proximit y , correctly transitioning from no-contact to contact exactly at the onset of grasp formation. T able 2 shows that our force-supervised mo del substantially outp erforms the zero-shot depth baseline and surpasses fully sup ervised comp etitors across all three datasets, despite nev er receiving manually annotated contact lab els. The most comp etitiv e baseline is 100DOH [ 32 ], whic h b enefits from training on tw o large, fully lab eled datasets — the original 100DOH dataset and EPIC-VISOR — to achiev e strong generalization to egocentric video. Our model surpasses the 100DOH mo del while relying solely on ph ysical force measuremen ts for sup ervision. A further adv antage of our approac h is its handling of handedness. Our mo del exp eriences a smaller drop b et ween the Binary Contact (BC) and Binary Signed Con tact (BSC) metrics than 100DOH, reflecting more reliable left/right hand discrimination. The larger BC–BSC gap in 100DOH may stem from annotation noise: distinguishing hand side is subtle and error-prone for human annotators, p oten tially introducing lab el inconsistencies that degrade signed predictions. F or am biguities in annotations of handedness, see the Supplementary Materials. Con tact Segmen tation T able 3 demonstrates that our w eakly sup ervised mo del achiev es comp etitiv e spatial segmentation p erformance across all three datasets. On VISOR, our mo del slightly trails 100DOH, which is exp ected given that 1) the 100DOH mo del relies on SAM3 [ 5 ], a p o w erful segmentation mo del trained o ver Internet-scale data, and 2) the 100DOH mo del is fully trained on 14 Dessalene et al. the EPIC-VISOR training split while our mo del is deploy ed zero-shot. Despite this disadv an tage, the gap is narrow. Our model correctly identifies hand side, av oids confusing proximit y for con tact, and handles simultaneous t wo-hand contacts. While 100DOH — which relies on b ounding b ox predictions follow ed by SAM [ 5 ] — pro duces complete but o ccasionally ov er-confident masks of the wrong ob ject, our end-to-end mo del more consisten tly identifies the correct contacted ob ject, sometimes at the cost of partial co verage. See Figure 7 for examples. A ction Represen tation Learning T able 1 reveals tw o consistent trends across all four do wnstream benchmarks across Hiera [ 31 ] and EgoVideo [ 28 ] mo dels. F or c e pr etr aining impr oves fr ozen r epr esentations mor e than fine-tune d ones. P erformance gains are consistently larger in the frozen-ev al setting than under end- to-end fine-tuning. This indicates that force-aw are features are more immediately transferable than conv en tional appearance-based pretraining w eights, and sugges ts that force sup ervision instills a qualitatively different represen tational prior. Gains ar e lar gest on smal ler downstr e am datasets. The four ev aluation datasets span a wide range of sizes (Meccano smallest, Ego-Exo4D second smallest, EPIC Kitc hens second largest, and SomethingSomething-V2 largest), and the relative p erformance b enefit of force pretraining is most pronounc ed at the smaller end of this sp ectrum. This is consisten t with the hypothesis that ph ysically grounded represen tations reduce the amount of lab eled downstream data needed to learn effectiv e action classifiers — a practically v aluable prop ert y giv en the cost of large-scale action annotation. Limitations. On the hardw are side, rubb er gel pad moun ts w ere uncom- fortable for extended wear, individual sensors w ere sometimes unreliable due to mec hanical drift and b ending, and the sensors obstruct the fingertips during tasks requiring fine dexterity - limitations that could b e addressed by mo ving to abov e-site sensing suc h as wrist-mounted EMG devices. On the algorithmic side, our pseudolabel generation relies on ob ject motion, meaning w e cannot segmen t stationary contacted ob jects suc h as tables or heavy appliances, whic h will require cues b ey ond motion to resolve. Summary F orce is the underlying cause that drives physical interaction, it is a critical primitive for ph ysical action understanding. W e release FEEL the largest ego cen tric force–video dataset, capturing the physical causes - not just the visual effects - of hand-ob ject manipulation. W e empirically demons trate the utilit y of feel ov er b oth Contact Understanding, and Action Representation Learning without any need need for manual action lab el or ob ject segmentation annotations. W e hop e FEEL serv es as a foundation for future work spanning finer-grained hand understanding, force-aw are visuomotor control, and manipulation policy learning from human video; and that as wearable sensing hardw are contin ues to impro ve in form factor and comfort, the communit y will scale datasets like FEEL to further close the gap b et ween the richness of physical interaction and what can b e observed from video alone — with applications reaching from rob otics and h uman-rob ot in teraction to virtual and augmented reality . FEEL 15 References 1. A deniji, A., Chen, Z., Liu, V., Pattabiraman, V., Bhirangi, R., Haldar, S., Abb eel, P ., Pinto, L.: F eel the force: Contact-driv en learning from h umans. arXiv preprin t arXiv:2506.01944 (2025) 2. Bansal, S., W ra y , M., Damen, D.: Hoi-ref: Hand-ob ject interaction referral in ego cen tric vision. arXiv preprint arXiv:2404.09933 (2024) 3. Bo c hko vskii, A., Delauno y , A., Germain, H., Santos, M., Zhou, Y., Ric hter, S.R., K oltun, V.: Depth pro: Sharp mono cular metric depth in less than a second. arXiv preprin t arXiv:2410.02073 (2024) 4. Brahm bhatt, S., T ang, C., T wigg, C.D., Kemp, C.C., Hays, J.: Contactpose: A dataset of grasps with ob ject contact and hand pose. In: Europ ean Conference on Computer Vision. pp. 361–378. Springer (2020) 5. Carion, N., Gustafson, L., Hu, Y.T., Debnath, S., Hu, R., Suris, D., Ryali, C., Alw ala, K.V., Khedr, H., Huang, A., et al.: Sam 3: Segment anything with concepts. arXiv preprint arXiv:2511.16719 (2025) 6. Carreira, J., Zisserman, A.: Quo v adis, action recognition? a new mo del and the kinetics dataset. In: proceedings of the IEEE Conference on Computer Vision and P attern Recognition. pp. 6299–6308 (2017) 7. Chen, Y., Dwivedi, S.K. , Black, M.J., T zionas, D.: Detecting human-ob ject contact in images. In: Proceedings of the IEEE/CVF Conference on Computer Vision and P attern Recognition. pp. 17100–17110 (2023) 8. Cheng, T., Shan, D., Hassen, A., Higgins, R., F ouhey , D.: T o wards a richer 2d understanding of hands at scale. Adv ances in Neural Information Processing Systems 36 , 30453–30465 (2023) 9. Chi, H.G., Barreiros, J., Mercat, J., Ramani, K., Kollar, T.: Multi-mo dal represen- tation learning with tactile data. In: 2024 IEEE/RSJ International Conference on In telligent Rob ots and Systems (IR OS). pp. 9660–9667. IEEE (2024) 10. Darkhalil, A., Shan, D., Zhu, B., Ma, J., Kar, A., Higgins, R., Fidler, S., F ouhey , D., Damen, D.: Epic-kitc hens visor benchmark: Video segmentations and ob ject relations. Adv ances in Neural Information Pro cessing Systems 35 , 13745–13758 (2022) 11. Da ve, V., Lygerakis, F., Ruec kert, E.: Multimodal visual-tactile representation learning through self-sup ervised contrastiv e pre-training. In: 2024 IEEE Interna- tional Conference on Rob otics and Automation (ICRA). pp. 8013–8020. IEEE (2024) 12. Deng, J., Dong, W., So c her, R., Li, L.J., Li, K., F ei-F ei, L.: Imagenet: A large-scale hierarc hical image database. In: 2009 IEEE conference on computer vision and pattern recognition. pp. 248–255. Ieee (2009) 13. Dessalene, E., Dev ara j, C., Ma ynord, M., F ermüller, C., Aloimonos, Y.: F orecasting action through contact representations from first p erson video. IEEE T ransactions on Pattern Analysis and Mac hine Intelligence 45 (6), 6703–6714 (2021) 14. Ehsani, K., T ulsiani, S., Gupta, S., F arhadi, A., Gupta, A.: Use the force, luke! learning to predict ph ysical forces by simulating effects. In: Pro ceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 224–233 (2020) 15. Engel, J., Somasundaram, K., Goesele, M., Sun, A., Gamino, A., T urner, A., T alattof, A., Y uan, A., Souti, B., Meredith, B., et al.: Pro ject aria: A new tool for ego cen tric multi-modal ai research. arXiv preprint arXiv:2308.13561 (2023) 16 Dessalene et al. 16. F an, Z., T aheri, O., T zionas, D., K o cabas, M., Kaufmann, M., Black, M.J., Hilliges, O.: Arctic: A dataset for dexterous bimanual hand-ob ject manipulation. In: Pro- ceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 12943–12954 (2023) 17. F ermüller, C., W ang, F., Y ang, Y., Zamp ogiannis, K., Zhang, Y., Barranco, F., Pfeiffer, M.: Prediction of manipulation actions. International Journal of Computer Vision 126 (2), 358–374 (2018) 18. Grauman, K., W estbury , A., Byrne, E., Cha vis, Z., F urnari, A., Girdhar, R., Ham burger, J., Jiang, H., Liu, M., Liu, X., et al.: Ego4d: Around the world in 3,000 hours of ego cen tric video. In: Pro ceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 18995–19012 (2022) 19. Higgins, R.E., F ouhey , D.F.: Mov es: Manipulated ob jects in video enable segmen- tation. In: Pro ceedings of the IEEE/CVF Conference on Computer Vision and P attern Recognition. pp. 6334–6343 (2023) 20. Leonardi, R., F urnari, A., Ragusa, F., F arinella, G.M.: Are synthetic data useful for ego cen tric hand-ob ject in teraction detection? In: European Conference on Computer Vision. pp. 36–54. Springer (2024) 21. Li, Z., Sedlar, J., Carpentier, J., Laptev, I., Mansard, N., Sivic, J.: Estimating 3d motion and forces of p erson-ob ject interactions from mono cular video. In: Pro ceed- ings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 8640–8649 (2019) 22. Lin, F., Price, B., Martinez, T.: Ego2hands: A dataset for ego cen tric tw o-hand segmen tation and detection. arXiv preprint arXiv:2011.07252 (2020) 23. Liu, S., T ripathi, S., Ma jumdar, S., W ang, X.: Joint hand motion and interaction hotsp ots prediction from egocentric videos. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 3282–3292 (2022) 24. Liu, Y., Liu, Y., Jiang, C., Lyu, K., W an, W., Shen, H., Liang, B., F u, Z., W ang, H., Yi, L.: Hoi4d: A 4d ego centric dataset for category-level human-ob ject interaction. In: Pro ceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 21013–21022 (2022) 25. Luong, Q.T., Deriche, R., F augeras, O., Papadopoulo, T.: On determining the fundamen tal matrix: Analysis of different metho ds and exp erimental results. Ph.D. thesis, Inria (1993) 26. Narasimhasw amy , S., Nguy en, T., Nguyen, M.H.: Detecting hands and recognizing ph ysical contact in the wild. Adv ances in neural information pro cessing systems 33 , 7841–7851 (2020) 27. P an, C., W ang, C., Qi, H., Liu, Z., Bharadh wa j, H., Sharma, A., W u, T., Shi, G., Malik, J., Hogan, F.: Spider: Scalable physics-informed dexterous retargeting. arXiv preprin t arXiv:2511.09484 (2025) 28. P ei, B., Chen, G., Xu, J., He, Y., Liu, Y., Pan, K., Huang, Y., W ang, Y., Lu, T., W ang, L., et al.: Egovideo: Exploring egocentric foundation mo del and downstream adaptation. arXiv preprint arXiv:2406.18070 (2024) 29. Pham, T.H., Kyriazis, N., Argyros, A.A., Kheddar, A.: Hand-ob ject contact force estimation from markerless visual tracking. IEEE transactions on pattern analysis and machine intelligence 40 (12), 2883–2896 (2017) 30. Ranftl, R., Bo c hko vskiy , A., Koltun, V.: Vision transformers for dense prediction. In: Pro ceedings of the IEEE/CVF international conference on computer vision. pp. 12179–12188 (2021) 31. Ry ali, C., Hu, Y.T., Boly a, D., W ei, C., F an, H., Huang, P .Y., Aggarw al, V., Cho wdhury , A., Poursaeed, O., Hoffman, J., et al.: Hiera: A hierarchical vision FEEL 17 transformer without the b ells-and-whistles. In: International conference on machine learning. pp. 29441–29454. PMLR (2023) 32. Shan, D., Geng, J., Shu, M., F ouhey , D.F.: Understanding human hands in contact at internet scale. In: Pro ceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 9869–9878 (2020) 33. Shan, D., Higgins, R., F ouhey , D.: Cohesiv: Contrastiv e ob ject and hand embedding segmen tation in video. A dv ances in neural information processing systems 34 , 5898–5909 (2021) 34. Shiota, T., T ak agi, M., Kumagai, K., Seshimo, H., Aono, Y.: Egocentric action recognition by capturing hand-ob ject contact and ob ject state. In: Pro ceedings of the IEEE/CVF Win ter Conference on Applications of Computer Vision. pp. 6541–6551 (2024) 35. Siméoni, O., V o, H.V., Seitzer, M., Baldassarre, F., Oquab, M., Jose, C., Khali- do v, V., Szafraniec, M., Yi, S., Ramamonjisoa, M., et al.: Dino v3. arXiv preprint arXiv:2508.10104 (2025) 36. Singh, H.G., Lo quercio, A., Sferrazza, C., W u, J., Qi, H., Abbeel, P ., Malik, J.: Hand- ob ject interaction pretraining from videos. In: 2025 IEEE International Conference on Rob otics and Automation (ICRA). pp. 3352–3360. IEEE (2025) 37. Siv akumar, A., Shaw, K., Pathak, D.: Rob otic telekinesis: Learning a rob otic hand imitator by watc hing humans on youtube. arXiv preprin t arXiv:2202.10448 (2022) 38. Song, Y.R., Li, J., F u, R., Murphy , D., Zhou, K., Shiv, R., Li, Y., Xiong, H., Owens, C.E., Du, Y., et al.: Opentouc h: Bringing full-hand touch to real-world interaction. arXiv preprint arXiv:2512.16842 (2025) 39. T aheri, O., Ghorbani, N., Blac k, M.J., T zionas, D.: Grab: A dataset of whole- b ody human grasping of ob jects. In: European conference on computer vision. pp. 581–600. Springer (2020) 40. T ripathi, S., Chatterjee, A., Passy , J.C., Yi, H., T zionas, D., Black, M.J.: Deco: Dense estimation of 3d human-scene contact in the wild. In: Pro ceedings of the IEEE/CVF International Conference on Computer Vision. pp. 8001–8013 (2023) 41. W ang, Y., Lipson, L., Deng, J.: Sea-raft: Simple, efficient, accurate raft for optical flo w. In: Europ ean Conference on Computer Vision. pp. 36–54. Springer (2024) 42. Y u, K., Han, Y., W ang, Q., Saxena, V., Xu, D., Zhao, Y.: Mimictouch: Leveraging m ulti-mo dal h uman tactile demonstrations for contact-ric h manipulation. arXiv preprin t arXiv:2310.16917 (2023) 43. Zhang, X., Bhatnagar, B.L., Starke, S., Petro v, I., Guzov, V., Dhamo, H., Pérez- P ellitero, E., Pons-Moll, G.: F orce: Physics-a ware human-ob ject interaction. In: 2025 International Conference on 3D Vision (3DV). pp. 1473–1486. IEEE (2025) 44. Zh u, Y., Jiang, C., Zhao, Y., T erzop oulos, D., Zhu, S.C.: Inferring forces and learning human utilities from videos. In: Pro ceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 3823–3833 (2016)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment