Differential Privacy for Network Connectedness Indices

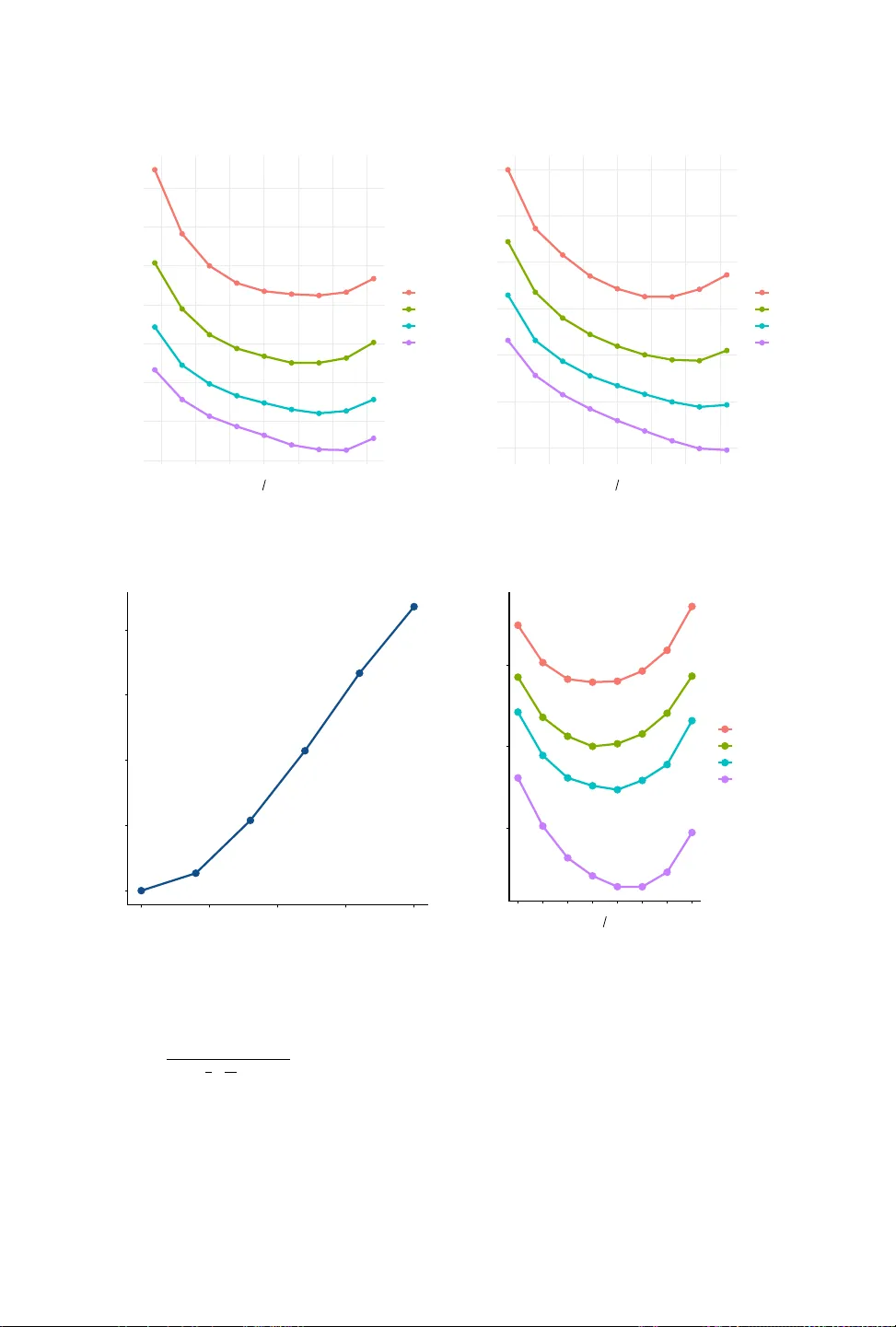

Researchers increasingly use data on social and economic networks to study a range of social science questions, but releasing statistics derived from networks can raise significant privacy concerns. We show how to release network connectedness indice…

Authors: Tom A. Rutter, Yuxin Liu, M. Amin Rahimian