Detection of Autonomous Shuttles in Urban Traffic Images Using Adaptive Residual Context

The progressive automation of transport promises to enhance safety and sustainability through shared mobility. Like other vehicles and road users, and even more so for such a new technology, it requires monitoring to understand how it interacts in tr…

Authors: Mohamed Aziz Younes, Nicolas Saunier, Guillaume-Alex

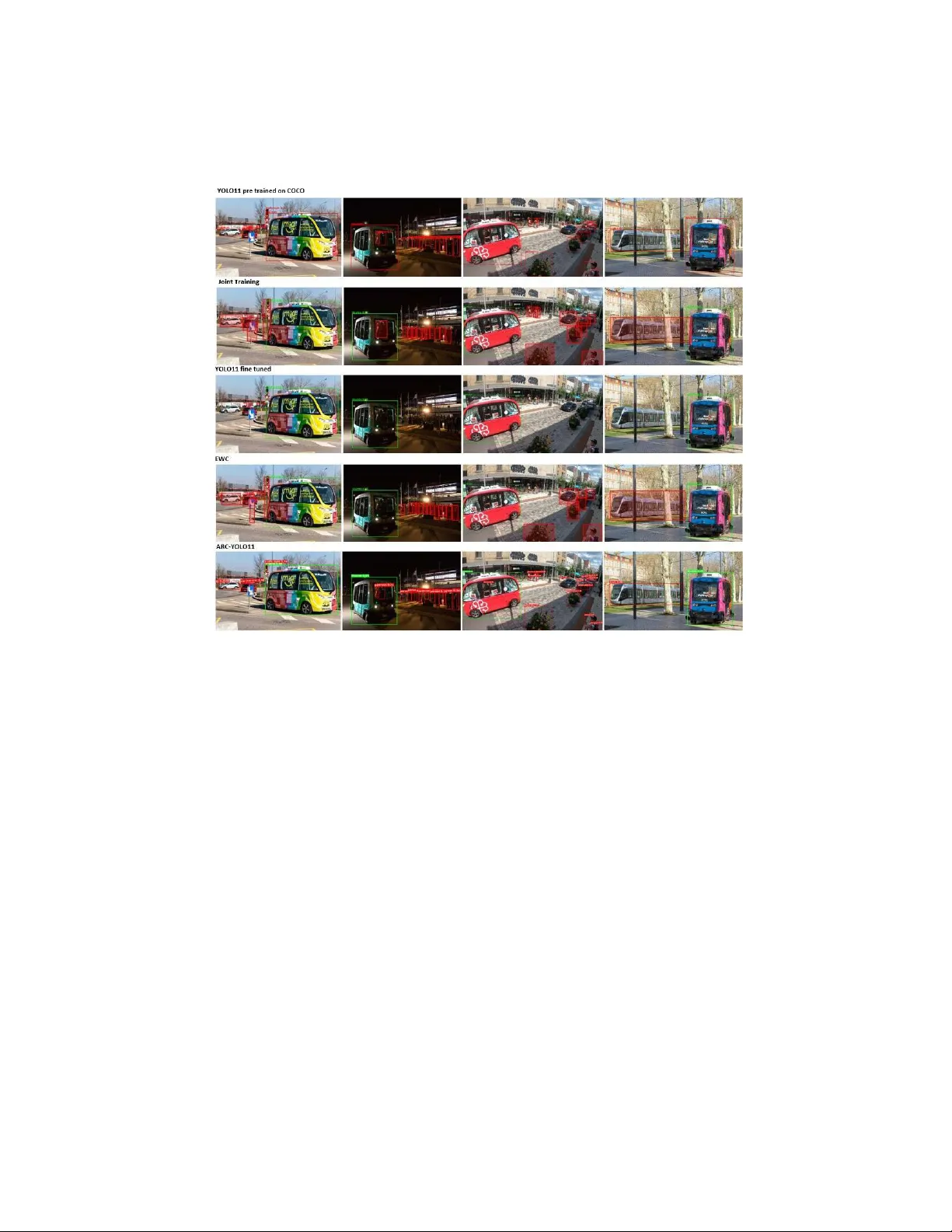

Detection of Autonomous Sh uttles in Urban T raffic Images Using A daptiv e Residual Con text Mohamed Aziz Y ounes 1 [0009-0005-8691-5678] , Nicolas Saunier 1 [0000-0003-0218-7932] , and Guillaume-Alexandre Bilo deau 1 [0000-0003-3227-5060] P olytechnique Mon tréal, Mon tréal, Canada {mohamed-aziz-2.younes,nicolas.saunier,gabilodeau}@polymtl.ca Abstract. The progressive automation of transport promises to en- hance safety and sustainability through shared mobility . Like other ve- hicles and road users, and even more so for such a new technology , it requires monitoring to understand ho w it interacts in traffic and to ev al- uate its safety . This can be done with fixed cameras and video ob ject detection. Ho wev er, the addition of new detection targets generally re- quires a fine-tuning approac h for regular detection methods. Unfortu- nately , this implemen tation strategy will lead to a phenomenon known as catastrophic forgetting, which causes a degradation in scene understand- ing. In road safety applications, preserving contextual scene knowledge is of the utmost imp ortance for protecting road users. W e introduce the A daptive R esidual Context (ARC) architecture to address this. ARC links a frozen context branch and trainable task-sp ecific branches through a Context-Guide d Bridge , utilizing atten tion to transfer spatial features while preserving pre-trained representations. Exp erimen ts on a custom dataset show that ARC matches fine-tuned baselines while significantly impro ving knowledge retention, offering a data-efficient solution to add new vehicle categories for complex urban en vironments. Keyw ords: Ob ject detection · T ransfer learning · Con tinual learning · Autonomous shuttles · Catastrophic forgetting · YOLO 1 In tro duction While driv er assistance technologies and driverless vehicles hold great promise for transp ortation, to make traffic safer and more efficient, the introduction of new agents, such as autonomous shuttles (Figure 1) ma y hav e unforeseen conse- quences. T raffic must therefore b e monitored to understand the impact of new automated vehicles. Video sensors are commonly used for traffic monitoring, but can generally not detect new categories of ob jects and vehicles if the computer vision systems ha ve not b een trained with such data. Unlike regular v ehicles, automated sh uttles op erate in close pro ximit y to p edestrians. They ha ve an unfamiliar visual fo otprin t, are frequen tly o ccluded, and are often not part of 2 M. A. Y ounes et al. Fig. 1. An example of the ARC model outputting joint detections of the original dataset classes (in red) and a new task sp ecific class (in green). existing annotated data. Applying detectors from the YOLO family [10,4] to sp ecialized tasks t ypically requires fine-tuning, which often leads to catastrophic forgetting [8]. In this scenario, optimizing for a new class, say automated shut- tles, causes the mo del to o verwrite or distort the weigh ts that were previously learned for general ob ject detection. Existing approac hes, such as joint training and regularization-based metho ds, can av oid this issue, but they come with sig- nifican t trade-offs: high storage demands, limited capacity to learn new features, or degraded p erformance on the original tasks. T o address these challenges, we prop ose the A daptive Residual Con text (ARC) architecture. ARC extends the YOLO framework with multiple heads: a frozen generalist head preserves knowl- edge from the large-scale pretraining, while trainable sp ecialist heads fo cus on the new classes. A Con text-Guided Bridge facilitates the transfer of spatial and seman tic cues from the frozen backbone to the sp ecialist branches. This allows the netw ork to use high-qualit y , pretrained representations without re-tuning them, thereb y preven ting catastrophic forgetting. W e ev aluate ARC against conv en tional adaptation strategies on a custom dataset with the new sh uttle class. Figure 1 shows an example for our mo del output where it successfully distinguishes b et ween the new class (in green) and the original classes (in red). More globally , results show that fine-tuning meth- o ds are no longer necessary to main tain p erformance, ARC ac hieves comparable detection while mitigating catastrophic forgetting. Our con tributions are as fol- lo ws: 1. W e in tro duce a multiple head architecture that enables task-sp ecific special- ization while preserving pre-trained kno wledge with a frozen generalist head and trainable sp ecialist heads; 2. W e propose an adaptiv e residual atten tion mec hanism that injects spatial con text into the sp ecialist heads without complex temp oral inputs; 3. W e show that AR C matches the p erformance of fully fine-tuned mo dels while main taining the integrit y of original features. A daptive Residual Context for Shuttle Detection 3 2 Related W ork 2.1 Ob ject Detection in Shared Autonomous Mobility Autonomous sh uttles are an unaddressed category in the literature. This absence of study is mainly b ecause of its rarity and their currently limited op erational deplo yment, which has made the collection of sufficient data for analysis hard. F urthermore, existing research prioritizes ego cen tric na vigation [2] or in-vehicle moun ted detections and largely ov erlo oks allo cen tric detection from fixed sensors t ypically used for traffic monitoring. 2.2 Con tinual Learning and Catastrophic F orgetting Con tinual learning, the ability to acquire new tasks without degrading p erfor- mance on previously learned ones, is hindered by catastrophic forgetting. Exist- ing solutions generally fall into three categories: regularization, rehearsal, and arc hitectural strategies. Regularization-based metho ds constrain parameter up- dates to preserve important weigh ts. Elastic W eigh t Consolidation (EW C) [5] estimates parameter importance via the Fisher Information Matrix, whereas Synaptic Intelligence (SI) [14] accumulates path in tegrals of parameter up dates to p enalize changes to weigh ts that con tributed significan tly to the drop in loss. Similarly , Memory A w are Synapses (MAS) [1] calculates imp ortance based on the sensitivity of the learned function outputs rather than the loss magnitude. Rehearsal-based approaches av oid forgetting by replaying a subset of old data or approximating it. iCaRL [9] combines a nearest-mean-of-exemplars classifier with distillation, while Gradien t Episo dic Memory (GEM) [7] pro jects gradien ts during training to ensure they do not increase the loss on stored episodic mem- ories. Although effective, these metho ds incur high storage costs. Alternativ ely , parameter-isolation metho ds lik e Progressiv e Neural Netw orks [11] gro w the ar- c hitecture for each new task to preven t in terference en tirely . Distillation-based metho ds, suc h as Learning without F orgetting (LwF) [6], offer a compromise by effectiv ely using the previous mo del as a teacher to regularize the curren t mo del output on new data. Our approach aligns with distillation strategies but uniquely enforces preserv ation at the architectural lev el to address the plasticity-stabilit y dilemma. 2.3 A ttention-Guided F eature F usion Merging frozen and trainable feature streams often results in feature im bal- ance, where activ ations from the frozen backbone dominate those of the train- able head. A ttention mechanisms help alleviate this issue b y rew eighting feature imp ortance. The Con volutional Blo c k A ttention Mo dule (CBAM) [13] applies c hannel atten tion using both max- and a v erage-p ooling follo wed b y a m ulti- la yer p erceptron to mo del inter-c hannel dep endencies, and then applies spatial atten tion to highlight informative regions. CSPNet [12] mitigates gradient re- dundancy by partitioning feature maps so that only part of the features un- dergo dens e transformations, impro ving gradient diversit y and computational 4 M. A. Y ounes et al. Fig. 2. The ARC arc hitecture: A frozen YOLO11 branch transfers features to trainable task-sp ecific branches via a Con text-Guided Bridge. The bridge employs sequential Channel Atten tion and Spatial Gating, fused via a learnable residual connection to enhance target detection. Several task sp ecific branches can b e added to handle new classes efficiency . How ev er, these approaches fo cus on static feature refinement and do not model the teac her–student in teractions fundamen tal to transfer learn- ing [3]. T o address this gap, w e introduce a Con text-Guided Bridge in which the frozen bac kb one generates spatial gating signals that explicitly guide the trainable heads. 3 Metho dology W e prop ose the Adaptiv e Residual Con text (ARC) arc hitecture. This is a detection framew ork designed with the limitations of conv en tional fine-tuning in mind by separating knowledge preserv ation from task-sp ecific learning. The mo del is based on the YOLO11 backbone [4] and adds a structural modification at the detection head level. As illustrated in Figure 2, the original detection head is replaced b y a dual-branch configuration comp osed of t wo streams: 1. F rozen Con text Branc h: A direct copy of the pre-trained detection head with all parameters frozen. This branch preserv es high-lev el seman tic repre- sen tations learned during large-scale pre-training and acts as a stable source of information. It outputs the original dataset classes. 2. T ask-Specific Branch: One or sev eral trainable detection heads initialized for the target task. This branc h learns task-sp ecific geometric and related visual cues. A daptive Residual Context for Shuttle Detection 5 Con t e xt F ea tu r es ( I n p u t fr o m Fr o z en Br anch) G lob al A v g P ool Gl o b al Ma x P o o l MLP Sigmo id Sigmo id Con v2d R eL U Pr oje ct ion ( R es iz e) C W H W x H x C Ch ann el W eigh t R e fined F ea tu r es ( Ch ann el - wise ) Spa tia l Hea tmap Sc al ed Hin t Out p u t t o T r ai n able Br anch Ch ann el A t t en tion M odu le Spa tia l Ga ting Modu le Pr o jec tio n & Scali n g A lph a ( Learn able Sc al e) ctx Fig. 3. Inside the Context-Guided Bridge. This bridge utilizes Channel A ttention and Spatial Gating to refine features before injecting them into the task-sp ecific stream through a learnable residual connection Alpha ( α ). W e implemen t AR C, replacing the original detection head in memory to reuse backbone weigh ts. During inference, w e apply a veto lo gic to reduce false p ositiv es: task-sp ecific predictions are suppressed if the frozen context branch detects a conflicting high-confidence ob ject (IoU > 0 . 5 ) in the same region. This preven ts the mo del from hallucinating shuttles in ph ysically implausible lo cations. T o transfer useful con textual information from the frozen branc h to the task- sp ecific branc h, we introduce a Con text-Guided Bridge illustrated in Fig- ure 3. This module performs selectiv e feature distillation through a residual con- nection, allowing the task-specific heads to b enefit from contextual cues while main taining learning. Let F in ∈ R C × H × W denote the input feature map from the bac kb one, and let X ctx represen t the corresp onding feature map pro duced by the frozen con text branch. The bridge consists of three sequential comp onen ts: c hannel attention, spatial gating, and resid ual pro jection in to the task-sp ecific branc h. Channel A ttention The channel attention mo dule determines which feature c hannels con vey the most relev an t seman tic information. Spatial information from X ctx is aggregated using a verage p o oling and max p ooling: z avg = P avg ( X ctx ) , z max = P max ( X ctx ) . (1) Both descriptors are passed through a shared multi-la yer p erceptron (MLP) and com bined to form a channel-wise attention map: 6 M. A. Y ounes et al. M c = σ ( MLP ( z avg ) + MLP ( z max )) , (2) where σ ( · ) denotes the sigmoid activ ation. The con text features are then refined via elemen t-wise multiplication: X ′ ctx = M c ⊗ X ctx . (3) Spatial Gating While channel atten tion fo cuses on what to emphasize, the spatial gating mo dule identifies wher e salient information is located. The refined con text features are first compressed along the c hannel dimension using a 1 × 1 con volution, follow ed b y a 7 × 7 con volution to generate a spatial attention map: M s = σ Con v 7 × 7 Con v 1 × 1 ( X ′ ctx ) . (4) This spatial mask highligh ts regions of interest while suppressing background activ ations. Residual Pro ject in to the T ask-specific Branc h The final step pro jects the refined con textual features into the feature space of the task-sp ecific branc h and injects them via a residual connection. A learnable scaling parameter α con trols the influence of the con textual signal: F enhanced = F in + α · Pro j ( M s ⊗ X ′ ctx ) . (5) The enhanced feature map F enhanced is then forwarded to each task-sp ecific detection head. This design guides the sp ecialized heads tow ard semantically meaningful regions while preserving the flexibilit y to adapt to the target task. 4 Exp erimen ts This section outlines the exp erimen tal setup and the ev aluation of the prop osed mo del. Our exp erimen ts fo cus on one new class for automated shuttles. 4.1 Dataset and Ev aluation Metrics T o address the scarcity of a v ailable data for these v ehicles, w e created a custom dataset that includes public domain videos and images from y outub e and in ad- dition to videos recorded at lo cal intersections in Montreal, Canada from existing datasets. W e also applied a strict threshold to identify and discard near-identical frames. The final version comprises 3,120 images, manually annotated via the Rob oflo w platform (see samples in Figure 4). T o improv e mo del generalization, w e applied v arious augmen tations during training. As shown in Figure 5. W e ev aluate detection p erformance using standard COCO b enc hmark met- rics, rep orting mAP@0.5 and mAP@0.5:0.95 alongside precision and recall. T o explicitly quantify the prev ention of catastrophic forgetting, w e utilize a F orget- ting Measure, defined as the absolute decline in mAP on the original base classes (e.g., COCO) after the mo del has b een trained on the target sh uttle dataset. A daptive Residual Context for Shuttle Detection 7 Fig. 4. Annotated samples from our custom dataset, sho wcasing diverse environmen ts; p oin ts of views, and ligh ting conditions. 4.2 Implemen tation Details and Mo del T raining Our mo dels were implemented using Python 3.11 and the PyT orch library , using the Ultr alytics library for mo del construction and Op enCV for pre-processing. All exp erimen ts were done on a High-P erformance Computing (HPC) cluster managed by CCDB - Digital Researc h Alliance of Canada. The training infras- tructure consisted of a no de equipp ed with a single NVIDIA H100 T ensor Core GPU and 64GB of system RAM. W e adopted the YOLO11 architecture, pre- trained on the COCO dataset, as the structural foundation for feature extrac- tion. In our prop osed ARC framework, this bac kb one is extended into parallel branc hes: a frozen con text branc h, designed to retain pre-learned representations of traffic scenes without degradation, and trainable task branches, dedicated to learning the specific features of the new targets. All input images are resized to 640 × 640 pixels. W e fine-tuned the task sp ecific branc h while keeping the con- text branc h frozen. W e used SGD (lr= 0 . 01 , momen tum= 0 . 937 , decay= 0 . 0005 ) for 100 epo c hs with a batch size of 8. A 3-epo c h linear w arm-up w as applied, with mosaic and mixup augmen tations disabled during the final 10 epo c hs to refine distribution alignment. The data was devided in to train (80%), testing (10%) and v alidation (10%). 4.3 Comparison with Baseline Solutions T able 1 compares our proposed ARC framew ork against standard adaptation strategies. W e observe the limitations of the pre-trained YOLO baseline, which lac ks a sp ecific representation for the autonomous sh uttle class. The fine-tuning approac h successfully adapts to the shuttle domain but it suffers from catas- trophic forgetting. As training progresses and the num ber of ep ochs increases, 8 M. A. Y ounes et al. 0 1 2 3 4 5 6 7 Number of Shuttles 0 500 1000 1500 2000 Image Count Instances per Image Distribution 0.0 0.2 0.4 0.6 0.8 1.0 Nor malized Ar ea (W * H) 0 100 200 300 400 F r equency BBo x Size (Nor malized Ar ea) Distribution 0.0 0.2 0.4 0.6 0.8 1.0 W idth 0.0 0.2 0.4 0.6 0.8 1.0 Height BBo x W idth vs Height (Nor malized) 0.0 0.2 0.4 0.6 0.8 1.0 Nor malized Center X 0.0 0.2 0.4 0.6 0.8 1.0 Nor malized Center Y Spatial Distribution of Centers 0.0 0.2 0.4 0.6 0.8 1.0 W idth 0 25 50 75 100 125 150 175 BBo x Nor malized W idth Distribution 0.2 0.4 0.6 0.8 1.0 Height 0 25 50 75 100 125 150 175 BBo x Nor malized Height Distribution 20 40 60 80 100 Count Dataset Characteristics (Autonomous Shuttle) Fig. 5. Statistical analysis of the dataset. T op: Instance counts, normalized areas (scale v ariabilit y), and aspect ratios. Bottom: Spatial distribution of box centers (spatial bias) and width/height histograms. T able 1. Quan titative comparison of adaptation strategies. Bold indicates best results, while Underlined indicates second b est result. Metho d P aradigm Sh uttle mAP COCO mAP F orgetting Pre-trained YOLO old task 0.0% 63.7% N.A YOLO11 Fine-tuning 47.4% 0.0% -100% Joint T raining Multi-task 47.6% 60.2% N/A EWC [5] Regularization 40.3% 51.8% -11.9% LwF [6] Distillation 46.8% 59.0% -3.7% AR C-YOLO11 Structural F reeze 47.3% 62.6% -1.1% the mo del weigh ts are aggressively up dated to minimize the new task loss. Joint training is computationally prohibitiv e for real-world applications where retain- ing massive original datasets is not feasible. Regularization-based metho ds like EW C and distillation-based metho ds lik e LwF offer a compromise. How ever, they struggle to match the plasticity of the fine-tuned baseline, often suppressing the learning of the new task to protect the old weigh ts. In contrast, ARC achiev es the most effectiv e balance. By anchoring the feature extraction, it preven ts the destructiv e weigh t up dates seen in standard fine-tuning. Our approach matches the detection capability of the fine-tuned mo del on the shuttle task while pre- serving the original COCO p erformance significantly b etter than EWC or LwF. Figure 6 giv es a visualization of the outputs of ev ery metho d used, w e can notice that AR C is capable to distinguish b et ween the dataset class and the old COCO classes in different conditions achieving the results seen in models lik e EWC and A daptive Residual Context for Shuttle Detection 9 Fig. 6. Side b y side comparaison betw een the methodes seen in table 1 with detections of the original Classes (in red) and the new class (in green). the joint-training approach, unlike the fine-tuned model whic h has completely forgotten the COCO dataset classes after 100 ep ochs. 5 Conclusion W e introduced the Adaptiv e Residual Context architecture to solve the stabilit y- plasticit y dilemma in autonomous sh uttle p erception. By decoupling semantic preserv ation from task-sp ecific adaptation, ARC decreases the catastrophic for- getting of fine-tuning while a voiding the inefficiencies of joint training. Although v alidated on sh uttles, the ARC core principle is task-agnostic, offer- ing a scalable path wa y for learning new classes without retraining the backbone. F uture work will extend the Context-Guided Bridge into the temp oral domain, ev olving the curren t framew ork into a Sp atio-T emp or al Gating mechanism to lev erage motion patterns for enhanced tracking in dynamic environmen ts. 10 M. A. Y ounes et al. A c kno wledgement The authors gratefully ac knowledge the financial supp ort of MIT ACS through its Globalink program, and the computing supp ort provided by the Digital Researc h Alliance of Canada. References 1. Aljundi, R., Babiloni, F., Elhosein y , M., Rohrbach, M., T uytelaars, T.: Memory a ware synapses: Learning what (not) to forget. In: Pro ceedings of the Europ ean Conference on Computer Vision (ECCV). pp. 139–154 (2018) 2. Apurv, K., Tian, R., Sherony , R.: Detection of e-sco oter riders in naturalistic scenes. arXiv preprint arXiv:2111.14060 (2021) 3. Hin ton, G., Viny als, O., Dean, J.: Distilling the knowledge in a neural netw ork. arXiv preprint arXiv:1503.02531 (2015) 4. Jo c her, G., Chaurasia, A., Qiu, J.: Ultralytics YOLOv8 (2023), https://github. com/ultralytics/ultralytics 5. Kirkpatric k, J., P ascanu, R., Rabino witz, N., V eness, J., Desjardins, G., Rusu, A.A., Milan, K., Quan, J., Ramalho, T., Grabsk a-Barwinsk a, A., Hassabis, D., Clopath, C., Kumaran, D., Hadsell, R.: Overcoming catastrophic forgetting in neu- ral netw orks. Pro ceedings of the National Academ y of Sciences 114 (13), 3521–3526 (2017). https://doi.org/10.1073/pnas.1611835114 6. Li, Z., Hoiem, D.: Learning without forgetting. IEEE T ransactions on P attern Analysis and Machine Intelligence 40 (12), 2935–2947 (2017) 7. Lop ez-P az, D., Ranzato, M.: Gradient episo dic memory for contin ual learning. In: A dv ances in Neural Information Pro cessing Systems (NeurIPS). vol. 30 (2017) 8. McClosk ey , M., Cohen, N.J.: Catastrophic in terference in connectionist netw orks: The sequential learning problem. Psychology of Learning and Motiv ation 24 , 109– 165 (1989) 9. Rebuffi, S.A., Kolesnik o v, A., Sp erl, G., Lamp ert, C.H.: icarl: Incremental classifier and representation learning. In: Pro ceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). pp. 2001–2010 (2017) 10. Redmon, J., Divv ala, S., Girshick, R., F arhadi, A.: Y ou only lo ok once: Unified, real-time ob ject detection. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). pp. 779–788 (2016) 11. Rusu, A.A., Rabino witz, N.C., Desjardins, G., Soy er, H., Kirkpatrick, J., Ka vukcuoglu, K., P ascan u, R., Hadsell, R.: Progressiv e neural net works. arXiv preprin t arXiv:1606.04671 (2016) 12. W ang, C.Y., Liao, H.Y.M., W u, Y.H., Chen, P .Y., Hsieh, J.W., Y eh, I.H.: Cspnet: A new backbone that can enhance learning capability of cnn. In: Pro ceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) W orkshops. pp. 390–391 (2020) 13. W o o, S., Park, J., Lee, J.Y., Kw eon, I.S.: CBAM: Conv olutional blo c k attention mo dule. In: Pro ceedings of the European Conference on Computer Vision (ECCV). pp. 3–19 (2018) 14. Zenk e, F., Poole, B., Ganguli, S.: Contin ual learning through synaptic intelligence. In: International Conference on Machine Learning (ICML). pp. 3987–3995 (2017)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment