AI Evasion and Impersonation Attacks on Facial Re-Identification with Activation Map Explanations

Facial identification systems are increasingly deployed in surveillance, yet their vulnerability to adversarial evasion and impersonation attacks poses a critical risk. This paper introduces a novel framework for generating adversarial patches capa…

Authors: Noe Claudel, Weisi Guo, Yang Xing

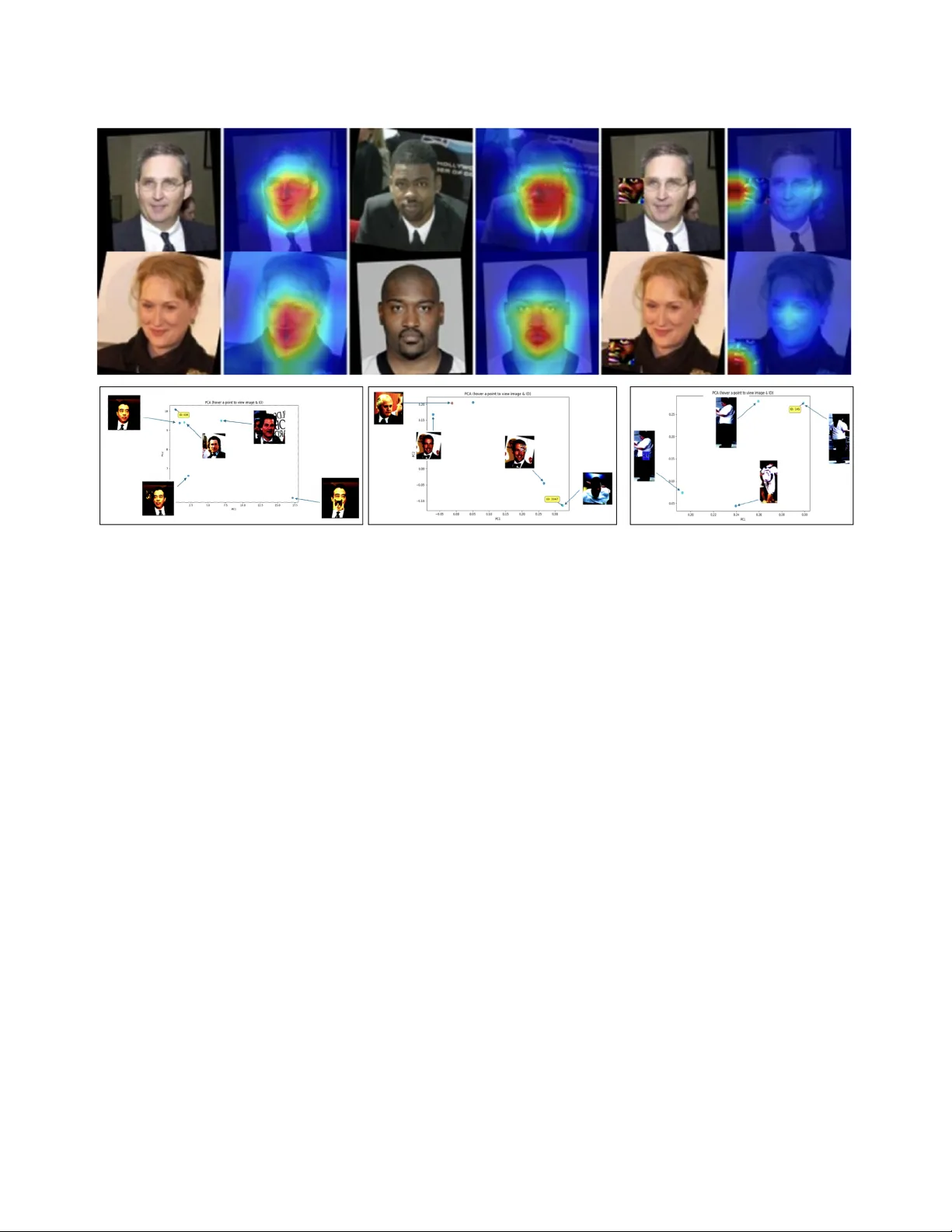

Abstract: Facial identification systems are increasingly deployed in surveillance and security applications, yet their vulnerability to adversarial evasion attacks poses a critical risk. This paper introduces a novel framework for generating adversarial patches capable of performing both evasion and impersonation attacks against deep re-identification models across non-overlapping cameras. Unlike prior approaches that require iterative optimization for each target, our method employs a conditional encoder-decoder network to synthesize adversarial patches in a single forward pass, guided by multi-scale features from source and target images. The patches are optimized with a dual adversarial objective comprising pull and push terms. To enhance imperceptibility and aid physical deployment, we further integrate naturalistic patch generation using pre-trained latent diffusion models. Experiments on standard pedestrian (Market-1501, DukeMTMC-reID) and facial recognition (CelebA-HQ, PubFig) benchmarks demonstrate the effectiveness of the proposed method. Our adversarial evasion attacks reduce mean Average Precision from 90% to 0.4% in white-box settings and from 72% to 0.4% in black-box settings, showing strong cross-model generalization. In targeted impersonation attacks, our framework achieves a success rate of 27% on CelebA-HQ, competing with other patch-based methods. We go further and use clustering of activation maps to interpret which features are most used by adversarial attacks, and we propose a pathway for future countermeasures. The results highlight the practicality of adversarial patch attacks on retrieval-based systems and underline the urgent need for robust defense strategies.

Index Terms: Adversarial AI; Cybersecurity; Surveillance

All authors are with the Centre for Assured and Connected Autonomy, Cranfield University, Bedford, UK. Corresponding author: Weisi Guo (weisi.guo@cranfield.ac.uk). This work is supported by Bedfordshire Police.

I. INTRODUCTION

Person re-identification (Re-ID) is the problem of retrieving images of the same individual captured by non-overlapping cameras. In other words, given a query person image, the system must determine whether that person appears in other camera views. Re-ID is widely used in security and surveillance, and it is challenging because it must contend with variations in viewpoint, illumination, resolution, and background content. In recent years, the development of smart city technologies has enhanced urban living by integrating such advanced systems to improve safety and efficiency.

Modern systems use deep learning, which achieves state-of-the-art performance in person re-identification. However, the robustness of deep neural networks in these contexts has become a key concern, as numerous studies have revealed their vulnerability to adversarial attacks. An adversarial attack can be categorized along several dimensions [1], [2]:

• White-box vs Black-box: In white-box attacks the adversary knows the model parameters and architecture, whereas in black-box attacks they do not.
• Training vs Inference: Poisoning attacks inject malicious examples into the training data using backdoor access, while evasion attacks perturb inputs at inference time.
• Digital vs Physical: Digital attacks are applied directly to pixel inputs, whereas physical attacks [3] assume the perturbation is printed or worn in the real world.
• Targeted vs Untargeted: In an impersonation (targeted) attack, the goal is to have the person recognized as a specific other identity, while in an evasion (untargeted) attack the goal is simply to avoid correct recognition.
• Conspicuous vs Inconspicuous: Conspicuous attacks are visually obvious and may raise suspicion.
In contrast, inconspicuous attacks are designed to blend into the natural appearance of the scene or object [4], [5].

A. Review on Evasion Attacks

The first physical adversarial attack for Re-ID, AdvPattern, was proposed by Wang et al. [6]. They generated printable patches to evade search or impersonate a target identity. Following this, Ding et al. [7] introduced a universal adversarial perturbation (MUAP) that disrupts the similarity ranking of a Re-ID model. This digital attack showed that a single perturbation pattern added to any query image can significantly degrade retrieval accuracy. Wang et al. [8] similarly attacked the similarity computation to induce incorrect rankings. Wang et al. [9] proposed using adversarial attacks as a form of target protection, crafting perturbations so that the person's feature does not match any gallery identity. Gong et al. [10] extended universal perturbations to visible-infrared cross-modality Re-ID, though with limited real-world applicability. A landmark result by Sharif et al. [11] demonstrated that printed adversarial eyeglass frames can cause high mis-recognition rates. They optimized a perturbation pattern on eyeglass frames and achieved up to 97% success in dodging attacks and 75% in impersonation attacks. In 3-dimensional or soft-body cases, stretch invariance can be added to the patch training process to account for real-world rotation and stretch transformations [3]. In summary, extensive prior work has shown that deep Re-ID and face recognition models can be misled by adversarial patches. The methods cited above motivate our approach and establish performance baselines for comparison.

B. Gap and Novelties

The current gaps in research span two areas: (1) the ability of attacks to mis-identify or impersonate a different target, especially in black-box settings, and (2) the practical deployability of these patch attacks under diverse requirements, such as a single training process, printer friendliness, and low human perceptibility (inconspicuousness). We propose a pose-invariant adversarial patch generation framework designed to mislead deep learning-based re-identification systems. Our novelties are:

1) One-Off Targeted Attack Training: We develop targeted and untargeted attacks that require only one forward pass of a patch generator network, with no new training for each new target identity. The method integrates a conditional encoder-decoder network that synthesizes patches informed by multi-scale features from both a source and a target image.
2) Improved Imperceptibility (Inconspicuousness) and Deployment Attributes: To improve the stealth and printability of the adversarial patches, we further introduce a naturalistic patch generation strategy. We introduce the perturbation in the latent space of a pretrained diffusion model to preserve realism while maintaining adversarial effectiveness.

Our innovations are rigorously evaluated on two pedestrian re-identification benchmarks (Market-1501 and DukeMTMC-reID) as well as two facial recognition datasets (PubFig and CelebA-HQ) under both white-box and black-box attack assumptions.

II. SYSTEM SETUP AND METHODOLOGY

Let $I_s \in \mathbb{R}^{H \times W \times 3}$ denote a source (probe) image containing a person whose identity is $y_s$, and let $I_t \in \mathbb{R}^{H \times W \times 3}$ denote a target gallery image of a different person with identity $y_t$. A trained ReID model $f$ maps each input image to a feature embedding $f(I) \in \mathbb{R}^{d}$. The ReID decision relies on the distance (e.g., Euclidean or cosine) between probe and gallery embeddings. Our goal is to generate a sparse, learnable patch $P \in \mathbb{R}^{h \times w \times 3}$ such that, when $P$ is pasted onto $I_s$, the resulting adversarial image

$$I_{adv} = (1 - M) \odot I_s + M \odot T(P), \tag{1}$$

fools the ReID model into matching $I_{adv}$ with the target image $I_t$ while moving it away from $I_s$. Here $\odot$ denotes element-wise multiplication, $M$ is the binary placement mask defined in Section II-B, and $T$ denotes a set of random spatial and photometric transformations applied to $P$ (e.g., affine warp, perspective, Gaussian blur) to increase robustness.

Fig. 1. Architectural overview of the adversarial patch generation workflow.

During the training phase, one or more pre-trained person re-identification or facial recognition models, called targeted models, are employed to extract feature embeddings from the adversarially perturbed images. These embeddings represent the features that the targeted model uses for matching. The generator network, which produces the patch, is optimized so that when the patch is applied to an image, the resulting embedding leads to a successful attack. To achieve this, the extracted embeddings are used to compute the adversarial loss function, which directly constitutes the training objective. A global overview of the method is shown in Figure 1.

During the inference phase, the process is more lightweight: only the trained generator is required to produce the adversarial patch. Given a source image and, if the scenario is targeted, a target image representing the desired identity, the generator creates the adversarial patch.

A. Patch Generation

We design a conditional encoder-decoder network that synthesizes $P$ based on multi-scale features from both $I_s$ and $I_t$. This design embeds target-specific pedestrian attributes into the patch, enhancing its ability to mimic gallery embeddings. The network comprises:

• Feature Extraction: A pretrained backbone (e.g., ResNet-50) encodes each image to extract deep feature maps at three spatial resolutions for source and target.
• Feature Fusion: We concatenate the highest-level source and target features along the channel dimension and reduce them to 512 channels via a 1×1 convolution.
• Up-sampling Decoder: Four sequential Up-Blocks progressively up-sample the fused feature to the patch spatial size $(h, w)$. At each stage, skip connections inject the corresponding target features to guide shape and texture synthesis. Each Up-Block consists of (i) a transposed convolution layer that up-samples the spatial dimensions of the input feature map by a factor of 2, (ii) a concatenation of the up-sampled feature map with the corresponding target feature map, and (iii) a 2D convolution layer that refines the concatenated features and learns an effective joint representation, followed by a ReLU activation and batch normalization.
• Output Head: A final 3×3 convolution followed by a tanh activation produces the RGB patch $P$, whose values lie in $[-1, 1]$.

B. Patch Embedding and Differential Blending

To paste the patch onto $I_s$, we sample a random location $(x, y)$ within the image boundaries and generate a binary mask $M$ of the same size as $I_s$, whose values are 1 inside the $h \times w$ window at $(x, y)$ and 0 elsewhere. We then blend:

$$I_{adv} = (1 - M) \odot I_s + M \odot P_{tiled}, \tag{2}$$

where $\odot$ denotes element-wise multiplication and $P_{tiled}$ is the patch broadcast to the full image size. This affine blending remains fully differentiable with respect to $P$.

C. Adversarial Objectives

1) Targeted Misidentification Attack: Using the ReID model $f$ as a fixed feature extractor, we denote the normalized embeddings of $I_s$, $I_t$, and $I_{adv}$ by $z_s, z_t, z_{adv} \in \mathbb{R}^{d}$.
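The blending of Eq. (2) and the embedding normalization just introduced can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names `paste_patch` and `l2_normalize`, the image and patch sizes, and the choice to realize $P_{tiled}$ as a zero canvas holding the patch are all assumptions made for the example. The paper's pipeline would use a differentiable framework so that gradients reach the generator.

```python
import numpy as np


def paste_patch(image, patch, x, y):
    """Blend `patch` into `image` with its top-left corner at column x, row y.

    image: float array of shape (H, W, 3); patch: float array of shape (h, w, 3).
    Returns the blended image and the binary mask M used in Eq. (2).
    """
    H, W, _ = image.shape
    h, w, _ = patch.shape
    if not (0 <= x <= W - w and 0 <= y <= H - h):
        raise ValueError("patch window must lie inside the image")

    # Binary mask M: 1 inside the h x w window, 0 elsewhere.
    mask = np.zeros((H, W, 1), dtype=image.dtype)
    mask[y:y + h, x:x + w, :] = 1.0

    # Assumed realization of P_tiled: the patch on a full-size zero canvas.
    tiled = np.zeros_like(image)
    tiled[y:y + h, x:x + w, :] = patch

    # I_adv = (1 - M) * I_s + M * P_tiled, all products element-wise.
    return (1.0 - mask) * image + mask * tiled, mask


def l2_normalize(v, eps=1e-12):
    """Scale an embedding vector to unit L2 norm."""
    return v / max(np.linalg.norm(v), eps)


rng = np.random.default_rng(0)
source = rng.uniform(-1.0, 1.0, size=(64, 32, 3))  # stand-in for I_s
patch = rng.uniform(-1.0, 1.0, size=(16, 16, 3))   # stand-in for P in [-1, 1]
x = int(rng.integers(0, 32 - 16 + 1))              # random column offset
y = int(rng.integers(0, 64 - 16 + 1))              # random row offset
adv, mask = paste_patch(source, patch, x, y)
```

Because the output is a sum of element-wise products that is linear in the patch, the same expression written in an automatic-differentiation framework passes gradients straight through to $P$.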
Our loss function comprises a pull term and a push term, weighted by hyperparameters:

$$L_{adv} = \lambda_1 L_{pull} + \lambda_2 L_{push}. \tag{3}$$

The pull term is

$$L_{pull} = 1 - \cos(z_{adv}, z_t), \tag{4}$$

which encourages $I_{adv}$ to move closer to the target in terms of cosine similarity. The push term is

$$L_{push} = \max\big(0,\ 1 - \cos(z_{adv}, z_t)\big) + \tau - \big[1 - \cos(z_{adv}, z_s)\big], \tag{5}$$

where $\tau$ is a margin applied to the cosine similarity in order to drive $I_{adv}$ away from the original identity. With this margin-based formulation, the push effect is only applied while the similarity to the source identity is above $\tau$; once the similarity drops below the margin, the penalty becomes zero. This ensures a better balance between moving away from the original identity and converging toward the target identity.

Fig. 2. Pipeline of natural patch generation using latent space perturbations.

2) Untargeted Evasion Attack: Here we only seek to evade the source identity, without pushing the embedding toward any specific target. We therefore define a single "push" term that penalizes high similarity between $I_{adv}$ and its source identity:

$$L_{push} = \cos(z_{adv}, z_s). \tag{6}$$

By only separating the embedding from its original identity, we simplify the loss landscape and achieve faster convergence and stronger performance. This is the baseline attack setting, which is a derivative of the targeted attack.

III. TRAINING PROCEDURE AND NATURALISTIC SYNTHESIS

We optimize the patch generator parameters $\theta$ via stochastic gradient descent over mini-batches of image pairs $(I_s, I_t)$, and we train for 100 epochs with an initial learning rate of $2 \times 10^{-4}$, $\tau = 0.3$, $\lambda_1 = 1$, and $\lambda_2 = 0.5$. During each iteration, we sample a random location and transformation for each patch, ensuring varied adversarial examples. The resulting generated patch has an aggressive texture, which makes it hard to print.
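As a concrete reading of the objectives in Eqs. (3), (4), and (6) with the hyperparameters above, the following NumPy sketch evaluates the losses on toy embeddings. The push term here follows the textual description of the margin (the penalty is active only while the similarity to the source exceeds $\tau$), i.e. $\max(0, \cos(z_{adv}, z_s) - \tau)$. That hinge is an assumption about how Eq. (5) is meant to behave, not a transcription of it, and all function names are hypothetical.

```python
import numpy as np


def cos_sim(a, b, eps=1e-12):
    """Cosine similarity between two 1-D vectors."""
    denom = max(np.linalg.norm(a) * np.linalg.norm(b), eps)
    return float(np.dot(a, b) / denom)


def pull_loss(z_adv, z_t):
    """Eq. (4): small when the adversarial embedding aligns with the target."""
    return 1.0 - cos_sim(z_adv, z_t)


def hinge_push_loss(z_adv, z_s, tau=0.3):
    """Assumed margin form: zero once similarity to the source falls below tau."""
    return max(0.0, cos_sim(z_adv, z_s) - tau)


def targeted_loss(z_adv, z_s, z_t, lam1=1.0, lam2=0.5, tau=0.3):
    """Eq. (3): weighted sum of the pull and push terms."""
    return lam1 * pull_loss(z_adv, z_t) + lam2 * hinge_push_loss(z_adv, z_s, tau)


def untargeted_loss(z_adv, z_s):
    """Eq. (6): plain cosine similarity to the source identity."""
    return cos_sim(z_adv, z_s)


# Toy 2-D embeddings: source and target are orthogonal unit vectors.
z_s = np.array([1.0, 0.0])
z_t = np.array([0.0, 1.0])

loss_at_source = targeted_loss(z_s, z_s, z_t)  # patch has had no effect yet
loss_at_target = targeted_loss(z_t, z_s, z_t)  # embedding has reached the target
```

With these toy vectors the loss is largest when the adversarial embedding still coincides with the source and vanishes once it coincides with the target, which is the behaviour the pull and push terms are meant to produce.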
Even though there are many tricks to constrain the distribution of pixel values, such as the total variation (TV) loss, the patch remains very conspicuous and might attract attention. Leveraging an image generative model can therefore help to create natural-looking adversarial patches. We explored two approaches to obtain natural-looking images:

1) GAN-based Adversarial Patch Synthesis: During training, the discriminator parameters are updated by minimizing

$$L_D = -\left[\mathbb{E}_{x \sim p_{data}} \log D(x) + \mathbb{E}_{z \sim p_z} \log\big(1 - D(G(z))\big)\right],$$

where the first term penalizes $D$ if it assigns low realness to real samples. Simultaneously, the generator is trained both to fool the discriminator and to produce a patch that, when overlaid on inputs, causes mis-classification in the target model $f$, with loss function

$$L_G = -\mathbb{E}_{z \sim p_z}\left[\log D(G(z))\right] + \lambda_{adv} L_{adv}.$$

2) Latent Space Perturbation in a Pretrained Diffusion Model: The second approach exploits the exceptionally high image quality of pretrained latent diffusion models (LDMs) [12]. A diffusion pipeline consists of a denoising network $f_{denoise}$ that iteratively refines a noise tensor $I_{noisy}$ into a clean latent code $l \in \mathbb{R}^{C \times h \times w}$, and a Variational Auto-Encoder (VAE) decoder that reconstructs pixel-space images from $l$. To keep the perturbation hard to perceive in practice, we introduce a learnable perturbation $\delta \in \mathbb{R}^{C \times h \times w}$ into the denoised latent space, $l' = l + \delta$, and pass $l'$ through the VAE decoder to obtain the final image. Optimising $\delta$ requires regularising the perturbation by penalizing deviations in latent norm.

Figure 2 illustrates the overall workflow of the natural patch generation process. The adversarial generator produces an output vector that is injected directly into the latent space of the LDM by adding it to the original latent representation of the image. This perturbed latent representation is then decoded through the LDM's VAE to obtain the final adversarial patch in the image space.
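The latent perturbation step can be illustrated with a toy NumPy sketch. Everything here is a stand-in: `toy_decoder` is a hypothetical placeholder for the frozen VAE decoder (it is not a real diffusion-model API), the latent shape is arbitrary, and the regulariser, the squared change in latent L2 norm, is one assumed reading of "penalizing deviations in latent norm".

```python
import numpy as np


def perturb_latent(latent, delta):
    """Apply the learnable perturbation: l' = l + delta."""
    return latent + delta


def latent_norm_penalty(latent, delta):
    """Assumed regulariser: squared change in the latent's L2 norm."""
    before = np.linalg.norm(latent)
    after = np.linalg.norm(latent + delta)
    return float((after - before) ** 2)


def toy_decoder(latent):
    """Hypothetical frozen decoder: (C, h, w) latent -> (h, w, 3) image in [-1, 1].

    A real pipeline would call the pretrained VAE decoder here instead.
    """
    return np.tanh(np.moveaxis(latent[:3], 0, -1))


rng = np.random.default_rng(0)
latent = rng.normal(size=(4, 8, 8))  # stand-in for the denoised latent code l

# A zero perturbation leaves the decoded patch unchanged.
delta = np.zeros_like(latent)
patch_clean = toy_decoder(perturb_latent(latent, delta))

# A small perturbation changes the patch and incurs a non-negative penalty.
delta = 0.1 * rng.normal(size=latent.shape)
patch_adv = toy_decoder(perturb_latent(latent, delta))
penalty = latent_norm_penalty(latent, delta)
```

In training, `penalty` would be added to the adversarial loss with some weight so that the perturbed latent stays close to the region the decoder was trained on. Penalizing the squared norm of `delta` directly would be an alternative regulariser with a similar purpose.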
Importantly, both the U-Net denoiser and the VAE decoder of the LDM remain frozen throughout adversarial training, ensuring that only the generator is updated. This design choice prevents degradation of the generative prior, so that the adversarial patches retain the naturalness and realism imposed by the diffusion model while still being optimized to fool the downstream Re-ID or face recognition system.

IV. DATASETS, MODELS, AND ASSUMPTIONS

To train and evaluate our adversarial patch generator in the person re-identification (ReID) setting, we rely on two standard public benchmarks widely used in the literature: Market-1501 and DukeMTMC-reID. Market-1501 has a total of 32,668 annotated pedestrian images captured by 6 cameras and contains 1,501 unique persons (751 for training, 750 for testing). DukeMTMC-reID has 36,411 images from 8 cameras and contains 1,812 identities (702 training, 702 testing, 408 distractors).

In the evasion attack scenario we consider, the objective is to prevent the model from correctly matching a person's appearance across different camera views. That is, the adversarial patch is optimized to cause mismatches between different images of the same identity, breaking the continuity of tracking and recognition across a camera network. These two datasets are particularly well suited to this task, as they include multiple images per person captured under varying viewpoints and environmental conditions.

The target model refers to the re-identification network used during the training phase of the adversarial patch generator. In our setup, this model is based on OSNet, a lightweight convolutional architecture specifically designed for person re-identification.
We train OSNet on the Market-1501 dataset using the combined standard identity classification objective and the triplet loss, allowing it to learn robust feature embeddings for distinguishing individuals across camera views. To assess the transferability of the adversarial patch and its general effectiveness beyond a single model, we introduce an auxiliary model for evaluation. This auxiliary network is a ResNet-50, also trained on the Market-1501 dataset using identical supervision. Importantly, the ResNet-50 model is not exposed to the patch generator during training. It thus serves as an independent benchmark to evaluate whether the evasion attack generalizes to unseen model architectures. This setup enables us to measure both:

• White-box performance, where the adversarial patch attacks the model it was trained against (OSNet).
• Black-box performance, where the patch is evaluated on a structurally different, independently trained model (ResNet-50).

By comparing results across both models, we gain insight into the robustness and generalization ability of our adversarial evasion strategy.

V. RESULTS

Figure 3 illustrates the effect of the adversarial evasion attack using a natural-looking patch. The figure compares the top 10 retrieval results for the same probe image in two settings: without and with the adversarial patch. Without the attack, the system correctly retrieves images corresponding to the same identity. In contrast, once the adversarial patch is applied, none of the top 10 retrieved images correspond to the correct identity. This highlights the ability of the naturalistic adversarial patch to mislead the recognition system.

Fig. 3. Top 10 retrieval results for the same probe image, non-attacked and attacked.

A. Evasion Attacks

Tables I and II report results under the white-box and black-box assumptions, i.e., the attacker either knows the model under attack or does not. To quantitatively assess the effectiveness of the adversarial evasion attack, we adopt the mean Average Precision (mAP) metric, which is the standard benchmark for retrieval-based tasks. A lower mAP after patch application indicates that the model struggles to recognize the adversarially perturbed person, confirming the effectiveness of the attack. The white-box results show that our adversarial patch erodes mAP from 90% to 0.4%, or to 5% if we apply a naturalistic patch. The other columns show the erosion of the 10th likely match and the 1st likely match, which follows a similar pattern. In the black-box case, the naturalistic patch is far less effective (mAP only falls from 72% to 43%), as the naturalistic constraint limits the expressiveness of the patch, restricting the extent to which adversarial features can be encoded and transferred to other, unknown black-box models. Other variables we examined:

• Size of Patch: as the size of the patch decreases, the mAP can increase from 0.3% to 60% for a patch size decrease of 3x.
• Data Set Transferability: we tried the DukeMTMC-reID data set and our results still achieved 0.3% mAP.

TABLE I. EVASION PERFORMANCES UNDER WHITE BOX SETTING

| | mAP | 10th | 1st |
|---|---|---|---|
| No Patch | 90% | 100% | 100% |
| Random Patch | 75% | 94% | 98% |
| Adversarial Patch | 0.4% | 0.3% | 0.9% |
| Naturalistic Patch | 5% | 3.4% | 9.2% |

TABLE II. EVASION PERFORMANCES UNDER BLACK BOX SETTING

| | mAP | 10th | 1st |
|---|---|---|---|
| No Patch | 72% | 89% | 97% |
| Random Patch | 68% | 87% | 96% |
| Adversarial Patch | 0.4% | 0.3% | 5% |
| Naturalistic Patch | 43% | 61% | 87% |

B. Targeted Mis-classification Attacks

To quantitatively assess the effectiveness of the adversarial targeted mis-classification attack, we adopt the attack success rate (ASR) metric, which is the proportion of adversarial examples for which the similarity with the attacker's target identity exceeds a pre-defined threshold $\tau$:

$$ASR = \frac{1}{N} \sum_{i=1}^{N} \mathbb{1}\left[\cos(z^{i}_{adv}, z^{i}_{t}) > \tau\right], \tag{7}$$

where $N$ is the number of trials, $z^{i}_{adv}$ and $z^{i}_{t}$ are the embeddings of the $i$-th adversarial and target images, and $\mathbb{1}[\cdot]$ is the indicator function. A high ASR indicates that more patches successfully deceive the model into matching the attacker's target. Overall, we achieve a 54% ASR with ResNet50 and a 27% ASR with FaceNet. A detailed breakdown is given in Table III, and comparative performance is shown in Table IV. Whilst our approach is not better in terms of performance, we only require a single training process.

Fig. 4. Example of impersonation attack.

TABLE III. IMPERSONATION COSINE SIMILARITY PERFORMANCES

| | source | target | random | accuracy |
|---|---|---|---|---|
| ResNet50 No Patch | 85% | 66% | 68% | 92% |
| ResNet50 with Patch | 76% | 77% | 66% | 47% |
| FaceNet No Patch | 75% | 18% | 20% | 98% |
| FaceNet with Patch | 69% | 48% | 22% | 76% |

TABLE IV. IMPERSONATION ATTACK BENCHMARK AGAINST STATE-OF-THE-ART

| Method | Performance |
|---|---|
| Adv-Hat [13] | 4.7% |
| Adv-Glasses [11] | 9.1% |
| Our Approach | 26.7% |

VI. ACTIVATION MAP EXPLANATIONS

To better understand what features the patch generator extracts and utilizes to create adversarial patches, we propose a visualization of the activation maps from the last feature layer of the feature extractor. This analysis provides insight into which regions of the input the model focuses on during patch generation.

Fig. 5. (Top) Examples of activation maps highlighting the image regions most activated by the target model. (Bottom) Embeddings projected in 2D space in the context of an evasion attack on a face recognition system.

Given an activation tensor $F \in \mathbb{R}^{B \times C \times h \times w}$, we compute a 2D activation map per image by summing the squared activations across the channel dimension: $A_{i,j} = \sum_{c=1}^{C} F_{c,i,j}^{2}$. This results in a single 2D activation map per image.
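The channel-wise reduction above is a one-line operation on a feature tensor. The NumPy sketch below applies it to a synthetic tensor. The helper `normalize_for_display` is not described in the text and is included only as an assumed convenience for plotting heat maps.

```python
import numpy as np


def activation_maps(features):
    """Collapse (B, C, h, w) activations to (B, h, w) maps.

    Each output entry is A[i, j] = sum over channels c of F[c, i, j] ** 2.
    """
    if features.ndim != 4:
        raise ValueError("expected a tensor of shape (B, C, h, w)")
    return np.sum(features ** 2, axis=1)


def normalize_for_display(maps, eps=1e-12):
    """Min-max scale each map to [0, 1] (assumed plotting helper)."""
    lo = maps.min(axis=(1, 2), keepdims=True)
    hi = maps.max(axis=(1, 2), keepdims=True)
    return (maps - lo) / np.maximum(hi - lo, eps)


# Synthetic batch of two images, three channels, 4 x 4 spatial grid.
features = np.zeros((2, 3, 4, 4))
features[0, :, 1, 2] = [1.0, 2.0, 2.0]  # one active location in image 0

maps = activation_maps(features)        # shape (2, 4, 4)
heat = normalize_for_display(maps)      # values in [0, 1] for plotting
```

In this toy batch the only non-zero entry of the first map sits at the activated location, which mirrors how a patch that dominates the feature response would show up as a single bright region.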
The visualization of the activation maps in Figure 5 (top) shows how the patch influences the targeted model's internal representations. It reveals that the patch draws and concentrates nearly all of the model's attention, effectively dominating the feature representation and overshadowing other facial regions.

We then employ Principal Component Analysis (PCA) as a visual and quantitative tool to assess the adversarial effect of the generated patch on image embeddings. The core idea is to compare the position of attacked samples in the feature space to that of their clean and target counterparts. The procedure is as follows: the L2-normalised embeddings are extracted from the targeted model for (a) the source image, (b) a clean comparison image of the same source identity, (c) a target image, and (d) the adversarially patched image. The PCA is fitted on the feature matrix $X \in \mathbb{R}^{N \times d}$. We analysed the embeddings' projections under different attack scenarios. Figure 5 (bottom) illustrates an evasion attack on face recognition using natural-looking generated patches: the attacked images are pushed far from their original source points and/or drawn toward the target.

VII. CONCLUSIONS AND FUTURE WORK

The key novelty of our approach lies in the ability to generate adversarial patches without retraining for each new target identity, making it more efficient and adaptable than prior works. The promising results for both evasion and targeted attacks suggest that our architecture provides a flexible framework for exploring adversarial vulnerabilities in Re-ID systems.

Our evasion attack experiments demonstrate strong effectiveness by reducing the mAP of the Re-ID model to below 1%. The generated patches show good transferability across different models and datasets, highlighting the robustness of our method.
By incorporating a pre-trained latent diffusion model, we were also able to produce perturbations that retain a natural-looking appearance, enhancing the stealthiness of the adversarial examples.

For the targeted attack, we evaluated the attack success rate on the CelebA-HQ dataset and found that our method achieves a slightly lower performance compared to the existing state-of-the-art, but without having to be re-trained for different targets, which is a crucial advantage.

Future work should further investigate real-world physical deployment, evaluate targeted attacks on large-scale Re-ID datasets, and explore adaptive defences that can mitigate the transferability of our generated patches.

REFERENCES

[1] K. Nguyen, T. Fernando, C. Fookes, and S. Sridharan, "Physical adversarial attacks for surveillance: A survey," IEEE Transactions on Neural Networks and Learning Systems, vol. 35, no. 12, pp. 17036–17056, 2024.
[2] A. Guesmi, M. A. Hanif, B. Ouni, and M. Shafique, "Physical adversarial attacks for camera-based smart systems: Current trends, categorization, applications, research challenges, and future outlook," IEEE Access, vol. 11, pp. 109617–109668, 2023.
[3] C. Li and W. Guo, "Soft body pose-invariant evasion attacks against deep learning human detection," in 2023 IEEE Ninth International Conference on Big Data Computing Service and Applications (BigDataService), 2023, pp. 155–156.
[4] C. Chen, X. Zhao, and M. C. Stamm, "Generative adversarial attacks against deep-learning-based camera model identification," IEEE Transactions on Information Forensics and Security, vol. 20, pp. 7679–7694, 2025.
[5] J. Li, F. Schmidt, and Z. Kolter, "Adversarial camera stickers: A physical camera-based attack on deep learning systems," in Proceedings of the 36th International Conference on Machine Learning, ser. Proceedings of Machine Learning Research, K. Chaudhuri and R. Salakhutdinov, Eds., vol. 97. PMLR, 09–15 Jun 2019, pp. 3896–3904.
[6] Z. Wang, S. Zheng, M. Song, Q. Wang, A. Rahimpour, and H. Qi, "advpattern: Physical-world attacks on deep person re-identification via adversarially transformable patterns," in Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2019.
[7] W. Ding, X. Wei, R. Ji, X. Hong, Q. Tian, and Y. Gong, "Beyond universal person re-identification attack," IEEE Transactions on Information Forensics and Security, vol. 16, pp. 3442–3455, 2021.
[8] H. Wang, G. Wang, Y. Li, D. Zhang, and L. Lin, "Transferable, controllable, and inconspicuous adversarial attacks on person re-identification with deep mis-ranking," in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2020.
[9] L. Wang, W. Zhang, D. Wu, F. Zhu, and B. Li, "Attack is the best defense: Towards preemptive-protection person re-identification," in Proceedings of the 30th ACM International Conference on Multimedia. New York, NY, USA: Association for Computing Machinery, 2022, pp. 550–559. [Online]. Available: https://doi.org/10.1145/3503161.3547958
[10] Y. Gong, Z. Zhong, Y. Qu, Z. Luo, R. Ji, and M. Jiang, "Cross-modality perturbation synergy attack for person re-identification," Advances in Neural Information Processing Systems, vol. 37, pp. 23352–23377, 2024.
[11] M. Sharif, S. Bhagavatula, L. Bauer, and M. K. Reiter, "Accessorize to a crime: Real and stealthy attacks on state-of-the-art face recognition," in Proceedings of the 2016 ACM SIGSAC Conference on Computer and Communications Security, 2016, pp. 1528–1540.
[12] R. Rombach, A. Blattmann, D. Lorenz, P. Esser, and B. Ommer, "High-resolution image synthesis with latent diffusion models," in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 10684–10695.
[13] S. Komkov and A. Petiushko, "Advhat: Real-world adversarial attack on arcface face id system," in 2020 25th International Conference on Pattern Recognition (ICPR), 2021, pp. 819–826.
