Efficient Morphology-Control Co-Design via Stackelberg Proximal Policy Optimization

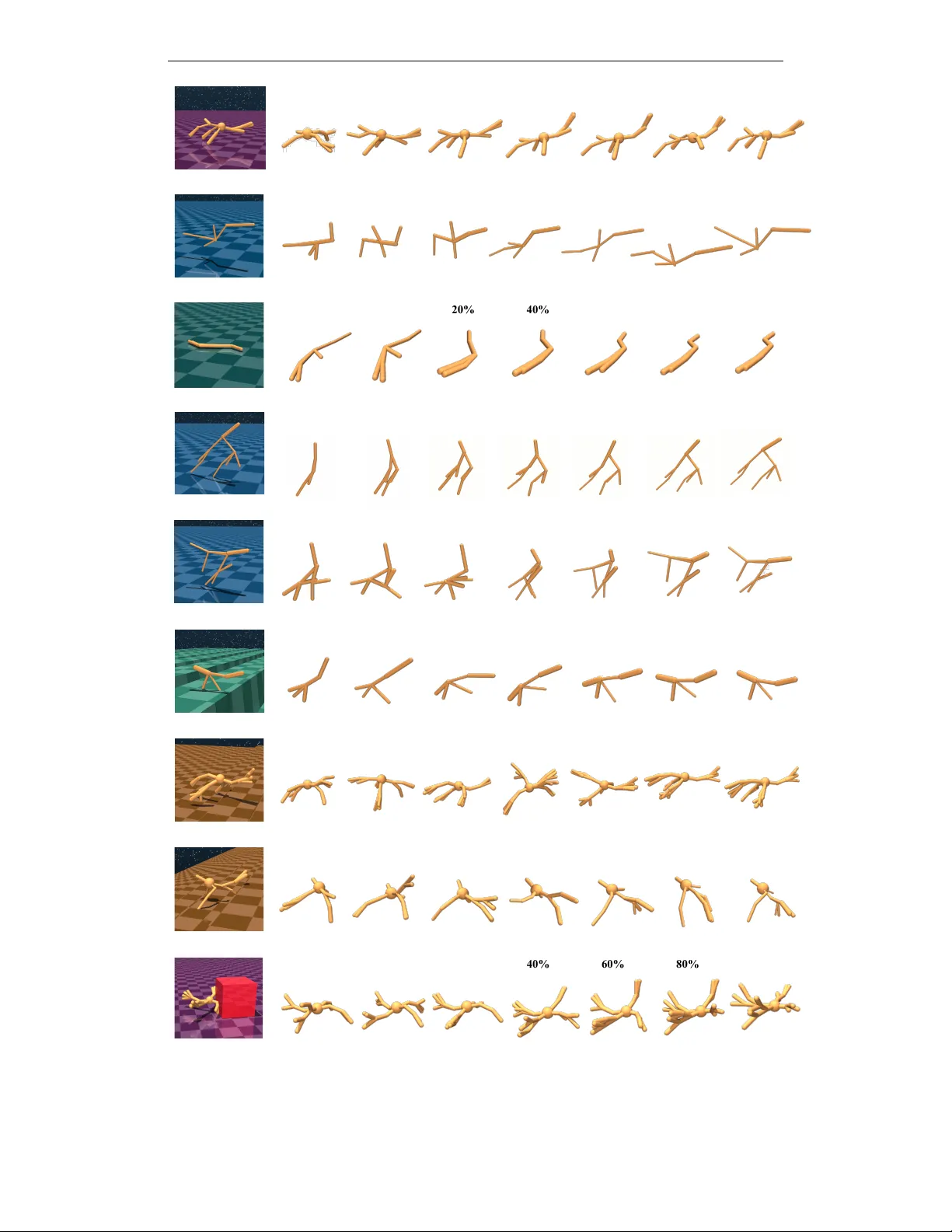

Morphology-control co-design concerns the coupled optimization of an agent's body structure and control policy. This problem has a bi-level structure in which the control policy dynamically adapts to the morphology to maximize performance. Existing metho…

Authors: Yanning Dai, Yuhui Wang, Dylan R. Ashley