RieMind: Geometry-Grounded Spatial Agent for Scene Understanding

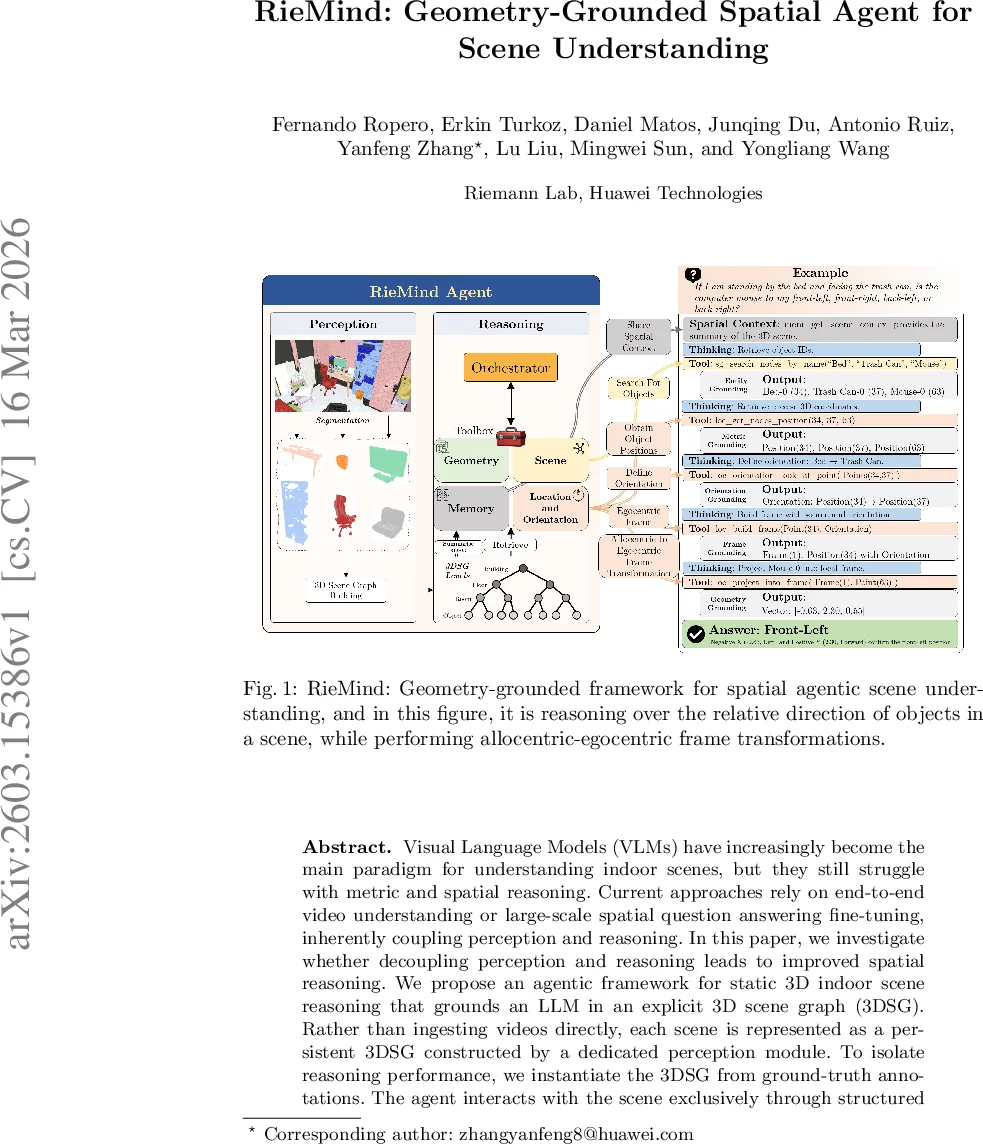

Visual Language Models (VLMs) have increasingly become the main paradigm for understanding indoor scenes, but they still struggle with metric and spatial reasoning. Current approaches rely on end-to-end video understanding or large-scale spatial question answering fine-tuning, inherently coupling perception and reasoning. In this paper, we investigate whether decoupling perception and reasoning leads to improved spatial reasoning. We propose an agentic framework for static 3D indoor scene reasoning that grounds an LLM in an explicit 3D scene graph (3DSG). Rather than ingesting videos directly, each scene is represented as a persistent 3DSG constructed by a dedicated perception module. To isolate reasoning performance, we instantiate the 3DSG from ground-truth annotations. The agent interacts with the scene exclusively through structured geometric tools that expose fundamental properties such as object dimensions, distances, poses, and spatial relationships. The results we obtain on the static split of VSI-Bench provide an upper bound under ideal perceptual conditions on the spatial reasoning performance, and we find that it is significantly higher than previous works, by up to 16%, without task specific fine-tuning. Compared to base VLMs, our agentic variant achieves significantly better performance, with average improvements between 33% to 50%. These findings indicate that explicit geometric grounding substantially improves spatial reasoning performance, and suggest that structured representations offer a compelling alternative to purely end-to-end visual reasoning.

💡 Research Summary

The paper “RieMind: Geometry‑Grounded Spatial Agent for Scene Understanding” introduces a novel framework that separates perception from reasoning to improve spatial reasoning in indoor 3‑D environments. Instead of feeding raw video streams into a visual‑language model (VLM) and expecting the model to implicitly learn metric information, the authors construct an explicit 3‑D scene graph (3DSG) that encodes hierarchical semantic nodes (building, floor, room, object) and metric attributes (position, dimensions, volume, orientation). Each node carries a persistent unique identifier, enabling deterministic queries.

The perception component builds the 3DSG from RGB‑D frames and camera parameters; for the purpose of measuring pure reasoning capability, the authors bypass any perception errors by populating the graph directly from ground‑truth annotations. The reasoning component consists of a large language model (LLM) that never performs arithmetic itself but accesses a toolbox of four namespaces: memory, scene, geometry, and location/orientation. Each toolbox function is deliberately atomic—e.g., retrieving an object’s volume, measuring distance between two IDs, or projecting a point into an ego‑centric frame. The LLM receives a system prompt that defines its role, lists available tools with signatures, and specifies a JSON answer format containing a natural‑language summary, a list of tool calls (evidence), and a data dictionary of results.

When a question from the VSI‑Bench static split is posed, the LLM first calls a memory tool to obtain a concise textual summary of the scene graph hierarchy. It then resolves any object mentions to concrete node IDs using scene‑graph search tools, ensuring unambiguous grounding. Subsequent geometry calls fetch the exact numeric values required (e.g., distance, area, orientation). If a relational reasoning step is needed, the location/orientation tools construct an ad‑hoc coordinate frame (often ego‑centric) and project objects into it before performing comparisons. Every step is logged as a tool call, guaranteeing traceability and preventing hidden “hallucinations”.

Empirically, RieMind achieves substantial gains over prior VLM‑based approaches. On the static split of VSI‑Bench, the agent outperforms fine‑tuned spatial QA models such as ViCA and SpaceR by up to 16 % absolute accuracy, and surpasses base VLMs by 33 %–50 % relative improvement. The most pronounced advantages appear on queries that require precise metric reasoning—distances, volumes, and relative directions—demonstrating that explicit geometric grounding supplies the LLM with reliable quantitative evidence.

The authors highlight three design principles: (1) Minimal geometric primitives—tools expose only single attributes or simple transformations; (2) Explicit grounding—every query is anchored to a persistent node ID, eliminating ambiguous textual references; (3) Determinism—tool outputs depend solely on the immutable 3DSG state, not on the LLM’s internal stochasticity. These principles collectively enforce interpretability, consistency, and reproducibility.

Limitations are acknowledged. The current evaluation assumes a perfect 3DSG, sidestepping errors that would arise from real‑world perception pipelines (segmentation, depth estimation). Future work should assess robustness when the graph is noisy, explore efficient tool‑call scheduling to reduce token overhead, and integrate the framework with real‑time robotic platforms where perception and reasoning must operate jointly.

In summary, RieMind demonstrates that coupling an LLM with a structured, geometry‑grounded scene representation can dramatically boost spatial reasoning performance, offering a compelling alternative to end‑to‑end video‑based VLMs and opening avenues for interpretable, metric‑aware AI agents in embodied AI and robotics.

Comments & Academic Discussion

Loading comments...

Leave a Comment