xplainfi: Feature Importance and Statistical Inference for Machine Learning in R

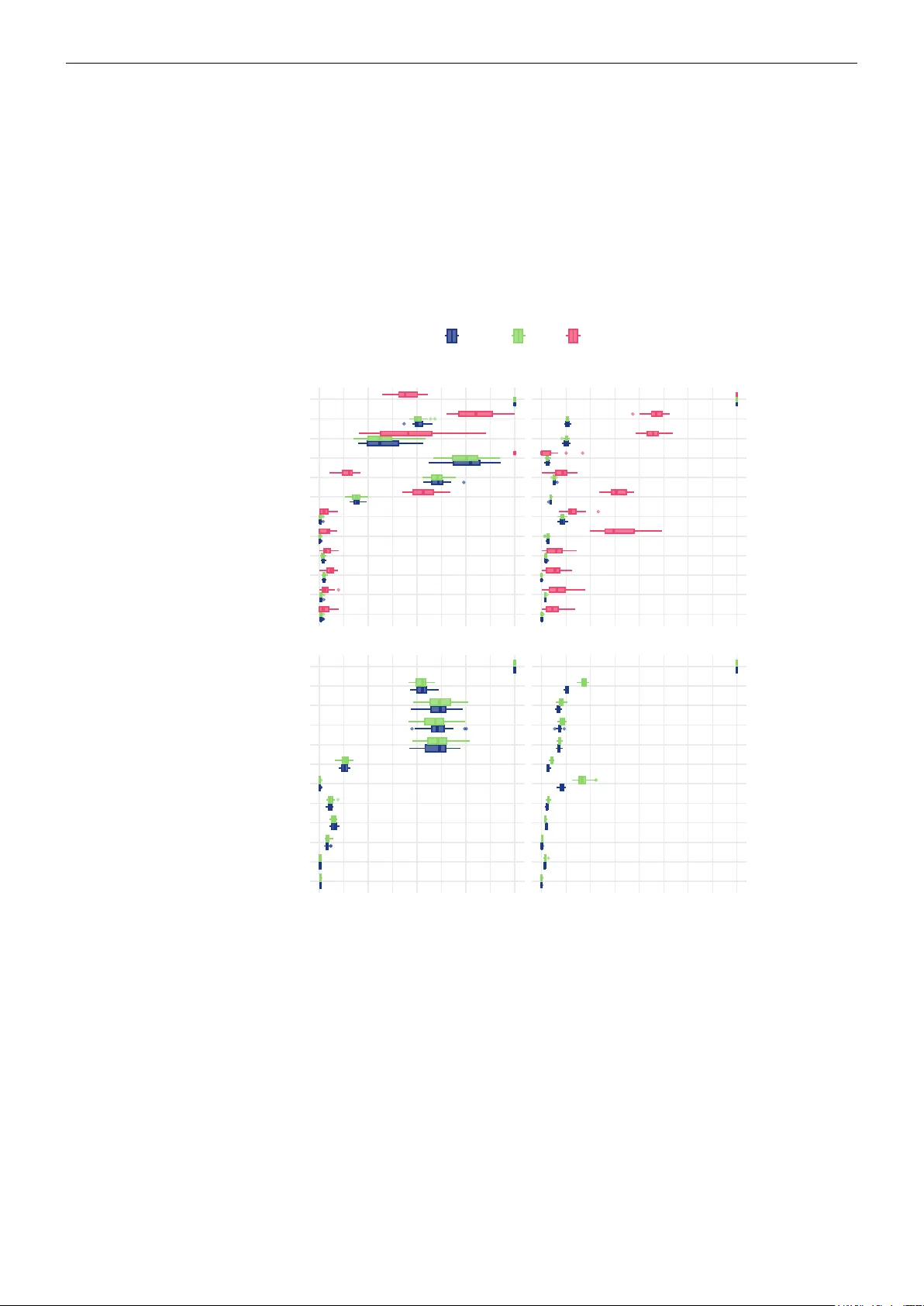

We introduce xplainfi, an R package built on top of the mlr3 ecosystem that provides global, loss-based feature importance methods for machine learning models. Various feature importance methods exist in R, but significant gaps remain, particularly regarding c…

Authors: Lukas Burk, Fiona Katharina Ewald, Giuseppe Casalicchio
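The abstract refers to global, loss-based feature importance without spelling out what that means. As a rough illustration only, the sketch below implements permutation feature importance (PFI), one well-known method of this kind. A feature's importance is the average increase in a loss (here mean squared error) when that feature's column is randomly shuffled, which breaks its link to the target while leaving the model unchanged. This is plain Python, not xplainfi's R API; the names `mse` and `permutation_importance` are hypothetical, and this excerpt does not state which methods xplainfi provides or how it computes them.

```python
import random
from typing import Callable, List, Sequence

Matrix = List[List[float]]


def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean squared error between observed and predicted values."""
    return sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(
    predict: Callable[[Matrix], List[float]],
    X: Matrix,
    y: Sequence[float],
    loss: Callable[[Sequence[float], Sequence[float]], float] = mse,
    n_repeats: int = 10,
    seed: int = 0,
) -> List[float]:
    """Hypothetical PFI sketch: mean loss increase after shuffling each feature."""
    rng = random.Random(seed)
    baseline = loss(y, predict(X))  # loss of the model on the intact data
    importances = []
    for j in range(len(X[0])):
        deltas = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the association between feature j and y
            # Rebuild each row with feature j replaced by its shuffled value.
            X_perm = [list(row[:j]) + [v] + list(row[j + 1:]) for row, v in zip(X, column)]
            deltas.append(loss(y, predict(X_perm)) - baseline)
        importances.append(sum(deltas) / n_repeats)  # average over repeats
    return importances


def toy_predict(rows: Matrix) -> List[float]:
    """Toy model that uses only feature 0 and ignores feature 1."""
    return [3.0 * r[0] for r in rows]


if __name__ == "__main__":
    X = [[i / 10.0, float((i * 7) % 5)] for i in range(50)]
    y = toy_predict(X)
    # Feature 0 should get a positive score; feature 1 should get exactly 0.
    print(permutation_importance(toy_predict, X, y))
```

Because each shuffle is random, the score is averaged over `n_repeats` permutations. The sketch makes no attempt at the statistical inference mentioned in the title, which would additionally require quantifying the uncertainty of these estimates.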