Beyond Monolithic Models: Symbolic Seams for Composable Neuro-Symbolic Architectures


Authors: Nicolas Schuler, Vincenzo Scotti, Raffaela Mirandola

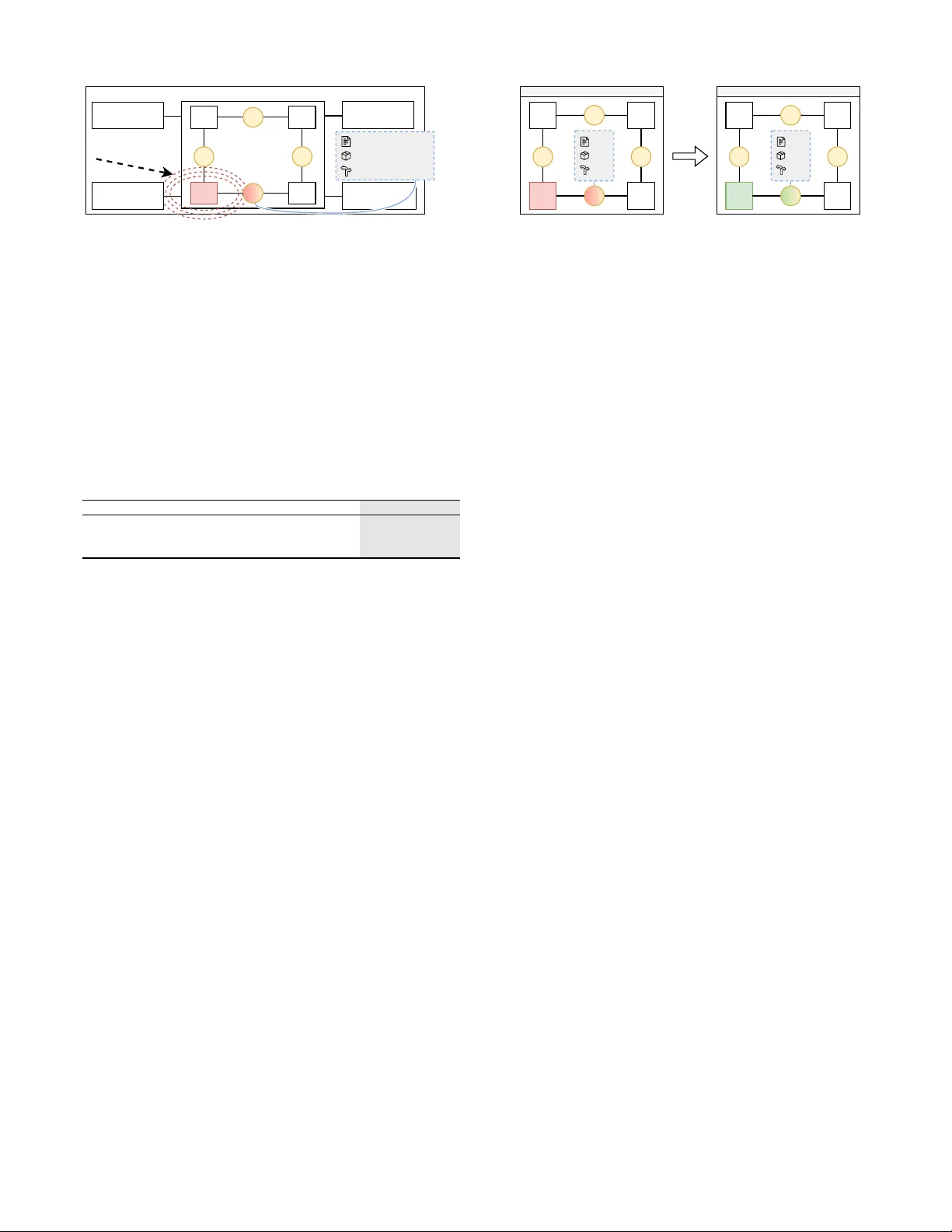

Nicolas Schuler, Vincenzo Scotti, Raffaela Mirandola
KASTEL - Institute of Information Security and Dependability, Karlsruhe Institute of Technology (KIT), Germany
{nicolas.schuler, vincenzo.scotti, raffaela.mirandola}@kit.edu

Abstract: Current Artificial Intelligence (AI) systems are frequently built around monolithic models that entangle perception, reasoning, and decision-making, a design that often conflicts with established software architecture principles. Large Language Models (LLMs) amplify this tendency, offering scale but limited transparency and adaptability. To address this, we argue for composability as a guiding principle that treats AI as a living architecture rather than a fixed artifact. We introduce symbolic seams: explicit architectural breakpoints where a system commits to inspectable, typed boundary objects, versioned constraint bundles, and decision traces. We describe how seams enable a composable neuro-symbolic design that pairs the data-driven adaptability of learned components with the verifiability of explicit symbolic constraints, a combination neither paradigm achieves alone. By treating AI systems as assemblies of interchangeable parts rather than indivisible wholes, we outline a direction for intelligent systems that are extensible, transparent, and amenable to principled evolution.

Index Terms: Neuro-Symbolic Architectures, Software Architecture, Machine Learning, AI

I. INTRODUCTION

Artificial Intelligence (AI)¹ has advanced through models of ever-increasing scale, yet this progress has come at a cost to architectural discipline. Software engineering has long emphasized modularity (principled decomposition into cohesive components) [1], [2] and composability (recombination through explicit interfaces) [3].
Contemporary AI systems, by contrast, are frequently constructed as monolithic entities that bind perception, reasoning, and decision-making into opaque structures [4]. In architectural terms, this design trajectory constitutes an anti-pattern [5]: a recurring solution that undermines the principles of maintainability, transparency, and adaptability. Large Language Models (LLMs) exemplify this anti-pattern. As trained statistical artifacts, they demonstrate extraordinary fluency and predictive capacity, but their design reinforces a paradigm in which scale can substitute for structure and statistical power can eclipse architectural clarity. The systems built around such artifacts, which integrate them with data pipelines, orchestration, and control logic, inherit these structural limitations. This creates a critical tension: the achievements of systems based on monolithic AI components conflict with design principles that have guided software architecture for decades [6], [7].

¹ We use AI as the umbrella term for intelligent systems. Our architectural arguments apply across the AI ⊃ ML ⊃ LLM hierarchy.

This work was supported by funding from the pilot program Core Informatics at KIT (KiKIT) of the Helmholtz Association (HGF), by the German Research Foundation (DFG), SFB 1608 - 501798263, and by KASTEL Security Research Labs, Karlsruhe.

The challenge, then, is not simply technical but architectural. If AI is to evolve beyond its current trajectory, it must be reimagined in terms of design commitments rather than sheer scale. Symbolic AI provides formal structure and, to some extent, verifiability, but struggles to learn from raw data at scale [8]. Data-driven approaches, e.g., Machine Learning (ML), deep learning, and LLMs, achieve remarkable learning capacity but sacrifice transparency and principled decomposition [8], [9].
Neuro-symbolic integration seeks to combine both strengths [10], yet the architectural foundations for composing learned and symbolic components remain underspecified. This paper offers a first step toward addressing that challenge. Using composability as a lens, we connect established software architecture principles to neuro-symbolic designs that combine learned components with explicit symbolic artifacts (rules, constraints, logic-based reasoning) through defined interfaces. With this first step, we outline a reference direction for systems that must evolve, repositioning AI as a living architecture [11].

This paper contributes three elements: (1) the concept of symbolic seams as explicit architectural breakpoints for constraining and recomposing AI systems, (2) four design commitments (typed boundary objects, evolvable constraint configuration, externalized reasoning traces, and bounded change propagation) that operationalize composability in neuro-symbolic settings, and (3) a research agenda to make these commitments practical through contracts, change-impact reasoning, and constraint governance.

To make this concrete, consider an enterprise assistant that triggers workflows under evolving constraints (access control, approvals). In monolithic and prompt-orchestrated designs, policy updates are costly to validate: retraining may be required, or constraints remain scattered across prompts. A symbolic seam makes boundaries explicit, enabling localized validation when policies change.

Our contribution is architectural. Neuro-symbolic systems already employ explicit neural–symbolic interfaces, and prompt-orchestration frameworks expose intermediate steps and states [12]–[15]. We go further and specify seams as explicit connectors, shifting composability from "modular execution" to "governed recombination under stable contracts", regardless of whether components are neural, symbolic, or hybrid.

II. BACKGROUND AND RELATED WORK

Our argument draws on four research areas: (1) foundational architectural principles that make systems evolvable, (2) empirical evidence that ML systems violate those principles, (3) prompt-orchestration frameworks that modularize execution without governing interaction semantics, and (4) neuro-symbolic approaches that restore composable structure but leave a connector-level gap.

Architectural foundations. Software engineering rests on foundational principles established through decades of research. Examples include Parnas's information hiding [1], Dijkstra's separation of concerns [3], and Baldwin and Clark's theory of modularity [2], which collectively demonstrate that principled decomposition improves flexibility and comprehensibility. Maintainability, transparency, and adaptability emerge not coincidentally but systematically from such decomposition.

Technical debt in ML systems. ML systems incur distinctive forms of technical debt [5], including what the authors term abstraction debt: the difficulty of enforcing strict abstraction boundaries, which degrades modularity. Empirical studies confirm these risks: ML repositories exhibit higher levels of technical debt than non-ML projects [16], often characterized by weak component boundaries and fragile orchestration ("glue code") [17]. While recent research proposes methods to detect ML-specific code smells [18], these approaches often treat symptoms of the underlying architectural tension rather than resolving it.

Prompt-orchestration frameworks. Frameworks such as LangChain [14] and DSPy [15] respond pragmatically to this tension: they decompose AI application logic into pipelines of discrete steps (prompting, retrieval, tool invocation), thereby modularizing flow and exposing intermediate states for inspection.
Yet the constraints governing interaction between steps (input and output schemas, validation rules, consistency requirements) typically remain embedded in prompt templates and ad-hoc checks rather than in governed interfaces.

Neuro-symbolic approaches and composability. Recent work on neuro-symbolic AI emphasizes explicit interfaces between neural and symbolic components, motivated in part by concerns that end-to-end integration conflicts with architectural principles that have long proven effective in software engineering and symbolic AI [12]. Neuro-symbolic systems typically partition functionality into distinct layers with defined interfaces: neural components extract patterns from unstructured data, while symbolic components apply logical inference and rule-based constraints to those abstractions [10], [13]. Researchers emphasize that this separation exposes human-readable reasoning traces and supports component-level replacement and evolution [12]. Purely neural modular designs can also be composed, but their interfaces are often statistical and may require additional alignment when modules are replaced. Existing frameworks such as Logic Tensor Networks [13] and DeepProbLog [19] demonstrate that composable neuro-symbolic designs are implementable and can achieve strong performance where interpretability or domain constraints matter.

Related work and gap. Across these four lines of work, the literature identifies symptoms and provides building blocks, but it does not close the architectural loop: it lacks a connector-level specification that turns inevitable change into bounded, checkable revalidation. Work on ML technical debt, entanglement diagnoses, and glue-code fragility exists [5], [16], [17], yet offers no notion of seam-level contracts that scope the impact of updates.
Neuro-symbolic frameworks introduce explicit interfaces for injecting constraints [10], [12], [13], but typically treat constraints and traces as task-bound mechanisms rather than versioned, governed artifacts that persist across system evolution. Prompt-orchestration frameworks, despite modularizing execution, leave interaction semantics informal and therefore difficult to validate or evolve systematically. Self-adaptive system (SAS) frameworks such as MAPE-K [20] formalize adaptation loops with architectural models, and architecture description languages (ADLs) such as Wright [21] specify typed connector protocols. These provide general-purpose adaptation and connector mechanisms but target managed components with predictable behavior; they do not address the stochastic outputs, distributional contracts, and retraining-driven evolution specific to learned components. Symbolic seams close this gap by elevating boundary objects, constraint bundles, and decision traces into durable connector specifications: changes are mediated through seam versions, triggering localized validation rather than system-wide revalidation.

III. WHY MONOLITHIC AI RESISTS ARCHITECTURAL EVOLUTION

Before introducing symbolic seams, we examine what makes monolithic architectures brittle. The core pattern is as follows. Symptom: small changes require broad revalidation. Cause: latent coupling from concerns fused in a single parametric space. Consequence: expensive evolution and brittle governance.

Sculley et al. first articulated the Changing Anything Changes Everything (CACE) phenomenon in their study of ML technical debt [5]. While their core argument was framed as the accumulation of technical debt, CACE also points to a deeper architectural problem: entanglement in end-to-end trained ML models.
Entanglement means that multiple concerns are encoded jointly, so changing one aspect perturbs others in hard-to-predict ways, undermining architectural scalability (i.e., the ability to absorb change with bounded impact and diagnosable consequences). Consider an end-to-end trained ML component such as an LLM: representations, intermediate computations, and output selection are optimized together against a single global loss function. Changes to learned representations (e.g., modifying how entities are encoded) can cascade through downstream computations, which in turn alter decision-making behavior. This coupling is structural: upstream changes shift downstream input distributions, potentially invalidating learned mappings throughout the system [5]. Figure 1a exemplifies this posture: perception, reasoning, and decision-making are collapsed into a single statistical artifact and its surrounding "glue", an extreme case of entanglement.

Fig. 1: Current entanglement postures. (a) Monolithic AI system: UI, API, data pipeline, and evaluation/monitoring surround an opaque monolithic AI component, so the system substantially overlaps with its neural logic. (b) Prompt-orchestrated system: an orchestrator connects the LLM, prompts, tools, and memory/state through unspecified string interfaces, hiding entanglement behind informal mechanisms. Δ marks the changes that affect the system.

This structural entanglement conflicts with at least three foundational software architecture principles. Separation of Concerns becomes difficult to enforce when concerns are fused in a single parametric space: responsibilities cannot be cleanly isolated because they share optimization objectives and parameter updates. Information Hiding is weakened because the decision pathway remains opaque: there is often no clean boundary where intermediate reasoning is exposed for inspection or validation [22], [23].
Composability becomes difficult to achieve: upgrading or swapping one component (e.g., perception) can cascade through reasoning and decision logic, locking components into mutual dependence. These problems are not merely implementation details to be fixed through better engineering, but constraints imposed by how monolithic AI systems are built and optimized end-to-end [24].

This rigidity manifests in three critical deficiencies: (1) Explainability: decisions emerge from opaque parameter spaces; post-hoc methods typically offer local approximations rather than faithful explanations and remain limited under entanglement [22], [23]. (2) Adaptability: model and concept drift (degradation as real-world data distributions shift) often drive expensive full retraining rather than localized component-level updates [25], [26]. (3) Composability: perception, reasoning, and decision components are difficult to swap independently, forcing monolithic development or ad-hoc composition without principled interfaces [27].

Prompt-orchestration frameworks (Figure 1b) attempt to address these deficiencies by modularizing execution flows. Yet the critical semantics of intermediate states and constraints often remain informal and entangled in prompts and ad-hoc checks. This raises an architectural question: can we reimagine AI systems as decomposable structures in which perception, reasoning, and decision-making are articulated as interoperable, independently evolvable layers?

IV. SYMBOLIC SEAMS FOR COMPOSABLE ARCHITECTURE

Decomposition is the natural response to the architectural brittleness introduced by entanglement, but it requires stable boundaries that preserve the ability to reason about, evolve, and govern a system under change. As Fig. 1 shows, neither the monolithic nor the prompt-orchestrated posture provides such boundaries.

A. Overview

Symbolic seams are our envisioned solution to these challenges of current AI-based systems. A symbolic seam is a connector-level constraint checkpoint that emits typed boundary objects, evaluates versioned constraint bundles, and records decision traces. Seams are not components or modules themselves, but commitments that externalize constraints and trace decisions between components.

Figure 2a makes this architecture concrete. Learned modules ($\theta_1$–$\theta_4$) handle data-driven tasks such as perception, classification, and generation, while symbolic seam connectors ($\phi_A$–$\phi_D$) mediate every crossing between them. Each $\phi$ validates boundary objects against the active constraint bundle and emits a decision trace before data passes to the next module. When a change arrives ($\Delta$ in the figure), its impact is bounded by the surrounding seams: the dashed circle around $\theta_3$ shows that only the affected module and its adjacent contracts require revalidation, leaving the rest of the assembly unaffected.

Figure 2b illustrates the temporal consequence. On the left (time $t$), modules $\theta_1$–$\theta_4$ are wired through seams $\phi_A$–$\phi_D$. On the right (time $t+1$), after a change, $\theta_3$ has been replaced by an updated version $\theta'_3$ (a retrained, fine-tuned, or swapped variant), accompanied by a versioned connector-level update $\phi'_B$. Seams form the system's durable, versioned skeleton, providing stable contract behavior against which each new module version can be validated independently. Seams typically wrap learnable components, but constraints can also be partially internalized when they shape a component's structure or learning objective.

Seams behave as connector contracts (Figure 3): explicit interfaces that bundle typed boundary objects, constraint configuration, and trace schemas to govern interaction and change propagation. They guarantee checkability of the declared interface but not completeness of all downstream reasoning.
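The bounded-change property in Fig. 2 can be illustrated with a minimal Python sketch. It assumes a hypothetical linear wiring in which $\theta_1$ writes to $\phi_A$, $\theta_2$ to $\phi_B$, and so on, with each module reading from the seam before it; the exact wiring, the function `revalidation_scope`, and the `interface_changed` flag are illustrative assumptions rather than part of the proposal. If the replaced module keeps its boundary-object contract, only the module and its adjacent seams enter the revalidation scope; if its output contract changes, the seam no longer acts as a barrier and everything downstream is pulled in.

```python
from typing import Sequence, Set


def revalidation_scope(modules: Sequence[str], seams: Sequence[str],
                       replaced: str, interface_changed: bool) -> Set[str]:
    """Return the modules and seams to revalidate after replacing one module.

    Hypothetical wiring: modules[i] reads from seams[i - 1] (if i > 0)
    and writes to seams[i], so len(seams) == len(modules).
    """
    if len(seams) != len(modules):
        raise ValueError("expected one output seam per module")
    i = list(modules).index(replaced)
    # The replaced module and the seam it writes to are always in scope.
    scope = {replaced, seams[i]}
    # The seam it reads from must re-check that the new module accepts its boundary objects.
    if i > 0:
        scope.add(seams[i - 1])
    if interface_changed:
        # A changed output contract breaks the abstraction barrier:
        # every downstream module and seam has to be revalidated too.
        scope.update(modules[i + 1:])
        scope.update(seams[i + 1:])
    return scope


modules = ["theta1", "theta2", "theta3", "theta4"]
seams = ["phi_A", "phi_B", "phi_C", "phi_D"]
print(sorted(revalidation_scope(modules, seams, "theta3", interface_changed=False)))
# ['phi_B', 'phi_C', 'theta3']
print(sorted(revalidation_scope(modules, seams, "theta3", interface_changed=True)))
# ['phi_B', 'phi_C', 'phi_D', 'theta3', 'theta4']
```

Here the decision about whether a replacement changes the interface is passed in by hand; in a seam-based design it is exactly what checking the new module against the seam contract is meant to establish.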
Figure 3 scopes the seam's interface commitments; it does not give a full formal semantics. For stochastic components, "typed" means that boundary objects declare not only structure (fields, types, ranges) but also distributional properties (e.g., calibration bounds, coverage guarantees) that the seam can validate at the interface.

Fig. 2: Proposed posture. (a) Symbolic seams posture: within a symbolic-seams-designed AI component (surrounded by UI/API, data pipeline, serving, and evaluation/monitoring), learned modules ($\theta_1$–$\theta_4$) are separated by symbolic seam connectors ($\phi_A$–$\phi_D$) that checkpoint constraints through boundary objects, constraint bundles, and decision traces; the dashed circle shows change $\Delta$ bounded at the affected seam. (b) Change propagation from $t$ to $t+1$: seams persist across evolution while modules are replaced ($\theta'$ and $\phi'$ are the updated versions, moving from v1 to v2); the effect of change $\Delta$ is contained.

$$
\begin{aligned}
\mathrm{Seam}(\phi) &\triangleq \langle T_{\mathrm{in}},\, T_{\mathrm{out}},\, C(v),\, S_{\mathrm{trace}} \rangle \\
\phi &: T_{\mathrm{in}} \times C(v) \rightarrow T_{\mathrm{out}} \times \mathrm{Status} \times \mathrm{Trace} \\
\mathrm{Trace} &\triangleq \langle v,\, \mathit{evidence} \rangle
\end{aligned}
$$

Fig. 3: A seam ($\phi$) commits to typed boundary objects ($T_{\mathrm{in}}$, $T_{\mathrm{out}}$), a versioned constraint bundle $C(v)$, and a trace schema $S_{\mathrm{trace}}$. For stochastic components, the contract may specify distributional properties.

TABLE I: Comparison of entanglement postures.

| | Model-Centric | Prompt-Orchestrated | Symbolic Seams |
|---|---|---|---|
| Contracts | Implicit | Informal (str) | Typed, versioned |
| Evolution | Retrain all | Edit prompts | Seam-level config |
| Change visibility | None | Unclear | Seam validation |

Symbolic seams differ from prior work in three respects, summarized in Table I: (1) they specify interaction at the connector level rather than as component-level mechanisms, (2) they treat constraints and traces as versioned, governed artifacts rather than task-specific logic, and (3) they make bounded change propagation an explicit architectural property rather than an emergent side effect.
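A minimal Python sketch of the Fig. 3 signature, $\phi : T_{\mathrm{in}} \times C(v) \rightarrow T_{\mathrm{out}} \times \mathrm{Status} \times \mathrm{Trace}$, may help fix intuitions. It assumes that $T_{\mathrm{in}}$ and $T_{\mathrm{out}}$ are plain field-to-type maps, that a constraint bundle $C(v)$ is a versioned dictionary of named predicates, and that a seam projects an accepted input onto the $T_{\mathrm{out}}$ fields. All class and field names are hypothetical, and distributional properties for stochastic components are left out.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Dict, Optional, Tuple

BoundaryObject = Dict[str, Any]


class Status(Enum):
    PASS = "pass"
    REJECT = "reject"


@dataclass(frozen=True)
class ConstraintBundle:
    """C(v): named predicates over a boundary object, tagged with version v."""
    version: str
    rules: Dict[str, Callable[[BoundaryObject], bool]]


@dataclass(frozen=True)
class Trace:
    """Trace = <v, evidence>: the bundle version that ran and each check's outcome."""
    version: str
    evidence: Dict[str, bool]


@dataclass(frozen=True)
class Seam:
    """phi: T_in x C(v) -> T_out x Status x Trace (T_out fields assumed to be a subset of T_in)."""
    t_in: Dict[str, type]
    t_out: Dict[str, type]

    def __call__(self, obj: BoundaryObject, bundle: ConstraintBundle
                 ) -> Tuple[Optional[BoundaryObject], Status, Trace]:
        evidence: Dict[str, bool] = {}
        # Structural check: each declared input field must be present with its declared type.
        for name, typ in self.t_in.items():
            evidence[f"type:{name}"] = isinstance(obj.get(name), typ)
        # Constraints are evaluated only on well-typed objects.
        if all(evidence.values()):
            for rule_name, rule in bundle.rules.items():
                evidence[f"rule:{rule_name}"] = bool(rule(obj))
        trace = Trace(bundle.version, evidence)
        if not all(evidence.values()):
            return None, Status.REJECT, trace
        # Only the declared output fields cross the seam.
        return {name: obj[name] for name in self.t_out}, Status.PASS, trace


seam = Seam(t_in={"action": str, "amount": float}, t_out={"action": str})
bundle = ConstraintBundle("v1", {"amount_positive": lambda o: o["amount"] > 0})
out, status, trace = seam({"action": "refund", "amount": 120.0}, bundle)
print(out, status.value, trace.evidence)
# {'action': 'refund'} pass {'type:action': True, 'type:amount': True, 'rule:amount_positive': True}
```

The trace is returned on both the accept and reject paths, so every crossing leaves a record tied to the bundle version that judged it.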
The shift is from exchanging intermediate representations to governing interaction, evolution, and validation at architectural boundaries.

B. Design Commitments

We propose four design commitments that specify the interface properties a symbolic seam must uphold.

Typed, Inspectable Boundary Objects: Components communicate via explicit boundary objects with declared structure and semantics, rather than through implicit latent couplings. A boundary object may be symbolic, neural, or hybrid, but it must be inspectable and testable as an interface artifact [4], [7].

Evolvable Constraint Configuration: Behavioral constraints (policies, domain rules, safety boundaries) are externalized as first-class configuration artifacts that can be injected, modified, and rolled back without full end-to-end retraining. This turns many behavior changes from a re-optimization problem into a governed configuration change.

Externalized Reasoning Traces (Decision Receipts): The system emits intermediate reasoning checkpoints during normal operation, producing bounded, checkable traces for debugging, audit, and governance. Traces are structured execution artifacts (e.g., constraint checks and evidence references), not necessarily free-form natural-language rationales. This makes transparency a system property rather than a retroactive interpretation of opaque outputs.

Bounded Change Propagation: Symbolic seams function as abstraction barriers: unless the interface changes, changes behind a seam have diagnosable and bounded impact on other components. This enables localized evolution (e.g., replacing a perception component) while preserving system-level predictability.

C. Illustrative Scenario: Enterprise Policy Assistant

Consider the enterprise assistant sketched in Section I, which answers questions, drafts tickets, and triggers workflows under evolving constraints. In a model-centric design, constraints are absorbed into training data, fine-tuning, or monolithic prompting patterns, making updates costly and difficult to validate. In an orchestrated design, policies are implemented as prompt fragments and scattered checks; workflows are modularized, but constraint semantics remain fragile and difficult to govern.

Under symbolic seam commitments, the assistant is organized around explicit contracts at connector boundaries: each stage emits a typed boundary object that can be validated and logged, rather than keeping semantics implicit in prompts. Boundary objects and traces live at the seam: components may produce internal intermediate representations, but the seam commits to externalized, inspectable artifacts. The seam governs validation, versioning, and trace emission, even when hybrid components emit boundary objects directly. When a policy changes (e.g., a new approval rule), the update becomes a constraint-bundle version change with seam-level regression checks over boundary objects and decision records. If the boundary-object contract remains stable, learned components can be swapped or retrained behind the seam without rewriting downstream policy logic.

For a policy update, the postures diverge sharply: monolithic approaches require retraining with full regression; prompt-orchestrated approaches edit prompts without validation contracts; symbolic seam-based approaches version a constraint bundle with seam-scoped regression and rollback. Symbolic seams primarily make run-time constraints explicit and governable, though the same commitments support compile-time approaches in which constraints shape a component's learning objective [28], [29].
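The policy-update path of the scenario can be sketched in Python under explicit assumptions. A hypothetical `BundleRegistry` stores constraint bundles by version and keeps an activation history for rollback, and the "seam-level regression checks over boundary objects and decision records" are approximated by replaying recorded boundary objects under the old and the new bundle and listing the decisions that flip. The approval rule, the field names, and the recorded objects are invented for illustration.

```python
from typing import Any, Callable, Dict, List

BoundaryObject = Dict[str, Any]
Rule = Callable[[BoundaryObject], bool]


class BundleRegistry:
    """Hypothetical store of versioned constraint bundles with rollback."""

    def __init__(self) -> None:
        self.bundles: Dict[str, Dict[str, Rule]] = {}
        self.history: List[str] = []  # activation order, newest last

    def publish(self, version: str, rules: Dict[str, Rule]) -> None:
        if version in self.bundles:
            raise ValueError(f"bundle {version} already published")  # versions are immutable
        self.bundles[version] = dict(rules)

    def activate(self, version: str) -> None:
        if version not in self.bundles:
            raise KeyError(version)
        self.history.append(version)

    @property
    def active(self) -> str:
        return self.history[-1]

    def rollback(self) -> str:
        """Deactivate the current bundle and return the previously active version."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.active

    def decide(self, version: str, obj: BoundaryObject) -> bool:
        """Allow the object only if every rule in the given bundle holds."""
        return all(rule(obj) for rule in self.bundles[version].values())


def regression(reg: BundleRegistry, old: str, new: str,
               recorded: List[BoundaryObject]) -> List[int]:
    """Replay recorded boundary objects; return indices whose decision differs between versions."""
    return [i for i, obj in enumerate(recorded) if reg.decide(old, obj) != reg.decide(new, obj)]


reg = BundleRegistry()
v1 = {"known_workflow": lambda o: o["workflow"] in {"purchase", "ticket"}}
# v2 adds a hypothetical approval rule for purchases above 1000.
v2 = {**v1, "approval_over_1000": lambda o: o["amount"] <= 1000 or o["approved"]}
reg.publish("v1", v1)
reg.publish("v2", v2)
reg.activate("v1")

recorded = [
    {"workflow": "purchase", "amount": 200.0, "approved": False},
    {"workflow": "purchase", "amount": 5000.0, "approved": False},
    {"workflow": "purchase", "amount": 5000.0, "approved": True},
]
print(regression(reg, "v1", "v2", recorded))  # [1]: only the unapproved large purchase flips
reg.activate("v2")
print(reg.rollback())  # v1
```

The regression output is the reviewable artifact: it lists exactly which past decisions the new policy would change, without touching any learned component.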
Seen through a composability lens, seams enable three disciplined operations: (1) evolve behavior by editing and versioning constraints, (2) replace learnable components behind a seam as long as their boundary-object contract remains stable, and (3) recompose workflows by wiring components through typed boundary objects rather than implicit prompt orchestration. This highlights a key distinction: modularity decomposes execution, whereas composability enables safe recombination under explicit contracts. Components can be replaced, reordered, or constrained with bounded validation as long as the seam contract remains stable. Existing neuro-symbolic approaches demonstrate that neural learning can be combined with explicit symbolic interfaces [13], [19], yet these approaches remain largely domain-specific and require substantial expertise to deploy at scale. The commitments above are architectural standards rather than recipes: closing the gap between these principles and practical tooling remains an open challenge.

V. DISCUSSION AND RESEARCH AGENDA

Symbolic seams offer a complementary direction to the current emphasis on scale and end-to-end optimization, treating constraints, interfaces, and traces as durable architectural artifacts rather than addressing every change through retraining or prompt engineering. Unlike post-hoc explainability methods that infer rationales after the fact, seam-level traces are execution artifacts: intermediate decisions and constraint evaluations are emitted during normal operation and can be validated at the interface, making trace fidelity an architectural requirement. This shifts the question from "why did the model say this?" to "did the system follow the specified constraints and contracts?", turning verification and validation into the primary assurance mechanism.
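The question "did the system follow the specified constraints and contracts?" can be posed mechanically against emitted traces. The Python sketch below assumes a hypothetical trace record format (a seam name, a status, and a map from check name to outcome) and a hypothetical declaration of which checks each seam must report. It flags traces that omit a declared decision point and traces that report a pass even though one of their recorded checks failed; whether a trace faithfully reflects the underlying computation is not checked here.

```python
from typing import Any, Dict, List, Set, Tuple

TraceRecord = Dict[str, Any]  # {"seam": str, "status": "pass" | "reject", "evidence": {check: bool}}


def audit(declared: Dict[str, Set[str]],
          traces: List[TraceRecord]) -> List[Tuple[int, str, List[str]]]:
    """Return (trace index, finding, check names) for every contract violation found."""
    findings: List[Tuple[int, str, List[str]]] = []
    for i, rec in enumerate(traces):
        evidence: Dict[str, bool] = rec["evidence"]
        # Completeness: every decision point declared for this seam must appear in the trace.
        missing = declared.get(rec["seam"], set()) - set(evidence)
        if missing:
            findings.append((i, "incomplete", sorted(missing)))
            continue
        # Consistency: a seam must not report "pass" if any recorded check failed.
        failed = sorted(name for name, ok in evidence.items() if not ok)
        if rec["status"] == "pass" and failed:
            findings.append((i, "pass_despite_failed_check", failed))
    return findings


declared = {"phi_A": {"schema", "access_control"}, "phi_B": {"approval_rule"}}
traces = [
    {"seam": "phi_A", "status": "pass", "evidence": {"schema": True, "access_control": True}},
    {"seam": "phi_A", "status": "pass", "evidence": {"schema": True}},
    {"seam": "phi_B", "status": "pass", "evidence": {"approval_rule": False}},
]
for finding in audit(declared, traces):
    print(finding)
# (1, 'incomplete', ['access_control'])
# (2, 'pass_despite_failed_check', ['approval_rule'])
```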
Rather than relying on interpretability alone, engineers can check contract satisfaction at each seam, validate trace completeness against declared decision points, and regression-test constraint enforcement when components change.

To make the above commitments actionable, we outline a research agenda focused on turning seams, constraints, and traces into engineering artifacts, with the following concrete challenges:
(1) Interface formalization: How can contracts capture stochastic outputs while remaining checkable?
(2) Change-impact reasoning: How can engineers estimate the behavioral impact of changing constraints or replacing components without exhaustive testing or expensive retraining?
(3) Operational observability and trace fidelity: How can we ensure that reasoning traces remain faithful to the actual decision process and operationally reliable as models, constraints, and components evolve?
(4) Tooling for constraint governance: What are the analogues of version control, diffing, and rollback for constraint specifications and their enforced implementations?

Evaluation can borrow from self-adaptive systems by treating policy updates and component swaps as adaptation scenarios and measuring change locality, assurance effort, and contract breakage rate across architectural postures.

That said, composability does not come for free: end-to-end optimization can yield higher raw performance precisely because it exploits cross-component entanglement as a learnable parameter. The architectural question is therefore not whether to decompose, but where to place seams so that the benefits of adaptability, explainability, and governance outweigh the cost of explicit interfaces and constraints, a central trade-off of treating AI as a living architecture.

REFERENCES

[1] D. L. Parnas, "On the criteria to be used in decomposing systems into modules," Commun. ACM, vol. 15, no. 12, pp. 1053–1058, Dec. 1972.
[2] C. Y. Baldwin and K. B. Clark, Design Rules: The Power of Modularity, Volume 1. Cambridge, MA, USA: MIT Press, 1999.
[3] E. W. Dijkstra, Selected Writings on Computing: A Personal Perspective. Springer Science & Business Media, 2012.
[4] M. Mitchell et al., "Model cards for model reporting," in Proceedings of the Conference on Fairness, Accountability, and Transparency, ser. FAT* '19. New York, NY, USA: Association for Computing Machinery, 2019, pp. 220–229.
[5] D. Sculley et al., "Hidden technical debt in machine learning systems," NeurIPS, vol. 28, 2015.
[6] G. A. Lewis et al., "Software Architecture and Machine Learning (Dagstuhl Seminar 23302)," Dagstuhl Reports, vol. 13, no. 7, pp. 166–188, 2024.
[7] L. J. Bass et al., Software Architecture in Practice, ser. SEI Series in Software Engineering. Addison-Wesley-Longman, 1999.
[8] H. A. Kautz, "The third AI summer: AAAI Robert S. Engelmore memorial lecture," AI Magazine, vol. 43, no. 1, pp. 105–125, 2022.
[9] A. d. Garcez and L. C. Lamb, "Neurosymbolic AI: The 3rd wave," Artificial Intelligence Review, vol. 56, no. 11, pp. 12387–12406, 2023.
[10] V. Belle, "On the relevance of logic for artificial intelligence, and the promise of neurosymbolic learning," Neurosymbolic Artificial Intelligence, vol. 1, 2025.
[11] I. Ozkaya, "An AI engineer versus a software engineer," IEEE Software, vol. 39, no. 6, pp. 4–7, Nov. 2022.
[12] A. Mileo, "Towards a neuro-symbolic cycle for human-centered explainability," Neurosymbolic Artificial Intelligence, vol. 1, pp. NAI–240740, 2025.
[13] S. Badreddine et al., "Logic tensor networks," Artificial Intelligence, vol. 303, p. 103649, 2022.
[14] H. Chase, "LangChain," Oct. 2022. [Online]. Available: https://github.com/langchain-ai/langchain
[15] O. Khattab et al., "DSPy: Compiling declarative language model calls into self-improving pipelines," CoRR, vol. abs/2310.03714, 2023.
[16] D. OBrien et al., "23 shades of self-admitted technical debt: an empirical study on machine learning software," in FSE, ser. ESEC/FSE 2022. New York, NY, USA: Association for Computing Machinery, 2022, pp. 734–746.
[17] R. Nazir et al., "Architecting ML-enabled systems: Challenges, best practices, and design decisions," Journal of Systems and Software, vol. 207, 2024.
[18] G. Recupito et al., "Technical debt in AI-enabled systems: On the prevalence, severity, impact, and management strategies for code and architecture," Journal of Systems and Software, vol. 216, 2024.
[19] R. Manhaeve et al., "DeepProbLog: Neural probabilistic logic programming," in NeurIPS, vol. 31. Curran Associates, Inc., 2018.
[20] J. Kephart and D. Chess, "The vision of autonomic computing," Computer, vol. 36, no. 1, pp. 41–50, 2003.
[21] R. Allen and D. Garlan, "A formal basis for architectural connection," ACM Trans. Softw. Eng. Methodol., vol. 6, no. 3, pp. 213–249, Jul. 1997.
[22] C. Rudin et al., "Interpretable machine learning: Fundamental principles and 10 grand challenges," CoRR, vol. abs/2103.11251, 2021.
[23] C. Rudin, "Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead," Nature Machine Intelligence, vol. 1, no. 5, pp. 206–215, May 2019.
[24] A. Bhatia et al., An Empirical Study of Self-Admitted Technical Debt in Machine Learning Software.
[25] D. Vela, A. Sharp, R. Zhang, T. Nguyen, A. Hoang, and O. S. Pianykh, "Temporal quality degradation in AI models," Scientific Reports, vol. 12, no. 1, p. 11654, Jul. 2022.
[26] T. Glasmachers, "Limits of end-to-end learning," in Proceedings of the Ninth Asian Conference on Machine Learning, vol. 77. Yonsei University, Seoul, Republic of Korea: PMLR, 15–17 Nov. 2017, pp. 17–32.
[27] R. Pan and H. Rajan, "On decomposing a deep neural network into modules," in FSE. ACM, 2020, pp. 889–900.
[28] S. N. Tran and A. S. d'Avila Garcez, "Logical Boltzmann machines," CoRR, vol. abs/2112.05841, 2021.
[29] Y. Du et al., "Compositional visual generation with energy based models," in NeurIPS 2020, December 6–12, 2020, virtual, 2020.
