Generative Semantic HARQ: Latent-Space Text Retransmission and Combining


Authors: Bin Han, Yulin Hu, Hans D. Schotten

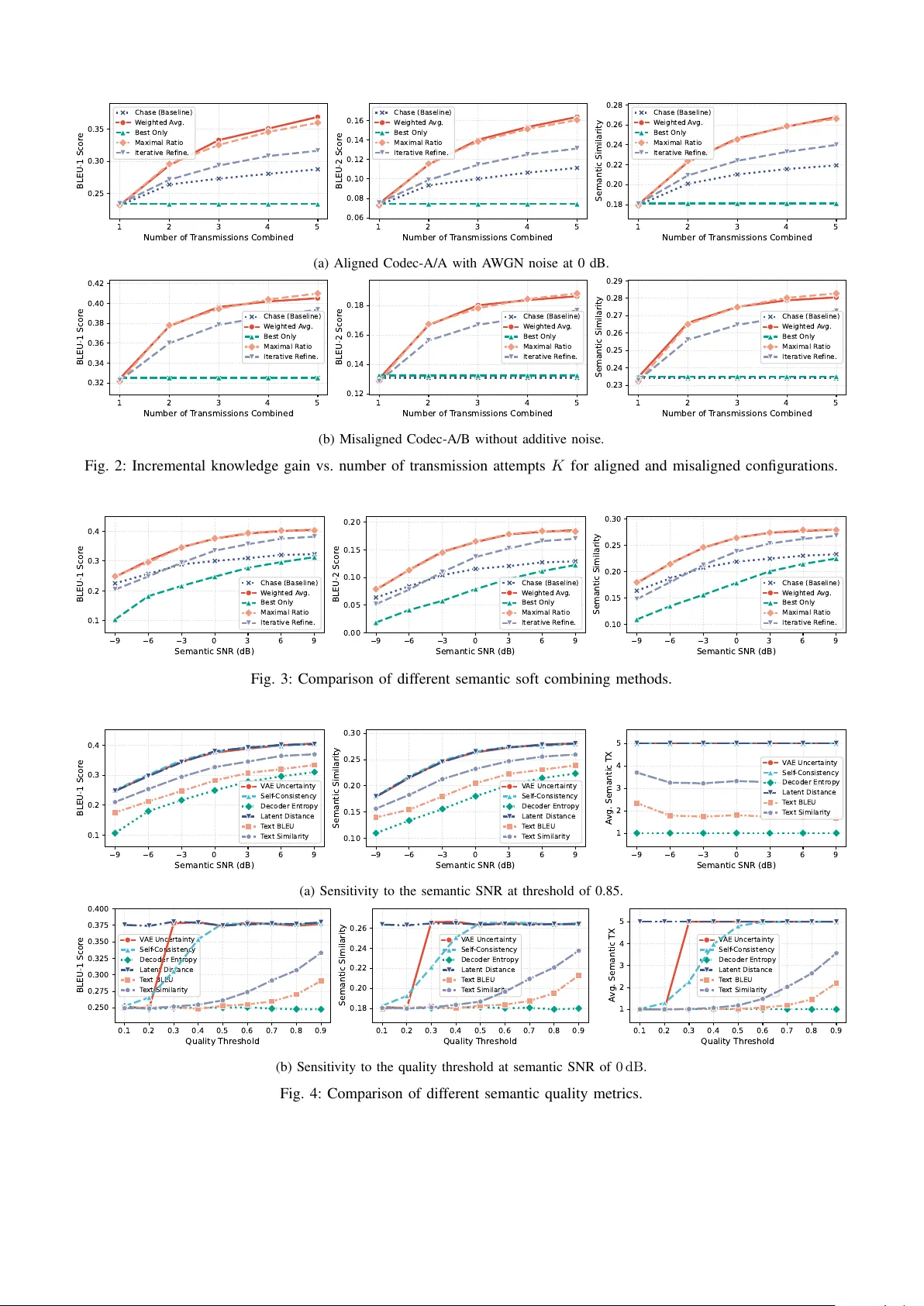

Affiliations: Bin Han and Hans D. Schotten, RPTU University Kaiserslautern-Landau; Yulin Hu, Wuhan University; Hans D. Schotten, German Research Center for Artificial Intelligence (DFKI GmbH).

Abstract: Semantic communication conveys meaning rather than raw bits, but reliability at the semantic level remains an open challenge. We propose a semantic-level hybrid automatic repeat request (HARQ) framework for text communication, in which a Transformer-variational autoencoder (VAE) codec operates as a lightweight overlay on the conventional protocol stack. The stochastic encoder inherently generates diverse latent representations across retransmissions, providing incremental knowledge (IK) from a single model without dedicated protocol design. On the receiver side, a soft quality estimator triggers retransmissions, and a quality-aware combiner merges the received latent vectors within a consistent latent space. We systematically benchmark six semantic quality metrics and four soft combining strategies under hybrid semantic distortion that mixes systematic bias with additive noise. The results suggest that Weighted-Average or MRC-Inspired combining, paired with self-consistency-based HARQ triggering, yields the best performance.

Index Terms: Semantic communications, HARQ, variational autoencoder, quality estimation, soft combining

I. INTRODUCTION

Driven by the rising demand for post-Shannon communication systems, semantic communication (SemCom) has emerged as a promising paradigm that transmits meaning rather than raw bits, achieving higher communication efficiency, especially under bandwidth-constrained conditions [1].
Deep learning-based SemCom systems, such as DeepSC [2] for text and deep joint source-channel coding (JSCC) [3] for images, have demonstrated significant gains over conventional separate source-channel coding by jointly optimizing the encoding and decoding processes end-to-end across the semantic and physical layers. While most SemCom research has focused on visual data (images, videos, point clouds) because of its high compression potential, text-oriented SemCom remains comparatively under-explored. This is despite the growing importance of textual semantics as i) the interface layer between conventional communication systems and emerging token-based multimedia communications, where compact textual descriptions convey visual or audio content, ii) a key modality for cross-modal translation tasks such as text-to-image and text-to-speech generation, and iii) a native representation for the semantic plane in AI-RAN architectures.

To enhance the reliability of SemCom, several works have extended hybrid automatic repeat request (HARQ) mechanisms to the semantic level. Jiang et al. [4] proposed the first end-to-end semantic HARQ for sentence transmission (SCHARQ), employing chase combining and incremental redundancy in the latent space. Zhou et al. [5] introduced incremental knowledge (IK)-based HARQ (IK-HARQ) with adaptive bit-rate control. Beyond text, semantic HARQ has been investigated for image transmission [6], [7], feature transmission [8], [9], video conferencing [10], and cooperative perception [11]. However, three challenges remain. First, unlike conventional HARQ, where a cyclic redundancy check (CRC) on the medium access control (MAC) layer triggers retransmissions and soft combining operates on the physical (PHY) layer, semantic-level HARQ must integrate error detection and correction within the semantic layer itself, which requires new quality assessment methods beyond bit-level checks.
Second, all existing semantic HARQ approaches employ deterministic encoders, so retransmissions carry identical or structurally constrained representations. Third, while individual works propose ad-hoc solutions for quality estimation and combining, no systematic comparison of these methods exists.

In this work, we propose a generative semantic HARQ framework for text communication that addresses these gaps. Our main contributions are:
i) We design a Transformer-variational autoencoder (VAE)-based semantic encoder-decoder that serves as a lightweight overlay on top of conventional communication systems. The stochastic nature of the VAE naturally provides diverse latent representations across retransmissions, enabling IK without explicit protocol design.
ii) We systematically compare six semantic quality metrics, ranging from encoder uncertainty and latent-space distance to round-trip consistency checks, as retransmission triggers, benchmarking their effectiveness under the same experimental conditions.
iii) We systematically compare four semantic combining strategies, from softmax-weighted averaging to iterative refinement, against the baseline of chase combining, evaluating their ability to exploit multiple received latent representations.

The remainder of this paper is organized as follows. Section II presents the proposed framework, including the system architecture, quality estimation methods, and combining strategies. Section III provides the performance evaluation. Section IV concludes the paper.

II. PROPOSED APPROACH

A. System Architecture

The proposed system architecture is illustrated in Fig. 1. A semantic codec pair operates as a lightweight overlay on top of the conventional communication stack.
The semantic encoder maps a source sentence $s$ into a compact latent vector $\mathbf{z} \in \mathbb{R}^D$, where $D$ is the latent dimension. This vector is transmitted through the conventional PHY chain (channel coding, modulation, etc.) and received as $\tilde{\mathbf{z}}$. On the receiver side, a semantic decoder reconstructs the sentence as $\hat{s} = f_{\mathrm{dec}}(\tilde{\mathbf{z}})$.

At the lower layers, conventional channel coding combined with CRC-based HARQ generally provides sufficient integrity of the data packets, so bit errors rarely propagate upward into the semantic layer and are negligible in practice. Nevertheless, the received latent $\tilde{\mathbf{z}}$ may still differ from the transmitted $\mathbf{z}$ because of distortions that originate within or above the semantic layer itself, which motivates a dedicated semantic-level HARQ mechanism. We identify two categories of such distortion, which may coexist.

The first is unbiased, additive distortion, which is approximately zero-mean and i.i.d. across transmissions. Sources include quantization and rounding errors from fixed-point or reduced-precision inference on edge devices, residual channel noise that survives PHY-layer error correction but still perturbs the latent, and noise injected intentionally, e.g., for differential privacy.

The second category is biased distortion, which introduces a systematic shift in the latent space and is not i.i.d. across transmissions. Sources include encoder-decoder misalignment due to asynchronous model updates (e.g., one side is updated while the other retains an older version), deployment asymmetry where the encoder and decoder run on heterogeneous hardware with different model variants (e.g., full-precision vs. distilled), and domain shift between the training distribution and the deployment context. Biased distortion is particularly challenging because it persists across retransmissions and cannot be mitigated by simple averaging, i.e., by chase combining of identical latent representations.
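The claim that averaging helps against the first category but not the second can be checked numerically. The sketch below is a minimal, stdlib-only Python illustration under assumed conditions, not the paper's simulation: a random 256-dimensional vector stands in for the latent $\mathbf{z}$, unbiased distortion is i.i.d. Gaussian noise scaled by the semantic-SNR definition given in Section III-A, and biased distortion is modeled as a hypothetical constant offset added in every round. The function names are illustrative only.

```python
import math
import random


def add_semantic_noise(z, snr_db, rng):
    """Add i.i.d. Gaussian noise to latent z at the given semantic SNR (dB).

    SNR = 10*log10(P_z / sigma_n^2), with P_z the average per-dimension power of z.
    """
    p_z = sum(v * v for v in z) / len(z)
    sigma_n = math.sqrt(p_z / 10 ** (snr_db / 10))
    return [v + rng.gauss(0.0, sigma_n) for v in z]


def average(vectors):
    """Element-wise mean of equally long vectors (chase-style averaging)."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def mse(a, b):
    """Mean squared error per dimension."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    rng = random.Random(0)
    z = [rng.gauss(0.0, 1.0) for _ in range(256)]  # stand-in latent, D = 256
    offset = 0.5  # hypothetical systematic shift, identical in every round
    for k in (1, 5, 25):
        noisy = [add_semantic_noise(z, 0.0, rng) for _ in range(k)]
        biased = [[v + offset for v in add_semantic_noise(z, 0.0, rng)] for _ in range(k)]
        # Unbiased error shrinks roughly as 1/K; biased error levels off near offset**2.
        print(k, round(mse(average(noisy), z), 3), round(mse(average(biased), z), 3))
```

At 0 dB the noise variance equals $P_z$ (about 1 here), so the unbiased error should fall roughly as $1/K$, while the biased error should level off near the squared offset (0.25) instead of vanishing.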
Effective semantic HARQ therefore requires IK: each retransmission must carry a distinct representation of the same semantic content, so that the combiner can exploit diversity rather than merely averaging out noise. This fundamentally requires a semantic encoder whose output varies across retransmissions of the same source, which motivates the generative codec design presented next.

The semantic HARQ operates as follows. After each transmission round $k$, a quality estimator computes a score $q_k \in [0,1]$ for the received latent $\tilde{\mathbf{z}}_k$. If $q_k$ falls below a predefined threshold $q_{\mathrm{th}}$, a NACK triggers a retransmission. Once an ACK is issued or the maximum number of rounds is reached, the receiver combines all $K$ received latents $\{\tilde{\mathbf{z}}_1, \ldots, \tilde{\mathbf{z}}_K\}$ into $\hat{\mathbf{z}}$ and decodes the final output $\hat{s} = f_{\mathrm{dec}}(\hat{\mathbf{z}})$. The designs of the codec, quality estimator, and combiner are detailed in the following subsections.

B. Generative Semantic Codec

As established above, effective semantic HARQ relies on IK, i.e., each retransmission must carry a structurally distinct representation of the same semantic content. A straightforward realization would employ multiple, separately trained encoders, analogous to incremental redundancy (IR) in lower-layer HARQ, but this multiplies model storage and maintenance costs at the transmitter. We instead adopt a Transformer-VAE architecture whose single stochastic encoder inherently produces diverse latent representations via random sampling. Because every sample is drawn from the same learned latent space $\mathbb{R}^D$, the semantic-to-latent mapping remains consistent across retransmissions, and all received vectors $\{\tilde{\mathbf{z}}_1, \ldots, \tilde{\mathbf{z}}_K\}$ reside in the same space. Combining therefore reduces to a fixed-dimensional vector operation whose output can be fed directly to the same decoder, regardless of the number of retransmissions $K$.
This avoids the dimensionality growth that would arise from concatenating heterogeneous representations. Such concatenation would require $K$-specific decoders at the receiver and impose a hard cap on the maximum number of retransmissions, since each encoder variant must be provisioned in advance. The consistent latent space also simplifies quality estimation, as all received vectors are directly comparable.

The encoder processes a source sentence $s$ through tokenization, embedding with positional encoding, and $L$ layers of multi-head self-attention with feed-forward networks. The resulting contextualized representations are mean-pooled and projected into the VAE latent space:
$$(\boldsymbol{\mu}, \log \boldsymbol{\sigma}^2) = f_{\mathrm{enc}}(s), \qquad \mathbf{z} = \boldsymbol{\mu} + \boldsymbol{\sigma} \odot \boldsymbol{\epsilon}, \qquad \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}), \tag{1}$$
where $\odot$ denotes the element-wise product. The reparameterization enables gradient-based training while maintaining stochasticity. Each retransmission draws a fresh noise sample $\boldsymbol{\epsilon}$, producing a distinct latent $\mathbf{z}_k$ that encodes the same semantic content at a different point of the latent space.

The decoder projects the received latent $\tilde{\mathbf{z}}$ into a cross-attention memory and an input-injection embedding, then generates the output sentence autoregressively via $L$ Transformer decoder layers with causal masking. The procedures are detailed in Algorithms 1 and 2. In both algorithms, TOK(·) and DETOK(·) denote tokenization and detokenization with respect to the vocabulary $\mathcal{V}$; EMB(·) is the shared token embedding layer; PE(·) adds sinusoidal positional encoding; TFENC(·) and TFDEC(·) denote the $L$-layer Transformer encoder and decoder blocks; POOL(·) performs mean pooling over non-padded positions; and ⟨S⟩, ⟨E⟩, ⟨P⟩ are the start-of-sequence, end-of-sequence, and padding tokens. The binary padding mask $M_{\mathrm{pad}}$ indicates non-padded positions.
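The sampling step in (1) is what supplies IK: repeated calls with the same encoder statistics return different latents that scatter around the same mean. Below is a minimal plain-Python sketch, assuming the encoder has already produced $\boldsymbol{\mu}$ and $\log\boldsymbol{\sigma}^2$ as lists of floats; the function names are hypothetical. It also includes the per-dimension Gaussian KL divergence and, as an assumption about the free-bits term of (3), the common variant that clamps each dimension's KL from below at $\lambda_{\mathrm{free}}$ (0.25 nats/dim in Table III).

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Eq. (1): z = mu + sigma * eps, eps ~ N(0, I), with sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_per_dim(mu, log_var):
    """KL(N(mu_d, sigma_d^2) || N(0, 1)) for each dimension d, in nats."""
    return [-0.5 * (1.0 + lv - m * m - math.exp(lv)) for m, lv in zip(mu, log_var)]


def free_bits_kl(mu, log_var, lambda_free=0.25):
    """Sum of per-dimension KL terms, each clamped from below at lambda_free (assumed form)."""
    return sum(max(kl, lambda_free) for kl in kl_per_dim(mu, log_var))


if __name__ == "__main__":
    rng = random.Random(42)
    mu, log_var = [0.3, -1.2, 0.8], [math.log(0.04)] * 3  # sigma = 0.2 per dimension
    # Each "retransmission" draws a fresh eps: same semantics (mu), different latent.
    for k in range(1, 4):
        print(k, [round(v, 3) for v in reparameterize(mu, log_var, rng)])
    print(free_bits_kl(mu, log_var))
```

Because every draw shares the same $\boldsymbol{\mu}$, the received vectors stay directly comparable, which is the property the combiners rely on. With the clamp, a dimension whose KL is already below $\lambda_{\mathrm{free}}$ contributes a constant, so the optimizer gains nothing from collapsing it further toward the prior.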
The model is trained end-to-end by minimizing a combined reconstruction and Kullback-Leibler (KL) divergence loss over an additive white Gaussian noise (AWGN) channel:
$$\mathcal{L} = \mathcal{L}_{\mathrm{recon}} + \beta \cdot \mathcal{L}_{\mathrm{KL}}, \tag{2}$$
where $\mathcal{L}_{\mathrm{recon}}$ is the cross-entropy between the decoder output and the target tokens, and the KL term with free-bits regularization is
$$\mathcal{L}_{\mathrm{KL}} = \sum_{d=1}^{D} \max\left( -\frac{1}{2}\left(1 + \log \sigma_d^2 - \mu_d^2 - \sigma_d^2\right),\ \lambda_{\mathrm{free}} \right). \tag{3}$$
The weighting factor $\beta$ follows a linear annealing schedule to prevent posterior collapse. During each training step, the signal-to-noise ratio (SNR) is randomly sampled from a predefined set $\mathcal{S}$ to ensure robustness across channel conditions. The training and validation procedures are summarized in Algorithm 3, where REPARAM(·) denotes the reparameterization trick in (1), AWGN(·) adds channel noise at the given SNR, and CLIPGRAD(·) clips gradients by norm.

Fig. 1: The proposed framework of semantic-level HARQ as a lightweight overlay on conventional communication systems.

Algorithm 1: Semantic Encoding (Transmitter)
Input: source sentence s, vocabulary V
Output: latent vector z ∈ R^D
    t ← TOK(s, V)                                // [⟨S⟩, w_1, ..., w_n, ⟨E⟩, ⟨P⟩, ...]
    X ← EMB(t) · √d_model + PE(|t|)
    H ← TFENC(X, M_pad)                          // L self-attention layers
    h ← POOL(H, M_pad)
    μ ← W_μ h + b_μ;  log σ² ← W_σ h + b_σ
    ε ~ N(0, I)
    z ← μ + exp(0.5 · log σ²) ⊙ ε
    return z

Algorithm 2: Semantic Decoding (Receiver)
Input: received latent z̃, vocabulary V
Output: reconstructed sentence ŝ
    m ← W_proj z̃                                 // cross-attention memory
    z_emb ← W_emb z̃                              // input injection
    out ← [⟨S⟩]
    for t = 1, 2, ..., t_max do
        Y ← EMB(out) · √d_model + z_emb + PE(t)
        D ← TFDEC(Y, m, M_causal)
        w_next ← argmax W_out D[t]
        out ← out ∥ [w_next]
        if w_next = ⟨E⟩ then break
    end
    ŝ ← DETOK(out, V)
    return ŝ

Algorithm 3: Training and Validation
Input: D_train, D_val, SNR set S, max epochs N_e, patience P
    θ ← INITPARAMS(); ϕ ← ADAMW(θ); η ← REDUCELR(ϕ)
    m* ← −∞; c ← 0                               // best metric; stall counter
    for e = 1, ..., N_e do
        β ← ANNEAL(e)                            // KL weight warm-up
        for each (s, t_in, t_out) ∈ D_train do
            γ ← SAMPLE(S)                        // random SNR
            (μ, log σ²) ← f_enc(s)
            z ← REPARAM(μ, σ)
            z̃ ← AWGN(z, γ)
            t̂ ← f_dec(z̃, t_in)
            L ← CE(t̂, t_out) + β · L_KL          // Eqs. (2)–(3)
            ∇ ← CLIPGRAD(∇_θ L); θ ← ϕ.STEP(∇)
        end
        m ← EVAL(D_val)                          // BLEU-4, similarity
        η ← UPDATELR(η, m)                       // reduce on plateau
        if m > m* then
            m* ← m; c ← 0; save θ
        else
            c ← c + 1
        end
        if c ≥ P then
            break                                // early stopping
        end
    end

C. Semantic Quality Estimation

In conventional HARQ, retransmission is triggered by a binary CRC that unambiguously detects bit errors. At the semantic level, no such clear-cut criterion exists: semantic meaning is inherently tolerant of minor variations, and an overly strict detector would forfeit the very flexibility that makes SemCom attractive. The challenge is therefore to design a soft quality metric that distinguishes genuine semantic degradation from benign variation. All estimation is performed at the receiver. We consider six metrics that produce a scalar score $q \in [0,1]$ (higher is better), summarized in Table I.

The methods differ in their computational requirements at the receiver. Metrics A and D require re-encoding the decoded sentence to obtain the encoder statistics $\boldsymbol{\mu}$ and $\boldsymbol{\sigma}$, but involve no additional decoding step.
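The scoring rules listed in Table I are cheap once the receiver has the relevant encoder outputs or decoded sentences. The following is a minimal Python sketch of all six, assuming vectors are lists of floats and sentences are whitespace-tokenized strings; the function names are hypothetical. Two assumptions are flagged in the code: Metric E uses a plain single-reference BLEU-1 (brevity penalty times clipped unigram precision), and Metric F treats $J$ as token-set Jaccard similarity, which the text does not specify.

```python
import math
from collections import Counter


def _norm(v):
    return math.sqrt(sum(x * x for x in v))


def vae_uncertainty(sigma):
    """Metric A: q = max(0, 1 - mean_d sigma_d), sigma from re-encoding."""
    return max(0.0, 1.0 - sum(sigma) / len(sigma))


def self_consistency(mu, mu_rt):
    """Metric B: cos(mu, mu') * delta(mu, mu'); assumes non-zero vectors."""
    delta = max(0.0, 1.0 - _norm([a - b for a, b in zip(mu, mu_rt)]) / math.sqrt(len(mu)))
    cos = sum(a * b for a, b in zip(mu, mu_rt)) / (_norm(mu) * _norm(mu_rt))
    return cos * delta


def decoder_agreement(decodes):
    """Metric C: fraction of the N decoded sentences equal to the first one."""
    return sum(s == decodes[0] for s in decodes) / len(decodes)


def latent_distance(z_rx, mu, eps=1e-8):
    """Metric D: q = max(0, 1 - ||z~ - mu|| / (||mu|| + eps))."""
    err = _norm([a - b for a, b in zip(z_rx, mu)])
    return max(0.0, 1.0 - err / (_norm(mu) + eps))


def bleu1(candidate, reference):
    """Metric E (assumed form): brevity penalty x clipped unigram precision."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    overlap = sum((Counter(cand) & Counter(ref)).values())  # clipped unigram matches
    bp = 1.0 if len(cand) > len(ref) else math.exp(1.0 - len(ref) / len(cand))
    return bp * overlap / len(cand)


def jaccard(a, b):
    """Metric F (assumption: J is token-set Jaccard similarity)."""
    sa, sb = set(a.split()), set(b.split())
    union = sa | sb
    return len(sa & sb) / len(union) if union else 1.0


if __name__ == "__main__":
    mu = [0.5, -0.2, 0.1, 0.4]
    print(vae_uncertainty([0.1, 0.2, 0.1, 0.2]))           # Metric A
    print(self_consistency(mu, [0.45, -0.25, 0.1, 0.35]))  # Metric B
    print(latent_distance([0.6, -0.1, 0.0, 0.5], mu))      # Metric D
    s1, s2 = "a man riding a horse", "a man rides a horse"
    print(decoder_agreement([s1, s1, s2]), bleu1(s2, s1), jaccard(s1, s2))
```

Only Metrics A and D operate purely on encoder statistics; B, E, and F additionally need the round-trip decode that produces $\boldsymbol{\mu}'$ and the second sentence, which is where their extra receiver cost comes from.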
Metric C requires only the decoder, running $N$ independent decoding passes to measure output consistency. Metrics B, E, and F perform a full round trip: they decode the received latent, re-encode the result to obtain $\boldsymbol{\mu}'$, and then decode $\boldsymbol{\mu}'$ again to assess consistency at the text level (Metrics E and F, comparing the first decoded sentence $\hat{s}_1$ with the second $\hat{s}_2$) or at the latent level (Metric B). In Metric B, $\delta(\boldsymbol{\mu}, \boldsymbol{\mu}') = \max\left(0,\ 1 - \lVert\boldsymbol{\mu} - \boldsymbol{\mu}'\rVert_2 / \sqrt{D}\right)$ is a normalized distance penalty; in Metric D, $\epsilon = 10^{-8}$ prevents division by zero.

TABLE I: Semantic quality metrics.
Metric | Quality score q | RX overhead
A: VAE Uncertainty | $q = \max\left(0,\ 1 - \frac{1}{D}\sum_{d} \sigma_d\right)$ | Re-enc.
B: Self-Consistency | $q = \cos(\boldsymbol{\mu}, \boldsymbol{\mu}') \cdot \delta(\boldsymbol{\mu}, \boldsymbol{\mu}')$ | Re-enc. + dec.
C: Decoder Entropy | $q = \frac{1}{N}\sum_{n} \mathbb{1}\left[\hat{s}^{(n)} = \hat{s}^{(1)}\right]$ | $N \times$ dec.
D: Latent Distance | $q = \max\left(0,\ 1 - \frac{\lVert\tilde{\mathbf{z}} - \boldsymbol{\mu}\rVert}{\lVert\boldsymbol{\mu}\rVert + \epsilon}\right)$ | Re-enc.
E: Text BLEU | $q = \mathrm{BLEU}\text{-}1(\hat{s}_1, \hat{s}_2)$ | Re-enc. + dec.
F: Text Similarity | $q = J(\hat{s}_1, \hat{s}_2)$ | Re-enc. + dec.

D. Semantic Combining

Because the VAE encoder maps every retransmission into the same latent space $\mathbb{R}^D$ (Section II-B), the combiner's task reduces to merging $K$ vectors that share a common coordinate system into a single estimate $\hat{\mathbf{z}} \in \mathbb{R}^D$, which is then passed to the unchanged decoder. This fixed-dimensional formulation allows us to draw on classical combining principles from PHY-layer diversity reception and adapt them to the semantic domain.

We consider four quality-aware strategies, summarized in Table II. Method A applies softmax-normalized weights derived from the quality scores, providing a smooth weighting that retains every received transmission. Method B can be viewed as an extreme variant that zeros out all weights except that of the highest-quality transmission.
Method C, inspired by classical maximal ratio combining (MRC), strikes a compromise between Methods A and B: it still incorporates every transmission, but its quadratic weighting rule favors high-quality receptions far more aggressively than the softmax of Method A. On $q_k \in [0,1]$, the softmax weight ratio between any two receptions, $e^{q_i - q_j}$, can never exceed $e \approx 2.72$, whereas the quadratic ratio $(q_i/q_j)^2$ grows without bound as $q_j \to 0$, so low scores are suppressed much more strongly. Method D takes an iterative approach, starting from the highest-quality reception and progressively blending in the remaining vectors.

TABLE II: Semantic combining methods.
Method | Combining rule | Complexity
A: Weighted-Avg. | $\hat{\mathbf{z}} = \sum_k w_k \tilde{\mathbf{z}}_k,\quad w_k = \frac{e^{q_k}}{\sum_j e^{q_j}}$ | $\mathcal{O}(KD)$
B: Best-Only | $\hat{\mathbf{z}} = \tilde{\mathbf{z}}_{k^*},\quad k^* = \arg\max_k q_k$ | $\mathcal{O}(K)$
C: MRC-Inspired | $\hat{\mathbf{z}} = \frac{\sum_k q_k^2\, \tilde{\mathbf{z}}_k}{\sum_k q_k^2}$ | $\mathcal{O}(KD)$
D: Iterative | $\hat{\mathbf{z}}^{(0)} = \tilde{\mathbf{z}}_{k^*},\quad \hat{\mathbf{z}}^{(t+1)} = \alpha\, \hat{\mathbf{z}}^{(t)} + (1 - \alpha)\, \tilde{\mathbf{z}}_k,\quad \alpha = \frac{q^{(t)}}{q^{(t)} + q_k}$ | $\mathcal{O}(KD)$

III. PERFORMANCE EVALUATION

A. Evaluation Setup

We evaluate on the MS-COCO 2014 Train/Val annotations dataset [12], comprising 400,172 English sentences (5–50 words each) with a vocabulary of $|\mathcal{V}| = 10{,}000$ tokens, split into 320,137 training, 40,017 validation, and 40,018 test sentences (80/10/10%). The model and training hyperparameters are summarized in Table III. Semantic distortion is modeled as i.i.d. Gaussian noise $\mathbf{n} \sim \mathcal{N}(\mathbf{0}, \sigma_n^2 \mathbf{I})$ added to the latent vector, with semantic $\mathrm{SNR} \triangleq 10 \log_{10}(P_z / \sigma_n^2)$ dB, where $P_z = \mathbb{E}[\lVert\mathbf{z}\rVert^2 / D]$ is the average per-dimension latent power. During training, the SNR is sampled uniformly between 0 dB and 20 dB; at test time, the SNR range is extended to −5 dB to 30 dB.

TABLE III: Model and training configuration.
Model parameter | Value | Training parameter | Value
Enc./Dec. layers | 6 / 6 | Optimizer | AdamW
$d_{\mathrm{model}}$ / $d_{\mathrm{ff}}$ | 768 / 3072 | Learning rate | $10^{-4}$
Attention heads | 12 | Batch size | 16
Latent dim. $D$ | 256 | KL annealing $\beta$ | 0.01 → 1.0
Max. seq. length | 64 | Free bits $\lambda_{\mathrm{free}}$ | 0.25 nats/dim
Dropout | 0.1 | Word dropout | 0.5
Pooling | Mean | Label smoothing | 0.1
 | | Early stopping patience | 15

Two model checkpoints are retained from the training process, at epochs 49 and 50, hereafter referred to as Codec-A and Codec-B, respectively. Pairing both sides with Codec-A yields an aligned TX–RX configuration, whereas using Codec-A at the transmitter and Codec-B at the receiver creates a controlled misaligned pair that emulates biased distortion from asynchronous model updates. To isolate the semantic-layer mechanisms, all experiments assume error-free lower-layer transmission (i.e., the PHY and MAC layers introduce no codeword errors). Performance is measured by BLEU (BLEU-1 and BLEU-2) and cosine sentence similarity, each averaged over 100 test sentences with 50 independent trials per sentence.

B. Incremental Knowledge Gain

We first verify that the VAE-based stochastic encoder provides meaningful IK across retransmissions. For this purpose, we sweep the number of forced transmission attempts $K \in \{1, 2, 3, 4, 5\}$ without quality-based triggering and compare the four combining methods from Table II against chase combining (where the same latent vector is used in every retransmission) as the baseline. Two scenarios are evaluated: (i) aligned Codec-A/A with AWGN-modeled semantic noise at SNR = 0 dB, and (ii) misaligned Codec-A/B without additive noise (SNR → ∞), isolating the effect of biased distortion alone. The results are shown in Figs. 2(a) and 2(b), respectively. Under unbiased additive distortion alone, every method except Best-Only benefits from increasing $K$ through the averaging effect, chase combining included; Best-Only's quality scores are too uniformly low at this SNR to support a meaningful selection gain. Against biased distortion caused by model misalignment, however, chase combining provides no benefit, because without additive noise, averaging identical copies simply returns the first reception.
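For reference, the four rules of Table II and the chase baseline used in this comparison can be sketched as below (plain Python, vectors as lists of floats, hypothetical function names). Two details of Method D are not specified in the text and are assumptions here: the remaining receptions are blended in descending quality order, and $q^{(t)}$, the quality of the running estimate, is obtained by re-scoring it with a caller-supplied function.

```python
import math


def _mix(latents, weights):
    """Weighted sum of equally long latent vectors."""
    return [sum(w * z[d] for w, z in zip(weights, latents)) for d in range(len(latents[0]))]


def chase(latents, q=None):
    """Baseline: plain average of the received copies (quality scores ignored)."""
    return _mix(latents, [1.0 / len(latents)] * len(latents))


def weighted_average(latents, q):
    """Method A: softmax weights w_k = exp(q_k) / sum_j exp(q_j)."""
    e = [math.exp(x) for x in q]
    total = sum(e)
    return _mix(latents, [x / total for x in e])


def best_only(latents, q):
    """Method B: keep only the reception with the highest quality score."""
    return list(latents[max(range(len(q)), key=lambda k: q[k])])


def mrc_inspired(latents, q):
    """Method C: weights proportional to q_k^2 (assumes at least one q_k > 0)."""
    total = sum(x * x for x in q)
    return _mix(latents, [x * x / total for x in q])


def iterative(latents, q, score):
    """Method D: start from the best reception and blend in the others.

    Assumptions: remaining receptions are visited in descending quality, and
    q^(t) is obtained by re-scoring the running estimate with score(z).
    """
    order = sorted(range(len(q)), key=lambda k: q[k], reverse=True)
    z_hat = list(latents[order[0]])
    for k in order[1:]:
        q_t = score(z_hat)
        alpha = q_t / (q_t + q[k])
        z_hat = [alpha * a + (1.0 - alpha) * b for a, b in zip(z_hat, latents[k])]
    return z_hat


if __name__ == "__main__":
    latents, q = [[1.0, 0.0], [0.0, 1.0]], [0.9, 0.3]
    print(weighted_average(latents, q))  # softmax weights stay fairly close together
    print(mrc_inspired(latents, q))      # quadratic weights, approx. 0.9 / 0.1
    print(best_only(latents, q), chase(latents))
    print(iterative(latents, q, score=lambda z: 0.9))
```

With $q = (0.9, 0.3)$, the softmax weights come out at roughly 0.65 and 0.35, while the quadratic weights are 0.9 and 0.1, which shows how Method C suppresses the weaker reception more strongly than Method A.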
In both scenarios, the Weighted-Average and MRC-Inspired methods perform comparably and emerge as the top two (with Weighted-Average slightly ahead under AWGN and MRC-Inspired slightly ahead under misalignment), outperforming the Iterative method, which provides an intermediate IK gain.

C. Combining Strategy Comparison

Next, we benchmark the combining strategies under the joint effect of biased and unbiased distortion. The misaligned Codec-A/B pair is used with AWGN-modeled semantic noise, and the semantic SNR is swept from −9 dB to 9 dB. The number of transmissions is fixed to $K = 5$ for all methods, bypassing quality-based triggering so that the combiners are compared under identical input conditions. The same five methods as in Section III-B are evaluated, and the results are shown in Fig. 3. Consistent with the IK gain results, the Weighted-Average and MRC-Inspired methods perform comparably as the top two, followed by the Iterative method. At low SNR, Best-Only performs poorly and falls even below the chase combining baseline, but the gap narrows quickly as the SNR increases.

D. Quality Estimation Comparison

Finally, we evaluate the six quality estimation metrics from Table I in a closed-loop semantic HARQ setting. The combiner is fixed to Weighted-Average (Method A), one of the two best-performing strategies in Section III-C. The misaligned Codec-A/B configuration with AWGN semantic noise is used, with a maximum of $K_{\max} = 5$ attempts per sentence. In addition to BLEU and sentence similarity, the average number of transmissions per sentence is recorded to assess retransmission efficiency. The evaluation proceeds in two stages. First, the quality threshold is fixed at $q_{\mathrm{th}} = 0.85$, and the semantic SNR is swept from −9 dB to 9 dB to compare the estimators' ability to detect semantic degradation across channel conditions (Fig. 4(a)).
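The closed-loop procedure evaluated here, i.e., the ACK/NACK rule of Section II-A with threshold $q_{\mathrm{th}}$ and at most $K_{\max}$ attempts, can be sketched as a short driver loop. In this minimal Python sketch the transmitter, quality estimator, combiner, and decoder are passed in as callables, so any metric from Table I and any combiner from Table II could be plugged in; all names and the toy components in the demo are hypothetical. It returns the decoded output together with the number of transmissions used, the quantity whose per-sentence average is recorded in this experiment.

```python
from typing import Callable, List, Sequence, Tuple

Latent = List[float]


def semantic_harq(
    transmit: Callable[[], Latent],
    quality: Callable[[Latent], float],
    combine: Callable[[Sequence[Latent], Sequence[float]], Latent],
    decode: Callable[[Latent], str],
    q_th: float = 0.85,
    k_max: int = 5,
) -> Tuple[str, int]:
    """Run one closed-loop semantic HARQ exchange for a single sentence."""
    received: List[Latent] = []
    scores: List[float] = []
    for _ in range(k_max):
        z_rx = transmit()  # fresh encoder sample, distorted on the way
        received.append(z_rx)
        scores.append(quality(z_rx))
        if scores[-1] >= q_th:  # ACK: quality is good enough, stop early
            break
        # NACK: request another transmission (until k_max is reached)
    z_hat = combine(received, scores)  # merge every latent received so far
    return decode(z_hat), len(received)


if __name__ == "__main__":
    import random

    rng = random.Random(0)

    def toy_tx():  # hypothetical 1-D "latent": true value 1.0 plus distortion
        return [rng.gauss(1.0, 0.5)]

    def toy_quality(z):  # hypothetical score in [0, 1]: closeness to 1.0
        return max(0.0, 1.0 - abs(z[0] - 1.0))

    def toy_combine(zs, qs):  # plain average, ignoring scores
        return [sum(z[0] for z in zs) / len(zs)]

    for th in (0.5, 0.85, 0.99):
        n = [semantic_harq(toy_tx, toy_quality, toy_combine, str, q_th=th)[1] for _ in range(1000)]
        print(th, sum(n) / len(n))  # stricter threshold -> more transmissions on average
```

A threshold of 0 always yields a single transmission, and a threshold above every attainable score always exhausts `k_max`; a threshold sweep moves each metric somewhere between these two limits.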
With $q_{\mathrm{th}} = 0.85$, VAE uncertainty, self-consistency, and latent distance trigger the maximum number of retransmissions at all SNRs; decoder entropy never triggers a retransmission; and text BLEU and text similarity fall in between, responding to the SNR variation. However, comparing estimators at a single threshold is inherently limited: the six metrics have different physical interpretations, so a fixed $q_{\mathrm{th}}$ does not impose an equally stringent criterion on each. For a fairer assessment, the second stage sweeps the quality threshold over $q_{\mathrm{th}} \in \{0.1, 0.2, \ldots, 0.9\}$ at a representative mid-range operating point of SNR = 0 dB (Fig. 4(b)). This threshold sensitivity analysis reveals differences among the metrics in dynamic range and elasticity. Latent distance and text similarity behave rigidly, sitting at opposite extremes of sensitivity, whereas the remaining four metrics respond gradually and smoothly to the threshold variation. In particular, the self-consistency metric exhibits the widest dynamic range with smooth elasticity, making it the most adaptable to different operating points and requirements.

IV. CONCLUSION

We proposed a HARQ framework for generative semantic text communication, in which a Transformer-VAE codec operates as a lightweight overlay on the conventional protocol stack. The stochastic encoder inherently provides diverse latent representations across retransmissions, enabling IK from a single model without dedicated protocol design, while the consistent latent space permits fixed-dimensional combining with an unchanged decoder regardless of the number of retransmissions. We systematically benchmarked four quality-aware combining strategies and six semantic quality estimation methods under mixed semantic distortion.
The evaluation demonstrated that the proposed framework offers a clear IK gain over the chase combining baseline, especially when Weighted-Average or MRC-Inspired combining is paired with self-consistency as the quality metric. Future work includes evaluation under more severe codec misalignment scenarios, extension of the framework to multi-modal semantic communication, and joint HARQ optimization across the semantic and PHY layers.

REFERENCES

[1] L. X. Nguyen, A. D. Raha et al., "A contemporary survey on semantic communications: Theory of mind, generative AI, and deep joint source-channel coding," IEEE Commun. Surv. Tutor., vol. 28, pp. 2377–2417, 2026.
[2] H. Xie, Z. Qin et al., "Deep learning enabled semantic communication systems," IEEE Trans. Signal Process., vol. 69, pp. 2663–2678, 2021.
[3] E. Bourtsoulatze, D. B. Kurka, and D. Gündüz, "Deep joint source-channel coding for wireless image transmission," IEEE Trans. Cogn. Commun. Netw., vol. 5, no. 3, pp. 567–579, 2019.
[4] P. Jiang, C.-K. Wen et al., "Deep source-channel coding for sentence semantic transmission with HARQ," IEEE Trans. Commun., vol. 70, no. 8, pp. 5225–5240, 2022.
[5] Q. Zhou, R. Li et al., "Adaptive bit rate control in semantic communication with incremental knowledge-based HARQ," IEEE Open J. Commun. Soc., vol. 3, pp. 1076–1089, 2022.
[6] Y. Zheng, F. Wang et al., "Semantic base enabled image transmission with fine-grained HARQ," IEEE Trans. Wireless Commun., vol. 24, no. 4, pp. 3606–3622, 2025.
[7] G. Zhang, Q. Hu et al., "SCAN: Semantic communication with adaptive channel feedback," IEEE Trans. Cogn. Commun. Netw., vol. 10, no. 5, pp. 1759–1773, 2024.
[8] J. Hu, F. Wang et al., "SemHARQ: Semantic-aware hybrid automatic repeat request for multi-task semantic communications," IEEE Trans. Wireless Commun., vol. 25, pp. 3170–3185, 2026.
[9] M. Wang, J. Li et al., "Spiking semantic communication for feature transmission with HARQ," IEEE Trans. Veh. Technol., vol. 74, no. 6, pp. 10035–10040, 2025.
[10] P. Jiang, C.-K. Wen et al., "Wireless semantic communications for video conferencing," IEEE J. Sel. Areas Commun., vol. 41, no. 1, pp. 230–244, 2023.
[11] Y. Sheng, L. Liang et al., "Semantic communication for cooperative perception using HARQ," IEEE Trans. Cogn. Commun. Netw., vol. 12, pp. 1139–1154, 2026.
[12] T.-Y. Lin, M. Maire et al., "Microsoft COCO: Common objects in context," in Computer Vision – ECCV 2014. Springer, 2014, pp. 740–755.

Fig. 2: Incremental knowledge gain vs. number of transmission attempts K for aligned and misaligned configurations. (a) Aligned Codec-A/A with AWGN noise at 0 dB. (b) Misaligned Codec-A/B without additive noise. [Panels: BLEU-1 score, BLEU-2 score, and semantic similarity vs. number of transmissions combined (1–5); curves: Chase (Baseline), Weighted Avg., Best Only, Maximal Ratio, Iterative Refine.]

Fig. 3: Comparison of different semantic soft combining methods. [Panels: BLEU-1 score, BLEU-2 score, and semantic similarity vs. semantic SNR (−9 dB to 9 dB); same five curves as Fig. 2.]

Fig. 4: Comparison of different semantic quality metrics. (a) Sensitivity to the semantic SNR at a threshold of 0.85. (b) Sensitivity to the quality threshold at a semantic SNR of 0 dB. [Panels: BLEU-1 score, semantic similarity, and average number of semantic transmissions vs. semantic SNR (−9 dB to 9 dB) in (a) or quality threshold (0.1–0.9) in (b); curves: VAE Uncertainty, Self-Consistency, Decoder Entropy, Latent Distance, Text BLEU, Text Similarity.]
