RAZOR: Ratio-Aware Layer Editing for Targeted Unlearning in Vision Transformers and Diffusion Models

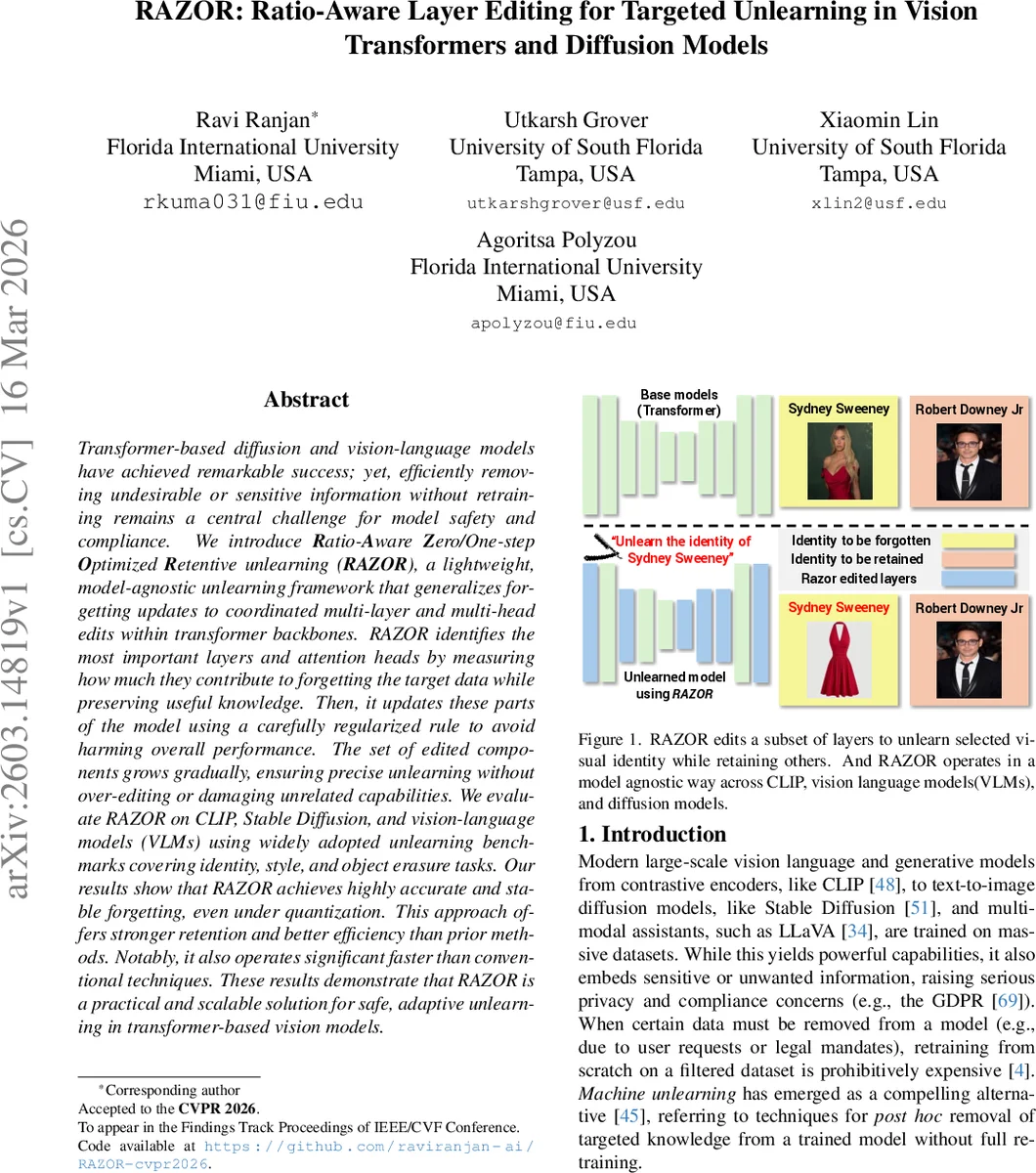

Transformer-based diffusion and vision-language models have achieved remarkable success; yet, efficiently removing undesirable or sensitive information without retraining remains a central challenge for model safety and compliance. We introduce Ratio-Aware Zero/One-step Optimized Retentive unlearning (RAZOR), a lightweight, model-agnostic unlearning framework that generalizes forgetting updates to coordinated multi-layer and multi-head edits within transformer backbones. RAZOR identifies the most important layers and attention heads by measuring how much they contribute to forgetting the target data while preserving useful knowledge. Then, it updates these parts of the model using a carefully regularized rule to avoid harming overall performance. The set of edited components grows gradually, ensuring precise unlearning without over-editing or damaging unrelated capabilities. We evaluate RAZOR on CLIP, Stable Diffusion, and vision-language models (VLMs) using widely adopted unlearning benchmarks covering identity, style, and object erasure tasks. Our results show that RAZOR achieves highly accurate and stable forgetting, even under quantization. This approach offers stronger retention and better efficiency than prior methods. Notably, it also operates significantly faster than conventional techniques. These results demonstrate that RAZOR is a practical and scalable solution for safe, adaptive unlearning in transformer-based vision models.

💡 Research Summary

The paper introduces RAZOR (Ratio‑Aware Zero/One‑step Optimized Retentive unlearning), a lightweight, model‑agnostic framework for targeted forgetting in large transformer‑based vision, vision‑language, and diffusion models. The authors observe that existing unlearning approaches either require costly full‑model fine‑tuning, suffer from incomplete forgetting, or degrade unrelated capabilities. To address these issues, RAZOR simultaneously evaluates how each layer or attention head contributes to forgetting the target data (the “forget” gradient) and how it preserves the knowledge that must be retained (the “retain” gradient).

For a given forget set D_f and retain set D_r, the method computes one‑shot gradients g_f^l = ∇_{θ^l} L_forget and g_r^l = ∇_{θ^l} L_retain for every layer/head l. A ratio‑aware saliency score is then defined as

ϕ(l) = ‖g_f^l‖₂ / ‖θ^l‖₂ + ε·|1 – cos(g_f^l, g_r^l)|^α,

where α balances magnitude versus orthogonality and ε ensures numerical stability. High ϕ(l) indicates strong forgetting influence with minimal interference to retained knowledge. Layers whose score exceeds a threshold τ form the edit set K.
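As a minimal sketch of the scoring and selection step, the snippet below evaluates ϕ(l) exactly as the formula is printed above and keeps the layers whose score exceeds τ. Gradients and parameters are assumed to be already flattened into plain Python lists; the function names (`saliency`, `select_edit_set`) and the zero-gradient convention in `cosine` are illustrative assumptions, not part of the paper.

```python
import math

def l2_norm(v):
    """Euclidean norm of a flat list of floats."""
    return math.sqrt(sum(x * x for x in v))

def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is zero (assumed convention)."""
    nu, nv = l2_norm(u), l2_norm(v)
    if nu == 0.0 or nv == 0.0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)

def saliency(g_f, g_r, theta, alpha=1.0, eps=1e-8):
    """phi(l) = ||g_f|| / ||theta|| + eps * |1 - cos(g_f, g_r)|**alpha, as printed.

    Assumes theta is non-zero; alpha and eps defaults are placeholders.
    """
    ratio = l2_norm(g_f) / l2_norm(theta)
    return ratio + eps * abs(1.0 - cosine(g_f, g_r)) ** alpha

def select_edit_set(layers, tau, alpha=1.0, eps=1e-8):
    """layers maps a layer/head name to (g_f, g_r, theta).

    Returns (K, scores), where K is the sorted list of names with phi > tau.
    """
    scores = {
        name: saliency(g_f, g_r, theta, alpha=alpha, eps=eps)
        for name, (g_f, g_r, theta) in layers.items()
    }
    edit_set = sorted(name for name, s in scores.items() if s > tau)
    return edit_set, scores
```

In practice the per-layer gradients would come from a single backward pass on each of L_forget and L_retain; how the threshold τ is chosen is not specified in this summary.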

RAZOR’s loss combines three components: (1) a retain loss (symmetric InfoNCE for CLIP, denoising loss for diffusion, or analogous contrastive loss for VLMs) that preserves performance on D_r; (2) a forget loss (cosine‑embedding “push‑away” loss 1‑⟨v_i, t_i⟩) that drives apart embeddings of forgotten image‑text pairs; and (3) a mismatch regularizer that penalizes deviation from the original model’s similarity scores, limiting drift and ensuring stability under quantization. The overall objective is

L_RAZOR = L_retain + λ_f ρ L_forget + λ_m L_mismatch,

with ρ controlling the forget/retain trade‑off.
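The way the three terms combine can be sketched as follows. The forget term is computed as written (the mean of 1‑⟨v_i, t_i⟩ over normalized image and text embeddings), and the retain loss is passed in as a precomputed scalar. The mean-squared form of `mismatch_loss` is an assumption for illustration only, since the summary describes the regularizer but does not give its formula; all function names are hypothetical.

```python
import math

def _normalize(v):
    """Scale a non-zero vector to unit L2 norm."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def cosine_sim(u, v):
    """Inner product of the unit-normalized vectors u and v."""
    u, v = _normalize(u), _normalize(v)
    return sum(a * b for a, b in zip(u, v))

def forget_loss(image_embs, text_embs):
    """Mean of 1 - <v_i, t_i> over the paired forget-set embeddings."""
    terms = [1.0 - cosine_sim(v, t) for v, t in zip(image_embs, text_embs)]
    return sum(terms) / len(terms)

def mismatch_loss(new_sims, orig_sims):
    """Assumed form: mean squared deviation from the original model's similarities."""
    return sum((a - b) ** 2 for a, b in zip(new_sims, orig_sims)) / len(new_sims)

def razor_objective(l_retain, l_forget, l_mismatch, lambda_f, rho, lambda_m):
    """L_RAZOR = L_retain + lambda_f * rho * L_forget + lambda_m * L_mismatch."""
    return l_retain + lambda_f * rho * l_forget + lambda_m * l_mismatch
```

Because λ_f and ρ enter only as a product, raising either one increases the weight of the forget term relative to the retain and mismatch terms.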

Updates are performed with a blended gradient:

Δθ^l = –η_l
