Descent-Guided Policy Gradient for Scalable Cooperative Multi-Agent Learning

Scaling cooperative multi-agent reinforcement learning (MARL) is fundamentally limited by cross-agent noise. When agents share a common reward, the actions of all $N$ agents jointly determine each agent's learning signal, so cross-agent noise grows w…

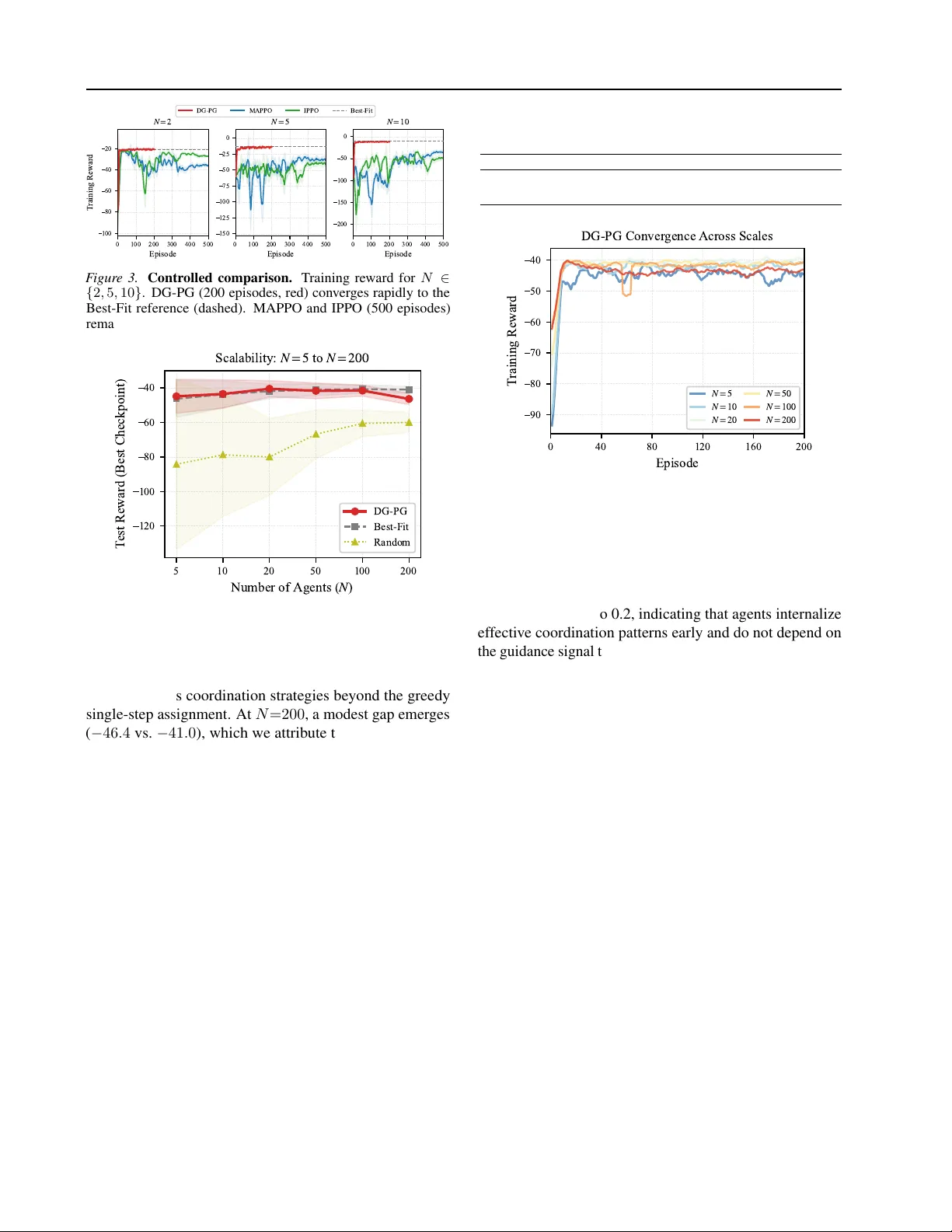

Authors: Shan Yang, Yang Liu