A Deconfounding Framework for Human Behavior Prediction: Enhancing Robotic Systems in Dynamic Environments

Accurate prediction of human behavior is crucial for effective human-robot interaction (HRI) systems, especially in dynamic environments where real-time decisions are essential. This paper addresses the challenge of forecasting future human behavior using multivariate time series data from wearable sensors, which capture various aspects of human movement. The presence of hidden confounding factors in this data often leads to biased predictions, limiting the reliability of traditional models. To overcome this, we propose a robust predictive model that integrates deconfounding techniques with advanced time series prediction methods, enhancing the model’s ability to isolate true causal relationships and improve prediction accuracy. Evaluation on real-world datasets demonstrates that our approach significantly outperforms traditional methods, providing a more reliable foundation for responsive and adaptive HRI systems.

💡 Research Summary

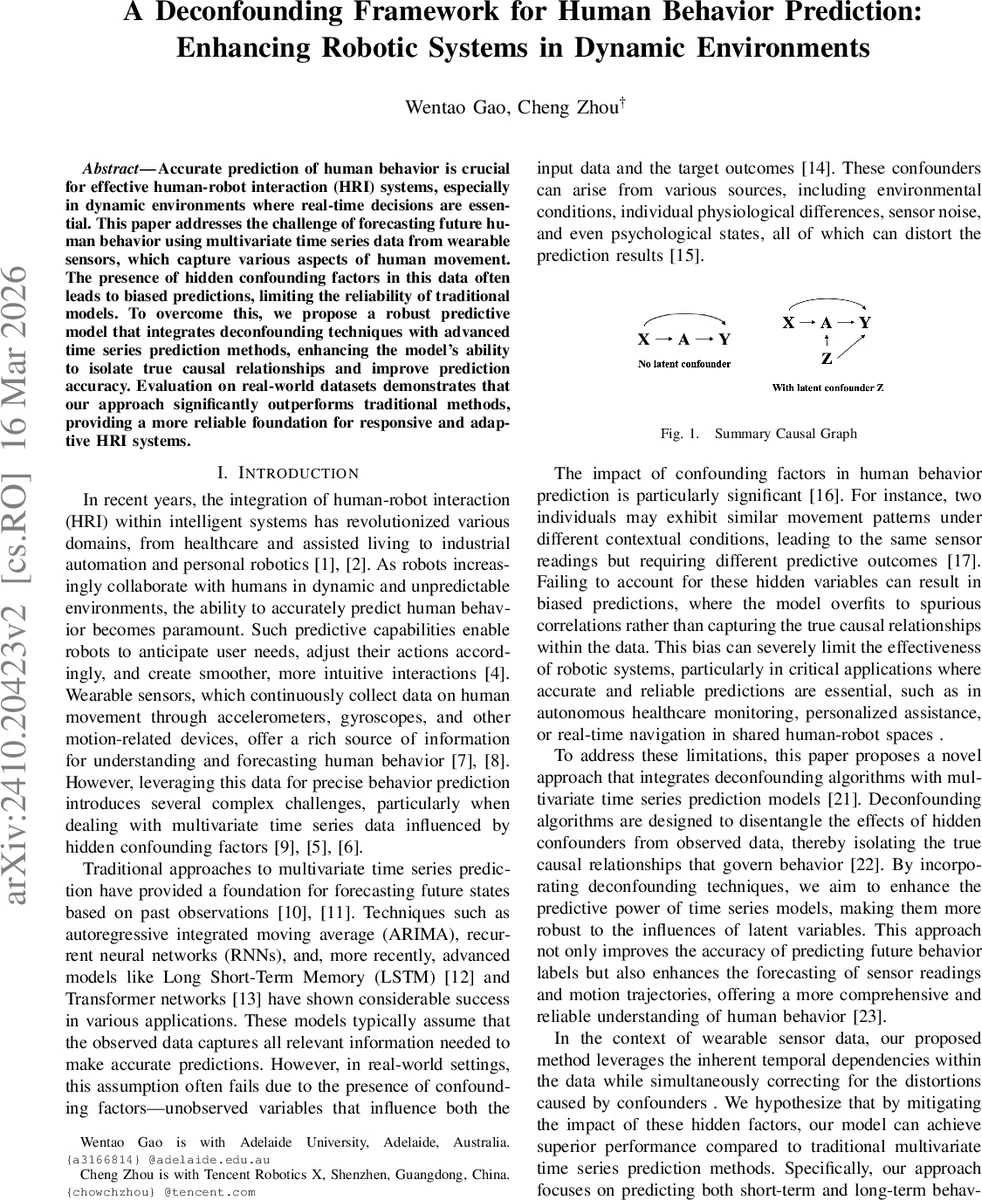

The paper tackles a fundamental problem in human‑robot interaction: predicting future human behavior from wearable‑sensor time‑series data in dynamic, real‑time environments. While existing multivariate time‑series models such as ARIMA, LSTM, and recent Transformer‑based approaches have achieved notable success, they typically assume that all relevant information is captured in the observed variables. In practice, hidden confounding factors—environmental conditions, physiological states, sensor noise, and psychological influences—simultaneously affect the sensor readings (Xₜ), the observed actions or treatments (Aₜ), and the future outcomes (Yₜ₊ₕ). Ignoring these latent variables leads to biased forecasts and limits the reliability of robotic decision‑making.

To address this gap, the authors propose the Deconfounding Action Factor Model (DAFM), a two‑stage framework that explicitly infers hidden confounders (denoted Zₜ) and incorporates them into state‑of‑the‑art time‑series predictors. In the first stage, a recurrent neural network (RNN) processes the historical context Ĥₜ₋₁ = {Āₜ₋₁, X̄ₜ₋₁, Z̄ₜ₋₁} and outputs a latent representation Zₜ = g(Ĥₜ₋₁). This RNN captures long‑range temporal dependencies, allowing Zₜ to encode the cumulative effect of unobserved influences. In the second stage, the inferred Zₜ is concatenated with the current action Aₜ (and optionally Xₜ) and fed into advanced forecasting models: iTransformer, TimesNet, and Non‑stationary Transformer. These models are chosen for their ability to handle irregular sampling, multi‑scale patterns, and non‑stationarity—characteristics common in human motion data.

Training minimizes a composite loss

L = MSE(Yₜ₊ₕ, Ŷₜ₊ₕ) + λ ∑ₜ‖Zₜ − g(Ĥₜ₋₁)‖²,

where the first term penalizes prediction error and the second regularizes the latent variables to stay faithful to the historical context.

A key theoretical contribution is the introduction of Sequential Kallenberg Construction. The authors prove that if, at each time step, the joint distribution of the observed actions can be expressed as Aₜⱼ = fₜⱼ(Zₜ, Xₜ, Uₜⱼ) with Uₜⱼ ∼ Uniform

Comments & Academic Discussion

Loading comments...

Leave a Comment