PulmoVec: A Two-Stage Stacking Meta-Learning Architecture Built on the HeAR Foundation Model for Multi-Task Classification of Pediatric Respiratory Sounds

Background: Respiratory diseases are a leading cause of childhood morbidity and mortality, yet lung auscultation remains subjective and limited by inter-listener variability, particularly in pediatric populations. Existing AI approaches are further c…

Authors: Izzet Turkalp Akbasli, Oguzhan Serin

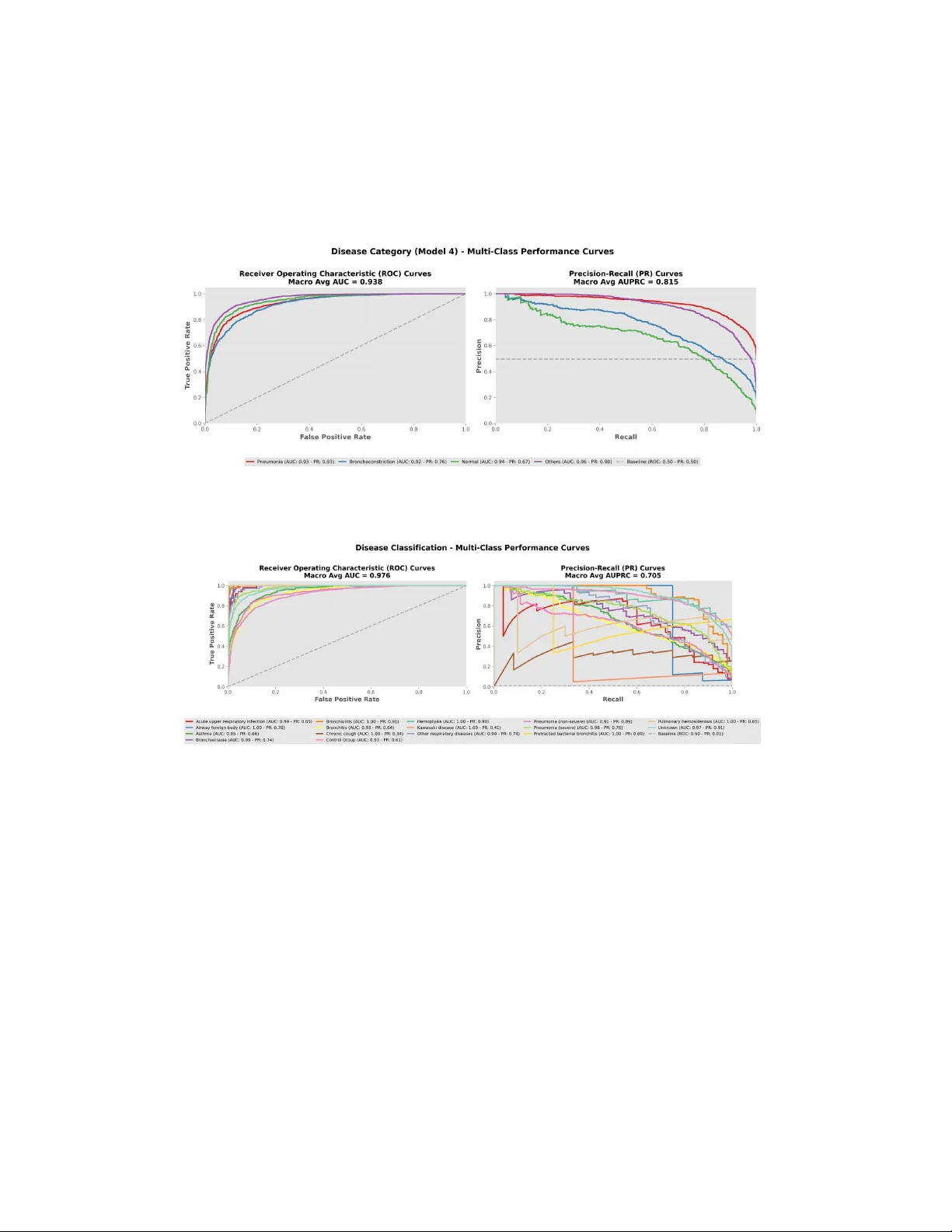

Izzet Turkalp Akbasli 1,* and Oguzhan Serin 2,*

1 Department of Pediatric Intensive Care Medicine, Life Support Center, Hacettepe University, Ankara, Türkiye

2 Department of Pediatric Emergency Medicine, Life Support Center, Hacettepe University Faculty of Medicine, Ankara, Türkiye

* Indicates co-first authors

Corresponding Author: Izzet Turkalp Akbasli, MD, Department of Pediatric Emergency Medicine, Hacettepe University Faculty of Medicine, 06100 Sihhiye, Ankara, Turkey. Email: iakbasli@hacettepe.edu.tr. Telephone: +90 312 305 1350. ORCID: 0000-0003-3055-7355

Running Head: PulmoVec for Pediatric Respiratory Sounds

Keywords: pediatric respiratory sounds, lung sound classification, foundation audio model, HeAR, transfer learning, stacking ensemble, LightGBM, digital auscultation, SPRSound

Abstract

Background: Respiratory diseases are a leading cause of childhood morbidity and mortality, yet lung auscultation remains subjective and limited by inter-listener variability, particularly in pediatric populations. Existing AI approaches are further constrained by small datasets and single-task designs. We developed PulmoVec, a multi-task framework built on the Health Acoustic Representations (HeAR) foundation model for classification of pediatric respiratory sounds.

Methods: In this retrospective analysis of the SPRSound database, 24,808 event-level annotated segments from 1,652 pediatric patients were analyzed. Three task-specific classifiers were trained for screening, sound-pattern recognition, and disease-group prediction.
Their out-of-fold probability outputs were combined with demographic metadata in a LightGBM stacking meta-model, and event-level predictions were aggregated to the patient level using ensemble voting.

Results: At the event level, the screening model achieved an ROC-AUC of 0.96 (95% CI, 0.95–0.97), the sound-pattern recognition model a macro ROC-AUC of 0.96 (95% CI, 0.96–0.97), and the disease-group prediction model a macro ROC-AUC of 0.94 (95% CI, 0.93–0.94). At the patient level, disease-group classification yielded an accuracy of 0.74 (95% CI, 0.71–0.77), a weighted F1-score of 0.73, and a macro ROC-AUC of 0.91 (95% CI, 0.90–0.93). Stacking improved performance across all tasks compared with base models alone.

Conclusions: PulmoVec links event-level acoustic phenotyping with patient-level clinical classification, supporting the potential of foundation-model-based digital auscultation in pediatric respiratory medicine. Multi-center external validation across devices and real-world conditions remains essential.

INTRODUCTION

Background and Motivation

Respiratory diseases are among the leading causes of morbidity and mortality in children worldwide, with lower respiratory tract infections continuing to represent a major cause of hospitalization and death, particularly in low- and middle-income countries [1, 2]. Pneumonia, in particular, remains the major contributor to childhood mortality, accounting for 14% of all deaths in children under five years of age and causing 740,180 deaths in 2019; timely and accurate diagnosis has been shown to reduce mortality by up to 28% [1].
To support early and accurate diagnostic decision-making in children with respiratory disease, clinicians rely on lung auscultation, a clinical technique that involves identifying pathological patterns in respiratory sounds using a stethoscope and that has remained a fundamental skill for nearly two centuries [3, 4]. Despite its longstanding clinical use, lung auscultation remains inherently subjective, and substantial variability in the interpretation of respiratory sounds can occur even among trained clinicians [5]. These difficulties are further amplified in pediatric populations, where patient cooperation is limited and respiratory physiology differs from that of adults [6, 7]. Recent advances in digital stethoscopes and smartphone-based recording technologies now enable the acquisition, storage, and computational analysis of respiratory sounds, creating new opportunities for objective evaluation and automated diagnostic support [3, 8, 9].

Problem Statement

The existing pediatric respiratory sound classification literature is characterized by notable limitations. Most studies rely on small, single-center datasets, and heterogeneity across recording devices, annotation protocols, and label taxonomies limits cross-study comparability [10, 11, 12]. Most current architectures employ single-task classifiers, which may not capture the full clinical complexity of respiratory sounds in children [3, 11]. In this context, the central research question is as follows: can a foundation audio model trained through large-scale self-supervised learning recognize acoustic patterns in pediatric respiratory sounds in a clinically meaningful way, and can a multi-task stacking architecture improve upon the performance of existing single-task approaches?

Research Gap and Objectives

The recent emergence of self-supervised foundation audio models for health acoustics has opened new possibilities.
One of the most influential is Health Acoustic Representations (HeAR), a large-scale foundation encoder trained on 313.3 million audio clips from roughly 3 billion YouTube videos, with demonstrated strength across multiple health acoustics tasks [13]. Despite this progress, most applications of HeAR rely on simple single-task classifiers, and research on multi-task stacking architectures for respiratory sound classification remains limited [14, 15]. Recent reviews further highlight persistent gaps in explainability, external validation, and clinically meaningful label design [3, 10, 11, 16].

To address these limitations, this study introduces PulmoVec, a two-stage architecture that leverages HeAR as a shared acoustic backbone and integrates three complementary task-specific base classifiers with a LightGBM stacking meta-model that incorporates demographic metadata. The system is designed to mirror a stepwise clinical decision-support workflow: first distinguishing normal from abnormal sounds, then identifying the specific sound pattern, followed by predicting the disease group associated with pathological patterns, and finally integrating acoustic outputs with clinical variables such as age, sex, and recording location to generate more clinically meaningful predictions.

METHODS

Study Design and Data Source

This study is based on a retrospective analysis of the publicly available SPRSound pediatric respiratory sound database, collected using an electronic stethoscope at Shanghai Children's Medical Center (SCMC) [17]. SPRSound provides both record-level and event-level annotations (Normal, Fine Crackle, Coarse Crackle, Wheeze, Wheeze&Crackle, Rhonchi, Stridor) with millisecond-precision timestamps, generated by 11 experienced pediatric physicians [17].
The database encompasses 16 distinct disease diagnoses; the detailed distribution of these diagnoses is provided in the supplementary material (Supplementary Table S1). Because this study used a publicly available, pre-anonymized dataset and involved no direct participant contact or access to identifiable private information, additional institutional review board approval and individual informed consent were not required for the present secondary analysis. Study design, analysis, and reporting were conducted in accordance with TRIPOD+AI recommendations, and the completed TRIPOD+AI checklist is provided in Supplementary Appendix 1.

Data Curation

Data curation was performed in three stages: (1) removal of potential duplicate recordings, (2) exclusion of recordings labeled as "Poor Quality" at the record level, and (3) exclusion of recordings lacking event-level annotations. There were no missing data in the final analytic dataset for the variables used in model development, including age, sex, recording location, and event-level labels; accordingly, no imputation procedures were performed. Segments labeled as "No Event" in event-level annotations were included in the Normal class, consistent with their clinical interpretation. The current expanded version of the SPRSound database was used.

Audio Preprocessing and Feature Extraction

All signals were resampled to 16 kHz mono and standardized to 2.0-second windows (zero-padded if shorter, center-cropped if longer). A 10% overlap margin was applied around event boundaries. Acoustic feature extraction was performed using the HeAR foundation audio model [13], producing a 512-dimensional embedding vector for each clip.

Base Classifier Architecture and Label Design

Three task-specific base classifiers were constructed on the shared HeAR embedding space.
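Because all three classifiers consume embeddings of fixed-length clips, the windowing rule from the preprocessing step is worth making concrete. The sketch below is a minimal illustration and not the authors' code: the 16 kHz rate, the 2.0-second window, zero-padding, and center-cropping come from the text, whereas the function name `fit_to_window` and the choice to append zeros at the end of a short clip are assumptions (the text does not say where the padding is placed).

```python
SAMPLE_RATE_HZ = 16_000   # stated in the text
WINDOW_SECONDS = 2.0      # stated in the text
WINDOW_SAMPLES = int(SAMPLE_RATE_HZ * WINDOW_SECONDS)  # 32,000 samples


def fit_to_window(samples, target_len=WINDOW_SAMPLES):
    """Return a list of exactly `target_len` samples (hypothetical helper)."""
    n = len(samples)
    if n >= target_len:
        # Center crop: drop an equal number of samples from both ends.
        start = (n - target_len) // 2
        return list(samples[start:start + target_len])
    # Assumption: zeros are appended after the signal (trailing padding).
    return list(samples) + [0.0] * (target_len - n)
```

Under these assumptions a 1.2-second event and a 3.5-second event both become 32,000-sample clips before embedding extraction.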
The classification head architecture was identical across all models: a fully connected layer (512 to 256 neurons), nonlinear activation, dropout (p = 0.3), and an output layer. The Sound Pattern Recognition Model classified events as Normal, Crackles (combining Fine and Coarse Crackle), or Rhonchi (wheeze and rhonchi-like continuous patterns). The Screening Model performed a Normal/Abnormal distinction. The Disease Group Prediction Model mapped the 16 disease labels in SPRSound to four clinically meaningful groups: Pneumonia (severe and non-severe), Bronchial diseases (asthma, bronchitis, bronchiolitis, bronchiectasis, and protracted bacterial bronchitis), Normal (control group), and Others (upper respiratory tract infection, hemoptysis, pulmonary hemosiderosis, chronic cough, airway foreign body, Kawasaki disease, and other respiratory diseases). The complete label mapping from original annotations to study targets is provided in Supplementary Table S2.

Two-Stage Fine-Tuning Protocol

Training followed a two-stage protocol. In the first stage, the HeAR encoder was frozen and only the classification head was optimized for 10 epochs at a learning rate of 1 × 10⁻⁴. In the second stage, the encoder was unfrozen and the entire model was fine-tuned for 40 epochs at a learning rate of 5 × 10⁻⁷. Class imbalance was addressed through class weighting in the loss function, and early stopping based on validation performance was applied.

Stacking Meta-Learning

After training the base models, an out-of-fold approach was used to prevent data leakage from base model predictions into the meta-model training data: probability outputs for each training example were generated using a fold in which that example was not part of the training set.
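The out-of-fold idea can be sketched in a few lines. This is an illustration under stated assumptions, not the study's pipeline: the number of folds, the index-based fold assignment, and the helper names (`out_of_fold_predictions`, `fit_prior`, `predict_prior`) are all hypothetical, and the stand-in "model" is a simple class-prior estimator rather than a fine-tuned HeAR classifier. The text does not state how folds were formed; grouping folds by patient would be a natural choice, but that is also an assumption.

```python
def out_of_fold_predictions(features, labels, fit, predict_proba, n_folds=5):
    """Predict each example with a model that never saw it during fitting."""
    n = len(features)
    oof = [None] * n
    for fold in range(n_folds):
        # Assumption: folds are assigned by index modulo n_folds.
        held_out = [i for i in range(n) if i % n_folds == fold]
        train = [i for i in range(n) if i % n_folds != fold]
        model = fit([features[i] for i in train], [labels[i] for i in train])
        for i in held_out:
            oof[i] = predict_proba(model, features[i])
    return oof


def fit_prior(train_features, train_labels, n_classes=2):
    """Toy stand-in for a base classifier: learns class frequencies only."""
    counts = [0] * n_classes
    for y in train_labels:
        counts[y] += 1
    total = sum(counts)
    return [c / total for c in counts]


def predict_prior(model, feature):
    """Toy prediction: returns the learned class frequencies."""
    return list(model)
```

The resulting `oof` rows play the role of the probability features passed to the meta-model; because each row comes from a model fitted without that example, the example's own label cannot leak into its features.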
A total of 9 probability-based features were obtained from the three base models (3 from the Sound Pattern Recognition Model, 2 from the Screening Model, and 4 from the Disease Group Prediction Model). These probability features were combined with age, sex, and recording location to form an 11-dimensional meta-feature matrix.

Three clinical classification targets are presented in this study: Screening (Normal/Abnormal), Sound Pattern (Normal/Crackles/Rhonchi), and Disease Group (Pneumonia/Bronchial Diseases/Normal/Others). LightGBM hyperparameters were independently optimized for each target using Optuna-based Bayesian search. Training hyperparameters and LightGBM optimization search ranges are detailed in Supplementary Table S4. Results for additional classification targets (including a 7-class event type and a 16-disease detailed model) are provided in the supplementary material (Supplementary Table S3; Supplementary Figures S2 and S3).

Patient-Level Aggregation: Ensemble Voting

Since clinical decision-making occurs at the patient level, an ensemble voting method combining three complementary strategies was developed to aggregate event-level predictions to the patient level: simple soft voting (weight: 30%), confidence-weighted voting (weight: 40%), and majority voting (weight: 30%). Majority voting was activated only when at least 60% of events agreed on a class and at least 50% showed high confidence (> 0.7); otherwise, the system fell back to soft voting. The final patient-level prediction was calculated as a weighted linear combination of the three strategies. The relative weights were determined empirically using the validation set through a predefined search over candidate combinations, prioritizing stable patient-level decisions and balanced class performance rather than overall accuracy alone.
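The voting scheme described above can be sketched as follows. The 30/40/30 weights, the 60% agreement rule, the 50% high-confidence rule, and the 0.7 threshold are taken from the text; everything else is an assumption made for illustration, including the function name `patient_level_vote`, the use of each event's maximum probability as its confidence weight, the reading of "at least 50% showed high confidence" as a fraction of all of a patient's events, and the representation of the majority vote as per-class vote shares.

```python
def patient_level_vote(event_probs, w_soft=0.30, w_conf=0.40, w_major=0.30,
                       agree_min=0.60, high_conf=0.70, high_conf_min=0.50):
    """Aggregate per-event class probabilities into one patient-level decision."""
    n_events = len(event_probs)
    n_classes = len(event_probs[0])

    # 1) Simple soft voting: unweighted mean of the event probabilities.
    soft = [sum(p[c] for p in event_probs) / n_events for c in range(n_classes)]

    # 2) Confidence-weighted voting (assumption: weight = max probability).
    conf = [max(p) for p in event_probs]
    conf_total = sum(conf)
    weighted = [sum(w * p[c] for w, p in zip(conf, event_probs)) / conf_total
                for c in range(n_classes)]

    # 3) Majority voting, expressed as the share of events voting for each class.
    votes = [0] * n_classes
    for p in event_probs:
        votes[p.index(max(p))] += 1
    shares = [v / n_events for v in votes]
    frac_high = sum(1 for c in conf if c > high_conf) / n_events
    majority_active = max(shares) >= agree_min and frac_high >= high_conf_min
    # Fall back to soft voting when the activation conditions are not met.
    majority = shares if majority_active else soft

    combined = [w_soft * s + w_conf * w + w_major * m
                for s, w, m in zip(soft, weighted, majority)]
    return combined.index(max(combined)), combined
```

Because each of the three components sums to one and the weights sum to one, the combined scores can still be read as a probability-like vector over classes.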
The weights and decision thresholds in this ensemble scheme were not chosen arbitrarily but were selected by jointly evaluating performance and decision stability on the validation split. Different weight combinations were tested in a predefined search, with selection based not only on overall accuracy but also on maintaining balance across classes, reducing excessive volatility in low-sample classes, and preventing a single high-probability segment from disproportionately driving the patient-level decision. Similarly, the > 0.7 probability threshold was set to ensure that only consistently dominant patterns generated patient-level decisions. These parameters should be viewed not as universal optima but as empirical design parameters that yielded the most balanced validation performance under the noise structure and class distribution of the current dataset.

Codebase, Model Evaluation, and Accessibility

Performance was evaluated using accuracy, weighted-F1, macro-F1, per-class precision, recall, specificity, NPV, ROC-AUC, and Precision-Recall analysis. In multi-class tasks, ROC-AUC values were computed using the one-vs-rest approach and reported as macro averages. The overall adequacy of probabilistic outputs was summarized using the Brier score. 95% confidence intervals were computed using nonparametric bootstrap with 1,000 iterations at the patient level in the test set. All analyses were conducted using Python 3.11.2, with pandas, NumPy, PyTorch, scikit-learn, and SciPy libraries. Processed data, code, and explanatory documentation are provided in a publicly available GitHub repository to support reproducibility (https://github.com/turkalpmd/PulmoVec).

RESULTS

Cohort Characteristics

The final analysis cohort after curation consisted of 1,652 unique patients and 24,808 event-level labeled sound segments.
Stratified patient-level splitting allocated 1,321 patients (20,567 events) to the training set and 331 patients (4,241 events) to the test set. No statistically significant differences were observed between the two groups in terms of age (p = 0.066), disease group distribution (p = 1.000), sound pattern distribution (p = 0.181), or recording location (p = 0.307) (Table 1). The cohort comprised 54.7% pneumonia, 21.8% bronchial diseases, 9.7% control group, and 13.9% other respiratory diseases (see Supplementary Table S1 for the detailed 16-disease distribution).

Table 1: Cohort descriptive statistics. Categorical variables: chi-square test; continuous variables: Mann-Whitney U test. *No Event segments were included in the Normal class.

| Variable | All Cohort (n) | Train, n (%) | Test, n (%) | p value |
|---|---|---|---|---|
| Total patients | 1,652 | 1,321 | 331 | - |
| Sex | | | | 0.105 |
| Male | 850 | 666 (50.4) | 184 (55.6) | |
| Female | 802 | 655 (49.6) | 147 (44.4) | |
| Age (years), median (IQR) | - | 5.0 (3.4–7.4) | 4.5 (3.3–7.0) | 0.066 |
| Disease group (patient-level) | | | | 1.000 |
| Pneumonia | 904 | 723 (54.7) | 181 (54.7) | |
| Bronchial diseases | 359 | 287 (21.7) | 72 (21.8) | |
| Normal | 159 | 127 (9.6) | 32 (9.7) | |
| Others | 230 | 184 (13.9) | 46 (13.9) | |
| Total events | 24,808 | 20,567 | 4,241 | - |
| Event type distribution | | | | 0.181 |
| Normal | 18,772 | 15,623 (76.0) | 3,149 (74.3) | |
| Fine Crackle | 3,530 | 2,909 (14.1) | 621 (14.6) | |
| Coarse Crackle | 177 | 161 (0.8) | 16 (0.4) | |
| Wheeze | 1,505 | 1,254 (6.1) | 251 (5.9) | |
| Wheeze+Crackle | 303 | 229 (1.1) | 74 (1.7) | |
| Rhonchi | 217 | 201 (1.0) | 16 (0.4) | |
| Stridor | 74 | 18 (0.1) | 56 (1.3) | |
| No Event* | 230 | 172 (0.8) | 58 (1.4) | |
| Sound pattern distribution | | | | 0.181 |
| Normal | 19,002 | 15,795 (76.8) | 3,207 (75.6) | |
| Crackles | 4,010 | 3,299 (16.0) | 711 (16.8) | |
| Rhonchi | 1,796 | 1,473 (7.2) | 323 (7.6) | |
| Recording location (p1–p4) | | | | 0.307 |
| p1 | 6,243 | 5,162 (25.1) | 1,081 (25.5) | |
| p2 | 6,555 | 5,400 (26.3) | 1,155 (27.2) | |
| p3 | 5,765 | 4,814 (23.4) | 951 (22.4) | |
| p4 | 6,038 | 5,030 (24.5) | 1,008 (23.8) | |

Event-Level Classification

When comparing individual HeAR base model outputs with the final LightGBM
stacking meta-model outputs at the event level, performance gains of 9 to 12 percentage points were observed across all tasks. For the first two event-level acoustic segment classification models, performance metrics were as follows: the Screening Model achieved an accuracy of 0.92 (95% CI, 0.92 to 0.93), weighted-F1 of 0.92, and ROC-AUC of 0.96 (95% CI, 0.95 to 0.97), with a Brier score of 0.12 (95% CI, 0.11 to 0.13). The Sound Pattern Recognition Model achieved an accuracy of 0.91 (95% CI, 0.90 to 0.92), weighted-F1 of 0.91, and macro ROC-AUC of 0.96 (95% CI, 0.96 to 0.97), with a Brier score of 0.14 (95% CI, 0.12 to 0.15). Per-class performance metrics for the Sound Pattern Recognition Model are presented in Table 2.

Table 2: Sound Pattern Recognition Model per-class performance metrics.

| Class | ROC-AUC (OvR) | F1-Score | Recall (Sensitivity) | Specificity | NPV | Precision (PPV) |
|---|---|---|---|---|---|---|
| Normal (n = 3,743) | 0.96 [0.95–0.96] | 0.95 [0.94–0.95] | 0.97 [0.96–0.97] | 0.76 [0.74–0.79] | 0.88 [0.86–0.89] | 0.93 [0.92–0.94] |
| Crackles (n = 813) | 0.95 [0.94–0.96] | 0.74 [0.72–0.77] | 0.68 [0.65–0.72] | 0.97 [0.96–0.97] | 0.94 [0.93–0.95] | 0.82 [0.79–0.84] |
| Rhonchi (n = 345) | 0.98 [0.97–0.99] | 0.86 [0.83–0.88] | 0.83 [0.80–0.87] | 0.99 [0.99–0.99] | 0.99 [0.98–0.99] | 0.88 [0.84–0.91] |

Values are reported with 95% confidence intervals. ROC-AUC = area under the receiver operating characteristic curve; F1-score = harmonic mean of precision and recall; Recall = sensitivity; Specificity = true negative rate; PPV = positive predictive value; NPV = negative predictive value.

Figure 1: Qualitative performance example for a segment annotated as Crackles: Example qualitative prediction for a respiratory sound segment labeled as crackles.
The top panel shows the raw audio waveform, the middle panel displays the corresponding Mel-spectrogram representation, and the bottom panel presents the model's predicted class probabilities for Normal, Crackles, and Rhonchi. The model correctly identifies the segment as crackles with high confidence (0.859).

The Disease Group Prediction Model achieved an accuracy of 0.80 (95% CI, 0.78 to 0.81), weighted-F1 of 0.79, and macro ROC-AUC of 0.94 (95% CI, 0.93 to 0.94), with a Brier score of 0.29 (95% CI, 0.28 to 0.31). Per-class results are shown in Table 3. Event-level confusion matrices for all classification tasks are provided in Supplementary Figure S2.

Table 3: Disease Group Prediction Model event-level per-class performance metrics.

| Class | ROC-AUC (OvR) | F1-Score | Recall (Sensitivity) | Specificity | NPV | Precision (PPV) |
|---|---|---|---|---|---|---|
| Pneumonia (n = 2,436) | 0.93 [0.92–0.94] | 0.85 [0.84–0.86] | 0.89 [0.88–0.90] | 0.81 [0.79–0.82] | 0.88 [0.87–0.89] | 0.82 [0.81–0.84] |
| Bronchial diseases (n = 920) | 0.92 [0.91–0.93] | 0.68 [0.66–0.70] | 0.63 [0.59–0.66] | 0.95 [0.94–0.96] | 0.92 [0.91–0.93] | 0.75 [0.72–0.78] |
| Normal (n = 405) | 0.94 [0.93–0.95] | 0.61 [0.57–0.65] | 0.54 [0.49–0.59] | 0.98 [0.98–0.98] | 0.96 [0.95–0.97] | 0.71 [0.66–0.76] |
| Others (n = 1,140) | 0.96 [0.96–0.97] | 0.81 [0.79–0.82] | 0.82 [0.80–0.84] | 0.94 [0.93–0.94] | 0.95 [0.94–0.95] | 0.79 [0.77–0.82] |

Values are reported with 95% confidence intervals. ROC-AUC = area under the receiver operating characteristic curve; F1-score = harmonic mean of precision and recall; Recall = sensitivity; Specificity = true negative rate; PPV = positive predictive value; NPV = negative predictive value.

Patient-Level Classification

Patient-level Disease Group Prediction Model results obtained through ensemble voting are presented in Table 4. At the patient level, accuracy was 0.74 (0.71 to 0.77), weighted-F1 was 0.73, and macro ROC-AUC was 0.91 (0.90 to 0.93).
Compared to the event level, accuracy decreased by 0.06 while macro ROC-AUC declined from 0.94 to 0.91. Patient-level recall for pneumonia (0.85) remained close to the event-level value (0.89); the Normal class had the lowest recall (0.39), and possible explanations for this finding are addressed in the Discussion. The patient-level Brier score was 0.35 (0.32 to 0.39). Patient-level ROC and Precision-Recall curves are shown in Figure 2.

Table 4: Disease Group Prediction Model patient-level (ensemble voting) per-class performance metrics.

| Class | ROC-AUC (OvR) | F1-Score | Recall (Sensitivity) | Specificity | NPV | Precision (PPV) |
|---|---|---|---|---|---|---|
| Pneumonia (n = 386) | 0.89 [0.86–0.91] | 0.80 [0.78–0.83] | 0.85 [0.81–0.88] | 0.73 [0.68–0.77] | 0.82 [0.77–0.86] | 0.77 [0.73–0.81] |
| Bronchial diseases (n = 165) | 0.91 [0.89–0.93] | 0.64 [0.58–0.71] | 0.58 [0.51–0.66] | 0.94 [0.92–0.96] | 0.89 [0.87–0.91] | 0.72 [0.64–0.79] |
| Normal (n = 67) | 0.92 [0.89–0.95] | 0.48 [0.36–0.60] | 0.39 [0.27–0.51] | 0.98 [0.97–0.99] | 0.94 [0.92–0.96] | 0.65 [0.49–0.81] |
| Others (n = 134) | 0.93 [0.91–0.96] | 0.73 [0.67–0.78] | 0.78 [0.71–0.85] | 0.92 [0.90–0.94] | 0.95 [0.93–0.97] | 0.68 [0.61–0.75] |

Values are reported with 95% confidence intervals. ROC-AUC = area under the receiver operating characteristic curve; F1-score = harmonic mean of precision and recall; Recall = sensitivity; Specificity = true negative rate; PPV = positive predictive value; NPV = negative predictive value.

Figure 2: Disease Group Prediction Model patient-level ROC and Precision-Recall curves (macro AUC = 0.91; macro AUPRC = 0.76).

DISCUSSION

The primary contribution of this study is the presentation of a multi-task, two-stage architecture that links event-level acoustic phenotyping to patient-level clinical decisions in pediatric respiratory sounds.
PulmoVec combined the acoustic representations produced by the HeAR foundation model with base learners that classify from different clinical perspectives and modeled a stepwise decision process by incorporating demographic context through a stacking meta-learner. This approach differs structurally from the dominant single-task CNN paradigm in the literature and offers the ability to integrate clinical information at multiple levels.

Strengths of the Study

PulmoVec is among the pediatric respiratory sound studies that combine the HeAR foundation model with a multi-task stacking architecture. Through probability-based feature fusion, the architecture transfers classification uncertainty to the meta-learner rather than losing it at the hard label level, which may allow for better representation of intermediate states among acoustic patterns. The ensemble voting strategy developed for transitioning from event-level predictions to patient-level decisions provides direct evaluation at the patient level, the natural unit of clinical decision-making. Probability calibration was quantified using Brier scores, and the observed values (0.12 to 0.29 at the event level; 0.35 at the patient level) suggest non-random probabilistic discrimination that varies across tasks, although class-specific reliability analyses and calibration curves would be valuable for a more comprehensive evaluation in the future. The use of the expanded version of SPRSound (1,652 patients, 24,808 events) constitutes one of the largest single-center studies in the pediatric respiratory sound domain.

Comparison with the Literature

The meta-analysis by Park et al., covering 41 pediatric studies, reported pooled sensitivities of 0.90 for wheeze detection and 0.91 for abnormal sound detection [11].
The systematic review by Landry et al., encompassing 81 studies, reported classification accuracies in the 60 to 100% range while identifying substantial heterogeneity [10]. The patient-level macro ROC-AUC of 0.91 for PulmoVec's Disease Group Prediction Model is broadly consistent with these meta-analytic estimates. The DeepBreath study reported an internal validation AUROC of 0.93 in 572 pediatric patients across five countries, but this value declined to 0.74 to 0.89 during external validation; this decline is a notable example of why the lack of external validation in the present study matters. In the multi-center wireless stethoscope trial, sensitivity and specificity exceeded 92% in subject-dependent scenarios [18, 19].

The recent pediatric lung sound AI literature shows that while performance metrics are promising, there is marked heterogeneity in datasets, labeling schemes, recording devices, and validation strategies [11]. Reviews evaluating the broader respiratory acoustics literature report that external validation, explainability, standardized label ontologies, and cross-device generalizability remain the main open issues in the field [10, 18]. In this context, the present findings provide a strong internal validation signal, but the ultimate clinical value will become clear through multi-center external validation studies.

Mechanism of the Stacking Architecture

The consistent performance gains observed across all tasks (9 to 12 percentage points in accuracy) likely reflect not merely the combination of multiple model outputs but the preservation and transfer of probabilistic uncertainty to the meta-learner. By integrating demographic metadata alongside base-model probabilities, the stacking architecture allows acoustic predictions to be interpreted within clinical context.
Compared with hard label-level decision fusion, this approach may better capture intermediate states across acoustic patterns. The largest gains were observed in multi-class tasks, suggesting that the integration of complementary task-specific classifiers improved generalization in more complex prediction settings. The LightGBM-based meta-learner also enables examination of decision contributions through feature-importance rankings, providing a more interpretable framework than a fully opaque combination scheme [16, 20, 21].

Transition from Event Level to Patient Level

In the Disease Group Prediction Model, accuracy decreased from 0.80 to 0.74 and macro ROC-AUC declined from 0.94 to 0.91 in the transition from event level to patient level. This decline is an expected finding in focal lung pathologies, where only the affected regions among multiple recording locations for a given patient produce pathological sounds. The preservation of patient-level recall for pneumonia (0.85) close to the event-level value (0.89) suggests that the ensemble voting strategy limited information loss in this class.

The relatively low recall observed for the Normal class at the patient level (0.39) should be interpreted not as a failure of the model to distinguish normal lung sounds, but as a reflection of the phase- and region-dependent nature of respiratory sounds. The classical auscultation literature describes wheezes as musical, predominantly expiratory sounds associated with airway obstruction, while crackles are short-duration, discontinuous sounds that are most prominent during the inspiratory phase [4, 22, 23].
Therefore, it is expected that some segments may appear acoustically normal even in patients with a diagnosis of bronchial diseases or pneumonia: while wheezes may be heard during both inspiration and expiration as obstruction severity increases, expiratory predominance is the classic pattern; crackles need not be present throughout the entire respiratory cycle [3, 7]. Such segmental heterogeneity can weaken the concordance between the patient-level label and the acoustic appearance at the short-window level, thereby reducing sensitivity particularly in the Normal class. This finding points not only to a model limitation but also to the inherently high intra-patient heterogeneity of pediatric respiratory sounds, suggesting that future respiratory cycle-aligned or inspiratory/expiratory phase-aware modeling strategies could improve patient-level decision performance. The disease versus event type distribution heatmap (Supplementary Figure S1) further illustrates this acoustic heterogeneity, confirming that normal-sounding segments predominate across nearly all disease categories.

Digital Auscultation and Remote Monitoring Potential

The pre-training of HeAR on large-scale heterogeneous audio data aims to produce representations that can handle acoustic variability across different recording sources and devices. According to the HeAR model card, downstream models trained with HeAR have been shown to generalize well to sounds recorded from previously unseen devices [13]. While this property is conceptually relevant for scenarios where respiratory sounds may be recorded outside conventional clinical environments, this hypothesis was not directly tested in the present study. Recent evidence suggests that smartphone-based sound recording tools can also be used for pediatric respiratory assessment; Jeong et al.
demonstrated the feasibility of respiratory assessment outside healthcare facilities using a smartphone-based self-auscultation tool [8]. Dent et al. highlighted the remote monitoring potential of artificial intelligence in preschool wheeze management [9]. Tzeng et al. showed that audio enhancement preprocessing improved classification robustness in noisy scenarios [24]. Taken together, these findings suggest that foundation model-based respiratory sound analysis may represent a promising avenue for digital auscultation-based decision support; however, its practical clinical value cannot be established until generalizability across devices, environments, and centers is systematically evaluated. Accordingly, future validation studies incorporating different recording devices and home-environment data will be essential to empirically assess this potential.

The clinical value of AI-assisted lung sound analysis will be determined not only by high discriminative performance but also by the ability to function reliably across different populations, devices, and care settings. The decline in performance observed in external cohorts in the DeepBreath study, despite strong internal validation, demonstrates that generalizability should be treated as a separate success criterion in pediatric lung sound models [18]. Accordingly, while the present results should be interpreted as a strong proof-of-concept for digital auscultation-based decision support, claims of clinical applicability will require confirmation through prospective, multi-center, and cross-device validation studies [11].

Limitations

The most important limitation of this study is the absence of external validation.
SPRSound originates from a single pediatric center and all recordings were obtained with the same electronic stethoscope model; as demonstrated by the DeepBreath study, external validation performance can decline substantially when geographic and device boundaries are crossed [18], and Yang et al. proposed a device-invariant framework specifically to address this issue [25]. While the patient-level aggregation strategy uses confidence-based weighting, it does not model temporal patterns or spatial relationships across locations; when considered alongside the low recall observed in the Normal class, this leaves room for improvement in the aggregation method. Class imbalance is particularly pronounced for rare disease labels, and the effect of additional balancing strategies beyond weighting (oversampling, augmentation, focal loss) was not systematically compared. Noise robustness was not directly tested with recordings from non-clinical environments, and annotation noise should be considered as a natural limitation of expert-labeled audio datasets.

Future Directions

Multi-center external validation, ideally with prospective data collection across diverse devices and populations, is the most critical next step. Patient-level aggregation strategies that model temporal and spatial patterns, noise robustness assessment using synthetic noise injection and real-world home recordings, and prospective clinical utility studies are the priority directions for future research.

Conclusion

PulmoVec shows that HeAR-based acoustic embeddings, multi-task classification, and patient-level aggregation can be combined into a clinically oriented framework for pediatric respiratory sound analysis.
The findings support the feasibility of foundation-model-based digital auscultation for decision support, while underscoring the need for prospective multi-center and cross-device validation before clinical deployment.

DECLARATIONS

Acknowledgements: None.

Funding: This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Competing Interests: The authors have no relevant financial or non-financial competing interests to disclose.

Author Contributions: I.T.A. led the conceptualization and methodology, developed the software, performed the formal analysis, curated the data, created the visualizations, wrote the original draft, and led project administration. O.S. contributed to validation, supervision, and writing–review & editing.

Data Availability: The datasets generated and analysed during the current study are publicly available in the Mendeley Data repository.

Code Availability: The custom code used in this study is available at https://github.com/turkalpmd/PulmoVec.

Ethics Approval: This study used retrospective, fully de-identified, publicly available data and was therefore exempt from institutional review board review and conducted in accordance with applicable ethical standards.

Consent to Participate: Informed consent was waived due to the retrospective use of fully de-identified data and minimal risk to patients.

Consent to Publish: Not applicable.

Use of Generative Artificial Intelligence: Generative artificial intelligence (Claude Opus 4.6) was used solely for language editing and clarity. No artificial intelligence was used in the generation of scientific content, data analysis, or interpretation. All authors reviewed and approved the final manuscript and take responsibility for its content.

References

[1] Serin O, Akbasli IT, Cetin SB, Koseoglu B, Deveci AF, Ugur MZ, Ozsurekci Y.
Predicting Escalation of Care for Childhood Pneumonia Using Machine Learning: Retrospective Analysis and Model Development. JMIRx Med 2025;6(1):e57719.

[2] Vos T, Lim SS, Abbafati C, Abbas KM, Abbasi M, et al. Global burden of 369 diseases and injuries in 204 countries and territories, 1990–2019: a systematic analysis for the Global Burden of Disease Study 2019. The Lancet 2020;396(10258):1204–1222.

[3] Huang D-M, Huang J, Qiao K, Zhong N-S, Lu H-Z, Wang W-J. Deep learning-based lung sound analysis for intelligent stethoscope. Mil Med Res 2023;10(1):44.

[4] Bohadana A, Izbicki G, Kraman SS. Fundamentals of Lung Auscultation. N Engl J Med 2014;370(8):744–751.

[5] Aviles-Solis JC, Storvoll I, Vanbelle S, Melbye H. The use of spectrograms improves the classification of wheezes and crackles in an educational setting. Sci Rep 2020;10(1):8461.

[6] Kotb MA, Elmahdy HN, Dein HMSE, Mostafa FZ, Refaey MA, Rjoob KWY, Draz IH, Basanti CWS. The Machine Learned Stethoscope Provides Accurate Operator Independent Diagnosis of Chest Disease. Med Devices Evid Res 2020;13:13–22.

[7] Mor R, Kushnir I, Meyer J-J, Ekstein J, Ben-Dov I. Breath Sound Distribution Images of Patients With Pneumonia and Pleural Effusion. Respir Care 2007;52(12):1753–1760.

[8] Jeong SG, Nam SW, Jung SK, Kim S-E. iMedic: Towards Smartphone-based Self-Auscultation Tool for AI-Powered Pediatric Respiratory Assessment. 2025.

[9] Dent A, Khan MK, Subbarao P. Leveraging artificial intelligence for the management of preschool wheeze: A narrative review. Pediatr Allergy Immunol 2025;36(10):e70207.

[10] Landry V, Matschek J, Pang R, Munipalle M, Tan K, Boruff J, Li-Jessen NYK. Audio-based digital biomarkers in diagnosing and managing respiratory diseases: a systematic review and bibliometric analysis. Eur Respir Rev 2025;34(176):240246.

[11] Park JS, Park S-Y, Moon JW, Kim K, Suh DI.
Artificial Intelligence Models for Pediatric Lung Sound Analysis: Systematic Review and Meta-Analysis. J Med Internet Res 2025;27(1):e66491.

[12] Moon HJ, Ji H, Kim BS, Kim BJ, Kim K. Machine learning-driven strategies for enhanced pediatric wheezing detection. Front Pediatr 2025;13. https://doi.org/10.3389/fped.2025.1428862

[13] Baur S, Nabulsi Z, Weng W-H, Garrison J, Blankemeier L, Fishman S, Chen C, Kakarmath S, Maimbolwa M, Sanjase N, et al. HeAR -- Health Acoustic Representations. 2024.

[14] Bauer MS, Damschroder L, Hagedorn H, Smith J, Kilbourne AM. An introduction to implementation science for the non-specialist. BMC Psychol 2015;3(1):32.

[15] Ehtesham A, Kumar S, Singh A, Khoei TT. Pediatric Asthma Detection with Google's HeAR Model: An AI-Driven Respiratory Sound Classifier. 2025.

[16] Kwong JCC, Khondker A, Lajkosz K, McDermott MBA, Frigola XB, McCradden MD, Mamdani M, Kulkarni GS, Johnson AEW. APPRAISE-AI Tool for Quantitative Evaluation of AI Studies for Clinical Decision Support. JAMA Netw Open 2023;6(9):e2335377.

[17] Zhang Q, Zhang J, Yuan J, Huang H, Zhang Y, Zhang B, Lv G, Lin S, Wang N, Liu X, et al. SPRSound: Open-Source SJTU Paediatric Respiratory Sound Database. IEEE Trans Biomed Circuits Syst 2022;16(5):867–881.

[18] Heitmann J, Glangetas A, Doenz J, Dervaux J, Shama DM, Garcia DH, Benissa MR, Cantais A, Perez A, Müller D, et al. DeepBreath-automated detection of respiratory pathology from lung auscultation in 572 pediatric outpatients across 5 countries. Npj Digit Med 2023;6(1):104.

[19] Huang D, Wang L, Wang W. A Multi-Center Clinical Trial for Wireless Stethoscope-Based Diagnosis and Prognosis of Children Community-Acquired Pneumonia. IEEE Trans Biomed Eng 2023;70(7):2215–2226.

[20] Sounderajah V, Ashrafian H, Rose S, Shah NH, Ghassemi M, Golub R, Kahn CE, Esteva A, Karthikesalingam A, Mateen B, et al.
A quality assessment tool for artificial intelligence-centered diagnostic test accuracy studies: QUADAS-AI. Nat Med 2021;27(10):1663–1665.

[21] Sounderajah V, Guni A, Liu X, Collins GS, Karthikesalingam A, Markar SR, Golub RM, Denniston AK, Shetty S, Moher D, et al. The STARD-AI reporting guideline for diagnostic accuracy studies using artificial intelligence. Nat Med 2025;31(10):3283–3289.

[22] Reichenpfader D, Müller H, Denecke K. A scoping review of large language model based approaches for information extraction from radiology reports. Npj Digit Med 2024;7(1):1–12.

[23] Sarkar M, Madabhavi I, Niranjan N, Dogra M. Auscultation of the respiratory system. Ann Thorac Med 2015;10(3):158.

[24] Tzeng J-T, Li J-L, Chen H-Y, Huang C-H, Chen C-H, Fan C-Y, Huang EP-C, Lee C-C. Improving the Robustness and Clinical Applicability of Automatic Respiratory Sound Classification Using Deep Learning-Based Audio Enhancement: Algorithm Development and Validation. JMIR AI 2025;4(1):e67239.

[25] Yang M, Liu X, Du W, Liu Y, Zhu W, Bu Z, Mao J, Wang Q, Chen S, Zhou M, et al. A device-invariant multi-modal learning framework for respiratory disease classification. Npj Digit Med 2026 Feb 26. https://doi.org/10.1038/s41746-026-02445-4

Supplementary Material

PulmoVec: A Two-Stage Stacking Meta-Learning Architecture Built on the HeAR Foundation Model for Multi-Task Classification of Pediatric Respiratory Sounds

Akbasli et al., 2026

Supplementary Table S1. Sixteen-Disease Distribution in the SPRSound Cohort. Full distribution of 16 disease diagnoses with train/test patient counts and event counts.

| Disease | Train patients | Test patients | Train events | Test events |
|---|---|---|---|---|
| Pneumonia (non-severe) | 323 | 81 | 8,968 | 2,099 |
| Unknown | 94 | 26 | 3,793 | 1,020 |
| Bronchitis | 88 | 18 | 1,857 | 465 |
| Control Group | 55 | 19 | 1,615 | 429 |
| Asthma | 54 | 12 | 1,385 | 207 |
| Pneumonia (severe) | 30 | 11 | 966 | 305 |
| Bronchiolitis | 7 | 1 | 298 | 7 |
| Other respiratory diseases | 11 | 5 | 247 | 164 |
| Bronchiectasis | 5 | 3 | 202 | 114 |
| Acute upper resp. infection | 9 | 2 | 198 | 38 |
| Hemoptysis | 2 | 2 | 143 | 91 |
| Chronic cough | 4 | 0 | 55 | 0 |
| Airway foreign body | 2 | 0 | 38 | 0 |
| Pulmonary hemosiderosis | 1 | 1 | 32 | 40 |
| Protracted bacterial bronchitis | 1 | 0 | 21 | 0 |
| Kawasaki disease | 0 | 1 | 0 | 11 |

The SPRSound database contains 16 disease diagnoses spanning a broad spectrum of pediatric respiratory conditions. Pneumonia (severe and non-severe combined) accounts for the majority of both patients and events, followed by bronchitis and the control group. Several diagnoses, including Kawasaki disease, protracted bacterial bronchitis, and airway foreign body, are represented by very few patients, contributing to class imbalance in fine-grained classification tasks. The "Unknown" category includes patients for whom a definitive diagnosis could not be assigned from the available clinical records.

Supplementary Table S2. Label Mapping from Original Annotations to Study Targets

The label mapping reflects two complementary design decisions. In Part A, the original seven adventitious event types were consolidated into three clinically actionable sound patterns: Normal, Crackles (combining fine and coarse crackle subtypes along with mixed wheeze-crackle events), and Rhonchi (grouping wheeze, stridor, and rhonchi as continuous obstructive patterns). No Event segments were treated as Normal in the Screening and Sound Pattern models.
In Part B, the 16 original disease diagnoses were grouped into four syndrome-level categories guided by clinical reasoning: Pneumonia encompasses both severity grades; Bronchial diseases includes conditions sharing obstructive airway physiology (asthma, bronchitis, bronchiolitis, bronchiectasis, and protracted bacterial bronchitis); Normal corresponds to the control group; and Others captures rarer and heterogeneous diagnoses where individual class sizes would be insufficient for reliable model training.

Supplementary Table S2, Part A. Event type to sound pattern mapping. *No Event segments were included in the Normal class for the Sound Pattern Recognition Model and Screening Model.

| Original event label | Sound pattern |
|---|---|
| Normal | Normal |
| Fine Crackle | Crackles |
| Coarse Crackle | Crackles |
| Wheeze+Crackle | Crackles |
| Wheeze | Rhonchi |
| Stridor | Rhonchi |
| Rhonchi | Rhonchi |
| No Event | Normal* |

Supplementary Table S2, Part B. Original diagnosis to four-class disease group mapping.

| Original diagnosis | Disease group |
|---|---|
| Pneumonia (severe) | Pneumonia |
| Pneumonia (non-severe) | Pneumonia |
| Asthma | Bronchial diseases |
| Bronchitis | Bronchial diseases |
| Bronchiolitis | Bronchial diseases |
| Bronchiectasis | Bronchial diseases |
| Protracted bacterial bronchitis | Bronchial diseases |
| Control Group | Normal |
| Acute upper resp. infection | Others |
| Airway foreign body | Others |
| Chronic cough | Others |
| Hemoptysis | Others |
| Kawasaki disease | Others |
| Other respiratory diseases | Others |
| Pulmonary hemosiderosis | Others |
| Unknown | Others |

Supplementary Table S3. Additional Classification Results. Performance metrics for models not presented in the main manuscript. All metrics are from the stacking meta-model (LightGBM).

| Model | Classes | Accuracy | Macro F1 | MCC | ROC-AUC (macro) |
|---|---|---|---|---|---|
| Event type (6-class) | Coarse Crackle, Fine Crackle, Normal, Rhonchi, Wheeze, Wheeze+Crackle | 0.90 [0.89, 0.91] | 0.67 [0.63, 0.71] | 0.73 [0.71, 0.75] | 0.97 [0.96, 0.97] |
| Disease (16-class) | All 16 diagnoses (see Table S1) | 0.74 [0.73, 0.76] | 0.60 [0.55, 0.65] | 0.65 [0.62, 0.66] | 0.98 [0.97, 0.98] |

In addition to the three models presented in the main manuscript (Screening, Sound Pattern Recognition, and Disease Group Prediction), PulmoVec was also evaluated on two finer-grained classification targets. The 6-class event type model retained the original SPRSound adventitious sound taxonomy (excluding No Event and Stridor due to very low counts) and achieved a macro ROC-AUC of 0.97, indicating strong discriminative capacity even at high label granularity. The 16-class disease model attempted to predict the original diagnosis label directly, yielding a macro ROC-AUC of 0.98 despite a lower accuracy of 0.74, which reflects the challenge of hard classification under severe class imbalance while confirming that probability-based discrimination is largely preserved. These results were not included in the main manuscript to maintain focus on the three clinically grouped targets but are provided here for completeness.

Supplementary Table S4. Model Hyperparameters. Final hyperparameters for HeAR base models and LightGBM meta-model optimization ranges.

| Component | Parameter | Value |
|---|---|---|
| HeAR encoder | Model | google/hear-pytorch |
| HeAR encoder | Embedding dimension | 512 |
| Classification head | Hidden dimension | 256 |
| Classification head | Dropout | 0.3 |
| Training (Phase 1) | Epochs (frozen encoder) | 10 |
| Training (Phase 1) | Learning rate | $1 \times 10^{-4}$ |
| Training (Phase 2) | Epochs (end-to-end) | 40 |
| Training (Phase 2) | Learning rate | $5 \times 10^{-7}$ |
| Training | Batch size | 32 |
| LightGBM | n_estimators range | 50–500 (Optuna) |
| LightGBM | max_depth range | 3–15 |
| LightGBM | learning_rate range | 0.01–0.3 (log) |
| LightGBM | num_leaves range | 15–300 |
| LightGBM | min_child_samples range | 5–100 |
| LightGBM | subsample range | 0.6–1.0 |
| LightGBM | colsample_bytree range | 0.6–1.0 |
| LightGBM | early_stopping_rounds | 20 |
| LightGBM | Optimization | Optuna TPE, 100 trials |

Training followed a two-stage fine-tuning protocol. In Phase 1, the HeAR encoder was frozen and only the classification head was trained at a higher learning rate to establish task-specific decision boundaries. In Phase 2, the full model including the encoder was fine-tuned at a substantially lower learning rate to adapt pretrained representations while minimizing catastrophic forgetting. LightGBM hyperparameters were optimized independently for each classification target using Optuna's Tree-structured Parzen Estimator with 100 trials. The ranges listed above reflect the search space; final selected values varied across targets and are available in the code repository.

Supplementary Figure S1. Disease versus event type heatmap. Rows represent 16 disease diagnoses; columns represent 8 event types (including No Event). Cell values show the percentage distribution of event types within each disease.

This heatmap reveals several clinically relevant patterns.
Normal-sounding segments dominate across nearly all diseases, consistent with the focal nature of most pediatric respiratory pathologies where only a fraction of recording locations and respiratory cycles produce adventitious sounds. Bronchiolitis shows the highest proportion of wheeze events (43%), reflecting its characteristic small airway obstruction. The Unknown category exhibits a uniquely high fine crackle prevalence (38%), suggesting that these patients may harbor undiagnosed parenchymal conditions. The control group shows 94.5% normal events, with the small proportion of adventitious sounds likely attributable to normal physiological variation and annotation sensitivity. Severe pneumonia shows more wheeze and coarse crackle events compared to non-severe pneumonia, consistent with greater inflammatory burden and airway involvement.

Supplementary Figure S2. Confusion matrices for event-level classification tasks. (A) Sound Pattern Recognition Model (3-class). (B) Screening Model (2-class). (C) Event type model (6-class). (D) Disease Group Prediction Model (4-class).

The confusion matrices illustrate the classification patterns across models of varying granularity. In the Sound Pattern Recognition Model (A), the primary confusion occurs between Normal and Crackles, with Rhonchi being well-separated. The Screening Model (B) shows high Normal recall (0.97) with some Abnormal events being misclassified as Normal, reflecting the challenge of detecting subtle adventitious sounds. The 6-class event type model (C) demonstrates that fine-grained event categories, particularly rare ones such as Coarse Crackle and Wheeze+Crackle, are more frequently confused with their dominant parent categories.
The Disease Group Prediction Model (D) shows the strongest diagonal for Pneumonia, consistent with this class having the largest representation and the most distinct acoustic profile.

Supplementary Figure S3. ROC and Precision-Recall curves for additional models. (A) Disease Group Prediction Model (4-class, event level). (B) Disease model (16-class, event level).

The ROC curves demonstrate that probability-based discrimination remains strong even in the most granular classification scenario (16-class macro AUC = 0.98), despite the lower hard classification accuracy (0.74). This divergence between AUC and accuracy highlights that the model assigns higher probabilities to the correct class in most cases even when the argmax prediction is incorrect, suggesting that the probabilistic outputs carry clinically useful ranking information beyond the top-1 label. The Precision-Recall curves provide a complementary view that is more sensitive to class imbalance; the lower AUPRC values for rare classes confirm that prevalence-adjusted performance should be considered alongside standard ROC metrics when evaluating clinical utility for minority conditions.
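
The divergence between macro ROC-AUC and hard accuracy noted for the 16-class model can be reproduced on a toy example. The sketch below is illustrative only and is not the study's evaluation code: the probabilities and labels are hypothetical, the helper names (`binary_auc`, `macro_ovr_auc`, `top1_accuracy`) are assumptions introduced here, and a one-vs-rest macro average is assumed, which may differ from the averaging used in the paper. Rare-class samples are ranked above all other samples on their own class score, yet the majority class still wins the argmax for most of them.

```python
from typing import List, Sequence

def binary_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """ROC-AUC as the fraction of (positive, negative) pairs in which the
    positive sample receives the higher score; ties count as 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def macro_ovr_auc(probs: List[List[float]], y_true: Sequence[int]) -> float:
    """Unweighted mean of one-vs-rest AUCs over all classes."""
    n_classes = len(probs[0])
    aucs = []
    for k in range(n_classes):
        scores = [row[k] for row in probs]           # score for class k
        labels = [1 if y == k else 0 for y in y_true]  # class k vs rest
        aucs.append(binary_auc(scores, labels))
    return sum(aucs) / n_classes

def top1_accuracy(probs: List[List[float]], y_true: Sequence[int]) -> float:
    """Accuracy of the argmax (hard) prediction."""
    preds = [max(range(len(row)), key=row.__getitem__) for row in probs]
    return sum(p == y for p, y in zip(preds, y_true)) / len(y_true)

# Hypothetical imbalanced 3-class data: class 0 is the majority class.
Y_TRUE = [0, 0, 0, 0, 1, 1, 2, 2]
PROBS = [
    [0.90, 0.05, 0.05],
    [0.90, 0.05, 0.05],
    [0.90, 0.05, 0.05],
    [0.90, 0.05, 0.05],
    [0.55, 0.40, 0.05],  # true class 1, argmax picks class 0
    [0.30, 0.60, 0.10],  # true class 1, argmax correct
    [0.55, 0.05, 0.40],  # true class 2, argmax picks class 0
    [0.50, 0.10, 0.40],  # true class 2, argmax picks class 0
]

if __name__ == "__main__":
    print(f"macro OvR ROC-AUC = {macro_ovr_auc(PROBS, Y_TRUE):.3f}")  # 1.000
    print(f"top-1 accuracy    = {top1_accuracy(PROBS, Y_TRUE):.3f}")  # 0.625
```

In this toy case every class is perfectly ranked (macro AUC of 1.0) while only five of eight argmax predictions are correct, which is the same qualitative pattern as a high AUC alongside lower accuracy under class imbalance. The pairwise AUC is quadratic in sample count and is meant for illustration, not for large datasets.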
