DASH: Dynamic Audio-Driven Semantic Chunking for Efficient Omnimodal Token Compression

Omnimodal large language models (OmniLLMs) jointly process audio and visual streams, but the resulting long multimodal token sequences make inference prohibitively expensive. Existing compression methods typically rely on fixed window partitioning an…

Authors: Bingzhou Li, Tao Huang

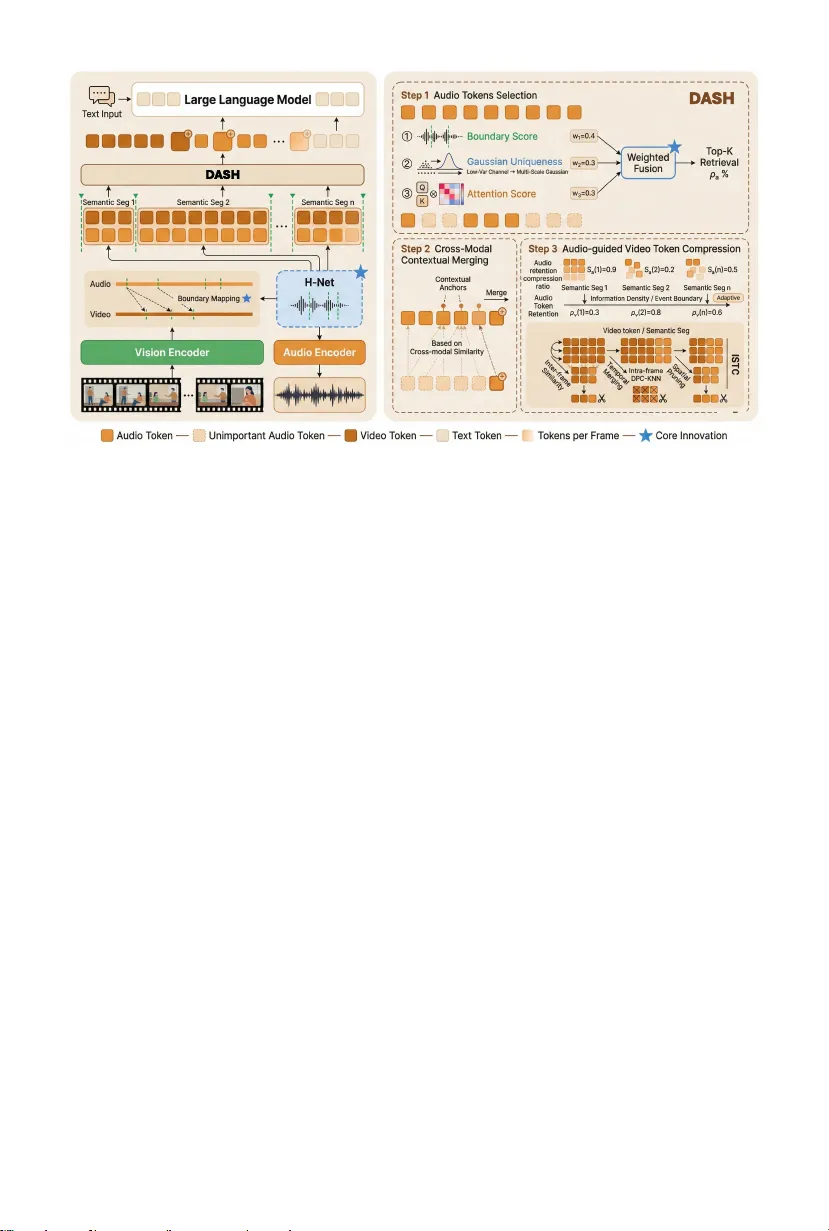

D ASH: Dynamic Audio-Driv en Seman tic Ch unking for Efficien t Omnimo dal T ok en Compression Bingzhou Li 1 , 2 and T ao Huang 1 ⋆ 1 Shanghai Jiao T ong Univ ersit y 2 T ong ji Universit y Abstract. Omnimo dal large language models (OmniLLMs) join tly pro- cess audio and visual streams, but the resulting long multimodal token sequences mak e inference prohibitively expensive. Existing compression metho ds typically rely on fixed windo w partitioning and attention-based pruning, which ov erlo ok the piecewise seman tic structure of audio-visual signals and b ecome fragile under aggressiv e tok en reduction. W e pro- p ose Dynamic Audio-driven Semantic cHunking (DASH), a training-free framew ork that aligns token compression with seman tic structure. D ASH treats audio embeddings as a semantic anc hor and detects b oundary can- didates via cosine-similarit y discontin uities, inducing dynamic, v ariable- length segments that approximate the underlying piecewise-coherent or- ganization of the sequence. These b oundaries are pro jected onto video tok ens to establish explicit cross-mo dal segmen tation. Within each seg- men t, token retention is determined by a tri-signal imp ortance estimator that fuses structural boundary cues, represen tational distinctiveness, and atten tion-based salience, mitigating the sparsity bias of attention-only se- lection. This structure-aw are allo cation preserves transition-critical to- k ens while reducing redundant regions. Extensive exp erimen ts on A VUT, VideoMME, and W orldSense demonstrate that DASH main tains sup erior accuracy while achieving higher compression ratios compared to prior metho ds. Co de is a v ailable at: https://github.com/laychou666/DASH . Keyw ords: Omnimodal Large Language Mo dels · T oken Compression · Audio-Visual Pro cessing 1 In tro duction Omnimo dal large language mo dels (OmniLLMs) [7, 19, 27, 29, 32, 34] e xtend video- language systems [4, 12, 15, 17, 40, 42] by join tly pro cessing audio and visual streams within a unified transformer framew ork. By integrating sp eec h, scene dynamics, and textual reasoning, these mo dels enable richer m ultimodal under- standing across tasks such as video question answering and real-world reasoning. Ho wev er, this capability comes at a significan t computational cost: a single video ⋆ Corresp onding author: T ao Huang ( t.huang@sjtu.edu.cn ). 2 B. Li and T. Huang clip can pro duce tens of thousands of multimo dal tokens, and the quadratic com- plexit y of self-attention quic kly makes inference memory- and latency-bound. T ok en compression [1, 2, 22, 33, 35, 37] has become a practical solution to mit- igate this b ottlenec k. By pruning or merging tok ens b efore they en ter the lan- guage model, prior work reduces sequence length without retraining large mod- els. Y et despite steady progress, existing approaches share a critical assumption: m ultimo dal tok ens are treated as flat, uniformly structured sequences. Com- pression decisions are typically made within fixed windows [1, 3, 31] and guided primarily by attention scores. This p ositional partitioning neglects the piecewise- coheren t structure of audio-visual sequences, where semantic transitions corre- sp ond to distributional shifts in em b edding space. The core challenge lies in the intrinsic structure of audio-visual signals. Un- lik e static images, audio and video streams evolv e o ver time with highly non- uniform semantic densit y . Speech con tains natural b oundaries ( e.g. , pauses, topic transitions, and speaker shifts) that often coincide with meaningful visual c hanges. These transitions delineate coherent semantic units that should ideally b e preserv ed as atomic structures during compression. When tokens are group ed uniformly and selected via sparse attention alone, these structural transitions are fragmented or discarded, leading to disproportionate information loss un- der aggressive pruning. In other w ords, current compression strategies optimize lo cal redundancy while o v erlo oking global seman tic organization. F rom this p er- sp ectiv e, token compression can b e view ed as allo cating limited representational capacit y across a temp orally structured and semantically segmented signal. In this work, we argue that effective omnimo dal compression should b e guided b y semantic structure rather than p ositional regularit y . In particular, the audio stream provides a natural structural prior. Compared to visual tokens, audio em b eddings exhibit sharp er distributional discontin uities at semantic transitions (e.g., pauses and topic shifts), making them a more reliable boundary signal. Due to cross-modal sync hronization, these audio transitions often coincide with meaningful visual changes. Audio therefore acts as a semantic anchor that can guide b oth segmen tation and imp ortance allocation across mo dalities. Building on this p erspective, we prop ose DASH (Dynamic Audio-driven Seman tic cHunking) , a training-free framework for structure-a ware omni- mo dal tok en compression (Fig. 1). D ASH mo dels the m ultimo dal sequence as a set of dynamically inferred seman tic s egmen ts instead of imp osing fixed windows. Sp ecifically , it detects b oundary candidates from cosine-similarity drops b etw een adjacen t audio em b eddings, treating sharp represen tational c hanges as pro x- ies for semantic discon tinuities. These boundaries induce v ariable-length c h unks that resp ect conten t rhythm and approximate the underlying piecewise structure of the sequence. T o establish explicit cross-mo dal alignment, detected audio b oundaries are pro jected on to video token indices via temp oral ratio mapping, yielding shared segmen tation across modalities without additional learning. Within each seg- men t, tok en selection becomes a multi-signal imp ortanc e estimation problem. W e combine (i) b oundary probability as a structural prior, (ii) multi-scale Gaus- D ASH 3 D A SH D A SH Fig. 1: Overview of D ASH. Left : F ormer metho ds ( e.g ., OmniZip [31]) use static fixed-size grouping (4 frames per group) that ignores semantic b oundaries and selects tok ens by atten tion alone. Righ t : D ASH detects audio seman tic boundaries, pro jects them onto video indices to form dynamic ch unks, and scores tokens via tri-signal fusion (b oundary probability + multi-scale Gaussian uniqueness + attention), with b oundary- a ware compression that preserves keyframes at seman tic transitions. sian uniqueness capturing representational distinctiveness, and (iii) normalized atten tion reflecting mo del-perceived salience. Their fusion yields a smo other im- p ortance landscap e (Fig. 2) and alleviates the heavy-tailed sparsity of attention- only selection. D ASH in tro duces no learnable parameters and op erates as a light w eight plug- in b et ween mo dalit y enco ders and the LLM. By reallo cating compression ca- pacit y according to seman tic density and preserving transition-critical tok ens, D ASH maintains narrativ e contin uity and cross-mo dal coherence under aggres- siv e pruning. Exp eriments on A VUT [36], VideoMME [6], and W orldSense [9] with Qwen2.5-Omni (7B and 3B) sho w that DASH main tains comp etitiv e ac- curacy at 25% token retention, matc hing or exceeding prior metho ds op erating at 35%, while achieving up to 3.8 × prefill sp eedup and 1.7 × end-to-end latency reduction. Our con tributions are threefold. – W e formulate omnimo dal token compression as a structure-aw are segmenta- tion problem, showing that compression should follow semantic b oundaries rather than fixed p ositional windo ws. – W e prop ose DASH, a training-free, audio-anchored framework that detects seman tic discontin uities in em b edding space and propagates them across mo dalities to enable explicit cross-modal alignmen t and densit y-a ware com- pression. – W e demonstrate on three audio-video b enchmarks that structure-aw are com- pression enables stable p erformance at substantially lo wer token retention 4 B. Li and T. Huang (25%) than prior methods (35%), achieving significan t speedups with negli- gible o verhead. 2 Related W ork 2.1 T ok en Compression for Multimo dal LLMs T ok en compression reduces input tokens to alleviate the computational b ottle- nec k of LLM inference, exploiting redundancy in multimodal inputs. F or image inputs, F astV [2] p erforms training-free inference acceleration b y monitoring at- ten tion patterns at a designated LLM la yer and pruning uninformative visual tok ens during prefill. LLaV A-PruMerge [22] selects represen tativ e tokens via adaptiv e clustering and absorbs the rest through weigh ted av eraging. T oMe [1] in tro duces a training-free bipartite matching algorithm that progressively merges similar token pairs across ViT la yers. Other methods use attention-based selec- tion [35], progressiv e la y er-wise reduction [33, 37], or learned pro jectors [13]. F or vide o inputs, DyCok e [30] prop oses a tw o-stage pipeline separating temporal and spatial redundancy , while others apply spatiotemp oral merging [23, 25] or atten tion-based pruning [10, 26] (see [24] for a survey). Ho wev er, these meth- o ds op erate within single modalities and ignore cross-mo dal correlations. Om- niZip [31] pioneers omnimo dal compression using audio atten tion to guide video pruning with in terleav ed spatio-temp oral compression. W e improv e up on Om- niZip with dynamic semantic ch unking, explicit cross-mo dal alignment via audio b oundary pro jection, and tri-signal fusion scoring. 2.2 Dynamic Chunking and Boundary Detection T raditional sequence pro cessing relies on fixed-size segmentation: subw ord to- k enizers ( e.g ., BPE, W ordPiece) split text at predetermined gran ularit y , and tok en compression metho ds uniformly group frames into equal-sized windows. This uniform strategy ignores seman tic structure—fragmen ting coherent units across arbitrary b oundaries and failing to adapt to information density . Dynamic c hunking addresses this by adapting segment lengths to semantic structure, yield- ing v ariable-length c hunks that resp ect natural b oundaries. MEGABYTE [38] partitions byte sequences into v ariable-length patc hes for m ultiscale mo deling, and Late Chunking [8] segments text for con textual retriev al. H-Net [11] pro- p oses training-free b oundary detection via cosine similarit y betw een adjacen t tok en embeddings, placing b oundaries where similarity drops sharply . This has b een extended to audio generation by MMHNet [28]. In video and audio, b ound- ary detection has b een studied for shot/scene segmentation [20, 21], action lo- calization [39], and token pruning [14, 16], but these typically require task- sp ecific supervision or op erate on raw signals. Inspired b y H-Net, we adapt cosine-similarit y-based b oundary detection to audio tokens in OmniLLMs with- out training, and uniquely extend to cross-mo dal segmen tation b y pro jecting audio b oundaries onto video indices—ac hieving explicit audio-visual alignment absen t from all prior work. D ASH 5 3 Metho d W e present DASH, a training-free framework that op erationalizes the principle of structure-aw are compression through three key inno v ations. As illustrated in Fig. 1, given an input video with audio, DASH first detects semantic b oundaries in the audio stream via cosine-similarity-based b oundary detection (Sec. 3.2). It then pro jects these b oundaries on to video token indices through temporal ratio mapping to form dynamic segments (Sec. 3.3), ac hieving explicit cross-modal seman tic alignmen t without learned parameters. Finally , it scores tokens within eac h segmen t via tri-signal fusion for selectiv e reten tion (Sec. 3.4). The retained tok ens are passed to the LLM for inference. 3.1 Problem F ormulation Giv en an OmniLLM with audio enco der E a and video encoder E v , we obtain audio token sequence A = { a t } N a t =1 ∈ R N a × D and video token sequence V = { v t } N v t =1 ∈ R N v × D , where D is the embedding dimension. F or video, w e ha ve N v = F × K tokens, where F is the num b er of frames and K is the num b er of tok ens p er frame. Static grouping in prior work. Existing metho ds ( e.g ., OmniZip [31]) divide video in to fixed groups of 4 frames ( G v = 4 K tokens) and audio in to fixed groups of 50 tok ens ( G a = 50 ), regardless of semantic conten t. This rigid partitioning treats information-dense segments (rapid dialogue, complex actions) and information-sparse ones (silence, static scenes) iden tically . Our goal. W e aim to replace static grouping with dynamic semantic ch unk- ing that adapts to con tent structure, and replace single-signal tok en selection with m ulti-signal fusion scoring that captures structural, conten t, and mo del- p erceiv ed imp ortance join tly . 3.2 Dynamic Semantic Chunking (Segment-Lev el) Rather than imp osing fixed b oundaries every G a tok ens, w e let the audio con- ten t itself determine where segmentation should o ccur. This design c hoice di- rectly addresses the core limitation of prior work: p ositional p artitioning tr e ats information-dense and information-sp arse se gments identic al ly . Our approach is motiv ated by a key property of audio enco der embeddings: within a seman- tically coherent segment—suc h as a contin uous sentence or a single topic— adjacen t tok ens encode similar contextual information, producing high cosine similarit y . Con versely , at semantic transitions—sen tence pauses, topic shifts, or sp eak er changes—the embedding space shifts abruptly , causing a sharp similarity drop. This prop ert y provides a training-free signal for b oundary detection that is b oth reliable and computationally efficient. W e adapt H-Net’s [11] training-free b oundary detection to exploit this property in the audio domain. 6 B. Li and T. Huang Boundary probabilit y computation. F or the audio tok en sequence A = { a t } N a t =1 , w e compute the b oundary probability at p osition t as: sim t = ⟨ a t − 1 , a t ⟩ ∥ a t − 1 ∥ · ∥ a t ∥ , (1) p boundary t = clip 1 − sim t 2 , 0 , 1 , (2) where p boundary t ∈ [0 , 1] is high when adjacen t tokens are dissimilar (semantic discon tinuit y) and low when they are similar (seman tic con tinuit y). The first tok en is alwa ys treated as a b oundary ( p boundary 1 = 1 ). Boundary detection with minim um ch unk constraint. A boundary is de- tected at position t when the cosine similarity drops below threshold τ a (default 0.4) and the distance from the last b oundary exceeds a minimum c hunk size C min (default 30 tok ens, ≈ 1 second of audio): m boundary t = I ( sim t < τ a ) · I ( t − t last ≥ C min ) , (3) where t last is the p osition of the most recen t b oundary . The minim um ch unk constrain t is essential: without it, noise-induced similarity fluctuations w ould pro duce excessively short c hunks (2–3 tok ens) that are too small for meaningful imp ortance comparison. The default C min = 30 corresponds to approximately 1 second of audio, ensuring each ch unk spans at least one proso dic unit while still capturing sen tence-level pauses and topic shifts. This produces a set of audio b oundary positions B a = { b a 0 , b a 1 , . . . , b a S } . The resulting v ariable-length ch unks ensure that tokens within eac h ch unk share coheren t semantics for fairer imp ortance comparison, and naturally adapt to information densit y . 3.3 Audio-Driv en Visual Segmen tation (Cross-Mo dal Lev el) A key observ ation in audio-guided video unde rstanding is that sp e e ch is the pri- mary semantic c arrier : audio b oundaries (sen tence pauses, topic transitions) often corresp ond to visual seman tic transitions (scene changes, action shifts). This cross-mo dal corresp ondence is not coincidental—it reflects the underlying narrativ e structure shared by b oth mo dalities. Since audio and video tok ens are temp orally co-registered in the OmniLLM’s time-window structure, temp oral p o- sition serves as a v alid proxy for semantic corresp ondence. W e exploit this by us- ing audio b oundaries as the anchor signal to drive video segmentation—ensuring that b oth mo dalities are ch unk ed along the same semantic structure. This design ac hieves explicit cr oss-mo dal semantic alignment without any learned parame- ters, in con trast to the implicit and often misaligned segmen tation pro duced b y fixed step sizes. T emporal ratio mapping. Given audio b oundaries B a detected on N a audio tok ens, we pro ject them on to video token indices via linear scaling: b v i = b a i · N v N a , i = 0 , 1 , . . . , S , (4) D ASH 7 where N v is the total num b er of video tokens. The pro jected b oundaries B v = { b v 0 , b v 1 , . . . , b v S } are deduplicated, sorted, and clamp ed to [0 , N v ] , with b v 0 = 0 and b v S = N v enforced. This ensures each video segment corresp onds to a seman- tically coherent audio segment. Unlik e implicit alignment via fixed step sizes, our approac h captures semantic-lev el corresp ondences—a scene change coincid- ing with a sentence pause will naturally become a shared b oundary for b oth mo dalities. Strength-based b oundary refinement. The pro jected b oundaries B v ma y pro duce video segments shorter than the minimum required by in terleav ed spatial- temp oral compression ( 2 K tok ens, i.e., tw o frames). T o resolve this, we apply a greedy refinement: each inner boundary b v i is assigned a strength p boundary b a i from its corresp onding audio position. W e sort inner boundaries b y strength in descending order and greedily insert them into the final set B ∗ v , initialized as { 0 , N v } . A b oundary is accepted only if b oth adjacen t segments exceed 2 K tok ens: b v i ∈ B ∗ v ⇐ ⇒ ( b v i − b left ) ≥ 2 K ∧ ( b right − b v i ) ≥ 2 K , (5) where b left and b right are the nearest existing b oundaries in B ∗ v . By prioritizing the strongest b oundaries (highest p boundary ) rather than arbitrarily dropping b ound- aries, this greedy strategy maximally preserv es the most semantically meaning- ful transitions while ensuring all segments are large enough for the in terleav ed spatial-temp oral compression that follo ws. 3.4 T ri-Signal F usion T oken Scoring (T ok en-Lev el) Existing metho ds select audio tokens solely by atten tion scores from the audio enco der. Ho wev er, as shown in Fig. 2 (a), attention distributions are extremely sparse—imp ortance concentrates on a handful of tok ens while the v ast ma jorit y receiv e near-zero scores. This sparsit y is problematic for compression: a single signal cannot capture the full sp ectrum of tok en imp ortance. Structurally criti- cal tokens at semantic b oundaries and conten t-distinctiv e tokens carrying unique information are discarded if they happen to fall outside the attention spotlight. The consequence is that aggressiv e compression (e.g., 25% retention) based on atten tion alone destroys narrative contin uit y by removing transition anchors. T o address this fundamental limitation, we prop ose a tri-signal fusion mec hanism that combines three complementary imp ortance signals, eac h capturing a dif- feren t asp ect of tok en imp ortance: structural criticality , con tent distinctiveness, and mo del-perceived salience. Signal 1: Boundary probability ( w b = 0 . 4 ). T okens at seman tic b ound- aries are conten t transition p oin ts and should b e prioritized. W e normalize the b oundary probabilit y from Eq. (2) s bnd t = p boundary t max j p boundary j + ϵ . (6) Signal 2: Probabilistic density-based uniqueness ( w u = 0 . 3 ). Inspired b y the density-peak clustering (DPC) principle used in VidCom [5, 18], w e mea- 8 B. Li and T. Huang B ou n d a r y P r ob a b i l i t y G a u ss i a n U n i q u en es s 0.0 0.2 0.4 0.6 A ttention Score C om b ined Scor e Selectio n (a) B ou n d a r y P r ob a b i l i t y G a u ss i a n U n i q u en es s A tte n t i on Scor e 0.0 0.1 0.2 0.3 0.4 0.5 Combined Score Selectio n (b) B ou n d a r y P r ob a b i l i t y G a u ss i a n U n i q u en es s A tte n t i on Scor e C om b ined Scor e 0 25 50 75 100 125 150 175 200 Audio T oken Index Selection Rescued by fusion (631) Selected by both (866) A ttention-only (631) (c) Fig. 2: T oken scoring and selection comparison. (a) A ttention score alone is extremely sparse, concentrating on a few tokens. (b) T ri-signal combined score pro duces a balanced imp ortance landscap e. (c) Selection comparison: green = re scued by fusion (631), blue = selected by b oth (866), pink = attention-only tokens replaced (631). F usion rescues ∼ 42% of retained tok ens that atten tion alone would discard. sure the distinctiveness of each tok en. Unlike v anilla DPC which uses a hard distance cutoff for density estimation [5], we prop ose a multi-scale Gaussian ker- nel to capture tok en imp ortance across v arious feature gran ularities, providing a con tinuous and robust densit y approximation. T o enhance robustness, we first perform lo w-v ariance channel selection . Giv en token features A ∈ R N a × D , w e compute p er-channel v ariance σ 2 d = V ar ([ A ] : ,d ) and retain the bottom- ⌊ D / 2 ⌋ c hannels b y v ariance, yielding ˜ A ∈ R N a × D/ 2 . This prepro cessing step ensures that our uniqueness score is computed based on stable semantic dimensions rather than noisy , high-v ariance channels that ma y contain transien t artifacts. W e then compute the ℓ 2 -normalized features ˆ A = normalize ( ˜ A ) and the global center c = 1 N a P t ˆ a t . T o appro ximate the local densit y ρ defined in DPC- KNN [5] in a con tinuous space, w e define the multi-scale Gaussian similarit y: g t = X α ∈A exp − ∥ ˆ a t − c ∥ 2 2 α , (7) where A = { 0 . 125 , 0 . 25 , 0 . 5 , 1 . 0 , 2 . 0 } are m ulti-scale bandwidth parameters that enable the k ernel to capture both fine-grained and coarse-grained feature v aria- tions. The uniqueness score is: s uniq t = 1 − g t max j g j + ϵ . (8) D ASH 9 Signal 3: Atten tion score ( w a = 0 . 3 ). W e use the attention logits from the audio enco der, normalized to [0 , 1] : s attn t = attn t max j attn j + ϵ . (9) F usion and selection. The final imp ortance score is the w eighted sum: s t = w b · s bnd t + w u · s uniq t + w a · s attn t , (10) where w b = 0 . 4 , w u = 0 . 3 , w a = 0 . 3 . W e select the top- N keep tok ens b y s t as the retained audio tok ens, where N keep = ⌊ (1 − ρ a ) · N a ⌋ . The three signals capture orthogonal imp ortance dimensions: b oundary prob- abilit y measures structur al imp ortance at transition p oints, density-based unique- ness measures c ontent distinctiv eness, and attention reflects mo del-p er c eive d imp ortance. A b oundary tok en may receive low atten tion but high boundary probabilit y; fusion ensures suc h tok ens a re not o verlooked. W e set w b = 0 . 4 sligh tly higher b ecause structural b oundaries are critical under aggressive com- pression. When b oundary information is unav ailable ( e.g ., very short sequences), the metho d falls bac k to attention-only selection. 3.5 Boundary-A w are Video Compression Giv en the audio-driven video segments, we p erform adaptiv e compression within eac h segment. The k ey idea is that not all segments deserv e equal compression: segmen ts aligned with information-dense audio (rapid sp eec h, complex narra- tion) should retain more video tokens to preserve the rich visual context, while segmen ts corresponding to silence or am bient noise can b e compressed more aggressiv ely . Audio-guided adaptiv e compression ratio. W e use the audio retention rate as a pro xy for segment-lev el information density . F or each video segment s corre- sp onding to audio segment [ b a s − 1 , b a s ) , w e compute the audio reten tion rate ¯ m ( s ) a (fraction of audio tokens retained in that segment b y tri-signal fusion). The video compression ratio is then adapted: ρ ( s ) v = ρ v + λ r (0 . 5 − ¯ m ( s ) a ) , ρ ( s ) v ∈ [0 . 1 , 0 . 95] , (11) where ρ v is the base video compression ratio and λ r = 0 . 1 controls adaptation strength. Segmen ts with high audio retention (semantically imp ortan t) receive lo wer compression; segmen ts with low audio retention receive higher compres- sion. Boundary frame protection. F or frames at b oundary p ositions, we increase the reten tion ratio: r boundary f = r s + (1 − r s ) · 0 . 3 · p boundary f , (12) where r s = 1 − ρ ( s ) v is the base retention ratio. The intuition is that b oundary frames contain the visual context of a narrative transition (e.g., the first frame of 10 B. Li and T. Huang a new scene), and losing them would remo ve the only visual anchor connecting t wo adjacent seman tic segments. This targeted protection yields disprop ortionate qualit y gains at negligible cost. In terleav ed spatial-temp oral pruning. Within each segmen t, we adopt the in terleav ed spatial-temp oral compression (ISTC) strategy [31] with the adaptive reten tion ratio: Even frames : Spatial pruning via DPC-KNN [5] that remov es tok ens with high local density (spatially redundant). Odd frames : T emp oral pruning that remo ves tok ens most similar to the previous frame (temp orally redundan t). 4 Exp erimen ts 4.1 Ev aluation Setups and Implementation Details Benc hmarks. F ollo wing OmniZip [31], we ev aluate on established audio-video understanding benchmarks: A VUT [36], VideoMME [6], and W orldSense [9]. A VUT is an audio-centric video understanding benchmark fo cusing on six tasks: ev ent localization (EL), ob ject matc hing (OM), OCR matc hing (OR), infor- mation extraction (IE), con tent counting (CC), and character matc hing (CM). VideoMME is widely used for video-understanding ev aluations where including audio can improv e accuracy . W orldSense assesses mo dels’ abilit y to understand audio and video join tly across eight domains. Comparison metho ds. Given the absence of token pruning metho ds specif- ically designed for the omnimo dal setting, we follow OmniZip and select rep- resen tative prior metho ds from single-mo dal domains for adaptation: F astV [2] p erforms training-free inference-time pruning by utilizing the atten tion score matrix of the L -th lay er; DyCok e [30] applies its TTM mo dule to b oth video and audio tokens; Random pruning serves as a con trol group. W e also compare directly with OmniZip [31]. F or fair comparison, we repro duce results for Om- niZip and DASH, while results for Random, F astV, and DyCoke are tak en from OmniZip [31]. Implemen tation details. W e implement DASH on Qw en2.5-Omni (7B and 3B) mo dels [34] using NVIDIA H20 (96GB) GPUs. W e use the ov erall FLOPs ratio as the metric to ensure fair comparison across metho ds. F or video input, w e cap the maxim um num b er of frames at 768 for VideoMME and 128 for other datasets. Eac h time windo w contains 50 audio tok ens and 288 video tok ens. F or D ASH-sp ecific h yp erparameters, we set τ a = 0 . 4 , C min = 30 , tri-signal w eights w b = 0 . 4 , w u = 0 . 3 , w a = 0 . 3 , and c hannel selection ratio 0 . 5 . The rep orted reten tion ratios (e.g., 25%, 35%) represen t target compression lev els; in practice, actual p er-sample retention may deviate from these targets due to v ariations in dataset characteristics and individual sample prop erties (e.g., audio- video token distribution, conten t complexity). F or all exp erimen ts, we leverage FlashA ttention to reduce memory usage. D ASH 11 T able 1: Comparison of different metho ds on omnimo dal (audio & video) QA b enc hmarks. Norm. A vg. is the mean of p er-benchmark normalized scores, where the baseline accuracy is 100%. “-” indicates OOM error. † F astV Norm. A vg. is computed from A VUT only . Best result among tok en pruning metho ds is in b old , second b est is underlined. Method Retained FLOPs A VUT VideoMME Norm. Ratio Ratio EL OR OM IE CC CM A vg. wo A vg. Qw en2.5-Omni-7B F ull T okens 100% 100% 38.2 67.8 59.6 85.6 44.1 66.7 64.5 66.0 100% Random 40% 34% 31.7 58.5 53.3 74.9 43.2 59.0 56.9 65.0 93.4% F astV 35% 42% 24.1 60.7 54.3 81.6 40.7 58.3 57.8 - 89.6% † DyCoke (V&A) 35% 29% 32.9 62.1 54.9 74.5 39.0 58.3 57.4 65.2 93.9% OmniZip 35% 29% 33.3 67.2 54.6 84.7 38.4 61.4 60.6 66.0 97.0% DASH (ours) 35% 29% 32.0 62.1 58.9 85.5 40.4 64.2 61.5 66.7 98.2% DASH (ours) 25% 20% 36.0 64.7 56.0 86.3 36.5 60.8 60.9 66.0 97.2% Qw en2.5-Omni-3B F ull T okens 100% 100% 32.9 65.3 58.4 85.0 44.1 62.6 62.2 62.6 100% Random 40% 31% 28.2 60.8 54.9 73.1 42.3 61.6 57.5 60.6 94.6% F astV 35% 37% 24.2 60.8 54.3 81.6 40.7 58.3 57.7 - 92.8% † DyCoke (V&A) 35% 26% 32.9 62.1 54.9 74.5 38.9 58.3 57.4 61.0 94.9% OmniZip 35% 26% 28.2 58.6 57.8 80.9 41.5 62.1 58.7 61.9 96.6% DASH (ours) 35% 26% 32.9 61.7 55.6 83.4 42.4 60.0 59.9 62.6 98.2% DASH (ours) 25% 18% 29.0 65.0 59.5 81.1 39.1 58.4 58.8 61.7 96.6% 4.2 Main Results W e ev aluate on Qwen2.5-Omni at tw o parameter scales (7B and 3B). F ollo wing OmniZip, w e rep ort baselines at 35% tok en reten tion. DASH is ev aluated at b oth 35% and 25% reten tion to demonstrate its abilit y to main tain accuracy under more aggressiv e compression. F or VideoMME, w e use LMMs-Ev al [41] for ev aluation. F ollowing OmniZip, results in T ab. 1 are normalized with the baseline mo del’s accuracy set to 100%. Comparison with state-of-the-art methods. T ab. 1 compares D ASH with existing metho ds. The k ey finding is that structure-aw are compression en- ables stable performance at substantially low er tok en reten tion : DASH at 25% reten tion ac hieves 60.9% A VUT a verage on the 7B mo del, competi- tiv e with OmniZip at 35% (60.6%), despite using only 20% FLOPs. This 10- p ercen tage-p oin t reduction in tok en retention with maintained accuracy directly v alidates our core claim that seman tic structure, not p ositional regularit y , should guide compression decisions. On VideoMME, DASH at 25% matc hes the full- tok en baseline (66.0%), confirming that con tent-a w are c hunking preserves infor- mation that fixed-size grouping destro ys. Metho ds ignoring cross-modal structure (Random, F astV) degrade signifi- can tly , confirming that temporal window structure is critical for audio-video understanding. The Norm. A vg. column highligh ts the ov erall adv antage: DASH at 35% achiev es 98.2% on both mo del scales, surpassing OmniZip (97.0% on 12 B. Li and T. Huang T able 2: Comparison of different metho ds on the W orldSense benchmark. FLOPs calculation considers only multimodal tokens from audio and video inputs. Best result a mong tok en pruning metho ds is in b old , second b est is underlined. Method Retained FLOPs T ec h & Culture & Daily Film & Perfor- Games Sports Music A vg. Ratio (T) Science P olitics Life TV mance Qwen2.5-Omni-7B F ull T okens 100% 73.2 52.4 50.1 48.5 44.6 43.8 41.6 41.6 47.3 46.8 Random 55% 35.5 47.1 47.0 44.4 41.2 40.0 40.1 40.1 46.3 43.6 F astV 50% 39.3 48.8 47.4 44.2 44.1 41.2 38.3 40.0 46.6 44.3 DyCoke (V&A) 50% 31.9 48.4 49.9 46.7 41.4 39.9 40.8 40.2 46.5 44.6 OmniZip 35% 21.4 48.8 49.5 47.1 40.6 40.4 40.8 40.7 45.6 44.7 DASH (ours) 25% 14.9 50.0 49.2 47.0 39.3 41.6 39.9 41.6 45.8 44.9 Qwen2.5-Omni-3B F ull T okens 100% 37.4 51.5 50.8 45.0 45.4 43.8 42.5 44.2 46.1 46.4 Random 55% 17.0 48.2 46.3 40.7 41.4 38.6 40.0 41.8 43.4 42.8 F astV 50% 18.2 50.0 50.5 44.1 43.0 40.5 41.6 41.8 42.1 44.4 DyCoke (V&A) 50% 15.1 48.1 48.5 42.3 43.3 39.7 43.4 42.1 43.0 44.0 OmniZip 35% 9.9 48.4 49.2 41.6 44.3 41.2 40.8 43.3 43.6 44.1 DASH (ours) 25% 6.7 50.2 47.9 44.7 43.3 41.2 42.5 40.9 43.6 44.6 7B, 96.6% on 3B). The consistency across scales indicates architecture-general impro vemen ts rather than mo del-specific tuning artifacts. T ab. 2 presen ts per-domain results on W orldSense. D ASH at 25% reten- tion achiev es 44.9% av erage accuracy on the 7B mo del, matching or surpassing OmniZip’s 44.7% at 35% retention while consuming only 14.9T FLOPs v ersus 21.4T—a 30% computational saving with impro v ed accuracy . On the 3B model, D ASH (44.6%) lik ewise outp erforms OmniZip (44.1%) with 32% fewer FLOPs. A cross b oth scales, DASH leads in domains suc h as T ec h & Science and Sp orts, while remaining comp etitiv e in others, suggesting that dynamic semantic ch unk- ing generalizes w ell to diverse audio-video con tent t ypes. Fig. 3: A ccuracy vs. retention ratio on W orldSense (Qwen2.5-Omni-7B). In Fig. 3, w e further illustrate the accuracy-reten tion trade-off of differ- en t methods across retention ratios from 15% to 60%. D ASH main tains a clear adv an tage at low-to-mid re- ten tion, with its 25% p oin t (44.9%) already exceeding OmniZip at 35% (44.7%) and DyCok e at 45% (44.6%). This result is particularly significant: D ASH achiev es with 25% tokens what prior methods require 35– 45% tokens to accomplish . As re- ten tion increases, all metho ds conv erge to ward the F ull T okens ceiling, indicat- ing that the benefit of con ten t-aw are compression is most pronounced under aggressiv e pruning—precisely the regime where efficiency gains matter most for practical deplo yment. D ASH 13 T able 3: Inference efficiency on W orldSense. (a) Qwen2.5-Omni-7B Method Mem. ↓ Prefill ↓ Acc. ↑ Latency ↓ F ull 35G 1.0 × 46.8 1.0 × F astV OOM – – – DyCoke 31G 1.6 × 44.6 1.2 × OmniZip 25G 3.4 × 44.7 1.4 × Ours 26G 3.5 × 44.9 1.7 × (b) Qwen2.5-Omni-3B Method Mem. ↓ Prefill ↓ Acc. ↑ Latency ↓ F ull 25G 1.0 × 46.4 1.0 × F astV 45G 1.2 × 44.4 1.1 × DyCoke 20G 1.5 × 44.0 1.2 × OmniZip 16G 3.3 × 44.1 1.3 × Ours 16G 3.8 × 44.6 1.4 × 4.3 Efficiency Analysis T ab. 3 rep orts inference efficiency on W orldSense. A t 25% reten tion, DASH ac hieves 3.5 × prefill sp eedup on the 7B mo del and 3.8 × on the 3B mo del, sur- passing OmniZip at 35% (3.4 × and 3.3 × ) on b oth scales. End-to-end latency is also reduced to 1.7 × (7B) and 1.4 × (3B), while maintaining comp etitiv e ac- curacy . These sp eedups translate directly to impro ved user experience: a 60- second video that previously required 10 seconds of prefill no w completes in under 3 seconds. Critically , the ov erhead of boundary detection and tri-signal scoring is negligible ( < 40ms), as these operations in volv e only cosine similar- it y and element-wise computations on the already-extracted tok en embeddings. This confirms that structure-a ware compression adds minimal computational cost while deliv ering substantial efficiency gains—a fav orable trade-off for prac- tical deplo yment. 4.4 Ablation Studies All ablation exp erimen ts are conducted on Qwen2.5-Omni-3B at 25% retention ratio on the W orldSense b enc hmark. Comp onen t ablation and sensitivity analysis. T ab. 4 progressively adds eac h DASH comp onent. Starting from the OmniZip baseline with static grouping and attention-only selection, we first add tri-signal fusion alone (Static+TSF), then dynamic ch unking with atten tion-only selection (DSC+ADVS), and finally the full DASH combining all three comp onen ts. Fig. 4 shows the sensitivit y of w b . F rom T ab. 4, b oth Static+TSF and DSC+ADVS indep endently improv e o ver OmniZip at 35% (44.1% → 44.4%), confirming that tri-signal fusion and dynamic c hunking pro vide complementary gains of comparable magnitude; com bining them in F ull DASH further lifts accuracy to 44.6%. This additiv e improv emen t pattern v alidates our design principle: structure-a w are segmentation and m ulti- signal imp ortance estimation address orthogonal limitations of prior w ork. Boundary detection algorithm comparison. W e ev aluate four similarity metrics for audio boundary detection (T ab. 5). Cosine similarity ac hieves the highest accuracy (44.6%). The p erformance gap b et ween cosine and dot pro duct (43.6%) confirms the imp ortance of scale inv ariance in handling feature magni- tude v ariations across audio segments. Change rate (44.0%) and random baseline 14 B. Li and T. Huang Configuration A cc. (%) OmniZip (35% ret.) 44.1 Static + TSF 44.4 DSC+AD VS (attn-only) 44.4 F ull D ASH 44.6 T able 4: Ablation on Qw en2.5- Omni-3B (W orldSense, 25% ret.). OmniZip at 35% retention is the reference baseline. w b w b Fig. 4: Sensitivit y of w b on W orldSense (3B, 25% retention). Similarity Metho d Acc. ( %) Random 43.8 Dot Pro duct 43.6 Change Rate 44.0 Cosine 44.6 T able 5: Boundary detec- tion algorithm comparison on W orldSense (3B, 25% ret.). (43.8%) p erform significantly worse, v alidat- ing that semantic-a w are b oundary detection is crucial for effective c hunking. The consistent p erformance of cosine similarity o ver random selection demonstrates that our boundary de- tection mo dule successfully captures mean- ingful seman tic transitions in audio-visual se- quences. F rom Fig. 4, p erformance is stable for w b ∈ [0 . 3 , 0 . 5] , p eaking at w b =0 . 4 , and degrades for w b > 0 . 5 . The degradation b ey ond 0.5 suggests that o ver-emphasizing b ound- ary probability at the exp ense of conten t distinctiveness and attention leads to sub optimal selection, v alidating our default weigh ts. 5 Conclusion W e presented DASH, a training-free framework for structure-aw are token com- pression in omnimo dal large language mo dels. Rather than treating multimodal tok ens as uniformly structured sequences, DASH aligns compression with the in- trinsic semantic organization of audio-visual signals. By using audio embeddings as a seman tic anchor, DASH detects boundary candidates that approximate the piecewise structure of multimodal sequences and propagates this structure across mo dalities to guide token reten tion. This design preserves transition-critical in- formation while reducing redundan t regions, enabling aggressiv e compression without disrupting cross-mo dal coherence. Exp erimen ts on A VUT, VideoMME, and W orldSense show that DASH maintains comp etitive accuracy at substan- tially lo wer tok en reten tion while significan tly improving inference efficiency . These results highlight the imp ortance of aligning compression with semantic structure for efficien t omnimo dal reasoning. D ASH 15 References 1. Boly a, D., F u, C.Y., Dai, X., Zhang, P ., F eic htenhofer, C., Hoffman, J.: T oken merging: Y our vit but faster. In: ICLR (2023) 2. Chen, L., Zhao, H., Liu, T., Bai, S., Lin, J., Zhou, C., Chang, B.: An image is w orth 1/2 tokens after lay er 2: Plug-and-play inference acceleration for large vision- language mo dels. In: ECCV (2024) 3. Chen, X., T ao, K., Shao, K., W ang, H.: Streamingtom: Streaming token compres- sion for efficien t video understanding. arXiv preprint arXiv:2510.18269 (2025) 4. Chen, Z., W u, J., W ang, W., et al.: Intern vl: Scaling up vision foundation mo dels and aligning for generic visual-linguistic tasks. In: CVPR (2024) 5. Du, M., Ding, S., Jia, H.: Study on density p eaks clustering based on k-nearest neigh b ors and principal comp onen t analysis. Knowledge-Based Systems 99 , 135– 145 (2016) 6. F u, C., Dai, Y., Luo, Y., et al.: Video-mme: The first-ever comprehensive ev aluation b enc hmark of multi-modal llms in video analysis. In: CVPR (2025) 7. F u, C., Lin, H., Long, Z., et al.: Vita: T o wards op en-source in teractive omni mul- timo dal llm. arXiv preprint arXiv:2408.05211 (2024) 8. Gün ther, M., Selvi, J.T., et al.: Late ch unking: Con textual ch unk embeddings using long-con text em b edding mo dels. arXiv preprint arXiv:2409.04701 (2024) 9. Hong, J., et al.: W orldsense: Ev aluating real-world omnimodal understanding for m ultimo dal llms. arXiv preprin t arXiv:2502.04326 (2025) 10. Huang, X., Zhou, H., Han, K.: Prunevid: Visual token pruning for efficient video large language mo dels. In: Findings of the Asso ciation for Computational Linguis- tics: A CL (2025) 11. Hw ang, S., W ang, B., Gu, A.: Dynamic ch unking for end-to-end hierarchical se- quence modeling. arXiv preprint arXiv:2507.07955 (2025) 12. Li, K., et al.: Video c hat: Chat-cen tric video understanding. In: ICCV (2023) 13. Li, W., Y uan, Y., Liu, J., et al.: T ok enpack er: Efficien t visual pro jector for multi- mo dal llm. In ternational Journal of Computer Vision (IJCV) 133 (10), 6794–6812 (2025) 14. Li, Y., et al.: Accelerating transducers through adjacen t tok en merging. In: In ter- sp eec h (2023) 15. Lin, B., Y e, Y., Zh u, B., et al.: Video-lla v a: Learning united visual represen tation b y alignmen t before pro jection. In: EMNLP (2024) 16. Lin, W., Rob erts, J., Albanie, S., et al.: Sp eec hprune: Context-a w are token pruning for sp eech information retriev al. In: ICME (2025) 17. Liu, H., Li, C., W u, Q., Lee, Y.J.: Visual instruction tuning. In: NeurIPS (2023) 18. Liu, X., W ang, Y., Ma, J., Zhang, L.: Video compression commander: Plug-and- pla y inference acceleration for video large language mo dels. In: Pro ceedings of the 2025 Conference on Empirical Metho ds in Natural Language Pro cessing (EMNLP). pp. 1910– 1924 (2025) 19. Op enAI: Gpt-4 tec hnical report. arXiv preprint arXiv:2303.08774 (2023) 20. Rao, A., Xu, L., Xiong, Y., Xu, G., Huang, Q., Zhou, B., Lin, D.: A large-scale, div erse dataset for shot type classification. In: A CM MM (2020) 21. Rao, A., Xu, L., Xiong, Y., Xu, G., Huang, Q., Zhou, B., Lin, D.: A lo cal-to-global approac h to multi-modal mo vie scene segmentation. In: CVPR (2020) 22. Shang, Y., et al.: Llav a-prumerge: Adaptiv e token reduction for efficient large mul- timo dal mo dels. In: ICCV (2025) 16 B. Li and T. Huang 23. Shao, K., T ao, K., Qin, C., et al.: Holitom: Holistic token merging for fast video large language mo dels. arXiv preprin t arXiv:2505.21334 (2025) 24. Shao, K., T ao, K., Zhang, K., et al.: When tokens talk to o muc h: A survey of mul- timo dal long-con text token compression across images, videos, and audios. arXiv preprin t arXiv:2507.20198 (2025) 25. Shen, L., Gong, G., et al.: F astvid: Dynamic density pruning for fast video large language mo dels. In: NeurIPS (2025) 26. Shen, W., e t al.: Longvu: Spatiotemp oral adaptiv e compression for long video- language und erstanding. In: ICML (2025) 27. Sh u, F., Zhang, L., Jiang, H., Xie, C.: Audio-visual llm for video understanding. In: ICCV (2025) 28. Simon, C., Ishii, M., W ang, W.Y., et al.: Echoes o ver time: Unlocking length gen- eralization in video-to-audio generation mo dels. arXiv preprint (2026) 29. T ang, C., Li, Y., Y ang, Y., et al.: Video-salmonn 2: Captioning-enhanced audio- visual larg e language mo dels. arXiv preprint arXiv:2506.15220 (2025) 30. T ao, K., Qin, C., Y ou, H., Sui, Y., W ang, H.: Dycoke: Dynamic compression of tok ens for fast video large language mo dels. In: CVPR (2025) 31. T ao, K., Shao, K., Y u, B., W ang, W., Liu, J., W ang, H.: OmniZip: Audio-guided dy- namic token compression for fast omnimo dal large language mo dels. arXiv preprint arXiv:2511.14582 (2025) 32. T eam, G., et al.: Gemini: a family of highly capable multimodal mo dels. arXiv preprin t arXiv:2312.11805 (2023) 33. Xing, L., Huang, Q., Dong, X., et al.: Pyramiddrop: Accelerating your large vision- language mo dels via pyramid visual redundancy reduction. In: CVPR (2025) 34. Xu, J., Guo, Z., He, J., et al.: Qwen2.5-omni tec hnical report. arXiv preprint arXiv:2503.20215 (2025) 35. Y ang, S., Chen, Y., et al.: Visionzip: Longer is better but not necessary in vision language mo dels. In: CVPR (2025) 36. Y ang, Y., Zhuang, J., Sun, G., et al.: Audio-centric video understanding b enc hmark without te xt shortcut. In: EMNLP (2025) 37. Y e, W., W u, Q., Lin, W., Zhou, Y.: Fit and prune: F ast and training-free visual tok en pruning for multi-modal large language mo dels. In: Pro ceedings of the AAAI Conference on Artificial Intelligence. vol. 39, pp. 22128–22136 (2025) 38. Y u, L., Simig, D., Flaherty , C., et al.: Megabyte: Predicting million-byte sequences with m ultiscale transformers. arXiv preprint arXiv:2305.07185 (2023) 39. Zhang, C., W u, J., Li, Y.: Actionformer: Lo calizing moments of actions with trans- formers. In: ECCV (2022) 40. Zhang, H., Li, X., Bing, L.: Video-llama: An instruction-tuned audio-visual lan- guage mo del for video understanding. In: EMNLP (2023) 41. Zhang, K., L i, B., Zhang, P ., et al.: Lmms-ev al: Reality chec k on the ev aluation of large m ultimo dal models. In: Findings of the Asso ciation for Computational Linguistics: NAA CL (2025) 42. Zhang, Y., et al.: Llav a-video: Video instruction tuning with synthetic data. arXiv preprin t arXiv:2409.20215 (2024) D ASH 17 A Qualitativ e Analysis 0 20 40 60 80 Audio T oken Index (segment [0:100]) 0.0 0.1 0.2 0.3 0.4 0.5 p a u d i o b y p a u d i o b o u n d . Thr .=0.30 Boundaries (2) (a) p a u d i o b F1 F2 F4 F5 F7 (b) p a u d i o b 0 100 200 300 400 500 V ideo T oken Index Dynamic (Ours) Fixed 288 288 172 231 173 2 s e g , µ = 2 8 8 , σ = 0 3 s e g , µ = 1 9 2 , σ = 2 8 (c) Fig. 5: Boundary detection visualization. (a) Boundary probability p boundary t o ver audio tokens (blue curv e), with threshold τ a (gra y dashed) and detected b oundaries (red dots). P eaks exceeding the threshold correspond to semantic transitions in the sp eec h stream. (b) Video frames at b oundary lo cations: blue-bordered frames precede and red-bordered frames follo w a detected transition, confirming cross-modal align- men t betw een audio boundaries and visual scene c hanges. (c) Fixed grouping (2 equal segmen ts, σ =0 ) vs. dynamic ch unking (ours, 3 v ariable-length segments, σ =28 ), where σ denotes the standard deviation of segmen t lengths in tokens. Our metho d adapts segmen t gran ularity to con tent structure rather than imposing uniform partitions. Boundary detection visualization. Fig. 5 visualizes audio-driven dynamic segmen tation on a representativ e segmen t. (a) plots the raw b oundary proba- bilit y p boundary t o ver audio tokens; detected b oundaries (red dots) corresp ond to p ositions where the probability exceeds the threshold (gray dashed line), indicat- ing sp eec h pauses or topic shifts. (b) displays the video frames surrounding each b oundary , where blue-b ordered frames precede and red-b ordered frames follow a detected transition, confirming that audio-detected boundaries align with visual scene changes. (c) con trasts fixed-size grouping (2 equal segments, σ =0 ) with our dynamic ch unking (3 v ariable-length segments, σ =28 ): our metho d introduces an additional segment b oundary at a semantically meaningful p osition, adapting gran ularity to con tent structure rather than imposing uniform partitions. T ok en imp ortance heatmap. Fig. 2 visualizes the atten tion sparsity discussed in the introduction on the first 200 audio tokens. (a) sho ws that attention scores are dominated by near-zero v alues with isolated peaks—a hea vy-tailed distribu- tion that makes atten tion-only selection brittle under aggressive compression. (b) shows tri-signal fusion produces a smo other imp ortance landscap e by fill- 18 B. Li and T. Huang ing gaps atten tion alone misses, rescuing structurally critical tokens at seman tic b oundaries. (c) quan tifies the impact: among top-50% retained tokens, 631 are rescued b y fusion, 866 are shared, and 631 attention-only tok ens are replaced— a 42% turnov er confirming that fusion fundamentally reshap es selection. This high turno ver rate explains why DASH main tains accuracy at 25% reten tion where attention-only metho ds degrade: the rescued tokens are precisely those that preserv e narrative con tinuit y and cross-modal coherence. These qualitativ e results corrob orate the quan titative findings in our experi- men ts: fusion rescues structurally critical tokens that sparse attention w ould dis- card, with the gap widening under aggressiv e compression. T ogether, Fig. 5 and Fig. 2 provide visual evidence for the tw o core innov ations of DASH—dynamic seman tic c hunking adapts to con tent structure, and tri-signal fusion captures imp ortance dimensions that atten tion alone misses. B Algorithm Ov erview Algorithm 1 presents the complete D ASH pip eline. Giv en audio tokens A ∈ R N a × D , video tokens V ∈ R N v × D (with N v = F × K , where F is the n um- b er of frames and K is the n umber of tok ens per frame), and audio atten tion logits, DASH pro ceeds in four stages: (1) audio b oundary detection via cosine similarit y (Sec. 3.2), (2) cross-mo dal b oundary pro jection with strength-based refinemen t (Sec. 3.3), (3) tri-signal fusion for audio token selection (Sec. 3.4), and (4) boundary-aw are adaptive video compression (Sec. 3.5). All op erations are training-free and require only elemen t-wise or pairwise computations on the already-extracted enco der em b eddings. C Implemen tation Details Hyp erparameter summary . T able 6 lists all D ASH-specific h yp erparameters used across exp erimen ts. These v alues are fixed for all b enc hmarks and b oth mo del scales (7B and 3B) unless otherwise noted. Time-windo w structure. F ollo wing OmniZip [31], the Qwen2.5-Omni mo del organizes multimodal inputs into time windo ws. Each window con tains 50 au- dio tok ens and 288 video tok ens. DASH op erates indep enden tly on eac h time windo w, detecting boundaries and performing compression within the window’s audio and video tok en sequences. Ev aluation proto col. W e ev aluate on three benchmarks: – A VUT [36]: An audio-cen tric video understanding b enc hmark with six subtasks— ev ent lo calization (EL), ob ject matc hing (OM), OCR matc hing (OR), in- formation extraction (IE), conten t counting (CC), and c haracter matching (CM). W e rep ort per-task accuracy and the ov erall av erage. – VideoMME [6]: A general video understanding b enc hmark ev aluated using LMMs-Ev al [41]. W e rep ort the “without subtitle” setting to fo cus on audio- visual reasoning. The maxim um num b er of input frames is capped at 768. D ASH 19 Algorithm 1 DASH: Dynamic Audio-Driv en Semantic Ch unking Require: Audio tok ens A = { a t } N a t =1 , video tokens V = { v t } N v t =1 , atten tion logits attn , audio compression ratio ρ a , video compression ratio ρ v , threshold τ a , minim um c hunk size C min , tok ens p er frame K Ensure: Retained audio mask m a , re tained video mask m v 1: // Stage 1: Dynamic Semantic Ch unking (Sec. 3.2) 2: Compute cosine similarit y: sim t ← ⟨ a t − 1 , a t ⟩ ∥ a t − 1 ∥ ∥ a t ∥ for t = 2 , . . . , N a 3: Compute b oundary probabilit y: p bnd t ← clip ((1 − sim t ) / 2 , 0 , 1) 4: B a ← { 0 } ; t last ← 0 5: for t = 2 to N a do 6: if sim t < τ a and t − t last ≥ C min then 7: B a ← B a ∪ { t } ; t last ← t 8: end if 9: end for 10: B a ← B a ∪ { N a } { App end end p osition} 11: // Stage 2: Audio-Driven Visual Segmen tation (Sec. 3.3) 12: Pro ject: b v i ← ⌊ b a i · N v / N a ⌋ for eac h b a i ∈ B a 13: Sort inner b oundaries { b v i } |B v |− 2 i =1 b y strength p bnd b a i in descending order 14: B ∗ v ← { 0 , N v } 15: for each inner boundary b v i in strength order do 16: if ( b v i − b left ) ≥ 2 K and ( b right − b v i ) ≥ 2 K then 17: Insert b v i in to B ∗ v 18: end if 19: end for 20: // Stage 3: T ri-Signal F usion T oken Scoring (Sec. 3.4) 21: s bnd t ← p bnd t / (max j p bnd j + ϵ ) {Boundary signal} 22: Select low-v ariance channels: ˜ A ← ChannelSelect ( A , 0 . 5) 23: ˆ A ← ℓ 2 -normalize ( ˜ A ) ; c ← mean ( ˆ A ) 24: g t ← P α ∈A exp( −∥ ˆ a t − c ∥ 2 / 2 α ) {Multi-scale Gaussian} 25: s uniq t ← 1 − g t / (max j g j + ϵ ) {Uniqueness signal} 26: s attn t ← attn t / (max j attn j + ϵ ) {A tten tion signal} 27: s t ← 0 . 4 · s bnd t + 0 . 3 · s uniq t + 0 . 3 · s attn t 28: m a ← top- ⌊ (1 − ρ a ) · N a ⌋ to kens by s t 29: // Stage 4: Boundary-A ware Video Compression (Sec. 3.5) 30: for each video segmen t s defined b y B ∗ v do 31: Compute audio retention ¯ m ( s ) a for th e corresp onding audio segmen t 32: ρ ( s ) v ← clip ( ρ v + 0 . 1 · (0 . 5 − ¯ m ( s ) a ) , 0 . 1 , 0 . 95) 33: for eac h frame f in segment s do 34: r f ← 1 − ρ ( s ) v {Base frame reten tion} 35: if frame f is at a b oundary p osition then 36: r f ← r f + (1 − r f ) · 0 . 3 · p bnd f {Boundary protec tion} 37: end if 38: Apply in terleav ed spatial-temporal pruning with reten tion r f 39: end for 40: end for 41: return m a , m v 20 B. Li and T. Huang T able 6: D ASH h yp erparameters. All v alues are fixed across benchmarks and mo del scales. Sym b ol V alue Eq. Description τ a 0.4 (3) Cosine similarity threshold for b oundary detection C min 30 (3) Minim um c hunk size ( ≈ 1 s of audio) 2 K 2 × K (5) Minimum video segmen t size for ISTC w b 0.4 (10) Boundary probabilit y w eight in tri-signal fusion w u 0.3 (10) Uniqueness w eight in tri-signal fusion w a 0.3 (10) Atten tion w eight in tri-signal fusion A { 2 k } 1 k = − 3 (7) Multi-scale Gaussian bandwidth set Channel ratio 0.5 – F raction of low-v ariance c hannels retained λ r 0.1 (11) Adaptation strength for video compression ratio [ ρ min v , ρ max v ] [0 . 1 , 0 . 95] (11) Clamping range for adaptiv e video compression – W orldSense [9]: A b enc hmark for joint audio-video understanding across eigh t domains (T ech & Science, C ulture & Politics, Daily Life, Film & TV, P erformance, Games, Sp orts, Music). The maxim um num b er of input frames is capp ed at 128. Baseline repro duction. F or fair comparison, we repro duce results for OmniZip and DASH under iden tical settings (same mo del c heckpoints, input preprocess- ing, and ev aluation scripts). Results for Random, F astV [2], and DyCoke [30] are tak en directly from OmniZip [31] as rep orted in their pap er.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment