Argumentation for Explainable and Globally Contestable Decision Support with LLMs

Large language models (LLMs) exhibit strong general capabilities, but their deployment in high-stakes domains is hindered by their opacity and unpredictability. Recent work has taken meaningful steps towards addressing these issues by augmenting LLMs…

Authors: Adam Dejl, Matthew Williams, Francesca Toni

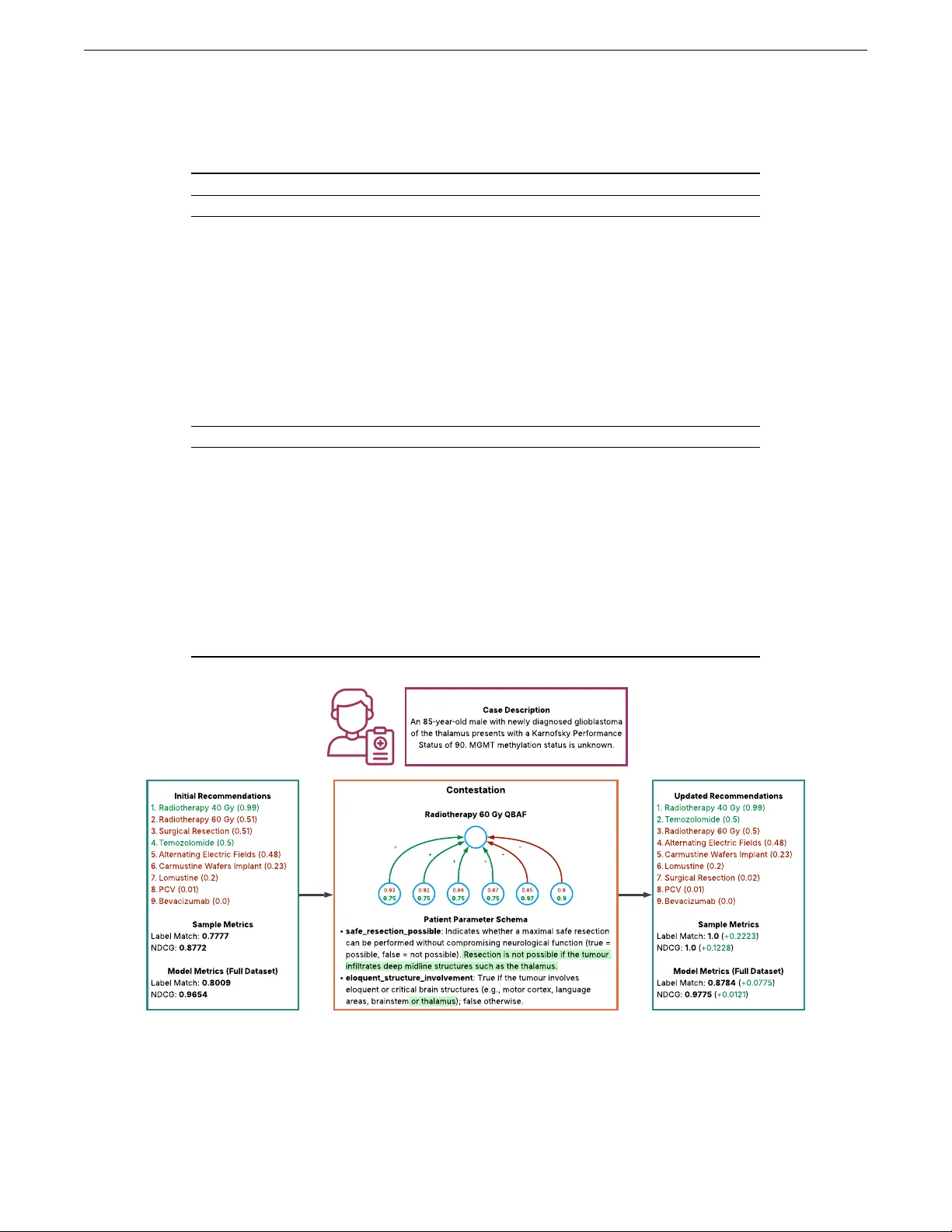

Argumentation f or Explainable and Globally Contestable Decision Support with LLMs Adam Dejl 1 , Matthew W illiams 2 and Francesca T oni 1 1 Department of Computing, Imperial College London 2 Department of Surgery & Cancer , Imperial College London {adam.dejl18, matthew .williams, ft}@imperial.ac.uk Abstract Large language models (LLMs) exhibit strong general capabilities, b ut their deployment in high-stak es domains is hindered by their opacity and unpredictability . Re- cent work has taken meaningful steps to wards address- ing these issues by augmenting LLMs with post-hoc rea- soning based on computational argumentation, providing faithful explanations and enabling users to contest incor- rect decisions. Howe ver , this paradigm is limited to pre- defined binary choices and only supports local contes- tation for specific instances, lea ving the underlying de- cision logic unchanged and prone to repeated mistakes. In this paper, we introduce ArgEval, a framework that shifts from instance-specific reasoning to structured ev al- uation of general decision options. Rather than mining arguments solely for individual cases, ArgEv al system- atically maps task-specific decision spaces, builds cor- responding option ontologies, and constructs general ar- gumentation framew orks (AFs) for each option. These framew orks can then be instantiated to provide explain- able recommendations for specific cases while still sup- porting global contestability through modification of the shared AFs. W e in vestigate the effecti veness of ArgEv al on treatment recommendation for glioblastoma, an ag- gressiv e brain tumour, and show that it can produce ex- plainable guidance aligned with clinical practice. 1 Introduction T rained on large corpora of general textual data as well as spe- cific problem-solving tasks, Large Language Models (LLMs) have demonstrated strong performance in a variety of settings (Bubeck et al., 2023; V an V een et al., 2024; Luo et al., 2025; McDuf f et al., 2025; Bermejo et al., 2025). Howe ver , due to their reliance on stochastic next-tok en prediction, LLMs can also be notoriously un- reliable, as e videnced by issues such as hallucinations (Huang et al., 2025) and omissions of critical information (Busch et al., 2025). These issues pose a substantial obstacle to safely using LLMs in high-stakes domains. Importantly , the inherent opacity of LLMs makes it impossible to faithfully explain their outputs or to reliably redress issues. While methods such as chain-of-thought (W ei et al., 2022) or verbalised explanations can provide some de gree of ratio- nalization, they hav e been found to be unfaithful, i.e., inaccurate in describing the true internal reasoning of models (Chen et al., 2025). 1 Icons from Noun Project (Patient by Alzam and Data by Anna Riana, CC BY 3.0). 2 Icons from Noun Project (Policy by Puspito, Decision by Kamin Ginkaew , report analysis by kartini 1, Patient by Alzam and Data by Anna Riana, CC BY 3.0). Cas e Des c r ip t io n An 85 ? ye ar? ol d m ale w it h n ew ly d iag no sed gli ob last oma o f th e th alam us p res ent s w ith a Kar nof sk y Per f or manc e St atu s of 9 0. M G M T m et hyl atio n s tat us is unk now n . Cas e Para me t er s { age : 85 , g lio ma_ w ho _ g ra de: 4 , k ps: 90 , elo qu ent _ st ruc t ure_inv olv eme nt: tr ue, saf e_rese ct ion _ p oss ibl e: f alse , ...} R o ot A rg u me nt Sc or e 0 .5 : The tre atm ent ' Surg ic al Tumo r R e sec ti on' is re co mme nd ed as one of th e su ita ble tre atm ent op tio ns. Sc or e 0 .8 : Gro ss t ot al res ec tio n is asso ci ated w it h su per io r ov eral l sur v ival and p rog res sion - f ree sur v ival co mp ared to inc o mpl ete rese ct ion or bi ops y. Co nd i ti on : g lio ma_ w ho _ g rad e = 4 ? s afe _ res ec tio n_pos sib le ? 70 <= k ps <= 1 0 0 + Sc or e 0 .8 : Sur ge ry is t he s tan dard in iti al th erap eu tic ap pro ac h f or malig nan t g liom a, p rov idi ng tum or deb ul kin g an d t issu e f or diag no sis ( ...) Co nd i ti on : g lio ma_ w ho _ g rad e = 4 ? s afe _ res ec tio n_pos sib le + Sc or e 0 .8 8 : W hen mic ro sur gic al r ese ct ion is n ot safe ly fe asib le b ec ause of tu mo ur l oc ati on ( ...) b io psy sh oul d b e p erf or me d i nst ead of res ec tio n. Co nd i ti on : e loq uen t_ st ru ct ur e_ in vol vem ent ? kp s < = 49 - Sc or e 0 .7 : In el der ly pat ien ts ( > 65 - 70 y ear s), r and om ized tr ial s hav e no t sho w n a su rv iv al ad van tag e of s urg ic al re sec tio n o ver bio ps y ( ...) Co nd i ti on : g lio ma_ w ho _ g rad e = 4 ? ag e > = 65 - Pre di c t io n A ft e r In st an t iat i on Surg ic al re sec ti on n ot rec om men de d ( sco re 0 .0 2) Gen er al QBA F Figure 1. Illustration of ArgEval inference for one of the treat- ment options for glioblastoma 1 . Case Parameters extracted from the Case Description are used to instantiate the General QB AF associated with the giv en option ( Root Argument ), removing the dashed nodes whose conditions are not satisfied. The Prediction After Instantiation is obtained from the instantiated framework, which also serves as a faithful e xplanation. In this work, we introduce Ar gEval , a new framework for deci- sion support with LLMs that lev erages argumentati ve reasoning to- wards explainable predictions that can be ‘globally’ contested. Like existing approaches combining LLMs with argumentation (Freed- man et al., 2025; Zhou et al., 2025; Zhu et al., 2025; Gorur et al., 2025), ArgEv al uses an LLM for mining arguments for and against a particular decision as well as estimating numerical scores indicat- ing their intrinsic strength (also called base scor es ). The arguments and scores are then used to form a quantitative bipolar argumenta- tion fr amework (QBAF), enabling deterministic inference via grad- ual semantics (Baroni et al., 2019). Applying the semantics yields a set of final argument strengths, which indicate the degree of ac- ceptability of each argument after considering its interactions with the other arguments in the QB AF . The strength of the argument rep- 1 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs Nat ur al- Lang uag e Poli cy Doc u men ts Inp ut s Gene ral Task Proc e ssin g Case - Sp ec if ic Inf er enc e Co nte st atio n Dec is ion Onto lo gy Co nst ru c tio n Dec is ion Spac e Ont olo g y Gene ral QBAF Co nst ru c tio n Gene ral QBAFs (Per- Opt io n) + - AF In st ant iat ion E x pl ainab le R ec o mm end ati on s ? + Case Param ete r E x t rac ti on Case Param ete rs Key Us er Con te sta tio n Nat ur al- Lang uag e Case Desc r ip ti on Figure 2. Overvie w of the ArgEv al pipeline 2 . T op: giv en natural-language policy documents that specify general criteria for decision-making in a certain domain, ArgEval builds a decision-space ontology and constructs general QB AFs for each candidate decision in the ontology . Bottom: at inference time, the general QB AF is instantiated with the parameters of a specific case, providing faithfully explainable decision recommendations. Users can contest the decision-space ontology , the general QB AFs, the extracted case parameters and the general parameter schema specifying the properties to be extracted, in response to incorrect recommendations or e xplanations produced by the model. resenting the considered decision can then be used for making pre- dictions, with the QB AF acting as an inherently faithful explanation for these predictions. While sharing the use of QB AFs with prior works, ArgEval dif- fers in sev eral ways. Unlike ArgLLMs (Freedman et al., 2025; Zhou et al., 2025) and like ArgRA G (Zhu et al., 2025), ArgEval uses external sources to guide the mining of QBAFs. Howe ver , these sources are used to compile a decision-space ontology and general QBAFs , respecti vely representing the av ailable decision options and general knowledge concerning the decision-making problem of in- terest, differing from ArgRA G, which only uses them for making predictions relating to specific instances. Unlike both Ar gLLMs and Ar gRAG, ArgEval is not restricted to binary questions (claims) but is applicable to open-ended decisions, in the spirit of Evaluati ve AI (Miller, 2023). Uniquely , when making predictions for specific cases, ArgEv al instantiates the general QB AFs associated with each decision op- tion rather than constructing these QB AFs from scratch (see il- lustration in Figure 1). Similarly as for Ar gLLMs and ArgRA G, the resulting (instantiated) QBAFs can be used as faithful explana- tions. Users can inspect these QB AFs and contest them to correct any mistakes, either by changing their base scores or by adding additional arguments. Ho wever , Ar gEval shifts contestation from case-specific, local contestability , as supported by ArgLLMs and ArgRA G, to global contestability , potentially affecting other future cases. Since the arguments in the instantiated QBAF directly cor- respond to arguments in the general QB AF , any changes to those arguments will directly af fect predictions for any cases satisfying their applicability conditions. Apart from the QBAFs, users may also inspect and adjust the decision-space ontology , the extracted case parameters used for QBAF instantiation and the general pa- rameter schema specifying the properties to be extracted for each case. T o ev aluate the effecti veness of ArgEval, we apply it to the task of recommending suitable treatments for dif ferent cases of glioblas- toma based on rele vant clinical guidelines. W e show that variants of ArgEv al perform competitively against the reasoning LLM base- line and ArgLLMs-O, an adapted version of ArgLLMs using the extracted decision ontology for supporting open-ended decisions. Importantly , ArgEv al achieves this performance using a fraction of the computational costs associated with the other methods while providing a significant qualitative advantage in the form of global contestability . T o summarise, our key contrib utions are as follows: • W e propose ArgEv al (see Figure 2), a new framework for deci- sion support that provides faithful explainability and global con- testability through argumentation. • W e apply ArgEv al to treatment recommendation for glioblastoma brain tumours, showing that it exhibits competitiv e performance compared to other methods at a fraction of the inference costs. • W e demonstrate the benefits of global contestability in a case study where contestation on a single sample substantially im- prov es the downstream performance of the model. 2 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs T able 1. Qualitativ e comparison between ArgEv al and other meth- ods. ( ✓ ) in the third row signifies that local contestability for LLMs is reliant on their opaque mechanisms rather than being fully trans- parent. A higher number of E symbols denotes better speed. Property Method LLMs ArgLLMs ArgRA G ArgLLMs-O ArgEval Open-Ended Decisions ✓ ✗ ✓ ✓ Faithful Explainability ✗ ✓ ✓ ✓ Local Contestability ( ✓ ) ✓ ✓ ✓ Global Contestability ✗ ✗ ✗ ✓ Inference Speed EE E E EEE 2 Related W ork Argumentation and LLMs The use of argumentation in combi- nation with LLMs is advocated by several. Ar gumentative LLMs (ArgLLMs) (Freedman et al., 2025) have been recently proposed as a step to wards making LLMs more reliable and responsive to user-dri ven error corrections (i.e., contestations ), in the form of a paradigm combining general LLM kno wledge and capabilities with formal argumentativ e reasoning. W e adopt the same philosophy as ArgLLMs and use them as a baseline to compare against, but are not restricted to binary decisions (such as determining whether a certain claim is truthful or not) or local contestation. In a similar spirit, ArgRA G (Zhu et al., 2025) uses retriev al-augmented gener- ation (RAG) with external sources for generating QB AFs, making binary judgements about claim validity . Like ArgRA G, we inform our QB AF generation with external sources, but these are compiled into a structured ontology instead of being retrieved ad hoc as in ArgRA G. Moreover , similarly to ArgLLMs and unlike ArgRA G, we restrict the QBAF extraction to acyclic graphs. Both ArgLLMs and ArgRA G were originally proposed for the task of claim veri- fication, and hav e since been adapted to other use-cases, such as judgemental forecasting (Gorur et al., 2025). In addition to these approaches, extracting full QB AFs, some works use LLMs for mining attack and support relations between pieces of texts seen as arguments, as in Gorur et al. (2025), or ar- guments, their components and/or relations between them, as in Cabessa et al. (2025). Argumentation Schemes and Ontologies Our general QBAFs, such as those used in the glioblastoma treatment recommendation experiment, can be informed by (e xisting or new) argumentation schemes with critical questions (W alton et al., 2008), to be instan- tiated for specific cases of interest. This approach is inspired by A yoobi et al. (2025), which, ho wever , does not use LLMs or any ontology to instantiate the argument schemes. Our use of ontolo- gies for QB AF instantiation is related to Rago et al. (2025), which howe ver uses them in combination with te xt mining directly , rather than than to form general QB AFs. Contestable AI Contestability is increasingly advocated as im- portant in human-in-the-loop AI systems (L yons et al., 2021), with argumentation indicated as particularly suitable to support AI con- testability (Leofante et al., 2024; Dignum et al., 2025). Ho wev er, existing approaches to contestability via argumentation, notably Freedman et al. (2025); Y in et al. (2025), focus on local contestabil- ity , for single input-output pairs. De Angelis et al. (2025) propose a form of global contestability , where learnt argumentation-based models are contested, but these are structured argumentation frame- works rather than QB AFs. Faithfulness of Explanations While often proposed as a sim- ple way of explaining LLM reasoning, chain-of-thought (CoT) has been found to be unfaithful to the true reasoning process of models, failing to mention factors that af fect the outputs (Chen et al., 2025). Thus, CoT is insufficient for faithful interpretability (Barez et al., 2025). Some works, e.g. Atanasova et al. (2023), study the faith- fulness of various post-hoc e xplanation methods for LLMs’outputs, e.g. saliency maps and counterfactuals. Some other works, e.g. Siegel et al. (2024), propose faithfulness metrics for explanations generated as reasoning traces by LLMs. ArgEv al, like ArgLLMs and Ar gRAG, instead opts for drawing explanations from gener- ated QB AFs, in the spirit of Cyras et al. (2021), which are faithful by construction. 3 Preliminaries A QBAF is a quadruple Q = A , R − , R + , τ where: • A is a finite set of ar guments ; • R − ⊆ A × A is a binary attack relation; • R + ⊆ A × A is a binary support relation; • R − ∩ R + = ∅ ; • τ : A → [0 , 1] is a base scor e function . Arguments in QBAFs may be ev aluated by gradual seman- tics (Baroni et al., 2019), total function σ : A → [0 , 1] which, for any α ∈ A , assigns a strength σ ( α ) to α . While τ ( α ) can be seen as the intrinsic strength for α , the strength σ ( α ) can be seen as ‘dialectical’, following the debate captured by R − and R + . In this paper we adopt the following restrictions on QB AFs. Each QB AF is a tree, with the root an argument representing a decision option (similarly to ArgLLMs, where the root is a claim). Each such tree has either depth 1 or depth 2 . At depth 1, the root is attacked and/or supported by a finite number of arguments; at depth 2, each of those ar guments can be attack ed and/or supported by any number of further arguments. Unlike ArgLLMs, which allo w exactly one attacker and one supporter for each argument, our QB AFs can ha ve arbitrary breadth. For the e xperiments we use the discontinuity-free quantitative ar gumentation debate (DF-QuAD) gradual semantics (Rago et al., 2016), which is defined as follows. For any α ∈ A with n ≥ 0 at- tackers with strengths v 1 , . . . , v n , m ≥ 0 supporters with strengths v ′ 1 , . . . , v ′ m and τ ( α ) = v 0 , σ ( α ) = C ( v 0 , F ( v 1 , . . . , v n ) , F ( v ′ 1 , . . . , v ′ m )) , where • for v a = F ( v 1 , . . . , v n ) and v s = F ( v ′ 1 , . . . , v ′ m ) : if v a = v s then C ( v 0 , v a , v s ) = v 0 ; else if v a > v s then C ( v 0 , v a , v s ) = v 0 − ( v 0 · | v s − v a | ) ; otherwise C ( v 0 , v a , v s ) = v 0 + ((1 − v 0 ) · | v s − v a | ) ; and • giv en n arguments with strengths v 1 , . . . , v n , if n = 0 then F ( v 1 , . . . , v n ) = 0 , otherwise F ( v 1 , . . . , v n ) = 1 − Q n i =1 ( | 1 − v i | ) . Ar gument schemes (W alton et al., 2008) are templates for debate composed of major/minor premises, conclusions and critical ques- tions about the minor premises’ validity . The schemes guide how arguments are built and the critical questions point to ways to attack them. 3 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs Algorithm 1 Decision Ontology Construction Input : A set of documents D and a set of text chunks T ′ d for each document d ∈ D Output : Constructed ontology O 1: Initialise E ← ∅ , T ← ∅ , H ← ∅ , S ← ∅ 2: for d ∈ D do 3: for t ∈ T ′ d do 4: ▷ Extract chunk entities and hier arc hy relations 5: ⟨E t , H t ⟩ ← mine_ontology ( E , H , t ) 6: E ← E ∪ E t 7: T ← T ∪ { t } 8: H ← H ∪ H t 9: S ← S ∪ { ( e, t ) | e ∈ E t } 10: end for 11: end for 12: O ← ⟨E , T , H , S ⟩ 13: retur n O 4 ArgEval The ArgEv al pipeline (illustrated in Figure 2) consists of two main stages: general task processing, which is responsible for mapping the task domain and identifying general rules for making decisions therein, and case-specific inference, which provides decision rec- ommendations for specific instances. W e describe the specifics of each stage below . 4.1 General T ask Processing Decision Ontology Construction The initial step of the general task processing automatically maps the decision space associated with the gi ven domain, constructing a structured ontology of possible decision options. As a source mate- rial for constructing the ontology , the pipeline relies on a corpus of natural-language policy documents D describing the general proce- dures for making decisions on the given task. For example, when aiming to provide decision support for choosing between different treatments for a certain medical condition, D may consist of the relev ant clinical guidelines. Each document d ∈ D is decomposed into a set of text chunks T d , split semantically according to the doc- ument sections. Optionally , these chunks can be filtered according to their relevance to the considered task using an LLM, yielding a filtered set T ′ d (or T ′ d = T d when filtering is not applied). The obtained chunks are then used to construct an ontology O = ⟨E , T , H , S ⟩ where E is a set of decision entities in the ontology , T is the associated set of source text chunks, H ⊆ E × E is a hierarchy relation linking specific v ariants of decision options to their general categories and S ⊆ E × T is a prov enance relation linking each decision entity to all the relev ant chunks in which it is mentioned. The ontology is constructed in an iterative way , starting from a tuple of empty sets and being progressi vely expanded with ne wly appearing decision entities as well as links recording all entity men- tions from the indi vidual text chunks, as sho wn in Algorithm 1. The mine_ontology function is implemented by an LLM pro- vided with the current hierarchy of entities and the text of the cur- rent chunk in each iteration, and outputting data about new entities and entity mentions as part of a structured JSON. An example deci- sion option ontology for the glioblastoma treatment recommenda- tion task is shown in Figure 3. Algorithm 2 General QB AF Construction Input : Ontology O = ⟨E , T , H , S ⟩ , mining depth d , argument scheme s arg (optional), boolean flag score_root Output : A set of general QBAFs G = {G e } e ∈E and global param- eter schema Π 1: Initialise G ← ∅ , Π ← ∅ 2: for e ∈ E do 3: Initialise τ e ← ∅ , χ e ← ∅ 4: T e ← { t ∈ T | ( e, t ) ∈ S } 5: ▷ 1. Mine a bipolar ar gumentation framework 6: ▷ with a natural-languag e condition function χ N e 7: ⟨A e , R − e , R + e , χ N e ⟩ ← mine_baf ( e, T e , d, s arg ) 8: for a ∈ A e do 9: ▷ 2. Estimate base scor es 10: if is_root ( a ) and not score_root then 11: τ e ( a ) ← 0 . 5 12: else if is_root ( a ) then 13: τ e ( a ) ← score ( a, T e ) 14: else 15: p ← get_parent ( a, R − e , R + e ) 16: is_sup ← ( a, p ) ∈ R + e 17: τ e ( a ) ← score ( a, T e , p, is_sup , χ N e ( a )) 18: ▷ 3. F ormalise conditions, updating schema 19: ⟨ χ e ( a ) , Π ⟩ ← form_cond ( a, χ N e ( a ) , Π) 20: end if 21: end for 22: G e ← ⟨A e , R − e , R + e , τ e , χ e ⟩ 23: G ← G ∪ {G e } 24: end for 25: retur n G , Π Glioblastoma T reatment Option Ontology Surgical T umour Resection Radiotherapy Radiotherapy 60 Gy in 30 Fractions Radiotherapy 40 Gy in 15 Fractions Alkylating Agent Chemotherapy T emozolomide Chemotherapy Carmustine Chemotherapy Carmustine Polymer W afer Implantation PCV Chemotherapy Lomustine (CCNU) Chemotherapy Alternating Electric Field Therapy Bev acizumab Figure 3. Subset of a glioblastoma treatment option ontology auto- matically constructed from the rele vant clinical guidelines. Only the entities and the hierarchical relations are visualised without the corresponding text chunks and provenance relations. Note that the used LLM has incorrectly categorised Lomustine as a separate treatment rather than a variant of Alkylating Agent Chemotherapy , although this has no ef fect on the rest of the ArgEv al pipeline. The 9 leav es of the ontology are used in our main experiments. General QB AF Construction After extracting the decision space ontology O = ⟨E , T , H , S ⟩ for a specific task, ArgEv al uses this ontology to mine the gen- eral QB AFs G = {G e } e ∈E for each identified option e ∈ E . Each general QBAF is a tuple G e = ⟨A e , R − e , R + e , τ e , χ e ⟩ where 4 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs Listing 1. JSON schema formalising the condition extracted for the first attacking argument from Figure 1. 1 { 2 "$schema" : "https://json − schema.org/draft /2 020 − 12 /schema", 3 "type" : "object", 4 "anyOf" : [ 5 { 6 "properties" : { 7 "eloquent _ structure _ involvement" : { 8 "type" : "boolea n", 9 "const" : true 10 } 11 } 12 }, 13 { 14 "properties" : { 15 "kps" : { 16 "type" : "intege r", 17 "maximum" : 49 18 } 19 } 20 } 21 ] 22 } A e , R − e , R + e , τ e denote arguments, a binary support relation, a binary attack relation and a base score function as for standard QB AFs (see Section 3), while χ e : χ e → Φ is a condition func- tion mapping arguments to formal conditions specifying the cases in which the giv en argument applies. Φ denotes the domain of con- ditions expressible in some suitable formal language. Apart from the general QB AFs themselves, the procedure also produces a global parameter schema Π , containing the definitions of all parameters appearing in the e xtracted ar gument conditions. In our instantiation of the method, both the global parameter schema and the formalised conditions are expressed using JSON schemas, 3 which are relatively easy to generate for LLMs while establishing structure and value constraints that can be easily verified in an au- tomated fashion. An example schema formalising the condition for one of the arguments from Figure 1 is gi ven in Listing 1. The general QBAF construction procedure itself iterates over all the identified options e ∈ E in the ontology , proceeding in three main steps: bipolar argumentation framework mining, argument base score estimation and argument condition formalisation (see Algorithm 2). 1) Bipolar Ar gumentation F ramework Mining . In the first step, the procedure employs an LLM to recursiv ely mine arguments support- ing and attacking the giv en decision option up to a specified depth d , forming a tree bipolar argumentation framew ork structure. This process is similar to the one used for ArgLLMs (Freedman et al., 2025) with some important deviations: the LLM mining the argu- ments is also provided with all text chunks referring to the gi ven decision, may be optionally supplied with an ar gument scheme s arg to guide the ar gument generation using domain-specific criteria (see an example in T able 2), can generate frameworks with an arbitrary breadth (numbers of attackers/supporters, which can differ from each other) and produces natural-language conditions χ N e describ- ing the circumstances under which each of the argument applies. 2) Ar gument Base Scor e Estimation . Once the arguments have been generated, the second step of the construction process esti- 3 https://json- schema.org/ Major premise Generally , if a clinical intervention provides a net medi- cal benefit and is comparatively suitable among its mu- tually exclusi ve alternati ves for a given patient, then it is recommended. Minor premise The clinical intervention pro vides a positive benefit-risk balance for the considered patient. Minor premise The clinical intervention is superior or equivalent to its incompatible alternativ es for the considered patient. CQ1 Are the clinical features of the patient free of any con- traindications against the considered intervention? CQ2 Does the available evidence from clinical guidelines es- tablish that the intervention provides a net benefit to the giv en patient? CQ3 Is there no alternative intervention that offers a better benefit-risk profile for the given patient and is incom- patible with the currently considered intervention? T able 2. Premises and critical questions of the argument scheme s arg used for the glioblastoma treatment recommendation task. mates their base scores. Depending on a boolean hyperparame- ter score_root , this can either be done only for non-root ar- guments with the root receiving a midpoint score of 0 . 5 or for all arguments in the framework including the root. The former option makes ArgEv al predictions more sensitive to the influences from the root argument attackers and supporters. Apart from the argument text itself, the scoring function is also pro vided with all text chunks associated with the currently considered option, and, for non-root arguments, the text of the parent argument, its relation to the cur- rently ev aluated argument and the natural-language condition as- sociated with the argument. Similarly to ArgLLMs, our scoring function uses an LLM to directly output the estimated base scores, as this simple strategy has been empirically found to outperform other , more complex approaches (Zhou et al., 2025). 3) Ar gument Condition F ormalisation . The final stage of the QB AF construction process translates the conditions associated with each argument from their natural-language versions χ N e to the corre- sponding formal representations χ e . This procedure also itera- tiv ely updates the global parameter schema Π with an y new param- eters referenced in the formalised conditions. Similarly to the other stages of the construction pipeline, this step lev erages an LLM to generate each condition as well as the updated parameter schema. After the construction process is finished, the obtained general QB AFs G = {G e } e ∈E and the parameter schema Π can be used for case-specific inference, as described in the following Section. 4.2 Case-Specific Inference The case-specific inference procedure of ArgEv al first uses an LLM to extract a set of structured case parameters P from a natural- language description of the considered case c , guided by the global parameter schema Π . For each general QBAF G e ∈ G , these pa- rameters can then be checked against the conditions associated with its arguments, instantiating these framew orks by removing any ir- relev ant ar guments whose conditions are not satisfied, their descen- dants and any associated relations. This procedure yields regular , instantiated QB AFs Q = {Q e } e ∈E , which can be ev aluated by a gradual semantics σ . The final strengths of the root QBAF argu- ments produced by the semantics can then be used as recommen- dation scores S = { s e } e ∈E quantifying the relative and absolute suitability of the associated decision options. An illustration of the inference process for a single decision option is shown in Figure 1, with the full procedure detailed in Algorithm 3. 5 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs Algorithm 3 Case-Specific Inference Input : A set of general QBAFs G = {G e } e ∈E , global parameter schema Π , natural-language case description c , argumentation se- mantics σ Output : A set of instantiated QBAFs Q = {Q e } e ∈E and decision recommendation scores S = { s e } e ∈E 1: Initialise Q ← ∅ , S ← ∅ 2: ▷ 1. Extract par ameters fr om case description 3: P ← extract_params ( c, Π) 4: for G e ∈ G do 5: ⟨A e , R − e , R + e , τ e , χ e ⟩ ← G e 6: ▷ 2. Instantiate general QB AF into r egular QB AF 7: Initialise A ′ e ← A e , R ′− e ← R − e , R ′ + e ← R + e 8: for a ∈ A e do 9: ▷ Remove ar guments with unsatisfied conditions 10: ▷ and the associated r elations 11: if a ∈ A ′ e and not eval_cond ( χ e ( a ) , P ) then 12: A rm ← { a } ∪ descendants ( a, R ′− e , R ′ + e ) 13: A ′ e ← A ′ e \ A rm 14: R ′− e , R ′ + e ← rm_rels ( A rm , R ′− e , R ′ + e ) 15: end if 16: end for 17: τ ′ e ← τ e restricted to A ′ e 18: Q e ← ⟨A ′ e , R ′− e , R ′ + e , τ ′ e ⟩ 19: ▷ 3. Calculate the r ecommendation score 20: r e ← get_root ( Q e ) 21: s e ← σ Q e ( r e ) 22: Q ← Q ∪ {Q e } 23: S ← S ∪ { s e } 24: end for 25: retur n Q , S 5 Experiments Here, we describe the experiments applying Ar gEval and additional baseline methods to the task of recommending suitable treatments for glioblastoma, an aggressive brain tumour with poor prognosis. Apart from reporting the initial performance on this task, we also conduct a case study illustrating the impact of contestation on the key e valuation metrics. 5.1 Experimental Setup T ask In our experiments, we consider the task of providing patient- specific recommendations regarding suitable interventions for glioblastoma. The intention is to partially simulate the applica- tion of ArgEv al and other baselines as a clinical decision support system advising clinicians on therapies that should be considered as part of the overall treatment plan. W e believe this task poses a realistic and relev ant e valuation setting, as the deployment of ex- plainable AI systems would lik ely provide most substantial benefits in high-stakes domains such as healthcare. Policy Documents W e use four established clinical guidelines on treating high-grade glioma or glioblastoma as our policy documents: the European So- ciety For Medical Oncology (ESMO) clinical practice guidelines for high-grade glioma (Stupp et al., 2014), the National Compre- hensiv e Cancer Network ® (NCCN ® ) guidelines on central nervous system cancers 4 (NCCN, 2025), the guideline on primary brain tu- mours and brain metastases in over 16s from the National Institute for Health and Care Excellence (NICE, 2018), and the Society for Neuro-Oncology (SNO) and European Society of Neuro-Oncology (EANO) consensus review on glioblastoma management in adults (W en et al., 2025). Due to the length of the selected guidelines, spanning more than 200 pages in one case, we manually extracted pages relev ant to glioblastoma treatment. T o parse and preprocess the unstructured guideline PDFs, we used the Docling library (Deep Search T eam, 2024) along with its heron document layout analysis model (Livathinos et al., 2025) and a hybrid chunker splitting the documents into shorter chunks. These chunks were postprocessed by gpt-oss-20b (OpenAI, 2025), merg- ing semantically related chunks that were separated by page breaks, figures or other PDF elements and filtering out chunks unrelated to glioblastoma treatment. This process resulted in a total of 67 chunks. Giv en the refined chunks, we used gpt-oss-20b to construct a de- cision space ontology using the procedure described in Section 4, identifying a total of 179 treatment entities. T o constrain our ex- periments to the most common atomic treatment options, we only retained leaf entities associated with mentions in at least three out of the four considered guideline documents that were not combina- tion treatments (i.e., they had a single root ancestor in the ontology hierarchy). This yielded an ontology with 9 different treatment op- tions captured in Figure 3. A revie w by an expert oncologist de- termined that the ontology captures the most commonly considered non-combination therapies for glioblastoma, along with some less relev ant options, providing a useful basis for the subsequent exper- iments. Case Descriptions T o source the patient case descriptions, we again liaised with an ex- pert clinical oncologist, providing us with a set of four ke y parame- ters substantially af fecting the treatment decisions for glioblastoma patients along with suitable v alues ranges: age (ranging ov er the values 50, 60, 75 and 85), MGMT methylation status (ranging over the v alues “meth ylated”, “unknown” and “unmethylated”), Karnof- sky Performance Status (KPS, ranging ov er the values 10, 30, 50, 70 and 90) and tumour location (ranging over the values “non- dominant frontal lobe”, “thalamus” and “brainstem”). In addition to these properties, we also add a spurious parameter indicating the patient sex (“male” or “female”), which is typically seen as having no impact on the choice of appropriate therapy for glioblastoma. Considering all possible parameter value combinations provides us with 4 × 3 × 5 × 3 × 2 = 360 unique patient parameter sets, which we then con vert into brief patient vignettes using an LLM (see Fig- ure 1 for an example). W e performed manual checks to ensure that the vignettes accurately represent the input patient parameters with no omissions or extraneous information. Labels The ground-truth treatment recommendation labels were deter- mined according to an algorithm with rules informed and re viewed by the clinical oncologist, aiming to capture the general clinical practice when deciding on treatments recommended to different pa- tient subgroups. The labels are assigned to each of the patient vi- gnette and treatment combinations, resulting in 360 × 9 = 3240 4 Disclaimer: NCCN makes no warranties of any kind whatsoever regarding their content, use or application and disclaims any responsibility for their application or use in any way . 6 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs labels in total. Each label indicates whether a specific treatment is “recommended”, “maybe recommended” or “not recommended” for a patient with the giv en characteristics, with the “maybe recom- mended” label indicating potential suitability with relatively weak or conflicting evidence. LLM Backbones W e consider two reasoning LLMs from two different providers, gpt- oss-20b (OpenAI, 2025) and Qwen3-30B-A3B-FP8 (Y ang et al., 2025). Both models rely on the Mixture-of-Experts (MoE) archi- tecture for more efficient inference, with 3.6B and 3.3B active pa- rameters, respecti vely . In our experiments, both models were run in reasoning mode, setting the reasoning effort level to “medium” for gpt-oss-20b. Inference was performed using the vLLM engine (Kwon et al., 2023) with the sampling temperature set to 1 . 0 and other generation parameters taking their default values. While the main e xperiments use both models, the initial document processing and ontology construction were only performed using gpt-oss-20b, ensuring that all the experimental results are based on a consistent ontology . Baselines W e compare our method to two baselines: base LLMs and ArgLLMs-O. Base LLMs are directly instructed to provide a nu- merical score ranging from 0 to 1 indicating the degree to which a treatment is recommended to a given patient, with this process being repeated for each of the 9 treatment options. The LLM is provided with all the text chunks associated with the currently eval- uated treatment option as well as the patient case description, and can use the reasoning chain for intermediate w ork before gi ving the final answer . The ArgLLMs-O baseline is an adapted version of the original ArgLLMs (Freedman et al., 2025) using our treatment option ontology and generating a separate QBAF for each possible treatment option. During both argument generation and base score estimation stages, the LLM performing these operations is provided with all the rele vant guideline chunks as well as the patient case de- scription. Thus, both baselines can use the patient information dur - ing their entire reasoning process, while ArgEv al only gains access to it during the case-specific inference stage. Method V ariants W e consider eight different v ariants of ArgEv al arising from the choices of three hyperparameters: the general QB AF depth (either 1 or 2), root base score estimation (either enabled or disabled) and argument scheme inclusion (either enabled or disabled). See Sec- tion 4, Algorithm 2 and T able 2 for more information on these pa- rameters. For ArgLLMs-O, we similarly consider variations with or without root base score estimation and argument scheme inclusion. Due to very high computational requirements of ArgLLMs-O, we only consider depth 1 versions for this baseline. All argumentati ve models use the DF-QuAD gradual semantics (Rago et al., 2016), which is also the default semantics for Ar gLLMs. Metrics W e use three key metrics to compare the performance of the dif fer- ent methods. The label match rate (LMR) indicates the share of the absolute treatment recommendation scores matching their labels, with the “recommended” label considered to be matching for scores between 0 . 5 and 1 . 0 , the “maybe recommended” label matching scores between 0 . 25 and 0 . 75 and the “not recommended” label matching scores between 0 . 0 and 0 . 5 . Since the recommendation scores are intended to be helpful in ranking potential treatments from the most to least suitable, we also report the normalised dis- counted cumulati ve gain (NDCG) metric (Järvelin and K ekäläinen, 2002). NDCG indicates the level of agreement between the ground- truth ranking (starting from the “recommended” methods and end- ing with the “not recommended” ones) and the ranking defined by the predicted treatment recommendation scores. Finally , we report the total prompt and completion tokens used during the LLM infer- ence for each method. These measures include the general QB AF construction for ArgEv al but exclude the initial ontology mining, which is shared for all methods. 5.2 Perf ormance Results The results of our experiments are summarised in T able 3. There are sev eral trends that can be observed. Generally , the best-performing versions of ArgEval achie ve com- petitiv e results compared to the baselines. For e xample, the variant using Qwen3-30B model with depth 2, no root estimation and ar- gument scheme inclusion achiev es the best ov erall LMR of 0 . 8818 with a comparati vely high NDCG of 0 . 9771 , which is only sur- passed by 3/25 other method variants (one of which is another in- stance of Ar gEval). Nevertheless, Ar gEval seems to be more sensi- tiv e to the chosen hyperparameters with substantial variations be- tween the different versions. This is likely due to the fact that any deficiencies during general QB AF construction affect all subse- quent inferences. ArgEval variants using root base score estimation perform particularly poorly , probably because treatment options as- signed with high base scores may still be inappropriate for specific patients. Nev ertheless, the high performance of certain ArgEv al variants is notable given their low computational requirements. In particular, thanks to its global reasoning, ArgEv al requires substantially fe wer inference tokens compared to the other baselines, with ev en the most expensiv e depth 2 variant requiring ∼ 2.9 x and ∼ 8.7 x fewer completion tok ens compared to the cheapest base LLM version and the cheapest ArgLLMs-O v ersion, respectively . Overall, we argue that ArgEv al provides a good trade-off be- tween recommendation performance and computational costs, with a substantial qualitativ e benefit associated with global contestabil- ity . In the next Section, we demonstrate that this benefit can trans- late into direct performance gains. 5.3 Contestability Case Study T o demonstrate the e xplainability and global contestability capabil- ities of ArgEval, we conduct an additional experiment showing the performance ef fects of contesting the recommendations for a single output. W e intentionally choose an ArgEval variant with moderate performance, specifically , the version using gpt-oss-20b with depth 1, no root score estimation and argument scheme inclusion. As illustrated in Figure 4, the model correctly recommends Ra- diotherapy 40 Gy , but incorrectly ranks the unsuitable options Ra- diotherapy 60 Gy and Sur gical Resection above T emozolomide, an- other possible therapy . Inspecting the instantiated QB AFs for Ra- diotherapy 60 Gy , we observe that there are four supporting and two attacking arguments. While the attacking arguments correctly identify Radiotherapy 40 Gy as typically being the more appropri- ate regime for elderly patients, the y are outweighed by the support- ers arguing that Radiotherapy 60 Gy is generally suitable for pa- tients with good performance status. Aiming to perform a minimal contestation correcting the recommendations, we slightly increase 5 Icon from Noun Project (Patient by Alzam, CC BY 3.0). 7 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs T able 3. Performance on glioblastoma (GBM) treatment prediction across models and inference configurations. LMR denotes the label match rate, indicating the share of the absolute treatment recommendation scores matching the labels, while NDCG quantifies the level of agreement between the ground-truth treatment ranking and the predicted ranking. The I/O T okens column reports prompt/completion token usage in millions (M) without the initial decision ontology construction, which is shared for all methods. LLM Backbone Method d Est. Root Arg. Scheme LMR ↑ NDCG ↑ I/O T okens (M) ↓ gpt-oss-20b (medium) gpt-oss-20b Base LLM – – – 0.8775 0.9578 16.95 / 6.59 gpt-oss-20b ArgLLMs-O 1 ✗ ✗ 0.8614 0.9739 80.51 / 20.12 gpt-oss-20b ArgLLMs-O 1 ✗ ✓ 0.8676 0.9629 80.41 / 19.89 gpt-oss-20b ArgLLMs-O 1 ✓ ✗ 0.8429 0.9718 95.91 / 22.69 gpt-oss-20b ArgLLMs-O 1 ✓ ✓ 0.8444 0.9707 95.98 / 22.72 gpt-oss-20b ArgEv al 1 ✗ ✗ 0.6910 0.9330 1.18 / 0.79 gpt-oss-20b ArgEv al 1 ✗ ✓ 0.8009 0.9654 1.20 / 0.62 gpt-oss-20b ArgEv al 1 ✓ ✗ 0.4432 0.9293 1.18 / 0.66 gpt-oss-20b ArgEv al 1 ✓ ✓ 0.6923 0.8983 1.15 / 0.68 gpt-oss-20b ArgEv al 2 ✗ ✗ 0.8485 0.9422 3.96 / 1.93 gpt-oss-20b ArgEv al 2 ✗ ✓ 0.7006 0.8514 4.09 / 2.02 gpt-oss-20b ArgEv al 2 ✓ ✗ 0.5244 0.9480 4.09 / 1.92 gpt-oss-20b ArgEv al 2 ✓ ✓ 0.6914 0.9241 4.51 / 2.29 Qwen3-30B-A3B-FP8 Qwen3-30B BaseLLM – – – 0.8630 0.9527 17.83 / 5.44 Qwen3-30B ArgLLMs-O 1 ✗ ✗ 0.8373 0.9752 78.06 / 22.11 Qwen3-30B ArgLLMs-O 1 ✗ ✓ 0.8617 0.9735 78.95 / 21.73 Qwen3-30B ArgLLMs-O 1 ✓ ✗ 0.8216 0.9815 93.93 / 25.80 Qwen3-30B ArgLLMs-O 1 ✓ ✓ 0.8417 0.9804 96.06 / 25.75 Qwen3-30B ArgEv al 1 ✗ ✗ 0.8812 0.9750 0.90 / 0.67 Qwen3-30B ArgEv al 1 ✗ ✓ 0.8623 0.9389 0.89 / 0.67 Qwen3-30B ArgEv al 1 ✓ ✗ 0.7417 0.9138 0.88 / 0.78 Qwen3-30B ArgEv al 1 ✓ ✓ 0.7497 0.9118 1.01 / 0.55 Qwen3-30B ArgEv al 2 ✗ ✗ 0.7886 0.9782 2.31 / 1.38 Qwen3-30B ArgEv al 2 ✗ ✓ 0.8818 0.9771 2.65 / 1.38 Qwen3-30B ArgEv al 2 ✓ ✗ 0.6284 0.8957 2.36 / 1.36 Qwen3-30B ArgEv al 2 ✓ ✓ 0.7694 0.9123 3.01 / 1.73 Cas e Des c r ip t i on An 85 ? ye ar? o ld male w ith new l y d iag no sed gl iob las tom a of the th alamu s p rese nts w it h a Kar no f sky Per fo rm anc e Stat us o f 9 0. M GM T me thy lat io n st atu s is unk no w n. In it i al R e c om m e nd at i on s 1 . Radio th er apy 4 0 G y (0 .9 9) 2 . R ad iot her apy 6 0 G y (0 .51 ) 3. Surg ic al R e sec ti on (0.5 1 ) 4 . T e moz ol omi de (0.5 ) 5. Alt er nat ing E l ec tr ic Field s ( 0.4 8 ) 6. Car mus tin e Waf er s Imp lan t (0 .2 3) 7 . L omu st ine (0 .2) 8. PCV (0 .01 ) 9. Bevac iz um ab (0.0 ) Sam p le M e t r ic s Lab el M at ch : 0 .7 77 7 NDCG: 0 .8 7 72 M o de l M et r i c s ( F u ll Dat as et ) Lab el M at ch : 0 .8 0 0 9 NDCG: 0 .9 6 5 4 Co n t es t at io n R a di ot h e ra py 6 0 Gy QBA F Pat ie n t Para m et er S c h e ma - s af e _ r es ec t i on _po s si b le : Ind ic at es w h et her a max imal saf e r esec t ion c an b e p er fo r med w it ho ut co mp ro mis ing neu ro log ic al f u nc tio n ( tr ue = p oss ibl e, f als e = no t p os sib le). R e sec ti on i s no t p os sib le if th e tu mo ur in f ilt rat es d eep m idl ine str uc t ure s suc h as the th alamu s. - e lo q ue n t _ s t r uc t u r e_ i n vo l v em en t : T rue i f th e t um our inv ol ves elo q uen t o r c r iti ca l br ain str uc t ure s (e.g ., m ot or c o rt ex, l ang uag e are as, b rai nst em or thal amu s); f als e o the rw i se. 0.9 3 0 .75 0.9 2 0 .75 0.8 8 0 .75 0.8 7 0 .75 0.8 5 0 .9 7 0.8 0 .9 + + + + - - Up d at ed R e c om m e nd at i on s 1 . R ad io th erap y 4 0 Gy (0 .99 ) 2 . T e mo zol om ide (0.5 ) 3. R ad iot he rap y 6 0 Gy (0 .5) 4 . Al ter nat ing E l ec tr ic Fiel ds ( 0.4 8 ) 5. Car mu sti ne Waf er s Imp lan t (0 .2 3) 6. Lom us tin e (0 .2 ) 7 . Sur gic al R e sec t ion ( 0.0 2 ) 8. PCV (0 .01 ) 9. Beva ci zum ab ( 0.0 ) Sam p le M e t ri c s Lab el M at ch : 1 . 0 (+0 .2 22 3 ) NDCG: 1 .0 ( +0 . 1 2 28 ) M o de l M et r i c s ( F u ll Dat as et ) Lab el M at ch : 0 .8 78 4 (+ 0.0 775 ) NDCG: 0 .9 7 75 ( +0 .0 1 2 1 ) Figure 4. Illustration of the ArgEv al contestability experiment 5 . T o correct the initially suboptimal recommendations, we make small adjustments to the base scores in the general argumentation framew ork for the radiotherapy 60 Gy treatment option (with initial scores sho wn in smaller red font and updated scores in larger green font) and clarify the descriptions of two parameters associated with surgical resection in the parameter schema. These modifications are sufficient to achie ve a perfect score on this instance while also substantially improving the ov erall performance. Treatments recommended by the ground-truth labels are sho wn in green, with those not recommended in red. 8 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs the base scores of the attackers and decrease the base scores of the supporters in the general QB AF , reducing the Radiotherapy 60 Gy score to slightly below 0.5 (not visible in Figure 4 due to rounding). For Surgical Resection, our inspection of the instantiated QB AFs rev eals that ArgEv al has correctly captured conditions associated with sur gery contraindications, b ut is unable to infer that these con- traindications apply to patients with glioblastoma of the thalamus during parameter extraction. T o address this issue, we refine the corresponding parameter descriptions in the schema as illustrated. This results in correct extraction of the corresponding parameters and a substantial reduction in Surgical Resection score, with the inference process after these changes illustrated in Figure 1. Notably , performing the two contestations based a single sam- ple results in a substantial performance improv ement on the full dataset, reaching LMR of 0 . 8784 and NDCG of 0 . 9775 , which sur - passes the performance of all other methods using gpt-oss-20b . 6 Conclusion In this paper , we introduced ArgEv al, a novel frame work auto- matically mapping task decision spaces, mining the correspond- ing option ontologies and constructing general QB AFs capturing the benefits and drawbacks of each decision option depending on the characteristics of a specific case. Uniquely , this shift to gen- eral argumentati ve reasoning enables global contestability while also providing substantial computational cost savings. W e ev alu- ated the performance of ArgEval on the task of treatment decision recommendation for glioblastoma, finding it to be highly competi- tiv e, with contestability providing opportunities for further perfor- mance improv ements. Future work could further explore the utility of ArgEv al’ s explainability and contestability to end users through user studies or consider its application in other domains besides healthcare. Acknowledgments This research was partially supported by ERC under the EU’ s Hori- zon 2020 research and inno vation programme (grant agreement No. 101020934, ADIX), by NIHR Imperial BRC (grant no. RDB01 79560) and by Brain T umour Research Centre of Excellence at Im- perial. References Atanasov a, P ., Camburu, O.-M., Lioma, C., Lukasie wicz, T ., Si- monsen, J. G., and Augenstein, I. (2023). Faithfulness tests for natural language explanations. In Rogers, A., Boyd-Graber , J., and Okazaki, N., editors, Proceedings of the 61st Annual Meet- ing of the Association for Computational Linguistics (V olume 2: Short P apers) , pages 283–294, T oronto, Canada. Association for Computational Linguistics. A yoobi, H., Potyka, N., Rapberger , A., and T oni, F . (2025). Ar- gumentativ e debates for transparent bias detection [technical re- port]. CoRR , abs/2508.04511. Barez, F ., Wu, T .-Y ., Arcuschin, I., Lan, M., W ang, V ., Siegel, N., Collignon, N., Neo, C., Lee, I., Paren, A., et al. (2025). Chain- of-thought is not explainability . Preprint . Baroni, P ., Rago, A., and T oni, F . (2019). From fine-grained proper- ties to broad principles for gradual argumentation: A principled spectrum. Int. J. Appr ox. Reason. , 105:252–286. Bermejo, V . J., Gago, A., Gálvez, R. H., and Harari, N. (2025). LLMs outperform outsourced human coders on complex textual analysis. Scientific Reports , 15(1):40122. Bubeck, S., Chandrasekaran, V ., Eldan, R., Gehrke, J., Horvitz, E., Kamar , E., Lee, P ., Lee, Y . T ., Li, Y ., Lundberg, S. M., Nori, H., Palangi, H., Ribeiro, M. T ., and Zhang, Y . (2023). Sparks of artificial general intelligence: Early experiments with GPT -4. CoRR , abs/2303.12712. Busch, F ., Hoffmann, L., Rueger , C., van Dijk, E. H., Kader, R., Ortiz-Prado, E., Makowski, M. R., Saba, L., Hadamitzky , M., Kather , J. N., T ruhn, D., Cuocolo, R., Adams, L. C., and Bressem, K. K. (2025). Current applications and challenges in large language models for patient care: a systematic revie w . Communications Medicine , 5(1):26. Cabessa, J., Hernault, H., and Mushtaq, U. (2025). Ar gument min- ing with fine-tuned large language models. In Rambo w , O., W anner , L., Apidianaki, M., Al-Khalifa, H., Eugenio, B. D., and Schockaert, S., editors, Pr oceedings of the 31st Interna- tional Confer ence on Computational Linguistics , pages 6624– 6635, Ab u Dhabi, U AE. Association for Computational Linguis- tics. Chen, Y ., Benton, J., Radhakrishnan, A., Uesato, J., Denison, C., Schulman, J., Somani, A., Hase, P ., W agner , M., Roger , F ., Miku- lik, V ., Bowman, S. R., Leike, J., Kaplan, J., and Perez, E. (2025). Reasoning models don’t always say what they think. CoRR , abs/2505.05410. Cyras, K., Rago, A., Albini, E., Baroni, P ., and T oni, F . (2021). Argumentati ve XAI: A surve y . In IJCAI , pages 4392–4399. De Angelis, E., Proietti, M., and T oni, F . (2025). Learning to contest argumentati ve claims. In Hogan, A., Satoh, K., Dag, H., T urhan, A., Roman, D., and Soylu, A., editors, RuleML+RR , pages 237– 255. Deep Search T eam (2024). Docling technical report. T echnical report, Deep Search T eam. Dignum, V ., Michael, L., Nie ves, J. C., Slavkovik, M., Suarez, J., and Theodorou, A. (2025). Contesting black-box AI decisions. In Das, S., Nowé, A., and V orobeychik, Y ., editors, Pr oceed- ings of the 24th International Conference on A utonomous Agents and Multiagent Systems, AAMAS 2025, Detroit, MI, USA, May 19-23, 2025 , pages 2854–2858. International Foundation for Au- tonomous Agents and Multiagent Systems / A CM. Freedman, G., Dejl, A., Gorur , D., Y in, X., Rago, A., and T oni, F . (2025). Argumentati ve large language models for explainable and contestable claim verification. In W alsh, T ., Shah, J., and K olter, Z., editors, AAAI-25, Sponsor ed by the Association for the Advancement of Artificial Intelligence, F ebruary 25 - Mar ch 4, 2025, Philadelphia, P A, USA , pages 14930–14939. AAAI Press. Gorur , D., Rago, A., and T oni, F . (2025). Retriev al and argu- mentation enhanced multi-agent llms for judgmental forecasting. CoRR , abs/2510.24303. Huang, L., Y u, W ., Ma, W ., Zhong, W ., Feng, Z., W ang, H., Chen, Q., Peng, W ., Feng, X., Qin, B., and Liu, T . (2025). A survey on hallucination in lar ge language models: Principles, taxonomy , challenges, and open questions. ACM T rans. Inf. Syst. , 43(2). 9 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs Järvelin, K. and K ekäläinen, J. (2002). Cumulated gain-based e val- uation of IR techniques. ACM T rans. Inf. Syst. , 20(4):422–446. Kwon, W ., Li, Z., Zhuang, S., Sheng, Y ., Zheng, L., Y u, C. H., Gonzalez, J., Zhang, H., and Stoica, I. (2023). Efficient memory management for lar ge language model serving with PagedAtten- tion. In Pr oceedings of the 29th Symposium on Operating Sys- tems Principles , SOSP ’23, page 611–626, Ne w Y ork, NY , USA. Association for Computing Machinery . Leofante, F ., A yoobi, H., Dejl, A., Freedman, G., Gorur , D., Jiang, J., Paulino-P assos, G., Rago, A., Rapberger , A., Russo, F ., Y in, X., Zhang, D., and T oni, F . (2024). Contestable AI needs compu- tational argumentation. In Marquis, P ., Ortiz, M., and Pagnucco, M., editors, Proceedings of the 21st International Confer ence on Principles of Knowledge Repr esentation and Reasoning, KR 2024, Hanoi, V ietnam. November 2-8, 2024 . Liv athinos, N., Auer, C., Nassar , A. S., de Lima, R. T ., L ysak, M., Ebouky , B., Berrospi, C., Dolfi, M., V agenas, P ., Omenetti, M., Dinkla, K., Kim, Y ., W eber, V ., Morin, L., Meijer , I., Kuropi- atnyk, V ., Strohmeyer , T ., Gurbuz, A. S., and Staar, P . W . J. (2025). Advanced layout analysis models for Docling. CoRR , abs/2509.11720. Luo, X., Rechardt, A., Sun, G., Nejad, K. K., Yáñez, F ., Y ilmaz, B., Lee, K., Cohen, A. O., Borghesani, V ., Pashkov , A., Marinazzo, D., Nicholas, J., Salatiello, A., Sucholutsky , I., Minervini, P ., Razavi, S., Rocca, R., Y usifov , E., Okalova, T ., Gu, N., Ferianc, M., Khona, M., Patil, K. R., Lee, P .-S., Mata, R., Myers, N. E., Bizley , J. K., Musslick, S., Bilgin, I. P ., Niso, G., Ales, J. M., Gaebler , M., Ratan Murty , N. A., Loued-Khenissi, L., Behler , A., Hall, C. M., Dafflon, J., Bao, S. D., and Lov e, B. C. (2025). Large language models surpass human experts in predicting neu- roscience results. Nature Human Behaviour , 9(2):305–315. L yons, H., V elloso, E., and Miller, T . (2021). Conceptualising contestability: Perspectiv es on contesting algorithmic decisions. Pr oc. ACM Hum. Comput. Inter act. , 5(CSCW1):106:1–106:25. McDuff, D., Schaekermann, M., T u, T ., Palepu, A., W ang, A., Gar- rison, J., Singhal, K., Sharma, Y ., Azizi, S., Kulkarni, K., Hou, L., Cheng, Y ., Liu, Y ., Mahdavi, S. S., Prakash, S., Pathak, A., Semturs, C., Patel, S., W ebster, D. R., Dominowska, E., Got- tweis, J., Barral, J., Chou, K., Corrado, G. S., Matias, Y ., Sun- shine, J., Karthikesalingam, A., and Natarajan, V . (2025). T o- wards accurate differential diagnosis with large language mod- els. Nature . Miller , T . (2023). Explainable AI is dead, long li ve explainable ai!: Hypothesis-driv en decision support using ev aluativ e AI. In Pr oceedings of the 2023 ACM Conference on F airness, Account- ability , and T ransparency , F AccT 2023, Chicago, IL, USA, June 12-15, 2023 , pages 333–342. A CM. NCCN (2025). Central nervous system cancers, version 3.2025. NICE (2018). Brain tumours (primary) and brain metastases in over 16s. NICE. OpenAI (2025). gpt-oss-120b & gpt-oss-20b model card. CoRR , abs/2508.10925. Rago, A., Cocarascu, O., Oksanen, J., and T oni, F . (2025). Argu- mentativ e revie w aggregation and dialogical explanations. Arti- ficial Intelligence , 340:104291. Rago, A., T oni, F ., Aurisicchio, M., and Baroni, P . (2016). Discontinuity-free decision support with quantitativ e ar gumen- tation debates. In KR , pages 63–73. AAAI Press. Siegel, N., Camb uru, O.-M., Heess, N., and Perez-Ortiz, M. (2024). The probabilities also matter: A more faithful metric for faith- fulness of free-text explanations in large language models. In Ku, L.-W ., Martins, A., and Srikumar , V ., editors, Pr oceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (V olume 2: Short P apers) , pages 530–546, Bangkok, Thailand. Association for Computational Linguistics. Stupp, R., Brada, M., v an den Bent, M., T onn, J.-C., and Penth- eroudakis, G. (2014). High-grade glioma: ESMO clinical prac- tice guidelines for diagnosis, treatment and follow-up†. Annals of Oncology , 25:iii93–iii101. ESMO Updated Clinical Practice Guidelines. V an V een, D., V an Uden, C., Blankemeier , L., Delbrouck, J.-B., Aali, A., Bluethgen, C., Pareek, A., Polacin, M., Reis, E. P ., See- hofnerová, A., Rohatgi, N., Hosamani, P ., Collins, W ., Ahuja, N., Langlotz, C. P ., Hom, J., Gatidis, S., Pauly , J., and Chaudhari, A. S. (2024). Adapted large language models can outperform medical experts in clinical text summarization. Nature Medicine , 30(4):1134–1142. W alton, D., Reed, C., and Macagno, F . (2008). Argumentation Schemes . Cambridge Uni versity Press. W ei, J., W ang, X., Schuurmans, D., Bosma, M., Ichter , B., Xia, F ., Chi, E. H., Le, Q. V ., and Zhou, D. (2022). Chain-of-thought prompting elicits reasoning in lar ge language models. In K oyejo, S., Mohamed, S., Agarwal, A., Belgrave, D., Cho, K., and Oh, A., editors, Advances in Neural Information Pr ocessing Systems 35: Annual Conference on Neural Information Pr ocessing Sys- tems 2022, NeurIPS 2022, New Orleans, LA, USA, November 28 - December 9, 2022 . W en, P . Y ., W eller, M., Lee, E. Q., T ouat, M., Khasraw , M., Rah- man, R., Platten, M., Lim, M., Winkler , F ., Horbinski, C., V er- haak, R. G. W ., Huang, R. Y ., Ahluwalia, M. S., Albert, N. L., T onn, J.-C., Schiff, D., Barnholtz-Sloan, J. S., Ostrom, Q., Al- dape, K. D., Batchelor, T . T ., Bindra, R. S., Chiocca, E. A., Cloughesy , T . F ., DeGroot, J. F ., French, P ., Galanis, E., Galldiks, N., Gilbert, M. R., Hegi, M. E., Lassman, A. B., Le Rhun, E., Mehta, M. P ., Mellinghof f, I. K., Minniti, G., Roth, P ., San- son, M., T aphoorn, Andreas, M. J. B., W eiss, von Deimling, T ., W ick, W ., Zadeh, G., Reardon, D. A., Chang, S. M., Short, S. C., van den Bent, M. J., and Preusser, M. (2025). Glioblas- toma in adults: A society for neuro-oncology (SNO) and euro- pean society of neuro-oncology (EANO) consensus re view on current management and future directions. Neur o-Oncology , 27(11):2751–2788. Y ang, A., Li, A., Y ang, B., Zhang, B., Hui, B., Zheng, B., Y u, B., Gao, C., Huang, C., et al. (2025). Qwen3 technical report. CoRR , abs/2505.09388. 10 A. Dejl, M. W illiams and F . T oni Argumentation for Explainable and Globally Contestable Decision Support with LLMs Y in, X., Potyka, N., Rago, A., Kampik, T ., and T oni, F . (2025). Contestability in quantitative argumentation. CoRR , abs/2507.11323. Zhou, K., Dejl, A., Freedman, G., Chen, L., Rago, A., and T oni, F . (2025). Evaluating uncertainty quantification methods in ar- gumentativ e large language models. In Christodoulopoulos, C., Chakraborty , T ., Rose, C., and Peng, V ., editors, F indings of the Association for Computational Linguistics: EMNLP 2025 , pages 21700–21711, Suzhou, China. Association for Computa- tional Linguistics. Zhu, Y ., Potyka, N., Hernández, D., He, Y ., Ding, Z., Xiong, B., Zhou, D., Kharlamov , E., and Staab, S. (2025). ArgRA G: Ex- plainable retriev al augmented generation using quantitative bipo- lar argumentation. CoRR , abs/2508.20131. 11

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment