DepthLab: From Partial to Complete

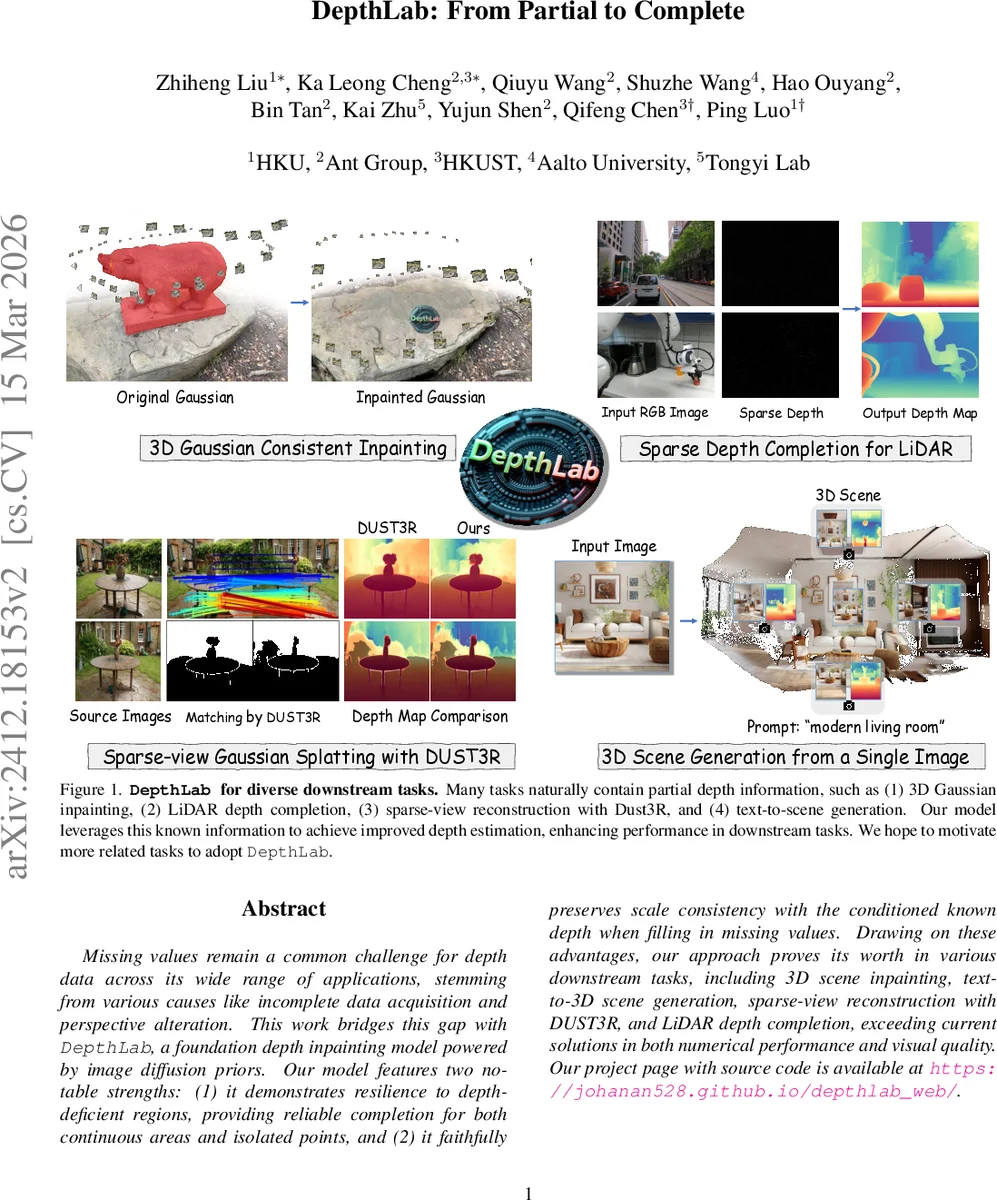

Missing values remain a common challenge for depth data across its wide range of applications, stemming from various causes like incomplete data acquisition and perspective alteration. This work bridges this gap with DepthLab, a foundation depth inpainting model powered by image diffusion priors. Our model features two notable strengths: (1) it demonstrates resilience to depth-deficient regions, providing reliable completion for both continuous areas and isolated points, and (2) it faithfully preserves scale consistency with the conditioned known depth when filling in missing values. Drawing on these advantages, our approach proves its worth in various downstream tasks, including 3D scene inpainting, text-to-3D scene generation, sparse-view reconstruction with DUST3R, and LiDAR depth completion, exceeding current solutions in both numerical performance and visual quality. Our project page with source code is available at https://johanan528.github.io/depthlab_web/.

💡 Research Summary

DepthLab introduces a foundation model for depth inpainting that leverages the powerful priors of image diffusion models. The core contribution is a dual‑branch diffusion framework: a Reference U‑Net extracts rich semantic features from an RGB image, while an Estimation U‑Net processes a noisy depth latent together with an encoded mask and the partially known depth. By integrating the RGB features layer‑by‑layer through cross‑attention, the model guides the inpainting process with fine‑grained visual cues while preserving the absolute scale of the known depth region.

A key technical novelty is the random scale normalization applied during training. Known depth values are linearly mapped to the

Comments & Academic Discussion

Loading comments...

Leave a Comment